1. Introduction

During recent decades, eye gaze analysis and eye state recognition have been an active research field due to their direct implications in emerging areas such as clinical diagnosis or Human–Machine Interfaces (HMIs). The ocular state of users and their gaze movements can reveal important features of their cognitive condition, which can be crucial for health care purposes but also for the analysis of daily life activities. Hence, eye state analysis has been studied and applied in several domains, such as driver drowsiness detection [1,2,3], robot control [4], infant sleep–waking state identification [5] or seizure detection [6], among others [7,8].

Different techniques have been proposed for studying eye gaze and eye state, such as Videooculography (VOG), Electrooculography (EOG) and Electroencephalography (EEG). In VOG [9,10], several cameras record videos or pictures of the user's eyes and, by applying image processing and computer vision algorithms, provide an accurate analysis of the user's eye state. In EOG [11,12,13,14,15], electrodes are placed on the user's skin near the eyes in order to capture the electrical signals produced by ocular activity. In the EEG technique [16,17], on the other hand, the electrical signals produced by the brain are measured using electrodes placed on the user's scalp. The computational complexity of the algorithms employed in image-based methods such as VOG is considerably higher than that of the algorithms used in EOG and EEG, due to the costly process of analyzing and classifying multiple images [18]. EOG seems an interesting technique for building HMIs based on eye movements or blinking, but placing electrodes on the user's face might be uncomfortable and impractical in real applications [19]. Thus, EEG is an attractive option for developing new interfaces that, based on the user's eye state, can analyze and infer their cognitive state (relaxed, stressed, asleep, etc.), which could be crucial information for real applications.

EEG is a popular technique for neuroimaging and brain signal acquisition, widely used in the study of brain disorders [20] and in Brain–Computer Interface (BCI) systems [21]. Compared with other brain signal acquisition techniques such as Magnetoencephalography (MEG), Electrocorticography (ECoG) or functional Magnetic Resonance Imaging (fMRI), EEG offers several advantages, such as high portability and temporal resolution, relatively low cost, and ease of use [22,23]. In particular, EEG-based eye state detection has been applied in several domains, such as clinical diagnosis and health care. In this regard, Naderi et al. [6] propose a technique based on EEG time series and Power Spectral Density (PSD) features, with a Recurrent Neural Network (RNN) for classification. Their technique distinguished a relaxed, open-eye state from an epileptic seizure with an accuracy of . In another study on the same data set, Acharya et al. [24] propose using Convolutional Neural Networks (CNNs) to develop a Computer-Aided Diagnosis (CAD) system that automatically detects seizures. Their technique achieves an accuracy, specificity, and sensitivity of , , and , respectively. Moreover, EEG-based eye state detection has been successfully applied to automatic driver drowsiness detection. Yeo et al. [25] proposed using a Support Vector Machine (SVM) as the classification algorithm to identify and differentiate the EEG changes that occur between alert and drowsy states. Four main EEG rhythms (delta, theta, alpha and beta) were employed to extract frequency features such as dominant frequency, frequency variability, center-of-gravity frequency and the average power of the dominant peak. Their method reached a classification accuracy of and was also able to predict the transition from alertness to drowsiness with an accuracy over . Furthermore, EEG eye state identification has been employed for interaction with BCIs. For instance, Kirkup et al. [26] present a home automation control system that acts as a rapid ON/OFF switch for appliances. It computes a threshold on alpha band activity to determine the user's eye state and control external devices.
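The general idea of an alpha-band eye state switch can be sketched as follows. This is not Kirkup et al.'s implementation: the 8–13 Hz band limits, the direct DFT, the 128 Hz sampling rate, the function names `alpha_band_power` and `eyes_closed`, and the threshold value are all illustrative assumptions.

```python
import cmath
import math


def alpha_band_power(signal, fs, band=(8.0, 13.0)):
    """Average DFT power of `signal` over the bins that fall inside `band` (Hz)."""
    n = len(signal)
    mean = sum(signal) / n
    x = [s - mean for s in signal]  # remove the DC offset before the DFT
    powers = []
    for k in range(n // 2 + 1):
        freq = k * fs / n  # centre frequency of DFT bin k
        if band[0] <= freq <= band[1]:
            # direct DFT, evaluated only for the bins inside the band
            coeff = sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
            powers.append(abs(coeff) ** 2 / n)
    return sum(powers) / len(powers) if powers else 0.0


def eyes_closed(signal, fs, threshold):
    """Hypothetical switch rule: report 'eyes closed' when alpha power exceeds threshold."""
    return alpha_band_power(signal, fs) > threshold


# Usage: a 10 Hz (alpha) tone versus a 20 Hz (beta) tone, 1 s at a hypothetical 128 Hz.
fs = 128
alpha_like = [math.sin(2 * math.pi * 10 * t / fs) for t in range(fs)]
beta_like = [math.sin(2 * math.pi * 20 * t / fs) for t in range(fs)]
print(eyes_closed(alpha_like, fs, threshold=1.0))  # True
print(eyes_closed(beta_like, fs, threshold=1.0))   # False
```

In a real system the threshold would have to be calibrated per user, since absolute alpha power varies between subjects and recording setups.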

Due to the wide variety of areas where EEG eye state detection can be applied, several methods have been presented to achieve higher classification accuracies. In this sense, Rösler and Sunderman [27] tested 42 classification algorithms in terms of their performance at predicting the eye state. For this purpose, a dataset containing the two possible ocular states was recorded using the 14 channels of the Emotiv EPOC headset. The reported results showed that standard classifiers such as naïve Bayes, Artificial Neural Networks (ANNs) or Logistic Regression (LR) offered poor classification accuracies, while instance-based algorithms such as IB1 or KStar performed significantly better. The latter classifier achieved the best performance, with a classification accuracy of . However, it took at least to classify the state of new instances. Moreover, the dataset included data from only one subject, so the authors could not ensure that the results generalize. Several works have since presented new classification methods based on this dataset. For example, Wang et al. [17] proposed extracting channel standard deviations and averages as features for an Incremental Attribute Learning (IAL) algorithm, achieving an error rate of for eye state classification. In a more recent study, Saghafi et al. [28] propose monitoring the maximum and minimum values of the EEG signals in order to detect any eye state change. Once a change has been detected, the last two seconds of the signal are low-pass filtered below , and then passed through Multivariate Empirical Mode Decomposition (MEMD) for feature extraction. These features are fed into a classifier to confirm the eye state change; ANNs, LR and SVM were tested for this purpose. Their algorithm using LR as a classifier detected the eye state with an accuracy of in less than . Hamilton et al. [29] proposed a new system based on eager learners (e.g., decision trees) in order to improve on the classification time achieved by Rösler and Sunderman [27]. For this purpose, three ensemble learners were evaluated: a rotational forest that implements random forests as its base classifiers, a rotational forest that implements J48 trees as its base classifiers and is boosted by adaptive boosting, and an ensemble of the rotational random forest model with the KStar classifier. The results showed that the approach using J48 trees and adaptive boosting offered accurate classification rates within the time constraints of real-time classification.

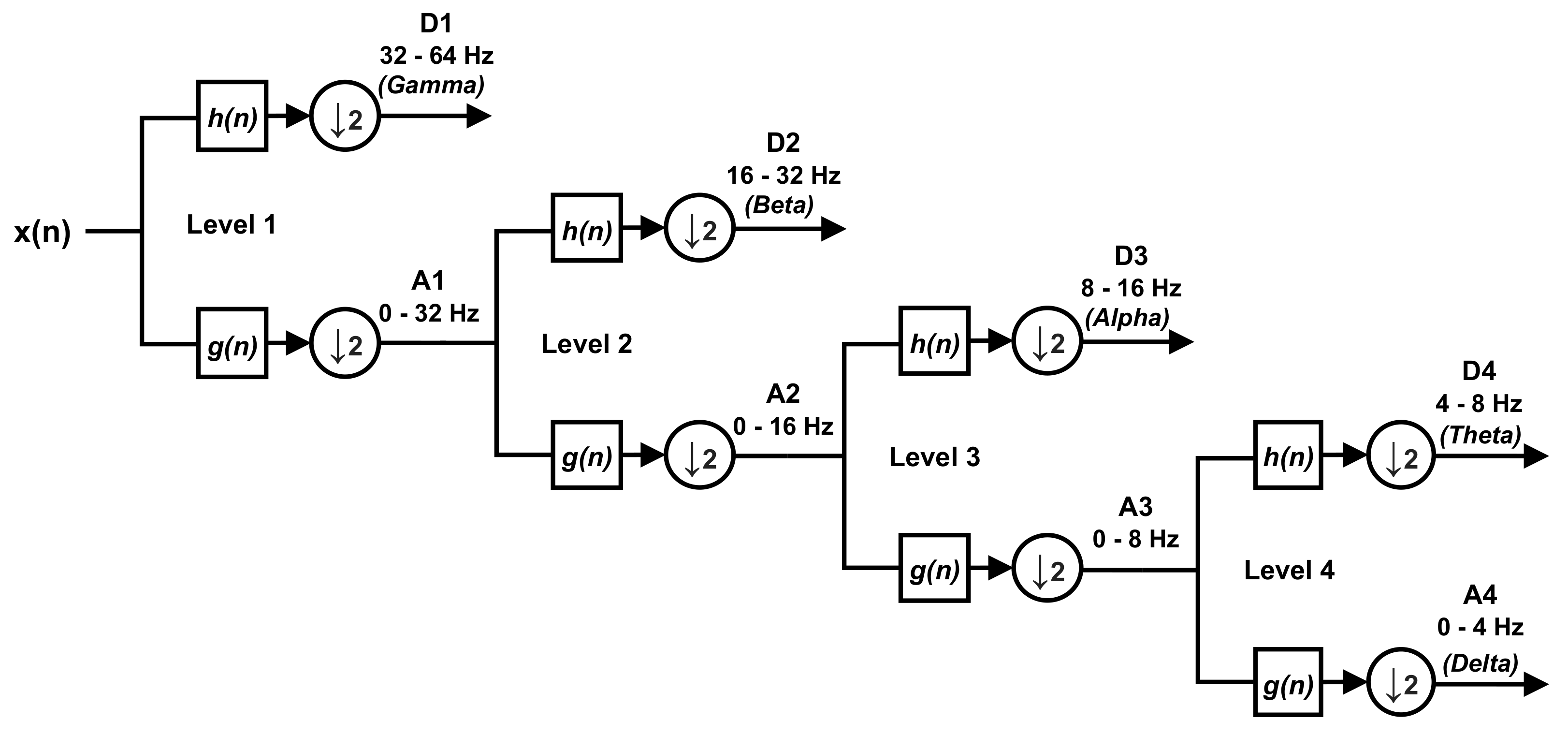

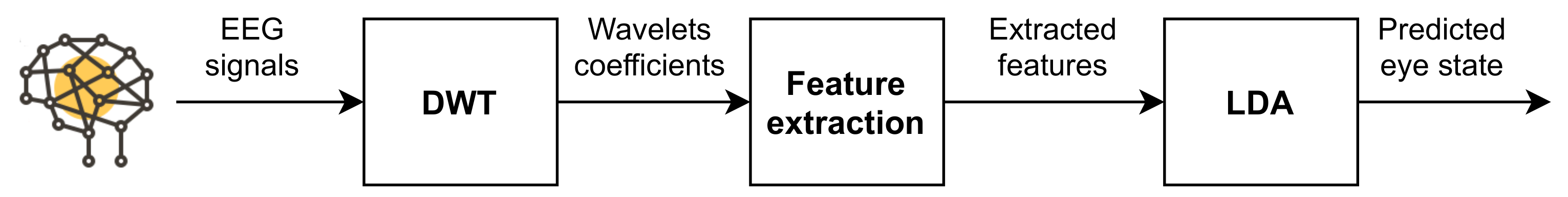

Although the aforementioned papers show methods to detect eye states with high accuracy, they usually gather brain activity using a large number of electrodes and bulky EEG devices, which might be cumbersome and uncomfortable in real-life applications. To avoid these limitations, we present an EEG-based system that employs a reduced number of electrodes to capture the brain signals. For this purpose, we extend our prototype presented in [16] to two input channels in order to build a multi-dimensional feature set that improves the detection rates and reduces the response time of the system. We study and compare two algorithms with low computational complexity for eye state detection. For feature extraction, we employ the Discrete Wavelet Transform (DWT), which has lower computational complexity than other widely known algorithms such as the Fast Fourier Transform (FFT) [30]. For feature classification, we apply Linear Discriminant Analysis (LDA), a popular technique in BCI systems that also has low computational requirements [23,31].
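To see why the DWT is cheap, note that each decomposition level filters the current approximation and keeps every second output, so the signal length halves at every level and the total work grows linearly with the number of samples, whereas the FFT needs O(N log N) operations. A minimal multilevel decomposition with the Haar wavelet (one of the mother wavelets evaluated in this work) is sketched below. The helper names are hypothetical, and using the energy of each sub-band as a feature is an illustrative assumption, not the feature definition used in this work.

```python
import math


def haar_step(x):
    """One Haar DWT level: returns (approximation, detail), each half as long as x.

    Assumes an even-length input; an odd trailing sample is dropped.
    """
    s = 1 / math.sqrt(2)
    approx = [(x[i] + x[i + 1]) * s for i in range(0, len(x) - 1, 2)]
    detail = [(x[i] - x[i + 1]) * s for i in range(0, len(x) - 1, 2)]
    return approx, detail


def haar_dwt(x, levels):
    """Multilevel Haar DWT, ordered coarsest first: [cA_L, cD_L, ..., cD_1]."""
    details = []
    approx = list(x)
    for _ in range(levels):
        approx, detail = haar_step(approx)  # only the approximation is decomposed again
        details.append(detail)
    return [approx] + details[::-1]


def subband_energies(coeffs):
    """Energy (sum of squared coefficients) of every sub-band."""
    return [sum(c * c for c in band) for band in coeffs]


x = [3.0, 1.0, 4.0, 1.0, 5.0, 9.0, 2.0, 6.0]
coeffs = haar_dwt(x, 3)
print([len(c) for c in coeffs])  # [1, 1, 2, 4]
```

Because the Haar filters are orthonormal, the sub-band energies add up to the energy of the input signal, which is a convenient sanity check for any implementation.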

The paper is organized as follows. Section 2 presents the theoretical background of the DWT and feature classifiers. Section 3 describes the proposed system. Section 4 defines the materials and methods employed in the experiments. Section 5 shows the obtained results. Finally, Section 6 analyzes these results and Section 7 presents the most relevant conclusions of this work.

4. Materials and Methods

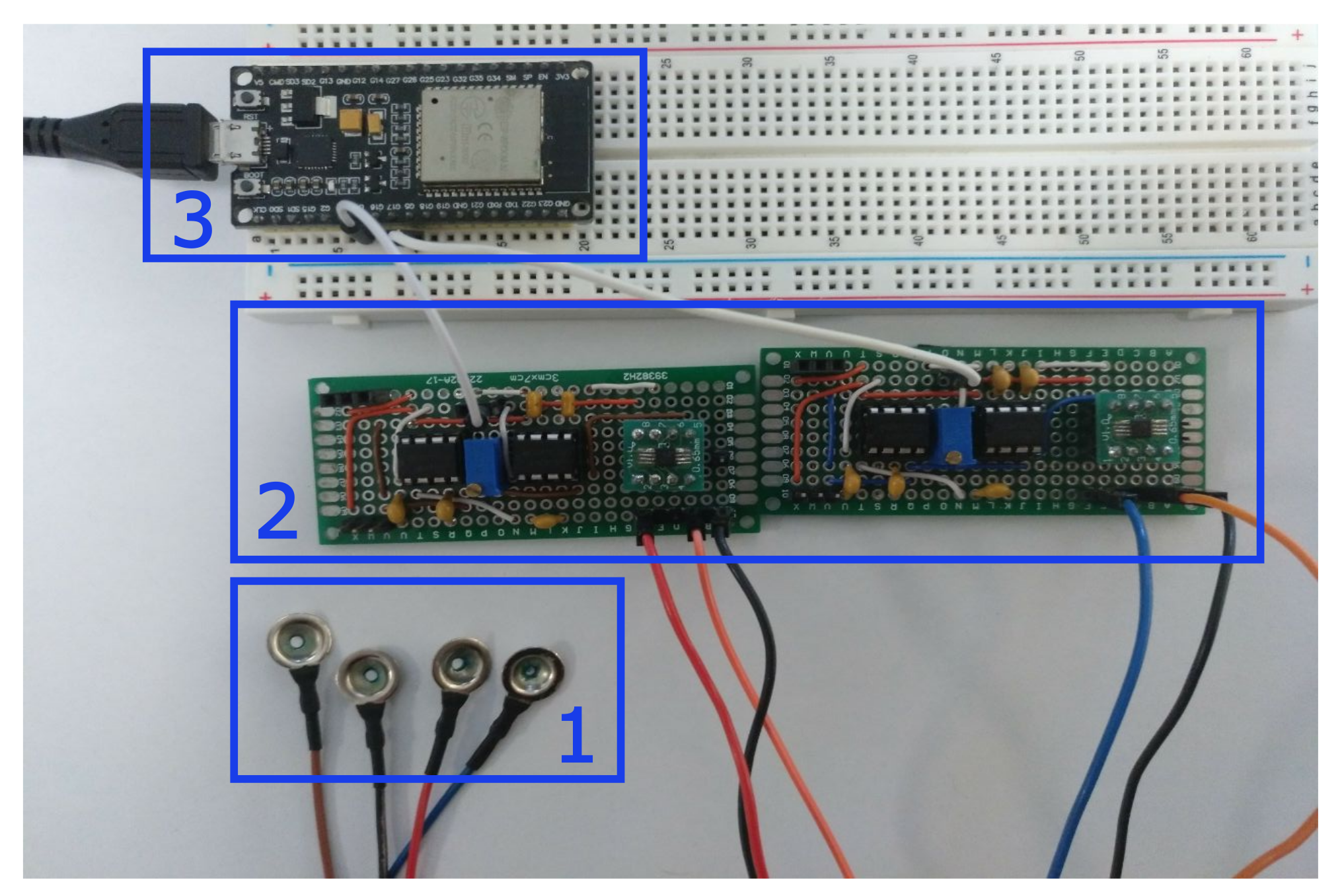

To evaluate the suitability of the proposed system, we carried out a series of experiments with a group of 7 volunteers who agreed to participate in the research, with an average age of (range 24–56). None of the participants reported hearing or visual impairments. Participation was voluntary, and informed consent was obtained from each participant to use their EEG data in our study.

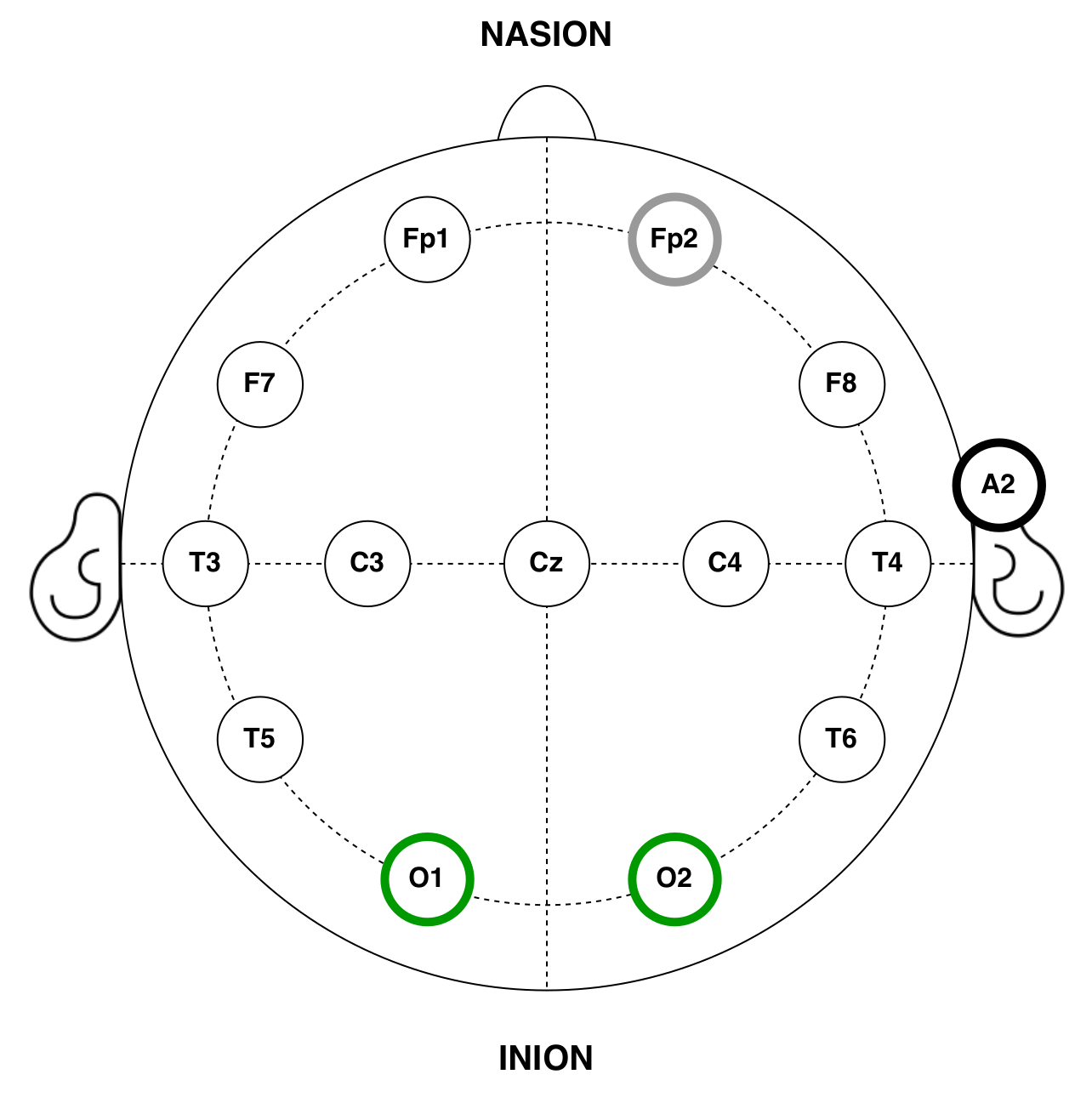

Our EEG prototype was used to capture the brain activity of the subjects. Gold cup electrodes were placed in accordance with the 10–20 international system for electrode placement [54] and attached to the subjects' scalps using a conductive paste. Electrode–skin impedances were below at all electrodes.

Several studies have shown that the alpha rhythm predominates in the occipital area of the brain when subjects keep their eyes closed, and that it is attenuated when visual stimulation takes place [55,56,57]. In accordance with these works, the input channels of the EEG device were located at the O1 and O2 positions. Moreover, to optimize setup time and EEG signal quality, the reference and ground electrodes were placed at the FP2 and A1 positions, respectively, where the absence of hair facilitates their placement [58] (see Figure 4).

All the experiments were conducted in a sound-attenuated and controlled environment. Participants were seated in a comfortable chair and asked to stay relaxed and focused on the task, avoiding any distraction or external stimulus. The experiments were composed of two types of task: open eyes (oE) and closed eyes (cE). To simulate a real-life situation, subjects could freely move their gaze during the oE tasks, without needing to keep it fixed on a point. The procedure was explained in advance so that the participants felt comfortable and familiar with the test environment. Moreover, possible artifacts were minimized by asking the participants not to speak, move or blink (or to do so as little as possible) throughout the oE tasks.

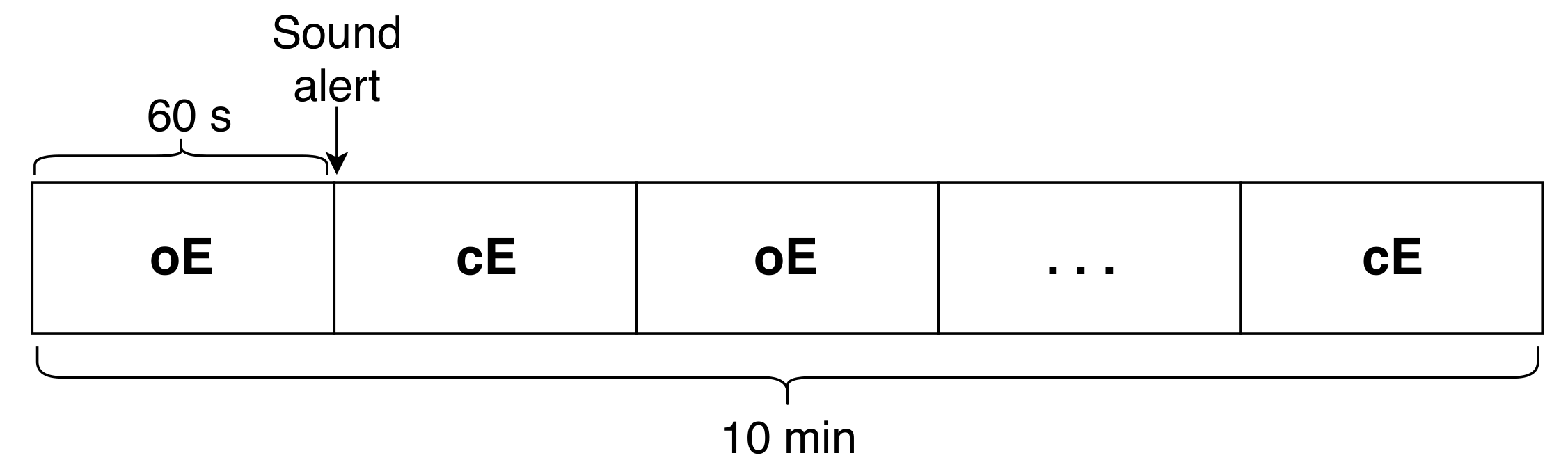

A total of 10 tasks (i.e., 10 min) were recorded continuously for each participant: 5 oE tasks and 5 cE tasks. Consecutive tasks were separated by a sound alert indicating to the user that they should change state. All the experiments started with oE as the initial state (see Figure 5). The captured signals were filtered between 4 and , and the mean of each signal was subtracted.

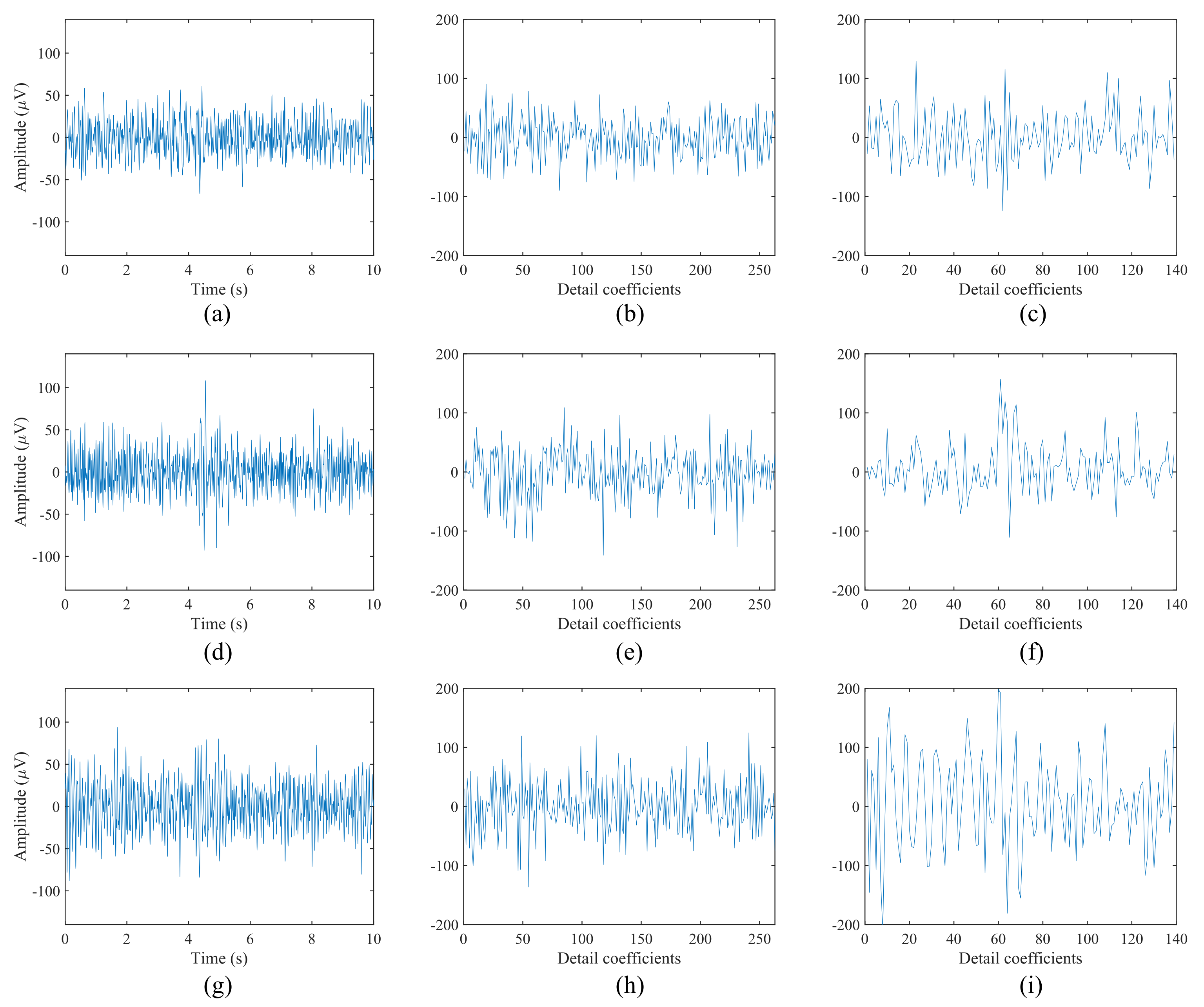

Since an essential goal of our study is to provide a reliable system with high accuracy rates, several types of wavelets already used in previous works on EEG analysis were evaluated and compared for feature extraction. In particular, nine wavelets were tested: db2, db4, db8, coif1, coif4, haar, sym2, sym4 and sym10.

Moreover, overlapping windows were used to extract the features. We considered time windows of D seconds overlapping by d seconds. Note that, with this technique, the response time of the system is directly related to D and d: the decision delay, i.e., the wait time for a new classifier decision, is (D − d) s. Hence, in order to find the shortest response time with a reliable accuracy rate, we evaluated our system using several window sizes, ranging from 1 to 10 s. The value of d was kept constant, relative to D, in all the experiments: of the size of D.
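With windows of D seconds overlapping by d seconds, consecutive windows start D − d seconds apart, so a new classifier decision is available every D − d seconds. The sketch below computes the window boundaries in samples; the 128 Hz sampling rate, the 50% overlap in the usage lines and the function names are hypothetical choices for illustration only.

```python
def decision_delay(D, d):
    """Seconds between consecutive classifier decisions for windows of D s overlapping by d s."""
    if not 0 <= d < D:
        raise ValueError("the overlap d must satisfy 0 <= d < D")
    return D - d


def window_bounds(n_samples, fs, D, d):
    """(start, stop) sample indices of every complete window of D s overlapping by d s."""
    win = int(round(D * fs))                      # window length in samples
    hop = int(round(decision_delay(D, d) * fs))   # step between window starts
    if hop == 0:
        raise ValueError("D - d is shorter than one sample")
    if n_samples < win:
        return []
    count = (n_samples - win) // hop + 1
    return [(i * hop, i * hop + win) for i in range(count)]


# Usage: 10 s of data at a hypothetical 128 Hz, D = 2 s, d = 1 s (illustrative 50% overlap).
print(decision_delay(2.0, 1.0))                 # 1.0
print(len(window_bounds(1280, 128, 2.0, 1.0)))  # 9
```

Working in integer sample indices rather than accumulating floating-point times avoids drifting window positions over long recordings.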

To avoid classification bias, a 5-fold cross-validation technique was applied for training and evaluating the classifier; that is, 80% of the data were used for training the algorithm and the remaining 20% were used for testing it. In our experiments, this means that 8 out of the 10 min (4 min for each eye state) were used to train the LDA classifier and the remaining 2 min (1 min for each eye state) were used to test it. This process was repeated 5 times, using each minute of each eye state once for testing the classifier. Therefore, the accuracy results shown throughout this work correspond to the average over all these executions with the different training and test sets.
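The minute-wise 5-fold scheme described above can be written as a simple fold generator. The sketch assumes each recorded minute is labeled by its eye state and an index from 0 to 4; the names `minute_folds`, `mean_accuracy` and `STATES` are hypothetical.

```python
STATES = ("oE", "cE")


def minute_folds(minutes_per_state=5):
    """Yield (train, test) lists of (state, minute) pairs.

    Fold k holds out minute k of each eye state for testing and trains on the rest,
    so every minute is used exactly once for testing across the folds.
    """
    for k in range(minutes_per_state):
        test = [(s, k) for s in STATES]
        train = [(s, m) for s in STATES for m in range(minutes_per_state) if m != k]
        yield train, test


def mean_accuracy(fold_accuracies):
    """Average the per-fold accuracies into a single reported figure."""
    return sum(fold_accuracies) / len(fold_accuracies)


for train, test in minute_folds():
    print(len(train), len(test), test)
```

Holding out whole minutes, rather than randomly chosen windows, keeps overlapping windows from the same minute out of both the training and test sets at once.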

6. Discussion

Several solutions have been proposed during recent decades for detecting the eye state from EEG activity [17,27,29]. However, these solutions usually capture the brain signals using large, bulky devices, which are cumbersome and uncomfortable for the final user. The main goal of the present study is to develop a new system for eye state identification based on an open EEG device that gathers brain activity using a reduced number of electrodes. For this purpose, the DWT and LDA were applied for feature extraction and feature classification, respectively.

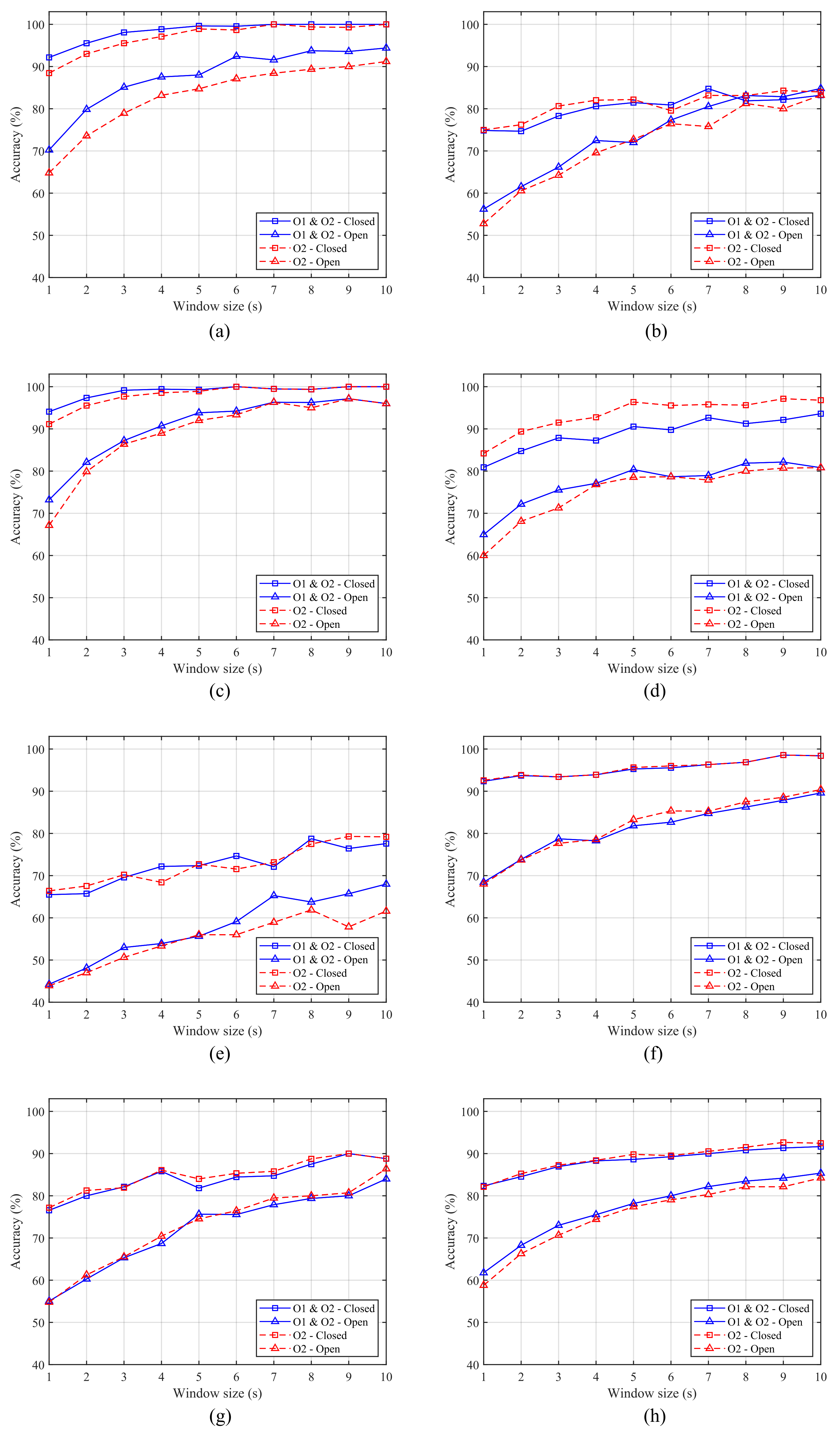

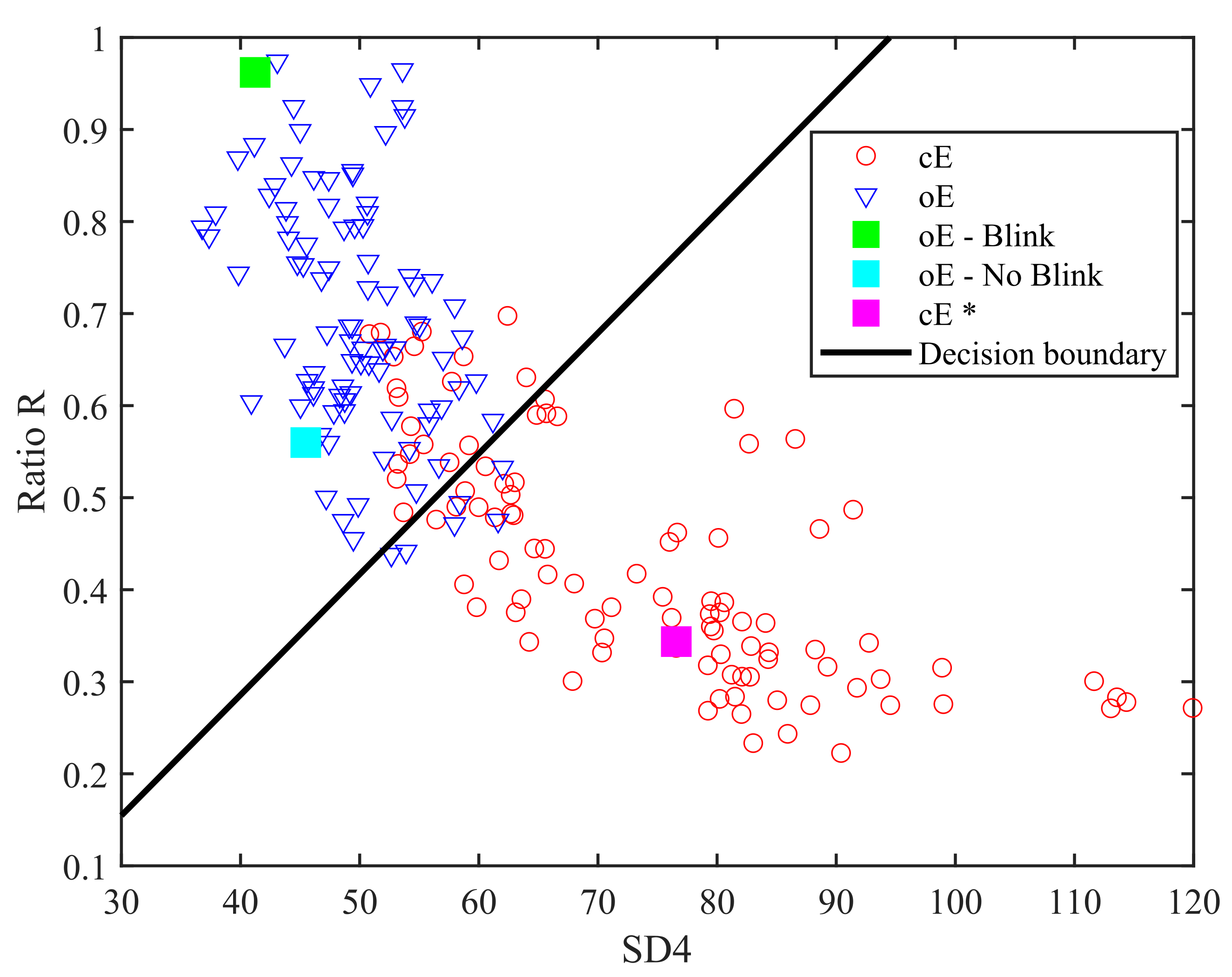

Furthermore, different feature schemes were compared in order to determine which of them offers the best classification accuracy and response time. From Table 2, Table 3, Table 4 and Table 5, we can see that Scheme 2, which considers two features ( and the ratio R), offers higher accuracies than Scheme 1, composed of a single feature, for all the mother wavelets (Table 2 and Table 4) and for six of the seven subjects (Table 3 and Table 5). This difference becomes more apparent for the oE case, especially when small window sizes are employed (see Figure 6 and Figure 9). Moreover, considering that an average accuracy greater than is required for both ocular states in the real implementation of the system, we can see from Figure 6h and Figure 9h that Scheme 2 achieves it at , while Scheme 1 needs .
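A two-class LDA on a two-dimensional feature vector, as in Scheme 2, reduces to a few closed-form operations, which is part of why it suits low-cost hardware. The sketch below is a generic Fisher LDA with equal class priors, not the exact implementation used in this work; the function names and the synthetic feature values in the usage lines are hypothetical.

```python
def _mean(rows):
    n = len(rows)
    return [sum(r[j] for r in rows) / n for j in range(2)]


def fit_lda(class0, class1):
    """Two-class Fisher LDA on 2-D features; returns (w, c) for the rule w.x > c -> class 1."""
    m0, m1 = _mean(class0), _mean(class1)
    # pooled within-class scatter matrix S_w = [[a, b], [b, e]]
    a = b = e = 0.0
    for rows, m in ((class0, m0), (class1, m1)):
        for x0, x1 in rows:
            u, v = x0 - m[0], x1 - m[1]
            a += u * u
            b += u * v
            e += v * v
    det = a * e - b * b
    if abs(det) < 1e-12:
        raise ValueError("within-class scatter matrix is singular")
    diff = (m1[0] - m0[0], m1[1] - m0[1])
    # w = S_w^{-1} (m1 - m0), using the closed-form inverse of a 2x2 matrix
    w = ((e * diff[0] - b * diff[1]) / det, (-b * diff[0] + a * diff[1]) / det)
    # equal-prior threshold: projection of the midpoint between the class means
    c = w[0] * (m0[0] + m1[0]) / 2 + w[1] * (m0[1] + m1[1]) / 2
    return w, c


def predict(w, c, x):
    """Return 1 if the projected feature vector lies beyond the threshold, else 0."""
    return 1 if w[0] * x[0] + w[1] * x[1] > c else 0


# Usage with synthetic 2-D feature vectors (class 0 = oE, class 1 = cE, illustrative only).
oE = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0)]
cE = [(3.0, 3.0), (4.0, 3.0), (3.0, 4.0), (4.0, 4.0)]
w, c = fit_lda(oE, cE)
print(w, c)  # (1.5, 1.5) 6.0
```

Once trained, classifying a new window costs only two multiplications, one addition and one comparison.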

Several wavelet functions have been proposed for the analysis of EEG signals, such as Haar, Daubechies (db2, db4 and db8), Coiflets (coif1, coif4) or Symlets (sym2, sym4, sym10). However, depending on the application or analysis, a particular wavelet family will perform more efficiently than the others [33,34,35,59]. Therefore, the selection of an appropriate mother wavelet is crucial for the correct performance of the system. Table 2 and Table 4 show the average results obtained for each wavelet type for both ocular states. For Scheme 1, with a single feature, there are remarkable differences between the wavelets. Furthermore, the results for cE are significantly higher than those obtained for oE. Conversely, for Scheme 2, the results obtained by the different wavelets are very similar and there are no large differences between oE and cE. Therefore, this second approach should be selected for the implementation of the system in a real scenario, since it offers more robust results.

The response time of the system is also a key aspect when developing real-time and online applications. Consequently, we tested our system with small window sizes, which yield short response times. Figure 6 and Figure 9 show the results for each subject and eye state using coif4 with a single feature and db8 with the two features, respectively. As previously mentioned, Scheme 2 offers higher accuracy and more robust results than Scheme 1, especially for the oE case and small window sizes. Moreover, similar results are achieved with one- and two-channel data in the case of Scheme 1. However, for Scheme 2, the two-channel data yield higher results for some subjects. This difference is more apparent for small windows (see Figure 9a,c,e).

Taking into account the filter lengths shown in Table 2, the number of operations needed to compute the db8 DWT used in Scheme 2 is considerably lower than that needed to compute the coif4 DWT used in Scheme 1.
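A rough operation count makes this comparison concrete. The sketch assumes the DWT is computed by direct convolution with downsampling and that Table 2 lists the standard decomposition filter lengths (2 for Haar, 2N for dbN and symN, 6N for coifN); boundary handling is ignored and the function name is hypothetical.

```python
# Standard decomposition filter lengths of the wavelets evaluated in this work.
FILTER_LENGTH = {
    "haar": 2, "db2": 4, "db4": 8, "db8": 16,
    "coif1": 6, "coif4": 24, "sym2": 4, "sym4": 8, "sym10": 20,
}


def dwt_multiplications(wavelet, n_samples, levels):
    """Approximate multiplications for a multilevel DWT computed by direct convolution.

    Each level runs the low-pass and high-pass filters (length L) and keeps every
    second output, i.e. about n/2 outputs per filter with L multiplications each.
    Boundary extension is ignored.
    """
    L = FILTER_LENGTH[wavelet]
    total = 0
    n = n_samples
    for _ in range(levels):
        total += 2 * (n // 2) * L
        n //= 2  # only the approximation is decomposed at the next level
    return total


print(dwt_multiplications("db8", 1024, 1))    # 16384
print(dwt_multiplications("coif4", 1024, 1))  # 24576
```

Under these assumptions, db8 needs two thirds of the multiplications of coif4 at every decomposition level, since the cost scales linearly with the filter length.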

We can conclude that Scheme 2, composed of the two features, is the most suitable option for implementing the system, since it offers the best performance in terms of accuracy and response time. There is no significant difference between using one or two sensors for large window sizes; however, we consider that using both channels could be more suitable for the system, since it improved the results of some subjects for small window sizes. Therefore, with this configuration of two input channels and two extracted features, an average accuracy of for cE and for oE was obtained for the shortest window size, , with five of the seven subjects above . Using a window size of , six of the seven subjects achieve an accuracy above in both ocular states and, with , those six subjects exceed accuracy in both eye states. The response time of the system is of D, and therefore it would be for , for and for . Thus, the system offers reliable classification accuracy with short response times, suitable for the implementation of non-critical applications.