1. Introduction

Service robots are becoming common in our everyday life in an increasing number of scenarios. They can perform tasks autonomously and cooperate with humans to guide people in airports [1], assist the elderly [2], help in the education of children [3], aid staff and patients in hospitals [4], and be of service in work environments in general [5]. An essential skill in these environments is the ability to perceive the surroundings and the aspects of relevance for operations in social contexts. To achieve this, it is not only necessary to accurately detect people but also to identify those objects essential to enable the coexistence and the interaction between robots and humans.

In indoor environments, recognizing objects like doors, chairs, and stairs (among others) can allow robots to better understand their environment and operate more efficiently with humans. For instance, detecting whether a room is closed or not, or whether a person is sitting in a room, is useful information that robots could use to react and adjust their ongoing plans accordingly. Moreover, if robots are to navigate in dynamic environments, object detection needs to be performed online and efficiently, so that the robots can react promptly to the presence of people or objects of interest. Ideally, we also want to avoid the need for extensive pre-training tasks.

Nowadays, object recognition is performed mostly through the use of high-resolution visual cameras. This raises issues related to human privacy, especially in public spaces, as service robots could perform face recognition or store/share recorded videos. However, alternative sensors like 3D LiDARs are becoming more and more common, extending the range of options for perception. Even though they are not yet widely used in indoor environments, characteristics like their Field of View (FoV) and detection distance make them a valuable option to perceive objects efficiently and reduce privacy concerns, as confirmed by recent market trends [6,7].

Table 1 compares the main characteristics of some typical sensors for perception in service robots, which we use in our experimentation.

As we will discuss in Section 2, there exist multiple methods for adding, on top of the geometric map, a semantic layer including detected objects of relevance for robot navigation. We take navigation of service robots as the reference task, as any other task is likely to be based on navigation. In particular, we consider navigation in a 2D plane, according to the nature of service robots operating indoors. In this context, there are 2D and 3D approaches using different types of sensors to build geometric maps. From these maps, classification algorithms can recognize specific objects and add a new layer with semantic information on top of the physical one, producing the so-called semantic maps. Semantic maps can then be used to refine robot behaviors, computing more efficient plans depending on the surrounding information. To the best of our knowledge, however, existing solutions for building semantic maps rely on rich information, as provided by visual cameras, and/or on high-end processing resources to apply complex classification algorithms.

In our previous work [13], we identified a set of effective techniques to process point-cloud data for the detection of people in motion. In particular, our goal was to exploit a set of specialized filters to process both high- and low-density depth information with constrained computing resources. This work extends our previous approach (Section 3) to also address static people as well as different objects of relevance (like doors and chairs) for navigation tasks in social contexts. In particular, we introduce (a) height, (b) angle, and (c) depth segmentation as a way to differentiate among objects depending on their physical properties. By analyzing the information along these directions, our approach offers a set of tools that can be combined in different ways to specify the unique features of the objects to be detected. In this way, generic pre-training tasks can be replaced by specific rules able to perform detection more efficiently in the operational environment. The extensive evaluation of our approach (Section 4), in comparison with established solutions, shows that we can offer similar or better detection accuracy and positioning precision with a reduced processing time.

As a result, the main contributions of this work are the following:

We propose different classes of segmentation methods to compute semantic maps with low-density point-clouds;

Our object detection methods achieve a performance similar to that of more complex approaches while using less processing time;

We present experimental results comparing the performance of our approach with different semantic mapping solutions for different sensor types as well as point-cloud densities.

In conclusion (Section 5), we show that semantic mapping for indoor robot navigation can be performed effectively even without high-end processing resources or high-density point-clouds. This lays the basis for privacy-preserving, low-end robotic platforms able to coexist and cooperate with humans in everyday indoor scenarios.

3. Semantic Mapping via Segmentation

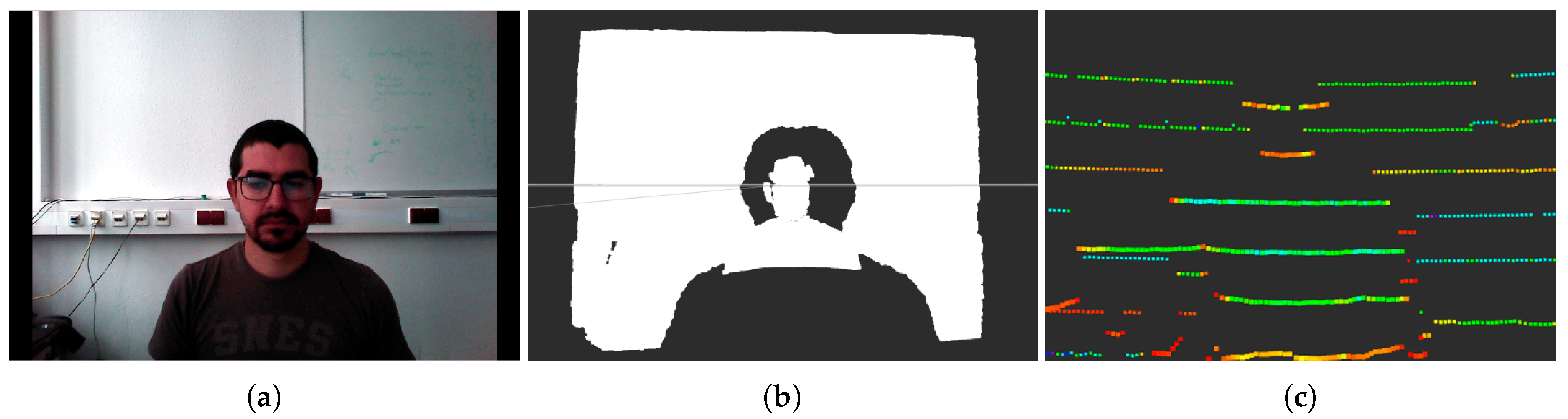

We now introduce our approach to solve the problem of creating semantic maps of relevance for the navigation of service ground robots in indoor environments. While RGB-D cameras allow one to exploit color images together with depth information to classify objects, we limit ourselves to depth information only and challenge ourselves further by looking at low-density point-clouds. Specifically, we are interested in studying how sensors with low resolution, e.g., 3D LiDARs, can be used to identify different objects in an indoor scenario. As reported in Table 1, state-of-the-art 3D LiDARs have a significantly lower density in comparison with RGB-D cameras within a similar FoV. However, by looking at how the point-cloud is perceived, we offer a way to recognize the physical structure of different objects. Therefore, we introduce several segmentation methods along which point-clouds can be processed and unique, distinctive features can be extracted. Through this approach, we avoid the complex processing tasks performed in classical deep learning approaches, which would still need to be trained for the specific objects to be detected. In addition, by applying these techniques to low-density point-clouds, our solution can offer higher privacy, as personal and private details are not visible to the sensor. Finally, by operating on point-clouds, our methods can be applied to 3D LiDARs as well as RGB-D cameras, or any other sensor providing the same type of measurement.

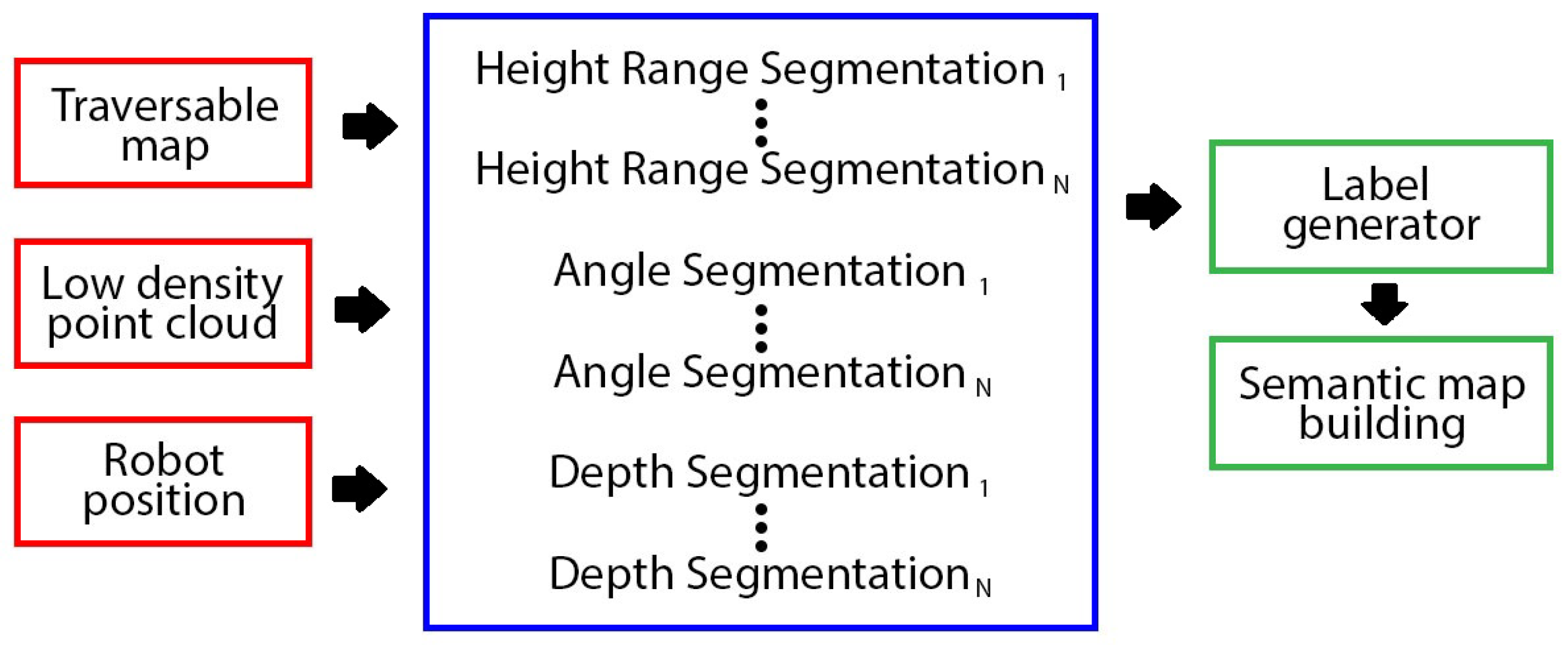

As depicted in Figure 2, we propose different types of segmentation methods to detect different objects, focusing on their physical characteristics. Several of these methods can then be executed in parallel with different configurations to enlarge the classes of objects to detect. For clarity of presentation, we introduce each segmentation method with its application to the detection of one specific object of interest in our indoor scenario. In particular, we are interested in detecting humans as well as objects like chairs and doors that could affect the ability of the robot to perform its service tasks, e.g., by navigating socially while respecting proxemics rules or by using the semantic information to search for humans or interact with them. In general, however, the same object can be detected by using different segmentation methods (alone or in combination) if properly configured.

In the remaining discussion, we assume that the information about the robot position is available together with a traversable map of the environment, like the one we computed in our previous work [22]. As mentioned before, the segmentation is performed on low-density point-clouds provided, e.g., by a 3D LiDAR. The output of our method is then a semantic map composed of the positions within the geometric map of all the objects detected, together with their labeling.

3.1. Height Segmentation

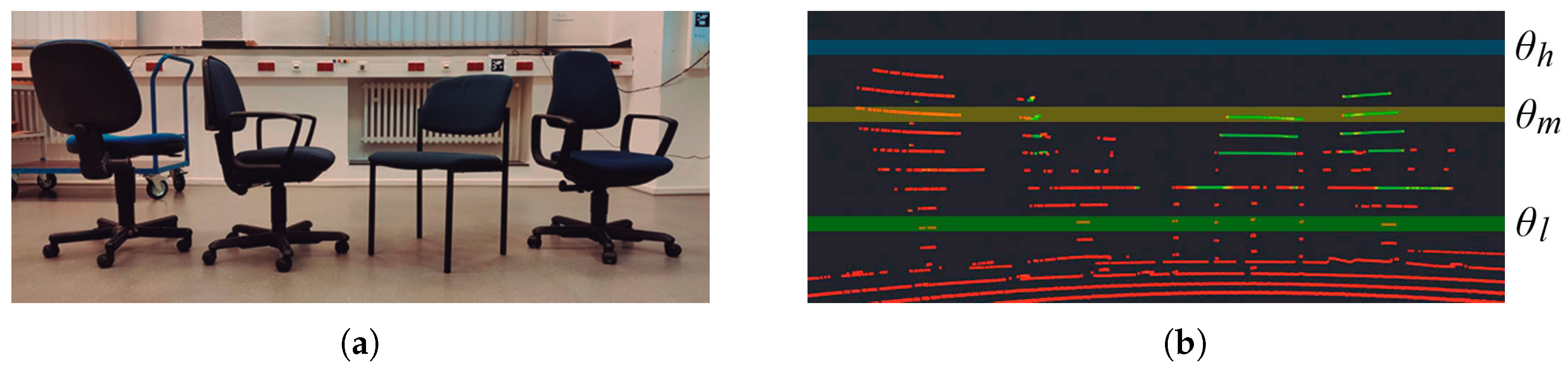

The first segmentation approach that we investigate focuses on the detection of objects that have peculiar characteristics at different heights. Low-density point-clouds typically provide information in layers, as depicted in Figure 3. For mechanical 3D LiDARs, the layers correspond to the array of rotating lasers, each tilted with a different angle. For this reason, the obstacles that are at a further distance from the sensor have bigger gaps between layers. Figure 3 shows how the number and position of useful points to detect a person drastically change with distance.

To implement such detection, it is necessary to define the various height ranges where the object of interest has distinctive features. If one or more perceived layers fall in such ranges and the measurements correspond to the physical structure of the target object, a match is found. This type of detection matches well with the perception of the human body, for instance. As represented in Figure 4, it is possible to identify two sectors of relevance: a height range with legs and one with the trunk of the human body. More specifically, denoting the height as h, we distinguish between a Lower Section (LS) and a Higher Section (HS), each defined by its own range of h. Nonetheless, our method is more general, and we could define more sectors with different height ranges to detect other objects with different physical characteristics.

This type of segmentation is a variation of the one proposed in our previous work [13] for the detection of moving people. The complete procedure is exemplified in Figure 5. Once the height ranges have been defined, all the points belonging to the different ranges are extracted and stored in different Height Range Clouds (HRC), and the remaining points are discarded. To obtain these clouds, it is also necessary to define how many points each should have, according to the horizontal angle resolution of the sensor. Taking into account that multiple layers could fall in the same range, among vertically aligned points, the nearest one is selected. This is done for the complete FoV at the given horizontal angle resolution. At the end of this step, one layer is constructed for each HRC, with one point per horizontal angle step, as shown in Figure 5c.
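The layer construction described above can be sketched as follows. This is only an illustrative sketch, not the authors' C++ implementation: the function name `height_range_layer`, its parameters, and the conventions that points are `(x, y, z)` tuples with `z` measured from the floor and the sensor at the XY origin are all assumptions made for the example.

```python
import math

def height_range_layer(points, h_min, h_max, angle_res_deg):
    """Collapse the points with h_min <= z <= h_max into a single layer,
    keeping only the nearest point for each horizontal angle step.

    All names and conventions here are hypothetical (see lead-in)."""
    n_bins = int(round(360.0 / angle_res_deg))
    layer = [None] * n_bins  # one slot per horizontal angle step
    for x, y, z in points:
        if not (h_min <= z <= h_max):
            continue  # points outside the height range are discarded
        azimuth = math.degrees(math.atan2(y, x)) % 360.0
        idx = int(azimuth / angle_res_deg) % n_bins
        dist = math.hypot(x, y)  # distance on the XY plane
        if layer[idx] is None or dist < layer[idx][0]:
            layer[idx] = (dist, x, y)  # keep the nearest aligned point
    # Return the (x, y) points ordered by increasing horizontal angle.
    return [(cell[1], cell[2]) for cell in layer if cell is not None]

# Two vertically aligned points at azimuth 0: only the nearest survives.
cloud = [(1.0, 0.0, 0.3), (2.0, 0.0, 0.5), (0.0, 1.0, 0.4), (1.0, 0.0, 1.5)]
layer = height_range_layer(cloud, h_min=0.2, h_max=0.6, angle_res_deg=1.0)
```

Calling the function once per height range would yield one such layer per HRC, ready for the clustering step.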

Within the layer of each HRC, clusters of points with a maximal Euclidean distance between them are identified. Points in the point-cloud are visited in increasing angle, and the Euclidean distance (on the XY plane) between two consecutive points is measured. If it is greater than this maximal distance, a new cluster is created and the previous one is completed. Once this process is finished, the width of each cluster is compared to a set of reference widths for the parts of the object to be detected according to the different sections. In this particular case, a lower section minimum width, a lower section maximum width, a higher section minimum width, and a higher section maximum width are defined. If the width of a cluster is outside these ranges, all its points are deleted from the HRC. For the remaining clusters, only the information about their centroids is preserved, as shown in Figure 5d.
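A minimal sketch of this clustering and width filtering is given below. The names `cluster_layer`, `filter_centroids`, `max_gap`, `min_width`, and `max_width` are hypothetical, and measuring the cluster width as the distance between its first and last point is an assumption of this example, since the text does not define the width computation. Wrap-around between the last and the first angle of a 360° scan is not handled.

```python
import math

def cluster_layer(layer, max_gap):
    """Split an angle-ordered list of (x, y) points into clusters.

    A new cluster starts whenever two consecutive points are farther
    apart (on the XY plane) than max_gap."""
    clusters = []
    for p in layer:
        if clusters and math.dist(clusters[-1][-1], p) <= max_gap:
            clusters[-1].append(p)
        else:
            clusters.append([p])
    return clusters

def filter_centroids(clusters, min_width, max_width):
    """Drop clusters whose width is outside [min_width, max_width] and
    return the centroids of the remaining ones."""
    centroids = []
    for c in clusters:
        width = math.dist(c[0], c[-1])  # assumed width definition
        if min_width <= width <= max_width:
            cx = sum(p[0] for p in c) / len(c)
            cy = sum(p[1] for p in c) / len(c)
            centroids.append((cx, cy))
    return centroids

layer = [(1.0, 0.0), (1.0, 0.1), (1.0, 0.2), (1.0, 1.0), (1.0, 1.05)]
clusters = cluster_layer(layer, max_gap=0.15)
centroids = filter_centroids(clusters, min_width=0.1, max_width=0.3)
```

In this example the second cluster is only about 5 cm wide, so it is discarded and a single centroid remains.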

Finally, the centroids from clusters in the two different HRC are compared with each other, looking for a match. In the case of people detection, only the single points (i.e., a trunk) in the HS with a corresponding pair of points (i.e., legs) in the LS are preserved. These points are then labeled as the possible position of a detected person. However, service robots in indoor environments are exposed to many different objects, some of which could be falsely classified according to the presented scheme. To increase accuracy, we make use of traversable maps of the scenario to filter out objects placed in unlikely positions.
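The matching step can be illustrated with the short sketch below. It assumes that a trunk centroid and a leg centroid correspond when they lie within a horizontal distance `max_offset` of each other; this criterion, the function name `match_people`, and the choice of accepting two or more nearby leg centroids are assumptions of the example, as the text does not detail the matching rule. The traversable-map filter is not included.

```python
import math

def match_people(trunks, legs, max_offset):
    """Keep trunk centroids (higher section) that have at least a pair
    of leg centroids (lower section) within max_offset on the XY plane.

    The distance-based criterion is an assumption (see lead-in)."""
    people = []
    for t in trunks:
        nearby_legs = [l for l in legs if math.dist(t, l) <= max_offset]
        if len(nearby_legs) >= 2:
            people.append(t)
    return people

trunks = [(1.0, 0.0), (5.0, 5.0)]
legs = [(1.0, -0.1), (1.0, 0.1), (5.0, 5.0)]
people = match_people(trunks, legs, max_offset=0.3)
```

Here only the first trunk is kept, because the second one is supported by a single lower-section cluster (for instance, a pole or a table leg).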

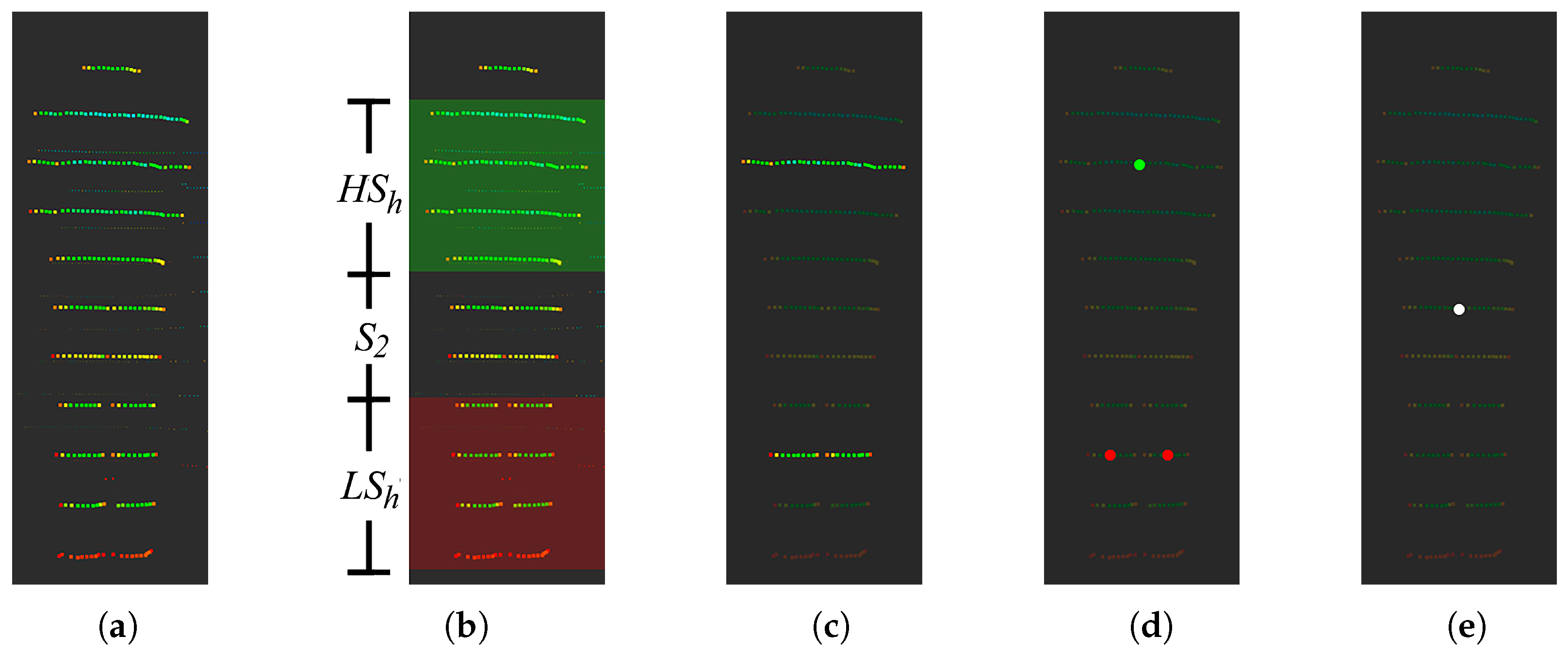

3.2. Angle Segmentation

We now introduce a second, alternative approach for object detection that is no longer based on the absolute height of the measurements but rather on their relative position with respect to the robot. In particular, we look at the vertical angle of the observed points, searching for layers at certain reference angles. An example of the application of the method is shown in Figure 6. In this example, three reference angles are used for the detection of a chair: a low angle to detect the legs of the chair; a medium angle where the back of a chair can be found; and a high angle to determine the height of the object. This last angle marks the biggest difference with respect to the previous segmentation method. In fact, by estimating the height of the objects, it is possible to discard those objects that may have similar structures to the desired one and produce false positives. The definition of these angles includes a vertical tolerance, which allows us to consider points within a range of angles. In the case of the VLP-16, which has a vertical resolution of 2°, the tolerance value might permit only one layer. However, for an Astra camera, more layers might be accepted since its vertical resolution is approximately 0.1°. If multiple layers are selected, it is then necessary to extract the nearest point for each horizontal angle to build individual layers as presented in Section 3.1. The point layers at the two lower reference angles are then processed in terms of clustering and matching, as explained in Section 3.1. To make this approach possible, a reference distance (D) needs to be defined between the sensor and the object to be detected. This distance determines the adequate values of the reference angles that are representative of the object of interest. For this angle segmentation, we only process objects observed at this reference distance (within a tolerance interval) and discard the others, as closer or farther measurements may present different physical proportions to those in our configuration. Nonetheless, our method could consider a dynamic reference distance D and adapt the reference angles accordingly.
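The relation between the reference distance D and the reference angles can be sketched with basic trigonometry. The helpers below are hypothetical: `reference_angle` derives the vertical angle at which a feature at a given height is seen from distance D, and `select_angle_layer` keeps the points close to that angle and to that distance. Points are assumed to be `(x, y, z)` tuples in the sensor frame (sensor at the origin, `z` relative to the sensor), and all parameter names are assumptions of this example.

```python
import math

def reference_angle(target_height, sensor_height, ref_dist):
    """Vertical angle (degrees) at which a feature located at
    target_height is observed from a horizontal distance ref_dist."""
    return math.degrees(math.atan2(target_height - sensor_height, ref_dist))

def select_angle_layer(points, ref_angle_deg, tol_deg, ref_dist, dist_tol):
    """Keep the points observed near the reference distance whose
    vertical angle lies within ref_angle_deg +/- tol_deg."""
    selected = []
    for x, y, z in points:
        rng = math.hypot(x, y)  # horizontal distance to the sensor
        if abs(rng - ref_dist) > dist_tol:
            continue  # closer or farther objects are discarded
        elevation = math.degrees(math.atan2(z, rng))
        if abs(elevation - ref_angle_deg) <= tol_deg:
            selected.append((x, y, z))
    return selected

# Sensor mounted 0.4 m above the floor, object expected 2 m away.
low = reference_angle(target_height=0.1, sensor_height=0.4, ref_dist=2.0)
cloud = [(2.0, 0.0, 0.0), (2.0, 0.0, 0.5), (4.0, 0.0, 0.0)]
layer = select_angle_layer(cloud, ref_angle_deg=0.0, tol_deg=1.0,
                           ref_dist=2.0, dist_tol=0.5)
```

With a dynamic reference distance, `reference_angle` would simply be re-evaluated for each new value of D.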

Differently from the procedure discussed in Section 3.1, there is no need to extract the nearest points for each reference angle when only one layer is observed (in the height segmentation, multiple layers had to be merged together at each section). However, it is still necessary to define a lower angle minimum width, a lower angle maximum width, a medium angle minimum width, and a medium angle maximum width. They represent the minimum and maximum allowed widths for the object clusters to be detected within the layers at the low and medium reference angles, respectively. Thus, the clusters extracted from the two lowest reference angles are filtered according to these parameters, and their centroids are computed.

In this angle segmentation, the highest reference angle is used to check the height of the object. Depending on the type of object to detect and the value of the reference distance D, the high angle is selected so that it falls within the FoV of the sensor and above the typical height of the objects of that class. In conclusion, the final object detection is confirmed by matching centroids from clusters in the two lowest reference angles (low and medium), and checking that the object height (i.e., the top layer detected by the sensor) is below the high reference angle. Figure 7 depicts an example of sensing different models of chairs and the different segmentation angles.
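The confirmation step can be sketched as follows, under several assumptions that are not in the text: centroids at the low and medium angles are matched by horizontal proximity (`max_offset`), the points belonging to a candidate are those within the same horizontal distance of its medium-angle centroid, and coordinates are expressed in the sensor frame. The function name `detect_chairs` is hypothetical.

```python
import math

def detect_chairs(low_centroids, mid_centroids, points, high_angle_deg, max_offset):
    """Confirm a candidate when a medium-angle centroid matches a
    low-angle centroid and the highest point observed around it stays
    below the high reference angle."""
    detections = []
    for m in mid_centroids:
        if not any(math.dist(m, l) <= max_offset for l in low_centroids):
            continue  # no legs found under this candidate back
        # Highest vertical angle among the points around the candidate.
        top = max(
            (math.degrees(math.atan2(z, math.hypot(x, y)))
             for x, y, z in points if math.dist((x, y), m) <= max_offset),
            default=None,
        )
        if top is not None and top < high_angle_deg:
            detections.append(m)
    return detections

low_c = [(2.0, 0.0)]
mid_c = [(2.0, 0.05)]
chair = [(2.0, 0.0, -0.3), (2.0, 0.05, 0.1), (2.0, 0.0, 0.4)]
tall = chair + [(2.0, 0.0, 1.0)]  # e.g., a taller object with a similar base
found = detect_chairs(low_c, mid_c, chair, high_angle_deg=15.0, max_offset=0.3)
rejected = detect_chairs(low_c, mid_c, tall, high_angle_deg=15.0, max_offset=0.3)
```

The second call returns no detection because the object extends above the high reference angle, which is how structures similar to a chair but taller are discarded.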

In the case of chairs, applying angle segmentation for detection can also be exploited for detecting sitting people. In particular, after having detected a chair and estimated its position, it is possible to modify the algorithm to detect changes in the height of an already detected chair by looking at the highest layer detected by the sensor. An increase in height could be associated with the presence of a person sitting on the chair.

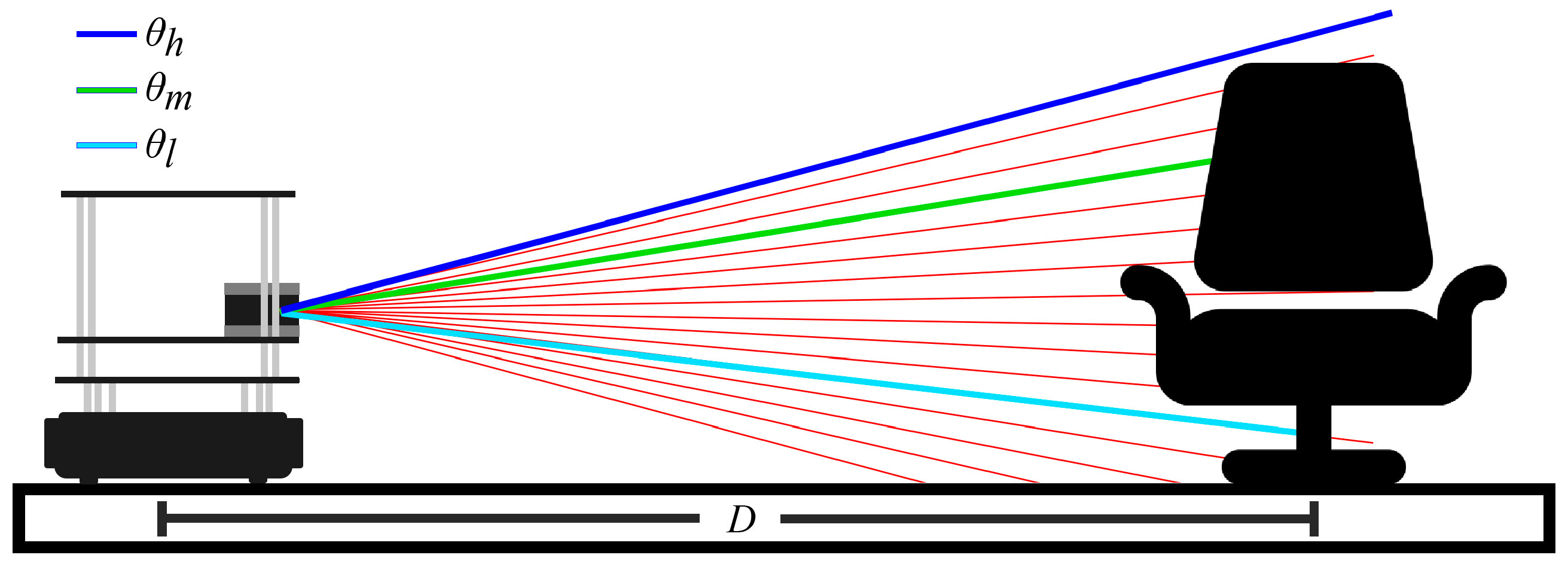

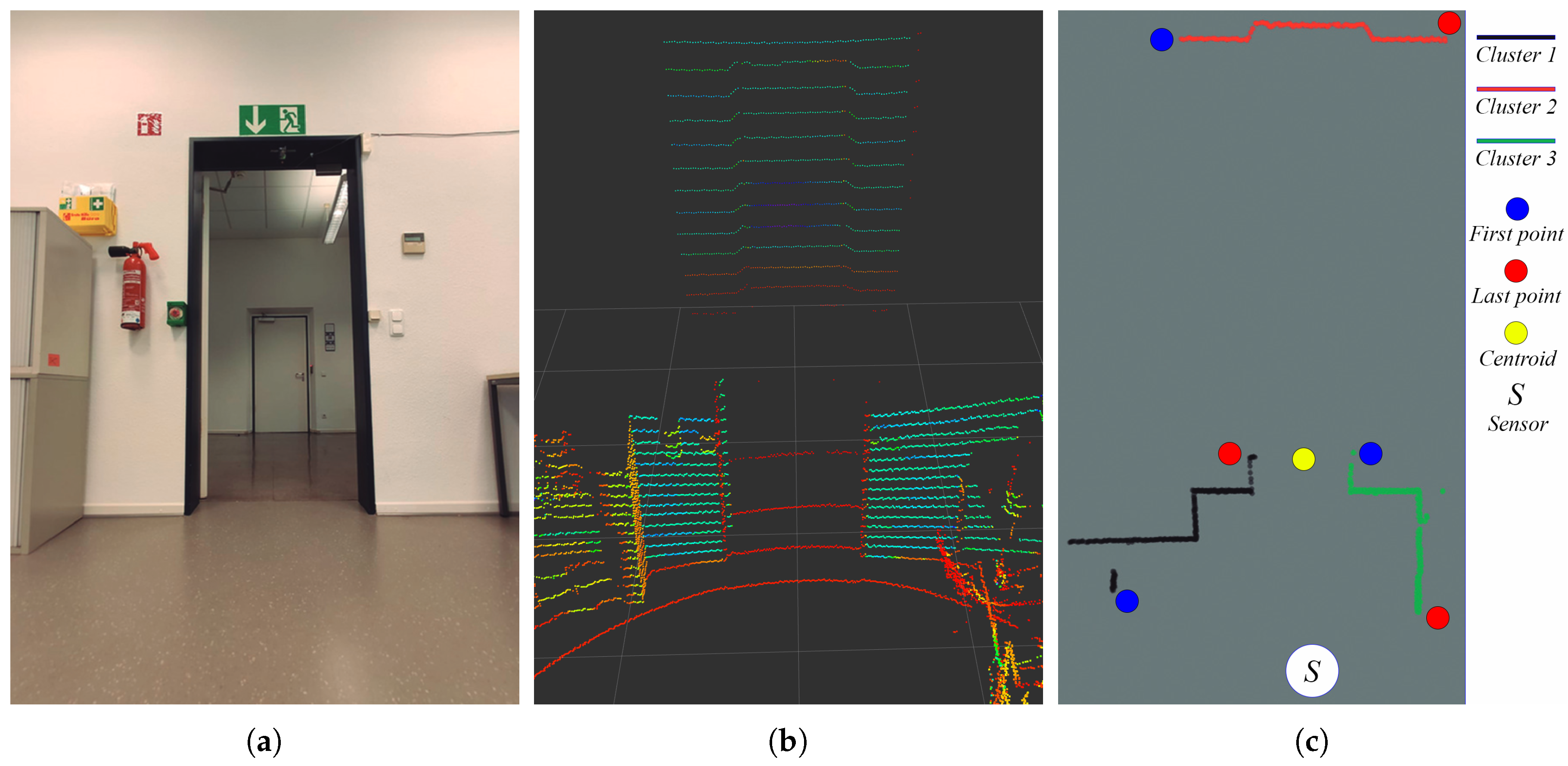

3.3. Depth Segmentation

The methods discussed until now focus on features that change along the vertical dimension of the object. For this reason, the key was to distinguish among different vertical layers, either in specific ranges (for height segmentation) or individually (for angle segmentation). We now look into objects that maintain the same structure throughout their vertical extension but that manifest a characteristic depth profile. In particular, we are interested in identifying objects like doors or cabinets.

For the sake of simplicity, we focus here on the detection of doors, but the method may apply to other objects with similar characteristics. In this case, it is possible to identify the reference top and bottom heights of a door, and points above or below them (i.e., reflected on the floor) can be discarded. All the layers in between can then be merged, throwing away the height information and preserving only the nearest points for each horizontal angle step, as done in the height segmentation. Again, clusters of consecutive points with a distance smaller than a maximal clustering distance are computed. We visit all points ordered by horizontal angle and calculate the Euclidean distance (on the XY plane) to the next point. If this distance is greater than the maximal clustering distance, the cluster is closed and a new one is opened. In the case of a door, for instance, the value of this maximal distance needs to be chosen so that it is smaller than the width of the door frame. Otherwise, all significant points would be fused into the same cluster, making the definition of the object more complex.

An example of this segmentation method for the detection of doors is presented in Figure 8. First, the point-cloud is sectioned in clusters and the distance between each pair of clusters is computed. This distance is the Euclidean distance on the XY plane, from the last point of one cluster (red circles in Figure 8c) to the first point of the next cluster (blue circles in Figure 8c). If this distance is within the tolerance interval defined by a minimum object width and a maximum object width, there is a match, and the middle point between the corresponding clusters is computed (yellow circle in Figure 8c) and labeled as the position of the detected object.
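The gap search between consecutive clusters can be sketched as below. The function name `detect_openings` and its parameters are hypothetical; clusters are assumed to be lists of `(x, y)` points already ordered by horizontal angle, as produced by the clustering step.

```python
import math

def detect_openings(clusters, min_width, max_width):
    """Return the middle point of every gap between consecutive
    clusters whose width lies in [min_width, max_width]."""
    detections = []
    for prev, nxt in zip(clusters, clusters[1:]):
        last, first = prev[-1], nxt[0]  # end of one cluster, start of next
        gap = math.dist(last, first)    # Euclidean distance on the XY plane
        if min_width <= gap <= max_width:
            detections.append(((last[0] + first[0]) / 2.0,
                               (last[1] + first[1]) / 2.0))
    return detections

# A wall 2 m ahead with a 0.95 m gap, followed by a small 0.2 m gap.
clusters = [
    [(2.0, -1.0), (2.0, -0.5)],
    [(2.0, 0.45), (2.0, 1.0)],
    [(2.0, 1.2)],
]
doors = detect_openings(clusters, min_width=0.85, max_width=1.05)
```

Only the first gap falls within the tolerance interval, so a single position is labeled, located at the middle of the gap.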

4. Results and Discussion

We now evaluate the performance of our approach for semantic mapping. First, we analyze the processing time from the delivery of the sensor readings to the estimation of the objects' positions. Furthermore, we assess the detection accuracy in terms of correct and false identifications. Finally, we quantify the precision of the position estimation in comparison to the real placement of the objects in the environment.

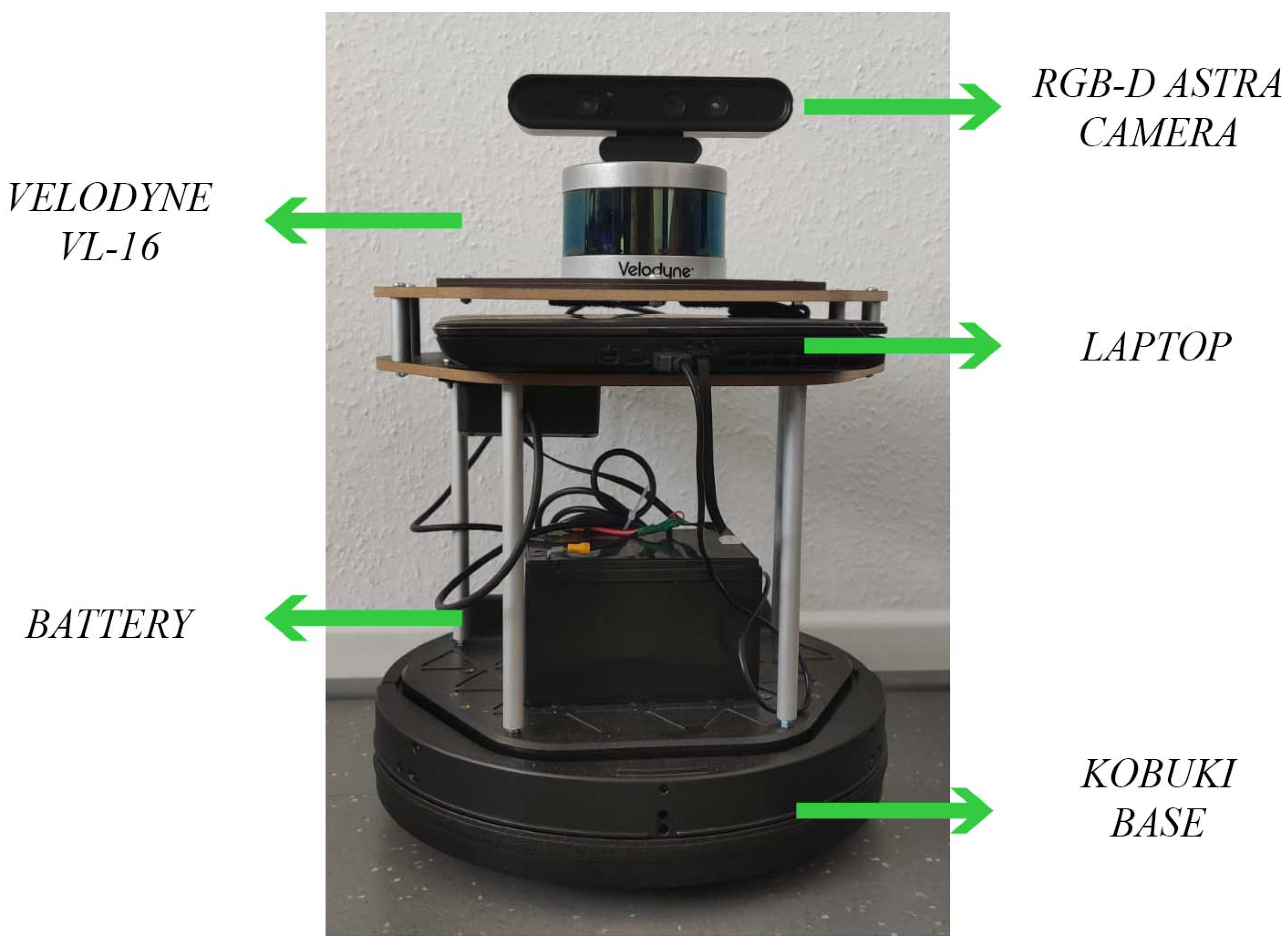

We implemented the segmentation methods for semantic mapping introduced in Section 3 in C++ and integrated them into ROS Kinetic Kame. We tested the algorithm on a machine with an i7@2.2 GHz processor and 16 GB of RAM to which we connected a Velodyne VLP-16 3D LiDAR. We focus the experimentation on the VLP-16, but our method would work with any sensor providing point-clouds, like a solid-state LiDAR (e.g., the CE30 with the SDK for ROS [36]) or an RGB-D camera (e.g., the Astra camera through the package ros_astra_camera [37]). Actually, by using the VLP-16 we put ourselves in the condition of having a significantly lower point density than what should be expected from other technologies (see Table 1). Moreover, we avoid privacy issues related to visual cameras. The sensor was located 40 cm above the floor on a Turtlebot2. On top of the LiDAR, we also placed an RGB-D Astra camera that we used to compare against alternative solutions. Figure 9 shows the hardware platform used for the experiments.

The experiments were performed in the indoor environment depicted in Figure 10 with an approximate area of 450 square meters. The scenario is divided into multiple rooms and corridors where heterogeneous objects typical of office environments were placed. The movements of the robot were controlled remotely via teleoperation, as we were mainly interested in the mapping part. In each test, the robot was driven through all the rooms, which took between 20 and 25 minutes to complete. The segmentation methods previously discussed were configured to detect people (height segmentation), chairs (angle segmentation), and doors (depth segmentation). The parameters used in the experimentation are presented in Table 2. For these experiments, we used a traversable map of the environment that accounts for the height of the robot, as built by existing libraries [22].

We compare our technique against alternative approaches. For people detection, we employed the ELD algorithm [17], which performs the detection of legs through readings obtained by a 2D LiDAR. We also tested the ABT package [23], which can detect human skeletons with an RGB-D camera, i.e., the Astra camera we installed on our robot. Lastly, we also compare against our previous approach, PFF_PeD [13]. Considering that the Velodyne driver for ROS can provide both 2D and 3D measurements, we used the same sensor to test the segmentation methods presented in this work as well as the ELD algorithm. As one of our goals is to preserve the privacy of people moving in the environment, we refrained from comparing our solution against approaches like face recognition.

For object detection, instead, we compare our approach against the Find Object (FO) package [38], which exploits information from existing datasets as well as images from the scenario. Furthermore, we tested the semantic_slam package (SS) [39], which uses a CNN and an RGB-D camera to perform the detection based on color and depth information. This approach, together with the ORB-SLAM2 and Octomap packages, can build a full 3D semantic map. In our evaluation, we discarded solutions like Semantic Fusion [24] that explicitly require a powerful GPU to perform the computation.

At first, we trained the FO package with the dataset provided by Mathieu Aubry [40], which contains a large number of models of chairs, but without success in the detection. Therefore, we trained this approach locally, adding images of the scene for each object from different points of view. In total, we provided 50 images for each design of chair present in the environment, 50 images for the detection of people, and 20 for the detection of doors. Instead, the SS solution was trained with the ADE20K dataset [41].

4.1. Processing Time

To quantify the performance of our solution, we first analyze the processing speed of the different segmentation methods. We define processing time as the time elapsed from the moment the algorithm receives a new point-cloud from the sensor until the detector output is produced. The results presented in this section are the average time required by the different algorithms to process 1000 samples (point-clouds) provided by the sensor in use. The results for the processing time are presented in Table 3.
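A measurement of this kind could be set up as in the sketch below, which averages the wall-clock time of a detector over a list of samples. It is only an illustration of the metric: the function name `mean_processing_time_ms` and the stand-in detector are hypothetical, and the actual measurements in this work were taken on the C++/ROS implementation.

```python
import time

def mean_processing_time_ms(process, samples):
    """Average time (in milliseconds) that process() needs per sample,
    measured from receiving a sample until its output is returned."""
    total = 0.0
    for sample in samples:
        start = time.perf_counter()
        process(sample)
        total += time.perf_counter() - start
    return 1000.0 * total / len(samples)

# Stand-in detector and synthetic samples, for illustration only.
samples = [[(1.0, 0.0, 0.3)] * 100 for _ in range(1000)]
avg_ms = mean_processing_time_ms(lambda cloud: len(cloud), samples)
```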

Considering that the algorithms based on CNN can distinguish between multiple classes of objects simultaneously, we also ran our solution according to the scheme presented in Figure 2, i.e., we executed the different segmentation methods in parallel to detect multiple object types simultaneously. In this case, our algorithm takes an average of 21.22 ms to process a point-cloud for object detection. This approach is simpler than our previous PFF_PeD work, which required two further steps to detect moving persons. As a result, the processing time decreases by approximately 6 ms. Apart from this increase in detection speed, we are now able to identify both moving and stationary persons. In contrast, the pure 2D solution detecting legs (ELD) is 4 times slower than our approach.

The Find Object (FO) solution takes an average of 29.65 ms to detect any of the objects we are interested in identifying. In this case, the processing time depends on the number of images that the algorithm uses for feature extraction (FO uses a database with multiple images for comparison to process each sensor sample). In our experiments, we used 170 images for processing each sensor sample; if the number of images increases, the processing time increases as well. For SS, instead, the dataset employed provides a large number of labels to distinguish a larger set of objects. However, this type of solution based on CNN has a high computation cost and is usually executed on machines with high-performance GPUs. For our scenario and our reference hardware platform, this resulted in 325.75 ms.

In conclusion, the results show that our proposed segmentation methods can significantly decrease the processing time, without imposing any strict hardware requirement. Moreover, the comparable approaches, which can distinguish among different objects, are based on the use of cameras, which offer a limited FoV (60° for the Astra camera in comparison to 360° for the Velodyne 3D LiDAR). They also require high-density point-clouds, which our reference 3D LiDAR cannot provide. Nonetheless, the lower density of the point-clouds exploited in our approach does not significantly affect the detection accuracy, as we discuss next.

4.2. Detection Accuracy

We also evaluated the detection accuracy of our method with the same experimental setup. As a metric, we use the True Positive Rate, which is calculated by dividing the number of true positive detections (objects correctly detected) by the number of samples. Table 4 shows the results obtained with several approaches, using 1000 samples (frames or point-clouds) for each case. The results are separated depending on the type of object to detect. It is also important to highlight that, for approaches based on cameras, unlike with LiDARs, illumination conditions may significantly affect the results. Therefore, we selected favorable illumination conditions to run the experiments and compare them fairly.
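The metric, as defined in this section, reduces to a single ratio. The sketch below follows that definition (true positives divided by the number of samples); the function name and the example counts are hypothetical and are not taken from Table 4.

```python
def true_positive_rate(true_positives, samples):
    """True Positive Rate as defined in this section: correctly
    detected objects divided by the number of samples."""
    if samples <= 0:
        raise ValueError("the number of samples must be positive")
    return true_positives / samples

# Hypothetical counts: 900 correct detections over 1000 samples.
tpr = true_positive_rate(900, 1000)
```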

First, we analyze the detection of chairs. These objects are hard to detect, as they can present multiple shapes, sizes, and colors, depending on the model. Our Angle Segmentation method achieves a fairly good detection accuracy, only 6% below the best method, which was SS. However, as already discussed, worse lighting conditions can jeopardize the SS results. Indeed, when repeating our experiments with sunlight coming through the windows, the detection accuracy of the SS method was reduced up to 50%. In the case of the FO package, we had to add 50 images of each chair model in the environment, taken from different angles and distances. We first tried using only 10 images, but the accuracy was below 40%.

In the case of doors, fewer existing works are able to detect them, so we compared our Depth Segmentation method against the FO and SS packages. Our method clearly outperformed the alternatives, which had difficulty detecting closed doors: in this case, the relevant characteristics are harder to distinguish, and the door was mistaken for the wall, which also had the same color. Even with the doors open, another wall of the same color could be seen through the door, which confused the visual-based approaches.

Finally, regarding the detection of people, our Height Segmentation method achieved a very high detection accuracy (96.95%), quite close to the best method, which was SS using CNNs (97.5%). We improved on the results from our previous work (PFF_PeD) when using both the LiDAR and the Astra camera. The ABT package, based on skeleton detection, presented results slightly worse than our method, while the leg detection (ELD) performed considerably worse, presenting also a high number of false positives.

4.3. Positioning Precision

In this section, we show results to assess the accuracy of our method in terms of position estimation of the detected objects in the map, in relation to their real positions in the scenario. Table 5 reports the results of our different methods with FO and SS as alternatives. The precision error is computed by comparing the estimated position with the actual geometrical center of each object, which we measured by hand as ground truth. In the case of the chairs, two different models were considered with a different number of legs. Chairs of type 1 are like the first, second, and last chairs from left to right in Figure 7a, whereas chairs of type 2 are like the remaining model with 4 legs. For the chairs of type 1, our Angle Segmentation method got an error of less than 5 cm, as the estimated position depends on the width of the leg of the chair. For the chairs of type 2, our solution had an error of almost 16 cm, as the estimation in this model depends on the number of legs that are detected to estimate the position of the geometric center. For instance, if only one leg is detected the error is greater than when detecting all 4.
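The dependence of the error on the number of detected legs can be illustrated with a small worked example. The chair geometry below (four legs on the corners of a 40 cm square) is hypothetical and chosen only for illustration, as are the helper names; the error is the Euclidean distance between the estimated position and the geometric center.

```python
import math

def position_error(estimate, ground_truth):
    """Euclidean distance between estimated and real position."""
    return math.dist(estimate, ground_truth)

def centroid(points):
    """Mean position of a set of (x, y) points."""
    n = len(points)
    return (sum(p[0] for p in points) / n, sum(p[1] for p in points) / n)

# Hypothetical 4-legged chair: legs on the corners of a 0.4 m square.
legs = [(0.0, 0.0), (0.4, 0.0), (0.4, 0.4), (0.0, 0.4)]
center = (0.2, 0.2)

err_all_legs = position_error(centroid(legs), center)     # all 4 legs seen
err_one_leg = position_error(centroid(legs[:1]), center)  # only 1 leg seen
```

With all four legs detected, the estimate coincides with the center; with a single leg, the error equals half the diagonal of the square (about 28 cm for this hypothetical geometry).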

As for the results obtained with the FO package, two aspects can be observed. For chairs of type 1 the error is larger than with our method. FO makes detections using the color image and once the detection is made, the 3D position is estimated from the depth information of the camera. Part of the error comes from using pixels of the back of the chair to estimate the depth, instead of the center. For chairs of type 2, the error is considerably higher. In both cases, it is important to note that FO makes use of CNNs that are trained with rectangular images to detect objects on the images, and then, a 3D position is extracted from the depth information of that noisy detection. Using 3D information from point-clouds directly to train the CNNs would improve results. Moreover, in the case of SS, this algorithm created a 3D map with the information sensed using OctoMap with a resolution of 5 cm for each voxel. In part due to this, errors were in that order, 6.35 cm for chairs of type 1 and 8.36 cm for chairs of type 2.

For the door detection, our Depth Segmentation method reports an error significantly lower than the others. In part, this is because, on many occasions, FO and SS estimate as the position of the door a point on the left or right side of the door frame, instead of the center. Instead, using LiDAR information, the middle point can be estimated with higher precision. Regarding people pose estimation, our proposal for Height Segmentation achieved the best performance. It is important to note that this test was carried out with static people for comparison. Our solution presents a better estimation of the position than methods that use cameras. Besides, these methods based on cameras had a limited range of up to 5 m to detect people, while our method could detect people up to 12 m thanks to the VLP-16 LiDAR.

Figure 10 presents the final results for our semantic map method in an indoor office scenario. As already explained, we teleoperated our service robot as the semantic map was built. In particular, a map considering doors, chairs, and people detections was created, though our method could also process other types of objects useful for service robots, simply by tuning any of the segmentation methods accordingly. For instance, in this experiment, the information about chairs can help the robot to understand whether there is a person sitting in the room or not, for whom the robot may be looking to deliver a package. Information about doors is useful when the robot is following someone and loses track of them: it could predict possible paths from the person's last known position considering the doors around the office.

Regarding the mapping results, 11 doors with a width of 95 cm were detected in our experiment, although one of them was detected in the wrong position. For the chairs, in the tested environment, there were 44 chairs (36 of type 1 and 8 of type 2). 47 chairs were detected, including 8 false-positive detections and 5 false-negatives. Moreover, a moving person was included in the scenario to check the detection of moving people. The detected trajectory when the robot passes nearby is shown in Figure 10.
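As a consistency check on the chair counts above, the number of correctly detected chairs can be derived in two ways, and both agree. The precision and recall values computed below are derived here from the reported counts for illustration only; they are not figures reported in this work.

```python
chairs_present = 44    # 36 of type 1 and 8 of type 2
chairs_detected = 47   # total detections reported
false_positives = 8
false_negatives = 5

# Correct detections, derived from the detections and from the ground truth.
tp_from_detections = chairs_detected - false_positives
tp_from_ground_truth = chairs_present - false_negatives

# Derived ratios (not reported in the text).
precision = tp_from_detections / chairs_detected
recall = tp_from_ground_truth / chairs_present
```

Both derivations give 39 correctly detected chairs, i.e., roughly 83% of the detections were correct and roughly 89% of the chairs in the environment were found.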