Abstract

New technologies have enabled the design of smart applications that serve as decision-making tools for everyday problems. A key issue in designing such applications is the growing level of user interaction they must support. Mixed reality (MR) is an emerging technology that offers a higher level of user interaction in the real world than other similar technologies. Developing an MR application is complicated and depends on various components that have been addressed in previous literature. Beyond the extraction of such components, a comprehensive study is needed that presents a generic framework comprising all components required to develop MR applications. This review surveys the existing research to obtain such a comprehensive framework. The suggested framework comprises five layers: the first layer considers system components; the second and third layers focus on architectural issues for component integration; the fourth layer is the application layer that executes the architecture; and the fifth layer is the user interface layer that enables user interaction. The merits of this study are as follows: this review can act as a resource for basic MR concepts, and it introduces MR development steps and analytical models, a simulation toolkit, system types, and architecture types, in addition to practical issues for stakeholders, such as the different domains of MR applications.

1. Introduction

The development of new technologies has enabled the design of applications that serve as decision-making tools for the problems of daily life. For example, a mobile pathfinding application finds an optimum path and visualizes it for the user, which facilitates quick and accurate decision-making during an emergency situation [1]. In designing such an application, it is very important to provide easy user interaction through appropriate visualization strategies. Many computing ideas have emerged to achieve these aims, such as virtual reality (VR), augmented reality (AR), and mixed reality (MR). VR provides a computer-generated environment that the user can enter with a VR headset and interact with. A lack of relation with real space was a problem in VR; AR technology addressed this problem by presenting a new method of visualization that adds computer-generated content to the real world. This technology creates an augmented world that the user can interact with. Despite the importance of AR, the separation of the real and virtual worlds is a serious challenge, because it decreases the user immersion level during AR scenarios. MR emerged to tackle this challenge with the creation of the MR environment. This environment merges the real and virtual worlds in such a manner that a window is created between them. As a result, a real-world object interacts with a virtual object to execute practical scenarios for the user [2]. There are three important features of any MR system: (1) combining the real-world object and the virtual object; (2) interacting in real-time; and (3) mapping between the virtual object and the real object to create interactions between them [2]. Ref. [3] implemented an MR visualization system to show blind spots to drivers. In this application, the driver uses an MR see-through device that shows blind spots in order to decrease the risk of traffic accidents. This application thus enhances the real environment by making invisible information visible to the user.

MR-based applications were ranked among the top 10 ICT technologies in 2020 [4]. Various studies have been conducted on MR technology, and the resulting surveys fall into several categories. Some surveys have each addressed a single MR component. Ref. [5] performed a comprehensive review of related MR applications and the technical limitations and solutions available to practically develop such applications. Ref. [6] discussed solutions to maintain the privacy of user data. Ref. [7] conducted a survey of recent progress in MR interface design. Ref. [8] gave an overview of issues in the evaluation of MR systems with respect to the user view, and discussed challenges and barriers in MR system evaluation. Ref. [9] performed a survey to provide a guideline on MR visualization methods such as illumination techniques. Other surveys have focused on a single domain of MR applications. Ref. [10] prepared an overview of the specialized use of MR applications for healthcare. Ref. [11] performed a review for education applications. Some surveys have discussed MR technology alongside other ideas such as AR and VR. Ref. [12] performed a survey on MR along with other computing ideas, such as virtual and augmented reality; that book provides useful information related to MR basics. Ref. [13] published a book that primarily focuses on basic concepts and general applications of MR.

Despite the useful information provided in previous literature, a comprehensive study that presents a generic framework comprising all MR components still needs to be undertaken. Proposing such a framework requires a comprehensive review of different domains of MR applications, such as large-scale, small-scale, indoor, and outdoor applications, to make MR applications feasible for practical use by stakeholders.

Therefore, to solve this problem, we performed a comprehensive study by reviewing published papers in academic databases and finding their logical relation to a generic framework for MR applications. This framework not only contains the necessary components of MR, but also addresses existing challenges and prospects. This study discusses the basic concepts related to MR space to help the reader become familiar with MR technology. It covers key points associated with MR applications and their implementation, such as system development steps, simulation environments, models, architecture issues, and system types. It also introduces different MR application domains, providing practical perspectives to the academic and private sectors. The remainder of this paper is structured as follows. Section 2 discusses the details of the research process. Sections 3 to 7 describe the different layers of the proposed framework, and Section 8 summarizes our research and highlights its future scope.

2. Research Process

2.1. Research Question

Our research aims to propose a comprehensive framework comprising all components of MR applications. This framework addresses the following questions. Q1: Which concepts are necessary to create a generic framework for MR applications? Q2: Which components are necessary in MR applications to create a general MR framework? Q3: What are the future prospects of the proposed framework?

2.2. Research Methodology

This research involved reviewing previously published articles related to MR. These articles were searched using keywords such as “mixed reality” in databases such as Science Direct, IEEE Xplore, Springer Link, Wiley Interscience, ACM Library, Scopus, and Google search. The research articles searched in these databases are related to the Computer Science and Engineering domains.

- The search period for this survey ranged from 1992 to 2019. This interval was chosen because most MR-related articles were published within this period.

- Our research methodology was as follows: as a first step, recent articles were selected from the aforementioned databases using the keyword “mixed reality” in the title, keywords, and abstract sections. Then, the papers were evaluated to establish a logical relationship between them as a basis for the proposed framework. Next, the framework of the research was fixed. To complete some components that were critical to our framework, the search period was extended to cover the years 1992–2019. In total, 117 articles were selected based on their relevance to the proposed framework. These articles are reviewed in detail within this manuscript.

2.3. Research Results

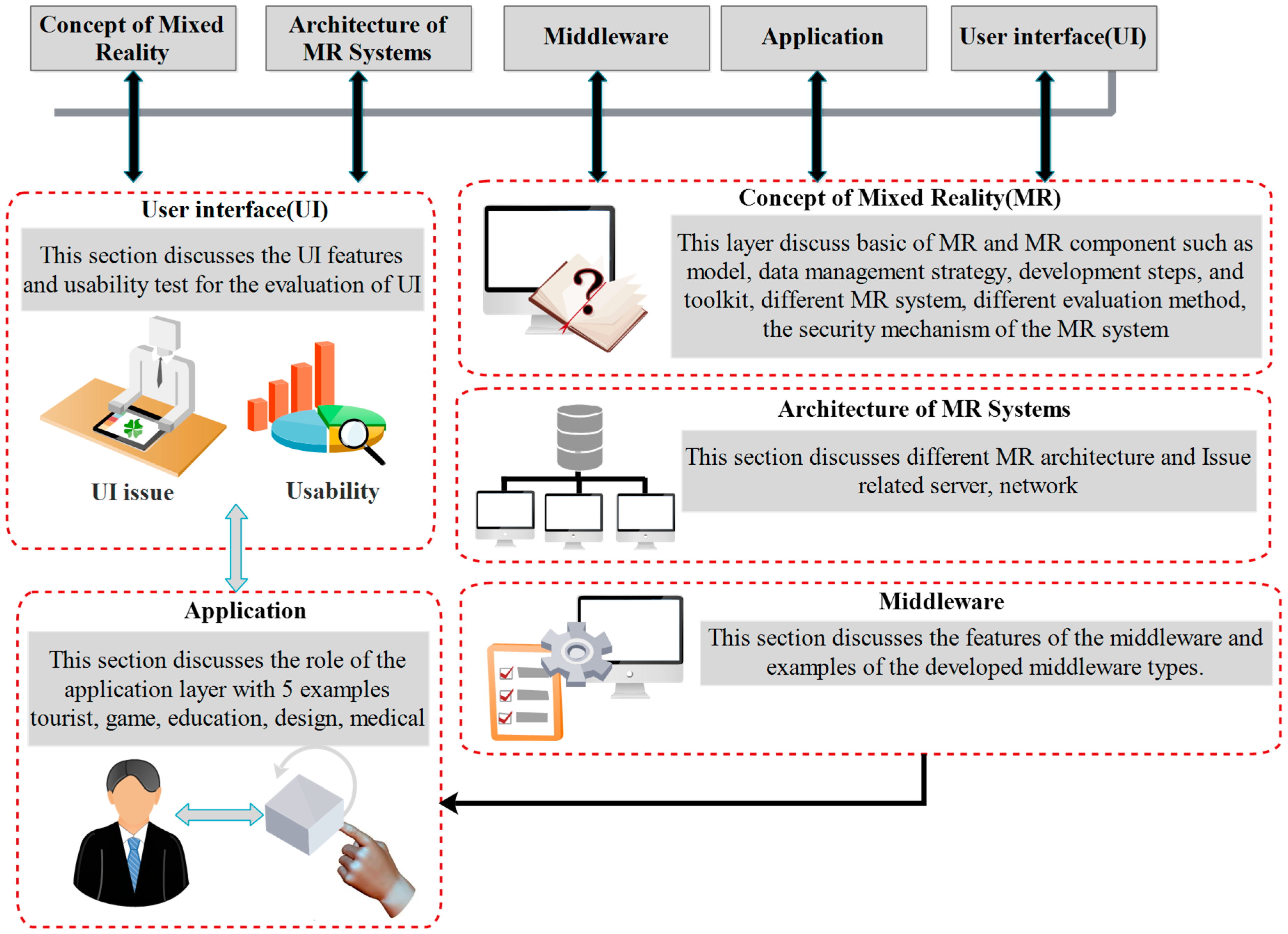

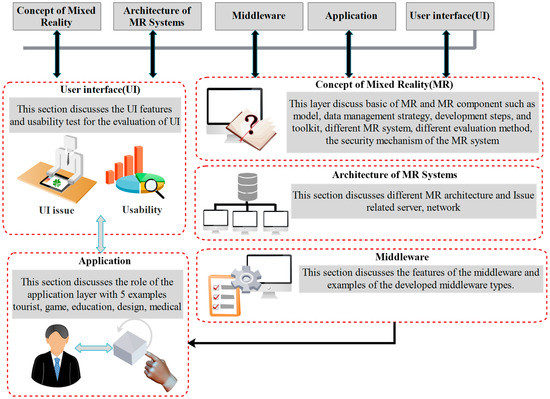

The research results were twofold, comprising a generic framework and its necessary components for MR applications. The proposed framework is made up of five layers: MR concepts, MR systems architecture, MR Middleware, MR applications, and MR user interface (UI) (Figure 1). The first layer describes the components of MR applications; the second and third layers are about the architecture used to integrate these components; and the final layers include the application and UI layers. Each layer is discussed in detail herein.

Figure 1. Research layers.

3. Concept of Mixed Reality

This first layer contains the overview and different components of MR, such as algorithms, data management, development guidelines, frameworks, evaluations, and security and privacy.

3.1. Overview

This section discusses the basics of MR space, covering its definition, characteristics, first implementation, benefits, challenges, and technologies.

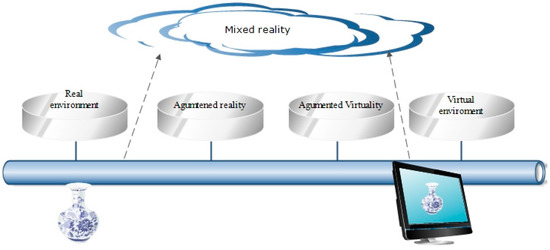

Definition 1.

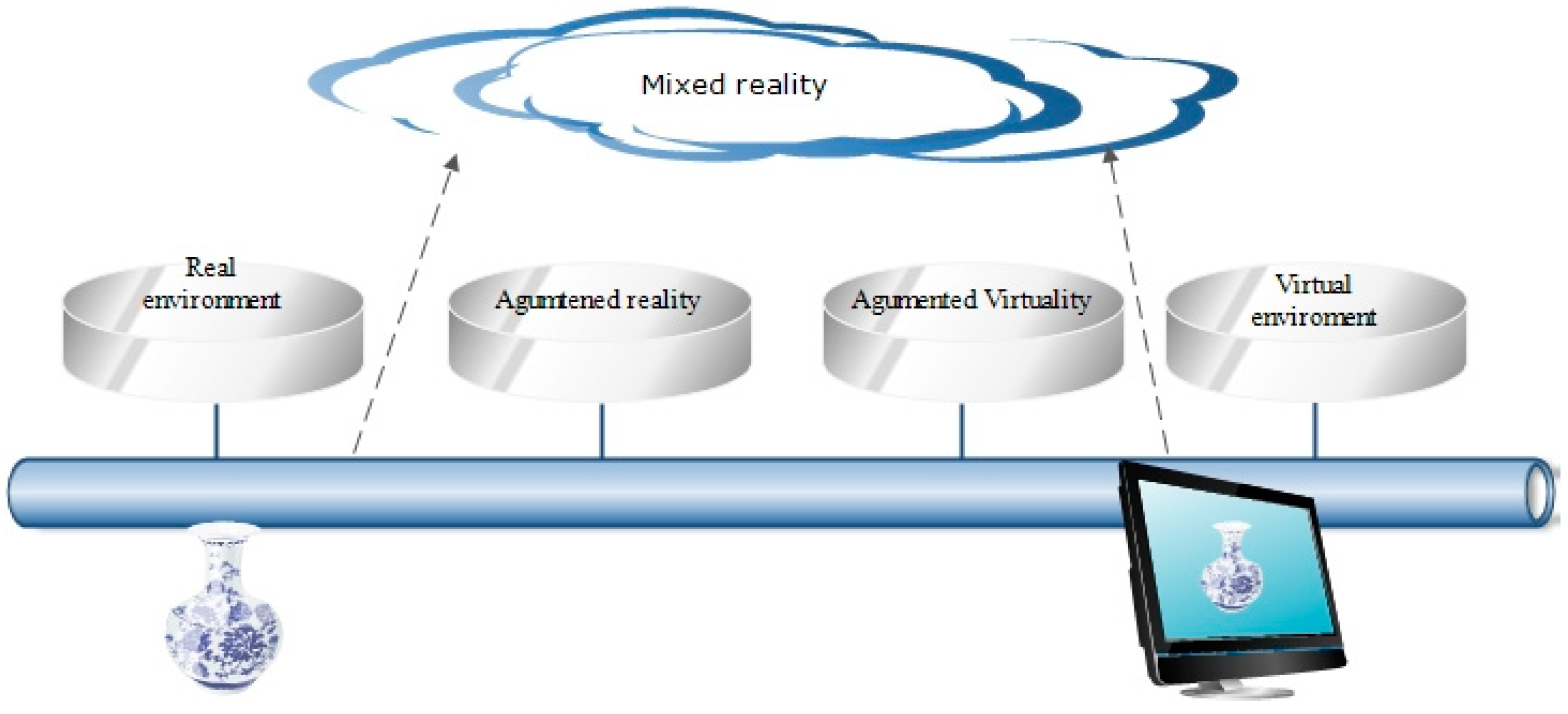

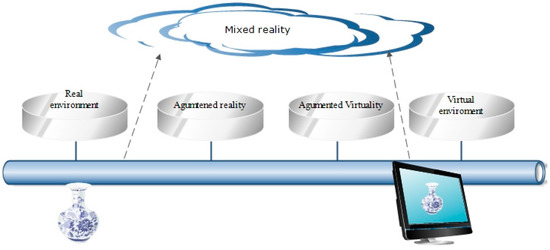

The first definition of MR is given by the reality–virtuality continuum, which is a scale that classifies representations of the real world according to the computing techniques used. On the left side of this scale is real space and on the right side is virtual space (i.e., an environment generated using computer graphics techniques). Figure 2 shows that MR is everything between augmented reality (AR) and augmented virtuality (AV). The main goal of MR is the creation of a large space by merging real and virtual environments, wherein real and virtual objects coexist and interact in real-time for user scenarios [14,15,16,17,18,19,20,21]. Mixed reality is a class of simulators that combines both virtual and real objects to create a hybrid of the virtual and real worlds [13].

Figure 2. Reality–Virtuality continuum (Adopted from [18]).

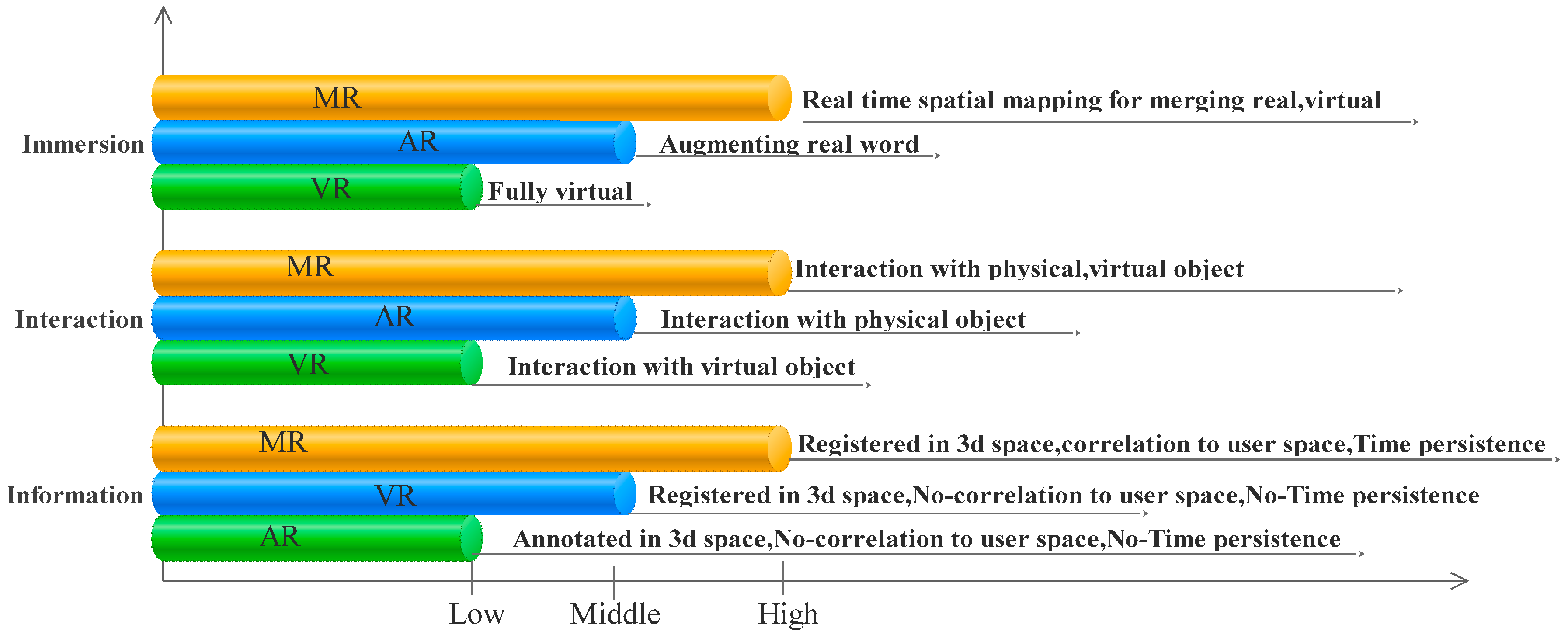

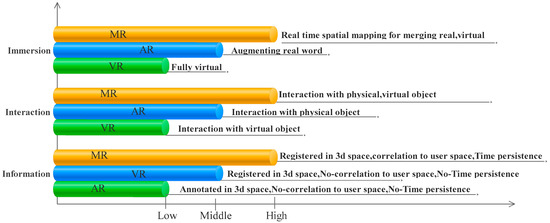

MR characteristics: MR may be characterized using three terms: immersion, information, and interaction. Immersion refers to the real-time processing and interpretation of the user’s environment. Interaction with MR space is performed without any controller, using natural communication modes such as gestures, voice, and gaze. Information refers to virtual objects being registered in time and space in the user environment. This allows the user to interact with real and virtual objects in the user environment [22].

First MR implementation: The first MR prototype was implemented by the US Army in 1990. This implementation, a virtual fixture, overlays a registered virtual object on the real space of the user to increase the performance of implemented systems [23].

MR benefits: MR space provides many benefits in comparison to other computing ideas such as AR and VR (Figure 3 and Table 1) [22,24].

Figure 3. Comparison based on three main characteristics (Adopted from [22]).

Table 1. Comparison according to three key space specifications (Adopted from [24]).

MR challenges: Despite the importance of MR environments, there are two major challenges for creating such a platform: display technology and tracking. The MR platform needs to use appropriate display technology to provide a reasonable output with appropriate resolution and contrast. In some instances, the system needs to consider the user’s perspective. Interaction between virtual and real objects requires the use of precise methods in order to track both objects [5].

MR technologies: Optical see-through and video see-through are the two types of technologies used for developing the MR space. In optical see-through technology, the real world can be seen directly through tools such as transparent glass. In video see-through technology, both virtual and real objects are presented on an LCD (liquid crystal display) [25]. The display tools in MR can be categorized into four classes: head-mounted displays, handheld display devices, monitor-based displays, and projection-based displays [26]. When using a display tool in the MR space, it is necessary to pay attention to two concepts: comfort (e.g., thermal management) and immersion (e.g., the field of view). These concepts provide the ease of use that enables the scenario to be executed in an appropriate way for the user [27].

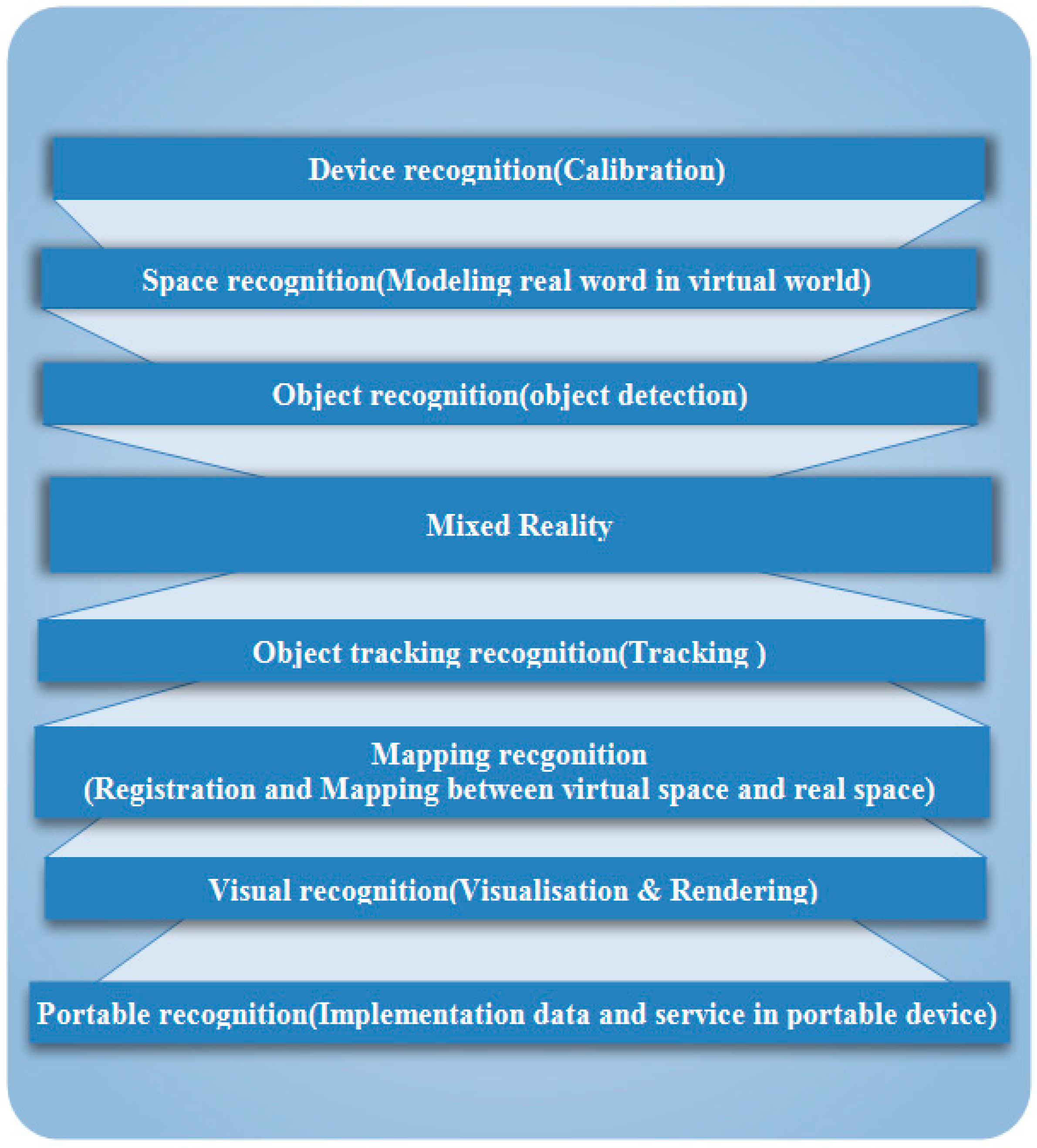

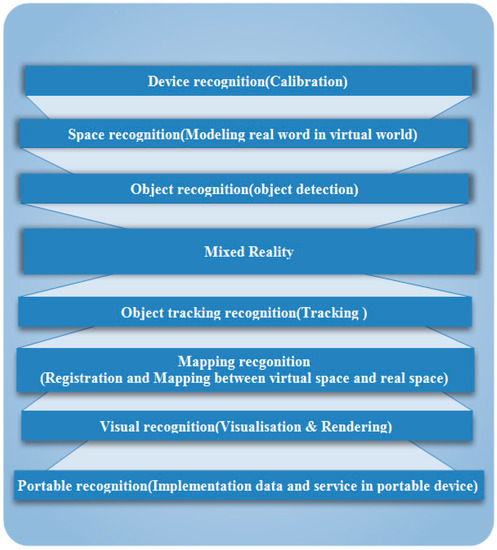

To create an MR environment using see-through technologies, some steps are required (Figure 4). In the device recognition step, the types of MR tools to be used, such as see-through devices or cameras, are identified based on the project aims. Then, these devices are calibrated using a calibration model. In the space recognition step, part of the real world is modeled in the virtual environment to run the user scenario. In the object and tracking recognition steps, the real-world object is identified and tracked. In mapping recognition, registration and mapping are performed to relate real and virtual objects, which provides interaction between them. In visual recognition, an MR service is implemented and then visualized using appropriate computer graphics techniques. This step overlays virtual objects on the real-world scene. In portable recognition, which is used for mobile devices, an appropriate model is used for data delivery and scene reconstruction. This model reduces unnecessary information. All of these steps need an appropriate analytical model. These models are discussed in the algorithm section.

Figure 4. Mixed reality steps.

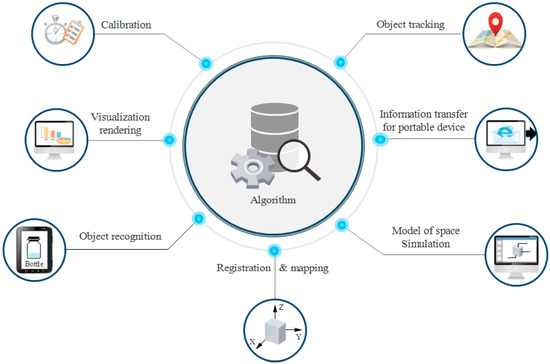

3.2. Algorithm

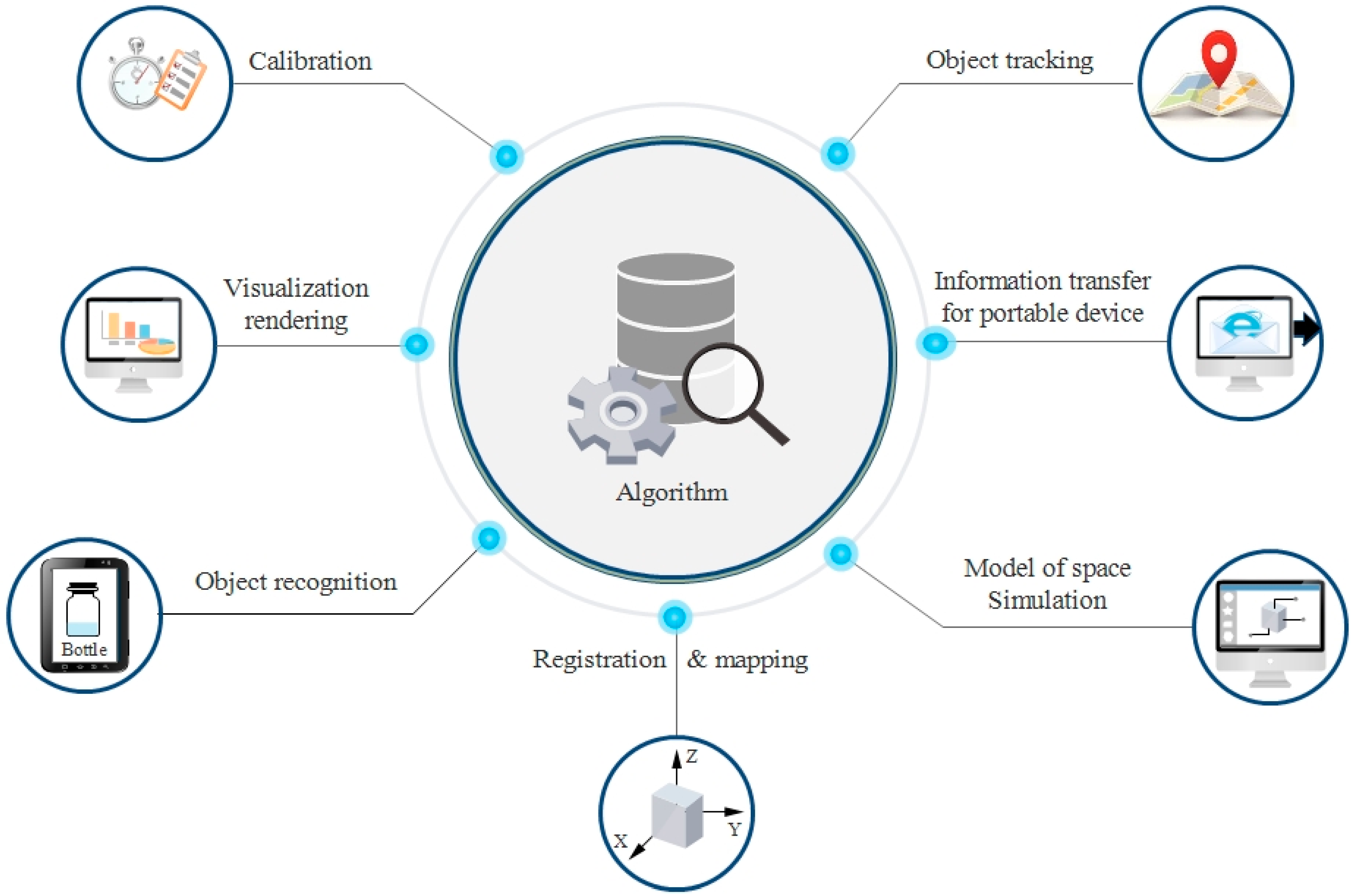

The algorithm is an important part of any MR application. It contains analytical mathematical models to improve the accuracy of the MR system. Algorithm models are used for different tasks: calibrating the MR devices for proper use and better user interaction; modeling the real world to create the MR environment; identifying and tracking the real-world object in order to overlay virtual information related to that object; three-dimensional (3D) mapping and registration of virtual models in real space to create interactions between real and virtual content; close-to-reality visualization of MR objects and the MR scene; and sending the MR output in the lowest volume to a portable device such as a mobile phone. The following section discusses the details of the MR algorithm by dividing it into seven categories: calibration, the model of space and simulation, object recognition, object tracking, registration and mapping, visualization and rendering, and information transfer for portable implementation (Figure 5).

Figure 5. Mixed reality algorithm.

3.2.1. Calibration

Calibration models are used to set up MR devices, such as see-through tools and cameras. Such models offer many benefits. For example, a calibration model for an HMD worn over the user’s eyes resolves the instability between the tool and the hand, enabling good interaction with a virtual object (such as a virtual button), while a camera calibration model allows for more accurate camera parameter estimation, which is crucial for improving scene reconstruction from camera images. Ref. [28] used the latent active correction algorithm to tackle the problem of instability between the optical see-through device and the hand for hand–eye coordination. This algorithm is important for user interaction through a virtual button. Ref. [29] used a projection model based on a matrix transformation formula to calculate the parameters of a fish-eye camera. These parameters were useful for the creation of a full-view spherical image for scene reconstruction. Ref. [30] proposed a method for a surgical application using iterative closest point (ICP) with fast point feature histograms. This model registered cone-beam computed tomography (CBCT) volumes with a 3D optical (RGBD) camera.

Ref. [31] implemented an MR-based needle navigation system for application in surgery. This system enables the rendering of information such as the needle trajectory and ultrasonic scanning images using an HMD device. It employs calibration techniques such as the Procrustes method for ultrasound transducer calibration, and a template-based approach for needle calibration.
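To make the Procrustes idea concrete, the following is a minimal sketch of a rigid (rotation plus translation) least-squares alignment between paired 2D points. It is an illustration only: the source does not state which Procrustes variant [31] used, and the function names `procrustes_2d` and `apply_rigid` as well as the 2D, no-scaling setting are assumptions made here.

```python
import math

def procrustes_2d(source, target):
    """Least-squares rigid alignment of paired 2D points.

    Returns (theta, (tx, ty)) such that rotating a source point by theta
    and then translating it by (tx, ty) best matches its target point.
    """
    n = len(source)
    if n == 0 or n != len(target):
        raise ValueError("need two non-empty point lists of equal length")
    # Centroids of both point sets.
    sx = sum(p[0] for p in source) / n
    sy = sum(p[1] for p in source) / n
    tx = sum(q[0] for q in target) / n
    ty = sum(q[1] for q in target) / n
    # Accumulate dot and cross products of the centered pairs.
    dot = cross = 0.0
    for (px, py), (qx, qy) in zip(source, target):
        ax, ay = px - sx, py - sy
        bx, by = qx - tx, qy - ty
        dot += ax * bx + ay * by
        cross += ax * by - ay * bx
    # The optimal rotation angle maximizes dot*cos(theta) + cross*sin(theta).
    theta = math.atan2(cross, dot)
    c, s = math.cos(theta), math.sin(theta)
    # Translation that maps the rotated source centroid onto the target centroid.
    shift = (tx - (c * sx - s * sy), ty - (s * sx + c * sy))
    return theta, shift

def apply_rigid(theta, shift, point):
    """Apply the rotation and translation to a single 2D point."""
    c, s = math.cos(theta), math.sin(theta)
    x, y = point
    return (c * x - s * y + shift[0], s * x + c * y + shift[1])
```

For example, fiducial positions measured in a hypothetical transducer frame could be passed as `source` and the same fiducials in the tracker frame as `target`; a full 3D calibration would typically replace the closed-form angle with an SVD-based solution.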

Despite the great benefits of the proposed calibration models, various challenges need to be addressed to improve them. When calibration models are used for MR devices such as HMDs, inappropriate tracking of the user’s hand leads to a lack of proper fusion between the real and virtual hand, which reduces the stability between the user and the HMD device [28]. The camera calibration model requires the inclusion of additional parameters such as lens distortion or environmental effects (such as image brightness) to increase model accuracy [29]. The camera calibration model is also sensitive to changes in the setup, such as changes to the camera or tools (e.g., the surgery table) [30].

3.2.2. Model of Space and Simulation

Implementing any MR scenario requires real space modeling to create the MR environment. Space modeling contains two parts: modeling a large space such as a room, and modeling a real object such as tissue. The modeling of a large space such as a room should preserve constraints such as the relationships between the room’s objects and users. The simulation of a real-world object such as tissue must consider constraints such as the deformation of the object. Ref. [32] used geometric and symbolic modeling in VRML format to model a room for an MR application. In this model, a descriptor was used to provide information regarding the user and the user’s location in the MR space. Ref. [33] suggested a toolkit for building a large-space MR environment, such as a room or a museum, based on a Voronoi spatial analysis structure. In this toolkit, virtual scenes are built in the form of a two-dimensional (2D) map, and a 3D scene is constructed using sweeping analysis. Then, virtual objects are arranged in the real scene using a transformation between the real and virtual spaces. At this step, the toolkit uses anchor data located in the environment to reduce the error of matching the virtual model to reality. Finally, it uses a Voronoi-based structure to manage path editing, adjust the virtual object layer, and provide fast rendering with visibility preservation for the user. Ref. [34] proposed a method based on machine learning and Delaunay triangulation to create a mixed reality application for storytelling. In this method, the MR environment was created from 3D scan data using specified rules. For this purpose, a mesh was first calculated for the 3D data obtained from the scan, and resampling was used for error reduction. Next, in the region classification step, the data were subdivided into regions based on surface normal vectors with a specified threshold, and planes were then generated for the subdivided regions using the Delaunay and RANSAC algorithms. Finally, the model applied specific rules such as type, area, and height to the generated data to place scene objects in the classified regions. Ref. [35] used a point-primitive and elastic theory model to simulate the deformation of soft tissue for a surgical application.
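As an illustration of the plane-generation step, the sketch below fits a single plane to 3D points with a basic RANSAC loop. It is not the pipeline of [34]: the function names, the inlier threshold, the iteration count, and the fixed random seed are all assumptions chosen for a small, reproducible example.

```python
import random

def plane_from_points(p, q, r):
    """Return (unit_normal, d) for the plane n.x + d = 0 through three points,
    or None if the points are (nearly) collinear."""
    ux, uy, uz = q[0] - p[0], q[1] - p[1], q[2] - p[2]
    vx, vy, vz = r[0] - p[0], r[1] - p[1], r[2] - p[2]
    # Cross product of the two edge vectors gives the plane normal.
    nx, ny, nz = uy * vz - uz * vy, uz * vx - ux * vz, ux * vy - uy * vx
    norm = (nx * nx + ny * ny + nz * nz) ** 0.5
    if norm < 1e-12:
        return None
    n = (nx / norm, ny / norm, nz / norm)
    d = -(n[0] * p[0] + n[1] * p[1] + n[2] * p[2])
    return n, d

def ransac_plane(points, threshold=0.02, iterations=200, seed=0):
    """Return (plane, inliers) for the plane supported by the most points.

    threshold is the maximum point-to-plane distance for an inlier
    (hypothetical value, in the same units as the points).
    """
    rng = random.Random(seed)
    best_plane, best_inliers = None, []
    for _ in range(iterations):
        plane = plane_from_points(*rng.sample(points, 3))
        if plane is None:
            continue  # degenerate sample, try again
        n, d = plane
        inliers = [pt for pt in points
                   if abs(n[0] * pt[0] + n[1] * pt[1] + n[2] * pt[2] + d) <= threshold]
        if len(inliers) > len(best_inliers):
            best_plane, best_inliers = plane, inliers
    return best_plane, best_inliers
```

In a scene-reconstruction setting, the inliers of the best plane could be labeled as one region (for example, a floor) and removed before searching for the next plane.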

Despite the great benefits of the proposed models, various challenges still need to be addressed. In modeling a large space, one needs to consider sharing between different coordinate systems using standard schemas such as ontologies, the possibility of using an authoring system that enables combining raster and vector data in 2D and 3D, and the use of appropriate tracking systems to determine the status of objects with sensor fusion [32]. In MR environment reconstruction using 3D data and machine learning algorithms, it is important to use appropriate model settings, such as the threshold, and appropriate model parameters, such as size [34]. In a simulation model, increasing the output and rendering it at a higher speed requires optimization and computational techniques such as GPU processing [35].

3.2.3. Object Recognition

Identifying a real object and tracking enables the embedding of a virtual object in real space in order to implement an MR scenario. This section discusses object recognition methods, and the next section describes tracking techniques. In object recognition, the object may be static or dynamic in nature. Dynamic object recognition is an interesting topic. A good example of dynamic object recognition is moving human recognition. Ref. [36] employed an image-processing-based approach using probability-based segmentation to detect a moving object (a human) from the foreground to insert the virtual object, such as a home, in a real scene. Ref. [37] proposed the use of image processing based on semantic segmentation using conditional random fields as recurrent neural networks (CRF-RNN) to provide an appropriate context for display in a material-aware platform. Ref. [38] proposed image processing using deep learning and labeling based on voice commands for object detection from images. This method was suitable for small-size classification. Ref. [39] employed spatial object recognition for displaying information (such as a building) based on the user field of view. This model used filtering based on the distance from the user to display information.
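The distance-based filtering described for [39] can be sketched as follows. This is an assumed, simplified 2D version: the function name `visible_pois`, the planar coordinates, and the convention that headings are measured counterclockwise from the +x axis are illustrative choices, not details taken from the cited work.

```python
import math

def visible_pois(user_xy, heading_deg, pois, max_distance, fov_deg):
    """Return names of points of interest inside the user's field of view.

    pois is an iterable of (name, x, y). A POI is kept when it lies within
    max_distance of the user and within fov_deg / 2 of the heading.
    Results are sorted nearest first.
    """
    ux, uy = user_xy
    half_fov = fov_deg / 2.0
    hits = []
    for name, x, y in pois:
        dx, dy = x - ux, y - uy
        distance = math.hypot(dx, dy)
        if distance > max_distance:
            continue  # distance filter
        if distance > 0.0:
            bearing = math.degrees(math.atan2(dy, dx))
            # Signed angular difference wrapped into [-180, 180).
            offset = (bearing - heading_deg + 180.0) % 360.0 - 180.0
            if abs(offset) > half_fov:
                continue  # outside the field of view
        hits.append((distance, name))
    return [name for _, name in sorted(hits)]
```

A real system would obtain the user position and heading from the device's tracking sensors and would usually work in geographic rather than planar coordinates.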

Despite the great benefits of the proposed models, there are various challenges that still need to be addressed. In image-based approaches based on deep learning, choosing the right training data set to identify the general object of the MR system is an important point. This data provides a useful context in MR systems to improve the quality of interaction [37]. In the image-processing-based approach based on labeling with voice commands, the accuracy of the method depends on concepts such as good synchronization of voice commands with the gaze. Additionally, the scalability of this method for large classification is a challenge and needs future research [38]. Spatial object detection encounters unwanted errors caused by tracking tools such as the global positioning system (GPS). This problem needs to be combined with an image-processing technique to extract information such as contour data, followed by comparison of the extracted data with the map to correct errors [39].

3.2.4. Object Tracking

The interaction of virtual and real objects requires them to be positioned, which is achieved using appropriate tracking techniques. MR tracking methods are divided into three classes: sensor-based, vision-based, and hybrid. The hybrid method combines the first two methods to overcome the shortcomings associated with each of them. Sensor-based tracking uses sensing devices, which are grouped into categories such as GPS, visual markers, acoustical tracking systems, magnetic and inertial sensors, and hybrid systems [5]. The sensing device estimates a value such as the position of a real object, for example, a game player in an MR application such as a race. Ref. [40] suggested using GPS to track multiplayer game play in outdoor spaces to determine players’ relative positions. Estimating MR attributes such as motion properties requires the use of different sensors and fusion strategies. Ref. [41] proposed using a multimodal tracking model based on sensor fusion information for person tracking. Vision-based tracking relies on computer vision techniques that utilize image processing. This strategy may be feature-based or model-based. Ref. [42] suggested using image processing based on circle-based tracking in virtual factory layout planning. Ref. [43] proposed using markerless, feature-based tracking in a BIM-based markerless MR system. Ref. [44] proposed markerless tracking driven by an ICP algorithm for an MR application for trying on shoes. Ref. [45] proposed three mechanisms, namely feature-based tracking (using feature detection and SLAM), model-based tracking (comprising contour detection), and spatial-based tracking (using depth information), for self-inspection in the BIM model.
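As a small worked example of GPS-based relative positioning, the sketch below converts two latitude/longitude fixes into an east/north offset in meters using the equirectangular approximation. The approach, the function name, and the mean Earth radius constant are assumptions for illustration; the source does not describe how [40] computed relative positions.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius; assumed constant for this sketch

def relative_position_m(lat_a, lon_a, lat_b, lon_b):
    """Return the (east, north) offset in meters of fix B relative to fix A.

    Uses the equirectangular approximation, which is adequate for the short
    distances typical of an outdoor multiplayer game area.
    """
    phi_a, phi_b = math.radians(lat_a), math.radians(lat_b)
    d_lambda = math.radians(lon_b - lon_a)
    mean_phi = (phi_a + phi_b) / 2.0
    east = EARTH_RADIUS_M * d_lambda * math.cos(mean_phi)
    north = EARTH_RADIUS_M * (phi_b - phi_a)
    return east, north
```

Two fixes that differ by 0.001 degrees of latitude are roughly 111 m apart, which gives a feel for how GPS noise of a few meters compares with typical player separations.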

Although the discussed methods are important for tracking, their practical use involves several challenges. In the sensor-based method, installing the device in an appropriate position and managing power are both important. A good example is installing a local transmitter in an appropriate place to take advantage of existing satellites in the GPS method. Finding precise locations is necessary when using visual markers as well. The sensor-based method requires a digital model of the real environment to map sensor data to a precise location, which is then used to determine the location of the virtual object [5]. Removing defects from the sensor data is a major challenge. For GPS data, noise removal using a gyroscope is one strategy [40]. In cases that need sensor fusion, optimum allocation is necessary, and it requires an appropriate analysis to obtain coherent data related to the object [41]. Vision-based tracking needs to consider new topics such as the use of multiple markers and multiple cameras to increase accuracy and coverage [43]. Practical use of the vision-based model needs to consider additional constraints such as different reference models for tracking. A good example is the use of a single reference shoe model in a virtual shoe application, which renders the result of the system unsuitable for different users [44].

3.2.5. Registration and Mapping

Interaction among virtual and real objects requires mapping and registration of the virtual model in real space. Ref. [46] proposed the use of the ICP algorithm for overlaying the virtual model of a building in real space using the user position. This model was implemented for heritage building inspections. The method handles the problem of mapping and registration for large data using preferred key objects, which were selected by the user on a presented miniature virtual model. Ref. [47] proposed a markerless registration model for surgery to register a medical image on the patient. This method used facial features to find the transformation parameters for registration. The model contains steps such as generating a 3D model of the patient, detecting facial features, and finding the transformation for registration using these features based on the camera’s intrinsic parameters and the headset position. This model was followed by a ray tracing algorithm to locate an object in the real scene. Ref. [48] proposed a black box approach to align a virtual cube to the real world using a mathematical transformation. To achieve this goal, different transformation models were studied, such as perspective transformation, affine transformation, and isometric transformation. The transformation model aims to match the coordinate systems of the tracker and the virtual object. This model is a good solution for achieving good accuracy in HoloLens calibration. Ref. [49] employed tetrahedral meshes to register a virtual object on a real object in a virtual panel application.
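The difference between the transformation models mentioned above can be illustrated with homogeneous 4x4 matrices. The sketch below is a generic illustration and not the method of [48]; the helper names and the restriction to a rotation about the z axis are assumptions made for brevity.

```python
import math

def rigid_transform(yaw_deg, translation):
    """4x4 isometric transform: rotation about z by yaw_deg, then translation."""
    c = math.cos(math.radians(yaw_deg))
    s = math.sin(math.radians(yaw_deg))
    tx, ty, tz = translation
    return [[c, -s, 0.0, tx],
            [s, c, 0.0, ty],
            [0.0, 0.0, 1.0, tz],
            [0.0, 0.0, 0.0, 1.0]]

def with_axis_scale(matrix, sx, sy, sz):
    """Scale the input axes before applying matrix; the result is affine,
    and no longer isometric unless all three scales are 1."""
    scale = (sx, sy, sz, 1.0)
    return [[row[j] * scale[j] for j in range(4)] for row in matrix]

def transform_point(matrix, point):
    """Apply a 4x4 homogeneous transform to a 3D point.

    The division by w also covers perspective transforms, whose last row
    differs from (0, 0, 0, 1).
    """
    x, y, z = point
    v = (x, y, z, 1.0)
    out = [sum(matrix[i][j] * v[j] for j in range(4)) for i in range(4)]
    w = out[3]
    return (out[0] / w, out[1] / w, out[2] / w)
```

An isometric transform preserves the distances between the corners of a virtual cube, whereas an affine transform may stretch or shear the cube; which model fits best depends on the errors present in the tracker data.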

Despite the importance of the proposed methods, their use involves many challenges. Using the ICP algorithm in an MR application based on HoloLens requires a device with good accuracy, because the operation is based on matching two point sets, one from the HoloLens and one from the virtual model. Handling large data through user interaction during registration needs a well-designed interface that facilitates user operation during key object selection [46]. Markerless registration requires the use of a high-resolution device to manage complex tasks such as Web-based MR applications [47]. The alignment methods require the simultaneous use of sensor fusion, which handles limitations such as field of view and occlusion, in addition to providing opportunities for tracking different objects [48].

3.2.6. Visualization and Rendering

Displaying MR content requires the use of appropriate analytical algorithms that handle different challenges, e.g., adding a virtual file to video images to enrich them, close-to-reality representation of the virtual object using lighting and shadow, managing the level of detail with respect to the user position, solving occlusion and off-screen display for invisible objects, using a standard schema for faster display, managing bulky 3D data such as point clouds, and synthesizing different display information sources.

Ref. [50] proposed using chroma-keying with PCA for the immersion of virtual objects in video images. This method was useful for matting, which is a process that identifies the foreground by separating images into background and foreground. Ref. [51] used a spherical harmonics light probe estimator for light estimation based on deep learning. This method processes a raw image without the need for previous knowledge about the scene. Ref. [52] proposed a lighting model that includes refractive objects in the differential instant radiosity (DIR) model. Ref. [53] recommended using a fuzzy logic model to add soft shadows to a virtual object during embedding in a real scene. Ref. [54] proposed the use of a model containing precomputed radiance transfer, an approximate bidirectional texture function, and filtering with adaptive tone mapping to render materials with shading and noise. Ref. [55] suggested using the level of detail (LOD) model for the visualization of a virtual historical site. Ref. [56] proposed a method based on holographic rendering using Windows MR to place geospatial raster-based data in real terrain. This method uses large-scale data from different sources and places it in the real world. In this model, the real world is simulated with a virtual globe, and the data is collected at different LODs from different sources and is then positioned on the virtual globe based on hierarchical LOD rendering with respect to the camera. This model contains the following steps: checking the view through intersection with the bounding box of the area of interest, using a transformation to project data on the virtual globe, and performing rendering by running the virtual coarser tile. Ref. [57] proposed a combination of visibility-based blending and semantic segmentation to handle the occlusion problem in space MR. Ref. [58] recommended a model that uses space-distorting visualizations for handling off-screen and occluded objects while displaying POI. Ref. [59] suggested a taxonomy containing schema-related data, visualization, and views to retrieve the best display model and display information for medical applications. Ref. [60] used the Octree model to handle large volumetric data, such as those obtained from point clouds, during rendering. A spatial data structure model based on Voronoi diagrams was proposed by [33]. This model was used for fast path editing and fast rendering at the user’s location; it stopped rendering models that were not actually visible. Ref. [61] recommended using 3D web synthetic visualizations with HDOM to combine information such as the QRcode-AR-Marker during embedding into real space.
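The level-of-detail idea can be illustrated with a minimal distance-based selector. This is a generic sketch rather than the scheme used in [55] or [56]; the distance limits and the function name `select_lod` are hypothetical.

```python
from bisect import bisect_right

# Hypothetical distance limits in meters; a real system would tune these
# per model and per device.
LOD_DISTANCE_LIMITS = [10.0, 50.0, 200.0]

def select_lod(distance_m, limits=LOD_DISTANCE_LIMITS):
    """Return the LOD index for an object at the given camera distance.

    Index 0 is the finest model; each limit that the distance reaches or
    exceeds moves the object to the next coarser level.
    """
    if distance_m < 0:
        raise ValueError("distance must be non-negative")
    return bisect_right(limits, distance_m)
```

With the limits above, an object 5 m from the camera uses level 0 and an object 500 m away uses level 3, the coarsest model.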

Although the discussed methods have great benefits for close-to-reality visualizations in MR applications, there are important challenges impeding their use. In chroma keying, statistically based techniques are needed to handle temporal matting and haloing artifacts [50]. Improvement of the light and shadow models requires modeling of temporal and dynamic effects (such as abrupt changes in lighting, dynamic scenes, and local light sources) in addition to specifying classes of different algorithms for different light paths [51,54]. The LOD model requires study of the appropriate architecture to determine the volume of processing on the server and the client [55]. The occlusion model needs to model temporal effects, as well as to consider complex scenes (such as complex plant effects) [57]. Web synthetic visualization requires research in different domains such as data mapping and visual vocabulary, as well as spatial registration mechanisms [61]. The development of geospatial data rendering requires the addition of labeling and toponyms, and the use of certain methods for vector-based data rendering [56].

3.2.7. Information Transfer for Portable Devices

Portable MR applications require the use of appropriate models to transfer information for reconstructing scenes using mobile camera images. This model enhances the necessary objects such as a player in a game. Ref. [62] used a probabilistic method based on the expectation-maximization (EM) algorithm to create a camera-based model to send video files for scene reconstruction. This model used two main strategies: sending a 3D model parameter for scene reconstruction and using a virtual model to display unnecessary objects. Ref. [63] proposed a data layer integration model which uses spatial concepts to enable uniform data gathering, processing, and interaction for diverse sources such as sensors and media. This model finds a spatial relation between the target object and the graphic media and user interaction, in addition to handling display information based on its importance. This helps to superimpose important layers on top of less important information.

Although the proposed research is of great benefit to Mobile MR, some challenges exist. The algorithms mentioned in [62] use concepts such as parallel processing for real-time processing. Accordingly, this algorithm can be divided into several general sections, i.e., lossless decoding of object information such as player information in a match and creating a virtual model based on user preference in multiple CPUs in real-time. The data layer integration model needs to preserve the integrity of data between layers [63].

3.3. Data Management

The data management strategy and the data itself are significant parts of any MR application. The former includes the presentation of data related to both the real and virtual objects. It also comprises several related parts: a standard markup language that enables use across different platforms; a search schema that provides meaningful information; the management of heterogeneous, high-volume data (known as Big Data); a proper filtering strategy; and the extraction of user-based information (context such as proximity or user view), as described in the following studies.

Ref. [64] developed an XML-based data markup representation based on the 4W1H structure containing real and virtual world information, as well as a link between them. The use of this structure increases the flexibility of MR content for use across various platforms to enable interoperability. Ref. [65] proposed the use of ontology in this regard. This concept generates standard metadata that can be used during an object search on an MR platform. Ref. [66] proposed an appropriate strategy for big data handling in the MR space using a graph and manifold learning. This strategy handles heterogeneous structures such as temporal, geospatial, network graph, and high-dimensional structures. Ref. [67] employed filtering in low-resolution devices such as mobile devices. In that study, three methods of filtering were used: spatial, visibility, and context filtering. Spatial filtering assesses the proximity of the object to the user. Visibility filtering evaluates the visibility of an object to the user. Context filtering aims to extract useful context-related user and user environment data via computer vision methods for feature extraction.
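
The three filters described for [67] can be sketched as a simple pipeline. The Python code below is a minimal illustration and not the implementation of [67]: it works in 2D, approximates visibility by a horizontal field of view, and assumes the user context has already been extracted into a set of interest tags (the source obtains context through computer vision). All names (`MRObject`, `spatial_filter`, `visibility_filter`, `context_filter`, `filter_pipeline`) are hypothetical.

```python
import math
from dataclasses import dataclass, field


@dataclass
class MRObject:
    name: str
    x: float
    y: float
    tags: set = field(default_factory=set)


def spatial_filter(objects, user_xy, radius):
    """Keep objects within `radius` of the user (proximity test)."""
    ux, uy = user_xy
    return [o for o in objects if math.hypot(o.x - ux, o.y - uy) <= radius]


def visibility_filter(objects, user_xy, heading_deg, fov_deg=90.0):
    """Keep objects whose bearing lies inside the user's field of view."""
    ux, uy = user_xy
    visible = []
    for o in objects:
        bearing = math.degrees(math.atan2(o.y - uy, o.x - ux))
        # Wrap the angular difference into [-180, 180).
        diff = (bearing - heading_deg + 180.0) % 360.0 - 180.0
        if abs(diff) <= fov_deg / 2.0:
            visible.append(o)
    return visible


def context_filter(objects, interests):
    """Keep objects sharing at least one tag with the user's interests."""
    return [o for o in objects if o.tags & interests]


def filter_pipeline(objects, user_xy, heading_deg, interests, radius=50.0):
    nearby = spatial_filter(objects, user_xy, radius)
    visible = visibility_filter(nearby, user_xy, heading_deg)
    return context_filter(visible, interests)
```

Applying the cheap proximity test first reduces the number of objects that the later filters must inspect, which matters on low-resource mobile devices.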

Despite the importance of the proposed methods, various challenges remain. In big data management with graph visualization, synchronization is an important problem, particularly in collaborative applications. Synchronization enables all users to see the same graph in the same place and interpret it. Furthermore, a new concept such as graph movement can be considered to improve the algorithm [66]. Using the content filtering strategy produces problems in accessing content when the receiver is not near the information [67].

3.4. Development Guideline

After identifying the key components for MR system development, familiarizing oneself with the development steps of the MR application is important. This step contains two parts; the first is related to the necessary steps to develop the MR system, and the second is related to the programming languages and simulation toolkits required for developing the MR platform.

Development steps: [68] considered the following four phases in the development of any MR system. Step 1. Requirement analysis for identification of essential components; Step 2. Matching components and customization; Step 3. Component composition for proper interaction, and running in a runtime framework; Step 4. Calibration, which is an essential part, particularly in some cases, for determining the position of the virtual object during embedding in a real space. The simulation environment includes software development, toolkits, and the programming interface, as summarized in Table 2 [6].

Table 2.

MR software, toolkits, and application programming interface [6].

3.5. Framework

After becoming acquainted with the MR components, it is important to be familiar with the types of such systems and their functionality. This section follows two general concepts. The first part introduces the features of MR systems, and the second provides examples of MR frameworks that have been practically implemented. Ref. [69] describes the characteristics of MR systems as follows: (1) real and digital content is integrated into an application; (2) an MR application is interactive in real-time; and (3) an MR application is registered in three dimensions. Regarding these three features, various types of MR systems have been presented in various studies, such as multimodal MR [70], visuohaptic MR [71], tangible MR [72], context-aware real-time MR [37], markerless MR [43], adaptive MR [55], and multiscale MR [73]. These systems provide many benefits for users. For example, in multimodal MR, the user can switch between several display modes (such as AR, VR, and cyberspace) to select the appropriate method for a scenario. In visuohaptic MR, real–virtual interaction is created. For example, a real scene can involve touching a virtual object with a haptic tool. Tangible MR provides the user with the ability to manipulate virtual objects. For example, a user can move, tilt, and rotate the 3D design model in front of their eyes. In adaptive MR, the system sets the level of detail and lighting of the virtual model (such as ruins or tourist sites) according to the user’s location. Multiscale MR creates a multiscale interaction between AR and virtual content as an element of the MR environment. One of the best examples of this is the Snow Dome app. In this application, if a virtual object such as a virtual user enters a virtual dome, the user shrinks to miniature size and enlarges again when exiting it. In this application, the user can also retrieve a virtual miniature tree. When this tree comes out, it enlarges and the environment is filled with virtual objects.

However, despite the importance of the proposed frameworks, attention to the following matters is important. Visuohaptic MR needs an appropriate strategy to handle dynamic scene problems when combined with virtual object haptic devices [71]. The context-aware real-time MR needs datasets with a more general feature to obtain more context and to use complex interactions based on realistic physical-based sound propagation and physical-based rendering [37]. The use of multicamera-based tracking is an important issue that improves markerless MR [43]. The use of the appropriate mechanism to create a balance between what is performed in the server and client to preserve real-time responses improves the adaptive MR framework [55]. Tangible MR requires different features such as the zoom feature and a camera with autofocus. The zoom feature helps identify tiny objects inside the dense construction model. Using a camera with autofocus handles occlusion using shading or a shadow mechanism. This improves the visibility of the model. Such a framework needs to handle the problem of delay due to a lack of synchronization when the model is observed [72].

3.6. Evaluation

After the development of each MR application, it is important to evaluate the implemented system to understand its potential shortcomings. This evaluation is conducted from several perspectives, e.g., based on a user survey, a comparison with a common system, or an environmental reference. Evaluation based on user surveys shows the user satisfaction level with the system output. A comparison of results with a common system helps to determine the potential advantages or disadvantages of the MR application in practice, and evaluating the system output by observing environmental references is practical in MR-based navigation applications.

Evaluation based on user survey. To improve MR systems, evaluating user satisfaction is critical; this process has been considered in several ways. A common method used in several studies is to conduct surveys based on questionnaire items. Ref. [74] used questionnaires incorporating the Likert scale to evaluate different items, such as fantasy, immersion, and system feedback in MR game applications. Ref. [75] created MR matrices comprising cognitive load, user experience, and gestural performance for surveys, and used variance analyses to identify inconsistent views during the evaluation of MR learning applications. Ref. [76] employed a questionnaire with statistical tests such as the t-test to assess user feedback in learning applications. Ref. [77] employed a combination of the creative product semantic scale (CPSS)-based method and the Likert scale in MR storytelling applications. This evaluation uses different items, such as novelty, resolution, and elaboration. Ref. [37] proposed the use of surveys with the ANOVA test in learning applications to detect inconsistent views. Ref. [78] proposed the questionnaire approach to evaluate MR applications in construction jobs using parameters such as accuracy, efficiency, ease of use, and acceptability. This method was based on several analytical methods, such as the use of the Likert index for scoring the questionnaire, assessing the reliability of parameters with Cronbach’s alpha coefficient, and evaluating the agreement of the groups’ views using a statistical inference model such as Student’s t-test.

Evaluation based on common system. Ref. [79] used surveying to compare the MR guidance output with that of a common system, such as computed tomography imaging for surgery. This evaluation was performed on the basis of different parameters, such as visualization, guidance, operation efficiency, operation complexity, operation precision, and overall performance.

Evaluation based on environmental reference checking. Ref. [80] discussed the evaluation of navigation systems in terms of precision, and proposed using a reference position in order to achieve increased precision. In this example, the user moves from the reference point or reads a visual marker in his/her path using a camera.
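
As an illustration of the reliability check mentioned for questionnaire-based evaluation, the following Python sketch computes Cronbach's alpha for Likert-scored items using the standard formula, alpha = k/(k-1) * (1 - sum of item variances / variance of total scores), where k is the number of items. The function name `cronbach_alpha` and the data layout (one row per respondent) are assumptions for this example and are not taken from [78].

```python
from statistics import variance


def cronbach_alpha(responses):
    """Cronbach's alpha for a list of respondent rows of item scores.

    responses: list of lists, one inner list per respondent, all of equal
    length k (number of questionnaire items), e.g. 1-5 Likert scores.
    """
    k = len(responses[0])
    if k < 2 or len(responses) < 2:
        raise ValueError("need at least two items and two respondents")
    # Sample variance of each item (column) across respondents.
    item_var_sum = sum(variance(col) for col in zip(*responses))
    # Sample variance of each respondent's total score.
    total_var = variance([sum(row) for row in responses])
    if total_var == 0:
        raise ValueError("total scores have zero variance")
    return (k / (k - 1)) * (1 - item_var_sum / total_var)
```

Values close to 1 indicate that the items vary together across respondents; which threshold counts as acceptable depends on the study design.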

3.7. Security and Privacy

Although the development of an MR application provides great benefits, its practical use requires user acceptance. One issue with respect to user acceptance is preserving security and privacy. Privacy and security strategies need to consider different aspects, including the ability to gather user information, the use of MR information provided by third parties, the ability to share these systems, and providing security in the environment of these applications. Ref. [6] presented a comprehensive classification of all current security strategies for MR spaces into five classes: input protection, data access protection, output protection, interaction protection, and device protection (Table 3). Input protection refers to the protection of sensitive user information, or information of users entering the MR platform, that needs to be hidden. Data access protection refers to the protection of information collected by MR sources, such as sensors, or wearable or mobile devices, which may be used by the 3D agent. Some concepts such as accuracy and accessibility are important in this regard. Output protection refers to the protection of information against external access and modification by other MR applications during rendering or display. Interaction protection refers to a mechanism that is needed to allow users to share their MR experience. Device protection refers to the mechanism that is used to maintain security in the MR tools and the input and output interfaces [6]. Ref. [81] presented a secure prototype called SecSpace for collaborative environments using ontology reasoning and a distributed communication interface. Ref. [82] used a security mechanism comprising three classes, i.e., spatial access, media access, and object access, to control access to the virtual elements and media used during teaching through user authorization.
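
The three access classes named for [82] can be illustrated with a deny-by-default authorization check. The Python sketch below is only one possible reading; the source does not describe the data model, so the `User` class, the `grant` and `is_authorized` functions, and the resource identifiers are all hypothetical.

```python
from dataclasses import dataclass, field

# Access classes as named in the text for [82].
ACCESS_CLASSES = ("spatial", "media", "object")


@dataclass
class User:
    name: str
    # Maps an access class to the set of resource ids the user may access.
    grants: dict = field(default_factory=dict)


def grant(user, access_class, resource_id):
    """Give `user` access to one resource within one access class."""
    if access_class not in ACCESS_CLASSES:
        raise ValueError(f"unknown access class: {access_class}")
    user.grants.setdefault(access_class, set()).add(resource_id)


def is_authorized(user, access_class, resource_id):
    """Deny by default: only explicitly granted resources are accessible."""
    return resource_id in user.grants.get(access_class, set())
```

For example, a teacher granted `media` access to a slide deck could present it, while a student without that grant would be refused.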

Table 3.

Security and privacy approach for MR [6].

In large-scale MR applications such as MR social media platforms, the security mechanism is more complex. The security strategy needs to consider different levels of privacy, such as privacy for social network data and privacy for MR system objects. A good example in this regard is the Geollery platform proposed by [83]. This platform combines spatial resources, such as 3D geometry and social media (using Twitter) on an MR platform. In such an environment, the user can navigate in a 3D urban environment, chat with friends through a virtual avatar, and even paint a comment on a street wall in a collaborative environment. In this platform, the security mechanism is preserved on two levels: privacy for social media data and privacy for the virtual avatar. In social media, users have different modes such as preserving privacy for the public, a friend, and a virtual avatar, and also they can hide their display name in the system. Ref. [84] proposed a model for preserving the security of 3D MR data (such as point clouds). This model formulated the security of 3D data and the necessary metrics using the adversarial inference model. Of course, the strategies used in this section provide great benefit, but they need improvements. To develop security strategies, one must consider concepts such as unlinkability to specify the proper management of the user, entity, party, and links with data, flow, and process, in addition to considering the concept of plausible deniability to manage the denial of individuals (i.e., toward each other or the entity during the process) [6]. The geometry-based inference model for 3D MR could be improved by incorporating photogrammetric information such as an RGB color profile [84].

4. Architecture of MR Systems

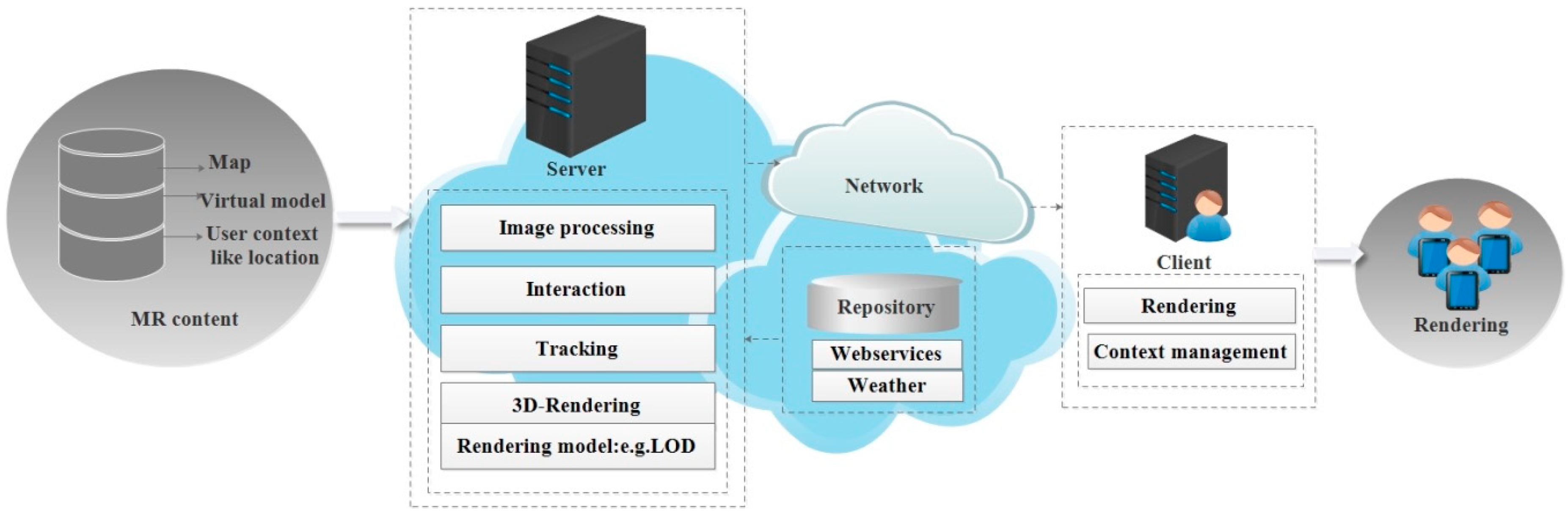

The development of an MR system to integrate the aforementioned components requires the use of a suitable software architecture. Using a suitable architecture while integrating components facilitates the relationship between them and leads to faster execution of MR algorithms in MR applications. This architecture comprises three parts: the server, client, and network. The server manages data and processes the execution. The network enables communication between the server and the client to execute the MR application. Additionally, the middleware, which is discussed in the next section, is a supplementary part between the system core and the application which augments the speed of the system. Therefore, this section describes the MR architecture layer. The architecture layer contains three sections: architecture issues, server issues, and network issues. The first section provides examples of architectural structures in MR systems and then describes the overall architecture of an MR system. The second section presents the different types of servers in previous studies and describes their roles. The third section outlines the importance of the network in the MR architecture and discusses some important features for implementing a better networking mechanism, such as the privacy and network protocol, and discusses new network architectures.

4.1. Architecture Issues

Different architectural structures have been used in previous studies for MR applications. Ref. [85] proposed a client–server architecture for collaborative design environments. This architecture can support multiple users, who can simultaneously edit features in a 3D environment. Ref. [86] proposed the client–server architecture for MR mobile platforms, which comprises four features: the MR server, stream server, client, and the thin client. In this architecture, after the content is received by the client, the mediator is responsible for managing and updating the system. In addition, the stream server provides rendering capabilities along with user tracking, and the client provides the user with the rendering service, which changes based on the stream server. Ref. [87] proposed a resource-oriented architecture to create a restful web service platform. This platform can support geospatial multimedia, such as 3D objects, point cloud, and street panorama, as well as a social network to support mobile clients. Ref. [88] suggested a cloud client–server architecture, which is multimodal and supports various tools and virtual models in a collaborative environment. In this architecture, the servers provide consistency between different clients to simultaneously provide access to visualization or editing virtual models. Ref. [89] proposed a service-oriented (SOA) web architecture that provides a runtime to support distributed services. This architecture contains a lookup discovery mechanism to provide healthcare services on the web platform. Ref. [90] proposed an innovative architecture that was an atomic model between peer-to-peer and the client–server to create a distributed MR. This architecture manages data collection from a sensor installed in real space and manages the resources and communication of the sensor node using an agent-based structure. 
In this architecture, the GUI agent displays the interface, the sensor agent collects data, and the rendering agent shares the scene with the MR device. The behavior agent requests new data. Such an architecture plays an important role in using the system in a dynamic environment. Ref. [91] proposed an architecture by which to use MR on the Internet of Things (IoT), based on the integration of oneM2M as a standard architecture for IoT platforms and three different use cases for the MR device, i.e., sensor data visualization, actuator control, and MR-sensor data transmission. This architecture combines Mobius as a oneM2M platform for IoT and HoloLens as an MR device. Ref. [92] proposed a client–server architecture that supports geospatial information system (GIS) spatial functions to display the model of an ancient building superimposed upon ruins. This architecture comprises three components: servers, mobile units, and networks. The server manages data storage and delivery, as well as the necessary processing, e.g., of user tracking. The mobile unit provides the MR experience for users through server connections. The network provides the connection between the mobile unit and the server.

The general architecture of an MR system is illustrated in Figure 6. The MR content includes useful data for MR applications, such as maps, 3D virtual models, and user context. User context contains data such as location. These data are obtained from external databases, sensors or wearable devices, and social networks. The server component is the core of the system; it manages the MR content, executes the necessary processes, and sends the result to the client through the network mechanism. Servers perform different analyses, such as image processing, user tracking, and 3D rendering, and set properties, such as the LOD. If necessary, additional data are provided to the server through the repository. A good example in this regard is weather data, which is obtained from online sources. The client visualizes the result for the user using mechanisms such as rendering and context management. Context management increases the level of inclusion of user preferences during rendering.

Figure 6.

General architecture of mixed reality system.

4.2. Server Issues

Different types of servers provide different benefits for the MR platform. Ref. [82] considered the virtual network computing (VNC) server in an MR application for classrooms. This server shares executable applications on the server for users (real students or virtual students). It also provides synchronization between the real-world (real-world student) and the virtual world (online student in a virtual classroom) to access identical slides during teaching with the avatar. Ref. [93] employed the UPnP media server in smart home applications to send media files (photos, videos, and images) for display on home screens. This mechanism uses UPnP to browse content and then provides content with access to the server. This mechanism has a lookup service that is able to locate and store content on the server for the client. Ref. [94] employed a web server with VRML and X3D to retrieve digital content (such as a virtual artifact) in a virtual museum. This server creates an appropriate template for the display result on a webpage using XSLT. It also allows access to the AR application to display the 3D result in real space. Ref. [39] employed web technology to create an ambient environment to enable the display of various information, such as maps or multimedia, using GPS data based on user positions. Ref. [95] employed a geospatial webserver to create 3D views for a 3D town project. This server allows the combination of various sources, such as urban 3D models stored in the geo-server and sensor information for tracking the urban mobility to visualize the urban change events, such as traffic. Ref. [96] employed a ubiquitous server in an airport application. This server contains a database of the object and its spatial relationships and tracking information, and provides tracking information for the creation of new relationships (such as the pattern of motion). 
This application is able to track the position of the guard to check passenger entry into a shop, and visualize the 3D object in the real scene.

4.3. Network Issues

The network is an important part of the MR architecture that provides communication between the client and the server through a configuration mechanism. Once the IP address of the network is obtained, each client can communicate with other clients and the server to access the MR package containing the virtual model [97]. In this mechanism, considering privacy issues is essential. Privacy in MR spaces involves two channels, i.e., real and virtual, which require separate mechanisms [81]. For example, when content is shared, access and encryption are important concerns; at the same time, it is important to understand who is inside the remote controller. The network performs the process by following a standard protocol. The network protocol (such as TCP/IP) provides the ability to combine various sources of tracking information, justification, registry, and other sources, such as AI and analytical engines, and then runs the online script [98]. Using the appropriate protocol is critical, especially when uploading a video file while the number of clients increases and the bandwidth decreases. Ref. [99] proposed a demand-driven approach (which has the challenge of delays), or solutions such as uploading video over separate wiring (which suffers from synchronization issues) and an IP multicast architecture based on 1-to-N communication. Structures such as distributed interactive simulation (DIS) take latency into account [100]. Apart from these issues, MR platforms require a new generation of networks that allow adaptation based on the individual user. For example, when a video file is sent, each user needs to focus on a specific area and content. This problem requires greater network bandwidth. Using a 5G system addresses the problem through massive multiple-input multiple-output (mMIMO) or broadband created by millimeter-wave systems [101].
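
A short worked example may clarify why 1-to-N multicast helps as the number of clients grows. The Python sketch below is a simplified model and not taken from [99]: it assumes every client receives the same stream at the same bitrate and ignores protocol overhead, so a unicast server sends one copy per client while a multicast server sends a single copy. The function names and the numbers in the usage note are illustrative assumptions.

```python
def server_uplink_mbps(num_clients, stream_mbps, multicast=False):
    """Server uplink needed to deliver one stream to all clients.

    Simplifying assumption: identical stream for every client and no
    protocol overhead.
    """
    if num_clients < 0 or stream_mbps < 0:
        raise ValueError("num_clients and stream_mbps must be non-negative")
    if num_clients == 0:
        return 0.0
    if multicast:
        return float(stream_mbps)  # one copy, replicated by the network
    return float(num_clients * stream_mbps)  # one copy per client


def max_unicast_clients(uplink_mbps, stream_mbps):
    """Largest number of unicast clients a given uplink can serve."""
    if stream_mbps <= 0:
        raise ValueError("stream_mbps must be positive")
    return int(uplink_mbps // stream_mbps)
```

With a hypothetical 5 Mbit/s stream and 20 clients, unicast needs 100 Mbit/s of uplink under this model, whereas multicast needs 5 Mbit/s regardless of the number of clients.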

Of course, despite the importance of the proposed architectures, studies on proposing new Web architecture are needed. This architecture supports concepts such as shape dynamics and geometries. This architecture provides more contextual data and discovers relevant information through associative browsing and orchestrating content to support data from different sources in a dynamic environment [87,88]. In distributed MR based on using sensor data, increasing the remote participant will require the use of several centralized controls and dynamic shared state management techniques that maintain interactivity [90]. Edge computing can be used to decrease delays and increase service quality while sending a portion of large data required for display. For example, we can refer to the MR network-oriented application that operates a robot through user gestures and displays location-aware information through the edge server. In this structure, one edge server is provided for the robot while another is used for user information. This architecture sends only the portion of the information required for display for the application [102].

5. Middleware

In this research, the middleware contains two subsections. The first part introduces the features of the middleware for MR support, and the second part provides examples of the developed middleware types.

Ref. [103] discussed the capabilities of MR middleware, such as networking to support multiple users; entering dynamic spaces in mobile, web, or head-up modes; display support to enter the interactive space containing the virtual space and real space; supporting both VR and AR capabilities and switching between them; supporting AI for animals and humanoid VR; and the ability to change the properties of virtual reality and the settings required for users. Different middleware structures have been proposed in previous studies. Ref. [104] used the virtual touch middleware, which creates a relationship between the sensor information and the virtual world. This middleware detects the object in real space using image processing and a sensing device, and then transmits this information via scripting to the virtual environment. Ref. [105] employed CORBA-based middleware to display media information based on the user’s location. The middleware facilitates the configuration and connection of the components to allow the selection of the best tool to display media. Ref. [106] proposed middleware based on the standard electronic product code (EPC) structure in learning applications. This middleware facilitates the configuration of the education format, virtual environment, and sensing technology. Ref. [107] proposed IoT-based middleware to generate global information to support MR applications. Ref. [108] proposed message-oriented middleware (MOM), which provides synchronization in response to the service, and then creates the ability to run appropriate messages regarding the order.
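
The ordering role attributed to message-oriented middleware can be illustrated with a small sketch. The Python code below is not the design of [108]; it assumes, hypothetically, that each message carries a sequence number starting at 0, and it buffers messages that arrive early so that consumers receive them in order. The class name `OrderedChannel` and its methods are illustrative.

```python
class OrderedChannel:
    """Delivers messages in sequence order, buffering early arrivals."""

    def __init__(self):
        self._next_seq = 0   # sequence number expected next
        self._pending = {}   # seq -> payload for messages that arrived early
        self.delivered = []  # payloads in delivery order

    def publish(self, seq, payload):
        # Ignore stale messages and duplicates of buffered ones.
        if seq < self._next_seq or seq in self._pending:
            return
        self._pending[seq] = payload
        # Flush every message that is now in order.
        while self._next_seq in self._pending:
            self.delivered.append(self._pending.pop(self._next_seq))
            self._next_seq += 1
```

In this sketch a message that arrives ahead of its predecessor simply waits in the buffer, so the MR application never acts on, for example, a "move" command before the corresponding "spawn" command.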

Despite the benefits of proposed middleware, they need to be improved. The response time is an important issue for this improvement. This concept is especially important in virtual touch middleware. In a common structure, users involve different messaging through middleware modules because of processing on different servers; therefore, these servers need to be installed on the same local network to achieve an acceptable response time; however, modern structures will facilitate the process [104]. The improvement in middleware helps support real-time capability for video streaming [105]. The improvement in middleware helps implement interactive middleware that supports media services [108].

6. Application

By choosing the components of the MR core application and deploying it with a proper architecture and simulation toolkits, an MR application is implemented. The MR application provides preferred services to users. To achieve this, an application first provides connectivity to sensing devices and MR devices such as an HMD installed on the user’s face, and enables user interaction by using applications through the UI. User interaction is possible in different ways, such as by voice commands. At this time, an application is performed and, hence, an appropriate analytical algorithm-related scenario is executed. The result of this process is an MR service that renders the result with appropriate visualization techniques. MR applications are practical for decision-making in daily activities.

MR provides great benefits for tourist applications by overcoming the limitations of common systems. MR applications provide immersive experiences by merging virtual and real objects. With this, the user can see a virtual representation of a ruined physical object (such as a 3D model) embedded in a real scene in real size. In this visualization, the details of the model and lighting are set according to the user’s position [92]. In such immersive experiences, the user can interact with the virtual model in real space, such as opening a door in a historical building. MR applications also handle displaying vivid scenes related to historical objects embedded in a real environment with immersive experiences. For example, a digital diorama visualizes the movement of a historical object, such as an old locomotive, by pointing to them in the mobile device [109], while AR-based applications only augment historical content such as images, videos, etc. with weak interactions.

MR-based game applications overcome the limitations of common systems. They provide interaction with game objects embedded in real scenes. For example, in a strategic game, the user moves a game agent on a board using a preferred method, such as voice commands. Then, the system shows the movement of the agent on the board in real space [110], while previous game applications just visualize the game in a digital environment, such as a PC or mobile device, without providing interaction with the game object in the real environment.

There are various limitations in common education systems that are overcome by MR concepts. MR-based applications provide an immersive experience for so-called embodied learning. Imagine that, in an astronomy application, the user moves on a board in a real environment and interacts with virtual objects such as asteroids. The user then perceives the external forces affecting the asteroid trajectory through embodied learning [111]. In contrast, in common learning systems, the user utilizes a PC or mobile device to observe astronomical objects without an immersive experience in a real environment.

MR opens new capabilities for medical applications with fewer limitations compared to non-MR tools. In MR-based applications all medical data are displayed with the proper size. This information is embedded in the real scene, such as a surgery room. The user, such as a surgeon, can interact with this information using a surgical device. A good example is an MR guidance platform for mitral valve repair surgery. This seamless platform contains the integration of the heart anatomy model, image data, surgery tools, and display devices in the real environment. This application is used as a guidance platform for the surgeon during the mitral repair operation [112]. In contrast, in AR-based medical applications, information is simply overlaid on the patient’s body without preserving the actual size for an accurate visualization process.

MR provides great benefits in design applications that overcome the limitations of common systems. MR applications create a collaborative environment for design. In such an environment, multiple users can see the virtual object of the design, such as a 3D model. This model is embedded in a real environment with proper size. The user can interact with this model to manipulate it (through moving, tilting, and rotating) using MR devices such as HoloLens [113]. In contrast, in previous design applications, the user could see the design model in design software without interacting with it or observing the visualization embedded in real space.

7. UI

As discussed in the previous section, every MR application runs through a user interface (UI) that connects the user and the application. The user interacts with the UI using an interaction method. To increase the quality of UI designs, it is important to evaluate user satisfaction; this evaluation is called the usability test. This section first introduces important UI features, and then introduces the conventional techniques used to evaluate the usability of the MR UI.

7.1. UI Issue

In MR applications, the UI has moved away from the traditional WIMP (Windows, Icons, Menus, and Pointing) mode. This interface uses wearable technologies and different display mechanisms that superimpose virtual content on the environment. Such systems use user tracking to provide a service at the user’s location. These interfaces are multimodal, enabling the user to switch between different modes, such as from web-based 2D or 3D to VR or AR mode. This benefit enhances the rendering result for the user [94,114,115]. The user interacts with the UI using different interaction strategies such as gesture-based or audio-based interaction. This simplifies communication for the user. The emergence of multiscale MR also created multiscale interaction using recursive scaling. This is important, especially in learning applications. Imagine that a virtual user, such as a student, can change their size during interaction with virtual objects in the learning environment. The student can shrink to the microscale to observe cellular mechanisms, or enlarge again in the MR environment to interact with a virtual astronomical object.
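One possible reading of recursive scaling can be sketched as a chain of nested scale levels, where the size of a unit at each level is defined relative to its parent and the absolute scale is found by recursing up the chain. This is only an illustrative sketch and not the method of any cited work; the names `ScaleLevel`, `world_scale`, and `apparent_size`, as well as the scale factors, are assumptions introduced here.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ScaleLevel:
    """A hypothetical scale level in a multiscale MR scene."""
    name: str
    relative_scale: float                 # size of one local unit, in parent units
    parent: Optional["ScaleLevel"] = None

    def world_scale(self) -> float:
        """Metres per local unit, obtained by recursing up the parent chain."""
        if self.parent is None:
            return self.relative_scale
        return self.relative_scale * self.parent.world_scale()


def apparent_size(object_size_m: float, user_level: ScaleLevel) -> float:
    """Size of an object as measured in the user's current units."""
    return object_size_m / user_level.world_scale()


# Assumed example levels: a room at 1 m per unit, then two nested 1/1000 steps.
room = ScaleLevel("room", 1.0)
tissue = ScaleLevel("tissue", 1e-3, parent=room)
cell = ScaleLevel("cell", 1e-3, parent=tissue)

# A 10-micrometre cell appears about 10 units wide to a student at the "cell" level.
size_for_student = apparent_size(1e-5, cell)
```

Under these assumptions, shrinking or enlarging the student amounts to moving the user between levels, while the virtual objects keep their sizes in metres.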

Adapting the UI to display the appropriate virtual elements is important, because it provides ease of use within an MR application. This can be performed with techniques such as optimization, which enables the creation of rules that decide when, where, and how to display each virtual element. The aim is to show as many elements and details as possible at the lowest cognitive load. Ref. [116] offered a combination of rule-based decision-making and optimization for UI adaptation using the virtual element level of detail (LOD) and cognitive load.
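The idea of combining a rule with an optimization step can be illustrated with a minimal sketch. It is not the formulation used in Ref. [116]; it simply assumes that each virtual element has a task relevance and a cognitive cost per LOD, hides elements below a relevance threshold (the rule), and then greedily raises LODs while a cognitive load budget allows (the optimization). All names, costs, and thresholds below are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Element:
    """A hypothetical virtual UI element."""
    name: str
    relevance: float      # assumed task relevance in [0, 1]
    lod_costs: tuple      # assumed cumulative cognitive cost per LOD; index 0 = hidden


def adapt_ui(elements, load_budget, min_relevance=0.2):
    """Choose an LOD per element so that the total cost stays within load_budget."""
    lod = {e.name: 0 for e in elements}
    # Rule-based step: elements below the relevance threshold stay hidden.
    candidates = [e for e in elements if e.relevance >= min_relevance]
    used = 0.0
    # Greedy optimization step: repeatedly apply the single LOD upgrade with the
    # best relevance per unit of extra cost, until no upgrade fits the budget.
    while True:
        best = None
        for e in candidates:
            cur = lod[e.name]
            if cur + 1 >= len(e.lod_costs):
                continue  # already at the highest LOD
            extra = e.lod_costs[cur + 1] - e.lod_costs[cur]
            if used + extra > load_budget:
                continue  # upgrade would exceed the cognitive load budget
            score = e.relevance / extra if extra > 0 else float("inf")
            if best is None or score > best[0]:
                best = (score, e, extra)
        if best is None:
            return lod
        _, chosen, extra = best
        lod[chosen.name] += 1
        used += extra


elements = [
    Element("route", 0.9, (0, 1, 3)),
    Element("label", 0.5, (0, 1, 2)),
    Element("banner", 0.1, (0, 1, 2)),
]
plan = adapt_ui(elements, load_budget=3)
```

In this toy setting, the low-relevance banner is never shown, and the remaining budget is spent where relevance per unit of cognitive cost is highest. A greedy pass is only a heuristic; an exact solver could replace it without changing the interface.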

Ref. [7] discussed the importance of user-friendliness in UI design. User-friendliness is related to concepts such as display and interaction. MR applications use different display techniques, as discussed in the visualization and rendering section, to visualize the virtual content embedded in real space. MR applications interact with the user through appropriate interaction methods, and the selection of the interaction method depends on the application. For example, in construction work, interaction using an HMD is preferable to interaction using a mobile device. The HMD does not occupy the user’s hands; hence, the worker can use it easily in complex tasks, such as pipeline assembly.

While the UI is important for appropriate user interaction, designing it involves many challenges. UI design needs both software and hardware development. Transferring information from traditional 3D rendering to the MR environment requires the application of registration, display, and occlusion management techniques. For mobile UIs, computational power and capacity management need to be considered [7]. User interaction with the UI needs to support dynamic multimodal interaction. This is performed using different inputs such as standard, sensor, and tangible inputs [94]. Sound-based interaction needs to be improved using additional sound sources and sound recognition methods to support multi-user interaction [115]. UI adaptation involves problems such as the limited field of view (FOV) of the MR headset and cognitive load estimation. The FOV influences how much information is displayed. Cognitive load estimation requires techniques that utilize sensor fusion to handle sudden interruptions and errors during this estimation [116].

7.2. Usability

The usability test evaluates the MR UI concerning the compatibility and quality of virtual object visualization [117]. Usability can be evaluated in a variety of ways. Ref. [8] suggested questionnaires/interviews, methods, and user testing based on ergonomic evaluation methods. Refs. [72,97] suggested the combination of heuristic evaluation and formative user testing in collaborative MR.

Although usability assessment is an important issue for MR UI design, it faces several challenges. Ref. [8] discussed different challenges, such as limitations due to physical space (e.g., temperature change during the video-projected example), subject restrictions (e.g., when different sections must be evaluated, such as the use of gesture and voice), and the setup of user testing (because the user may not know all the parts of the system and learning may take time), along with the existence of different events (such as multiple video files or multiple modes).

8. Conclusions and Future Research

This research surveys previously published papers to propose a comprehensive framework comprising the various components of MR applications, with a focus on current trends, challenges, and future prospects. The framework comprises five layers: the concept layer, architecture layer, middleware layer, application layer, and UI layer. The first layer concerns the components, the second and third layers describe issues related to architecture, the fourth layer concerns the execution of the architecture, and the final layer discusses the UI design used to execute the application with user interaction. The proposed framework considers important and practical points. It can serve as a guide to the MR space, as it discusses different issues related to MR systems, such as the various development phases, analytical models, the simulation toolkit, and system types and architectures. The MR examples considered in this research were chosen from the practical perspective of different stakeholders.

Several research directions remain open for continuing this work. MR applications need an appropriate strategy to handle dynamic effects, such as sudden environmental changes and the movement of objects. Improving MR mobile applications requires further research on new computer graphics algorithms that handle automatic environment construction and large data visualization, with a focus on close-to-reality simulation of important scenes. Large-scale MR needs to incorporate security mechanisms owing to the presence of different levels of information, such as organizations, social network data, virtual objects, users, and environmental sensors. Enriching MR content using IoT requires new architectures to handle the complexity of MR integration within the IoT platform. The design of future MR systems needs to consider interface automation, which provides adaptability for the user. All of the aforementioned domains need to consider new strategies based on spatial analyses, with methods such as Delaunay triangulation and Voronoi diagrams, to handle memory capacity and increase the quality of MR content reconstruction.

Author Contributions

Conceptualization, S.R. and A.S.-N.; methodology, S.R., A.S.-N. and S.-M.C.; validation, S.-M.C.; formal analysis, S.R.; investigation, A.S.-N.; resources, S.R.; data curation, S.R.; writing—original draft preparation, S.R.; writing—review and editing, A.S.-N. and S.-M.C.; visualization, S.R. and A.S.-N.; supervision, A.S.-N. and S.-M.C.; project administration, S.-M.C.; funding acquisition, S.-M.C. All authors have read and agreed to the published version of the manuscript.

Funding

This research was supported by the MSIT (Ministry of Science and ICT), Korea, under the ITRC (Information Technology Research Center) support program (IITP-2019-2016-0-00312) supervised by the IITP (Institute for Information and Communications Technology Planning and Evaluation).

Conflicts of Interest

The authors declare no conflict of interest.

References

- Jiang, H. Mobile Fire Evacuation System for Large Public Buildings Based on Artificial Intelligence and IoT. IEEE Access 2019, 7, 64101–64109.

- Hoenig, W.; Milanes, C.; Scaria, L.; Phan, T.; Bolas, M.; Ayanian, N. Mixed reality for robotics. In Proceedings of the IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Hamburg, Germany, 28 September–2 October 2015; IEEE: Piscataway, NJ, USA, 2015.

- Hashimoto, S.; Tan, J.K.; Ishikawa, S. A method of creating a transparent space based on mixed reality. Artif. Life Robot. 2007, 11, 57–60.

- Flavián, C.; Ibáñez-Sánchez, S.; Orús, C. The impact of virtual, augmented and mixed reality technologies on the customer experience. J. Bus. Res. 2019, 100, 547–560.

- Costanza, E.; Kunz, A.; Fjeld, M. Mixed reality: A survey. In Human Machine Interaction; Springer: Berlin/Heidelberg, Germany, 2009; pp. 47–68.

- De Guzman, J.A.; Thilakarathna, K.; Seneviratne, A. Security and privacy approaches in mixed reality: A literature survey. arXiv 2018, arXiv:1802.05797.

- Cheng, J.C.; Chen, K.; Chen, W. State-of-the-Art Review on Mixed Reality Applications in the AECO Industry. J. Constr. Eng. Manag. 2019, 146, 03119009.

- Bach, C.; Scapin, D.L. Obstacles and perspectives for evaluating mixed reality systems usability. In Acte du Workshop MIXER, IUI-CADUI; Citeseer: Funchal, Portugal, 2004.

- Jacobs, K.; Loscos, C. Classification of illumination methods for mixed reality. In Computer Graphics Forum; Wiley Online Library: Hoboken, NJ, USA, 2006.

- Stretton, T.; Cochrane, T.; Narayan, V. Exploring mobile mixed reality in healthcare higher education: A systematic review. Res. Learn. Technol. 2018, 26, 2131.

- Murphy, K.M.; Cash, J.; Kellinger, J.J. Learning with avatars: Exploring mixed reality simulations for next-generation teaching and learning. In Handbook of Research on Pedagogical Models for Next-Generation Teaching and Learning; IGI Global: Hershey, PA, USA, 2018; pp. 1–20.

- Brigham, T.J. Reality check: Basics of augmented, virtual, and mixed reality. Med. Ref. Serv. Q. 2017, 36, 171–178.

- Ohta, Y.; Tamura, H. Mixed Reality: Merging Real and Virtual Worlds; Springer Publishing: New York, NY, USA, 2014.

- Aruanno, B.; Garzotto, F. MemHolo: Mixed reality experiences for subjects with Alzheimer’s disease. Multimed. Tools Appl. 2019, 78, 13517–13537.

- Chen, Z.; Wang, Y.; Sun, T.; Gao, X.; Chen, W.; Pan, Z.; Qu, H.; Wu, Y. Exploring the design space of immersive urban analytics. Vis. Inform. 2017, 1, 132–142.

- Florins, M.; Trevisan, D.G.; Vanderdonckt, J. The continuity property in mixed reality and multiplatform systems: A comparative study. In Computer-Aided Design of User Interfaces IV; Springer: Berlin/Heidelberg, Germany, 2005; pp. 323–334.

- Fotouhi-Ghazvini, F.; Earnshaw, R.; Moeini, A.; Robison, D.; Excell, P. From E-Learning to M-Learning-The use of mixed reality games as a new educational paradigm. Int. J. Interact. Mob. Technol. 2011, 5, 17–25.

- Milgram, P.; Kishino, F. A taxonomy of mixed reality visual displays. IEICE Trans. Inf. Syst. 1994, 77, 1321–1329.

- Nawahdah, M.; Inoue, T. Setting the best view of a virtual teacher in a mixed reality physical-task learning support system. J. Syst. Softw. 2013, 86, 1738–1750.

- Olmedo, H. Virtuality Continuum’s State of the Art. Procedia Comput. Sci. 2013, 25, 261–270.

- Wang, K.; Iwai, D.; Sato, K. Supporting trembling hand typing using optical see-through mixed reality. IEEE Access 2017, 5, 10700–10708.

- Parveau, M.; Adda, M. 3iVClass: A new classification method for virtual, augmented and mixed realities. Procedia Comput. Sci. 2018, 141, 263–270.

- Rosenberg, L.B. The Use of Virtual Fixtures as Perceptual Overlays to Enhance Operator Performance in Remote Environments. In Stanford University Ca Center for Design Research; Stanford University: Stanford, CA, USA, 1992.

- McMillan, K.; Flood, K.; Glaeser, R. Virtual reality, augmented reality, mixed reality, and the marine conservation movement. Aquat. Conserv. Mar. Freshw. Ecosyst. 2017, 27, 162–168.

- Potemin, I.S.; Zhdanov, A.; Bogdanov, N.; Zhdanov, D.; Livshits, I.; Wang, Y. Analysis of the visual perception conflicts in designing mixed reality systems. In Proceedings of the Optical Design and Testing VIII, International Society for Optics and Photonics, Beijing, China, 5 November 2018.

- Claydon, M. Alternative Realities: From Augmented Reality to Mobile Mixed Reality. Master’s Thesis, University of Tampere, Tampere, Finland, 2015.

- Kress, B.C.; Cummings, W.J. 11–1: Invited paper: Towards the ultimate mixed reality experience: HoloLens display architecture choices. In SID Symposium Digest of Technical Papers; Wiley Online Library: Hoboken, NJ, USA, 2017.

- Zhang, Z.; Li, Y.; Guo, J.; Weng, D.; Liu, Y.; Wang, Y. Task-driven latent active correction for physics-inspired input method in near-field mixed reality applications. J. Soc. Inf. Disp. 2018, 26, 496–509.

- Nakano, M.; Li, S.; Chiba, N. Calibration of fish-eye camera for acquisition of spherical image. Syst. Comput. Jpn. 2007, 38, 10–20.

- Lee, S.C.; Fuerst, B.; Fotouhi, J.; Fischer, M.; Osgood, G.; Navab, N. Calibration of RGBD camera and cone-beam CT for 3D intra-operative mixed reality visualization. Int. J. Comput. Assist. Radiol. Surg. 2016, 11, 967–975.

- Groves, L.; Li, N.; Peters, T.M.; Chen, E.C. Towards a Mixed-Reality First Person Point of View Needle Navigation System. In International Conference on Medical Image Computing and Computer-Assisted Intervention; Springer: Berlin/Heidelberg, Germany, 2019.