Anthropomorphic AI and Consumer Skepticism: A Behavioral Study of Trust and Adoption in Fragile Economies

Abstract

1. Introduction

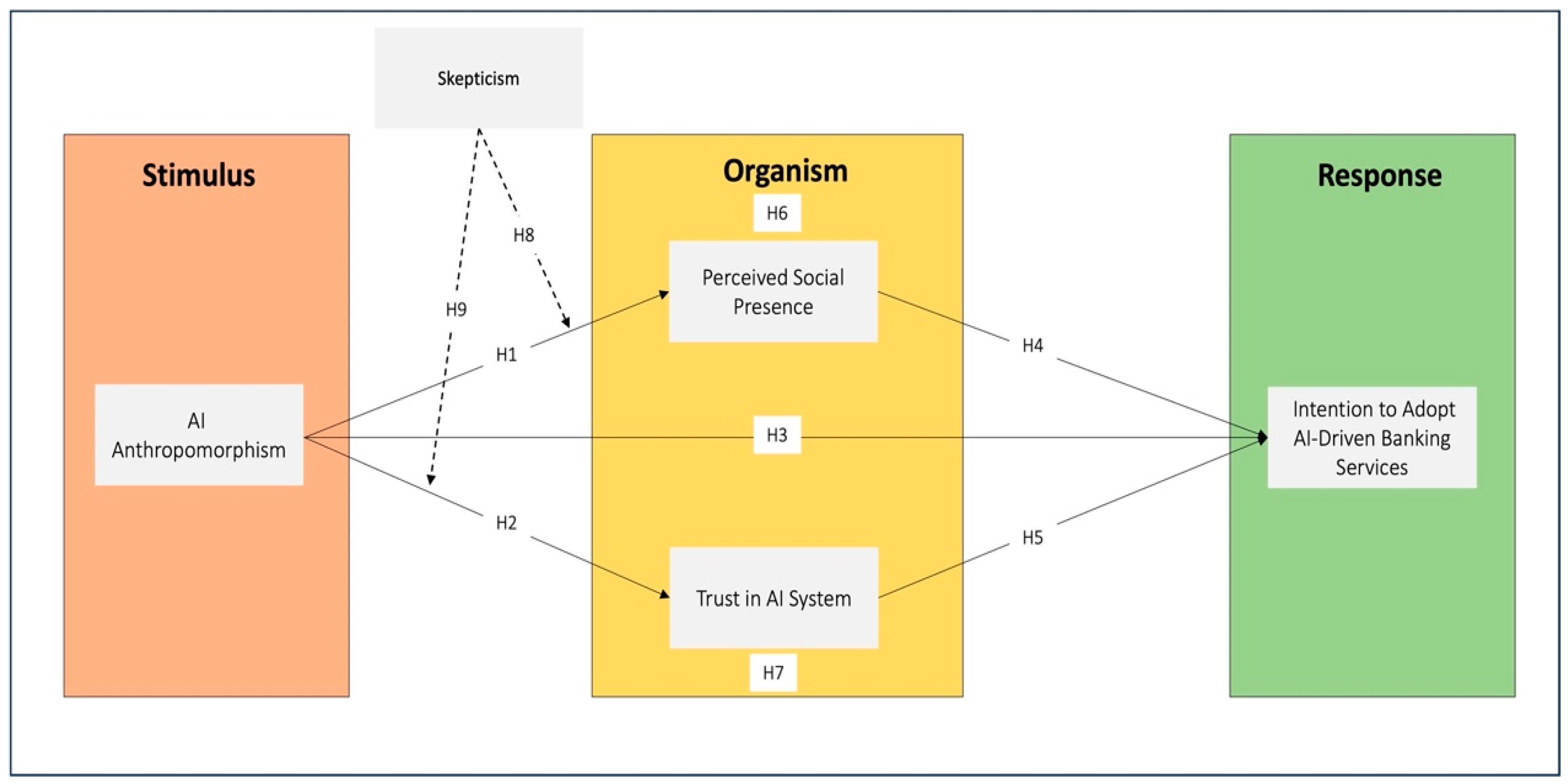

- How does AI anthropomorphism influence customers’ intention to adopt AI-driven banking services?

- To what extent are the effects of AI anthropomorphism influenced by perceived social presence and trust in the AI system?

- How does customer skepticism moderate the psychological pathways linking anthropomorphism to adoption intention?

2. Literature Review and Hypotheses

2.1. Theoretical Framework

2.2. Hypotheses Development and Conceptual Framework

2.2.1. AI Anthropomorphism as a Stimulus in Digital Service Environments

2.2.2. The Role of Perceived Social Presence

2.2.3. The Role of Trust in AI

2.2.4. The Mediating Role of Perceived Social Presence and Trust in AI System Outcomes

2.2.5. The Moderating Role of Skepticism

2.3. Synthesis of Hypotheses

3. Methodology

3.1. Research Strategy and Design

3.2. Population and Sampling Procedure

3.3. Measurement Scales

3.4. Data Collection and Analysis

4. Results

4.1. Respondents’ Descriptive Analysis

4.2. Assessment of Measurement Model

4.2.1. Structural Model Predictive Power

4.2.2. Common Method Bias (CMB)

4.3. Hypothesis Results

4.3.1. Direct Effects

4.3.2. Mediation Effects

4.3.3. Moderation Effects

4.3.4. Robustness Analysis

5. Discussion

5.1. AI Anthropomorphism and Adoption Intention

5.2. The Dual Mediating Pathways: Social Presence and Trust

5.3. The Contingent Role of Skepticism

6. Implications of the Study

6.1. Theoretical Implications

6.2. Practical Implications

6.3. Research Limitations and Future Research

7. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Appendix A. Measurement Scales

| Variable | Items | Source |
|---|---|---|
| AI Anthropomorphism | The AI assistant seems to have human-like qualities. | (Bartneck et al., 2009) |
| | The way the AI speaks sounds warm and friendly, like a person. | |
| | The AI assistant appears to understand my emotions. | |
| | The AI has a personality that feels real. | |
| | The AI explains decisions in plain language you understand. | |
| Perceived Social Presence | I feel like I am interacting with a real person. | (Gefen & Straub, 2004) |
| | The AI assistant gives me a sense of being “together” during our interaction. | |
| | I feel that the AI is attentive to what I say. | |
| | The interaction feels socially engaging, not mechanical. | |
| Trust in the AI System | Even though data laws are weak here, this AI tries to protect my information. | (Chowdhury et al., 2022) |
| | The AI is competent in handling my banking needs. | |
| | I feel confident that the AI will act in my best interest. | |
| | The AI is honest and truthful in its responses. | |
| | I can rely on the AI to perform as expected. | |
| Intention to Adopt AI-Driven Banking Services | I intend to use AI-driven banking services in the future. | (Liu et al., 2022) |
| | I plan to rely on AI for my banking inquiries. | |
| | I would recommend using AI banking services to others. | |
| | I will continue using AI banking services if available. | |
| | I am willing to adopt new AI features offered by my bank. | |
| Skepticism Toward AI | I doubt that an AI can truly understand my financial needs. | (Zhang et al., 2016) |
| | I am suspicious about how my data is used by AI banking systems. | |
| | I believe AI banking services are more about corporate control than customer benefit. | |

References

- Aguinis, H., Gottfredson, R. K., & Joo, H. (2013). Best-practice recommendations for defining, identifying, and handling outliers. Organizational Research Methods, 16(2), 270–301.

- Akanfe, O., Bhatt, P., & Lawong, D. A. (2025). Technology advancements shaping the financial inclusion landscape: Present interventions, emergence of artificial intelligence and future directions. Information Systems Frontiers, 27(5), 2189–2212.

- Alzubaidi, L., Al-Sabaawi, A., Bai, J., Dukhan, A., Alkenani, A. H., Al-Asadi, A., Alwzwazy, H. A., Manoufali, M., Fadhel, M. A., Albahri, A. S., Moreira, C., Ouyang, C., Zhang, J., Santamaría, J., Salhi, A., Hollman, F., Gupta, A., Duan, Y., Rabczuk, T., … Gu, Y. (2023). Towards risk-free trustworthy artificial intelligence: Significance and requirements. International Journal of Intelligent Systems, 2023(1), 4459198.

- Bartneck, C., Kulić, D., Croft, E., & Zoghbi, S. (2009). Measurement instruments for the anthropomorphism, animacy, likeability, perceived intelligence, and perceived safety of robots. International Journal of Social Robotics, 1(1), 71–81.

- Bhatnagr, P., & Rajesh, A. (2025). Artificial intelligence features and expectation confirmation theory in digital banking apps: Gen Y and Z perspective. Management Decision, 63(10), 3642–3675.

- Bhatnagr, P., Rajesh, A., & Misra, R. (2024). Continuous intention usage of artificial intelligence enabled digital banks: A review of expectation confirmation model. Journal of Enterprise Information Management, 37(6), 1763–1787.

- Blut, M., Wang, C., Wünderlich, N. V., & Brock, C. (2021). Understanding anthropomorphism in service provision: A meta-analysis of physical robots, chatbots, and other AI. Journal of the Academy of Marketing Science, 49(4), 632–658.

- Brandizzi, N. (2023). Toward more human-like AI communication: A review of emergent communication research. IEEE Access, 11, 142317–142340.

- Brislin, R. W. (1970). Back-translation for cross-cultural research. Journal of Cross-Cultural Psychology, 1(3), 185–216.

- Calhoun, C. S., Bobko, P., Gallimore, J. J., & Lyons, J. B. (2019). Linking precursors of interpersonal trust to human-automation trust: An expanded typology and exploratory experiment. Journal of Trust Research, 9(1), 28–46.

- Chae, M.-J., & Kim, M. (2026). Too human to trust? How AI human-likeness and context orientation shape consumer preferences in premium high-tech markets. Journal of Retailing and Consumer Services, 88, 104513.

- Chang, W.-L., Chan, C. L., & Hsieh, Y.-H. (2025). Antecedents of user satisfaction and trust in digital banking: An examination of the ISSM factors. International Journal of Bank Marketing, 43(8), 1756–1778.

- Cheng, X., Zhang, X., Cohen, J., & Mou, J. (2022). Human vs. AI: Understanding the impact of anthropomorphism on consumer response to chatbots from the perspective of trust and relationship norms. Information Processing & Management, 59(3), 102940.

- Chowdhury, S., Budhwar, P., Dey, P. K., Joel-Edgar, S., & Abadie, A. (2022). AI-employee collaboration and business performance: Integrating knowledge-based view, socio-technical systems and organisational socialisation framework. Journal of Business Research, 144, 31–49.

- Couldry, N., & Mejias, U. A. (2019). Data colonialism: Rethinking big data’s relation to the contemporary subject. Television & New Media, 20(4), 336–349.

- Curtis, R. G., Bartel, B., Ferguson, T., Blake, H. T., Northcott, C., Virgara, R., & Maher, C. A. (2021). Improving user experience of virtual health assistants: Scoping review. Journal of Medical Internet Research, 23(12), e31737.

- da Costa Filho, M. C. M., & da Costa Hernandez, J. M. (2025). The influence of consumer skepticism toward online reviews in product evaluations. International Journal of Electronic Commerce, 29(4), 528–556.

- De Freitas, J., Oğuz-Uğuralp, Z., Uğuralp, A. K., & Puntoni, S. (2025). AI companions reduce loneliness. Journal of Consumer Research, ucaf040.

- Dinev, T., & Hart, P. (2006). An extended privacy calculus model for e-commerce transactions. Information Systems Research, 17(1), 61–80.

- Donner, J. (2015). After access: Inclusion, development, and a more mobile Internet. MIT Press.

- Gefen, D., & Straub, D. W. (2004). Consumer trust in B2C e-commerce and the importance of social presence: Experiments in e-products and e-services. Omega, 32(6), 407–424.

- Greilich, A., Bremser, K., & Wüst, K. (2025). Consumer response to anthropomorphism of text-based AI chatbots: A systematic literature review and future research directions. International Journal of Consumer Studies, 49(5), e70108.

- Grover, V., & Lyytinen, K. (2023). The pursuit of innovative theory in the digital age. Journal of Information Technology, 38(1), 45–59.

- Hair, J. F., Jr., Hult, G. T. M., Ringle, C. M., Sarstedt, M., Danks, N. P., & Ray, S. (2021). Partial least squares structural equation modeling (PLS-SEM) using R: A workbook. Springer Nature.

- Kamara, A. K., & Oppong, E. O. (2025). Mobile FinTech and financial inclusion in sub-Saharan Africa: A comparative analysis. African Journal of Science, Technology, Innovation and Development, 17(7), 1051–1063.

- Kandampully, J., Bilgihan, A., & Amer, S. M. (2023). Linking servicescape and experiencescape: Creating a collective focus for the service industry. Journal of Service Management, 34(2), 316–340.

- Keating, B. W., Mulcahy, R., Riedel, A., Beatson, A., & Letheren, K. (2025). Designing AI to elicit positive word-of-mouth in service recovery: The role of stress, anthropomorphism, and personal resources. International Journal of Information Management, 84, 102916.

- Kebe, I. A., Kahl, C., & Liu, Y. (2024). Charting success: The influence of leadership styles on driving sustainable employee performance in the Sierra Leonean banking sector. Sustainability, 16(21), 9600.

- Königstorfer, F., & Thalmann, S. (2020). Applications of Artificial Intelligence in commercial banks—A research agenda for behavioral finance. Journal of Behavioral and Experimental Finance, 27, 100352.

- Kuen, L., Westmattelmann, D., Bruckes, M., & Schewe, G. (2023). Who earns trust in online environments? A meta-analysis of trust in technology and trust in provider for technology acceptance. Electronic Markets, 33(1), 61.

- Kumar, S., Jain, R., & Sharma, A. (2025). Anthropomorphic artificial intelligence drives consumer behavior: Comprehensive literature review and research agenda. Journal of Internet Commerce, 24(4), 185–223.

- Li, J., Wang, N., & Wang, Y. (2025). The double-edged sword effect of generative AI anthropomorphism on users’ emotional attachment: The moderating role of task types. Aslib Journal of Information Management, 1–24.

- Li, T., Wang, M., & Wang, F. (2025). Anthropomorphism of artificial intelligence service agent and consumer responses: A systematic literature review and future research agenda. International Journal of Consumer Studies, 49(3), e70066.

- Li, Y., Zhou, X., Jiang, X., Fan, F., & Song, B. (2024). How service robots’ human-like appearance impacts consumer trust: A study across diverse cultures and service settings. International Journal of Contemporary Hospitality Management, 36(9), 3151–3167.

- Liu, C.-F., Chen, Z.-C., Kuo, S.-C., & Lin, T.-C. (2022). Does AI explainability affect physicians’ intention to use AI? International Journal of Medical Informatics, 168, 104884.

- Maduku, D. K. (2016). The effect of institutional trust on internet banking acceptance: Perspectives of South African banking retail customers. South African Journal of Economic and Management Sciences, 19(4), 533–548.

- Mayer, R. C., Davis, J. H., & Schoorman, F. D. (1995). An integrative model of organizational trust. Academy of Management Review, 20(3), 709–734.

- Mcknight, D. H., Carter, M., Thatcher, J. B., & Clay, P. F. (2011). Trust in a specific technology. ACM Transactions on Management Information Systems, 2(2), 1–25.

- Mehrabian, A., & Russell, J. A. (1974). An approach to environmental psychology. The MIT Press.

- Meikle, N. L., & Bonner, B. L. (2024). Unaware and unaccepting: Human biases and the advent of artificial intelligence. American Psychological Association.

- Ministry of Finance—Government of Sierra Leone. (2023). Digital financial services in Sierra Leone. Available online: https://mof.gov.sl/wp-content/uploads/2024/11/Digital-Financial-Services-in-Sierra-Leone.pdf (accessed on 7 January 2026).

- Nass, C., & Moon, Y. (2000). Machines and mindlessness: Social responses to computers. Journal of Social Issues, 56(1), 81–103.

- North, D. C. (1990). Institutions, institutional change and economic performance. Cambridge University Press.

- Ofori-Okyere, I., & Edghiem, F. (2026). An exploration of financial services digital encounters in developing countries. Journal of Services Marketing, 1–28.

- Okeke, L. (2025). AI-powered chatbots and customer experience in Nigeria’s banking sector: Opportunities and challenges. Nnadiebube Journal of Social Sciences, 6(1), 71–88.

- Pan, S., Qin, Z., & Zhang, Y. (2024). More realistic, more better? How anthropomorphic images of virtual influencers impact the purchase intentions of consumers. Journal of Theoretical and Applied Electronic Commerce Research, 19(4), 3229–3252.

- Peter, S., Riemer, K., & West, J. D. (2025). The benefits and dangers of anthropomorphic conversational agents. Proceedings of the National Academy of Sciences, 122(22), e2415898122.

- Podsakoff, P. M., MacKenzie, S. B., Lee, J.-Y., & Podsakoff, N. P. (2003). Common method biases in behavioral research: A critical review of the literature and recommended remedies. Journal of Applied Psychology, 88(5), 879–903.

- Podsakoff, P. M., MacKenzie, S. B., & Podsakoff, N. P. (2012). Sources of method bias in social science research and recommendations on how to control it. Annual Review of Psychology, 63(1), 539–569.

- Riedel, A., Mulcahy, R., & Northey, G. (2022). Feeling the love? How consumer’s political ideology shapes responses to AI financial service delivery. International Journal of Bank Marketing, 40(6), 1102–1132.

- Schrank, J. (2025). The impact of artificial intelligence on behavioral intentions to use mobile banking in the post-COVID-19 era. Frontiers in Artificial Intelligence, 8, 1649392.

- Truong, T. T. H., & Chen, J. S. (2025). When empathy is enhanced by human–AI interaction: An investigation of anthropomorphism and responsiveness on customer experience with AI chatbots. Asia Pacific Journal of Marketing and Logistics, 37(12), 3908–3925.

- Tsekouras, D., Gutt, D., & Heimbach, I. (2024). The robo bias in conversational reviews: How the solicitation medium anthropomorphism affects product rating valence and review helpfulness. Journal of the Academy of Marketing Science, 52(6), 1651–1672.

- van Norren, D. E. (2023). The ethics of artificial intelligence, UNESCO and the African Ubuntu perspective. Journal of Information, Communication and Ethics in Society, 21(1), 112–128.

- Vazquez, E. E., Patel, C., Alvidrez, S., & Siliceo, L. (2023). Images, reviews, and purchase intention on social commerce: The role of mental imagery vividness, cognitive and affective social presence. Journal of Retailing and Consumer Services, 74, 103415.

- Venkatesh, V., Morris, M. G., Davis, G. B., & Davis, F. D. (2003). User acceptance of information technology: Toward a unified view. MIS Quarterly, 27(3), 425–478.

- von Kalckreuth, N., & Feufel, M. A. (2023). Extending the privacy calculus to the mHealth domain: Survey study on the intention to use mHealth apps in Germany. JMIR Human Factors, 10, e45503.

- von Schenk, A., Klockmann, V., & Köbis, N. (2025). Social preferences toward humans and machines: A systematic experiment on the role of machine payoffs. Perspectives on Psychological Science, 20(1), 165–181.

- Wakunuma, K., Ogoh, G., Akintoye, S., & Eke, D. O. (2025). Decoloniality as an essential trustworthy AI requirement. In Trustworthy AI (pp. 255–276). Springer Nature Switzerland.

- Wang, J., Zhou, Z., Xu, S., Yan, X., Zhang, Y., & Morrison, A. M. (2025). The importance of human touch: How robot anthropomorphism impacts customer engagement in tourism and hospitality. Journal of Vacation Marketing, 13567667251367435.

- Wang, Z., Yuan, R., Li, B., Kumar, V., & Kumar, A. (2026). An empirical study of AI financial advisor adoption through technology vulnerabilities in the financial context. Journal of Product Innovation Management, 43(1), 14–30.

- Yu, D., Zhao, J., Tang, R., Han, C., & Yang, M. (2025). Unlocking the service attractiveness of AI assistants: Does multi-modal anthropomorphic interaction dynamically manipulate users’ mindset metrics? Journal of Consumer Behaviour, 24(6), 2772–2792.

- Zhang, X., Ko, M., & Carpenter, D. (2016). Development of a scale to measure skepticism toward electronic word-of-mouth. Computers in Human Behavior, 56, 198–208.

- Zhou, T., & Ma, X. (2025). Examining generative AI user continuance intention based on the SOR model. Aslib Journal of Information Management.

- Zucker, L. G. (1986). Production of trust: Institutional sources of economic structure, 1840–1920. Research in Organizational Behavior, 8, 53–111.

| Characteristic | Category | n | % |
|---|---|---|---|
| Gender | Male | 117 | 42.2 |
| | Female | 160 | 57.8 |
| Age | 18–25 years | 39 | 14.1 |
| | 26–35 years | 70 | 25.3 |
| | 36–45 years | 128 | 46.2 |
| | 46+ years | 40 | 14.4 |
| Education Level | Secondary or below | 25 | 9.0 |
| | Diploma/Certificate | 73 | 26.4 |
| | Bachelor’s degree or higher | 179 | 64.6 |
| Employment Status | Employed (formal sector) | 137 | 49.5 |
| | Self-employed/Informal | 84 | 30.3 |
| | Unemployed/Student/Others | 56 | 20.2 |
| Smartphone Ownership | Yes | 277 | 100.0 |
| Bank Type Used | Domestic commercial bank | 93 | 33.6 |
| | Foreign commercial bank | 217 | 78.3 |
| Prior Experience with AI Banking Services | Used chatbot/virtual assistant | 189 | 68.2 |
| | Heard of but never used | 88 | 31.8 |
| Region | Freetown (Western Area) | 150 | 54.2 |
| | Makeni (Northern Province) | 44 | 15.9 |
| | Bo (Southern Province) | 45 | 16.2 |
| | Kenema (Eastern Province) | 38 | 13.7 |

| Construct | Item | VIF | Factor loading (FL) | Cronbach’s alpha (CA) | Composite reliability (CR) | Average variance extracted (AVE) |
|---|---|---|---|---|---|---|
| AI Anthropomorphism | | | | 0.840 | 0.850 | 0.608 |
| | AIA1 | 1.743 | 0.744 | | | |
| | AIA2 | 1.940 | 0.785 | | | |
| | AIA3 | 1.768 | 0.761 | | | |
| | AIA4 | 2.150 | 0.816 | | | |
| | AIA5 | 1.854 | 0.792 | | | |
| Perceived Social Presence | | | | 0.855 | 0.878 | 0.696 |
| | PSP1 | 1.857 | 0.787 | | | |
| | PSP2 | 1.842 | 0.791 | | | |
| | PSP3 | 2.850 | 0.906 | | | |
| | PSP4 | 1.944 | 0.846 | | | |
| Trust in the AI System | | | | 0.858 | 0.877 | 0.636 |
| | TAI1 | 2.103 | 0.808 | | | |
| | TAI2 | 2.162 | 0.857 | | | |
| | TAI3 | 1.716 | 0.721 | | | |
| | TAI4 | 1.868 | 0.787 | | | |
| | TAI5 | 1.987 | 0.81 | | | |
| Intention to Adopt AI-Driven Banking Services | | | | 0.899 | 0.904 | 0.713 |
| | INT1 | 2.974 | 0.889 | | | |
| | INT2 | 2.327 | 0.822 | | | |
| | INT3 | 2.640 | 0.851 | | | |
| | INT4 | 2.076 | 0.813 | | | |
| | INT5 | 2.401 | 0.844 | | | |
| Skepticism | | | | 0.772 | 0.774 | 0.688 |
| | SKP1 | 1.937 | 0.859 | | | |
| | SKP2 | 1.770 | 0.847 | | | |
| | SKP3 | 1.385 | 0.780 | | | |
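
The AVE column can be checked directly from the factor loadings, since AVE is conventionally the mean of the squared standardized loadings of a construct's items. The following is a minimal sketch of that arithmetic, not the authors' analysis script: the dictionary names and the helper `average_variance_extracted` are illustrative, and the loadings are simply copied from the table above. Small differences in the third decimal are expected because the published loadings are already rounded.

```python
import math

# Factor loadings copied from the measurement-model table (rounded values).
loadings = {
    "AIA": [0.744, 0.785, 0.761, 0.816, 0.792],
    "PSP": [0.787, 0.791, 0.906, 0.846],
    "TAI": [0.808, 0.857, 0.721, 0.787, 0.810],
    "INT": [0.889, 0.822, 0.851, 0.813, 0.844],
    "SKP": [0.859, 0.847, 0.780],
}

# AVE values as reported in the same table.
reported_ave = {"AIA": 0.608, "PSP": 0.696, "TAI": 0.636, "INT": 0.713, "SKP": 0.688}


def average_variance_extracted(item_loadings):
    """Mean of squared standardized loadings (the usual AVE definition)."""
    return sum(lam * lam for lam in item_loadings) / len(item_loadings)


ave = {name: average_variance_extracted(vals) for name, vals in loadings.items()}

for name in loadings:
    print(
        f"{name}: recomputed AVE = {ave[name]:.3f}, "
        f"reported = {reported_ave[name]:.3f}, "
        f"sqrt(AVE) = {math.sqrt(ave[name]):.3f}"
    )
```

The recomputed values agree with the reported AVE to within rounding, and every construct clears the commonly used 0.50 benchmark. The square roots printed in the last column are the quantities that normally appear on the diagonal of a Fornell–Larcker matrix.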

| Construct | AIA | INT | PSP | SKP | TAI |
|---|---|---|---|---|---|
| AIA | 0.780 | | | | |
| INT | 0.492 | 0.844 | | | |
| PSP | 0.601 | 0.732 | 0.834 | | |
| SKP | 0.573 | 0.712 | 0.634 | 0.829 | |
| TAI | 0.587 | 0.742 | 0.750 | 0.659 | 0.798 |
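
The matrix above has the layout of a Fornell–Larcker table (the source gives no caption, so this reading is an assumption): the diagonal matches the square roots of the reported AVE values, and the off-diagonal cells are inter-construct correlations. Under that reading, discriminant validity requires each diagonal entry to exceed every correlation in its row and column. A minimal sketch of the check, with the illustrative helper `fornell_larcker_violations`:

```python
# Lower-triangular matrix copied from the table above.
# Diagonal: sqrt(AVE); off-diagonal: inter-construct correlations.
constructs = ["AIA", "INT", "PSP", "SKP", "TAI"]
lower = [
    [0.780],
    [0.492, 0.844],
    [0.601, 0.732, 0.834],
    [0.573, 0.712, 0.634, 0.829],
    [0.587, 0.742, 0.750, 0.659, 0.798],
]


def fornell_larcker_violations(names, tri):
    """Return (row, col, r) for each correlation not below both diagonals."""
    violations = []
    for i in range(len(names)):
        for j in range(i):
            r = tri[i][j]
            if r >= tri[i][i] or r >= tri[j][j]:
                violations.append((names[i], names[j], r))
    return violations


violations = fornell_larcker_violations(constructs, lower)
print("violations:", violations)
```

An empty list means every construct shares more variance with its own items than with any other construct, which is what the reported values show.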

| Construct | AIA | INT | PSP | SKP | TAI |
|---|---|---|---|---|---|
| AIA | | | | | |
| INT | 0.548 | | | | |
| PSP | 0.687 | 0.817 | | | |
| SKP | 0.707 | 0.847 | 0.749 | | |
| TAI | 0.667 | 0.816 | 0.816 | 0.794 | |
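
This second matrix, with an empty diagonal, looks like a heterotrait–monotrait (HTMT) ratio table; the source gives no caption, so that label is an assumption. HTMT values are usually compared against a cutoff, commonly 0.85 (stricter) or 0.90 (more lenient). The sketch below screens the reported ratios against both; the helper `pairs_at_or_above` and the dictionary layout are illustrative only.

```python
# Ratios copied from the table above, keyed by (row construct, column construct).
htmt = {
    ("INT", "AIA"): 0.548,
    ("PSP", "AIA"): 0.687, ("PSP", "INT"): 0.817,
    ("SKP", "AIA"): 0.707, ("SKP", "INT"): 0.847, ("SKP", "PSP"): 0.749,
    ("TAI", "AIA"): 0.667, ("TAI", "INT"): 0.816,
    ("TAI", "PSP"): 0.816, ("TAI", "SKP"): 0.794,
}


def pairs_at_or_above(ratios, cutoff):
    """Construct pairs whose ratio reaches or exceeds the cutoff."""
    return sorted(pair for pair, value in ratios.items() if value >= cutoff)


for cutoff in (0.85, 0.90):
    print(f"pairs at or above {cutoff:.2f}:", pairs_at_or_above(htmt, cutoff))

worst_pair = max(htmt, key=htmt.get)
print("largest ratio:", worst_pair, htmt[worst_pair])
```

All ten ratios fall below 0.85, although the SKP and INT pair (0.847) sits very close to the stricter cutoff.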

| Construct | R² | R² Adjusted | Q² Predict | RMSE | MAE | Path | f² | Effect Size Interpretation |
|---|---|---|---|---|---|---|---|---|
| INT | 0.621 | 0.616 | 0.49 | 0.729 | 0.568 | AIA → INT | 0 | Negligible |
| PSP | 0.623 | 0.619 | 0.581 | 0.666 | 0.499 | AIA → PSP | 0.224 | Medium–Large |
| TAI | 0.515 | 0.509 | 0.497 | 0.715 | 0.586 | AIA → TAI | 0.135 | Small–Medium |
| | | | | | | PSP → INT | 0.173 | Medium |
| | | | | | | TAI → INT | 0.214 | Medium–Large |

| Hypothesis | Path | β | t | p | 95% CI (Lower, Upper) | Supported? |
|---|---|---|---|---|---|---|
| H1 | AIA → PSP | 0.354 | 7.804 | <0.001 | (0.269, 0.445) | Yes |
| H2 | AIA → TAI | 0.312 | 4.743 | <0.001 | (0.190, 0.448) | Yes |
| H3 | AIA → INT | −0.013 | 0.305 | 0.760 | (−0.096, 0.072) | No |
| H4 | PSP → INT | 0.406 | 3.755 | <0.001 | (0.180, 0.604) | Yes |
| H5 | TAI → INT | 0.445 | 5.538 | <0.001 | (0.297, 0.611) | Yes |

| Hypothesis | Mediation Pathway | Specific Indirect Effect (β) | Bootstrapped 95% CI | t | p | Proportion of Total Indirect Effect |
|---|---|---|---|---|---|---|
| H6 | AIA → PSP → INT | 0.144 | [0.062, 0.226] | 3.433 | 0.001 | 50.9% |
| H7 | AIA → TAI → INT | 0.139 | [0.068, 0.210] | 3.889 | <0.001 | 49.1% |
| — | Total Indirect Effect | 0.283 | [0.172, 0.401] | 5.127 | <0.001 | 100% |
| — | Direct Effect (AIA → INT) | −0.013 | [−0.096, 0.072] | 0.305 | 0.760 | — |
| — | Total Effect | 0.270 | [0.168, 0.379] | 4.984 | <0.001 | — |
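
The mediation figures follow from the direct-effect coefficients by the standard product-of-coefficients logic: each specific indirect effect is the product of the two path coefficients on that route, and the total effect is the sum of the indirect effects and the direct effect. The sketch below reproduces that decomposition from the reported path coefficients; the variable names are illustrative, and the bootstrapped intervals are not recomputed because they require the raw data.

```python
# Path coefficients as reported in the direct-effects table.
a_psp = 0.354   # AIA -> PSP
a_tai = 0.312   # AIA -> TAI
b_psp = 0.406   # PSP -> INT
b_tai = 0.445   # TAI -> INT
direct = -0.013  # AIA -> INT

# Specific indirect effects: product of the two paths on each route.
indirect_psp = a_psp * b_psp
indirect_tai = a_tai * b_tai

total_indirect = indirect_psp + indirect_tai
total_effect = total_indirect + direct

# Share of the total indirect effect carried by each mediator.
share_psp = indirect_psp / total_indirect
share_tai = indirect_tai / total_indirect

print(f"AIA -> PSP -> INT: {indirect_psp:.3f} ({share_psp:.1%} of indirect)")
print(f"AIA -> TAI -> INT: {indirect_tai:.3f} ({share_tai:.1%} of indirect)")
print(f"total indirect:    {total_indirect:.3f}")
print(f"total effect:      {total_effect:.3f}")
```

To three decimals these products match the table (0.144, 0.139, 0.283, and 0.270, with shares of 50.9% and 49.1%). Because the direct path is close to zero, almost all of the total effect runs through the two mediators.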

| Hypothesis | Interaction Path | β | t | p | Supported? |
|---|---|---|---|---|---|
| H8 | SKP × AIA → PSP | −0.268 | 5.657 | <0.001 | Yes |
| H9 | SKP × AIA → TAI | −0.090 | 3.231 | 0.001 | Yes |

| Mediator | Skepticism Level | Conditional Indirect Effect (β) | Bootstrapped 95% CI |
|---|---|---|---|
| PSP | Low (−1 SD) | 0.253 | [0.162, 0.358] |
| PSP | High (+1 SD) | 0.035 | [−0.012, 0.098] |
| Index of moderated mediation | — | −0.109 | [−0.187, −0.042] |
| TAI | Low (−1 SD) | 0.179 | [0.112, 0.264] |
| TAI | High (+1 SD) | 0.099 | [0.041, 0.172] |
| Index of moderated mediation | — | −0.04 | [−0.081, 0.003] |
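
The conditional indirect effects are consistent with a first-stage moderated mediation model, in which skepticism changes the AIA → mediator slope and the mediator → INT path is left unmoderated. This is an assumption inferred from the reported numbers, not a description of the authors' software settings. Under it, with skepticism standardized so that ±1 corresponds to ±1 SD, the conditional indirect effect at level w is (a + a_int · w) · b and the index of moderated mediation is a_int · b. The function names below are illustrative.

```python
def conditional_indirect(a, a_int, b, w):
    """Indirect effect at moderator value w (in SD units), first-stage moderation."""
    return (a + a_int * w) * b


def moderated_mediation_index(a_int, b):
    """Change in the indirect effect per one-SD increase in the moderator."""
    return a_int * b


# Coefficients as reported: (a, interaction, b) for each mediator.
paths = {
    "PSP": (0.354, -0.268, 0.406),
    "TAI": (0.312, -0.090, 0.445),
}

results = {}
for mediator, (a, a_int, b) in paths.items():
    results[mediator] = {
        "low": conditional_indirect(a, a_int, b, -1.0),
        "high": conditional_indirect(a, a_int, b, +1.0),
        "index": moderated_mediation_index(a_int, b),
    }
    r = results[mediator]
    print(
        f"{mediator}: low = {r['low']:.3f}, high = {r['high']:.3f}, "
        f"index = {r['index']:.3f}"
    )
```

These products reproduce the table to three decimals. The reported interval for the PSP index excludes zero, while the interval for the TAI index, [−0.081, 0.003], includes it, so the table supports a skepticism-dependent indirect effect more firmly for social presence than for trust.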

| Path | Full Model (With Controls) | Reduced Model (Without Controls) | Δβ | p-Value (Difference) |
|---|---|---|---|---|
| AIA → PSP | 0.354 *** | 0.361 *** | 0.007 | 0.682 |
| AIA → TAI | 0.312 *** | 0.325 *** | 0.013 | 0.541 |
| AIA → INT | −0.013 | −0.009 | 0.004 | 0.891 |
| PSP → INT | 0.406 *** | 0.398 *** | −0.008 | 0.723 |
| TAI → INT | 0.445 *** | 0.451 *** | 0.006 | 0.814 |
| SKP × AIA → PSP | −0.268 *** | −0.274 *** | −0.006 | 0.765 |
| SKP × AIA → TAI | −0.090 *** | −0.095 *** | −0.005 | 0.832 |
| R² (INT) | 0.621 | 0.618 | −0.003 | — |
| R² (PSP) | 0.623 | 0.615 | −0.008 | — |
| R² (TAI) | 0.515 | 0.509 | −0.006 | — |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Mackay, A.C.D.; Zuo, L.; Kebe, I.A. Anthropomorphic AI and Consumer Skepticism: A Behavioral Study of Trust and Adoption in Fragile Economies. Behav. Sci. 2026, 16, 496. https://doi.org/10.3390/bs16040496


Chicago/Turabian Style: Mackay, Agnes Caroline Dontina, Li Zuo, and Ibrahim Alusine Kebe. 2026. "Anthropomorphic AI and Consumer Skepticism: A Behavioral Study of Trust and Adoption in Fragile Economies" Behavioral Sciences 16, no. 4: 496. https://doi.org/10.3390/bs16040496
