1. Introduction

Autism spectrum disorder (ASD) is a common and highly heritable neurodevelopmental disorder characterized not only by difficulties in social communication and interaction, sensory abnormalities, and repetitive behaviors but also, more notably, by varying degrees of cognitive impairment (

American Psychiatric Association, 2013;

DeMyer, 1975). Cognitive skills are essential to the schooling and everyday lives of children with ASD. Among the higher-order cognitive skills, analogical reasoning is widely recognized as one of the most pivotal. Sternberg proposed the fundamental form of analogical reasoning, represented as A:B::C:D. First, individuals encode objects A and B; next, they infer the relationship between A and B and map A onto C through their common attributes; finally, they extend the analogy to the correspondence between B and D and generate an appropriate response (

Mulholland et al., 1980;

Sternberg, 1977). Analogical reasoning involves the ability to understand relationships with similar structures and link them to new relationships to gain knowledge (

Stevenson et al., 2013a). It allows individuals to learn from one interaction and apply it to another (

Green et al., 2014). This ability enables individuals to evaluate and comprehend the intricate connections and patterns among pieces of external information, ultimately guiding them toward effective solutions to problems (

Krulik & Rudnick, 1993). This also enables children to organize and construct new information based on previously acquired knowledge, enhancing their knowledge across various domains (

Brown & Kane, 1988;

Goswami, 1991). Therefore, analogical reasoning plays a crucial role in children’s developmental processes (

Brown & Kane, 1988;

Goswami, 1991).

Cognitive skills and analogical reasoning skills are closely related. Cognitive dysfunction in children with ASD reduces their ability to understand the relationships on which analogies depend (

Krawczyk et al., 2014). Children with ASD tend to focus on similarities in object features rather than similarities in the relationships between objects (

Hetzroni et al., 2019;

Krawczyk et al., 2014). Additionally, they often lean toward processing details and trivial information rather than abstract and overall meanings (

Aljunied & Frederickson, 2013;

Shah & Frith, 1983). Children with ASD often lack the skills necessary to effectively adapt to specific environments (

Schopler & Mesibov, 2013). These factors contribute to the difficulty in identifying crucial information in analogical reasoning problems and fully comprehending abstract relational similarities (

Green et al., 2014). Consequently, difficulties may arise when there is no apparent literal or surface similarity present (

Pexman, 2008).

However, the analogical reasoning abilities of children with ASD are not necessarily fixed. With proper guidance and support during analogical reasoning tasks, children with ASD can recognize abstract analogical similarities in much the same way as typically developing (TD) children (

Green et al., 2014). For example, virtual reality and other tools can effectively enhance the analogical reasoning abilities of children with ASD by training their social cognition (

Didehbani et al., 2016). However, these studies focused primarily on high-functioning children with ASD (

Didehbani et al., 2016), with scant attention given to the broader population of children with ASD. Moreover, most studies have relied on static assessments, which capture only the abilities children have already acquired (

Touw et al., 2019), potentially underestimating the cognitive capacities of children with developmental disorders such as autism (

Veerbeek & Vogelaar, 2023).

The traditional assessment model separates teaching and assessment, revealing only the learner’s prior knowledge and skills while ignoring their latent abilities. Unlike traditional static assessment methods, dynamic assessment (DA) is believed to capture a person’s learning potential by focusing on abilities that are not yet fully developed (

Lidz & Gindis, 2003). It is an assessment method that embeds interventions in the testing procedure (

Rashidi & Bahadori Nejad, 2018). DA provides gradual prompts until children are able to complete the task. It focuses on the process of learning rather than the outcome (

Lidz & Gindis, 2003). During the assessment process, the mediator endeavors to identify the individual’s potential differences and promptly adjust and improve the teaching. Meanwhile, learners can surpass their actual performance levels with the prompts and guidance of the mediator (

Campione & Brown, 1987;

Lidz & Gindis, 2003;

Rassaei, 2021).

Computerized dynamic assessment (CDA) is a type of DA in which the interventions are delivered through technological devices such as computers. An appealing computer interface can help increase children’s attention and reduce their agitation (

Boucenna et al., 2014). Importantly, CDA can provide more detailed records of children’s learning processes and results reports (

Poehner, 2008), which can help researchers comprehensively analyse their performance. CDA is therefore one possible approach to identifying and improving the graphic analogical reasoning ability of children with ASD. However, research on CDA applications involving analogical reasoning in children with ASD is still limited, and it remains unclear whether CDA has a positive impact on their analogical reasoning. Therefore, this experiment used eye-tracking to monitor learners’ eye movement patterns during the learning process to better understand the cognitive processes of children with ASD. By integrating performance analysis with eye-tracking data analysis, we aimed to investigate the impact of CDA on children with ASD. The main research questions (RQs) of the study are as follows.

RQ1. Can computerized dynamic assessment unlock the graphical analogical reasoning potential of children with autism spectrum disorder?

RQ2. What is the impact of the initial abilities of children with autism on their analogical reasoning potential?

3. Materials and Methods

3.1. Participants

To ensure that the experimental design of this study was sufficient, G*power 3.1 software (University of Düsseldorf, Düsseldorf, Germany) (

Faul et al., 2007) was used to estimate the required sample size. An effect size of 0.25 and an alpha of 0.05 were used. The analysis showed that a total of at least 44 participants were needed to achieve a statistical power of 0.95.

This study was conducted at a special education institution in a certain city in Hubei Province, China. All children have experience using electronic devices such as tablets in their daily study or life, which provides the necessary basic conditions for this research. In addition, all participants were required to meet the following criteria: (1) meet the criteria for Autism Spectrum Disorder (ASD) in the Diagnostic and Statistical Manual of Mental Disorders, 5th Edition (DSM-5); (2) be diagnosed with autism by a top-level (Grade III Class A or above) professional hospital; (3) have no history of other mental illnesses or brain injuries; (4) have normal vision; (5) have flexible fingers; (6) be able to understand basic instructions; (7) have not participated in similar experiments and have never seen the materials of this experiment before.

This study recruited 71 children with autism from the institution. Five children did not participate in the posttest and were excluded from the data analysis, leaving a total of 66 children with ASD (51 boys, 77%; 15 girls, 23%). The ratio of male to female autistic individuals ranges from 2:1 to 5:1 (

Lord et al., 2020), and the sex ratio of participants in this study is consistent with this report. The average age of the 66 participants was 5.80 years (SD: 1.39, min: 3.00, max: 9.00). This study adopted a single-factor pre- and post-test design. Participants were randomly assigned to two groups, namely the CDA group and the Non-CDA (NDA) group. The CDA group received computerized dynamic assessment, while the NDA group served as the control group, only completing the corresponding tests without any intervention or assistance. The learning and testing contents of the two groups were exactly the same. A Mann–Whitney U test revealed no significant differences in age between the two groups (z = −1.897,

p = 0.058). There was also no statistical difference in the prior knowledge abilities between the two groups of children (see the specific data in

Section 4.1 and

Section 4.2). Basic demographic information is presented in

Table 1.

Informed consent was obtained from the special education agency and parents prior to the start of the experiment. Our research team signed a confidentiality agreement with the special education agency. The study was approved and supported by the institutional review board.

3.2. Learning Materials

This study adapted the research materials from Whitely’s study (

Whitely & Schneider, 1981) and established eight transformation rules: quantity, size, color, shape, position (nesting, overlapping), and transformation (inversion, rotation). Each rule was accompanied by a corresponding question, as outlined in

Table 2.

The experiment was divided into a total of three phases, i.e., pretest–intervention–posttest. Each phase consisted of eight questions, and the eight questions followed the transformation rules described above. The content of the questions in the pretest and intervention phases was identical, while the questions in the posttest phase were isomorphic to the questions in the first two phases.

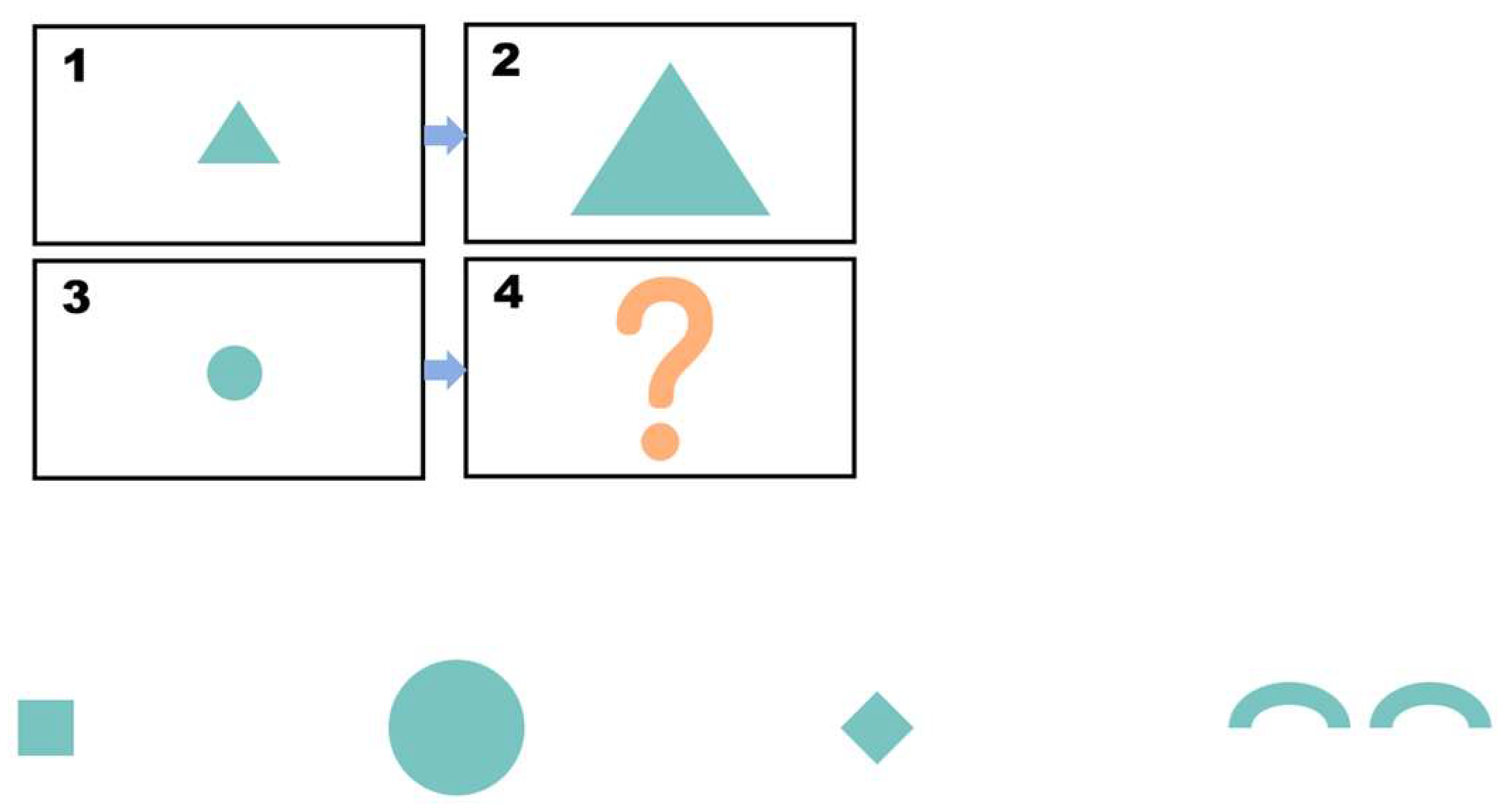

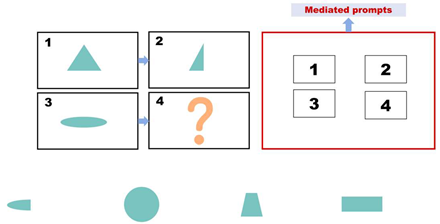

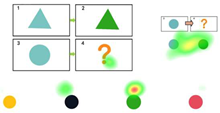

Pretest. This assessment consists of eight items designed to evaluate children’s prior knowledge of graphic analogical reasoning. Each item includes four options, with only one being the correct answer, as shown in

Figure 1. A correct answer is awarded four points, and incorrect answers receive no points. The total pretest score is calculated by adding the scores of all correct answers, with a maximum score of 32. The Cronbach’s alpha coefficient of the test was 0.770. Participants were asked to complete this section of the test within 5 min.

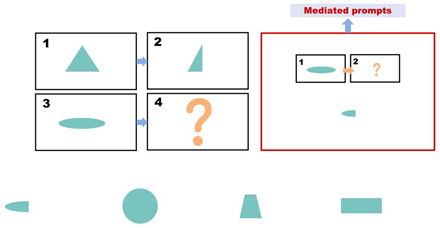

During the intervention phase, the same set of questions used in the pre-test phase is employed. However, for each incorrect answer, participants receive a prompt (as shown in

Figure 2). The prompts are divided into three levels, with each level lasting approximately 20 s.

Table 3 presents the prompt design and scoring rules used. If a child answers incorrectly after receiving prompts from all three levels, the question is scored as zero, and the participant proceeds to the next question. The prompts are presented in the form of animated videos. Participants are required to complete the test within 10 min during this phase.
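The graduated-prompt procedure described above can be sketched in a few lines of Python. This is an illustration only: the function names and the per-level point values (4 points with no prompt, then 3, 2, and 1 after the first, second, and third prompt) are assumptions, because the actual values are defined in Table 3. Only the three prompt levels, the eight items, and the zero score after a third failed prompt come from the text.

```python
# Hypothetical points by number of prompts used; the study's real values are in Table 3.
POINTS_BY_PROMPTS_USED = {0: 4, 1: 3, 2: 2, 3: 1}
MAX_PROMPT_LEVELS = 3


def score_item(attempts_correct):
    """Score one item.

    attempts_correct is a list of booleans: the first entry is the unprompted
    attempt, and each later entry is the attempt made after one more prompt.
    """
    for prompts_used, correct in enumerate(attempts_correct[:MAX_PROMPT_LEVELS + 1]):
        if correct:
            return POINTS_BY_PROMPTS_USED[prompts_used]
    # Still incorrect after all three prompt levels: the item scores zero.
    return 0


def score_phase(items):
    """Sum the item scores for one child across all items of the phase."""
    return sum(score_item(attempts) for attempts in items)
```

Under these assumed values, a child who answers every item correctly without prompts would reach 32 points, matching the maximum of the unprompted tests.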

Posttest. The posttest consists of eight questions that are isomorphic to the pretest questions, as shown in

Figure 3. Each correct answer is worth 4 points, and each incorrect answer is worth 0 points. No hints are provided, and the maximum score is 32 points. The Cronbach’s alpha coefficient for the post-test is 0.759. Participants are required to complete this part of the test within 5 min.

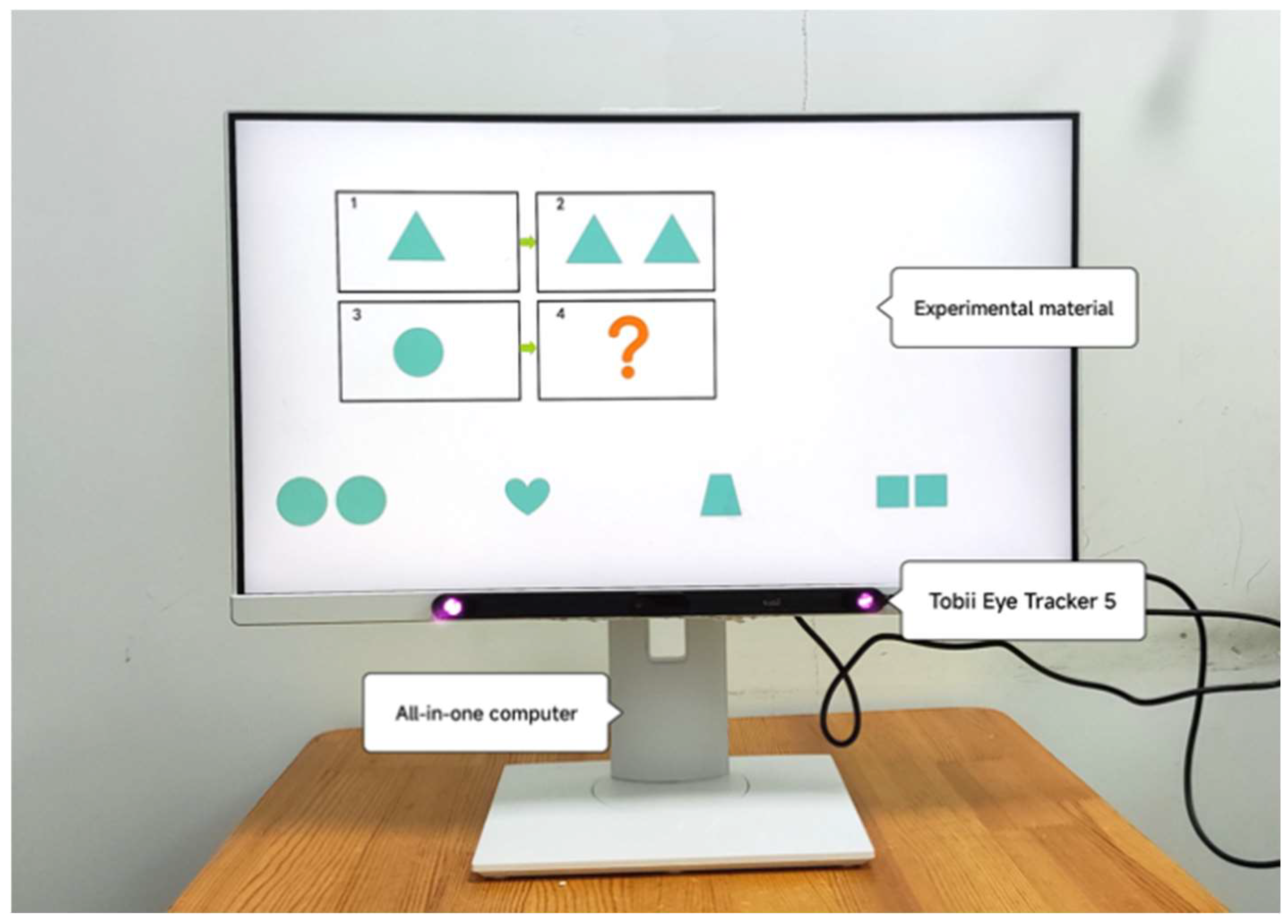

3.3. Hardware and Software Equipment

The experimental materials were designed with user-friendliness in mind to prevent fatigue among children with autism during the intervention process (

Zhang et al., 2017). The experimental materials were presented to participants on an all-in-one machine with a screen resolution of 1920 × 1080 pixels. The touchscreen capability of an all-in-one machine enhances participants’ engagement and attention (

Foshay & Ludlow, 2005).

Eye tracking is a sensing technology that utilizes a camera and near-infrared light source to track real-time eye movement through certain algorithms. In this study, eye movement data were collected using the Tobii Eye Tracker 5 (Tobii, Stockholm, Sweden) desktop eye tracker. The eye tracker was positioned beneath the all-in-one machine screen, connected to the machine, and had a viewing angle of 40 × 40 degrees as per the experimental setup shown in

Figure 4 and on-site experimentation in

Figure 5. We divided the areas of interest into a question section, an answer section, and a mediated prompt section. The eye-tracking data were imported into OGAMA 5.1 (open-source software) to define regions of interest, filter participant data, and generate eye-tracking heatmaps and trajectories.

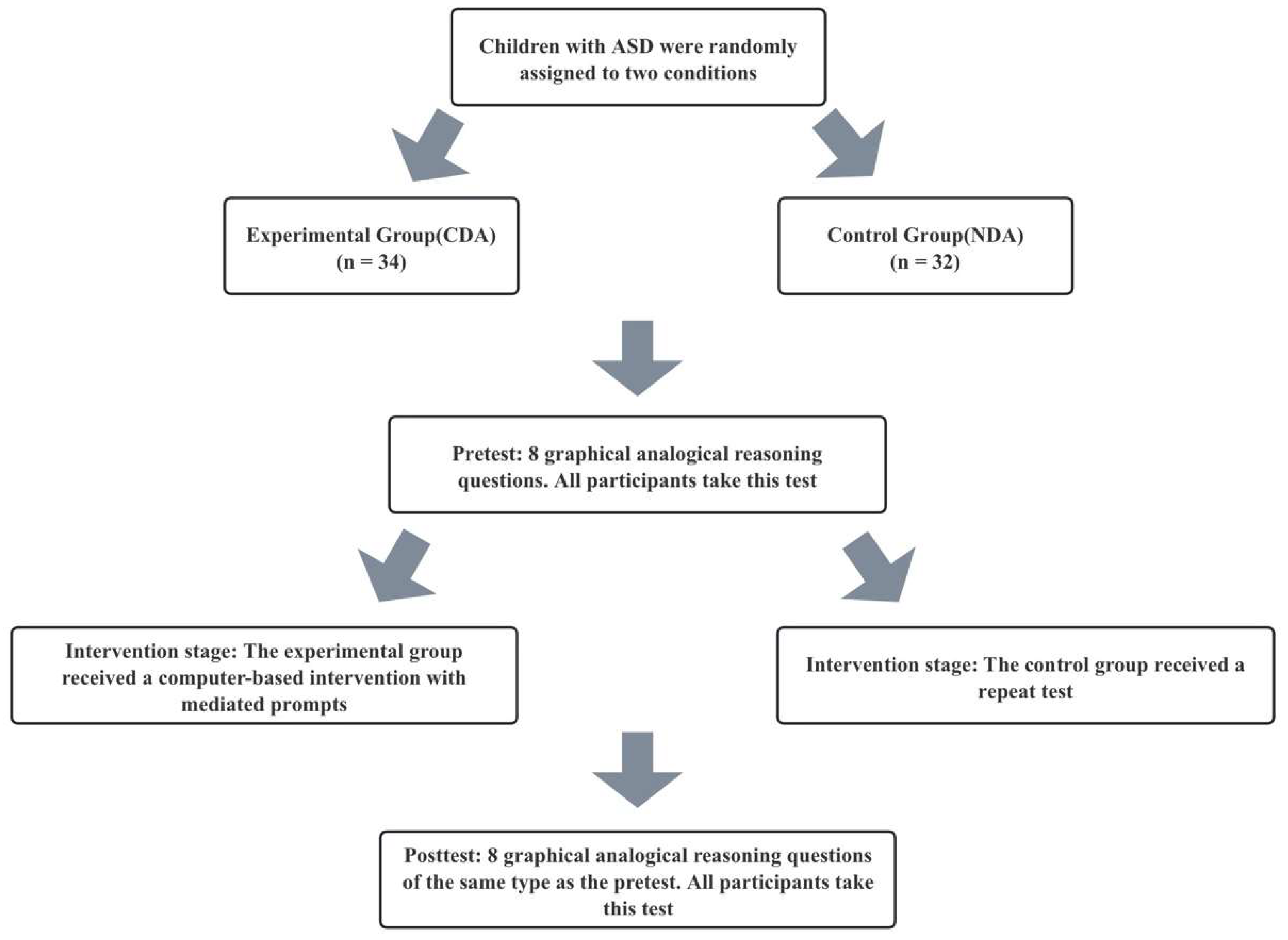

3.4. Experimental Design

This study was conducted in a well-lit classroom where children sat in front of all-in-one machines and other devices. Prior to the start of the experiment, demographic questions were answered by the parents or teachers of the participants. Subsequently, the experimenter introduced the experiment and explained the rules of the analogy reasoning test to the children. Under the guidance of the experimenter, the participants performed eye-tracking calibration using Tobii Eye Tracker 5 to adjust the distance between themselves and the computer display for optimal eye-tracking data recording. Once calibration was completed, both groups of participants independently completed the same pretest. Later, in the “CDA” group, participants first independently selected their answer choices, advancing to the next question if the response was correct. If the answer was incorrect, the participants received three levels of prompt intervention. In the “NDA” group, participants underwent testing with the same questions as those in the “CDA” group but without any prompts. Finally, both the “CDA” and “NDA” groups underwent the same posttest. The experimental procedure for each child lasted approximately 20 min. The flowchart of the experiment is shown in

Figure 6.

This study adheres to the principles of Universal Design for Learning (UDL) by incorporating computerized technologies, such as dynamic assessment platforms and eye-tracking systems (

Rose, 2000). During the intervention stage, 2D animations with synchronized sound and images are used as an intervention measure to support learners with visual and hearing impairments. Additionally, non-invasive data collection captures eye movement patterns. This allows learners with diverse expressive capabilities to demonstrate their understanding through alternative modalities. The CDA framework incorporates multi-level prompts, and the intervention’s prompt hierarchy is presented dynamically based on the learner’s mediated score, constructing personalized learning pathways. Thus, this systematic technological integration transforms CDA into an instrument of inclusive pedagogy.

3.5. Data Analysis

In the data analysis process, this study excluded samples with missing data for any of the three tests and included only complete sample data. Children with ASD often exhibit hyperactivity and difficulty sustaining attention, which led to suboptimal eye-tracking data. Many children were unable to remain seated in front of the computer continuously or to maintain an appropriate eye-tracking distance, so their eye-tracking recordings were incomplete and the recorded gaze time was shorter than the actual test duration. During the eye-tracking data analysis, 23 participants were therefore excluded, resulting in a final eye-tracking sample of 43 participants.

This study first conducted a Shapiro–Wilk normality test on the test scores, response times, and various eye-tracking data. Many of the data did not meet the requirements for a normal distribution (p < 0.05). Therefore, to analyse these data, a generalized estimating equation (GEE) approach was used, and the median and quartiles were used to describe the distribution characteristics. During this process, DA (CDA, NDA) was used as a between-subject factor, and the testing phase (pretest, intervention, posttest) was used as a within-subject factor. GEE analyses were also conducted on learning outcomes and eye-tracking measures, focusing on main and interaction effects, as well as simple effects, to measure the impact of CDA on participants’ analogy reasoning ability and gaze patterns. The significance level was set to 0.05, and Bonferroni corrections were used.
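As a small illustration of the Bonferroni correction mentioned above, the sketch below divides the significance level by the number of comparisons in a family. Treating the three pairwise phase comparisons (pretest vs. intervention, intervention vs. posttest, pretest vs. posttest) as one family is an assumption made for this example; the text does not state how the families were defined, and the function names are hypothetical.

```python
def bonferroni_threshold(alpha, n_comparisons):
    """Per-comparison significance threshold under a Bonferroni correction."""
    return alpha / n_comparisons


def is_significant(p_value, alpha=0.05, n_comparisons=3):
    """True if p_value passes the Bonferroni-adjusted threshold."""
    return p_value < bonferroni_threshold(alpha, n_comparisons)
```

With three comparisons and a significance level of 0.05, each individual comparison would be judged against 0.05 / 3, roughly 0.0167.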

Data analysis and visualization were carried out using SPSS 29.0.1, OGAMA 5.1, G*Power 3.1, Origin 2021, and GraphPad Prism 10.0.2.

4. Results

4.1. Analysis of Test Scores

GEE analyses revealed a significant main effect of group (CDA vs. NDA) on learners’ test scores (Wald χ² = 5.539, p = 0.019), a significant main effect of phase (pretest, intervention, posttest) on test scores (Wald χ² = 30.886, p < 0.001), and a significant group × phase interaction (Wald χ² = 15.711, p < 0.001). The changes in the scores of the two groups are presented as violin plots showing the median, the upper and lower quartiles, and the overall distribution of the data, as shown in Figure 7.

Between-group simple effects analyses (Mann–Whitney U test) revealed no significant differences between the two groups at either pretest (z = −1.213, p = 0.225) or posttest (z = −1.900, p = 0.057). However, the CDA group performed significantly better than the NDA group during the intervention phase (z = −3.214, p = 0.001).

The within-group simple effects analysis (Friedman’s test) showed no significant difference among the test scores of the NDA group across the three phases (pretest, intervention, and posttest) (χ² = 5.614, p = 0.060). However, scores differed significantly across the three phases for the CDA group (χ² = 16.467, p < 0.001). Wilcoxon signed-rank tests were performed to determine which phases differed. Scores in the intervention phase were significantly higher than in the pretest phase (z = −3.775, p < 0.001), and scores in the posttest phase were significantly lower than in the intervention phase (z = −4.002, p < 0.001). There was no significant difference between posttest and pretest scores (z = −0.548, p = 0.584). Specific results are presented in Table 4.

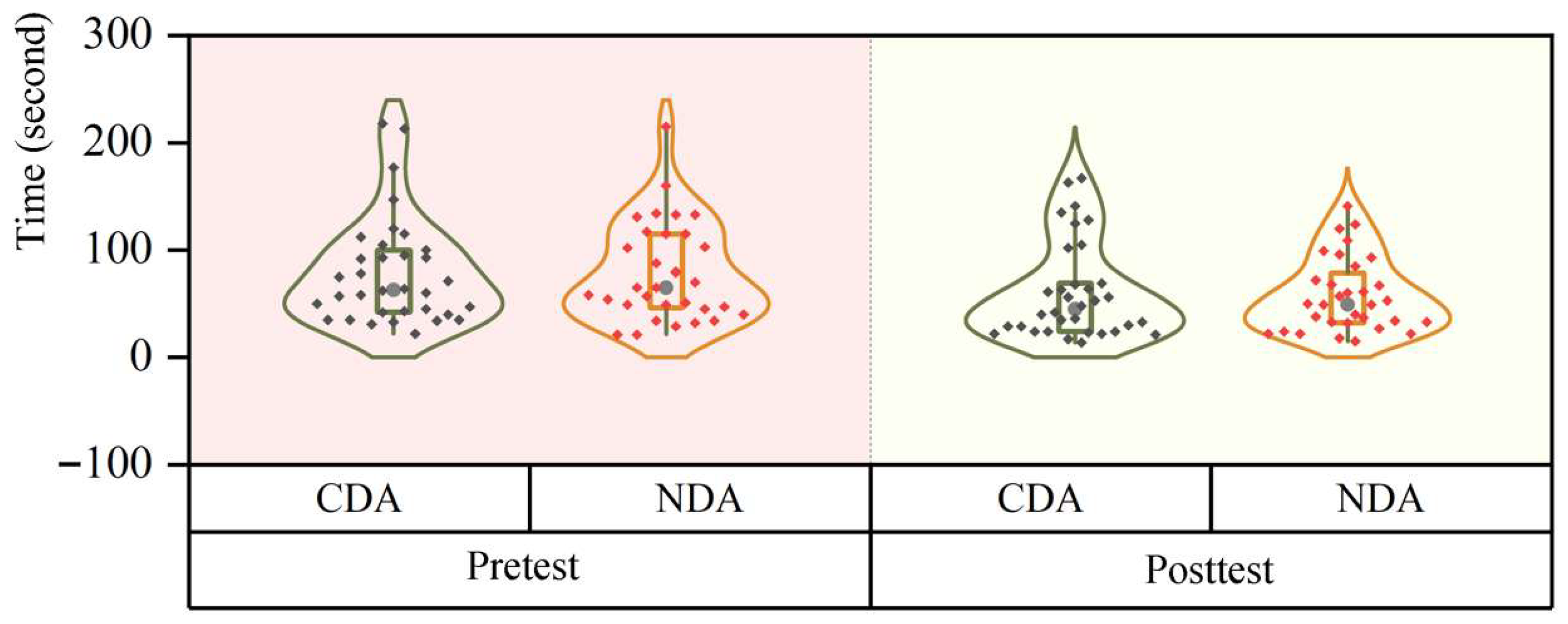

4.2. Time Use Analysis

The GEE results showed no significant main effect of group (Wald χ² = 0.887, p = 0.346) or phase (Wald χ² = 0.032, p = 0.858) on the time learners took to answer the questions, and no significant interaction between the two (Wald χ² = 0.961, p = 0.327), as shown in Figure 8 (in seconds).

Between-group simple effects analyses (Mann–Whitney U test) showed no significant difference in answer times between the CDA and NDA groups at either the pretest (z = 0.160, p = 0.873) or the posttest (z = −0.154, p = 0.878) stage.

Within-group simple effects analyses (Wilcoxon signed-rank test) revealed that in the CDA group, there was a significant difference in answer times between the two phases (z = −2.078,

p = 0.038), with a significant reduction in posttest time compared to pretest time. In the NDA group, there was also a significant difference in the answer times between the two phases (z = −3.498,

p < 0.001), and the answer times in the posttest phase were similarly significantly shorter than in the pretest phase. The results of the response-time analysis are presented in

Table 5.

4.3. Learning Potential Analysis

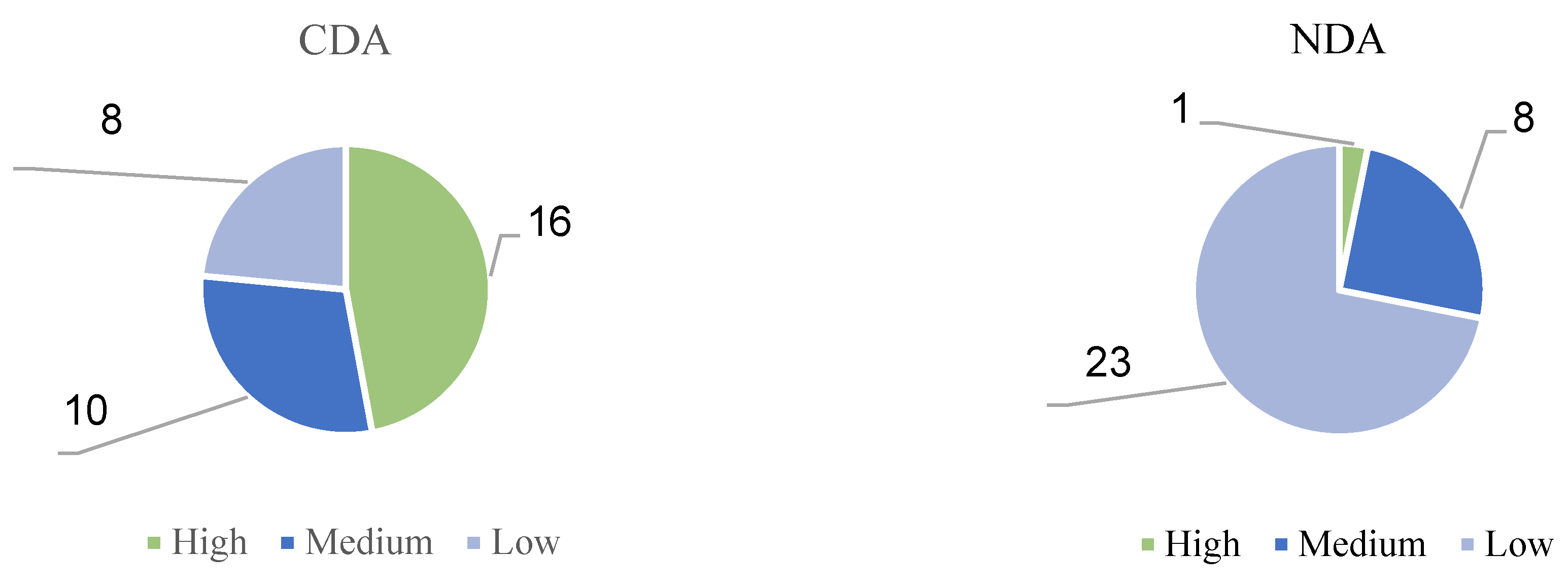

As shown in

Figure 9, in the CDA group, 16 children (47%) had high LPS levels, 10 children (29%) had moderate LPS levels, and the remaining 24% had low LPS levels. In the NDA group, only one child had a high LPS level and eight children had a moderate LPS level, whereas 23 children, or 72% of the NDA group, had low LPS levels.
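The reported percentages can be checked against the counts with a short calculation. In the sketch below, the number of CDA children with low LPS levels (8) and the resulting group sizes (34 and 32) are not stated in the passage; they are inferred from the reported counts and percentages and should be read as assumptions.

```python
# Counts per LPS level; the CDA "low" count is inferred from the reported 24%.
cda_counts = {"high": 16, "moderate": 10, "low": 8}
nda_counts = {"high": 1, "moderate": 8, "low": 23}


def percentages(counts):
    """Return each level's share of its own group, rounded to whole percent."""
    total = sum(counts.values())
    return {level: round(100 * n / total) for level, n in counts.items()}


cda_pct = percentages(cda_counts)
nda_pct = percentages(nda_counts)
total_children = sum(cda_counts.values()) + sum(nda_counts.values())
```

Under these assumptions the two groups together account for the 66 children in the final sample, and the 72% figure is a share of the NDA group rather than of all participants.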

Figure 10 shows the relationship between the pretest scores and the LPS for the two groups of children. The graph shows that children with the same pretest score differed in LPS; that is, children with similar levels of prior knowledge differ in learning potential, which indicates that each autistic child has individual characteristics. CDA can stimulate children’s learning potential and help children reach a higher level of learning potential (

Tudge, 1992;

Vygotsky & Cole, 1978).

4.4. Fixation Count Analysis

GEE analyses of fixation count revealed no significant main effect of group (Wald χ² = 3.454, p = 0.063), a significant main effect of phase (Wald χ² = 6.938, p = 0.031), and no significant group × phase interaction (Wald χ² = 3.180, p = 0.204), as shown in Table 6.

Between-group comparisons using the Mann–Whitney U test indicated no significant difference between the two groups at the pretest (z = −0.139, p = 0.890) or posttest (z = −1.096, p = 0.273) stage. Notably, a significant difference was observed between the two groups during the intervention phase (z = −2.116, p = 0.034), in which the CDA group had a significantly higher number of fixations than the control group.

Within-group analyses using Friedman’s test indicated no significant difference in the number of fixations across the three phases for either the CDA group (χ² = 2.154, p = 0.341) or the NDA group (χ² = 0.651, p = 0.722).

4.5. Fixation Duration Mean Analysis

According to the GEE analysis, there were no significant main effects of group (Wald χ² = 0.954, p = 0.329) or phase (Wald χ² = 2.694, p = 0.260) on mean fixation duration. Additionally, there was no significant interaction between group and phase (Wald χ² = 1.448, p = 0.485), as presented in Table 7 (in milliseconds).

Further analysis using the Mann–Whitney U test revealed no significant between-group differences in any of the three phases.

Similarly, within-group analysis using the Friedman test revealed no significant differences in mean fixation duration across the three phases for either the CDA group (χ² = 0.316, p = 0.854) or the NDA group (χ² = 1.000, p = 0.607). Pairwise comparisons between the three phases within each group also indicated no significant differences.

4.6. Eye Movement Hotspot Map

Table 8 presents the results of the eye-tracking analysis using OGAMA. In all experimental phases, both the CDA group and the NDA group showed markedly higher gaze concentration in regions C and D than in regions A and B (the items take the form A:B::C:D). Notably, the mediating prompt presented to the CDA group strongly attracted the participants’ attention.

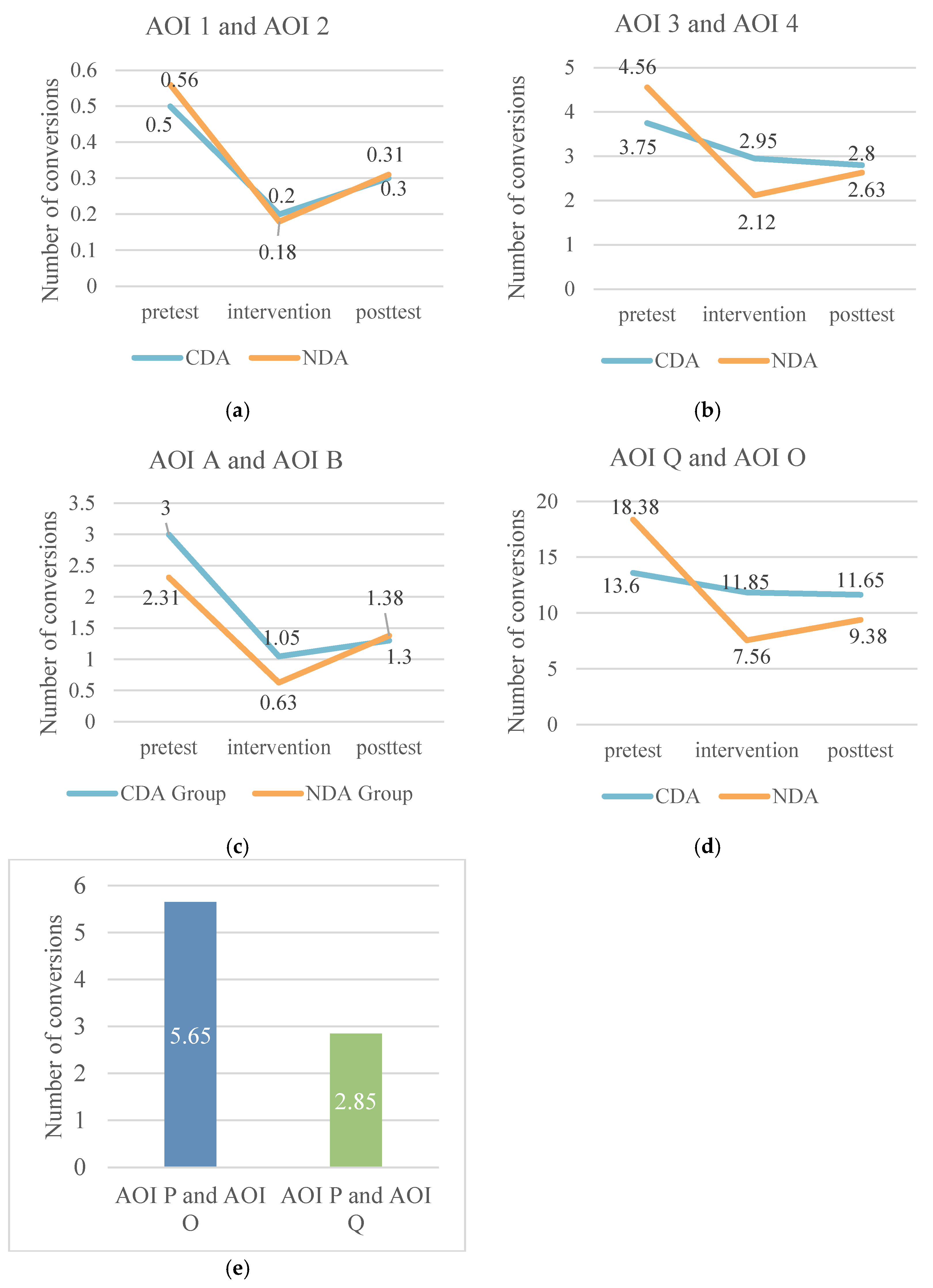

4.7. Analysis of the Number of Transitions Between Areas of Interest

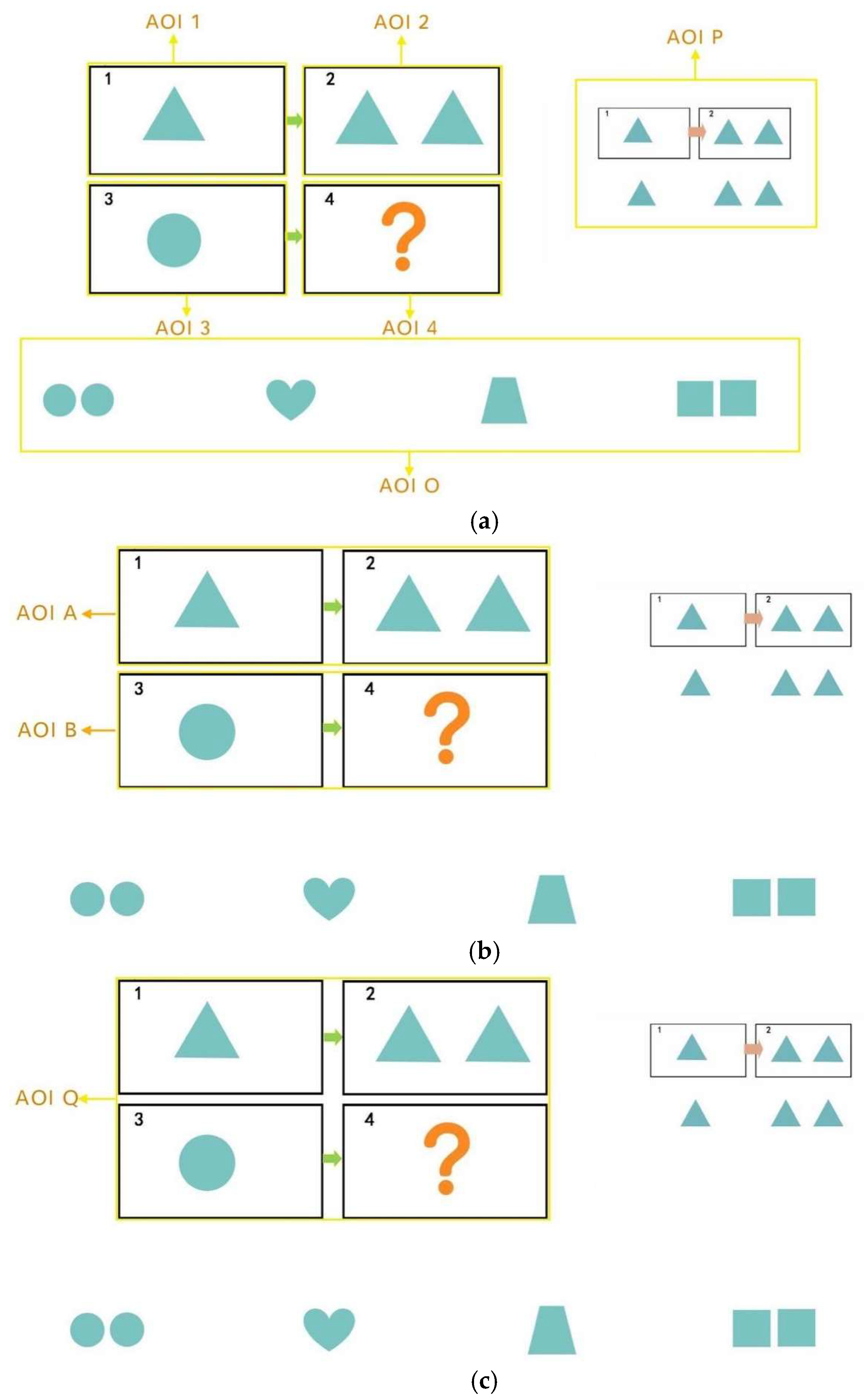

In this study, the question items A, B, C, and D (A:B::C:D) were divided into four areas of interest (AOIs): A as AOI 1, B as AOI 2, C as AOI 3, and D as AOI 4. In addition, A and B were combined into AOI A, and C and D into AOI B. We designated the entire group of question items as AOI Q, the response options as AOI O, and the prompt section as AOI P, as shown in

Figure 11.

The study compared the number of AOI transitions between the control and experimental groups, using the mean number of gaze transitions per participant so that groups of different sizes could be compared. Overall, the number of gaze transitions between AOIs 3 and 4 was generally higher than that between AOIs 1 and 2, and the number of transitions between Q and O was markedly higher than that between A and B. The number of gaze transitions decreased in both groups at the intervention stage compared with the pretest stage, with the largest decreases occurring between AOIs 1 and 2 and between A and B. At the posttest stage, transition counts generally rebounded; the exception was the CDA group, in which transitions between AOIs 3 and 4 and between Q and O continued to decrease slightly. Notably, during the intervention phase, the CDA group made considerably more gaze transitions between P and O than between P and Q. The details are shown in

Figure 12.
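The per-participant transition measure described above could be computed along the following lines. This is a minimal sketch rather than the authors' pipeline: it assumes each participant's fixations have already been labelled with an AOI name, it treats a transition as undirected (A to B counts the same as B to A), and the function names are hypothetical.

```python
from collections import Counter


def count_transitions(aoi_sequence):
    """Count undirected transitions between consecutive, different AOIs."""
    counts = Counter()
    for previous, current in zip(aoi_sequence, aoi_sequence[1:]):
        if previous != current:  # repeated fixations in one AOI are not transitions
            counts[frozenset((previous, current))] += 1
    return counts


def mean_transitions(sequences, pair):
    """Mean number of transitions for one AOI pair across participants."""
    key = frozenset(pair)
    return sum(count_transitions(seq)[key] for seq in sequences) / len(sequences)


# Made-up fixation sequences for two participants.
example = [
    ["AOI3", "AOI4", "AOI3", "AOI1"],
    ["AOI1", "AOI2", "AOI2", "AOI1"],
]
```

Dividing by the number of participants is what makes the counts comparable between groups of unequal size.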

4.8. Correlation Analysis of Time and Test Scores

This study adopted the calculation method of Izadi et al. to determine the actual score, mediator score, and gain score (

Izadi et al., 2023), where the gain score is the difference between the mediator score and the actual score.

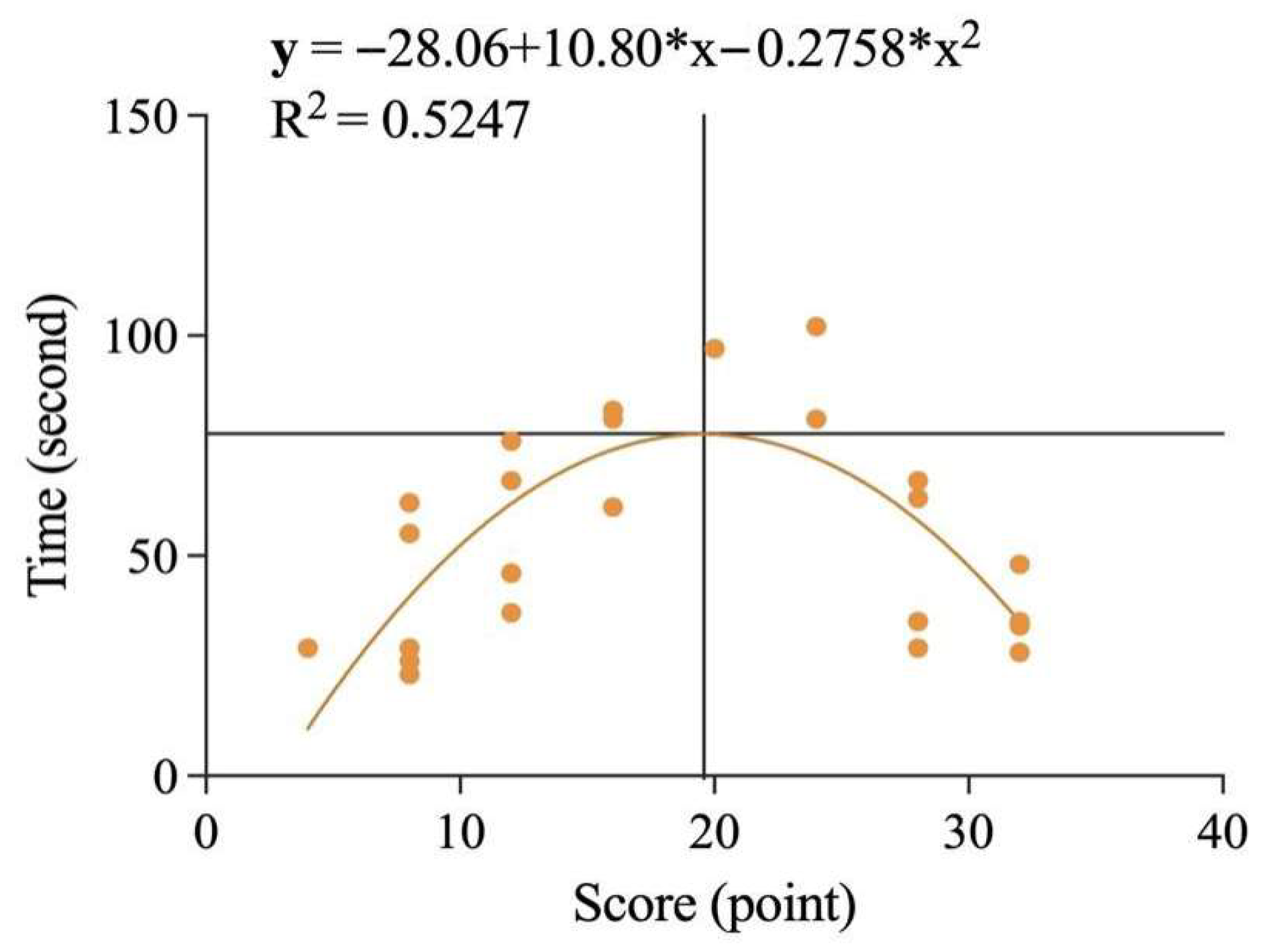

After the Shapiro–Wilk test revealed that the data did not conform to a normal distribution, this study conducted Spearman’s correlation analysis between the time spent and the scores of the two groups of students at the pretest, intervention, and posttest stages. The results showed that there was no significant correlation (

p > 0.05) between time spent and scores for either the CDA or the NDA group at the pretest or posttest stage. However, during the intervention phase, scores and response times were related in both groups, although the form of the relationship differed. As shown in

Figure 13, in the CDA group, there was a significant negative correlation between score and time spent (rs = −0.4738,

p < 0.0001), indicating that the higher the score, the shorter the reaction time and vice versa. For the NDA group, although direct analysis showed no significant correlation between scores and response times, we found when plotting the data, as shown in

Figure 14, that the fit was good after removing outliers and applying a second-order polynomial fit (y = −28.06 + 10.80x − 0.2758x², R² = 0.5247). Based on this fit, score and reaction time were positively related when children’s scores were below 19.58 out of 32 and negatively related when their scores were above 19.58.
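The threshold of 19.58 is the turning point of the fitted parabola, which can be verified directly from the reported coefficients. The sketch below assumes, as the surrounding text implies, that x is the score and y is the response time; the function names are illustrative.

```python
# Coefficients of the reported fit y = A + B*x + C*x**2.
A, B, C = -28.06, 10.80, -0.2758


def fitted_time(score):
    """Fitted response time for a given score."""
    return A + B * score + C * score ** 2


def turning_point():
    """Score at which the fitted curve peaks: dy/dx = B + 2*C*x = 0."""
    return -B / (2 * C)
```

Because the quadratic coefficient is negative, the curve rises up to the turning point and falls beyond it, which is the pattern the text describes.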

4.9. Correlation Analysis Between Actual Scores and Mediator Scores

A Spearman correlation analysis was conducted to examine the relationship between actual scores and mediator scores, and the results showed a significant positive correlation between the two (rs = 0.9066, p < 0.0001). As shown in

Figure 15, the higher the actual score, the higher the mediator score.

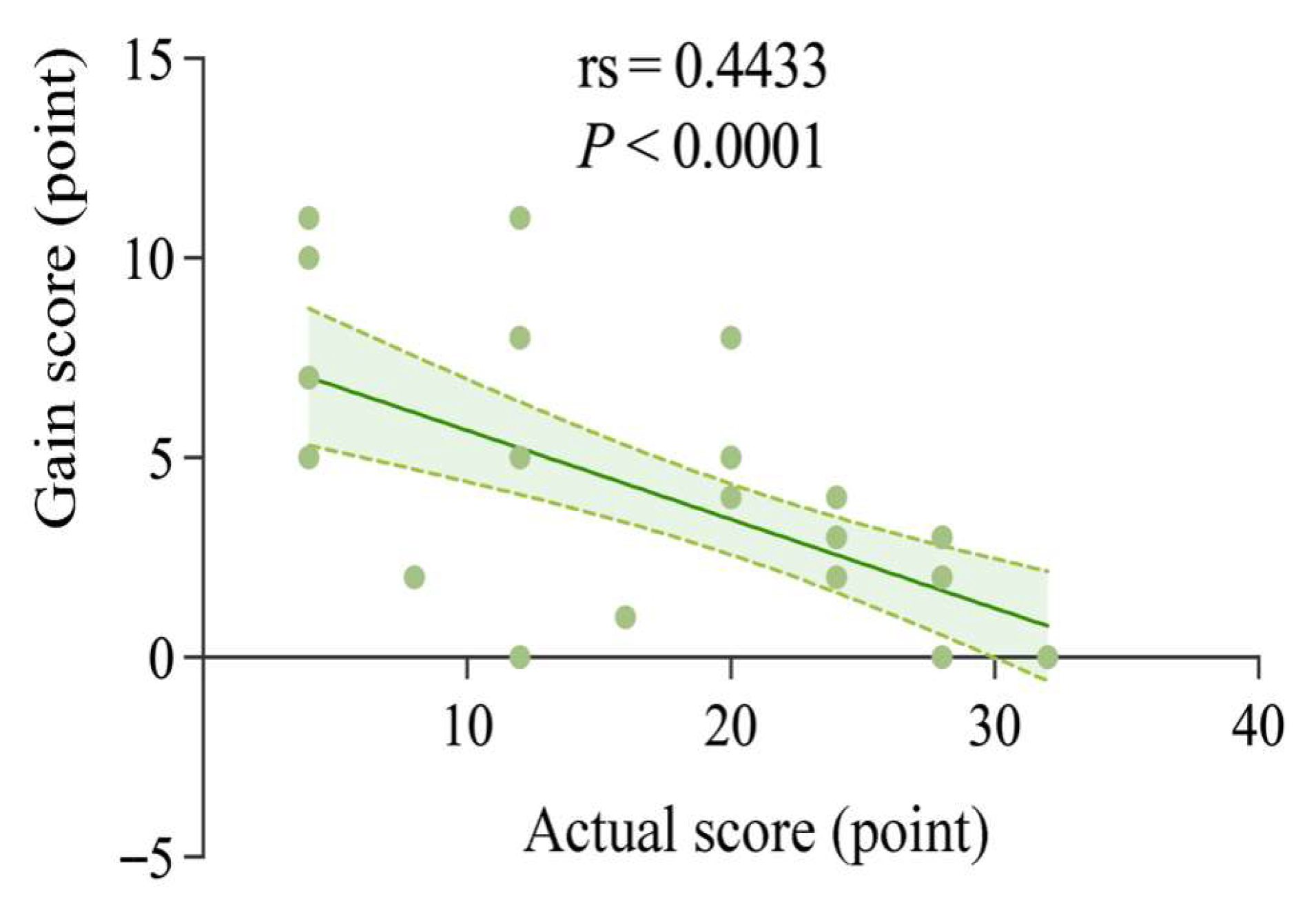

4.10. Correlation Analysis of Actual Scores and Gain Scores

Spearman’s correlation analysis of the relationship between the actual and gain scores showed a significant negative correlation (rs = −0.4433, p < 0.0001). As shown in

Figure 16, the higher the actual score, the lower the gain score; conversely, the higher the gain score, the lower the actual score.
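To make the gain score and the rank correlation concrete, the sketch below computes gain scores as mediator score minus actual score and then a Spearman coefficient as the Pearson correlation of average ranks. The data are invented for illustration and are not the study's data; the function names are hypothetical, and the study itself used statistical software rather than this code.

```python
def average_ranks(values):
    """1-based ranks, with tied values sharing the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # mean of ranks i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = sum((a - mean_x) ** 2 for a in x) ** 0.5
    sd_y = sum((b - mean_y) ** 2 for b in y) ** 0.5
    return cov / (sd_x * sd_y)


def spearman(x, y):
    """Spearman rank correlation: Pearson correlation of the average ranks."""
    return pearson(average_ranks(x), average_ranks(y))


# Invented scores for five children (maximum 32).
actual = [4, 8, 12, 20, 28]
mediator = [20, 22, 24, 28, 32]
gain = [m - a for a, m in zip(actual, mediator)]
rho_actual_gain = spearman(actual, gain)
```

In this invented example, children with higher actual scores have less room to improve before reaching the 32-point ceiling, so the coefficient between actual and gain scores is negative.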

7. Conclusions and Implications

This study examined the effects of computerized dynamic assessment on the graphical analogical reasoning ability of children with autism. The results show that computerized dynamic assessment is effective in stimulating the analogical reasoning potential of children with autism, but the effect was short-lived and did not persist at posttest. At the same initial ability level, the presence or absence of a prompt caused children to show different learning potentials; children with lower initial levels benefited more from computerized dynamic assessment. At the same time, there were differences in the cognitive patterns of children at different initial levels, with children at higher initial levels recognizing graphical information more quickly and accurately and achieving better results, while children at lower initial levels needed more effort to improve their graphical analogical reasoning. Overall, these results help to accurately identify individual differences in children with autism and provide a basis for individualized education.

In future educational practice, CDA can be adopted for both cognitive learning and non-cognitive learning such as social skills and emotion recognition. When selecting mediators, engaging devices such as robots can be chosen in addition to teachers to enhance the learning interest of children with autism (

Resing et al., 2020b). When designing the prompt content, it is recommended that designers first prepare a basic version. During subsequent one-on-one personalized training, personalized plans can be developed according to the children’s preferences and needs (

Naeini & Duvall, 2012). In addition, considering the cognitive peculiarities of children with autism, they may need more levels of prompts (

Missiuna & Samuels, 1989). When presenting prompts, multimedia such as audio and video can be used. Most learners prefer visual prompts; for example, in scenarios such as social interactions, VR technology can support the presentation (

Bakhoda & Shabani, 2019). In terms of intervention time, an intervention plan with a semester as the unit can be formulated, since significant learning outcomes for children require a relatively long-term intervention. In conclusion, CDA has broad application prospects and important practical value in the education of children with autism. Reasonable design can help them achieve better development in cognitive and non-cognitive fields, gradually integrate into society, and embark on a better future.