Three-Dimensional Intelligent Understanding and Preventive Conservation Prediction for Linear Cultural Heritage

Abstract

1. Introduction

2. Low-Altitude Oblique Image-Based Intelligent Gaussian Splatting Generation

2.1. Principles of Gaussian Splatting for Linear Cultural Heritage Scenes

- (1) Source Point Generation and Influence Control

- (2) Multi-source Influence Accumulation Modeling

- (3) Region-of-Interest (ROI) Density Control Strategy

2.2. Intelligent Generation of 3D Gaussian Splatting Models

2.3. Model Evaluation, Comparison, and Applicability Analysis

2.3.1. Evaluation of Preservation Accuracy and Cultural Feature Fidelity

Evaluation of Preservation Accuracy

Evaluation of Cultural Feature Fidelity

2.3.2. Performance Comparison and Resource Consumption

3. Intelligent Understanding Based on 3D Gaussian Splatting Model

3.1. AHLLM-3D Network Design for Linear Cultural Heritage Understanding

3.2. Multimodal Understanding and Generation of the Great Wall Heritage Based on 3DGS

3.2.1. Dataset Construction

3.2.2. AHLLM-3D Model Distributed Training

3.3. Experimental Results and Analysis

3.3.1. Staged Training Metrics Analysis and Parameter Optimization Process

3.3.2. Evaluation of Command Comprehension Effectiveness and Verification of Semantic Reasoning Ability

4. Intelligent Understanding Based on 3D Gaussian Splatting Model

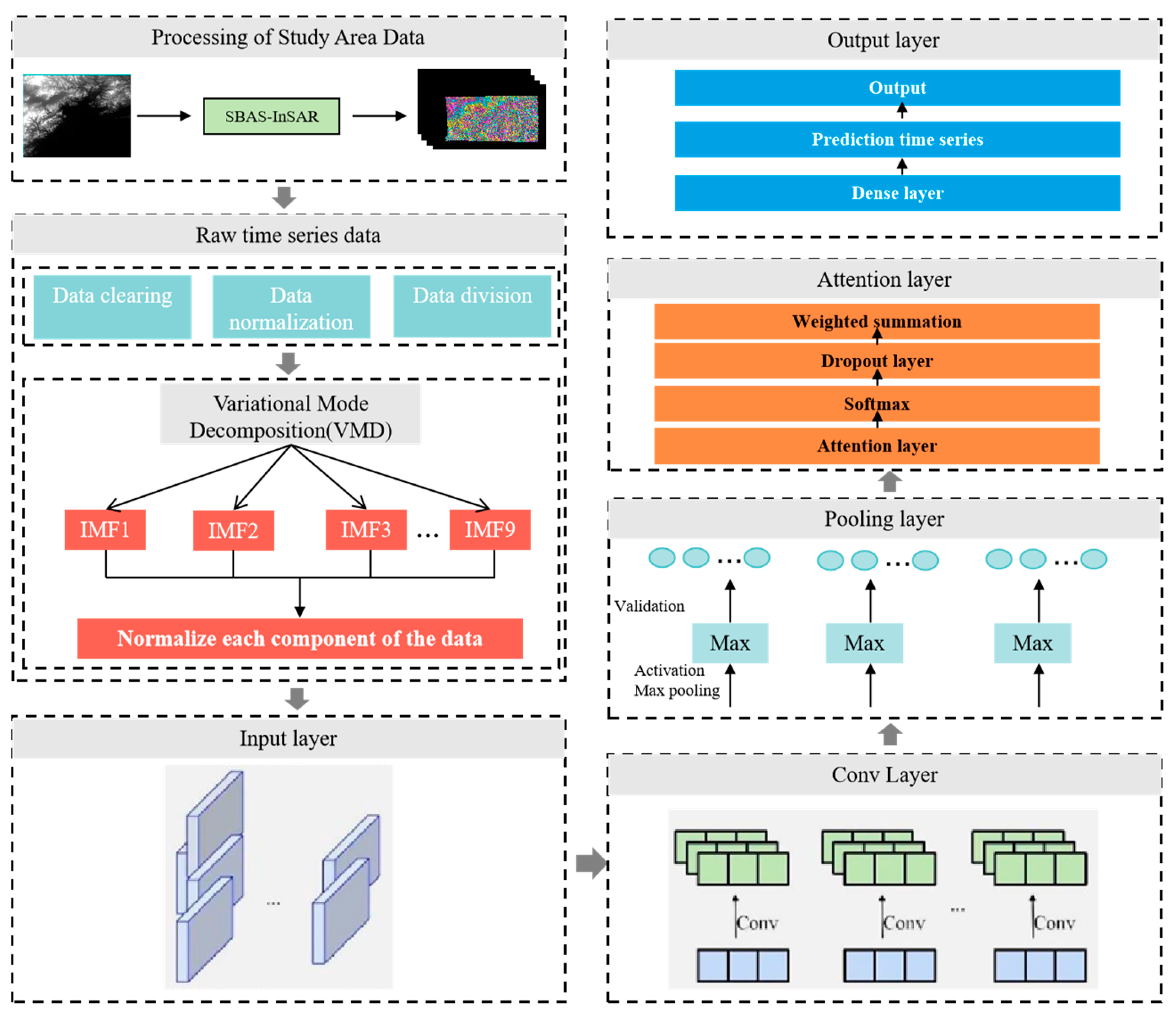

4.1. Research Methods and Technical Routes for Intelligent Forecasting

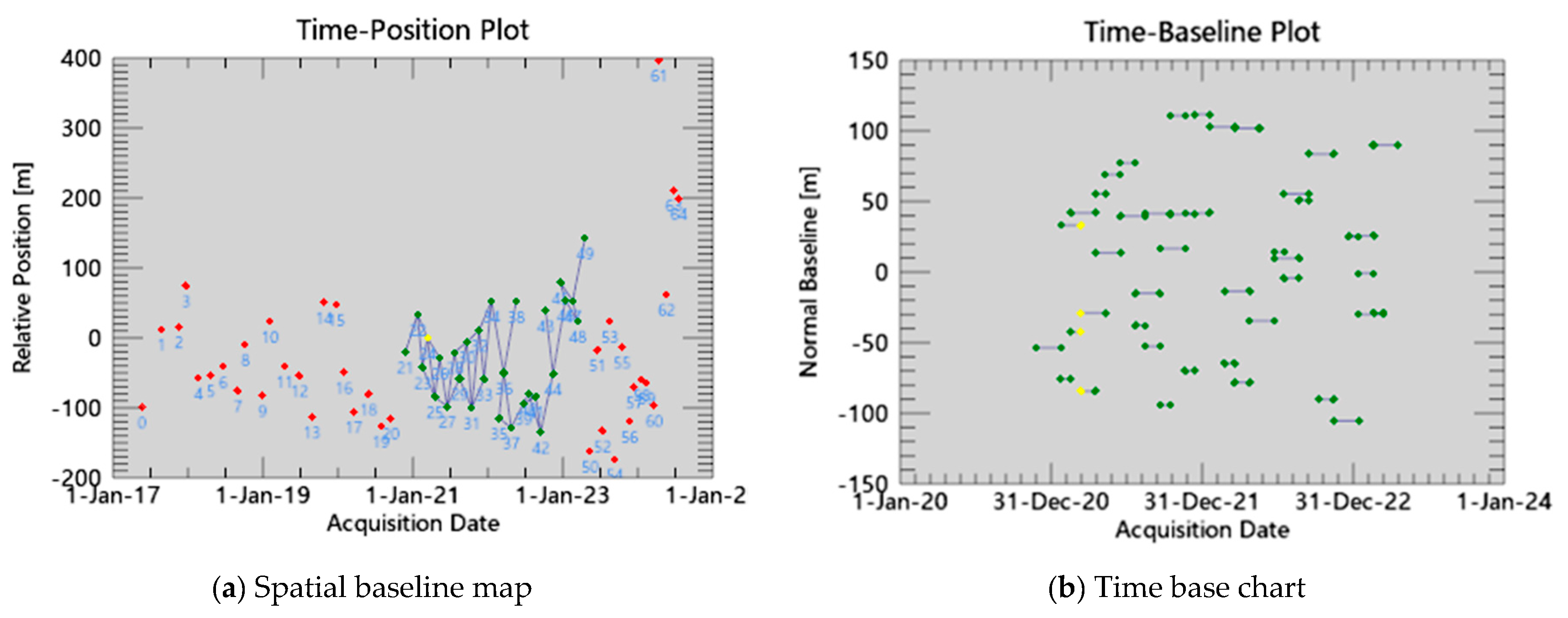

4.1.1. Data Acquisition for the Study Area

4.1.2. Technical Lines of Research

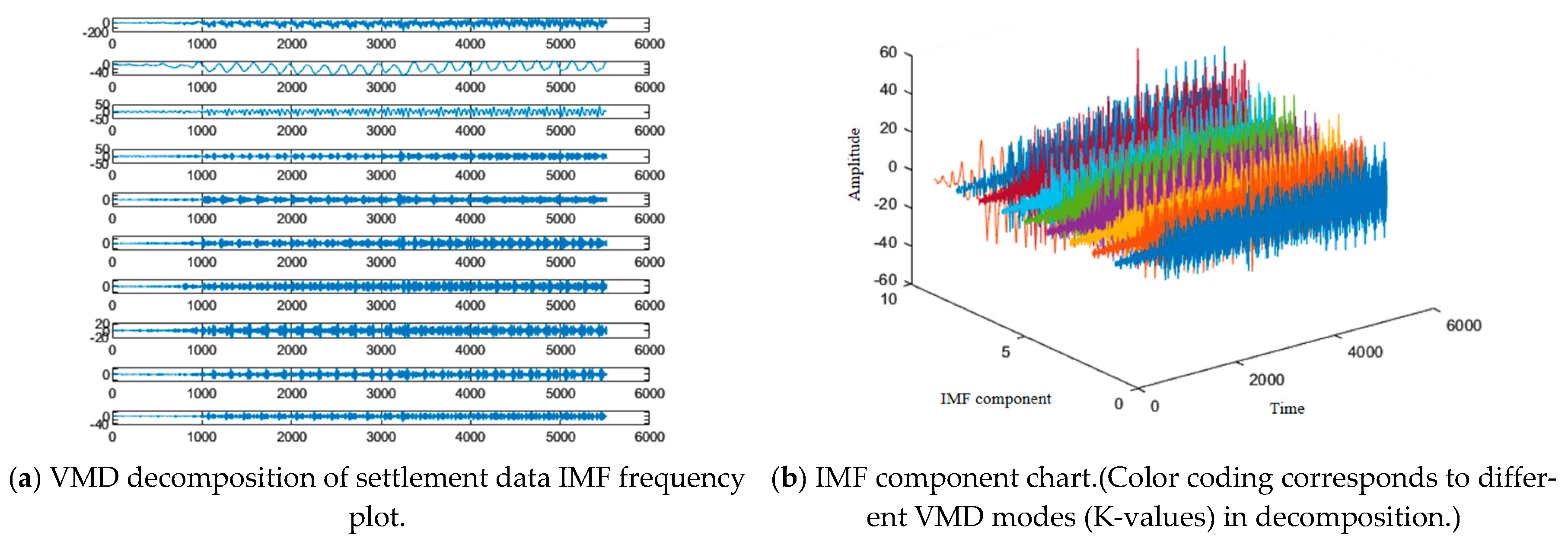

4.1.3. Principles of the Snake Optimization Network Prediction Model Based on Variational Mode Decomposition

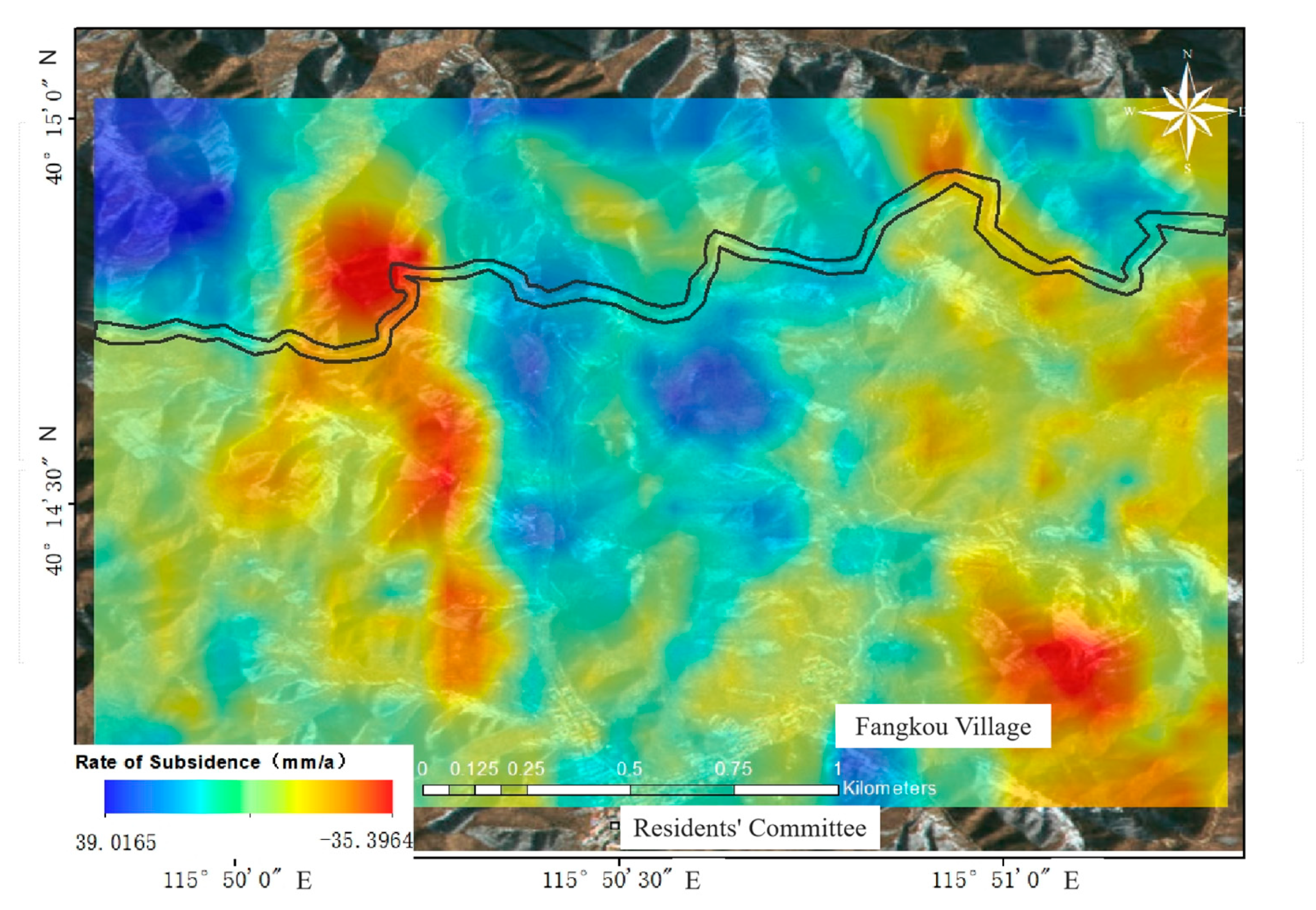

4.2. Synthetic Aperture Radar (SAR) Monitoring Time Series Analysis

4.3. Linear Cultural Heritage Deformation Displacement Prediction

4.3.1. Prediction Model Design for Variational Mode Decomposition Networks Incorporating the Snake Optimization Algorithm

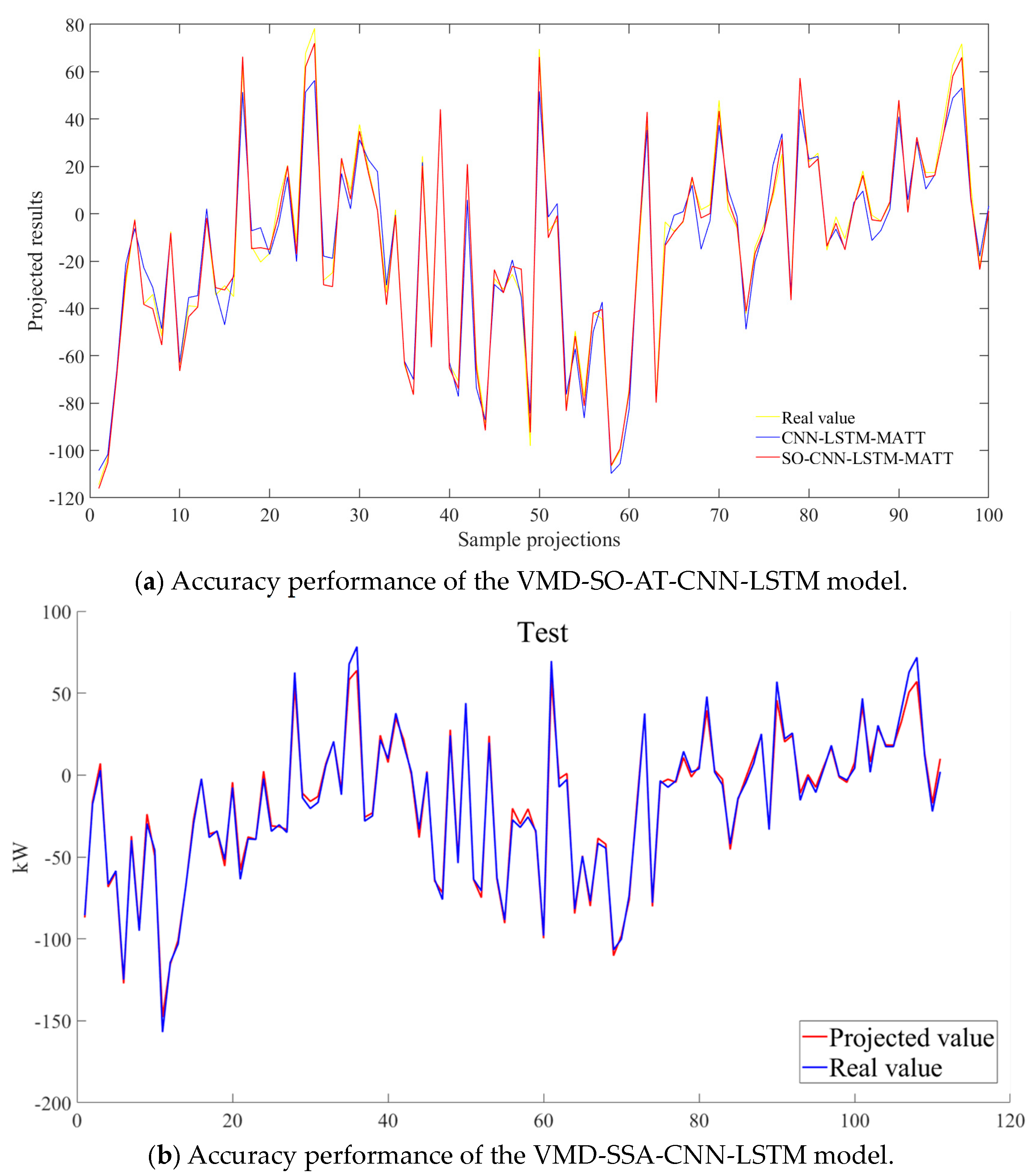

4.3.2. Forecast Results and Analysis

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest


| Data Source | Submodule | Specific Use | Processing Method |
|---|---|---|---|
| InSAR | Deformation Monitoring and Prediction | Provides large-scale surface deformation data | Generates time-series deformation information using SBAS-InSAR technology for large-scale regional monitoring. |
| UAV | Low-altitude Oblique Photogrammetry and Modeling | Provides high-resolution 3D reconstruction models | Uses SfM and MVS technologies for feature extraction, sparse point cloud generation, and texture mapping. |
| Point Cloud | 3D Modeling and Semantic Analysis | Provides 3D geometric information of cultural heritage | Combines high-density sampling with 3DGS technology; integrates point cloud data with large language models for semantic understanding and spatial analysis. |
| Multimodal Data | Intelligent Understanding and Generation | Conducts semantic understanding and multimodal data fusion for cultural heritage | Combines 3DGS with the AHLLM-3D model; integrates point cloud data and text for dual-level annotation and semantic analysis. |
| SBAS-InSAR | Deformation Monitoring and Trend Analysis | Monitors ground subsidence of linear cultural heritage | Uses SBAS-InSAR technology for high-precision surface subsidence prediction and analysis. |

| Metric | 3DGS | PhotoScan |
|---|---|---|
| Points/Faces | 20.48 M Gaussians | 145 M dense points, 28.98 M faces |
| Model Size | 2.6 GB | 3.2 GB |
| Rendering Speed | 32 FPS (4090) | No real-time rendering |
| Total Modeling Time | 3 d 15 h (4090) / 2 h 32 min (H800) | >5 days |
| Rendering Type | Real time; interactive | Static, non-interactive |
| Best Use Case | Visualization + AI | Static documentation and measurement |

| Model | Iterations | | | | Model Size (GB) | GPU Use | Time |
|---|---|---|---|---|---|---|---|
| 3DGS | 30k | 22.91 | 0.650 | 0.357 | 3.48 | 74.34 | 2 h 19 min |
| CityGaussian | 30k | 22.88 | 0.647 | 0.360 | 3.58 | 76.89 | 2 h 39 min |
| gsplat | 30k | 21.10 | 0.476 | 0.718 | 1.92 | 55.35 | 2 h 17 min |
| Ours | 30k | 22.94 | 0.655 | 0.357 | 3.57 | 78.86 | 2 h 32 min |

| k | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | 11 |
|---|---|---|---|---|---|---|---|---|---|---|---|
| k = 3 | 0.0044 | 0.0716 | 0.1691 | | | | | | | | |
| k = 4 | 0.0023 | 0.0272 | 0.0820 | 0.1959 | | | | | | | |
| k = 5 | 0.0021 | 0.0247 | 0.0774 | 0.1470 | 0.2164 | | | | | | |
| k = 6 | 0.0020 | 0.0238 | 0.0758 | 0.1356 | 0.1929 | 0.3144 | | | | | |
| k = 7 | 0.0029 | 0.0226 | 0.0728 | 0.1141 | 0.1558 | 0.2197 | 0.3755 | | | | |
| k = 8 | 0.0019 | 0.0225 | 0.0706 | 0.1025 | 0.1509 | 0.2044 | 0.2676 | 0.3802 | | | |
| k = 9 | 0.0019 | 0.0226 | 0.0703 | 0.1013 | 0.1499 | 0.2006 | 0.2538 | 0.3241 | 0.3877 | | |
| k = 10 | 0.0019 | 0.0225 | 0.0700 | 0.0998 | 0.1485 | 0.1935 | 0.2250 | 0.2675 | 0.3395 | 0.3913 | |
| k = 11 | 0.0019 | 0.0225 | 0.0697 | 0.0982 | 0.1470 | 0.1855 | 0.2062 | 0.2345 | 0.2720 | 0.3922 | 0.3471 |
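
One common heuristic for reading a table like the one above is to watch the highest value in each row as k grows and stop once it no longer changes much. The sketch below illustrates that heuristic on the tabulated values. It is only an assumption about how such a table might be used: the function name `first_stable_k` and the tolerance are hypothetical, and the value it returns is not necessarily the k adopted in the study.

```python
# Values per candidate k, copied from the table above.
CENTER_FREQS = {
    3: [0.0044, 0.0716, 0.1691],
    4: [0.0023, 0.0272, 0.0820, 0.1959],
    5: [0.0021, 0.0247, 0.0774, 0.1470, 0.2164],
    6: [0.0020, 0.0238, 0.0758, 0.1356, 0.1929, 0.3144],
    7: [0.0029, 0.0226, 0.0728, 0.1141, 0.1558, 0.2197, 0.3755],
    8: [0.0019, 0.0225, 0.0706, 0.1025, 0.1509, 0.2044, 0.2676, 0.3802],
    9: [0.0019, 0.0226, 0.0703, 0.1013, 0.1499, 0.2006, 0.2538, 0.3241,
        0.3877],
    10: [0.0019, 0.0225, 0.0700, 0.0998, 0.1485, 0.1935, 0.2250, 0.2675,
         0.3395, 0.3913],
    11: [0.0019, 0.0225, 0.0697, 0.0982, 0.1470, 0.1855, 0.2062, 0.2345,
         0.2720, 0.3922, 0.3471],
}


def first_stable_k(center_freqs, tol):
    """Return the smallest k whose highest value differs from that of the
    previous candidate k by less than `tol`, or None if no k qualifies.

    Hypothetical helper: the stabilization rule and `tol` are illustrative
    assumptions, not the selection procedure reported by the authors.
    """
    ks = sorted(center_freqs)
    for prev_k, k in zip(ks, ks[1:]):
        change = abs(max(center_freqs[k]) - max(center_freqs[prev_k]))
        if change < tol:
            return k
    return None


if __name__ == "__main__":
    # Illustrative tolerance only.
    print(first_stable_k(CENTER_FREQS, tol=0.01))
```

A tighter tolerance pushes the selected k higher or yields no candidate at all, so the threshold should be chosen together with a check of the decomposition residual rather than in isolation.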

| Predictive Model | RMSE (mm) | MAE (mm) | R² |
|---|---|---|---|
| VMD-SO-AT-CNN-LSTM (ours) | 3.5209 | 2.7584 | 0.9932 |
| VMD-SSA-CNN-LSTM | 9.5036 | 4.3827 | 0.9670 |
| CNN-LSTM-MATT | 8.4487 | 6.8381 | 0.9610 |
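
For readers who want to reproduce the three scores in the table above on their own series, the following is a minimal sketch using the standard definitions of RMSE, MAE, and the coefficient of determination. The function name `regression_metrics` and the sample displacement values are hypothetical; the source does not state how the authors computed their scores.

```python
import math


def regression_metrics(observed, predicted):
    """Return (RMSE, MAE, R^2) for two equal-length sequences.

    Standard textbook definitions; inputs are assumed to share one unit
    (for example, millimetres of displacement).
    """
    if len(observed) != len(predicted) or not observed:
        raise ValueError("inputs must be non-empty and of equal length")

    n = len(observed)
    errors = [p - o for o, p in zip(observed, predicted)]

    ss_res = sum(e * e for e in errors)          # residual sum of squares
    rmse = math.sqrt(ss_res / n)
    mae = sum(abs(e) for e in errors) / n

    mean_obs = sum(observed) / n
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)
    if ss_tot == 0:
        raise ValueError("R^2 is undefined for constant observations")
    r2 = 1.0 - ss_res / ss_tot

    return rmse, mae, r2


if __name__ == "__main__":
    # Made-up displacement values (mm), for illustration only.
    obs = [-1.0, -2.5, -4.0, -6.5, -8.0]
    pred = [-1.2, -2.4, -4.3, -6.1, -8.2]
    print(regression_metrics(obs, pred))
```

RMSE penalizes large individual errors more strongly than MAE, which is why a model can rank differently on the two columns.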

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Wang, R.; Guo, M.; Zhang, Y.; Chen, J.; Wei, Y.; Zhu, L. Three-Dimensional Intelligent Understanding and Preventive Conservation Prediction for Linear Cultural Heritage. Buildings 2025, 15, 2827. https://doi.org/10.3390/buildings15162827
