Building Envelope Thermal Anomaly Detection Using an Integrated Vision-Based Technique and Semantic Segmentation

Abstract

1. Introduction

1.1. Thermal Anomaly Detection

1.2. Semantic Segmentation

2. Research Objectives

- To collect visible and thermal imagery of a case study building using UAVs and to implement a deep learning–based segmentation approach that distinguishes between envelope components prior to anomaly detection.

- To compare the detection accuracy of workflows with and without semantic segmentation using standard evaluation metrics.

- To assess the potential of this workflow for scalable, component-level diagnostics in building envelope evaluations (e.g., pre- and post-retrofit evaluation).

The main contributions of this study are as follows:

- An automated vision-based workflow was developed for building envelope thermal anomaly detection using visible and thermal imagery.

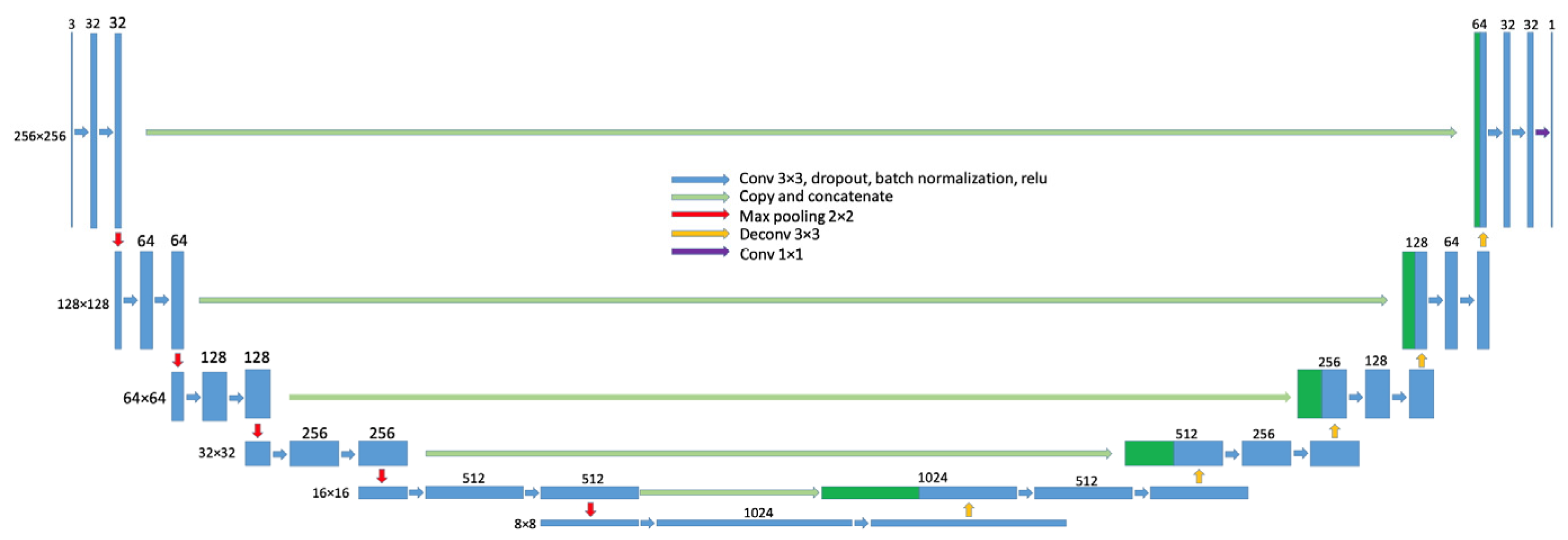

- A deep learning–based semantic segmentation method (U-Net) was integrated into the workflow to isolate building envelope components (e.g., walls and windows).

- The impact of segmentation on detection accuracy was quantitatively assessed using precision, recall, F1 score, and Intersection over Union (IoU), with a significant improvement observed when component-level segmentation was applied.

- A drone-based case study was conducted to validate the framework.

- The study demonstrated that segmented anomaly detection achieved higher accuracy than the baseline workflow.

- The work offers insight into challenges related to training data and thermal imaging conditions and outlines considerations for future large-scale applications.

3. Materials and Methods

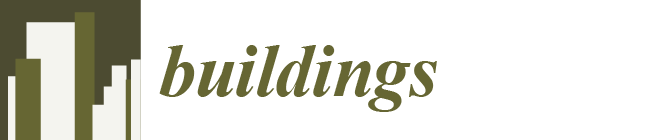

3.1. Flight Path Design and Data Collection

3.2. Thermal Anomaly Detection Approach

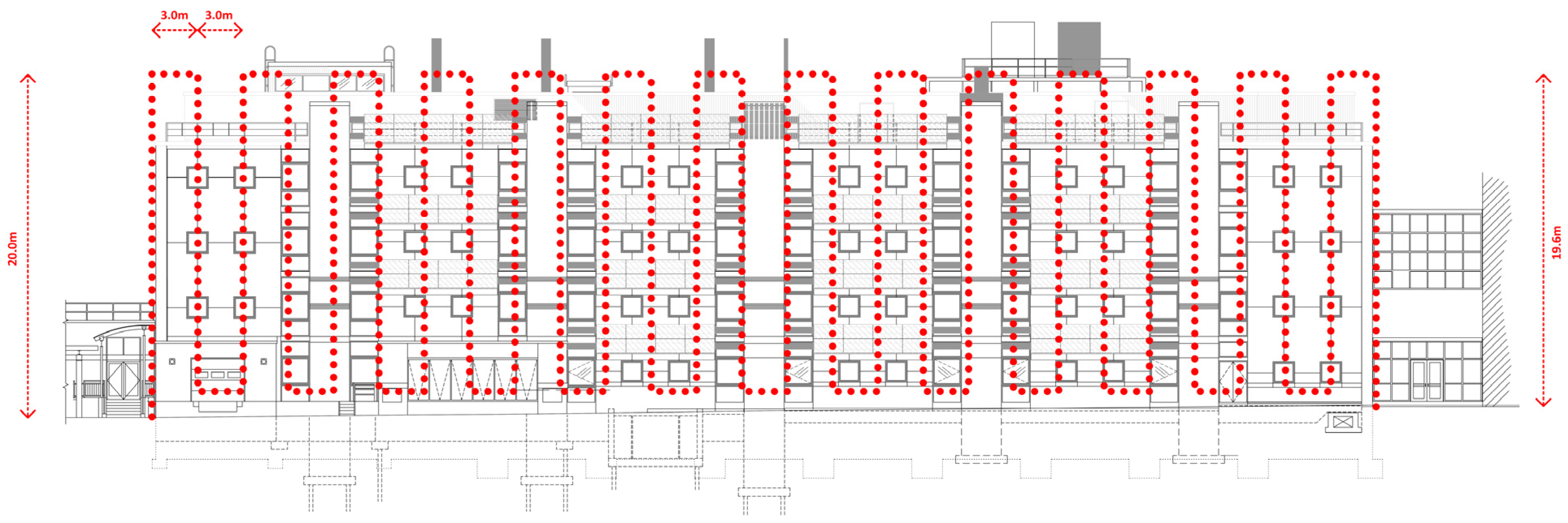

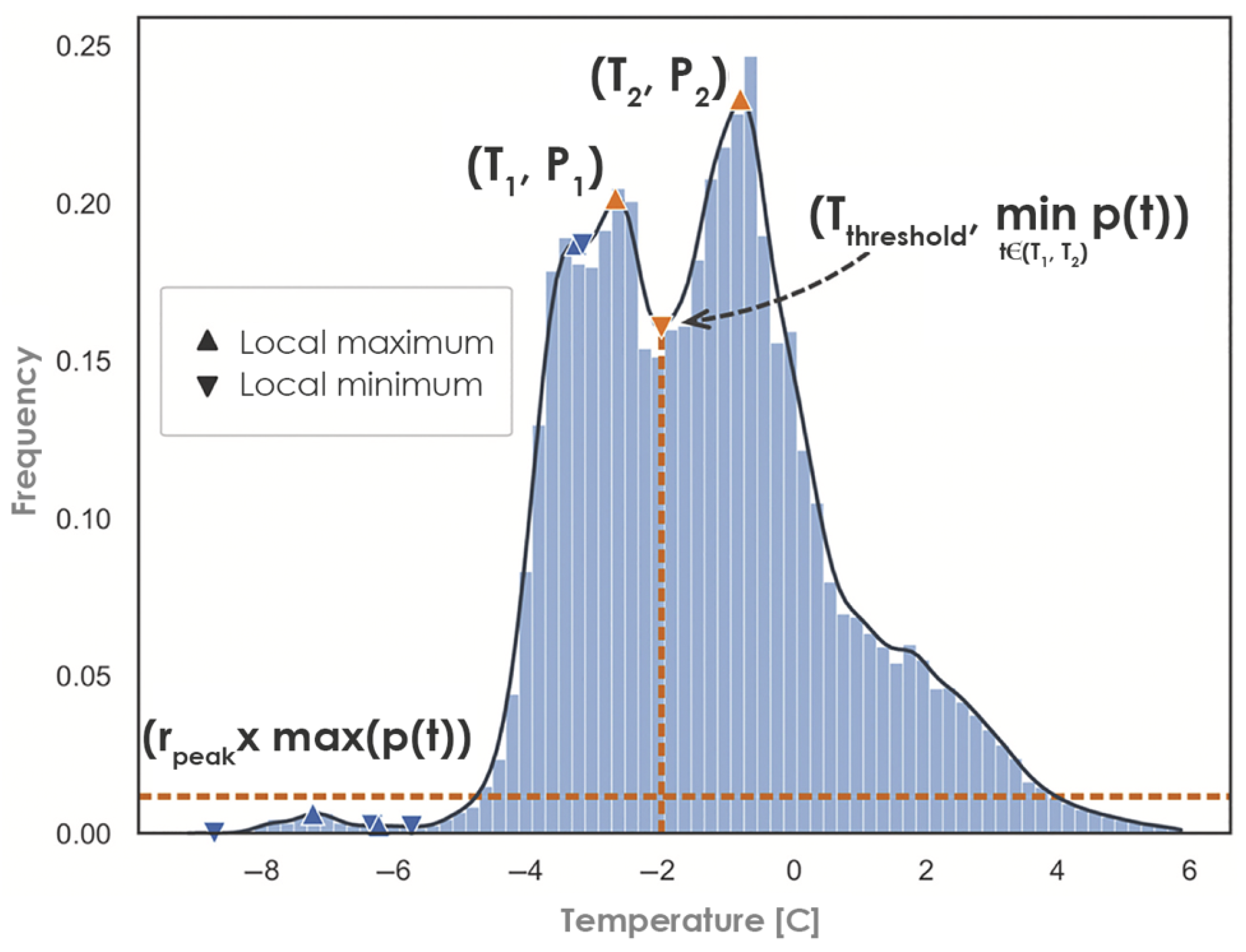

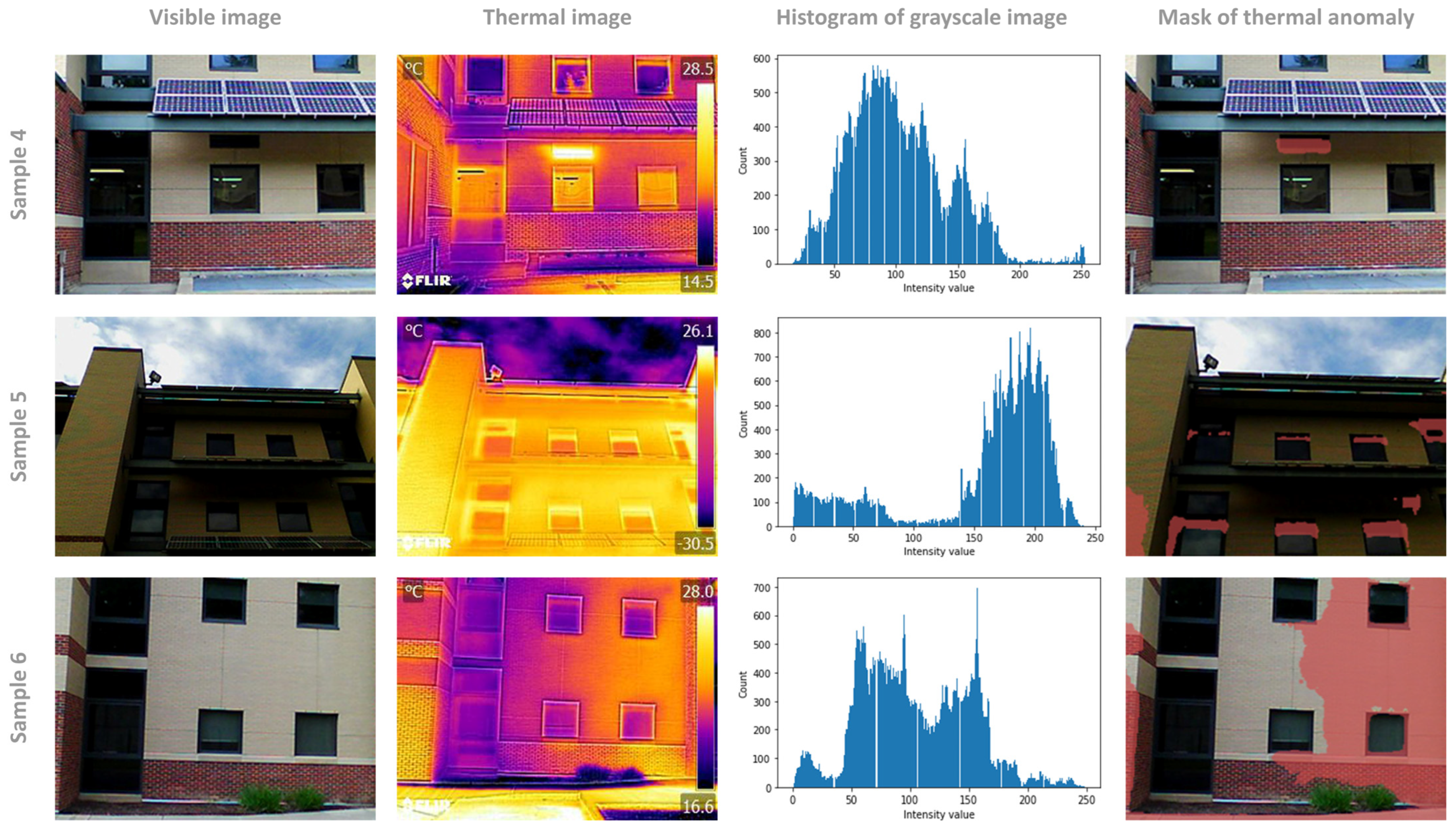

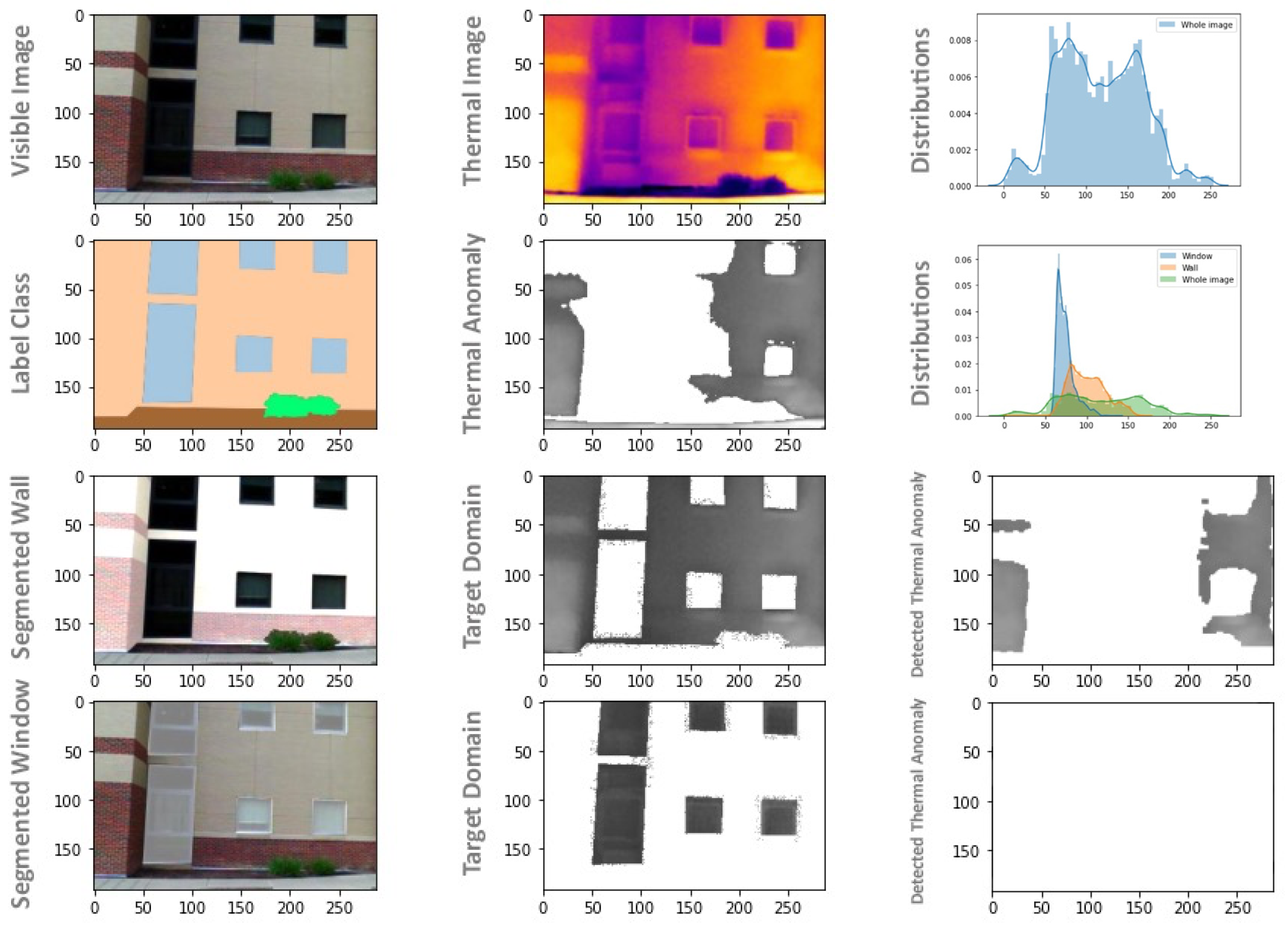

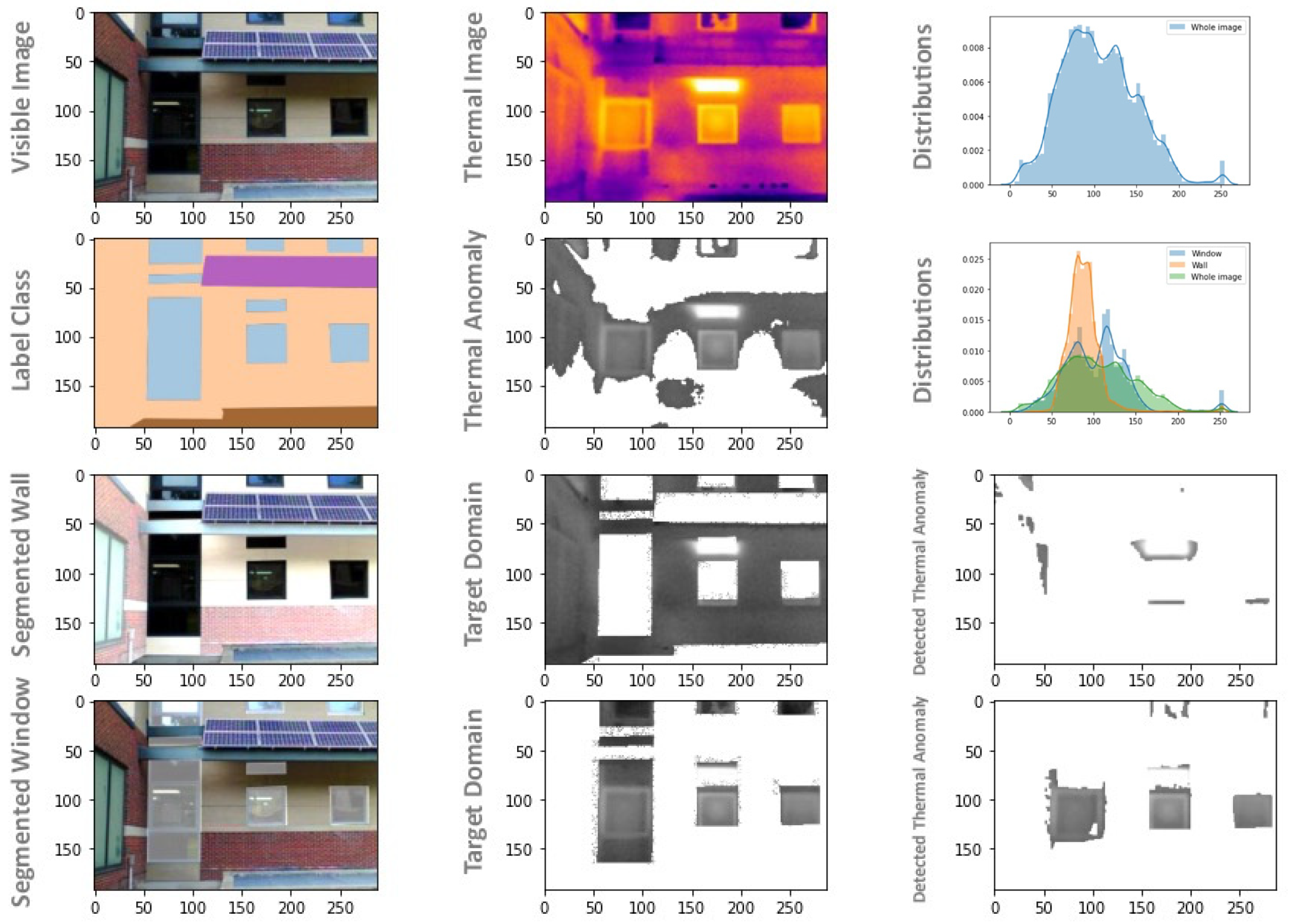

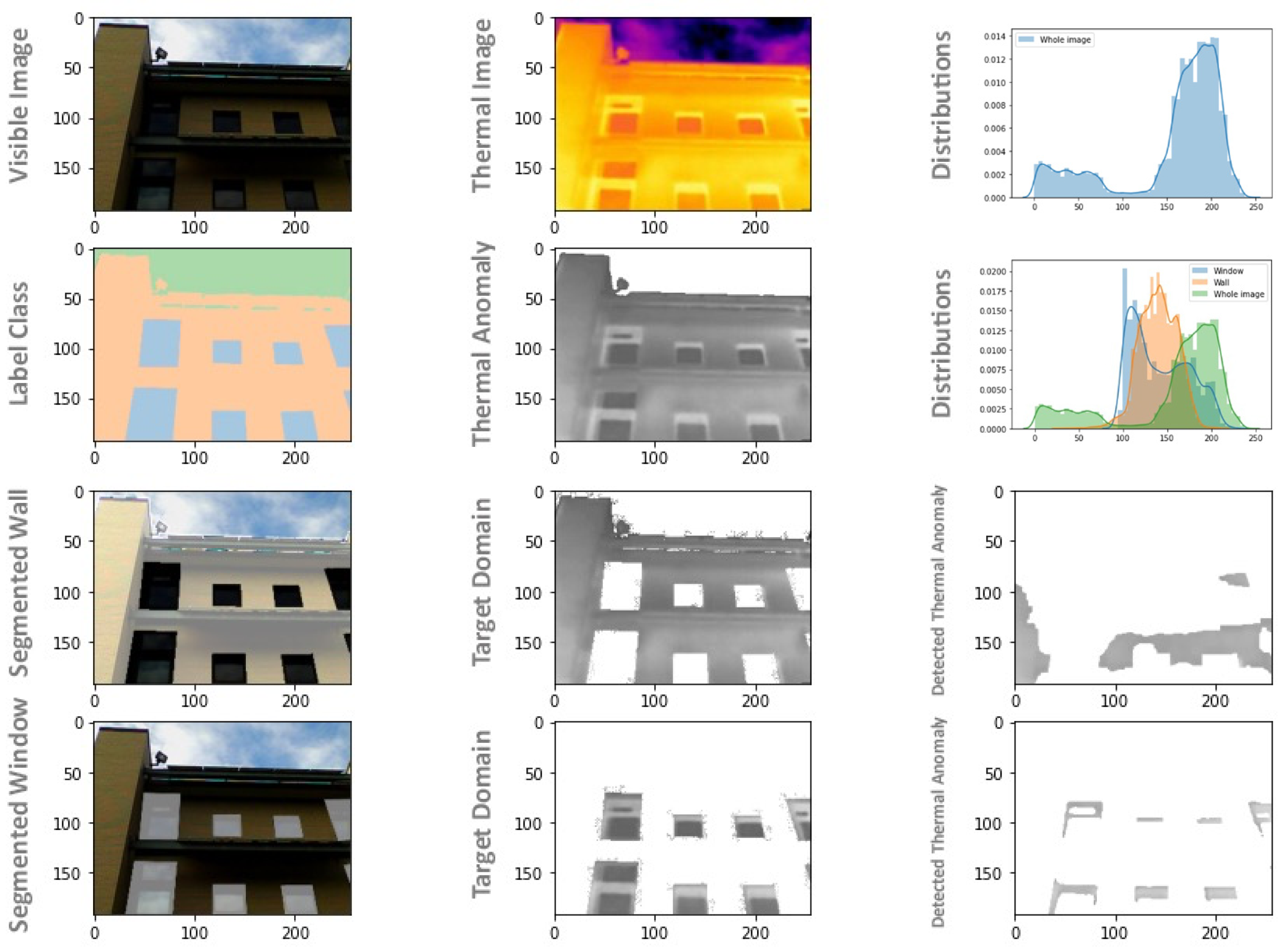

3.2.1. A Vision-Based Workflow for Thermal Anomaly Detection Based on Whole Image

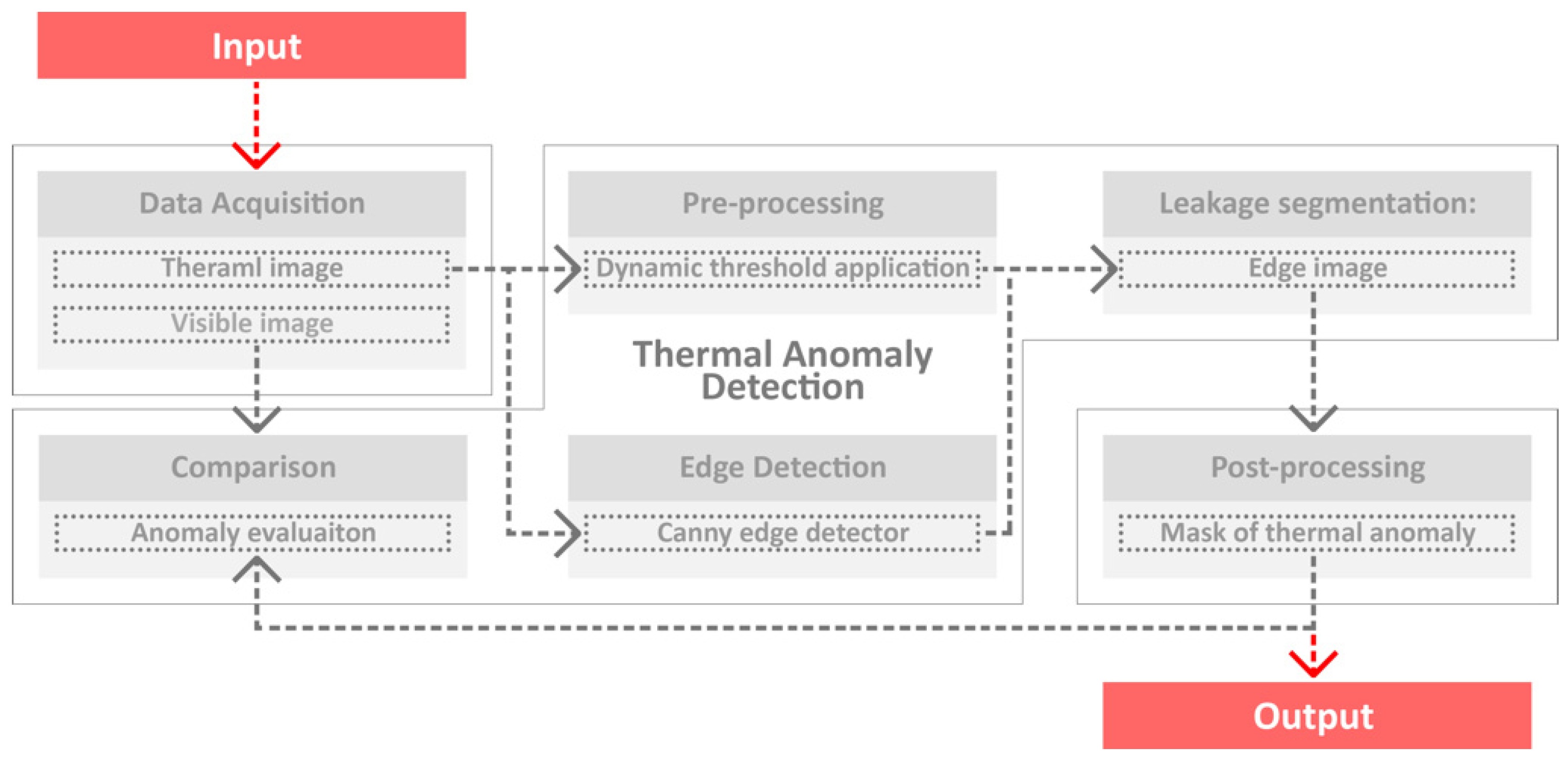

3.2.2. Thermal Anomaly Detection Based on Segmented Building Envelope Images

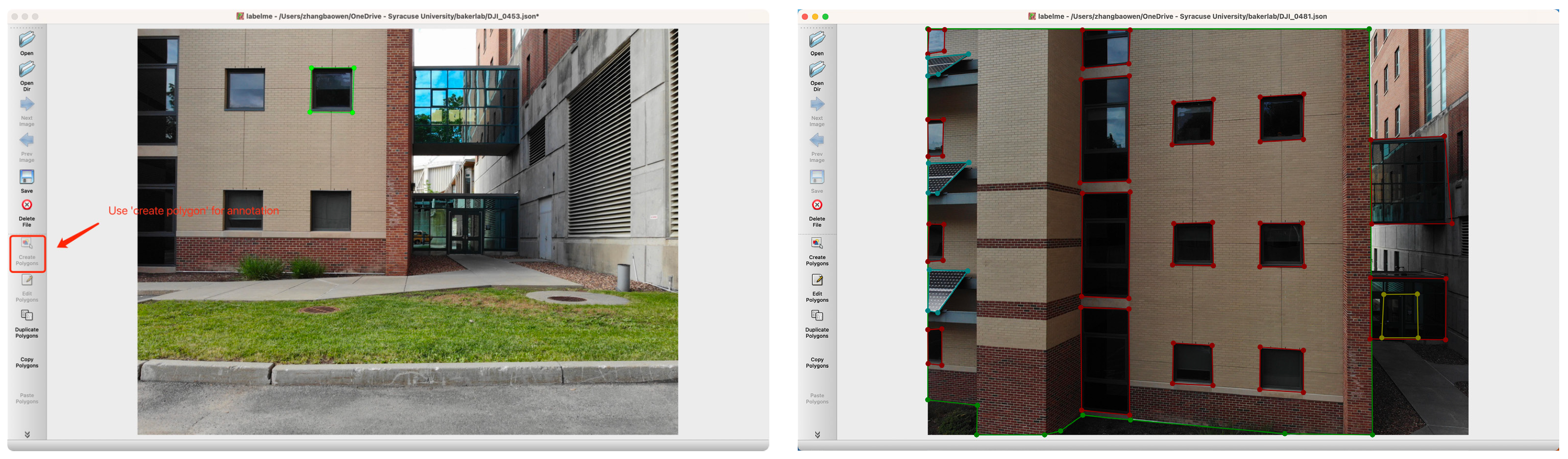

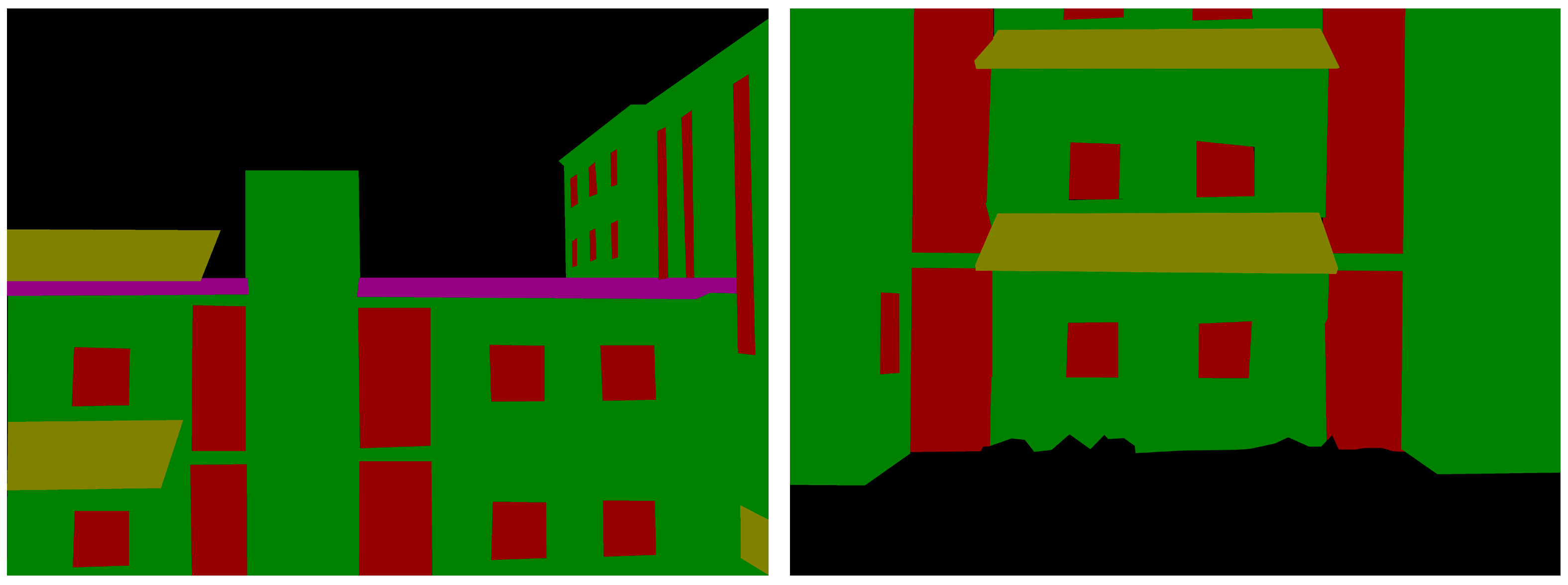
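The idea behind this subsection, detecting anomalies inside each segmented envelope component rather than over the whole image, can be illustrated with a minimal sketch. The detection rule used in the study is not given in this text, so everything below is an assumption for illustration only: the label ids (`WALL`, `WINDOW`), the function name `detect_anomalies_per_component`, and the simple rule of flagging pixels that deviate from their component mean by more than `k` standard deviations.

```python
import numpy as np

# Hypothetical label ids for the segmentation mask (assumed, not from the paper).
BACKGROUND, WALL, WINDOW = 0, 1, 2


def detect_anomalies_per_component(thermal, labels, component_ids=(WALL, WINDOW), k=2.0):
    """Return one boolean anomaly mask per component id.

    A pixel is flagged when its temperature deviates from the mean of its own
    component by more than k standard deviations (an assumed, simple rule).
    """
    thermal = np.asarray(thermal, dtype=float)
    labels = np.asarray(labels)
    if thermal.shape != labels.shape:
        raise ValueError("thermal image and label mask must have the same shape")

    anomalies = {}
    for cid in component_ids:
        region = labels == cid
        mask = np.zeros(thermal.shape, dtype=bool)
        if region.any():
            values = thermal[region]
            mean, std = values.mean(), values.std()
            if std > 0:
                # Statistics come from this component only, so a warm window
                # does not hide a warm spot on a cold wall.
                mask[region] = np.abs(values - mean) > k * std
        anomalies[cid] = mask
    return anomalies


# Toy scene: a cold wall (left half) with one warm pixel, a warmer window (right half).
thermal = np.full((4, 6), 10.0)
thermal[:, :3] = 5.0
thermal[1, 1] = 12.0
labels = np.full((4, 6), WINDOW)
labels[:, :3] = WALL

segmented = detect_anomalies_per_component(thermal, labels)
# Whole-image baseline: treat every pixel as one single component.
baseline = detect_anomalies_per_component(thermal, np.ones_like(labels), component_ids=(1,))
print(int(segmented[WALL].sum()), int(baseline[1].sum()))
```

In this toy scene the warm wall pixel is flagged when statistics are computed per component, while the whole-image baseline misses it because the window temperatures inflate the global spread. This is only a synthetic illustration of why component-level statistics can help, not a reproduction of the reported results.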

3.2.3. Semantic Segmentation

3.3. Dataset

3.4. Performance Metrics
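The metrics used in this study (accuracy, precision, recall, F1 score, and IoU) follow from the pixel-level counts TP, FP, FN, and TN listed in the Nomenclature. As a minimal sketch using the standard definitions, the code below computes them for a pair of binary masks. The function names and the toy masks are illustrative assumptions, and whether the study pools pixels across images or averages per image is not stated here.

```python
import numpy as np


def confusion_counts(pred, truth):
    """Count (TP, FP, FN, TN) between two boolean masks of equal shape."""
    pred = np.asarray(pred, dtype=bool)
    truth = np.asarray(truth, dtype=bool)
    if pred.shape != truth.shape:
        raise ValueError("masks must have the same shape")
    tp = int(np.sum(pred & truth))
    fp = int(np.sum(pred & ~truth))
    fn = int(np.sum(~pred & truth))
    tn = int(np.sum(~pred & ~truth))
    return tp, fp, fn, tn


def _ratio(num, den):
    """Safe division that returns 0.0 when the denominator is zero."""
    return num / den if den else 0.0


def segmentation_metrics(pred, truth):
    """Standard pixel-wise metrics for a predicted mask against ground truth."""
    tp, fp, fn, tn = confusion_counts(pred, truth)
    precision = _ratio(tp, tp + fp)
    recall = _ratio(tp, tp + fn)  # also the true positive rate (TPR)
    return {
        "accuracy": _ratio(tp + tn, tp + fp + fn + tn),
        "precision": precision,
        "recall": recall,
        "f1": _ratio(2 * precision * recall, precision + recall),
        "iou": _ratio(tp, tp + fp + fn),
    }


# Toy example: 3 pixels agree, 1 false positive, 1 false negative.
truth = np.array([[1, 1, 0, 0], [1, 1, 0, 0], [0, 0, 0, 0]], dtype=bool)
pred = np.array([[1, 1, 1, 0], [1, 0, 0, 0], [0, 0, 0, 0]], dtype=bool)
print(segmentation_metrics(pred, truth))
```

For the toy masks, precision, recall, and F1 are all 0.75 and IoU is 0.6, which shows that IoU penalizes the same errors more heavily than F1.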

4. Results and Discussion

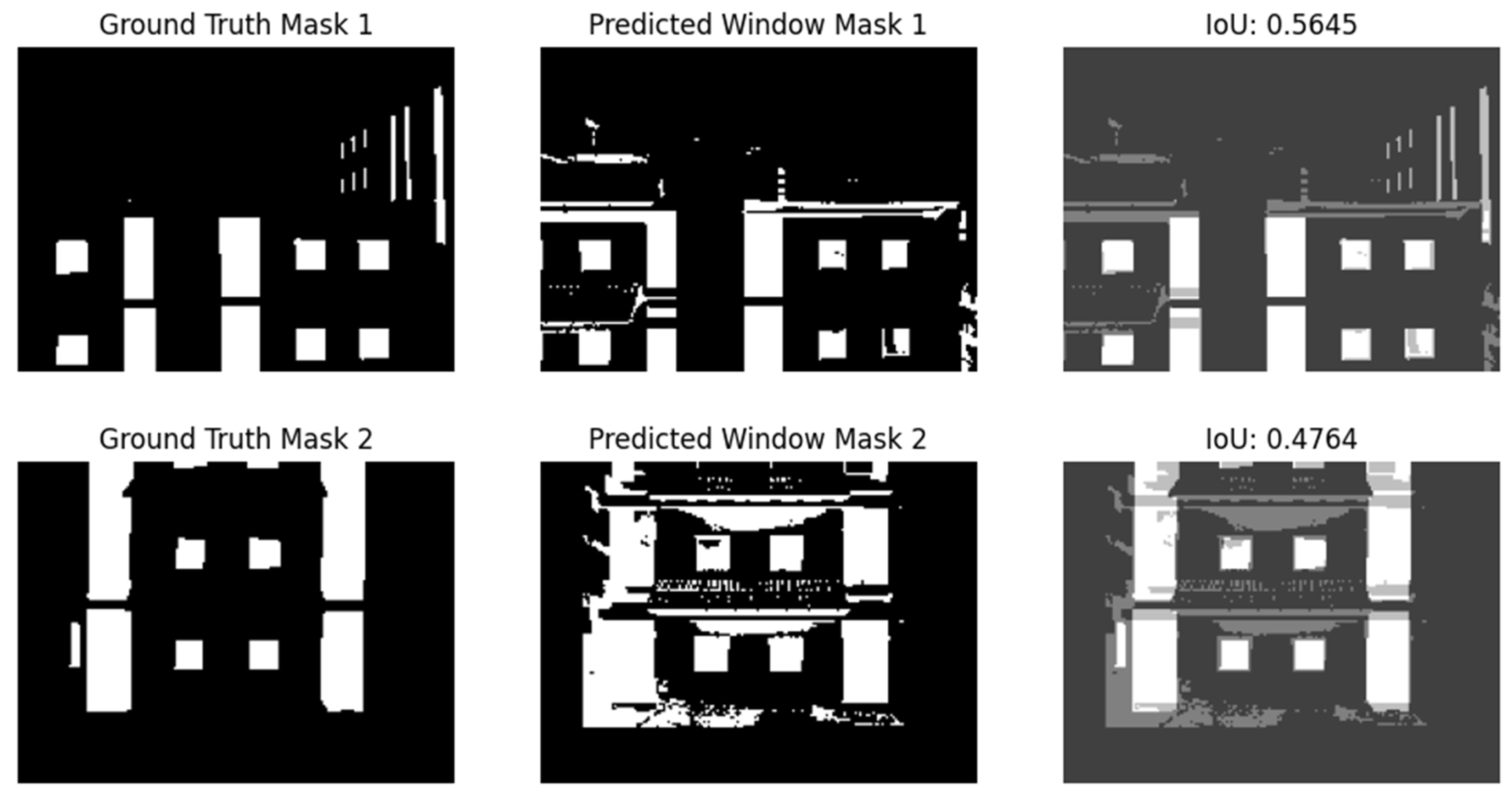

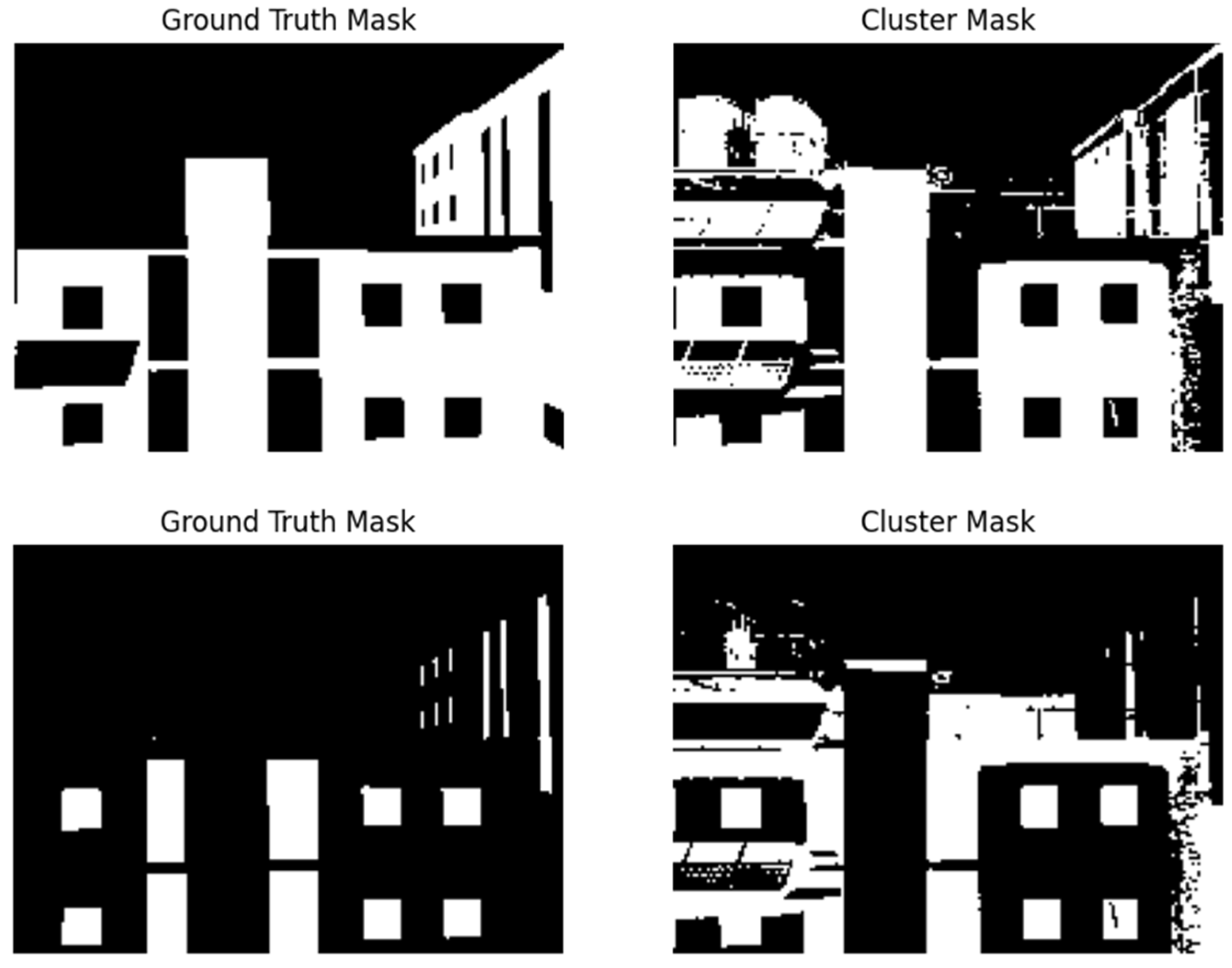

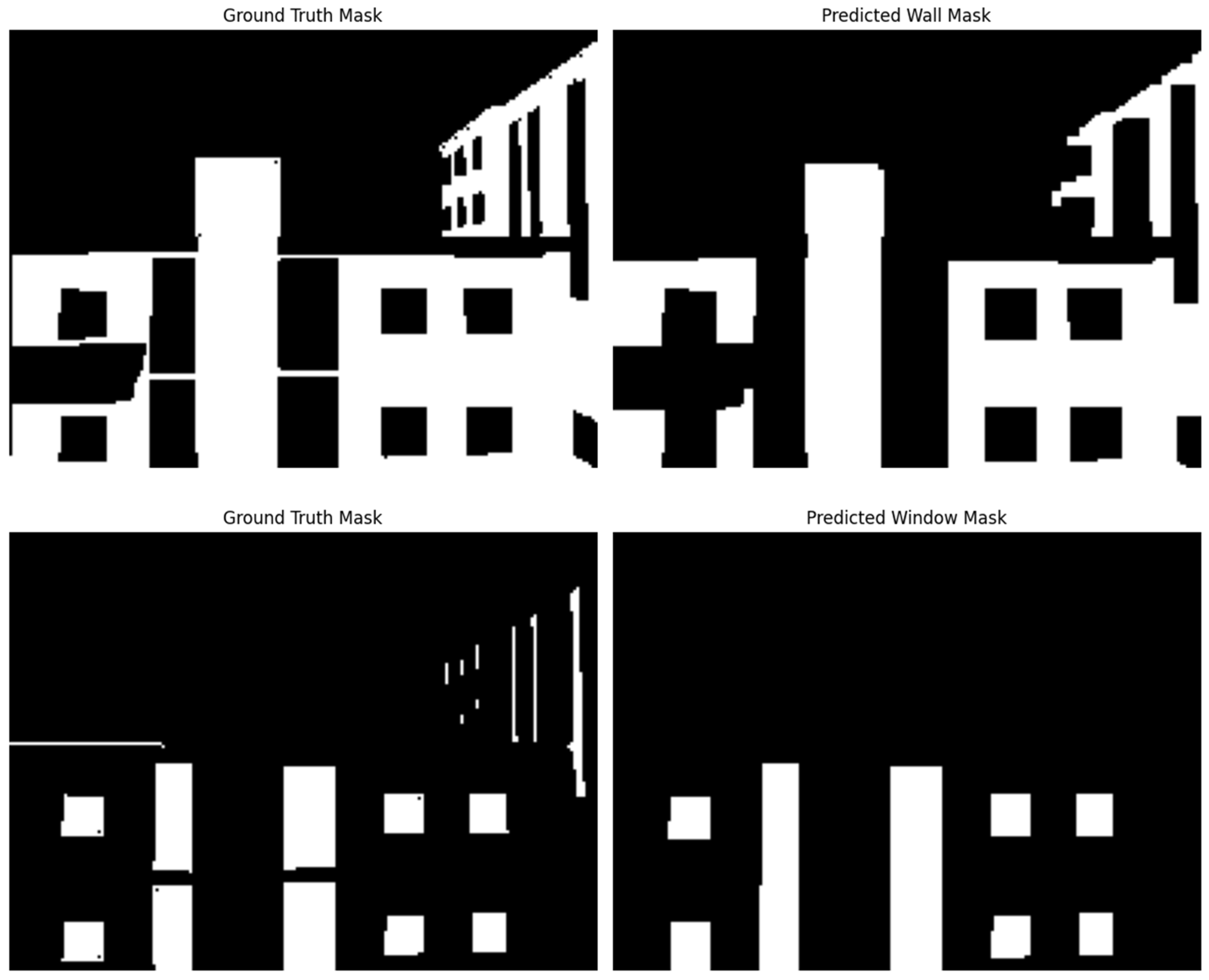

4.1. Comparison of Semantic Segmentation Models

4.2. Thermal Anomaly Detection Based on Whole Image

4.3. Thermal Anomaly Detection Based on Segmented Envelope Images

5. Conclusions

- Developed and validated a deep learning–enhanced workflow for building envelope inspection using RGB and thermal images.

- Achieved significant improvements in detection performance using semantic segmentation: precision increased from 72% to 92% and recall from 72% to 88%.

- Demonstrated the ability to distinguish thermal anomalies at the component level (e.g., wall vs. window), reducing false positives and enhancing interpretability.

- Quantified the trade-off in computational time (6 h for segmentation) and data storage (13% increase in dataset size), which is justified by performance gains.

By addressing the high costs, lengthy inspection times, and safety risks of traditional methods, our approach improves the practicality and efficiency of building envelope diagnostics. Its ability to detect thermal anomalies, such as thermal bridges and air leakage, quickly and accurately from drone-based imagery and automated processing makes it well suited to energy retrofit assessments. The method can be applied to both pre-retrofit diagnostics and post-retrofit verification of envelope improvements. It enables building owners and energy auditors to prioritize retrofit interventions more effectively and to scale inspections across portfolios of buildings with reduced labor and cost.

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Nomenclature

| Abbreviation | Definition |
|---|---|
| CNN | Convolutional Neural Network |
| FAA | Federal Aviation Administration |
| FCN | Fully Convolutional Network |
| FN | False Negative |
| FP | False Positive |
| HSV | Hue, Saturation, Value |
| IoU | Intersection over Union |
| IR | Infrared |
| LAANC | Low Altitude Authorization and Notification Capability |
| RCNN | Region-Based Convolutional Neural Network |
| RGB | Red, Green, Blue |
| SLAM | Simultaneous Localization and Mapping |
| TN | True Negative |
| TP | True Positive |
| TPR | True Positive Rate |
| TNR | True Negative Rate |
| UAV | Unmanned Aerial Vehicle |

References

1. United Nations Environment Programme (UNEP). Global Status Report: Towards a Zero-Emission, Efficient, and Resilient Buildings and Construction Sector; United Nations Environment Programme (UNEP): Nairobi, Kenya, 2017.

2. United States Department of Energy. Increasing Efficiency of Building Systems and Technologies. In Quadrennial Technology Review; The United States Department of Energy: Washington, DC, USA, 2015.

3. Mirzabeigi, S.; Razkenari, M. Multiple Benefits through Residential Building Energy Retrofit and Thermal Resilient Design. In Proceedings of the 2022 (6th) Residential Building Design & Construction Conference, University Park, PA, USA, 11 May 2022; pp. 456–465.

4. Mirzabeigi, S.; Homaei, S.; Razkenari, M.; Hamdy, M. The Impact of Building Retrofitting on Thermal Resilience Against Power Failure: A Case of Air-Conditioned House. In Proceedings of the Environmental Science and Engineering, Leuven, Belgium, 8–10 September 2023; Springer Science and Business Media Deutschland GmbH: Singapore, 2023; pp. 2609–2619.

5. Shapiro, I. Energy Audits in Large Commercial Office Buildings. ASHRAE J. 2009, 51, 18.

6. Lucchi, E. Applications of the Infrared Thermography in the Energy Audit of Buildings: A Review. Renew. Sustain. Energy Rev. 2018, 82, 3077–3090.

7. Kylili, A.; Fokaides, P.A.; Christou, P.; Kalogirou, S.A. Infrared Thermography (IRT) Applications for Building Diagnostics: A Review. Appl. Energy 2014, 134, 531–549.

8. BRE Group. The Importance of Thermal Bridging. Available online: https://tools.bregroup.com/certifiedthermalproducts/page.jsp?id=3073 (accessed on 24 July 2025).

9. Mirzabeigi, S.; Khalili Nasr, B.; Mainini, A.G.; Blanco Cadena, J.D.; Lobaccaro, G. Tailored WBGT as a Heat Stress Index to Assess the Direct Solar Radiation Effect on Indoor Thermal Comfort. Energy Build. 2021, 242, 110974.

10. Taylor, T.; Counsell, J.; Gill, S. Energy Efficiency Is More than Skin Deep: Improving Construction Quality Control in New-Build Housing Using Thermography. Energy Build. 2013, 66, 222–231.

11. Ficapal, A.; Mutis, I. Framework for the Detection, Diagnosis, and Evaluation of Thermal Bridges Using Infrared Thermography and Unmanned Aerial Vehicles. Buildings 2019, 9, 179.

12. Janssens, A.; Van Londersele, E.; Vandermarcke, B.; Roels, S.; Standaert, P.; Wouters, P. Development of Limits for the Linear Thermal Transmittance of Thermal Bridges in Buildings. In Proceedings of the 10th Thermal Performance of the Exterior Envelopes of Whole Buildings Conference: 30 Years of Research, Clearwater, FL, USA, 2–7 December 2007; American Society of Heating, Refrigerating and Air-Conditioning Engineers: Atlanta, GA, USA, 2007.

13. Rakha, T.; Liberty, A.; Gorodetsky, A.; Kakillioglu, B.; Velipasalar, S. Heat Mapping Drones: An Autonomous Computer-Vision-Based Procedure for Building Envelope Inspection Using Unmanned Aerial Systems (UAS). Technol.|Archit. + Des. 2018, 2, 30–44.

14. De Filippo, M.; Asadiabadi, S.; Ko, N.; Sun, H. Concept of Computer Vision Based Algorithm for Detecting Thermal Anomalies in Reinforced Concrete Structures. In Proceedings of the 15th International Workshop on Advanced Infrared Technology and Applications (AITA 2019), Florence, Italy, 16–19 September 2019.

15. Asdrubali, F.; Baldinelli, G.; Bianchi, F.; Costarelli, D.; Rotili, A.; Seracini, M.; Vinti, G. Detection of Thermal Bridges from Thermographic Images by Means of Image Processing Approximation Algorithms. Appl. Math. Comput. 2018, 317, 160–171.

16. Garrido, I.; Lagüela, S.; Arias, P.; Balado, J. Thermal-Based Analysis for the Automatic Detection and Characterization of Thermal Bridges in Buildings. Energy Build. 2018, 158, 1358–1367.

17. Park, G.; Lee, M.; Jang, H.; Kim, C. Thermal Anomaly Detection in Walls via CNN-Based Segmentation. Autom. Constr. 2021, 125, 103627.

18. Baldinelli, G.; Bianchi, F.; Rotili, A.; Costarelli, D.; Seracini, M.; Vinti, G.; Asdrubali, F.; Evangelisti, L. A Model for the Improvement of Thermal Bridges Quantitative Assessment by Infrared Thermography. Appl. Energy 2018, 211, 854–864.

19. Garrido, I.; Lagüela, S.; Arias, P. Autonomous Thermography: Towards the Automatic Detection and Classification of Building Pathologies. In Proceedings of the 14th Quantitative InfraRed Thermography Conference, Berlin, Germany, 25–29 June 2018.

20. Kim, C.; Choi, J.; Jang, H.; Kim, E. Automatic Detection of Linear Thermal Bridges from Infrared Thermal Images Using Neural Network. Appl. Sci. 2021, 11, 931.

21. Mirzabeigi, S.; Zhang, J.; Razkenari, M. Exterior Retrofitting Systems for Energy Conservation and Efficiency in Cold Climates: A Systematic Review. In Proceedings of the Environmental Science and Engineering, Leuven, Belgium, 8–10 September 2023; Springer Science and Business Media Deutschland GmbH: Singapore, 2023; pp. 413–422.

22. Aly, A.A.; Deris, S.B.; Zaki, N. Research review for digital image segmentation techniques. Int. J. Comput. Sci. Inf. Technol. (IJCSIT) 2011, 3, 99–106.

23. Guo, Y.; Liu, Y.; Georgiou, T.; Lew, M.S. A Review of Semantic Segmentation Using Deep Neural Networks. Int. J. Multimed. Inf. Retr. 2018, 7, 87–93.

24. Girshick, R.; Donahue, J.; Darrell, T.; Malik, J. Rich Feature Hierarchies for Accurate Object Detection and Semantic Segmentation. In Proceedings of the 2014 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Columbus, OH, USA, 23–28 June 2014.

25. Long, J.; Shelhamer, E.; Darrell, T. Fully Convolutional Networks for Semantic Segmentation. In Proceedings of the 2015 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Boston, MA, USA, 8–10 June 2015; Volume 39.

26. Eigen, D.; Fergus, R. Predicting Depth, Surface Normals and Semantic Labels with a Common Multi-Scale Convolutional Architecture. In Proceedings of the ICCV, Washington, DC, USA, 7–13 December 2015; pp. 2650–2658.

27. Dai, J.; He, K.; Sun, J. BoxSup: Exploiting Bounding Boxes to Supervise Convolutional Networks for Semantic Segmentation. In Proceedings of the ICCV, Washington, DC, USA, 7–13 December 2015; pp. 1635–1643.

28. Pinheiro, P.O.; Collobert, R. From Image-Level to Pixel-Level Labeling with Convolutional Networks. In Proceedings of the CVPR, Boston, MA, USA, 7–12 June 2015; pp. 1713–1721.

29. Reznik, S.; Mayer, H. Implicit Shape Models, Self-Diagnosis, and Model Selection for 3D Facade Interpretation. Photogramm.-Fernerkund.-Geoinf. 2008, 3, 187–196.

30. Rahmani, K.; Mayer, H. High quality facade segmentation based on structured random forest, region proposal network and rectangular fitting. In Proceedings of the ISPRS Annals of the Photogrammetry, Remote Sensing and Spatial Information Sciences, Karlsruhe, Germany, 10–12 October 2018; Copernicus GmbH: Göttingen, Germany, 2018; Volume 4, pp. 223–230.

31. Badrinarayanan, V.; Kendall, A.; Cipolla, R. SegNet: A Deep Convolutional Encoder-Decoder Architecture for Image Segmentation. IEEE Trans. Pattern Anal. Mach. Intell. 2017, 39, 2481–2495.

32. Ronneberger, O.; Fischer, P.; Brox, T. U-Net: Convolutional Networks for Biomedical Image Segmentation; Springer: Cham, Switzerland, 2015.

33. Dai, M.; Meyers, G.; Tingley, D.D.; Mayfield, M. Initial Investigations into Using an Ensemble of Deep Neural Networks for Building Façade Image Semantic Segmentation. In Proceedings of the SPIE Remote Sensing, Strasbourg, France, 9–12 September 2019; Volume 11157.

34. Agapaki, E.; Nahangi, M. Scene Understanding and Model Generation. In Infrastructure Computer Vision; Elsevier Inc.: Amsterdam, The Netherlands, 2020; pp. 65–167. ISBN 9780128155035.

35. Zhang, J.; Zhao, X.; Chen, Z.; Lu, Z. A Review of Deep Learning-Based Semantic Segmentation for Point Cloud. IEEE Access 2019, 7, 179118–179133.

36. Mirzabeigi, S.; Razkenari, R.; Crovella, P. A Review of the Potential of Drone-Based Approaches for Integrated Building Envelope Assessment. Buildings 2025, 15, 2230.

37. Waqas, A.; Araji, T. Machine learning-aided thermography for autonomous heat loss detection in buildings. Energy Convers. Manag. 2024, 304, 118243.

38. Li, Q.; Peng, X.; Zhong, X.; Xiao, X.; Wang, H.; Zhao, C.; Zhou, K. Quantitative identification of debonding defects in building façades based on UAV-thermography using a two-stage network integrating dual attention mechanism. Infrared Phys. Technol. 2024, 138, 105241.

39. Lin, D.; Yang, N.; Miao, Q.; Cui, X.; Xu, D. True 3D thermal inspection of buildings using multimodal UAV images. J. Build. Eng. 2025, 100, 111806.

40. Federal Aviation Administration. Become a Drone Pilot. Available online: https://www.faa.gov/uas/commercial_operators/become_a_drone_pilot (accessed on 24 July 2025).

41. Rakha, T.; Gorodetsky, A. Review of Unmanned Aerial System (UAS) Applications in the Built Environment: Towards Automated Building Inspection Procedures Using Drones. Autom. Constr. 2018, 93, 252–264.

42. Mirzabeigi, S.; Razkenari, M. Automated Vision-Based Building Inspection Using Drone Thermography. In Proceedings of the Construction Research Congress 2022: Computer Applications, Automation, and Data Analytics, Arlington, VA, USA, 9–12 March 2022; American Society of Civil Engineers (ASCE): Reston, VA, USA, 2022; Volume 2-B, pp. 737–746.

43. Bradski, G. The OpenCV Library. Dr. Dobb’s J. Softw. Tools 2000, 120, 122–125.

44. Harris, C.R.; Millman, K.J.; Van Der Walt, S.J.; Gommers, R.; Virtanen, P.; Cournapeau, D.; Wieser, E.; Taylor, J.; Berg, S.; Smith, N.J.; et al. Array Programming with NumPy. Nature 2020, 585, 357–362.

45. Pedregosa, F.; Varoquaux, G.; Gramfort, A.; Michel, V.; Thirion, B. Scikit-Learn: Machine Learning in Python. J. Mach. Learn. Res. 2011, 12, 2825–2830.

46. Dai, M.; Ward, W.O.C.; Meyers, G.; Densley, D.; Mayfield, M. Residential Building Facade Segmentation in the Urban Environment. Build. Environ. 2021, 199, 107921.

47. Karthik, G. Image Segmentation with Unet Pytorch. Available online: https://www.kaggle.com/gokulkarthik/image-segmentation-with-unet-pytorch (accessed on 24 July 2025).

48. Mirzabeigi, S.; Razkenari, M.; Crovella, P. Automated Thermal Anomaly Detection through Deep Learning-based Semantic Segmentation of Building Envelope Images. In Proceedings of the ASCE International Conference on Computing in Civil Engineering (i3CE 2024), Pittsburgh, PA, USA, 28–31 July 2024.

| Method | Advantage | Disadvantage |
|---|---|---|
| Inverse dynamics method | | |
| Active contour method | | |
| Watersheds Method | | |
| Novel edge-based method | | |
| Topological alignments method | | |
| Pattern Recognition method | | |
| Threshold method | | |
| Region-based method | | |
| Clustering-based method | | |
| Graph-based method | | |
| Morphological method | | |
| Template Matching | | |
| Level Set method | | |

| Study | Methodology | Imaging | Application | Key Findings |
|---|---|---|---|---|
| Rakha et al. (2018) [13] | Dynamic thresholding + Canny edge + segmentation | Thermal | Drone-based inspection | Detected leakage using dynamic thresholds and edge filtering; sky and background filtering needed |
| De Filippo et al. (2019) [14] | CV-based filtering + Otsu thresholding | Thermal | Concrete structure defect detection | Automated pipeline using thermal edges for anomaly detection |
| Asdrubali et al. (2018) [15] | Sampling Kantorovich operator | Thermal | Thermal bridge detection | Bimodal temperature distribution used; thresholding based on histogram analysis |
| Park et al. (2021) [17] | CNN-based segmentation | Thermal + RGB | Wall defect detection | Improved performance over traditional thresholding; limited to linear thermal bridges |
| Kim et al. (2021) [20] | Neural network + clustering | Thermal | Linear thermal bridge detection | Used artificial neural networks and shape clustering for classification |
| Garrido et al. (2018) [19] | PCA + geometric analysis | Thermal | Thermal bridge quantification | Used histogram and geometric boundaries to characterize defects |
| Waqas and Araji (2024) [37] | Deep residual CNN | Thermal | Building façade inspection | Achieved high classification accuracy using supervised deep learning for automatic anomaly detection |
| Li et al. (2024) [38] | YOLOv7 | Thermal + RGB | Thermal defect detection | Real-time thermal defect segmentation; robust performance under varying environmental conditions |
| Lin et al. (2025) [39] | 3D thermal-RGB point cloud fusion | Thermal + RGB | 3D inspection of façades | Created 3D thermographic models from UAV imagery; enhanced fault localization and visualization |

| Type | Accuracy | Precision | Recall | F1 | IoU |
|---|---|---|---|---|---|
| wall | 0.7442 | 0.9345 | 0.5297 | 0.6524 | 0.5143 |
| window | 0.8875 | 0.6112 | 0.7809 | 0.6835 | 0.5205 |

| Type | Accuracy | Precision | Recall | F1 | IoU |
|---|---|---|---|---|---|
| wall | 0.7818 | 0.7538 | 0.7655 | 0.7596 | 0.6124 |
| window | 0.8012 | 0.3719 | 0.8496 | 0.5173 | 0.3489 |

| Type | Accuracy | Precision | Recall | F1 | IoU |
|---|---|---|---|---|---|
| wall | 0.9313 | 0.9376 | 0.8893 | 0.9128 | 0.8396 |
| window | 0.9639 | 0.8532 | 0.8251 | 0.8389 | 0.7225 |
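When F1 and IoU are computed from the same pooled pixel counts, the two columns of these tables are tied together by a fixed relation, which gives a quick consistency check. The short derivation below uses the standard definitions; it is offered as a reading aid and assumes pooled counts, so values averaged per image need not satisfy it exactly.

```latex
\begin{align}
\mathrm{F1} &= \frac{2\,\mathrm{TP}}{2\,\mathrm{TP} + \mathrm{FP} + \mathrm{FN}},
\qquad
\mathrm{IoU} = \frac{\mathrm{TP}}{\mathrm{TP} + \mathrm{FP} + \mathrm{FN}} \\
\mathrm{F1} &= \frac{2\,\mathrm{TP}/(\mathrm{TP} + \mathrm{FP} + \mathrm{FN})}
                   {1 + \mathrm{TP}/(\mathrm{TP} + \mathrm{FP} + \mathrm{FN})}
             = \frac{2\,\mathrm{IoU}}{1 + \mathrm{IoU}}
\end{align}
```

For example, the wall row of the last table has IoU = 0.8396, which gives F1 = 2(0.8396)/1.8396 ≈ 0.9128, matching the reported F1.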

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Mirzabeigi, S.; Razkenari, R.; Crovella, P. Building Envelope Thermal Anomaly Detection Using an Integrated Vision-Based Technique and Semantic Segmentation. Buildings 2025, 15, 2672. https://doi.org/10.3390/buildings15152672
