Predicting the Compressive Strength of Environmentally Friendly Concrete Using Multiple Machine Learning Algorithms

Abstract

1. Introduction

1.1. Literature Review

1.2. Objectives

- Constructing predictive models for the compressive strength of concrete containing coal fly ash using six different ML algorithms.

- Hybridizing the standalone models with a particle swarm optimization (PSO) algorithm to optimize the hyperparameters of each model automatically.

- Evaluating the applicability of each hybrid ML model using comprehensive statistical indicators.

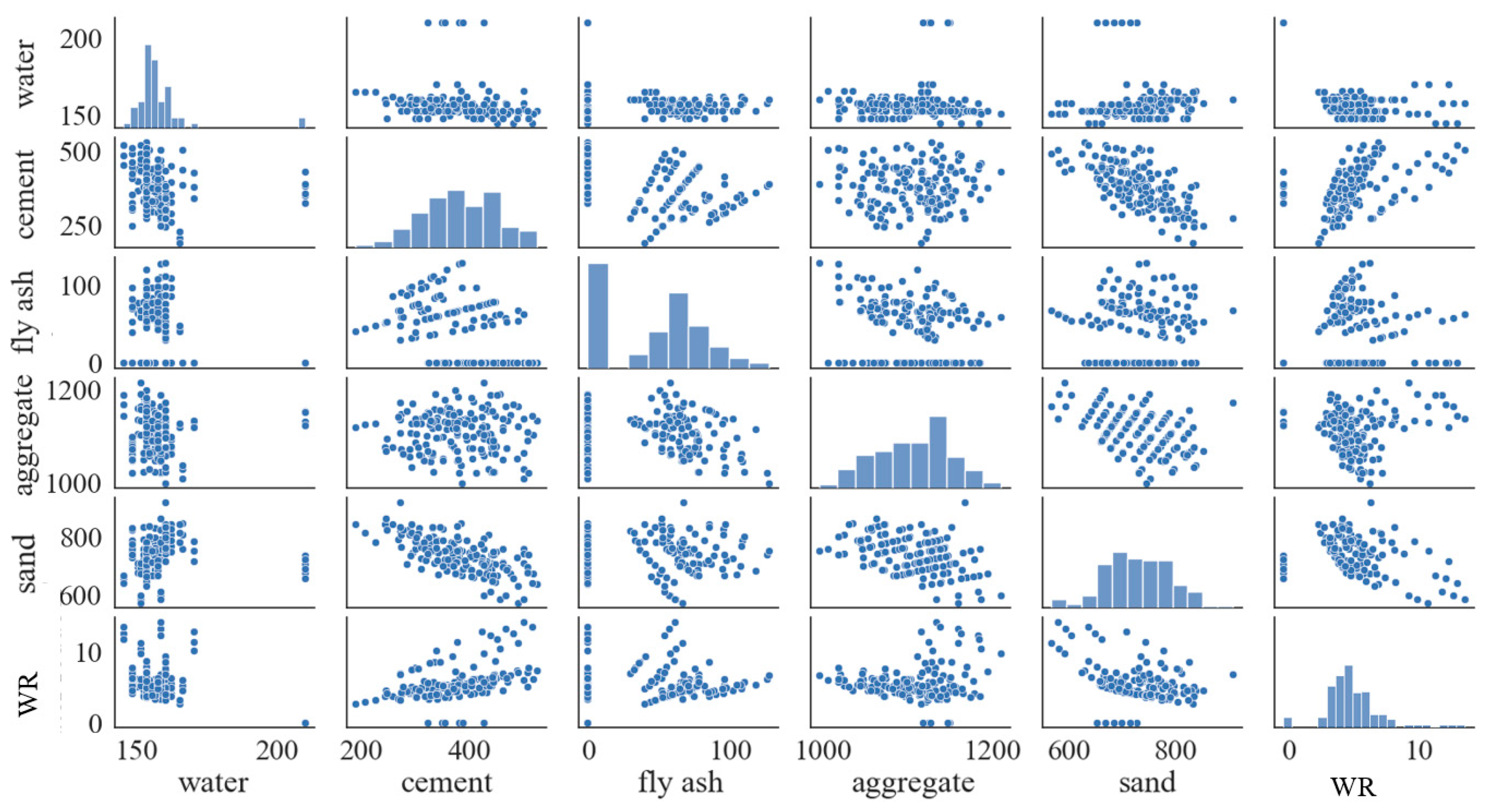

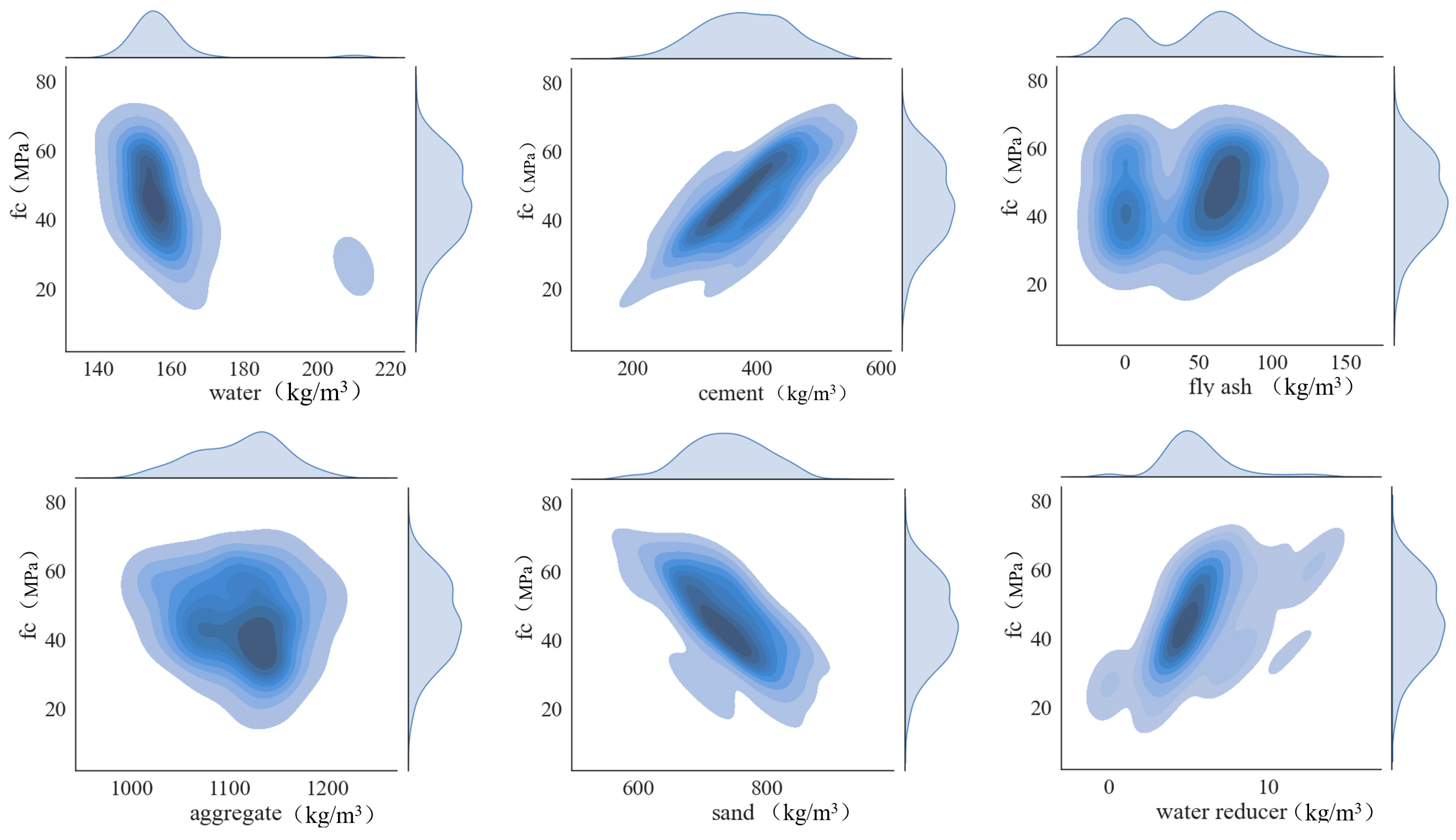

2. Data Collection

- (1) A box plot was used to identify outliers in each input parameter, and 23 of the 200 data sets with abnormal distributions were excluded; the statistical characteristics of the remaining 177 data sets are shown in Table 1.

- (2) To reduce the influence of differing data scales on the prediction performance and efficiency of the ML algorithms, the input parameters were normalized according to Equation (1).

- (3) The database was then randomly divided into a training set (75%) and a testing set (25%) using the split function of the scikit-learn library.
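The three preprocessing steps can be sketched in plain Python. This is a minimal sketch under assumptions not stated in the text: outliers are taken to be values outside Tukey's box-plot whiskers (Q1 - 1.5·IQR, Q3 + 1.5·IQR), and Equation (1) is assumed to be min-max scaling to [0, 1]. The helper names `inlier_indices`, `min_max_normalize` and `random_split` are hypothetical; in practice, scikit-learn's `train_test_split(X, y, test_size=0.25)` performs the last step.

```python
import math
import random
import statistics


def inlier_indices(rows, k=1.5):
    """Indices of rows whose every column lies inside the box-plot whiskers
    [Q1 - k*IQR, Q3 + k*IQR] (Tukey's rule, an assumption here).
    Note: statistics.quantiles defaults to the 'exclusive' quartile method;
    plotting tools may use a different one."""
    keep = set(range(len(rows)))
    for column in zip(*rows):
        q1, _, q3 = statistics.quantiles(column, n=4)
        iqr = q3 - q1
        low, high = q1 - k * iqr, q3 + k * iqr
        keep &= {i for i, v in enumerate(column) if low <= v <= high}
    return sorted(keep)


def min_max_normalize(rows):
    """Scale each column to [0, 1] (assumed form of Equation (1));
    a constant column is mapped to 0.0."""
    columns = list(zip(*rows))
    lows = [min(c) for c in columns]
    spans = [max(c) - min(c) for c in columns]
    return [[(v - lo) / s if s else 0.0 for v, lo, s in zip(row, lows, spans)]
            for row in rows]


def random_split(X, y, test_size=0.25, seed=0):
    """Shuffle indices once, round the test count up, and keep X and y aligned."""
    idx = list(range(len(X)))
    random.Random(seed).shuffle(idx)
    n_test = math.ceil(test_size * len(idx))
    test, train = idx[:n_test], idx[n_test:]
    return ([X[i] for i in train], [X[i] for i in test],
            [y[i] for i in train], [y[i] for i in test])


# Hypothetical usage: X holds the six mix inputs per record, y the fc values.
# kept = inlier_indices(X)
# X, y = [X[i] for i in kept], [y[i] for i in kept]
# X_train, X_test, y_train, y_test = random_split(min_max_normalize(X), y)
```

Filtering returns indices rather than rows so that the compressive strength targets can be dropped together with their input records.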

3. Machine Learning Algorithms

3.1. PSO Algorithm

3.2. BP-ANN

3.3. ANFIS

- (1) The first layer is the fuzzification layer, which fuzzifies the input data through membership functions. The choice of the type and number of membership functions is usually subjective. The more membership functions each input parameter has, the more membership degrees there are and the more if–then rules are generated; this may improve prediction accuracy up to a certain level but also significantly increases the computational demand.

- (2) The second layer calculates the firing strength of each if–then rule.

- (3) The third layer normalizes the firing strengths, giving the firing strength of each if–then rule relative to the sum over all rules.

- (4) The fourth layer calculates the output of each if–then rule by multiplying the normalized firing strength obtained in the third layer by the rule's consequent, which is a function of the original input data.

- (5) The fifth layer is the output layer, which sums the weighted rule outputs from the fourth layer to give the final crisp (defuzzified) output.
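The five layers can be illustrated with a forward pass of a first-order Takagi-Sugeno ANFIS. This sketch rests on assumptions not spelled out in the text: Gaussian membership functions, product firing strengths, linear rule consequents, and grid partitioning, in which every combination of membership functions forms one rule (so 2 functions for each of 6 inputs yield 2^6 = 64 rules). All parameter values are arbitrary, the helper names are hypothetical, and training of the centres, widths and consequent coefficients is omitted.

```python
import itertools
import math


def gauss_mf(x, c, sigma):
    """Layer 1 helper: Gaussian membership degree of x in a set centred at c."""
    return math.exp(-((x - c) ** 2) / (2.0 * sigma ** 2))


def grid_rules(n_inputs, n_mfs):
    """Grid partitioning: one rule per combination of membership functions."""
    return list(itertools.product(range(n_mfs), repeat=n_inputs))


def anfis_forward(x, mfs, rules, consequents):
    """Forward pass of a first-order Sugeno ANFIS.

    x           -- crisp inputs x_1..x_n
    mfs         -- mfs[j] is a list of (centre, sigma) pairs for input j
    rules       -- each rule lists one membership-function index per input
    consequents -- per rule, [p_1, ..., p_n, r] for f = p_1*x_1 + ... + p_n*x_n + r
    """
    # Layer 1: fuzzify every input with each of its membership functions.
    mu = [[gauss_mf(xj, c, s) for c, s in mfs_j] for xj, mfs_j in zip(x, mfs)]
    # Layer 2: firing strength of each rule as the product of its membership degrees.
    w = [math.prod(mu[j][k] for j, k in enumerate(rule)) for rule in rules]
    # Layer 3: normalize each firing strength by the sum over all rules.
    total = sum(w)
    w_bar = [wi / total for wi in w]
    # Layer 4: weight each rule's linear consequent f_i(x) by its normalized strength.
    f = [sum(p * xj for p, xj in zip(coef[:-1], x)) + coef[-1] for coef in consequents]
    weighted = [wb * fi for wb, fi in zip(w_bar, f)]
    # Layer 5: the sum of the weighted rule outputs is the crisp output.
    return sum(weighted)


# Hypothetical example: 2 inputs scaled to [0, 1], 2 Gaussian sets per input -> 4 rules.
mfs = [[(0.0, 0.4), (1.0, 0.4)], [(0.0, 0.4), (1.0, 0.4)]]
rules = grid_rules(2, 2)
consequents = [[1.0, 2.0, 0.5], [0.5, 1.0, 0.0], [2.0, 0.0, 1.0], [1.0, 1.0, 1.0]]
y_hat = anfis_forward([0.3, 0.8], mfs, rules, consequents)
```

Because the normalized strengths sum to 1, the output is a convex combination of the rule consequents, which is why adding membership functions (and hence rules) increases flexibility as well as computational cost.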

3.4. SVR

3.5. XGBoost

3.6. RF

3.7. GP

4. Results and Discussions

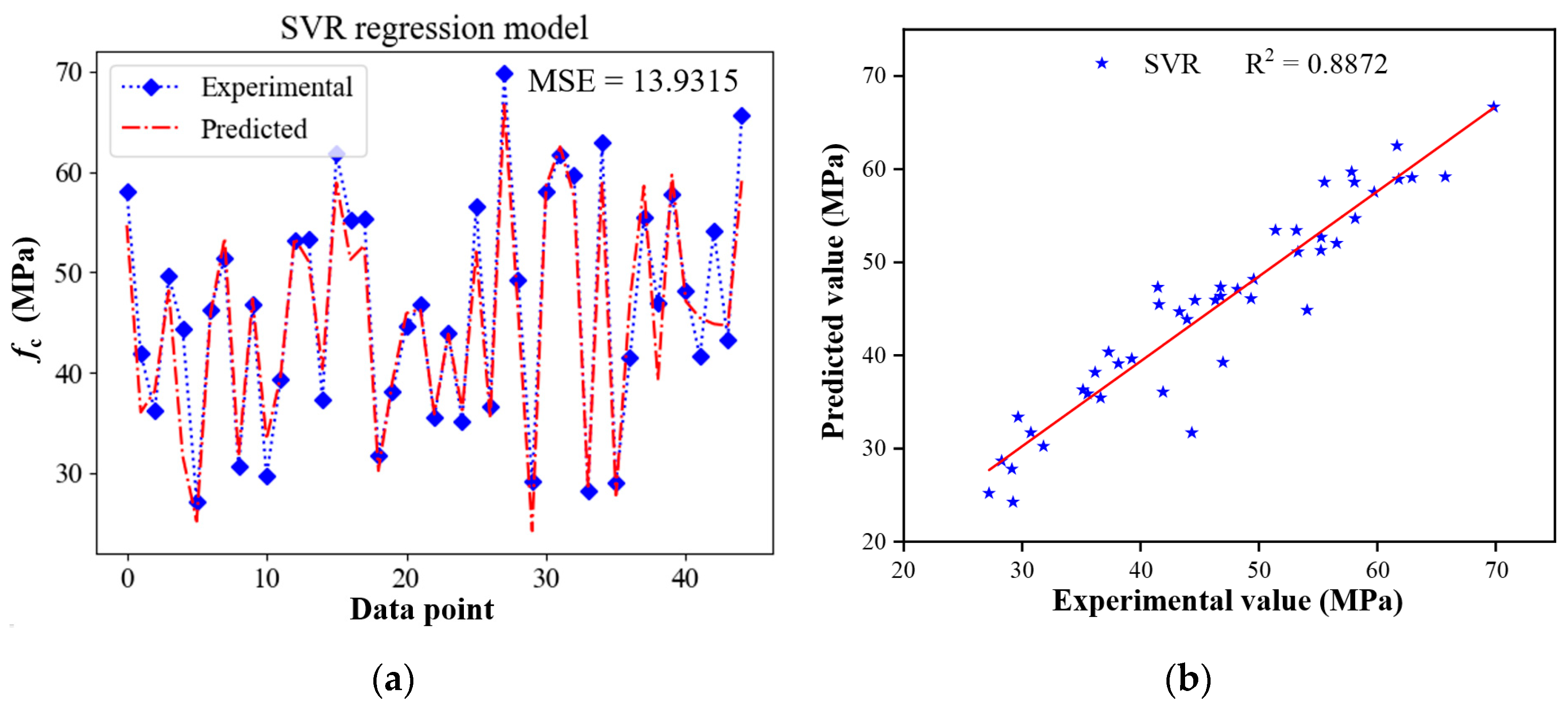

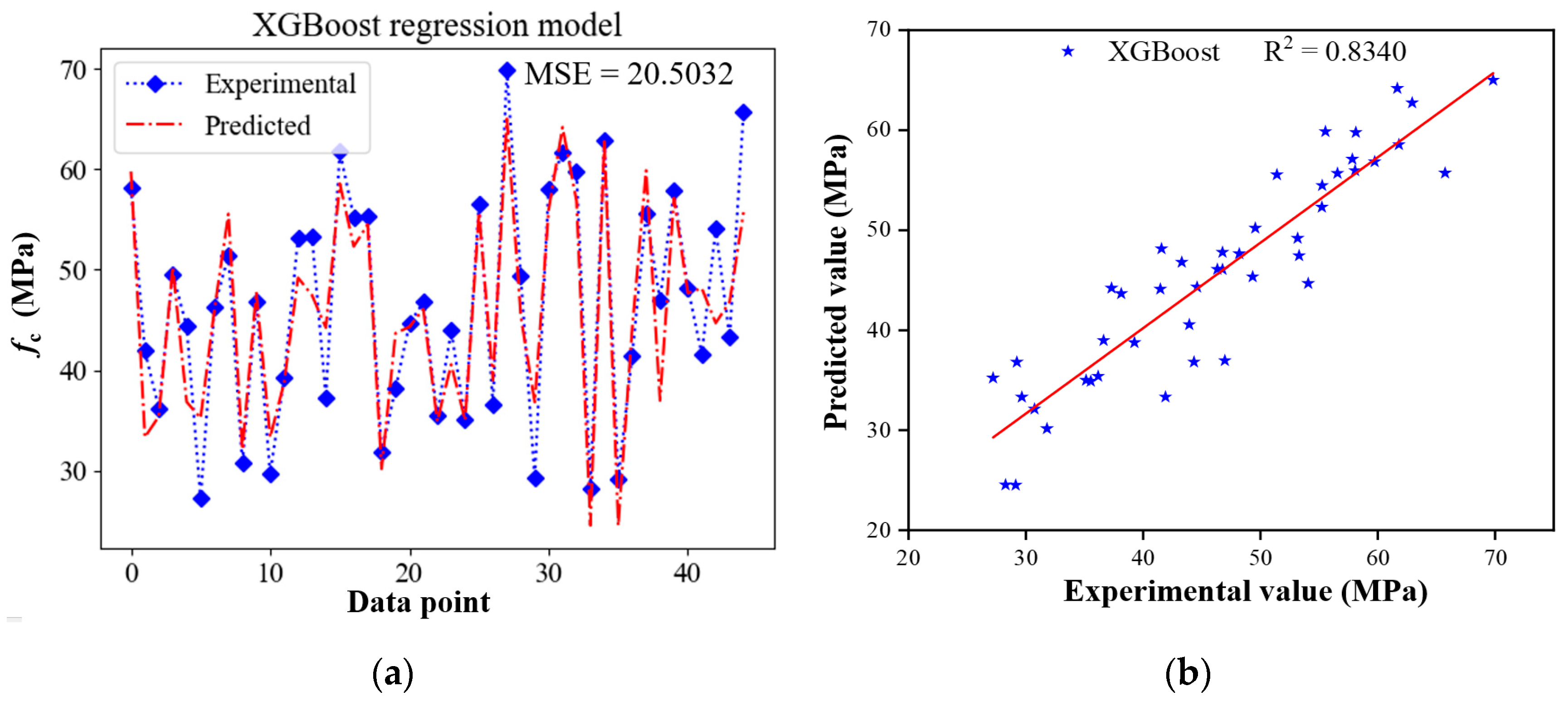

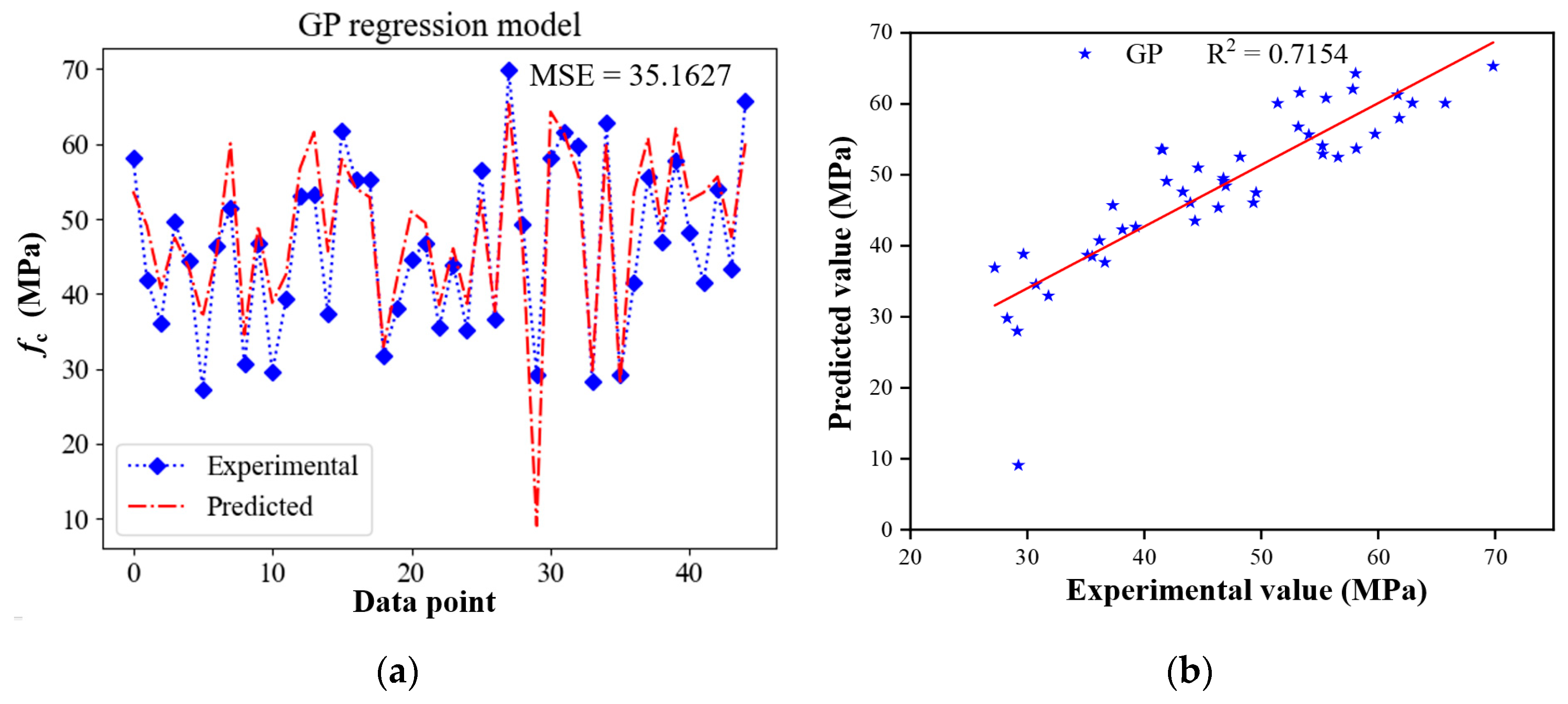

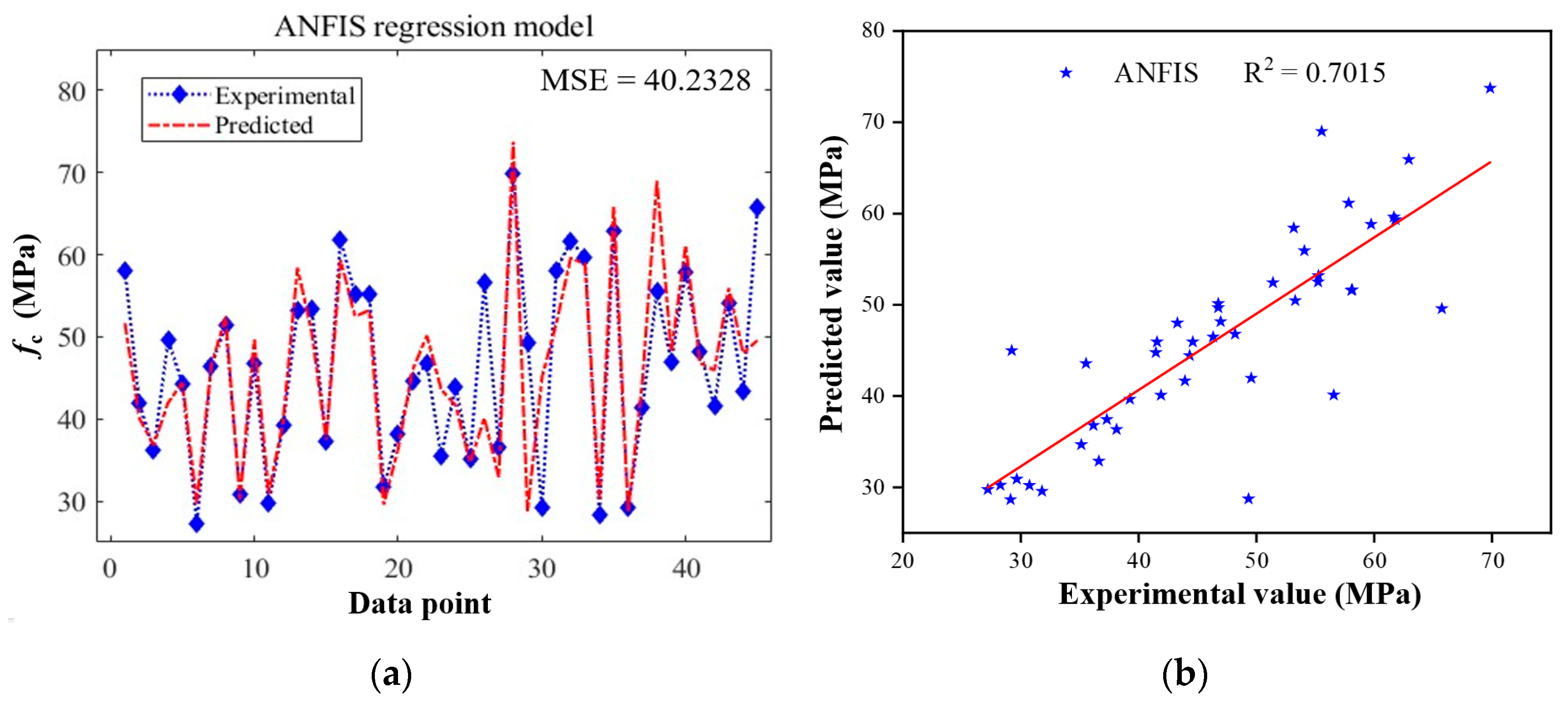

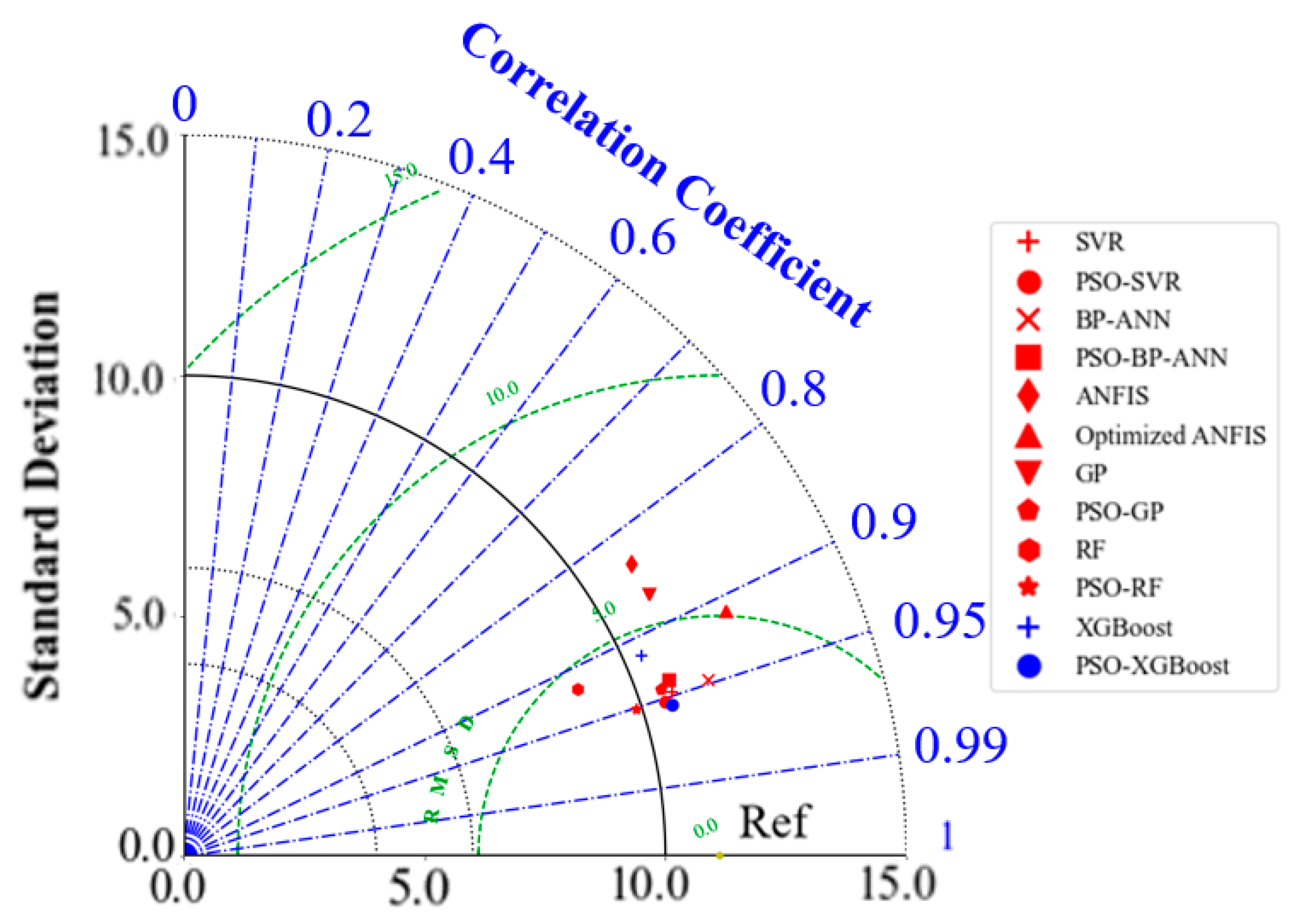

4.1. Prediction Performance of Standalone Models

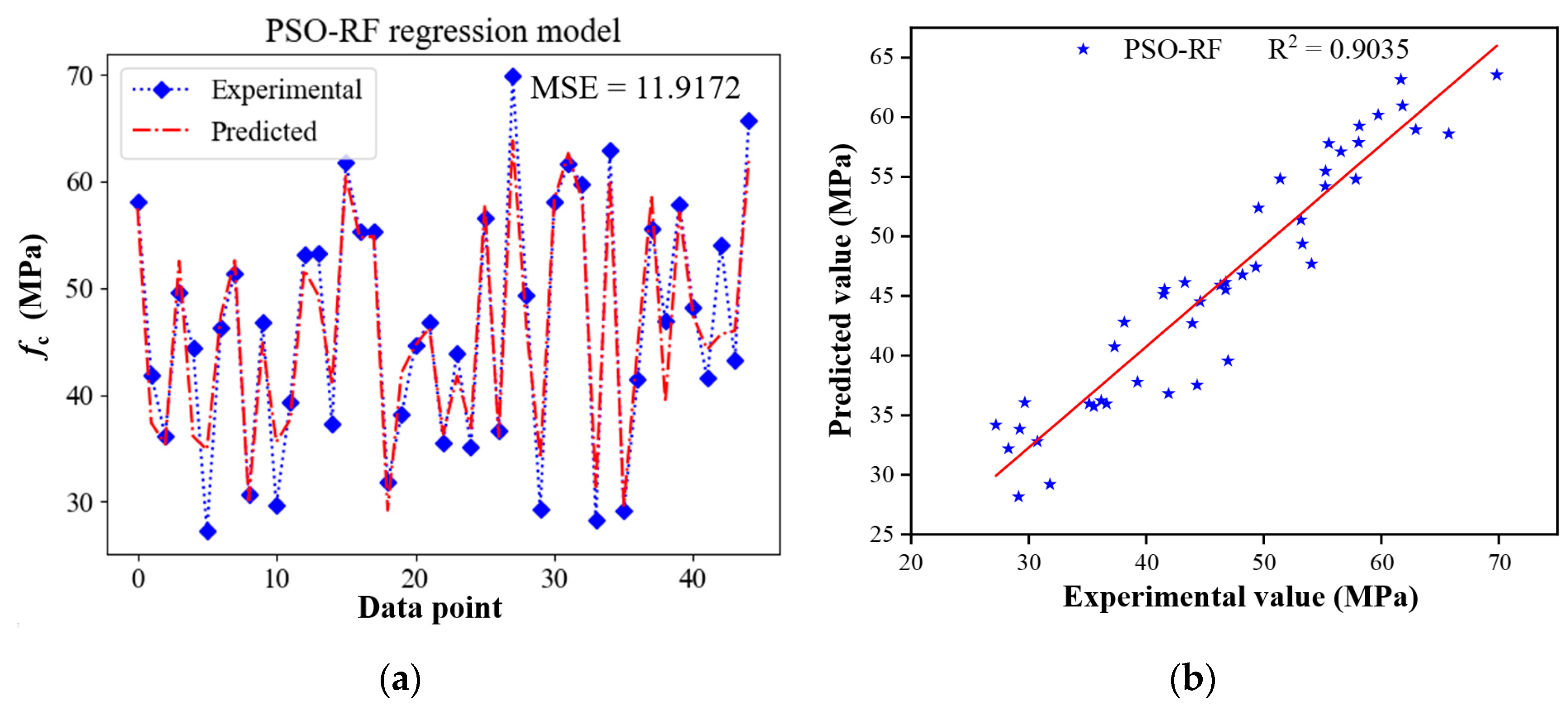

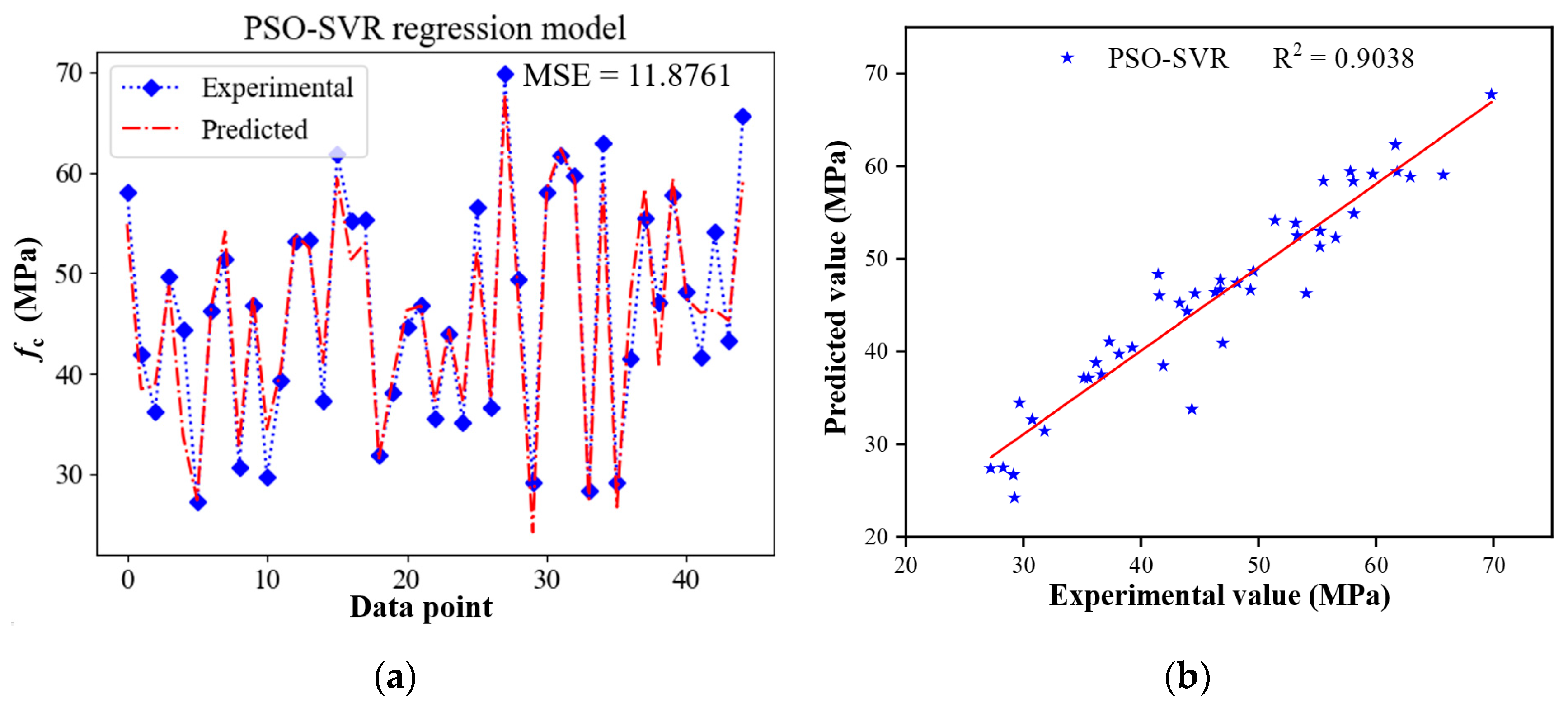

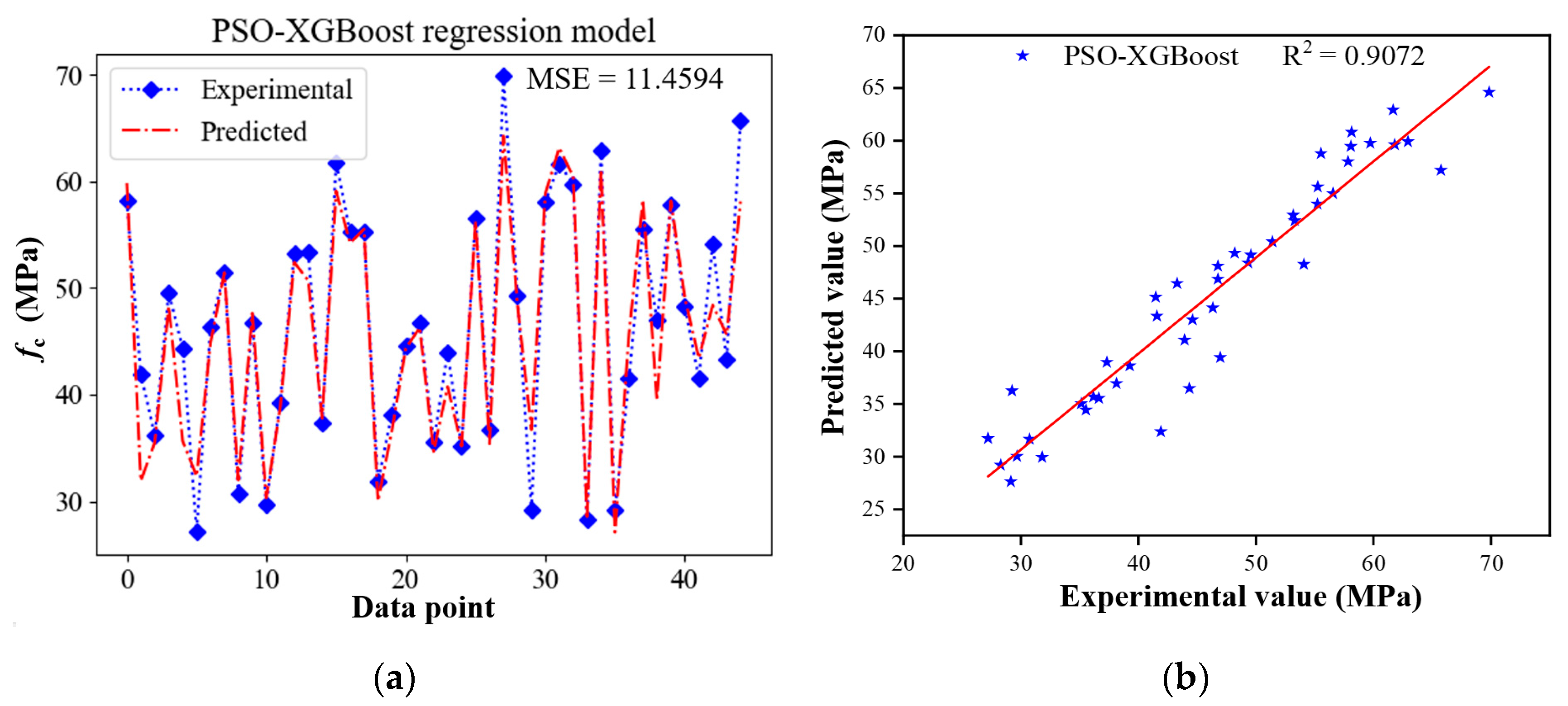

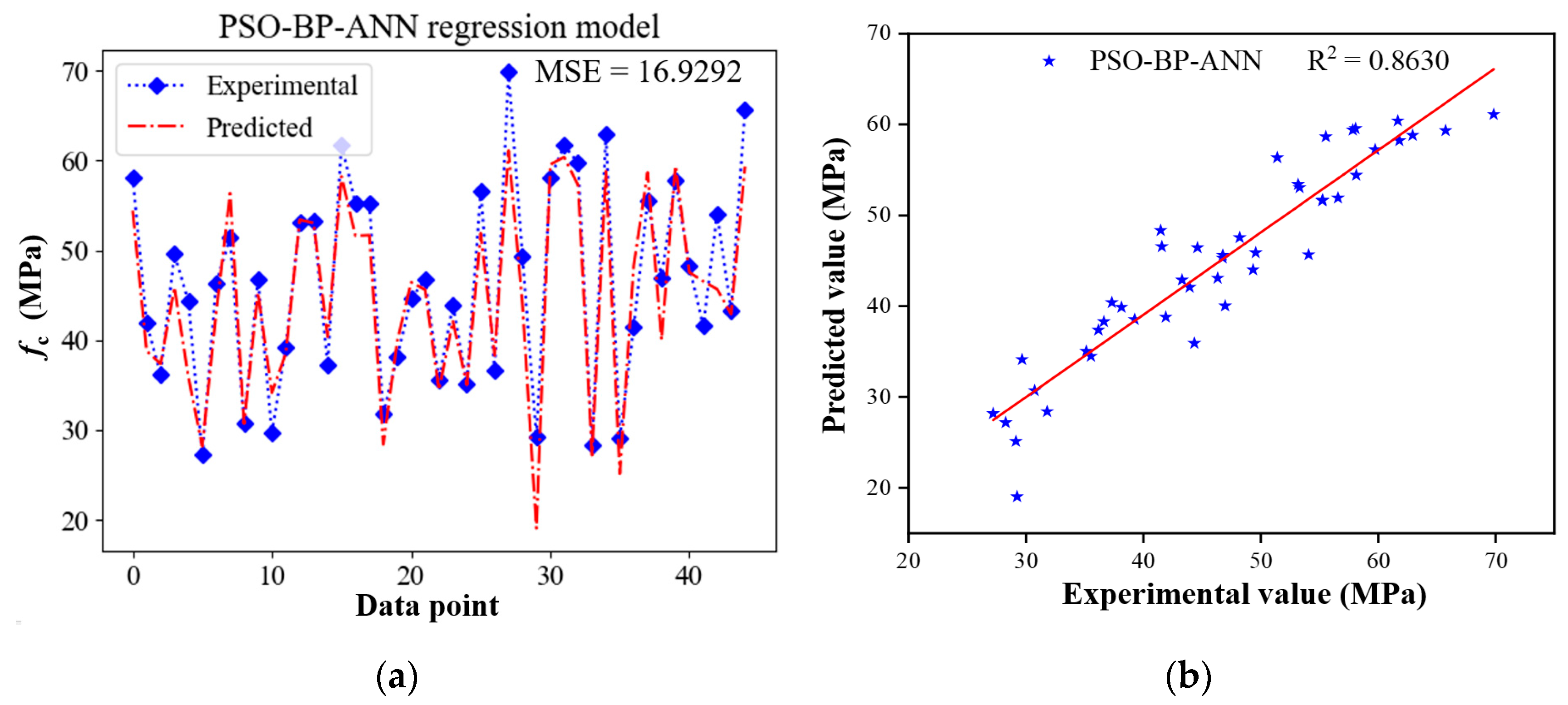

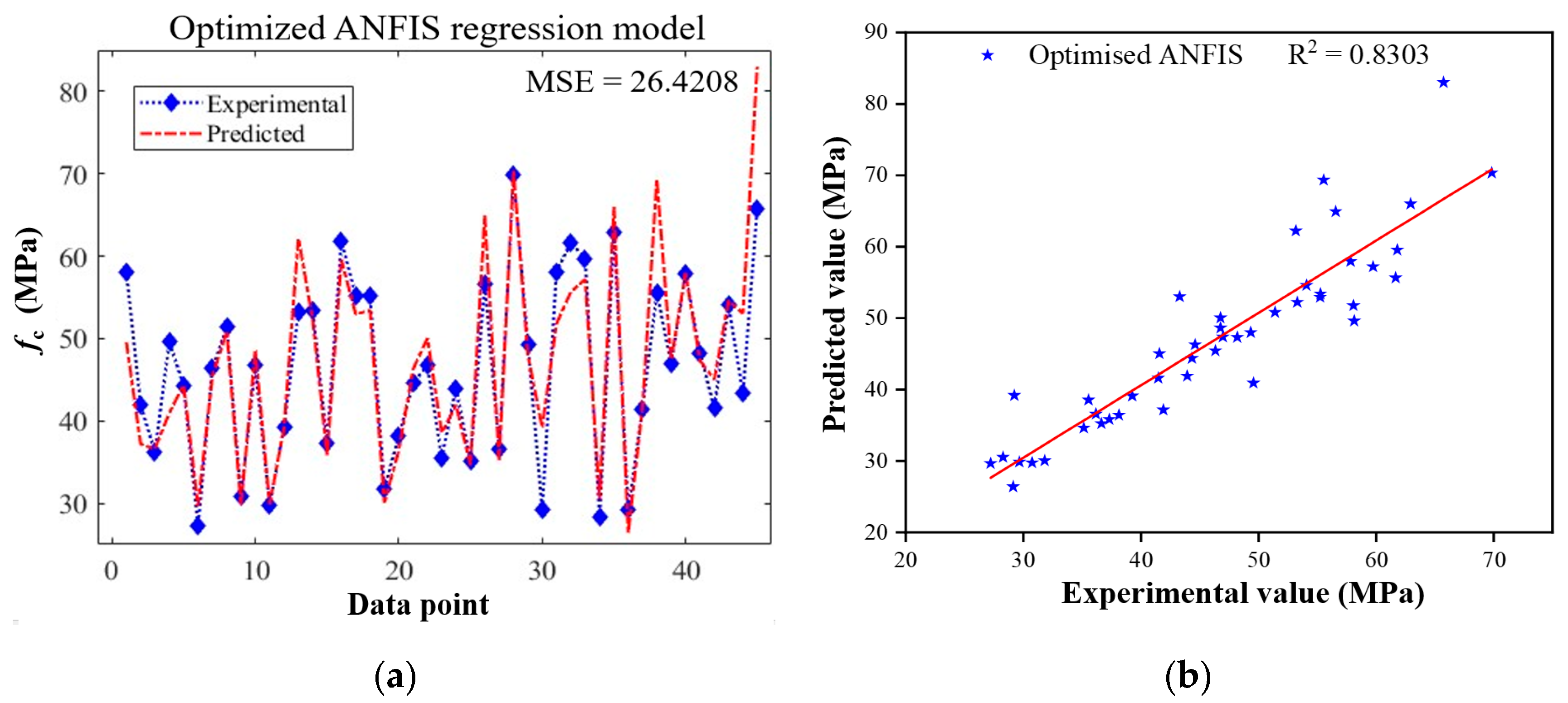

4.2. Prediction Performance of Hybrid Models

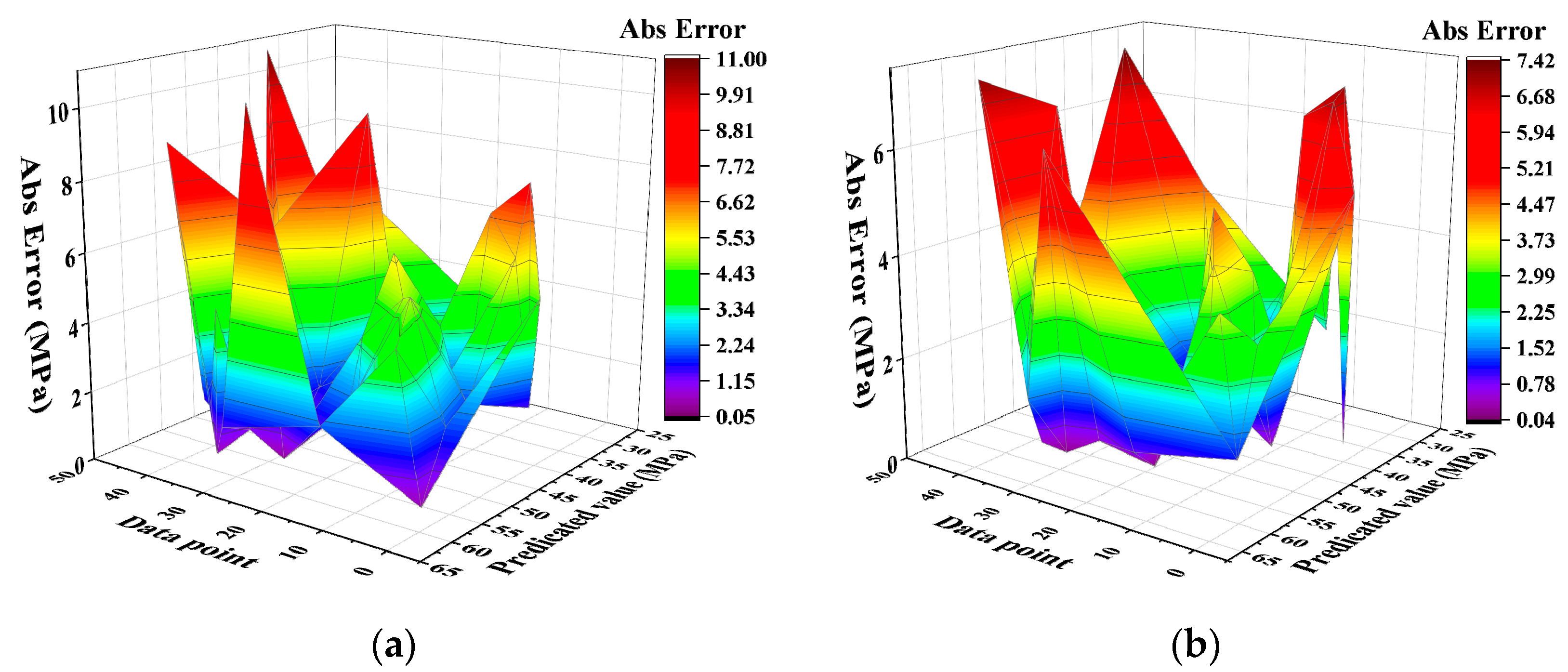

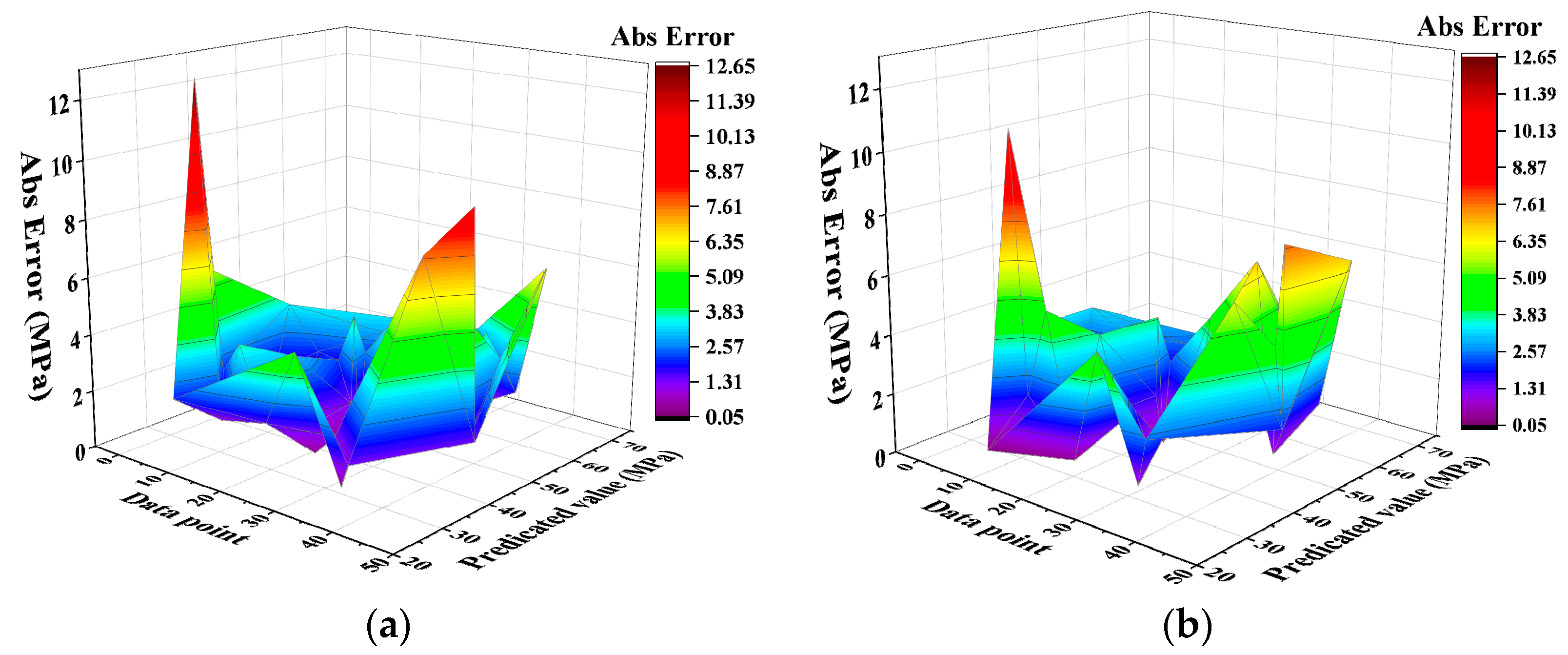

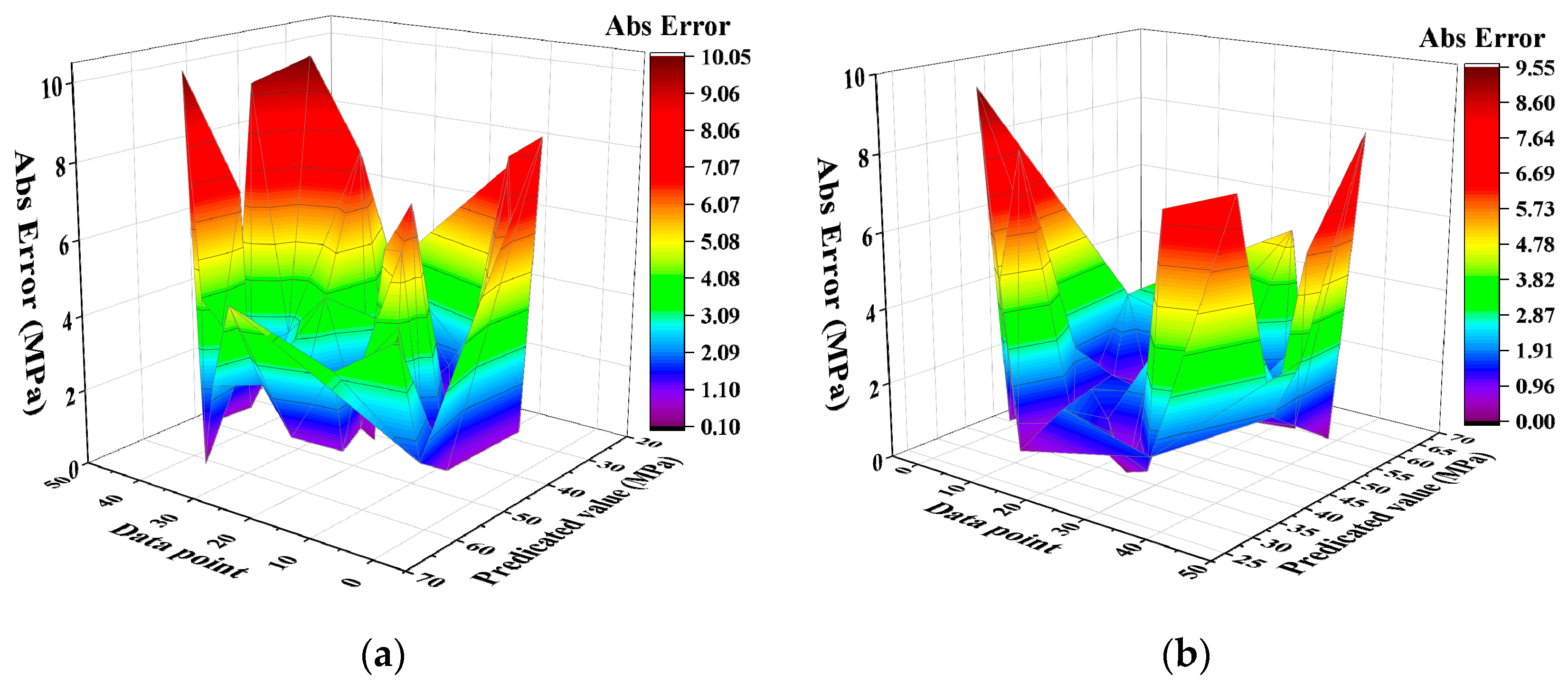

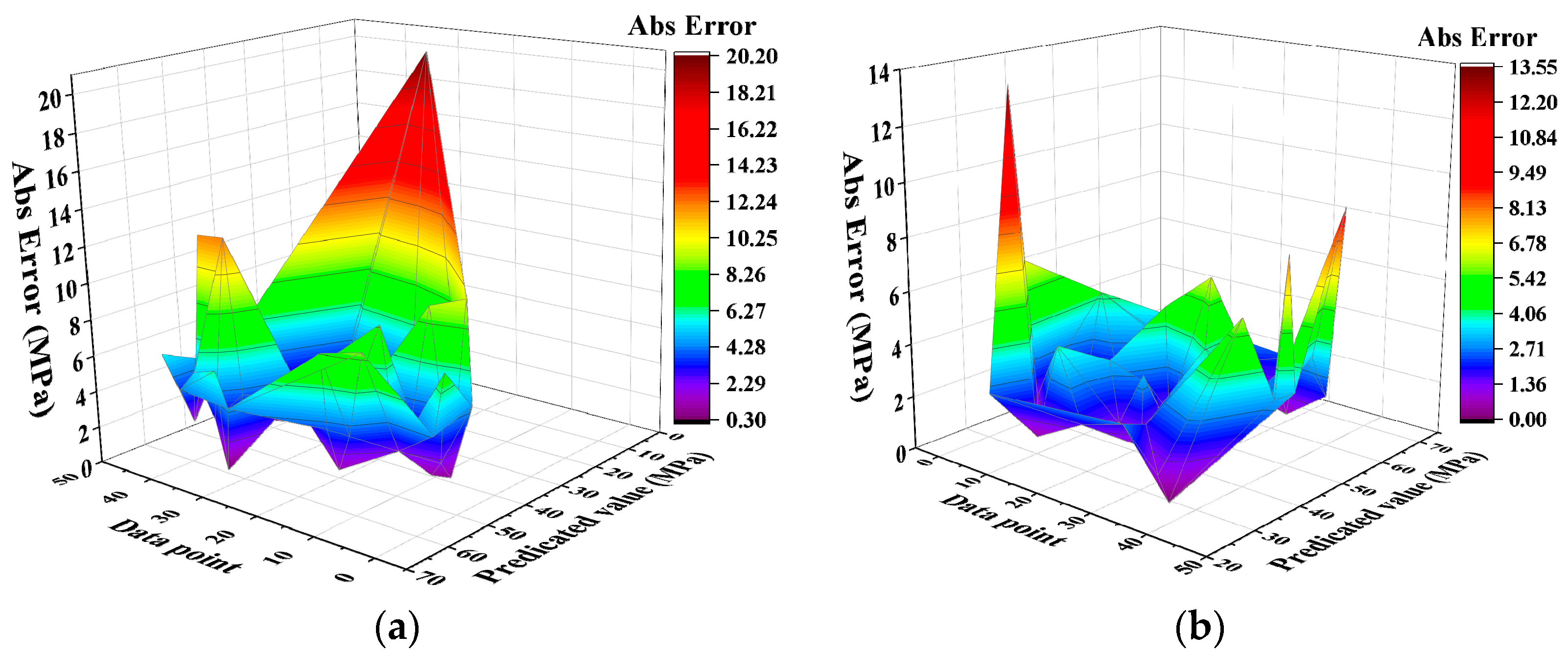

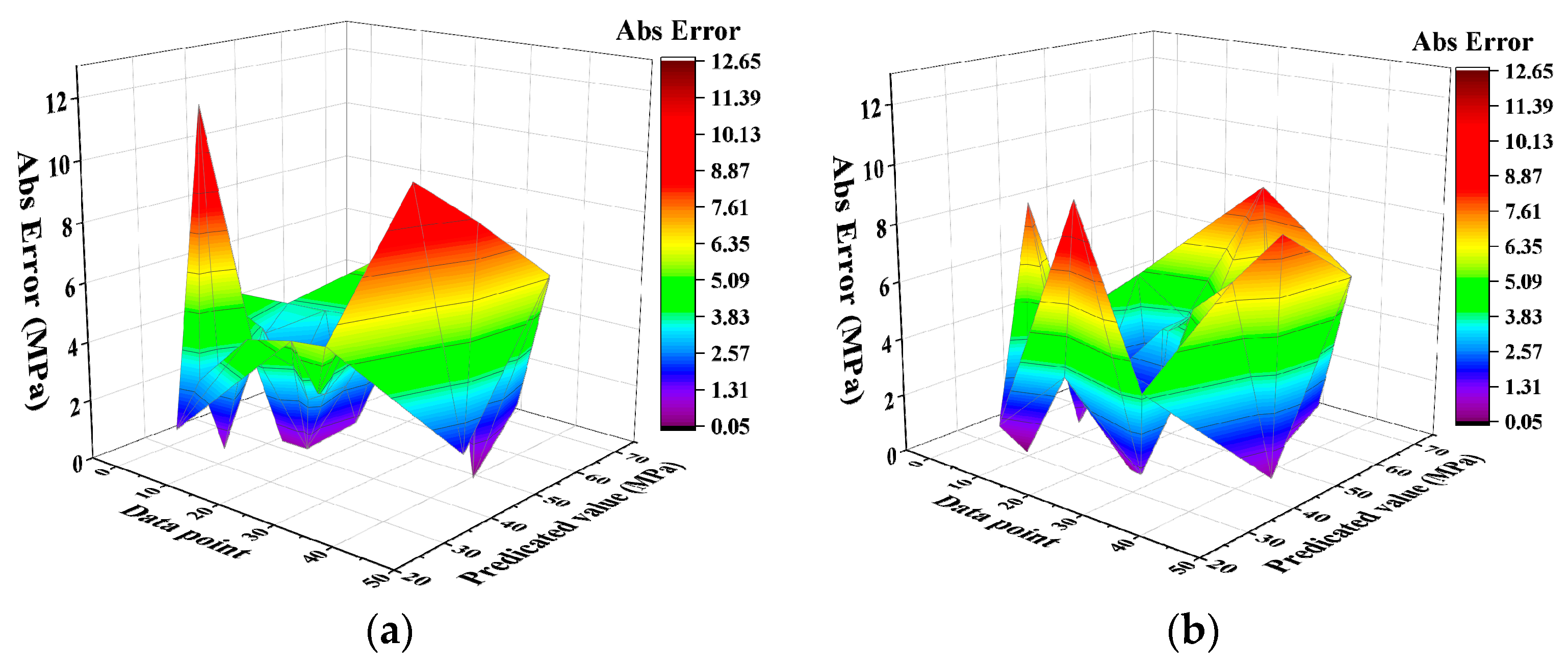

4.3. Analysis of Error Distribution

4.4. Accuracy Analysis

5. Conclusions

- (1) Among the standalone models, SVR achieves the highest R² (0.8872) and the lowest MSE (13.9315), indicating good generalization. In addition, the ensemble algorithms outperform the NN-based algorithms.

- (2) PSO effectively improves the prediction accuracy of all the ML models. The largest improvement is for GP, whose MSE decreases by 56.2% and R² increases by 22.4% after hybridization with PSO. In addition, the R² values of the PSO-RF, PSO-XGBoost and PSO-SVR models all exceed 0.9.

- (3) The absolute errors of the PSO-GP and SVR models are relatively uniformly distributed, with few large-error points, so these models are less likely to produce a large prediction error for any particular combination of input features. Based on the statistical indicators of all standalone and hybrid models, PSO-XGBoost shows the best overall performance.

- (4) Because each prediction scenario has its own characteristics, models that predict the compressive strength (fc) with adequate accuracy may not perform as well in other scenarios, such as chloride diffusion resistance or carbonation. The applicability of each model should therefore be evaluated carefully for those other scenarios.

- (5) Although six machine learning algorithms were used to predict the fc of concrete containing coal fly ash, the range of algorithms examined is still limited. Future research could assess the applicability of other machine learning algorithms and even build an integrated user interface to improve usability in engineering practice.

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest


Table 1. Statistical characteristics of the remaining 177 data sets.

| Statistic Index | W (kg/m³) | C (kg/m³) | FA (kg/m³) | A (kg/m³) | S (kg/m³) | WR (kg/m³) | fc (MPa) |
|---|---|---|---|---|---|---|---|
| Count | 177 | 177 | 177 | 177 | 177 | 177 | 177 |
| Mean | 157.39 | 380.71 | 45.93 | 1110.27 | 737.49 | 5.55 | 45.54 |
| Std | 10.88 | 69.52 | 36.66 | 44.60 | 61.20 | 2.37 | 11.29 |
| Minimum | 145.00 | 189.30 | 0 | 999.70 | 572.90 | 0 | 16.30 |
| Maximum | 210.00 | 527.60 | 129.00 | 1214.50 | 920.60 | 14.10 | 69.80 |
| Skewness | 3.84 | −0.12 | −0.06 | −0.26 | −0.06 | 1.09 | −0.14 |
| Mode | 153 | 442 | 0 | 1136.79 | 726.80 | 5.20 | 36.90 |
| Kurtosis | 16.33 | −0.42 | −1.19 | −0.53 | −0.53 | 2.96 | −0.61 |
| SEM | 0.82 | 5.23 | 2.76 | 3.35 | 4.60 | 0.18 | 0.85 |

| ML Model | Value of Hyperparameters |
|---|---|
| BPNN | Two hidden layers, with 18 neurons in the first and 12 neurons in the second |
| RF | n_estimators = 15, random_state = 45, max_depth = 3 |
| SVR | kernel = rbf |
| XGBoost | default |
| GP | population_size = 5000, generations = 20, stopping_criteria = 0.01, p_crossover = 0.7, p_subtree_mutation = 0.1, p_hoist_mutation = 0.1, p_point_mutation = 0.1, max_samples = 0.9, verbose = 1, parsimony_coefficient = 0.01, random_state = 0 |
| ANFIS | membership type = gaussmf, membership grade = (2, 2, 2, 2, 2, 2, 2, 2) |

| PSO Hyperparameter Setting | Predicting Model | Searched Hyperparameters |
|---|---|---|
| population size = 20, generations = 20 | BPNN | Number of neurons in each hidden layer |
| | RF | n_estimators, random_state, max_depth |
| | XGBoost | max_depth, learning_rate, n_estimators |
| | SVR | C, epsilon, gamma |
| | GP | population_size, generations, stopping_criteria, max_samples, verbose, parsimony_coefficient, random_state |
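A minimal sketch of standard global-best PSO, using the swarm size (20) and number of generations (20) listed above, illustrates how such a hyperparameter search can work. The inertia weight w = 0.7, the acceleration coefficients c1 = c2 = 1.5, the name `pso_minimize` and the toy objective are all assumptions for illustration. In a hybrid model, the objective would instead train the ML model with the candidate hyperparameters (rounding integer ones such as n_estimators or max_depth) and return its validation MSE.

```python
import random


def pso_minimize(objective, bounds, n_particles=20, n_iter=20,
                 w=0.7, c1=1.5, c2=1.5, seed=0):
    """Global-best PSO over a box; returns (best_position, best_value)."""
    rng = random.Random(seed)
    dim = len(bounds)
    # Random initial positions inside the search box, zero initial velocities.
    pos = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(n_particles)]
    vel = [[0.0] * dim for _ in range(n_particles)]
    pbest = [p[:] for p in pos]
    pbest_val = [objective(p) for p in pos]
    g = min(range(n_particles), key=lambda i: pbest_val[i])
    gbest, gbest_val = pbest[g][:], pbest_val[g]
    for _ in range(n_iter):
        for i in range(n_particles):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                # Inertia + pull toward personal best + pull toward swarm best.
                vel[i][d] = (w * vel[i][d]
                             + c1 * r1 * (pbest[i][d] - pos[i][d])
                             + c2 * r2 * (gbest[d] - pos[i][d]))
                lo, hi = bounds[d]
                pos[i][d] = min(max(pos[i][d] + vel[i][d], lo), hi)  # stay in bounds
            val = objective(pos[i])
            if val < pbest_val[i]:
                pbest[i], pbest_val[i] = pos[i][:], val
                if val < gbest_val:
                    gbest, gbest_val = pos[i][:], val
    return gbest, gbest_val


def toy_objective(p):
    """Hypothetical stand-in for a validation-MSE surface over two hyperparameters."""
    return (p[0] - 3.0) ** 2 + (p[1] - 0.5) ** 2


best, best_val = pso_minimize(toy_objective, [(0.0, 10.0), (0.0, 1.0)])
```

Because each evaluation of a real objective means training a model, the small swarm and generation counts keep the total cost to roughly 20 × 21 = 420 model fits per hybrid model.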

| ML Model | R² | MSE | STD | MAE |
|---|---|---|---|---|
| RF | 0.8309 | 20.885 | 8.8893 | 3.6563 |
| PSO-RF | 0.9035 | 11.9172 | 9.8887 | 2.6271 |
| SVR | 0.8872 | 13.9315 | 10.7003 | 2.7257 |
| PSO-SVR | 0.9038 | 11.8761 | 10.5086 | 2.5996 |
| XGBoost | 0.8340 | 20.5032 | 10.3703 | 3.4999 |
| PSO-XGBoost | 0.9072 | 11.4594 | 10.6130 | 2.3637 |
| GP | 0.7154 | 35.1627 | 11.0829 | 4.6226 |
| PSO-GP | 0.8753 | 15.4052 | 10.5057 | 2.9886 |
| BP-ANN | 0.8368 | 20.1649 | 11.5004 | 3.6589 |
| PSO-BP-ANN | 0.8630 | 16.9292 | 10.7190 | 3.2411 |
| ANFIS | 0.7015 | 40.2328 | 11.0996 | 4.1215 |
| Optimized ANFIS | 0.8303 | 26.4208 | 12.3607 | 3.3869 |
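The R², MSE and MAE indicators in the table can be computed as below; this is a plain-Python sketch with hypothetical helper names (scikit-learn's `mean_squared_error`, `mean_absolute_error` and `r2_score` compute the same quantities). It also reproduces the GP improvements quoted in the conclusions and uses the identity R² = 1 − MSE/Var(y_test), with Var the population variance of the test targets, as a consistency check on reported pairs. The STD column is not reproduced here.

```python
def mse(y_true, y_pred):
    """Mean squared error."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def mae(y_true, y_pred):
    """Mean absolute error."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def r2(y_true, y_pred):
    """Coefficient of determination, 1 - SS_res / SS_tot."""
    mean = sum(y_true) / len(y_true)
    ss_tot = sum((t - mean) ** 2 for t in y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    return 1.0 - ss_res / ss_tot


def pct_change(before, after):
    """Relative change in percent."""
    return 100.0 * (after - before) / before


# GP -> PSO-GP, values taken from the results table.
mse_drop = -pct_change(35.1627, 15.4052)  # percent decrease in MSE
r2_gain = pct_change(0.7154, 0.8753)      # percent increase in R2

# R2 = 1 - MSE / Var(y_test), so each (R2, MSE) pair implies a test-set variance.
reported = {"RF": (0.8309, 20.885), "SVR": (0.8872, 13.9315), "XGBoost": (0.8340, 20.5032)}
implied_var = {name: m / (1.0 - r) for name, (r, m) in reported.items()}
```

Rounded to one decimal, `mse_drop` and `r2_gain` give 56.2 and 22.4, and all three entries of `implied_var` come out at about 123.5 MPa², so these R² and MSE pairs are mutually consistent.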

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Yang, Y.; Liu, G.; Zhang, H.; Zhang, Y.; Yang, X. Predicting the Compressive Strength of Environmentally Friendly Concrete Using Multiple Machine Learning Algorithms. Buildings 2024, 14, 190. https://doi.org/10.3390/buildings14010190

Yang Y, Liu G, Zhang H, Zhang Y, Yang X. Predicting the Compressive Strength of Environmentally Friendly Concrete Using Multiple Machine Learning Algorithms. Buildings. 2024; 14(1):190. https://doi.org/10.3390/buildings14010190

Chicago/Turabian Style: Yang, Yanhua, Guiyong Liu, Haihong Zhang, Yan Zhang, and Xiaolong Yang. 2024. "Predicting the Compressive Strength of Environmentally Friendly Concrete Using Multiple Machine Learning Algorithms" Buildings 14, no. 1: 190. https://doi.org/10.3390/buildings14010190

APA Style: Yang, Y., Liu, G., Zhang, H., Zhang, Y., & Yang, X. (2024). Predicting the Compressive Strength of Environmentally Friendly Concrete Using Multiple Machine Learning Algorithms. Buildings, 14(1), 190. https://doi.org/10.3390/buildings14010190