Deep Learning-Based Dental Caries Diagnosis on Panoramic Radiographies: Performance of YOLOv8 Versus Human Observers

Abstract

1. Introduction

2. Materials and Methods

2.1. Study Design and Ethical Approval

2.2. Study Population and Image Acquisition

- Patient age between 5 and 12 years;

- Absence of motion artefacts or severe image distortion;

- Presence of at least one primary molar in any quadrant;

- Absence of radiographic findings suggestive of syndromic conditions (e.g., ectodermal dysplasia, cleidocranial dysostosis, Down syndrome).

2.3. Dataset Composition and Splitting

2.4. Image Preprocessing

2.5. Caries Annotation and Ground Truth Definition

- A final-year undergraduate dental student (Intern Dentist, ID);

- A first-year paediatric dentistry specialist student (Novice Specialist Student, NSS);

- A second-year paediatric dentistry specialist student (Experienced Specialist Student, ESS).

2.6. Observer Performance Evaluation

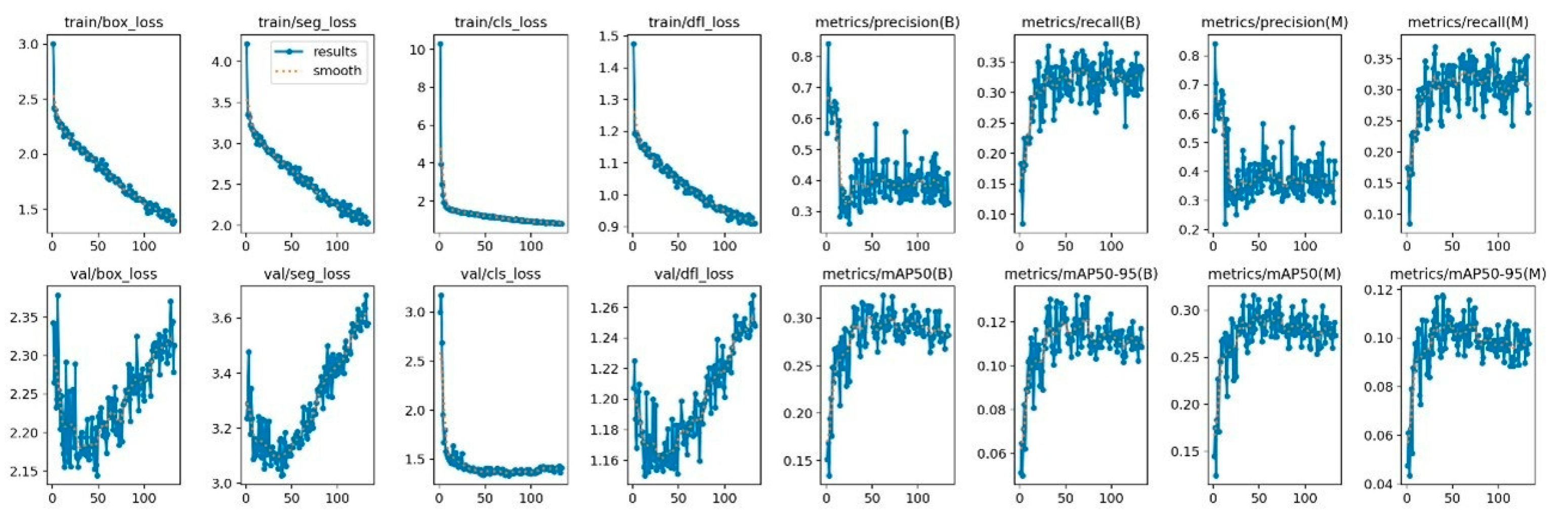

2.7. Deep Learning Model Architecture and Training

2.8. Model Evaluation and Performance Metrics

2.9. Additional Performance Analysis

2.10. Statistical Analysis

3. Results

3.1. Approximal Caries Detection

3.2. Buccal Caries Detection

3.3. Occlusal Caries Detection

3.4. Overall Diagnostic Performance

3.5. Inter-Observer Agreement and Disagreement Analysis

4. Discussion

5. Conclusions

Supplementary Materials

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| CNN | Convolutional neural networks |
| AI | Artificial intelligence |
| ID | Intern dentist |
| ESS | Experienced specialist student |
| NSS | Novice specialist student |
| TP | True positives |
| FP | False positives |
| FN | False negatives |


| Caries Class | PEM | ID | NSS | ESS | AI Model |
|---|---|---|---|---|---|
| Approximal Caries | TP | 226 | 275 | 415 | 408 |
| Approximal Caries | FP | 268 | 331 | 116 | 215 |
| Approximal Caries | FN | 414 | 365 | 225 | 385 |
| Approximal Caries | Precision | 0.458 | 0.454 | 0.782 | 0.655 |
| Approximal Caries | Sensitivity | 0.353 | 0.430 | 0.648 | 0.515 |
| Approximal Caries | F1 Score | 0.399 | 0.441 | 0.709 | 0.576 |
| Buccal Caries | TP | 6 | 2 | 12 | 0 |
| Buccal Caries | FP | 133 | 60 | 11 | 7 |
| Buccal Caries | FN | 40 | 44 | 34 | 22 |
| Buccal Caries | Precision | 0.043 | 0.032 | 0.522 | 0 |
| Buccal Caries | Sensitivity | 0.130 | 0.044 | 0.261 | 0 |
| Buccal Caries | F1 Score | 0.065 | 0.037 | 0.348 | 0 |
| Occlusal Caries | TP | 53 | 59 | 82 | 69 |
| Occlusal Caries | FP | 149 | 152 | 61 | 72 |
| Occlusal Caries | FN | 157 | 151 | 128 | 365 |
| Occlusal Caries | Precision | 0.262 | 0.280 | 0.573 | 0.489 |
| Occlusal Caries | Sensitivity | 0.252 | 0.281 | 0.391 | 0.159 |
| Occlusal Caries | F1 Score | 0.257 | 0.280 | 0.465 | 0.240 |
| Overall Results | TP | 285 | 336 | 509 | 477 |
| Overall Results | FP | 550 | 543 | 188 | 294 |
| Overall Results | FN | 611 | 560 | 387 | 772 |
| Overall Results | Precision | 0.341 | 0.382 | 0.730 | 0.619 |
| Overall Results | Sensitivity (Recall) | 0.318 | 0.375 | 0.568 | 0.382 |
| Overall Results | F1 Score | 0.329 | 0.379 | 0.639 | 0.473 |
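The precision, sensitivity and F1 values in the table above follow from the TP, FP and FN counts through the standard definitions. The sketch below recomputes them for one column as a consistency check; the function name `detection_metrics` is illustrative and not taken from the study.

```python
def detection_metrics(tp: int, fp: int, fn: int) -> dict:
    """Precision, sensitivity (recall) and F1 score from detection counts."""
    # Guard against empty denominators (e.g. a reader with no detections).
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    sensitivity = tp / (tp + fn) if (tp + fn) else 0.0
    # F1 is the harmonic mean of precision and sensitivity,
    # which simplifies to 2TP / (2TP + FP + FN).
    denom = 2 * tp + fp + fn
    f1 = 2 * tp / denom if denom else 0.0
    return {"precision": precision, "sensitivity": sensitivity, "f1": f1}


# Approximal caries, ESS column of the table above.
ess = detection_metrics(tp=415, fp=116, fn=225)
print({name: round(value, 3) for name, value in ess.items()})
# → {'precision': 0.782, 'sensitivity': 0.648, 'f1': 0.709}
```

With zero true positives, as in the AI column for buccal caries, all three metrics evaluate to 0, which matches the table.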

| Lesion Type | Reader | n | Sensitivity (95% CI) | Precision (95% CI) | F1 Score (95% CI) | Accuracy |
|---|---|---|---|---|---|---|
| Buccal caries | NSS | 800 | 0.222 (0.112–0.371) | 0.161 (0.080–0.277) | 0.187 (0.088–0.286) | 0.891 |
| Buccal caries | ESS | 800 | 0.267 (0.146–0.419) | 0.600 (0.361–0.809) | 0.369 (0.207–0.511) | 0.949 |
| Buccal caries | ID | 800 | 0.267 (0.146–0.419) | 0.088 (0.046–0.148) | 0.132 (0.064–0.201) | 0.802 |
| Buccal caries | AI | 800 | 0.022 (0.001–0.118) | 1.000 (0.025–1.000) | 0.043 (0.000–0.140) | 0.945 |
| Approximal caries | NSS | 800 | 0.835 (0.801–0.865) | 0.930 (0.904–0.951) | 0.880 (0.858–0.901) | 0.845 |
| Approximal caries | ESS | 800 | 0.811 (0.775–0.843) | 0.969 (0.949–0.983) | 0.883 (0.861–0.904) | 0.854 |
| Approximal caries | ID | 800 | 0.754 (0.715–0.789) | 0.930 (0.902–0.952) | 0.832 (0.806–0.856) | 0.794 |
| Approximal caries | AI | 800 | 0.860 (0.828–0.888) | 0.919 (0.892–0.942) | 0.889 (0.868–0.909) | 0.854 |
| Occlusal caries | NSS | 800 | 0.651 (0.575–0.722) | 0.589 (0.516–0.660) | 0.619 (0.558–0.677) | 0.828 |
| Occlusal caries | ESS | 800 | 0.570 (0.492–0.645) | 0.778 (0.695–0.847) | 0.658 (0.594–0.716) | 0.873 |
| Occlusal caries | ID | 800 | 0.587 (0.510–0.662) | 0.510 (0.438–0.582) | 0.546 (0.483–0.603) | 0.790 |
| Occlusal caries | AI | 800 | 0.424 (0.350–0.502) | 0.820 (0.725–0.894) | 0.559 (0.482–0.627) | 0.856 |
| Overall pooled | NSS | 2400 | 0.757 (0.725–0.787) | 0.778 (0.747–0.808) | 0.767 (0.744–0.791) | 0.855 |
| Overall pooled | ESS | 2400 | 0.724 (0.691–0.756) | 0.917 (0.892–0.938) | 0.809 (0.785–0.831) | 0.892 |
| Overall pooled | ID | 2400 | 0.687 (0.653–0.720) | 0.674 (0.640–0.707) | 0.681 (0.654–0.706) | 0.795 |
| Overall pooled | AI | 2400 | 0.712 (0.679–0.744) | 0.905 (0.878–0.927) | 0.797 (0.773–0.820) | 0.885 |
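The table above does not state how the 95% intervals for sensitivity and precision were obtained. The sketch below assumes exact (Clopper-Pearson) binomial intervals, an assumption that appears consistent with the AI row for buccal caries. The counts 1/45 and 1/1 are inferred from the reported proportions 0.022 and 1.000 and are not given in the source; all function names are illustrative.

```python
from math import comb


def binom_sf(k: int, n: int, p: float) -> float:
    """P(X > k) for X ~ Binomial(n, p)."""
    return 1.0 - sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k + 1))


def _solve_increasing(f, target: float, iters: int = 100) -> float:
    """Bisection for f(p) == target, where f is increasing on [0, 1]."""
    lo, hi = 0.0, 1.0
    for _ in range(iters):
        mid = (lo + hi) / 2
        if f(mid) < target:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2


def clopper_pearson(x: int, n: int, alpha: float = 0.05):
    """Exact two-sided binomial confidence interval for x successes in n trials."""
    # Lower bound: smallest p for which P(X >= x) reaches alpha / 2.
    lower = 0.0 if x == 0 else _solve_increasing(
        lambda p: binom_sf(x - 1, n, p), alpha / 2)
    # Upper bound: p at which P(X <= x) drops to alpha / 2.
    upper = 1.0 if x == n else _solve_increasing(
        lambda p: binom_sf(x, n, p), 1 - alpha / 2)
    return lower, upper


# Assumed counts: 1 of 45 buccal lesions detected, 1 of 1 detections correct.
print(tuple(round(v, 3) for v in clopper_pearson(1, 45)))  # → (0.001, 0.118)
print(tuple(round(v, 3) for v in clopper_pearson(1, 1)))   # → (0.025, 1.0)
```

Both results agree with the reported intervals 0.001–0.118 and 0.025–1.000, but the agreement does not confirm that this was the method used in the study.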

| Lesion Type | Comparison | n | Discordant Pairs (AI Correct/Comparator Correct) | Exact McNemar p | BH-Adjusted p |
|---|---|---|---|---|---|
| Buccal caries | AI vs. NSS | 800 | 52/9 | <0.001 | <0.001 |
| Buccal caries | AI vs. ESS | 800 | 8/11 | 0.648 | 0.706 |
| Buccal caries | AI vs. ID | 800 | 125/11 | <0.001 | <0.001 |
| Approximal caries | AI vs. NSS | 800 | 73/66 | 0.611 | 0.706 |
| Approximal caries | AI vs. ESS | 800 | 63/63 | 1.000 | 1.000 |
| Approximal caries | AI vs. ID | 800 | 95/47 | <0.001 | <0.001 |
| Occlusal caries | AI vs. NSS | 800 | 75/52 | 0.050 | 0.087 |
| Occlusal caries | AI vs. ESS | 800 | 28/41 | 0.148 | 0.222 |
| Occlusal caries | AI vs. ID | 800 | 100/47 | <0.001 | <0.001 |
| Overall pooled | AI vs. NSS | 2400 | 200/127 | <0.001 | <0.001 |
| Overall pooled | AI vs. ESS | 2400 | 99/115 | 0.305 | 0.407 |
| Overall pooled | AI vs. ID | 2400 | 320/105 | <0.001 | <0.001 |
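The exact McNemar p-values in the table above depend only on the discordant pair counts, so they can be recomputed directly. The sketch below uses the two-sided exact binomial form of the test and then applies the Benjamini-Hochberg (BH) step-up adjustment. Treating the 12 comparisons in the table as a single BH family is an assumption; the function names are illustrative.

```python
from math import comb


def mcnemar_exact(b: int, c: int) -> float:
    """Two-sided exact McNemar p-value from the two discordant counts."""
    n = b + c
    if n == 0:
        return 1.0
    k = min(b, c)
    # Under H0 the smaller count follows Binomial(n, 0.5).
    tail = sum(comb(n, i) for i in range(k + 1)) / 2**n
    return min(1.0, 2 * tail)


def benjamini_hochberg(pvals):
    """BH-adjusted p-values, returned in the input order."""
    m = len(pvals)
    order = sorted(range(m), key=lambda i: pvals[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down, enforcing monotonicity.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, pvals[i] * m / rank)
        adjusted[i] = running_min
    return adjusted


# Discordant pairs (AI correct, comparator correct) in table order.
pairs = [
    (52, 9), (8, 11), (125, 11),     # buccal: NSS, ESS, ID
    (73, 66), (63, 63), (95, 47),    # approximal: NSS, ESS, ID
    (75, 52), (28, 41), (100, 47),   # occlusal: NSS, ESS, ID
    (200, 127), (99, 115), (320, 105),  # overall pooled: NSS, ESS, ID
]
raw_p = [mcnemar_exact(b, c) for b, c in pairs]
adjusted = benjamini_hochberg(raw_p)
for (b, c), p, q in zip(pairs, raw_p, adjusted):
    print(f"{b}/{c}: p = {p:.3f}, BH-adjusted p = {q:.3f}")
```

For the buccal AI vs. ESS comparison (8/11) this gives p = 0.648 and an adjusted value of 0.706, as reported in the table.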

| Lesion Type | Comparison | Metric | n | Difference (95% CI) | Bootstrap p | BH-Adjusted p |
|---|---|---|---|---|---|---|
| Buccal caries | AI − NSS | Recall | 800 | −0.200 (−0.324 to −0.089) | <0.001 | <0.001 |
| Buccal caries | AI − NSS | F1 score | 800 | −0.143 (−0.254 to −0.035) | 0.011 | 0.019 |
| Buccal caries | AI − ESS | Recall | 800 | −0.244 (−0.373 to −0.125) | <0.001 | <0.001 |
| Buccal caries | AI − ESS | F1 score | 800 | −0.326 (−0.475 to −0.172) | <0.001 | <0.001 |
| Buccal caries | AI − ID | Recall | 800 | −0.244 (−0.378 to −0.119) | <0.001 | <0.001 |
| Buccal caries | AI − ID | F1 score | 800 | −0.088 (−0.176 to 0.010) | 0.077 | 0.116 |
| Approximal caries | AI − NSS | Recall | 800 | 0.026 (−0.009 to 0.061) | 0.166 | 0.209 |
| Approximal caries | AI − NSS | F1 score | 800 | 0.009 (−0.014 to 0.033) | 0.442 | 0.506 |
| Approximal caries | AI − ESS | Recall | 800 | 0.050 (0.015 to 0.084) | 0.006 | 0.010 |
| Approximal caries | AI − ESS | F1 score | 800 | 0.006 (−0.016 to 0.029) | 0.602 | 0.629 |
| Approximal caries | AI − ID | Recall | 800 | 0.107 (0.071 to 0.140) | <0.001 | <0.001 |
| Approximal caries | AI − ID | F1 score | 800 | 0.056 (0.032 to 0.081) | <0.001 | <0.001 |
| Occlusal caries | AI − NSS | Recall | 800 | −0.227 (−0.302 to −0.152) | <0.001 | <0.001 |
| Occlusal caries | AI − NSS | F1 score | 800 | −0.059 (−0.133 to 0.011) | 0.104 | 0.146 |
| Occlusal caries | AI − ESS | Recall | 800 | −0.145 (−0.219 to −0.074) | <0.001 | <0.001 |
| Occlusal caries | AI − ESS | F1 score | 800 | −0.098 (−0.167 to −0.033) | 0.002 | 0.005 |
| Occlusal caries | AI − ID | Recall | 800 | −0.163 (−0.246 to −0.081) | <0.001 | <0.001 |
| Occlusal caries | AI − ID | F1 score | 800 | 0.013 (−0.064 to 0.087) | 0.751 | 0.751 |
| Overall pooled | AI − NSS | Recall | 2400 | −0.045 (−0.077 to −0.013) | 0.004 | 0.008 |
| Overall pooled | AI − NSS | F1 score | 2400 | 0.030 (0.005 to 0.055) | 0.022 | 0.035 |
| Overall pooled | AI − ESS | Recall | 2400 | −0.012 (−0.043 to 0.020) | 0.504 | 0.549 |
| Overall pooled | AI − ESS | F1 score | 2400 | −0.012 (−0.035 to 0.011) | 0.312 | 0.374 |
| Overall pooled | AI − ID | Recall | 2400 | 0.025 (−0.009 to 0.060) | 0.150 | 0.199 |
| Overall pooled | AI − ID | F1 score | 2400 | 0.117 (0.090 to 0.144) | <0.001 | <0.001 |
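The table above reports bootstrap confidence intervals for paired differences in recall and F1 score, but the resampling procedure itself is not described here. The sketch below shows one common choice, a paired percentile bootstrap that resamples cases with replacement and recomputes the metric difference each time. The number of resamples, the seed, the percentile method and the toy labels are all assumptions made for illustration and are not study data.

```python
import random


def recall(truth, pred):
    """Fraction of true lesions (truth == 1) that were detected (pred == 1)."""
    positives = sum(truth)
    hits = sum(t and p for t, p in zip(truth, pred))
    return hits / positives if positives else 0.0


def paired_bootstrap_diff(truth, pred_a, pred_b, metric, n_boot=2000, seed=0):
    """Observed metric difference (A minus B) with a 95% percentile interval."""
    rng = random.Random(seed)
    n = len(truth)
    observed = metric(truth, pred_a) - metric(truth, pred_b)
    diffs = []
    for _ in range(n_boot):
        # Resample cases; both readers are scored on the same resample,
        # which preserves the pairing between them.
        idx = [rng.randrange(n) for _ in range(n)]
        t = [truth[i] for i in idx]
        a = [pred_a[i] for i in idx]
        b = [pred_b[i] for i in idx]
        diffs.append(metric(t, a) - metric(t, b))
    diffs.sort()
    lower = diffs[int(0.025 * n_boot)]
    upper = diffs[int(0.975 * n_boot) - 1]
    return observed, (lower, upper)


# Hypothetical toy labels: 10 carious and 10 sound surfaces.
truth = [1] * 10 + [0] * 10
reader_a = [1] * 8 + [0] * 2 + [0] * 10   # detects 8 of 10 lesions
reader_b = [1] * 5 + [0] * 5 + [0] * 10   # detects 5 of 10 lesions
observed, (lower, upper) = paired_bootstrap_diff(truth, reader_a, reader_b, recall)
print(f"recall difference = {observed:.3f}, 95% CI = ({lower:.3f}, {upper:.3f})")
```

Resampling whole cases rather than each reader independently is what makes the comparison paired; any other metric with the same `(truth, pred)` signature, such as an F1 function, can be passed in place of `recall`.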


© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Citation

Biçengil, K.; Kurt, A.; Naralan, M.E.; Okumuş, İ. Deep Learning-Based Dental Caries Diagnosis on Panoramic Radiographies: Performance of YOLOv8 Versus Human Observers. Diagnostics 2026, 16, 1150. https://doi.org/10.3390/diagnostics16081150
