1. Introduction

The pancreas is a retroperitoneal organ with a complex anatomical structure that extends transversely from the duodenal curve to the splenic hilum. It is anatomically divided into four sections: the head, the uncinate process, the body, and the tail [1]. Contrast-enhanced computed tomography (CT) is the primary imaging method for the pancreas. Its high resolution enables a detailed evaluation of the pancreatic parenchyma and clear visualization of the surrounding anatomical relationships [2,3]. A normal (healthy) pancreas typically appears on CT as a structure with homogeneous soft tissue density, smooth contours, and relatively homogeneous contrast enhancement [4]. However, its morphology and density characteristics may vary depending on patient-related factors and the imaging phase. Pathological processes affecting the pancreas often cause changes in its CT appearance, such as focal or diffuse enlargement, irregular contours, heterogeneous density, and involvement of adjacent tissues [5]. In particular, inflammatory conditions may be accompanied by increased density and heterogeneity of peripancreatic adipose tissue, fluid collections, vascular complications, and parenchymal heterogeneity. These findings are the basis for radiological evaluations in clinical practice [6].

Acute pancreatitis (AP) is defined as inflammation of the pancreatic parenchyma [7]. This inflammation can be caused by several factors, including chronic alcoholism, gallstones, hypertriglyceridemia syndromes, drugs, and chemical agents. AP is one of the most common gastrointestinal causes of hospitalization worldwide. Its clinical course ranges from mild, self-limiting inflammation to severe disease associated with persistent organ failure and high mortality rates [8,9]. Mortality rates of 30–40% have been reported, particularly among patients who develop organ failure [10]. The diagnosis of AP requires at least two of the following three criteria: abdominal pain, predominantly in the epigastric region; serum amylase or lipase levels at least three times the upper limit of normal; and characteristic imaging findings (peripancreatic fluid, pancreatic inflammation) [8,11].

The deep location of the pancreas, its proximity to other organs, and the inflammatory changes in the pancreas and surrounding tissues following AP lead to blurring of the pancreatic boundaries. This makes it difficult to assess necrosis and the extent of peripancreatic involvement, resulting in interobserver variability in radiological severity classification [12,13]. Therefore, difficulties may arise in reporting CT scans and in determining the severity of the disease. An early and accurate diagnosis is critical for determining appropriate treatment strategies, preventing complications, and reducing mortality rates. Reliably and promptly differentiating AP from normal pancreatic tissue is therefore crucial for predicting the disease’s clinical course and for guiding clinical decisions, such as admission to the intensive care unit, the choice of monitoring strategy, and the timing of interventions.

In AP, the revised Atlanta classification is used clinically, while the Balthazar classification and the CT severity index are used radiologically [8,14]. However, both types of scoring rely heavily on subjective evaluation, which can lead to discrepancies in assessment among clinicians and radiologists, particularly in the early stages of the disease or with borderline patients. Furthermore, manually evaluating CT images depends on the specialist’s experience and can present diagnostic difficulties, particularly in cases of early-stage acute pancreatitis or cases with subtle morphological changes. Additionally, manually analyzing high volumes of image data is time-consuming and increases the clinical workload.

Previous studies on the analysis of pancreatic images have mostly focused on pancreatic segmentation or pancreatic cancer detection [15,16,17,18,19]. Recently, artificial intelligence-based approaches have been used to improve the standardization of classification in AP [20,21]. In addition, applications based on deep learning technologies have significantly advanced the analysis of medical images in recent years [22,23,24]. Moreover, deep learning models applied to CT images, either alone or in combination with clinical and laboratory parameters, have shown promising results in differentiating between mild and severe disease [25,26]. However, studies on the automatic detection of AP and on the classification of AP versus normal pancreas are limited. Many current studies focus on convolutional neural network (CNN)-based deep learning methods, whereas the effectiveness of Vision Transformer (ViT)-based architectures for this purpose has not been sufficiently addressed. In addition, while many studies evaluate performance on a slice-by-slice basis, only a few address the more clinically meaningful patient-level classification approach. Patient-level classification provides results that are more consistent with the clinical diagnostic process because it enables a holistic evaluation of the information in the entire CT scan [27]. This study proposes a transformer-based deep learning model for the automatic classification of AP and normal pancreas from CT images. The model learns local and global features from CT images for patient-level classification. The main contributions of this study are summarized below:

- i. Automatic classification of AP and normal pancreas from CT images using a ViT-based deep learning model.

- ii. A patient-level classification approach that is more meaningful in terms of clinical applications.

- iii. A comprehensive comparison of the performance of the transformer-based model with that of other state-of-the-art deep learning architectures.

- iv. Improving classification performance by effectively learning local and global features from CT images.

- v. Demonstration of the usability of artificial intelligence–based decision support systems in diagnosing AP and contributing to clinical applications.

3. Results

In this study, a classification system based on ViT and CNN deep learning architectures was developed for the automatic classification of AP and normal pancreas from CT images. Various experimental analyses were conducted to evaluate the system’s performance comprehensively, and different state-of-the-art deep learning architectures, such as Swin, ConvNeXtV2, EfficientNet, and ResNet50, were comparatively examined. All experiments were conducted using the Python programming language (v3.11.9). The PyTorch deep learning framework (v2.6.0) was used for the development, training, and testing of the deep learning models. PyTorch is a popular choice for medical image analysis studies due to its dynamic computational graphs, GPU acceleration support, and flexible model development infrastructure. Moreover, the PyTorch image models (TIMM) library (v1.0.14) was employed to facilitate the straightforward and efficient implementation of contemporary, optimized deep learning architectures.

All experiments were performed on a workstation running Ubuntu Linux. The workstation featured a Gigabyte B650M motherboard, an AMD Ryzen 5–5.17 GHz processor, and 64 GB of DDR4 RAM, enabling efficient in-memory processing of large-scale medical image data. The graphics processing unit (GPU) was an NVIDIA GeForce RTX 4070 Ti SUPER with 16 GB of memory. GPU acceleration was used during training and testing, which significantly reduced model training time and enabled efficient processing of high-resolution CT images. Data were stored on a 5 TB disk with high read-write speeds.

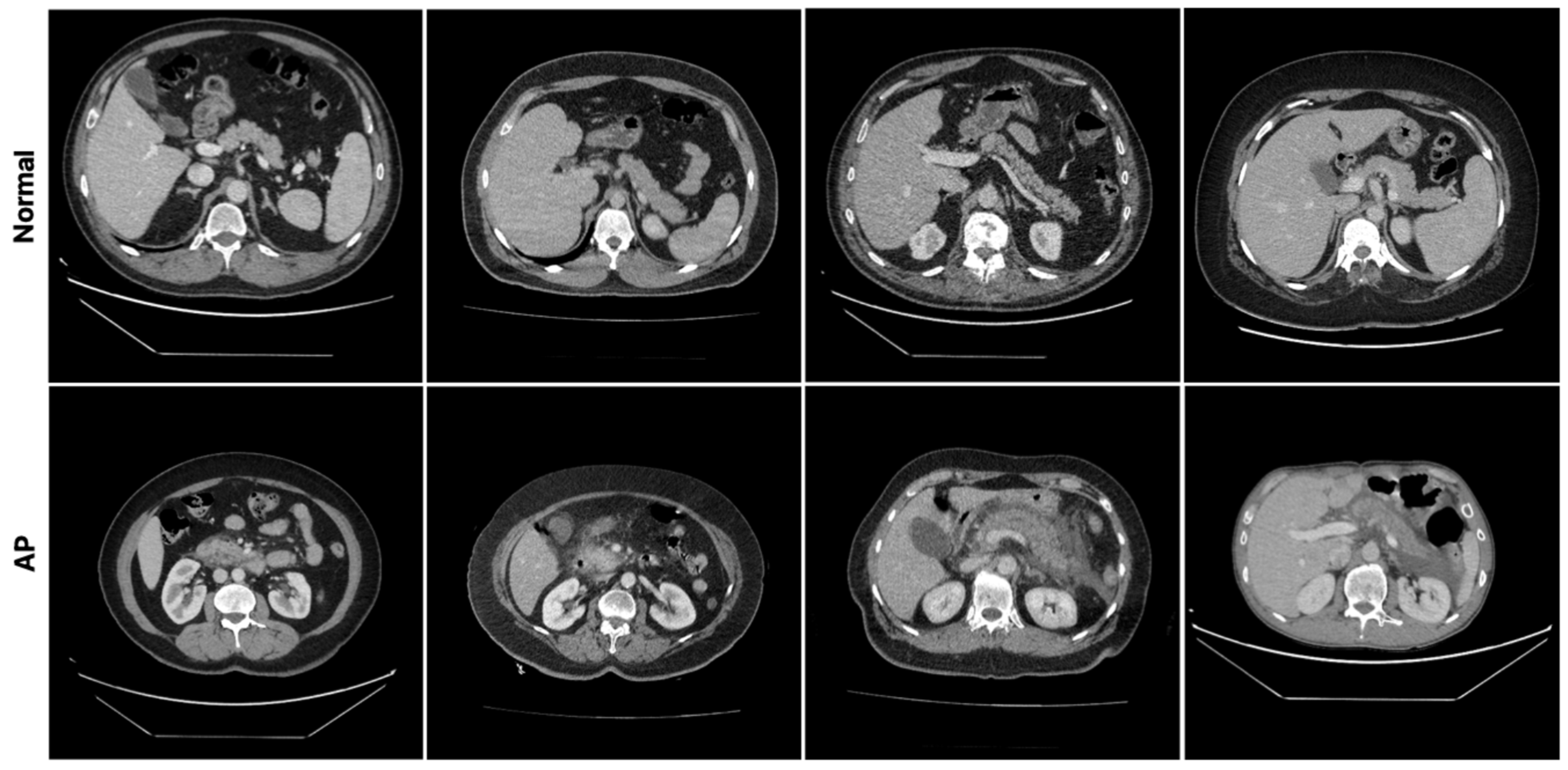

The pancreatic dataset, which was created specifically for this study, was generated from CT images of patients with AP and patients with normal pancreatic tissue. The dataset contains CT images from 183 patients, 103 of whom are in the normal group and 80 of whom are in the AP group. At the beginning of the data preparation process, the patients were divided into two classes according to their clinical diagnoses: normal and AP. The appearance of pancreatic tissue on CT is influenced not only by pathology but also by imaging conditions, such as the acquisition protocol and the contrast enhancement phase. In our dataset, CT scans were acquired under routine clinical conditions. Therefore, images obtained using different contrast enhancement phases may be included in both normal and AP cases. The image windowing parameters were set to a window width (WW) of 400 and a window level (WL) of 50 to ensure optimal contrast and visibility of the pancreatic tissue. These settings allow for more distinct visualization of the pancreatic parenchyma and surrounding anatomical structures, emphasizing the image features available to the model. To prevent slices from the same patient from appearing in both the training and test sets and to reliably evaluate the model’s generalization ability, the dataset was split into training and test sets on a patient-by-patient basis. To avoid analyzing anatomical regions that could affect classification performance, only slices with clearly visible pancreatic tissue were selected for the analysis.
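The windowing step described above can be sketched as follows. This is a minimal illustration, assuming the slice is already available as a NumPy array in Hounsfield units (HU) and that the windowed range is mapped linearly to 8-bit grayscale; the function name `apply_window` and the 8-bit mapping are assumptions for illustration, since the text does not describe the exact conversion used.

```python
import numpy as np

def apply_window(hu, level=50.0, width=400.0):
    """Clip a HU array to [level - width/2, level + width/2] and rescale to 0..255."""
    lo = level - width / 2.0   # -150 HU for WL = 50, WW = 400
    hi = level + width / 2.0   #  250 HU for WL = 50, WW = 400
    clipped = np.clip(hu.astype(np.float32), lo, hi)
    scaled = (clipped - lo) / (hi - lo)          # values in [0, 1]
    return np.round(scaled * 255.0).astype(np.uint8)

# Hypothetical HU values: air, lower window edge, soft tissue, upper edge, bone.
slice_hu = np.array([[-1000.0, -150.0], [40.0, 250.0], [100.0, 1200.0]])
windowed = apply_window(slice_hu)
print(windowed)
```

With these settings, everything below -150 HU maps to black and everything above 250 HU maps to white, so the available gray levels are spent on the soft-tissue range in which the pancreas lies.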

Each patient’s CT scan consists of 200 to 450 axial slices. To train deep learning models, all CT slices were converted from the original DICOM format to PNG format. At the patient level, approximately 80% of the data was allocated to the training set, and the remaining 20% was allocated to the test set. Of the patients in the normal group, 82 were in the training set and 21 were in the test set. Of the patients in the AP group, 64 were in the training set and 16 were in the test set. This distribution provides a balanced training and testing structure for both groups. At the slice level, 4929 of the 6225 slices in the normal group (79.18%) were in the training set, and 1296 (20.82%) were in the test set. In the AP group, 4309 of the 5542 slices (77.75%) were in the training set, and 1233 (22.25%) were in the test set. In addition, the model training did not include demographic data or laboratory findings.
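A patient-level split of this kind can be sketched as below. This is only an illustration under stated assumptions: the record layout `(patient_id, label, slice_path)`, the per-class shuffling, the seed, and the function name `split_by_patient` are hypothetical and are not taken from the study.

```python
import random
from collections import defaultdict

def split_by_patient(slice_records, test_fraction=0.2, seed=0):
    """Split slice records so that no patient appears in both sets.

    slice_records: list of (patient_id, label, slice_path) tuples.
    """
    patients_by_label = defaultdict(set)
    for patient_id, label, _ in slice_records:
        patients_by_label[label].add(patient_id)

    rng = random.Random(seed)
    test_patients = set()
    for label in sorted(patients_by_label):
        ids = sorted(patients_by_label[label])
        rng.shuffle(ids)
        n_test = max(1, round(len(ids) * test_fraction))
        test_patients.update(ids[:n_test])  # hold out whole patients per class

    train = [r for r in slice_records if r[0] not in test_patients]
    test = [r for r in slice_records if r[0] in test_patients]
    return train, test

# Hypothetical toy data: 10 patients per class, 3 slices each.
records = []
for i in range(10):
    for s in range(3):
        records.append((f"N{i:02d}", "normal", f"N{i:02d}_{s}.png"))
        records.append((f"A{i:02d}", "AP", f"A{i:02d}_{s}.png"))

train_records, test_records = split_by_patient(records)
train_ids = {r[0] for r in train_records}
test_ids = {r[0] for r in test_records}
print(len(train_ids), len(test_ids), train_ids.isdisjoint(test_ids))
```

Applied per class to 103 and 80 patients, a 0.2 fraction rounds to 21 and 16 held-out patients, which is consistent with the counts reported above.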

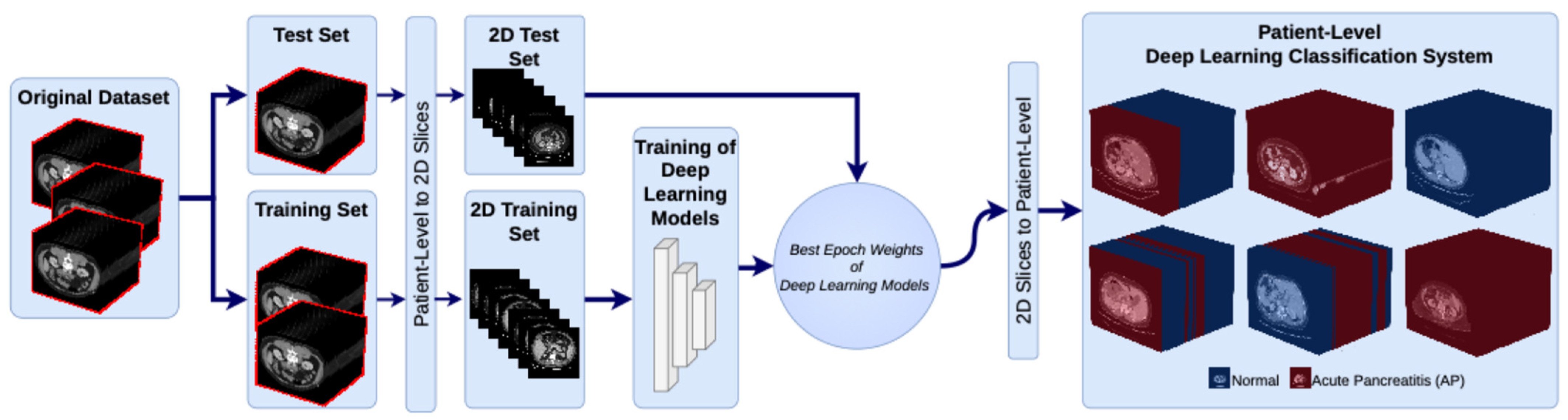

During the training process, the deep learning models were trained using only the training set. The weighting parameters that provided the best performance for each model were then determined. Afterwards, a test dataset was used to evaluate the performance of the models with these optimal weights. Model performance was analyzed at two levels. First, we evaluated slice-level classification performance, followed by patient-level classification performance, the latter of which is more suitable for clinical applications. In this study, patient-level classification was performed using an outcome-level aggregation strategy rather than a feature-level one. Specifically, the model independently classified each CT slice, and the final patient-level decision was obtained by aggregating the predictions of all slices belonging to the same patient. The aggregation was performed using a majority voting approach, whereby the final class label was determined based on the most common prediction across all slices. This approach reduces the impact of potential misclassifications at slice level and provides a more stable, clinically meaningful prediction at patient level.
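The outcome-level aggregation described above can be sketched as a simple majority vote over slice predictions. The input layout and the tie-breaking rule (a tie is resolved in favor of AP) are assumptions made for this illustration; the text does not state how ties were handled.

```python
from collections import Counter, defaultdict

def patient_level_predictions(slice_predictions):
    """Aggregate (patient_id, predicted_label) pairs by majority vote."""
    votes = defaultdict(Counter)
    for patient_id, label in slice_predictions:
        votes[patient_id][label] += 1

    decisions = {}
    for patient_id, counter in votes.items():
        top = max(counter.values())
        winners = sorted(l for l, c in counter.items() if c == top)
        # Assumption: on a tie, prefer "AP" to avoid missing a pathological case.
        decisions[patient_id] = "AP" if "AP" in winners else winners[0]
    return decisions

# Hypothetical slice-level outputs for three patients.
slice_preds = [
    ("P1", "AP"), ("P1", "AP"), ("P1", "normal"),
    ("P2", "normal"), ("P2", "normal"), ("P2", "AP"),
    ("P3", "AP"), ("P3", "normal"),
]
decisions = patient_level_predictions(slice_preds)
print(decisions)
```

In this toy case, a single misclassified slice for P1 or P2 does not change the patient-level label, which is the stabilizing effect described in the paragraph above.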

In this study, both CNN-based and transformer-based deep learning architectures were used to classify AP and normal pancreas from CT images. During the experimental evaluation, all models were trained and tested using the same dataset, the same preprocessing steps, and the same patient-level training and test split. The patient-level split prevents data leakage and allows the results to reflect more accurately how the system would perform in clinical settings. To ensure a fair and objective comparison of the models, common hyperparameters were defined for all architectures and the same training configuration was applied. All models were trained for 100 epochs. The batch size, which is the number of image slices presented to the model in each iteration, was set to 64. This value was chosen in view of the memory capacity of the GPU and the number of parameters of the model architectures, and it balances training time against stable learning. The learning rate, a critical hyperparameter that determines the step size used to update network weights during training, was set to 0.0001. The image size parameter determines the resolution to which the CT images given to the model are resized; to keep the comparison fair, the input image size was standardized to 224 × 224 pixels for all architectures. Since this study includes two classes (AP and normal pancreas), the num_classes value was set to 2. The Adam optimization algorithm, which has an adaptive learning rate update mechanism, was used to optimize the models. The cross-entropy loss function, which is commonly used for classification problems, was used as the loss function.

While powerful, ViT models may struggle with overfitting and limited generalization when trained on relatively small or imbalanced medical datasets, such as pancreatic CT images. These issues are particularly relevant in medical imaging tasks, where acquiring data is difficult and class distributions may be imbalanced. In this study, MixUp was therefore applied to the dataset during the training phase. MixUp is a data augmentation technique that generates new training samples by linearly combining pairs of input images and their corresponding labels [44,45]. This approach enables the model to learn smoother decision boundaries and reduces the risk of memorization. MixUp was applied only during training, with an alpha parameter of 0.2; the validation and test datasets remained unchanged. The aim of integrating MixUp into the training pipeline is to improve the model’s ability to capture subtle differences between AP and normal pancreatic tissue.
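The MixUp operation can be sketched as follows. This is a minimal NumPy illustration rather than the training code used in the study: the function name `mixup_batch`, the use of one mixing coefficient per batch, and the pairing of samples by random permutation are assumptions. The mixing coefficient is drawn from a Beta(α, α) distribution with α = 0.2, as stated above.

```python
import numpy as np

def mixup_batch(images, one_hot_labels, alpha=0.2, rng=None):
    """Blend each sample with a randomly chosen partner from the same batch."""
    rng = np.random.default_rng() if rng is None else rng
    lam = rng.beta(alpha, alpha)             # mixing coefficient in [0, 1]
    perm = rng.permutation(len(images))      # partner index for every sample
    mixed_images = lam * images + (1.0 - lam) * images[perm]
    mixed_labels = lam * one_hot_labels + (1.0 - lam) * one_hot_labels[perm]
    return mixed_images, mixed_labels, lam

# Hypothetical batch: 4 single-channel 8 x 8 images, classes 0 = normal, 1 = AP.
rng = np.random.default_rng(0)
images = rng.random((4, 1, 8, 8))
labels = np.eye(2)[[0, 1, 0, 1]]
mixed_x, mixed_y, lam = mixup_batch(images, labels, alpha=0.2, rng=rng)
print(mixed_x.shape, mixed_y.sum(axis=1))
```

With a small α such as 0.2, the Beta distribution concentrates near 0 and 1, so most mixed samples stay close to one of the two originals and only occasionally become an even blend.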

Figure 4 shows the training loss and accuracy values obtained during the training process for the ResNet50, EfficientNet, ConvNeXtV2, Swin, and ViT models evaluated in this study.

Figure 4a shows that all of the deep learning models exhibit rapid loss reduction in the early stages of training, indicating that they can quickly learn the dataset’s fundamental features. Notably, the ViT and ConvNeXtV2 models achieved lower loss values within the initial epochs of training. This demonstrates that transformer-based and modern CNN architectures can effectively learn local and global features in image data. In the later stages, the loss values of all models converge to zero and stabilize. However, some models, particularly the Swin and ResNet50 architectures, exhibited small fluctuations at certain epochs.

Figure 4b shows that the training accuracy of all models quickly reaches high values. In later epochs, the models’ accuracy stabilized at high levels. Notably, the ViT and ConvNeXtV2 models converged faster in terms of training accuracy and exhibited more stable learning behavior. This indicates that these models have a high representation learning capacity. Although the EfficientNet and ResNet50 models achieved high accuracy, their convergence speeds were relatively slower compared to transformer-based models. Overall, all models successfully converged during training and effectively learned the distinctive features in the dataset.

In this study, several key metrics were used to evaluate the classification performance of the deep learning models: Accuracy, Precision, Recall, and F1-score, given in Equations (1)–(4). These metrics are commonly used in binary classification problems and are based on the true positives (TPs), true negatives (TNs), false positives (FPs), and false negatives (FNs) in the confusion matrix. Accuracy is the proportion of all samples that the model classifies correctly and measures its overall success. Precision is the proportion of samples predicted as positive that are actually positive. Recall is the proportion of actual positive samples that the model correctly identifies, and it is particularly important when false negatives (FNs) are critical. The F1-score is the harmonic mean of Precision and Recall and is preferred for imbalanced datasets.
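For reference, the standard definitions of these four metrics in terms of TP, TN, FP, and FN are reproduced below. They are assumed to correspond to Equations (1)–(4), with the equation numbering following the order in which the metrics are listed in the text.

$$
\mathrm{Accuracy} = \frac{TP + TN}{TP + TN + FP + FN} \tag{1}
$$

$$
\mathrm{Precision} = \frac{TP}{TP + FP} \tag{2}
$$

$$
\mathrm{Recall} = \frac{TP}{TP + FN} \tag{3}
$$

$$
\mathrm{F1\text{-}score} = \frac{2 \cdot \mathrm{Precision} \cdot \mathrm{Recall}}{\mathrm{Precision} + \mathrm{Recall}} = \frac{2\,TP}{2\,TP + FP + FN} \tag{4}
$$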

Figure 5 shows the patient-level confusion matrices of the ResNet50, EfficientNet, ConvNeXtV2, Swin, and ViT models on the test set. The analysis evaluates the models’ ability to distinguish between the AP and normal classes using clinically significant performance metrics. The ResNet50 model correctly classified 10 out of 16 patients in the AP group and incorrectly classified 6 patients as normal. It correctly classified 19 out of 21 normal patients, while incorrectly classifying 2 as AP. These results suggest that the ResNet50 model is more effective in identifying normal patients, though it has relatively lower sensitivity in detecting AP cases. The EfficientNet model correctly classified 11 patients in the AP group and incorrectly classified 5 patients. In the normal class, it achieved 17 correct classifications and 4 incorrect ones. The EfficientNet model thus showed relatively balanced performance across the two classes but limited overall classification accuracy. The ConvNeXtV2 model achieved 12 correct and 4 incorrect classifications in the AP class and 18 correct and 3 incorrect classifications in the normal class. These results demonstrate that the ConvNeXtV2 model has a more balanced accuracy rate for both the AP and normal classes. Specifically, the lower number of incorrect classifications compared to the other CNN-based models indicates that the ConvNeXtV2 architecture has stronger feature representation capabilities. The Swin model obtained 10 correct and 6 incorrect classifications in the AP group and 19 correct and 2 incorrect classifications in the normal group. While the Swin model performed well in identifying the normal class, it struggled to detect AP cases. This suggests that the model tends to misclassify pathological cases as normal. The ViT model, on the other hand, showed the best classification performance of all the models. It correctly classified 13 out of 16 patients in the AP class and incorrectly classified only 3 patients. In the normal class, the ViT model correctly classified 20 out of 21 patients, misclassifying only 1. These results show that the ViT model has the highest sensitivity and specificity in distinguishing between the AP and normal classes.

The metric-based results presented in Table 3 show the performance of the deep learning models in classifying AP and normal pancreas on the patient-level test set. Compared to the other models, the ViT model achieved the highest results in all performance metrics, with a Precision of 92.86%, a Recall of 81.25%, an F1-score of 86.67%, and an Accuracy of 89.19%. The high Precision value indicates that the vast majority of patients classified as AP by the model actually had AP. Similarly, the high Recall value shows that the ViT model is more successful than the other models at identifying AP patients. The high F1-score indicates that the model achieves a good balance between Precision and Recall. These results suggest that, thanks to its self-attention mechanism, the ViT architecture can learn pathological changes in pancreatic tissue more effectively and possesses stronger representational abilities. The ConvNeXtV2 model demonstrated the highest performance among the remaining models, achieving 81.08% Accuracy and a 77.42% F1-score. Its 75.00% Recall shows that it is more successful at detecting AP patients than the EfficientNet, ResNet50, and Swin models. This suggests that the ConvNeXtV2 architecture can perform more powerful feature extraction due to its modern CNN design.
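The reported patient-level metrics can be checked directly against the confusion-matrix counts given in the text, treating AP as the positive class. The short sketch below does this for the ViT and ConvNeXtV2 models; the function name `binary_metrics` is only illustrative.

```python
def binary_metrics(tp, fp, fn, tn):
    """Compute Precision, Recall, F1-score, and Accuracy from confusion counts."""
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    f1 = 2 * precision * recall / (precision + recall)
    accuracy = (tp + tn) / (tp + fp + fn + tn)
    return {"precision": precision, "recall": recall, "f1": f1, "accuracy": accuracy}

# Counts from the text: ViT 13/16 AP and 20/21 normal correct;
# ConvNeXtV2 12/16 AP and 18/21 normal correct.
vit = binary_metrics(tp=13, fp=1, fn=3, tn=20)
convnext = binary_metrics(tp=12, fp=3, fn=4, tn=18)

for name, m in (("ViT", vit), ("ConvNeXtV2", convnext)):
    print(name, {k: round(100 * v, 2) for k, v in m.items()})
# ViT        -> precision 92.86, recall 81.25, f1 86.67, accuracy 89.19
# ConvNeXtV2 -> precision 80.0,  recall 75.0,  f1 77.42, accuracy 81.08
```

The computed values agree with the Precision, Recall, F1-score, and Accuracy figures quoted for these two models.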

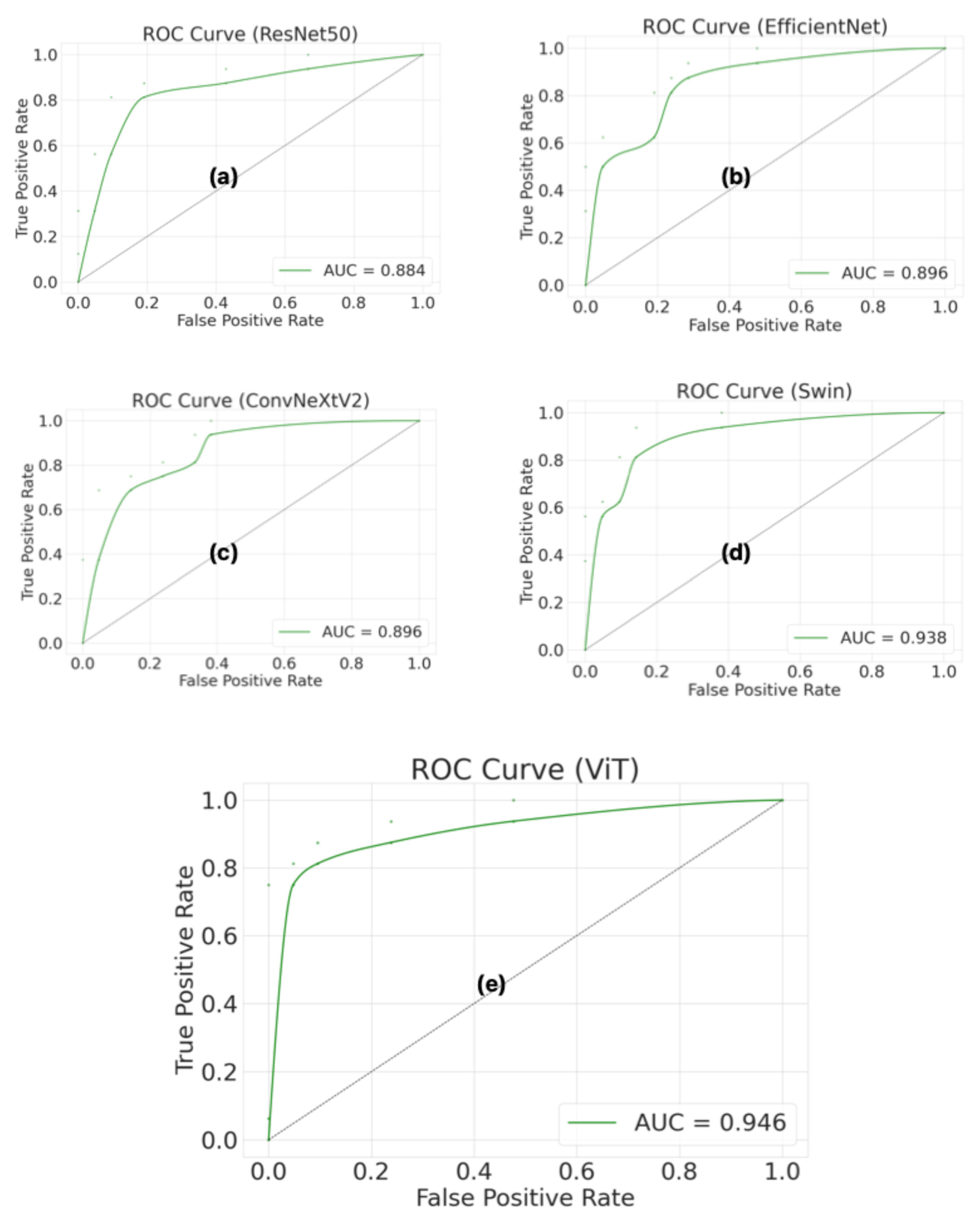

Figure 6 shows the receiver operating characteristic (ROC) curves and area under the curve (AUC) values for the ResNet50, EfficientNet, ConvNeXtV2, Swin, and ViT models, which were evaluated using the patient-level test set. The ROC curve and the AUC were used to evaluate each model’s ability to discriminate across different classification thresholds. The ViT model achieved the highest AUC value (0.946). Its ROC curve is closest to the upper left corner, indicating that it performs better than the other models in distinguishing between the AP and normal pancreas classes. Furthermore, the high AUC value shows that the ViT model can provide high sensitivity and a low false positive rate at different thresholds. The Swin model showed the closest performance to the ViT model, with an AUC of 0.938. Its ROC curve lies far from the diagonal line, indicating strong classification performance. The ConvNeXtV2 and EfficientNet models showed similar performance, each with an AUC of approximately 0.896. The ResNet50 model showed the lowest performance, with an AUC of 0.884. Although the Swin model has the same confusion matrix as ResNet50, its higher AUC indicates stronger overall classification performance. This suggests that the Swin model provides more consistent and robust discrimination across all thresholds, not just at a single threshold.
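The point that two models can share a confusion matrix at one threshold and still differ in AUC can be illustrated with a small sketch. The AUC is computed here as the probability that a randomly chosen AP patient receives a higher score than a randomly chosen normal patient (ties count as one half). All scores below are hypothetical, and the idea of a single patient-level score (for example, the fraction of slices predicted as AP) is an assumption; the text does not specify how patient-level scores were derived.

```python
def roc_auc(labels, scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))

def predictions(scores, threshold=0.5):
    return [1 if s >= threshold else 0 for s in scores]

# Hypothetical patient-level scores (1 = AP, 0 = normal) for two models.
labels = [1, 1, 1, 0, 0, 0]
scores_a = [0.9, 0.8, 0.4, 0.5, 0.2, 0.1]
scores_b = [0.9, 0.8, 0.3, 0.6, 0.45, 0.35]

auc_a = roc_auc(labels, scores_a)
auc_b = roc_auc(labels, scores_b)
print(predictions(scores_a) == predictions(scores_b), auc_a, auc_b)
```

Both hypothetical models make identical decisions at a threshold of 0.5, yet model A ranks 8 of the 9 positive-negative pairs correctly while model B ranks only 6 of them correctly.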

In this study, a data augmentation strategy was employed to mitigate the impact of the dataset’s limited size on model performance and to enhance generalization ability. To this end, the dataset was expanded to approximately three times its original size by applying ±20° rotation and ±20% vertical shift to the images in the training and test datasets. The results presented in Table 4 reveal that transformer-based models in particular benefited significantly from this process. The ViT model achieved the highest sensitivity, with a Recall of 90.90%, making it the most successful model for accurately detecting AP cases. From a clinical perspective, a high Recall value is crucial for minimizing false negatives. The Swin model also demonstrated balanced performance, with an Accuracy of 85.14% and an F1-score of 82.16%. Overall, data augmentation increased the robustness of the models against spatial variations and reduced the risk of overfitting.

The majority of deep learning studies based on pancreatic CT images focus on detecting and segmenting pancreatic tumors, as well as determining pancreatic necrosis and disease severity [46,47,48,49]. However, studies focused on classifying normal pancreas and AP are quite limited [20,50]. Of the existing studies, most use a slice-level classification approach [20]. In this study, we also evaluated the performance of the deep learning models at the slice level. According to the results presented in Table 5, the Swin model achieved the best classification performance, with a Precision of 93.78%, a Recall of 72.10%, an F1-score of 81.52%, and an Accuracy of 84.06%. In contrast, the ViT model showed balanced performance, achieving an Accuracy of 83.75% and an F1-score of 82.90% in the slice-level evaluation. Among the CNN-based models, ResNet50, EfficientNet, and ConvNeXtV2 showed similar performance values. However, although slice-level classification methods can provide high accuracy values from a technical standpoint, they have limitations in clinical applications. In clinical practice, diagnostic and treatment decisions are made on a patient-by-patient basis, not on a slice-by-slice basis. Slices containing the pancreas constitute approximately 20% of abdominal CT scans [51,52]. Therefore, slice-level evaluation methods may be affected by slices that do not contain the pancreas. This may limit the direct applicability of model performance in a clinical setting. Thus, high slice-level accuracy does not guarantee the model’s success in the clinical diagnostic process.

4. Discussion

In this study, CNN-based and transformer-based deep learning architectures were compared for the automatic classification of AP and normal pancreas from contrast-enhanced CT images, and the patient-level classification performance of the ViT architecture was evaluated in particular. The results revealed that the ViT model achieved higher Accuracy (89.19%), F1-score (86.67%), and area under the curve (AUC, 0.946) than the other CNN-based and transformer-based models. These results suggest that the ViT architecture has a stronger representational capacity for distinguishing pathological changes in pancreatic CT images.

In this study, a Wilcoxon signed-rank test was applied to evaluate the statistical significance of the performance difference between the proposed ViT model and the other state-of-the-art models in classifying AP and normal pancreas from CT scans. The test was performed on the per-patient classification outcomes for the test set (37 patients; correct = 1, incorrect = 0). The significance level was set at α = 0.05. As shown in Table 6, the Wilcoxon signed-rank test results revealed that the ViT model performed statistically significantly better than the EfficientNet and Swin Transformer models (p = 0.031). However, the difference in performance between the ViT model and the ConvNeXtV2 (p = 0.109) and ResNet50 (p = 0.068) models was not statistically significant. These results suggest that the ViT architecture provides better and more reliable performance in classifying pancreatic CT images, plausibly due to its ability to model global contextual features, although the advantage did not reach statistical significance against every comparator.
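A paired comparison on binary per-patient outcomes can be sketched as below. When outcomes are coded 0/1, every nonzero paired difference has the same magnitude, so the signed-rank statistic depends only on how many discordant patients favor each model, and the exact test reduces to a two-sided sign test on the discordant pairs. The sketch implements that exact form with hypothetical outcome vectors; it does not reproduce Table 6, and statistical packages that use a normal approximation or continuity correction will generally return somewhat different p-values.

```python
from math import comb

def exact_paired_sign_test(correct_a, correct_b):
    """Two-sided exact test on discordant pairs of 0/1 outcomes."""
    a_only = sum(1 for a, b in zip(correct_a, correct_b) if a == 1 and b == 0)
    b_only = sum(1 for a, b in zip(correct_a, correct_b) if a == 0 and b == 1)
    n = a_only + b_only                      # concordant pairs carry no information
    if n == 0:
        return a_only, b_only, 1.0
    k = min(a_only, b_only)
    tail = sum(comb(n, i) for i in range(k + 1)) / 2 ** n
    return a_only, b_only, min(1.0, 2.0 * tail)

# Hypothetical outcomes for 37 patients: 26 both correct, 7 only model A correct,
# 1 only model B correct, 3 both incorrect.
correct_a = [1] * 26 + [1] * 7 + [0] * 1 + [0] * 3
correct_b = [1] * 26 + [0] * 7 + [1] * 1 + [0] * 3

a_only, b_only, p_value = exact_paired_sign_test(correct_a, correct_b)
print(a_only, b_only, p_value)
```

In this hypothetical case the p-value is 18/256 ≈ 0.070, which illustrates how few discordant patients are available in a 37-patient test set and why several pairwise comparisons can remain above the 0.05 level despite visible accuracy differences.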

In this study, 95% confidence intervals (CI) for the classification accuracy of the deep learning models were calculated using bootstrap analysis, as shown in Table 7. The ViT model achieved an accuracy of 89.19% (95% CI = 75.3–96.4%), which is higher than that of all other models. Furthermore, odds ratios between the ViT model and the other models were calculated as an effect size analysis. The odds ratios indicate that the performance advantage of the ViT model over the other methods is clinically meaningful.
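A percentile bootstrap for patient-level accuracy can be sketched as follows, using the 33 correct and 4 incorrect ViT decisions reported for the 37 test patients. The number of resamples, the seed, and the percentile method are assumptions for illustration; the text does not state which bootstrap variant was used, so the resulting interval is not expected to match Table 7 exactly.

```python
import random

def bootstrap_accuracy_ci(correct_flags, n_resamples=10000, confidence=0.95, seed=0):
    """Percentile bootstrap CI for accuracy from per-patient 0/1 outcomes."""
    rng = random.Random(seed)
    n = len(correct_flags)
    accuracies = sorted(
        sum(rng.choices(correct_flags, k=n)) / n   # resample patients with replacement
        for _ in range(n_resamples)
    )
    tail = (1.0 - confidence) / 2.0
    lower = accuracies[int(tail * n_resamples)]
    upper = accuracies[min(n_resamples - 1, int((1.0 - tail) * n_resamples))]
    return lower, upper

# ViT patient-level outcomes from the text: 33 of 37 patients classified correctly.
vit_flags = [1] * 33 + [0] * 4
ci_lower, ci_upper = bootstrap_accuracy_ci(vit_flags)
print(round(100 * ci_lower, 1), round(100 * ci_upper, 1))
```

Because only 37 patients are resampled, the interval is wide and takes values on a coarse grid of multiples of 1/37, which is worth keeping in mind when comparing accuracies between models.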

Overall, the enhanced performance of the transformer-based ViT architecture can be explained by the global contextual feature learning capability provided by its self-attention mechanism. Unlike CNN-based architectures, which generally focus on local feature extraction, transformer architectures can model long-range relationships between different regions within an image. This capability may enable more accurate learning of complex pathological findings, such as inflammation, edema, and changes in tissue density in pancreatic tissue. The ROC analysis results also support this, with the ViT model having the highest AUC value, which indicates stronger discriminative performance across different threshold values. These results suggest that the ViT architecture is a powerful alternative for solving medical image classification problems and can be used as an effective decision support system for the automated diagnosis of critical diseases, such as acute pancreatitis.

Figure 7 shows sample CT slices of patients who were misclassified by the ViT-based, patient-level classification approach. In Figure 7a, a patient with normal pancreatic tissue was classified as having AP by the proposed model. This may be due to the model confusing parenchymal density heterogeneity, which is particularly prevalent in the body and tail regions of the pancreas, with inflammatory changes. By contrast, in Figure 7b–d, patients diagnosed with AP were classified as normal. In these false-negative cases, the expected heterogeneity in the pancreatic parenchyma was not sufficiently prominent because the images were acquired in the venous phase. In addition, the presence of free air around the liver and gallbladder alongside pancreatitis findings in some sections is noteworthy and is considered potentially related to perforation of a hollow viscus. Such complex, concomitant pathologies can make it difficult for the model to distinguish features, leading to misclassifications. Overall, these findings suggest that the model has difficulty with borderline cases, situations involving low contrast differences, and cases with concomitant abdominal pathologies. Such cases are also clinically challenging from a diagnostic perspective.

In this study, normal pancreas and AP were classified primarily at the patient level by evaluating CT images containing the pancreas. A patient-level data split was employed to prevent data leakage between the training and test sets. The patient-level decision was obtained by aggregating slice-level predictions, which reduces the impact of misclassified individual slices and provides a more stable, clinically meaningful decision. Training and evaluating on individual slices also significantly increases the number of training and test samples, despite the limited number of patients. Although the number of patients with AP may appear limited for patient-level classification, several factors support the reliability of the proposed classification framework. Each patient contributes a large number of CT slices, enabling the model to recognize a variety of anatomical and pathological patterns. Furthermore, the dataset includes patients with varying degrees of AP and different clinical presentations, and this heterogeneity enhances the robustness of the model. To mitigate potential limitations arising from the size of the dataset, data augmentation techniques were also applied during training to improve generalization and reduce overfitting.

The clinical applicability of AI systems in medical imaging goes beyond model performance and depends on several critical factors, including data quality, generalizability and integration into clinical workflows. While deep learning models, particularly transformer-based architectures, have demonstrated encouraging outcomes in medical image analysis, their implementation in the real world remains challenging. One important aspect is data quality and representativeness. In this study, the dataset was collected from a single center and reflects real-world clinical conditions, including variations in imaging protocols and patient characteristics. Furthermore, translating AI models into clinical practice requires robust validation across multicenter datasets, standardized imaging protocols and prospective evaluation. The variability encountered in routine clinical settings may not be fully captured by models trained on retrospective datasets. Another key consideration is interpretability and clinical acceptability. While the proposed model shows promise, its adoption in clinical workflows would require explainability mechanisms and integration with radiological decision-making processes.