Object or Background: An Interpretable Deep Learning Model for COVID-19 Detection from CT-Scan Images

Abstract

1. Introduction

2. Materials and Methods

2.1. Related Work

2.2. Data

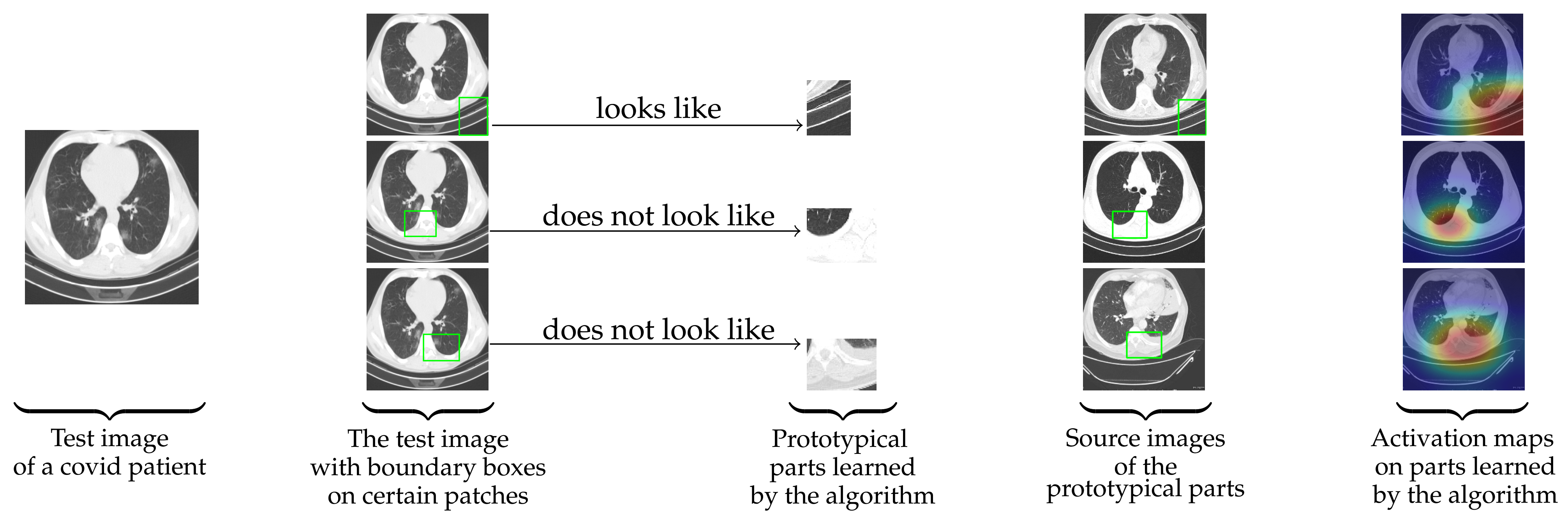

2.3. Working Principle and Novelty of Ps-ProtoPNet

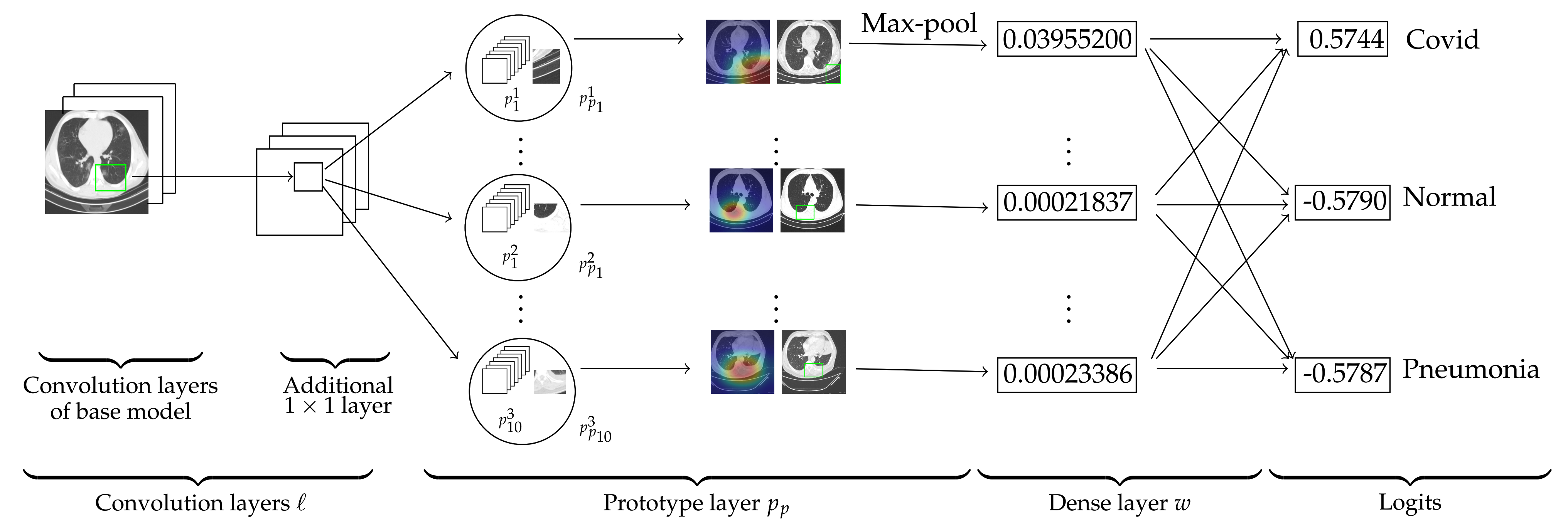

2.4. Ps-ProtoPNet Architecture

2.5. The Training of Ps-ProtoPNet

2.5.1. Optimization of All Layers before the Dense Layer

2.5.2. Push of Prototypical Parts

2.6. Explanation of Ps-ProtoPNet with an Example

3. Results

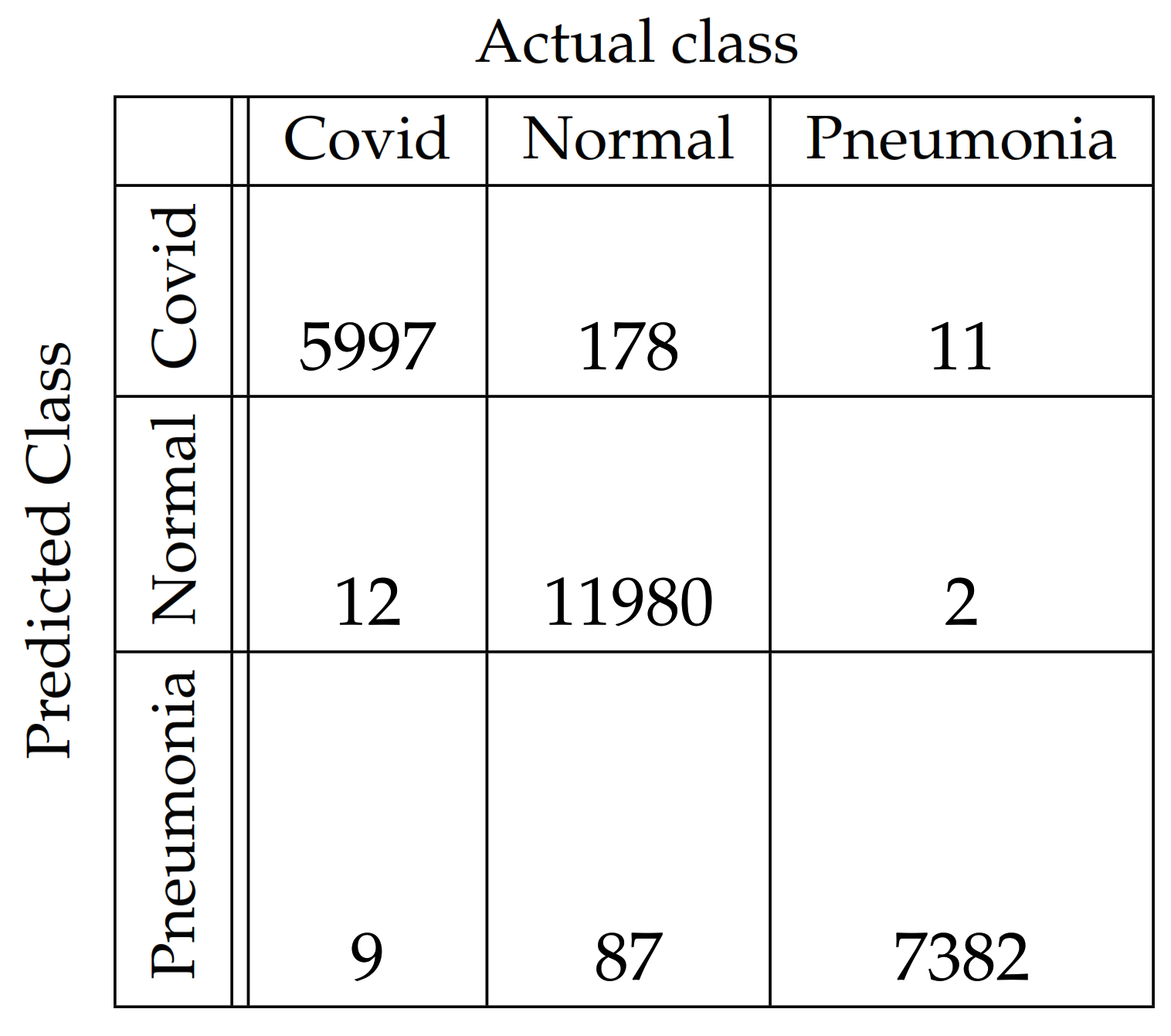

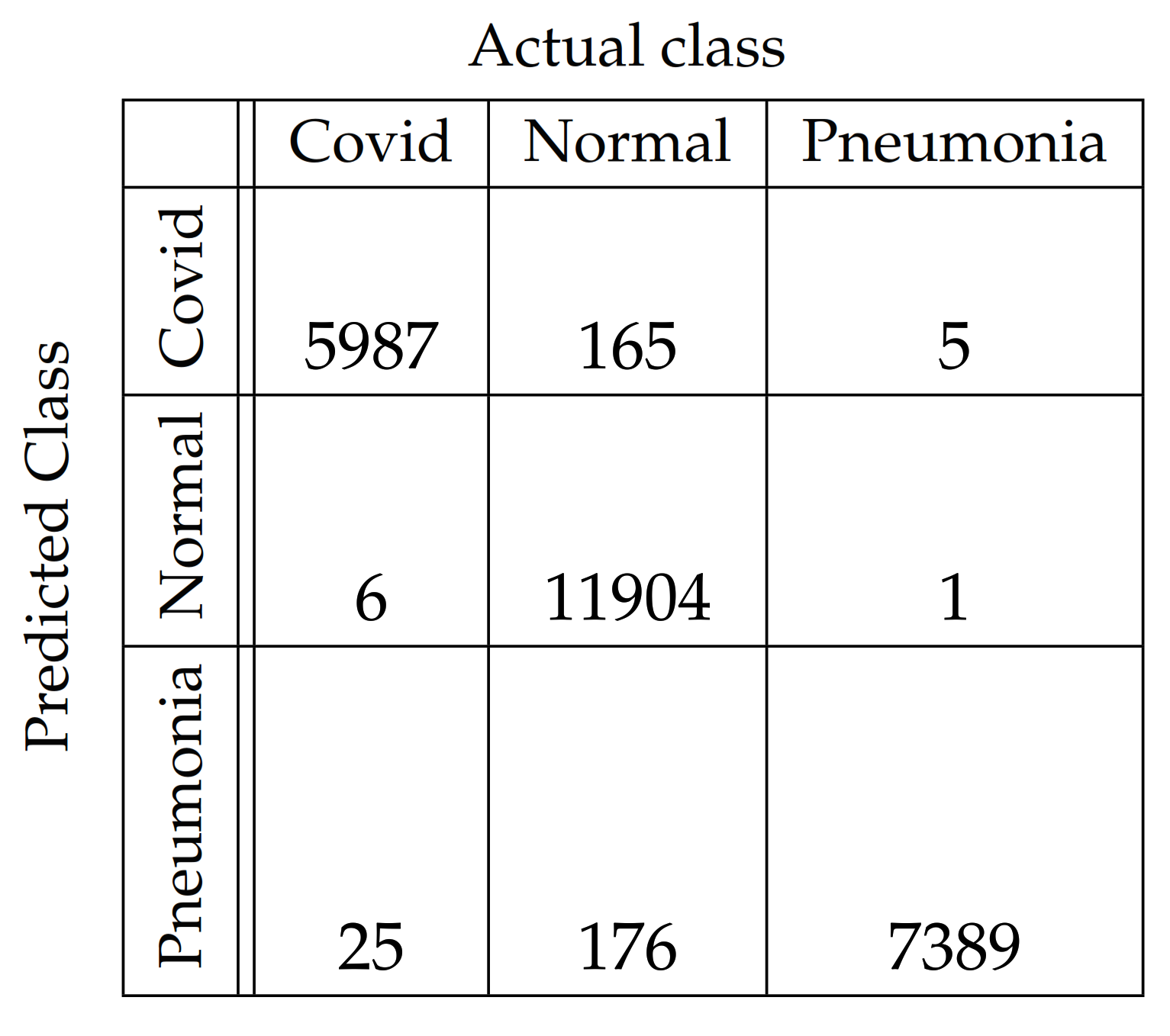

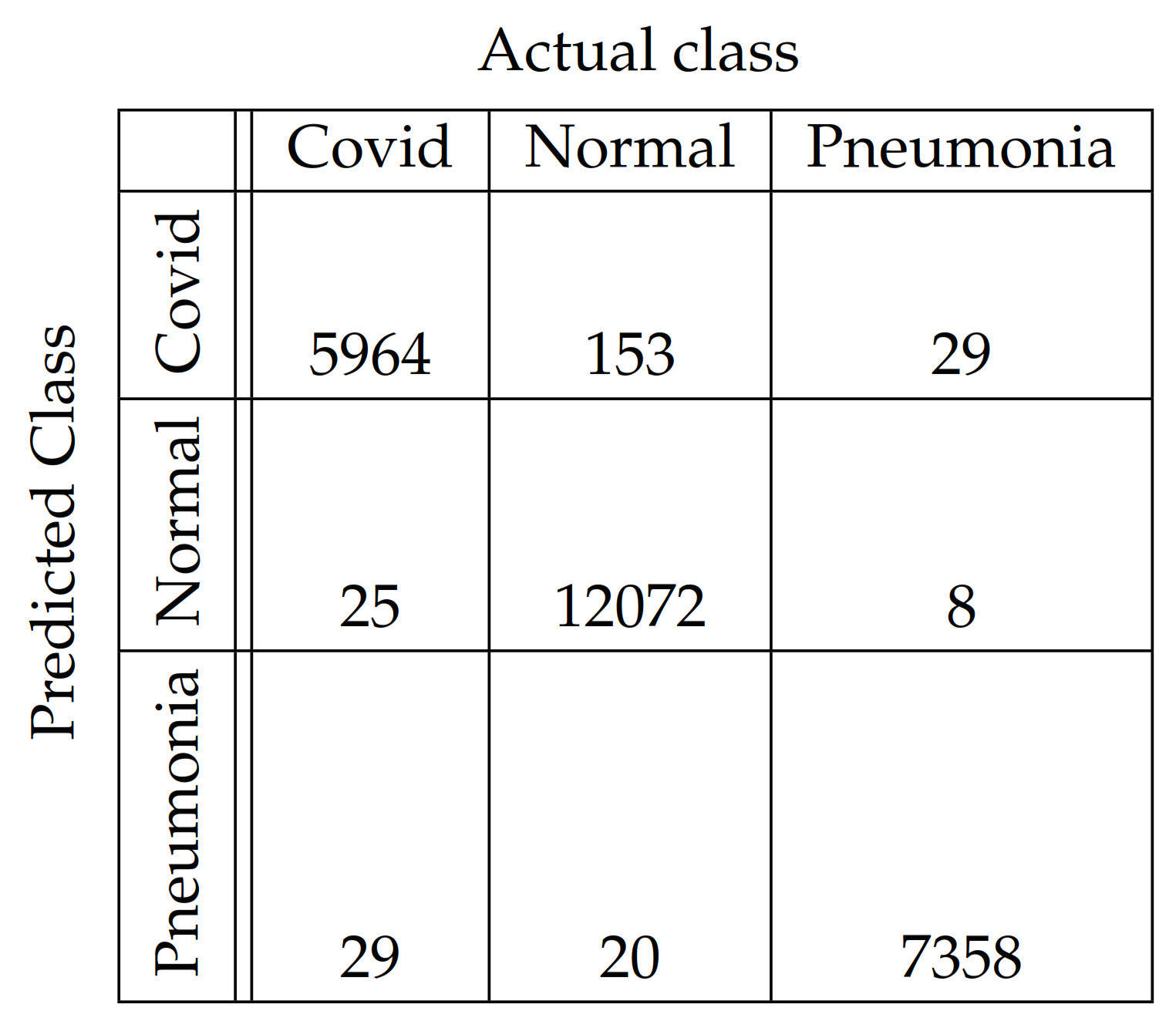

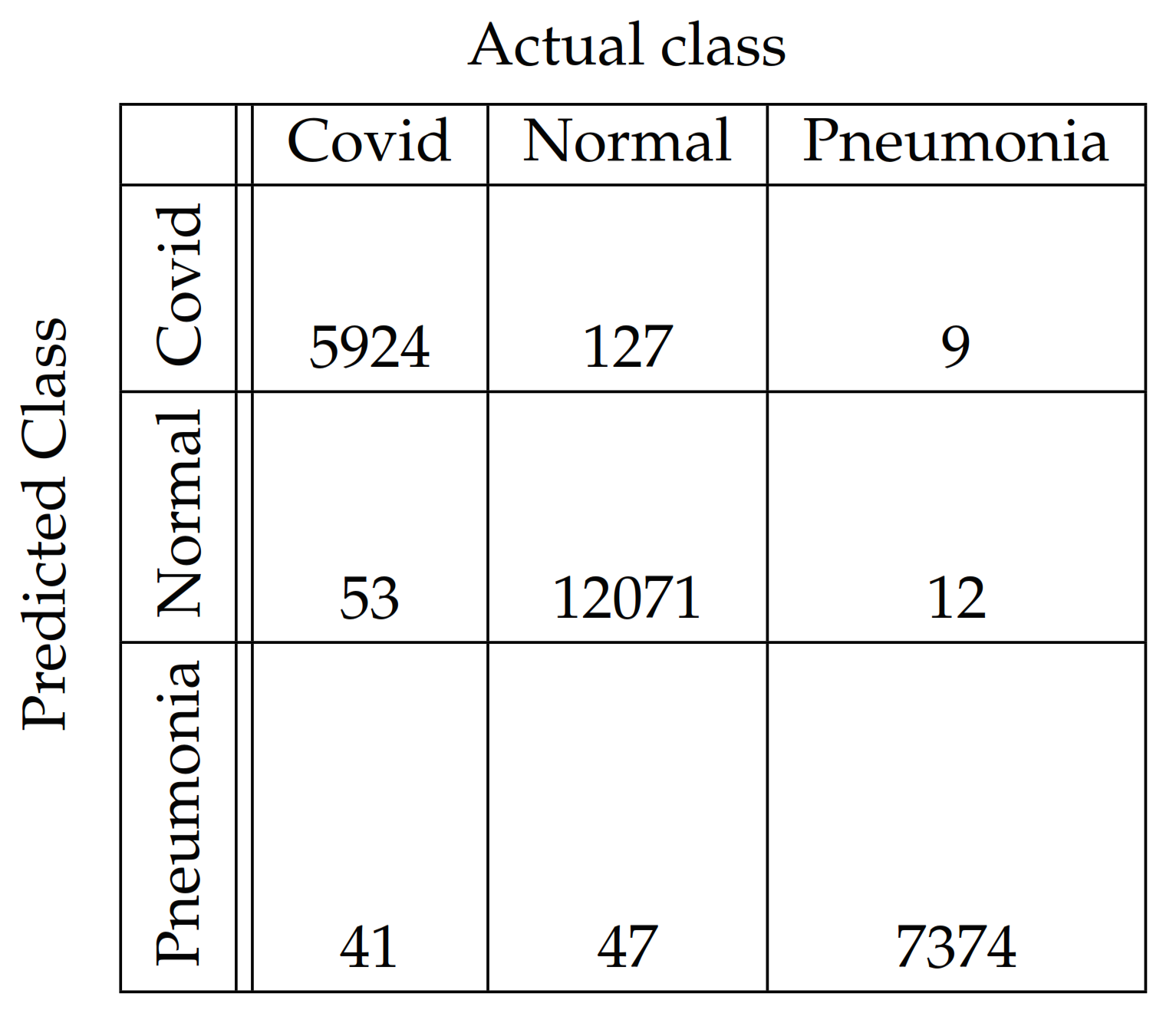

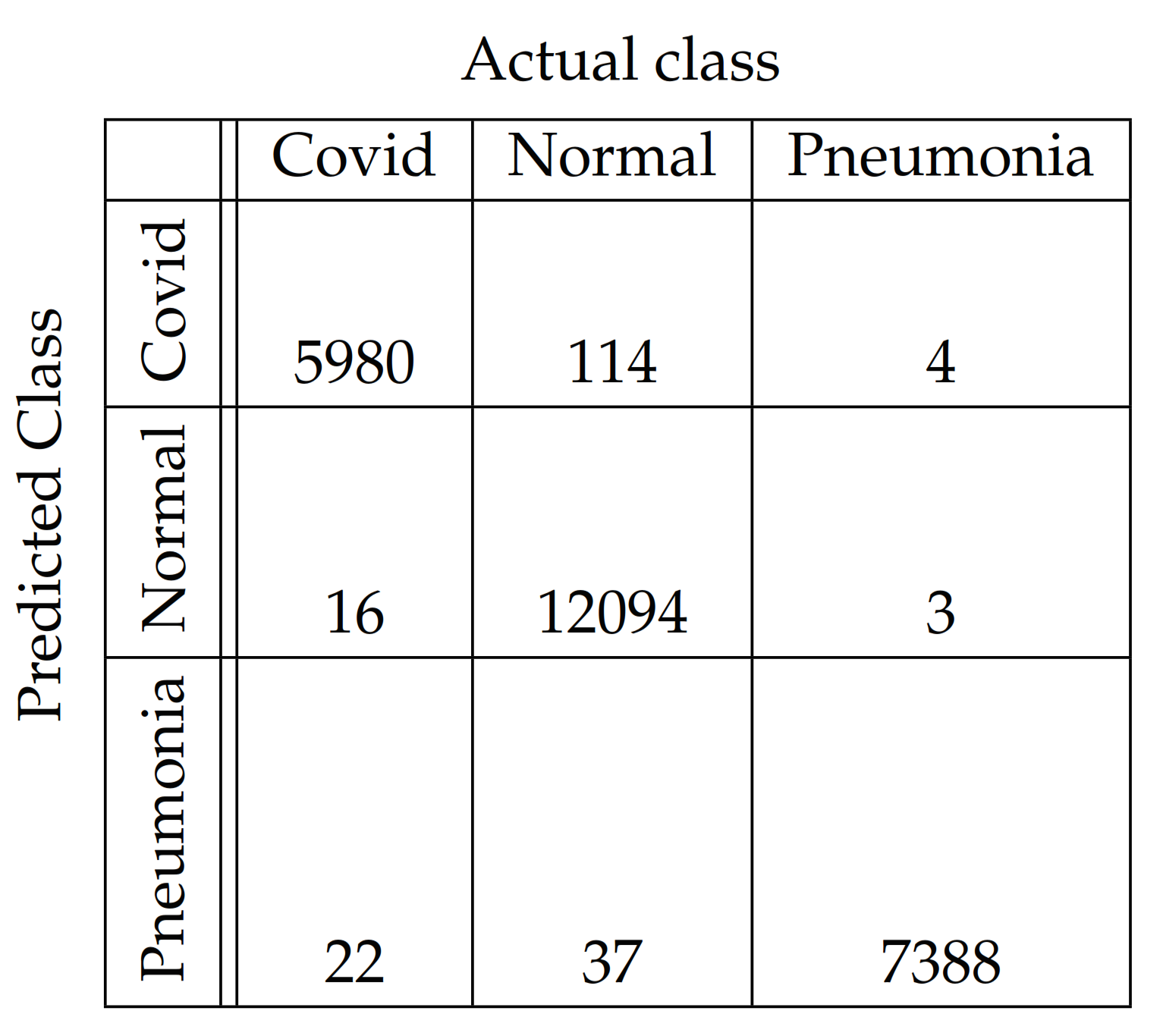

3.1. The Metrics and Confusion Matrices

3.2. The Performance Comparison of the Models

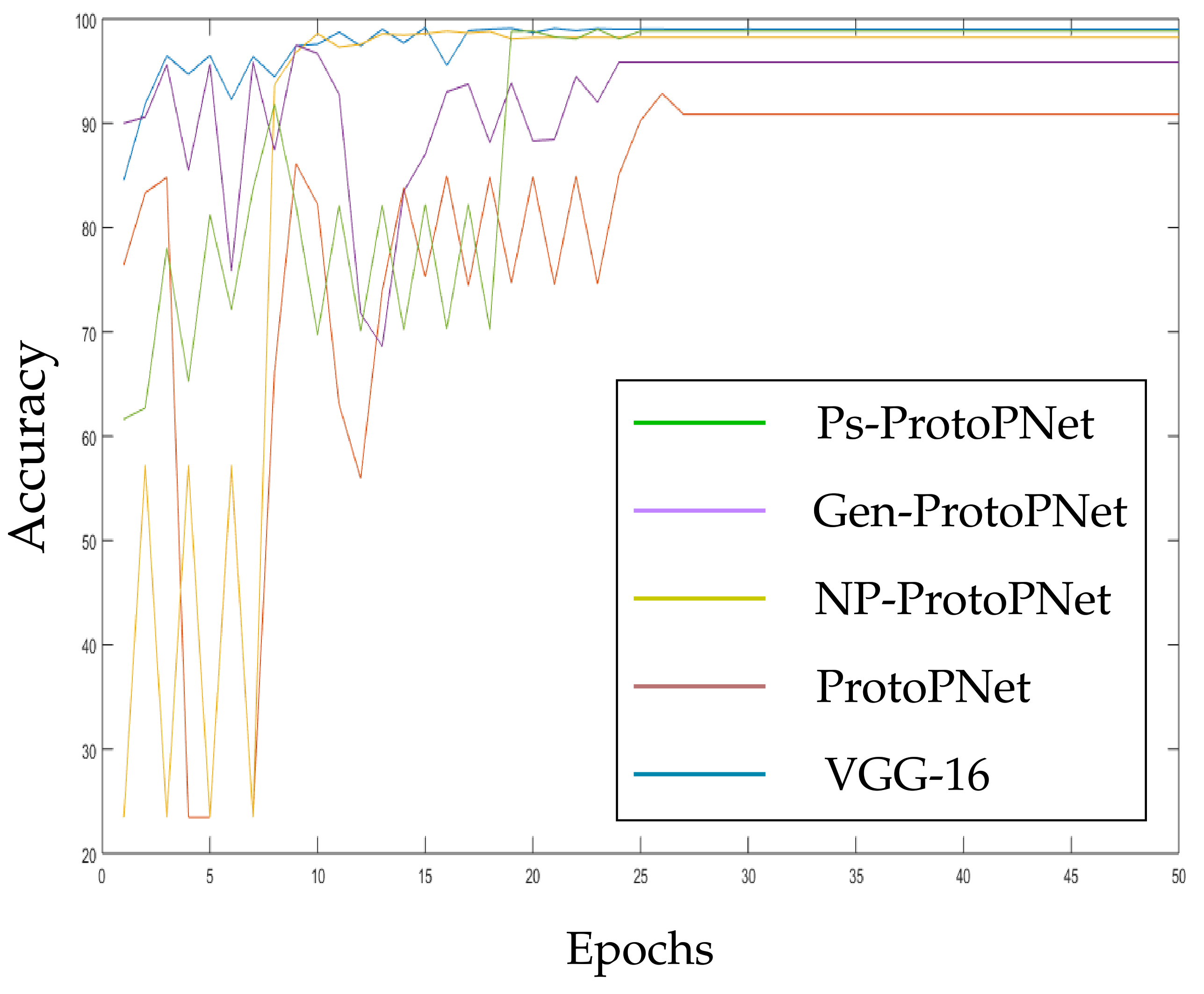

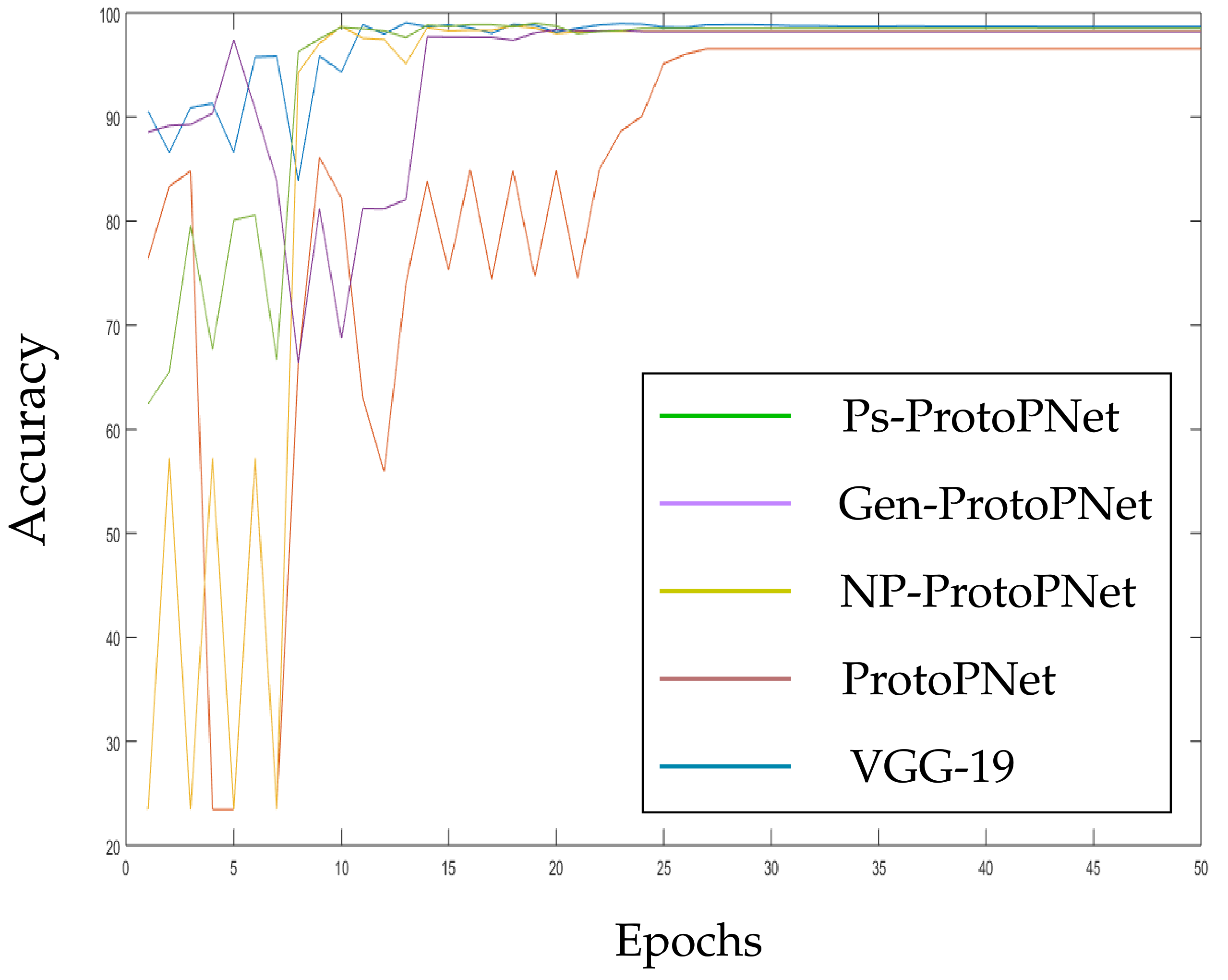

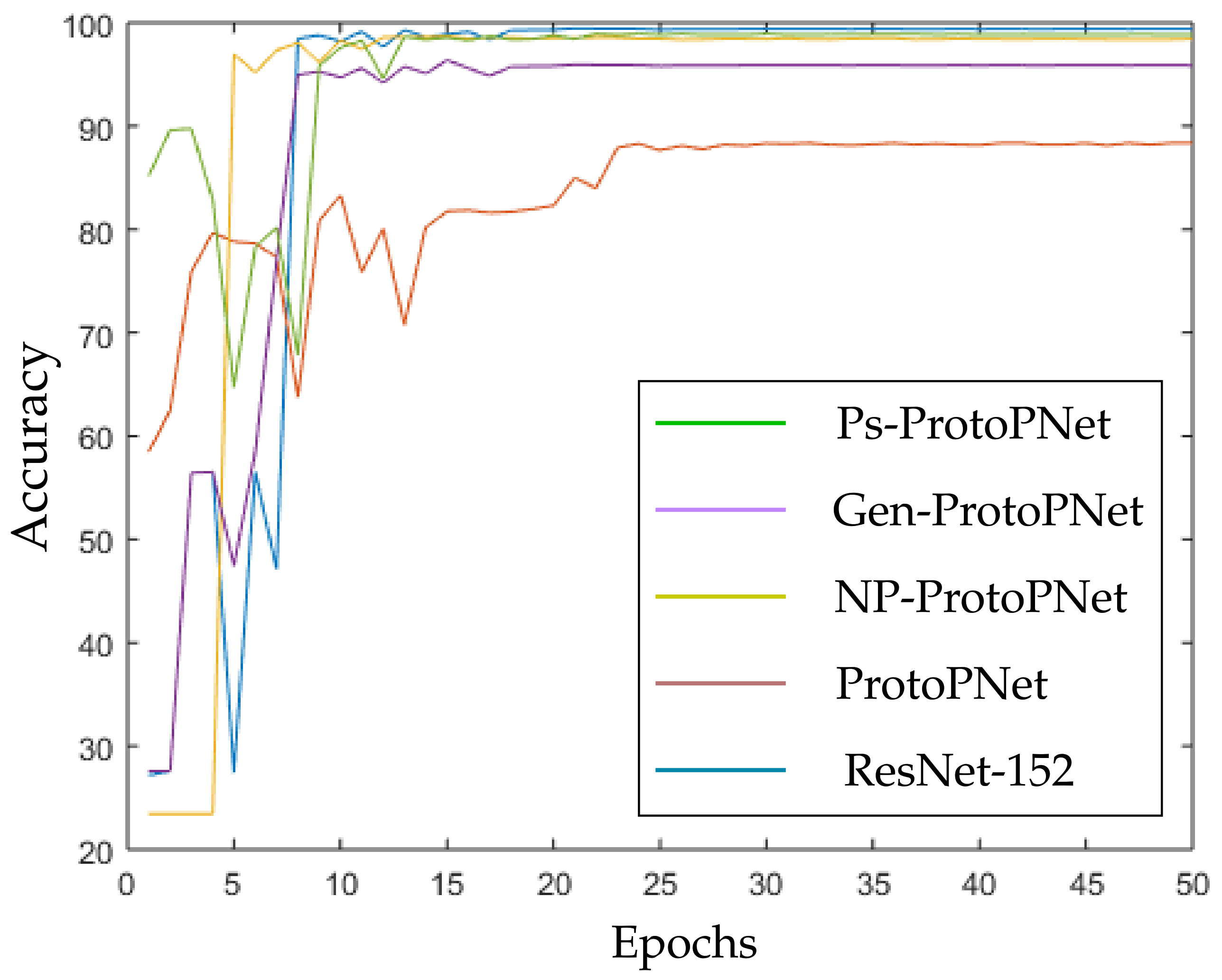

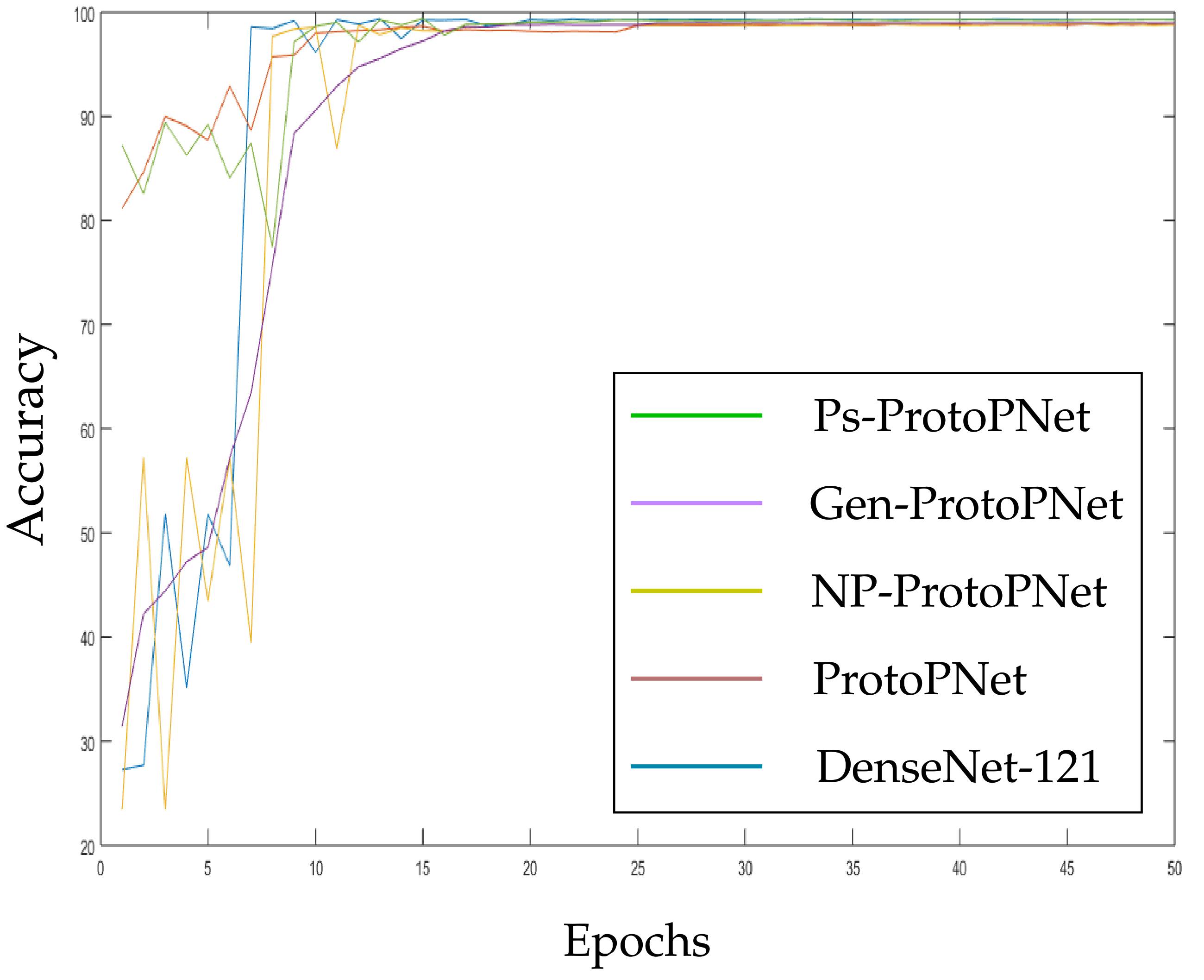

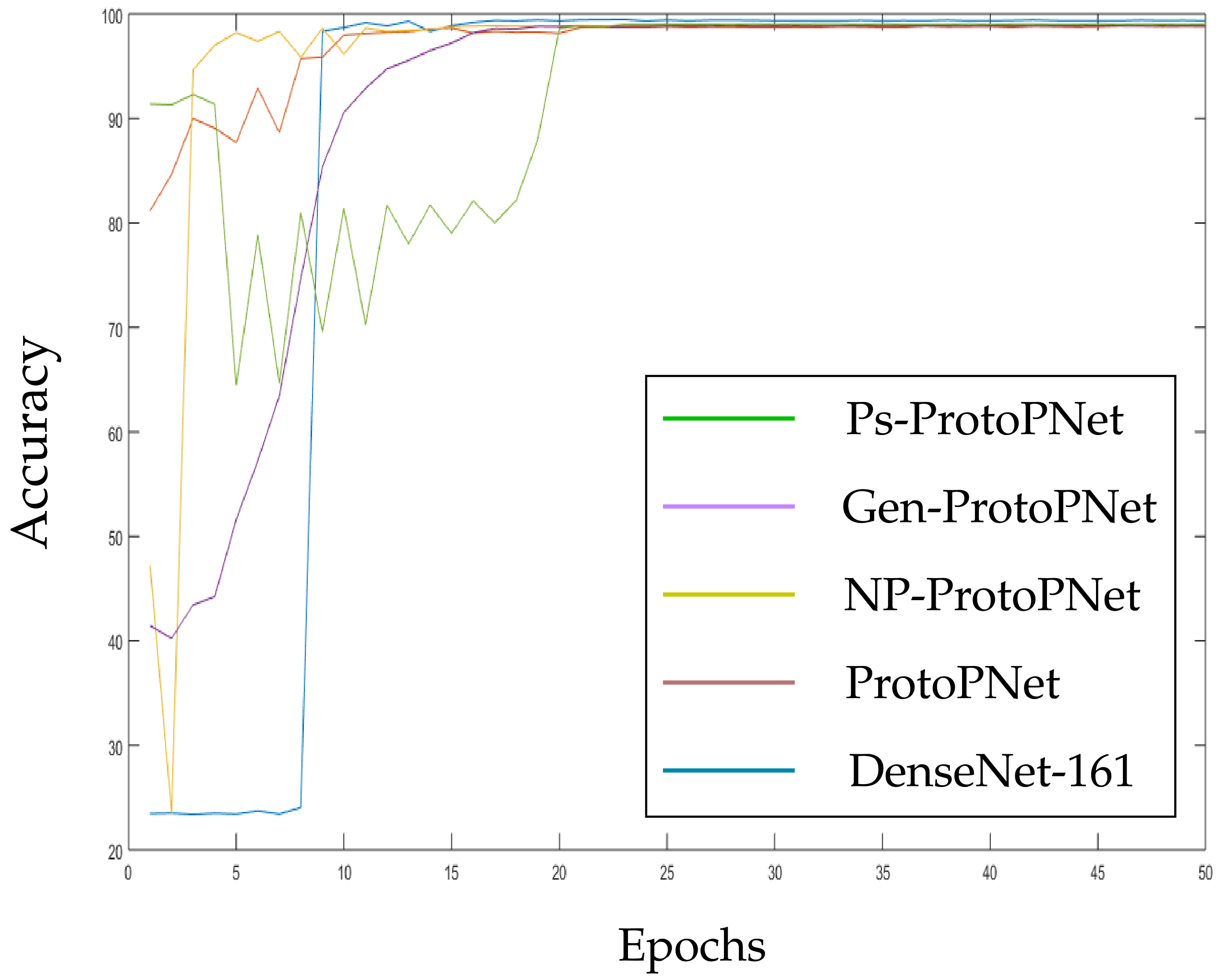

3.3. The Graphical Comparison of the Accuracies

3.4. The Test of Hypothesis for the Accuracies

3.5. The Impact of Change in the Hyperparameters of the Last Layer

- A1: …;
- A2: there exists some δ with … such that:
  - A2a: for all incorrect classes … and …, we have …, where ϵ is given by … and …;
  - A2b: for all …, we have …

4. Discussion

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Wikipedia. Variants of SARS-CoV-2. Available online: https://en.wikipedia.org/wiki/Variants_of_SARS-CoV-2#Variants_of_Interest_(WHO) (accessed on 30 June 2021).

- Wikipedia. COVID-19 Testing. Available online: https://en.wikipedia.org/wiki/COVID-19_testing (accessed on 24 August 2021).

- Al-Waisy, A.S.; Mohammed, M.A.; Al-Fahdawi, S.; Maashi, M.S.; Garcia-Zapirain, B.; Abdulkareem, K.H.; Mostafa, S.A.; Kumar, N.M.; Le, D.-N. COVID-DeepNet: Hybrid Multimodal Deep Learning System for Improving COVID-19 Pneumonia Detection in Chest X-ray Images. Comput. Mater. Contin. 2021, 67, 2409–2429.

- Al-Waisy, A.S.; Al-Fahdawi, S.; Mohammed, M.A.; Abdulkareem, K.H.; Mostafa, S.A.; Maashi, M.S.; Arif, M.; Garcia-Zapirain, B. COVID-CheXNet: Hybrid deep learning framework for identifying COVID-19 virus in chest X-rays images. Soft Comput. 2020, 1–16.

- Azemin, M.Z.C.; Hassan, R.; Tamrin, M.I.M.; Ali, M.A.M. COVID-19 Deep Learning Prediction Model Using Publicly Available Radiologist-Adjudicated Chest X-Ray Images as Training Data: Preliminary Findings. Hindawi Int. J. Biomed. Imaging 2020, 2020, 8828855.

- Chaudhary, Y.; Mehta, M.; Sharma, R.; Gupta, D.; Khanna, A.; Rodrigues, J.J.P.C. Efficient-CovidNet: Deep Learning Based COVID-19 Detection From Chest X-Ray Images. In Proceedings of the 2020 IEEE 22nd International Conference on e-Health Networking, Applications and Services, Shenzhen, China, 1–2 March 2020.

- Cohen, J.P.; Dao, L.; Roth, K.; Morrison, P.; Bengio, Y.; Abbasi, A.; Shen, B.; Mahsa, H.; Ghassemi, M.; Li, H. Predicting COVID-19 Pneumonia Severity on Chest X-ray With Deep Learning. Cureus 2020.

- Gunraj, H.; Wang, L.; Wong, A. COVIDNet-CT: A Tailored Deep Convolutional Neural Network Design for Detection of COVID-19 Cases From Chest CT Images. Front. Med. 2020, 7, 1025.

- Jain, G.; Mittal, D.; Thakur, D.; Mittal, M. A deep learning approach to detect COVID-19 coronavirus with X-Ray images. Biocybern. Biomed. Eng. 2020, 40, 1391–1405.

- Jain, R.; Gupta, M.; Taneja, S.; Hemanth, D.J. Deep learning based detection and analysis of COVID-19 on chest X-ray images. Appl. Intell. 2021.

- Kumar, R.; Arora, R.; Bansal, V.; Sahayasheela, V.; Buckchash, H.; Imran, J.; Narayanan, N.; Pandian, G.N.; Raman, B. Accurate Prediction of COVID-19 using Chest X-Ray Images through Deep Feature Learning model with SMOTE and Machine Learning Classifiers. medRxiv 2020.

- Ozturk, T.; Talo, M.; Yildirim, E.A.; Baloglu, U.B.; Yildirim, O.; Acharya, U.R. Automated detection of COVID-19 cases using deep neural networks with X-ray images. Comput. Biol. Med. 2020, 121, 103792.

- Reddy, G.T.; Bhattacharya, S.; Ramakrishnan, S.S.; Chowdhary, C.L.; Hakak, S.; Kaluri, R.; Reddy, M.P.K. An ensemble based machine learning model for diabetic retinopathy classification. In Proceedings of the 2020 international conference on emerging trends in information technology and engineering (ic-ETITE), Vellore, India, 26 December 2020; pp. 1–6.

- Sharma, A.; Rani, S.; Gupta, D. Artificial Intelligence-Based Classification of Chest X-Ray Images into COVID-19 and Other Infectious Diseases. Hindawi Int. J. Biomed. Imaging 2020, 2020, 8889023.

- Zebin, T.; Rezvy, S. COVID-19 detection and disease progression visualization: Deep learning on chest X-rays for classification and coarse localization. Appl. Intell. 2020.

- Chen, C.; Li, O.; Tao, C.; Barnett, A.J.; Su, J.; Rudin, C. This Looks Like That: Deep Learning for Interpretable Image Recognition. In Proceedings of the 33rd Conference on Neural Information Processing Systems (NeurIPS), Vancouver, BC, Canada, 8–14 December 2019.

- Singh, G.; Yow, K.-C. An Interpretable Deep Learning Model for COVID-19 Detection With Chest X-Ray Images. IEEE Access 2021, 9, 85198–85208.

- Singh, G.; Yow, K.-C. These Do Not Look Like Those: An Interpretable Deep Learning Model. IEEE Access 2021, 9, 41482–41493.

- Erhan, D.; Bengio, Y.; Courville, A.; Vincent, P. Visualizing Higher-Layer Features of a Deep Network. Technical Report 1341, the University of Montreal, June 2009. Also presented at the Workshop on Learning Feature Hierarchies. In Proceedings of the 26th International Conference on Machine Learning (ICML 2009), Montreal, QC, Canada, 14–18 June 2009.

- Hinton, G.E. A Practical Guide to Training Restricted Boltzmann Machines. In Neural Networks: Tricks of the Trade; Springer: Berlin/Heidelberg, Germany, 2012; pp. 599–619.

- Lee, H.; Grosse, R.; Ranganath, R.; Ng, A.Y. Convolutional Deep Belief Networks for Scalable Unsupervised Learning of Hierarchical Representations. In Proceedings of the 26th International Conference on Machine Learning (ICML), Montreal, QC, Canada, 14–18 June 2009; pp. 609–616.

- Nguyen, A.; Dosovitskiy, A.; Yosinski, J.; Brox, T.; Clune, J. Synthesizing the preferred inputs for neurons in neural networks via deep generator networks. In Advances in Neural Information Processing Systems 29 (NIPS); NIPS: Grenada, Spain, 2016; pp. 3387–3395.

- Simonyan, K.; Vedaldi, A.; Zisserman, A. Deep Inside Convolutional Networks: Visualising Image Classification Models and Saliency Maps. In Proceedings of the Workshop at the 2nd International Conference on Learning Representations (ICLR Workshop), Banff, AB, Canada, 14–16 April 2014.

- Oord, A.v.; Kalchbrenner, N.; Kavukcuoglu, K. Pixel Recurrent Neural Networks. In Proceedings of the 33rd International Conference on Machine Learning (ICML), New York, NY, USA, 19–24 June 2016; pp. 1747–1756.

- Yosinski, J.; Clune, J.; Fuchs, T.; Lipson, H. Understanding Neural Networks through Deep Visualization. In Proceedings of the Deep Learning Workshop at the 32nd International Conference on Machine Learning (ICML), Lille, France, 6–11 July 2015.

- Zeiler, M.D.; Fergus, R. Visualizing and Understanding Convolutional Networks. In Proceedings of the European Conference on Computer Vision (ECCV), Zurich, Switzerland, 5–12 September 2014; pp. 818–833.

- Selvaraju, R.R.; Cogswell, M.; Das, A.; Vedantam, R.; Parikh, D.; Batra, D. Grad-CAM: Visual Explanations from Deep Networks via Gradient-Based Localization. In Proceedings of the IEEE International Conference on Computer Vision (ICCV), Venice, Italy, 22–29 October 2017.

- Smilkov, D.; Thorat, N.; Kim, B.; Viégas, F.; Wattenberg, M. SmoothGrad: Removing noise by adding noise. arXiv 2017, arXiv:1706.03825.

- Sundararajan, M.; Taly, A.; Yan, Q. Axiomatic Attribution for Deep Networks. In Proceedings of the 34th International Conference on Machine Learning (ICML), San Diego, CA, USA, 7–9 May 2017; pp. 3319–3328.

- Fu, J.; Zheng, H.; Mei, T. Look Closer to See Better: Recurrent Attention Convolutional Neural Network for Fine-grained Image Recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 26 July 2017; pp. 4438–4446.

- Girshick, R. Fast R-CNN. In Proceedings of the IEEE International Conference on Computer Vision (ICCV), Washington, DC, USA, 7–13 December 2015; pp. 1440–1448.

- Girshick, R.; Donahue, J.; Darrell, T.; Malik, J. Rich feature hierarchies for accurate object detection and semantic segmentation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Columbus, OH, USA, 28 June 2014; pp. 580–587.

- Huang, S.; Xu, Z.; Tao, D.; Zhang, Y. Part-Stacked CNN for Fine-Grained Visual Categorization. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 30 June 2016; pp. 1173–1182.

- Ren, S.; He, K.; Girshick, R.; Sun, J. Faster R-CNN: Towards Real-Time Object Detection with Region Proposal Networks. In Proceedings of the Advances in Neural Information Processing Systems 28 (NIPS), Montreal, QC, Canada, 7–12 December 2015; pp. 91–99.

- Simon, M.; Rodner, E. Neural Activation Constellations: Unsupervised Part Model Discovery with Convolutional Networks. In Proceedings of the IEEE International Conference on Computer Vision (ICCV), Santiago, Chile, 7–13 December 2015; pp. 1143–1151.

- Uijlings, J.R.; Sande, K.E.V.D.; Gevers, T.; Smeulders, A.W. Selective Search for Object Recognition. Int. J. Comput. Vis. 2013, 104, 154–171.

- Xiao, T.; Xu, Y.; Yang, K.; Zhang, J.; Peng, Y.; Zhang, Z. The Application of Two-Level Attention Models in Deep Convolutional Neural Network for Fine-grained Image Classification. In Proceedings of the Computer Vision and Pattern Recognition (CVPR), 2015 IEEE Conference, Boston, MA, USA, 12 June 2015; pp. 842–850.

- Zhang, N.; Donahue, J.; Girshick, R.; Darrell, T. Part-based R-CNNs for Fine-grained Category Detection. In Proceedings of the European Conference on Computer Vision (ECCV), Zurich, Switzerland, 5–12 September 2014; pp. 834–849.

- Zheng, H.; Fu, J.; Mei, T.; Luo, J. Learning Multi-Attention Convolutional Neural Network for Fine-Grained Image Recognition. In Proceedings of the IEEE International Conference on Computer Vision (ICCV), Venice, Italy, 22–29 October 2017; pp. 5209–5217.

- Zhou, B.; Khosla, A.; Lapedriza, A.; Oliva, A.; Torralba, A. Learning Deep Features for Discriminative Localization. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 27–30 June 2016; pp. 2921–2929.

- Zhou, B.; Sun, Y.; Bau, D.; Torralba, A. Interpretable Basis Decomposition for Visual Explanation. In Proceedings of the European Conference on Computer Vision (ECCV), Munich, Germany, 8–14 September 2018; pp. 119–134.

- Li, O.; Liu, H.; Chen, C.; Rudin, C. Deep Learning for Case-Based Reasoning through Prototypes: A Neural Network that Explains Its Predictions. In Proceedings of the Thirty-Second AAAI Conference on Artificial Intelligence (AAAI), New Orleans, LA, USA, 2–7 February 2018.

- European Institute for Biomedical Imaging Research. COVID-19 Imaging Datasets. Available online: https://www.eibir.org/COVID-19-imaging-datasets/ (accessed on 23 August 2021).

- Kaggle. COVIDx CT-2 Dataset. Available online: https://www.kaggle.com/hgunraj/covidxct (accessed on 7 June 2021).

- Zaffino, P.; Marzullo, A.; Moccia, S.; Calimeri, F.; Momi, E.D.; Bertucci, B.; Arcuri, P.P.; Spadea, M.F. An Open-Source COVID-19 CT Dataset with Automatic Lung Tissue Classification for Radiomics. Bioengineering 2021, 8, 26.

- Simonyan, K.; Zisserman, A. Very Deep Convolutional Networks for Large-Scale Image Recognition. In Proceedings of the 3rd International Conference on Learning Representations (ICLR), San Diego, CA, USA, 7–9 May 2015.

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep Residual Learning for Image Recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 30 June 2016; pp. 770–778.

- Huang, G.; Liu, Z.; Maaten, L.v.; Weinberger, K.Q. Densely Connected Convolutional Networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017; pp. 4700–4708.

- Deng, J.; Dong, W.; Socher, R.; Li, L.-J.; Li, K.; Fei-Fei, L. ImageNet: A Large-Scale Hierarchical Image Database. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), San Juan, PR, USA, 17–19 June 2009; pp. 248–255.

- Ghiasi-Shirazi, K. Generalizing the Convolution Operator in Convolutional Neural Networks. Neural Process. Lett. 2019.

- Nalaie, K.; Ghiasi-Shirazi, K.; Akbarzadeh-T, M.R. Efficient Implementation of a Generalized Convolutional Neural Networks based on Weighted Euclidean Distance. In Proceedings of the 2017 7th International Conference on Computer and Knowledge Engineering (ICCKE), Mashhad, Iran, 26–27 October 2017; pp. 211–216.

- Wikipedia. Sensitivity and Specificity. Available online: https://en.wikipedia.org/wiki/Sensitivity_and_specificity (accessed on 2 April 2021).

- Wikipedia. Precision and Recall. Available online: https://en.wikipedia.org/wiki/Precision_and_recall (accessed on 2 April 2021).

- Wikipedia. F-Score. Available online: https://en.wikipedia.org/wiki/F-score (accessed on 2 April 2021).

- Wikipedia. Accuracy and Precision. Available online: https://en.wikipedia.org/wiki/Accuracy_and_precision (accessed on 2 April 2021).

- Wikipedia. Confusion Matrix. Available online: https://wikipedia.org/wiki/Confusion_matrix (accessed on 2 April 2021).

- Johnson, R.A. Miller and Freund’s Probability and Statistics for Engineers, 9th ed.; Prentice Hall International: Harlow, UK, 2011.

| Base (B) | Metric | Ps-ProtoPNet | Gen-ProtoPNet [17] | NP-ProtoPNet [18] | ProtoPNet [16] | B Only |
|---|---|---|---|---|---|---|
| VGG-16 | 3 × 4 | | | | | |
| | accuracy (%) | 98.83 | 95.85 | 98.23 | 90.84 | 99.03 |
| | precision | 0.96 | 0.93 | 0.93 | 0.89 | 0.98 |
| | recall | 0.98 | 0.95 | 0.95 | 0.91 | 0.99 |
| | F1-score | 0.97 | 0.94 | 0.94 | 0.90 | 0.98 |
| VGG-19 | 3 × 6 | | | | | |
| | accuracy (%) | 98.53 | 98.17 | 98.23 | 96.54 | 98.71 |
| | precision | 0.97 | 0.95 | 0.91 | 0.93 | 0.98 |
| | recall | 0.99 | 0.99 | 0.96 | 0.95 | 0.99 |
| | F1-score | 0.98 | 0.97 | 0.93 | 0.94 | 0.98 |
| ResNet-34 | 3 × 3 | | | | | |
| | accuracy (%) | 98.97 ± 0.05 | 98.40 ± 0.12 | 98.45 ± 0.07 | 97.05 ± 0.06 | 99.24 ± 0.10 |
| | precision | 0.97 | 0.96 | 0.96 | 0.95 | 0.99 |
| | recall | 0.99 | 0.99 | 0.99 | 0.96 | 0.99 |
| | F1-score | 0.98 | 0.97 | 0.97 | 0.96 | 0.99 |
| ResNet-152 | 2 × 3 | | | | | |
| | accuracy (%) | 98.85 ± 0.04 | 95.90 ± 0.09 | 98.48 ± 0.06 | 88.20 ± 0.08 | 99.40 ± 0.05 |
| | precision | 0.97 | 0.93 | 0.99 | 0.87 | 0.99 |
| | recall | 0.98 | 0.93 | 0.99 | 0.87 | 0.99 |
| | F1-score | 0.97 | 0.93 | 0.99 | 0.87 | 0.99 |
| DenseNet-121 | 3 × 5 | | | | | |
| | accuracy (%) | 99.24 ± 0.05 | 98.97 ± 0.02 | 98.83 ± 0.10 | 98.81 ± 0.07 | 99.32 ± 0.03 |
| | precision | 0.98 | 0.98 | 0.99 | 0.98 | 0.99 |
| | recall | 0.99 | 0.99 | 0.98 | 0.98 | 0.99 |
| | F1-score | 0.98 | 0.98 | 0.98 | 0.98 | 0.99 |
| DenseNet-161 | 2 × 2 | | | | | |
| | accuracy (%) | 99.02 ± 0.03 | 98.87 ± 0.02 | 98.88 ± 0.03 | 98.76 ± 0.07 | 99.41 ± 0.07 |
| | precision | 0.96 | 0.98 | 0.97 | 0.97 | 0.99 |
| | recall | 0.99 | 0.99 | 0.99 | 0.99 | 0.99 |
| | F1-score | 0.97 | 0.98 | 0.97 | 0.98 | 0.99 |
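
The F1-score rows in the table above appear consistent with the harmonic mean of the corresponding precision and recall values. The following is a minimal sketch that checks this for two VGG-16 columns, assuming the standard definition F1 = 2PR / (P + R); the helper name `f1_score` is hypothetical and not taken from the paper. Because the tabulated values are rounded to two decimals, agreement is only expected at that precision.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (standard F1 definition)."""
    if precision + recall == 0:
        return 0.0  # convention: F1 is 0 when both inputs are 0
    return 2 * precision * recall / (precision + recall)


# (precision, recall) pairs copied from the VGG-16 rows of the table above.
vgg16 = {
    "Ps-ProtoPNet": (0.96, 0.98),
    "ProtoPNet": (0.89, 0.91),
}

for model, (p, r) in vgg16.items():
    # Round to two decimals to match the table's precision.
    print(f"{model}: F1 = {f1_score(p, r):.2f}")
```

Run as written, this prints 0.97 for Ps-ProtoPNet and 0.90 for ProtoPNet, matching the F1-score entries reported for VGG-16.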

| Base (B) | Gen-ProtoPNet [17] | NP-ProtoPNet [18] | ProtoPNet [16] | B Only |
|---|---|---|---|---|
| VGG-16 | 0.0002 | 0.0002 | 0.0002 | 0.0367 |
| VGG-19 | 0.0007 | 0.0036 | 0.0002 | 0.0409 |
| ResNet-34 | 0.0002 | 0.0002 | 0.0002 | 0.0002 |
| ResNet-152 | 0.0002 | 0.0002 | 0.0002 | 0.0002 |
| DenseNet-121 | 0.0002 | 0.0002 | 0.0002 | 0.0582 |
| DenseNet-161 | 0.0467 | 0.0582 | 0.0075 | 0.0002 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Singh, G.; Yow, K.-C. Object or Background: An Interpretable Deep Learning Model for COVID-19 Detection from CT-Scan Images. Diagnostics 2021, 11, 1732. https://doi.org/10.3390/diagnostics11091732
