Super Resolution for Mangrove UAV Remote Sensing Images

Abstract

1. Introduction

- (1)

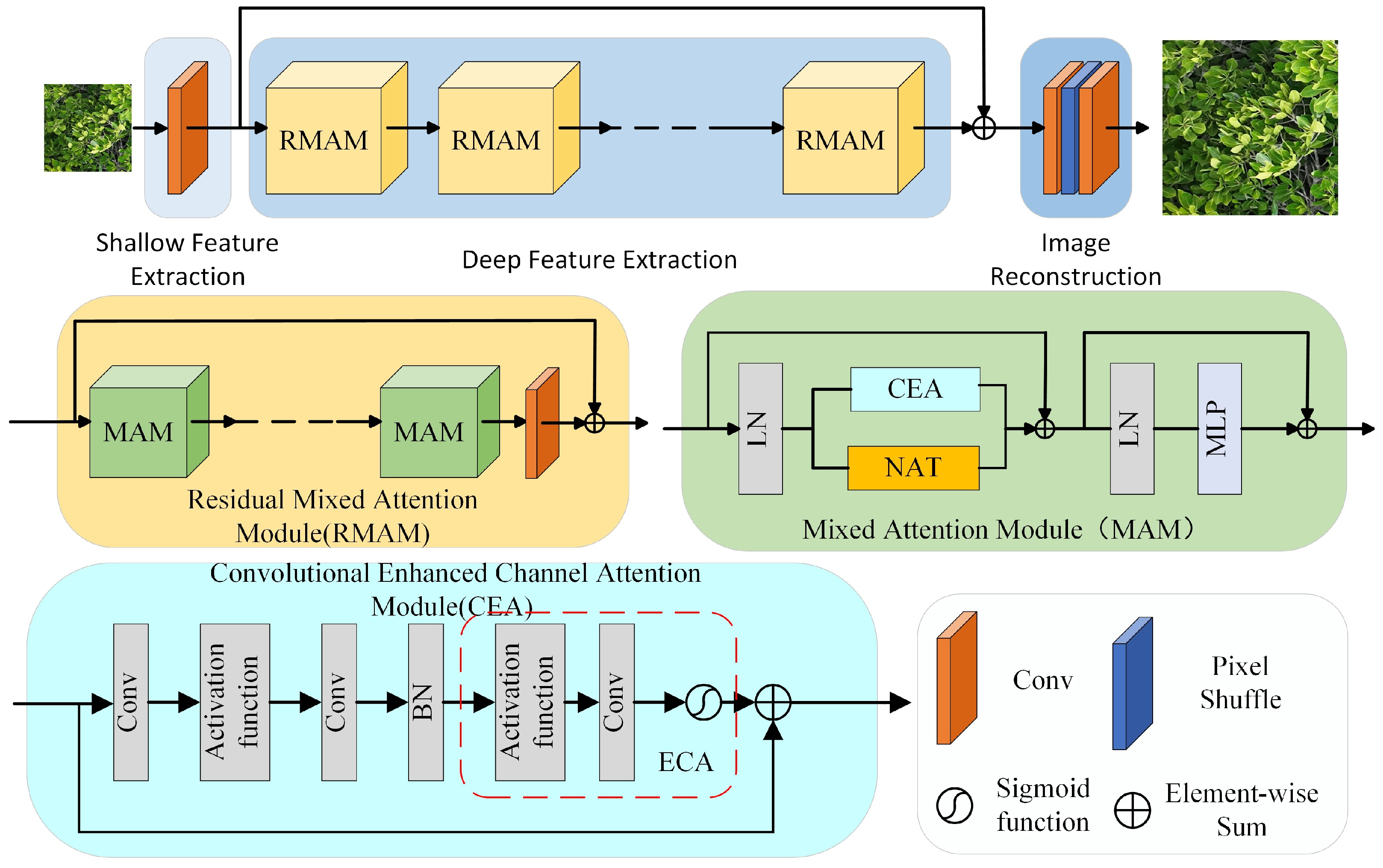

- Designed a CEA channel attention module, which combines ECA attention to enhance the network’s feature extraction on channel attention.

- (2)

- Introduced the NAT module into the SR network to enhance the network’s ability to extract leaf detailed features.

- (3)

- The images reconstructed using an SR reconstruction network enhance the segmentation performance of mangrove species in UAV remote sensing images.
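The first contribution builds on ECA (efficient channel attention). As a rough illustration of that building block only, and not of the authors' CEA module (its design is not described in this section), the sketch below applies ECA-style gating to a small feature map in plain Python. The function names `eca_kernel_size` and `eca_attention` are hypothetical, the defaults `gamma=2` and `b=1` follow the ECA-Net paper, and the uniform 1D convolution weights are a placeholder for weights that would normally be learned.

```python
import math


def eca_kernel_size(channels, gamma=2, b=1):
    """Adaptive 1D kernel size: nearest odd value of (log2(C) + b) / gamma."""
    t = int(abs((math.log2(channels) + b) / gamma))
    return t if t % 2 == 1 else t + 1


def eca_attention(feature_map, weights=None):
    """Apply ECA-style channel gating.

    feature_map: list of C channels, each an H x W list of rows of floats.
    weights: 1D convolution weights of length k (assumed; learned in practice).
    Returns (scaled_feature_map, gates).
    """
    channels = len(feature_map)
    k = eca_kernel_size(channels)
    if weights is None:
        weights = [1.0 / k] * k  # placeholder for learned weights
    pad = k // 2

    # Squeeze: global average pooling gives one descriptor per channel.
    pooled = [
        sum(sum(row) for row in ch) / (len(ch) * len(ch[0]))
        for ch in feature_map
    ]

    # Local cross-channel interaction: 1D convolution over the channel axis
    # with zero padding, followed by a sigmoid gate.
    padded = [0.0] * pad + pooled + [0.0] * pad
    gates = []
    for c in range(channels):
        z = sum(weights[j] * padded[c + j] for j in range(k))
        gates.append(1.0 / (1.0 + math.exp(-z)))

    # Excite: rescale every value in a channel by that channel's gate.
    scaled = [
        [[v * gates[c] for v in row] for row in feature_map[c]]
        for c in range(channels)
    ]
    return scaled, gates


if __name__ == "__main__":
    # Hypothetical 8-channel, 2 x 2 feature map.
    fmap = [[[float(c), float(c)], [float(c), float(c)]] for c in range(8)]
    _, gates = eca_attention(fmap)
    print([round(g, 3) for g in gates])
```

Unlike squeeze-and-excitation blocks, this form of channel attention avoids dimensionality reduction and needs only `k` weights, which is presumably why it is attractive as a component inside a larger SR network.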

2. Methods

2.1. Network Architecture

2.2. Residual Mixed Attention Module

3. Dataset

4. Experiments

4.1. Experimental Setup

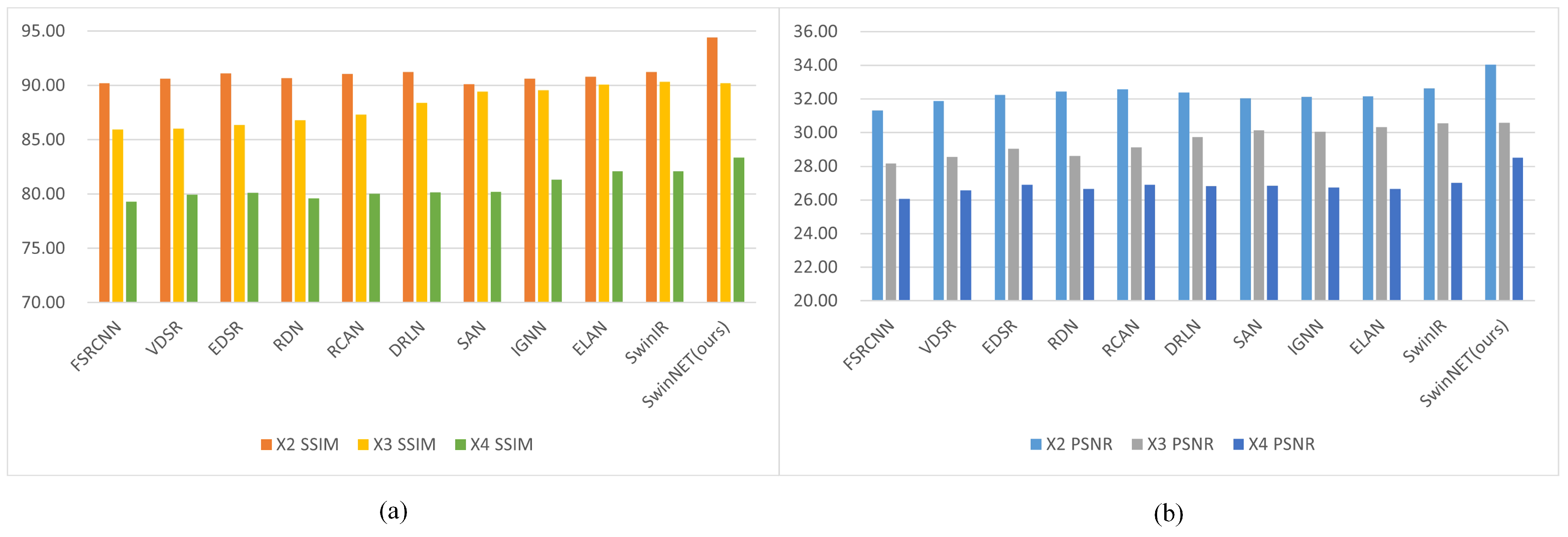

4.2. Results on Image SR

4.3. Visual Comparison

4.4. Results of Mangrove Species Classification

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest


| Method | Training Dataset | ×2 PSNR (dB) | ×2 SSIM (%) | ×3 PSNR (dB) | ×3 SSIM (%) | ×4 PSNR (dB) | ×4 SSIM (%) |
|---|---|---|---|---|---|---|---|
| FSRCNN | mangrove | 31.31 | 90.19 | 28.16 | 85.92 | 26.07 | 79.30 |
| VDSR | mangrove | 31.87 | 90.64 | 28.57 | 86.02 | 26.56 | 79.97 |
| EDSR | mangrove | 32.25 | 91.11 | 29.03 | 86.38 | 26.91 | 80.11 |
| RDN | mangrove | 32.44 | 90.70 | 28.62 | 86.81 | 26.66 | 79.61 |
| RCAN | mangrove | 32.57 | 91.08 | 29.12 | 87.30 | 26.90 | 80.02 |
| DRLN | mangrove | 32.38 | 91.23 | 29.75 | 88.39 | 26.81 | 80.16 |
| SAN | mangrove | 32.06 | 90.11 | 30.13 | 89.45 | 26.86 | 80.21 |
| IGNN | mangrove | 32.13 | 90.65 | 30.04 | 89.55 | 26.73 | 81.33 |
| ELAN | mangrove | 32.17 | 90.80 | 30.32 | 90.07 | 26.64 | 82.09 |
| SwinIR | mangrove | 32.65 | 91.24 | 30.55 | 90.32 | 27.02 | 82.09 |
| SwinNET (ours) | mangrove | 34.04 | 94.43 | 30.59 | 90.21 | 28.52 | 83.35 |
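The PSNR columns in the table above are reported in dB. As a reminder of what that metric measures, here is a minimal sketch of the standard definition, PSNR = 10·log10(MAX² / MSE), in plain Python. The function name `psnr` and the tiny 2 × 2 images are hypothetical, and the sketch assumes 8-bit single-channel images; the evaluation protocol used in the paper (color space, border cropping) is not stated in this section.

```python
import math


def psnr(reference, reconstructed, max_value=255.0):
    """Peak signal-to-noise ratio in dB between two equally sized images.

    Both images are given as lists of rows of pixel intensities.
    """
    flat_ref = [p for row in reference for p in row]
    flat_rec = [p for row in reconstructed for p in row]
    if len(flat_ref) != len(flat_rec):
        raise ValueError("images must have the same number of pixels")

    # Mean squared error over all pixels.
    mse = sum((a - b) ** 2 for a, b in zip(flat_ref, flat_rec)) / len(flat_ref)
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_value ** 2 / mse)


if __name__ == "__main__":
    # Hypothetical ground-truth patch and a reconstruction that is off by
    # one gray level at every pixel (MSE = 1).
    hr = [[100, 110], [120, 130]]
    sr = [[101, 109], [121, 129]]
    print(round(psnr(hr, sr), 2))
```

Because the scale is logarithmic, a fixed dB difference between two methods corresponds to a fixed ratio of their mean squared errors, regardless of the absolute PSNR level.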

| Method | Scale | Set5 PSNR | Set5 SSIM | Set14 PSNR | Set14 SSIM | BSDS100 PSNR | BSDS100 SSIM | Urban100 PSNR | Urban100 SSIM | Manga109 PSNR | Manga109 SSIM |
|---|---|---|---|---|---|---|---|---|---|---|---|
| SRCNN | ×2 | 36.59 | 95.31 | 32.44 | 90.61 | 31.31 | 88.72 | 29.49 | 89.41 | 35.51 | 96.60 |
| FSRCNN | ×2 | 36.95 | 95.55 | 32.59 | 90.90 | 31.44 | 89.10 | 29.78 | 90.12 | 36.61 | 97.02 |
| VDSR | ×2 | 37.47 | 95.79 | 33.02 | 91.21 | 31.88 | 89.53 | 30.68 | 91.32 | 37.16 | 97.40 |
| EDSR | ×2 | 37.03 | 95.96 | 33.89 | 91.95 | 32.27 | 90.12 | 32.82 | 93.40 | 39.00 | 97.68 |
| RDN | ×2 | 37.62 | 96.03 | 33.51 | 92.06 | 32.33 | 90.14 | 32.82 | 93.48 | 39.06 | 97.62 |
| RCAN | ×2 | 37.26 | 96.14 | 33.08 | 92.07 | 32.37 | 90.25 | 33.28 | 93.76 | 39.39 | 97.58 |
| DRLN | ×2 | 37.24 | 96.10 | 33.22 | 92.05 | 32.34 | 90.25 | 33.30 | 93.82 | 39.12 | 97.79 |
| SAN | ×2 | 37.88 | 96.12 | 33.67 | 92.02 | 32.40 | 90.20 | 33.08 | 93.63 | 39.22 | 97.85 |
| IGNN | ×2 | 38.03 | 96.10 | 33.07 | 91.87 | 32.39 | 90.15 | 33.23 | 93.73 | 39.26 | 97.78 |
| ELAN | ×2 | 38.09 | 96.11 | 33.17 | 92.03 | 32.38 | 90.30 | 33.26 | 93.86 | 39.41 | 97.84 |
| SwinIR | ×2 | 38.15 | 96.12 | 33.33 | 92.05 | 32.36 | 90.27 | 33.31 | 93.23 | 39.12 | 97.89 |
| SwinNET | ×2 | 38.10 | 96.15 | 33.91 | 92.06 | 32.97 | 91.33 | 33.35 | 94.19 | 39.44 | 98.02 |
| SRCNN | ×3 | 32.72 | 90.84 | 29.25 | 82.06 | 28.36 | 78.61 | 26.22 | 79.87 | 30.44 | 91.14 |
| FSRCNN | ×3 | 33.09 | 91.33 | 29.31 | 82.08 | 28.50 | 79.05 | 26.43 | 80.76 | 31.09 | 92.04 |
| VDSR | ×3 | 33.59 | 92.10 | 29.68 | 83.15 | 28.81 | 79.84 | 27.14 | 82.90 | 32.01 | 93.34 |
| EDSR | ×3 | 34.14 | 92.13 | 30.13 | 84.12 | 29.22 | 80.88 | 27.73 | 84.47 | 33.16 | 93.69 |
| RDN | ×3 | 34.16 | 92.18 | 30.23 | 84.13 | 29.22 | 80.91 | 27.75 | 84.49 | 33.07 | 93.74 |
| RCAN | ×3 | 34.23 | 92.39 | 30.18 | 84.13 | 29.31 | 81.08 | 27.07 | 84.95 | 33.44 | 93.99 |
| DRLN | ×3 | 34.18 | 93.01 | 30.14 | 84.10 | 29.33 | 81.17 | 27.15 | 84.12 | 33.65 | 93.09 |
| SAN | ×3 | 34.13 | 92.19 | 30.21 | 84.13 | 29.28 | 81.02 | 27.84 | 84.61 | 33.23 | 93.90 |
| IGNN | ×3 | 34.15 | 92.32 | 30.24 | 84.18 | 29.24 | 80.95 | 27.94 | 84.86 | 33.38 | 94.92 |
| ELAN | ×3 | 34.15 | 93.07 | 30.25 | 84.19 | 29.35 | 81.23 | 28.30 | 84.38 | 33.69 | 93.09 |
| SwinIR | ×3 | 34.20 | 92.35 | 30.24 | 84.13 | 29.20 | 80.38 | 28.26 | 84.14 | 33.38 | 93.89 |
| SwinNET | ×3 | 34.35 | 92.62 | 30.30 | 84.20 | 29.37 | 82.31 | 28.51 | 85.88 | 33.76 | 94.58 |
| SRCNN | ×4 | 30.38 | 86.27 | 27.43 | 75.09 | 26.80 | 70.95 | 24.43 | 72.15 | 27.54 | 85.49 |
| FSRCNN | ×4 | 30.72 | 86.51 | 27.55 | 75.45 | 26.93 | 71.47 | 24.58 | 72.77 | 27.84 | 86.08 |
| VDSR | ×4 | 31.31 | 88.25 | 27.93 | 76.75 | 27.23 | 72.26 | 25.09 | 75.30 | 28.72 | 88.60 |
| EDSR | ×4 | 32.43 | 83.59 | 28.70 | 78.73 | 27.64 | 74.10 | 26.56 | 80.30 | 30.95 | 91.42 |
| RDN | ×4 | 32.39 | 89.84 | 28.80 | 78.64 | 27.67 | 74.16 | 26.60 | 80.19 | 30.99 | 91.43 |
| RCAN | ×4 | 32.56 | 89.95 | 28.85 | 78.89 | 27.77 | 74.34 | 26.76 | 80.81 | 31.20 | 91.65 |
| DRLN | ×4 | 32.55 | 90.01 | 28.86 | 78.91 | 27.80 | 74.40 | 26.94 | 81.14 | 31.44 | 91.89 |
| SAN | ×4 | 32.57 | 90.00 | 28.89 | 78.84 | 27.77 | 74.28 | 26.70 | 80.61 | 31.12 | 91.64 |
| IGNN | ×4 | 32.47 | 89.98 | 28.75 | 78.85 | 27.75 | 74.34 | 26.83 | 80.82 | 31.27 | 91.81 |
| ELAN | ×4 | 32.64 | 90.18 | 28.88 | 79.06 | 27.77 | 74.52 | 27.13 | 81.58 | 31.61 | 92.22 |
| SwinIR | ×4 | 32.65 | 90.24 | 29.05 | 79.44 | 27.89 | 74.88 | 27.46 | 82.52 | 32.05 | 92.48 |
| SwinNET | ×4 | 32.79 | 90.32 | 29.23 | 79.54 | 28.13 | 75.43 | 27.84 | 83.22 | 32.16 | 92.61 |

| Class | HR Images IoU | HR Images ACC | SwinNET IoU | SwinNET ACC |
|---|---|---|---|---|
| Rhizophora stylosa | 91.85 | 97.79 | 93.42 | 98.34 |
| Bruguiera gymnorhiza | 66.35 | 69.09 | 74.13 | 80.26 |
| Aegiceras corniculatum | 74.36 | 74.64 | 95.56 | 96.86 |
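The per-class scores in the table above can be related to a confusion matrix. The sketch below shows one common way to compute them, under two assumptions that this section does not confirm: that IoU is TP / (TP + FP + FN), and that ACC is per-class pixel accuracy, TP / (TP + FN). The function name `per_class_iou_and_acc` and the 2 × 2 confusion matrix are hypothetical and are not taken from the paper's data.

```python
def per_class_iou_and_acc(confusion):
    """Return a list of (IoU %, ACC %) tuples, one per class.

    confusion[i][j] is the number of pixels whose true class is i and whose
    predicted class is j.
    """
    n = len(confusion)
    results = []
    for c in range(n):
        tp = confusion[c][c]
        fn = sum(confusion[c]) - tp                       # missed pixels of class c
        fp = sum(confusion[r][c] for r in range(n)) - tp  # pixels wrongly labeled c
        union = tp + fp + fn
        iou = 100.0 * tp / union if union else 0.0
        # Assumed definition of ACC: share of true class-c pixels labeled c.
        acc = 100.0 * tp / (tp + fn) if (tp + fn) else 0.0
        results.append((iou, acc))
    return results


if __name__ == "__main__":
    # Hypothetical two-class confusion matrix (pixel counts).
    confusion = [
        [8, 2],
        [1, 9],
    ]
    for cls, (iou, acc) in enumerate(per_class_iou_and_acc(confusion)):
        print(f"class {cls}: IoU = {iou:.2f}, ACC = {acc:.2f}")
```

Under these definitions IoU can never exceed ACC for a given class, since false positives enter only the IoU denominator; every row of the table is consistent with that.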


© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Qin, Q.; Dai, W.; Wang, X. Super Resolution for Mangrove UAV Remote Sensing Images. Symmetry 2025, 17, 1250. https://doi.org/10.3390/sym17081250
