Symmetry in the Multidimensional Dynamical Analysis of Iterative Methods with Memory

Abstract

1. Introduction

2. Theoretical Concepts

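Let $\lambda_1, \dots, \lambda_n$ be the eigenvalues of the Jacobian matrix of the fixed-point operator evaluated at a fixed point x.

- If $|\lambda_j| < 1$ for $j = 1, \dots, n$, then x is attracting.

- If $|\lambda_j| = 0$ for $j = 1, \dots, n$, then x is superattracting.

- If one eigenvalue $\lambda_{j_0}$ has $|\lambda_{j_0}| > 1$, then x is repelling or saddle.

- If $|\lambda_j| > 1$ for $j = 1, \dots, n$, then x is repelling.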
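The criteria listed above translate directly into a small classification routine. The following is a minimal Python sketch, assuming the eigenvalues of the Jacobian at the fixed point have already been computed; the function name `classify_fixed_point` and the tolerance `eps` are illustrative choices and do not come from the paper. The extra label "non-hyperbolic" covers eigenvalues of modulus 1, for which these criteria give no conclusion.

```python
def classify_fixed_point(eigenvalues, eps=1e-12):
    """Classify a fixed point from the eigenvalues of the Jacobian at that point.

    `eps` is an assumed numerical tolerance for comparing moduli with 0 and 1.
    """
    moduli = [abs(lam) for lam in eigenvalues]  # abs() also handles complex values

    if all(m <= eps for m in moduli):
        return "superattracting"      # every eigenvalue is (numerically) zero
    if any(abs(m - 1.0) <= eps for m in moduli):
        return "non-hyperbolic"       # an eigenvalue lies on the unit circle
    if all(m < 1.0 for m in moduli):
        return "attracting"
    if all(m > 1.0 for m in moduli):
        return "repelling"
    return "saddle"                   # moduli on both sides of 1


print(classify_fixed_point([0.0, 0.0]))   # superattracting
print(classify_fixed_point([0.0, 1.0]))   # non-hyperbolic
print(classify_fixed_point([0.5, -0.3]))  # attracting
print(classify_fixed_point([0.5, 2.0]))   # saddle
```

Checking the non-hyperbolic case before the others keeps a pair such as 0 and 1 from being reported as attracting.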

3. Experimental Results

3.1. Uncoupled Third Order System

3.1.1. Kurchatov’s Method

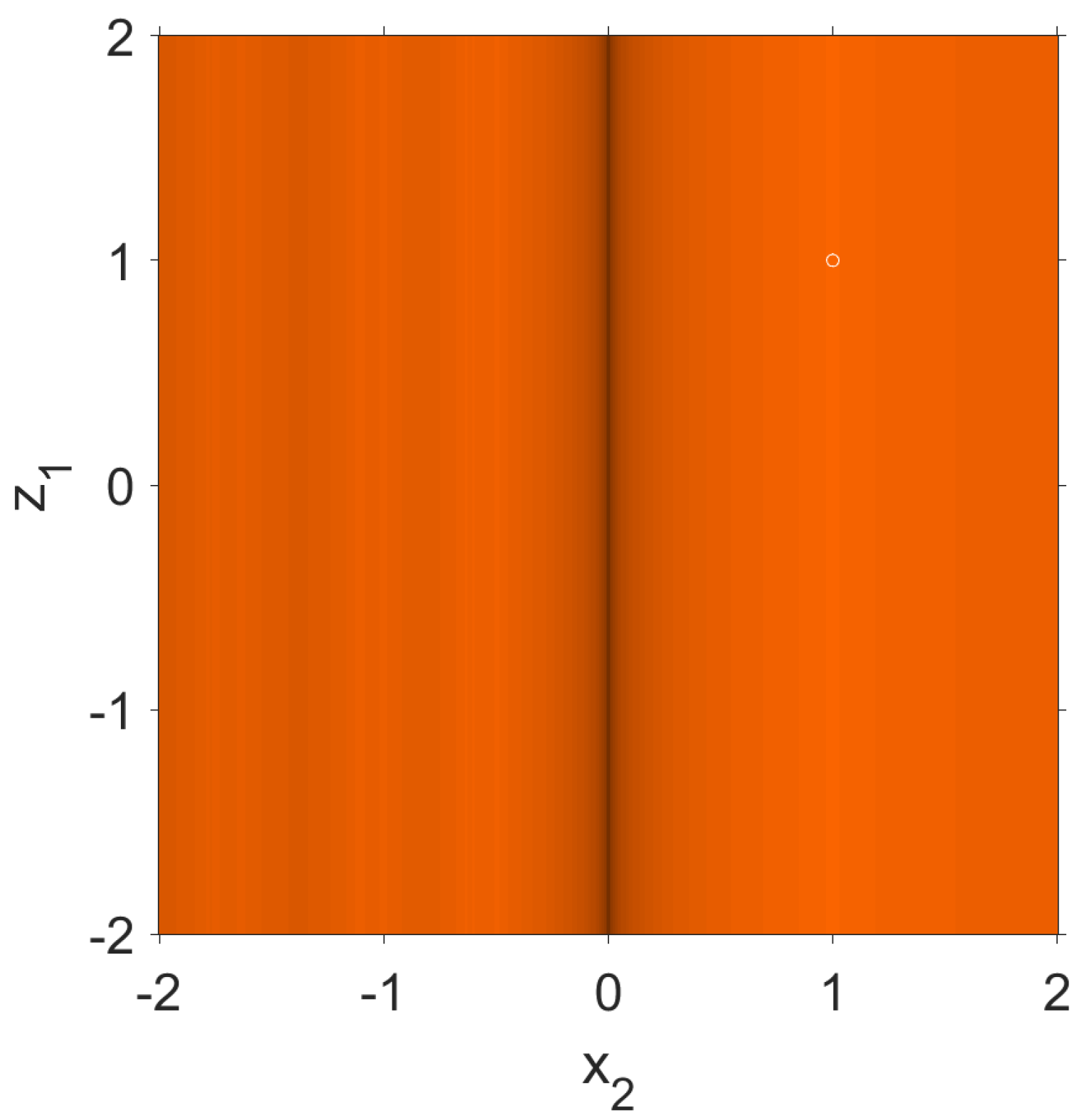

- The operator evaluated at the critical points of type has the following form

  The convergence of these points depends only on and , as the expression of shows. For this reason, we draw the planes of convergence of these points in terms of these two variables.

  We now describe how the planes of convergence [4] are generated. For the points of type , we determine which of them belong to the basin of attraction of an attracting fixed point, that is, which of them converge to the attractor.

  We build a mesh of points of the set . One axis carries , the other carries , and from these values we construct the points of type . We apply the operator to each of these points. If the iteration converges to the only attracting fixed point, which is , the mesh point is painted orange. As the convergence criterion, we require that the distance from the iterate to the fixed point be less than in fewer than 40 iterations. If this does not hold, the mesh point is painted black.

  As can be seen in Figure 1, convergence is slower when approaches the value 0 because of the shape of the operator, but the points still converge. In the remaining cases, the convergence to the point is clear.

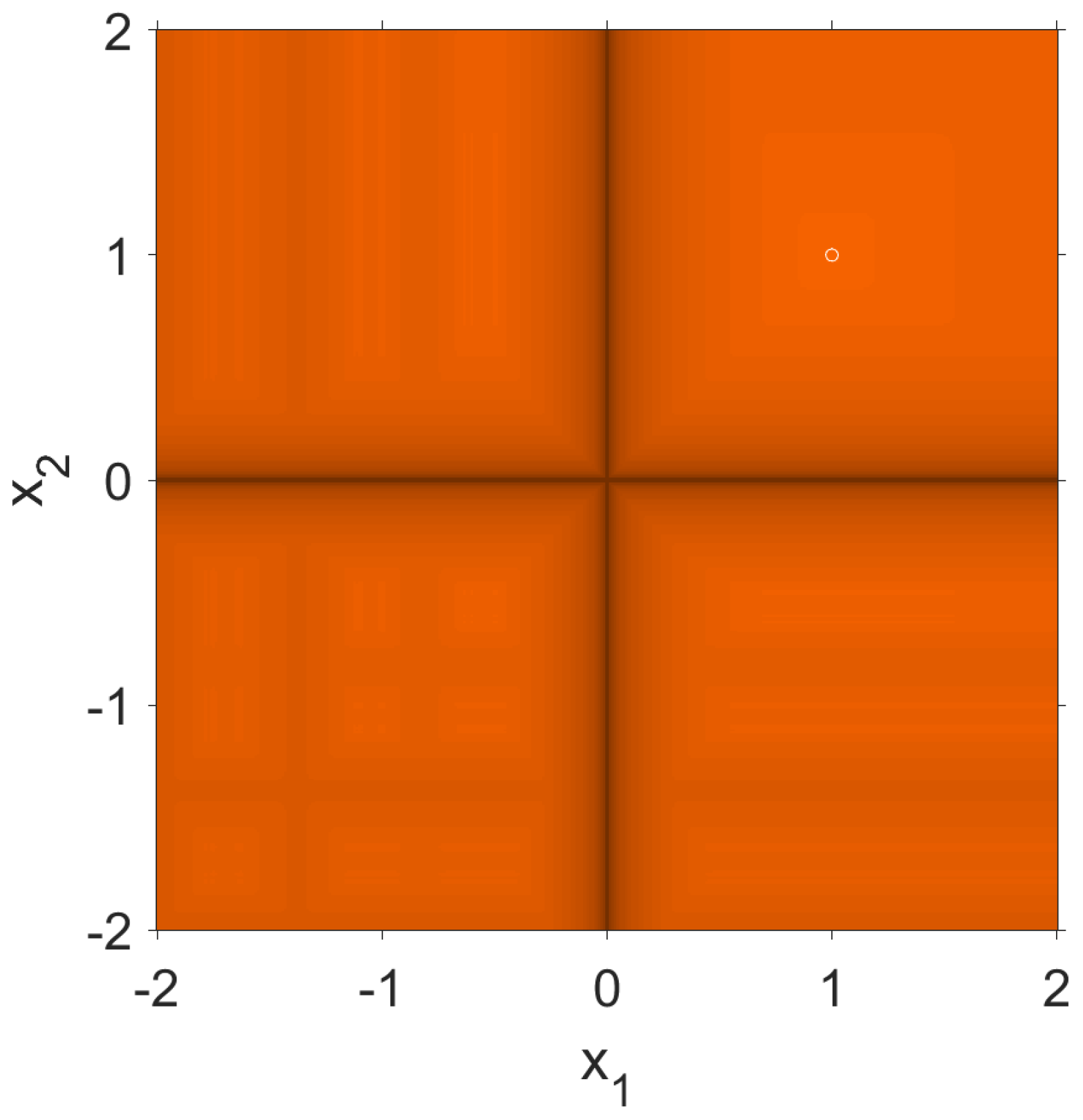

- Next, we evaluate the operator at the critical points of type . In this case, the operator is

  The critical points of type depend on the variables and . For this reason, we draw the convergence plane of the critical points in terms of these variables.

  As in the previous case, Figure 2 shows that convergence is slow if any of the variables approaches the value 0, whereas at the remaining points the convergence to the point is clear.
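The mesh procedure described above (build a grid, iterate each grid point, mark it as converged or not within 40 iterations) can be sketched as follows. This is only an illustration under stated assumptions: it uses the scalar Kurchatov iteration on the stand-in function `cubic(x) = x^3 - 1` rather than the operator of the paper, the grid is indexed by the starting pair `(z0, x0)` rather than by the variables of the critical points, and the tolerance `1e-3` is an assumed value. All function names are hypothetical.

```python
import math


def cubic(x):
    """Stand-in test function (an assumption, not the system of the paper)."""
    return x * x * x - 1.0


def kurchatov_map(f, z, x):
    """Scalar Kurchatov step with memory, written as a map (z, x) -> (x, x_new).

    Returns None when the divided difference f[2x - z, z] is undefined.
    """
    if f(x) == 0.0:
        return x, x                      # already at a root
    a, b = 2.0 * x - z, z                # nodes of the divided difference
    if a == b:
        return None
    dd = (f(a) - f(b)) / (a - b)
    if dd == 0.0 or not math.isfinite(dd):
        return None
    return x, x - f(x) / dd


def converges(f, z0, x0, root, tol=1e-3, max_iter=40):
    """True if the orbit of (z0, x0) comes within tol of (root, root)."""
    z, x = z0, x0
    for _ in range(max_iter):
        step = kurchatov_map(f, z, x)
        if step is None:
            return False
        z, x = step
        if not (math.isfinite(z) and math.isfinite(x)):
            return False
        if math.hypot(z - root, x - root) < tol:
            return True
    return False


def convergence_plane(f, root, lo, hi, n, tol=1e-3, max_iter=40):
    """Boolean n-by-n grid: True plays the role of orange, False of black."""
    h = (hi - lo) / (n - 1)
    axis = [lo + i * h for i in range(n)]
    return [[converges(f, z0, x0, root, tol, max_iter) for x0 in axis]
            for z0 in axis]


plane = convergence_plane(cubic, 1.0, 0.5, 1.5, 5)
for row in plane:
    print("".join("o" if ok else "#" for ok in row))
```

Returning a boolean grid instead of plotting keeps the sketch free of plotting dependencies; the grid can be passed to any image routine to obtain the orange and black picture.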

3.1.2. Steffensen’s Scheme

- fixed point , with ,

- strange fixed point , being ,

- strange fixed point , being ,

- strange fixed point , with .

- For the point associated to , the related matrices are and . From Theorem 2, we conclude that this fixed point is superattracting.

- For the point associated to , we obtain and . By applying Theorem 2, the eigenvalues of this point are the values satisfying . It follows that the eigenvalues are 0 and 1, so we cannot conclude anything about the character of this strange fixed point, as it is not hyperbolic.

- For the fixed point associated to , the matrices are and . The eigenvalues associated with this fixed point are the values that satisfy . It follows that the eigenvalues are 0 and 1, so again the point is not hyperbolic.

- Finally, let us study the character of the fixed point associated with . The matrices for this fixed point are and . The eigenvalues of the fixed point associated with are therefore the values that satisfy . It follows that the eigenvalues are 0 and 1, so again the point is not hyperbolic. □

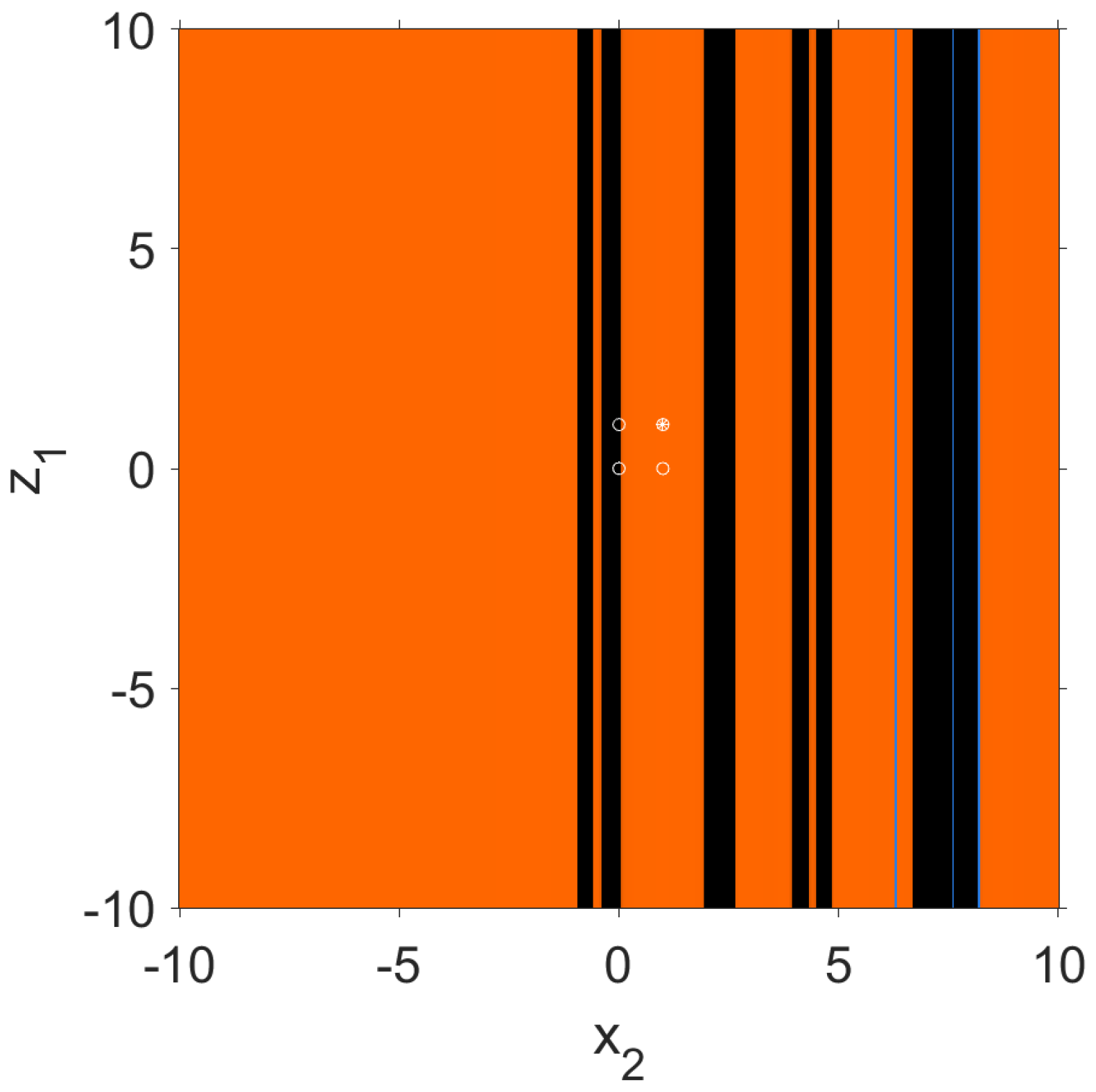

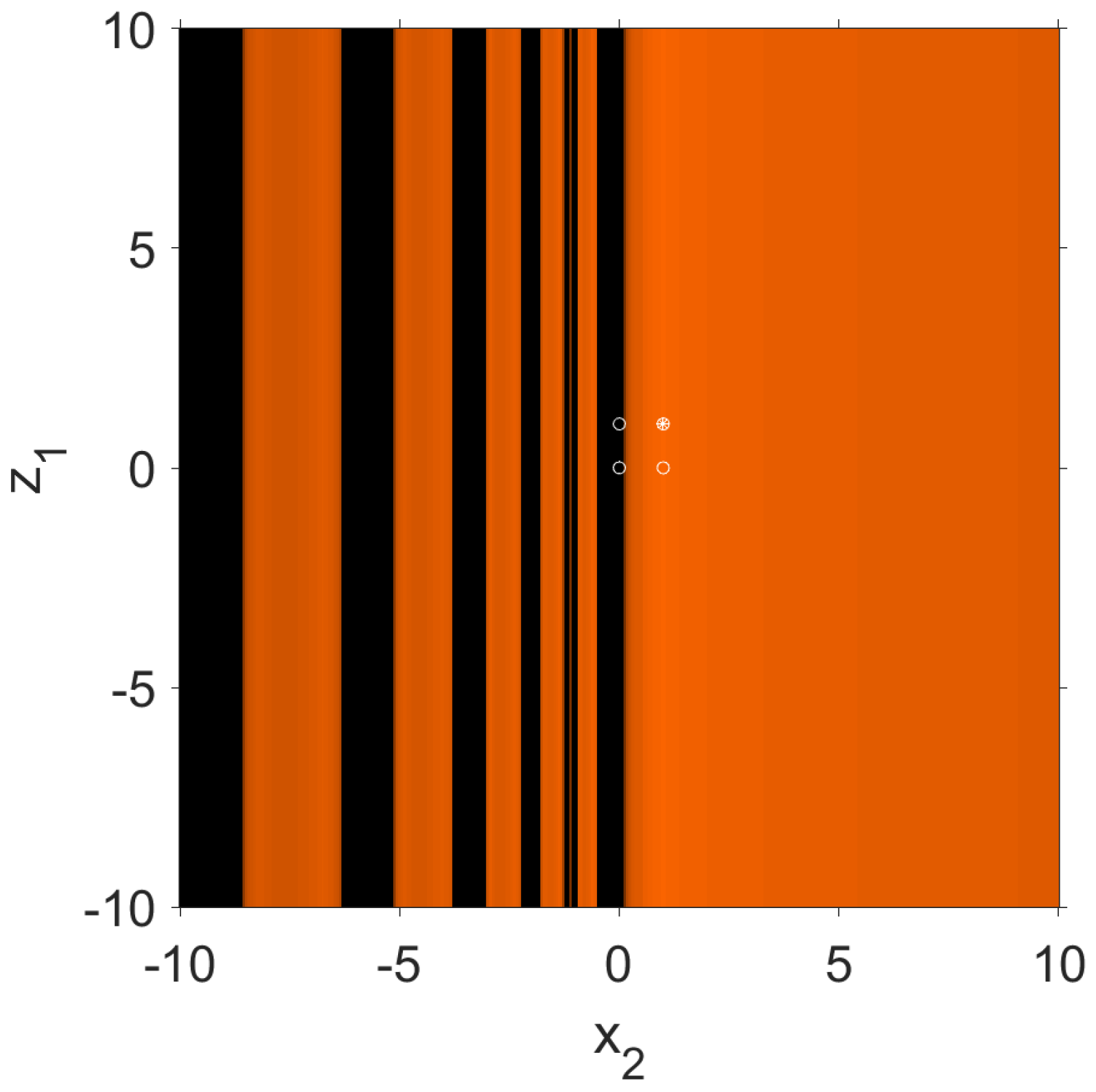

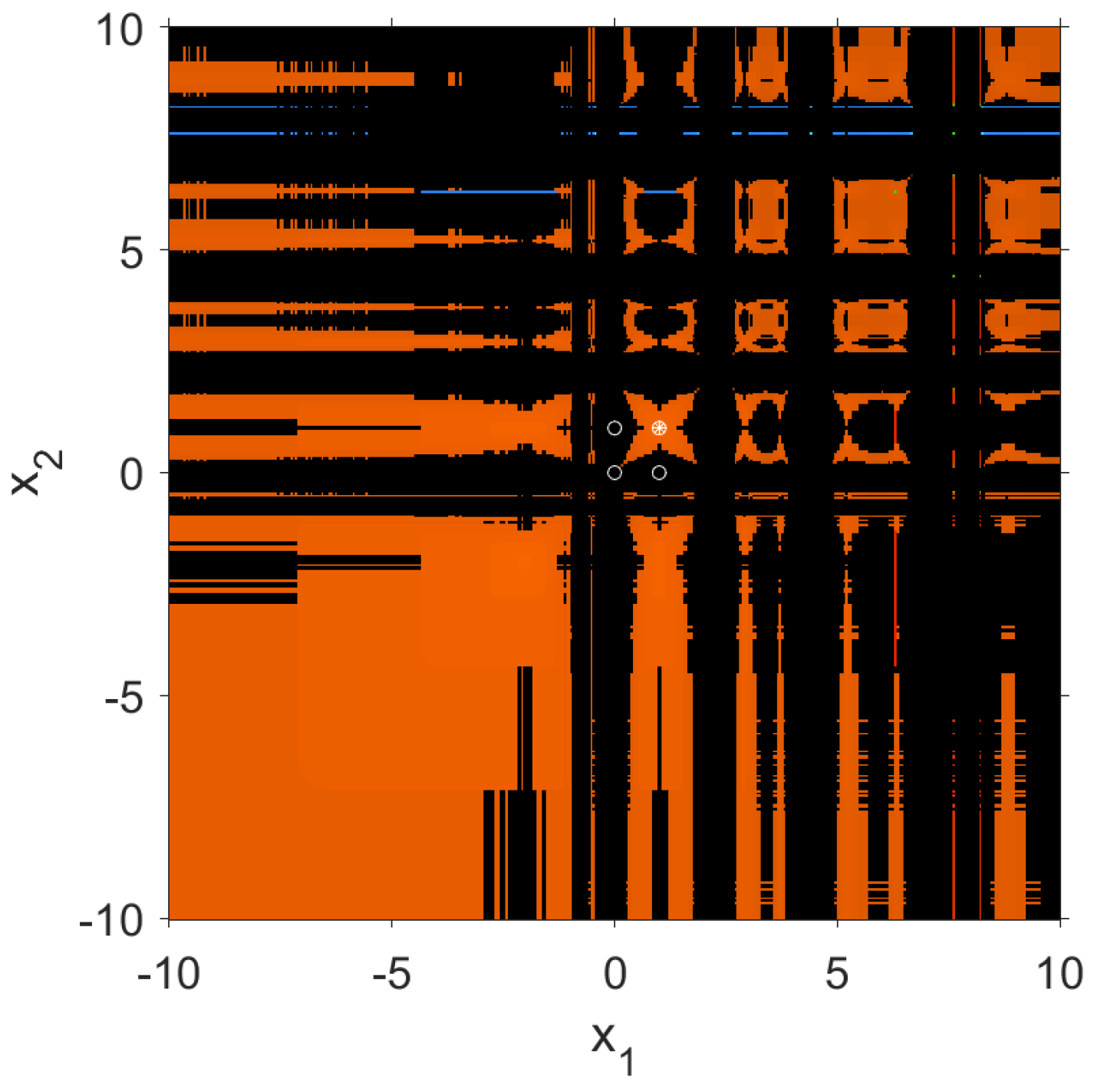

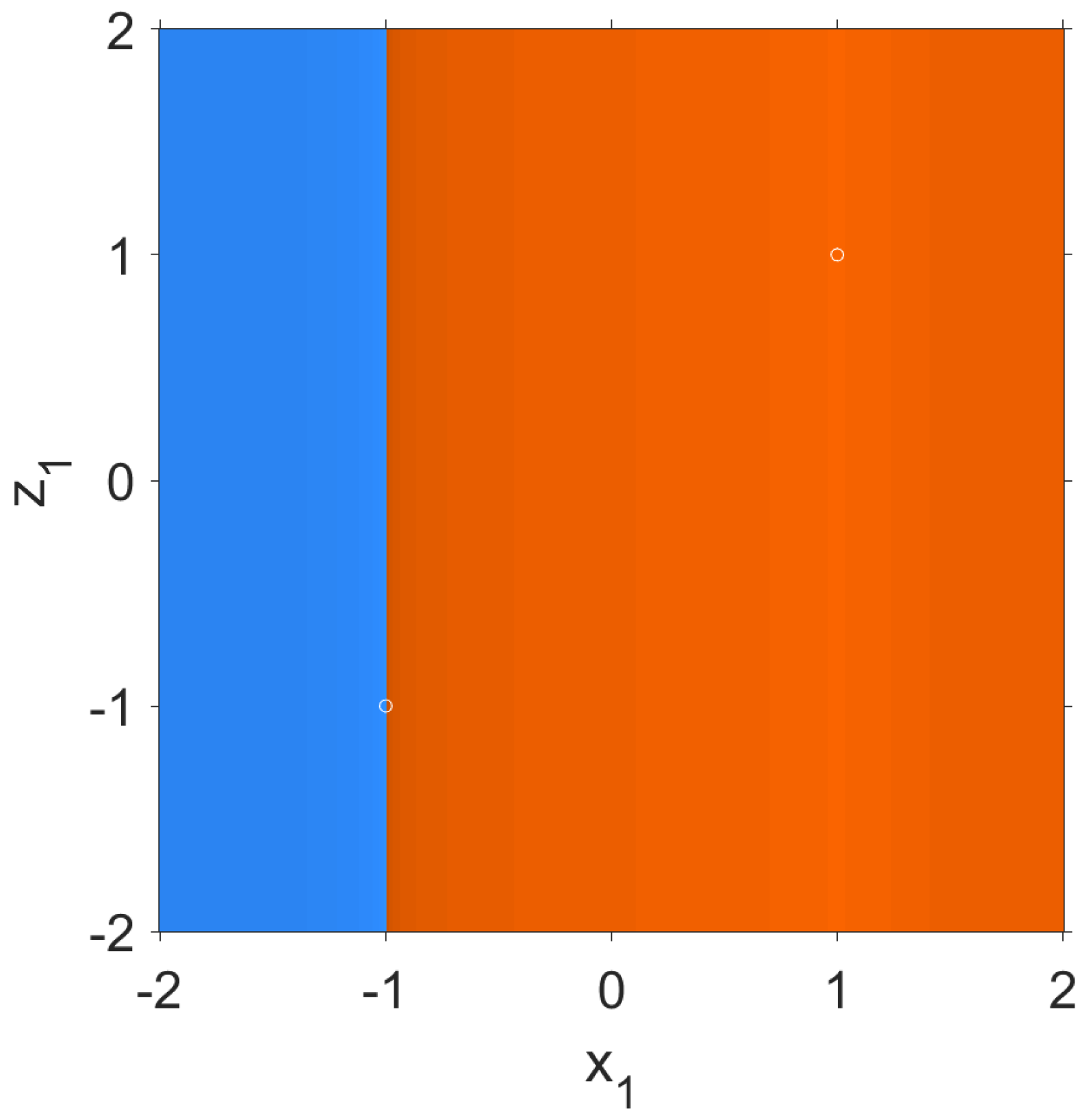

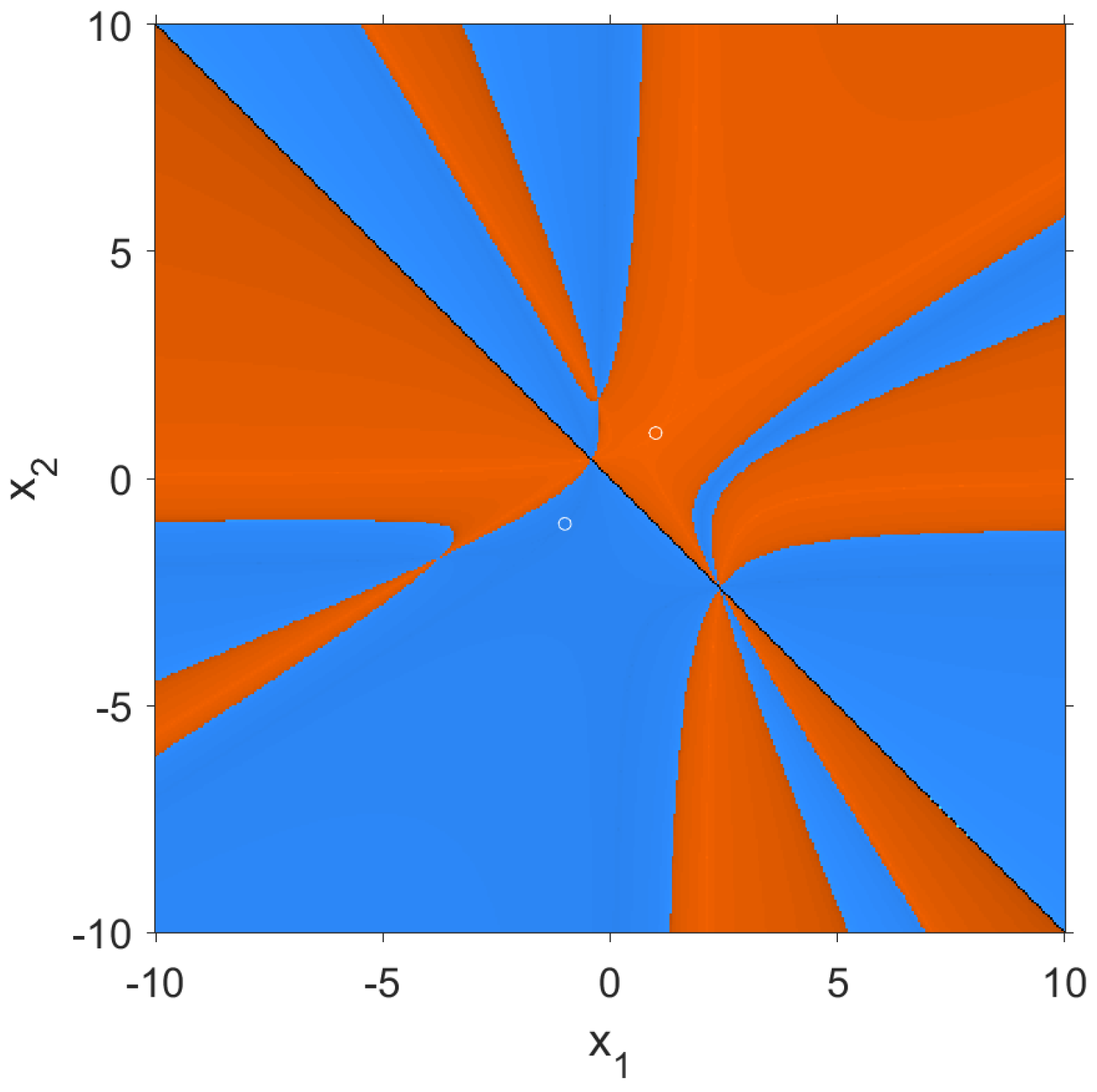

- The asymptotic behaviour of the critical points is analysed with the following plane, where convergence to each fixed point is shown in a different colour. If the distance from the iterate to a fixed point is less than , we say that the iteration is in the basin of attraction of that fixed point. A critical point is painted orange if it converges to , blue if it converges to the strange fixed point , red if it converges to the strange fixed point and green if it converges to the point . Points painted black have not converged to any of the fixed points in fewer than 40 iterations. In this case, we have a fixed value , and the value depends on , so the variables on the axes are and , as shown in Figure 4.

- As in the previous case, we study the convergence of the critical points of type and of type . In these cases, the value is also fixed and the value depends on ; for this reason, the variables on the axes are and , as can be seen in Figure 5. Both types of critical points behave in the same way, so we show only one dynamical plane.

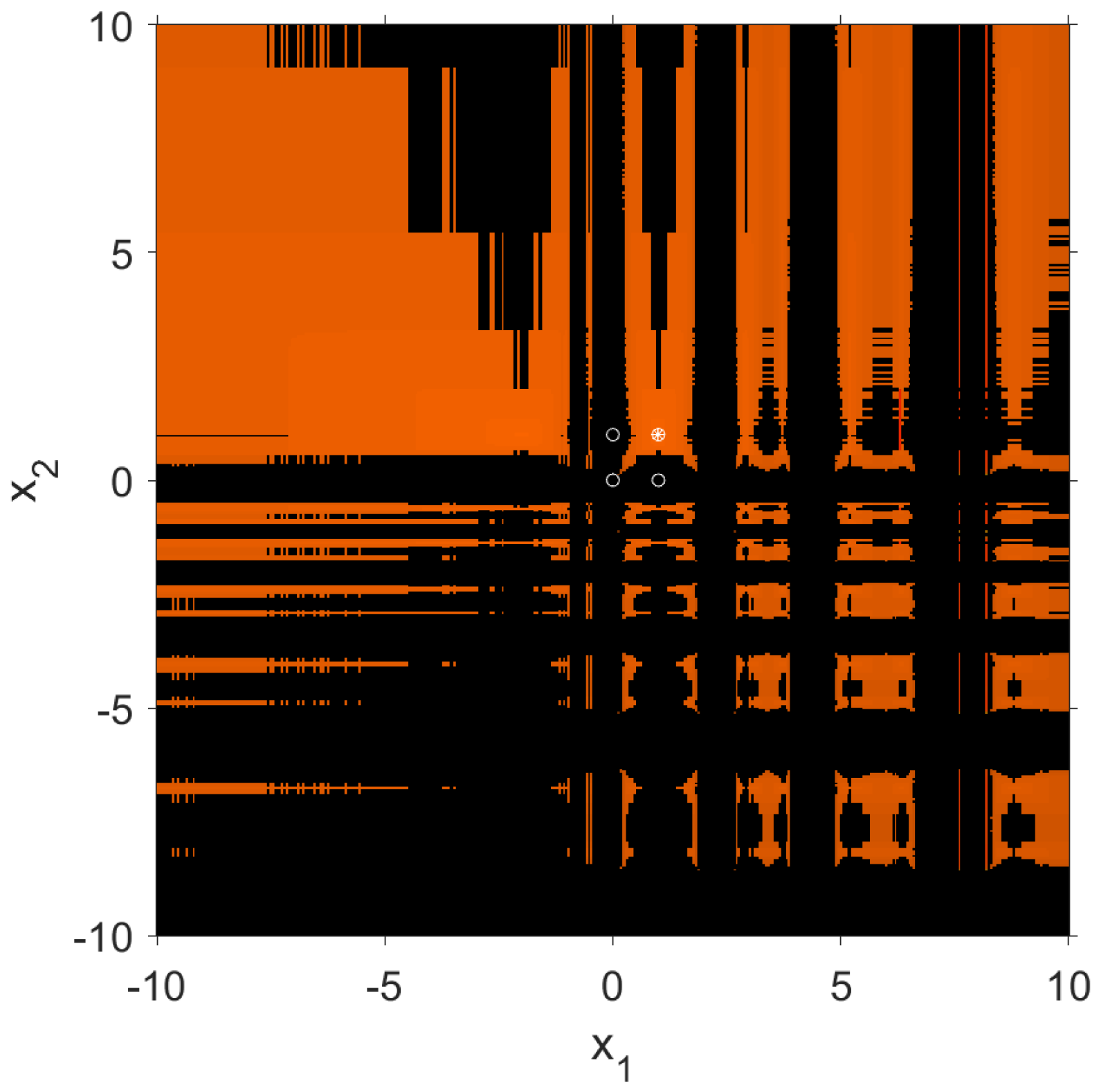

- For the critical points of type , the convergence study is similar to the previous ones, but in this case none of the variables is fixed: and depend on and , respectively. For this reason, the axis variables of the dynamical plane are and , as shown in Figure 6.

- For the critical points of type and , the variables on the axes are again and , as shown in Figure 7. We show only one dynamical plane because both types of critical points behave in the same way.

- For the critical points of type , and , the variables on the axes are also and . These three types of critical points behave in the same way, so we show only one dynamical plane (Figure 8).
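The colouring rule used for these planes (one colour per fixed point reached, black when no fixed point is reached within the iteration budget) can be sketched as below. This is a hedged illustration, not the scheme analysed in the paper: it applies the classical scalar Steffensen iteration to the stand-in function `quadratic(x) = x^2 - 1`, the tolerance `1e-3` is an assumed value, and the names `steffensen_step`, `basin_colour` and `COLOURS` are hypothetical.

```python
import math

# One colour per fixed point, in the order used in the text; black = no convergence.
COLOURS = ["orange", "blue", "red", "green"]


def quadratic(x):
    """Stand-in test function with roots 1 and -1 (an assumption)."""
    return x * x - 1.0


def steffensen_step(f, x):
    """Classical scalar Steffensen step; returns None if it is undefined."""
    fx = f(x)
    if fx == 0.0:
        return x                         # already at a root
    denom = f(x + fx) - fx
    if denom == 0.0 or not math.isfinite(denom):
        return None
    return x - fx * fx / denom


def basin_colour(f, x0, fixed_points, tol=1e-3, max_iter=40):
    """Colour of the fixed point whose tol-neighbourhood the orbit of x0 enters."""
    x = x0
    for _ in range(max_iter):
        x = steffensen_step(f, x)
        if x is None or not math.isfinite(x):
            return "black"
        for colour, p in zip(COLOURS, fixed_points):
            if abs(x - p) < tol:
                return colour
    return "black"


print(basin_colour(quadratic, 1.2, [1.0, -1.0]))   # orange
print(basin_colour(quadratic, -1.2, [1.0, -1.0]))  # blue
```

Evaluating `basin_colour` over a mesh of starting values gives a coloured plane of the kind described above, with the list of fixed points supplied by the stability analysis.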

3.2. A Coupled Second-Order System

3.2.1. Kurchatov’s Scheme

- where .

- where .

3.2.2. Steffensen’s Scheme

- , which is a superattractor point.

- , which is a superattractor point.

- , which is a strange fixed point and not hyperbolic.

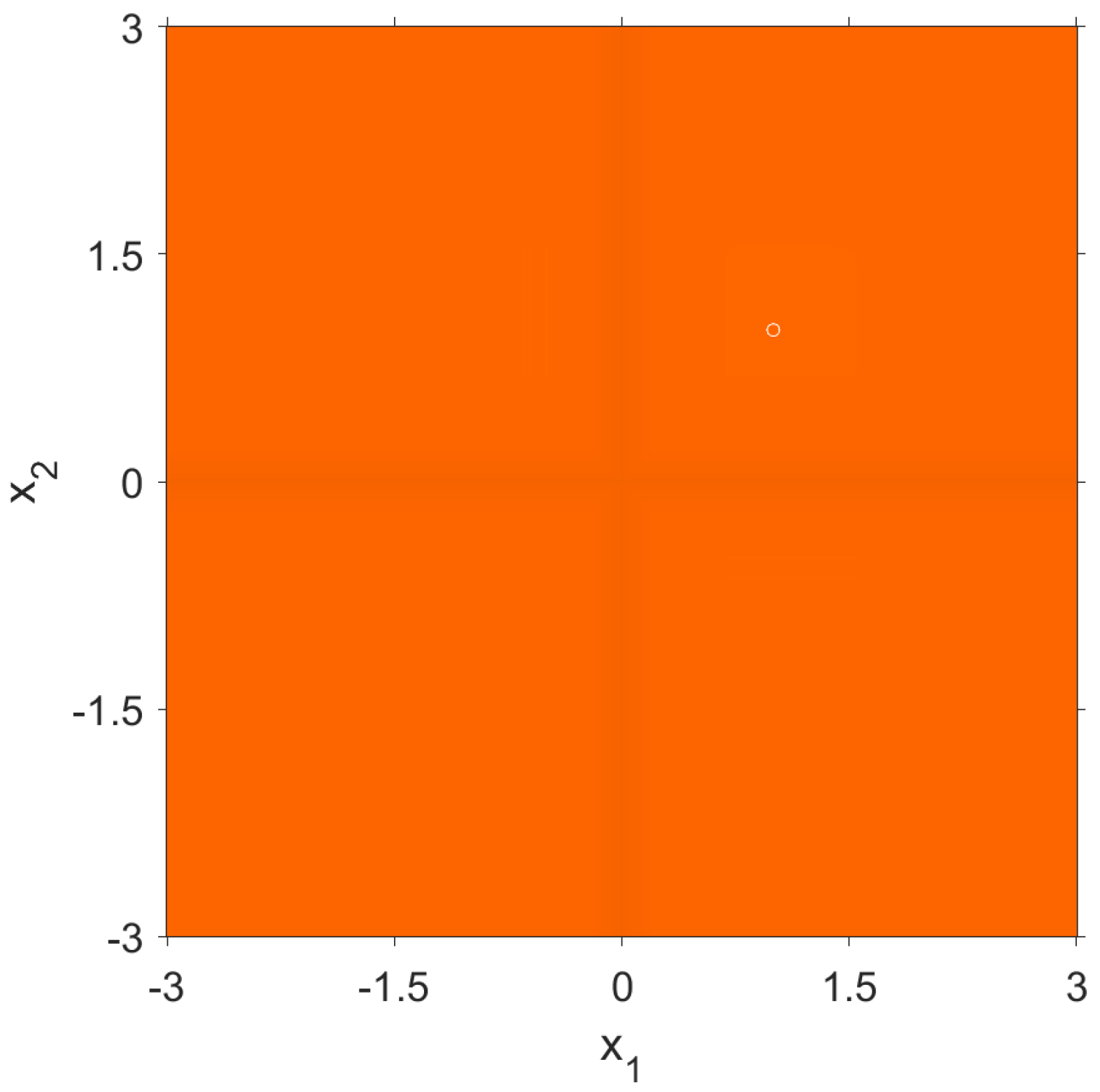

- Since evaluated at the , and points is , those types of critical points belong to the basin of attraction of .

- Since evaluated at the , and points is , those types of critical points belong to the basin of attraction of .

4. Conclusions


References

1. Ardelean, G. A comparison between iterative methods by using the basins of attraction. Appl. Math. Comput. 2011, 218, 88–95.

2. Susanto, H.; Karjanto, N. Newton’s method’s basins of attraction revisited. Appl. Math. Comput. 2009, 215, 1084–1090.

3. Epureanu, B.; Greenside, H. Fractal Basins of Attraction Associated with a Damped Newton’s Method. SIAM Rev. 1998, 40, 102–109.

4. Magreñán, A. A new tool to study real dynamics: The Convergence Plane. Appl. Math. Comput. 2014, 248, 215–224.

5. Neta, B.; Chun, C.; Scott, M. Basins of attraction for optimal eighth order methods to find simple roots of nonlinear equations. Appl. Math. Comput. 2014, 227, 567–592.

6. Salimi, M.; Lotfi, T.; Sharifi, S.; Siegmund, S. Optimal Newton Secant like methods without memory for solving nonlinear equations with its dynamics. Int. J. Comput. Math. 2017, 94, 1759–1777.

7. Soleimani, F.; Soleymani, F.; Shateyi, S. Some Iterative Methods Free from Derivatives and Their Basins of Attraction for Nonlinear Equations. Discret. Dyn. Nat. Soc. 2013, 2013, 301718.

8. Campos, B.; Cordero, A.; Torregrosa, J.R.; Vindel, P. Stability of King’s family of iterative methods with memory. Appl. Math. Comput. 2015, 271, 701–715.

9. Wang, X.; Zhang, T.; Qin, Y. Efficient two-step derivative-free iterative methods with memory and their dynamics. Int. J. Comput. Math. 2016, 93, 1423–1446.

10. Campos, B.; Cordero, A.; Torregrosa, J.; Vindel, P. A multidimensional dynamical approach to iterative methods with memory. Comput. Appl. Math. 2017, 318, 504–514.

11. Chicharro, F.; Cordero, A.; Garrido, N.; Torregrosa, J.R. On the effect of the multidimensional weight functions on the stability of iterative processes. Comput. Appl. Math. 2022, 405, 113052.

12. Cordero, A.; Jordán, C.; Sanabria-Codesal, E.; Torregrosa, J.R. Design, convergence and stability of a fourth-order class of iterative methods for solving nonlinear vectorial problems. Fractal Fract. 2021, 5, 125.

13. Cordero, A.; Soleymani, F.; Torregrosa, J.R. Dynamical analysis of iterative methods for nonlinear systems or how to deal with the dimension? Appl. Math. Comput. 2014, 244, 398–412.

14. Wang, X.; Chen, X. Derivative-Free Kurchatov-Type Accelerating Iterative Method for Solving Nonlinear Systems: Dynamics and Applications. Fractal Fract. 2022, 6, 59.

15. Liu, Y.; Pang, G. The basin of attraction of the Liu system. Commun. Nonlinear Sci. Numer. Simul. 2011, 16, 2065–2071.

16. Robinson, R.C. An Introduction to Dynamical Systems: Continuous and Discrete; Pearson Education: Cranbury, NJ, USA, 2004.

17. Silvester, J. Determinants of Block Matrices. Math. Assoc. 2000, 84, 460–467.

18. Kurchatov, V.A. On a method of linear interpolation for the solution of functional equations (Russian). Dokl. Akad. Nauk SSSR 1971, 198, 524–526; translation in Soviet Math. Dokl. 1971, 12, 835–838.

19. Traub, J.F. Iterative Methods for the Solution of Equations; Prentice Hall: Englewood Cliffs, NJ, USA, 1964.


© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Cordero, A.; Garrido, N.; Torregrosa, J.R.; Triguero-Navarro, P. Symmetry in the Multidimensional Dynamical Analysis of Iterative Methods with Memory. Symmetry 2022, 14, 442. https://doi.org/10.3390/sym14030442
