Fabric Surface Defect Detection Using SE-SSDNet

Abstract

1. Introduction

2. Related Work—Deep Learning-Based Method for Fabric Defect

- (1)

- We propose a novel deep learning algorithm SE-SSD Net which can be used for fabric defect detection.

- (2)

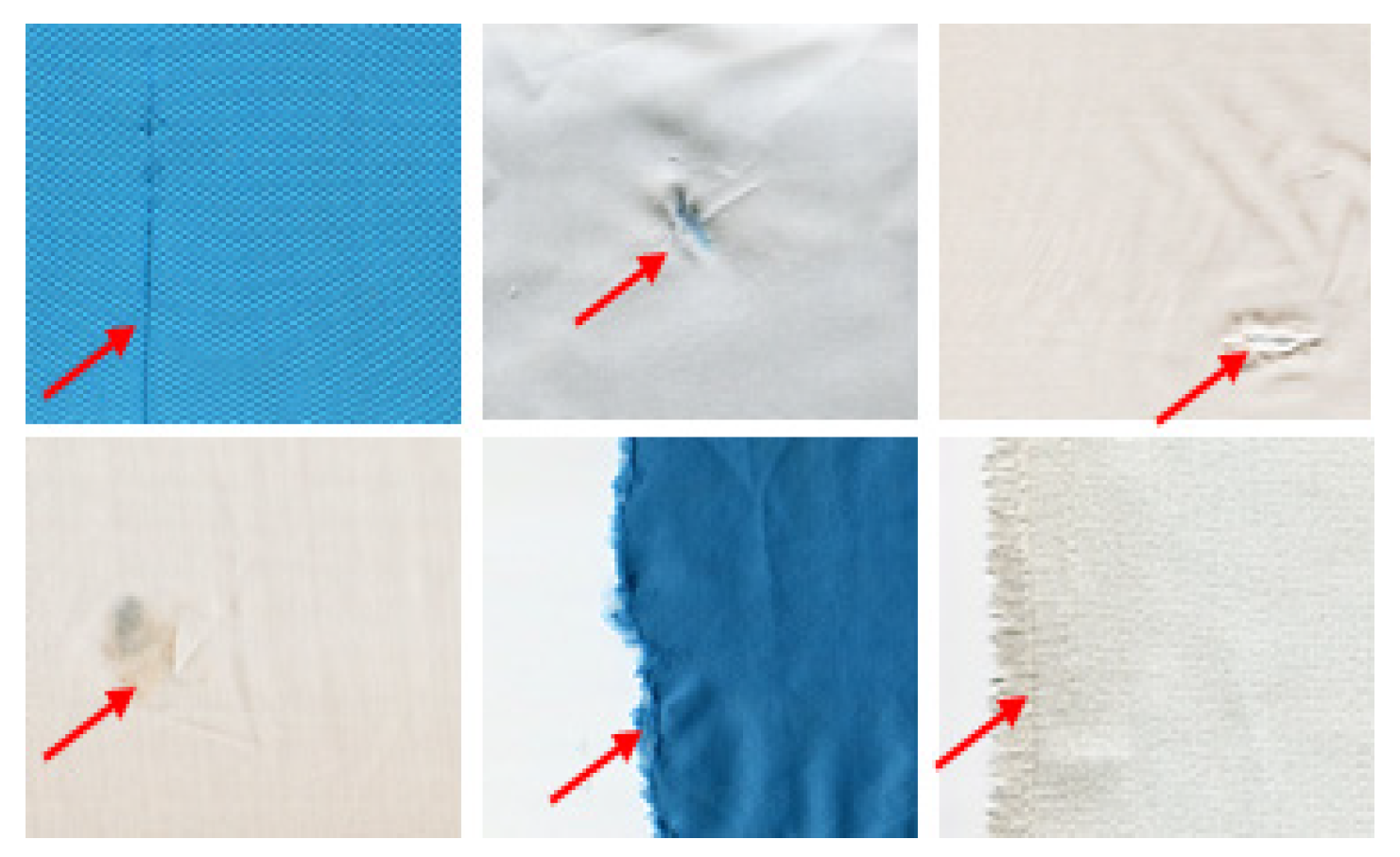

- Compared with traditional methods, our proposed method can detect six different types of defects in colored fabrics.

- (3)

- Our proposed method can adapt to the textured background of fabrics of varying complexity.

- (4)

- The method overcomes the interference of image noise and improves the efficiency of detection. In addition, to better suit the industrialization needs, we conducted experimental validation on the textile defect dataset from Xi’an Polytechnic University and our own dataset, and the results proved that the method is the state-of-the-art method to solve such problems.

3. Proposed Approach

3.1. Overview

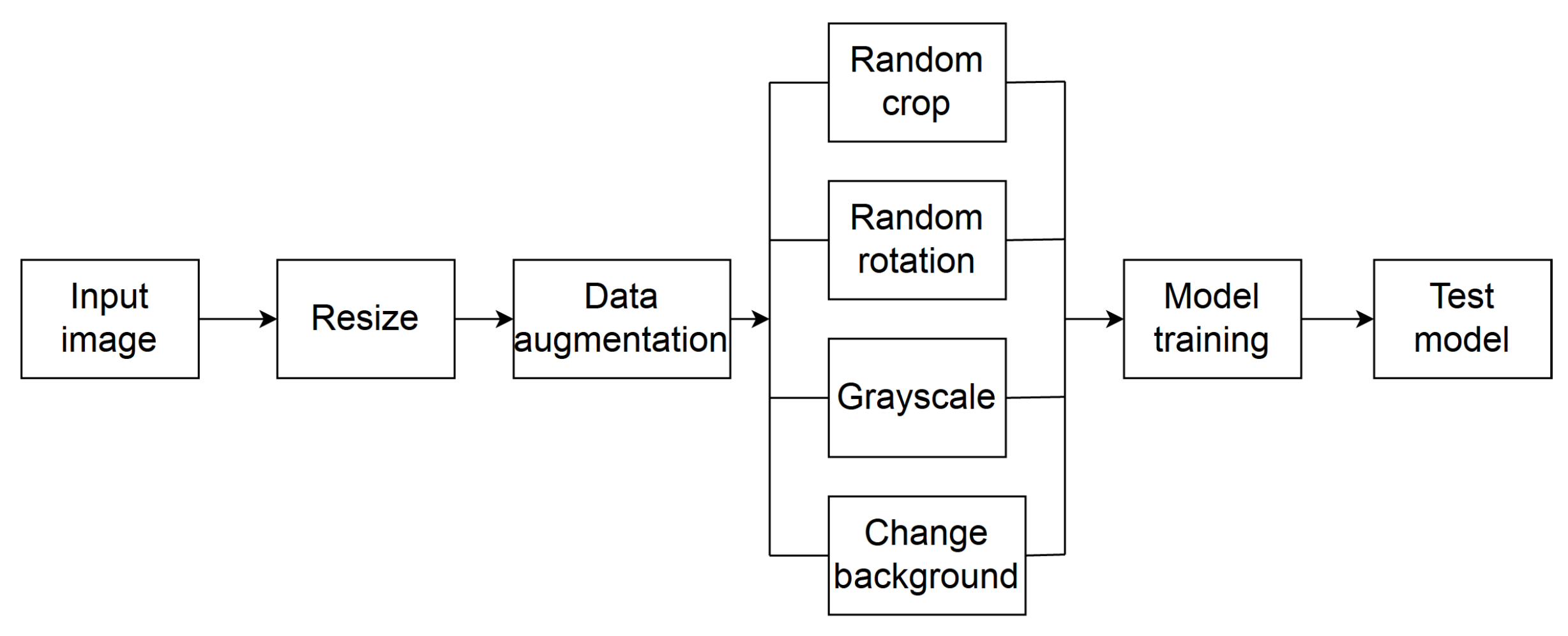

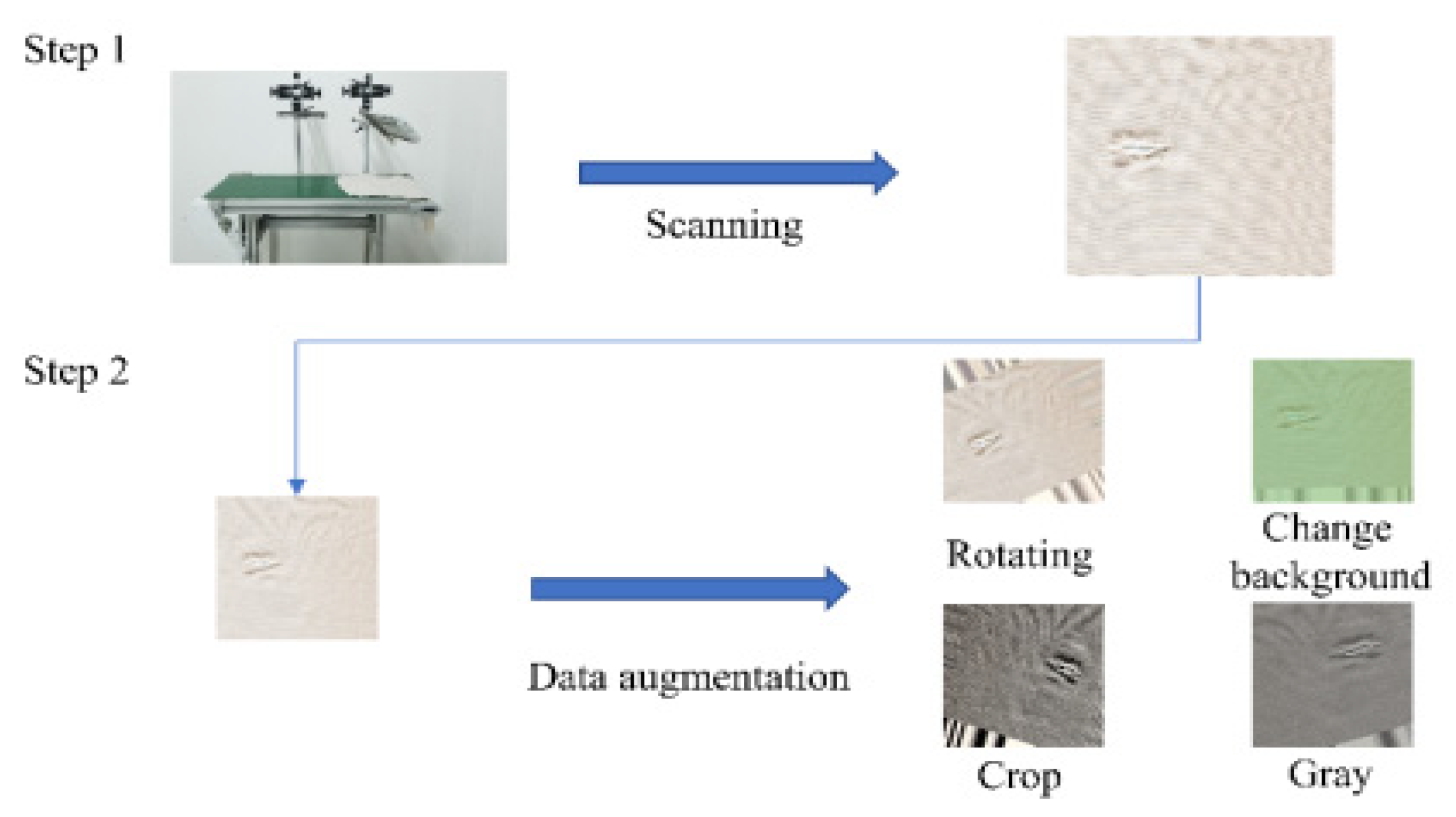

3.2. Data Augmentation

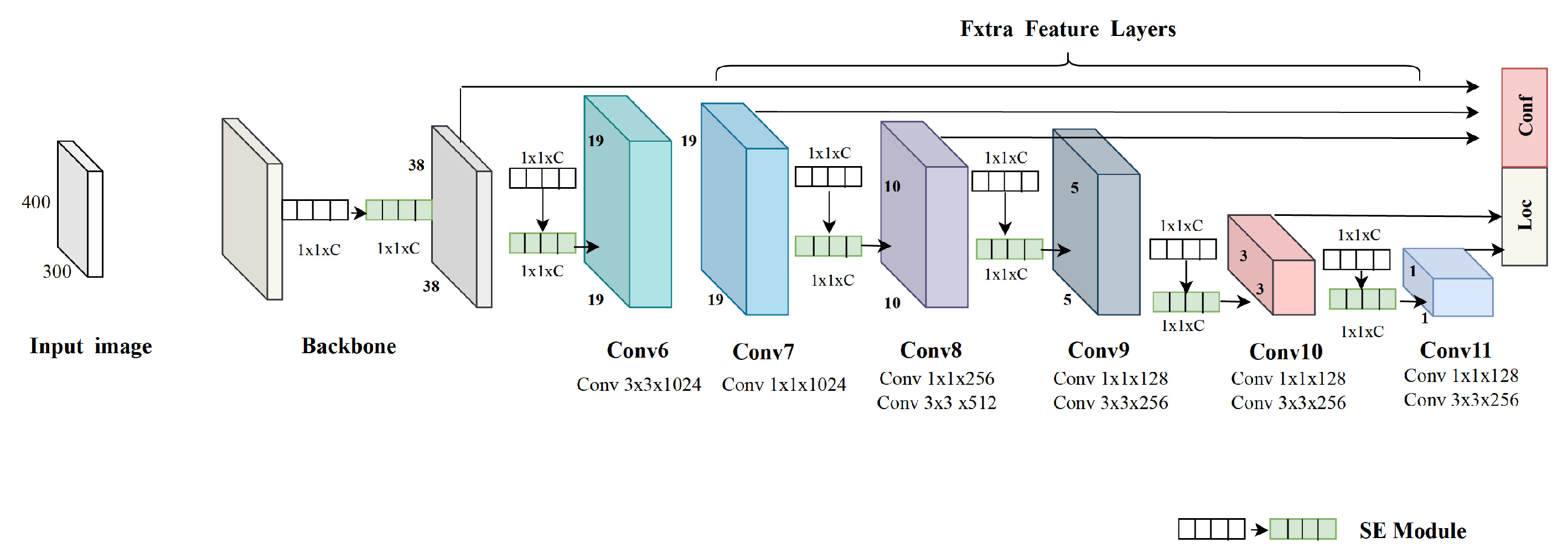

3.3. Network Architecture

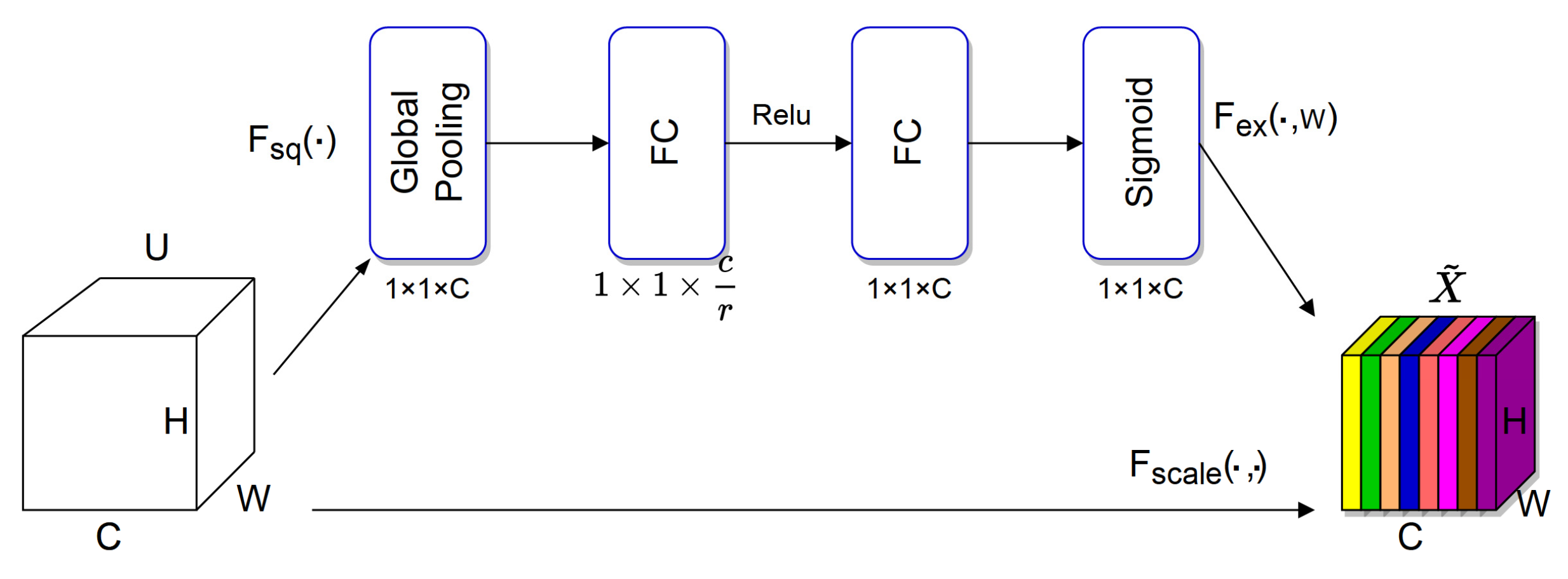

3.3.1. SE Module

3.3.2. Detection Network Architecture

4. Experiments and Discussion

- (1) The proposed detection model is applied to two fabric datasets;

- (2) The proposed defect detection model is compared with three other representative detection models.

4.1. Experimental Setup

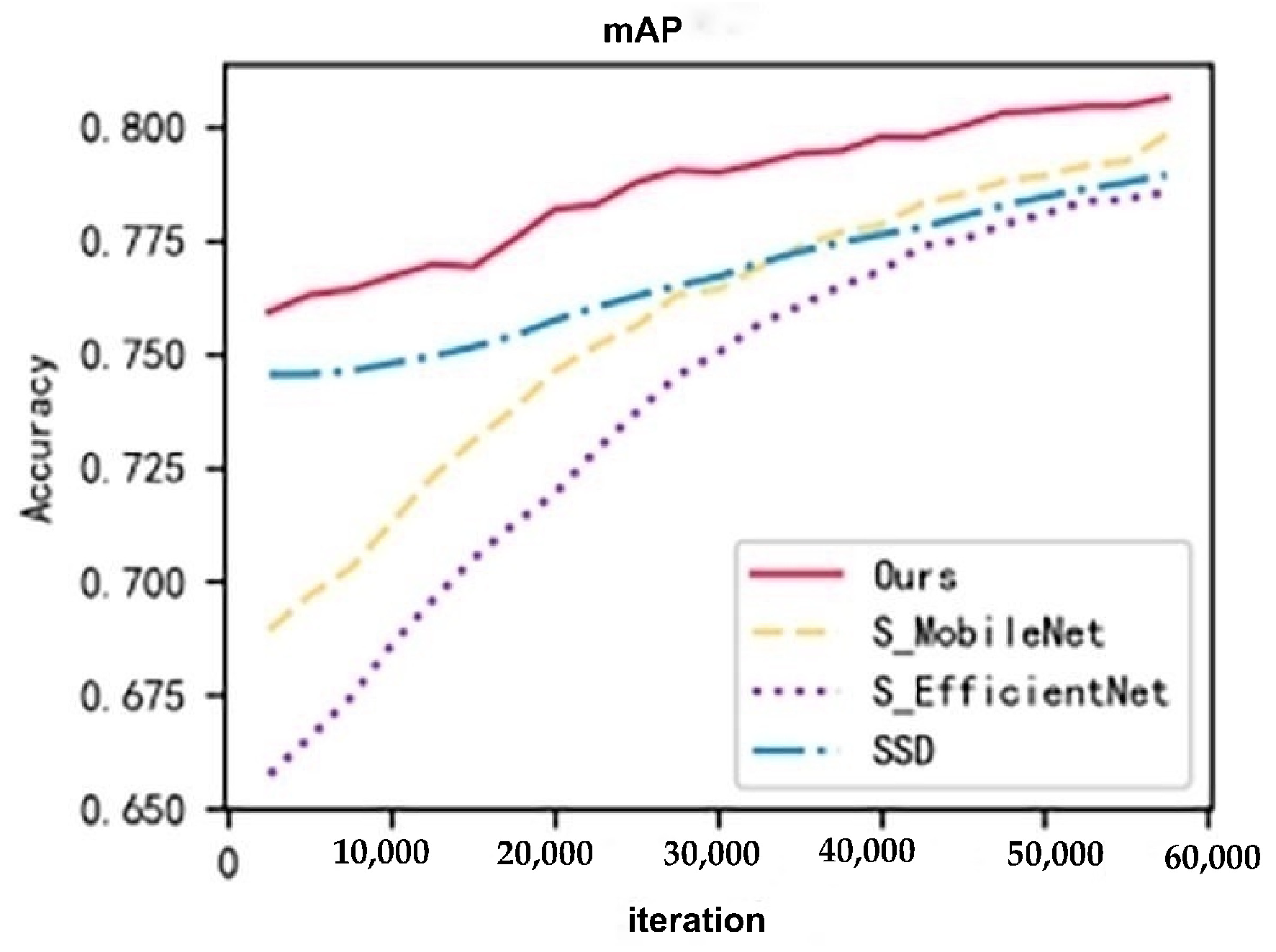

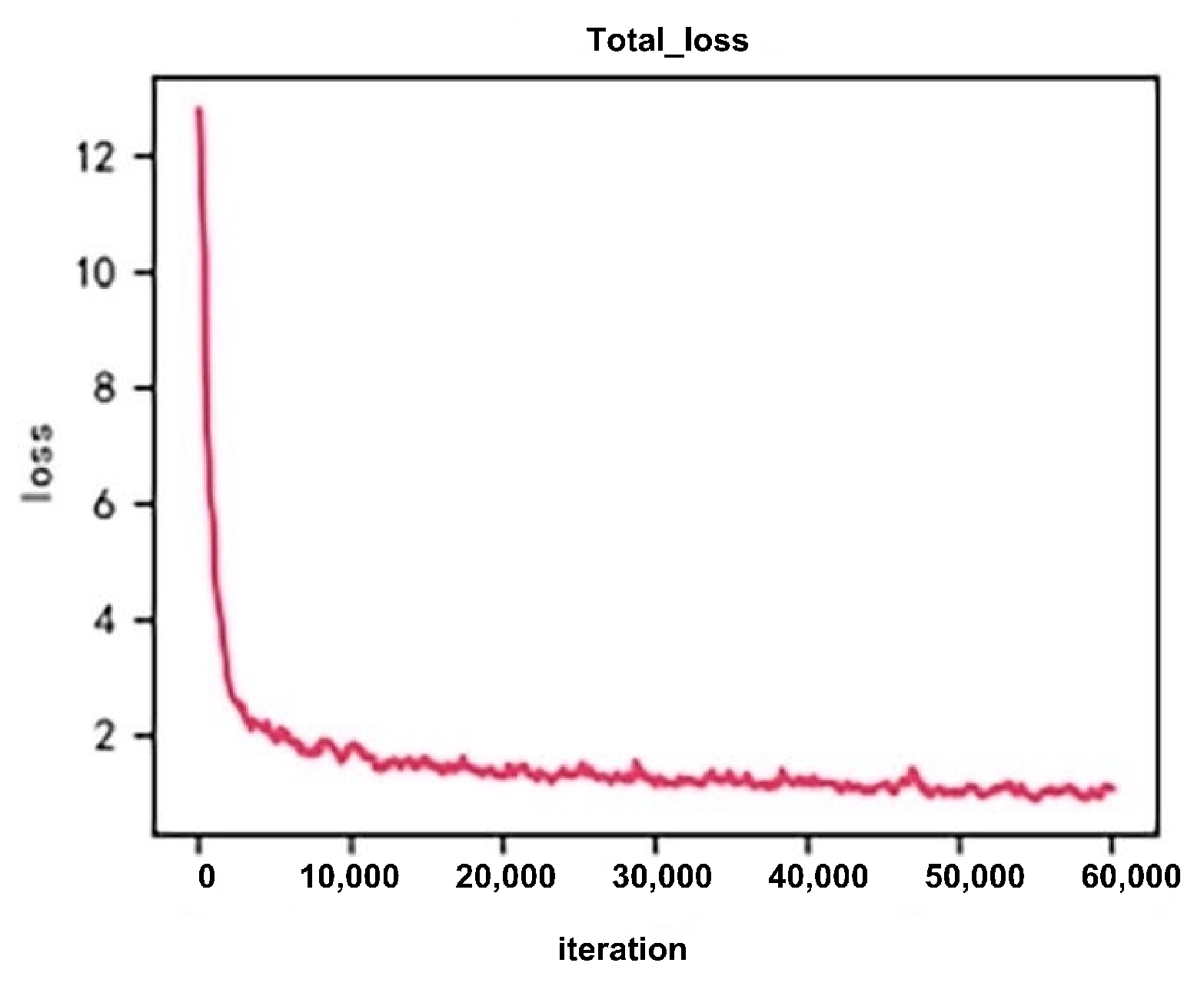

4.2. Result Analysis

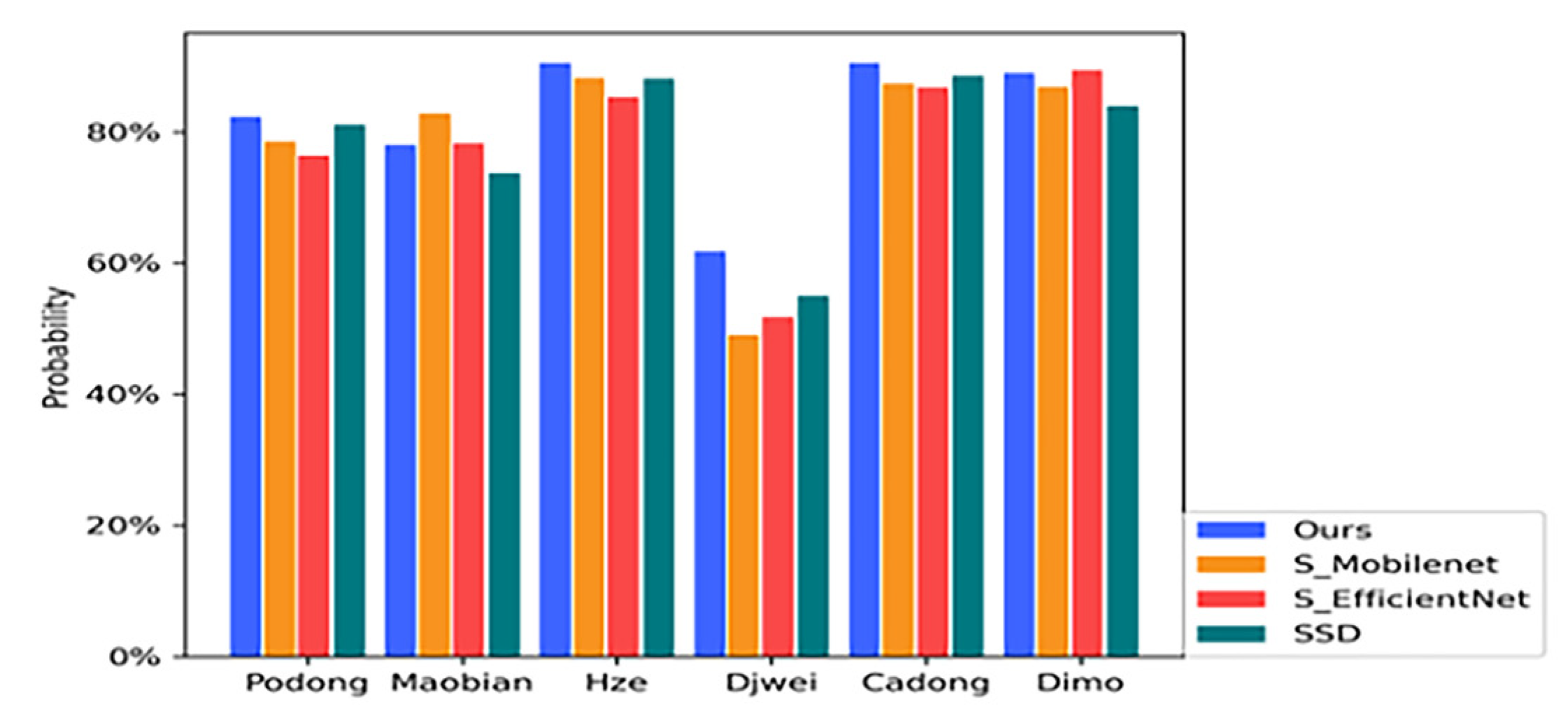

4.3. Method Comparison

4.4. Discussion

- (1) Point defects in fabrics are sometimes misjudged. For such defects, we adopt a fabric surface defect detection algorithm based on a generative adversarial network (GAN) [33] and the Hough transform: the GAN is introduced to simulate defect samples, and a randomized Hough transform is performed on the generated defect samples. The defect location is then plotted on the original fabric image and its coordinates are returned, which greatly improves detection efficiency.

- (2) To reduce the probability that fabric texture causes defects to be misclassified, a traditional fabric surface defect detection algorithm based on L0 gradient minimization smoothing [34] and K-means clustering can be used. Its core idea is to increase the contrast between the defect region and the background region: an image smoothing method removes the fabric texture while retaining the larger edges in the image, and the defect region and the background region are then treated as two different classes of pixels. Finally, K-means clustering segments the defect region from the background region, and the image is binarized so that the defects are completely separated.

- (3) For real-time performance, the SSD model can be compressed by reducing the number of channels and by setting prior (default) box sizes that better match the data, based on the characteristics of fabric defects. In particular, the loss function can be optimized, and different model architectures can be used for fabrics with different textures. Because training SE-SSD takes a long time, the dataset can be split proportionally, with 90% used as the training set and 10% as the test set. Specific data augmentation is applied to the training set, using color space transformations to simulate various lighting conditions and thereby improve the generalization ability of the model. The next step will focus on the effect of fabric parameters on the model, in order to improve the real-time performance of model prediction.
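Item (2) above combines L0 gradient minimization smoothing with K-means clustering. The following is a minimal sketch of one possible reading of that pipeline, not the code of [34] (whose title indicates fuzzy C-means rather than K-means). It assumes a grayscale image in [0, 1], smooths it with the alternating-minimization L0 solver of Xu et al. (2011) under periodic boundaries, runs two-class K-means on the smoothed intensities, and binarizes by assuming the defect is the smaller cluster. The function names, the parameter values (`lam`, `kappa`, `beta_max`), and the synthetic test image are illustrative assumptions.

```python
import numpy as np


def l0_smooth(img, lam=0.02, kappa=2.0, beta_max=1e5):
    """L0 gradient minimization smoothing (Xu et al., 2011) of a 2-D grayscale
    image with values in [0, 1]. Assumes periodic (wrap-around) boundaries so
    that the S-subproblem can be solved exactly with FFTs."""
    img = np.asarray(img, dtype=np.float64)
    H, W = img.shape
    # Forward-difference kernels for circular convolution:
    # (kx * S)[i, j] = S[i, j+1] - S[i, j] and (ky * S)[i, j] = S[i+1, j] - S[i, j].
    kx = np.zeros((H, W))
    kx[0, 0], kx[0, W - 1] = -1.0, 1.0
    ky = np.zeros((H, W))
    ky[0, 0], ky[H - 1, 0] = -1.0, 1.0
    Fx, Fy = np.fft.fft2(kx), np.fft.fft2(ky)
    grad_energy = np.abs(Fx) ** 2 + np.abs(Fy) ** 2
    F_img = np.fft.fft2(img)

    S = img.copy()
    beta = 2.0 * lam
    while beta < beta_max:
        # (h, v)-subproblem: keep a gradient only where it outweighs its L0 cost.
        h = np.roll(S, -1, axis=1) - S
        v = np.roll(S, -1, axis=0) - S
        small = h ** 2 + v ** 2 < lam / beta
        h[small] = 0.0
        v[small] = 0.0
        # S-subproblem: closed-form least-squares solution in the Fourier domain.
        numer = F_img + beta * (np.conj(Fx) * np.fft.fft2(h) + np.conj(Fy) * np.fft.fft2(v))
        S = np.real(np.fft.ifft2(numer / (1.0 + beta * grad_energy)))
        beta *= kappa
    return S


def kmeans_binarize(smoothed, iters=100):
    """Two-class K-means on pixel intensities, returned as a binary defect mask.
    Assumption: the defect is the smaller (minority) of the two clusters."""
    x = np.asarray(smoothed, dtype=np.float64).ravel()
    centers = np.array([x.min(), x.max()])
    for _ in range(iters):
        # Assign each pixel to the nearest center, then recompute the centers.
        labels = np.argmin(np.abs(x[:, None] - centers[None, :]), axis=1)
        new_centers = np.array(
            [x[labels == k].mean() if np.any(labels == k) else centers[k] for k in range(2)]
        )
        if np.allclose(new_centers, centers):
            break
        centers = new_centers
    defect_label = np.argmin(np.bincount(labels, minlength=2))
    return (labels == defect_label).reshape(np.shape(smoothed))


# Hypothetical synthetic fabric: a periodic striped texture with a dark 10x10 defect.
xx = np.arange(64)[None, :].repeat(64, axis=0)
fabric = 0.6 + 0.05 * np.sin(2 * np.pi * xx / 4)
fabric[20:30, 20:30] = 0.1
mask = kmeans_binarize(l0_smooth(fabric))
print("defect pixels:", int(mask.sum()))
```

Smoothing first matters because the stripe texture would otherwise contribute intensity variation to both clusters; after L0 smoothing the background is close to piecewise constant, so a two-cluster split on intensity alone separates the defect.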
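Item (3) mentions a 90%/10% train/test split and color space augmentation of the training set to simulate lighting changes. A minimal sketch, assuming images are float RGB arrays in [0, 1] and that lighting changes are simulated by randomly scaling the saturation and value (brightness) channels in HSV space; the text does not specify the color space or the ranges, so `split_dataset`, `lighting_jitter`, and the jitter ranges are hypothetical.

```python
import colorsys
import random

import numpy as np


def split_dataset(items, train_ratio=0.9, seed=0):
    """Shuffle samples and split them into (train, test) by ratio, e.g. the
    90%/10% split described in the text. Hypothetical helper."""
    items = list(items)
    random.Random(seed).shuffle(items)
    n_train = int(round(len(items) * train_ratio))
    return items[:n_train], items[n_train:]


# Element-wise wrappers around the standard-library HSV conversions.
_rgb_to_hsv = np.vectorize(colorsys.rgb_to_hsv)
_hsv_to_rgb = np.vectorize(colorsys.hsv_to_rgb)


def lighting_jitter(img, rng, max_v=0.3, max_s=0.2):
    """Simulate a lighting change on a float RGB image in [0, 1], shape (H, W, 3),
    by randomly scaling its HSV value and saturation channels.
    The jitter ranges are assumptions, not values from the paper."""
    h, s, v = _rgb_to_hsv(img[..., 0], img[..., 1], img[..., 2])
    v = np.clip(v * (1.0 + rng.uniform(-max_v, max_v)), 0.0, 1.0)
    s = np.clip(s * (1.0 + rng.uniform(-max_s, max_s)), 0.0, 1.0)
    r, g, b = _hsv_to_rgb(h, s, v)
    return np.stack([r, g, b], axis=-1)


# Usage: augment only the training split; the test split stays untouched.
rng = np.random.default_rng(42)
images = [rng.random((32, 32, 3)) for _ in range(20)]
train_imgs, test_imgs = split_dataset(images)
augmented = [lighting_jitter(im, rng) for im in train_imgs]
```

Keeping augmentation out of the test split means the reported test scores are measured on unmodified images.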

5. Conclusions and Future Work

5.1. Conclusions

5.2. Future Work

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

1. Hanbay, K.; Talu, M.F.; Özgüven, Ö.F. Fabric defect detection systems and methods—A systematic literature review. Optik 2016, 127, 11960–11973.

2. Bullon, J.; Arrieta, A.G.; Encinas, A.H.; Dios, A.Q. Manufacturing processes in the textile industry. Expert Systems for fabrics production. Adv. Distrib. Comput. Artificial Intell. J. 2017, 6, 15–23.

3. Singh, K.; Kaleka, J.; Kaleka, J. Identification and classification of fabric defects. Int. J. Adv. Res. 2016, 4, 1137–1141.

4. Song, L.; Li, R.; Chen, S. Fabric defect detection based on membership degree of regions. IEEE Access 2020, 99, 1.

5. Li, Y.; Zhang, D.; Lee, D.-J. Automatic fabric defect detection with a wide-and-compact network. Neurocomputing 2019, 329, 329–338.

6. Patil, M.; Verma, S.; de Wako, J. A review on fabric defect detection techniques. Int. Res. J. Eng. 2017, 4, 131–136.

7. Zhao, Y.; Hao, K.; He, H.; Tang, X.; Wei, B. A visual long-short-term memory based integrated CNN model for fabric defect image classification. Neurocomputing 2019, 380, 259–270.

8. Peng, P.; Wang, Y.; Hao, C.; Zhu, Z.; Liu, T.; Zhou, W. Automatic Fabric Defect Detection Method Using PRAN-Net. Appl. Sci. 2020, 10, 8434.

9. Li, C.; Li, J.; Li, Y.; He, L.; Fu, X.; Chen, J. Fabric Defect Detection in Textile Manufacturing: A Survey of the State of the Art. Secur. Commun. Netw. 2021, 2021, 13.

10. Liu, Z.; Liu, S.; Li, C. Fabric defects detection based on SSD. In Proceedings of the 2nd International Conference on Graphics and Signal Processing: ICGSP'18, Sydney, Australia, 6–8 October 2018.

11. Jin, L.; Liu, G. An Approach on Image Processing of Deep Learning Based on Improved SSD. Symmetry 2021, 13, 495.

12. Zhou, H.; Jang, B.; Chen, Y.; Troendle, D. Exploring faster RCNN for fabric defect detection. In Proceedings of the 2020 Third International Conference on Artificial Intelligence for Industries (AI4I), Irvine, CA, USA, 21–23 September 2020.

13. Zhao, Z.; Gui, K.; Wang, P. Fabric defect detection based on cascade faster R-CNN. In Proceedings of the 4th International Conference on Computer Science and Application Engineering, Sanya, China, 20–22 October 2020.

14. Wu, J.; Le, J.; Xiao, Z.; Zhang, F.; Geng, L.; Liu, Y.; Wang, W. Automatic fabric defect detection using a wide-and-light network. Appl. Intell. 2021, 51, 4945–4961.

15. Jun, X.; Wang, J.; Zhou, J.; Meng, S.; Pan, R.; Gao, W. Fabric defect detection based on a deep convolutional neural network using a two-stage strategy. Text. Res. J. 2020, 91, 130–142.

16. Ouyang, W.; Xu, B.; Hou, J.; Yuan, X. Fabric defect detection using activation layer embedded convolutional neural network. IEEE Access 2019, 7, 70130–70140.

17. An, M.; Wang, S.; Zheng, L.; Liu, X. Fabric defect detection using deep learning: An improved faster r-approach. In Proceedings of the International Conference on Computer Vision, Image and Deep Learning, Chongqing, China, 10–12 July 2020; pp. 319–324.

18. Zhang, K.; Yan, Y.; Li, P.; Jing, J.; Liu, X.; Wang, Z. Fabric Defect Detection Using Salience Metric for Color Dissimilarity and Positional Aggregation. IEEE Access 2018, 6, 49170–49181.

19. Hu, J.; Shen, L.; Sun, G. Squeeze-and-Excitation networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–23 June 2018; pp. 7132–7141.

20. Zhu, Z.; Han, G.; Jia, G.; Shu, L. Modified DenseNet for automatic fabric defect detection with edge computing for minimizing latency. IEEE Internet Things J. 2020, 7, 9623–9636.

21. Wang, Z.; Jing, J.F. Pixel-wise fabric defect detection by CNNs without labeled training data. IEEE Access 2020, 8, 161317–161325.

22. Huang, Y.Q.; Jing, J.F.; Wang, Z. Fabric defect segmentation method based on deep learning. IEEE Trans. Instrum. Meas. 2021, 70, 1–15.

23. Pourkaramdel, Z.; Fekri-Ershad, S.; Nanni, L. Fabric defect detection based on completed local quartet patterns and majority decision algorithm. Expert Syst. Appl. 2022, 198, 116827.

24. Fekri-Ershad, S.; Tajeripour, F. Multi-Resolution and Noise-Resistant Surface Defect Detection Approach Using New Version of Local Binary Patterns. Appl. Artif. Intell. 2017, 31, 395–410.

25. Hu, J.; Shen, L.; Albanie, S.; Sun, G.; Wu, E. Squeeze-and-Excitation Networks. IEEE Trans. Pattern Anal. Mach. Intell. 2020, 42, 2011–2023.

26. Liu, W.; Anguelov, D.; Erhan, D.; Szegedy, C.; Reed, S.; Fu, C.-Y.; Berg, A.C. SSD: Single Shot MultiBox Detector. In Computer Vision – ECCV 2016; Lecture Notes in Computer Science; Springer International Publishing AG: Berlin/Heidelberg, Germany, 2016; pp. 21–37.

27. Howard, A.; Sandler, M.; Chu, G.; Chen, L.-C.; Chen, B.; Tan, M.; Wang, W.; Zhu, Y.; Pang, R.; Vasudevan, V.; et al. Searching for MobileNetV3. In Proceedings of the 2019 IEEE/CVF International Conference on Computer Vision (ICCV), Seoul, Korea, 27 October–2 November 2019; pp. 1314–1324.

28. Simonyan, K.; Zisserman, A. Very deep convolutional networks for large-scale image recognition. arXiv 2014, arXiv:1409.1556.

29. Chu, J.; Zhang, Y.; Li, S.; Leng, L.; Miao, J. Syncretic-NMS: A Merging Non-Maximum Suppression Algorithm for Instance Segmentation. IEEE Access 2020, 8, 114705–114714.

30. Rezatofighi, H.; Tsoi, N.; Gwak, J.; Sadeghian, A.; Reid, I.; Savarese, S. Generalized intersection over union: A metric and a loss for bounding box regression. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Long Beach, CA, USA, 15–20 June 2019; pp. 658–666.

31. Li, X.; Wang, W.; Hu, X.; Li, J.; Tang, J.; Yang, J. Generalized focal loss v2: Learning reliable localization quality estimation for dense object detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Nashville, TN, USA, 19–25 June 2021; pp. 11632–11641.

32. Nemoto, K.; Hamaguchi, R.; Imaizumi, T.; Hikosaka, S. Classification of rare building change using CNN with multi-class focal loss. In Proceedings of the 2018 IEEE International Geoscience and Remote Sensing Symposium, Valencia, Spain, 22–27 July 2018; pp. 4663–4666.

33. Liu, J.; Wang, C.; Su, H.; Du, B.; Tao, D. Multistage GAN for Fabric Defect Detection. IEEE Trans. Image Process. 2020, 29, 3388–3400.

34. Zhang, H.; Ma, J.; Jing, J.; Li, P. Fabric Defect Detection Using L0 Gradient Minimization and Fuzzy C-Means. Appl. Sci. 2019, 9, 3506.

| One-Stage Detectors | Two-Stage Detectors |
|---|---|
| YOLO | Faster R-CNN |
| SSD | Mask R-CNN |
| YOLOv5/v6/v7 | Cascade R-CNN |
| RefineDet | FPN |
| RetinaNet | R-FCN |

| Model | PoDong | Mobian | Hze | Djwei | Cadong | Dimo |
|---|---|---|---|---|---|---|
| Ours | 82.28% | 78.01% | 90.54% | 61.75% | 90.49% | 86.99% |
| S_Mobilenet | 78.48% | 82.77% | 88.22% | 49.05% | 87.31% | 86.83% |
| S_Efficientnet | 76.39% | 78.21% | 85.26% | 51.75% | 86.70% | 89.35% |
| SSD | 81.10% | 73.72% | 88.11% | 55.03% | 88.54% | 83.88% |
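The per-class results table above can be summarized with a few lines of arithmetic. This sketch assumes that an unweighted mean over the six defect classes is a reasonable overall figure; that averaging choice is an assumption and may differ from how an overall score is defined elsewhere in the paper. `RESULTS`, `class_mean`, and `best_per_class` are hypothetical names, and the numbers are copied from the table.

```python
# Per-class scores (%) copied from the results table, in header order.
CLASSES = ["PoDong", "Mobian", "Hze", "Djwei", "Cadong", "Dimo"]
RESULTS = {
    "Ours": [82.28, 78.01, 90.54, 61.75, 90.49, 86.99],
    "S_Mobilenet": [78.48, 82.77, 88.22, 49.05, 87.31, 86.83],
    "S_Efficientnet": [76.39, 78.21, 85.26, 51.75, 86.70, 89.35],
    "SSD": [81.10, 73.72, 88.11, 55.03, 88.54, 83.88],
}


def class_mean(scores):
    """Unweighted mean over defect classes (assumed summary statistic)."""
    return sum(scores) / len(scores)


def best_per_class(results):
    """Name of the model with the highest score for each defect class."""
    return {cls: max(results, key=lambda m: results[m][i]) for i, cls in enumerate(CLASSES)}


for model, scores in RESULTS.items():
    print(f"{model:15s} {class_mean(scores):.2f}")
# Ours            81.68
# S_Mobilenet     78.78
# S_Efficientnet  77.94
# SSD             78.40
print(best_per_class(RESULTS))
```

The per-class view shows that the highest average does not mean the best score on every class: S_Mobilenet leads on Mobian and S_Efficientnet on Dimo, while the proposed model leads on the other four classes.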


© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Zhao, H.; Zhang, T. Fabric Surface Defect Detection Using SE-SSDNet. Symmetry 2022, 14, 2373. https://doi.org/10.3390/sym14112373
