1. Introduction

In 2019, Wuhan, a commercial center of Hubei province in China, faced an outbreak of a novel coronavirus that killed hundreds and infected thousands of individuals within the first days of the epidemic. Chinese researchers named the novel virus the 2019 novel coronavirus (2019-nCoV) or the Wuhan virus [1]. The International Committee on Taxonomy of Viruses named the virus Severe Acute Respiratory Syndrome Coronavirus 2 (SARS-CoV-2) and the disease Coronavirus disease 2019 (COVID-19) [2,3,4]. The coronavirus family comprises four subgroups: alpha-CoV (α), beta-CoV (β), gamma-CoV (γ), and delta-CoV (δ). SARS-CoV-2 was reported to be a member of the beta-CoV (β) group. In 2003, people in Kwangtung (Guangdong) were infected with a virus that led to Severe Acute Respiratory Syndrome; this virus was confirmed as a member of the beta-CoV (β) subgroup and was named SARS-CoV [5]. Historically, SARS-CoV infected more than 8000 individuals across 26 countries, with a death rate of 9%. By comparison, SARS-CoV-2 had infected more than 750,000 individuals across 150 countries, with a death rate of 4%, as of the time of writing. This indicates that the transmission rate of SARS-CoV-2 is higher than that of SARS-CoV. The enhanced transmission ability is attributed to recombination of the S protein in the RBD region [6].

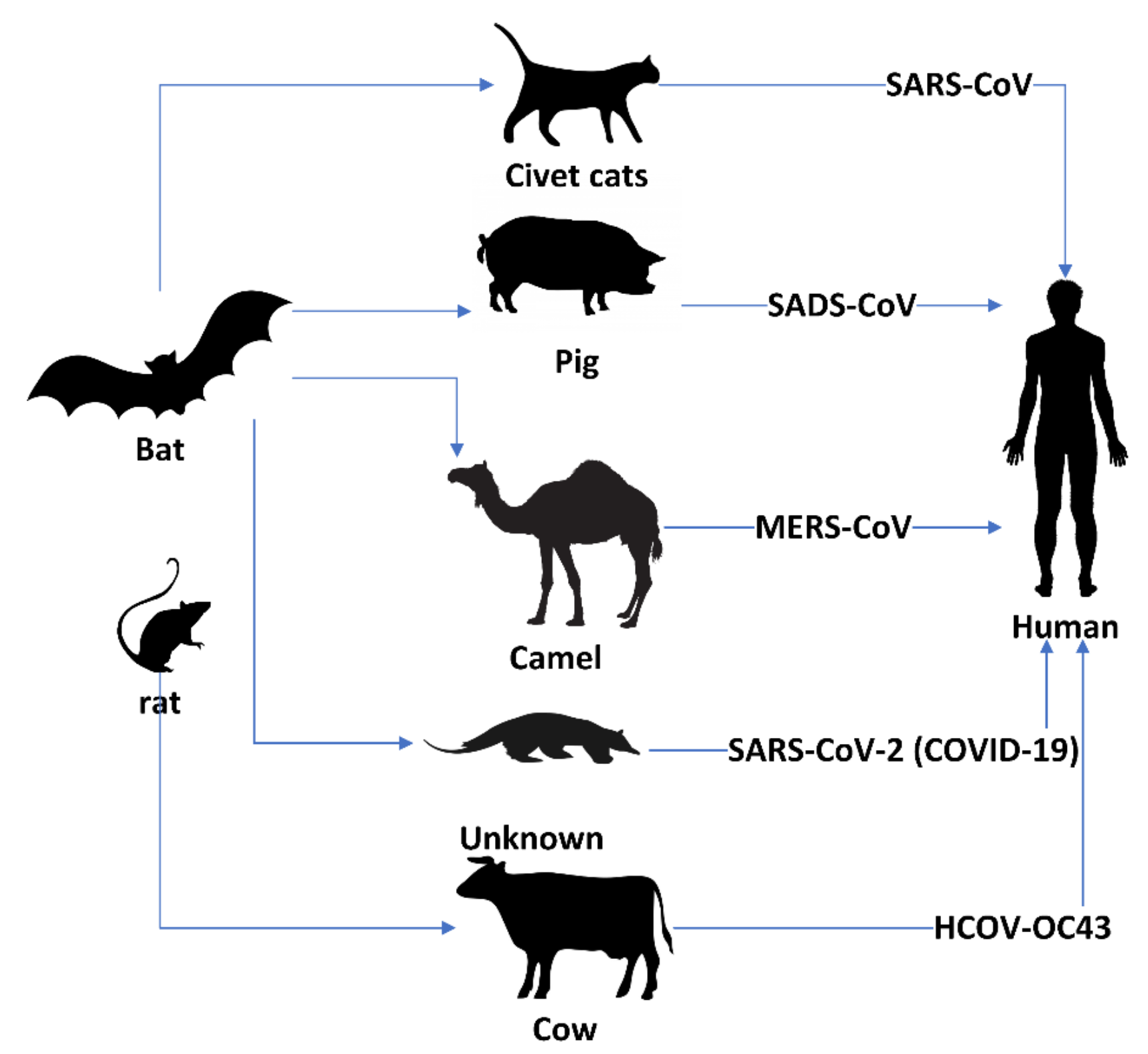

Beta-coronaviruses that cause disease in humans have generally originated in wild animals, usually bats or rats [7,8]. SARS-CoV-1 and MERS-CoV (camel flu) were transmitted to people from wild cats and Arabian camels, respectively, as shown in Figure 1. The buying and selling of unknown animals may be the source of coronavirus infection. The discovery of several lineages of pangolin coronavirus and their similarity to SARS-CoV-2 suggests that pangolins should be considered as possible hosts of the novel 2019 coronavirus. Wild animals must be removed from wild animal markets to stop animal coronavirus transmission [9]. Coronavirus transmission has been confirmed by the World Health Organization (WHO) and by the US Centers for Disease Control and Prevention (CDC), with evidence of human-to-human transmission from five different cases outside China, namely in Italy [10], the US [11], Nepal [12], Germany [13], and Vietnam [14]. As of 31 March 2020, there were more than 750,000 confirmed cases of SARS-CoV-2, 150,000 recoveries, and 35,000 deaths. Table 1 shows some statistics about SARS-CoV-2 [15].

1.1. Deep Learning

Deep Learning (DL) is a subfield of machine learning concerned with techniques inspired by the neurons of the brain [16]. DL is quickly becoming a crucial technology in image/video classification and detection. It relies on algorithms that simulate reasoning processes, mine data, or develop abstractions [17]. The hidden layers of a DL model map input data to labels in order to analyze hidden patterns in complicated data [18]. Besides medical X-ray recognition, DL architectures are used in other applications of image processing and computer vision in medicine. DL enables such medical systems to achieve better outcomes, widen the scope of detectable illnesses, and implement practical real-time medical image [19,20] disease detection systems. Table 2 shows a series of major contributions in the field of neural networks leading to deep learning [21].

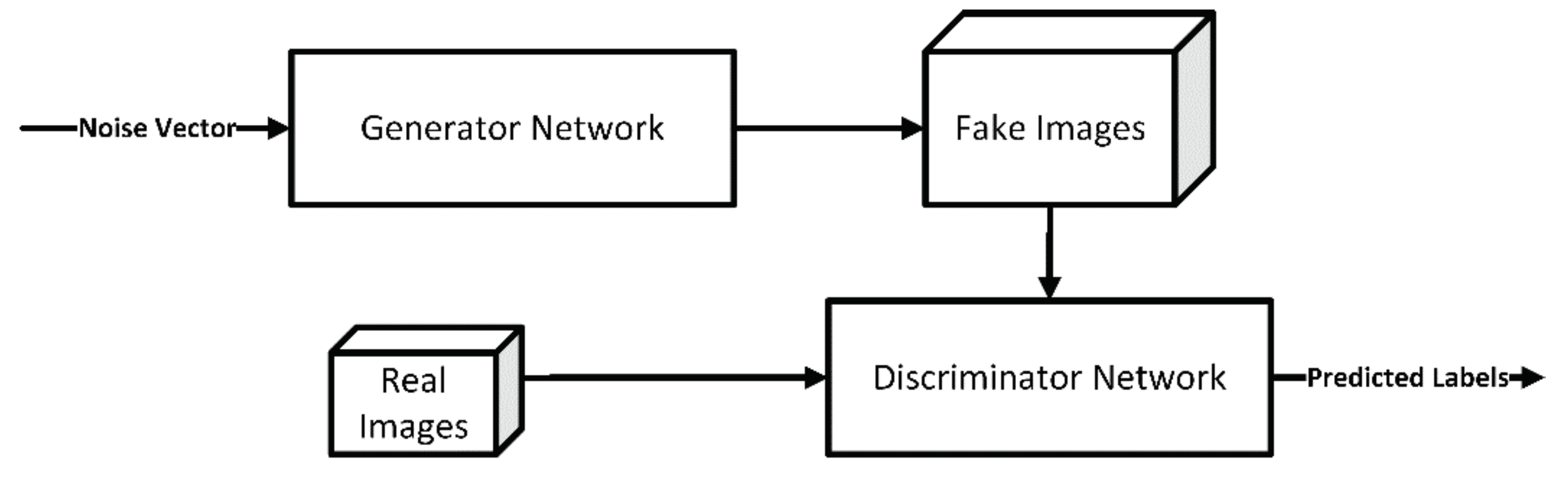

1.2. Generative Adversarial Network

The Generative Adversarial Network (GAN) is a class of deep learning models introduced by Ian Goodfellow et al. in 2014 [23]. A GAN has two main networks, the generator network and the discriminator network. The generator is responsible for generating new fake data instances that look like the training data. The discriminator tries to distinguish between real data and the fake (artificially generated) data produced by the generator, as shown in Figure 2. The generator's goal is to fool the discriminator, while the discriminator tries to avoid being fooled [24,25,26,27].
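The adversarial objective described above can be illustrated with a small numeric sketch. It assumes the common binary cross-entropy formulation with the non-saturating generator loss; the paper does not state which loss variant was used, and the function names `bce`, `discriminator_loss`, and `generator_loss` are hypothetical. The authors' implementation was in MATLAB; Python is used here only for illustration.

```python
import math


def bce(prediction, target, eps=1e-12):
    """Binary cross-entropy for a single probability in (0, 1)."""
    p = min(max(prediction, eps), 1.0 - eps)  # clamp to avoid log(0)
    return -(target * math.log(p) + (1.0 - target) * math.log(1.0 - p))


def discriminator_loss(d_real, d_fake):
    """d_real and d_fake are the discriminator's 'probability of real'
    outputs for real images and for generated images, respectively."""
    real_term = sum(bce(p, 1.0) for p in d_real) / len(d_real)
    fake_term = sum(bce(p, 0.0) for p in d_fake) / len(d_fake)
    return real_term + fake_term


def generator_loss(d_fake):
    """Assumed non-saturating form: the generator is rewarded when the
    discriminator labels its samples as real (target 1)."""
    return sum(bce(p, 1.0) for p in d_fake) / len(d_fake)
```

A discriminator that separates real from fake well has a low loss, while the generator's loss falls as the discriminator is fooled more often, which is the tug-of-war the two networks play during training.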

1.3. Convolutional Neural Networks

Convolutional Neural Networks (ConvNets or CNNs) are a category of deep learning techniques used primarily to recognize and classify images. They have achieved extraordinary success in medical image/video classification and detection. In 2012, Ciregan et al. and Krizhevsky et al. [28,29] showed how CNNs running on Graphics Processing Units (GPUs) can improve many vision benchmark records, such as MNIST [30], Chinese characters [31], Arabic digits recognition [32], Arabic handwritten characters recognition [33], NORB (jittered, cluttered) [34], traffic signs [35], and the large-scale ImageNet [36] benchmarks. In the following years, various advances in ConvNets further increased the accuracy on image detection/classification competition tasks. Pre-trained ConvNet models brought significant improvements in the annual ImageNet Large Scale Visual Recognition Challenge (ILSVRC). Deep Transfer Learning (DTL) is a deep learning (DL) approach that stores the weights gained while solving one image classification problem and applies them to a related problem. Many DTL models have been introduced, such as VGGNet [37], GoogleNet [38], ResNet [39], Xception [40], Inception-V3 [41], and DenseNet [42].

The contributions of this paper are as follows: (i) the introduced ConvNet models have an end-to-end structure without classical feature extraction and selection methods; (ii) we show that GAN is an effective technique for generating X-ray images; (iii) chest X-ray images are one of the best tools for the classification of SARS-CoV-2; (iv) deep transfer learning models are shown to yield very good outcomes on the small COVID-19 dataset. The rest of the paper is organized as follows. Section 2 explores related work and determines the scope of this work. Section 3 discusses the dataset used in our paper. Section 4 presents the proposed models, while Section 5 presents the achieved outcomes and their discussion. Finally, Section 6 provides conclusions and directions for further research.

2. Related Works

This section surveys recent research applying machine learning and deep learning to pneumonia and coronavirus X-ray classification. Classical image classification can be divided into three main stages: image preprocessing, feature extraction, and feature classification. Stephen et al. [43] proposed classifying and detecting the presence of pneumonia in a collection of chest X-ray image samples using a ConvNet model trained from scratch on the dataset in [44]. The outcomes obtained were training loss = 12.88%, training accuracy = 95.31%, validation loss = 18.35%, and validation accuracy = 93.73%.

In [45], the authors introduced an early diagnosis system for pneumonia from chest X-ray images based on Xception and VGG-16. The study used the database introduced by Kermany et al. [44], which contains approximately 5800 frontal chest X-ray images: 1600 normal cases and 4200 abnormal pneumonia cases. The experimental outcomes showed that the VGG-16 network outperformed the Xception network in classification rate, at 87%, whereas the Xception network outperformed VGG-16 with a sensitivity of 85%, precision of 86%, and recall of 94%. The study concluded that the Xception network is better suited to classifying X-ray images than the VGG-16 network. Varshni et al. [46] proposed pre-trained ConvNet models (VGG-16, Xception, Res50, Dense-121, and Dense-169) as feature extractors followed by different classifiers (SVM, Random Forest, k-nearest neighbors, Naïve Bayes) for the detection of normal and abnormal pneumonia X-ray images. The authors used the ChestX-ray14 dataset introduced by Wang et al. [47].

Chouhan et al. [48] introduced an ensemble deep model that combines the outputs of all transfer deep models for the classification of pneumonia using the concept of deep learning. The Guangzhou Medical Center database [44] contains a total of approximately 5200 X-ray images, divided into 1300 normal and 3900 abnormal X-rays. The proposed model reached a misclassification error of 3.6% with a sensitivity of 99.6% on test data from the database. Ref. [49] proposed Compressed Sensing (CS) with a deep transfer learning model for automatic classification of pneumonia in X-ray images to assist physicians. The dataset used for this work contained approximately 5850 X-ray images in two categories (abnormal/normal) obtained from Kaggle. Comprehensive simulation outcomes showed that the proposed approach classifies pneumonia (abnormal/normal) with a 2.66% misclassification rate.

In this research, we introduce deep transfer learning models to classify COVID-19 X-ray images. Before feeding chest X-ray images to the convolutional neural network, we augment the medical X-ray images using a GAN to generate additional X-ray images. After that, a classifier is used to ensemble the classification outputs. The proposed transfer model was evaluated on the proposed dataset.

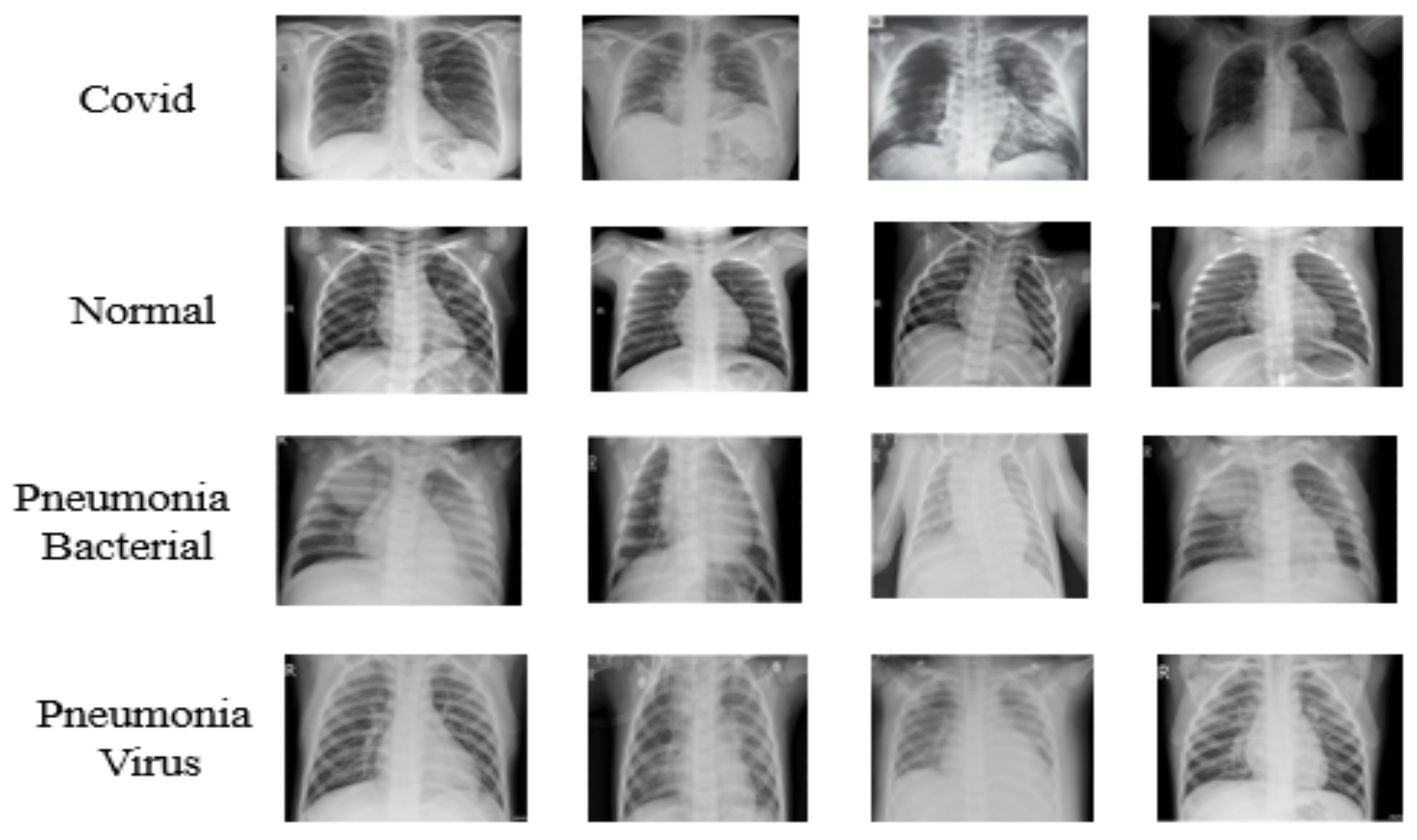

3. Dataset

The COVID-19 dataset [50] utilized in this research [51] was created by Dr. Joseph Cohen, a postdoctoral fellow at the University of Montreal. The Pneumonia dataset of chest X-ray images [44] was also used to build the proposed dataset. The dataset [52] is organized into two folders (train, test), each containing sub-folders for every image category (COVID-19/normal/pneumonia bacterial/pneumonia virus). There are 306 X-ray images (JPEG) across the four categories. The number of images for each class is presented in Table 3. Figure 3 illustrates samples of the images used for this research. The samples also show considerable variation in image size and features, which may affect the accuracy of the proposed model presented in the next section.

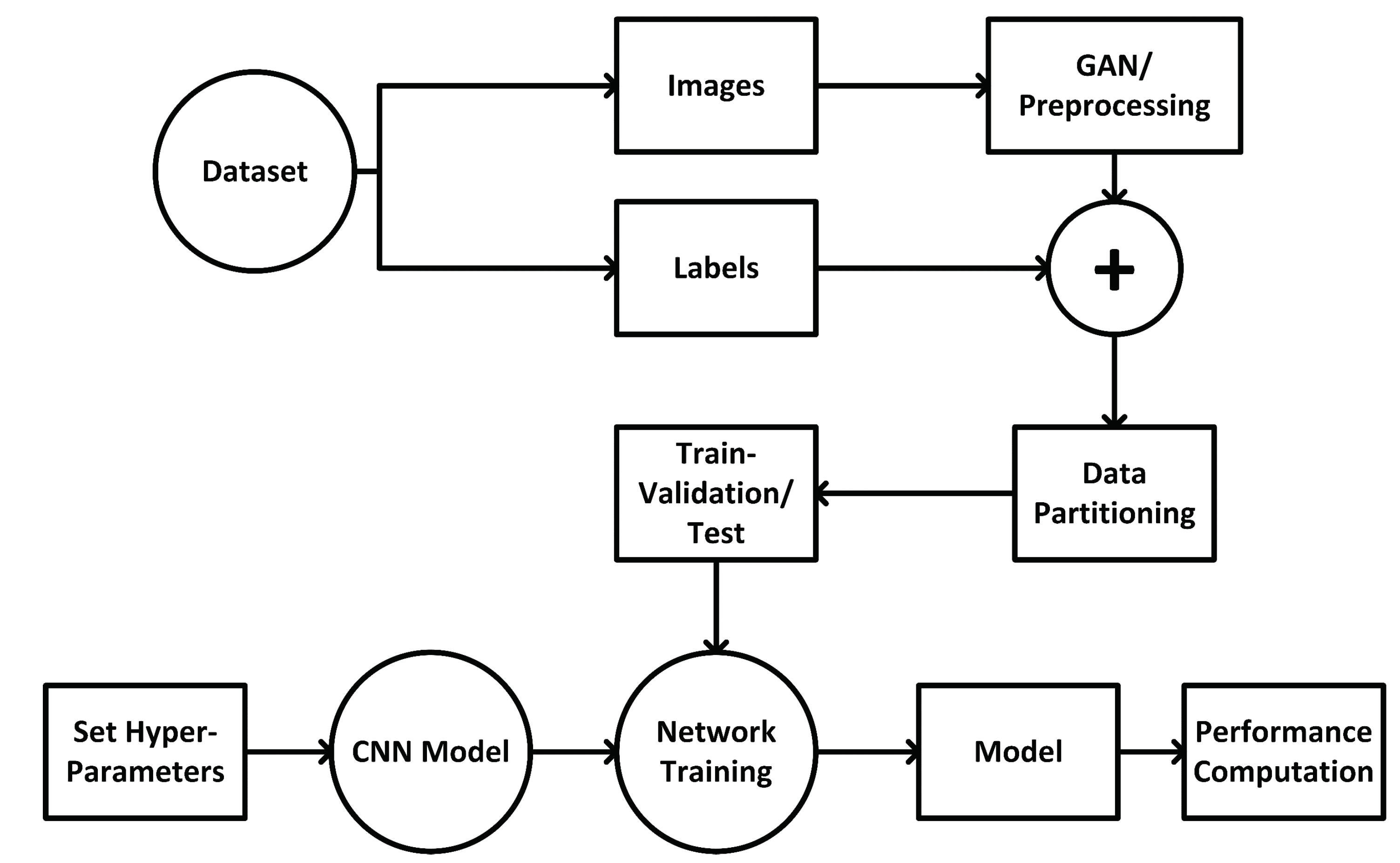

4. The Proposed Model

The proposed model includes two main deep learning components: the first is the GAN and the second is the deep transfer model. Figure 4 illustrates the proposed GAN/deep transfer learning model. The GAN is used in the preprocessing phase, while the deep transfer model is used in the training, validation, and testing phases. Algorithm 1 introduces the proposed transfer model in detail. Let $D = \{\text{Alexnet}, \text{Googlenet}, \text{Resnet18}\}$ be the set of transfer models. Each deep transfer model is fine-tuned with the COVID-19 X-ray Images dataset $(X, Y)$, where $X$ is the set of $N$ input images, each of size 512 × 512, and $Y$ is the set of corresponding class labels, $Y = \{y \mid y \in \{\text{COVID-19}; \text{normal}; \text{pneumonia bacterial}; \text{pneumonia virus}\}\}$. The dataset is divided into training and test sets: 90% forms the training set $(X_{train}, Y_{train})$, used for training and validation, while 10% is used for testing. The 90% is further divided into 80% for training and 20% for validation. An 80%/20% training/validation split has proved efficient in many studies, such as [53,54,55,56,57]. The training data are then divided into mini-batches, each of size $n$, such that $(X_i, Y_i) \subset (X_{train}, Y_{train})$, where $i$ indexes the mini-batches, and the DCNN model $d \in D$ is iteratively optimized to reduce the loss function given in Equation (1):

$$C(w, X_i) = \frac{1}{n} \sum_{x \in X_i,\; y \in Y_i} c\big(d(x, w), y\big) \qquad (1)$$

where $d(x, w)$ is the ConvNet model's predicted label for input $x$ given the weights $w$, $y$ is the true label, and $c(\cdot)$ is the multi-class entropy loss function.
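The split proportions and the mini-batch loss of Equation (1) can be sketched as follows. This is an illustration only: the helper names `split_dataset`, `mini_batches`, and `batch_loss` are hypothetical, the shuffling seed is an assumption, and the loss is taken to be the mean multi-class cross-entropy over one mini-batch.

```python
import math
import random


def split_dataset(samples, test_frac=0.10, val_frac=0.20, seed=0):
    """Hold out 10% for testing, then split the remaining 90%
    into 80% training and 20% validation."""
    items = list(samples)
    random.Random(seed).shuffle(items)  # seed is an assumption
    n_test = round(len(items) * test_frac)
    test, rest = items[:n_test], items[n_test:]
    n_val = round(len(rest) * val_frac)
    val, train = rest[:n_val], rest[n_val:]
    return train, val, test


def mini_batches(train, batch_size=64):
    """Yield consecutive mini-batches; the last one may be smaller."""
    for start in range(0, len(train), batch_size):
        yield train[start:start + batch_size]


def batch_loss(probabilities, labels):
    """Mean multi-class cross-entropy over one mini-batch.
    probabilities[k] is the model's class distribution for sample k;
    labels[k] is the index of the true class."""
    total = 0.0
    for probs, y in zip(probabilities, labels):
        total += -math.log(max(probs[y], 1e-12))
    return total / len(labels)
```

With 1000 samples, for example, this yields 720 training, 180 validation, and 100 test samples, and the training set is consumed in 12 mini-batches of at most 64 samples.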

This research relies on deep transfer learning CNN architectures to transfer the learned weights, reducing the training time, the mathematical calculations, and the consumption of the available hardware resources. Several studies [53,58,59] built their own architectures, but those architectures are problem-specific and do not fit the data presented in this paper. The deep transfer learning CNN models investigated in this research are Alexnet [29], Resnet18 [39], and Googlenet [60]. These CNN models have few layers compared to large CNN models such as Xception [40], Densenet [42], and Inceptionresnet [61], which consist of 71, 201, and 164 layers, respectively. Choosing these models reduces the training time and the complexity of the calculations.

| Algorithm 1 Introduced algorithm. |

- 1: Input data: COVID-19 Chest X-ray Images $(X, Y)$, where each label $y \in Y$ is one of {COVID-19; normal; pneumonia bacterial; pneumonia virus}
- 2: Output data: The transfer model that detected the COVID-19 Chest X-ray image
- 3: Pre-processing steps:
- 4: Modify the X-ray input to dimension 512 height × 512 width
- 5: Generate X-ray images using GAN
- 6: Mean normalize each X-ray data input
- 7: Download and reuse transfer models $D = \{\text{Alexnet}, \text{Googlenet}, \text{Resnet18}\}$
- 8: Replace the last layer of each transfer model by (4 × 1) layer dimension.
- 9: foreach $d \in D$ do
  - 10:
  - 11: for epochs = 1 to 20 do
    - 12: foreach mini-batch do
      - Modify the coefficients of the transfer model
      - if the error rate is increased for five epochs then stop training end
      - end
  - 13: end
- 14: end
- 15: foreach test input do
  - 16: the outcome of all transfer architectures,
- 17: end
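The control flow of Algorithm 1 (mean normalization followed by at most 20 epochs of training with a five-epoch early-stopping rule) can be sketched as below. This is a minimal illustration, not the authors' MATLAB code: `run_epoch` is a hypothetical callback that trains for one epoch and returns the validation error, and "the error rate is increased for five epochs" is interpreted here as five consecutive epochs without improvement.

```python
def mean_normalize(pixels):
    """Subtract the mean intensity from every pixel value."""
    mean = sum(pixels) / len(pixels)
    return [p - mean for p in pixels]


def train_with_early_stopping(run_epoch, max_epochs=20, patience=5):
    """Run up to max_epochs epochs; stop once the validation error has
    not improved for `patience` consecutive epochs.
    Returns (best_epoch, best_error, last_epoch_run)."""
    best_error = float("inf")
    best_epoch = 0
    stale_epochs = 0
    last_epoch = 0
    for epoch in range(1, max_epochs + 1):
        last_epoch = epoch
        error = run_epoch(epoch)  # hypothetical: one pass over all mini-batches
        if error < best_error:
            best_error, best_epoch, stale_epochs = error, epoch, 0
        else:
            stale_epochs += 1
            if stale_epochs >= patience:
                break
    return best_epoch, best_error, last_epoch
```

The same loop would be run once per transfer model in the set, after which the outputs of the three fine-tuned models are compared on the test inputs.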

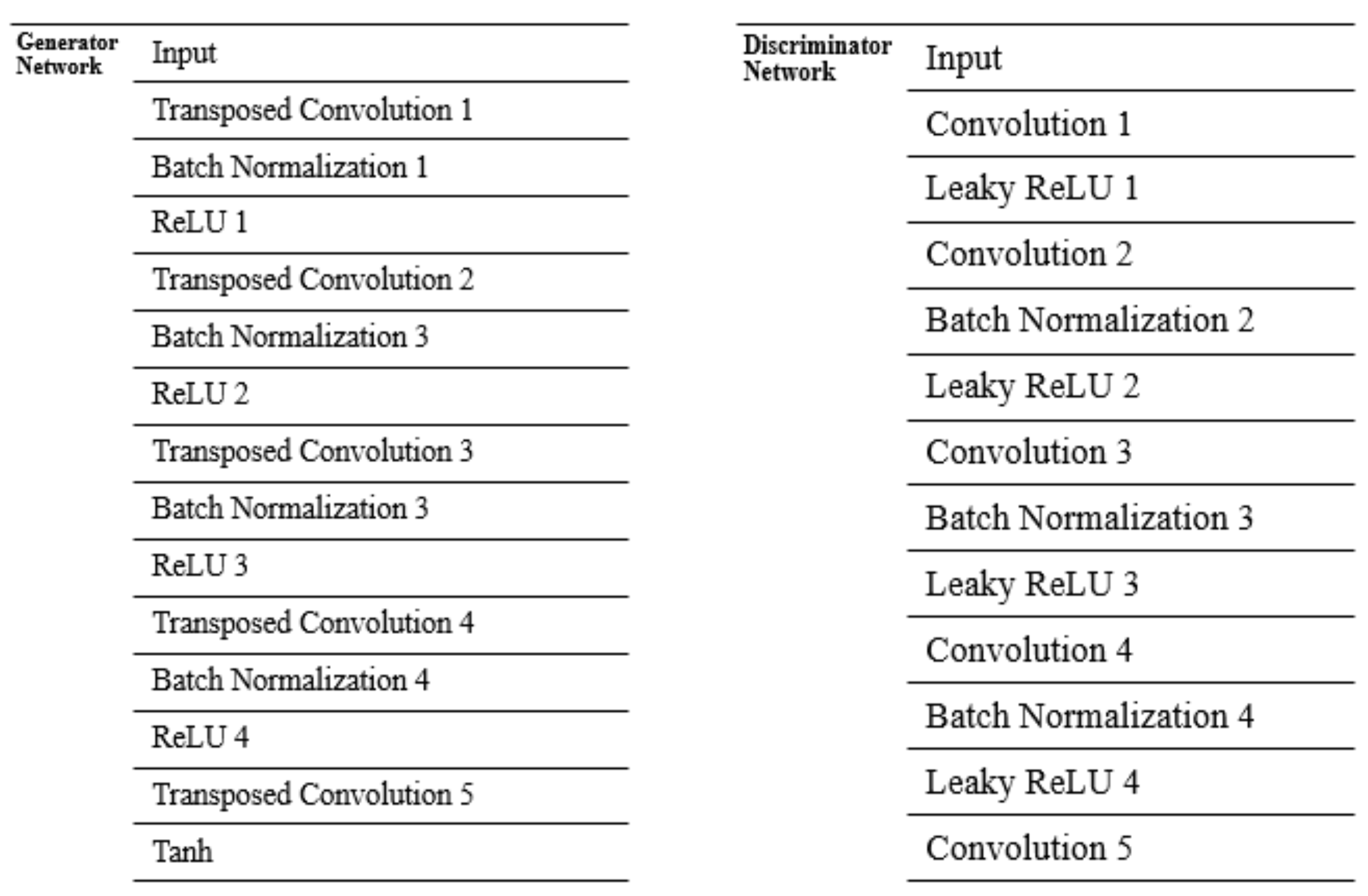

4.1. Generative Adversarial Network

A GAN consists of two different networks that are trained simultaneously. The first network, the generator, is trained for image generation, while the second, the discriminator, is used for discrimination. GANs are considered a special type of deep learning model. The generator network in this research consists of five transposed convolutional layers, four ReLU layers, four batch normalization layers, and a Tanh layer at the end of the model, while the discriminator network consists of five convolutional layers, four leaky ReLU layers, and three batch normalization layers. All the convolutional and transposed convolutional layers use the same window size of 4 × 4 pixels with 64 filters per layer. Figure 5 presents the structure and the sequence of layers of the GAN network proposed in this research.

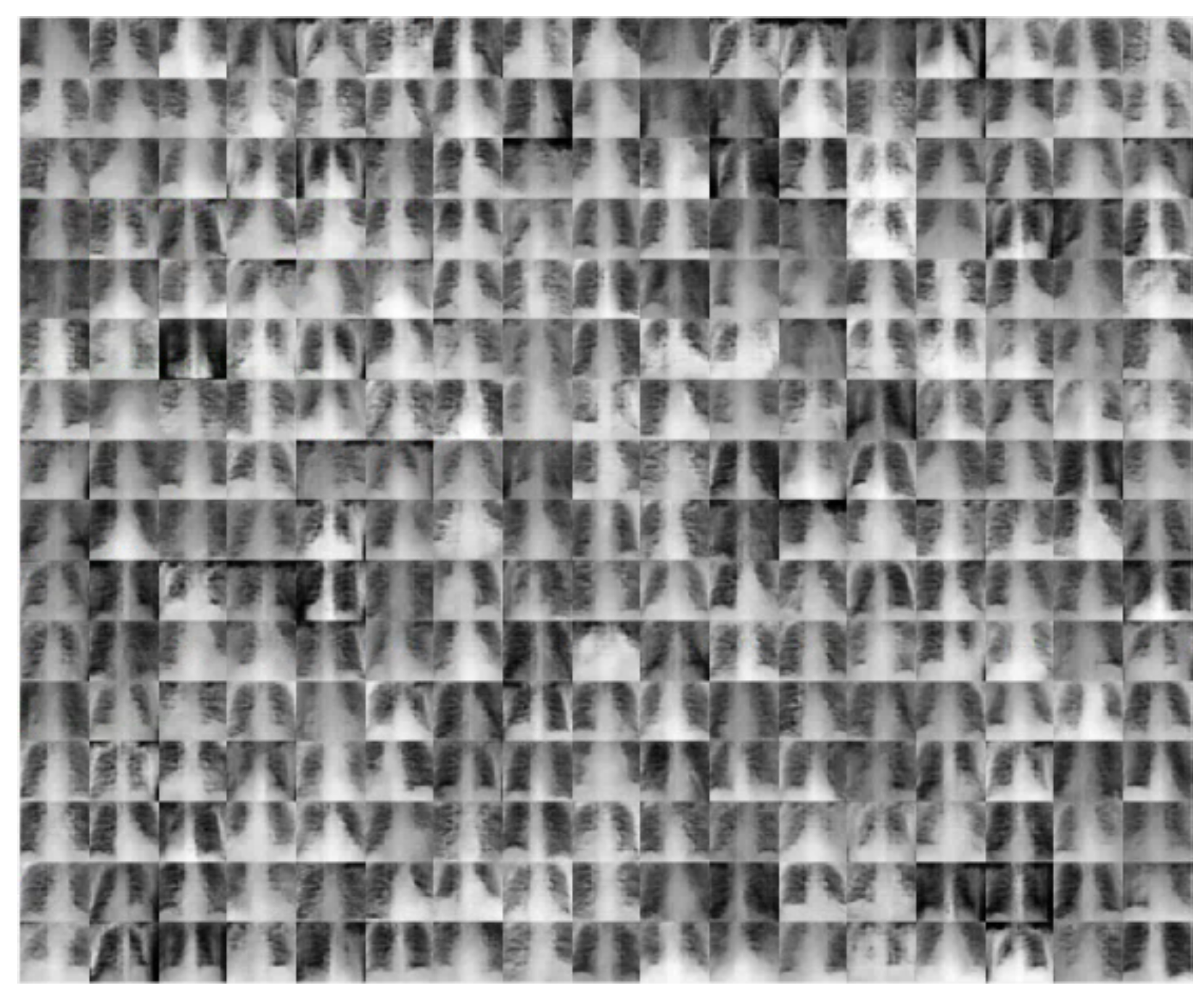

The GAN network helped in overcoming the overfitting problem caused by the limited number of images in the dataset. Moreover, it increased the number of dataset images to 30 times that of the original dataset: the dataset reached 8100 images across the 4 classes after applying the GAN network. This helps in achieving remarkable testing accuracy and performance metrics. The achieved results are discussed in detail in the experimental results section. Figure 6 presents samples of the output of the GAN network for the COVID-19 class.

4.2. Deep Transfer Learning

Convolutional Neural Networks (ConvNets) are the most successful type of model for image classification and detection. A single ConvNet contains many layers: shallow layers respond to edges and simple features, while deeper layers capture more complex features. An image is convolved with filters (kernels) and then max pooling is applied; this process may repeat over several layers until recognizable features are obtained. For example, take a feature map of size $H \times H \times D$ (where $H \times H$ is width × height) and a filter bank in layer $l$ with $k$ kernels of size $K \times K \times D$. With the two additional coefficients stride $S$ and padding $P$, the output feature map in layer $l+1$ has size $N \times N \times k$, where $N$ is given by Equation (2):

$$N = \left\lfloor \frac{H - K + 2P}{S} \right\rfloor + 1 \qquad (2)$$

where $\lfloor \cdot \rfloor$ denotes the floor operation. The depth of each kernel must be equal to that of the input map, as in Equation (3):

$$x_j^{l} = \sigma\left( \sum_{i} x_i^{l-1} * f_{ij}^{l} + b_j^{l} \right) \qquad (3)$$

where $i$ and $j$ are the indexes of the input and output feature maps, in the ranges $1, \dots, M^{l-1}$ and $1, \dots, M^{l}$ respectively. $f$ here indicates the receptive field of the kernel and $b$ is the bias term. In Equation (3), $\sigma$ is a non-linearity function applied to introduce non-linearity in deep transfer learning. In our transfer method, we used ReLU, given in Equation (4), as the non-linearity function for a rapid training process:

$$\sigma(x) = \max(0, x) \qquad (4)$$
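As a quick numeric check of the output-size relation in Equation (2) and the ReLU of Equation (4), the sketch below assumes the standard form floor((input − kernel + 2·padding) / stride) + 1. The function names are hypothetical, and the stride and padding values in the examples are illustrative choices, not values reported in the paper.

```python
def conv_output_size(input_size, kernel_size, stride=1, padding=0):
    """Spatial size of a convolution output along one dimension:
    floor((input - kernel + 2 * padding) / stride) + 1."""
    return (input_size - kernel_size + 2 * padding) // stride + 1


def relu(x):
    """Rectified linear unit: max(0, x)."""
    return max(0.0, x)


# Illustrative values only: a 512-pixel input with a 4 x 4 kernel,
# stride 2 and padding 1 halves the spatial size.
half = conv_output_size(512, kernel_size=4, stride=2, padding=1)
```

With these illustrative settings a 512 × 512 input maps to a 256 × 256 feature map, so each such layer halves the resolution.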

Our cost function in Equation (5):

where

is output label

while

and

denote

of bounding boxes.

consider the boxes of non-background (if

is background). This cost function has a detection loss

and regression loss

, in Equations (6)–(8):

and

where:

As the optimizer, we chose Stochastic Gradient Descent (SGD) with momentum [62], with the momentum set to 0.9, to update the weight parameters. This optimizer updates the weights using the current gradient together with the update from the previous iteration. To avoid overfitting of the deep network, we use the dropout technique [63] and the early-stopping technique [64] to select the best training step. As for the learning rate policy, the step size technique is performed in SGD. We set the learning rate to 0.01 and the number of iterations to 2000. The mini-batch size is set to 64, and early stopping is triggered if the accuracy does not improve for five epochs.
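A single momentum-SGD update with the stated hyperparameters (learning rate 0.01, momentum 0.9) can be sketched as follows. This assumes the classical heavy-ball form of the update; the exact variant used by the authors' MATLAB toolchain is not stated in the paper, and `sgd_momentum_step` is a hypothetical name.

```python
def sgd_momentum_step(weights, gradients, velocity,
                      learning_rate=0.01, momentum=0.9):
    """One update: the new velocity blends the previous update with the
    current gradient, and the weights move along the new velocity."""
    new_velocity = [momentum * v - learning_rate * g
                    for v, g in zip(velocity, gradients)]
    new_weights = [w + v for w, v in zip(weights, new_velocity)]
    return new_weights, new_velocity


# Toy usage: minimise f(w) = w^2, whose gradient is 2w.
w, v = [1.0], [0.0]
for _ in range(300):
    w, v = sgd_momentum_step(w, [2.0 * w[0]], v)
```

On this toy quadratic the weight spirals in towards the minimum at zero; the momentum term is what carries information from the previous iteration into the current step.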

5. Experimental Results

The introduced model was implemented in MATLAB. The implementation was CPU-specific. All experiments were conducted on a computer server equipped with an Intel Xeon processor (2 GHz) and 96 GB of RAM. The proposed model was tested under three different scenarios: the first with four classes, the second with three classes, and the third with two classes. All the test scenarios included the COVID-19 class. Every scenario consists of a validation phase and a testing phase. The validation phase uses 20% of the total generated images, while the testing phase uses around 10% of the original dataset.

The main difference between the validation and testing phases is that, in the validation phase, the data are used during the training process to assess the generalization ability of the model or for early stopping, whereas in the testing phase the data are used for no purpose other than evaluation. The data used in training, validation, and testing never overlap, so that the results for the proposed model are reliable.

Before listing the major results of this research, Table 4 presents the validation and testing accuracy for four classes before using GAN as an image augmenter. The results in Table 4 show that the validation and testing accuracy is quite low and not acceptable for a coronavirus detection model.

5.1. Verification and Testing Accuracy Measurement

Testing accuracy is one of the measures that demonstrates the accuracy of a proposed model. The confusion matrix is another measurement, which gives more insight into the achieved validation and testing accuracy. First, the four-class scenario is investigated with the three deep transfer learning models: Alexnet, Googlenet, and Resnet18.
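To make the link between a confusion matrix and the reported accuracies concrete, the following sketch derives overall and per-class accuracy from a matrix. It assumes rows are true classes and columns are predicted classes (plotting tools differ on this convention), and the matrix values are made-up illustrative counts, not results from this paper.

```python
def overall_accuracy(cm):
    """Fraction of all samples on the diagonal (correctly classified)."""
    correct = sum(cm[i][i] for i in range(len(cm)))
    total = sum(sum(row) for row in cm)
    return correct / total


def per_class_accuracy(cm):
    """For each true class (row), the fraction classified correctly."""
    return [row[i] / sum(row) if sum(row) else 0.0
            for i, row in enumerate(cm)]


# Illustrative two-class matrix (hypothetical counts).
example_cm = [[9, 1],
              [2, 8]]
```

For the illustrative matrix, 17 of 20 samples lie on the diagonal, so the overall accuracy is 0.85, while the two classes are recognized with accuracies 0.9 and 0.8.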

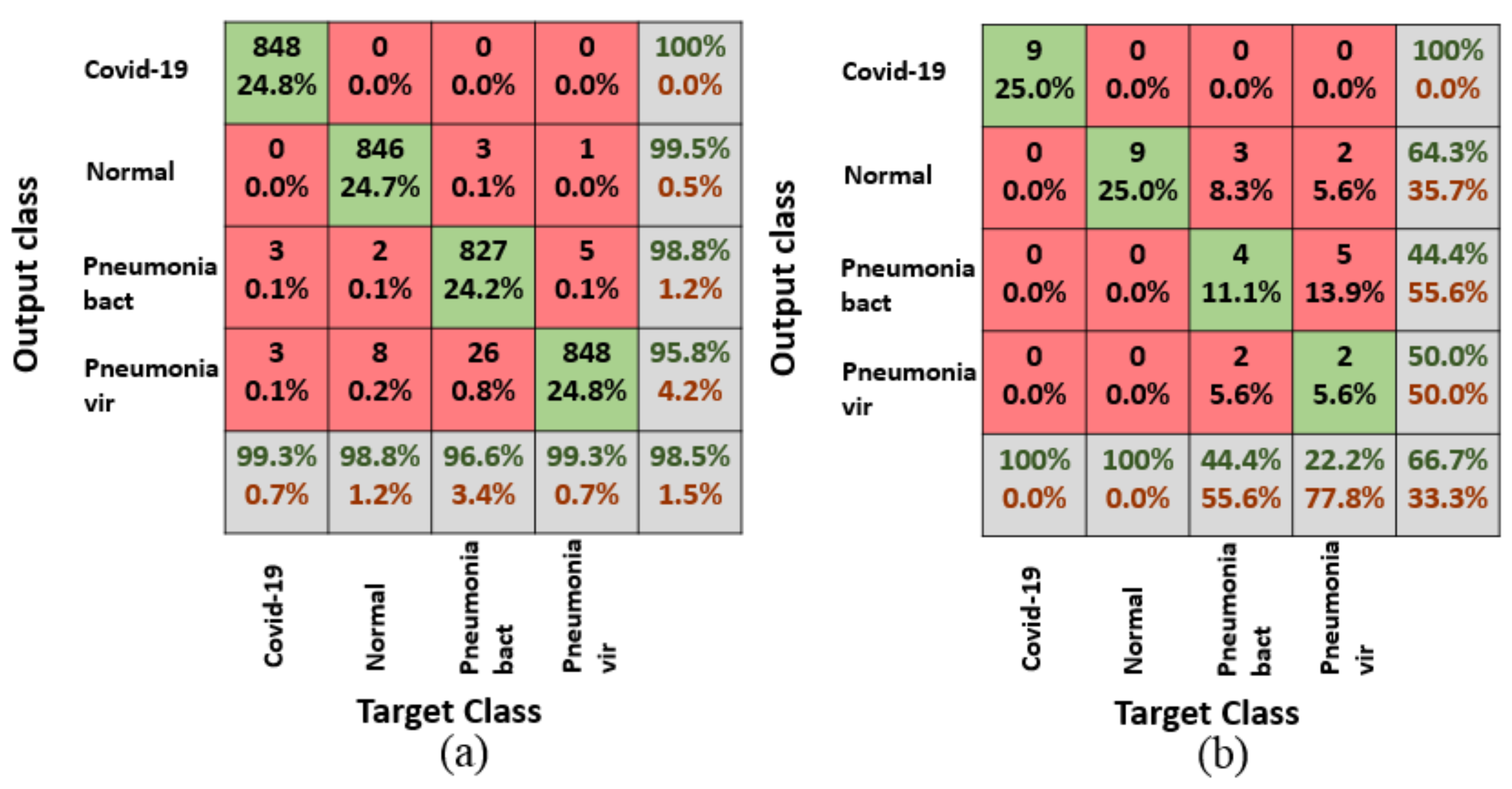

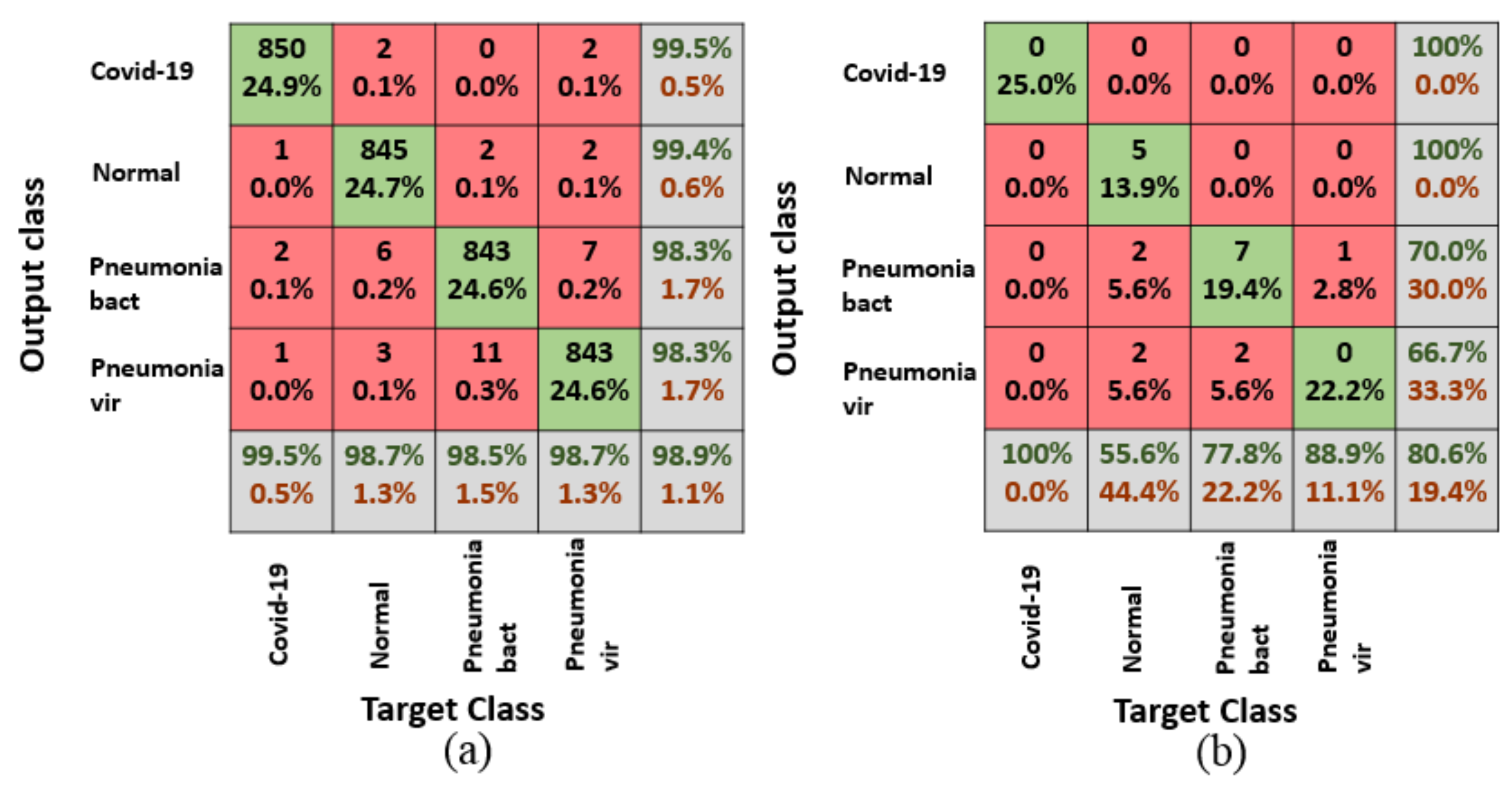

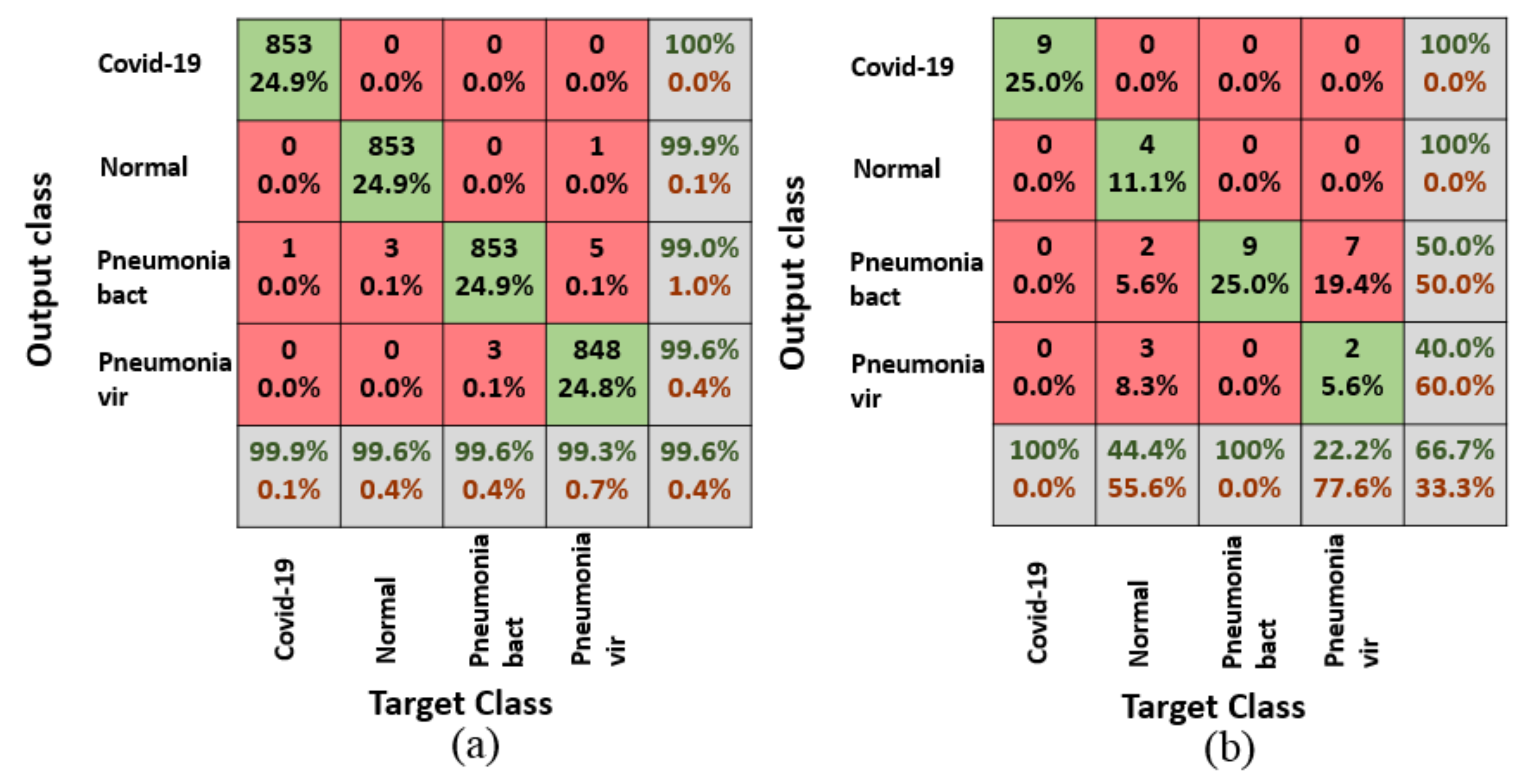

Figure 7, Figure 8 and Figure 9 illustrate the confusion matrices for the validation and testing phases for four classes in the dataset. Table 5 summarizes the validation and testing accuracy of the different deep transfer models for four classes. According to validation accuracy, Resnet18 achieved the highest accuracy with 99.6%. We attribute this to the large number of parameters in the Resnet18 architecture, which contains 11.7 million parameters; this is fewer than Alexnet, but Alexnet includes only 8 layers while Resnet18 includes 18 layers. According to testing accuracy, Googlenet achieved the highest accuracy with 80.6%, which we attribute to its larger number of layers compared to the other models, as it contains about 22 layers.

The second scenario tested in this research is when the dataset contains only three classes.

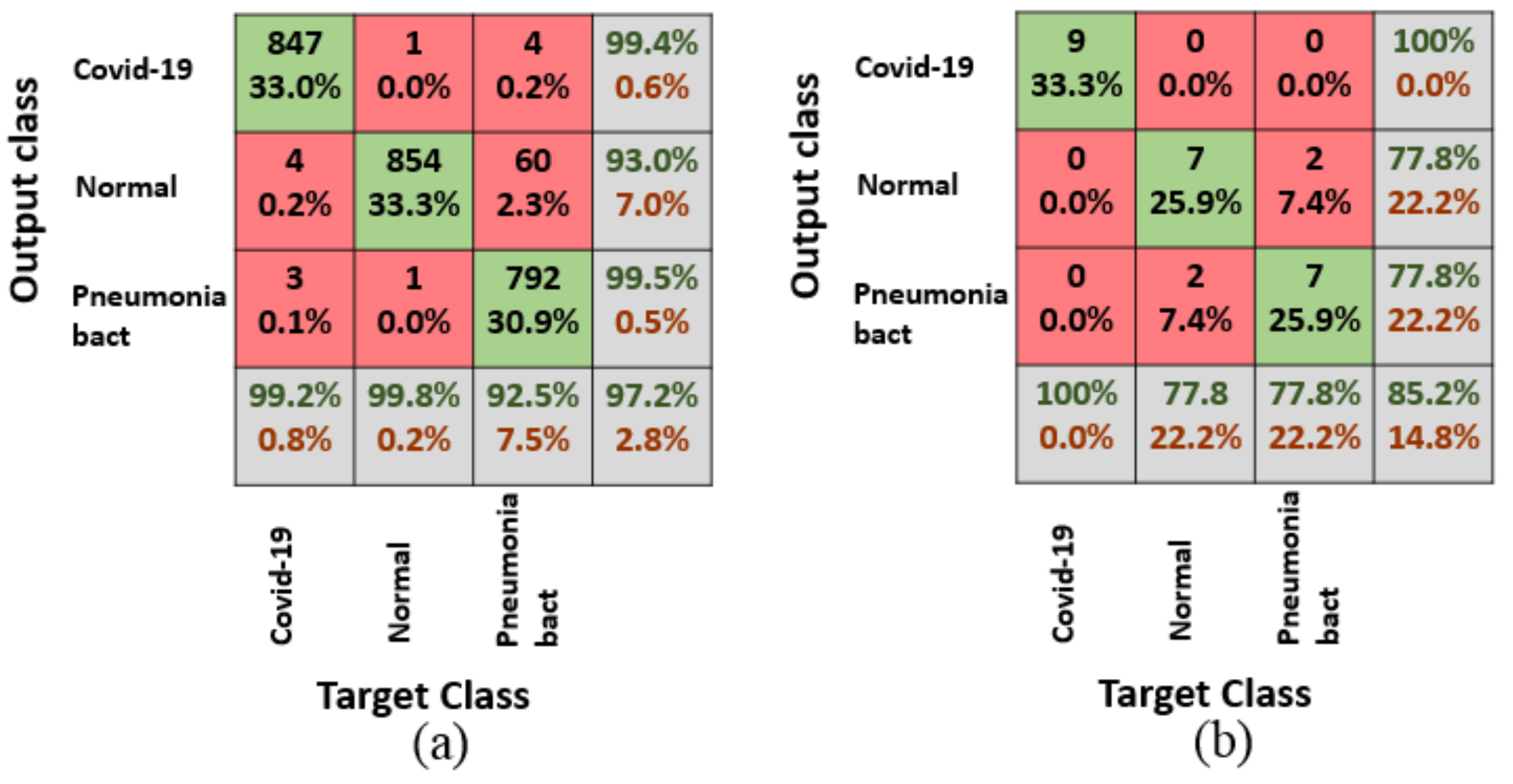

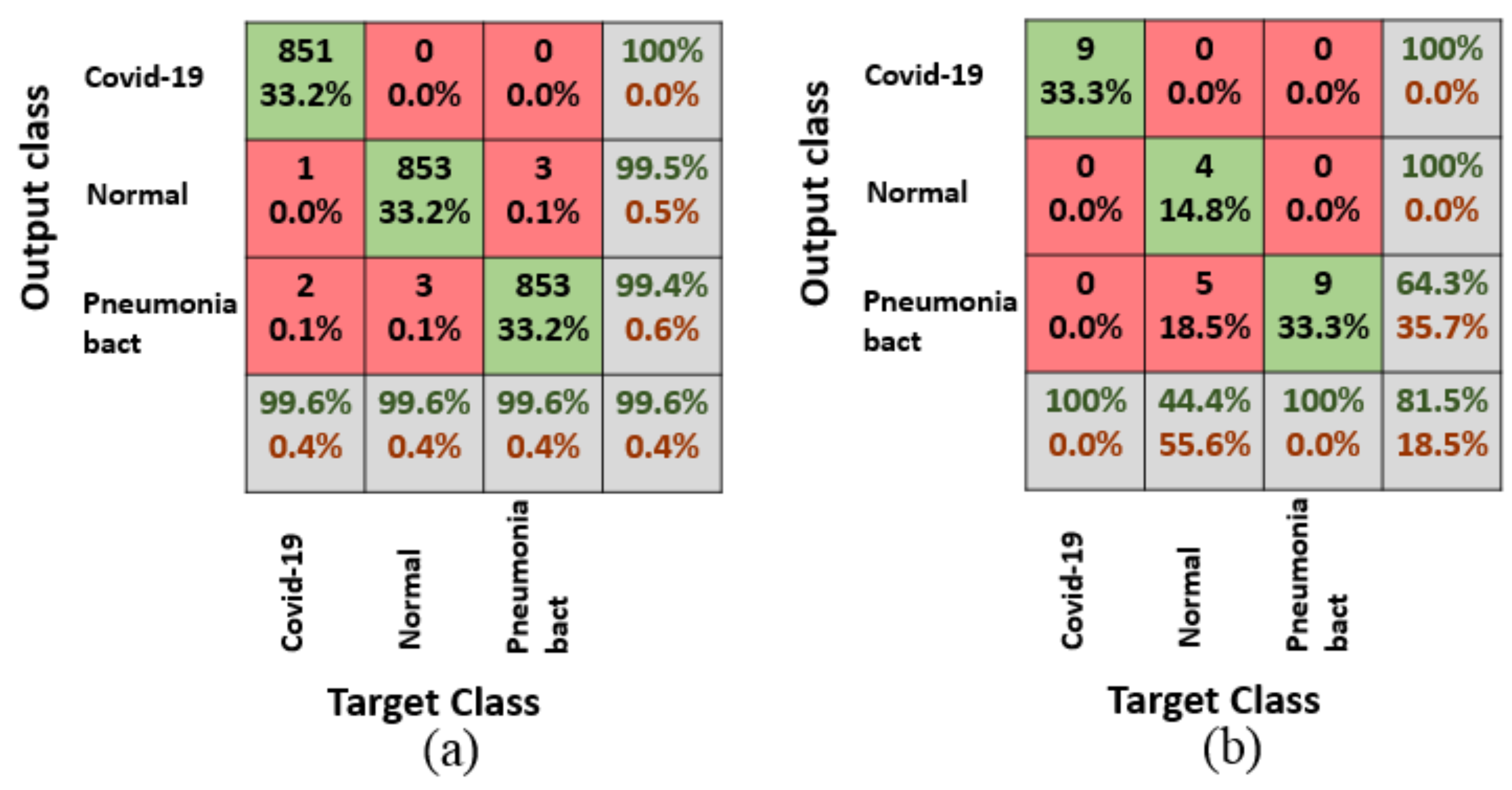

Figure 10, Figure 11 and Figure 12 illustrate the confusion matrices for the validation and testing phases for three classes in the dataset, including the COVID-19 class. Table 6 summarizes the validation and testing accuracy of the different deep transfer models for three classes. According to validation accuracy, Resnet18 achieved the highest accuracy with 99.6%. According to testing accuracy, Alexnet achieved the highest accuracy with 85.2%. This may be due to the large number of parameters in the Alexnet architecture (61 million) and to the elimination of the fourth class, pneumonia virus, whose features are similar to those of COVID-19, which is itself considered a type of viral pneumonia. Eliminating the pneumonia virus class yields better testing accuracy for all the deep transfer models than training over four classes.

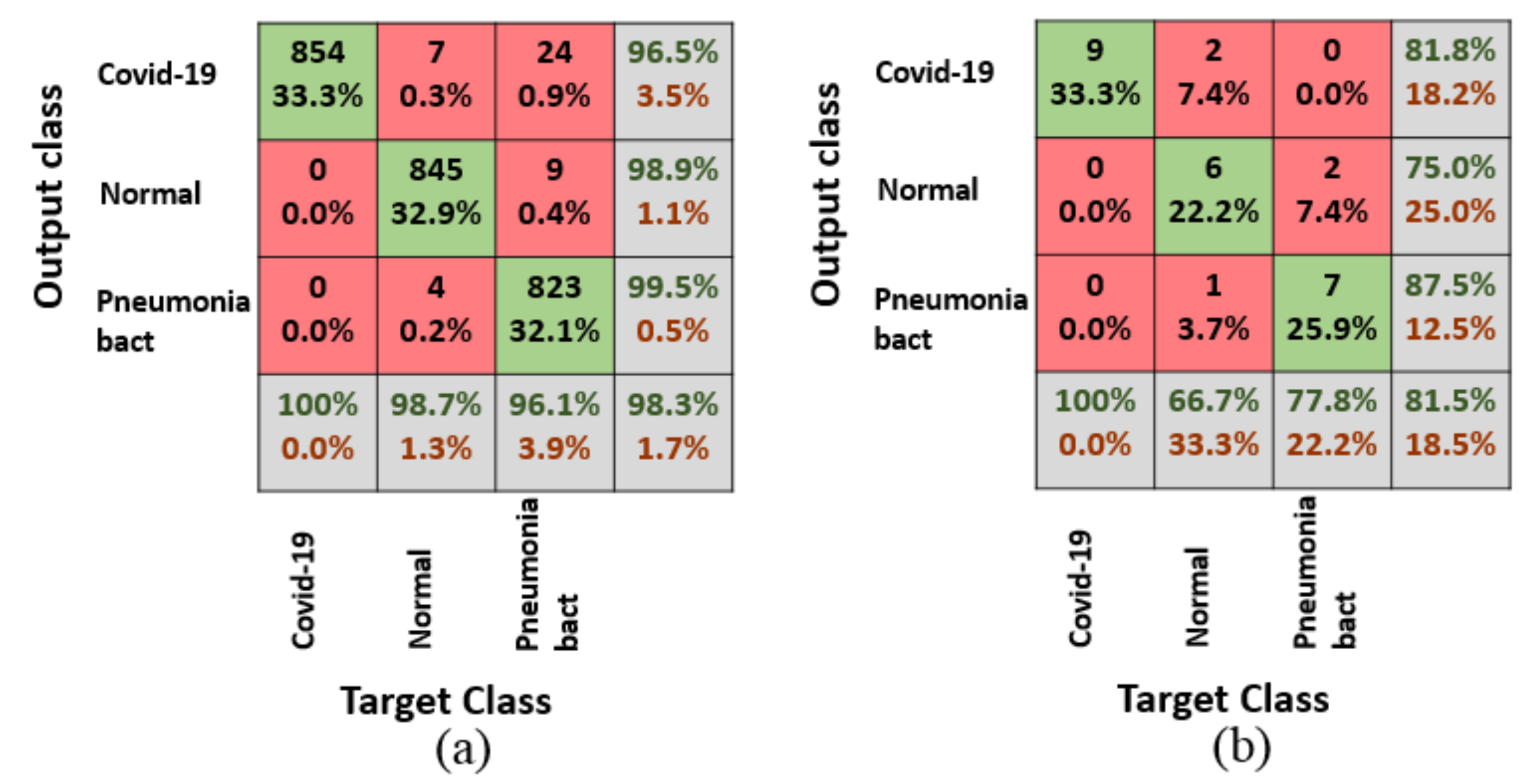

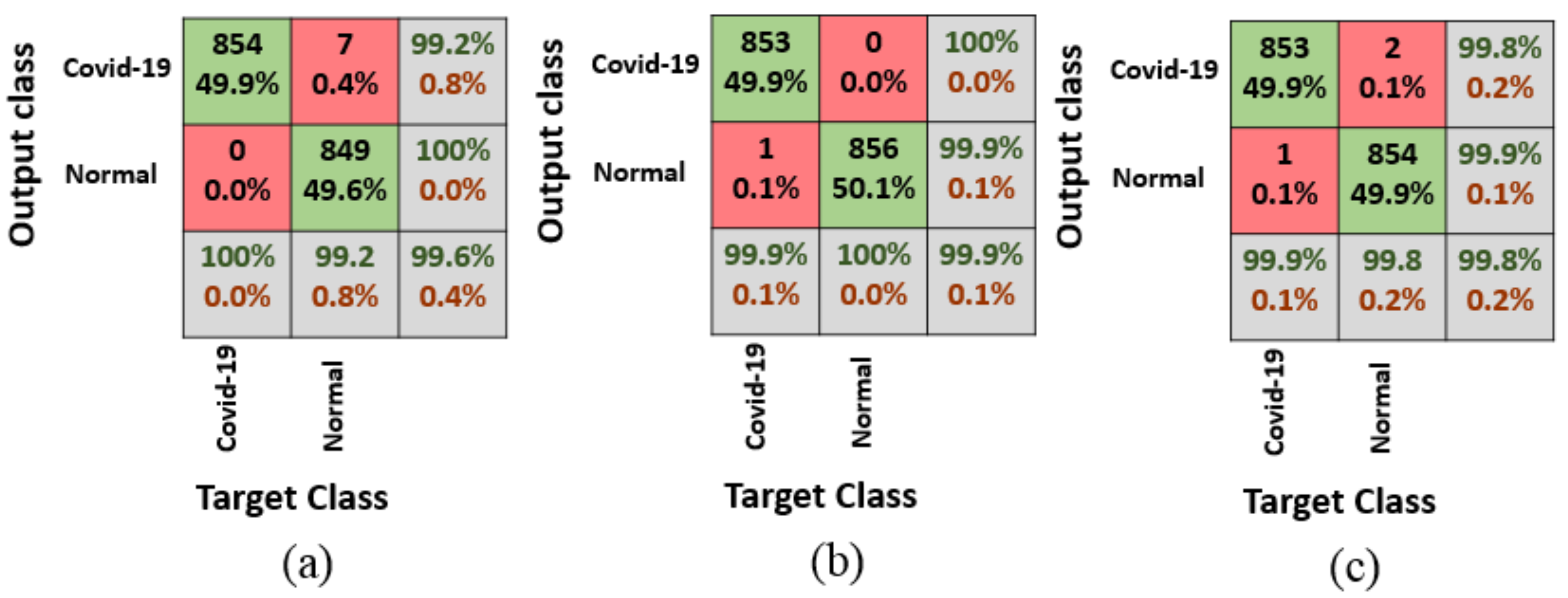

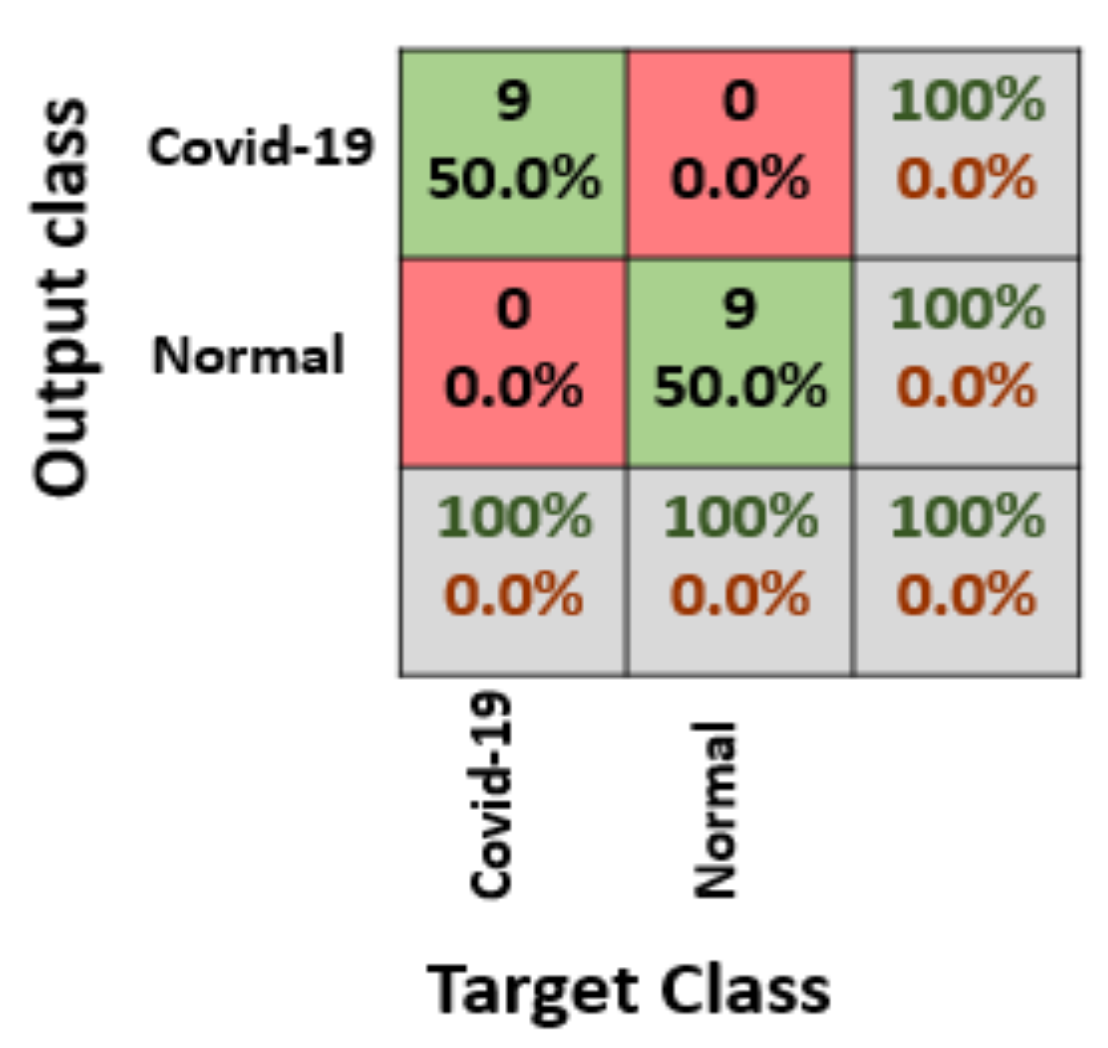

The third scenario tested is when the dataset includes only two classes, the COVID-19 class and the normal class. Figure 13 illustrates the confusion matrices of the three transfer models for the validation phase, while the confusion matrix for the testing phase, which is the same for all the deep transfer models selected in this research, is presented in Figure 14. Table 7 summarizes the validation and testing accuracy of the different deep transfer models for two classes. According to validation accuracy, Googlenet achieved the highest accuracy with 99.9%. According to testing accuracy, all the pre-trained models (Alexnet, Googlenet, and Resnet18) achieved 100%. This is due to the elimination of the third and fourth classes, pneumonia bacterial and pneumonia virus, which have features similar to COVID-19. This leads to a noteworthy improvement in testing accuracy: whichever deep transfer model is used, the testing accuracy reaches 100%. The best model is therefore chosen according to validation accuracy, so Googlenet, which achieved 99.9%, is the selected deep transfer model in the third scenario.

To conclude this part, every scenario has its own deep transfer model. In the first scenario, Googlenet was selected; in the second scenario, Alexnet was selected; and in the third scenario, Googlenet was selected. To draw a full conclusion about the deep transfer model that fits the dataset in all scenarios, the testing accuracy for every class is required for each model. Table 8 presents the testing accuracy for every class in the three scenarios. Table 8 does not help much in determining a deep transfer model that fits all scenarios, but for distinguishing the COVID-19 class from the other classes, Alexnet and Resnet18 are selected, as they achieved 100% testing accuracy for the COVID-19 class whether the number of classes is 2, 3, or 4.

5.2. Performance Evaluation and Discussion

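To estimate the performance of the proposed model, additional performance metrics need to be explored in this study. The most widespread performance measures in the field of deep learning are Precision, Sensitivity (recall), and F1 Score [65], presented in Equations (9)–(11):

$$\text{Precision} = \frac{\text{TrueP}}{\text{TrueP} + \text{FalseP}} \qquad (9)$$

$$\text{Sensitivity} = \frac{\text{TrueP}}{\text{TrueP} + \text{FalseN}} \qquad (10)$$

$$\text{F1 Score} = 2 \cdot \frac{\text{Precision} \cdot \text{Sensitivity}}{\text{Precision} + \text{Sensitivity}} \qquad (11)$$

where TrueP is the count of true positive samples, TrueN is the count of true negative samples, FalseP is the count of false positive samples, and FalseN is the count of false negative samples from a confusion matrix.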
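The three measures can be computed directly from the confusion-matrix counts. The sketch below uses the standard definitions of precision, sensitivity (recall), and F1 score; the function names and the example counts are hypothetical and are not taken from the paper's tables.

```python
def precision(true_p, false_p):
    """TrueP / (TrueP + FalseP); 0.0 when nothing was predicted positive."""
    denom = true_p + false_p
    return true_p / denom if denom else 0.0


def sensitivity(true_p, false_n):
    """TrueP / (TrueP + FalseN), also called recall."""
    denom = true_p + false_n
    return true_p / denom if denom else 0.0


def f1_score(prec, sens):
    """Harmonic mean of precision and sensitivity."""
    denom = prec + sens
    return 2.0 * prec * sens / denom if denom else 0.0


# Hypothetical counts for one class: 9 true positives,
# 1 false positive, 3 false negatives.
p = precision(9, 1)
r = sensitivity(9, 3)
f1 = f1_score(p, r)
```

For a multi-class problem such as the four-class scenario, these counts are taken per class (one class versus the rest) and the per-class scores are then averaged; the averaging scheme is an assumption here, since the paper does not state it.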

Table 9 presents the performance metrics for the different scenarios and deep transfer models on the test data. In the first scenario, which contains four classes, Googlenet achieved the highest precision, sensitivity, and F1 score, which supports the decision to choose Googlenet as the deep transfer model. In the second scenario, which contains three classes, Alexnet achieved the highest precision and recall, while Resnet18 achieved the highest F1 score with 88.10%; overall, Alexnet had the highest testing accuracy, which supports the decision to choose Alexnet as the deep transfer model. In the third scenario, which contains two classes, all deep transfer learning models achieved the same highest precision, recall, and F1 score, which supports the decision to choose Googlenet, as it achieved the highest validation accuracy with 99.9%, as illustrated in Table 7.

6. Conclusions and Future Works

Coronaviruses are a family of viruses that cause illnesses ranging from the common cold to more severe diseases and may lead to death, according to the World Health Organization (WHO). With advances in computer algorithms, and especially artificial intelligence, detecting the 2019 novel coronavirus (COVID-19) in its early stages can help in fast recovery. In this paper, a GAN with deep transfer learning for COVID-19 detection in limited chest X-ray images is presented. The lack of benchmark datasets for COVID-19, especially for chest X-ray images, was the main motivation of this research. The main idea is to collect all the available images for COVID-19 and use the GAN network to generate more images to help in detecting the virus from the available X-ray images. The dataset in this research was collected from different sources. The number of images in the collected dataset was 307, across four classes: COVID-19, normal, pneumonia bacterial, and pneumonia virus.

Three deep transfer models were selected for investigation in this research because their architectures contain a small number of layers, which reduces the complexity, memory consumption, and running time of the proposed model. Three scenarios were tested: the first included the four classes from the dataset, the second included three classes, and the third included two classes. All the scenarios included the COVID-19 class, as detecting it was the main target of this research. In the first scenario, Googlenet was selected as the main deep transfer model, as it achieved 80.6% testing accuracy. In the second scenario, Alexnet was selected, as it achieved 85.2% testing accuracy, while in the third scenario, which included two classes (COVID-19 and normal), Googlenet was selected, as it achieved 100% testing accuracy and 99.9% validation accuracy. One direction for future work is to apply the deep models to a larger benchmark dataset.