Building Group Key Establishment on Group Theory: A Modular Approach

Abstract

1. Introduction

- Instead of the random oracle model, we use the common reference string (CRS) model. An (expected) price we pay for this is the need for a decisional assumption instead of the computational one used in [21].

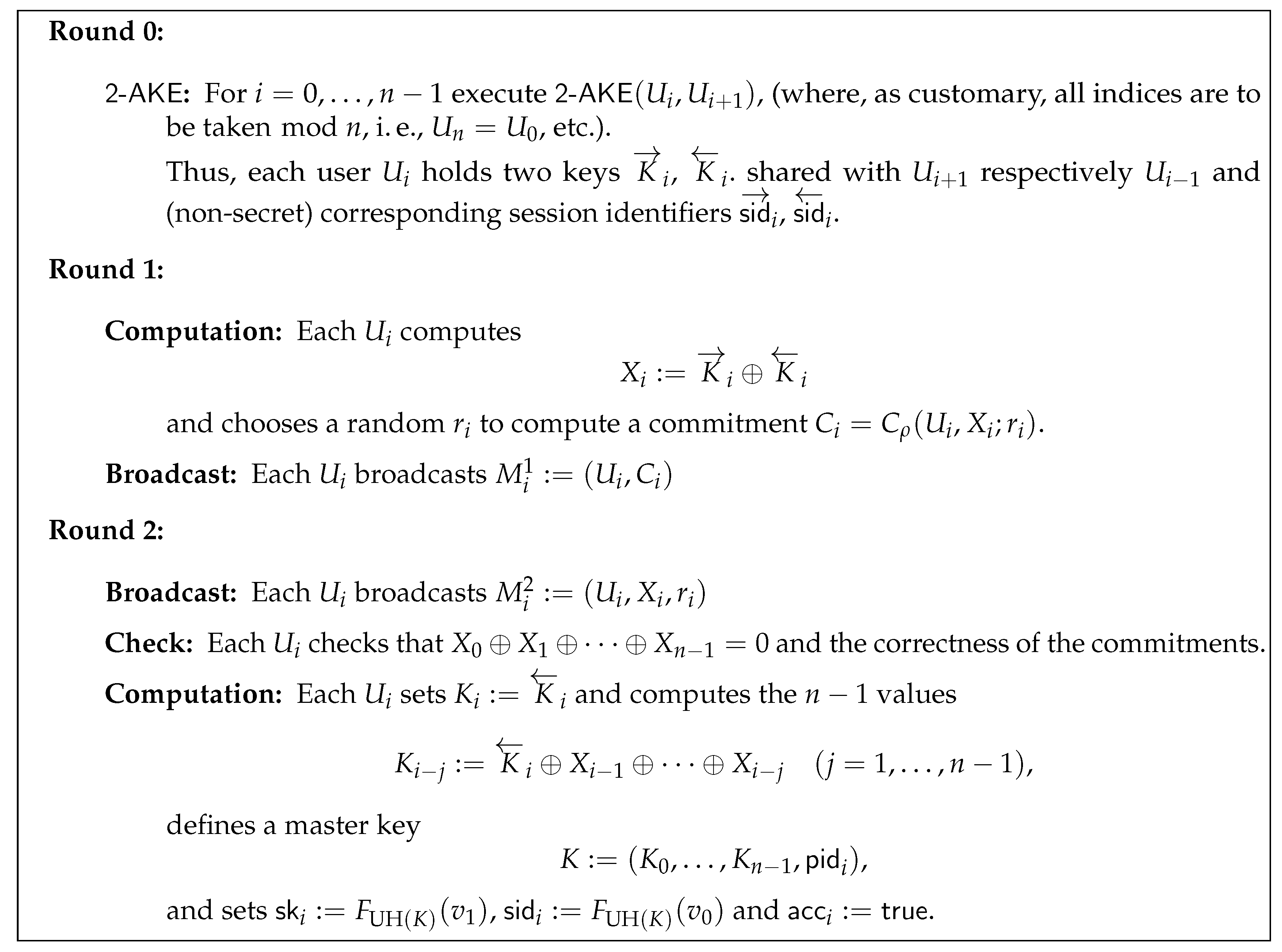

- Instead of aiming directly at group key establishment, we suggest a construction for the two-party case and then apply the protocol compiler of Abdalla et al. [22].

2. Preliminaries: Security Model and Protocol Goals

- Two values, which will be the inputs to a pseudorandom function when computing the session identifier and the session key;

- The information necessary to implement a non-interactive and non-malleable commitment scheme (see Section 3.1 for further details);

- Two elements, chosen independently and uniformly at random, each taken from a family of universal hash functions (one as needed for the compiler in [22] and one for our two-party solution as detailed in Section 3.1).
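The contents of the CRS listed above can be pictured with a minimal sketch. Everything concrete here is an assumption made for illustration: the field names `v0`, `v1`, `commitment_params`, `uh_compiler` and `uh_two_party` are hypothetical labels, HMAC-SHA256 merely stands in for the pseudorandom function family, and a toy Carter-Wegman family stands in for the universal hash functions. It is not the instantiation used in the paper.

```python
import hashlib
import hmac
import secrets
from dataclasses import dataclass
from typing import Tuple

P = 2**61 - 1  # Mersenne prime used as modulus of the toy universal hash family


@dataclass(frozen=True)
class UniversalHash:
    """One member h_{a,b}(x) = ((a*x + b) mod P) mod m of a Carter-Wegman family."""
    a: int
    b: int
    m: int

    def __call__(self, x: int) -> int:
        # Inputs are expected to be integers in the range 0 <= x < P.
        return ((self.a * x + self.b) % P) % self.m


def sample_universal_hash(m: int) -> UniversalHash:
    # Choosing (a, b) uniformly at random selects one function from the family.
    return UniversalHash(a=1 + secrets.randbelow(P - 1), b=secrets.randbelow(P), m=m)


@dataclass(frozen=True)
class CRS:
    v0: bytes                   # hypothetical label: PRF input for the session identifier
    v1: bytes                   # hypothetical label: PRF input for the session key
    commitment_params: bytes    # placeholder for the commitment scheme's public data
    uh_compiler: UniversalHash  # universal hash function for the compiler
    uh_two_party: UniversalHash # universal hash function for the two-party protocol


def setup_crs() -> CRS:
    return CRS(
        v0=b"\x00" * 16,
        v1=b"\x01" * 16,
        commitment_params=secrets.token_bytes(32),
        uh_compiler=sample_universal_hash(2**32),
        uh_two_party=sample_universal_hash(2**32),
    )


def prf(index: bytes, value: bytes) -> bytes:
    # HMAC-SHA256 is only a stand-in for a member of the PRF family.
    return hmac.new(index, value, hashlib.sha256).digest()


def derive(crs: CRS, index: bytes) -> Tuple[bytes, bytes]:
    """Return (session identifier, session key) derived from a shared PRF index."""
    return prf(index, crs.v0), prf(index, crs.v1)
```

Using two distinct public inputs lets the session identifier be published while the session key stays hidden, since both are outputs of the same pseudorandom function on different points.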

2.1. Communication Model and Adversarial Capabilities

2.1.1. Protocol Instances

- $\mathsf{used}_i^{s_i}$ indicates whether this instance is or has been used for a protocol run. The flag is set through a protocol message received by the corresponding instance due to a call to the Send oracle (see below);

- $\mathsf{state}_i^{s_i}$ stores the state information needed during the protocol execution;

- $\mathsf{term}_i^{s_i}$ indicates whether the execution has terminated;

- $\mathsf{sid}_i^{s_i}$ denotes a session identifier (which may be public) that may later be used as an identifier for the session key (in particular, the adversary is allowed to learn session identifiers);

- $\mathsf{pid}_i^{s_i}$ stores the set of user identities with which $\Pi_i^{s_i}$ aims to establish a key. This set includes $U_i$ itself;

- $\mathsf{acc}_i^{s_i}$ indicates whether the protocol instance completed the protocol successfully, that is, whether the involved user accepted the session key;

- $\mathsf{sk}_i^{s_i}$ stores a distinguished null value in the beginning. After a session key is accepted by $\Pi_i^{s_i}$, this session key replaces the null value.
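The per-instance bookkeeping described in this list can be sketched as a small record type. The field names (`used`, `state`, `term`, `sid`, `pid`, `acc`, `sk`) are our reading of the variables above, and the helper methods `start` and `accept` are hypothetical conveniences, not part of the model.

```python
from dataclasses import dataclass
from typing import Any, FrozenSet, Iterable, Optional


@dataclass
class Instance:
    user: str                          # identity of the user owning this instance
    index: int                         # instance number of that user
    used: bool = False                 # is or has been used for a protocol run
    state: Any = None                  # state needed during protocol execution
    term: bool = False                 # execution has terminated
    sid: Optional[bytes] = None        # session identifier (may be public)
    pid: FrozenSet[str] = frozenset()  # intended partners, including the user itself
    acc: bool = False                  # session key was accepted
    sk: Optional[bytes] = None         # None plays the role of the null value

    def start(self, partners: Iterable[str]) -> None:
        # Hypothetical helper: mark the instance as used and fix the partner set.
        self.used = True
        self.pid = frozenset(partners) | {self.user}

    def accept(self, sid: bytes, sk: bytes) -> None:
        # Hypothetical helper: the accepted key replaces the null value.
        self.sid, self.sk = sid, sk
        self.acc = True
        self.term = True
```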

2.1.2. Communication Network

2.1.3. Adversarial Capabilities

- Send(Ui, si, M)

- This oracle sends a message M to instance $\Pi_i^{s_i}$ and returns the message generated by this instance. If the instance was previously unused and M contains a set of user identities, the $\mathsf{used}_i^{s_i}$-flag is set, $\mathsf{pid}_i^{s_i}$ is initialized from this set, and $\Pi_i^{s_i}$ initiates the protocol; the first protocol message is returned.

- Reveal(Ui, si)

- This oracle outputs the session key of instance $\Pi_i^{s_i}$ stored in $\mathsf{sk}_i^{s_i}$.

- Test(Ui, si)

- If the corresponding session key is defined (i.e., $\mathsf{acc}_i^{s_i} = \mathtt{true}$ and $\mathsf{sk}_i^{s_i} \neq \mathtt{null}$) and instance $\Pi_i^{s_i}$ is fresh (see Definition 4), the adversary $\mathcal{A}$ can execute this oracle query at any time when being activated. Depending on the value of a secret bit b, either the session key $\mathsf{sk}_i^{s_i}$ or a uniformly chosen random session key is returned. The adversary $\mathcal{A}$ may issue an arbitrary number of Test queries, but once the oracle has returned a value for an instance $\Pi_i^{s_i}$, the same value will be returned for all instances partnered with $\Pi_i^{s_i}$ (see Definition 3).

- Corrupt(Ui)

- This oracle outputs the secret signing key of user $U_i$; it is the means by which the model captures forward secrecy.
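The Reveal, Test and Corrupt oracles above can be sketched as methods of a toy game object. This is an illustration under stated assumptions, not the formal model: `Game` and `Inst` are hypothetical names, partnering is approximated by equality of session identifier and partner set, the freshness check is omitted, the convention that b = 0 yields the real key is our choice, and Send is left out because it depends on the concrete protocol.

```python
import secrets
from dataclasses import dataclass
from typing import Dict, FrozenSet, List, Optional, Tuple


@dataclass
class Inst:
    sid: Optional[bytes] = None
    pid: FrozenSet[str] = frozenset()
    acc: bool = False
    sk: Optional[bytes] = None


class Game:
    def __init__(self, signing_keys: Dict[str, bytes]):
        self.signing_keys = signing_keys
        self.instances: Dict[Tuple[str, int], Inst] = {}
        self.b = secrets.randbits(1)  # secret bit fixed once for the whole game
        self.test_answers: Dict[Tuple[Optional[bytes], FrozenSet[str]], bytes] = {}
        self.log: List[tuple] = []    # query log, e.g. for a freshness check

    def reveal(self, user: str, s: int) -> Optional[bytes]:
        self.log.append(("Reveal", user, s))
        return self.instances[(user, s)].sk

    def corrupt(self, user: str) -> bytes:
        self.log.append(("Corrupt", user))
        return self.signing_keys[user]

    def test(self, user: str, s: int) -> Optional[bytes]:
        inst = self.instances[(user, s)]
        if not inst.acc or inst.sk is None:
            return None  # session key undefined: no answer
        session = (inst.sid, inst.pid)  # assumed stand-in for partnering
        if session not in self.test_answers:
            real = inst.sk
            answer = real if self.b == 0 else secrets.token_bytes(len(real))
            self.test_answers[session] = answer
        self.log.append(("Test", user, s))
        # Partnered instances share one cached answer.
        return self.test_answers[session]
```

Caching the answer per session is what makes repeated Test queries on partnered instances consistent, so the adversary cannot learn b simply by comparing two answers for the same session.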

2.2. Goals of a Key Establishment Protocol: Correctness, Integrity, and Security

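- For some $U_j \in \mathsf{pid}_i^{s_i}$, a query Corrupt($U_j$) was executed before a query of the form Send($U_k$, $s_k$, M) has taken place, where $U_k \in \mathsf{pid}_i^{s_i}$.

- The adversary queried Reveal($U_j$, $s_j$) with $\Pi_i^{s_i}$ and $\Pi_j^{s_j}$ being partnered.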
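A freshness check over a query log can be sketched as follows. We assume here that the two conditions above read as "a Corrupt query on an intended partner precedes a Send query to an intended partner" and "a Reveal query hit a partnered instance"; the log format, the function name `is_fresh`, and the externally supplied `partnered` predicate are all hypothetical. Treating a Reveal on the instance itself as a violation is likewise an assumption of this sketch.

```python
from typing import Callable, FrozenSet, Sequence, Tuple

InstId = Tuple[str, int]  # (user, instance number)


def is_fresh(
    inst_id: InstId,
    pid: FrozenSet[str],
    log: Sequence[tuple],
    partnered: Callable[[InstId, InstId], bool],
) -> bool:
    """Return True if neither of the two disqualifying conditions holds.

    Log entries are ("Corrupt", user), ("Send", user, s, msg) or ("Reveal", user, s),
    listed in the order in which the adversary issued them.
    """
    # Condition 1: a Corrupt on some user in pid happens before a Send to a user in pid.
    corrupted_seen = False
    for q in log:
        if q[0] == "Corrupt" and q[1] in pid:
            corrupted_seen = True
        elif q[0] == "Send" and q[1] in pid and corrupted_seen:
            return False
    # Condition 2: a Reveal on this instance or on an instance partnered with it.
    for q in log:
        if q[0] == "Reveal":
            other = (q[1], q[2])
            if other == inst_id or partnered(inst_id, other):
                return False
    return True
```

Corruptions that happen only after all relevant Send queries leave the instance fresh, which is how this style of definition expresses forward secrecy.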

3. Building on a Group-Theoretic Assumption

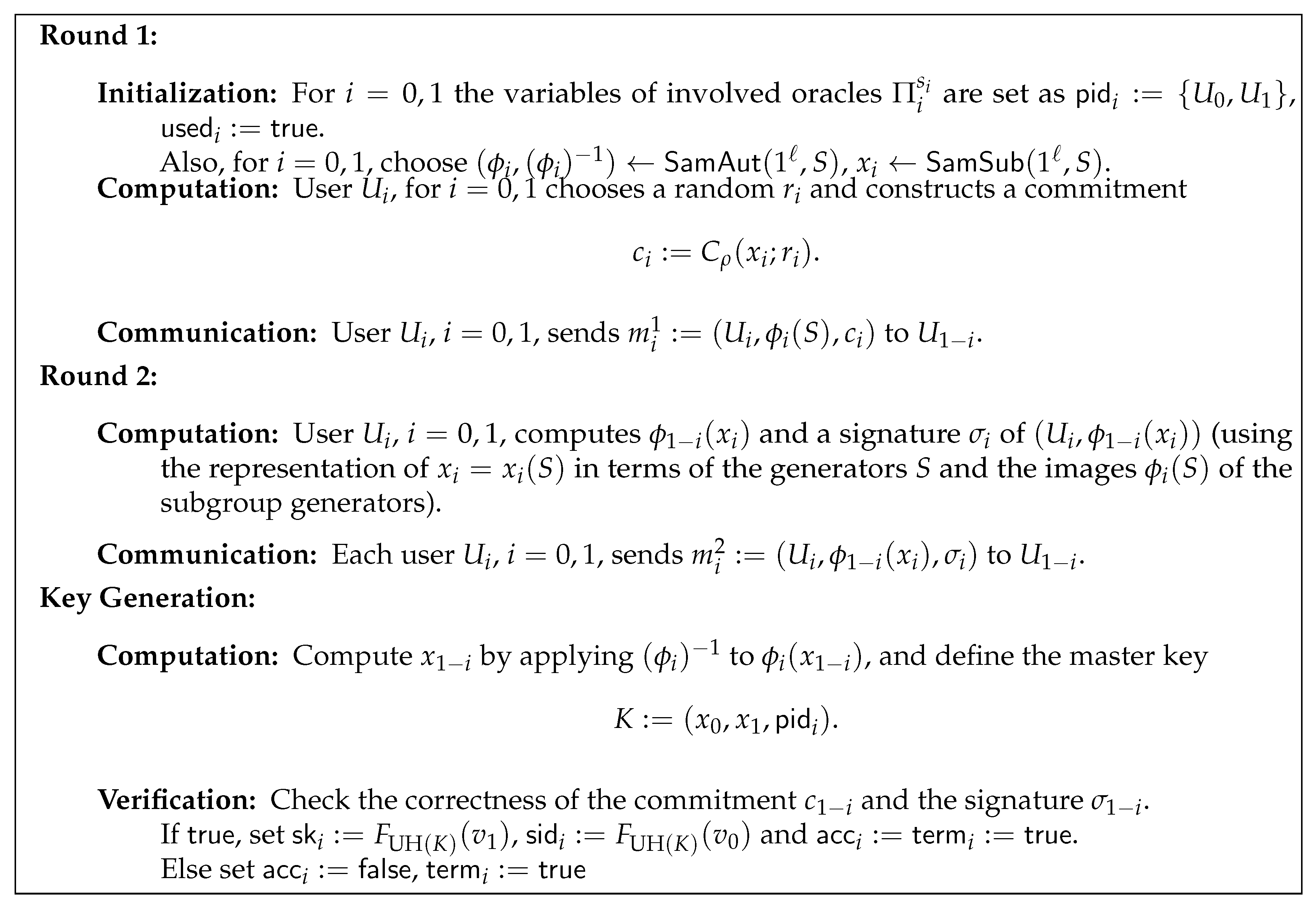

3.1. A Two-Party Solution

- A non-interactive non-malleable commitment scheme satisfying the following requirements:

  - It is perfectly binding in the sense that every commitment can be decommitted to at most one value.

  - It is non-malleable for multiple commitments. This means that an adversary who knows commitments to a polynomial-sized set of values will not be able to output commitments to a polynomial-sized set of values that are related to the former in a meaningful way. It is well known that, in the CRS model, such a commitment scheme can be implemented by means of any IND-CCA2 secure public key encryption scheme, for instance.

- A family of universal hash functions mapping triples consisting of two elements from G and one further value onto a superpolynomial-sized set. One universal hash function from this family is selected by the CRS.

- A collision-resistant pseudorandom function family (see Katz and Shin [28]). Each member of the family is identified by an index, and we fix a publicly known value such that no ppt adversary can find two different indices whose functions agree on this value. We further use a second public value, fulfilling the same requirement as the first, for deriving the session key (both values can be included in the CRS; see [28] for more details).

- The domain parameter generation algorithm is a (stateless) ppt algorithm that, upon input of the security parameter, outputs a finite sequence S of elements in G. The subgroup of G generated by S, $\langle S \rangle$, will be publicly known. Note that, for the special case of applying our framework to a DDH assumption, S specifies a public generator of a cyclic group.

- The automorphism group sampling algorithm is a (stateless) ppt algorithm that, upon input of the security parameter and a sequence S output by the domain parameter generation algorithm, returns a description of an automorphism on the subgroup $\langle S \rangle$, so that both the automorphism and its inverse can be efficiently evaluated. For example, for a cyclic group, the automorphism could be given as an exponent, or for an inner automorphism the conjugating group element could be specified.

- The subgroup sampling algorithm is a (stateless) ppt algorithm that, upon input of the security parameter and a sequence S output by the domain parameter generation algorithm, returns a word representing an element $x \in \langle S \rangle$. Intuitively, the algorithm chooses a random x so that it is hard to recognize x if we know elements of x's orbit under the sampled automorphisms. Thus, our protocol requires an explicit representation of x in terms of the generators S.
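For the cyclic special case mentioned in this list, the three sampling algorithms can be sketched as follows. The parameters (the order-11 subgroup of the integers modulo 23, generated by 4) are toy values chosen by us and offer no security; the function names, and the encoding of a word as one exponent per generator (adequate only for abelian groups), are assumptions of this sketch.

```python
import secrets
from dataclasses import dataclass
from typing import List, Sequence

P, Q = 23, 11  # toy parameters with P = 2*Q + 1; far too small for real use


def domain_params() -> List[int]:
    """Return the sequence S: here a single generator of the order-Q subgroup mod P."""
    return [4]


@dataclass(frozen=True)
class Automorphism:
    exponent: int  # h -> h**exponent is an automorphism when gcd(exponent, Q) == 1

    def apply(self, h: int) -> int:
        return pow(h, self.exponent, P)

    def inverse(self) -> "Automorphism":
        # The inverse map is exponentiation by the inverse exponent modulo Q.
        return Automorphism(pow(self.exponent, -1, Q))


def sample_automorphism(S: Sequence[int]) -> Automorphism:
    # Exponents 1..Q-1 are all invertible modulo the prime Q.
    return Automorphism(1 + secrets.randbelow(Q - 1))


def sample_element(S: Sequence[int]) -> List[int]:
    """Return a word for a random element: one exponent per generator in S."""
    return [secrets.randbelow(Q) for _ in S]


def evaluate(word: Sequence[int], generators: Sequence[int]) -> int:
    """Evaluate a word on the given generators (or on their images)."""
    x = 1
    for e, g in zip(word, generators):
        x = x * pow(g, e, P) % P
    return x
```

One use of the explicit word is visible here: whoever knows the word for x and the images of the generators under an automorphism can compute the image of x as `evaluate(word, [phi.apply(g) for g in S])`, without knowing the automorphism's description.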

- “DDH solution ⇒ DAA solution”:

- When facing the DAA problem, we obtain as input a tuple in which y is either the value prescribed by the DAA relation or has been chosen uniformly at random from the underlying group, independently of x and the automorphisms. Given a DDH oracle, we just query it with the corresponding tuple to see, with non-negligible success probability, which is the case.

- “DAA solution ⇒ DDH solution”:

- When facing the DDH problem, we obtain as input a tuple in which y is either the Diffie–Hellman value or has been chosen uniformly at random from the underlying group, independently of x and the remaining input. Choosing another random value, we can compute the input needed for a DAA attacker. Running a successful DAA attacker on this input, we immediately obtain the desired DDH attacker.
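For orientation, the standard DDH problem in a cyclic group $\langle g \rangle$ of order q can be stated in the usual exponent notation. The symbols g, q, a, b and c are ours and need not match the notation of the two reductions above. The task is to distinguish the two distributions

```latex
\[
  \bigl(g,\; g^{a},\; g^{b},\; g^{ab}\bigr)
  \quad\text{and}\quad
  \bigl(g,\; g^{a},\; g^{b},\; g^{c}\bigr),
  \qquad a, b, c \in \mathbb{Z}_q \text{ chosen uniformly at random.}
\]
```

When automorphisms of the cyclic group are given by exponents, applying the automorphism with exponent a to the element $g^{b}$ yields $g^{ab}$, which is why a decisional assumption about automorphism images specializes to a DDH-type statement in this setting.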

3.2. Security Analysis for the Two-Party Case: Proof of Proposition 1

4. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

References

1. Anshel, I.; Anshel, M.; Goldfeld, D. An Algebraic Method for Public-Key Cryptography. Math. Res. Lett. 1999, 6, 287–291.

2. Ko, K.H.; Lee, S.J.; Cheon, J.H.; Han, J.W.; Kang, J.S.; Park, C. New Public-Key Cryptosystem Using Braid Groups. In Proceedings of the Advances in Cryptology – CRYPTO 2000, Santa Barbara, CA, USA, 20–24 August 2000; pp. 166–183.

3. Anshel, I.; Anshel, M.; Fisher, B.; Goldfeld, D. New Key Agreement Protocols in Braid Group Cryptography. In Proceedings of the Topics in Cryptology – CT-RSA 2001, San Francisco, CA, USA, 8–12 April 2001; pp. 13–27.

4. Grigoriev, D.; Ponomarenko, I. Constructions in public-key cryptography over matrix groups. In Contemporary Mathematics: Algebraic Methods in Cryptography; American Mathematical Society: Providence, RI, USA, 2006; Volume 418, pp. 103–119.

5. Lee, H.K.; Lee, H.S.; Lee, Y.R. An Authenticated Group Key Agreement Protocol on Braid groups. Cryptology ePrint Archive: Report 2003/018. 2003. Available online: http://eprint.iacr.org/2003/018 (accessed on 1 December 2019).

6. Shpilrain, V.; Ushakov, A. Thompson’s Group and Public Key Cryptography. In Proceedings of the ACNS 2005 – Third International Conference on Applied Cryptography and Network Security, New York, NY, USA, 7–10 June 2005; Volume 3531, pp. 151–163.

7. Shpilrain, V.; Zapata, G. Combinatorial group theory and public key cryptography. Appl. Algebra Eng. Commun. Comput. 2006, 17, 291–302.

8. Shpilrain, V.; Ushakov, A. A new key exchange protocol based on the decomposition problem. In Contemporary Mathematics: Algebraic Methods in Cryptography; American Mathematical Society: Providence, RI, USA, 2006; Volume 418, pp. 161–167.

9. Anshel, I.; Anshel, M.; Goldfeld, D.; Lemieux, S. Key agreement, the Algebraic Eraser™, and lightweight cryptography. In Contemporary Mathematics: Algebraic Methods in Cryptography; American Mathematical Society: Providence, RI, USA, 2006; Volume 418, pp. 1–34.

10. Anshel, I.; Atkins, D.; Goldfeld, D.; Gunnells, P.E. Ironwood Meta Key Agreement and Authentication Protocol. arXiv 2017, arXiv:1702.02450.

11. Bellare, M.; Rogaway, P. Entity Authentication and Key Distribution. In Proceedings of the CRYPTO 1993 – 13th Annual International Cryptology Conference on Advances in Cryptology, Santa Barbara, CA, USA, 22–26 August 1993; Volume 773, pp. 232–249.

12. Bellare, M.; Canetti, R.; Krawczyk, H. A Modular Approach to the Design and Analysis of Authentication and Key Exchange Protocols. In Proceedings of the 30th Annual ACM Symposium on Theory of Computing STOC, Dallas, TX, USA, 24–26 May 1998; pp. 419–428.

13. Shoup, V. On Formal Models for Secure Key Exchange (Version 4). Revision of IBM Research Report RZ 3120 (April 1999). 1999. Available online: http://www.shoup.net/papers/skey.pdf (accessed on 1 December 2019).

14. Bellare, M.; Pointcheval, D.; Rogaway, P. Authenticated Key Exchange Secure Against Dictionary Attacks. In Proceedings of the EUROCRYPT 2000 – Advances in Cryptology, Bruges, Belgium, 14–18 May 2000; Volume 1807, pp. 139–155.

15. Bresson, E.; Chevassut, O.; Pointcheval, D.; Quisquater, J.J. Provably Authenticated Group Diffie–Hellman Key Exchange. In Proceedings of the 8th ACM Conference on Computer and Communications Security; Samarati, P., Ed.; ACM Press: New York, NY, USA, 2001; pp. 255–264.

16. Canetti, R.; Krawczyk, H. Analysis of Key-Exchange Protocols and Their Use for Building Secure Channels. In Advances in Cryptology – EUROCRYPT 2001; Lecture Notes in Computer Science; Springer: Berlin/Heidelberg, Germany, 2001; Volume 2045, pp. 453–474.

17. Ben-Zvi, A.; Blackburn, S.R.; Tsaban, B. A Practical Cryptanalysis of the Algebraic Eraser. In Advances in Cryptology – CRYPTO 2016 Proceedings, Part I; Lecture Notes in Computer Science; Robshaw, M., Katz, J., Eds.; Springer: Berlin/Heidelberg, Germany, 2016; Volume 9814, pp. 179–189.

18. Cramer, R.; Shoup, V. Universal Hash Proofs and a Paradigm for Adaptive Chosen Ciphertext Secure Public-Key Encryption. In Advances in Cryptology – EUROCRYPT 2002; Lecture Notes in Computer Science; Knudsen, L., Ed.; Springer: Berlin/Heidelberg, Germany, 2002; Volume 2332, pp. 45–64.

19. González Vasco, M.I.; Martínez, C.; Steinwandt, R.; Villar, J.L. A new Cramer-Shoup like methodology for group based provably secure schemes. In Proceedings of the 2nd Theory of Cryptography Conference (TCC 2005); Lecture Notes in Computer Science; Kilian, J., Ed.; Springer: Berlin/Heidelberg, Germany, 2005; Volume 3378, pp. 495–509.

20. Catalano, D.; Pointcheval, D.; Pornin, T. IPAKE: Isomorphisms for Password-based Authenticated Key Exchange. In Advances in Cryptology – CRYPTO 2004; Lecture Notes in Computer Science; Franklin, M.K., Ed.; Springer: Berlin/Heidelberg, Germany, 2004; Volume 3152, pp. 477–493.

21. Bohli, J.M.; Glas, B.; Steinwandt, R. Towards Provably Secure Group Key Agreement Building on Group Theory. In Proceedings of VietCrypt 2006; Lecture Notes in Computer Science; Springer: Berlin/Heidelberg, Germany, 2006; Volume 4341, pp. 322–336.

22. Abdalla, M.; Bohli, J.M.; González Vasco, M.I.; Steinwandt, R. (Password) Authenticated Key Establishment: From 2-Party to Group. In Proceedings of the 4th Theory of Cryptography Conference TCC 2007; Lecture Notes in Computer Science; Vadhan, S.P., Ed.; Springer: Berlin/Heidelberg, Germany, 2007; Volume 4392, pp. 499–514.

23. Burmester, M.; Desmedt, Y. A Secure and Efficient Conference Key Distribution System. In Advances in Cryptology – EUROCRYPT’94; Lecture Notes in Computer Science; Santis, A.D., Ed.; Springer: Berlin/Heidelberg, Germany, 1995; Volume 950, pp. 275–286.

24. Gennaro, R.; Lindell, Y. A Framework for Password-Based Authenticated Key Exchange. Cryptology ePrint Archive: Report 2003/032. 2003. Available online: http://eprint.iacr.org/2003/032 (accessed on 1 December 2019).

25. Gennaro, R.; Lindell, Y. A Framework for Password-Based Authenticated Key Exchange (Extended Abstract). In Advances in Cryptology – EUROCRYPT 2003; Lecture Notes in Computer Science; Biham, E., Ed.; Springer: Berlin/Heidelberg, Germany, 2003; Volume 2656, pp. 524–543.

26. Bohli, J.M.; González Vasco, M.I.; Steinwandt, R. Secure group key establishment revisited. Int. J. Inf. Secur. 2007, 6, 243–254.

27. Bohli, J.M.; González Vasco, M.I.; Steinwandt, R. Password-authenticated group key establishment from smooth projective hash functions. Int. J. Appl. Math. Comput. Sci. 2019, 29, 797–815. Available online: http://eprint.iacr.org/2006/214 (accessed on 1 December 2019).

28. Katz, J.; Shin, J.S. Modeling insider attacks on group key-exchange protocols. In Proceedings of the 12th ACM Conference on Computer and Communications Security (CCS 2005); Atluri, V., Meadows, C.A., Juels, A., Eds.; ACM: New York, NY, USA, 2005; pp. 180–189. Available online: http://eprint.iacr.org/2005/163 (accessed on 1 December 2019).

29. Nam, J.; Paik, J.; Won, D. A security weakness in Abdalla et al.’s generic construction of a group key exchange protocol. Inf. Sci. 2011, 181, 234–238.

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Bohli, J.-M.; González Vasco, M.I.; Steinwandt, R. Building Group Key Establishment on Group Theory: A Modular Approach. Symmetry 2020, 12, 197. https://doi.org/10.3390/sym12020197
