Development of Optimal Eighth Order Derivative-Free Methods for Multiple Roots of Nonlinear Equations

Abstract

1. Introduction

2. Development of Method

Some Particular Forms of Proposed Family

- (1) Let us consider the following function, which satisfies the conditions of Theorem 1. Then, the corresponding eighth-order iterative scheme is given by

- (2) Next, consider the rational function satisfying the conditions of Theorem 1. Then, the corresponding eighth-order iterative scheme is given by

- (3) Consider another rational function satisfying the conditions of Theorem 1. Then, the corresponding eighth-order iterative scheme is given by

- (4) Next, we suggest another rational function satisfying the conditions of Theorem 1. Then, the corresponding eighth-order iterative scheme is given by

- (5) Lastly, we consider yet another function satisfying the conditions of Theorem 1. Then, the corresponding eighth-order method is given by

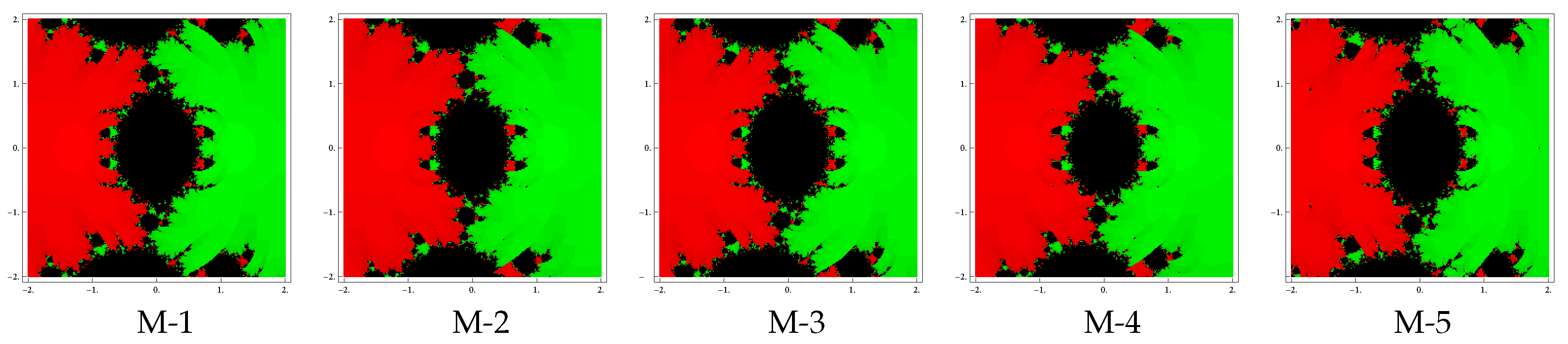

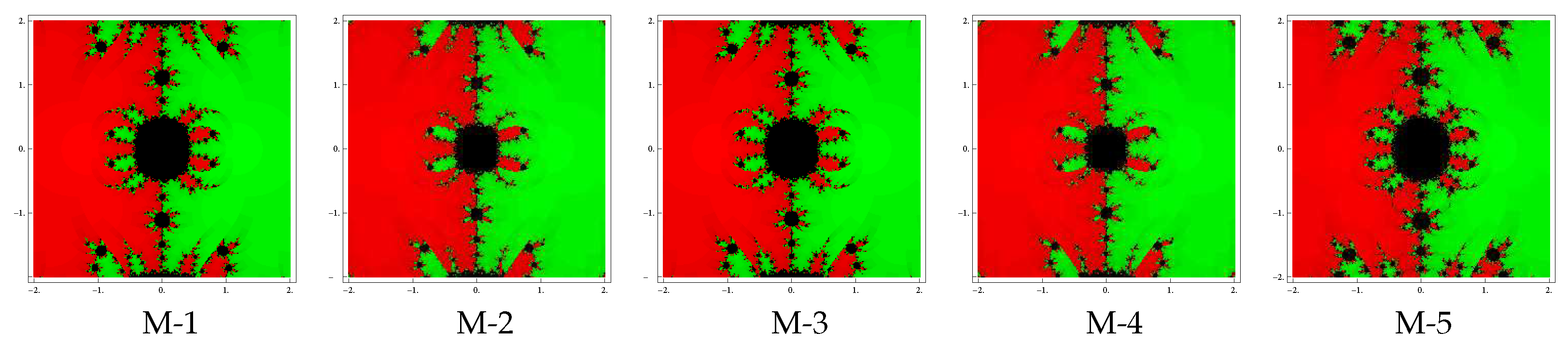

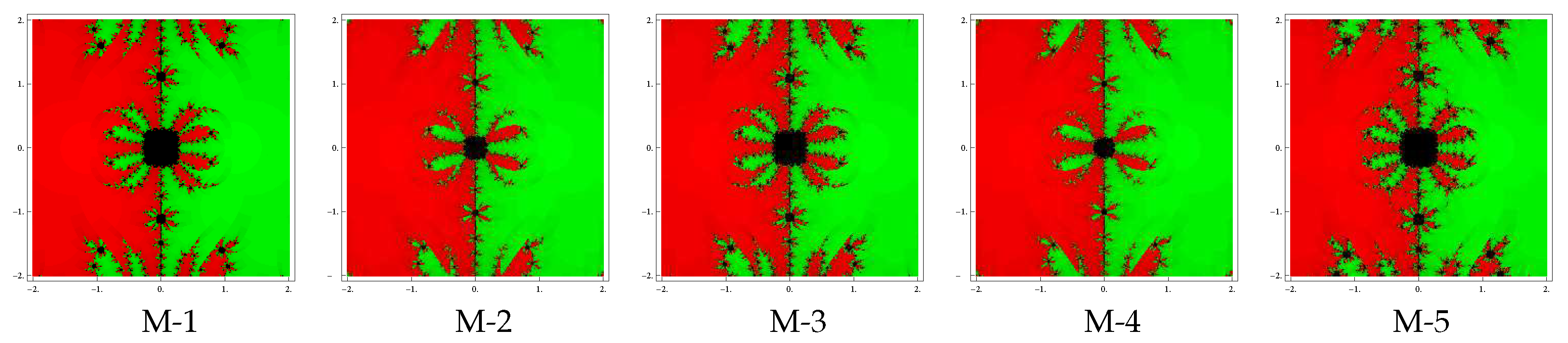

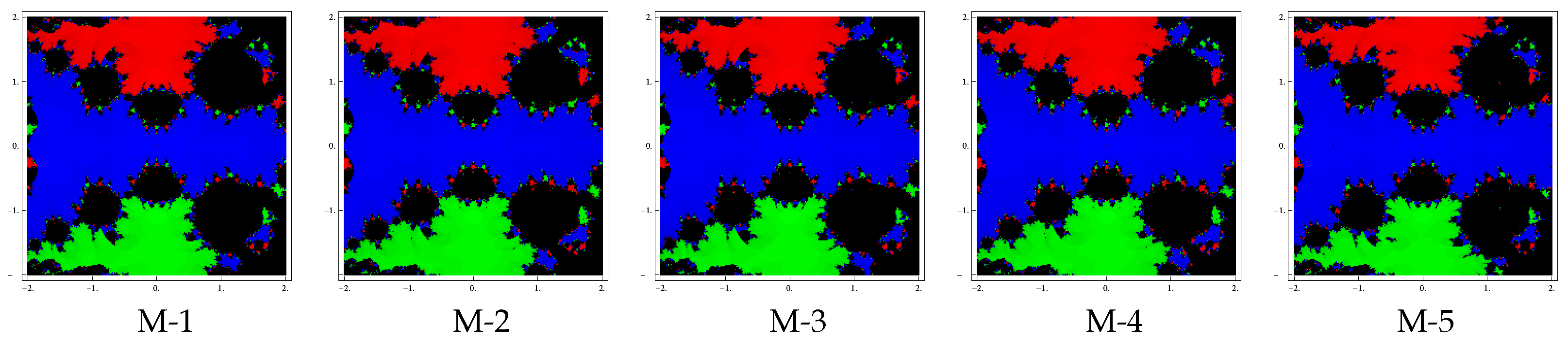

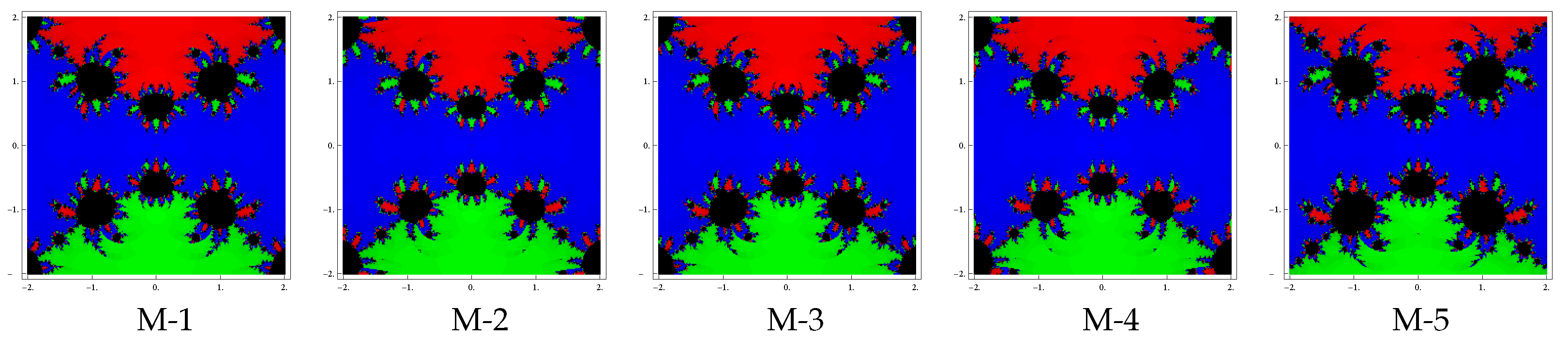

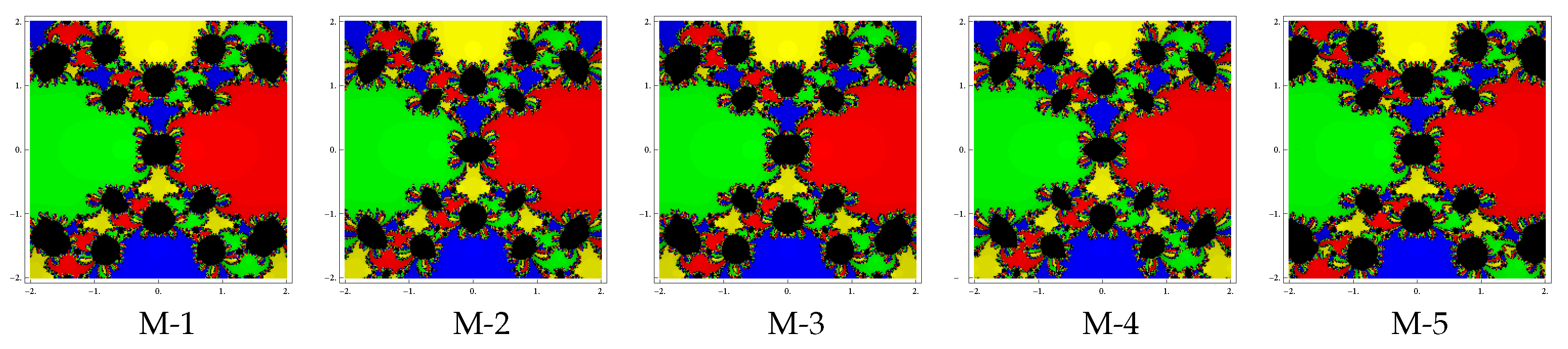

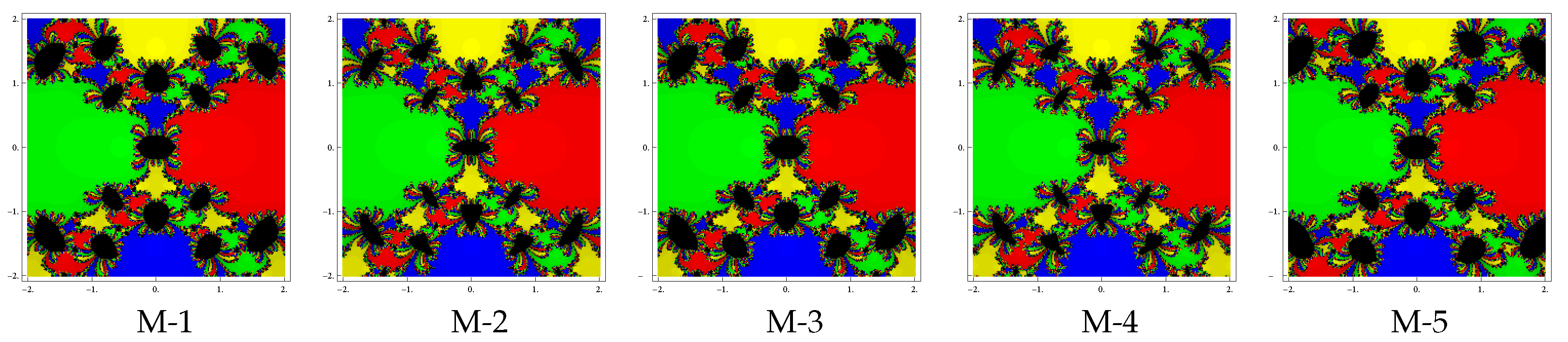

3. Complex Dynamics of Methods

4. Numerical Results

a=2; b=3.5; k=1; x0=0.5*(a+b+Sign[f[a]]*NIntegrate[Tanh[k *f[x]],{x,a,b}])
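The Mathematica line above evaluates an initial approximation of the form x0 = ½[a + b + sgn(f(a)) ∫ tanh(k f(x)) dx], with the integral taken over [a, b]. As a rough illustration, the same expression can be sketched in Python. The test function `f`, the helper names `simpson` and `initial_guess`, and the composite Simpson rule standing in for `NIntegrate` are all assumptions made for this example and are not taken from the original computation.

```python
import math


def simpson(g, a, b, n=2000):
    """Composite Simpson rule on [a, b] with n (even) subintervals."""
    if n % 2:
        n += 1
    h = (b - a) / n
    total = g(a) + g(b)
    for i in range(1, n):
        # Odd interior nodes get weight 4, even interior nodes weight 2.
        total += (4 if i % 2 else 2) * g(a + i * h)
    return total * h / 3


def initial_guess(f, a, b, k=1.0):
    """x0 = 0.5 * (a + b + sign(f(a)) * integral_a^b tanh(k * f(x)) dx)."""
    fa = f(a)
    sign_fa = math.copysign(1.0, fa) if fa != 0 else 0.0
    integral = simpson(lambda x: math.tanh(k * f(x)), a, b)
    return 0.5 * (a + b + sign_fa * integral)


# Hypothetical test function with a simple zero at x = 3 (not from the paper).
def f(x):
    return x - 3.0


x0 = initial_guess(f, 2.0, 3.5, k=1.0)         # same a, b, k as the line above
x0_sharp = initial_guess(f, 2.0, 3.5, k=50.0)  # larger k, for comparison
print(x0, x0_sharp)
```

For this test function, a larger `k` pushes tanh(k f(x)) toward sgn(f(x)), so the estimate moves toward the sign change of `f` inside [a, b].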

5. Conclusions

Author Contributions

Funding

Conflicts of Interest


| Methods | k | COC | CPU-Time |
|---|---|---|---|
| ZM−1 | 4 | 8.000 | 0.608 |
| ZM−2 | 4 | 8.000 | 0.671 |
| BM−1 | 5 | 3.000 | 0.687 |
| BM−2 | 5 | 3.000 | 0.702 |
| BM−3 | 4 | 8.000 | 0.640 |
| M−1 | 4 | 8.000 | 0.452 |
| M−2 | 4 | 8.000 | 0.453 |
| M−3 | 4 | 8.000 | 0.468 |
| M−4 | 4 | 8.000 | 0.437 |
| M−5 | 4 | 8.000 | 0.421 |

| Methods | k | COC | CPU-Time |
|---|---|---|---|
| ZM−1 | 4 | 8.000 | 0.140 |
| ZM−2 | 4 | 8.000 | 0.187 |
| BM−1 | 5 | 3.000 | 0.140 |
| BM−2 | 5 | 3.000 | 0.140 |
| BM−3 | 4 | 8.000 | 0.125 |
| M−1 | 4 | 8.000 | 0.125 |
| M−2 | 4 | 8.000 | 0.110 |
| M−3 | 4 | 8.000 | 0.125 |
| M−4 | 4 | 8.000 | 0.109 |
| M−5 | 4 | 8.000 | 0.093 |

| Methods | | k | COC | CPU-Time |
|---|---|---|---|---|
| ZM−1 | 0 | 3 | 7.995 | 2.355 |
| ZM−2 | 0 | 3 | 7.986 | 2.371 |
| BM−1 | | 4 | 3.000 | 2.683 |
| BM−2 | | 4 | 3.000 | 2.777 |
| BM−3 | 0 | 3 | 8.002 | 2.324 |
| M−1 | 0 | 3 | 7.993 | 1.966 |
| M−2 | 0 | 3 | 7.996 | 1.982 |
| M−3 | 0 | 3 | 7.993 | 1.965 |
| M−4 | 0 | 3 | 7.996 | 1.981 |
| M−5 | 0 | 3 | 7.992 | 1.903 |

| Methods | k | COC | CPU-Time |
|---|---|---|---|
| ZM−1 | 6 | 8.000 | 0.124 |
| ZM−2 | Fails | – | – |
| BM−1 | 7 | 3.000 | 0.109 |
| BM−2 | 7 | 3.000 | 0.110 |
| BM−3 | 5 | 7.988 | 0.109 |
| M−1 | 5 | 8.000 | 0.084 |
| M−2 | 6 | 8.000 | 0.093 |
| M−3 | 5 | 8.000 | 0.095 |
| M−4 | 6 | 8.000 | 0.094 |
| M−5 | 5 | 8.000 | 0.089 |

| Methods | | k | COC | CPU-Time |
|---|---|---|---|---|
| ZM−1 | 0 | 3 | 7.983 | 0.702 |
| ZM−2 | | 4 | 8.000 | 0.873 |
| BM−1 | | 5 | 3.000 | 0.920 |
| BM−2 | | 5 | 3.000 | 0.795 |
| BM−3 | 0 | 3 | 7.989 | 0.671 |
| M−1 | 0 | 3 | 7.982 | 0.593 |
| M−2 | 0 | 3 | 7.990 | 0.608 |
| M−3 | 0 | 3 | 7.982 | 0.562 |
| M−4 | 0 | 3 | 7.989 | 0.530 |
| M−5 | 0 | 3 | 7.982 | 0.499 |

| Methods | | k | COC | CPU-Time |
|---|---|---|---|---|
| ZM−1 | | 4 | 8.000 | 1.217 |
| ZM−2 | | 4 | 7.998 | 1.357 |
| BM−1 | 0 | 3 | 8.000 | 0.874 |
| BM−2 | 0 | 3 | 8.000 | 0.889 |
| BM−3 | | 4 | 8.000 | 1.201 |
| M−1 | 0 | 3 | 8.000 | 0.448 |
| M−2 | 0 | 3 | 8.000 | 0.452 |
| M−3 | 0 | 3 | 8.000 | 0.460 |
| M−4 | 0 | 3 | 8.000 | 0.468 |
| M−5 | 0 | 3 | 8.000 | 0.436 |
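The COC column in the tables reports a computational order of convergence. The defining formula is not reproduced in this section, so the sketch below assumes one commonly used estimate, COC ≈ ln|(x_{k+1} − α)/(x_k − α)| / ln|(x_k − α)/(x_{k−1} − α)|, where α is the root. It is applied to plain Newton iterates for a hypothetical test equation x² − 2 = 0, chosen only because the expected order (two) is well known. It does not reproduce any method or entry from the tables, and the names `coc` and `newton` are illustrative.

```python
import math


def coc(iterates, root):
    """Estimate the convergence order from the last three iterates and the root."""
    e0, e1, e2 = (abs(x - root) for x in iterates[-3:])
    return math.log(e2 / e1) / math.log(e1 / e0)


def newton(f, df, x, steps):
    """Plain Newton iteration; returns every iterate, including the start."""
    xs = [x]
    for _ in range(steps):
        x = x - f(x) / df(x)
        xs.append(x)
    return xs


# Hypothetical test problem (not from the paper): simple root sqrt(2) of x^2 - 2.
xs = newton(lambda x: x * x - 2.0, lambda x: 2.0 * x, 1.5, 3)
order = coc(xs, math.sqrt(2.0))
print(round(order, 2))
```

In double precision this estimate only resolves low-order methods. For eighth-order schemes the errors drop below round-off after very few steps, so a multiple-precision setting would be needed to obtain values like those tabulated.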

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Sharma, J.R.; Kumar, S.; Argyros, I.K. Development of Optimal Eighth Order Derivative-Free Methods for Multiple Roots of Nonlinear Equations. Symmetry 2019, 11, 766. https://doi.org/10.3390/sym11060766
