3.2. Feature Extraction and the Target Emotion Classes

A band-pass Butterworth filter with cutoff frequencies of 4.0 and 45.0 Hz was used to remove noise from the EEG data [37]. Independent component analysis (ICA) was then employed to eliminate muscular artifacts [38]. Each 63-s trial was split into three segments: a 3-s baseline segment, a 6-s validating segment (10% of the 60-s stimulus period), and a 54-s working segment. The validating segment was used to rank features and avoid overfitting in the M-TRFE model, while the working segment was used to select features and to train and test the classifier. The baseline segment was discarded because it is recorded before the subject watches the video.
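The filtering and segmentation steps can be sketched with SciPy. This is a minimal sketch, not the authors' code: it assumes a 128 Hz sampling rate (that of the DEAP preprocessed release), a fourth-order zero-phase filter, and that the validating segment directly follows the baseline. The filter order and segment order are not stated in the text, and the ICA step is omitted.

```python
import numpy as np
from scipy.signal import butter, filtfilt

FS = 128  # assumed sampling rate in Hz (DEAP preprocessed data)


def bandpass(eeg, low=4.0, high=45.0, fs=FS, order=4):
    """Zero-phase Butterworth band-pass filter along the time axis (last axis)."""
    b, a = butter(order, [low, high], btype="bandpass", fs=fs)
    return filtfilt(b, a, eeg, axis=-1)


def split_trial(eeg, fs=FS, baseline_s=3, validating_s=6):
    """Split a (channels, samples) trial into baseline, validating and working segments.

    Assumes the validating segment immediately follows the baseline; the working
    segment is whatever remains (54 s for a 63-s trial).
    """
    b_end = baseline_s * fs
    v_end = b_end + validating_s * fs
    return eeg[:, :b_end], eeg[:, b_end:v_end], eeg[:, v_end:]


# Usage on synthetic data: 11 channels x 63 s.
rng = np.random.default_rng(0)
trial = rng.standard_normal((11, 63 * FS))
baseline, validating, working = split_trial(bandpass(trial))
print(baseline.shape, validating.shape, working.shape)  # (11, 384) (11, 768) (11, 6912)
```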

In this work, 11 of the 32 channels were used: F3, F4, Fz, C3, C4, Cz, P3, P4, Pz, O1 and O2. This choice follows the channel selection in the previous work of Zhang et al. [39]. Overall, 137 EEG features were extracted, consisting of 60 frequency-domain features and 77 time-domain features. The frequency-domain features (44 power features and 16 power-difference features) were computed with the fast Fourier transform. For each channel, power was computed in four frequency bands, i.e., theta (4–8 Hz), alpha (8–12 Hz), beta (12–30 Hz) and gamma (30–45 Hz), giving 11 × 4 = 44 power features. Power-difference features capture the variation in cerebral activity between the left and right cortical areas. Four channel pairs, F4-F3, C4-C3, P4-P3 and O2-O1, were used for power-difference extraction, each pair contributing one feature per band (4 × 4 = 16 features). For each channel, seven temporal features were computed: mean, variance, zero-crossing rate, Shannon entropy, spectral entropy, kurtosis, and skewness (11 × 7 = 77 features). All features were standardized to zero mean and unit standard deviation. Detailed descriptions of the features are given in Table 1.

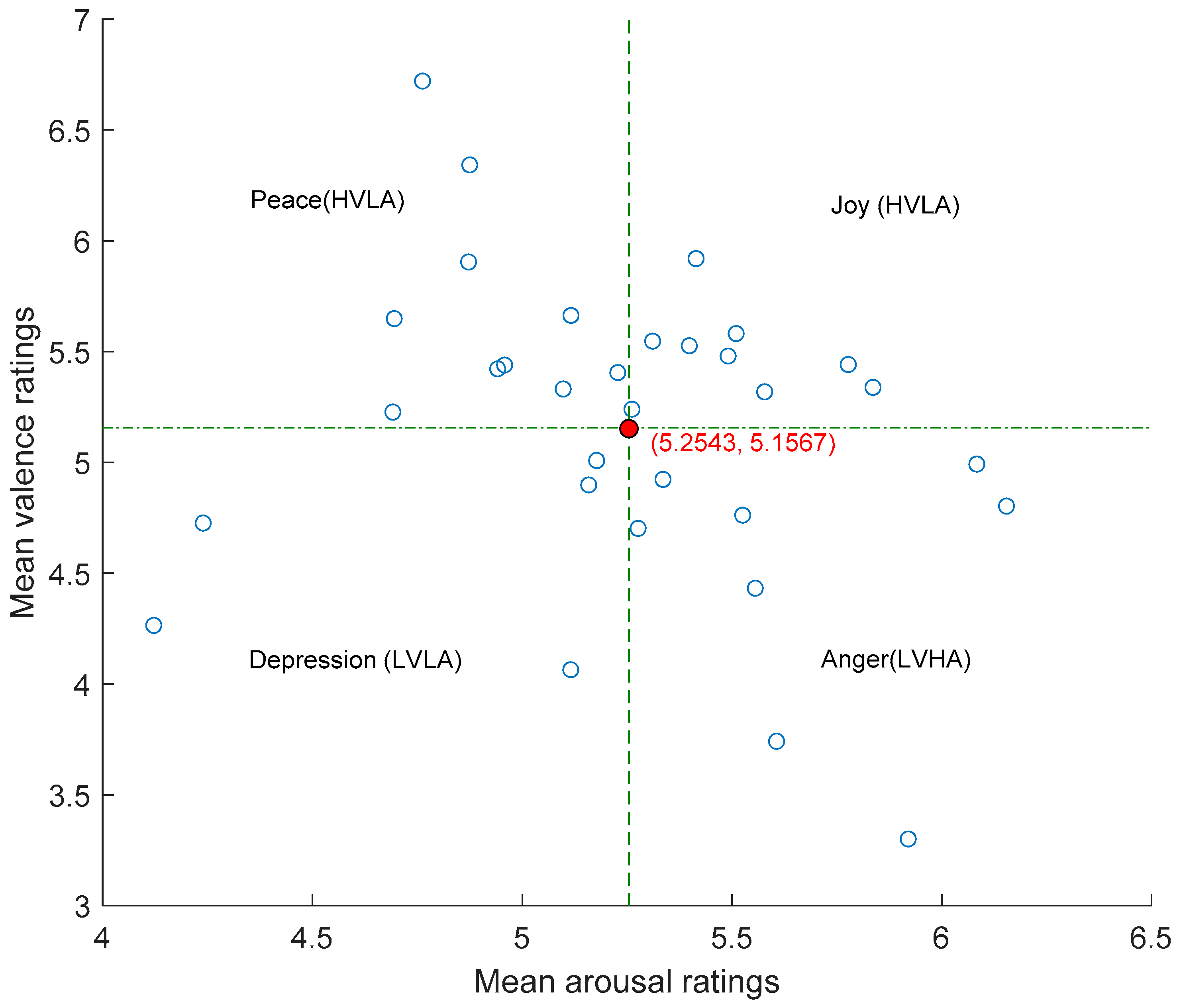
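The assembly of the 137-dimensional feature vector can be sketched as follows. This is a hedged illustration with hypothetical helper names: band power is taken as the mean FFT power inside each band, the power difference is right-hemisphere minus left-hemisphere band power (following the F4-F3 naming), Shannon entropy is computed from a 32-bin amplitude histogram, and features are computed over the whole segment. The exact definitions are those of Table 1 and may differ.

```python
import numpy as np
from scipy.stats import kurtosis, skew

FS = 128  # assumed sampling rate in Hz
CHANNELS = ["F3", "F4", "Fz", "C3", "C4", "Cz", "P3", "P4", "Pz", "O1", "O2"]
BANDS = [(4, 8), (8, 12), (12, 30), (30, 45)]  # theta, alpha, beta, gamma (Hz)
PAIRS = [("F4", "F3"), ("C4", "C3"), ("P4", "P3"), ("O2", "O1")]


def band_powers(x, fs=FS):
    """Mean FFT power of a 1-D signal in each band [lo, hi)."""
    freqs = np.fft.rfftfreq(x.size, d=1.0 / fs)
    power = np.abs(np.fft.rfft(x)) ** 2 / x.size
    return np.array([power[(freqs >= lo) & (freqs < hi)].mean() for lo, hi in BANDS])


def entropy(p):
    """Shannon entropy (bits) of a non-negative vector after normalization."""
    p = p[p > 0] / p.sum()
    return float(-np.sum(p * np.log2(p)))


def temporal_features(x, n_bins=32):
    """Mean, variance, zero-crossing rate, Shannon entropy, spectral entropy, kurtosis, skewness."""
    zcr = np.mean(np.diff(np.signbit(x).astype(int)) != 0)             # fraction of sign changes
    shannon = entropy(np.histogram(x, bins=n_bins)[0].astype(float))  # amplitude histogram (assumed)
    spectral = entropy(np.abs(np.fft.rfft(x)) ** 2)                    # normalized power spectrum
    return np.array([x.mean(), x.var(), zcr, shannon, spectral, kurtosis(x), skew(x)])


def extract_features(seg, fs=FS):
    """seg: (11, samples) array in CHANNELS order -> 137-dim feature vector."""
    idx = {name: i for i, name in enumerate(CHANNELS)}
    bp = np.array([band_powers(ch, fs) for ch in seg])                       # 11 x 4 = 44
    diff = np.array([bp[idx[right]] - bp[idx[left]] for right, left in PAIRS])  # 4 x 4 = 16
    temp = np.array([temporal_features(ch) for ch in seg])                    # 11 x 7 = 77
    return np.concatenate([bp.ravel(), diff.ravel(), temp.ravel()])


def standardize(X):
    """Z-score each feature (column) across samples: mean 0, s.d. 1."""
    return (X - X.mean(axis=0)) / X.std(axis=0)


rng = np.random.default_rng(0)
segments = rng.standard_normal((5, 11, 6 * FS))  # five synthetic 6-s, 11-channel segments
X = standardize(np.stack([extract_features(s) for s in segments]))
print(X.shape)  # (5, 137)
```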

Emotion classification based on supervised learning requires predetermined emotion labels. In the DEAP database, participants used self-assessment manikins to rate their valence and arousal levels on a scale from 1 (lowest) to 9 (highest). A threshold is conventionally set to divide the ratings into high and low valence or arousal classes. Here, the threshold was determined in a participant-generic manner: the mean of the valence (or arousal) ratings over all 32 subjects and all trials was computed. Denoting the 40 arousal ratings of subject $j$ by $a_1^{(j)}, a_2^{(j)}, \dots, a_{40}^{(j)}$, the arousal threshold point $c_1$ is computed as follows:

$$c_1 = \frac{1}{32 \times 40}\sum_{j=1}^{32}\sum_{i=1}^{40} a_i^{(j)} \tag{1}$$

The same procedure applied to the valence ratings gives the valence threshold point $c_2$. The resulting thresholds were $c_1 = 5.2543$ for arousal and $c_2 = 5.1567$ for valence. Ratings above $c_1$ were assigned to the high-arousal state and ratings above $c_2$ to the high-valence state; the remaining ratings were assigned to the corresponding low states.
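Equation (1) and the high/low split amount to a grand mean followed by a comparison. A minimal NumPy sketch on toy ratings (the function names are hypothetical; DEAP itself gives a 32 × 40 rating matrix per dimension):

```python
import numpy as np


def grand_mean_threshold(ratings):
    """Participant-generic threshold: mean rating over all subjects and trials (Equation (1))."""
    return float(np.mean(ratings))


def binarize(ratings, threshold):
    """1 = high state (rating above the threshold), 0 = low state."""
    return (np.asarray(ratings) > threshold).astype(int)


# Toy arousal ratings for 2 subjects x 3 trials.
arousal = np.array([[2.0, 7.0, 5.0],
                    [9.0, 4.0, 6.0]])
c1 = grand_mean_threshold(arousal)    # (2+7+5+9+4+6) / 6 = 5.5
high_arousal = binarize(arousal, c1)  # [[0, 1, 0], [1, 0, 1]]
```

Because every subject rated the same number of trials, this grand mean equals the mean of the per-subject means.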

The V-A plane was thus split into four quadrants: HVHA (high valence, high arousal), HVLA (high valence, low arousal), LVHA (low valence, high arousal), and LVLA (low valence, low arousal), as illustrated in Figure 1. Finally, the four emotions of joy, peace, depression and anger were assigned to their respective quadrants of the V-A plane.
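Combining the two binary labels into a quadrant is a table lookup. A short sketch (hypothetical names; the emotion attached to each quadrant follows Figure 1 and is not hard-coded here):

```python
import numpy as np

# Rows: valence (0 = low, 1 = high); columns: arousal (0 = low, 1 = high).
QUADRANTS = np.array([["LVLA", "LVHA"],
                      ["HVLA", "HVHA"]])


def quadrant(high_valence, high_arousal):
    """Map binary valence/arousal labels to V-A quadrant names."""
    return QUADRANTS[np.asarray(high_valence), np.asarray(high_arousal)]


labels = quadrant([1, 1, 0, 0], [1, 0, 1, 0])
print(labels.tolist())  # ['HVHA', 'HVLA', 'LVHA', 'LVLA']
```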

3.3. Multiple Transferable Feature Elimination Based on LSSVM

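M-TRFE was built on LSSVM because LSSVM trains faster and is better at avoiding overfitting. The principle of feature selection is as follows. Given the training set $\{(\mathbf{x}_i, y_i)\}_{i=1}^{N}$ with input data $\mathbf{x}_i \in \mathbb{R}^{L}$ and corresponding output labels $y_i \in \{-1, +1\}$, a nonlinear mapping $\varphi(\cdot)$ projects the inputs into a higher-dimensional feature space, in which the optimal decision function is sought:

$$f(\mathbf{x}) = \mathbf{w}^{T}\varphi(\mathbf{x}) + b \tag{2}$$

In Equation (2), $\mathbf{w}$ is the weight vector of the separating hyperplane, $b$ is the bias, and $f(\mathbf{x})$ is the linear estimation function in the feature space. To minimize the structural risk, the following problem is solved:

$$\min_{\mathbf{w},\, b,\, \boldsymbol{\xi}} \; J(\mathbf{w}, \boldsymbol{\xi}) = \frac{1}{2}\mathbf{w}^{T}\mathbf{w} + \frac{\gamma}{2}\sum_{i=1}^{N}\xi_i^{2} \quad \text{s.t.} \quad y_i\left[\mathbf{w}^{T}\varphi(\mathbf{x}_i) + b\right] = 1 - \xi_i, \; i = 1, \dots, N \tag{3}$$

where $\gamma$ is the regularization parameter that sets the penalty on training errors, $\frac{1}{2}\mathbf{w}^{T}\mathbf{w}$ controls the complexity of the model, and the last term, $\frac{\gamma}{2}\sum_{i}\xi_i^{2}$, is the empirical error on the training set; the slack variables $\xi_i$ are introduced because the instances of the two classes may not be separable. The Lagrangian of Equation (3), expressed through the kernel function $K(\mathbf{x}_i, \mathbf{x}_j) = \varphi(\mathbf{x}_i)^{T}\varphi(\mathbf{x}_j)$, leads to a system of linear equations. Solving it by the least-squares method gives the Lagrange multipliers $\alpha_i$ and the nonlinear prediction model

$$y(\mathbf{x}) = \operatorname{sign}\left[\sum_{i=1}^{N}\alpha_i y_i K(\mathbf{x}, \mathbf{x}_i) + b\right] \tag{4}$$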
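A minimal NumPy sketch of a binary LSSVM, assuming an RBF kernel (the kernel used by the authors is not stated in this section). Training solves the standard LS-SVM dual system $\begin{bmatrix}0 & \mathbf{y}^{T}\\ \mathbf{y} & \Omega + I/\gamma\end{bmatrix}\begin{bmatrix}b\\ \boldsymbol{\alpha}\end{bmatrix} = \begin{bmatrix}0\\ \mathbf{1}\end{bmatrix}$ with $\Omega_{ij} = y_i y_j K(\mathbf{x}_i, \mathbf{x}_j)$, and prediction applies Equation (4). The function names and the values of $\gamma$ and $\sigma$ are illustrative.

```python
import numpy as np


def rbf_kernel(A, B, sigma=1.0):
    """Gaussian kernel matrix K[i, j] = exp(-||a_i - b_j||^2 / (2 sigma^2))."""
    sq = np.sum(A**2, axis=1)[:, None] + np.sum(B**2, axis=1)[None, :] - 2.0 * A @ B.T
    return np.exp(-np.maximum(sq, 0.0) / (2.0 * sigma**2))


def lssvm_fit(X, y, gamma=10.0, kernel=rbf_kernel):
    """Solve [[0, y^T], [y, Omega + I/gamma]] [b; alpha] = [0; 1], Omega_ij = y_i y_j K_ij."""
    n = len(y)
    A = np.zeros((n + 1, n + 1))
    A[0, 1:] = y
    A[1:, 0] = y
    A[1:, 1:] = np.outer(y, y) * kernel(X, X) + np.eye(n) / gamma
    sol = np.linalg.solve(A, np.r_[0.0, np.ones(n)])
    return sol[1:], sol[0]  # alpha, b


def lssvm_predict(X_train, y_train, alpha, b, X_new, kernel=rbf_kernel):
    """Equation (4): sign(sum_i alpha_i y_i K(x, x_i) + b)."""
    return np.sign(kernel(X_new, X_train) @ (alpha * y_train) + b)


rng = np.random.default_rng(0)
X = np.vstack([rng.normal(-2.0, 0.5, (20, 2)), rng.normal(2.0, 0.5, (20, 2))])
y = np.r_[-np.ones(20), np.ones(20)]
alpha, b = lssvm_fit(X, y)
pred = lssvm_predict(X, y, alpha, b, np.array([[-2.0, -2.0], [2.0, 2.0]]))  # -> [-1., 1.]
```

Training reduces to one dense linear solve, which is the source of the faster training mentioned above, at the cost of an $(N+1) \times (N+1)$ system.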

According to Equations (2) to (4), M-TRFE judges whether a feature is salient by checking the classification margin and the loss of margin when the $k$th feature is eliminated, i.e.,

$$\Delta J(k) = \frac{1}{2}\left|\,\lVert\mathbf{w}\rVert^{2} - \lVert\mathbf{w}^{(-k)}\rVert^{2}\right| \tag{5}$$

In Equation (5), $\mathbf{w}^{(-k)}$ is the weight vector of the classification hyperplane with the $k$th feature eliminated. The feature whose elimination leads to the largest $\Delta J(k)$ is considered the most influential one.
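In the LS-SVM solution the weight vector is $\mathbf{w} = \sum_i \alpha_i y_i \varphi(\mathbf{x}_i)$, so $\lVert\mathbf{w}\rVert^2 = \sum_{i,j}\alpha_i\alpha_j y_i y_j K(\mathbf{x}_i, \mathbf{x}_j)$ and Equation (5) can be evaluated from kernel matrices alone. The sketch below assumes, as in classic SVM-RFE, that the multipliers $\alpha_i$ are held fixed when feature $k$ is removed; whether M-TRFE retrains instead is not stated here. The `alpha` values in the usage are random stand-ins, not a trained model. For a linear kernel the loss reduces to $\frac{1}{2}w_k^2$.

```python
import numpy as np


def linear_kernel(A, B):
    return A @ B.T


def margin_loss(X, y, alpha, k, kernel=linear_kernel):
    """Equation (5) with alpha held fixed (SVM-RFE style approximation)."""
    ay = alpha * y
    full = ay @ kernel(X, X) @ ay                     # ||w||^2
    X_minus_k = np.delete(X, k, axis=1)               # drop the k-th feature
    reduced = ay @ kernel(X_minus_k, X_minus_k) @ ay  # ||w^(-k)||^2
    return 0.5 * abs(full - reduced)


rng = np.random.default_rng(1)
X = rng.standard_normal((10, 4))
y = np.where(rng.random(10) > 0.5, 1.0, -1.0)
alpha = rng.random(10)  # stand-in multipliers, for illustration only
losses = np.array([margin_loss(X, y, alpha, k) for k in range(X.shape[1])])
most_influential = int(np.argmax(losses))  # feature whose removal costs the most margin
```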

The goal of M-TRFE is to determine a set of the best indicators across a group of participants. Because a binary LSSVM cannot perform the four-class classification task directly, a one-versus-one (OvO) ensemble of classifiers is used: each pair of emotion classes is handled by its own M-TRFE-LSSVM model, which gives 4 × 3 / 2 = 6 pairwise models for the four emotions. The details are as follows.

Given a multiclass sample set $\{(\mathbf{x}_i, y_i)\}_{i=1}^{N}$, initialize the feature set $S = \{1, 2, \dots, L\}$, the empty feature-ranking set $R$, and the feature-ranking vector. The training samples of every two classes are combined as a pair, eventually generating one new binary training set per class pair, on which the pairwise classifiers are built.

In the first step, each new training set is used to train an LSSVM model and compute its weight vector $\mathbf{w}$. Then, the sorting criterion score of every feature remaining in $S$ is calculated. The feature with the lowest score is recorded in the feature-ranking set $R$ and deleted from $S$. This process repeats until $S$ is empty.
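The OvO pairing and the recursive elimination loop can be sketched together. This is a hedged sketch under stated assumptions: each pairwise model is a linear LSSVM, the sorting criterion of a feature is taken to be its Equation (5) margin loss $\frac{1}{2}w_k^2$ summed over the six pairwise models, and $R$ is kept best-first. The section does not give the exact criterion, so `rfe_rank` and `ovo_pairs` are hypothetical names, not the authors' implementation. OvO voting at prediction time is not shown.

```python
import itertools
import numpy as np


def lssvm_linear(X, y, gamma=1.0):
    """Binary linear LS-SVM (labels +/-1) solved in the dual; returns (w, b)."""
    n = len(y)
    A = np.zeros((n + 1, n + 1))
    A[0, 1:] = y
    A[1:, 0] = y
    A[1:, 1:] = np.outer(y, y) * (X @ X.T) + np.eye(n) / gamma
    sol = np.linalg.solve(A, np.r_[0.0, np.ones(n)])
    alpha, b = sol[1:], sol[0]
    return X.T @ (alpha * y), b  # w = sum_i alpha_i y_i x_i


def ovo_pairs(X, labels):
    """One binary training set per class pair: c(c-1)/2 sets for c classes."""
    pairs = []
    for c1, c2 in itertools.combinations(np.unique(labels), 2):
        mask = (labels == c1) | (labels == c2)
        pairs.append((X[mask], np.where(labels[mask] == c1, 1.0, -1.0)))  # c1 -> +1, c2 -> -1
    return pairs


def rfe_rank(pairs, n_features, gamma=1.0):
    """Rank features best-first by recursive elimination over all pairwise models."""
    S = list(range(n_features))  # surviving feature set
    R = []                       # feature-ranking set, best feature first
    while S:
        scores = np.zeros(len(S))
        for Xp, yp in pairs:
            w, _ = lssvm_linear(Xp[:, S], yp, gamma)
            scores += 0.5 * w**2      # Equation (5) margin loss for a linear kernel
        p = S[int(np.argmin(scores))]  # least influential surviving feature
        R.insert(0, p)                 # features eliminated earlier end up later in R
        S.remove(p)
    return R


rng = np.random.default_rng(0)
labels = np.repeat(np.arange(4), 30)  # four toy emotion classes
X = rng.standard_normal((120, 6))
X[:, 1] += 3.0 * (labels % 2)         # feature 1 separates classes {0, 2} from {1, 3}
X[:, 4] += 3.0 * (labels // 2)        # feature 4 separates classes {0, 1} from {2, 3}
pairs = ovo_pairs(X, labels)
R = rfe_rank(pairs, n_features=6)
print(len(pairs), sorted(R[:2]))  # 6 [1, 4]
```

Summing the scores across pairs lets a feature that is decisive for only some emotion pairs still survive; a max over pairs would be an equally plausible alternative.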

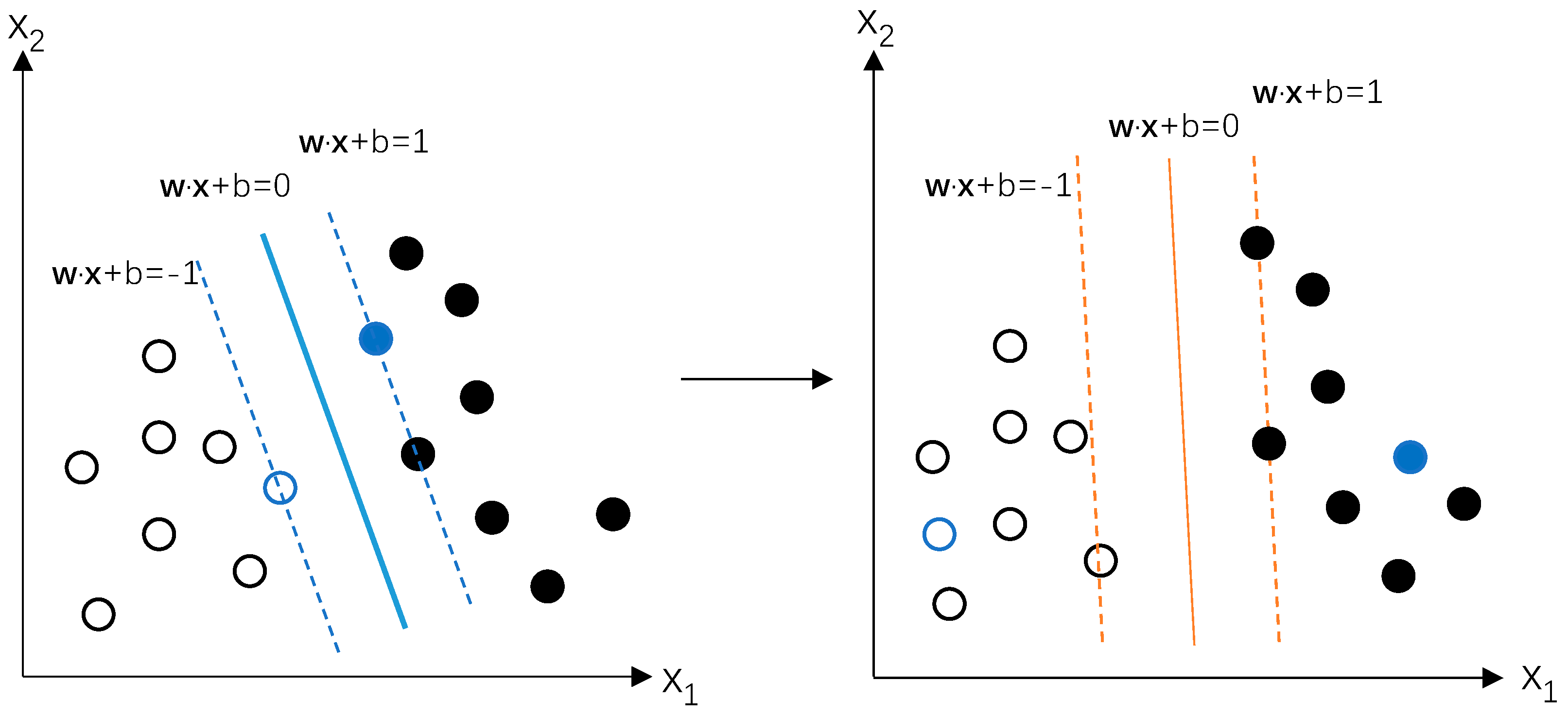

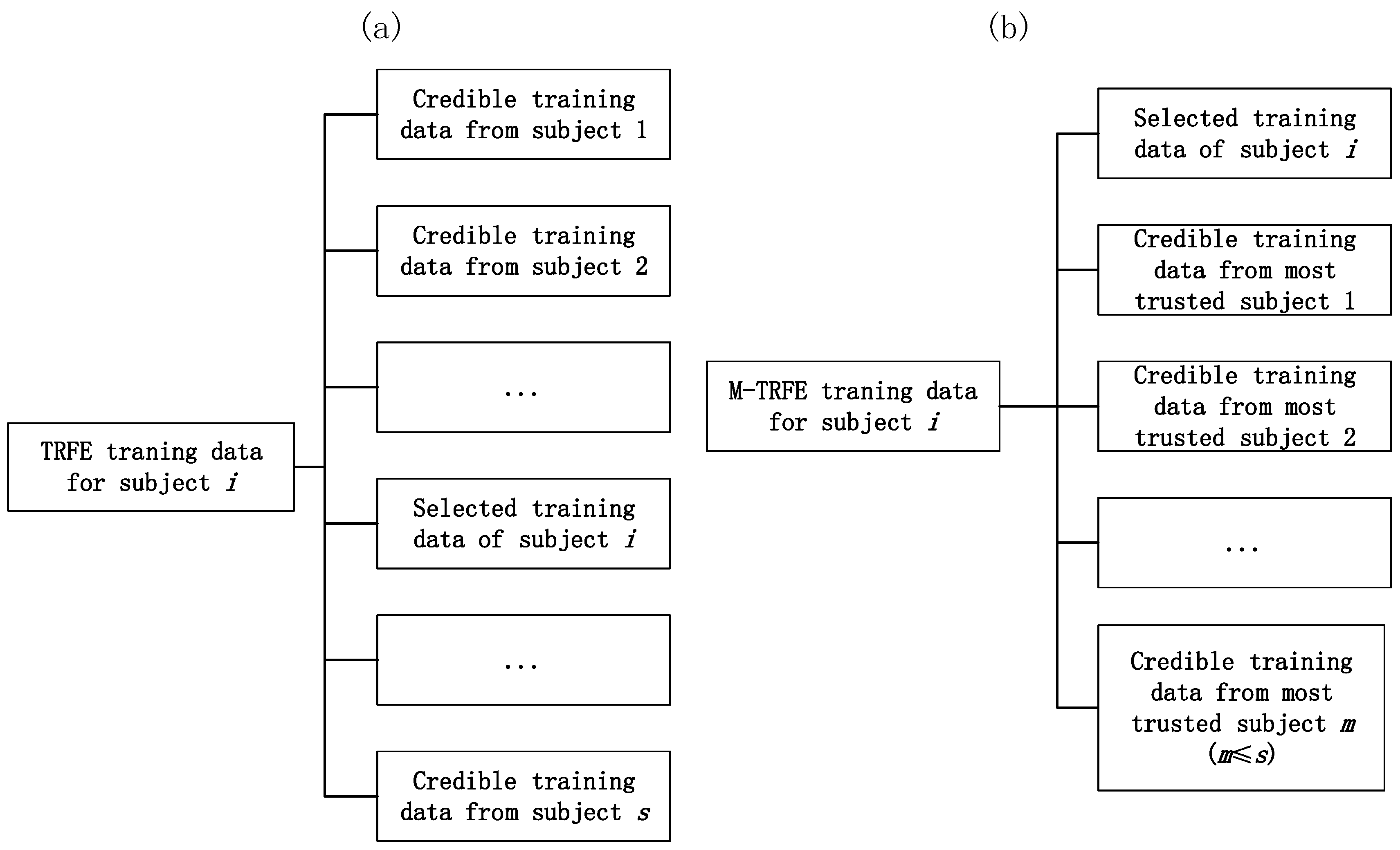

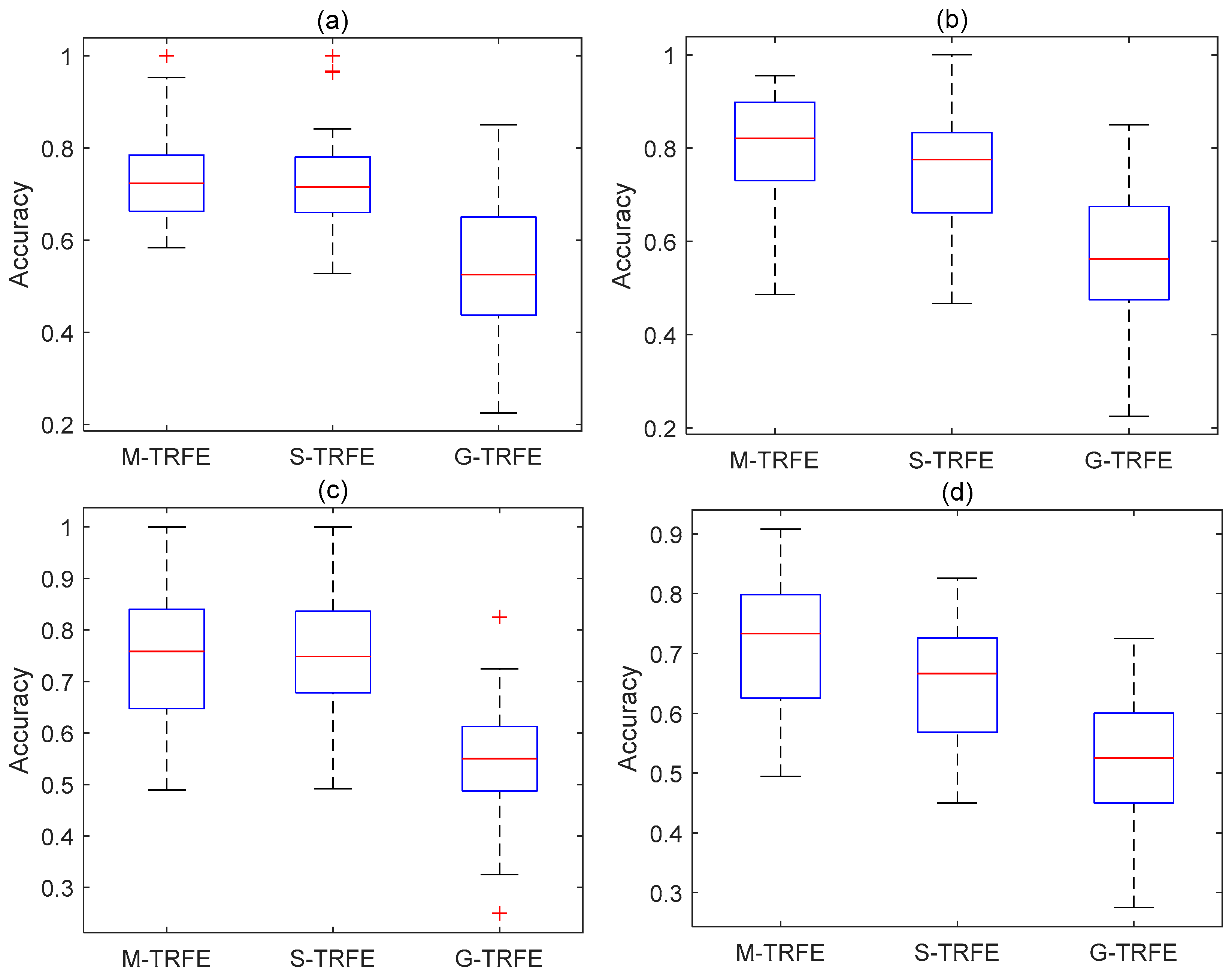

In M-TRFE, the credible training data contain the best feature instances from other subjects, while some of the worst-performing features are eliminated from the original training set. M-TRFE also ranks the subjects so that only a few of them take part in building this set. Figure 2 illustrates how the training set changes under the TRFE concept, and Figure 3a shows the construction of the novel M-TRFE training set.
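The construction of the transferring training set can be sketched as follows. The trust criterion (cross-subject accuracy) comes from the text; the hard top-`n_trusted` cutoff, the dictionary layout, and all names are assumptions for illustration. The method described here lets more trusted subjects contribute more, which a fixed cutoff only approximates.

```python
import numpy as np


def build_transfer_set(subject_data, cross_acc, target, n_trusted, keep_features):
    """Stack the data of the n_trusted most trusted subjects other than `target`.

    subject_data: dict subject -> (X, y); cross_acc: dict subject -> cross-subject accuracy.
    keep_features: indices of the features that survived elimination.
    """
    others = sorted((s for s in subject_data if s != target),
                    key=lambda s: cross_acc[s], reverse=True)
    trusted = others[:n_trusted]  # the least trusted subjects stop contributing
    X = np.vstack([subject_data[s][0][:, keep_features] for s in trusted])
    y = np.concatenate([subject_data[s][1] for s in trusted])
    return X, y, trusted


rng = np.random.default_rng(0)
data = {s: (rng.standard_normal((3, 5)), np.array([1, -1, 1])) for s in range(4)}
acc = {0: 0.60, 1: 0.80, 2: 0.70, 3: 0.90}
X_t, y_t, trusted = build_transfer_set(data, acc, target=3, n_trusted=2, keep_features=[0, 2])
print(trusted, X_t.shape)  # [1, 2] (6, 2)
```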

Notably for the use of multiclass M-TRFE, for the training set

with the multiclass label

, several separate binary classifiers robustly analyze each emotion and encode the label into binary values

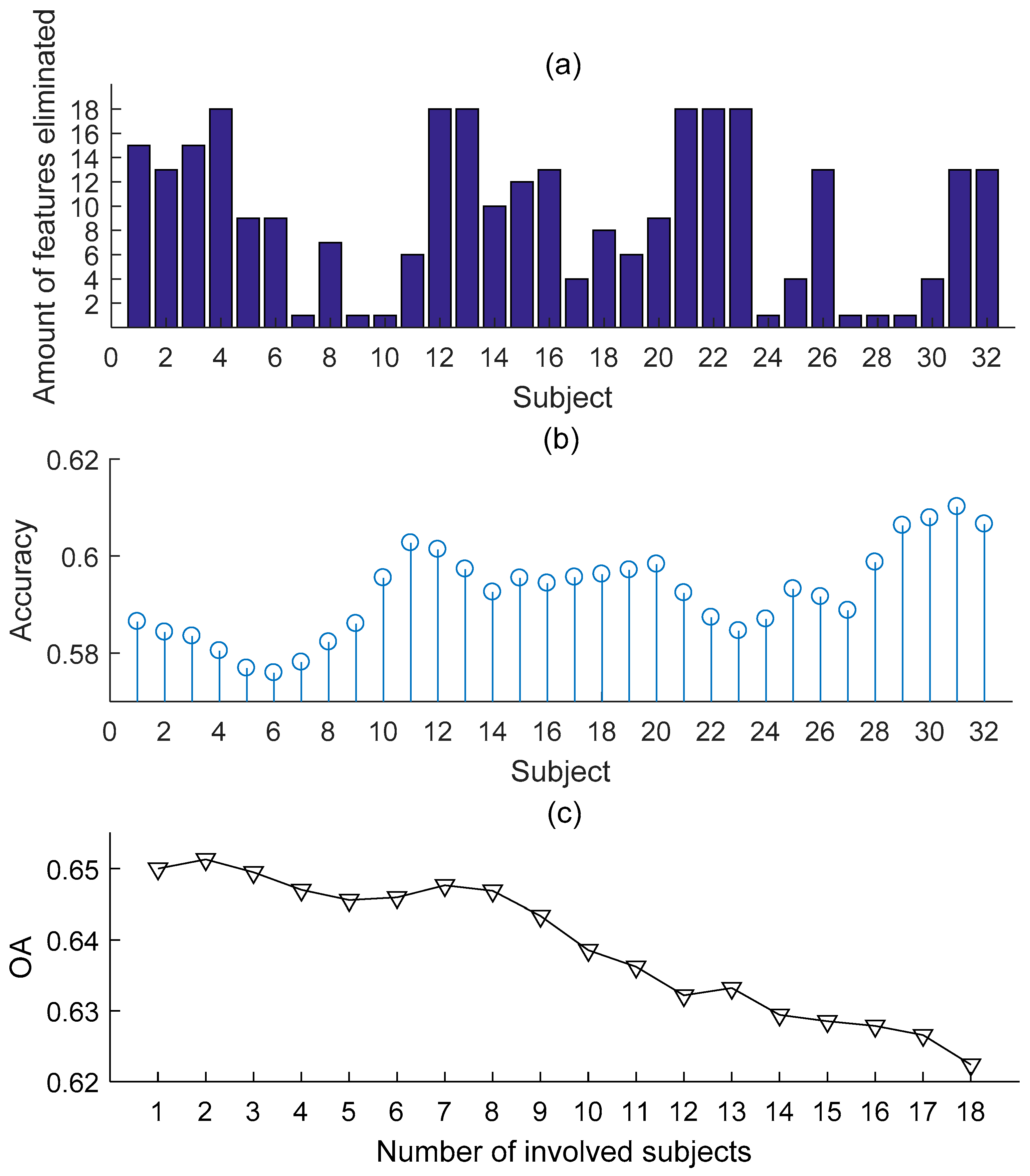

. This would give each emotion a feature ranking and subject selection. To fulfill the multiclass subject-generic emotion feature selection, a mutual feature-ranking list is generated based on four separate rankings of joy, peace, anger and depression, to detect the best features and most trusted subjects for each emotion. We consider one subject is more trusted if he achieves better results in cross-subject classification accuracy. The more one subject is trusted, the more he contributes to the transferring training set. Meanwhile, the least trusted ones stop contributing the set as is shown in

Figure 3b. The weighted score of each feature can be averaged from all ranking lists.

In Equation (8), the worst feature in a ranking list receives the ranking index (r-value) 0, the second-worst feature receives 1, and so on. By choosing the 10 least trusted features, the rankings for the different emotions are obtained. The averaged value therefore evaluates how favorable a feature is. Moreover, instead of separate feature-ranking arrays, a single mutual array is calculated in the proposed multiclass M-TRFE model.
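The step from four per-emotion rankings to one mutual ranking can be sketched as below. Two assumptions are made: the binary encoding is one-vs-rest with labels +1/−1 (the exact encoding is given only in the image above), and the mutual score is the plain average of r-values across emotions. The function names are hypothetical.

```python
import numpy as np


def one_vs_rest(labels, emotion):
    """Binary encoding for one emotion: +1 for that emotion, -1 for the rest (assumed)."""
    return np.where(np.asarray(labels) == emotion, 1, -1)


def r_values(ranking):
    """ranking lists feature indices best-first; the worst feature gets r = 0, the next r = 1, ..."""
    n = len(ranking)
    r = np.empty(n, dtype=int)
    for position, feature in enumerate(ranking):
        r[feature] = n - 1 - position
    return r


def mutual_ranking(rankings):
    """Average r-values over the per-emotion rankings; return best-first order and scores."""
    score = np.mean([r_values(rk) for rk in rankings], axis=0)
    return np.argsort(-score, kind="stable").tolist(), score


per_emotion = {  # toy best-first rankings of three features
    "joy": [0, 1, 2], "peace": [0, 2, 1], "anger": [1, 0, 2], "depression": [0, 1, 2],
}
order, score = mutual_ranking(per_emotion.values())
print(order, score.tolist())  # [0, 1, 2] [1.75, 1.0, 0.25]
```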

The workflow of M-TRFE is as follows. For a given high emotion state $V_H$ and low emotion state $V_L$ (in either the V-A or the multiclass setting), the corresponding state centers are computed. For each feature of each subject, the Euclidean distance between the original EEG feature set and the novel transferring set is then defined, and the H-value is calculated from it (Equation (10)). In Equation (10), $|O_i|$ is the cardinality of $O_i$, the newly extended space. H < 0 indicates that the feature value is far from $V_H$, so the feature is eliminated from the high class. The details of M-TRFE are given as pseudo code in Table 2 and Table 3.

Several details of Table 2 need explanation. In line 2, s stands for the number of subjects who took part in the trials, while f in line 4 sets the number of folds when f-fold cross-validation is applied. In this study, s = 32 and f = 10. The variable j1 in line 9 starts the subject ranking, and the corresponding record stores the cross-subject performance of subject i.

In Table 3, the high state of emotion is taken as an example; the low state follows the same pseudo code. Several parameters need explanation. L = 137 in line 10 is the dimensionality of the feature set. Since 137 is a prime number, the only step length that divides L evenly (apart from L itself) is 1, so exactly one feature is eliminated per iteration. In line 15, $d_H$ (and $d_L$) quantifies the distance between the original set and the transferring set for the high (and low) class, and the distance difference is treated as equally influential as the LSSVM margin loss, i.e., the two terms are given equal weight. An auxiliary function is also used in the pseudo code to simplify the representation of the algorithm:

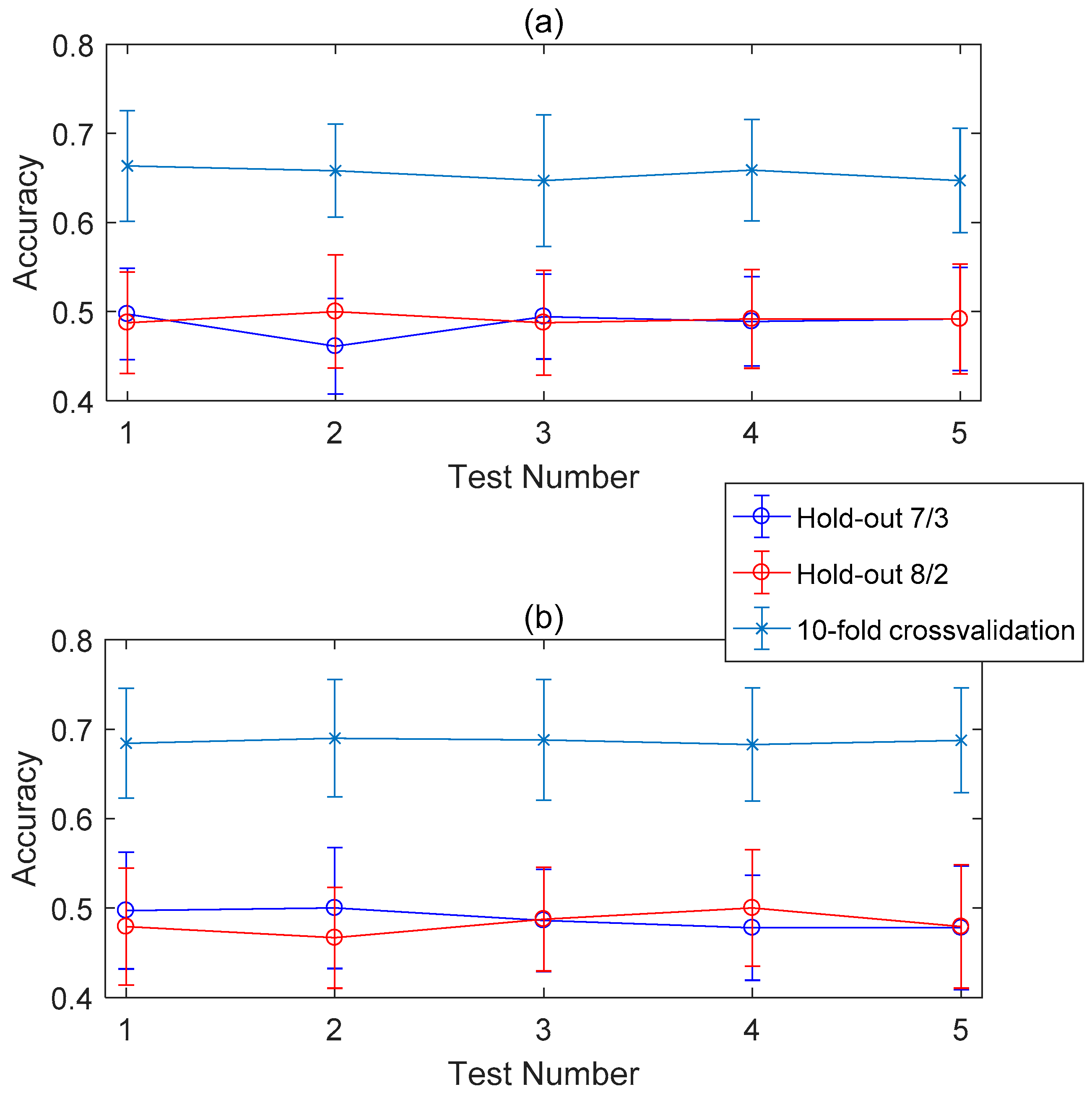

To evaluate the classification performance of the proposed feature-selection model, three assessment metrics are used: accuracy, F1 score, and the kappa value.
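The three metrics can be computed directly from the label vectors. A minimal sketch, assuming macro-averaged F1 (the averaging scheme is not stated) and Cohen's kappa for the kappa value:

```python
import numpy as np


def accuracy(y_true, y_pred):
    return float(np.mean(np.asarray(y_true) == np.asarray(y_pred)))


def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 = 2TP / (2TP + FP + FN)."""
    y_true, y_pred = np.asarray(y_true), np.asarray(y_pred)
    scores = []
    for c in np.unique(np.concatenate([y_true, y_pred])):
        tp = np.sum((y_pred == c) & (y_true == c))
        fp = np.sum((y_pred == c) & (y_true != c))
        fn = np.sum((y_pred != c) & (y_true == c))
        scores.append(2 * tp / (2 * tp + fp + fn))
    return float(np.mean(scores))


def cohen_kappa(y_true, y_pred):
    """(p_o - p_e) / (1 - p_e), where p_e is the chance agreement from the label marginals."""
    y_true, y_pred = np.asarray(y_true), np.asarray(y_pred)
    classes = np.unique(np.concatenate([y_true, y_pred]))
    p_o = np.mean(y_true == y_pred)
    p_e = sum(np.mean(y_true == c) * np.mean(y_pred == c) for c in classes)
    return float((p_o - p_e) / (1 - p_e))


y_true, y_pred = [0, 0, 1, 1], [0, 1, 1, 1]
print(accuracy(y_true, y_pred), round(macro_f1(y_true, y_pred), 4), cohen_kappa(y_true, y_pred))
# 0.75 0.7333 0.5
```

Kappa discounts the agreement expected by chance, which matters when the four emotion classes are unbalanced after thresholding.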