Assessing the Efficacy of the SWAT Auto-Irrigation Function to Simulate Irrigation, Evapotranspiration, and Crop Response to Management Strategies of the Texas High Plains

Abstract

1. Introduction

2. Materials and Methods

2.1. Study Area

2.2. Climate Data Collection and Analysis

2.3. Lysimeter, LAI, and Crop Yield Data Collection and Analysis

2.4. SWAT Single HRU Setup and Calibration

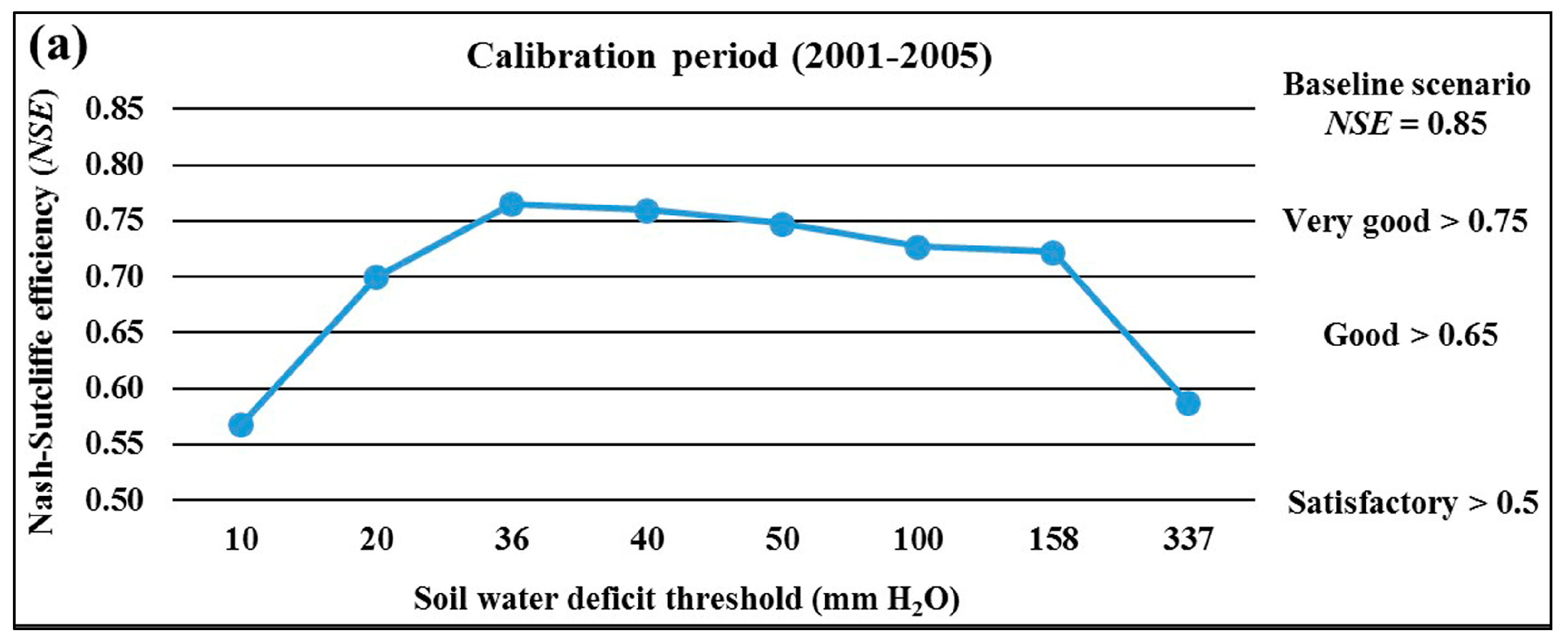

2.5. Auto-irrigation Scenario Design and Assessment

3. Results and Discussion

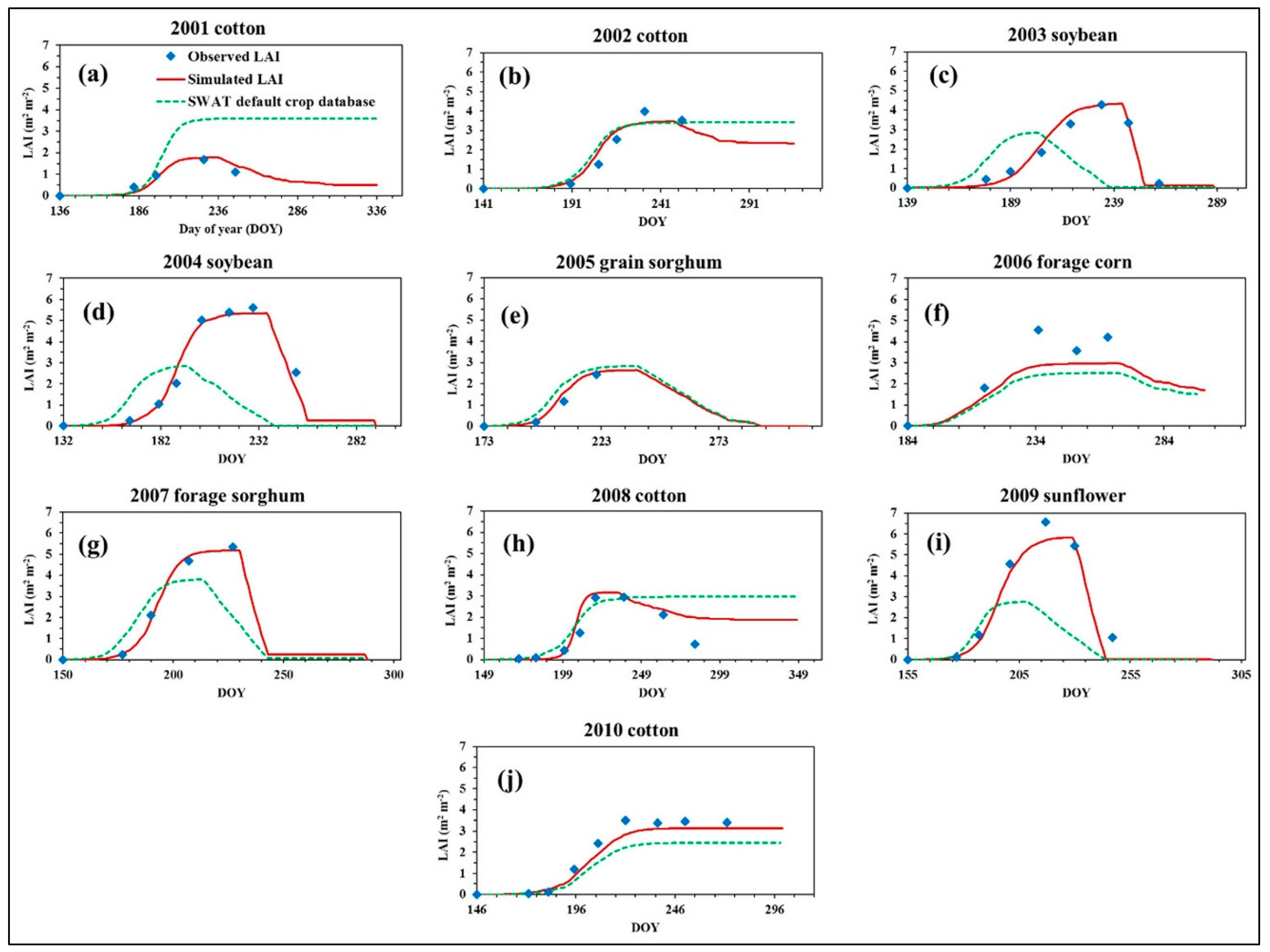

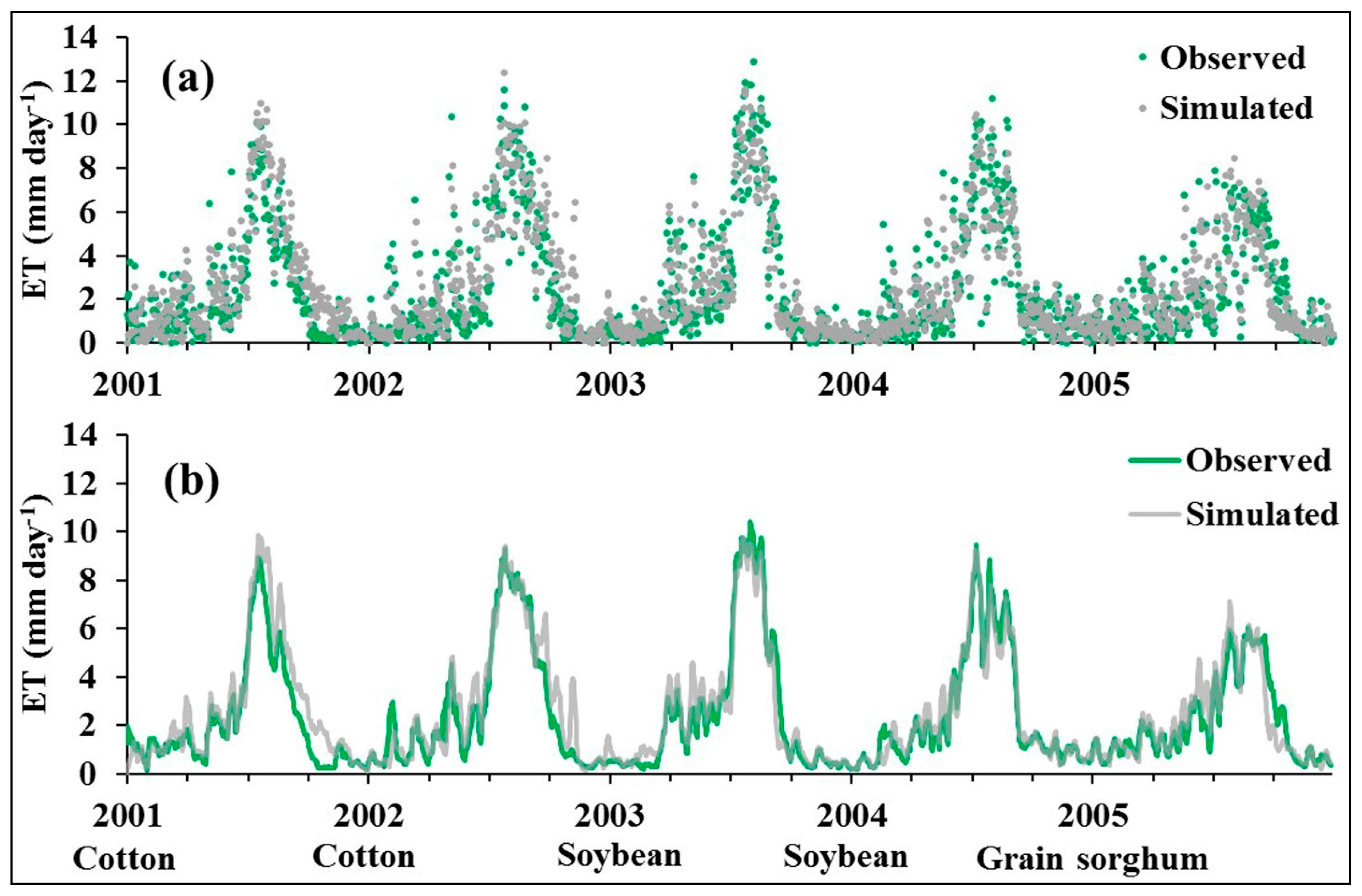

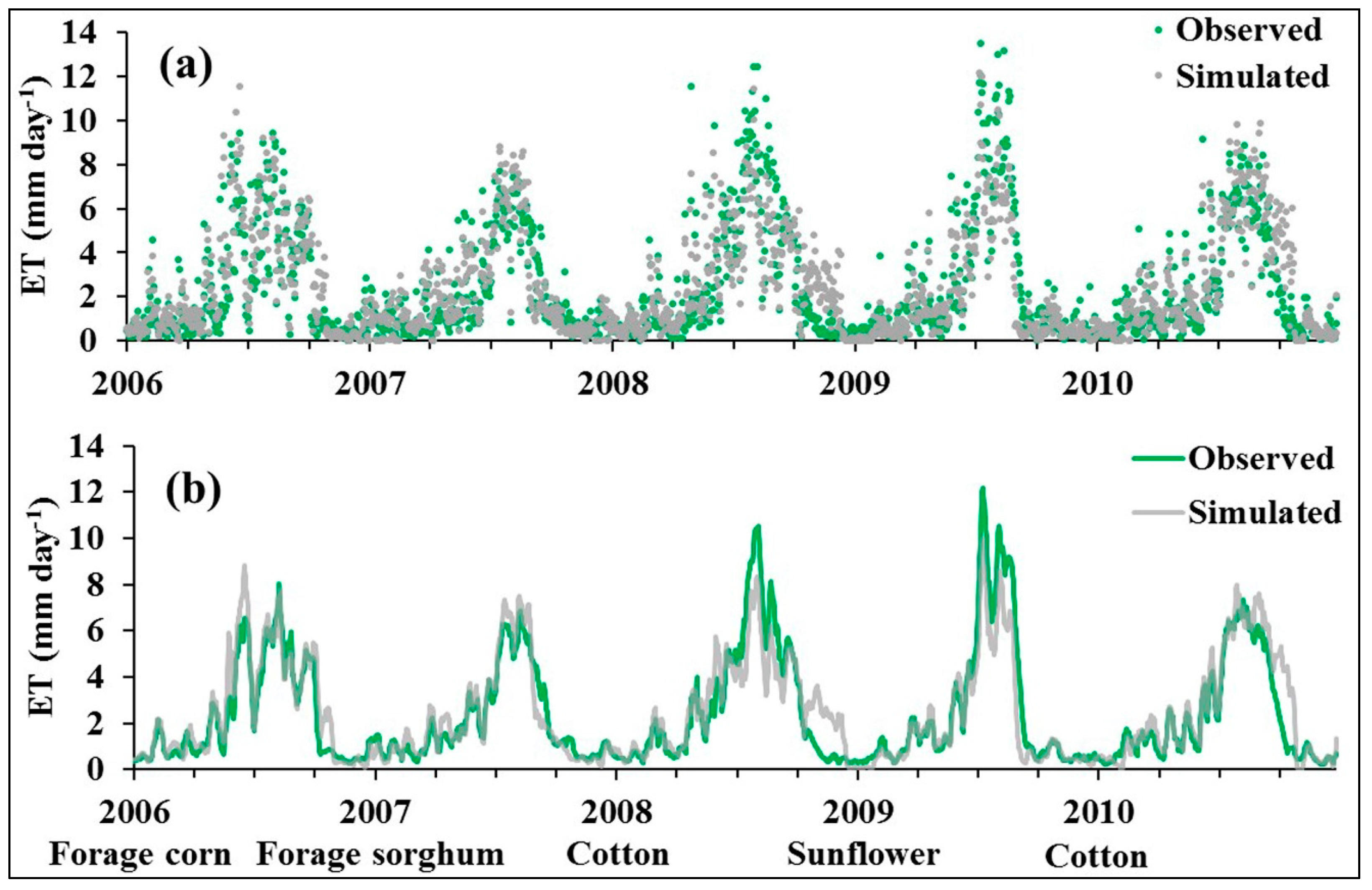

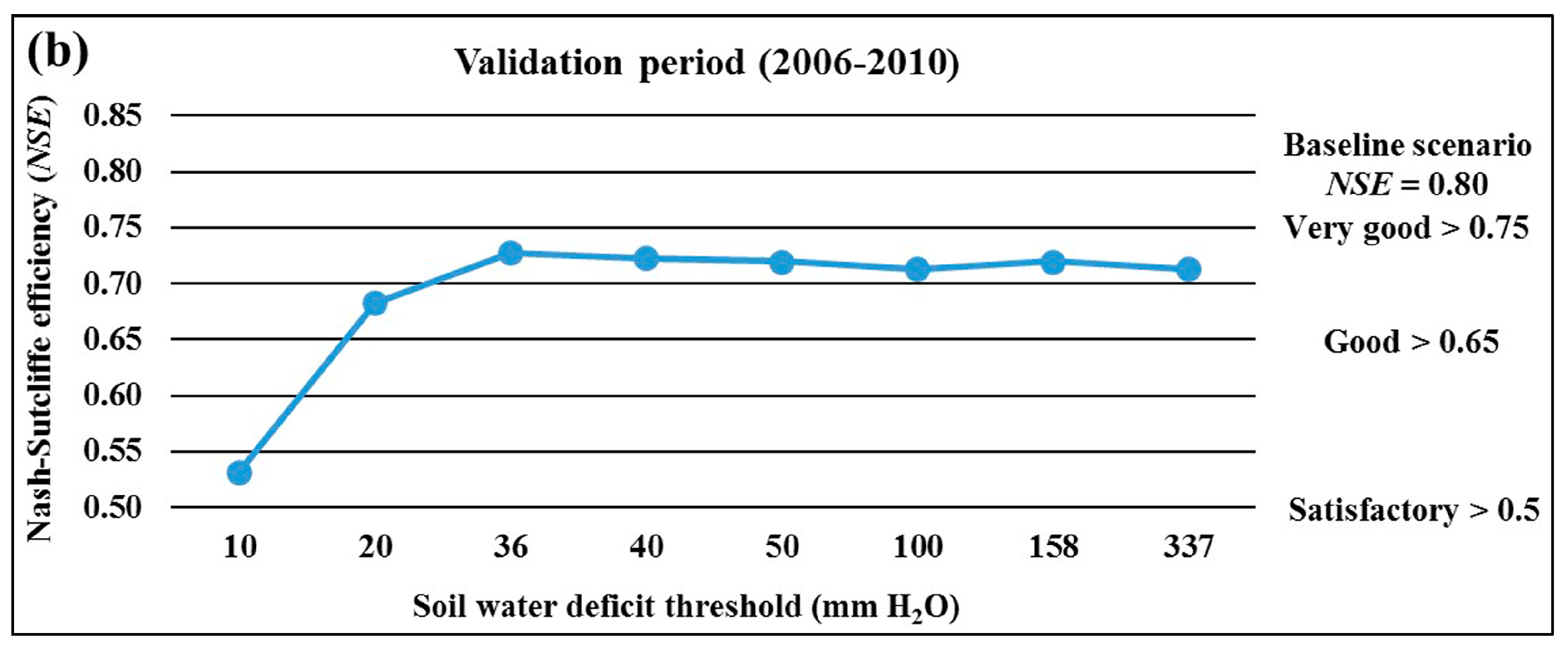

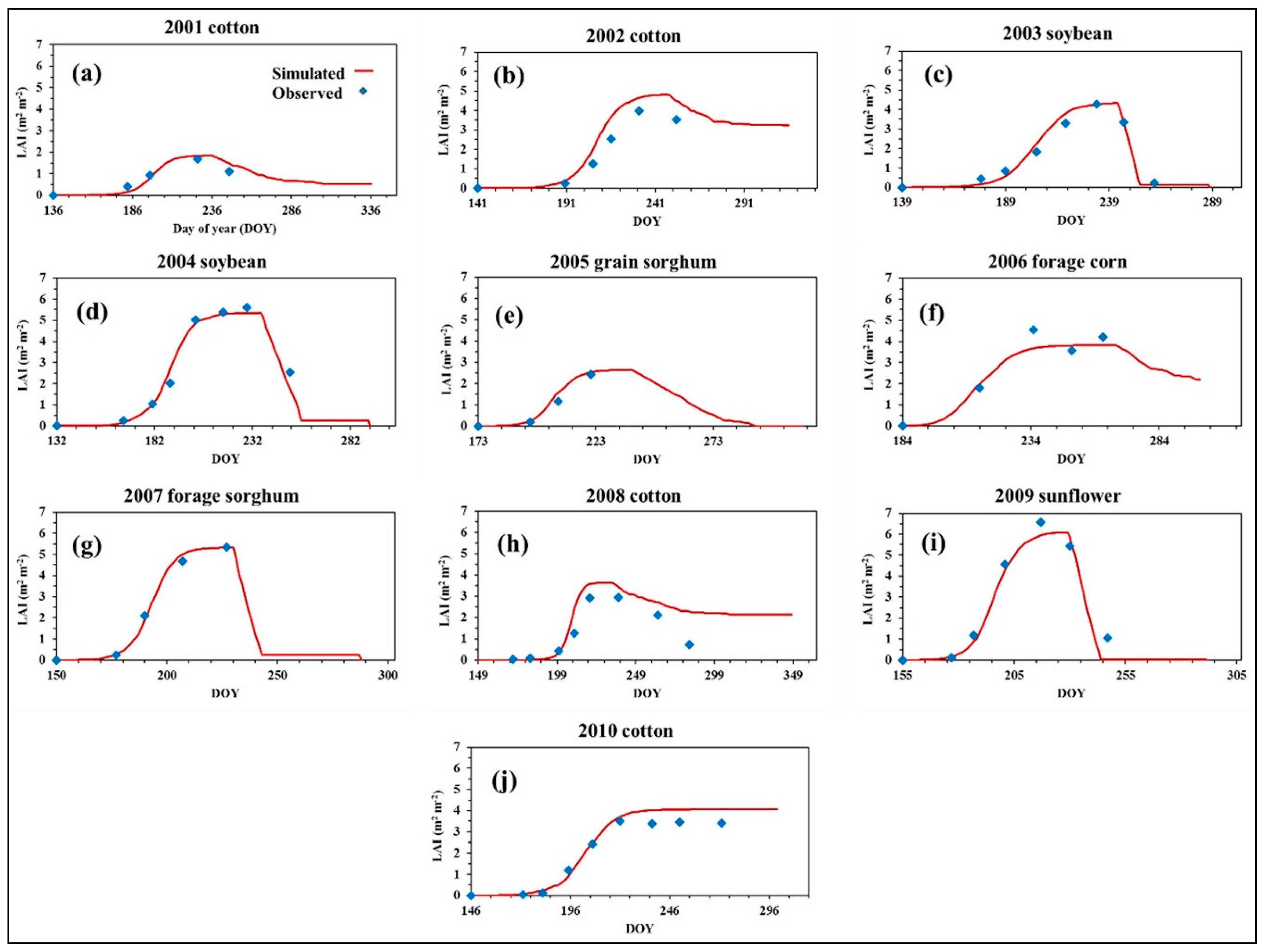

3.1. Evaluation of the SWAT Single-HRU Method for LAI and ET Simulations

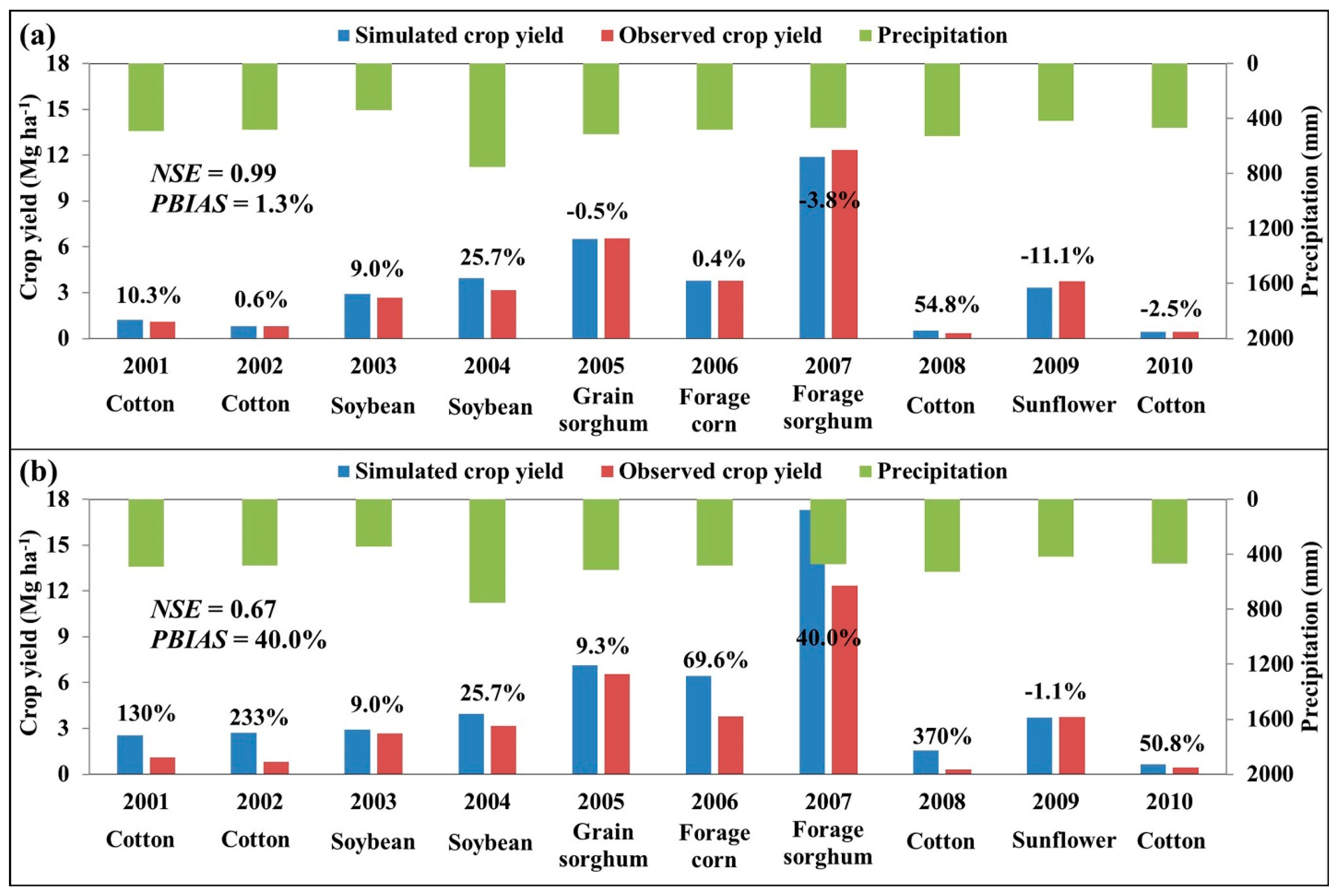

3.2. Comparison of SWAT-Simulated Crop Yields with Field Observations

3.3. Simulated ET, Crop Response, and Irrigation Scheduling under SWAT Auto-Irrigation Scenarios

4. Conclusions

Acknowledgments

Author Contributions

Conflicts of Interest

References

1. Arnold, J.G.; Srinivasan, R.; Muttiah, R.S.; Williams, J.R. Large-area hydrologic modeling and assessment: Part I. Model development. J. Am. Water Resour. Assoc. 1998, 34, 73–89.

2. Williams, J.R. The EPIC model. In Computer Models of Watershed Hydrology; Singh, V.P., Ed.; Water Resources Publications: Highlands Ranch, CO, USA, 1995; pp. 909–1000.

3. Refsgaard, J.C.; Storm, B. MIKE SHE. In Computer Models of Watershed Hydrology; Singh, V.P., Ed.; Water Resources Publications: Highlands Ranch, CO, USA, 1995; pp. 809–846.

4. Wellen, C.; Kamran-Disfani, A.; Arhonditsis, G.B. Evaluation of the current state of distributed watershed nutrient water quality modeling. Environ. Sci. Technol. 2015, 49, 3278–3290.

5. Marek, G.W.; Gowda, P.H.; Evett, S.R.; Baumhardt, R.L.; Brauer, D.K.; Howell, T.A.; Marek, T.H.; Srinivasan, R. Calibration and validation of the SWAT model for predicting daily ET over irrigated crops in the Texas High Plains using lysimetric data. Trans. ASABE 2016, 59, 611–622.

6. Marek, G.W.; Gowda, P.H.; Evett, S.R.; Baumhardt, R.L.; Brauer, D.K.; Howell, T.A.; Marek, T.H.; Srinivasan, R. Estimating evapotranspiration for dryland cropping systems in the semiarid Texas High Plains using SWAT. J. Am. Water Resour. Assoc. 2016, 52, 298–314.

7. Jung, C.G.; Lee, D.R.; Moon, J.W. Comparison of the Penman-Monteith method and regional calibration of the Hargreaves equation for actual evapotranspiration using SWAT-simulated results in the Seolma-cheon basin, South Korea. Hydrol. Sci. J. 2016, 61, 793–800.

8. Beven, K. A manifesto for the equifinality thesis. J. Hydrol. 2006, 320, 18–36.

9. Kirchner, J.W. Getting the right answers for the right reasons: Linking measurements, analyses, and models to advance the science of hydrology. Water Resour. Res. 2006, 42, W03S04.

10. SWAT | Soil and Water Assessment Tool - Texas A&M University. Available online: http://swat.tamu.edu/ (accessed on 9 July 2017).

11. Neitsch, S.L.; Arnold, J.G.; Kiniry, J.R.; Williams, J.R. Soil and Water Assessment Tool Theoretical Documentation Version 2009; Texas Water Resources Institute: College Station, TX, USA, 2011.

12. Akhavan, S.; Abedi-Koupai, J.; Mousavi, S.F.; Afyuni, M.; Eslamian, S.S.; Abbaspour, K.C. Application of SWAT model to investigate nitrate leaching in Hamadan-Bahar Watershed, Iran. Agric. Ecosyst. Environ. 2010, 139, 675–688.

13. Dechmi, F.; Burguete, J.; Skhiri, A. SWAT application in intensive irrigation systems: Model modification, calibration and validation. J. Hydrol. 2012, 470–471, 227–238.

14. Chen, Y.; Ale, S.; Rajan, N.; Munster, C. Assessing the hydrologic and water quality impacts of biofuel-induced changes in land use and management. Glob. Chang. Biol. Bioenergy 2017.

15. Marek, G.W.; Gowda, P.H.; Marek, T.H.; Porter, D.O.; Baumhardt, R.L.; Brauer, D.K. Modeling long-term water use of irrigated cropping rotations in the Texas High Plains using SWAT. Irrig. Sci. 2017, 35, 111–123.

16. Githui, F.; Thayalakumaran, T.; Selle, B. Estimating irrigation inputs for distributed hydrological modelling: A case study from an irrigated catchment in southeast Australia. Hydrol. Process. 2016, 30, 1824–1835.

17. Xie, X.; Cui, Y. Development and test of SWAT for modeling hydrological processes in irrigation districts with paddy rice. J. Hydrol. 2011, 396, 61–71.

18. Unger, P.W.; Pringle, F.B. Pullman Soils: Distribution, Importance, Variability, and Management; Bulletin B-1372; Texas Agricultural Experiment Station: College Station, TX, USA, 1981.

19. American Society of Civil Engineers (ASCE). The ASCE Standardized Reference Evapotranspiration Equation; ASCE Environmental and Water Resources Institute: Reston, VA, USA, 2005.

20. Marek, T.H.; Porter, D.O.; Howell, T.A. The Texas High Plains Evapotranspiration Network: An Irrigation Scheduling Technology Transfer Tool; Technical Report for Contract No. 2004-358-008; Texas Water Development Board: Austin, TX, USA, 2005.

21. Marek, T.H.; Schneider, A.D.; Howell, T.A.; Ebeling, L.L. Design and construction of large weighing monolithic lysimeters. Trans. ASAE 1988, 31, 477–484.

22. Howell, T.A.; Schneider, A.D.; Dusek, D.A.; Marek, T.H.; Steiner, J.L. Calibration and scale performance of Bushland weighing lysimeters. Trans. ASAE 1995, 38, 1019–1024.

23. Evett, S.R.; Schwartz, R.C.; Howell, T.A.; Baumhardt, R.L.; Copeland, K.S. Can weighing lysimeter ET represent surrounding field ET well enough to test flux station measurements of daily and sub-daily ET? Adv. Water Resour. 2012, 50, 79–90.

24. Marek, G.W.; Evett, S.R.; Gowda, P.H.; Howell, T.A.; Copeland, K.S.; Baumhardt, R.L. Post-processing techniques for reducing errors in weighing lysimeter evapotranspiration (ET) datasets. Trans. ASABE 2014, 57, 499–515.

25. Srinivasan, R.; Zhang, X.; Arnold, J.G. SWAT ungauged: Hydrological budget and crop yield predictions in the upper Mississippi river basin. Trans. ASABE 2010, 53, 1533–1546.

26. Moloney, C.; Cibin, R.; Chaubey, I. Using a Single HRU SWAT Model to Examine and Improve Representation of Field-Scale Processes. 2015. Available online: http://swat.tamu.edu/conferences/international/2015-purdue/material/ (accessed on 9 July 2017).

27. Cibin, R.; Chaubey, I.; Helmers, M.; Sudheer, K.P.; White, M.; Arnold, J.G. Improved Physical Representation of Vegetative Filter Strip in SWAT. 2015. Available online: http://swat.tamu.edu/conferences/international/2015-purdue/material/ (accessed on 9 July 2017).

28. Sinnathamby, S.; Douglas-Mankin, K.R.; Craige, C. Field-scale calibration of crop-yield parameters in the Soil and Water Assessment Tool (SWAT). Agric. Water Manag. 2017, 180, 61–69.

29. Nash, J.E.; Sutcliffe, J.V. River flow forecasting through conceptual models, Part I: A discussion of principles. J. Hydrol. 1970, 10, 282–290.

30. Yimam, Y.T.; Ochsner, T.E.; Kakani, V.G. Evapotranspiration partitioning and water use efficiency of switchgrass and biomass sorghum managed for biofuel. Agric. Water Manag. 2015, 155, 40–47.

31. Moriasi, D.N.; Arnold, J.G.; Van Liew, M.W.; Bingner, R.L.; Harmel, R.D.; Veith, T. Model evaluation guidelines for systematic quantification of accuracy in watershed simulations. Trans. ASABE 2007, 50, 885–900.

32. Faramarzi, M.; Abbaspour, K.C.; Schulin, R.; Yang, H. Modeling blue and green water resources availability in Iran. Hydrol. Process. 2009, 23, 486–501.

33. Chen, Y.; Ale, S.; Rajan, N.; Morgan, C.L.S.; Park, J.Y. Hydrological responses of land use change from cotton (Gossypium hirsutum L.) to cellulosic bioenergy crops in the Southern High Plains of Texas, USA. Glob. Chang. Biol. Bioenergy 2016, 8, 981–999.

34. Mittelstet, A.R.; Storm, D.E.; Stoecker, A.L. Using SWAT and an empirical relationship to simulate crop yields and salinity levels in the North Fork River Basin. Int. J. Agric. Biol. Eng. 2015, 8, 110–124.

35. Ton, P. Cotton and climate change in west Africa. In The Impact of Climate Change on Drylands; Environment & Policy Series; Springer: Dordrecht, The Netherlands, 2004; Volume 39, pp. 97–115.

| Year | Irrigated Crop | Planting Date | Fertilizer Application (kg ha−1) * | Harvest Date |
|---|---|---|---|---|
| 2000 | Cotton | 16 May | 504.4 | 6 December |
| 2001 | Cotton | 16 May | 326.2 | 3 December |
| 2002 | Cotton | 21 May | 571.6 | 13 November |
| 2003 | Soybean | 19 May | Not applied | 15 October |
| 2004 | Soybean | 12 May | Not applied | 19 October |
| 2005 | Grain sorghum | 22 June | 612.0 | 7 November |
| 2006 | Forage corn | 3 July | 68.4 | 14 December |
| 2007 | Forage sorghum | 30 May | 510.0 | 15 October |
| 2008 | Cotton | 21 May | 408.0 | 15 December |
| 2009 | Sunflower | 4 June | 510.0 | 19 October |
| 2010 | Cotton | 26 May | Not applied | 28 October |

| Soil Information | Layer 1 | Layer 2 | Layer 3 | Layer 4 |
|---|---|---|---|---|
| Depth (mm) | 0–180 | 180–860 | 860–1800 | 1800–2300 |
| Bulk density (g cm−3) | 1.43 | 1.38 | 1.38 | 1.45 |
| Available water capacity (mm H2O per mm soil) | 0.20 | 0.18 | 0.19 | 0.14 |
| Saturated hydraulic conductivity (mm h−1) | 9.72 | 2.16 | 2.16 | 9.72 |
| Clay content (% soil mass) | 33.9 | 42.1 | 39.4 | 39.1 |
| Silt content (% soil mass) | 53.8 | 48.0 | 47.6 | 47.1 |
| Sand content (% soil mass) | 12.3 | 9.9 | 13.0 | 13.8 |
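As a worked illustration of the soil table, the following minimal Python sketch multiplies each layer's thickness by its available water capacity and accumulates the result down the profile. The function name `cumulative_awc` and the tuple layout are illustrative choices, not part of SWAT or of the original study.

```python
# Layer top (mm), layer bottom (mm), and available water capacity
# (mm H2O per mm soil), copied from the soil table above.
layers = [
    (0, 180, 0.20),
    (180, 860, 0.18),
    (860, 1800, 0.19),
    (1800, 2300, 0.14),
]


def cumulative_awc(soil_layers):
    """Return plant-available water (mm) accumulated to the bottom of each layer."""
    totals = []
    running = 0.0
    for top, bottom, awc in soil_layers:
        running += (bottom - top) * awc  # thickness (mm) x AWC (mm/mm)
        totals.append(round(running, 1))
    return totals


cumulative = cumulative_awc(layers)
print(cumulative)
```

Under these inputs the cumulative values come out at about 36, 158, 337, and 407 mm. The first three coincide with column labels used in the auto-irrigation frequency table further down, although the text available here does not state that link explicitly.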

| Parameter | Description | Range | Calibrated Value |
|---|---|---|---|
| ESCO | Soil evaporation compensation factor | 0.0–1.0 | 1.000 |
| EPCO | Plant uptake compensation factor | 0.0–1.0 | 0.900 |
| FFCB | Initial soil water storage | 0.0–1.0 | 0.750 |
| EVPOT | Pothole evaporation coefficient | 0.0–1.0 | 0.5 |
| POT_VOLX | Maximum volume of water stored in the pothole (mm) over the entire HRU | 0–∞ | 50 mm |
| EVLAI | Leaf area index at which no evaporation occurs from the water surface | 0.0–10.0 | 4.0 |

| No. | Parameter | Description | Default Value | Calibrated Value | Source |
|---|---|---|---|---|---|
| 2001 cotton | | | | | |
| 1 | BLAI | Max leaf area index (m2/m2) | 4 | 2 | Measured * |
| 2 | FRGRW2 | Fraction of the plant growing season corresponding to the 2nd point on the optimal leaf area development curve | 0.5 | 0.6 | Estimated # |
| 3 | DLAI | Fraction of the plant growing season when leaf area begins to decline | 0.95 | 0.7 | Estimated |
| 2002 cotton | | | | | |
| 1 | BLAI | Max leaf area index (m2/m2) | 4 | 5 | Measured |
| 2 | FRGRW1 | Fraction of the plant growing season corresponding to the 1st point on the optimal leaf area development curve | 0.15 | 0.2 | Estimated |
| 3 | FRGRW2 | Fraction of the plant growing season corresponding to the 2nd point on the optimal leaf area development curve | 0.5 | 0.6 | Estimated |
| 4 | DLAI | Fraction of the plant growing season when leaf area begins to decline | 0.95 | 0.7 | Estimated |
| 2003 soybean | | | | | |
| 1 | BLAI | Max leaf area index (m2/m2) | 3 | 4.5 | Measured |
| 2 | FRGRW1 | Fraction of the plant growing season corresponding to the 1st point on the optimal leaf area development curve | 0.15 | 0.3 | Estimated |
| 3 | FRGRW2 | Fraction of the plant growing season corresponding to the 2nd point on the optimal leaf area development curve | 0.5 | 0.8 | Estimated |
| 4 | DLAI | Fraction of the plant growing season when leaf area begins to decline | 0.6 | 0.92 | Estimated |
| 2004 soybean | | | | | |
| 1 | BLAI | Max leaf area index (m2/m2) | 3 | 5.5 | Measured |
| 2 | FRGRW1 | Fraction of the plant growing season corresponding to the 1st point on the optimal leaf area development curve | 0.15 | 0.3 | Estimated |
| 3 | FRGRW2 | Fraction of the plant growing season corresponding to the 2nd point on the optimal leaf area development curve | 0.5 | 0.65 | Estimated |
| 4 | DLAI | Fraction of the plant growing season when leaf area begins to decline | 0.6 | 0.85 | Estimated |
| 2005 grain sorghum | | | | | |
| 1 | BLAI | Max leaf area index (m2/m2) | 3 | 2.8 | Measured |
| 2 | FRGRW1 | Fraction of the plant growing season corresponding to the 1st point on the optimal leaf area development curve | 0.15 | 0.2 | Estimated |
| 2006 forage corn | | | | | |
| 1 | BLAI | Max leaf area index (m2/m2) | 4 | 5 | Measured |
| 2007 forage sorghum | | | | | |
| 1 | BLAI | Max leaf area index (m2/m2) | 4 | 5.5 | Measured |
| 2 | BIO_E | Biomass/energy ratio [(kg ha−1)/(MJ m−2)] | 33.5 | 45 | Sinnathamby et al. [28] |
| 3 | FRGRW1 | Fraction of the plant growing season corresponding to the 1st point on the optimal leaf area development curve | 0.15 | 0.25 | Estimated |
| 4 | FRGRW2 | Fraction of the plant growing season corresponding to the 2nd point on the optimal leaf area development curve | 0.5 | 0.6 | Estimated |
| 5 | DLAI | Fraction of the plant growing season when leaf area begins to decline | 0.64 | 0.84 | Estimated |
| 2008 cotton | | | | | |
| 1 | BLAI | Max leaf area index (m2/m2) | 4 | 3.8 | Measured |
| 2 | FRGRW1 | Fraction of the plant growing season corresponding to the 1st point on the optimal leaf area development curve | 0.15 | 0.3 | Estimated |
| 3 | DLAI | Fraction of the plant growing season when leaf area begins to decline | 0.95 | 0.58 | Estimated |
| 2009 sunflower | | | | | |
| 1 | BLAI | Max leaf area index (m2/m2) | 3 | 7 | Measured |
| 2 | FRGRW1 | Fraction of the plant growing season corresponding to the 1st point on the optimal leaf area development curve | 0.15 | 0.2 | Estimated |
| 3 | FRGRW2 | Fraction of the plant growing season corresponding to the 2nd point on the optimal leaf area development curve | 0.5 | 0.7 | Estimated |
| 4 | DLAI | Fraction of the plant growing season when leaf area begins to decline | 0.62 | 0.85 | Estimated |
| 2010 cotton | | | | | |
| 1 | BLAI | Max leaf area index (m2/m2) | 4 | 5.8 | Measured |
| 2 | DLAI | Fraction of the plant growing season when leaf area begins to decline | 0.95 | 0.8 | Estimated |
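To make the roles of BLAI, FRGRW1, and FRGRW2 more concrete, the sketch below evaluates a sigmoid leaf area development curve that is forced through two control points, following the general form described in the SWAT theoretical documentation [11]. It uses the 2002 cotton values from the table (BLAI = 5, FRGRW1 = 0.2, FRGRW2 = 0.6). The two LAI fractions paired with those points (0.01 and 0.95) do not appear in the table and are assumed here purely for illustration. The sketch ignores leaf senescence after DLAI and any stress effects.

```python
import math


def shape_coefficients(fr_phu1, fr_lai1, fr_phu2, fr_lai2):
    """Solve the two shape coefficients so the curve passes through both control points."""
    a1 = math.log(fr_phu1 / fr_lai1 - fr_phu1)
    a2 = math.log(fr_phu2 / fr_lai2 - fr_phu2)
    l2 = (a1 - a2) / (fr_phu2 - fr_phu1)
    l1 = a1 + l2 * fr_phu1
    return l1, l2


def optimal_lai_fraction(fr_phu, l1, l2):
    """Fraction of maximum LAI reached at a given fraction of the growing season."""
    return fr_phu / (fr_phu + math.exp(l1 - l2 * fr_phu))


# 2002 cotton values from the table above.
BLAI = 5.0
FRGRW1, FRGRW2 = 0.2, 0.6
# Assumed LAI fractions at the two control points (not given in the table).
LAIMX1, LAIMX2 = 0.01, 0.95

l1, l2 = shape_coefficients(FRGRW1, LAIMX1, FRGRW2, LAIMX2)
fractions = [optimal_lai_fraction(step / 10, l1, l2) for step in range(11)]

for step, frac in enumerate(fractions):
    print(f"season fraction {step / 10:.1f}: potential LAI = {BLAI * frac:.2f}")
```

Raising FRGRW1 or FRGRW2 shifts canopy development later in the season, which is one way to read the direction of the calibrated changes in the table.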

| Daily ET statistic (baseline scenario: all field observations entered manually) | 2001–2005 | 2006–2010 |
|---|---|---|
| Measured mean (mm) | 2.40 | 2.42 |
| Simulated mean (mm) | 2.58 | 2.48 |
| Nash-Sutcliffe efficiency (NSE) | 0.85 | 0.80 |
| Percent bias (PBIAS) | 7.2% | 2.4% |
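The two goodness-of-fit statistics in the table can be computed as in the sketch below. It is a minimal illustration, not the authors' code. The sign convention for PBIAS is an assumption: it is written so that a positive value means the simulation exceeds the measurement, which matches the table (simulated means above measured means alongside positive PBIAS). Some references define it with the opposite sign. The sample series are hypothetical.

```python
def nse(observed, simulated):
    """Nash-Sutcliffe efficiency: 1 minus squared error over variance of observations."""
    mean_obs = sum(observed) / len(observed)
    squared_error = sum((o - s) ** 2 for o, s in zip(observed, simulated))
    variance_term = sum((o - mean_obs) ** 2 for o in observed)
    return 1.0 - squared_error / variance_term


def pbias(observed, simulated):
    """Percent bias; positive means overestimation (assumed sign convention)."""
    return 100.0 * sum(s - o for o, s in zip(observed, simulated)) / sum(observed)


# Hypothetical daily ET values (mm), for illustration only.
obs = [1.0, 2.0, 3.0, 4.0]
sim = [1.1, 2.1, 2.9, 4.3]

print(f"NSE = {nse(obs, sim):.3f}")
print(f"PBIAS = {pbias(obs, sim):.1f}%")
```

Applied to the rounded means in the table, the same bias formula gives (2.58 − 2.40)/2.40 ≈ 7.5% and (2.48 − 2.42)/2.42 ≈ 2.5%, close to the reported 7.2% and 2.4%. Gaps of that size are what rounding the means to two decimals would produce.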

| Year and Crop | Actual Frequency | 10 mm * | 20 mm | 36 mm | 40 mm | 50 mm | 100 mm | 158 mm | 337 mm |
|---|---|---|---|---|---|---|---|---|---|
| 2001 cotton | 18 | 57 | 34 | 26 | 25 | 24 | 21 | 18 | 10 |
| 2002 cotton | 23 | 70 | 38 | 25 | 24 | 23 | 19 | 17 | 12 |
| 2003 soybean | 31 | 69 | 36 | 24 | 24 | 23 | 20 | 18 | 7 |
| 2004 soybean | 16 | 54 | 27 | 17 | 16 | 14 | 12 | 10 | 1 |
| 2005 grain sorghum | 14 | 57 | 22 | 12 | 12 | 11 | 8 | 5 | 5 |
| 2006 forage corn | 27 | 68 | 37 | 24 | 23 | 20 | 15 | 13 | 7 |
| 2007 forage sorghum | 18 | 52 | 20 | 14 | 13 | 13 | 10 | 8 | 5 |
| 2008 cotton | 20 | 68 | 34 | 23 | 22 | 20 | 14 | 11 | 6 |
| 2009 sunflower | 17 | 57 | 25 | 16 | 16 | 15 | 13 | 10 | 7 |
| 2010 cotton | 14 | 66 | 33 | 22 | 22 | 21 | 19 | 18 | 10 |
| Total frequencies | 198 | 618 | 306 | 203 | 197 | 184 | 151 | 128 | 70 |
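A quick way to read the totals row is to express each scenario's total irrigation frequency relative to the actual total of 198 events. The sketch below does this for the 10 mm to 158 mm columns, treating the column labels simply as scenario names. The helper `relative_difference` is an illustrative name.

```python
# Totals row of the frequency table (2001-2010), 10 mm to 158 mm columns.
actual_total = 198
scenario_totals = {
    "10 mm": 618,
    "20 mm": 306,
    "36 mm": 203,
    "40 mm": 197,
    "50 mm": 184,
    "100 mm": 151,
    "158 mm": 128,
}


def relative_difference(total, reference):
    """Percent difference of a scenario total from the reference total."""
    return 100.0 * (total - reference) / reference


differences = {
    label: relative_difference(total, actual_total)
    for label, total in scenario_totals.items()
}
closest = min(differences, key=lambda label: abs(differences[label]))

for label, diff in differences.items():
    print(f"{label:>7}: {diff:+.1f}% relative to actual")
print("closest to actual:", closest)
```

With these totals, the 40 mm and 36 mm scenarios fall within a few percent of the actual event count, while the 10 mm scenario triggers roughly three times as many events.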

© 2017 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Chen, Y.; Marek, G.W.; Marek, T.H.; Brauer, D.K.; Srinivasan, R. Assessing the Efficacy of the SWAT Auto-Irrigation Function to Simulate Irrigation, Evapotranspiration, and Crop Response to Management Strategies of the Texas High Plains. Water 2017, 9, 509. https://doi.org/10.3390/w9070509
