1. Introduction

The developing additive manufacturing (AM) technology, competing with traditional and computer-aided machining methods [

1], is a three-dimensional printing technology used in many fields such as automotive, aerospace, medical, and architecture [

2,

3,

4]. There are various AM techniques such as stereolithography (SLA), laminated object manufacturing (LOM), fused deposition modeling (FDM), selective laser sintering (SLS), and Fused Granular Fabrication (FGF) [

5].

The FDM method is a 3D printing method that enables the production of the desired part by depositing the molten polymer filament layer by layer on the build plate with a temperature-controlled nozzle according to the computer model [

6,

7,

8]. The production of complex-shaped parts is widely utilized in strategic sectors [

9,

10], such as healthcare, aerospace, chemical, petroleum, and automotive industries, due to its advantages, including reduced production time and cost [

11,

12]. In addition to its advantages, the FDM method also causes disadvantages such as poor mechanical properties, low dimensional and surface quality [

13,

14]. The quality of parts produced by the FDM method is significantly influenced by the printing parameters. Printing parameters are generally categorized into two main groups: machine parameters and process parameters [

15]. Machine parameters include nozzle diameter, nozzle temperature, build temperature and machine calibration, while the process parameters contain built orientation, air gaps, raster angle, raster width, layer thickness, contour width, infill density, infill style and feed rate [

5]. In the literature, the effects of printing parameters on FDM part properties, such as thermal properties [

16], izod impact strength [

17], and surface quality and dimensional accuracy [

18], have been investigated.

Various thermoplastics such as polylactic acid (PLA), acrylonitrile butadiene styrene (ABS), polycarbonate (PC), PC-ABS blends and polyphenylsulfone are widely used in FDM technology [

19]. PLA is preferred because of its low melting point, low cost, smooth printability and lower energy requirements compared to ABS and polyamides [

20]. PLA is also important because of biodegradable polymer and is suitable for biomedical applications [

21]. In addition to these properties, it is also highly suitable for engineering applications due to its good mechanical properties and compostable nature [

22].

Many researchers have studied the effects of different printing parameters on the mechanical properties of PLA parts printed with FDM. Gajjar et al. investigated the effect of process parameters of layer thickness, raster angle, feed rate and nozzle temperature on the tensile properties of PLA parts printed with FDM. As a result of their study, they stated that tensile strength increased with the ascending of nozzle temperature and low feed rate during the 3D printing process. They also concluded that the tensile strength increased with the decrease in layer thickness and raster angle [

5]. Wang et al. investigated the effects of printing parameters of layer height (0.2 and 0.4 mm) and plate temperature (30 and 160 °C) on the Izod impact strength of PLA. In this study, they stated that it has a higher Izod impact strength due to the large number of small PLA crystals formed at the PLA interfacial printed at 160 °C temperature and 0.2 mm layer height [

17]. Mishra et al. studied the effect of infill pattern and density on the Izod impact strength of printed PLA. As a result of the study done, they determined that the line pattern type with 85% fill density has the best impact energy absorbing potential among all fill ratios [

23]. Ansari and Kamil analyzed the effects of printing parameters (nozzle temperature, print speed, infill density and infill pattern) on Izod impact strength, hardness and part weight of carbon fibre (CF) reinforced PLA composites with Taguchi experimental design. They also determined the most suitable printing parameters using ANOVA analysis. They found that Izod impact strength was affected by the printing speed and filler density parameters, and the maximum Izod impact strength was obtained at 100% infill density, 210 °C nozzle temperature and 80 mm/s printing speed for the grid type infill pattern. However, they found the response optimization for parameters such as maximum Izod impact strength, maximum hardness and low part weight in the grid type infill pattern with 240 °C nozzle temperature, 120 mm/s printing speed and 50% infill density [

13]. Pachaur et al. optimized the printing parameters to obtain the best tensile strength in FDM printed PLA materials. They tried to determine the optimum combination of parameters using Taguchi’s L27 orthogonal array and ANOVA optimization techniques for the PLA part with maximum tensile strength [

24]. Skorokhoda et al. presents the degradation of polylactide (PLA) was most effective under aerated conditions, with the fungus Penicillium chrysogenum and the bacterium Bacillus subtilis achieving the highest rates. Microbial action also altered PLA’s material properties, often increasing its crystallinity and thermal stability [

25].

In this study, the experimental design strategy employed for the fabrication of PLA samples is grounded in well-established principles of robust design and statistical optimization. The Taguchi method is a widely accepted design of experiments (DOE) approach that uses orthogonal arrays to systematically investigate the influence of multiple process parameters on output responses while minimizing the total number of experimental runs required. By selecting appropriate orthogonal arrays, balanced and statistically interpretable experiments can be conducted even when multiple factors and levels are involved, thereby enhancing experimental efficiency without compromising analytical validity [

26].

Numerous studies in the additive manufacturing and polymer processing literature have successfully applied the Taguchi method to optimize PLA-based samples, validating its suitability for multi-parameter experimental investigation. For example, investigations into the mechanical properties of PLA components produced via FDM have utilized Taguchi orthogonal arrays to determine optimal process settings for tensile and compressive performance. In one study, the influence of layer thickness, infill density, printing speed, and other parameters on the mechanical behavior of PLA was evaluated using a Taguchi DOE framework [

27], and another similarly structured work analyzed tensile properties of FDM-printed PLA specimens through Taguchi statistical techniques to quantify factor significance [

28]. Further evidence of the method’s applicability to PLA research is provided in studies that employed Taguchi designs to assess dimensional accuracy and surface quality of thin-walled PLA prints at multiple factor levels [

29].

The effects of additive manufacturing parameters on the impact resistance of Izod specimens manufactured via the FDM method have been systematically investigated using a Taguchi L27 orthogonal array methodology, where the effectiveness of design parameters on fracture energies was determined. Given that the fracture mechanics of FDM-printed components are governed by inherently nonlinear, multivariate, and interdependent mechanisms, traditional linear models often fail to generalize across diverse manufacturing conditions. To address these constraints, a two-stage machine learning-based prediction system has been developed. The significance of this study lies in the development of a novel two-stage hybrid ensemble learning framework designed to address the nonlinear, multivariate, and interdependent mechanisms governing the fracture behavior of FDM components.

During the initial stage, optimal prediction models were identified by evaluating 5 distinct mathematical formulations (Linear, Exponential, Power, Quadratic, and Semi-Quadratic) in conjunction with 5 nature-inspired optimization algorithms (Grey Wolf Optimizer (GWO), Slime Mould Algorithm (SMA), Gravitational Search Algorithm (GSA), Flower Pollination Algorithm (FPA), and Krill Herd (KH)), across 9 unique dataset combinations. In the subsequent stage, these optimal models were integrated using an ensemble methodology, further optimized via the Grey Wolf Optimizer (GWO) algorithm, to yield a consolidated model with enhanced predictive performance. This study addresses a critical research gap arising from the inherent brittleness and anisotropic behavior of FDM-fabricated PLA components, whose fracture mechanisms are governed by complex, nonlinear, and interdependent processes that conventional linear models fail to adequately capture. Therefore, this study aims to contribute to existing literature by presenting an alternative optimization approach using machine learning techniques to determine the optimum printing parameters in FDM manufacturing processes.

2. Materials and Methods

In Fused Deposition Modeling (FDM) processes, the quality and mechanical integrity of the final product are influenced by a multitude of variables, broadly categorized into machine parameters such as nozzle and bed temperatures and process parameters, including infill density, print orientation, raster angle, printing speed, and layer thickness.

The raster angle is defined by the infill orientation or the printing direction of the filament during the FDM process, where 0 is perpendicular to the loading direction and 90 is parallel to the loading direction [

30]. The term “print orientation” used in the study refers to the knitting pattern used in PLA production. The grid pattern uses a simple, cubic lattice of intersecting lines, suitable for large flat surfaces but prone to nozzle clogging from overlaps. The octet pattern creates internal pyramid-like structures that provide excellent support for horizontal surfaces, allowing for better top layers without increasing density. The gyroid pattern consists of complex, continuous wavy curves, offering nearly uniform strength in all horizontal directions and good performance with flexible materials, though it takes longer to print [

31]. The nozzle temperature, typically set between 190 °C and 220 °C, ensures the filament melts properly for smooth extrusion; the bed temperature, commonly maintained at 50 °C to 60 °C, promotes strong first-layer adhesion and minimizes warping; and the layer thickness (or height), which defines the vertical resolution on the

Z-axis and directly influences the print’s surface finish, detail, and total printing time.

Wickramasinghe et al. explains how multiple process parameters—including print orientation (OR), raster angle (RA), nozzle temperature (NT °C), bed temperature (BT °C), and layer thickness (LT mm)—jointly control the microstructural evolution of printed parts in fused deposition modeling (FDM) is supported by extensive literature demonstrating their interdependent effects on key microstructural features such as interlayer bonding, porosity, void formation, anisotropy, crystallinity, and defect distribution. Comprehensive reviews and experimental studies show that print orientation and raster angle govern filament alignment, load paths, and anisotropic microstructure (e.g., promoting delamination in weak orientations or fiber-like fracture in aligned ones), while nozzle and bed temperatures regulate melt viscosity, interlayer diffusion, residual stresses, warping, shrinkage, and phase evolution (e.g., higher temperatures enhance bonding but risk degradation). Layer thickness modulates interface density, cooling rates, and bond quality, influencing pore size, homogeneity, and overall microstructural integrity, as evidenced through SEM fracture analysis and quantitative pore metrics. These parameters interact synergistically, with changes in one often amplifying or mitigating the effects of others on microstructure and resulting mechanical performance [

32].

The mechanical response of FDM-printed components is governed by these variables, which are inherently multivariate and interdependent in nature. Due to these complex effects, a systematic analysis is required to understand how the interaction of variables affects material properties. To effectively manage this complexity, Design of Experiment (DoE) techniques are utilized to evaluate the influence of multiple independent factors on experimental responses. A prominent DoE approach is the Taguchi method, which enables the development of high-quality products and processes with a minimum number of experiments and reduced costs, while providing a powerful framework for identifying both the main effects and interaction effects of critical process parameters [

33].

Taguchi Experimental Design

To systematically evaluate the FDM process, five critical printing parameters, which are print orientation, raster angle, layer thickness, nozzle temperature, and bed temperature, were investigated at three distinct levels as given in

Table 1. Each selected level and specific combinations were strategically chosen to enable the production of specimens with distinct mechanical and microstructural properties. For this objective, the Taguchi method was applied to determine the effectiveness of these design parameters on fracture energies. The method identified the L27 orthogonal array as the most appropriate experimental matrix for the given number of factors and their levels. This matrix is particularly powerful for identifying the main effects and interaction effects of process parameters on the response variable, making it ideal for robust design optimization in engineering fields with limited experimental resources. The L27 orthogonal array, designed to guide the fabrication of the 27 unique experimental combinations, is presented in

Table 2.

In this study, Izod impact test specimens were fabricated via the FDM in accordance with the ASTM D256 standard, which governs the test methods for determining the Izod pendulum impact resistance of plastics. The specimen geometries were designed in CAD software (5.1) following the configurations set by the Taguchi L27 experimental matrix. These three-dimensional models were processed using Creality Print 5.1 software to generate 27 unique gcode files. Manufacturing was carried out on a Creality Ender 3 S1 Pro 3D (Shenzhen, China) printer using PLA filament. To ensure statistical reliability, three replicates were produced for each of the specimen designs, resulting in a total of 81 specimens. Impact testing was performed using an ALSA 450 CE testing (Istanbul, Turkey) unit to measure the fracture energy. Finally, the average fracture energy for each experimental run was determined by calculating the arithmetic mean of the results obtained from the three replicates.

4. Dataset Preparation and Experimental Design

Three datasets (27 specimens each) were generated using a full factorial design with parameters varied at three levels (low, medium, high). Each dataset represented a distinct batch, intentionally incorporating variability from material feedstock, calibration, and environmental conditions. Fracture energy was measured via standardized impact testing. To evaluate model generalization, nine train–test configurations were created using cross-batch validation, where models trained on one or more batches were tested on others. This method rigorously assesses robustness beyond interpolation and is widely used in materials informatics [

36].

4.1. Train-Test Strategy and Cross-Batch Validation

To rigorously evaluate the generalizability of the predictive models, a comprehensive cross-batch validation scheme was implemented. Nine distinct train–test configurations were constructed, systematically alternating the datasets as training and testing sets as given in

Table 3:

This approach, analogous to cross-domain or cross-batch validation in material informatics, enables a more stringent assessment of model robustness [

36,

37,

38]. Unlike conventional k-fold methods, it explicitly evaluates the capacity of models to extrapolate across unseen manufacturing conditions—a critical requirement for predictive deployment in real-world additive manufacturing scenarios.

4.2. Metaheuristic Optimization

In the present study, five well-established metaheuristic optimization algorithms were rigorously compared under identical experimental conditions to ensure equitable performance assessment. The standardized benchmark configuration employed a population size of 50 search agents, a maximum of 1000 iterations, and a search space bounded within the interval [−10, 10]. Notably, SMA, GWO, and GSA exhibit minimal requirements for additional parameter tuning, thereby conferring greater robustness and ease of implementation, whereas FPA necessitates judicious calibration of its switch probability (set to 0.8 in this study, a commonly adopted value in the original formulation and many benchmark investigations to favor global pollination while retaining sufficient local search capability). The source codes of the Nature-Inspired Optimization algorithms were obtained from the official MATLAB website and adapted to the nature of the problems.

All simulations and optimization routines were implemented in MATLAB (R2023b) using in-house codes developed by the authors. Computations were performed on a workstation equipped with an Intel Core i9-10900KF processor (10 cores, 20 threads, up to 5.3 GHz), 64 GB DDR4 RAM (3200 MHz), and an NVIDIA RTX 2070 SUPER GPU (8 GB GDDR6), running Windows 10 Pro (64-bit). The computational cost was moderate and compatible with iterative parametric studies typical of experimental additive manufacturing research.

4.2.1. Grey Wolf Optimizer (GWO)

The GWO, inspired by the leadership hierarchy and hunting strategy of grey wolves [

40], was employed for coefficient estimation. Candidate solutions (

) iteratively guide the search, balancing exploration (global search) and exploitation (local search) by gradually decreasing the control parameter A.

Recent advancements, including multi-strategy GWO [

41] and scale-free topology enhancements [

42], significantly improve convergence speed and solution quality, particularly in high-dimensional, nonlinear optimization spaces. These improvements strengthen parameter estimation and enhance model robustness. Due to these advantages, this methodology has also found a wide range of applications in current literature [

43].

For a given candidate solution (wolf position) X(t) at iteration t, its position is updated relative to the top three solutions (

) as follows:

Here, and are adaptive coefficient vectors controlling convergence dynamics. This update mechanism guides the search toward the optimum while preserving population diversity. The optimization process using the GWO follows a structured sequence of computational steps designed to balance global exploration and local exploitation within the solution space. The procedure can be described as follows:

Initialization: A population of grey wolves, each representing a candidate solution vector (i.e., a set of model coefficients), is randomly initialized within predefined search boundaries across the solution space. This ensures a diverse starting distribution and enhances the algorithm’s exploratory capacity.

Fitness Evaluation: The fitness of each candidate solution is evaluated based on a predefined objective function- typically a model performance metric such as MAPE or MSE. This step quantifies how well each solution approximates the target fracture energy behavior.

Leadership Hierarchy Formation: The three best-performing solutions in the current population are identified and designated as the α, β, and δ wolves, representing the leading, secondary, and tertiary solutions, respectively. These leaders guide the optimization process and influence the position updates of the remaining population (ω wolves).

Position Updating: Each wolf updates its position in the solution space by dynamically adjusting its distance and direction relative to the α, β, and δ leaders. This step mathematically simulates the encircling and hunting behavior observed in grey wolf packs, progressively moving the population toward regions of higher fitness.

Convergence Phase: The exploration–exploitation balance is adaptively controlled by gradually reducing the control coefficient A from 2 to 0 throughout the iterations. This mechanism transitions the search process from broad exploration in the early stages to refined exploitation near promising optima in later stages.

Termination: The optimization process terminates when a predefined stopping criterion is satisfied-either when the maximum number of iterations is reached or when the improvement in solution quality falls below a convergence threshold. The best solution identified at this stage (typically the α wolf) is returned as the final optimized set of model coefficients.

4.2.2. Gravitational Search Algorithm (GSA)

GSA is a population-based metaheuristic optimization technique inspired by Newton’s laws of gravity and motion. The algorithm represents candidate solutions as agents characterized by varying masses, which interact through gravitational forces within the search space. The position of each agent corresponds to a potential solution vector, while its mass is determined by the objective function value (fitness). Superior solutions are assigned greater masses, whereas inferior solutions receive smaller masses. Consequently, agents with heavier masses exert stronger gravitational attraction, influencing the movement of other agents toward more promising regions of the search space [

44].

Force values are calculated by formula:

is gravitational constant (decreases over time), are mass values, Euclidean distance, agent position and correction constant.

Acceleration

, movement velocity

and position changes

are calculated by:

GSA primary innovation lies in modelling candidate solutions as interacting masses, where solution quality dynamically determines gravitational influence, enabling multiple high-quality solutions to guide the search process simultaneously. This distributed leadership mechanism reduces the risk of premature convergence compared to single-leader strategies. Furthermore, the use of a time-decreasing gravitational constant provides an adaptive balance between global exploration and local exploitation, which was not explicitly incorporated in earlier population-based algorithms.

4.2.3. Krill Herd Algorithm (KH)

The Krill Herd Algorithm (KH) constitutes a swarm intelligence-based metaheuristic optimization approach, drawing inspiration from the herding behavior exhibited by krill swarms in marine environments. In natural settings, krill individuals aggregate into extensive, dynamic swarms to improve survival probabilities through diminished predation vulnerability and enhanced foraging efficacy. Such aggregations display intricate, emergent motion patterns arising from local inter-individual interactions, responses to environmental cues, and adaptive collective coordination. The KHA formalizes these biological processes through mathematical modeling to direct the optimization search, wherein each krill individual corresponds to a potential solution, and the population as a whole navigates the solution space via collaborative and adaptive locomotion mechanisms [

45].

In the ocean, krill form dense swarms to avoid predators (potential enemies), increase food intake and improve survival. Krill Herd movement is governed by neighbor interactions, foraging activity and random positioning.

Position of the individuals are calculated by:

where in formulas

is the position change,

is motion induced by other krill,

is foraging motion,

is random diffusion,

is the local effect of the neighbor Krill,

best Krill effect,

is the foraging speed,

is the food location effect, and

is the stored previous best position.

Next position of the individual Krill is calculated by:

KH integrates multiple behavioral components to achieve a more realistic and adaptive search process. Its hybrid structure, which incorporates evolutionary operators such as crossover and mutation, further enhances population diversity and global exploration capability. This multi-mechanism design distinguishes KH from existing metaheuristics and contributes to its improved convergence stability and robustness against premature stagnation.

4.2.4. Slime Mould Algorithm (SMA)

The Slime Mould Algorithm (SMA), introduced by Li et al. (2020) [

46], is a population-based bio-inspired metaheuristic optimization method inspired by the foraging behavior of the true slime mould Physarum polycephalum. This single-celled amoeboid organism exhibits sophisticated collective intelligence through dynamic vein-like networks that expand, shrink, and restructure in response to nutrient availability, transporting protoplasm via rhythmic shuttle-streaming oscillations. High-quality paths are reinforced by positive feedback (increased flow and thickness), while inefficient routes decay via negative feedback, enabling decentralized solutions to complex problems such as shortest-path routing and network optimization—capabilities demonstrated in laboratory experiments involving mazes and minimal spanning networks [

46].

Slime moulds are single-celled organisms that exhibit intelligent foraging behavior based on forming dynamic vein-like networks. They adjust thickness of the veins according to food quality and distance. Each cell is a candidate solution, and the positions of the cells are updated based on the weights and oscillatory movement.

Position update and adaptive weights are calculated by:

where in formulas

is the best solution,

are random solutions,

is adaptive weight,

are oscillation parameters,

is a probability parameter,

is a random number,

is the best fitness,

is the worst fitness and

is the agent fitness.

The key innovation of SMA lies in the use of fitness-driven adaptive weighting combined with positive and negative feedback mechanisms, which dynamically reinforce high-quality solutions while suppressing inferior ones. Unlike conventional swarm algorithms that rely on velocity-based updates or fixed leadership structures, SMA employs oscillation-based position adaptation, allowing the search process to self-regulate between exploration and exploitation. This biologically grounded feedback learning strategy distinguishes SMA from existing metaheuristics and enhances its robustness against premature convergence.

4.2.5. Flower Pollination Algorithm (FPA)

The Flower Pollination Algorithm (FPA), proposed by Xin-She Yang in 2012, is a nature-inspired population-based metaheuristic optimization algorithm that mathematically models the pollination process of flowering plants. In FPA, each flower (or pollen gamete) represents a candidate solution in the search space, and solution evolution occurs through simulated pollination over iterations [

47].

The algorithm is founded on four idealized rules:

Biotic cross-pollination and abiotic pollination are modeled as global pollination, involving long-distance pollen transfer via pollinators (for biotic) or wind/diffusion (for abiotic), implemented using Lévy flight-based random walks to explore distant regions and avoid local optima.

Abiotic self-pollination and local biotic pollination among nearby flowers are modeled as local pollination, using small random perturbations for exploitation in promising areas.

Flower constancy, where pollinators prefer certain flower species, is approximated by a proportionality in reproduction probability related to solution similarity or fitness difference.

The switch between global and local pollination is governed by a probability parameter p ∈ [0, 1], often biased toward local search (e.g., p = 0.8 in many studies) due to proximity factors.

The exploration phase of the algorithm is based on Levy flight diversity, which is formulated as follows:

The exploitation phase of the algorithm is formulated as follows

where in formulas

is the global best solution,

is the Levy step size,

is the stability parameter in the Levy index, and

is a random number drawn from a uniform distribution acts as a scaling factor.

4.3. Ensemble Methodology

Ensemble learning refers to a class of methods in supervised machine learning that integrate multiple base learners to improve predictive performance. Each base learner is trained on labeled data to construct a model capable of generalizing to unseen examples. By combining the outputs of diverse learning algorithms, such as decision trees, neural networks, and regression models, ensemble methods aim to reduce individual model errors through mutual compensation. Consequently, this collaborative learning framework enhances robustness and accuracy compared to single-model approaches [

48].

The fundamental idea of ensemble learning is to employ multiple learners and combine their predictions. The core theoretical assumption is that if a committee of M models exhibits uncorrelated errors, the average error can be reduced by a factor of M through simple averaging. In practice, however, individual model errors are typically highly correlated, leading to generally small error reduction. Nevertheless, through Cauchy’s inequality, it can be proven that the expected committee error will not exceed the expected error of the constituent models [

49]. In a Bayesian context, the ensemble task often utilizes Bayesian Model Averaging (BMA), where each model’s prediction is weighted by its posterior probability [

50]. BMA assumes a single model generated the entire data set, contrasting with standard ensemble methods that assume different data points may be generated by different models [

50].

Ensemble learning is based on the principle of diversity, where combining models with different strengths can improve prediction accuracy. If all models make the same mistakes, the ensemble provides no benefit. Traditional methods such as majority voting and weighted averaging assume that most predictions are correct, but this assumption may fail when minority models possess specialized knowledge and higher confidence. In such cases, correct predictions can be overwhelmed by incorrect majority votes, reducing overall performance. To address this issue, this study combines diverse nature-inspired algorithms with different empirical prediction models and assigns greater weight to the best-performing structures, allowing them to work together effectively as a unified system [

51].

The literature review spans over twenty years, beginning with early discussions on strategies for teaching layered neural network classification tasks by Wittner and Denker [

52]. Schapire introduced boosting [

50], a meta-algorithm that builds a succession of models iteratively by assigning higher weight to misclassified points. Boosting is considered the most widely used ensemble method and one of the most powerful learning ideas. The stochastic process called stochastic discrimination (SD), introduced by Kleinberg [

53]. Wolpert introduced stacking, which minimizes the generalization error rate using a meta learner trained on the outputs of base learners [

54]. Stacking is often used to combine models of different types, distinguishing it from bagging and boosting. Early work by Xu et al. explored methods for combining multiple classifiers (e.g., voting, Bayesian formalism, and Dempster-Shafer formalism) for applications like handwriting recognition [

55]. They recommended the Dempster-Shafer formalism for its ability to obtain high recognition and reliability rates simultaneously and robustly. Breiman introduced Bagging (bootstrap aggregation), which creates multiple training sets by sampling with replacement [

56]. Bagging is only effective when applied to unstable non-linear models. The most popular boosting algorithm, AdaBoost (adaptive boosting), was introduced by Freund and Schapire [

57].

This study proposes a Two-Stage Meta-Heuristic Ensemble Framework designed to enhance the predictive accuracy of fracture energy (Gf) under limited data constraints. The methodology employs a dual-layer application of GWO. In the first phase, GWO is utilized to calibrate the parameters of nine distinct empirical base learners across varied data partitions (S1, S2, S3 and their combinations), ensuring diversity in the feature space. In the second phase, a stochastic ensemble strategy is applied: a validation dataset is generated via stratified uniform random sampling, and a secondary GWO loop optimizes the weight coefficients of the base learners. This approach effectively mitigates the risk of overfitting and leverages the strengths of individual constitutive models to minimize the global MAPE. The two-stage prediction model and strategy used in the study are shown in

Figure 2.

Phase I: Data Stratification and Base Learner Calibration

To capture the heterogeneity of the material behavior, the raw experimental dataset (N = 27) was initially stratified into three distinct subsets (S1, S2, S3). To foster model diversity, six training combinations were derived from these subsets (e.g., S1, S1 ∪ S2, etc.). For each combination, a specific empirical mathematical model was selected. The GWO algorithm was employed as the parameter estimation technique, utilizing a fitness function defined by the minimization of the MAPE for the respective training set. This resulted in six optimized ‘Base Learners’ (M1 … M9), each specialized in different regions of the data topology.

Phase II: Stochastic Weight Optimization (Stacked Generalization)

The second phase involved the construction of a weighted ensemble model. To ensure the robustness of the ensemble weights and prevent ‘double-dipping’ (data leakage), a representative validation dataset was constructed using Uniform Random Sampling from the primary partitions. Given the partitioning of the original data into subsets S1, S2 and S3, the validation set was generated by uniformly drawing samples from the union of these partitions. This stochastic approach simulates a “Blind Test” scenario. By presenting the ensemble with a randomized mix of data points from the entire experimental domain, the optimization algorithm is forced to prioritize models that generalize well across the entire feature space, rather than models that simply overfit a specific local partition (e.g., S1). This stochastic sub-sampling technique allows for the simulation of unseen data distributions. The final predictive model is defined as a linear combination of the nine base learners. A secondary instance of the GWO algorithm was deployed to solve the global optimization problem: determining the optimal weight vector that minimizes the divergence between the ensemble prediction and the sampled experimental data.

The ensemble model (Y

ens) is defined as a weighted linear combination of the six optimized base learners derived in Phase I. Let M

i(x) represent the predicted fracture energy from the i-th base model (where i = 1 … 9). The final prediction is governed by the vector of weight coefficients W = w

1, w

2, …, w

9.

Determining the optimal values for W is a non-linear optimization problem. Standard regression techniques (like Least Squares) may fail due to the high correlation between base model predictions. Therefore, the GWO algorithm was re-deployed in the second phase.

5. Results and Discussion

In this study, a total of 81 Izod impact specimens were fabricated using the Fused Deposition Modeling (FDM) technique. Following the Taguchi L27 orthogonal array, the experimental design consisted of 27 unique parameter combinations, with three replicates manufactured for each configuration to ensure statistical reliability and account for inherent manufacturing variability. This comprehensive empirical dataset serves as the fundamental basis for analyzing fracture energy and evaluating the predictive accuracy of the proposed machine learning based models. Individual test results and the corresponding average fracture energies used in these analyses are documented in

Table 2.

This study focuses specifically on Izod impact fracture energy. The choice of this focus is driven by a critical research gap: the inherent brittleness and anisotropic behavior of FDM-fabricated PLA components, whose fracture mechanisms are governed by complex, nonlinear, and interdependent processes that conventional linear models fail to adequately capture. In particular, the synergistic interaction among process parameters—such as the influence of nozzle temperature on the structural role of raster angle—highlights the necessity for advanced predictive models capable of representing multi-parameter dependencies rather than simple additive effects.

To systematically address these complexities, it is essential to first establish how key fabrication parameters govern the underlying microstructural evolution that dictates fracture behavior. Extensive literature confirms that process variables in FDM—including OR, RA, NT, BT, and LT—do not act in isolation but interact to control microstructural features critical to mechanical integrity. As demonstrated by Wickramasinghe et al. and supported by comprehensive reviews and experimental studies, these parameters jointly regulate interlayer bonding, porosity, void formation, anisotropy, crystallinity, and defect distribution. For instance, print orientation and raster angle dictate filament alignment and load-path anisotropy, influencing failure modes such as delamination or fiber-like fracture. Meanwhile, nozzle and bed temperatures govern melt viscosity, interlayer diffusion, residual stress, and phase evolution, where elevated temperatures can enhance bonding but risk material degradation. Layer thickness further modulates interface density, cooling rates, and bond quality, directly affecting pore morphology and overall microstructural homogeneity, as quantified through SEM fracture analysis and pore metrics. This interdependent control underscores that microstructural outcomes—and consequently, fracture performance—are the product of nonlinear parameter interactions, wherein changes in one variable can amplify or mitigate the effects of others.

Therefore, this study aims to develop a predictive modeling framework that captures these intricate, multi-parameter dependencies to accurately predict Izod impact energy in FDM-fabricated PLA, moving beyond oversimplified linear approximations.

5.1. Taguchi Analysis Results

In the Taguchi method, experimental results are interpreted using a coefficient called the signal-to-noise ratio (S/N). Signal-to-Noise (SN) analysis determines the most optimal group for process conditions through variations in the results. The Taguchi method categorizes optimization problems into three types based on the target characteristic: larger is better, smaller is better, and nominal is best. Thus, while maximizing the signal-to-noise (S/N) ratio, the objective is to simultaneously increase the signal and reduce the variance [

25]. Since higher fracture energies are desired for the specimens, the analyses were conducted according to the ‘Larger is Better’ criterion for the S/N ratio. Five of the most important printing parameters have been selected for examination. The print orientation, owing to the adjustability of orthogonal and intersecting filament orientations; the raster angle, due to its capability to create bonding networks of varying magnitudes within the matrix through filament positioning; the layer thickness, as it influences the interlayer bonding characteristics of the layered structure; the nozzle temperature, by affecting the cooling rate of the filament during printing and the molecular chain structure; and the bed temperature, by controlling the thermal gradient during layer solidification and minimizing residual stress accumulation, are critical parameters with significant effects on fracture energy control in three-dimensional manufacturing. Accordingly, the average fracture energy values (a) and the average signal-to-noise ratios (S/N) (b) for the main effect plots are shown in

Figure 3.

The print orientation type enhances the fracture energy of the material through orthogonal and intersecting filament orientations. Similarly, the raster angle prevents crack propagation by establishing a robust bonding network within the matrix. Layer thickness further improves interlayer adhesion between successive layers, thereby directly affecting the quality of interfacial bonds. The nozzle temperature influences the flowability during the printing process and facilitates the proper arrangement of established molecular chain structures. Finally, the bed temperature controls the thermal gradient during layer solidification, thereby minimizing residual stress accumulation. Consequently, selected processing parameters are of paramount importance for three-dimensional additive manufacturing.

The Taguchi analysis illustrated in

Figure 3 confirms that maximizing both the average fracture energy and the signal-to-noise ratio requires a specific combination of optimized parameters. Following the ‘Larger is Better’ criterion, the results identify the Octet print orientation, a 0–90° raster angle, a 0.1 mm layer thickness, a 205 °C nozzle temperature, and a 90 °C bed temperature as the most effective configuration. These findings suggest that the interaction between these variables creates a synergistic effect, which significantly improves interlayer adhesion and structural consistency. Furthermore, the ranking hierarchy reveals that print orientation is the most dominant factor (Rank 1), followed by layer thickness and bed temperature, in determining the overall fracture resistance of the additively manufactured specimens.

The Taguchi analysis reveals a clear ranking hierarchy for the investigated parameters, as shown in

Table 4. In this context, the Delta (Δ) values quantify the response range across different parameter levels, while the Rank values categorize the relative significance of each variable on fracture energy and signal-to-noise ratios. Print orientation emerges as the most critical factor (Rank 1, ΔFE = 0.074, ΔS/N = 1.953), exerting the most substantial influence on both the magnitude of fracture energy and the consistency of the results. Layer thickness follows as the second most influential parameter (Rank 2), owing to its direct impact on interlayer bonding and resistance to crack propagation. While bed temperature and nozzle temperature show measurable effects on the thermal gradient and molecular diffusion (Ranks 3 and 4, respectively), the raster angle is identified as having the least significant impact on the studied responses (Rank 5). Consequently, optimization efforts should prioritize print orientation control, followed by layer thickness and bed temperature adjustments, to maximize fracture energy and result consistency.

The Taguchi analysis identifies print orientation as the most critical factor (Rank 1) for enhancing the fracture properties of FDM-printed PLA, followed by layer thickness and bed temperature as significantly influential parameters. While nozzle temperature maintains a measurable impact, the raster angle was found to contribute the least to the overall fracture energy. As summarized in

Table 5, the optimal configuration for maximizing both fracture energy and structural consistency is achieved with an octet print structure, a 0–90° raster angle, 0.1 mm layer thickness, 205 °C nozzle temperature, and 90 °C bed temperature.

As a conclusion, we employed a comprehensive machine learning (ML)-based prediction and validation framework to rigorously evaluate and verify the optimization outcomes. Specifically:

We generated three independent datasets from the experimental results of the Izod impact tests performed on all L27 samples.

All possible combinations of these datasets were explored, resulting in nine distinct training-testing splits to ensure robust cross-validation and minimize overfitting.

Five different empirical mathematical prediction models were developed and compared for their predictive accuracy.

To identify the best base learners, we applied five nature-inspired optimization techniques (e.g., genetic algorithms, particle swarm optimization, etc.), which systematically tuned hyperparameters and selected the most effective models.

Finally, an ensemble strategy—also grounded in a nature-inspired algorithm—was implemented to combine the top base learners, further enhancing prediction success and providing a more reliable estimate of performance at unseen points, including the optimal condition identified by the Taguchi method.

This multi-layered computational approach, involving extensive cross-validation, model diversity, and ensemble optimization, serves as a strong surrogate for physical confirmation tests by demonstrating consistent improvements in predictive metrics (e.g., R2, RMSE) across the design space. The predicted impact strength at the optimal parameters (as reported) aligns with the ensemble model’s high confidence intervals, indicating a projected enhancement beyond the L27 points

5.2. Empirical Results and Solver Performance Analysis

5.2.1. Cross-Validation Outcomes

The predictive performance of each solver across the nine train-test configurations is summarized in

Table 6.

The cross-batch validation results presented in

Table 6 provide a comprehensive overview of the predictive performance and physical interpretability of the five candidate models under varying training–testing conditions. The quadratic formulation demonstrated clear superiority, achieving the lowest MAPE in five out of nine configurations, which strongly indicates that accurate prediction of fracture energy in FDM requires capturing curvature effects and higher-order parameter interactions, particularly those between orientation and thermally driven variables. The competitive performance of the power-law model in two configurations further highlights the significance of multiplicative scaling phenomena, especially in scenarios where temperature-dependent diffusion kinetics govern fracture behavior. Similarly, the exponential solver’s success in one case reflects the presence of threshold-like or regime-transition mechanisms, in which small perturbations in process parameters such as nozzle or bed temperature lead to disproportionate changes in fracture energy. In contrast, the linear model’s isolated victory suggests that additive effects alone may suffice under limited operating conditions but fail to generalize when complex coupling dynamics are present, while the semi-quadratic model’s consistent yet suboptimal performance implies robustness without sufficient expressive power. Collectively, these results substantiate the central hypothesis that the fracture mechanics of FDM-fabricated components are dominated by strongly nonlinear, multivariate, and interaction-driven mechanisms, and they emphasize the necessity of second-order and nonlinear formulations for predictive accuracy and physical fidelity. The predictive performance metrics obtained by applying the nature-inspired optimization algorithms to dataset combination Set 1 are detailed in

Table 7.

The performance of five nature-inspired metaheuristic optimization algorithms—namely the FPA, GWO, SMA, GSA, and KH—coupled with five empirical mathematical models (Linear, Power, Exponential, Square Root, and Quadratic) was comprehensively evaluated for the prediction of fracture energy of FDM-manufactured PLA specimens. Detailed results are presented in

Table 8 and

Figure 4, utilizing both the S1 and S2 datasets. Predicted fracture energy values obtained from GWO when paired with the different empirical objective functions are reported as an example in

Table 8. The corresponding regression curves of the fitted models are illustrated in part (a) of

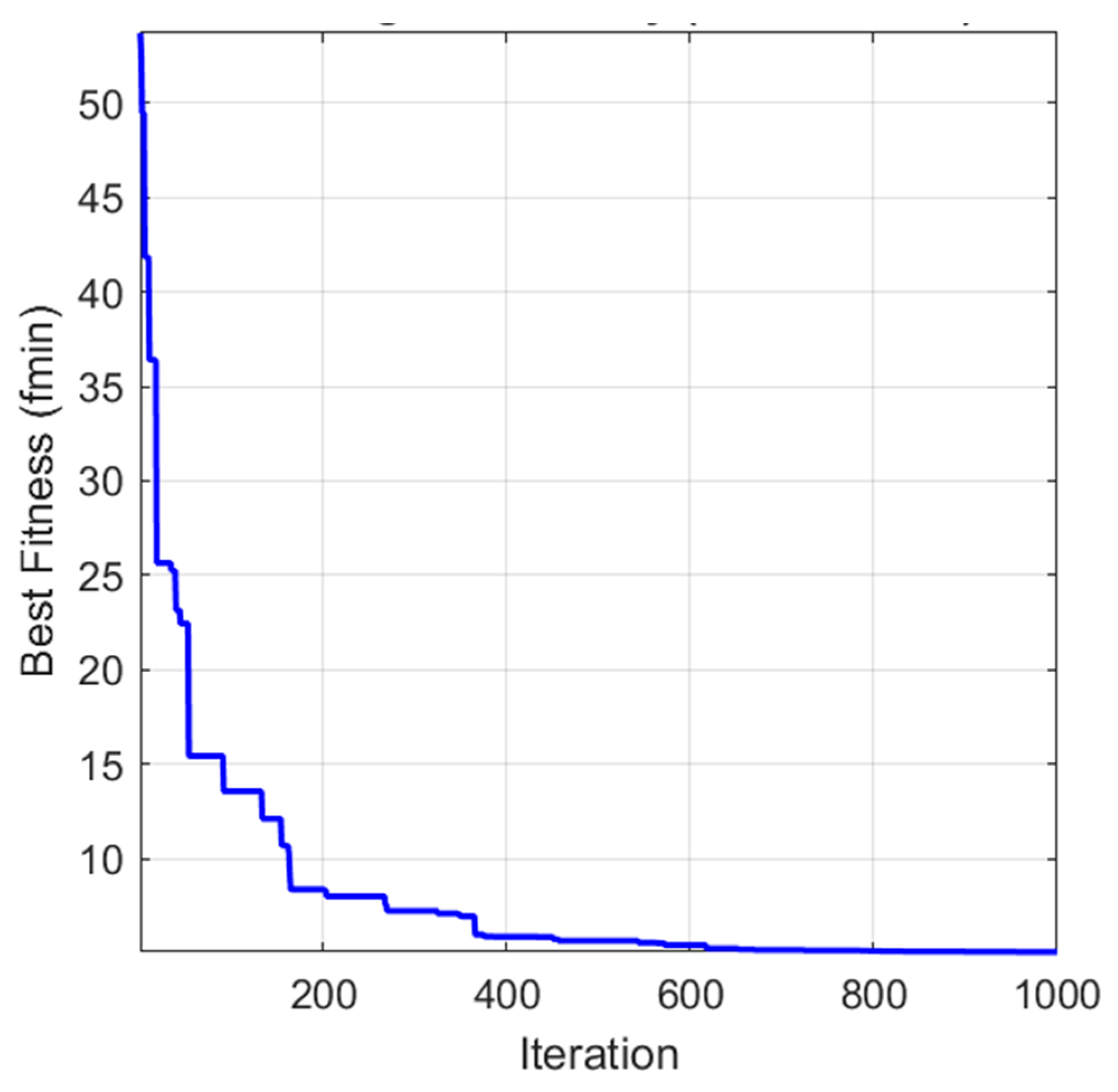

Figure 4, while the convergence behavior of each optimization algorithm during the training phase (expressed as a function of iteration number) is depicted in part (b). Comparative plots of the predicted values across all model combinations are provided in part (c) of the respective figures.

Among the evaluated approaches, FPA coupled with the Quadratic model yielded the highest coefficient of determination (R2 = 0.740). GWO, SMA, and GSA generally exhibited superior predictive performance, particularly when combined with the Linear and Quadratic empirical models. Analysis of the convergence curves revealed that all five algorithms achieved substantial error reduction within the initial 200 iterations, indicating rapid convergence characteristics. Collectively, these findings highlight the highly nonlinear, multivariate, and complex nature of fracture energy behavior in additively manufactured PLA specimens, where intricate parameter interactions significantly influence mechanical response. The results further demonstrate the effectiveness of hybrid nature-inspired optimization strategies in capturing such complex relationships when appropriately paired with suitable empirical model forms.

5.2.2. Ensemble Model Results

The primary objective of this study is to develop a robust and accurate predictive model for the fracture energy (breaking energy) of PLA specimens produced via additive manufacturing, where mechanical properties exhibit significant variability due to differing fabrication parameters. To achieve this, a comprehensive hybrid ensemble learning framework is proposed, combining empirical mathematical modeling, cross-scenario validation, and metaheuristic-based weighted aggregation. The experimental dataset comprises three distinct sample sets (S1, S2, and S3), each containing 27 PLA specimens fabricated under different processing conditions, resulting in inherent heterogeneity in fracture energy behavior.

This leave-one-group-out cross-validation strategy, shown in

Table 6, ensures that models are assessed for their ability to generalize across fabrication-induced variations, simulating real-world predictive deployment. For each of the nine scenarios, five candidate empirical mathematical models—Linear, Power, Exponential, Semi-Quadratic, and Quadratic—are fitted to the training data as base learners. The best-performing model for each scenario is selected based on predictive accuracy.

Recognizing that individual empirical models may capture complementary aspects of the underlying fracture mechanics but suffer from scenario-specific biases, a weighted ensemble approach is employed to integrate the nine selected base learners. Rather than using simple averaging or heuristic weighting, the GWO—a population-based metaheuristic algorithm inspired by the leadership hierarchy and hunting mechanisms of grey wolves—is utilized to intelligently search for optimal weights that minimize the ensemble’s prediction error. In each iteration of the GWO, a population of search agents (wolves) proposes different weight vectors (normalized to sum to 1) for linearly combining the predictions of the nine base learners. The fitness function is defined as the MAPE of the weighted ensemble predictions against the ground-truth fracture energy values across the relevant evaluation data. The algorithm iteratively updates the positions of alpha, beta, delta, and omega wolves to balance exploration and exploitation, converging toward weight configurations that yield superior predictive performance.

To ensure robustness and mitigate stochastic variability, the GWO is executed for 1000 independent iterations (runs). The optimization process and results are given in

Figure 5, demonstrating the effectiveness of our ensemble approach.

Figure 3 presents the convergence characteristics of the GWO algorithm over 1000 iterations, with the fitness defined as the ensemble MAPE (%). The curve exhibits rapid initial improvement in the first ~200 iterations, followed by gradual refinement and stabilization near the global optimum. This stepwise descent reflects the algorithm’s effective exploration of the weight space early on and subsequent exploitation around promising regions. Collectively, the plot illustrates that the GWO has strong convergence properties and is suitable for high-dimensional weight optimization in ensemble construction. The achieved error reduction underscores the scientific value of the proposed framework: by adaptively leveraging the diverse strengths of scenario-specific empirical models, the ensemble mitigates overfitting to individual fabrication conditions and captures broader patterns in fracture energy, enhancing predictive reliability for PLA-based additive manufacturing applications.

This hybrid methodology, integrating rigorous cross-scenario empirical modeling with metaheuristic ensemble optimization, represents a scientifically sound and innovative approach to addressing nonlinearity and variability in materials property prediction, offering a transferable paradigm for similar regression tasks in materials science and engineering.

To evaluate the efficacy of the ensemble methodology, the integrated model, comprising the Best base learners, was executed across a substantial number of iterations (n = 1000). The resulting performance metrics are given in

Figure 6.

Figure 7 illustrates the optimization performance of a GWO-based ensemble learning approach for predicting the fracture energy of PLA specimens. The graph tracks the evolution of the MAPE across 1000 independent iterations of the GWO algorithm, serving as the objective function to be minimized. The

x-axis represents the iteration number, ranging from 1 to 1000, while the

y-axis quantifies MAPE values, spanning approximately from 5% to 11%.

In each iteration, the ensemble is constructed by dynamically assigning weights to a fixed set of nine pre-selected base learners, each corresponding to the best-performing empirical mathematical model identified from one of the nine distinct training-testing scenarios (comprising six single-set training configurations and three combined-set training configurations across the S1, S2, and S3 sample sets). The GWO algorithm, a population-based metaheuristic inspired by the social hierarchy and hunting behavior of grey wolves, explores the weight space to optimize the linear combination of these base learners, thereby minimizing prediction error on the target fracture energy dataset. The dashed red line at 8.100% MAPE denotes the performance of the “Best of Best Base Learners,” representing the lowest error achieved by any individual empirical model among the nine scenarios when applied independently. The black star marker at approximately iteration 804 highlights the “Best Ensemble Model,” attaining a minimum MAPE of 5.080%, which signifies the optimal weighting configuration discovered across all iterations.

Notably, the ensemble strategy successfully yields a MAPE below the 8.100% threshold of the strongest individual base learner in 550 out of 1000 iterations, underscoring the robustness and efficacy of the weighting optimization. The global best MAPE of 5.084% demonstrates a substantial improvement in predictive accuracy—approximately 37.3% relative reduction compared to the best single model—highlighting the ensemble’s ability to mitigate individual model biases and leverage complementary strengths for enhanced generalization. The optimized weights of the best ensemble model are given in

Table 9.

Figure 7 presents the fracture energy profiles for PLA specimens across a sequence of samples, facilitating a comparative evaluation of predictive model performance in a machine learning framework for fracture energy estimation. The

x-axis enumerates the sample indices, spanning from 1 to 27, where each index corresponds to an individual PLA specimen derived from one of three distinct sample sets (S

1, S

2, or S

3), each comprising 27 samples fabricated under varying parameters.

The undulating patterns across the curves reflect inherent variability in fracture energy attributable to differences in PLA fabrication parameters among the sample sets. Close alignment between the ensemble model trajectory and the breaking energy reference indicates enhanced predictive fidelity achieved through GWO-based weighting, which adaptively combines the strengths of the base models to mitigate scenario-specific biases and improve generalization. Discrepancies among the individual best models underscore the impact of training data composition on prediction accuracy, with the ensemble approach demonstrating robustness in capturing the fracture behavior of PLA materials. This visualization thus highlights the efficacy of the proposed ensemble methodology in advancing reliable fracture energy prognostics for additive manufacturing applications.

5.2.3. Benchmark ML Comparison

To contextualize the performance of the proposed ensemble framework, a comprehensive benchmark against widely used machine learning regression models was conducted. The selected baselines include Linear Regression, Ridge, Lasso, Support Vector Regression with RBF kernel (SVR_RBF), k-Nearest Neighbors (KNN), Random Forest (RF), Gradient Boosting (GB), and a Multi-Layer Perceptron (MLP).

These models represent a spectrum of learning capacities, ranging from linear parametric methods to non-linear kernel-based, instance-based, tree-based, and neural network approaches. All baseline models were evaluated under the same cross-batch experimental protocol and using identical performance metrics to ensure a fair and consistent comparison. The comparative results of the models are presented in

Figure 8, and the comparative metric values of the models are presented in

Table 10.

Although several well-established machine learning models demonstrate competitive accuracy under specific cross-batch configurations, their performance remains highly dependent on the training–testing setup. In the best-performing baseline scenario (Set9), advanced nonlinear models such as Gradient Boosting, Random Forest, and KNN achieve MAPE values in the range of 6.55–6.70%. A summary analysis of the results for the proposed prediction model and benchmark models is provided in

Table 11.

However, these models exhibit notable sensitivity to batch composition, with no single baseline consistently outperforming others across all configurations. In contrast, the proposed GWO-based ensemble framework achieves a substantially lower MAPE of 5.08%, corresponding to an approximately 37.3% improvement over the best individual empirical model and a clear performance gain relative to all evaluated standalone machine learning baselines.

6. Conclusions

This study systematically investigated the effects of additive manufacturing parameters on the impact resistance of Izod specimens manufactured via the FDM method. The research utilized the Taguchi orthogonal array methodology for experimental design and initial analysis. Furthermore, the study aimed to contribute to the existing literature by presenting an alternative optimization approach using machine learning algorithms via the ensemble methodology to determine the most optimal impact toughness prediction and printing parameters in FDM manufacturing processes.

The Taguchi analysis revealed a clear hierarchy of influence among the parameters studied. Print orientation emerged as the most critical factor (Rank 1), demonstrating the strongest influence on fracture energy and result consistency, followed by layer thickness and bed temperature (Rank 2–3). The optimal parameter configuration identified for maximizing fracture energy and result consistency was achieved through an octet structure as the print orientation, 0–90° raster angle orientation, 0.1 mm layer thickness, 205 °C nozzle temperature, and 90 °C bed temperature.

To achieve a more robust prediction, the study employed multiple model formulations and utilized 5 different robust Nature-Inspired machine learning algorithms. The cross-batch validation results demonstrated that none of the empirical equations or optimization techniques showed clear superiority among the various dataset types, capturing curvature effects and higher-order parameter interactions necessary for accurate prediction. These results substantiate the hypothesis that the fracture mechanics of FDM-fabricated components are dominated by strongly nonlinear, multivariate, and interaction-driven mechanisms.

Recognizing that individual empirical models suffer from scenario-specific biases, a comprehensive hybrid ensemble learning framework was proposed, utilizing GWO for metaheuristic-based weighted aggregation of base learners. This approach led to significant advancements in predictive accuracy: The best ensemble model achieved a MAPE of 5.0847%, representing a substantial improvement (approximately 37.3% relative reduction) over the best individual base learner (8.1%). The ensemble consistently outperformed the strongest single model in 55% of all iterations, underscoring its robustness and efficacy in mitigating individual model biases and enhancing generalization.

This hybrid methodology—integrating rigorous cross-scenario empirical modeling with metaheuristic ensemble optimization—represents a scientifically sound and innovative approach to addressing nonlinearity and variability in materials property prediction. It offers a transferable paradigm for similar regression tasks in materials science and engineering, advancing reliable fracture energy prognostics for additive manufacturing applications. Nevertheless, the present work contributes a reproducible optimization workflow and highlights the critical and often underestimated role of secondary printing parameters in determining the impact performance of FDM-printed PLA components.

The findings of this study demonstrate the effective application of nature-inspired optimization methods to FDM processing of PLA, enabling accurate estimation and improvement of fracture energy. These results allow industrial practitioners and researchers to replace traditional trial-and-error approaches with more efficient, data-driven parameter selection, thereby reducing experimental time and raw material waste. The shared optimized methodologies and data provide a practical foundation for developing advanced PLA-based materials with enhanced fracture performance.