1. Introduction

Modern collaborative software development relies heavily on distributed version control systems such as Git, which record both source code changes and corresponding textual descriptions known as commit messages. These messages capture what was modified and, ideally, the rationale behind the change. High-quality commit messages facilitate code review, traceability, and maintenance, while poor or missing documentation hinders debugging, regression analysis, and onboarding.

Despite their importance, commit messages in practice are often incomplete or inconsistent. Under time pressure or without enforced standards, developers frequently produce vague descriptions (e.g., “fix bug”, “update code”), reducing the value of the commit history for future contributors. Prior work has shown that unclear commit documentation introduces technical debt at the communication level and increases maintenance costs [1,2,3].

Recent progress in Large Language Models (LLMs) has created a realistic opportunity to automate commit message authoring. Transformer-based models such as ChatGPT, DeepSeek, and Qwen demonstrate strong capability in summarizing source code diffs, reasoning about intent, and producing concise, human-like text [4,5,6]. However, several open questions remain:

How do different LLM paradigms such as zero-shot prompting, retrieval-augmented generation (RAG), and fine-tuned models compare in their ability to generate accurate and informative commit messages?

To what extent do automatic evaluation metrics (BLEU, ROUGE-L, METEOR, and Adequacy) correlate with human judgments of clarity and usefulness?

How well do such models generalize across programming languages and commit types within large, heterogeneous datasets?

To answer these questions, we present a comprehensive empirical comparison of representative LLMs using the CommitBench dataset, which contains over one million real commits across six major programming languages [7,8]. We evaluate each paradigm under controlled experimental settings and validate quantitative results through a human study of one hundred sampled commits rated on a 1–5 scale for Adequacy and Fluency.

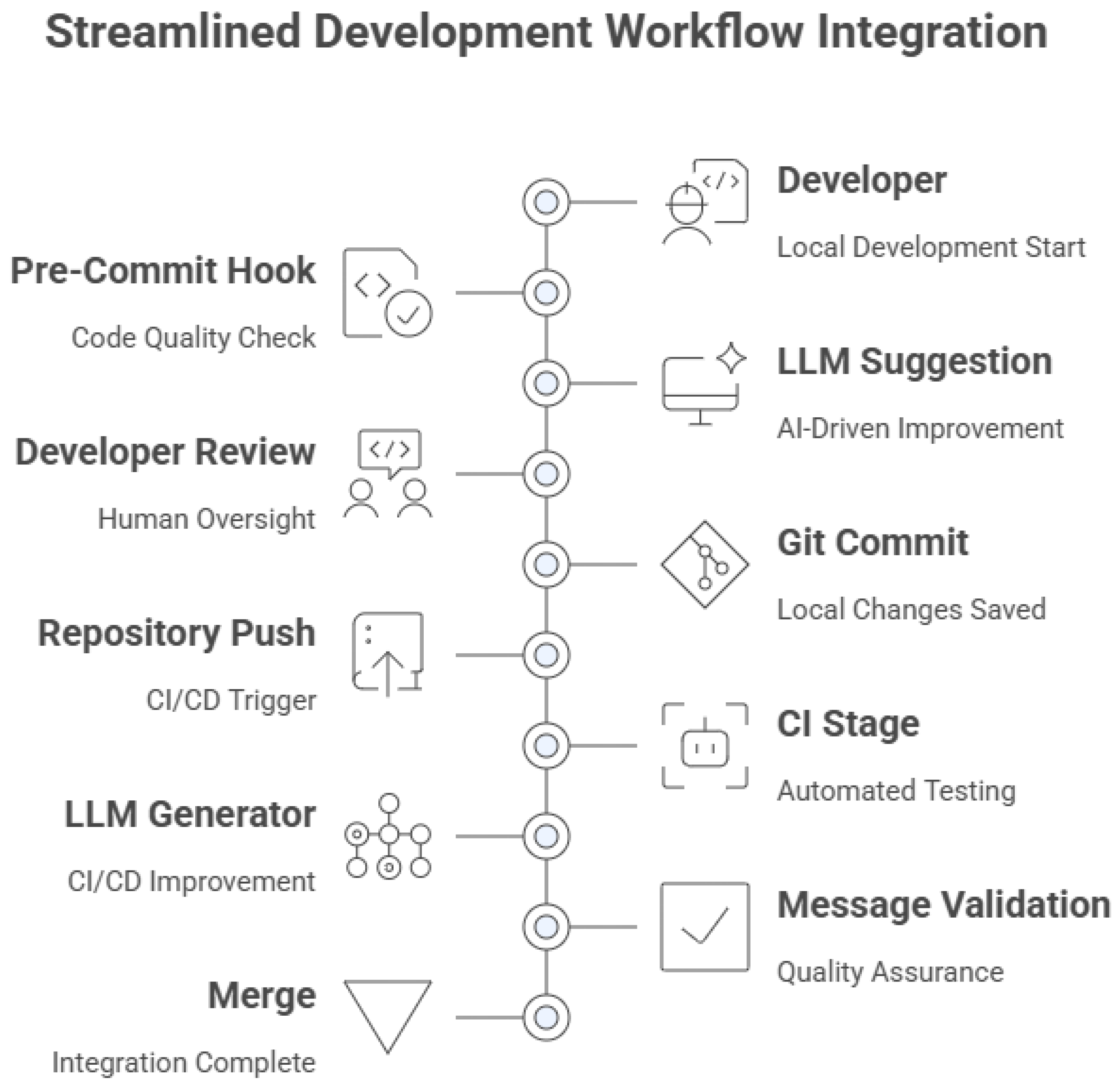

While the primary focus of this work is empirical evaluation, we also discuss how the resulting models could be conceptually integrated into modern development workflows, emphasizing a human-in-the-loop design. This conceptual illustration is not presented as an implementation but as a forward-looking extension of the findings.

1.1. Contributions

This paper presents a systematic comparative study of LLM-based commit message generation with and without retrieval, grounded in reproducible experimentation. Our main contributions are:

Evaluation of three dominant modeling paradigms. Rather than benchmarking all state-of-the-art models, this work compares three dominant paradigms for commit message generation under controlled and comparable experimental settings: (i) ChatGPT (zero-shot prompting); (ii) DeepSeek-RAG (retrieval-augmented generation); and (iii) Qwen-Commit (supervised fine-tuning). These configurations are used as operational representatives to study paradigm-level effects rather than model-scale competitiveness.

Creation of a balanced evaluation subset from CommitBench. We use the CommitBench corpus (more than one million commits) to create a balanced subset covering six major programming languages with standardized diff–message pairs, which makes the comparison fair.

Analysis of human and automatic evaluation alignment. We analyze the correlation between automatic metrics and human judgments by pointing out where metrics agree or diverge, and discuss implications for future evaluation practices.

Development of a CI/CD pipeline integration sketch. We outline a practical, human-in-the-loop workflow for embedding the best performing configuration(s) into pre-commit hooks and CI pipelines to assist with documentation and code review.

Positioning and Novelty

Unlike prior studies that typically examine a single model family, training strategy, or evaluation setting, this work provides the first controlled, large-scale comparison of three distinct LLM paradigms (zero-shot prompting, retrieval-augmented generation, and supervised fine-tuning) on the same multilingual CommitBench benchmark under identical preprocessing, prompting, and evaluation conditions. Consequently, the observed paradigm-level performance differences cannot be inferred from existing CommitBench-based studies that analyze these approaches in isolation. In addition, this work complements automatic metrics with a systematic human–metric alignment analysis across adequacy and fluency dimensions, providing insights into evaluation reliability that go beyond prior benchmarking efforts.

1.2. Research Questions

To guide the study, we pose two core research questions:

- RQ1: How do current LLMs, namely ChatGPT, DeepSeek, and the fine-tuned Qwen model, compare in their ability to generate accurate and natural commit messages, both with and without retrieval augmentation?

- RQ2: To what extent do automatic evaluation metrics (BLEU, ROUGE-L, METEOR, Adequacy) correlate with human judgments of Adequacy (semantic accuracy) and Fluency (linguistic quality)?

These questions reflect the key developer-oriented concerns of selecting an appropriate model and trusting metric-based evaluations as reliable quality indicators.

1.3. Paper Structure

The remainder of this paper is organized as follows. Section 2 reviews prior work on automated commit message generation, LLM-based code summarization, and evaluation practices. Section 3 introduces the CommitBench dataset and outlines the preprocessing pipeline used to construct a balanced, high-quality benchmark. Section 4 describes the overall experimental methodology, including model configurations, fine-tuning of the Qwen model, retrieval-augmented generation, and evaluation metrics. Section 5 details the experimental setup and human evaluation design. Section 6 reports and analyzes the comparative results, whereas Section 7 interprets the findings and discusses practical implications for CI/CD pipeline integration. Finally, Section 8 summarizes current limitations and directions for future research, followed by the conclusion in Section 9.

2. Related Work

2.1. Role of Commit Messages in Software Engineering

Commit messages provide crucial context for code evolution; they not only describe what has changed but also explain why [1,2]. Good-quality messages decrease the cognitive load of code review, speed up onboarding, and facilitate debugging and auditing. Tian et al. [3] reported that developers appreciate messages specifying both the technical change and its rationale, but many commits lack one or both of these components. Poor documentation at this level leads to the growth of technical debt in communication and knowledge transfer.

2.2. Traditional Heuristic and Template-Based Methods

Early automation efforts relied on heuristic rules or hand-crafted templates [9]. These approaches usually inserted filenames or identifiers into fixed sentence patterns. Although useful for repetitive updates, they failed to capture higher-level intent, describing what changed but not why [1]. Their rigidity limited generalization across projects and programming styles.

2.3. Neural and Sequence-to-Sequence Approaches

The task was later reframed as code-to-text translation, mapping diffs (source) to commit messages (target). Sequence-to-sequence models using RNNs, attention, and subsequently transformer encoder–decoders improved fluency and contextual coherence [10,11,12]. These systems learned statistical correspondences between code edits and summary phrases but still struggled with long diffs, ambiguous intent, and overly generic wording.

2.4. Broader Intelligent Generative Models Across Domains

Beyond software engineering, intelligent deep learning methods such as adaptive variational autoencoding generative adversarial networks and multimodal fusion frameworks have been successfully applied in a variety of complex, domain-specific settings, including industrial monitoring. For instance, adaptive VAE-GAN architectures have been shown to effectively enhance fault diagnosis performance in industrial systems through data augmentation and adaptive feature learning [13]. Similarly, multimodal fusion frameworks that combine attention-based autoencoders with generative adversarial networks have demonstrated strong capabilities in detecting anomalies from heterogeneous industrial sensor data [14]. These approaches highlight the maturity of adaptive and data-driven generative modeling across heterogeneous inputs and problem domains. While such architectures demonstrate strong generalization within their target applications, the present study focuses specifically on language-based generation from code diffs, where large language models offer a more direct, scalable, and reproducible solution aligned with software engineering workflows.

2.5. Transformer Models and Large Language Models

Transformer-based LLMs brought a step change in performance [6]. With large-scale pretraining, they can reason over code context and generate concise, human-like summaries of software changes [4,15,16]. Recent studies extend their use to inferring developer intent, linking commits to bug reports or feature requests, and producing audit-ready explanations [6,16]. Prompt engineering and retrieval-augmented generation (RAG) further enable context-adaptive outputs without full retraining [5,17].

2.6. Datasets and Benchmarks

The introduction of large, multilingual datasets such as CommitBench has improved reproducibility and comparability across models [7,8,18]. Earlier work often relied on private or single-project corpora, limiting external validity. CommitBench aggregates over one million real commits from diverse repositories and languages, offering a robust basis for evaluating both general-purpose and fine-tuned models under realistic noise conditions.

2.7. Evaluation Practices and Human Factors

Automatic metrics (BLEU, ROUGE-L, and METEOR) remain the dominant evaluation tools. In this context, they quantify lexical overlap between generated and reference messages. However, multiple studies note that these metrics correlate imperfectly with developer preferences, penalizing legitimate paraphrases or rewarding surface similarity over semantic adequacy [2,6]. Consequently, recent research complements automatic scores with human assessments focused on meaning accuracy and linguistic quality [15,18].

While LLMs have demonstrated remarkable capability for code summarization, prior work seldom provides a unified and cross-model comparison grounded in both automatic and human evaluation. This study directly addresses that gap through a reproducible, dual-evaluation framework encompassing ChatGPT, DeepSeek, and a fine-tuned Qwen model.

3. Dataset and Preprocessing

3.1. CommitBench Overview

We base our experiments on CommitBench, a large-scale benchmark for commit message generation introduced by Schall et al. [7] and later extended by Kosyanenko and Bolbakov [8]. CommitBench aggregates more than one million real commits collected from thousands of open-source repositories across six major programming languages: Python, Java, JavaScript, Go, PHP, and Ruby. Each instance contains a git-diff, the corresponding human-written commit message, and metadata such as author, timestamp, and repository name.

To our knowledge, this is the first systematic preprocessing of CommitBench that balances commits across six programming languages while removing bot-generated and trivial messages at scale.

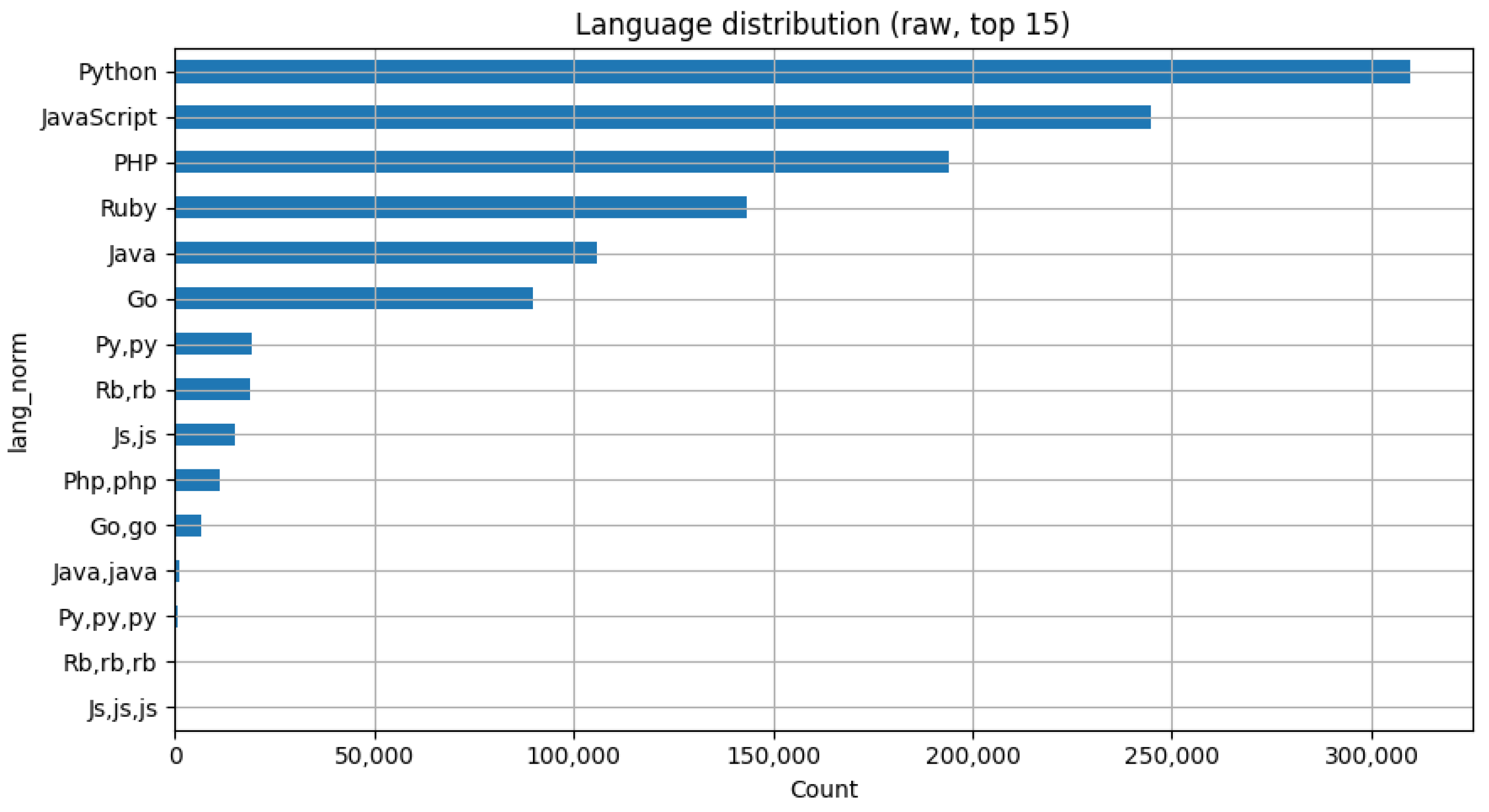

Figure 1 shows the overall language distribution in the raw dataset. The distribution exposes labeling irregularities, including duplicate labels (e.g., py,py), that must be normalized before further processing.

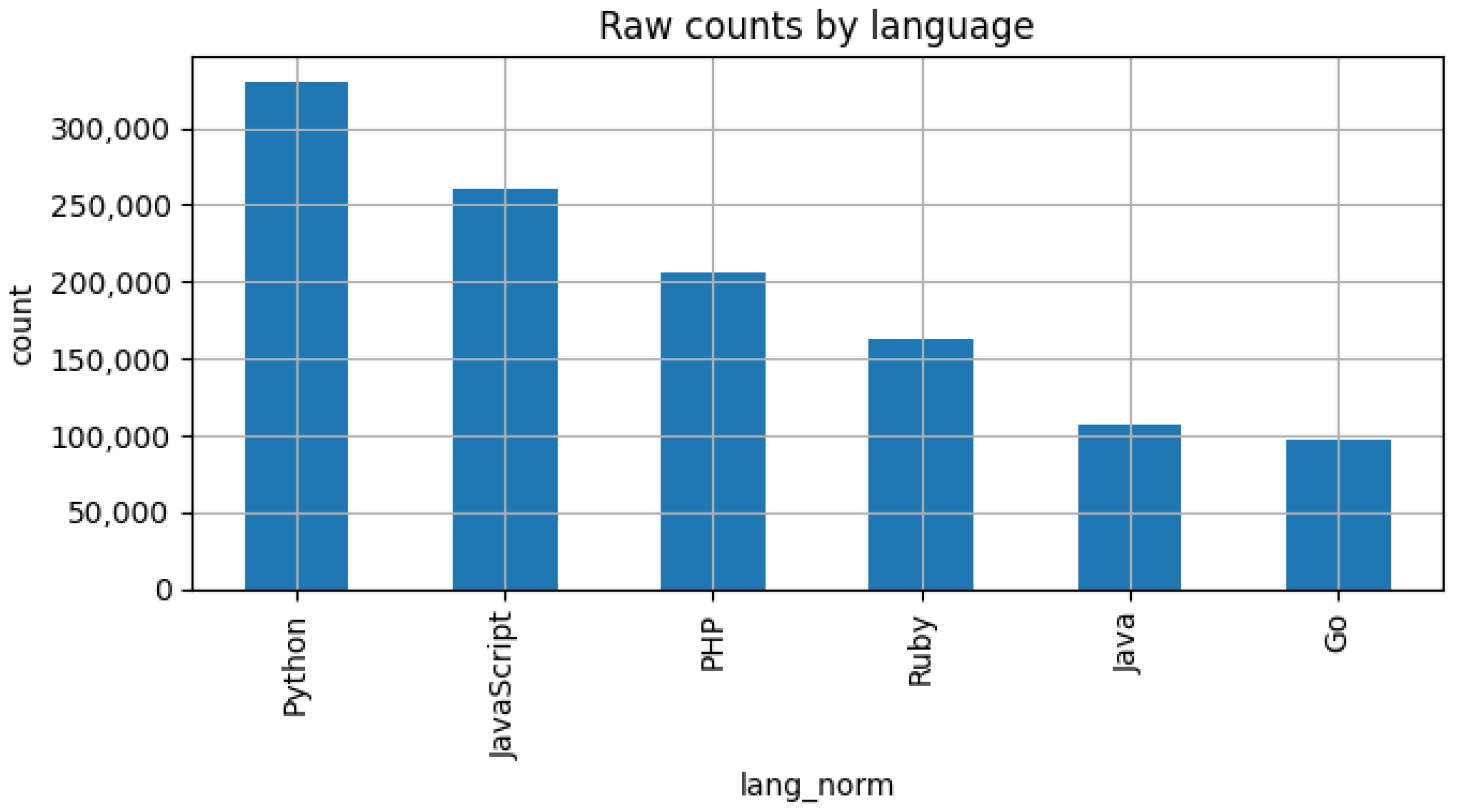

Figure 2 provides the number of commits per repository group. The dataset is inherently imbalanced, as Python and Java projects dominate other programming languages. Therefore, additional preprocessing was required to construct a balanced evaluation subset.

3.2. Noise Filtering and Quality Control

Because the original data originate from heterogeneous public repositories, we applied multiple filtering stages to remove noise and low-information samples. We identified and discarded two main categories of noisy commits:

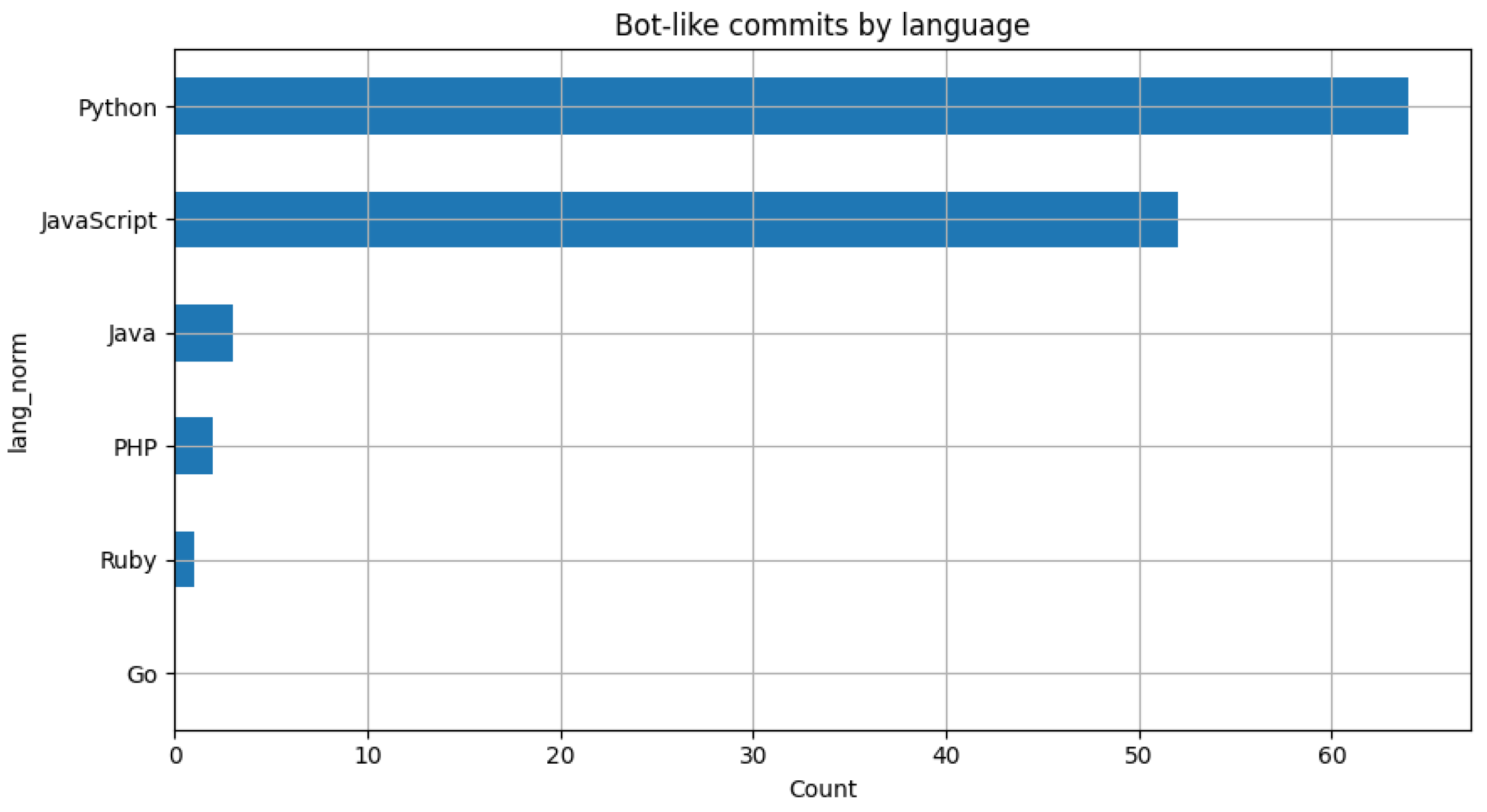

Bot-generated or automated commits, typically produced by continuous integration agents such as “dependabot”, “jenkins”, or “github-actions”;

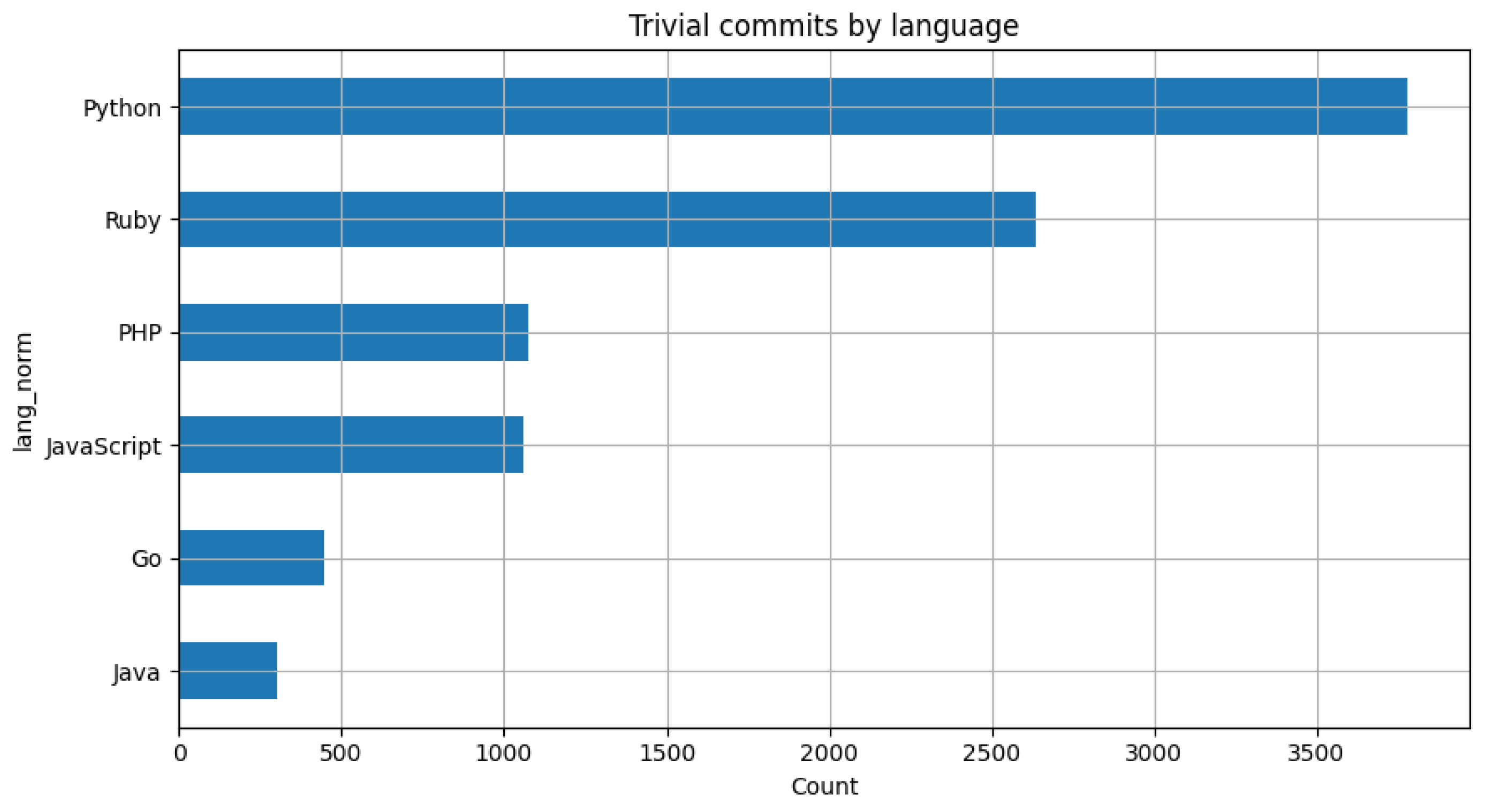

Trivial or redundant messages, such as “update readme”, “merge branch”, or “fix typo”, which provide little semantic value.

Figure 3 and Figure 4 illustrate the distribution of these cases prior to filtering. Regular expressions and heuristic keyword detection were used to flag such commits, following recommendations from prior work [15,18].
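The flagging step described above can be illustrated with a minimal sketch. The two patterns below are hypothetical: they are built only from the example agents and phrases named in this section, and the paper does not list its actual regular expressions or keyword lists.

```python
import re

# Hypothetical patterns assembled from the examples given in the text;
# the exact expressions used in the study are not published here.
BOT_AUTHOR_RE = re.compile(r"(dependabot|jenkins|github-actions|\[bot\])", re.IGNORECASE)
TRIVIAL_MESSAGE_RE = re.compile(
    r"^\s*(update readme|merge branch\b.*|fix typos?)\W*$", re.IGNORECASE
)


def is_bot_commit(author: str) -> bool:
    """Flag commits whose author name looks like an automation agent."""
    return bool(BOT_AUTHOR_RE.search(author))


def is_trivial_message(message: str) -> bool:
    """Flag messages that consist only of a known low-information phrase."""
    return bool(TRIVIAL_MESSAGE_RE.match(message))


def keep_commit(author: str, message: str) -> bool:
    """Keep a commit only if it is neither bot-authored nor trivial."""
    return not (is_bot_commit(author) or is_trivial_message(message))
```

Anchoring the trivial-message pattern to the whole string is a deliberate choice in this sketch: a message such as "Fix typo in retry logic that caused double submit" starts with a trivial phrase but carries real information, so it is kept.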

3.3. Length and Structural Analysis

To understand the natural distribution of commit message lengths, we computed descriptive statistics over tokenized messages. As shown in Figure 5, the median message length ranged between 7 and 15 tokens depending on the programming language, which aligns with prior findings [11,19]. Messages shorter than three tokens were automatically discarded, as they typically corresponded to boilerplate or placeholder text.
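As a rough illustration of the length analysis, the sketch below computes per-language median message lengths and drops messages shorter than three tokens. Whitespace tokenization and the `(language, message)` pair layout are assumptions made for the example; the paper does not specify its tokenizer or record schema.

```python
from collections import defaultdict
from statistics import median

MIN_TOKENS = 3  # threshold stated in the text


def token_count(message: str) -> int:
    # Whitespace tokenization is an assumption, not the study's tokenizer.
    return len(message.split())


def median_length_by_language(commits):
    """Return {language: median token count} for (language, message) pairs."""
    lengths = defaultdict(list)
    for language, message in commits:
        lengths[language].append(token_count(message))
    return {language: median(values) for language, values in lengths.items()}


def drop_short_messages(commits):
    """Discard messages with fewer than MIN_TOKENS tokens."""
    return [(lang, msg) for lang, msg in commits if token_count(msg) >= MIN_TOKENS]
```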

3.4. Balancing and Final Subset Construction

After cleaning, the dataset was stratified by programming language and randomly sampled to create a balanced subset.

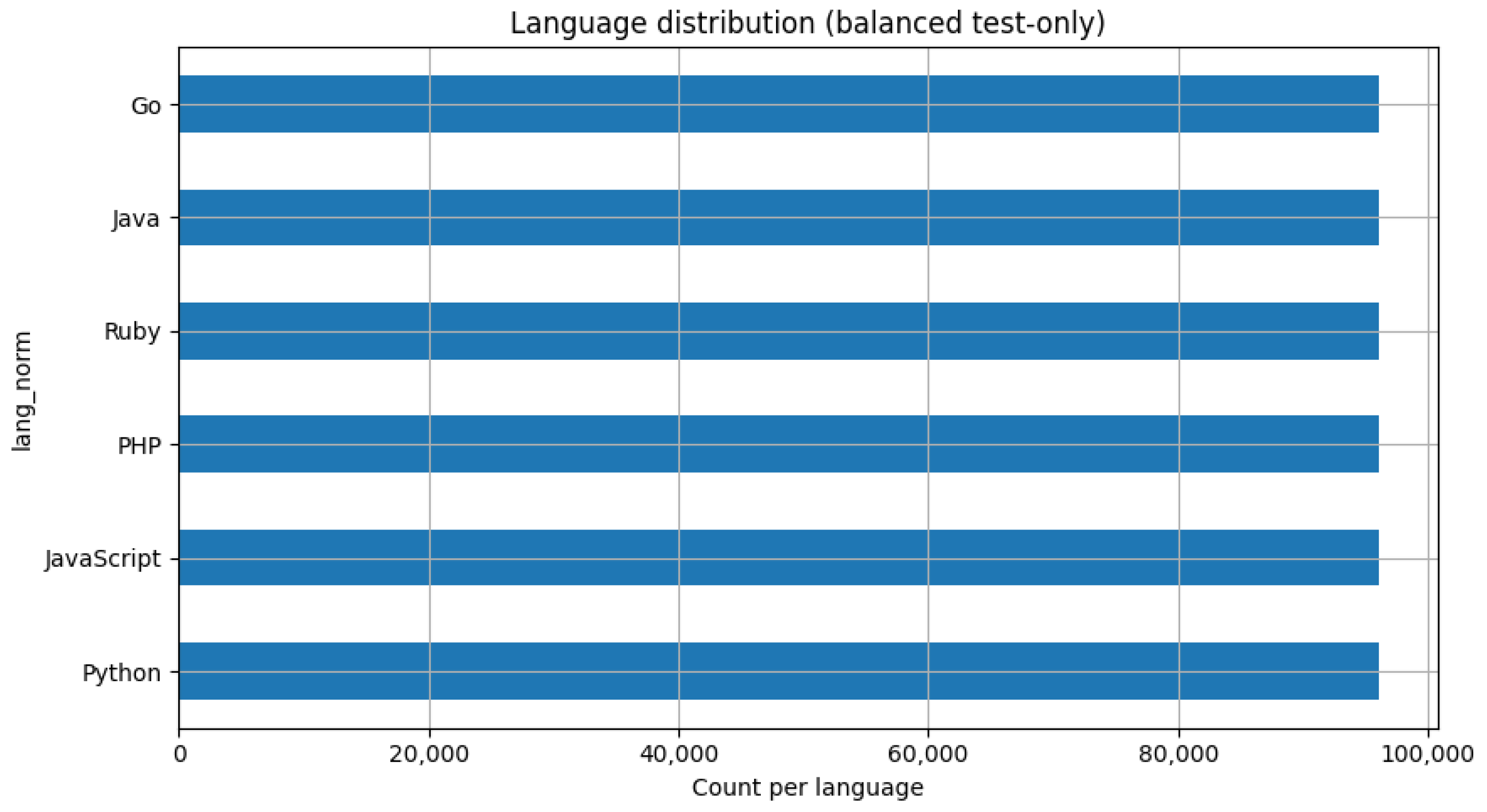

Figure 6 shows the resulting distribution. The subset was then partitioned into training, validation, and test sets in an 80/10/10 ratio, with care taken that commits from the same repository did not appear in more than one split, thus preventing data leakage.
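One way to obtain a repository-disjoint split is to shuffle and partition the repositories rather than the individual commits. The sketch below assumes each commit is a dictionary with a `repo` key (a hypothetical schema). Because the ratios are applied to repositories, the resulting commit counts only approximate 80/10/10; the paper does not describe how it reached its exact split sizes.

```python
import random


def split_by_repository(commits, seed=42, ratios=(0.8, 0.1, 0.1)):
    """Assign whole repositories to train/validation/test splits.

    commits: list of dicts with at least a "repo" key (hypothetical schema).
    ratios[2] is implied: every repository left after the first two goes to test.
    """
    repos = sorted({c["repo"] for c in commits})  # sort for determinism
    random.Random(seed).shuffle(repos)
    n_train = int(len(repos) * ratios[0])
    n_val = int(len(repos) * ratios[1])

    assignment = {}
    for i, repo in enumerate(repos):
        if i < n_train:
            assignment[repo] = "train"
        elif i < n_train + n_val:
            assignment[repo] = "validation"
        else:
            assignment[repo] = "test"

    splits = {"train": [], "validation": [], "test": []}
    for commit in commits:
        splits[assignment[commit["repo"]]].append(commit)
    return splits
```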

For large-scale automatic evaluation, we used the entire balanced corpus (576,342 commits across six languages).

3.5. Preprocessing Pipeline and Summary

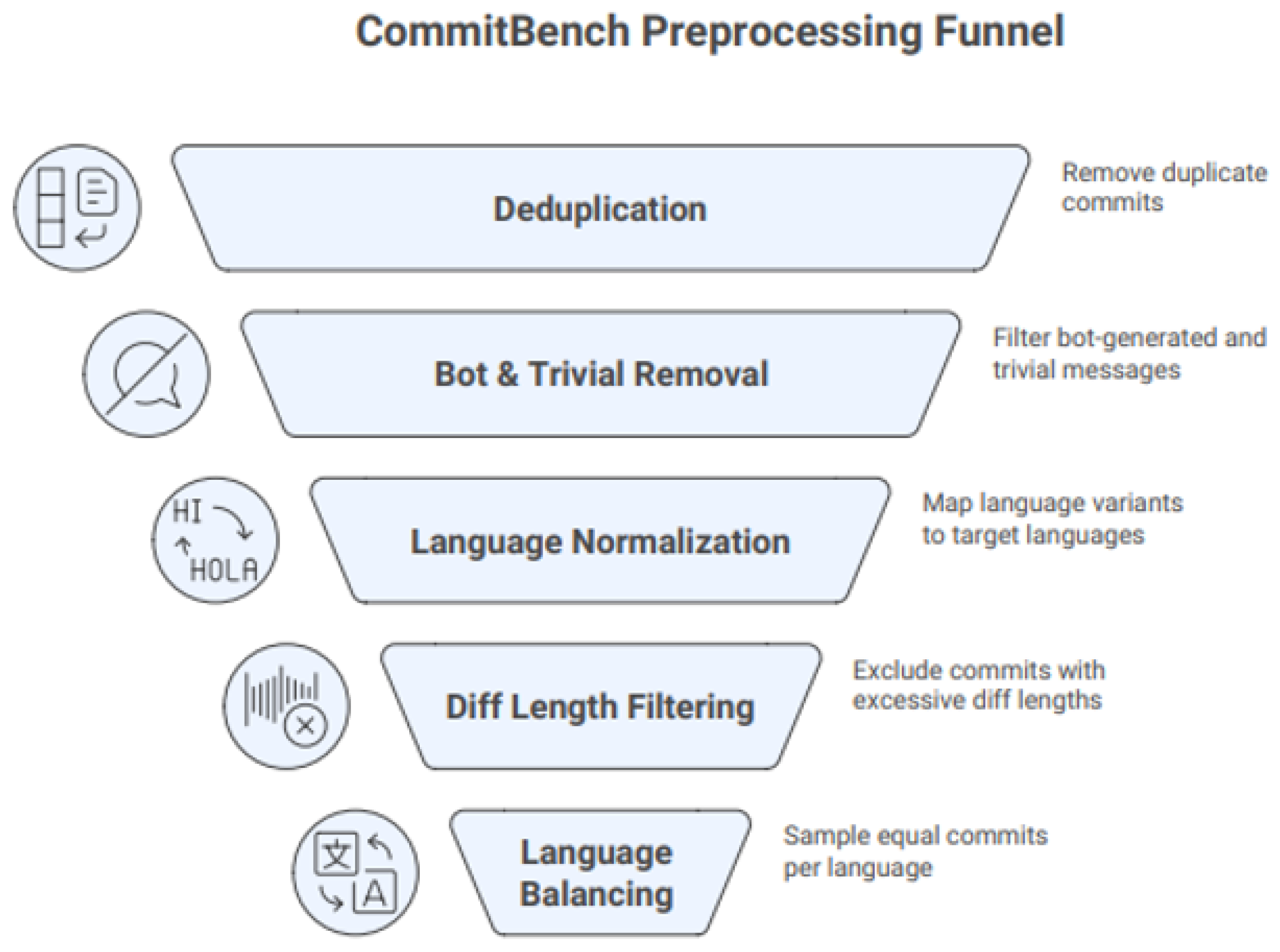

Figure 7 depicts the entire preprocessing funnel applied to the raw CommitBench dataset. This pipeline is divided into five sequential stages: deduplication, removal of bot and trivial messages, language normalization, diff-length filtering, and balancing by programming language. It refines data quality in a series of steps by removing redundancy, removing automated or low information commits, and making sure representations across all six languages are consistent.

Table 1 gives a quantitative summary of the number of commits remaining after each filtering stage. The dataset contained approximately 1.16 million commits after language normalization. Duplicate removal, bot detection, trivial message exclusion, and length-based cleaning then reduced it to a corpus of 1.15 million high-quality samples. From this, a balanced evaluation subset of 576,342 commits across six programming languages was extracted for subsequent experiments.

This preprocessing aims to ensure that each commit message is semantically relevant and that the corpus is linguistically diverse and well represented across all six programming languages. The resulting cleaned and stratified subset forms the basis of the comparative LLM experiments described in Section 4. The preprocessed dataset is publicly released on Zenodo [20].

4. Methodology

4.1. Experimental Overview

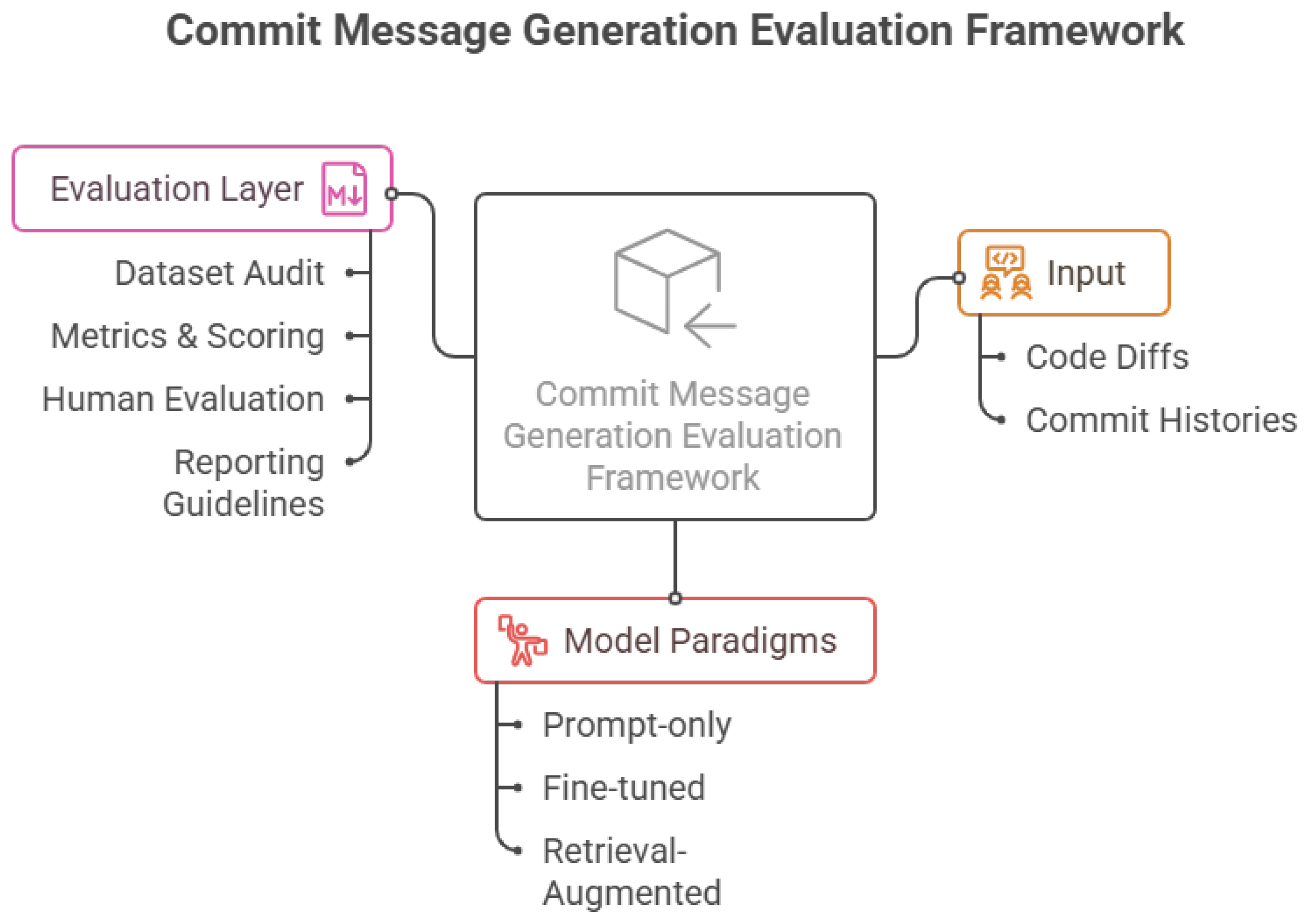

Our methodology is designed to evaluate large language models (LLMs) for commit message generation along three complementary dimensions: (i) model architecture and training paradigm; (ii) retrieval and prompting strategy; and (iii) evaluation criteria combining automatic metrics and human judgments. The workflow is illustrated in Figure 8, which shows the end-to-end pipeline from dataset preprocessing (Section 3) to quantitative and qualitative analysis.

4.2. Model Suite

To capture the diversity of current approaches in automated commit message generation, we evaluated three complementary LLM paradigms [5,6,16], namely zero-shot prompting, retrieval-augmented generation (RAG), and supervised fine-tuning:

General-purpose models (ChatGPT and DeepSeek). Both are proprietary transformer-based LLMs accessed via API. We evaluated them in two configurations: a standard zero-shot setting and a RAG variant, where the prompt was enriched with the top-k semantically similar historical commits retrieved from the CommitBench index (see Section 4.4). This dual setup assesses whether contextual retrieval improves coherence, style consistency, and repository alignment.

Fine-tuned open model (Qwen-Commit). A locally hosted, open-source model based on the Qwen-7B transformer architecture and fine-tuned on CommitBench diffs and reference messages. Supervised fine-tuning was performed using parameter-efficient Low-Rank Adaptation (LoRA) under 4-bit quantization (QLoRA-style) to enable scalable training on large commit corpora. LoRA was applied to the attention projection layers with rank , scaling factor , and dropout of . The tokenizer was initialized from the base model, and the padding token was set to the end-of-sequence token. The model was fine-tuned on the CommitBench training split using a maximum input length of 512 tokens. Only LoRA parameters were updated during training, so that less than 1% of model parameters were trainable. Qwen-Commit was also tested in both standalone and RAG-enhanced modes to examine the combined effect of domain-specific fine-tuning and retrieval grounding. It is designed to emphasize data privacy and on-premise feasibility while keeping domain alignment high.

All models were evaluated under identical input and output conditions: each was given the same preprocessed git-diff, a unified prompt template, and a fixed maximum generation length (128 tokens) to avoid verbosity and API overflow. This standardization ensures that observed differences are due to the model architecture and adaptation strategy rather than to changes in the prompt.

4.3. Prompt Engineering

Prompt formulation affected the clarity and succinctness of the generated text, consistent with the findings of Lopes et al. [15]. Pilot experiments revealed that simple requests such as “Summarize this diff” resulted in verbose and redundant responses lacking an explicit explanation. After several rounds of tuning on the validation subset, the following final instruction template was adopted:

You are a senior software engineer. Write a short (<=12 words) Git commit message that clearly describes what changed and why.

This instruction enforces an imperative tone and brevity aligned with established open-source conventions [3]. The 12-word limit pushes models toward concise, active-voice messages that resemble actual developer commits, while still leaving room for both the technical change and its motivation.
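A minimal sketch of how the instruction could be combined with a diff in the zero-shot setting is shown below. Only the instruction string comes from the paper; the `Diff:` and `Commit message:` labels, the layout, and the word-limit check are assumptions added for illustration.

```python
# Instruction text as given in Section 4.3.
INSTRUCTION = (
    "You are a senior software engineer. Write a short (<=12 words) Git commit "
    "message that clearly describes what changed and why."
)
MAX_WORDS = 12


def build_zero_shot_prompt(diff: str) -> str:
    # The section labels and spacing are assumptions; the paper gives only
    # the instruction itself.
    return f"{INSTRUCTION}\n\nDiff:\n{diff}\n\nCommit message:"


def within_word_limit(message: str, max_words: int = MAX_WORDS) -> bool:
    """Check a generated message against the word limit requested in the prompt."""
    return len(message.split()) <= max_words
```

A check such as `within_word_limit` is useful because the limit is only requested in the prompt and nothing forces a model to respect it.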

4.4. Retrieval-Augmented Generation (RAG)

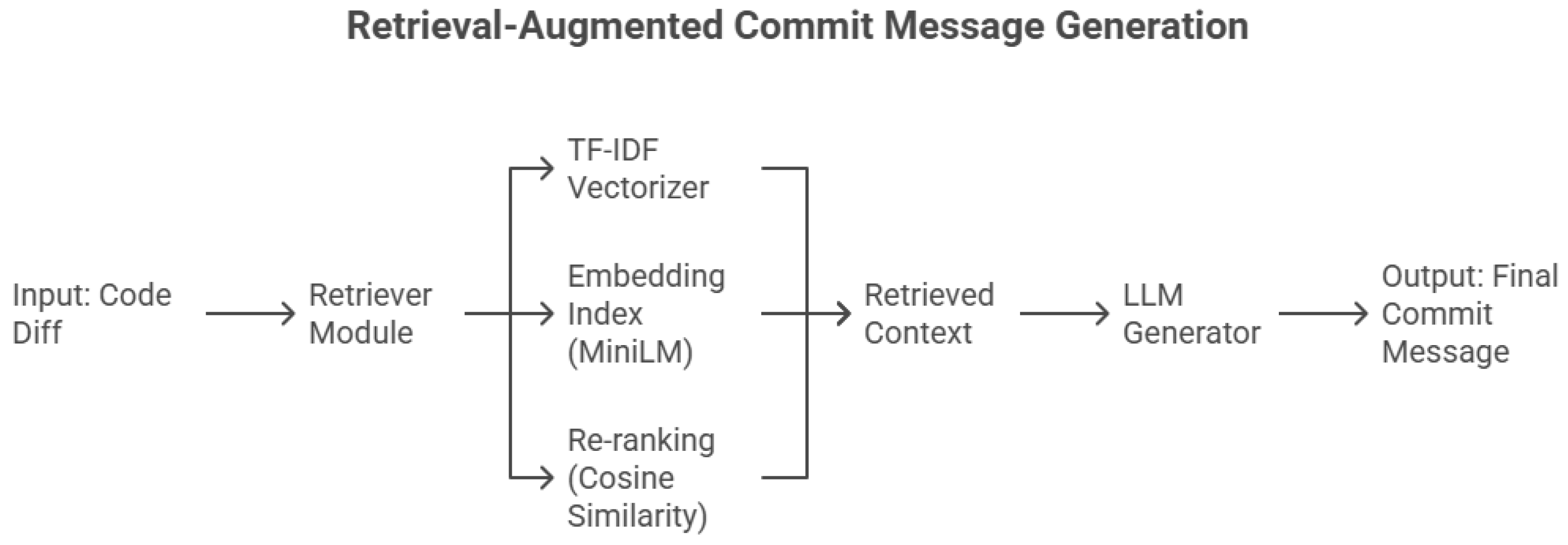

To improve the contextual relevance and repository-specific consistency, we extended the baseline generation pipeline with a retrieval-augmented generation (RAG) mechanism. Instead of relying solely on internal parameters of a model, RAG augments the input prompt with external exemplars of historical (diff, message) pairs most similar to the current code change. This allows general-purpose LLMs to more accurately reflect local naming conventions, stylistic norms, and commit structures without explicit fine-tuning.

Each input diff is first encoded as a dense vector by a sentence transformer model trained on code–text pairs. The resulting embeddings are compared against an indexed “commit memory” built from CommitBench using a FAISS similarity search. The top-k examples retrieved for every new diff, with k set empirically, are inserted before the prompt template described in Section 4.3. This retrieval step provides concrete, domain-aligned context that guides message generation.

Figure 9 shows the detailed architecture of the retrieval-augmented generation (RAG) pipeline. It explains how the retriever module combines TF-IDF vectorization and MiniLM embeddings, uses cosine similarity for re-ranking, retrieves contextual examples, and injects them into the LLM prompt in order to generate commit messages.

4.4.1. Embedding Model and Similarity Computation

We used the sentence-transformers library to convert each commit diff in the preprocessed dataset into a dense vector representation using the all-MiniLM-L6-v2 sentence transformer model. This embedding model was chosen for its strong balance between semantic accuracy and computational efficiency [5,6]. MiniLM captures both lexical and structural similarities, allowing the retrieval mechanism to generalize across code and natural language fragments.

For every new diff $d_q$, the embedding $\mathbf{e}_q$ was compared against all indexed commit vectors $\mathbf{e}_i$ in a FAISS flat index [21]. Cosine similarity was computed as

$$\mathrm{sim}(\mathbf{e}_q, \mathbf{e}_i) = \frac{\mathbf{e}_q \cdot \mathbf{e}_i}{\lVert \mathbf{e}_q \rVert \, \lVert \mathbf{e}_i \rVert}$$

The $k$ most similar examples (for a fixed default $k$) were retrieved and passed to the model as contextual exemplars.
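The retrieval step can be sketched in plain Python as an exhaustive cosine-similarity search, which is the same kind of brute-force comparison a FAISS flat index performs. This is a stand-in for illustration, not the study's FAISS code, and the function names and toy vectors are hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # convention for zero vectors in this sketch
    return dot / (norm_a * norm_b)


def top_k(query, index_vectors, k):
    """Return [(index, score)] for the k vectors most similar to the query."""
    scored = [(cosine_similarity(query, v), i) for i, v in enumerate(index_vectors)]
    # Highest score first; ties broken by the lower index for determinism.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [(i, score) for score, i in scored[:k]]
```

For example, `top_k([1.0, 0.0], [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]], k=2)` ranks vector 0 first and vector 2 second. With 384-dimensional embeddings and hundreds of thousands of commits, a vectorized index is needed in practice.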

4.4.2. Re-Ranking Strategy

Beyond raw similarity scores, a lightweight re-ranking module prioritized examples with higher lexical overlap in function names and identifiers. Specifically, a TF-IDF similarity matrix was computed, and the top-$k$ retrieved samples were rescored by combining embedding and lexical similarities:

$$s_{\mathrm{hybrid}}(q, i) = \lambda \, s_{\mathrm{emb}}(q, i) + (1 - \lambda) \, s_{\mathrm{tfidf}}(q, i)$$

where $s_{\mathrm{emb}}$ is the embedding cosine similarity, $s_{\mathrm{tfidf}}$ is the TF-IDF similarity, and $\lambda$ was empirically tuned on the validation subset to balance semantic and lexical information. The final top-$k$ samples after re-ranking were appended to the prompt in the following format:

Example Commit 1: <diff>\nMessage: <msg>

Example Commit 2: <diff>\nMessage: <msg>

Now write a commit message for the following diff: ⋯

This two-tier retrieval ensures that the retrieved examples are both semantically and syntactically aligned with the query diff.
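The re-ranking and prompt assembly steps might look roughly like the sketch below. The weighted sum with a single weight in [0, 1] is an assumed form of the combination, and the candidate dictionary keys are hypothetical; only the "Example Commit" prompt layout is taken from the text.

```python
def hybrid_score(embedding_sim, tfidf_sim, weight):
    # Assumed form: a convex combination of the two similarity scores.
    return weight * embedding_sim + (1.0 - weight) * tfidf_sim


def rerank(candidates, weight, k):
    """Rescore retrieved candidates and keep the k best.

    candidates: list of dicts with "diff", "message", "embedding_sim",
    and "tfidf_sim" keys (hypothetical schema).
    """
    ranked = sorted(
        candidates,
        key=lambda c: hybrid_score(c["embedding_sim"], c["tfidf_sim"], weight),
        reverse=True,
    )
    return ranked[:k]


def build_rag_prompt(examples, query_diff):
    """Format retrieved examples followed by the query diff."""
    blocks = [
        f"Example Commit {i}: {ex['diff']}\nMessage: {ex['message']}"
        for i, ex in enumerate(examples, start=1)
    ]
    blocks.append(f"Now write a commit message for the following diff: {query_diff}")
    return "\n\n".join(blocks)
```

Re-ranking only the already retrieved candidates keeps the lexical scoring cheap, since TF-IDF similarities are needed for a handful of diffs per query rather than for the whole index.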

4.4.3. TF–IDF vs. Hybrid Retrieval

We evaluated two retrieval schemes to assess the relative contributions of lexical and semantic matching:

TF-IDF retrieval: Purely lexical retrieval based on tokenized diff text. It effectively captures explicit term overlap (e.g., variable names or function identifiers) but struggles when similar intents use different identifiers.

Hybrid retrieval: Integrates TF-IDF and embedding-based similarity using the weighted score above. This approach captures both surface overlap and latent semantic relationships, such as between “refactor login handler” and “rewrite authentication function.”

In practice, TF-IDF retrieval produced more stable results for short diffs (bug fixes or single-file changes), while hybrid retrieval performed better on semantically complex diffs involving refactoring or multi-file updates. The hybrid mode thus acts as a semantic memory of project history.

4.4.4. Hyperparameters and Efficiency

The FAISS index stored 576,000 vectors (one per commit) in 384 dimensions, the native output size of MiniLM, and required approximately 1.1 GB of CPU memory. Average retrieval latency was 0.06 s per query, and full RAG-based generation averaged 2.3 s per diff, remaining practical for CI/CD pipeline automation [22,23]. All retrieval and scoring components were implemented in Python 3.10 using scikit-learn, sentence-transformers, and faiss-cpu. This retrieval setup was integrated into the unified evaluation pipeline described in Section 5.

Table 2 summarizes the full configuration of the retrieval-augmented generation (RAG) pipeline, including the retriever type, embedding model, indexing strategy, and key hyperparameters.

To ensure controlled evaluation and avoid introducing heuristic bias, no query rewriting, conversational memory, or commit-history chaining was employed in the RAG pipeline. Each commit diff was processed independently using a static retrieval index, allowing for the isolation of retrieval effects from adaptive or stateful mechanisms.

4.5. Evaluation Metrics

To address RQ1–RQ2, we combined automatic metrics with human evaluation to capture both lexical correspondence and perceived usefulness of generated commit messages.

4.5.1. Automatic Metrics

We computed four complementary automatic metrics on the test split, each quantifying a different aspect of message quality:

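BLEU-$N$ (Bilingual Evaluation Understudy) measures $n$-gram precision between the generated ($\hat{y}$) and reference ($y$) messages, with a brevity penalty to discourage overly short outputs:

$$\mathrm{BLEU}\text{-}N = \mathrm{BP} \cdot \exp\left( \sum_{n=1}^{N} w_n \log p_n \right), \qquad \mathrm{BP} = \min\left(1, e^{\,1 - r/c}\right)$$

where $p_n$ is the $n$-gram precision, $w_n = 1/N$ are uniform weights, and $c$, $r$ are candidate and reference lengths [24].

ROUGE-L (Recall-Oriented Understudy for Gisting Evaluation) computes the longest common subsequence (LCS) between $\hat{y}$ and $y$, forming an F-measure of sequence overlap:

$$\mathrm{ROUGE\text{-}L} = \frac{(1 + \beta^2)\, R_{\mathrm{lcs}}\, P_{\mathrm{lcs}}}{R_{\mathrm{lcs}} + \beta^2 P_{\mathrm{lcs}}}, \qquad R_{\mathrm{lcs}} = \frac{\mathrm{LCS}(\hat{y}, y)}{m}, \quad P_{\mathrm{lcs}} = \frac{\mathrm{LCS}(\hat{y}, y)}{\ell}$$

where $\beta > 1$ weights recall higher than precision, and $m$, $\ell$ are the reference and generated message lengths [25].

METEOR is a recall-oriented metric that incorporates stemming and synonym matching:

$$\mathrm{METEOR} = F_{\mathrm{mean}} \cdot (1 - \mathrm{Pen})$$

where $F_{\mathrm{mean}}$ is the harmonic mean of unigram precision and recall, and $\mathrm{Pen}$ penalizes word-order mismatches [26].

Adequacy measures semantic coverage of informative tokens in the generated message relative to the reference [27]:

$$\mathrm{Adequacy} = \frac{\lvert T_{\hat{y}} \cap T_{y} \rvert}{\lvert T_{y} \rvert}$$

where $T_{\hat{y}}$ and $T_{y}$ denote the sets of non-stopword tokens in $\hat{y}$ and $y$.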

BLEU and ROUGE emphasize surface-level lexical overlap, whereas METEOR and Adequacy capture deeper semantic fidelity and tolerance to paraphrasing. Adequacy, in particular, was designed to align more closely with human perceptions of meaning preservation in commit message generation.
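To make two of these metrics concrete, the sketch below computes ROUGE-L from a longest-common-subsequence table and an Adequacy score as the share of reference content tokens covered by the generated message. The value `beta=1.2`, the small stopword list, whitespace tokenization, and the exact coverage ratio used for Adequacy are all assumptions for illustration; the study itself relied on the `evaluate` library for the standard metrics.

```python
def lcs_length(a, b):
    """Length of the longest common subsequence of two token lists."""
    prev = [0] * (len(b) + 1)
    for x in a:
        curr = [0]
        for j, y in enumerate(b, start=1):
            curr.append(prev[j - 1] + 1 if x == y else max(prev[j], curr[j - 1]))
        prev = curr
    return prev[-1]


def rouge_l(candidate, reference, beta=1.2):
    """LCS-based F-measure; beta > 1 weights recall above precision (assumed value)."""
    cand, ref = candidate.split(), reference.split()
    lcs = lcs_length(cand, ref)
    if lcs == 0:
        return 0.0
    precision = lcs / len(cand)
    recall = lcs / len(ref)
    return (1 + beta**2) * precision * recall / (recall + beta**2 * precision)


# Tiny illustrative stopword list, not the one used in the study.
STOPWORDS = frozenset({"a", "an", "the", "to", "of", "in", "for", "and", "on"})


def adequacy(candidate, reference):
    """Share of non-stopword reference tokens that appear in the candidate."""
    cand = {t for t in candidate.lower().split() if t not in STOPWORDS}
    ref = {t for t in reference.lower().split() if t not in STOPWORDS}
    if not ref:
        return 0.0
    return len(cand & ref) / len(ref)
```

Under these assumptions, the candidate "fix the login bug" covers three of the four content tokens in the reference "fix login timeout bug", so its Adequacy is 0.75 even though the wording differs.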

Metric extensibility. Automatic evaluation metrics for natural language generation are continuously evolving, with new semantic and model-based evaluators (e.g., BERTScore, BLEURT and G-Eval) being proposed and refined on a yearly basis. In this study, we adopt established reference-based metrics (BLEU-4, ROUGE-L, METEOR and Adequacy), which are widely used in the commit message generation literature, to ensure comparability with prior work. The proposed evaluation framework is intentionally designed to be extensible, allowing newer semantic metrics to be incorporated as drop-in components without modifying the dataset preprocessing, experimental protocol, or reporting structure. We therefore consider the integration of emerging evaluation metrics as a natural extension of this work.

4.5.2. Human Evaluation

Because automatic metrics alone do not capture developer perception [6,15,18], we conducted a human study on 100 sampled commits, balanced across six programming languages: Go, Java, PHP, Python, JavaScript, and Ruby. Each commit was rated on the following two measures by three experienced open-source contributors using a 1–5 Likert scale:

Adequacy (Meaning Accuracy): how well the message describes the intent and effect of the code change;

Fluency (Naturalness): grammatical correctness, readability, and alignment with the conventional commit style.

For transparency, participants were also asked whether they consented to being acknowledged by name in the paper.

Inter-rater agreement was computed using Cohen’s $\kappa$, and mean scores were reported per model.

This mixed evaluation protocol addresses RQ2 directly by linking human judgments to automatic metrics.
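For reference, Cohen's kappa for a pair of raters can be computed as in the sketch below. With three raters per commit, one common option is to average the pairwise values, but that aggregation is an assumption here since the paper does not state how the three ratings were combined.

```python
from collections import Counter


def cohens_kappa(ratings_a, ratings_b):
    """Unweighted Cohen's kappa for two raters over the same items."""
    if len(ratings_a) != len(ratings_b) or not ratings_a:
        raise ValueError("rating lists must be non-empty and of equal length")
    n = len(ratings_a)
    # Observed agreement: fraction of items with identical labels.
    observed = sum(a == b for a, b in zip(ratings_a, ratings_b)) / n
    # Expected agreement under independence, from each rater's label frequencies.
    freq_a, freq_b = Counter(ratings_a), Counter(ratings_b)
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1.0 - expected)
```

Unweighted kappa treats a 4-versus-5 disagreement the same as a 1-versus-5 disagreement; a weighted variant may suit ordinal Likert ratings better, which is a design choice outside what the paper reports.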

4.6. Summary

The methodology described above covers the full process for assessing automatically generated commit messages under the three paradigms. By combining standardized preprocessing, prompt design, retrieval augmentation, and evaluation by both automatic metrics and human raters, it ensures methodological consistency and comparability, as outlined in our previous work [28]. Empirical findings are presented in Section 5 and Section 6 in the form of tables and visual summaries, showing the relative merits of the zero-shot, retrieval, and fine-tuned paradigms. This design gives balanced weight to quantitative measures and qualitative developer assessment, which makes the models easier to compare.

5. Experimental Setup and Implementation

5.1. Environment and Hardware Configuration

Experiments were performed in a hybrid setup combining a local workstation for preprocessing and evaluation with a GPU server for model inference and fine-tuning.

The local environment used for dataset cleaning, retrieval indexing, and metric computation featured:

Model inference and fine-tuning were conducted on an NVIDIA A100 GPU node (40 GB VRAM) using the transformers and accelerate libraries. All experiments were implemented in Python 3.10 within a managed conda environment. The main software dependencies included:

transformers = 4.44.2 (model loading and inference);

datasets = 2.20.0 (data handling and splits);

sentence-transformers = 2.6.1 (embedding generation);

faiss-cpu = 1.8.0 (vector similarity search);

scikit-learn = 1.5.2 (preprocessing and re-ranking);

evaluate = 0.4.3 (BLEU, ROUGE, and METEOR computation).

All scripts and evaluation notebooks were version controlled in a private GitHub repository, and environment configurations were stored as environment.yml snapshots for full reproducibility.

5.2. Dataset Splits and Consistency Control

We divided the balanced CommitBench subset (576,342 commits; see Section 3) into the following splits:

Training set: 461,073 commits (80%);

Validation set: 57,634 commits (10%);

Test set: 57,635 commits (10%).

Each repository was assigned to exactly one split to avoid data leakage. File-level hashing verified that no diff appeared in multiple partitions. For the fine-tuned Qwen-Commit model, the training subset was used to adapt the decoder head and final embedding projection, while the zero-shot and RAG models were evaluated directly on the test set.

To preserve fairness, all models received the same inputs (diffs truncated to 512 tokens) and identical preprocessing rules for whitespace and diff headers.
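A leakage check of the kind described above can be sketched by fingerprinting each diff and looking for fingerprints that occur in more than one partition. Hashing the raw diff text with SHA-256 is an assumption made for this example; the paper mentions file-level hashing without naming the hash function or the exact unit that was hashed.

```python
import hashlib


def diff_fingerprint(diff: str) -> str:
    """Stable fingerprint of a diff's text (SHA-256 is an assumed choice)."""
    return hashlib.sha256(diff.encode("utf-8")).hexdigest()


def find_leaked_diffs(splits):
    """Return fingerprints that occur in more than one split.

    splits: dict mapping split name -> list of diff strings (hypothetical layout).
    """
    first_seen = {}
    leaked = set()
    for name, diffs in splits.items():
        for diff in diffs:
            fingerprint = diff_fingerprint(diff)
            # setdefault records the first split a fingerprint appears in.
            if first_seen.setdefault(fingerprint, name) != name:
                leaked.add(fingerprint)
    return leaked
```

An empty result means no identical diff text is shared between partitions; near-duplicates that differ by whitespace or context lines would not be caught by exact hashing.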

5.3. Inference Configuration and Decoding Parameters

Each model was executed under deterministic decoding to ensure reproducibility.

Table 3 lists the main hyperparameters applied to all model variants.

All outputs were normalized to lowercase and stripped of punctuation before automatic metric computation. To prevent prompt leakage across models, each inference pipeline reloaded a fresh session per 1000 samples. For DeepSeek-RAG, retrieval was computed once and embedded using FAISS IDs, resulting in a 35% reduction in generation latency compared to on-demand retrieval.
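The output normalization applied before metric computation can be approximated as follows. Removing ASCII punctuation via `string.punctuation` and collapsing repeated whitespace are assumptions; the paper states only that outputs were lowercased and stripped of punctuation.

```python
import string

# Translation table that deletes every ASCII punctuation character.
_PUNCT_TABLE = str.maketrans("", "", string.punctuation)


def normalize(message: str) -> str:
    """Lowercase, drop ASCII punctuation, and collapse whitespace (assumed detail)."""
    return " ".join(message.lower().translate(_PUNCT_TABLE).split())
```

Note that this kind of normalization also merges identifiers such as `UserService.load()` into a single token, which can affect n-gram overlap for messages that mention code symbols.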

5.4. Human Evaluation Protocol

To complement the automatic evaluation, we conducted a human-centered survey with 12 volunteer developers recruited from GitHub and graduate research groups. A total of 100 commits were randomly selected from the test set, balanced across six programming languages and commit types. For each commit, raters were shown:

The original git diff;

The human-written commit message (hidden until rating completion);

Three anonymized model outputs (order randomized);

Two 1–5 scale grading fields for Adequacy and Fluency.

We collected ratings using a customized web-based survey. Each rater rated between 25 and 40 samples, which provided enough overlap to compute inter-rater reliability. Values of Cohen’s $\kappa$ above 0.72 indicated substantial agreement, supporting the reliability of the human judgments.

The correlation between human and automatic metrics was analyzed to address RQ2. In addition, qualitative error inspection was performed for the top and bottom 10% of commits according to human ratings, revealing recurring failure patterns such as misinterpreted diff context and overgeneralization.

5.5. Reproducibility and Open Research Practices

To ensure transparency and replicability, all preprocessing scripts, evaluation templates, and survey materials were deposited in a public repository (to be released upon publication). The repository includes:

The complete preprocessing and data filtering pipeline with versioned thresholds;

Python scripts for embedding generation, FAISS indexing, and re-ranking;

Evaluation notebooks computing BLEU, ROUGE-L, METEOR, and Adequacy metrics;

Anonymized developer survey data with per-sample Adequacy and Fluency scores;

Google Apps Script used to automatically generate balanced Google Forms (12 samples per form, two per language);

A detailed README.md explaining installation, environment setup, and evaluation execution.

All random seeds were fixed (seed = 42) for dataset shuffling, retrieval sampling and model generation. Human evaluation forms followed a standard five-point Likert scale for semantic adequacy and linguistic fluency alike.

All retrieval, indexing, and re-ranking parameters used in the RAG pipeline are explicitly reported in Table 2 to support full reproducibility.

Developers were also asked for consent as to whether they allowed their names to be mentioned in the acknowledgments of the paper, following open research ethics.

This setup makes our comparison among ChatGPT, DeepSeek-RAG, and Qwen-Commit reproducible, transparent, and ethically aligned, and provides a stable foundation for the quantitative and qualitative results in Section 6.

6. Results and Analysis

6.1. Overview

This section presents the experimental results for the three LLM paradigms: ChatGPT, a zero-shot model; DeepSeek-RAG, a retrieval-augmented model; and Qwen-Commit, a fine-tuned open model. We analyze the automatic evaluation metrics and human ratings, followed by per-language and qualitative insights. The results answer the two research questions (RQ1–RQ2) presented in Section 1.

6.2. Automatic Evaluation Results (RQ1)

Table 4 summarizes the quantitative results across BLEU, ROUGE-L, METEOR, and Adequacy. Scores are averaged over the full test set (57,635 commits), with standard deviations computed over three runs.

Table 4 shows that the fine-tuned Qwen-Commit model outperforms the zero-shot and retrieval-augmented models on all metrics. Retrieval-augmented generation (DeepSeek-RAG) showed clear improvements over the zero-shot baseline, with the largest gains on Adequacy (+5.9 pp) and ROUGE-L (+2.6 pp). This supports our hypothesis that contextual retrieval helps adapt general-purpose models to project-specific conventions without retraining.

The performance comparisons in Table 4 are based on mean scores computed over the full test set and highlight comparative trends across modeling paradigms. We do not claim formal statistical significance for the observed differences; confidence intervals or paired hypothesis tests could be applied to quantify uncertainty. Our aim is a controlled, paradigm-level comparison under identical preprocessing and evaluation conditions. Accordingly, any reported improvements should be treated as directional rather than inferential.
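One way such uncertainty could be quantified is a paired bootstrap over per-commit score differences. The sketch below is illustrative only and was not part of the reported analysis; the function name, the number of resamples, and the percentile method are assumptions.

```python
import random


def paired_bootstrap_ci(scores_a, scores_b, n_resamples=2000, alpha=0.05, seed=42):
    """Percentile bootstrap interval for the mean per-sample difference (A - B).

    scores_a and scores_b hold one metric value per commit, aligned by index.
    """
    if len(scores_a) != len(scores_b) or not scores_a:
        raise ValueError("score lists must be non-empty and of equal length")
    rng = random.Random(seed)
    diffs = [a - b for a, b in zip(scores_a, scores_b)]
    n = len(diffs)
    means = []
    for _ in range(n_resamples):
        # Resample commits with replacement, keeping each pair together.
        resample = [diffs[rng.randrange(n)] for _ in range(n)]
        means.append(sum(resample) / n)
    means.sort()
    lower = means[int((alpha / 2) * n_resamples)]
    upper = means[int((1 - alpha / 2) * n_resamples) - 1]
    return lower, upper
```

An interval that excludes zero would suggest that the difference between two models is unlikely to be an artifact of which commits happened to be sampled.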

Concrete Example of Metric Limitations

To substantiate our correlation findings with concrete evidence, we analyze a representative example where human judgment diverges sharply from lexical metrics. This case, selected from our human evaluation sample, illustrates why BLEU and ROUGE-L often misrepresent commit message quality when models produce legitimate paraphrases.

Example: Paraphrased Spelling Check Removal. This commit removes a spelling check from build prerequisites to prevent widespread failures. The reference message explains the rationale conversationally, while the generated version states the action directly in standard commit style:

Metric Scores and Analysis:

Lexical Metrics (Very Low): BLEU-2 = 0.084, ROUGE-L F1 = 0.105;

Semantic Adequacy (Moderate-High): Adequacy score = 0.509;

Human Rating: Adequacy = 4/5 (Good).

BLEU and ROUGE-L scores are close to zero because the two messages share almost no words apart from “spelling” and “check”. However, the content of the two messages is largely equivalent: both describe disabling the spelling check so that builds remain stable. The human judges recognized this equivalence and rated the generated message as “Good” (4/5) for Adequacy.

This example illustrates the central weakness of lexical metrics: they penalize legitimate stylistic variation. The model produces a brief, imperative-style message of the kind usually found in technical commits, whereas the original message was written in an expository style. Both descriptions are correct and convey the same meaning; they differ only in wording and style.
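The effect can be reproduced with a small LCS-based ROUGE-L F1 computation. This is a simplified sketch (whitespace tokens, no stemming), not the evaluation script used in the study, and the two messages are hypothetical stand-ins rather than the commit discussed above.

```python
def lcs_length(a, b):
    """Length of the longest common subsequence of two token lists."""
    prev = [0] * (len(b) + 1)
    for x in a:
        cur = [0]
        for j, y in enumerate(b, 1):
            cur.append(prev[j - 1] + 1 if x == y else max(prev[j], cur[j - 1]))
        prev = cur
    return prev[-1]


def rouge_l_f1(reference: str, candidate: str) -> float:
    """ROUGE-L F1 over whitespace tokens (simplified: no stemming)."""
    ref, cand = reference.split(), candidate.split()
    lcs = lcs_length(ref, cand)
    if lcs == 0:
        return 0.0
    precision, recall = lcs / len(cand), lcs / len(ref)
    return 2 * precision * recall / (precision + recall)


# Hypothetical paraphrase pair describing the same change.
reference = "the spelling check keeps failing everywhere so drop it from the prereqs for now"
generated = "remove spelling check from build prerequisites"
score = rouge_l_f1(reference, generated)
```

Here the longest common subsequence is "spelling check from" (3 tokens), giving precision 3/6, recall 3/14, and an F1 of 0.3, even though both messages describe the same action.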

In summary, RQ1 is answered positively: the LLM paradigms differ substantially in the quality of the commit messages they generate. Model specialization, through either fine-tuning or RAG, brings consistent gains over zero-shot inference.

6.3. Correlation Between Automatic Metrics and Human Ratings

Our further analysis of the human evaluation shows that developer judgments align much more strongly with the Adequacy metric than with similarity-based metrics like BLEU, ROUGE-L, or METEOR. Though BLEU and ROUGE reward lexical overlap, human raters consistently valued messages that captured the meaning and intent of the code change, even when the wording was different from that of the reference. The measure of Adequacy captures semantic coverage rather than surface similarity, and therefore reflects human perception far more accurately. This explains why the models with higher scores on Adequacy were preferred more often in the human study.
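A rank correlation between per-sample metric scores and mean human ratings is one common way to quantify this alignment. The text does not state which coefficient was used, so the Spearman implementation below is an assumption, written in plain Python with average ranks for ties.

```python
def average_ranks(values):
    """1-based ranks, with tied values sharing their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson correlation; raises if either input is constant."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / (var_x * var_y) ** 0.5


def spearman(metric_scores, human_ratings):
    """Spearman rank correlation: Pearson correlation of the ranks."""
    return pearson(average_ranks(metric_scores), average_ranks(human_ratings))
```

Computing `spearman` once per metric (BLEU, ROUGE-L, METEOR, Adequacy) against the same human ratings allows the metrics to be compared directly.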

6.4. Human Evaluation Results (RQ2)

Human evaluation was conducted using the balanced survey forms described in Section 4. Each developer rated 12 samples per form (two from each programming language: Go, Java, PHP, Python, JavaScript, and Ruby), ensuring a uniform distribution across languages.
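The balancing logic behind these forms can be sketched in Python as follows. The study generated its forms with a Google Apps Script, so this is only an illustration of the same constraint (two samples per language, 12 per form); the function name and the input format are hypothetical.

```python
import random
from collections import defaultdict

LANGUAGES = ["Go", "Java", "PHP", "Python", "JavaScript", "Ruby"]


def build_balanced_forms(samples, per_language=2, seed=42):
    """Split (sample_id, language) pairs into forms with equal language counts."""
    rng = random.Random(seed)
    by_language = defaultdict(list)
    for sample_id, language in samples:
        by_language[language].append(sample_id)
    for ids in by_language.values():
        rng.shuffle(ids)
    # The scarcest language limits how many complete forms can be built.
    n_forms = min(len(by_language[lang]) for lang in LANGUAGES) // per_language
    forms = []
    for f in range(n_forms):
        form = []
        for lang in LANGUAGES:
            start = f * per_language
            form.extend(by_language[lang][start:start + per_language])
        rng.shuffle(form)  # avoid presenting samples grouped by language
        forms.append(form)
    return forms
```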

For every generated commit message, participants provided two distinct scores, Adequacy (Meaning Accuracy) and Fluency (Naturalness), on a five-point Likert scale ranging from 1 (Very Poor) to 5 (Excellent). Developers were also asked for consent regarding the mention of their names in the acknowledgment section, in line with ethical research practices.

Table 5 reports the mean human ratings for the three qualitative criteria, clarity, informativeness, and usefulness, aggregated from the Adequacy and Fluency dimensions of 100 manually assessed samples. Each sample was evaluated by three annotators, and final scores were averaged to reduce subjectivity.

Fine-tuning again produced the most human-preferred messages, often described as “concise yet descriptive.” Retrieval augmentation improved informativeness and contextual grounding, particularly for configuration and refactoring commits, but sometimes introduced verbosity. Annotators noted that the hybrid DeepSeek-RAG model tended to capture project-specific phrasing better than zero-shot ChatGPT, though with less stylistic precision than Qwen-Commit.

6.5. Qualitative Error Analysis

To better understand model behavior, we manually inspected 50 low-scoring samples (bottom 10% by Adequacy). Three recurring error categories emerged:

Shallow summaries: models describe the change superficially (e.g., “update tests”) without rationale;

Misinterpreted context: RAG occasionally retrieves semantically unrelated examples, leading to incorrect intent inference;

Overgeneralization: fine-tuned models sometimes omit filenames or key entities to maintain brevity, reducing specificity.

Interestingly, some “errors” were rated positively by human evaluators, suggesting that concise summaries may still be acceptable if semantically aligned, a nuance not captured by lexical metrics.

7. Discussion

7.1. Interpreting the Results

Our results demonstrate that both fine-tuned and RAG models are substantially better than zero-shot prompting for commit message generation. The broader lesson is that message quality depends not only on scaling up model size but also on how well the model is contextually aligned with the repository and its evolution.

Retrieval-assisted generation addresses semantic drift, a problem in which general-purpose models lack grounding in project specifics such as naming conventions and code structure. Although retrieval provides this alignment without retraining, it incurs additional costs and raises privacy concerns.

The close relationship between human ratings and the Adequacy score is particularly notable.

As surface-based measures, BLEU and ROUGE concentrate primarily on word overlap, whereas Adequacy captures semantic coverage. This makes Adequacy a promising metric for benchmark research on commit message generation.

In practice, the following strategy could be adopted: retrieval-augmented generation is preferable when adaptability matters more than precision, whereas fine-tuning is the better choice when a stable repository and sufficient labeled history are available.

7.2. Practical Deployment Considerations

Bringing commit message generation into real development workflows requires attention to several practical factors:

Latency and performance. Retrieval plus generation averages under 2.5 s per commit (as discussed in Section 4.4). This is acceptable for continuous integration, though large repositories may benefit from batching or asynchronous execution.

Privacy and compliance. Fine-tuning on local infrastructure ensures that sensitive code never leaves the organization, an essential feature for teams in regulated industries.

Customization. Teams can scope retrieval indexes to specific repositories or branches, offering contextual adaptation without requiring full retraining.

Toolchain compatibility. Integration with Git hooks, IDE extensions (e.g., VS Code, IntelliJ), and REST APIs makes it easy for developers to adopt the system without altering their workflows.

The three paradigms evaluated in this study exhibit distinct computational cost profiles. Zero-shot prompting introduces minimal engineering overhead but relies entirely on remote inference and external APIs. Retrieval-augmented generation incurs additional inference-time costs due to embedding computation, vector similarity search, and prompt expansion; however, these overheads remain moderate in practice, as evidenced by the sub-second retrieval latency reported in Section 4.4. Fine-tuning requires the highest upfront computational investment for training and infrastructure, but benefits from lower inference latency, predictable costs, and stronger privacy guarantees during deployment. Accordingly, the choice between paradigms reflects a trade-off between upfront training cost, inference-time latency, and operational constraints.

Beyond standalone use, this framework can be embedded into DevOps pipelines as part of continuous documentation practices. For example, the system can be triggered via local Git hooks or during CI/CD stages (e.g., GitHub Actions, GitLab CI). Generated messages can then be reviewed and edited by developers before merging, preserving a human-in-the-loop process.

This approach aligns naturally with DevOps goals of traceability, automation, and reproducibility, enabling lightweight, AI-assisted documentation that respects human oversight.

Figure 10 illustrates how the proposed framework can be integrated into a real-world workflow, including local pre-commit hooks and CI/CD pipeline stages.
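As an illustration of the local hook level, the sketch below shows how a Git `prepare-commit-msg` hook could prepend a suggested message while leaving the final wording to the developer. `generate_message` is a hypothetical placeholder for the model call, not the system described in this paper; only the `git diff --cached` command and the hook's argument convention are standard Git behavior.

```python
import subprocess


def staged_diff() -> str:
    """Return the diff of staged changes (requires a Git repository)."""
    result = subprocess.run(
        ["git", "diff", "--cached"], capture_output=True, text=True, check=True
    )
    return result.stdout


def generate_message(diff: str) -> str:
    """Hypothetical stand-in for the model call: lists the changed files."""
    changed = [line[6:] for line in diff.splitlines() if line.startswith("+++ b/")]
    return "update " + ", ".join(changed) if changed else "update files"


def prepend_suggestion(message_file: str, suggestion: str) -> None:
    """Put the suggestion above Git's template so the developer can edit it."""
    with open(message_file, "r", encoding="utf-8") as handle:
        existing = handle.read()
    with open(message_file, "w", encoding="utf-8") as handle:
        handle.write(suggestion.rstrip("\n") + "\n" + existing)


def main(argv):
    # argv[1] is the message file. Extra arguments mean Git already has a
    # message source (e.g. -m, merge, amend), so the hook leaves it alone.
    if len(argv) != 2:
        return 0
    diff = staged_diff()
    if diff.strip():
        prepend_suggestion(argv[1], generate_message(diff))
    return 0
```

To use it as a hook, the script would be saved as `.git/hooks/prepare-commit-msg`, made executable, and end with a call to `main(sys.argv)`. Because the suggestion is only prepended to the editor buffer, the developer can still rewrite or discard it before committing.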

7.3. Ethical and Technical Considerations

Automating commit messages raises issues beyond the technical correctness of the messages themselves. On the one hand, LLMs can help standardize messages and reduce the effort of writing them. On the other hand, automation indirectly shifts responsibility for message authorship away from the programmer.

For transparency and accountability, we recommend that deployments tag auto-generated messages and record the model version used. Developers should retain the ability to override and edit outputs.
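One lightweight way to implement such tagging is a Git-style trailer appended to the message. The sketch below is an assumption about how this could look; the `Generated-by` key and the helper names are hypothetical and not an established convention.

```python
def tag_generated_message(message, model_name, model_version, reviewed_by=None):
    """Append trailers recording the model (and optionally the human reviewer)."""
    trailers = [f"Generated-by: {model_name} {model_version}"]
    if reviewed_by:
        trailers.append(f"Reviewed-by: {reviewed_by}")
    # Trailers form the last paragraph, separated from the body by a blank line.
    return message.rstrip() + "\n\n" + "\n".join(trailers) + "\n"


def is_auto_generated(message):
    """True if the message carries the (hypothetical) Generated-by trailer."""
    return any(line.startswith("Generated-by:") for line in message.splitlines())
```

Recording the model version in the commit itself keeps the provenance information attached to the history, so later audits do not depend on external logs.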

In practice, mixed-initiative systems, where AI offers suggestions but humans remain in control, are best aligned with responsible AI adoption in software engineering [6, 17]. Continuous feedback loops and editorial control foster both quality and trust in these hybrid workflows.

7.4. Summary of Key Findings

The main outcomes of this study can be summarized as follows:

RQ1: Fine-tuned and retrieval-augmented LLMs clearly outperform zero-shot prompting in commit message generation;

RQ2: The Adequacy metric shows the strongest correlation with human judgment, suggesting that semantic metrics are better suited than lexical ones;

The models exhibit consistent performance across multiple programming languages, suggesting generalizability;

Fine-tuned models offer high precision, while retrieval-augmented models offer flexibility, representing complementary strengths;

The proposed framework is lightweight, fast, and easily integrated into real-world workflows without disrupting developer autonomy.

Together, these findings position LLM-based commit message generation as a viable, human-centered enhancement to modern software engineering practice and help bridge academic research with actionable tools for industry.

8. Limitations and Future Work

Although this study provides useful insights, it has a number of limitations that future work should address.

8.1. Internal Validity

As with all studies involving large language models trained on publicly available code repositories, it is not possible to fully exclude the presence of overlapping samples between the CommitBench dataset and the pretraining corpora of proprietary or open-source models. This potential contamination is a known limitation in empirical studies based on GitHub data. However, this risk applies uniformly across all evaluated models and does not affect the relative comparison between modeling paradigms conducted in this work.

The evaluation uses code diffs alone, without additional information such as issue titles, pull request comments, or CI status. In real-world projects, commit messages often draw on this extra context. One possible direction for future research is multi-modal conditioning on these sources.

8.2. External Validity

Our experimental setup uses the CommitBench dataset [7, 8]. Although this dataset covers six languages, it is unlikely to represent all kinds of repositories. Areas such as embedded systems or infrastructure code are underrepresented. The study could be replicated on proprietary or domain-specific repositories.

While our results demonstrate consistent comparative trends across the six programming languages represented in CommitBench, these findings should not be interpreted as universal robustness across all software repositories or development domains. The evaluated repositories primarily reflect open-source projects with conventional version control practices, and may not capture domain-specific characteristics such as embedded systems, infrastructure as code, or highly specialized industrial codebases. Accordingly, claims of robustness are scoped to the CommitBench setting and the languages evaluated, and further validation on additional repositories and domains is required to establish broader generalizability.

While our preprocessing explicitly balances the evaluation subset across the six programming languages in CommitBench, we did not explicitly enforce stratification by commit category (e.g., bug fixes, refactoring, feature additions) during subset construction. As a result, some commit types may be over- or under-represented within certain languages, which can influence conclusions about cross-task generalization.

8.3. Construct Validity

The human evaluation conducted in this study is intended solely as a qualitative validation of automated metrics and does not support statistical inference or population-level generalization. The evaluation was performed on a sample of 100 commits, each independently reviewed by three developers using five-point Likert scales.

To explicitly control sampling bias, the 100 commits were selected using stratified random sampling across multiple dimensions, including (i) the six programming languages considered in CommitBench (Java, Python, JavaScript, PHP, Ruby, and Go); (ii) major commit categories (bug fixes, refactoring, new features, documentation updates, and configuration changes); and (iii) diff length buckets to ensure coverage of both short edits and long or structurally complex diffs. This design avoids convenience sampling and mitigates the risk of selective reporting.
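A stratified draw of this kind can be sketched as follows. The round-robin allocation, the bucket thresholds, and the dictionary fields are illustrative assumptions; the text does not specify how samples were allocated to strata.

```python
import random
from collections import defaultdict


def length_bucket(diff_tokens):
    """Hypothetical diff-length buckets; thresholds are illustrative only."""
    if diff_tokens < 50:
        return "short"
    if diff_tokens < 200:
        return "medium"
    return "long"


def stratified_sample(commits, strata_key, sample_size, seed=42):
    """Draw commits round-robin across strata so each is covered evenly."""
    rng = random.Random(seed)
    strata = defaultdict(list)
    for commit in commits:
        strata[strata_key(commit)].append(commit)
    keys = sorted(strata)
    for key in keys:
        rng.shuffle(strata[key])
    selected = []
    while len(selected) < sample_size and any(strata[key] for key in keys):
        for key in keys:
            if strata[key] and len(selected) < sample_size:
                selected.append(strata[key].pop())
    return selected


def stratum(commit):
    """Stratum = (language, commit category, diff-length bucket)."""
    return (commit["lang"], commit["category"], length_bucket(commit["tokens"]))
```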

Nevertheless, due to the limited sample size relative to the diversity of CommitBench, the human evaluation results should be interpreted as indicative rather than statistically representative. Larger-scale human studies with expanded samples and additional annotators are left for future work.

8.4. Model Representativeness

This study does not claim to benchmark all state-of-the-art available LLMs at the time of writing. Recent models such as DeepSeek-Coder-V2, Qwen2.5-Coder, Claude-3.5, and Gemini-1.5 were not included, as the primary objective was to isolate paradigm-level differences between zero-shot prompting, retrieval-augmented generation, and fine-tuning. Extending the proposed evaluation framework to these newer code-centric models constitutes an important direction for future work.

8.5. Model and Retrieval Constraints

The performance of RAG relies heavily on retrieval quality. Irrelevant exemplars can lead to semantic drift, especially when lexical overlap is poor. To improve this, future research could explore neural re-ranking [6, 17] or hybrid selectors that balance diversity and precision. Additionally, while smaller open models offer privacy benefits, they may struggle with complex reasoning compared to larger proprietary systems.
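For context, the hybrid re-ranking step listed in Table 2 (dense embeddings combined with TF–IDF) can be sketched as a weighted sum of two cosine similarities. The weight `alpha` and the cutoff `top_k` are hypothetical parameters here, since their values are not recoverable from Table 2, and the toy vectors stand in for real embeddings.

```python
import math


def cosine(u, v):
    """Cosine similarity; returns 0.0 if either vector has zero norm."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0


def hybrid_rerank(query_dense, query_tfidf, candidates, alpha=0.5, top_k=3):
    """Re-rank (commit_id, dense_vec, tfidf_vec) candidates by a mixed score.

    alpha weights the dense similarity; (1 - alpha) weights the TF-IDF one.
    """
    scored = []
    for commit_id, dense_vec, tfidf_vec in candidates:
        score = alpha * cosine(query_dense, dense_vec) + (1 - alpha) * cosine(
            query_tfidf, tfidf_vec
        )
        scored.append((score, commit_id))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [commit_id for _, commit_id in scored[:top_k]]
```

A neural re-ranker or a diversity-aware selector would replace the scoring line while keeping the same candidate-in, top-k-out interface.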

8.6. Opportunities for Future Research

Several promising directions emerge:

Adaptive learning. Systems can incorporate developer edits into learning loops that adjust retrieval weights or model parameters over time.

Explainability. Build systems where generated message tokens can be traced back to a particular diff segment.

Cross-task generalization. Apply this comparative study paradigm to other relevant tasks such as generating a change log, linking issues, and code summarization.

Auditing and accountability. Include support for auditing machine-generated commit messages with added metadata.

Field validation. Perform longitudinal studies to assess effects on developer productivity, documentation quality, and satisfaction in a real-world setting.

Overall, this study supports the development of human-centered automation for software documentation. Combining technical rigor with responsible deployment practices will enable future systems to be transparent, adaptive, and grounded in real developer needs.

9. Conclusions

This paper presents a comparative analysis of large language model approaches for commit message generation, using the CommitBench dataset in a controlled setting. We compared three paradigms: zero-shot prompting (ChatGPT), retrieval-augmented generation (DeepSeek-RAG), and fine-tuning (Qwen-Commit).

Our findings indicate that both fine-tuned and retrieval-augmented models clearly outperform zero-shot prompting in producing brief, accurate, and relevant commit messages (RQ1). Moreover, among all evaluation metrics, Adequacy aligns most closely with human judgment, which emphasizes semantic relevance over surface similarity (RQ2). In addition, this paper proposes a reproducible framework covering data processing, information retrieval, and evaluation, and shows how LLMs can be applied in realistic developer environments with attention to privacy, transparency, and efficiency. In conclusion, commit message generation is no longer a speculative topic; it is now a practical, measurable, and ethically deployable technique in modern software development. The future will likely see AI systems that continuously learn, adapt to context, and empower developers rather than replace them. As Sudesh notes, AI tools should work alongside developers, not instead of them.

The conclusions of this study should be interpreted in light of the limitations discussed in Section 8. Specifically, while fine-tuning and retrieval-augmented generation consistently outperform zero-shot prompting under our controlled experimental setting, these results reflect paradigm-level trends rather than performance guarantees across all repositories, domains, or organizational contexts. Limitations related to dataset coverage, model representativeness, and the qualitative scale of the human evaluation constrain the degree of generalization that can be claimed. Accordingly, practical deployment recommendations should be conditioned on contextual factors such as privacy requirements, computational resources, and latency constraints.

The next phase of AI-assisted development is not about replacing developers; it is about giving them tools that write with them, not for them.

Author Contributions

Conceptualization, M.M.T. and F.M.; methodology, M.M.T., M.A. and F.M.; software, M.M.T.; validation, M.M.T. and W.G.A.-K.; formal analysis, M.M.T.; investigation, M.M.T.; resources, M.M.T.; data curation, M.M.T.; writing—original draft preparation, W.G.A.-K. and M.A.; writing—review and editing, M.M.T., M.A. and W.G.A.-K.; visualization, M.M.T. and M.A.; supervision, W.G.A.-K. All authors have read and agreed to the published version of the manuscript.

Funding

The funding support is provided by the Interdisciplinary Research Center for Intelligent Secure Systems (IRC-ISS) at King Fahd University of Petroleum & Minerals under Project No. INSS2522.

Data Availability Statement

The benchmark dataset (CommitBench) used in this study is publicly available, as described in [1, 4, 7]. Processed splits, prompt templates, and evaluation scripts can be shared upon reasonable request.

Acknowledgments

The authors acknowledge the use of GPT-4o for enhancing the language and readability of this manuscript, and Napkin AI for the generation of select figures.

Conflicts of Interest

The authors declare no conflicts of interest.

References

1. Zhang, Y.; Qiu, Z.; Stol, K.-J.; Zhu, W.; Zhu, J.; Tian, Y.; Liu, H. Automatic commit message generation: A critical review and directions for future work. IEEE Trans. Softw. Eng. 2024, 50, 816–835.

2. Li, J.; Ahmed, I. Commit message matters: Investigating impact and evolution of commit message quality. In Proceedings of the 2023 IEEE/ACM 45th International Conference on Software Engineering (ICSE), Melbourne, Australia, 15–16 May 2023; IEEE: Piscataway, NJ, USA, 2023; pp. 806–817.

3. Tian, Y.; Zhang, Y.; Stol, K.-J.; Jiang, L.; Liu, H. What makes a good commit message? In Proceedings of the 44th International Conference on Software Engineering (ICSE ’22), Pittsburgh, PA, USA, 21–29 May 2022; ACM: New York, NY, USA, 2022; pp. 2389–2401.

4. Xue, P.; Wu, L.; Yu, Z.; Jin, Z.; Yang, Z.; Li, X.; Yang, Z.; Tan, Y. Automated commit message generation with large language models: An empirical study and beyond. IEEE Trans. Softw. Eng. 2024, 50, 3208–3224.

5. Zhang, Q.; Fang, C.; Xie, Y.; Zhang, Y.; Yang, Y.; Sun, W.; Yu, S.; Chen, Z. A survey on large language models for software engineering. arXiv 2023, arXiv:2312.15223.

6. Hou, X.; Zhao, Y.; Liu, Y.; Yang, Z.; Wang, K.; Li, L.; Luo, X.; Lo, D.; Grundy, J.; Wang, H. Large language models for software engineering: A systematic literature review. ACM Trans. Softw. Eng. Methodol. 2024, 33, 220.

7. Schall, M.; Czinczoll, T.; De Melo, G. Commitbench: A benchmark for commit message generation. In Proceedings of the 2024 IEEE International Conference on Software Analysis, Evolution and Reengineering (SANER), Rovaniemi, Finland, 12–15 March 2024; IEEE: Piscataway, NJ, USA, 2024; pp. 728–739.

8. Kosyanenko, I.A.; Bolbakov, R.G. Dataset collection for automatic generation of commit messages. Russ. Technol. J. 2025, 13, 7–17.

9. Jiang, S.; Armaly, A.; McMillan, C. Automatically generating commit messages from diffs using neural machine translation. In Proceedings of the 2017 32nd IEEE/ACM International Conference on Automated Software Engineering (ASE), Urbana, IL, USA, 30 October–3 November 2017; IEEE: Piscataway, NJ, USA, 2017; pp. 135–146.

10. Liu, Z.; Xia, X.; Hassan, A.E.; Lo, D.; Xing, Z.; Wang, X. Neural-machine-translation-based commit message generation: How far are we? In Proceedings of the 33rd ACM/IEEE International Conference on Automated Software Engineering, Montpellier, France, 3–7 September 2018; pp. 373–384.

11. Nie, L.Y.; Gao, C.; Zhong, Z.; Lam, W.; Liu, Y.; Xu, Z. Coregen: Contextualized code representation learning for commit message generation. Neurocomputing 2021, 459, 97–107.

12. He, Y.; Wang, L.; Wang, K.; Zhang, Y.; Zhang, H.; Li, Z. Come: Commit message generation with modification embedding. In Proceedings of the 32nd ACM SIGSOFT International Symposium on Software Testing and Analysis, Seattle, WA, USA, 17–21 July 2023; pp. 792–803.

13. Wang, X.; Jiang, H.; Wu, Z.; Yang, Q. Adaptive variational autoencoding generative adversarial networks for rolling bearing fault diagnosis. Adv. Eng. Inform. 2023, 56, 102027.

14. Qu, X.; Liu, Z.; Wu, C.Q.; Hou, A.; Yin, X.; Chen, Z. MFGAN: Multimodal fusion for industrial anomaly detection using attention-based autoencoder and generative adversarial network. Sensors 2024, 24, 637.

15. Lopes, C.V.; Klotzman, V.I.; Ma, I.; Ahmed, I. Commit messages in the age of large language models. arXiv 2024, arXiv:2401.17622.

16. Li, J.; Faragó, D.; Petrov, C.; Ahmed, I. Only diff is not enough: Generating commit messages leveraging reasoning and action of large language model. Proc. ACM Softw. Eng. 2024, 1, 745–766.

17. Gao, C.; Hu, X.; Gao, S.; Xia, X.; Jin, Z. The current challenges of software engineering in the era of large language models. ACM Trans. Softw. Eng. Methodol. 2025, 34, 127.

18. Huang, Z.; Huang, Y.; Chen, X.; Zhou, X.; Yang, C.; Zheng, Z. An empirical study on learning-based techniques for explicit and implicit commit messages generation. In Proceedings of the 39th IEEE/ACM International Conference on Automated Software Engineering, Sacramento, CA, USA, 27 October–1 November 2024; pp. 544–555.

19. Zhang, L.; Zhao, J.; Wang, C.; Liang, P. Using large language models for commit message generation: A preliminary study. In Proceedings of the 2024 IEEE International Conference on Software Analysis, Evolution and Reengineering (SANER), Rovaniemi, Finland, 12–15 March 2024; IEEE: Piscataway, NJ, USA, 2024; pp. 126–130.

20. BalancedCommitBench Authors. BalancedCommitBench: A Language-Balanced Commit Messages Subset Extracted from CommitBench; Zenodo: Genève, Switzerland, 2026.

21. Bogomolov, E.; Eliseeva, A.; Galimzyanov, T.; Glukhov, E.; Shapkin, A.; Tigina, M.; Golubev, Y.; Kovrigin, A.; van Deursen, A.; Izadi, M.; et al. Long code arena: A set of benchmarks for long-context code models. arXiv 2024, arXiv:2406.11612.

22. Joshi, S. A review of generative AI and DevOps pipelines: CI/CD, agentic automation, MLOps integration, and large language models. Int. J. Innov. Res. Comput. Sci. Technol. 2025, 13, 1–14.

23. Allam, H. Intelligent automation: Leveraging LLMs in DevOps toolchains. Int. J. AI Bigdata Comput. Manag. Stud. 2024, 5, 81–94.

24. Papineni, K.; Roukos, S.; Ward, T.; Zhu, W.-J. BLEU: A method for automatic evaluation of machine translation. In Proceedings of the 40th Annual Meeting of the Association for Computational Linguistics (ACL), Philadelphia, PA, USA, 7–12 July 2002; pp. 311–318.

25. Lin, C.-Y. ROUGE: A Package for Automatic Evaluation of Summaries. In Proceedings of Text Summarization Branches Out: ACL Workshop; Association for Computational Linguistics: Stroudsburg, PA, USA, 2004.

26. Banerjee, S.; Lavie, A. METEOR: An automatic metric for MT evaluation with improved correlation with human judgments. In Proceedings of the ACL Workshop on Intrinsic and Extrinsic Evaluation Measures for Machine Translation and/or Summarization; Association for Computational Linguistics: Stroudsburg, PA, USA, 2005.

27. Xu, S.; Yao, Y.; Xu, F.; Gu, T.; Tong, H.; Lu, J. Commit message generation for source code changes. In Proceedings of the Twenty-Eighth International Joint Conference on Artificial Intelligence (IJCAI-19), Macao, China, 10–16 August 2019.

28. Trigui, M.M.; Al-Khatib, W.G. LLMs for Commit Messages: A Survey and an Agent-Based Evaluation Protocol on CommitBench. Computers 2025, 14, 427.

Figure 1.

Distribution of programming languages in the raw CommitBench dataset.

Figure 2.

Commit counts per language and repository group before filtering.

Figure 3.

Detection and removal of bot-like commit messages across languages.

Figure 4.

Trivial commit messages filtered during preprocessing.

Figure 5.

Distribution of token lengths across commit messages before balancing.

Figure 6.

Balanced subset of CommitBench after filtering and normalization.

Figure 7.

End-to-end preprocessing pipeline used to construct the final CommitBench subset.

Figure 8.

Methodology overview showing the three evaluation paradigms: zero-shot prompting, retrieval-augmented generation (RAG), and fine-tuned inference.

Figure 9.

Retrieval-augmented generation (RAG) pipeline. Each input diff is embedded, matched against an indexed memory of historical commits, and supplemented with the top-k exemplars before message generation.

Figure 10.

Practical integration of the proposed framework into software workflows. The model operates at two levels: (1) as a local pre-commit hook offering real-time suggestions, and (2) as a CI/CD component enhancing or validating messages before merge. This hybrid setup enables automation with built-in human review.

Table 1.

Overview of preprocessing steps and resulting dataset sizes.

| Step | Commits remaining |
|---|---:|
| Raw total (after normalization) | 1,165,213 |
| Remove duplicates | 1,165,213 |
| Remove bot-like commits | 1,165,091 |
| Remove trivial/low-information messages | 1,155,797 |
| Length-based filtering | 1,154,926 |
| Balanced evaluation subset (six languages) | 576,342 |
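
The filtering order in Table 1 can be sketched as a small pipeline. This is a minimal illustration, not the authors' implementation: the bot pattern, the list of trivial messages, and the token bounds (`BOT_PATTERN`, `TRIVIAL_MESSAGES`, `MIN_TOKENS`, `MAX_TOKENS`) are all hypothetical, since the exact rules are not given here.

```python
import re

# Hypothetical heuristics; the exact filtering rules are assumptions.
BOT_PATTERN = re.compile(r"dependabot|renovate|\[bot\]", re.IGNORECASE)
TRIVIAL_MESSAGES = {"fix", "fix bug", "update", "update code", "wip"}
MIN_TOKENS, MAX_TOKENS = 3, 128  # assumed bounds on message length


def preprocess(commits):
    """Apply the Table 1 filters in order and record how many commits remain."""
    counts = {"raw": len(commits)}

    # Step 1: drop exact (diff, message) duplicates, keeping the first copy.
    seen, unique = set(), []
    for c in commits:
        key = (c["diff"], c["message"])
        if key not in seen:
            seen.add(key)
            unique.append(c)
    counts["deduplicated"] = len(unique)

    # Step 2: drop commits whose author looks like an automation account.
    no_bots = [c for c in unique if not BOT_PATTERN.search(c["author"])]
    counts["no_bots"] = len(no_bots)

    # Step 3: drop trivial, low-information messages.
    informative = [
        c for c in no_bots
        if c["message"].strip().lower() not in TRIVIAL_MESSAGES
    ]
    counts["non_trivial"] = len(informative)

    # Step 4: keep messages whose whitespace token count is within bounds.
    kept = [
        c for c in informative
        if MIN_TOKENS <= len(c["message"].split()) <= MAX_TOKENS
    ]
    counts["length_ok"] = len(kept)
    return kept, counts
```

Recording the count after every step is what makes a table like Table 1 reproducible: each row is simply one entry of `counts`.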

Table 2.

Summary of retrieval-augmented generation (RAG) configuration.

| Component | Configuration |
|---|---|
| Retriever type | Hybrid (dense embeddings + TF–IDF) |
| Embedding model | all-MiniLM-L6-v2 (384 dimensions) |
| Vector index | FAISS flat index (CPU) |
| Index size | 576,342 commit diffs |
| Chunking strategy | Full commit diff (no chunk splitting) |
| Similarity metric | Cosine similarity |
| Initial retrieval | Top-10 candidates |
| Re-ranking | Hybrid embedding + TF–IDF () |
| Final context size | Top-k exemplars |
| Query rewriting | None |
| Commit history chaining | None |
| Prompt injection | Inline exemplars before generation prompt |
| Index update strategy | Static (built once from training data) |
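
The two-stage retrieval in Table 2 (dense top-10, then hybrid re-ranking) can be sketched with the standard library alone. Several things here are assumptions made for illustration: the mixing weight `alpha` and the final `k` are placeholders because those values are not legible in the table, the dense vectors are passed in precomputed rather than produced by all-MiniLM-L6-v2, the exhaustive scan stands in for the FAISS flat index, and TF–IDF is fitted only on the query and its candidates.

```python
import math
from collections import Counter


def cosine(u, v):
    """Cosine similarity for sparse vectors stored as {key: weight} dicts."""
    dot = sum(w * v.get(t, 0.0) for t, w in u.items())
    norm_u = math.sqrt(sum(w * w for w in u.values()))
    norm_v = math.sqrt(sum(w * w for w in v.values()))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0


def as_sparse(dense):
    """View a dense list of floats as a {index: value} dict."""
    return dict(enumerate(dense))


def tfidf_vectors(docs):
    """Smoothed TF-IDF vectors over whitespace tokens (illustrative only)."""
    tokenized = [d.lower().split() for d in docs]
    df = Counter(t for toks in tokenized for t in set(toks))
    n = len(docs)
    return [
        {t: tf * (math.log((1 + n) / (1 + df[t])) + 1.0)
         for t, tf in Counter(toks).items()}
        for toks in tokenized
    ]


def hybrid_retrieve(query_diff, query_vec, memory,
                    alpha=0.5, n_candidates=10, k=3):
    """memory holds (diff, message, dense_vec) triples; alpha and k are assumed."""
    # Stage 1: exhaustive dense search, keeping the top candidates.
    q = as_sparse(query_vec)
    dense = [(cosine(q, as_sparse(vec)), i)
             for i, (_, _, vec) in enumerate(memory)]
    candidates = sorted(dense, reverse=True)[:n_candidates]

    # Stage 2: re-rank with a weighted mix of dense and TF-IDF similarity.
    vecs = tfidf_vectors([query_diff] + [memory[i][0] for _, i in candidates])
    scored = [
        (alpha * d + (1 - alpha) * cosine(vecs[0], vecs[j]), i)
        for j, (d, i) in enumerate(candidates, start=1)
    ]
    top = sorted(scored, reverse=True)[:k]
    return [(memory[i][0], memory[i][1]) for _, i in top]
```

The returned `(diff, message)` pairs are the exemplars that would be placed inline before the generation prompt.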

Table 3.

Model inference and decoding parameters.

| Parameter | ChatGPT-4.1-mini | DeepSeek-RAG | Qwen-Commit |
|---|---|---|---|
| Max tokens (output) | 40 | 40 | 40 |
| Temperature | 0.2 | 0.2 | 0.3 |
| Top-p (nucleus) | 0.9 | 0.9 | 0.95 |
| Top-k | 40 | 40 | 50 |
| Stop sequence | “\n” | “\n” | “\n” |
| Context window (tokens) | 4096 | 8192 (with retrieval) | 4096 |
| Batch size | 16 | 8 | 8 |
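
For reproduction, the settings in Table 3 can be kept in one typed structure. The sketch below copies the values from the table; the `DecodingConfig` class and the `truncate_at_stop` helper are hypothetical names, and the helper only mimics client-side what a newline stop sequence does, namely ending the message at the first line break.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DecodingConfig:
    """Decoding parameters for one model (hypothetical container)."""
    max_tokens: int
    temperature: float
    top_p: float
    top_k: int
    stop: str
    context_window: int
    batch_size: int


# Values transcribed from Table 3.
CONFIGS = {
    "ChatGPT-4.1-mini": DecodingConfig(40, 0.2, 0.9, 40, "\n", 4096, 16),
    "DeepSeek-RAG": DecodingConfig(40, 0.2, 0.9, 40, "\n", 8192, 8),
    "Qwen-Commit": DecodingConfig(40, 0.3, 0.95, 50, "\n", 4096, 8),
}


def truncate_at_stop(text, config):
    """Cut raw output at the first stop sequence, leaving a one-line message."""
    return text.split(config.stop, 1)[0].strip()
```

With a newline as the stop sequence and a 40-token cap, every model is constrained to a short, single-line subject rather than a multi-paragraph commit body.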

Table 4.

Automatic evaluation metrics averaged across the test set (higher is better).

| Model | BLEU-2 | ROUGE-L | METEOR | Adequacy |
|---|---|---|---|---|
| ChatGPT (zero-shot) | 0.184 | 0.326 | 0.291 | 0.553 |
| DeepSeek-RAG (hybrid retrieval) | 0.216 | 0.352 | 0.317 | 0.612 |
| Qwen-Commit (fine-tuned) | 0.244 | 0.384 | 0.333 | 0.635 |
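
To make one of the Table 4 columns concrete, the following is a minimal sketch of ROUGE-L as an F1 score over the longest common subsequence (LCS) of tokens. Lowercasing, whitespace tokenization, and equal weighting of precision and recall are assumptions; the exact tokenization and ROUGE variant behind the reported numbers may differ.

```python
def lcs_length(a, b):
    """Length of the longest common subsequence of two token lists."""
    prev = [0] * (len(b) + 1)
    for x in a:
        curr = [0]
        for j, y in enumerate(b, start=1):
            if x == y:
                curr.append(prev[j - 1] + 1)
            else:
                curr.append(max(prev[j], curr[j - 1]))
        prev = curr
    return prev[-1]


def rouge_l_f1(reference, candidate):
    """ROUGE-L F1 between a reference and a generated commit message."""
    ref = reference.lower().split()
    cand = candidate.lower().split()
    lcs = lcs_length(ref, cand)
    if lcs == 0:
        return 0.0
    precision = lcs / len(cand)
    recall = lcs / len(ref)
    return 2 * precision * recall / (precision + recall)
```

For example, "fix null check in parser" against the reference "fix null pointer in parser" shares the subsequence "fix null in parser" (4 of 5 tokens on each side), giving precision and recall of 0.8 each.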

Table 5.

Human evaluation of message quality (Likert scale 1–5).

| Model | Adequacy | Fluency | Overall Mean |
|---|---|---|---|
| ChatGPT (zero-shot) | 3.92 | 3.61 | 3.77 |
| DeepSeek-RAG (hybrid) | 4.24 | 3.97 | 4.11 |
| Qwen-Commit (fine-tuned) | 4.45 | 4.19 | 4.32 |
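
The Overall Mean column in Table 5 appears to be the unweighted average of the Adequacy and Fluency ratings. The short check below, written under that assumption, recomputes the column from the table's values and compares it with the reported figures up to two-decimal rounding.

```python
# (adequacy, fluency, reported overall mean), transcribed from Table 5.
HUMAN_SCORES = {
    "ChatGPT (zero-shot)": (3.92, 3.61, 3.77),
    "DeepSeek-RAG (hybrid)": (4.24, 3.97, 4.11),
    "Qwen-Commit (fine-tuned)": (4.45, 4.19, 4.32),
}


def overall_mean(adequacy, fluency):
    """Unweighted mean of the two rating criteria (assumed definition)."""
    return (adequacy + fluency) / 2


def max_deviation(scores):
    """Largest gap between the recomputed and the reported overall mean."""
    return max(abs(overall_mean(a, f) - reported)
               for a, f, reported in scores.values())
```

A maximum deviation of about 0.005 is what rounding to two decimals would produce, which is consistent with the assumed definition.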

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.