FGPE+: The Mobile FGPE Environment and the Pareto-Optimized Gamified Programming Exercise Selection Model—An Empirical Evaluation

Abstract

1. Introduction

2. Overview of Approaches to Gamification in Educational Apps

- The Points, Badges, and Leaderboard (PBL) model entails allocating points to accomplished tasks or achievements, awarding badges for particular achievements, and keeping leaderboards to display student standings [36]. It uses competition and prizes to inspire students and promote a sense of accomplishment and growth.

- The Quests and Levels model arranges learning activities into quests or missions, and learners move through a series of obstacles or levels [37]. Each level often introduces new concepts or programming tasks, resulting in a logical learning path. Completing quests or levels unlocks additional content or features, giving the learner a sense of advancement and success.

- The Storytelling and Narrative model incorporates storytelling and narrative components into the programming learning process [38]. It develops immersive settings, characters, and plotlines to emotionally engage learners and relate learning content to real-world circumstances. Learners take on roles in the plot and embark on quests or missions, making learning more interesting and meaningful [39].

- The Achievements and unlockables model, as in video games, provides a system of achievements and unlockables [40]. Learners receive rewards when they complete particular programming assignments, grasp concepts, or reach milestones. Unlockables are extra challenges, added content, or special features that become available as learners progress and meet certain goals.

- The Companion or virtual pets model involves the incorporation of companion characters or virtual pets into the programming learning environment [41]. By completing programming assignments or demonstrating expertise, students can care for their virtual pets, earn rewards, and unlock additional capabilities. Throughout the learning process, the companions provide comments, direction, and encouragement [42].

- By integrating collaborative challenges and tournaments [43], the Collaborative Challenge and Tournament model stimulates collaboration and competitiveness among learners. Students form groups, collaborate to solve programming problems, and compete against other groups. It promotes camaraderie and friendly rivalry while encouraging cooperation, communication, and problem-solving abilities [44].

- The Simulations and Virtual Environments model [45] uses simulations or virtual environments to provide realistic programming scenarios. Within the simulated environment, learners participate in hands-on coding activities such as constructing virtual apps or solving virtual programming tasks. Simulations provide a safe environment for experimentation and practice, allowing students to apply principles in a realistic setting [46].

- Gamification models almost always include some form of feedback and progress monitoring [47]. Learners immediately receive feedback on their coding performance that flags problems and suggests improvements. Progress tracking techniques such as progress bars, skill trees, or other visual representations help learners see their progress and provide a sense of success [35].

- Gamified Learning Analytics combines learning analytics [48] with gamification [49]. It collects data from learners’ interactions with gamified programming environments to gather insights into their learning habits, progress, and areas for growth. To improve learning outcomes, these insights enable tailored feedback, adaptive interventions, and instructional decision-making.

- Goal-Structure Theory emphasizes the importance of defining specific objectives and creating a structured learning environment [51]. It underlines the significance of defining distinct, difficult, and attainable goals in programming assignments. Clear goals provide learners a feeling of direction and purpose, which promotes motivation and attention throughout the learning process.

- Learner autonomy, competence, and relatedness are all emphasized in Self-Determination Theory [52]. It holds that learners are motivated when they feel in charge of their learning, recognize their competence in programming tasks, and feel connected to others. Gamification components that encourage autonomy, skill development, and social interaction can boost student motivation and engagement.

- Flow Theory [53] describes a state of “flow” in which learners are completely absorbed in programming tasks. Flow occurs when learners confront tasks that are appropriate for their ability level, receive immediate feedback, and feel a sense of control and concentration. Flow experiences in programming education can be facilitated by gamification features that provide challenge, feedback, and focused attention.

- In programming education, Social Cognitive Theory stresses the importance of observational learning, social interaction, and feedback [54]. Learners may watch and learn from other people’s programming techniques, participate in collaborative coding exercises, and receive constructive comments from peers or instructors. Based on this principle, gamification models stimulate social learning, give chances for knowledge exchange, and promote positive reinforcement [55].

- Cognitive Load Theory [56] is concerned with managing cognitive load during programming activities. It implies that instructional design should aim to reduce extraneous cognitive load so that learners can devote their mental effort to the processing that contributes to learning. Based on this principle, gamification models may include interactive components, step-by-step assistance, and scaffolded learning to minimize unnecessary cognitive load and improve learning efficiency [57].

- Mastery Learning [58] advocates a mastery-based approach to programming instruction. It requires students to master basic programming concepts and skills before moving on to more difficult topics. Gamification methods based on mastery learning give adaptive feedback, tailored learning paths, and opportunities for deliberate practice to assist learners in achieving mastery and establishing a solid programming foundation.

- Personalized and adaptive gamification models adjust the learning experience to the qualities, interests, and needs of the individual learner [59]. They use student data, such as performance history and learning styles, to modify the difficulty level, material sequencing, and feedback in programming exercises dynamically. Personalization and adaptability boost student engagement, improve learning efficiency, and accommodate a wide range of learning profiles [60].

3. Materials and Methods

3.1. Materials

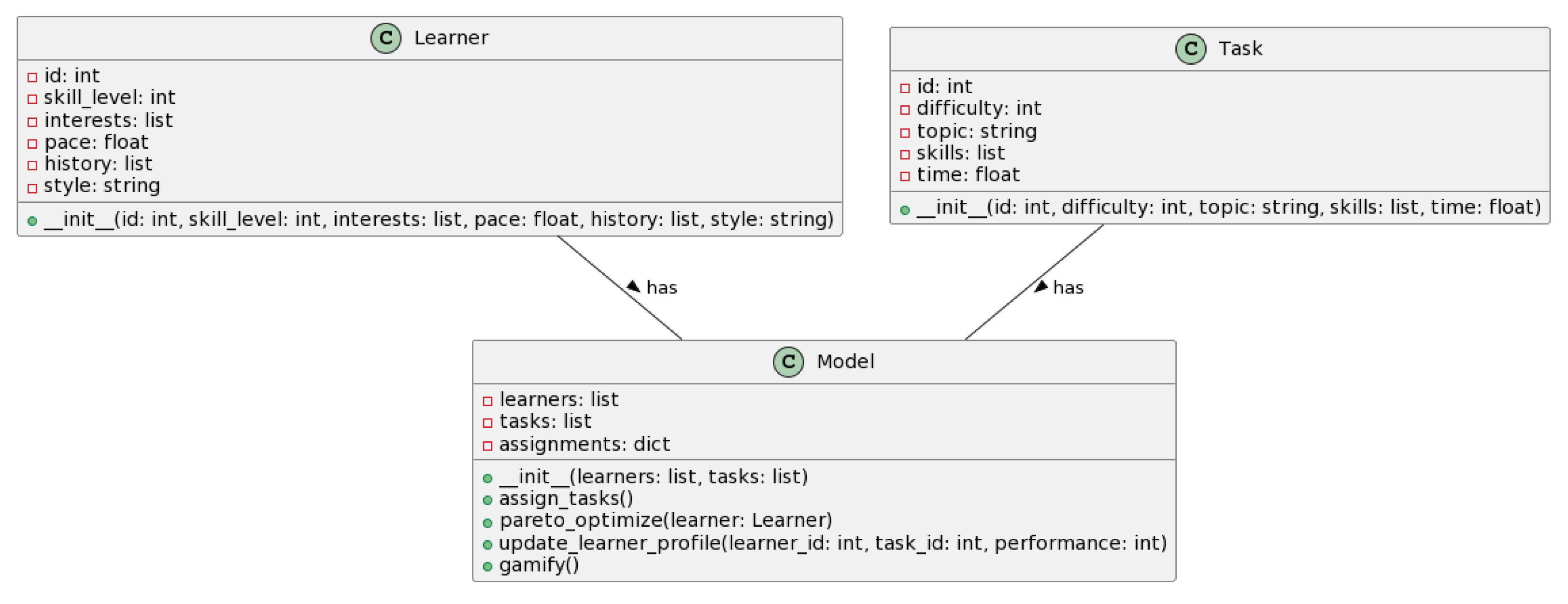

3.2. Pareto-Optimized Gamified Programming Task Selection Model

- Programming task bank: A repository of programming tasks, each classified according to its difficulty level, related topic, required skills, estimated completion time, etc.

- Learner profile: A dynamic profile for each learner capturing their programming skill level, areas of interest, learning pace, historical performance on tasks, preferred learning style, etc.

- Gamification elements: Incorporation of game design elements such as points, badges, leaderboards, achievement tracking, feedback, progress bars, storyline, etc.
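The three components listed above can be pictured as plain data structures. The following is a minimal sketch, not the FGPE+ implementation: all class and field names, the 1 to 5 difficulty scale, and the point and badge rules are assumptions made for illustration only.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class ProgrammingTask:
    """One entry of the programming task bank (hypothetical fields)."""
    task_id: str
    difficulty: int            # assumed scale: 1 (easy) to 5 (hard)
    topic: str
    required_skills: List[str]
    estimated_minutes: int


@dataclass
class LearnerProfile:
    """Dynamic per-learner state, updated as tasks are attempted."""
    learner_id: str
    skill_level: int           # same assumed 1-5 scale as task difficulty
    interests: List[str] = field(default_factory=list)
    history: Dict[str, bool] = field(default_factory=dict)  # task_id -> solved?

    def record_attempt(self, task: ProgrammingTask, solved: bool) -> None:
        self.history[task.task_id] = solved

    def success_rate(self) -> float:
        """Share of attempted tasks that were solved (0.0 if none attempted)."""
        if not self.history:
            return 0.0
        return sum(self.history.values()) / len(self.history)


@dataclass
class GamificationState:
    """Points and badges earned by one learner (illustrative rules only)."""
    points: int = 0
    badges: List[str] = field(default_factory=list)

    def reward(self, task: ProgrammingTask, solved: bool) -> None:
        if not solved:
            return
        # Assumed rule: harder tasks yield proportionally more points.
        self.points += 10 * task.difficulty
        # Assumed rule: one badge for the first solved task of difficulty >= 4.
        if task.difficulty >= 4 and "hard-task solver" not in self.badges:
            self.badges.append("hard-task solver")


task = ProgrammingTask("loops-01", 3, "loops", ["for", "range"], 15)
learner = LearnerProfile("s-001", skill_level=2, interests=["loops"])
state = GamificationState()

learner.record_attempt(task, solved=True)
state.reward(task, solved=True)
print(learner.success_rate(), state.points)  # prints: 1.0 30
```

Keeping the learner profile separate from the gamification state means that the selection logic can read performance history without depending on how rewards are computed.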

3.3. Implementation

- The Exercise abstract class represents a programming exercise. It has the attributes id (exercise identifier), difficulty (difficulty level of the exercise), and learningOutcomes (a list of learning outcomes associated with the exercise). It provides methods to access these attributes and defines three virtual methods: evaluateObjectiveWeights() (to evaluate the objective weights of the exercise), calculateObjectiveValues() (to calculate the objective values of the exercise), and compareTo() (to compare two exercises based on their objective values).

- The ParetoExercise class represents an exercise that includes objective values. It inherits from the Exercise class and has an additional attribute called objectiveValues, which is a map that stores objective values for the exercise. It provides methods to get and set the values of specific objectives and defines the dominates() method to check whether it dominates another ParetoExercise based on their objective values.

- The ExerciseSelector abstract class serves as the base class for the exercise selection algorithm. It has the attributes exercises (a list of exercises to select from) and objectiveWeights (a map that holds the weights of different objectives). It provides methods to select exercises and to set the exercises and objective weights, and defines six virtual methods that outline the steps of the algorithm: evaluateExercises() (to evaluate the exercises based on objectives), paretoOptimization() (to perform Pareto optimization on the exercises), diversityEnhancement() (to enhance the diversity of the exercise set), gamificationIntegration() (to integrate gamification elements into the exercises), personalization() (to personalize the exercise selection), and evaluationAndFeedbackLoop() (to evaluate and refine the exercise selection based on feedback).

- The MyExerciseSelector class represents a specific implementation of ExerciseSelector. It adds an attribute called threshold (a threshold value for evaluation) and overrides the paretoOptimization() and evaluationAndFeedbackLoop() methods to provide a custom implementation based on the defined threshold.

- Objective class represents an objective to optimize in the exercise selection. It has an attribute name (the name of the objective) and provides a method to access the name.
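The dominance check and the Pareto optimization step described above can be sketched as follows. This is a simplified illustration, not the authors' code: it uses Python naming (snake_case) rather than the method names given in the text, collapses the class hierarchy into a single class plus two helper functions, assumes that higher objective values are better, and uses made-up objective names ("fit", "novelty"), exercise identifiers, and weights.

```python
from typing import Dict, List


class ParetoExercise:
    """Exercise carrying a map of objective values (higher is assumed better)."""

    def __init__(self, exercise_id: str, difficulty: int,
                 objective_values: Dict[str, float]) -> None:
        self.exercise_id = exercise_id
        self.difficulty = difficulty
        self.objective_values = dict(objective_values)

    def dominates(self, other: "ParetoExercise") -> bool:
        """True if self is no worse on every objective and better on at least one."""
        names = self.objective_values.keys()
        no_worse = all(self.objective_values[n] >= other.objective_values[n]
                       for n in names)
        better = any(self.objective_values[n] > other.objective_values[n]
                     for n in names)
        return no_worse and better


def pareto_optimization(exercises: List[ParetoExercise]) -> List[ParetoExercise]:
    """Keep only the exercises that no other exercise dominates (the Pareto front)."""
    return [e for e in exercises
            if not any(other.dominates(e) for other in exercises)]


def weighted_score(exercise: ParetoExercise,
                   objective_weights: Dict[str, float]) -> float:
    """Collapse the objective values into one number using the objective weights."""
    return sum(objective_weights[name] * value
               for name, value in exercise.objective_values.items())


# Hypothetical exercises and objective values, for illustration only.
exercises = [
    ParetoExercise("loops-1", 1, {"fit": 0.9, "novelty": 0.2}),
    ParetoExercise("loops-2", 2, {"fit": 0.6, "novelty": 0.7}),
    ParetoExercise("arrays-1", 2, {"fit": 0.5, "novelty": 0.6}),  # dominated by loops-2
    ParetoExercise("recursion-1", 4, {"fit": 0.3, "novelty": 0.9}),
]

front = pareto_optimization(exercises)
print([e.exercise_id for e in front])  # ['loops-1', 'loops-2', 'recursion-1']

# One possible way to pick a single exercise from the front: weighted sum.
best = max(front, key=lambda e: weighted_score(e, {"fit": 0.7, "novelty": 0.3}))
print(best.exercise_id)  # loops-1
```

The pairwise comparison is quadratic in the number of exercises, which is acceptable for a small task bank; the final weighted choice is only one option for narrowing the front and is not taken from the text.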

4. Results

4.1. Evaluation of the PWA Version of the FGPE PLE Platform by Mobile Device Users

- (Q1) How do you generally rate the mobile version of the FGPE PLE platform? Answer range: 1 (bad)–5 (excellent).

- (Q2) Do you think the mobile version of the FGPE PLE platform makes sense for students learning at home? Answer range: 1 (bad)–5 (excellent).

- (Q3) Do you think the mobile version of the FGPE PLE platform makes sense for students who study on the go to school/work? Answer range: 1 (bad)–5 (excellent).

- (Q4) Do you think it is possible to learn to write code using only the mobile version of the FGPE PLE platform, without using the PC version at all? Answer range: 1 (bad)–5 (excellent).

4.2. User Experience Analysis

- Attractiveness: covers the overall impression of the product, including whether it is pleasant or enjoyable to use.

- Perspicuity: measures how easy it is for users to understand how to use the product.

- Efficiency: evaluates the perception of how efficiently users can complete tasks using the product.

- Dependability: measures how reliable and predictable users find the product.

- Stimulation: evaluates how exciting and motivating the product is to use.

- Novelty: assesses whether the design of the product is creative and innovative and whether it meets users’ expectations.

- Attractiveness: The Lithuanian group had a higher average score (mean = 1.4141, std = 0.6081) compared to the Polish group (mean = 0.6607, std = 0.5785). This indicates that the Lithuanian students found the learning environment more attractive and appealing than the Polish students.

- Perspicuity: The Lithuanian group also scored higher (mean = 1.4914, std = 0.7604) than the Polish group (mean = 0.5580, std = 0.7732), suggesting that the Lithuanian students found the learning environment clearer and easier to understand.

- Efficiency: Again, the Lithuanian group’s score was higher (mean = 1.5193, std = 0.6714) than the Polish group (mean = 0.2727, std = 0.6650), indicating that the Lithuanian students found the learning environment more efficient for achieving their tasks.

- Dependability: The Lithuanian group had a higher mean score (mean = 1.2462, std = 0.8367) than the Polish group (mean = 0.7047, std = 0.7909), indicating they found the learning environment more reliable and dependable.

- Stimulation: The Lithuanian group scored slightly higher in this dimension (mean = 1.4972, std = 0.6408) than the Polish group (mean = 1.2548, std = 0.7053). This suggests that the Lithuanian students found the learning environment slightly more exciting and motivating.

- Novelty: Lastly, the Lithuanian group scored higher in terms of novelty (mean = 1.3701, std = 0.6361) compared to the Polish group (mean = 0.5738, std = 0.6177). This suggests that the Lithuanian students found the learning environment more innovative and creative.
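The size of the gap between the two groups on each scale can be computed directly from the means and standard deviations reported above. The sketch below also derives a standardized effect size (Cohen's d). Since the group sizes are not given in this section, it assumes equally sized groups when pooling the standard deviations, so the d values are indicative only and are not results reported by the study.

```python
from math import sqrt

# (mean, std) per UEQ scale as reported in the text:
# first tuple = Lithuanian group, second tuple = Polish group.
ueq = {
    "Attractiveness": ((1.4141, 0.6081), (0.6607, 0.5785)),
    "Perspicuity":    ((1.4914, 0.7604), (0.5580, 0.7732)),
    "Efficiency":     ((1.5193, 0.6714), (0.2727, 0.6650)),
    "Dependability":  ((1.2462, 0.8367), (0.7047, 0.7909)),
    "Stimulation":    ((1.4972, 0.6408), (1.2548, 0.7053)),
    "Novelty":        ((1.3701, 0.6361), (0.5738, 0.6177)),
}


def gap_and_effect(lithuanian, polish):
    """Return (mean difference, Cohen's d) for one scale.

    ASSUMPTION: both groups have the same size, so the pooled standard
    deviation is the root of the average of the two variances.
    """
    (m_lt, s_lt), (m_pl, s_pl) = lithuanian, polish
    pooled_std = sqrt((s_lt ** 2 + s_pl ** 2) / 2)
    gap = m_lt - m_pl
    return gap, gap / pooled_std


results = {scale: gap_and_effect(lt, pl) for scale, (lt, pl) in ueq.items()}

for scale, (gap, d) in results.items():
    print(f"{scale:15s} gap = {gap:.4f}   d = {d:.2f}")
```

The mean differences range from 0.2424 (Stimulation) to 1.2466 (Efficiency), which matches the observation that the two groups differ least in how stimulating they found the platform and most in how efficient they found it.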

4.3. Knowledge Evaluation Survey: FGPE Approach vs. Classic Moodle Course

4.4. Effectiveness of Using Sharable Content Object Reference Model

5. Discussion

5.1. Importance of FGPE+ for STEM Education

5.2. Limitations

- This study used a subset of students from Poland and Lithuania, which may not be representative of the whole population. Increasing the sample size and diversifying the demographics of the participants would likely improve generalizability.

- This research relied heavily on self-report measures, which are subjective and prone to bias. Incorporating objective measures, such as performance-based assessments or tracking system data, could provide a more comprehensive evaluation of the platform’s effectiveness.

- Although the study aimed to assess the FGPE PLE platform in various educational settings and learning scenarios, the findings may not fully capture the intricacies and complexity of those settings. Future studies might look at the platform’s efficacy in other educational institutions, student backgrounds, and instructional environments.

- The PWA version of the FGPE PLE platform was assessed particularly for programming instruction in the research. The findings may not be applicable in other domains or topic areas. Replicating the study in additional educational fields would be advantageous in determining the platform’s generalizability.

- The investigation focused on the initial user experience and perceived knowledge. Understanding the long-term impact of the FGPE PLE platform on learners’ programming skill development and information retention would necessitate additional research outside the scope of this study.

5.3. Potential Lines of Research

- We concentrated on evaluating the platform’s initial user experience and perceived knowledge. Further studies could investigate the long-term consequences of using the FGPE PLE platform on mobile devices. Longitudinal studies might look into the long-term influence on learning outcomes, programming skill development, and knowledge retention.

- In terms of perceived knowledge, we compared the FGPE+ model to a traditional Moodle course. Future studies might compare the efficacy of other educational methodologies, for example gamified platforms such as FGPE+ versus traditional teaching methods. Comparative research might help to uncover the advantages and disadvantages of each strategy and provide insights into how to improve learning experiences.

- The platform was evaluated mostly using self-report measures in this study. Learning analytics approaches, such as tracking user interactions, completion rates, and performance statistics, might give a more objective evaluation of learners’ progress and engagement with the platform. Analyzing such data might aid in the discovery of trends, the identification of areas for improvement, and the implementation of individualized learning interventions.

- Further study might look into the efficacy of certain instructional tactics used inside the FGPE PLE platform. Investigating how various gamification aspects, adaptive learning algorithms, or social interaction features influence motivation, engagement, and learning results might provide useful insights for building and enhancing educational systems.

- Our investigation found some cross-national differences in user experience perceptions. More thorough cross-cultural research might provide insight into how cultural backgrounds and educational environments affect the acceptance and efficacy of the FGPE PLE platform. Understanding these cultural differences may help with the customization and localization of educational systems for varied learner groups.

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Mishra, L.; Gupta, T.; Shree, A. Online teaching-learning in higher education during lockdown period of COVID-19 pandemic. Int. J. Educ. Res. Open 2020, 1, 100012.

- Breiki, M.A.; Yahaya, W.A.J.W. Using Gamification to Promote Students’ Engagement While Teaching Online During COVID-19. In Teaching in the Post COVID-19 Era; Springer International Publishing: Cham, Switzerland, 2021; pp. 443–453.

- Pedro, L.; Santos, C. Has Covid-19 emergency instruction killed the PLE? In Proceedings of the Ninth International Conference on Technological Ecosystems for Enhancing Multiculturality (TEEM’21), Barcelona, Spain, 26–29 October 2021; ACM: New York, NY, USA, 2021.

- Redondo, R.P.D.; Rodríguez, M.C.; Escobar, J.J.L.; Vilas, A.F. Integrating micro-learning content in traditional e-learning platforms. Multimed. Tools Appl. 2020, 80, 3121–3151.

- Jayalath, J.; Esichaikul, V. Gamification to Enhance Motivation and Engagement in Blended eLearning for Technical and Vocational Education and Training. Technol. Knowl. Learn. 2020, 27, 91–118.

- da Silva, J.P.; Silveira, I.F. A systematic review on open educational games for programming learning and teaching. Int. J. Emerg. Technol. Learn. 2020, 15, 156–172.

- Paiva, J.C.; Leal, J.P.; Queirós, R. Fostering programming practice through games. Information 2020, 11, 498.

- Maryono, D.; Budiyono, S.; Akhyar, M. Implementation of Gamification in Programming Learning: Literature Review. Int. J. Inf. Educ. Technol. 2022, 12, 1448–1457.

- Maskeliūnas, R.; Kulikajevas, A.; Blažauskas, T.; Damaševičius, R.; Swacha, J. An interactive serious mobile game for supporting the learning of programming in javascript in the context of eco-friendly city management. Computers 2020, 9, 102.

- Cuervo-Cely, K.D.; Restrepo-Calle, F.; Ramírez-Echeverry, J.J. Effect Of Gamification On The Motivation Of Computer Programming Students. J. Inf. Technol. Educ. Res. 2022, 21, 001–023.

- Chinchua, S.; Kantathanawat, T.; Tuntiwongwanich, S. Increasing Programming Self-Efficacy (PSE) Through a Problem-Based Gamification Digital Learning Ecosystem (DLE) Model. J. High. Educ. Theory Pract. 2022, 22, 131–143.

- Ašeriškis, D.; Damaševičius, R. Gamification Patterns for Gamification Applications. Procedia Comput. Sci. 2014, 39, 83–90.

- Panskyi, T.; Rowińska, Z. A Holistic Digital Game-Based Learning Approach to Out-of-School Primary Programming Education. Inform. Educ. 2021, 20, 1–22.

- Swacha, J.; Maskeliūnas, R.; Damaševičius, R.; Kulikajevas, A.; Blažauskas, T.; Muszyńska, K.; Miluniec, A.; Kowalska, M. Introducing sustainable development topics into computer science education: Design and evaluation of the eco jsity game. Sustainability 2021, 13, 4244.

- Damaševičius, R.; Maskeliūnas, R.; Blažauskas, T. Serious Games and Gamification in Healthcare: A Meta-Review. Information 2023, 14, 105.

- Francillette, Y.; Boucher, E.; Bouchard, B.; Bouchard, K.; Gaboury, S. Serious games for people with mental disorders: State of the art of practices to maintain engagement and accessibility. Entertain. Comput. 2021, 37, 100396.

- Zhao, D.; Muntean, C.H.; Chis, A.E.; Rozinaj, G.; Muntean, G. Game-Based Learning: Enhancing Student Experience, Knowledge Gain, and Usability in Higher Education Programming Courses. IEEE Trans. Educ. 2022, 65, 502–513.

- Mohanarajah, S.; Sritharan, T. Shoot2learn: Fix-And-Play Educational Game For Learning Programming; Enhancing Student Engagement By Mixing Game Playing And Game Programming. J. Inf. Technol. Educ. Res. 2022, 21, 639–661.

- Xinogalos, S.; Satratzemi, M. The Use of Educational Games in Programming Assignments: SQL Island as a Case Study. Appl. Sci. 2022, 12, 6563.

- Barmpakas, A.; Xinogalos, S. Designing and Evaluating a Serious Game for Learning Artificial Intelligence Algorithms: SpAI War as a Case Study. Appl. Sci. 2023, 13, 5828.

- Costa, J.M. Using game concepts to improve programming learning: A multi-level meta-analysis. Comput. Appl. Eng. Educ. 2023, 31, 1098–1110.

- Soboleva, E.V.; Suvorova, T.N.; Grinshkun, A.V.; Bocharov, M.I. Applying Gamification in Learning the Basics of Algorithmization and Programming to Improve the Quality of Students’ Educational Results. Eur. J. Contemp. Educ. 2021, 10, 987–1002.

- Toda, A.M.; Valle, P.H.D.; Isotani, S. The Dark Side of Gamification: An Overview of Negative Effects of Gamification in Education. In Communications in Computer and Information Science; Springer International Publishing: Cham, Switzerland, 2018; pp. 143–156.

- Imran, H. An Empirical Investigation of the Different Levels of Gamification in an Introductory Programming Course. J. Educ. Comput. Res. 2022, 61, 847–874.

- Chatterjee, S.; Majumdar, D.; Misra, S.; Damaševičius, R. Adoption of mobile applications for teaching-learning process in rural girls’ schools in India: An empirical study. Educ. Inf. Technol. 2020, 25, 4057–4076.

- Tuparov, G.; Keremedchiev, D.; Tuparova, D.; Stoyanova, M. Gamification and educational computer games in open source learning management systems as a part of assessment. In Proceedings of the 2018 17th International Conference on Information Technology Based Higher Education and Training (ITHET), Olhao, Portugal, 26–28 April 2018; pp. 1–5.

- Pérez-Berenguer, D.; García-Molina, J. A standard-based architecture to support learning interoperability: A practical experience in gamification. Software Pract. Exp. 2018, 48, 1238–1268.

- Calle-Archila, C.R.; Drews, O.M. Student-Based Gamification Framework for Online Courses. In Communications in Computer and Information Science; Springer International Publishing: Cham, Switzerland, 2017; pp. 401–414.

- Sheppard, D. Introduction to Progressive Web Apps. In Beginning Progressive Web App Development; Apress: New York, NY, USA, 2017; pp. 3–10.

- Hajian, M. PWA with Angular and Workbox. In Progressive Web Apps with Angular; Apress: New York, NY, USA, 2019; pp. 331–345.

- Devine, J.; Finney, J.; de Halleux, P.; Moskal, M.; Ball, T.; Hodges, S. MakeCode and CODAL: Intuitive and efficient embedded systems programming for education. J. Syst. Archit. 2019, 98, 468–483.

- Lee, J.; Kim, H.; Park, J.; Shin, I.; Son, S. Pride and Prejudice in Progressive Web Apps. In Proceedings of the 2018 ACM SIGSAC Conference on Computer and Communications Security, Toronto, ON, Canada, 15–19 October 2018; ACM: New York, NY, USA, 2018.

- FGPE PLE Environment. Available online: https://github.com/FGPE-Erasmus/fgpe-ple-v2 (accessed on 10 June 2023).

- Sutadji, E.; Hidayat, W.N.; Patmanthara, S.; Sulton, S.; Jabari, N.A.M.; Irsyad, M. Measuring user experience on SIPEJAR as e-learning of Universitas Negeri Malang. IOP Conf. Ser. Mater. Sci. Eng. 2020, 732, 012116.

- Nah, F.F.H.; Zeng, Q.; Telaprolu, V.R.; Ayyappa, A.P.; Eschenbrenner, B. Gamification of Education: A Review of Literature. In Lecture Notes in Computer Science; Springer International Publishing: Cham, Switzerland, 2014; pp. 401–409.

- Barik, T.; Murphy-Hill, E.; Zimmermann, T. A perspective on blending programming environments and games: Beyond points, badges, and leaderboards. In Proceedings of the 2016 IEEE Symposium on Visual Languages and Human-Centric Computing (VL/HCC), Cambridge, UK, 4–8 September 2016; pp. 134–142.

- Prokhorov, A.V.; Lisovichenko, V.O.; Mazorchuk, M.S.; Kuzminska, O.H. Developing a 3D quest game for career guidance to estimate students’ digital competences. CEUR Workshop Proc. 2020, 2731, 312–327.

- Padilla-Zea, N.; Gutiérrez, F.L.; López-Arcos, J.R.; Abad-Arranz, A.; Paderewski, P. Modeling storytelling to be used in educational video games. Comput. Hum. Behav. 2014, 31, 461–474.

- Hadzigeorgiou, Y. Narrative Thinking and Storytelling in Science Education. In Imaginative Science Education; Springer International Publishing: Cham, Switzerland, 2016; pp. 83–119.

- Kusuma, G.P.; Wigati, E.K.; Utomo, Y.; Suryapranata, L.K.P. Analysis of Gamification Models in Education Using MDA Framework. Procedia Comput. Sci. 2018, 135, 385–392.

- Wu, M.; Liao, C.C.; Chen, Z.H.; Chan, T.W. Designing a Competitive Game for Promoting Students’ Effort-Making Behavior by Virtual Pets. In Proceedings of the 2010 Third IEEE International Conference on Digital Game and Intelligent Toy Enhanced Learning, Kaohsiung, Taiwan, 12–16 April 2010; pp. 234–236.

- Chen, Z.H.; Liao, C.; Chien, T.C.; Chan, T.W. Animal companions: Fostering children’s effort-making by nurturing virtual pets. Br. J. Educ. Technol. 2009, 42, 166–180.

- Slavin, R.E. Cooperative Learning: Applying Contact Theory in Desegregated Schools. J. Soc. Issues 1985, 41, 45–62.

- Zakaria, E.; Iksan, Z. Promoting Cooperative Learning in Science and Mathematics Education: A Malaysian Perspective. EURASIA J. Math. Sci. Technol. Educ. 2007, 3, 35–39.

- Correia, A.; Fonseca, B.; Paredes, H.; Martins, P.; Morgado, L. Computer-Simulated 3D Virtual Environments in Collaborative Learning and Training: Meta-Review, Refinement, and Roadmap. In Progress in IS; Springer International Publishing: Cham, Switzerland, 2016; pp. 403–440.

- Doumanis, I.; Economou, D.; Sim, G.R.; Porter, S. The impact of multimodal collaborative virtual environments on learning: A gamified online debate. Comput. Educ. 2019, 130, 121–138.

- Wanick, V.; Bui, H. Gamification in Management: A systematic review and research directions. Int. J. Serious Games 2019, 6, 57–74.

- Hooda, M.; Rana, C.; Dahiya, O.; Rizwan, A.; Hossain, M.S. Artificial Intelligence for Assessment and Feedback to Enhance Student Success in Higher Education. Math. Probl. Eng. 2022, 2022, 1–19.

- Maher, Y.; Moussa, S.M.; Khalifa, M.E. Learners on Focus: Visualizing Analytics Through an Integrated Model for Learning Analytics in Adaptive Gamified E-Learning. IEEE Access 2020, 8, 197597–197616.

- Dichev, C.; Dicheva, D. Gamifying education: What is known, what is believed and what remains uncertain: A critical review. Int. J. Educ. Technol. High. Educ. 2017, 14, 9.

- Skaalvik, E.M.; Skaalvik, S. Collective teacher culture and school goal structure: Associations with teacher self-efficacy and engagement. Soc. Psychol. Educ. 2023, 26, 945–969.

- Vasconcellos, D.; Parker, P.D.; Hilland, T.; Cinelli, R.; Owen, K.B.; Kapsal, N.; Lee, J.; Antczak, D.; Ntoumanis, N.; Ryan, R.M.; et al. Self-determination theory applied to physical education: A systematic review and meta-analysis. J. Educ. Psychol. 2020, 112, 1444–1469.

- dos Santos, W.O.; Bittencourt, I.I.; Isotani, S.; Dermeval, D.; Marques, L.B.; Silveira, I.F. Flow Theory to Promote Learning in Educational Systems: Is it Really Relevant? Rev. Bras. Inform. Educ. 2018, 26, 29.

- Schunk, D.H.; DiBenedetto, M.K. Motivation and social cognitive theory. Contemp. Educ. Psychol. 2020, 60, 101832.

- Torre, D.; Durning, S.J. Social cognitive theory: Thinking and learning in social settings. Res. Med. Educ. 2022, 105–116.

- Gao, T.; Kuang, L. Cognitive Loading and Knowledge Hiding in Art Design Education: Cognitive Engagement as Mediator and Supervisor Support as Moderator. Front. Psychol. 2022, 13, 837374.

- Fleih, N.H.; Rushd, I. The theory of cognitive burden, its concept, importance, types, principles, strategies, in the educational learning process. Ann. Fac. Arts 2020, 48, 53–69.

- Armacost, R.; Pet-Armacost, J. Using mastery-based grading to facilitate learning. In Proceedings of the 33rd Annual Frontiers in Education, Westminster, CO, USA, 5–8 November 2003; FIE, 2003; Volume 1, pp. TA3–20.

- Bennani, S.; Maalel, A.; Ghezala, H.B. Adaptive gamification in E-learning: A literature review and future challenges. Comput. Appl. Eng. Educ. 2021, 30, 628–642.

- López, C.; Tucker, C. Toward Personalized Adaptive Gamification: A Machine Learning Model for Predicting Performance. IEEE Trans. Games 2020, 12, 155–168.

- Manzano-León, A.; Camacho-Lazarraga, P.; Guerrero, M.A.; Guerrero-Puerta, L.; Aguilar-Parra, J.M.; Trigueros, R.; Alias, A. Between Level Up and Game Over: A Systematic Literature Review of Gamification in Education. Sustainability 2021, 13, 2247. [Google Scholar] [CrossRef]

- Rodrigues, L.; Toda, A.M.; Oliveira, W.; Palomino, P.T.; Avila-Santos, A.P.; Isotani, S. Gamification Works, but How and to Whom? In Proceedings of the 52nd ACM Technical Symposium on Computer Science Education, Virtual Event, 13–20 March 2021; ACM: New York, NY, USA, 2021. [Google Scholar] [CrossRef]

- Hammerschall, U. A Gamification Framework for Long-Term Engagement in Education Based on Self Determination Theory and the Transtheoretical Model of Change. In Proceedings of the 2019 IEEE Global Engineering Education Conference (EDUCON), Dubai, United Arab Emirates, 8–11 April 2019; pp. 95–101. [Google Scholar] [CrossRef]

- Huang, B.; Hew, K.F. Implementing a theory-driven gamification model in higher education flipped courses: Effects on out-of-class activity completion and quality of artifacts. Comput. Educ. 2018, 125, 254–272. [Google Scholar] [CrossRef]

- Duggal, K.; Gupta, L.R.; Singh, P. Gamification and Machine Learning Inspired Approach for Classroom Engagement and Learning. Math. Probl. Eng. 2021, 2021, 1–18. [Google Scholar] [CrossRef]

- Sánchez, D.O.; Trigueros, I.M.G. Gamification, social problems, and gender in the teaching of social sciences: Representations and discourse of trainee teachers. PLoS ONE 2019, 14, e0218869. [Google Scholar] [CrossRef]

- Landers, R.N.; Armstrong, M.B.; Collmus, A.B. How to Use Game Elements to Enhance Learning. In Serious Games and Edutainment Applications; Springer International Publishing: Cham, Switzerland, 2017; pp. 457–483. [Google Scholar] [CrossRef]

- Kalogiannakis, M.; Papadakis, S.; Zourmpakis, A.I. Gamification in Science Education. A Systematic Review of the Literature. Educ. Sci. 2021, 11, 22. [Google Scholar] [CrossRef]

- Chou, Y.K. The Octalysis Framework for Gamification & Behavioral Design. 2013. Available online: https://yukaichou.com/gamification-examples/octalysis-complete-gamification-framework/ (accessed on 2 June 2023).

- Werbach, K.; Hunter, D. For the Win: How Game Thinking Can Revolutionize Your Business; Wharton Digital Press: Philadelphia, PA, USA, 2012.

- Kim, A.J. Game Thinking: Innovate Smarter & Drive Deep Engagement with Design Techniques from Hit Games; gamethinking.io, 2018.

- Hunicke, R.; Leblanc, M.; Zubek, R. MDA: A Formal Approach to Game Design and Game Research. In Proceedings of the AAAI Workshop on Challenges in Game AI, San Jose, CA, USA, 25–29 July 2004; Volume 1.

- Zichermann, G.; Cunningham, C. Gamification by Design: Implementing Game Mechanics in Web and Mobile Apps; O’Reilly Media, Inc.: Sebastopol, CA, USA, 2011.

- Marczewski, A. The Intrinsic Motivation RAMP. 2014. Available online: https://www.gamified.uk/gamification-framework/the-intrinsic-motivation-ramp/ (accessed on 2 June 2023).

- Keller, J.M. Motivational Design for Learning and Performance: The ARCS Model Approach; Springer: Berlin/Heidelberg, Germany, 2009.

- Hornbæk, K.; Law, E.L.C. Meta-Analysis of Correlations among Usability Measures. In Proceedings of the CHI ’07 SIGCHI Conference on Human Factors in Computing Systems, San Jose, CA, USA, 28 April–3 May 2007; pp. 617–626.

- O’Brien, H.L.; Lebow, M. Mixed-methods approach to measuring user experience in online news interactions. J. Am. Soc. Inf. Sci. Technol. 2013, 64, 1543–1556.

- Laugwitz, B.; Held, T.; Schrepp, M. Construction and Evaluation of a User Experience Questionnaire. In Proceedings of the HCI and Usability for Education and Work; Holzinger, A., Ed.; Springer: Berlin/Heidelberg, Germany, 2008; pp. 63–76.

- Saleh, A.M.; Abuaddous, H.Y.; Alansari, I.S.; Enaizan, O. The Evaluation of User Experience on Learning Management Systems Using UEQ. Int. J. Emerg. Technol. Learn. (iJET) 2022, 17, 145–162.

- Swacha, J.; Queirós, R.; Paiva, J.C. GATUGU: Six Perspectives of Evaluation of Gamified Systems. Information 2023, 14, 136.

- Najafi, H.a. Shareable Content Object Reference Model: A model for the production of electronic content for better learning. Bimon. Educ. Strateg. Med. Sci. 2016, 9, 335–350.

- Ng, J.Y.M.; Lim, T.W.; Tarib, N.; Ho, T.K. Development and validation of a progressive web application to educate partial denture wearers. Health Inform. J. 2022, 28, 146045822110695.

- Gómez-Sierra, C.J. Design and development of a PWA-Progressive Web Application, to consult the diary and programming of a technological event. IOP Conf. Ser. Mater. Sci. Eng. 2021, 1154, 012047.

- Case, D.M.; Steeve, C.; Woolery, M. Progressive Web Apps are a Game-Changer! Use Active Learning to Engage Students and Convert Any Website into a Mobile-Installable, Offline-Capable, Interactive App. In Proceedings of the 51st ACM Technical Symposium on Computer Science Education, Portland, OR, USA, 11–14 March 2020; ACM: New York, NY, USA, 2020.

- Sidekerskienė, T.; Damaševičius, R. Out-of-the-Box Learning: Digital Escape Rooms as a Metaphor for Breaking Down Barriers in STEM Education. Sustainability 2023, 15, 7393.

- Bonora, L.; Martelli, F.; Marchi, V.; Vagnoli, C. Gamification as educational strategy for STEM learning: DIGITgame project a collaborative experience between Italy and Turkey high schools around the Smartcity concept. In Proceedings of the IMSCI 2019, 13th International Multi-Conference on Society, Cybernetics and Informatics, Orlando, FL, USA, 6–9 July 2019; Volume 2, pp. 122–127.

- Paulauskas, L.; Paulauskas, A.; Blažauskas, T.; Damaševičius, R.; Maskeliūnas, R. Reconstruction of Industrial and Historical Heritage for Cultural Enrichment Using Virtual and Augmented Reality. Technologies 2023, 11, 36.

| Group | N | M (SD) | t | p |
|---|---|---|---|---|
| FGPE+ group | 30 | 4.11 (0.51) | 4.21 | 0.03 |
| Moodle course group | 35 | 3.67 (0.56) | 3.63 | 0.04 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Maskeliūnas, R.; Damaševičius, R.; Blažauskas, T.; Swacha, J.; Queirós, R.; Paiva, J.C. FGPE+: The Mobile FGPE Environment and the Pareto-Optimized Gamified Programming Exercise Selection Model—An Empirical Evaluation. Computers 2023, 12, 144. https://doi.org/10.3390/computers12070144
