Assessment of Multi-Layer Perceptron Neural Network for Pulmonary Function Test’s Diagnosis Using ATS and ERS Respiratory Standard Parameters

Abstract

1. Introduction

2. Materials and Methods

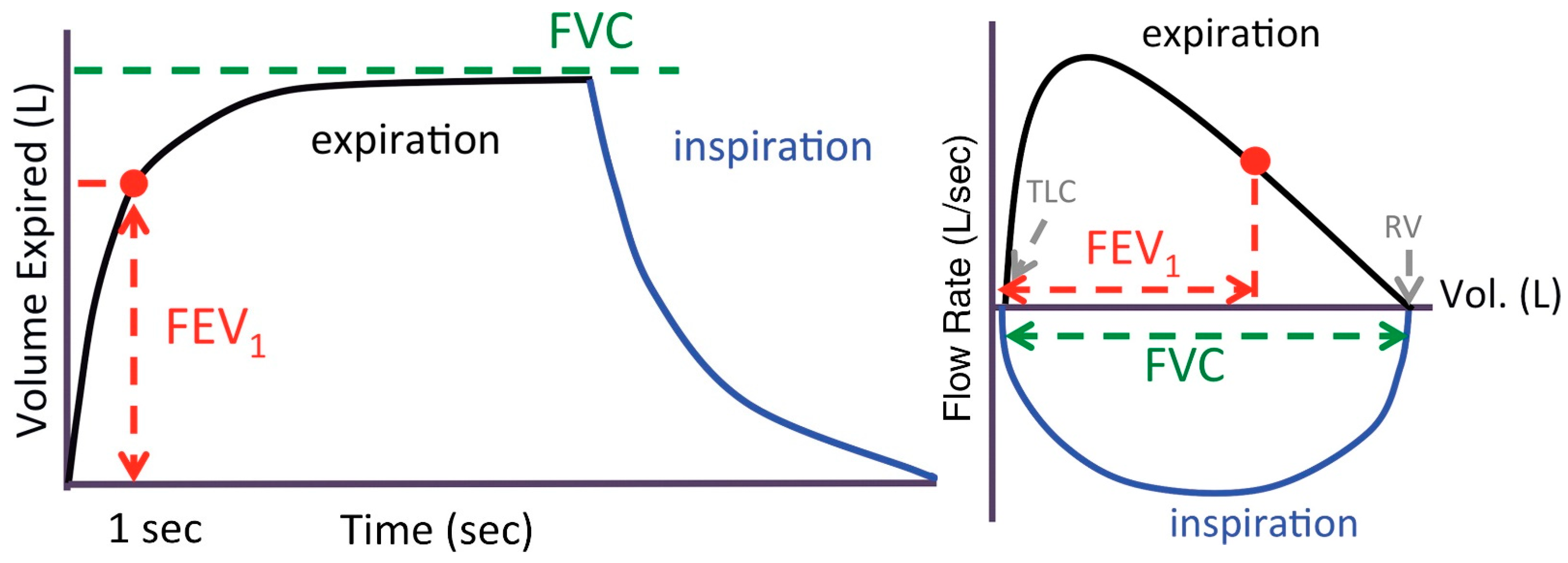

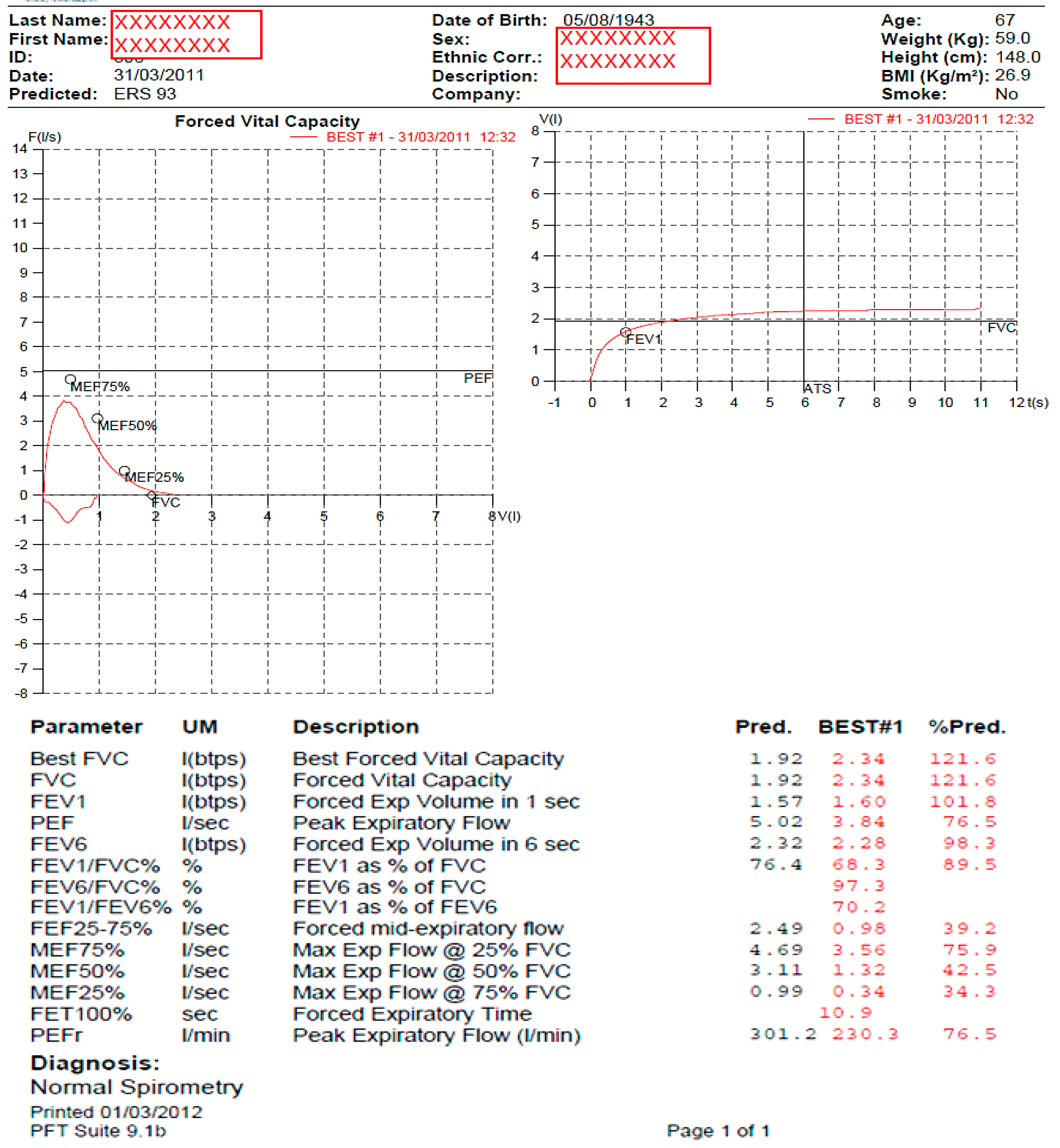

2.1. Spirometer Procedure

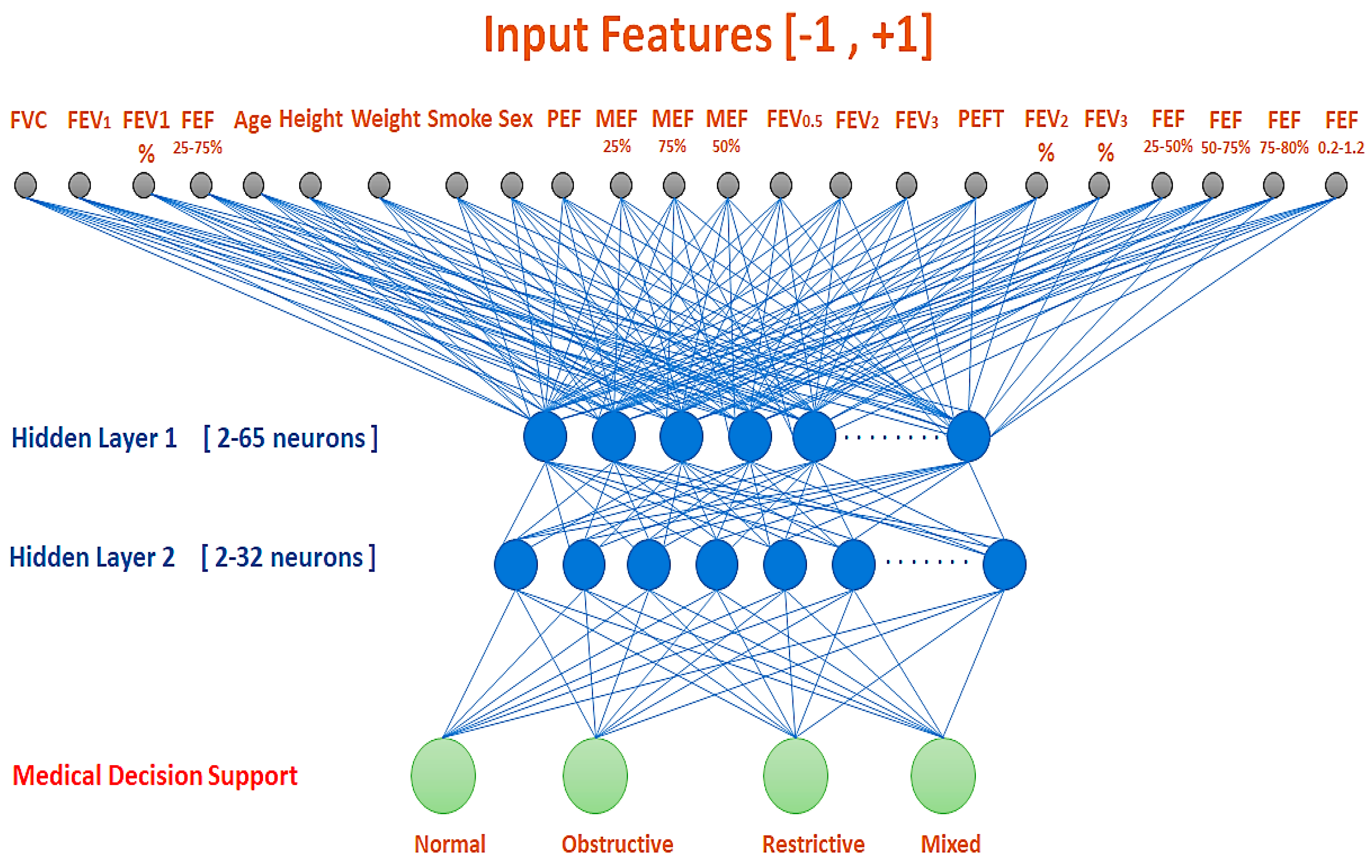

2.2. MLP Neural Network

2.3. Measures of Classification Performance

3. Results

4. Results Discussion

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

1. Peters, J.I.; Levine, S.M. Introduction to Pulmonary Function Testing (Chapter 27). In Respiratory Disorders; McGraw Hill Medical: New York, NY, USA, 2009; pp. 271–278.

2. Miller, M.R.; Hankinson, J.; Brusasco, V.; Burgos, F.; Casaburi, R.; Coates, A.; Crapo, R.; Enright, P.; Van Der Grinten, C.P.M.; Gustafsson, P.; et al. Standardization of spirometry. Eur. Respir. J. 2005, 26, 319–338.

3. Giri, P.C.; Chowdhury, A.M.; Bedoya, A.; Chen, H.; Lee, H.S.; Lee, P.; Henriquez, C.; MacIntyre, N.R.; Huang, Y.-C.T. Application of Machine Learning in Pulmonary Function Assessment Where Are We Now and Where Are We Going? Front. Physiol. 2021, 12, 678540.

4. Topalovic, M.; Laval, S.; Aerts, J.-M.; Troosters, T.; Decramer, M.; Janssens, W. Automated Interpretation of Pulmonary Function Tests in Adults with Respiratory Complaints. Respiration 2017, 93, 170–178.

5. Haykin, S. Neural Networks and Learning Machines; Prentice Hall: New York, NY, USA, 2009.

6. Veezhinathan, M.; Ramakrishnan, S. Detection of Obstructive Respiratory Abnormality Using Flow–Volume Spirometry and Radial Basis Function Neural Networks. J. Med. Syst. 2007, 31, 461–465.

7. Manoharan, S.C.; Veezhinathan, M.; Ramakrishnan, S. Comparison of Two ANN Methods for Classification of Spirometer Data. Meas. Sci. Rev. 2008, 8, 53–57.

8. Manoharan, S.C.; Ramakrishnan, S. Prediction of Forced Expiratory Volume in Pulmonary Function Test using Radial Basis Neural Networks and k-means Clustering. J. Med. Syst. 2009, 33, 347–351.

9. Waghmare, K.A.; Wakode, B.V.; Chatur, P.N. Spirometry Data Analysis and Classification Using Artificial Neural Network: An Approach. Int. J. Emerg. Technol. Adv. Eng. 2012, 2, 67–70.

10. Iadanza, E.; Mudura, V.; Melillo, P.; Gherardelli, M. An automatic system supporting clinical decision for chronic obstructive pulmonary disease. Health Technol. 2020, 10, 487–498.

11. Baemani, M.; Monadjemi, A.; Moallem, P. Detection of Respiratory Abnormalities Using Artificial Neural Networks. J. Comput. Syst. Sci. 2008, 4, 663–667.

12. Jafari, H.; Arabalibeik, H.; Agin, K. Classification of Normal and Abnormal Respiration Patterns Using Flow Volume Curve and Neural Network. In Proceedings of the IEEE International Symposium on Health Informatics and Bioinformatics (HIBIT), Antalya, Turkey, 20–22 April 2010.

13. Badnjevic, A.; Cifrek, M.; Koruga, D.; Osmankovic, D. Neuro-fuzzy classification of asthma and chronic obstructive pulmonary disease. BMC Med. Inform. Decis. Mak. 2015, 15, S1.

14. Badnjevic, A.; Gurbeta, L.; Custovic, E. An Expert Diagnostic System to Automatically Identify Asthma and Chronic Obstructive Pulmonary Disease in Clinical Settings. Sci. Rep. 2018, 8, 11645.

15. Ioachimescu, O.C.; Stoller, J.K. An Alternative Spirometric Measurement: Area under the Expiratory Flow–Volume Curve. Ann. Am. Thorac. Soc. 2020, 17, 582–588.

16. Kalantary, S.; Pourbabaki, R.; Jahani, A.; Yarandi, M.S.; Samiei, S.; Jahani, R. Development of a decision support system tool to predict the pulmonary function using artificial neural network approach. Concurr. Comput. Pract. Exper. 2021, 33, e6258.

17. Hakan, A.; Guler, I.; Sener, M.U. A Low-Cost Mobile Adaptive Tracking System for Chronic Pulmonary Patients in Home Environment. Telemed. e-Health 2013, 19, 24–30.

18. Trivedy, S.; Goyal, M.; Mohapatra, P.R.; Mukherjee, A. Design and Development of Smartphone Enabled Spirometer with a Disease Classification System Using Convolutional Neural Network. IEEE Trans. Instrum. Meas. 2020, 69, 7125–7135.

19. Er, O.; Yumusak, N.; Temurtas, F. Chest diseases diagnosis using artificial neural networks. Expert Syst. Appl. 2010, 37, 7648–7655.

20. Kavitha, A.; Sujatha, C.M.; Ramakrishnan, S. Prediction of Spirometric Forced Expiratory Volume (FEV1) Data Using Support Vector Regression. Meas. Sci. Rev. 2010, 10, 63–67.

21. Sahin, D.; Ubeyli, E.; Ilbay, G. Diagnosis of Airway Obstructive or Restrictive Spirometry Patterns by Multiclass Support Vector Machines. J. Med. Syst. 2010, 34, 967–973.

22. Spathis, D.; Vlamos, P. Diagnosing asthma and chronic obstructive pulmonary disease with machine learning. Health Inform. J. 2019, 25, 811–827.

23. Junwale, P.D.; Bhade, A.W.; Chatur, P.N. Statistical Data Mining Approach for Spirometric Data Classification: Review Paper. Int. J. Comput. 2012, 3, 348–351.

24. Bodduluri, S.; Nakhmani, A.; Reinhardt, J.M.; Wilson, C.G.; McDonald, M.-L.; Rudraraju, R.; Jaeger, B.; Bhakta, N.R.; Castaldi, P.J.; Sciurba, F.C.; et al. Deep neural network analyses of spirometry for structural phenotyping of chronic obstructive pulmonary disease. JCI Insight 2020, 5, e132781.

25. Murdaca, G.; Caprioli, S.; Tonacci, A.; Billeci, L.; Greco, M.; Negrini, S.; Cittadini, G.; Zentilin, P.; Spagnolo, E.V.; Gangemi, S. A Machine Learning Application to Predict Early Lung Involvement in Scleroderma: A Feasibility Evaluation. Diagnostics 2021, 11, 1880.

26. Kavitha, A.; Sujatha, C.M.; Ramakrishnan, S. Evaluation of Flow–Volume Spirometric Test Using Neural Network Based Prediction and Principal Component. J. Med. Syst. 2011, 35, 127–133.

27. Pellegrino, R.; Viegi, G.; Brusasco, V.; Crapo, R.O.; Burgos, F.; Casaburi, R.; Coates, A.; Van Der Grinten, C.P.M.; Gustafsson, P.; Hankinson, J.; et al. Interpretative strategies for lung function tests. Eur. Respir. J. 2005, 26, 948–968.

28. World Health Organization (WHO). The Top 10 Causes of Death. Available online: https://www.who.int/news-room/fact-sheets/detail/the-top-10-causes-of-death (accessed on 6 July 2021).

29. Al-Naami, B.; Fraihat, H.; Al-Nabulsi, J.; Gharaibeh, N.Y.; Visconti, P.; Al-Hinnawi, A.R. Assessment of Dual Tree Complex Wavelet Transform to improve SNR in collaboration with Neuro-Fuzzy System for Heart Sound Identification. Electronics 2022, 11, 938.

30. Tseng, H.-J.; Veeraraghavan, S.; Henry, T.S.; Mittal, P.K.; Little, B.P. Pulmonary Function Tests for the Radiologist. RadioGraphics 2017, 37, 1037–1058.

31. COSMED Company for Pulmonary Function Equipment. Quark PFT: Innovative Modularity and Networking for Truly Customised PFT Solutions. Available online: https://www.cosmed.com/en/products/pulmonary-function/quark-pft (accessed on 6 July 2021).

32. Demuth, H.; Beale, M.; Hagan, M. Neural Network Toolbox 6: User’s Guide; MathWorks Inc.: Natick, MA, USA, 2017; Available online: http://www.mathworks.com (accessed on 14 June 2021).

33. Stathakis, D. How many hidden layers and nodes? Int. J. Remote Sens. 2009, 30, 2133–2147.

34. Kurkova, V. Kolmogorov’s theorem and multilayer neural networks. Neural Netw. 1992, 5, 501–506.

35. Huang, G.B. Learning capability and storage capacity of two-hidden-layer feedforward networks. IEEE Trans. Neural Netw. 2003, 14, 274–281.

36. Hecht-Nielsen, R. Kolmogorov’s mapping neural network existence theorem. In Proceedings of the IEEE First Annual International Conference on Neural Networks, San Diego, CA, USA, 21–24 June 1987; Volume III, pp. 11–14.

37. Zhu, W.; Zeng, N.; Wang, N. Sensitivity, specificity, accuracy, associated confidence interval and ROC analysis with practical SAS implementations. In Proceedings of the Health Care and Life Sciences NESUG 2010, Baltimore, MD, USA, 14–17 November 2010; pp. 1–9. Available online: https://www.lexjansen.com/nesug/nesug10/hl/hl07.pdf (accessed on 2 September 2020).

38. Al-Naami, B.; Fraihat, H.; Abu Owida, H.; Al-Hamad, K.; De Fazio, R.; Visconti, P. Automated Detection of Left Bundle Branch Block from ECG signal utilizing the Maximal Overlap Discrete Wavelet Transform with ANFIS. Computers 2022, 11, 93.

39. Topalovic, M.; Das, N.; Burgel, P.-R.; Daenen, M.; Derom, E.; Haenebalcke, C.; Janssen, R.; Kerstjens, H.A.; Liistro, G.; Louis, R.; et al. Artificial intelligence outperforms pulmonologists in the interpretation of pulmonary function tests. Eur. Respir. J. 2019, 53, 1801660.

40. Hoens, T.R.; Chawla, N.V. Imbalanced Datasets: From Sampling to Classifiers. In Imbalanced Learning: Foundations, Algorithms, and Applications, Chapter 3; He, H., Ma, Y., Eds.; Wiley-IEEE Press: Hoboken, NJ, USA, 2013; p. 216.

41. Kuhn, M.; Johnson, K. Remedies for Severe Class Imbalance. In Applied Predictive Modeling; Springer Science & Business Media: New York, NY, USA, 2013; pp. 419–443.

42. Guyon, I.; Elisseeff, A. An Introduction to Variable and Feature Selection. J. Mach. Learn. Res. 2003, 3, 1157–1182.

43. Kuhn, M.; Johnson, K. An Introduction to Feature Selection. In Applied Predictive Modeling; Springer Science & Business Media: New York, NY, USA, 2013; pp. 487–519.

| Group | Parameters |
|---|---|
| Group 1 | FVC, FEV1, (FEV1/FVC) %, FEF (25–75%) |
| Group 2 | Age, Weight, Height, Sex, Smoking/non-smoking |
| Group 3 | PEF, MEF25%, MEF50%, MEF75%, FEV0.5, FEV2, FEV3, PEFT, FEV2/FVC, FEV3/FVC, FEF25–50%, FEF50–75%, FEF75–85%, FEF0.2–1.2 |
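Before reaching the network, the 23 parameters of the three groups must be flattened into one numeric input vector per subject. The following is a minimal sketch under stated assumptions: each subject is held in a Python dictionary, the key names are hypothetical labels rather than the authors' variable names, and sex and smoking status are coded 0/1 (the coding actually used is not given here).

```python
# Input order follows the three parameter groups listed above (4 + 5 + 14 = 23).
# The key names are hypothetical labels, not the authors' variable names.
GROUP1 = ["FVC", "FEV1", "FEV1_FVC_pct", "FEF25_75"]
GROUP2 = ["age", "weight", "height", "sex", "smoker"]
GROUP3 = ["PEF", "MEF25", "MEF50", "MEF75", "FEV0_5", "FEV2", "FEV3",
          "PEFT", "FEV2_FVC_pct", "FEV3_FVC_pct", "FEF25_50", "FEF50_75",
          "FEF75_85", "FEF0_2_1_2"]
FEATURES = GROUP1 + GROUP2 + GROUP3


def encode(record):
    """Map one subject's PFT record (a dict) to a 23-element numeric vector."""
    vector = []
    for name in FEATURES:
        value = record[name]
        if name == "sex":
            value = 1.0 if value == "M" else 0.0  # assumed coding: men = 1
        elif name == "smoker":
            value = 1.0 if value else 0.0         # assumed coding: smoker = 1
        vector.append(float(value))
    return vector
```

Fixing the order in a single list keeps the position of each parameter identical for every subject, which is what a fully connected input layer requires.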

| Subset | Normal | Obstructive | Restrictive | Mixed |
|---|---|---|---|---|
| Training (75%) | 76 | 55 | 8 | 7 |
| Test (25%) | 26 | 19 | 6 | 3 |
| Total | 102 | 74 | 14 | 10 |
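A 75%/25% split that keeps all four diagnostic classes represented in both subsets can be obtained by splitting each class separately (stratification). The sketch below is one way to do this, not necessarily the authors' procedure; the floor rounding of the per-class training count and the fixed random seed are assumptions. Applied to the class totals listed above, it places 76 normal, 55 obstructive, 10 restrictive and 7 mixed cases in training, so it matches the table except for the restrictive class (8 in the table).

```python
import random
from collections import defaultdict


def stratified_split(labels, train_fraction=0.75, seed=0):
    """Return sorted (train_indices, test_indices), splitting each class separately.

    The per-class training count is floor(train_fraction * class size),
    which is an assumption, not the rule used to build the table above.
    """
    by_class = defaultdict(list)
    for i, y in enumerate(labels):
        by_class[y].append(i)

    rng = random.Random(seed)  # fixed seed so the split is reproducible
    train, test = [], []
    for _, indices in sorted(by_class.items()):
        rng.shuffle(indices)
        n_train = int(train_fraction * len(indices))
        train.extend(indices[:n_train])
        test.extend(indices[n_train:])
    return sorted(train), sorted(test)
```

Stratifying matters here because the restrictive and mixed classes are small; an unstratified random split could leave very few of them in the test subset.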

| # | PFT Examination Parameter | Normal (n = 103) Mean | Normal SD | Obstructive (n = 74) Mean | Obstructive SD | Restrictive (n = 14) Mean | Restrictive SD | Mixed (n = 10) Mean | Mixed SD |
|---|---|---|---|---|---|---|---|---|---|
| 1 | FVC (L) | 4.23 | 0.91 | 3.31 | 0.70 | 2.68 | 0.45 | 2.67 | 0.39 |
| 2 | FEV1 (L) | 3.14 | 0.72 | 1.91 | 0.49 | 2.14 | 0.40 | 1.93 | 0.37 |
| 3 | (FEV1/FVC)% | 74.06 | 5.36 | 57.45 | 5.68 | 79.71 | 6.10 | 71.56 | 7.96 |
| 4 | FEF25–75% (L/s) | 2.58 | 0.96 | 0.98 | 0.33 | 2.52 | 1.15 | 1.57 | 0.60 |
| 5 | Age (years) | 50.08 | 11.51 | 55.85 | 10.35 | 46.93 | 12.22 | 51.60 | 12.60 |
| 6 | Height (cm) | 167.72 | 7.74 | 166.14 | 5.93 | 164.86 | 8.63 | 170.70 | 7.10 |
| 7 | Weight (kg) | 78.70 | 12.73 | 76.43 | 14.59 | 73.36 | 16.41 | 75.30 | 10.58 |
| 8 | Sex, men (n, %) | 80 | 77.7% | 64 | 86.5% | 11 | 78.6% | 8 | 80.0% |
| 9 | Smokers (n, %) | 7 | 6.8% | 8 | 10.8% | 3 | 21.4% | 1 | 10.0% |
| 10 | PEF (L/s) | 7.10 | 1.65 | 4.67 | 1.16 | 6.43 | 1.71 | 5.08 | 0.82 |
| 11 | MEF25% (L/s) | 1.10 | 0.52 | 0.41 | 0.14 | 1.08 | 0.54 | 0.72 | 0.37 |
| 12 | MEF50% (L/s) | 3.32 | 1.11 | 1.23 | 0.43 | 3.16 | 1.39 | 1.91 | 0.75 |
| 13 | MEF75% (L/s) | 5.90 | 1.30 | 2.63 | 0.87 | 5.56 | 2.09 | 3.76 | 1.32 |
| 14 | FEV0.5 (L) | 2.35 | 0.53 | 1.34 | 0.35 | 1.74 | 0.37 | 1.47 | 0.34 |
| 15 | FEV2 (L) | 3.67 | 0.83 | 2.47 | 0.60 | 2.41 | 0.43 | 2.29 | 0.38 |
| 16 | FEV3 (L) | 3.89 | 0.86 | 2.77 | 0.64 | 2.52 | 0.46 | 2.44 | 0.37 |
| 17 | PEFT (ms) | 98.69 | 32.50 | 66.50 | 44.65 | 78.95 | 32.33 | 67.94 | 30.88 |
| 18 | (FEV2/FVC)% | 86.59 | 4.16 | 74.46 | 4.80 | 89.74 | 3.56 | 84.86 | 5.85 |
| 19 | (FEV3/FVC)% | 91.86 | 3.20 | 83.54 | 4.07 | 93.75 | 2.66 | 90.84 | 4.28 |
| 20 | FEF25–50% (L/s) | 4.42 | 1.22 | 1.74 | 0.59 | 4.24 | 1.75 | 2.64 | 1.04 |
| 21 | FEF50–75% (L/s) | 1.86 | 0.78 | 0.69 | 0.24 | 1.82 | 0.88 | 1.15 | 0.49 |
| 22 | FEF75–85% (L/s) | 0.72 | 0.37 | 0.31 | 0.10 | 0.63 | 0.33 | 0.49 | 0.26 |
| 23 | FEF0.2–1.2 (L/s) | 6.07 | 1.57 | 2.78 | 1.24 | 5.34 | 1.92 | 3.42 | 1.46 |
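The descriptive statistics above show that the inputs lie on very different scales (FEV1 around 2–3 L, height around 165–171 cm, ratios around 57–94%). Whether and how the authors rescaled the inputs is not stated in this section; a common choice for MLP inputs, assumed here, is per-feature z-scoring, with the mean and sample SD estimated on the training subset only so that test cases do not influence preprocessing. A minimal sketch:

```python
import statistics


def fit_standardizer(train_rows):
    """Per-feature mean and sample SD, estimated on training rows only."""
    columns = list(zip(*train_rows))
    means = [statistics.mean(c) for c in columns]
    # A constant feature has SD 0; fall back to 1.0 to avoid division by zero.
    sds = [statistics.stdev(c) or 1.0 for c in columns]
    return means, sds


def standardize(rows, means, sds):
    """Apply (x - mean) / SD feature by feature, using training statistics."""
    return [[(x - m) / s for x, m, s in zip(row, means, sds)] for row in rows]
```

The same `means` and `sds` fitted on the training rows would then be reused unchanged for the test rows.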

| # | BP Algorithm | Neurons in Hidden Layer 1 | Neurons in Hidden Layer 2 | Epochs | Learning Rate | Accuracy (Training) | Accuracy (Test) |
|---|---|---|---|---|---|---|---|
| 1 | Levenberg–Marquardt (LM) | 7 | 21 | 21 | <0.01 | 0.99 | 0.90 |
| 2 | Bayesian Regularization (BR) | 6 | 24 | 20 | 2.36 | 0.96 | 0.89 |
| 3 | Resilient Back Propagation (RBP) | 47 | 16 | 60 | 0.01 | 0.98 | 0.89 |
| 4 | Scaled Conjugate Gradient (SCG) | 47 | 28 | 55 | <0.01 | 0.96 | 0.87 |
| 5 | Polak–Ribière Conjugate Gradient (CGP) | 36 | 18 | 47 | 0.01 | 0.97 | 0.87 |
| 6 | Powell–Beale Conjugate Gradient (CGB) | 45 | 30 | 42 | 0.01 | 0.97 | 0.92 |
| 7 | Fletcher–Reeves Conjugate Gradient (CGF) | 43 | 19 | 31 | 0.01 | 0.96 | 0.90 |
| 8 | One Step Secant (OSS) | 4 | 28 | 43 | 0.01 | 0.96 | 0.89 |
| 9 | Gradient Descent with Momentum and Adaptive Learning Rate (GDX) | 50 | 15 | 158 | 2.62 | 0.96 | 0.88 |
| 10 | Gradient Descent with Adaptive Learning Rate (GDA) | 64 | 4 | 212 | 0.68 | 0.94 | 0.90 |
| 11 | Gradient Descent (GD-1) | 24 | 29 | 1000 | 0.01 | 0.92 | 0.87 |
| 12 | Sequential Order Incremental Training with Learning Functions (SOIT) | 25 | 10 | 1000 | NA | 0.92 | 0.88 |
| 13 | Batch Training with Weight and Bias Learning Rules (BT) | 31 | 30 | 1000 | NA | 0.92 | 0.87 |
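All thirteen training algorithms above fit the same kind of network: 23 inputs, two fully connected hidden layers and one output per diagnostic class. The sketch below shows only that architecture and its forward pass; it does not implement any of the listed training algorithms. The tanh hidden activation, softmax output and uniform random initialisation are assumptions for illustration, not settings confirmed by the table.

```python
import math
import random


def init_mlp(sizes, seed=0):
    """Random weights and zero biases for a fully connected net, e.g. [23, 7, 21, 4]."""
    rng = random.Random(seed)
    layers = []
    for n_in, n_out in zip(sizes[:-1], sizes[1:]):
        W = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]
        b = [0.0] * n_out
        layers.append((W, b))
    return layers


def n_parameters(sizes):
    """Number of trainable weights plus biases."""
    return sum(n_in * n_out + n_out for n_in, n_out in zip(sizes[:-1], sizes[1:]))


def forward(layers, x):
    """tanh in the hidden layers, softmax over the class outputs (both assumed)."""
    for k, (W, b) in enumerate(layers):
        z = [sum(w * xi for w, xi in zip(row, x)) + bj for row, bj in zip(W, b)]
        if k < len(layers) - 1:
            x = [math.tanh(v) for v in z]
        else:
            m = max(z)  # subtract the maximum for numerical stability
            e = [math.exp(v - m) for v in z]
            s = sum(e)
            x = [v / s for v in e]
    return x
```

For example, the LM configuration (7 and 21 hidden neurons) has `n_parameters([23, 7, 21, 4])` = 424 trainable weights and biases, and the CGB configuration (45 and 30) has 2584, compared with 146 training cases in the data split.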

| # | BP Algorithm | Accuracy | Sensitivity | Specificity | PPV | NPV | AUC |
|---|---|---|---|---|---|---|---|
| 1 | Levenberg–Marquardt (LM) | 0.90 | 0.71 | 0.92 | 0.73 | 0.92 | 0.88 |
| 2 | Bayesian Regularization (BR) | 0.89 | 0.76 | 0.92 | 0.55 | 0.91 | 0.94 |
| 3 | Resilient Back Propagation (RBP) | 0.89 | 0.54 | 0.92 | 0.50 | 0.91 | 0.93 |
| 4 | Powell–Beale Conjugate Gradient (CGB) | 0.92 | 0.81 | 0.94 | 0.63 | 0.93 | 0.93 |
| 5 | One Step Secant (OSS) | 0.89 | 0.57 | 0.92 | 0.55 | 0.91 | 0.94 |
| 6 | Gradient Descent with Adaptive Learning Rate (GDA) | 0.90 | 0.77 | 0.93 | 0.57 | 0.92 | 0.90 |
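With four classes, sensitivity, specificity, PPV and NPV are defined one class at a time (that class versus the rest) and then combined. The section does not state how the per-class values in the table were combined, so the sketch below assumes an unweighted (macro) average over the classes, computed from a confusion matrix with true classes in rows and predicted classes in columns. AUC is omitted because it needs per-case output scores, not only the confusion matrix.

```python
import statistics


def one_vs_rest_metrics(cm):
    """cm[i][j] = number of cases of true class i predicted as class j.

    Returns overall accuracy and macro-averaged (assumed) sensitivity,
    specificity, PPV and NPV from one-vs-rest counts for each class.
    """
    k = len(cm)
    total = sum(sum(row) for row in cm)
    correct = sum(cm[i][i] for i in range(k))
    sens, spec, ppv, npv = [], [], [], []
    for c in range(k):
        tp = cm[c][c]
        fn = sum(cm[c]) - tp                        # class c predicted as another class
        fp = sum(cm[r][c] for r in range(k)) - tp   # other classes predicted as c
        tn = total - tp - fn - fp
        sens.append(tp / (tp + fn) if tp + fn else 0.0)
        spec.append(tn / (tn + fp) if tn + fp else 0.0)
        ppv.append(tp / (tp + fp) if tp + fp else 0.0)
        npv.append(tn / (tn + fn) if tn + fn else 0.0)
    return {
        "accuracy": correct / total,
        "sensitivity": statistics.mean(sens),
        "specificity": statistics.mean(spec),
        "PPV": statistics.mean(ppv),
        "NPV": statistics.mean(npv),
    }
```

Because the small restrictive and mixed classes weigh as much as the normal class in a macro average, a few errors on them can pull sensitivity and PPV well below overall accuracy.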

| Author/s | ANN Method | Features | No. of Features | Medical Decision | Samples | Accuracy [%] |
|---|---|---|---|---|---|---|
| Veezhinathan et al. [6] | RBF | FVC, FEV1, FEV1%, PEF, 3 pressures, 3 resistances | 10 | Normal and obstructive | 100 | 90 |
| Baemani et al. [11] | MLP | FVC, FEV1, FEV1%, PEF, FEF25–75%, age, height, weight, sex, smoker, race | 11 | Normal, obstructive, and restrictive | 250 | 92.3 |
| Manoharan et al. [7] | RBF/MLP | FVC, FEV1, FEV1%, PEF, FEF75%, 5 anthropometric, and 5 percentage values | 15 | Normal and abnormal | 150 | 100/96 |
| Sahin et al. [21] | SVM | FVC, FEV1, FEV1% | 3 | Normal, obstructive, and restrictive | 499 | 97.3 |
| Jafari et al. [12] | MLP (LM) | Predicted (FVC, FEV1, FEV1%, and PEF) + 6 fitted-curve coefficients | 10 | Normal, obstructive, restrictive, and mixed | 205 | 97.6 |
| Hakan et al. [17] | MLP | FVC, FEV1, FEV1%, FEF25–75%, PEF | 5 | Normal, obstructive, and restrictive | 486 | 98.7 |
| Badnjevic et al. [13] | MLP (LM) + Fuzzy | FVC, FEV1, FEV1%, resistance, reactance, frequency (using IOS *) | 6 | Normal, COPD, and asthma | 455 | 99.5 |
| Spathis and Vlamos [22] | NN, NB, LogR, SVM, KNN, RFC | FEV1, FVC, FEV1%, PEF, MEF25/50/75/25–75, sex, smoking, pulse, O2 sat., age, and 9 symptoms | 13 | Asthma and COPD | 132 | 89 |
| Badnjevic et al. [14] | MLP | FVC, FEV1, FEV1%, VC, probability of disease | 5 | Normal, COPD, and asthma | ~5300 | 98.7 |
| Topalovic et al. [4,39] | Decision Tree | FEV1, FVC, FEV1%, PEF, FEF25/50/75/25–75, Raw, sGaw, VC, RV, TGV, TLC, DLco, Kco, age, smoking, CAT, gender, BMI | 21 | Asthma, COPD, OBD, NMD, TD, ILD, PVD, N | 1430 + 50 + 136 | 82 |
| Iadanza et al. [10] | RBNN + SVM + C5.0 | FEV1, FVC, SVC, FEV1%, FEV1/SVC, FEF25–75%, PEF, VC, TLC, RV, FRC, ERV, DLco, VA, DLco/VA, height, weight, sex, age | 19 | Mild, moderate, severe COPD | 414 | 94.5 |
| Ioachimescu et al. [15] | MLP | Percent predicted (FVC, FEV1 & FEV1%) + sqrt AEX ** | 4 | Normal, obstructive, restrictive, and mixed | 15,308 | 83.5, 91.6 |
| Bodduluri et al. [24] | FCN + RFC | FEV1/FVC, FEV1 pred. | 3 | Normal, airway disease, emphysema, mixed | 8980 | 80 (normal), 78 (airway disease), 78 (emphysema), 91 (mixed) |
| Kalantary et al. [16] | MLP DSS | Gender, age, weight, stature, body mass index, smoking, type of work, fat mass, fat free mass, and work history | 10 | Normal and abnormal | 130 | 93.6 (train), 84.6 (test), 91.5 (all) |
| This research work | MLP | 23 parameters as specified by ATS and ERS (Table 1) | 23 | Normal, obstructive, restrictive, and mixed | 201 | 92–99 (training), 87–92 (test) |

\* IOS: impulse oscillometry system. \*\* AEX: area under the expiratory flow–volume curve.

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Almazloum, A.A.; Al-Hinnawi, A.-R.; De Fazio, R.; Visconti, P. Assessment of Multi-Layer Perceptron Neural Network for Pulmonary Function Test’s Diagnosis Using ATS and ERS Respiratory Standard Parameters. Computers 2022, 11, 130. https://doi.org/10.3390/computers11090130
