2D Normalized Iterative Hard Thresholding Algorithm for Fast Compressive Radar Imaging

Abstract

1. Introduction

2. Brief Introduction of Compressive Sensing and the NIHT Algorithm

2.1. Compressive Sensing

2.2. NIHT Algorithm

3. Radar Imaging Model Based on Compressive Sensing

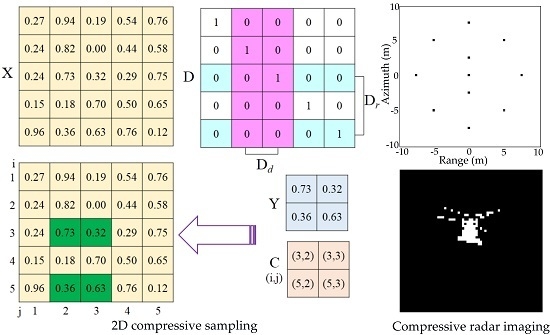

3.1. 2D Compressive Sensing

3.2. Compressive Radar Imaging Model

4. 2D-NIHT Algorithm

4.1. Description of the 2D-NIHT Algorithm

| Algorithm 1. 2D normalized iterative hard thresholding algorithm. |

| Input: , , , . |

| Initialize: , . |

| Iterate for , until the stopping criterion is met: |

| Step 1. ; |

| Step 2. ; |

| Step 3. ; |

| Step 4. ; |

| Step 5. If is equal to , then go to Step 6; otherwise |

| set , |

| and repeat the following procedures until : |

| , , |

| ; |

| Step 6. ; |

| Step 7. ; |

| Step 8. . |

| Output: . |
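As a rough illustration of what a 2D normalized iterative hard thresholding loop can look like, the following sketch applies the standard NIHT update (gradient step, adaptive step size, hard thresholding, and a step-size check when the support changes) to an assumed separable measurement model Y = A X Bᵀ with a K-sparse real matrix X. The model, the function names `hard_threshold` and `niht_2d`, the symbols `A`, `B`, `c`, `kappa`, and all default values are assumptions made for illustration; they are not the notation or the exact steps of Algorithm 1.

```python
import numpy as np


def hard_threshold(X, K):
    """Keep the K largest-magnitude entries of X and zero the rest.

    Returns the thresholded matrix and a boolean mask of the kept entries.
    """
    flat = np.abs(X).ravel()
    keep = np.argpartition(flat, -K)[-K:]
    mask = np.zeros(X.size, dtype=bool)
    mask[keep] = True
    mask = mask.reshape(X.shape)
    return X * mask, mask


def niht_2d(Y, A, B, K, n_iter=200, c=0.01, kappa=2.0, tol=1e-10):
    """Sketch of NIHT for the assumed model Y = A @ X @ B.T (real-valued).

    c and kappa are the usual NIHT step-size safeguards; the defaults here
    are illustrative assumptions.
    """
    X = np.zeros((A.shape[1], B.shape[1]))
    # Initial support: K largest entries of the back-projected measurements.
    _, support = hard_threshold(A.T @ Y @ B, K)

    for _ in range(n_iter):
        R = Y - A @ X @ B.T                      # residual in measurement space
        if np.linalg.norm(R) <= tol * np.linalg.norm(Y):
            break
        G = A.T @ R @ B                          # negative gradient direction
        G_s = G * support                        # restricted to current support
        denom = np.linalg.norm(A @ G_s @ B.T) ** 2
        if denom == 0.0:
            break                                # no usable direction on the support
        mu = np.linalg.norm(G_s) ** 2 / denom    # normalized step size
        X_new, new_support = hard_threshold(X + mu * G, K)

        # If the support changed, shrink mu until the step-size bound holds.
        while not np.array_equal(new_support, support):
            D = X_new - X
            step_norm = np.linalg.norm(A @ D @ B.T) ** 2
            if step_norm == 0.0 or mu <= (1 - c) * np.linalg.norm(D) ** 2 / step_norm:
                break
            mu /= kappa * (1 - c)
            X_new, new_support = hard_threshold(X + mu * G, K)

        X, support = X_new, new_support
    return X
```

Working directly with the matrices `A` and `B` avoids forming the Kronecker-product sensing matrix that a vectorized 1D formulation of the same model would need, which is the usual motivation for a 2D variant.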

4.2. Convergence of the 2D-NIHT Algorithm

5. Results

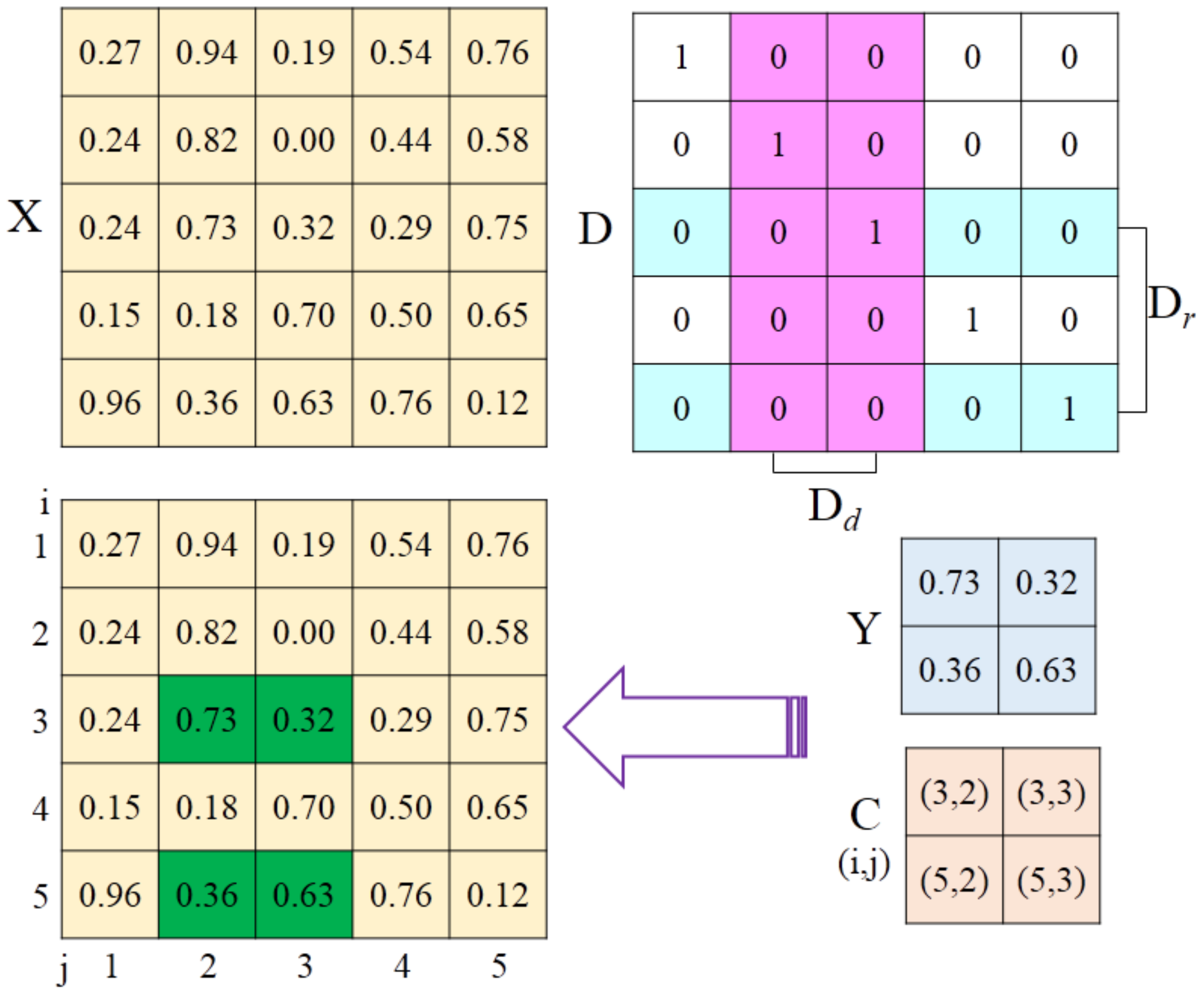

5.1. Experiments on Synthetic Images

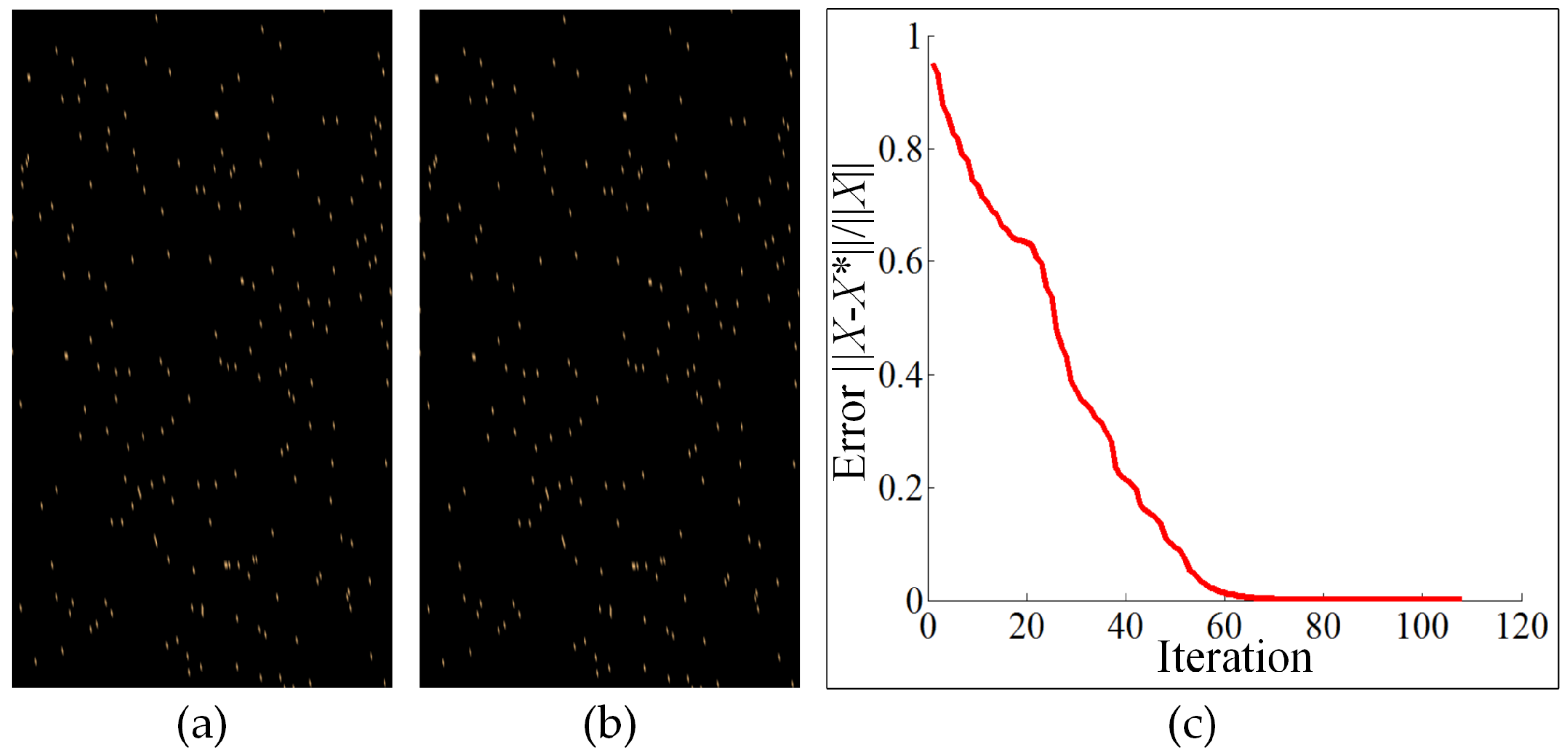

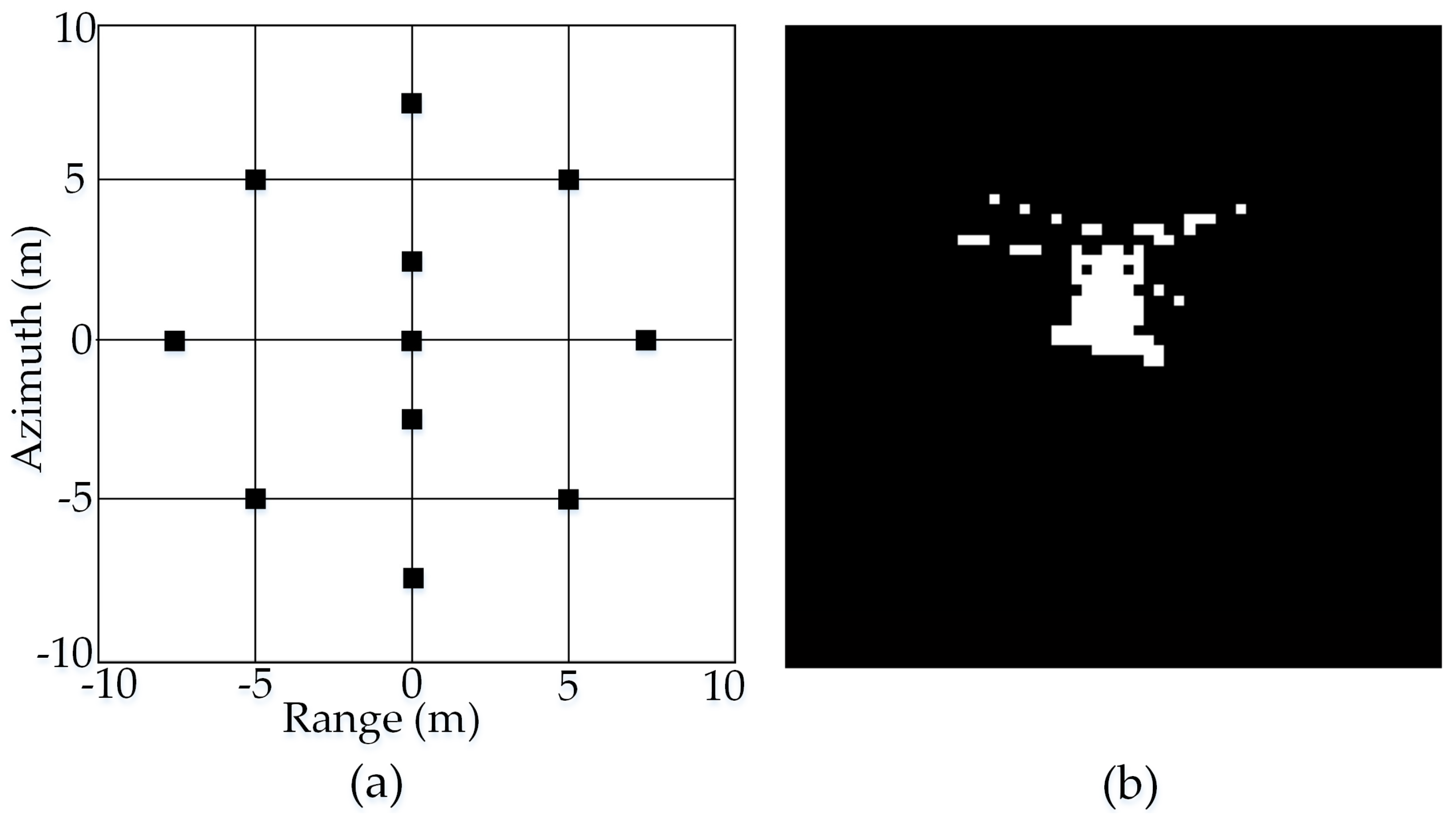

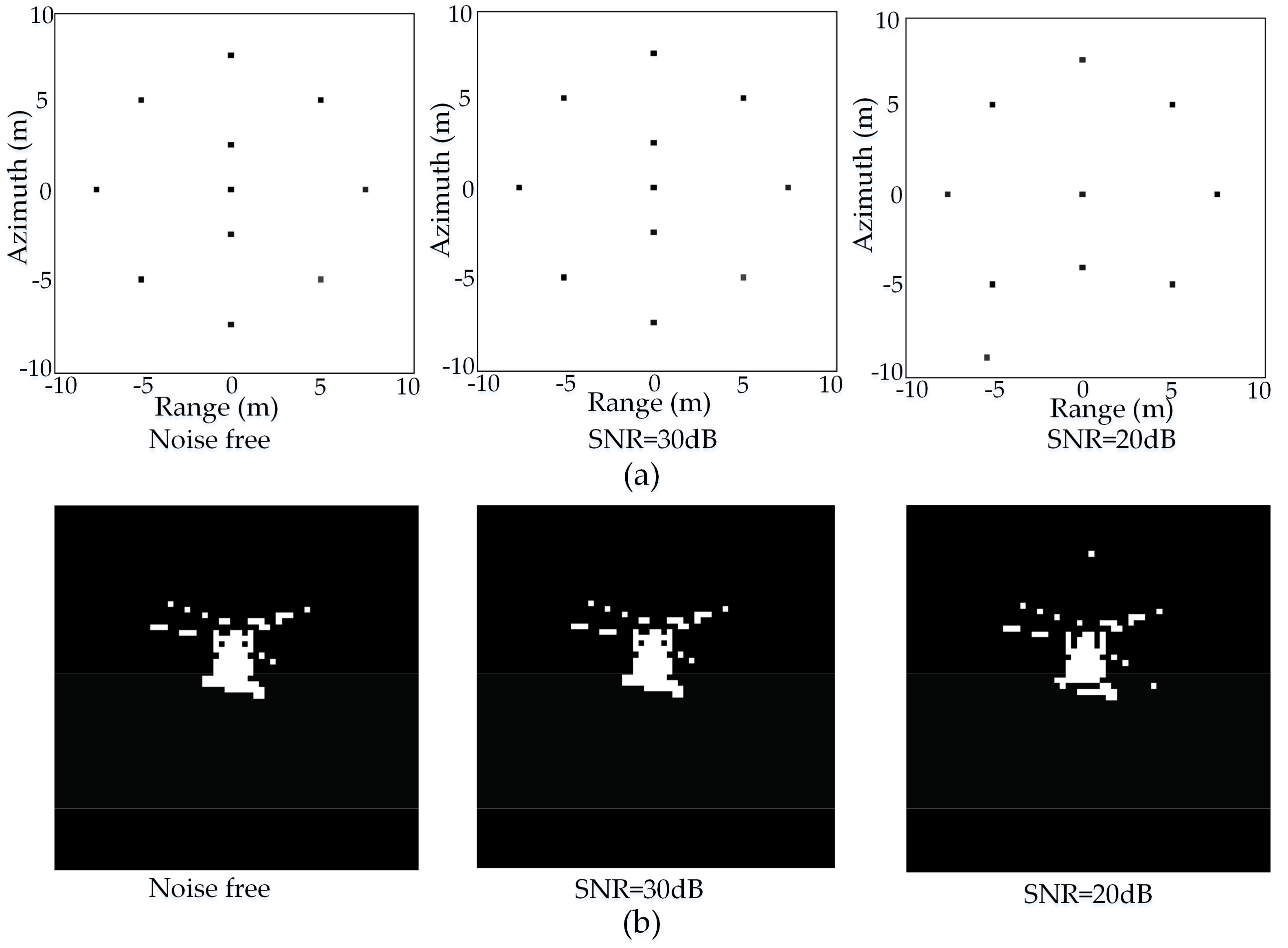

5.1.1. Efficiency of the 2D-NIHT Algorithm in 2D Signal Reconstruction

5.1.2. Comparison with the NIHT Algorithm

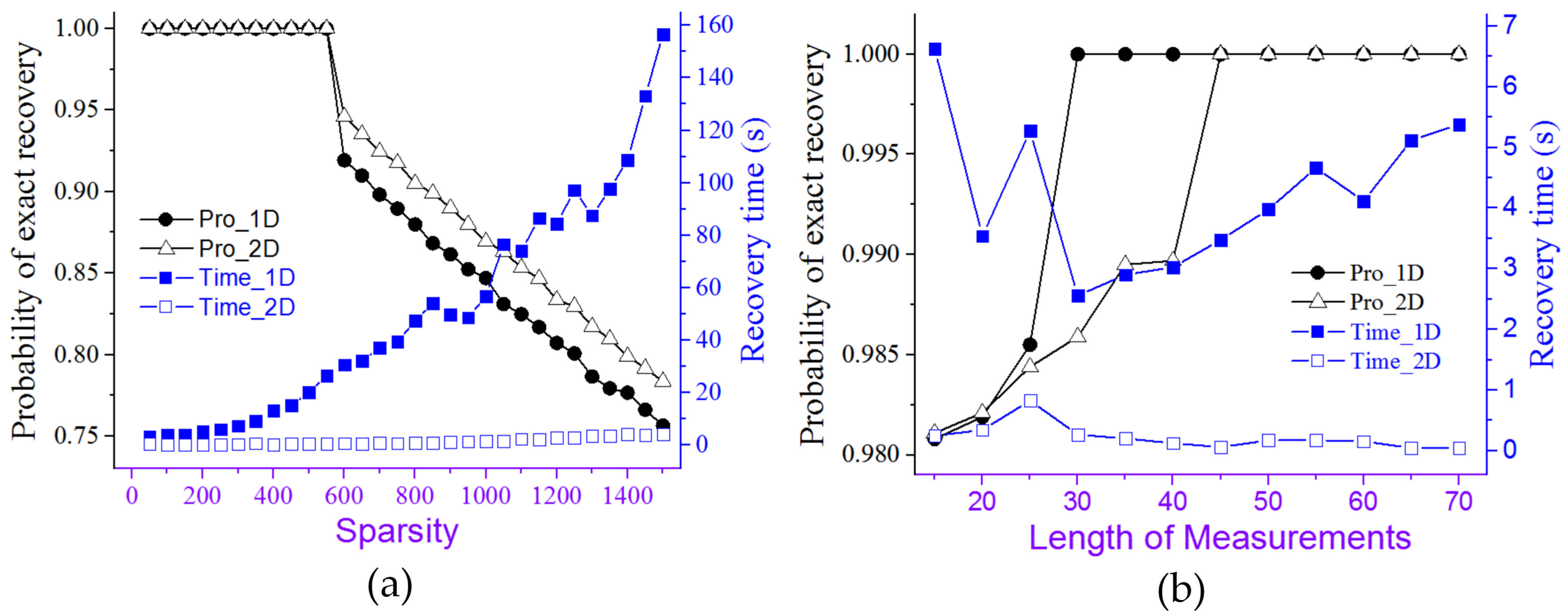

5.2. Experiments on Actual Radar Images

6. Conclusions

Acknowledgments

Author Contributions

Conflicts of Interest

Appendix A


| Image | Metric | CoSaMP | SL0 | BCS | NIHT | 2D-NIHT |
|---|---|---|---|---|---|---|
| 11 point targets | Recovery time (s) | 0.069 ± 0.134 | 30.80 ± 1.64 | 0.049 ± 0.024 | 0.629 ± 0.115 | 0.055 ± 0.037 |
| 11 point targets | Probability of exact recovery | 1 | 1 | 0.652 ± 0.038 | 1 | 1 |
| helicopter | Recovery time (s) | 128.79 ± 3.495 | 34.679 ± 0.902 | 0.172 ± 0.044 | 5.176 ± 0.13 | 0.237 ± 0.139 |
| helicopter | Probability of exact recovery | 0.976 | 1 | 0.476 ± 0.020 | 0.976 | 1 |
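
For a quick reading of the recovery-time rows in the table above, the following sketch divides each baseline's mean time by the 2D-NIHT mean time. The dictionary layout and the helper name `speedup_over` are illustrative assumptions; the numbers are the reported means, and the ratios ignore the reported standard deviations.

```python
# Mean recovery times in seconds, copied from the table above.
recovery_time = {
    "11 point targets": {
        "CoSaMP": 0.069, "SL0": 30.80, "BCS": 0.049, "NIHT": 0.629, "2D-NIHT": 0.055,
    },
    "helicopter": {
        "CoSaMP": 128.79, "SL0": 34.679, "BCS": 0.172, "NIHT": 5.176, "2D-NIHT": 0.237,
    },
}


def speedup_over(baseline, method="2D-NIHT"):
    """Ratio of the baseline's mean time to the method's mean time, per image."""
    return {
        image: times[baseline] / times[method]
        for image, times in recovery_time.items()
    }


for baseline in ("NIHT", "SL0"):
    for image, ratio in speedup_over(baseline).items():
        print(f"{baseline} vs 2D-NIHT on {image}: {ratio:.1f}x")
```

On these means, 2D-NIHT is roughly 11 times faster than NIHT on the 11 point targets image and roughly 22 times faster on the helicopter image.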

© 2017 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Li, G.; Yang, J.; Yang, W.; Wang, Y.; Wang, W.; Liu, L. 2D Normalized Iterative Hard Thresholding Algorithm for Fast Compressive Radar Imaging. Remote Sens. 2017, 9, 619. https://doi.org/10.3390/rs9060619
