1. Introduction

Advances in remote sensing platforms and Earth observation technologies have markedly improved the spatial resolution of remote sensing (RS) imagery, enabling finer and more reliable observations of the Earth’s surface. Semantic segmentation, which assigns a semantic label to each pixel, has become a core technique for fine-grained RS scene understanding. It supports precise identification and delineation of geographic objects and serves as a fundamental tool in a wide range of applications, including road extraction [1,2,3], traffic monitoring [4,5], urban planning [6,7,8], land cover classification [9,10,11], and disaster assessment [12,13].

Driven by rapid advances in deep learning and the increasing availability of large-scale, annotated datasets, automatic segmentation of RS imagery has achieved remarkable success in recent years. However, despite these advances, accurately segmenting geospatial objects in high-resolution RS images remains a significant challenge, primarily due to several inherent characteristics of RS data.

Complex Background: RS images often exhibit highly complex and heterogeneous backgrounds, where target objects are frequently embedded in or obscured by large, cluttered surroundings. This complexity often leads to higher false-positive rates in segmentation. In certain datasets, irrelevant background regions may occupy more than 90% of the total image area [14].

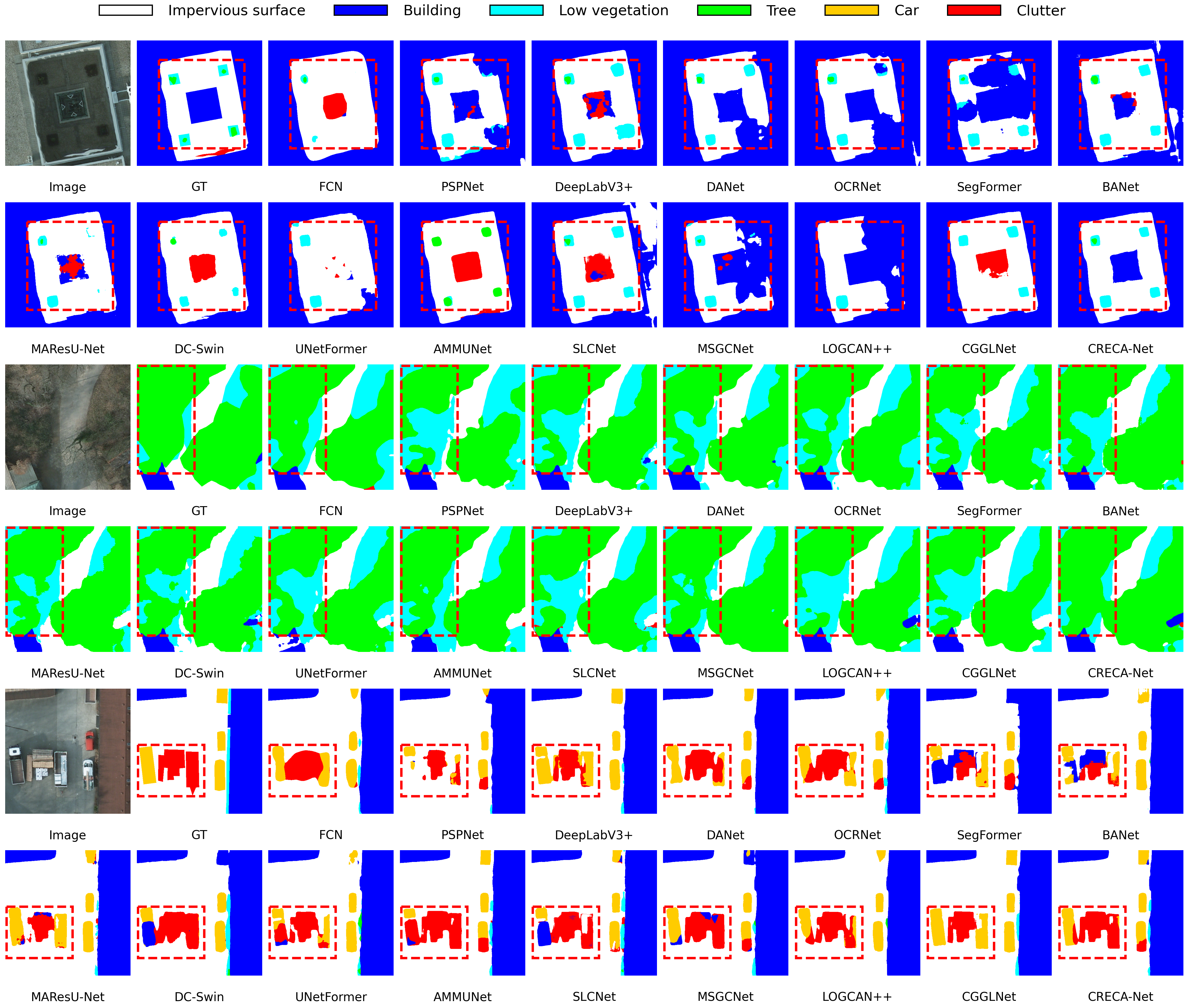

Large Intra-Class Variance and Small Inter-Class Variance: Although fine spatial resolution provides richer visual cues, such as detailed textures, shapes, and subtle color variations, it also exacerbates intra-class diversity while reducing inter-class separability. This issue is further intensified by structural ambiguity and boundary uncertainty arising from complex geographic layouts and the top-down imaging perspective. Consequently, distinguishing visually similar and easily confusable categories becomes particularly challenging in high-resolution RS imagery. For instance, objects of the same category (e.g., cars) may differ considerably in size, color, and orientation even within a single scene, while similar categories (e.g., trees vs. low vegetation or rooftops vs. ground surfaces) are often difficult to distinguish due to highly similar spectral or textural properties. As illustrated in Figure 1, these factors collectively contribute to large intra-class variance (yellow arrows) and small inter-class variance (blue arrows), making purely appearance-based feature discrimination inadequate. To address these challenges, numerous studies [15,16,17,18] have attempted to improve segmentation accuracy by aggregating contextual information.

Existing context aggregation methods in semantic segmentation can be broadly categorized into two paradigms: spatial and relational context aggregation.

Spatial context aggregation methods commonly expand the receptive field by capturing multi-scale spatial contexts. Representative approaches such as PSPNet [15] and the DeepLab series [16,19,20] exploit pyramid pooling and dilated convolutions to aggregate contextual information from multiple spatial scales. Although effective, these methods rely on fixed isotropic receptive windows that aggregate contextual information in a category-agnostic manner, thereby indiscriminately integrating category-relevant and irrelevant regions. As a result, the aggregated context becomes susceptible to interference from background noise and visually similar yet semantically different categories, a problem that is particularly pronounced in RS imagery characterized by cluttered scenes and subtle inter-class differences.

To overcome these limitations, relational context aggregation methods employ attention mechanisms to adaptively model long-range dependencies, allowing contextual cues to be selected based on semantic relevance rather than spatial proximity. According to the type of relationships, these methods can be categorized into pixel-level and class-level relational modeling methods.

Pixel-level relational modeling explicitly computes pairwise dependencies among all pixels to perform dynamic feature aggregation. For example, NonLocal [17] constructs a pairwise affinity matrix to capture global dependencies, while DANet [21] introduces spatial and channel attention mechanisms to refine pixel representations. Transformer-based segmentation frameworks [22,23,24,25] generalize this idea by applying self-attention to image tokens or patches, enabling powerful global contextual reasoning. Related efforts have also explored Transformer-based and Transformer-inspired models for cross-view feature matching [26,27], providing valuable methodological insights for multimodal semantic segmentation [28,29]. However, the quadratic computational and memory complexity of full attention limits their scalability to high-resolution RS imagery. Although sparse attention variants [30,31] and efficient Transformer architectures [32,33,34] alleviate computational burdens through structured or hierarchical attention mechanisms, they still operate at the pixel–pixel or token–token level, failing to explicitly model class-level semantic structures. Consequently, in complex RS scenes with subtle inter-class differences, the absence of such class-aware guidance may lead the model to aggregate context from visually similar yet semantically irrelevant regions, ultimately degrading segmentation performance.

In contrast, class-level context modeling aims to suppress interference from irrelevant or confusing regions and enhance feature discriminability by explicitly capturing the relationships between pixels and class-level semantic representations. Representative approaches such as ACFNet [35] and OCRNet [18] generate class centers (or object region representations) either by averaging the embeddings of pixels assigned to each predicted class or by aggregating all pixel features using coarse segmentation probability maps. While effective in natural image segmentation, these strategies encounter notable limitations when applied to high-resolution RS imagery, which typically features complex backgrounds, low inter-class variability, and large intra-class variability. Under these conditions, existing class-level context modeling approaches encounter two fundamental limitations.

Inaccurate and weakly discriminative class prototypes: Under these challenging characteristics of RS imagery, class prototypes tend to become highly sensitive to noise, prediction errors, and ambiguous regions. Simple averaging (Figure 2a) assigns equal importance to all pixels and disregards variations in pixel confidence and representativeness, often leading to biased class prototypes. Although probability-weighted aggregation (Figure 2b) partially alleviates this issue, the soft aggregation of all pixels inevitably allows non-target pixels to contribute to class prototypes, potentially contaminating the learned representations. Moreover, most existing methods lack explicit inter-class separation constraints, leading to insufficiently discriminative class prototypes, an issue particularly pronounced in RS imagery with inherently low inter-class separability.

Insufficient optimization of hard samples: These characteristics of RS imagery also lead to highly uneven pixel-wise segmentation difficulty. Boundary pixels, occluded regions, and pixels belonging to visually similar categories are significantly harder to classify than those in homogeneous regions. Despite this inherent imbalance, class-level context modeling frameworks are usually trained using the standard cross-entropy (CE) loss, which implicitly assumes uniform segmentation difficulty across pixels. Consequently, abundant easy pixels dominate the optimization process, while hard samples receive inadequate supervision, leading to biased feature learning and reduced robustness in complex RS scenes.

To address these challenges, we propose CRECA-Net, a class representation-enhanced class-aware segmentation framework that simultaneously improves class prototypes and strengthens hard sample learning. Concretely, CRECA-Net integrates three key components: a class prototype refinement (CPR) module, which produces more reliable and discriminative prototypes by applying pixel selection, confidence-aware contribution weighting, and inter-class prototype separation regularization; a set of class-level context aggregation (CLCA) modules, which explicitly model pixel-to-class prototype correlations via cross-attention and progressively inject class-aware context into decoder features; and a difficulty-aware (DA) loss that dynamically estimates pixel-wise segmentation difficulty and redistributes the intra-image loss to emphasize hard samples.

Our main contributions are summarized as follows:

We present CRECA-Net, a class-aware segmentation framework that simultaneously enhances class representation learning and difficulty-adaptive optimization, effectively alleviating segmentation difficulty arising from low inter-class separability in high-resolution RS imagery.

We propose a CPR module that constructs reliable and discriminative class prototypes through pixel selection, confidence-aware contribution weighting, and inter-class prototype separation regularization, thereby mitigating prototype bias and facilitating more effective class-level context modeling in subsequent CLCA modules.

We introduce a DA loss that dynamically estimates pixel-wise difficulty and adjusts the intra-image loss distribution to strengthen hard-sample learning, resulting in more stable optimization and enhanced robustness. Extensive experiments on two benchmark datasets demonstrate that CRECA-Net achieves consistent performance improvements with minimal computational overhead.

The remainder of this paper is organized as follows: Section 2 reviews related work. Section 3 presents the architecture of CRECA-Net and details its key components. Section 4 describes the datasets, evaluation metrics, and experimental setup. Section 5 reports the comparative and ablation studies. Finally, Section 6 and Section 7 present the discussion of experimental results and the conclusion with future research directions, respectively.

3. Proposed Method

3.1. Overall Architecture

The overall architecture of the proposed CRECA-Net is illustrated in Figure 3. The network follows a classical encoder-decoder structure with skip connections to balance global context modeling and spatial detail preservation. We employ a ResNet-50 [62] backbone, pre-trained on ImageNet [63], as the encoder for hierarchical feature extraction. Given an input RS image I, the encoder generates four multi-scale feature maps with spatial resolutions of 1/4, 1/8, 1/16, and 1/32 of the input size, respectively. In the decoder, all feature maps are first projected into a unified channel dimension via a convolution. Next, we introduce a CPR module that operates on the deepest feature map to generate refined class prototypes C. These prototypes then guide top-down feature refinement through four class-level context aggregation (CLCA) modules, one per decoder stage. Specifically, the CLCA module at the deepest stage takes the deepest feature map as input and outputs an enhanced representation. This representation is fused with the next higher-resolution feature map and processed by the CLCA module of that stage to obtain the corresponding enhanced feature. The same refinement procedure is subsequently applied through the two remaining CLCA modules, yielding progressively enriched features that incorporate both semantic cues and spatial details. Finally, all enhanced feature maps are upsampled to a uniform spatial resolution and aggregated through element-wise summation, followed by a final convolution to produce the segmentation prediction. The entire network is trained end-to-end using a joint objective that combines DA loss and inter-class prototype separation loss, enabling more effective learning from hard samples while encouraging discriminative prototype formation.

3.2. Class Prototype Refinement Module

High-resolution RS images often contain visually similar objects from different categories, such as trees vs. low vegetation, or buildings vs. impervious surfaces, resulting in low inter-class variance. Complex backgrounds further aggravate this issue, making it challenging for existing class prototype estimation methods to derive accurate and well-separated class prototypes. Since reliable prototypes are crucial for constructing a discriminative embedding space and guiding class-aware context reasoning, we introduce the CPR module to refine class representations from three complementary aspects: pixel selection, confidence-aware contribution weighting, and inter-class prototype separation. These strategies collectively improve the accuracy, representativeness, and discriminability of class prototypes, thus enabling subsequent CLCA modules to perform more precise class-aware context aggregation.

3.2.1. Pixel Selection

Pixel selection improves the reliability of class prototype estimation by restricting the pixels used for prototype computation. Instead of estimating class prototypes through probability-weighted averaging over all pixels, as commonly adopted in prior work, we retain only those pixels predicted as class k. This selective mechanism reduces cross-class interference and ensures that each prototype is computed from semantically consistent feature regions.

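As shown in Figure 3b, given the deepest feature map $F \in \mathbb{R}^{d \times H \times W}$, where $d$ is the channel dimension and $H$ and $W$ denote its spatial height and width, respectively, a classification head $\mathcal{H}$ implemented using a convolution projects $F$ into the class space, producing the pre-classification logits:

$$D = \mathcal{H}(F) \in \mathbb{R}^{K \times H \times W}, \tag{1}$$

where $K$ denotes the number of semantic categories. A coarse segmentation mask $M$ is then obtained by assigning each pixel to the class with the highest logit response along the category dimension. This mask is subsequently supervised using the proposed DA loss, as described in Section 3.4:

$$M_{(x,y)} = \arg\max_{c \in \{1,\dots,K\}} D_{c,(x,y)}, \tag{2}$$

where $M_{(x,y)}$ denotes the predicted class label for pixel $(x,y)$. Based on $M$, the feature map $F$ is partitioned into class-specific regions $F^{k}$, each containing the pixel features predicted as class $k$:

$$F^{k} = \{F_{(x,y)} \mid M_{(x,y)} = k\} \in \mathbb{R}^{N_k \times d}, \tag{3}$$

where $N_k$ is the number of pixels assigned to class $k$. Similarly, the pre-classification logits $D$ are grouped into class-specific subsets:

$$D^{k} = \{D_{(x,y)} \mid M_{(x,y)} = k\} \in \mathbb{R}^{N_k \times K}. \tag{4}$$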

This pixel grouping strategy ensures that each class prototype is computed from semantically coherent and prediction-consistent regions. Consequently, the estimated prototypes better capture the intrinsic characteristics of each category and are more robust to interference from visually similar but semantically distinct classes.
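The grouping step can be illustrated with a minimal sketch in plain Python. It assumes that features and logits have already been flattened into per-pixel lists; the function name `group_pixels_by_prediction` and the toy values are hypothetical and are not taken from the authors' implementation.

```python
from collections import defaultdict


def group_pixels_by_prediction(features, logits):
    """Assign each pixel to its arg-max class and group features/logits per class.

    features: list of per-pixel feature vectors (flattened over H x W).
    logits:   list of per-pixel logit vectors of length K.
    """
    # Coarse mask: index of the largest logit for every pixel.
    mask = [max(range(len(d)), key=d.__getitem__) for d in logits]
    groups = defaultdict(lambda: {"features": [], "logits": []})
    for f, d, k in zip(features, logits, mask):
        groups[k]["features"].append(f)
        groups[k]["logits"].append(d)
    return mask, dict(groups)


# Toy example: three pixels, two classes, two feature channels.
features = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0]]
logits = [[2.0, 0.5], [1.2, 1.0], [-1.0, 3.0]]
mask, groups = group_pixels_by_prediction(features, logits)
```

In this toy case the first two pixels fall into class 0 and the third into class 1, so each prototype would later be computed only from pixels that the coarse prediction attributes to that class.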

3.2.2. Confidence-Aware Contribution Weighting

Although the pixel selection module confines the candidate set for class k to the pixels predicted as belonging to class k, these pixels still vary in confidence and representativeness. Treating them equally may bias prototype estimation. To address this, we propose a confidence-aware contribution weighting (CACW) strategy, which assigns each pixel a contribution score computed from three complementary confidence indicators: prediction probability, logit margin, and prediction certainty. These indicators are defined as follows.

Prediction Probability Confidence: The predicted probability for the assigned class provides a direct estimate of model confidence for each pixel. Pixels with higher predicted probabilities are considered more reliable and therefore exert a stronger influence on the class prototype.

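Given the pre-classification logits $D^{k} \in \mathbb{R}^{N_k \times K}$ of the $N_k$ pixels predicted as class $k$ (as defined above), the class probability distribution is obtained by applying the softmax operation along the category dimension:

$$P^{k}_{i,c} = \frac{\exp\big(D^{k}_{i,c}\big)}{\sum_{c'=1}^{K} \exp\big(D^{k}_{i,c'}\big)}. \tag{5}$$

Here, $P^{k}_{i,c}$ denotes the predicted probability that the $i$-th pixel is classified into category $c$ (for $c = 1, \dots, K$). The probability-based confidence vector for class $k$ is computed as:

$$w^{k}_{\mathrm{prob}} = \big[P^{k}_{1,k}, \dots, P^{k}_{N_k,k}\big] \in \mathbb{R}^{N_k}. \tag{6}$$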

This indicator naturally down-weights low-confidence pixels while emphasizing more reliable pixels.

Logit Margin Confidence: To complement the absolute confidence provided by probability values, we further incorporate the margin between the largest and second-largest logits. A larger margin indicates clearer separation between the top competing classes, reflecting stronger representativeness of the assigned class.

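Let $x_{[i]}$ denote the $i$-th largest element of a vector $x$. Given the pre-classification logits $D^{k}$ of the pixels predicted as class $k$, the logit margin score for each pixel is computed as:

$$w^{k}_{\mathrm{mar},i} = (d_i)_{[1]} - (d_i)_{[2]}. \tag{7}$$

Here, $d_i$ denotes the logit vector of the $i$-th pixel.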

This indicator complements the probability-based confidence by capturing relative certainty among competing classes, encouraging contributions from pixels with higher discriminativeness.

Prediction Certainty Confidence: In addition to probability and logit margin, entropy provides a measure of predictive uncertainty. Prior studies on uncertainty estimation [64,65] have shown that greater entropy indicates greater prediction uncertainty and a higher likelihood of misclassification. Accordingly, pixels with lower entropy should contribute more to the prototype.

For each pixel $i$, the entropy is computed from its predicted probability distribution $P^{k}_{i,:}$ over the $K$ categories as:

$$E^{k}_{i} = -\sum_{c=1}^{K} P^{k}_{i,c} \log P^{k}_{i,c}, \tag{8}$$

where $i \in \{1, \dots, N_k\}$ denotes the index of the pixels assigned to class $k$. To convert entropy into a confidence-like weight, we first normalize the entropy values by the maximum possible entropy and then invert them:

$$w^{k}_{\mathrm{ent},i} = 1 - \frac{E^{k}_{i}}{\log K}, \tag{9}$$

where $\log K$ corresponds to the maximum entropy of a uniform $K$-class distribution. Pixels with lower entropy, indicating higher certainty, receive larger weights. This indicator explicitly captures the overall uncertainty in predictions and provides a complementary perspective to both the probability and logit margin confidence measures.
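As a rough illustration of the three indicators, the sketch below computes them for a single pixel from its logit vector. It is a plain-Python approximation under stated assumptions: the margin is taken as the raw difference between the two largest logits (any additional scaling used by the authors is not known here), and the helper names `softmax` and `confidence_indicators` are hypothetical.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def confidence_indicators(logits, k):
    """Return (probability, logit margin, certainty) for a pixel predicted as class k."""
    probs = softmax(logits)
    prob_conf = probs[k]                      # probability of the assigned class
    ranked = sorted(logits, reverse=True)
    margin = ranked[0] - ranked[1]            # assumed: raw top-1 minus top-2 logit
    entropy = -sum(p * math.log(p) for p in probs if p > 0.0)
    certainty = 1.0 - entropy / math.log(len(logits))  # 1 - H / log K
    return prob_conf, margin, certainty


confident = confidence_indicators([10.0, 0.0, 0.0], k=0)
ambiguous = confidence_indicators([0.0, 0.0, 0.0], k=0)
```

A sharply peaked logit vector scores high on all three indicators, whereas a flat one yields a probability of 1/K, zero margin, and zero certainty.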

Confidence Fusion and Normalization: After obtaining the three confidence indicators, they are integrated to derive a contribution score for each pixel. Specifically, for pixels predicted as class $k$, the aggregated confidence is computed as the sum of the probability, logit margin, and certainty indicators:

$$S^{k} = w^{k}_{\mathrm{prob}} + w^{k}_{\mathrm{mar}} + w^{k}_{\mathrm{ent}}. \tag{10}$$

Here, $S^{k} \in \mathbb{R}^{N_k}$ represents the contribution scores of the $N_k$ pixels predicted as class $k$. To ensure comparability among pixels within the same class, these aggregated scores are normalized via a softmax function:

$$\hat{S}^{k}_{i} = \frac{\exp\big(S^{k}_{i}\big)}{\sum_{j=1}^{N_k} \exp\big(S^{k}_{j}\big)}. \tag{11}$$

The normalized scores $\hat{S}^{k}$ quantify each pixel’s relative importance in shaping the final class prototype.

Weighted Prototype Computation: Based on the normalized contribution weights, the class prototype for class $k$ is computed as a weighted mean of its selected pixel features:

$$C_{k} = \sum_{i=1}^{N_k} \hat{S}^{k}_{i} \, F^{k}_{i}. \tag{12}$$

Here, $F^{k}_{i}$ denotes the feature vector of the $i$-th pixel in $F^{k}$, the set of pixel features predicted as class $k$. Repeating this process for all $K$ categories yields the full prototype matrix:

$$C = [C_1; C_2; \dots; C_K] \in \mathbb{R}^{K \times d}, \tag{13}$$

where $d$ is the feature dimension and each row corresponds to a refined class representation. The complete computation procedure for class prototype generation is summarized in Algorithm 1.

| Algorithm 1 Class Prototype Generation |
| --- |
| Require: Feature map $F$, classifier head $\mathcal{H}$, number of categories $K$ |
| Ensure: Refined class prototypes $C$ |
| 1: Feature Projection: Obtain the pre-classification logits $D$ according to Equation (1) |
| 2: Mask Generation: Generate the coarse segmentation mask $M$ as defined in Equation (2) |
| 3: for each class $k = 1$ to $K$ do |
| 4: Extract the feature subset and logit subset following Equations (3) and (4) |
| 5: Compute the probability distribution according to Equation (5) |
| 6: for each pixel $i = 1$ to $N_k$ do |
| 7: Compute the confidence indicators: prediction probability confidence, logit margin confidence, and prediction certainty confidence as defined in Equations (6), (7), and (9) |
| 8: Compute the aggregated confidence weight using Equation (10) |
| 9: end for |
| 10: Normalize the confidence weights according to Equation (11) |
| 11: Compute the refined class prototype with Equation (12) |
| 12: end for |
| 13: Return: Refined class prototypes $C$ |

This weighting scheme ensures that highly confident and semantically representative pixels contribute more strongly, while uncertain or noisy samples contribute less. As a result, the generated prototypes are more robust and discriminative, providing a reliable basis for subsequent class-aware context modeling.
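The fusion and prototype steps can be sketched as follows. This is an illustrative plain-Python version, assuming the per-pixel aggregated scores have already been computed; the function name `weighted_prototype` and the toy inputs are hypothetical.

```python
import math


def weighted_prototype(features, scores):
    """Softmax-normalize per-pixel scores and return the weighted mean feature.

    features: feature vectors of the pixels predicted as one class.
    scores:   aggregated confidence scores, one per pixel.
    """
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]       # weights sum to 1 within the class
    dim = len(features[0])
    prototype = [sum(w * f[j] for w, f in zip(weights, features)) for j in range(dim)]
    return prototype, weights


feats = [[0.0, 0.0], [2.0, 4.0]]
uniform_proto, uniform_w = weighted_prototype(feats, [1.0, 1.0])
skewed_proto, skewed_w = weighted_prototype(feats, [0.5, 2.5])
```

With equal scores the prototype reduces to the plain mean, which is the simple-averaging baseline; unequal scores pull the prototype toward the more confident pixel.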

3.2.3. Inter-Class Prototype Separation Loss

Although the CPR module improves the reliability of individual class prototypes, ensuring sufficient separability among different categories remains essential for robust semantic segmentation, particularly in RS imagery, where semantically distinct classes often exhibit highly similar appearances (e.g., tree vs. low vegetation, building vs. impervious surface). However, existing class-level context aggregation methods typically use the derived class centers directly for context modeling without enforcing explicit separation, which may lead to overly clustered prototypes and weakened class discriminability.

To mitigate this issue, we introduce an inter-class prototype separation loss, which explicitly encourages larger angular margins between class centers by penalizing excessive similarity. This constraint prevents feature-space collapse and promotes the formation of more discriminative and well-separated class embeddings.

Definition of Inter-class Similarity Penalty: To explicitly penalize overly close class centers, we define a penalty term based on the cosine similarity between the refined prototypes $C_p$ and $C_q$ of classes $p$ and $q$. The cosine similarity is defined as:

$$\cos(C_p, C_q) = \frac{C_p \cdot C_q}{\lVert C_p \rVert_2 \, \lVert C_q \rVert_2}.$$

A similarity threshold sets the maximum allowable similarity between two class centers. Only prototype pairs whose similarity exceeds this threshold incur a penalty, thereby pushing overly close prototypes farther apart.

Inter-class Prototype Separation Loss: The loss evaluates pairwise similarities among all class prototypes and penalizes those pairs whose similarity exceeds the threshold. Minimizing this loss effectively enlarges the angular margins among class centers, enhances inter-class separability, and produces clearer semantic boundaries, particularly in visually ambiguous regions. This loss operates solely on learned prototypes, requires no additional supervision, and introduces negligible computational overhead, making it practical for end-to-end training.
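One possible form of such a thresholded penalty is sketched below. Both the hinge form `max(0, cos - threshold)` and the averaging over unordered prototype pairs are assumptions made for illustration; the exact penalty and normalization used in the paper may differ. The names `cosine` and `separation_loss` are hypothetical.

```python
import math


def cosine(u, v):
    """Cosine similarity between two vectors, with a small epsilon for stability."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v + 1e-12)


def separation_loss(prototypes, threshold):
    """Assumed form: mean hinge penalty over all unordered pairs of prototypes."""
    penalties = []
    for p in range(len(prototypes)):
        for q in range(p + 1, len(prototypes)):
            sim = cosine(prototypes[p], prototypes[q])
            penalties.append(max(0.0, sim - threshold))  # zero below the threshold
    return sum(penalties) / len(penalties) if penalties else 0.0


well_separated = separation_loss([[1.0, 0.0], [0.0, 1.0]], threshold=0.125)
collapsed = separation_loss([[1.0, 0.0], [1.0, 0.0]], threshold=0.125)
```

Orthogonal prototypes incur no penalty, while identical prototypes are penalized by the amount their similarity exceeds the threshold.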

It is noteworthy that the class centers are computed exclusively from the deepest feature map, which possesses a larger receptive field and captures richer semantic information [15]. This enables more accurate coarse segmentation masks and more reliable prototype initialization. The coarse masks are then refined under ground-truth supervision.

Unlike previous works [18,61] that compute prototypes by aggregating all pixel features via probability-based weighting, our method forms each class representation using only the pixels predicted as belonging to that class, preventing cross-class interference. Furthermore, each pixel’s contribution is adaptively modulated by three complementary confidence indicators: (1) absolute confidence measured by the predicted probability; (2) relative confidence quantified by the logit margin; and (3) overall prediction certainty indicated by entropy. By combining these indicators, high-confidence and semantically representative pixels receive larger contribution weights, producing prototypes that are robust and discriminative. In addition, the proposed inter-class prototype separation loss enforces angular margins among prototypes, effectively alleviating the prevalent problem of low inter-class variance in high-resolution RS imagery.

3.3. Class-Level Context Aggregation Module

Self-attention-based pixel-level relational modeling has been extensively employed in RS image segmentation owing to its strong capability in capturing long-range dependencies. However, computing pairwise similarities among all pixels incurs a quadratic complexity of $O(n^2 d)$, where $n$ and $d$ denote the number of pixels and feature dimension, respectively. For high-resolution RS images, this leads to prohibitive computational and memory costs. Additionally, aggregating information from all pixels may incorporate features from visually similar but semantically irrelevant regions, especially in scenes containing complex backgrounds or visually similar land-cover categories. These factors make pixel-to-pixel affinities unreliable and ultimately degrade segmentation accuracy.

To address these limitations, we introduce the CLCA module, which replaces dense pixel-to-pixel interactions with pixel-to-class prototype correlations. Unlike standard self-attention, which captures dependencies only at the pixel level without semantic guidance, CLCA leverages class prototypes to guide attention and aggregates semantic information across all pixels predicted as belonging to the same class. This design reduces the computational complexity of pixel-level self-attention from $O(n^2 d)$ to $O(nKd)$ (where $K$ denotes the number of semantic classes and $n$ and $d$ the number of pixels and feature dimension), while injecting explicit semantic guidance into the attention mechanism. Consequently, CLCA allows the network to capture long-range contextual cues while suppressing activations from irrelevant or confusing regions, thus enhancing the discriminability of pixel representations. Compared with generic cross-attention variants, which compute attention between two feature maps without explicit class-level guidance, CLCA explicitly incorporates class-level prototypes to guide semantic aggregation, which is not easily achievable with standard self-attention or cross-attention alone.

As illustrated in Figure 3a, the CLCA module operates at each decoder stage and takes two inputs: (1) the stage-wise feature map and (2) the refined class prototypes $C$ generated by the CPR module. Following a cross-attention paradigm, the feature map is projected into a query matrix $Q$, while the class prototypes are mapped to a key matrix $K_c$ and a value matrix $V_c$. The three projections are learnable convolutions followed by batch normalization and ReLU activation. The pixel-to-class attention map $A$ is computed using a scaled dot-product followed by a row-wise softmax:

$$A = \mathrm{softmax}\!\left(\frac{Q K_c^{\top}}{\sqrt{d}}\right),$$

where $d$ is the feature dimension. Based on $A$, the aggregated feature $Z$ is obtained by:

$$Z = A V_c.$$

The resulting feature is first reshaped to match the spatial dimensions of the original feature map, then passed through an output mapping, and fused with the original feature map via channel concatenation (denoted as ⊕). The fused feature is subsequently refined using two convolution layers with batch normalization and ReLU activation to produce the refined aggregated feature. The above procedure defines a single CLCA module, which outputs the refined aggregated feature for stage $i$.

The CLCA module is applied in a top-down manner to progressively refine decoder features. For every decoder stage above the deepest one, the current-stage feature is first updated by fusing it with the bilinearly upsampled refined aggregated feature from the deeper stage. The updated feature is then fed into the CLCA module at the current stage to generate the corresponding refined aggregated output.

This hierarchical refinement strategy progressively strengthens semantic consistency while preserving spatial detail. By repeatedly incorporating class-aware global cues from deep layers into fine-grained spatial structures of shallower layers, CLCA improves segmentation coherence and boundary localization.
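The core pixel-to-class attention step can be sketched in plain Python as below. The sketch deliberately omits the learnable query, key, value, and output projections and the concatenation-based fusion, and treats queries, keys, and values as given lists of vectors; `pixel_to_class_attention` is a hypothetical name.

```python
import math


def pixel_to_class_attention(queries, keys, values):
    """Scaled dot-product attention from n pixel queries to K class prototypes.

    queries: n vectors of length d (one per pixel).
    keys:    K vectors of length d (one per class prototype).
    values:  K vectors (one per class prototype).
    Cost is n * K dot products instead of n * n for pixel-to-pixel attention.
    """
    d = len(keys[0])
    outputs = []
    for q in queries:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in keys]
        m = max(scores)
        exps = [math.exp(s - m) for s in scores]
        total = sum(exps)
        attn = [e / total for e in exps]      # row-wise softmax over the K classes
        outputs.append(
            [sum(w * v[j] for w, v in zip(attn, values)) for j in range(len(values[0]))]
        )
    return outputs


prototypes = [[1.0, 0.0], [0.0, 1.0]]
pixels = [[10.0, 0.0], [0.0, 0.0]]
context = pixel_to_class_attention(pixels, prototypes, prototypes)
```

A pixel whose query aligns with one prototype receives context dominated by that class, while an uninformative query receives an even mixture of all class prototypes.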

3.4. Loss Function

Difficulty-aware Loss: Semantic segmentation in high-resolution RS imagery presents substantial variability in pixel-wise classification difficulty due to complex backgrounds, large intra-class diversity, and small inter-class differences. Pixels located at object boundaries, in occluded areas, or belonging to visually similar categories are inherently harder to classify than those in homogeneous regions. However, the standard CE loss assigns equal importance to all pixels, implicitly assuming uniform difficulty. This mismatch leads to a training imbalance: (1) Early in training, gradients are dominated by abundant easy samples, impeding effective learning of hard samples; (2) Later in training, although hard samples generate larger errors, their small quantity limits their impact on optimization. Consequently, hard regions receive insufficient supervision, ultimately degrading segmentation performance.

These limitations arise from the static nature of the standard CE loss, which cannot adaptively adjust sample importance as training evolves. To address this issue, we propose a DA loss that incorporates a dynamic difficulty estimation mechanism and an adaptive loss scheduling strategy, which stabilizes early-stage optimization while progressively emphasizing challenging samples as training proceeds.

Dynamic Difficulty Estimation Mechanism: To estimate pixel-wise classification difficulty, we adopt a normalized, confidence-driven weighting scheme inspired by focal loss [66]. The difficulty weight for the $i$-th pixel is defined in terms of the predicted probability of its ground-truth class, the total number of pixels $N$, and a focusing parameter controlling the emphasis on hard samples. The resulting difficulty-weighted loss scales each pixel's loss by its difficulty weight. The normalization term preserves gradient stability by keeping the loss magnitude comparable to the standard CE loss. This difficulty-aware redistribution of pixel-wise loss within an image assigns greater weights to lower-confidence pixels, encouraging the model to focus on hard regions.
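A minimal sketch of one weighting scheme consistent with this description is given below. The specific form is an assumption: each pixel receives a focal-style raw weight `(1 - p) ** gamma`, and the weights are divided by their mean so that they average to one within the image. The paper's exact formula may differ, and the names `difficulty_weights` and `difficulty_weighted_ce` are hypothetical.

```python
import math


def difficulty_weights(p_true, gamma=1.0, eps=1e-12):
    """Assumed form: focal-style weights normalized to have mean 1 over the image.

    p_true: predicted probability of the ground-truth class for each pixel.
    """
    raw = [(1.0 - p) ** gamma for p in p_true]
    mean_raw = sum(raw) / len(raw)
    return [r / (mean_raw + eps) for r in raw]


def difficulty_weighted_ce(p_true, gamma=1.0):
    """Mean of per-pixel cross-entropy terms scaled by their difficulty weights."""
    weights = difficulty_weights(p_true, gamma)
    return sum(w * -math.log(p) for w, p in zip(weights, p_true)) / len(p_true)


w = difficulty_weights([0.9, 0.5, 0.1], gamma=1.0)
flat = difficulty_weighted_ce([0.5, 0.5], gamma=1.0)
```

Because the weights average to one, the loss stays on the same scale as plain CE (the two coincide when all pixels are equally difficult), while low-confidence pixels receive a larger share of the total.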

Adaptive Loss Scheduling Mechanism: Because model predictions are unreliable at early training stages, directly applying difficulty weighting may negatively impact optimization stability. To address this, we introduce an adaptive loss scheduling mechanism based on an annealing function that gradually shifts the optimization focus from easy samples to hard samples. The overall DA loss combines the standard CE loss with the difficulty-weighted loss through an annealing coefficient that is a monotonically increasing function of the training step $t$. As this coefficient increases, the loss smoothly transitions from uniform CE supervision to difficulty-aware weighting, which stabilizes early-stage optimization while progressively increasing the model’s focus on hard regions. Representative annealing strategies are listed in Table 1, together with the annealing step count that marks the end of the annealing period; its selection is discussed in Section 5.3.3.
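The scheduling idea can be illustrated with the sketch below. Two assumptions are made: the DA loss is taken to be a convex combination of the CE loss and the difficulty-weighted loss, and linear and cosine ramps are used as example annealing functions (the strategies actually listed in Table 1 are not reproduced here). All function names are hypothetical.

```python
import math


def linear_anneal(t, t_end):
    """Linearly increase the coefficient from 0 to 1 over the first t_end steps."""
    return min(1.0, max(0.0, t / t_end))


def cosine_anneal(t, t_end):
    """Smooth ramp from 0 to 1 with zero slope at both ends of the annealing period."""
    ratio = min(1.0, max(0.0, t / t_end))
    return 0.5 * (1.0 - math.cos(math.pi * ratio))


def da_loss(ce, weighted_ce, alpha):
    """Assumed form: blend of uniform CE and difficulty-weighted CE."""
    return (1.0 - alpha) * ce + alpha * weighted_ce


early = da_loss(1.0, 2.0, linear_anneal(0, 100))
late = da_loss(1.0, 2.0, linear_anneal(250, 100))
```

At step 0 the objective equals plain CE, and once the annealing period has ended it equals the difficulty-weighted loss, with a gradual shift in between.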

Overall loss: The total training loss combines the DA loss with the inter-class prototype separation loss to jointly optimize pixel-level learning and inter-class discrimination. It comprises the DA loss applied to the final prediction, the DA loss applied to the auxiliary coarse mask $M$, and the inter-class prototype separation loss introduced in Section 3.2.3. A coefficient controls the relative contribution of the auxiliary branch.

4. Experiment Settings

4.1. Datasets

The performance of the proposed CRECA-Net was evaluated on two widely used benchmark datasets for RS image segmentation: the ISPRS Vaihingen [67] and ISPRS Potsdam [67] datasets.

ISPRS Potsdam Dataset: The Potsdam dataset consists of 38 orthophoto tiles, each sized at 6000 × 6000 pixels with a ground sampling distance (GSD) of 5 cm. Each tile provides four multispectral bands, near-infrared (NIR), red (R), green (G), and blue (B), along with corresponding digital surface model (DSM) and normalized DSM (NDSM) data. In our experiments, only the RGB bands were used, excluding DSM and NDSM data. The dataset contains dense annotations for six land-cover categories: impervious surfaces, buildings, low vegetation, trees, cars, and clutter/background. Following established protocols, 23 tiles with IDs 2_10, 2_11, 2_12, 3_10, 3_11, 3_12, 4_10, 4_11, 4_12, 5_10, 5_11, 5_12, 6_7, 6_8, 6_9, 6_10, 6_11, 6_12, 7_7, 7_8, 7_9, 7_11, and 7_12 were allocated for training (note that tile 7_10 was excluded due to annotation errors), while the remaining 14 tiles were reserved for testing. Each tile was further divided into fixed-size, non-overlapping patches of 1024 × 1024 pixels for training and evaluation.

ISPRS Vaihingen Dataset: The Vaihingen dataset comprises 33 orthophoto tiles with an average resolution of 2494 × 2494 pixels and a GSD of 9 cm. Each tile includes three spectral bands, near-infrared (NIR), red (R), and green (G), together with corresponding DSM and NDSM data. The land-cover categories are the same six classes as defined for the Potsdam dataset. Following prior work, 15 tiles (IDs: 1, 3, 5, 7, 11, 13, 15, 17, 21, 23, 26, 28, 32, 34, and 37) were used for training, and the remaining 17 tiles were used for testing. All tiles were cropped into 1024 × 1024 patches using a sliding window with a 512-pixel stride, ensuring uniform patch dimensions across the dataset.
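The sliding-window cropping can be sketched as follows. The treatment of the tile border is an assumption: when the stride does not land exactly on the last valid position, a final window is shifted back so that it ends at the tile edge. The helper name `window_starts` is hypothetical.

```python
def window_starts(length, patch=1024, stride=512):
    """Start offsets of sliding windows along one image axis.

    Assumption: a final window is shifted back to align with the border
    so that every pixel is covered by at least one full-size patch.
    """
    if length <= patch:
        return [0]
    starts = list(range(0, length - patch + 1, stride))
    if starts[-1] != length - patch:
        starts.append(length - patch)
    return starts


rows = window_starts(2494)
exact = window_starts(2048)
```

For an axis of 2494 pixels this gives four overlapping windows, the last of which starts at 1470 so that it ends exactly at the tile border.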

4.2. Evaluation Metrics

To comprehensively evaluate the performance of CRECA-Net and ensure fair comparisons with existing methods, three widely recognized evaluation metrics were used: Overall Accuracy (OA), mean Intersection over Union (mIoU), and F1 score (F1).

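Overall Accuracy (OA): This metric measures the proportion of correctly classified pixels among all pixels, that is, the sum of the per-class true positives divided by the total number of pixels. In the notation used for the metrics, $K$ denotes the total number of categories, and $\mathrm{TP}_k$, $\mathrm{FP}_k$, $\mathrm{TN}_k$, and $\mathrm{FN}_k$ represent the true positives, false positives, true negatives, and false negatives for the $k$-th class, respectively.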

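Mean Intersection over Union (mIoU): This metric measures the average overlap between the predicted and ground-truth regions across all categories, where the overlap for each class is the ratio of their intersection to their union:

$$\mathrm{mIoU} = \frac{1}{K} \sum_{k=1}^{K} \frac{\mathrm{TP}_k}{\mathrm{TP}_k + \mathrm{FP}_k + \mathrm{FN}_k}$$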

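F1 Score (F1): The F1 score for class $k$ is the harmonic mean of precision and recall. The overall F1 score (mean F1) is computed as the mean of $\mathrm{F1}_k$ over all classes:

$$\mathrm{F1}_k = \frac{2 \times \mathrm{Precision}_k \times \mathrm{Recall}_k}{\mathrm{Precision}_k + \mathrm{Recall}_k}, \qquad \mathrm{F1} = \frac{1}{K} \sum_{k=1}^{K} \mathrm{F1}_k$$

For class $k$, $\mathrm{Precision}_k$ denotes the proportion of true positives among all predicted positives, and $\mathrm{Recall}_k$ is the ratio of true positives to the total number of actual positives, defined as:

$$\mathrm{Precision}_k = \frac{\mathrm{TP}_k}{\mathrm{TP}_k + \mathrm{FP}_k}, \qquad \mathrm{Recall}_k = \frac{\mathrm{TP}_k}{\mathrm{TP}_k + \mathrm{FN}_k}$$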
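As an illustration, all three metrics can be derived from a single confusion matrix accumulated over the test set. The following is a minimal pure-Python sketch, not the evaluation code used in the experiments; the function name `segmentation_metrics` and the convention that rows index ground-truth classes and columns index predictions are assumptions.

```python
from typing import Dict, List


def segmentation_metrics(conf: List[List[int]]) -> Dict[str, float]:
    """Compute OA, mIoU, and mean F1 from a K x K confusion matrix.

    conf[i][j] is the number of pixels whose ground-truth class is i
    and whose predicted class is j (assumed layout).
    """
    k = len(conf)
    total = sum(sum(row) for row in conf)
    if total == 0:
        raise ValueError("confusion matrix is empty")

    correct = 0
    ious, f1s = [], []
    for c in range(k):
        tp = conf[c][c]
        fn = sum(conf[c]) - tp                       # class-c pixels that were missed
        fp = sum(conf[r][c] for r in range(k)) - tp  # other pixels predicted as class c
        correct += tp
        iou_den = tp + fp + fn
        f1_den = 2 * tp + fp + fn                    # equals 2PR / (P + R) after simplification
        # Assumption: a class absent from both prediction and ground truth scores 0.
        ious.append(tp / iou_den if iou_den else 0.0)
        f1s.append(2 * tp / f1_den if f1_den else 0.0)

    return {"OA": correct / total, "mIoU": sum(ious) / k, "F1": sum(f1s) / k}


if __name__ == "__main__":
    print(segmentation_metrics([[5, 1], [2, 4]]))
```

Accumulating one confusion matrix over all test patches, rather than averaging per-patch scores, keeps classes that are rare in individual patches from distorting the result.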

4.3. Implementation Details

To ensure consistent and fair comparisons, all experiments were implemented in the PyTorch (version 2.0.0) framework and conducted on a single NVIDIA RTX A40 GPU (NVIDIA Corporation, Santa Clara, CA, USA). We employed the Stochastic Gradient Descent (SGD) optimizer with an initial learning rate of 0.01 and a weight decay of 0.0001. A polynomial decay policy [41] was adopted to adjust the learning rate according to the schedule $lr = lr_{0} \times \left(1 - \frac{iter}{max\_iter}\right)^{power}$, where $power$ was set to 0.9. The mini-batch size was 8, and the models were trained for 80 epochs on the Potsdam dataset and 150 epochs on the Vaihingen dataset. The introduced hyperparameters, namely the similarity threshold, the focusing parameter, and the auxiliary loss coefficient, were empirically determined through ablation studies, set to 0.125, 1.0, and 0.8, respectively, and kept fixed across all experiments. To enhance generalization, several data augmentation techniques were applied during training, including random cropping (crop size of 512 × 512), random scaling (scale factors of 0.5, 0.75, 1.0, 1.25, and 1.5), random horizontal/vertical flipping, random rotation, and random Gaussian blur. During testing, multi-scale inference, flipping, and rotation were applied as test-time augmentation to improve robustness.
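The polynomial decay policy can be sketched in a few lines. This is a minimal illustration using the standard form of the schedule with the reported initial learning rate (0.01) and power (0.9); the function name `poly_lr` is hypothetical, and whether the rate was updated per iteration or per epoch is not stated in the text, so per-iteration updating is an assumption here.

```python
def poly_lr(base_lr: float, cur_iter: int, max_iter: int, power: float = 0.9) -> float:
    """Polynomial decay: base_lr * (1 - cur_iter / max_iter) ** power."""
    if max_iter <= 0 or not 0 <= cur_iter <= max_iter:
        raise ValueError("cur_iter must lie in [0, max_iter] with max_iter > 0")
    return base_lr * (1.0 - cur_iter / max_iter) ** power


if __name__ == "__main__":
    # Print the learning rate at a few points of a hypothetical 1000-iteration run.
    for it in (0, 250, 500, 750, 1000):
        print(it, poly_lr(0.01, it, 1000))
```

With a power below 1, the rate stays close to its initial value for most of training and drops steeply only near the end.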

6. Discussion

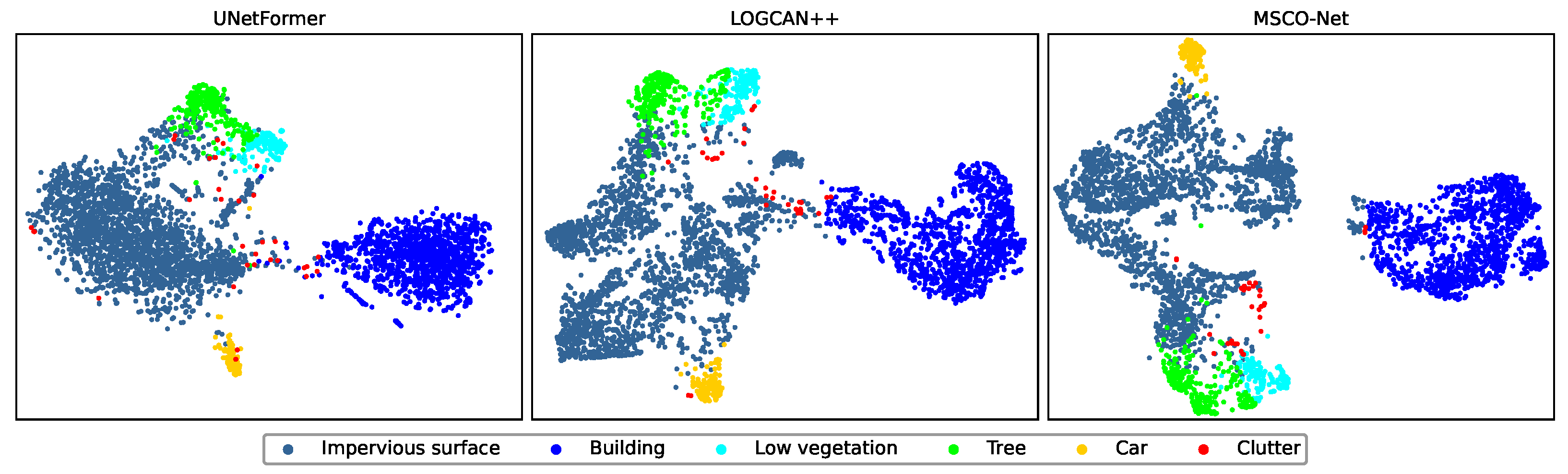

The consistent performance improvements of CRECA-Net on the ISPRS Potsdam and Vaihingen datasets result primarily from the design of the CPR module, the CLCA module, and the DA loss. The CPR module enhances the reliability and discriminability of class prototypes through three key components: pixel selection, CWCA, and the inter-class prototype separation loss. Specifically, pixel selection ensures that class prototypes are computed from semantically coherent and prediction-consistent regions; CWCA allows high-confidence pixels to contribute more to prototype estimation; and the inter-class prototype separation loss explicitly promotes separability between the prototypes of different categories. The CLCA module employs cross-attention to model pixel-to-class-prototype correlations, thereby capturing long-range contextual dependencies under semantic prototype guidance and reducing prediction ambiguity in complex scenes. In addition, the DA loss combines a dynamic difficulty estimation mechanism with an adaptive loss scheduling strategy that adjusts pixel-wise loss weights within each image. This enables the model to gradually shift its learning focus from easy samples to more challenging ones, such as boundary regions and visually confusing categories, while keeping training stable.

These results indicate that jointly improving class representation quality and training dynamics is an effective strategy for addressing the challenges of high-resolution RS image segmentation, particularly in scenes characterized by large intra-class variation and complex backgrounds. Despite these improvements, accurately delineating fine-grained boundary details and distinguishing subtle transitions between visually similar categories remains challenging, particularly in complex scenes where boundary ambiguity and category confusion are more pronounced.