HySIMU: An Open-Source Toolkit for Hyperspectral Remote Sensing Forward Modelling

Highlights

- HySIMU is an open-source, modular, Python-based starter toolkit for hyperspectral remote sensing forward modelling that integrates non-proprietary modules and libraries into an established processing workflow.

- HySIMU enables the community to perform sensitivity tests for various survey scenarios to optimize mission planning and design.

- HySIMU provides synthetic image generation capabilities to support and enhance image-processing algorithm development.

Abstract

1. Introduction

2. Methods: HySIMU Framework

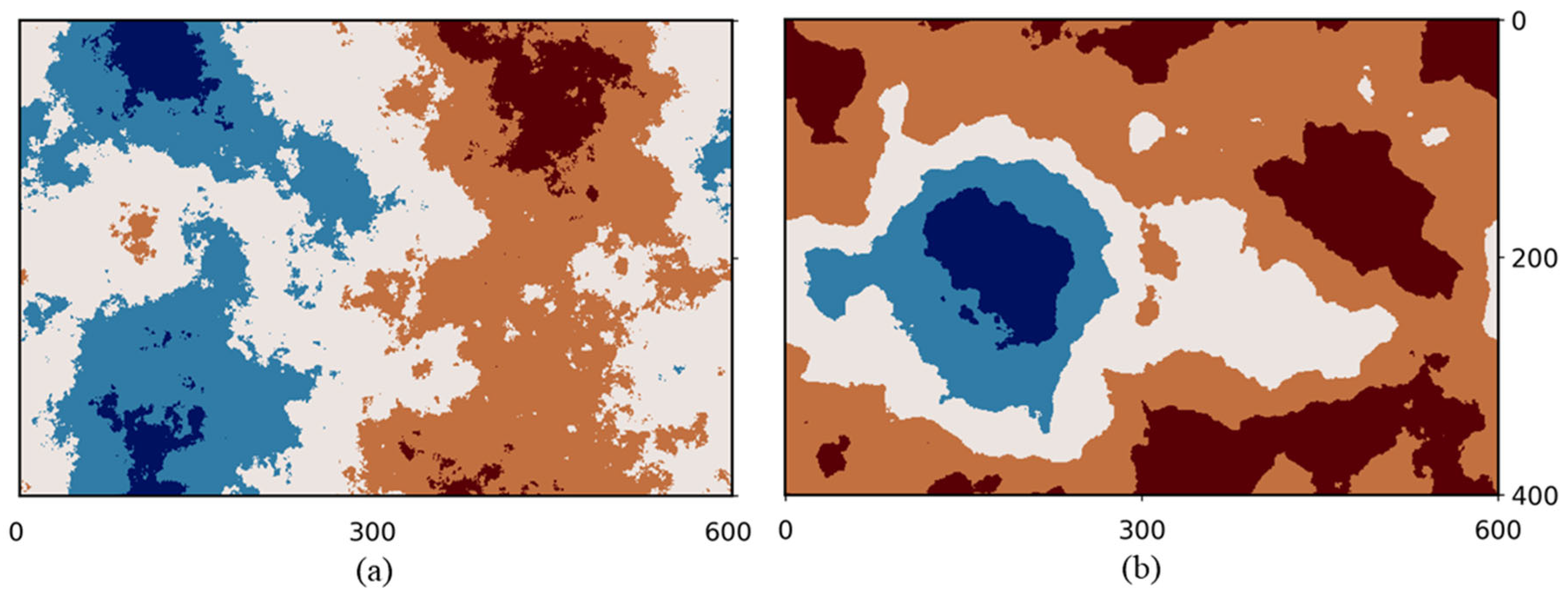

2.1. Ground Truth Builder

- i. A set of Discrete Fourier Transform (DFT) frequencies is created for a user-specified grid using a Fast Fourier Transform (FFT) algorithm.

- ii. A power spectrum is then synthesized from these frequencies according to the power law in Equation (1) [32]:

- iii. An Inverse Discrete Fourier Transform (IDFT) transforms the coefficients, which were generated from the frequency grid and scaled with the noise, into the spatial domain, yielding a random two-dimensional (2D) fractal field.

- iv. The fractal field is subsequently discretized based on pixel values and indexed into distinct regions that represent the spectral zones. Each index is associated with the corresponding material spectrum from the input.
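
Steps i to iv can be sketched with NumPy as follows. This is a minimal illustration, not the HySIMU implementation: the power law is assumed to take the common form P(f) ∝ f^(−β), and the function name `fractal_zones`, the exponent `beta`, the quantile-based discretization, and the placeholder `spectra` array are all hypothetical choices made for this example.

```python
import numpy as np

def fractal_zones(n=128, beta=3.0, n_zones=4, seed=0):
    """Return (field, zones): a random 2D fractal field and its zone index map."""
    rng = np.random.default_rng(seed)
    # Step i: DFT sample frequencies for an n x n grid (cycles per pixel).
    fx = np.fft.fftfreq(n)
    fy = np.fft.fftfreq(n)
    f = np.hypot(*np.meshgrid(fx, fy, indexing="ij"))
    # Step ii (assumed form): amplitude ~ f^(-beta/2), so power ~ f^(-beta).
    amp = np.zeros_like(f)
    amp[f > 0] = f[f > 0] ** (-beta / 2.0)  # zero-frequency term left at zero
    # Scale the amplitudes with complex Gaussian noise.
    noise = rng.normal(size=(n, n)) + 1j * rng.normal(size=(n, n))
    # Step iii: inverse DFT to the spatial domain; the real part is kept.
    field = np.fft.ifft2(amp * noise).real
    # Step iv: discretize by quantiles into n_zones equally populated classes.
    edges = np.quantile(field, np.linspace(0.0, 1.0, n_zones + 1)[1:-1])
    zones = np.digitize(field, edges)  # integer indices 0 .. n_zones - 1
    return field, zones

field, zones = fractal_zones(n=64, n_zones=4, seed=1)

# Placeholder spectra (n_zones x n_bands); real material spectra would come
# from a spectral library. Indexing by zone yields a ground-truth datacube.
spectra = np.linspace(0.1, 0.9, 4 * 224).reshape(4, 224)
cube = spectra[zones]  # shape (64, 64, 224)
```

Quantile thresholds give zones of roughly equal area; fixed thresholds on the pixel values would instead let the zone proportions vary between realizations.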

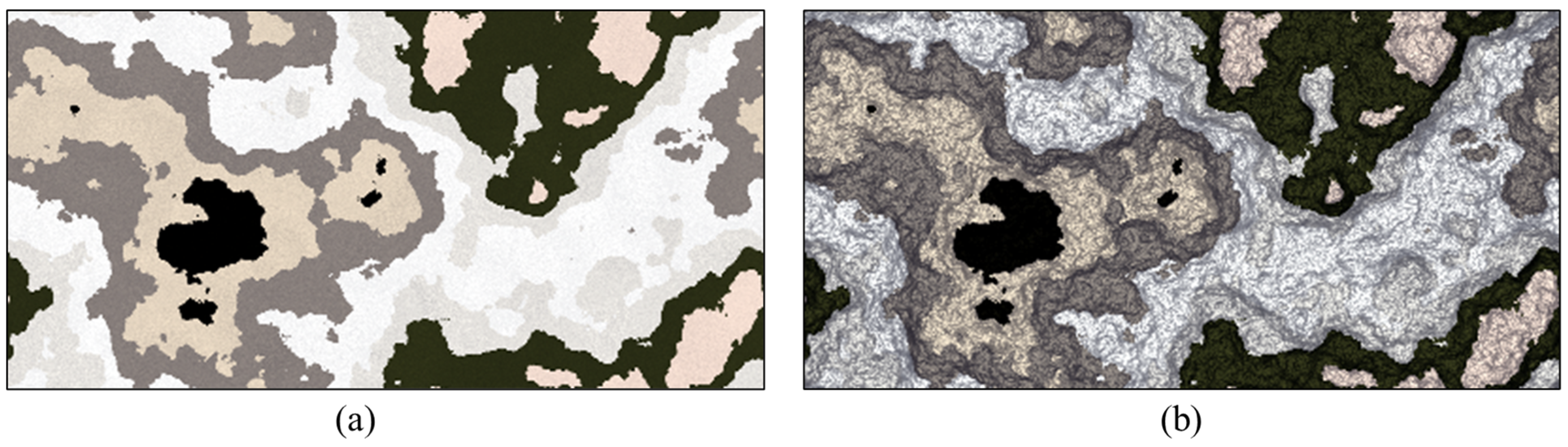

2.2. Datacube Texturing

2.3. Solar and View Geometry Calculations

2.4. Radiative Transfer Modelling

2.5. Spatial Resampling

- i. An optical PSF, introduced by the spread of the energy distribution in the sensor’s focal plane.

- ii. An image motion PSF, caused by sensor motion during the integration time.

- iii. A detector PSF, representing the non-zero spatial area of each detector in the sensor.

- iv. An electronic PSF, introduced by the electronic filter of the sensor.
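
In the standard formulation, the net sensor PSF is the convolution of these component PSFs. The sketch below illustrates that composition in one dimension per axis and then forms a separable 2D kernel. It is an assumption-laden example rather than HySIMU's code: the Gaussian and box shapes, all widths (in ground-truth pixels), the assignment of components to axes, and the helper names are hypothetical.

```python
import numpy as np

def gaussian_1d(sigma, half_width):
    """Sampled, unit-sum Gaussian kernel with 2 * half_width + 1 taps."""
    x = np.arange(-half_width, half_width + 1)
    g = np.exp(-0.5 * (x / sigma) ** 2)
    return g / g.sum()

def box_1d(width):
    """Unit-sum rectangular kernel of the given width in pixels."""
    return np.full(width, 1.0 / width)

def net_psf_1d(*components):
    """Convolve 1D component PSFs into one unit-sum net PSF."""
    net = np.array([1.0])
    for component in components:
        net = np.convolve(net, component)
    return net / net.sum()

# Hypothetical component widths, for illustration only.
optical = gaussian_1d(sigma=1.5, half_width=6)     # optical blur
motion = box_1d(9)                                 # smear during integration
detector = box_1d(10)                              # finite detector footprint
electronic = gaussian_1d(sigma=0.8, half_width=3)  # electronic filter

# Which axis each component acts on depends on the scanner type; the
# assignment below is an arbitrary choice for this sketch.
along_track = net_psf_1d(optical, motion, detector, electronic)
cross_track = net_psf_1d(optical, detector)
psf_2d = np.outer(along_track, cross_track)        # separable 2D kernel
```

Because every component sums to one, the net kernel also sums to one, so convolving a radiance image with `psf_2d` redistributes energy between neighbouring pixels without changing the image mean.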

3. Discussion: Validation and Potential Applications

4. Conclusions

Supplementary Materials

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| 6S | Second Simulation of a Satellite Signal in the Solar Spectrum |
| AVIRIS | Airborne Visible/InfraRed Imaging Spectrometer |
| CASI | Compact Airborne Spectrographic Imager |
| CHIMES | Cranfield Hyperspectral Image Modelling and Evaluation System |
| CRISM | Compact Reconnaissance Imaging Spectrometer for Mars |
| DEM | Digital Elevation Model |
| DFT | Discrete Fourier Transform |
| DIRSIG | Digital Imaging and Remote Sensing Image Generation |
| EnMAP | Environmental Mapping and Analysis Program |
| EO | Earth Observation |
| EeteS | EnMAP End-to-End Simulation Tool |
| FFT | Fast Fourier Transform |
| FASSP | Forecasting and Analysis of Spectroradiometric System Performance |
| GLORIA | GLObal Reflectance community dataset for Imaging and optical sensing of Aquatic environments |
| HPC | High-Performance Computing |
| HRS | Hyperspectral Remote Sensing |
| HSI | Hyperspectral Imaging |
| HYDICE | Hyperspectral Digital Imagery Collection Experiment |
| HySIMU | Hyperspectral SIMUlator |
| IDFT | Inverse Discrete Fourier Transform |
| JR | AVIRIS Jasper Ridge hyperspectral image |
| libRadtran | library for Radiative transfer |
| MaxRSS | Maximum Resident Set Size |
| MV-N | multivariate normal distribution |
| NIR | Near-infrared |
| PACE-OCI | Plankton, Aerosol, Cloud, ocean Ecosystem–Ocean Color Instrument |
| PC1 | the first principal component |
| PC2 | the second principal component |
| PRISMA | PRecursore IperSpettrale della Missione Applicativa |
| PSF | Point Spread Function |
| PST | Pacific Standard Time |
| RGB | Red-Green-Blue |
| RTM | Radiative Transfer Model |
| TOA | Top-Of-Atmosphere |
| UAV | Uncrewed Aerial Vehicle |
| UR | HYDICE Urban hyperspectral image |
| USGS | United States Geological Survey |

References

1. Transon, J.; d’Andrimont, R.; Maugnard, A.; Defourny, P. Survey of Hyperspectral Earth Observation Applications from Space in the Sentinel-2 Context. Remote Sens. 2018, 10, 157.
2. Jia, J.; Wang, Y.; Chen, J.; Guo, R.; Shu, R.; Wang, J. Status and Application of Advanced Airborne Hyperspectral Imaging Technology: A Review. Infrared Phys. Technol. 2020, 104, 103115.
3. Qian, S.-E. Hyperspectral Satellites, Evolution, and Development History. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2021, 14, 7032–7056.
4. Qian, S. Overview of Hyperspectral Imaging Remote Sensing from Satellites. In Advances in Hyperspectral Image Processing Techniques; Chang, C.-I., Ed.; Wiley: Hoboken, NJ, USA, 2022; pp. 41–66. ISBN 978-1-119-68776-4.
5. Bhargava, A.; Sachdeva, A.; Sharma, K.; Alsharif, M.H.; Uthansakul, P.; Uthansakul, M. Hyperspectral Imaging and Its Applications: A Review. Heliyon 2024, 10, e33208.
6. Pixxel Space Technologies. Hyperspectral Imagery. Available online: https://www.pixxel.space/hyperspectral-imagery (accessed on 3 June 2025).
7. Wyvern Inc. Hyperspectral Data Products. Available online: https://www.wyvern.space/product (accessed on 16 March 2026).
8. Orbital Sidekick. Technology. Available online: https://www.orbitalsidekick.com/technology (accessed on 1 March 2026).
9. Zhang, Z.; Huang, L.; Wang, Q.; Jiang, L.; Qi, Y.; Wang, S.; Shen, T.; Tang, B.-H.; Gu, Y. UAV Hyperspectral Remote Sensing Image Classification: A Systematic Review. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2025, 18, 3099–3124.
10. Yao, H.; Qin, R.; Chen, X. Unmanned Aerial Vehicle for Remote Sensing Applications—A Review. Remote Sens. 2019, 11, 1443.
11. Adão, T.; Hruška, J.; Pádua, L.; Bessa, J.; Peres, E.; Morais, R.; Sousa, J. Hyperspectral Imaging: A Review on UAV-Based Sensors, Data Processing and Applications for Agriculture and Forestry. Remote Sens. 2017, 9, 1110.
12. Zhong, Y.; Wang, X.; Xu, Y.; Wang, S.; Jia, T.; Hu, X.; Zhao, J.; Wei, L.; Zhang, L. Mini-UAV-Borne Hyperspectral Remote Sensing: From Observation and Processing to Applications. IEEE Geosci. Remote Sens. Mag. 2018, 6, 46–62.
13. Engineering Village. Search Results for “Hyperspectral Remote Sensing”. Elsevier. Available online: https://www.engineeringvillage.com (accessed on 3 June 2025).
14. Lv, Z.; Zhang, M.; Sun, W.; Lei, T.; Benediktsson, J.A.; Liu, T. Land Cover Change Detection with Hyperspectral Remote Sensing Images: A Survey. Inf. Fusion 2025, 123, 103257.
15. Lu, B.; Dao, P.; Liu, J.; He, Y.; Shang, J. Recent Advances of Hyperspectral Imaging Technology and Applications in Agriculture. Remote Sens. 2020, 12, 2659.
16. Kerekes, J.P.; Landgrebe, D.A. Simulation of Optical Remote Sensing Systems. IEEE Trans. Geosci. Remote Sens. 1989, 27, 762–771.
17. Börner, A.; Wiest, L.; Keller, P.; Reulke, R.; Richter, R.; Schaepman, M.; Schläpfer, D. SENSOR: A Tool for the Simulation of Hyperspectral Remote Sensing Systems. ISPRS J. Photogramm. Remote Sens. 2001, 55, 299–312.
18. Zahidi, U.A.; Yuen, P.W.T.; Piper, J.; Godfree, P.S. An End-to-End Hyperspectral Scene Simulator with Alternate Adjacency Effect Models and Its Comparison with CameoSim. Remote Sens. 2019, 12, 74.
19. Inamdar, D.; Kalacska, M.; Leblanc, G.; Arroyo-Mora, J.P. Characterizing and Mitigating Sensor Generated Spatial Correlations in Airborne Hyperspectral Imaging Data. Remote Sens. 2020, 12, 641.
20. Inamdar, D.; Kalacska, M.; Darko, P.O.; Arroyo-Mora, J.P.; Leblanc, G. Spatial Response Resampling (SR2): Accounting for the Spatial Point Spread Function in Hyperspectral Image Resampling. MethodsX 2023, 10, 101998.
21. Segl, K.; Guanter, L.; Rogass, C.; Kuester, T.; Roessner, S.; Kaufmann, H.; Sang, B.; Mogulsky, V.; Hofer, S. EeteS—The EnMAP End-to-End Simulation Tool. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2012, 5, 522–530.
22. Parente, M.; Clark, J.T.; Brown, A.J.; Bishop, J.L. End-to-End Simulation and Analytical Model of Remote-Sensing Systems: Application to CRISM. IEEE Trans. Geosci. Remote Sens. 2010, 48, 5491159.
23. Kerekes, J.P.; Baum, J.E. Full-Spectrum Spectral Imaging System Analytical Model. IEEE Trans. Geosci. Remote Sens. 2005, 43, 571–580.
24. Schott, J.R.; Brown, S.D.; Raqueño, R.V.; Gross, H.N.; Robinson, G. An Advanced Synthetic Image Generation Model and Its Application to Multi/Hyperspectral Algorithm Development. Can. J. Remote Sens. 1999, 25, 99–111.
25. Goodenough, A.A.; Brown, S.D. DIRSIG5: Next-Generation Remote Sensing Data and Image Simulation Framework. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2017, 10, 4818–4833.
26. Guanter, L.; Segl, K.; Kaufmann, H. Simulation of Optical Remote-Sensing Scenes With Application to the EnMAP Hyperspectral Mission. IEEE Trans. Geosci. Remote Sens. 2009, 47, 2340–2351.
27. Atarita, F.; Braun, A. Synthetic Hyperspectral Sensing Simulator: A Tool for Optimizing Applications in Mineral Exploration. In Proceedings of the SPIE Future Sensing Technologies 2021; Valenta, C.R., Shaw, J.A., Kimata, M., Eds.; SPIE: Bellingham, WA, USA, 2021; p. 35.
28. Atarita, F.; Braun, A. HYSIMU: A Hyperspectral Simulator for Airborne Remote Sensing of Soils. In Proceedings of the Application of Proximal and Remote Sensing Technologies for Soil Investigations, Virtual, 16–19 August 2021.
29. Beaulne, D.; Atarita, F.; Fotopoulos, G.; Braun, A. Simulating At-Sensor Hyperspectral Satellite Data for Inland Water Algal Blooms. Sci. Total Environ. 2025, 1000, 180313.
30. Strahler, A.H.; Woodcock, C.E.; Smith, J.A. On the Nature of Models in Remote Sensing. Remote Sens. Environ. 1986, 20, 121–139.
31. Bourke, P. Frequency Synthesis of Landscapes (and Clouds). Available online: https://paulbourke.net/fractals/noise/ (accessed on 3 June 2025).
32. Wang, Y.; Azam, A.; Wilson, M.C.; Neville, A.; Morina, A. Generating Fractal Rough Surfaces with the Spectral Representation Method. In Proceedings of the Institution of Mechanical Engineers, Part J: Journal of Engineering Tribology; SAGE Publications: London, UK, 2021.
33. Müller, S.; Schüler, L.; Zech, A.; Heße, F. GSTools v1.3: A Toolbox for Geostatistical Modelling in Python. Geosci. Model Dev. 2022, 15, 3161–3182.
34. Chakrabarti, A.; Zickler, T. Statistics of Real-World Hyperspectral Images. In Proceedings of the CVPR 2011; IEEE: Colorado Springs, CO, USA, 2011; pp. 193–200.
35. Meerdink, S.K.; Hook, S.J.; Roberts, D.A.; Abbott, E.A. The ECOSTRESS Spectral Library Version 1.0. Remote Sens. Environ. 2019, 230, 111196.
36. Boggs, T. Spectral Python [Python Package]. Available online: http://spectralpython.net (accessed on 16 March 2026).
37. Schott, J.R.; Salvaggio, C.; Brown, S.D.; Rose, R.A. Incorporation of Texture in Multispectral Synthetic Image Generation Tools; Watkins, W.R., Clement, D., Eds.; SPIE: Bellingham, WA, USA, 1995; pp. 189–196.
38. Manolakis, D.G.; Marden, D.; Kerekes, J.P.; Shaw, G.A. Statistics of Hyperspectral Imaging Data; Shen, S.S., Descour, M.R., Eds.; SPIE: Bellingham, WA, USA, 2001; pp. 308–316.
39. Kokaly, R.F.; Clark, R.N.; Swayze, G.A.; Livo, K.E.; Hoefen, T.M.; Pearson, N.C.; Wise, R.A.; Benzel, W.; Lowers, H.A.; Driscoll, R.L.; et al. USGS Spectral Library Version 7; Data Series; U.S. Geological Survey: Reston, VA, USA, 2017; p. 68.
40. Rouberol, B. Haversine [Python Package]. Available online: https://github.com/mapado/haversine (accessed on 3 June 2025).
41. Anderson, K.S.; Hansen, C.W.; Holmgren, W.F.; Jensen, A.R.; Mikofski, M.A.; Driesse, A. Pvlib Python: 2023 Project Update. J. Open Source Softw. 2023, 8, 5994.
42. Holmgren, W.F.; Hansen, C.W.; Mikofski, M.A. Pvlib Python: A Python Package for Modeling Solar Energy Systems. J. Open Source Softw. 2018, 3, 884.
43. Corripio, J.G. Insolation [Python Package]. Available online: https://www.meteoexploration.com/insol/python/index.html (accessed on 16 March 2026).
44. Duffie, J.A.; Beckman, W.A. Solar Engineering of Thermal Processes, 1st ed.; Wiley: Hoboken, NJ, USA, 2013; ISBN 978-0-470-87366-3.
45. Vermote, E.F.; Tanre, D.; Deuze, J.L.; Herman, M.; Morcette, J.-J. Second Simulation of the Satellite Signal in the Solar Spectrum, 6S: An Overview. IEEE Trans. Geosci. Remote Sens. 1997, 35, 675–686.
46. Emde, C.; Buras-Schnell, R.; Kylling, A.; Mayer, B.; Gasteiger, J.; Hamann, U.; Kylling, J.; Richter, B.; Pause, C.; Dowling, T.; et al. The libRadtran Software Package for Radiative Transfer Calculations (Version 2.0.1). Geosci. Model Dev. 2016, 9, 1647–1672.
47. Mayer, B.; Kylling, A. Technical Note: The libRadtran Software Package for Radiative Transfer Calculations-Description and Examples of Use. Atmos. Chem. Phys. 2005, 5, 1855–1877.
48. Kotchenova, S.Y.; Vermote, E.F. Validation of a Vector Version of the 6S Radiative Transfer Code for Atmospheric Correction of Satellite Data. Part II. Homogeneous Lambertian and Anisotropic Surfaces. Appl. Opt. 2007, 46, 4455–4464.
49. Kotchenova, S.Y.; Vermote, E.F.; Matarrese, R.; Frank, J.; Klemm, J. Validation of a Vector Version of the 6S Radiative Transfer Code for Atmospheric Correction of Satellite Data. Part I: Path Radiance. Appl. Opt. 2006, 45, 6762–6774.
50. Kotchenova, S.Y.; Vermote, E.F.; Levy, R.; Lyapustin, A. Radiative Transfer Codes for Atmospheric Correction and Aerosol Retrieval: Intercomparison Study. Appl. Opt. 2008, 47, 2215–2226.
51. Evans, C. Statistical Comparison Between Various Atmospheric Correction Methods and the LibRadtran Package. Int. J. Remote Sens. 2002, 23, 2651–2671.
52. Obregón, M.A.; Serrano, A.; Costa, M.J.; Silva, A.M. Validation of libRadtran and SBDART Models under Different Aerosol Conditions. IOP Conf. Ser. Earth Environ. Sci. 2015, 28, 012010.
53. Govaerts, Y.; Nollet, Y.; Leroy, V. Radiative Transfer Model Comparison with Satellite Observations over CEOS Calibration Site Libya-4. Atmosphere 2022, 13, 1759.
54. Wilson, R.T. Py6S: A Python Interface to the 6S Radiative Transfer Model. Comput. Geosci. 2013, 51, 166–171.
55. Gryspeerdt, E. pyLRT [Python Package]. Available online: https://github.com/EdGrrr/pyLRT (accessed on 11 February 2025).
56. Chander, G.; Markham, B.L.; Helder, D.L. Summary of Current Radiometric Calibration Coefficients for Landsat MSS, TM, ETM+, and EO-1 ALI Sensors. Remote Sens. Environ. 2009, 113, 893–903.
57. ASTM G173-03(2020); Tables for Reference Solar Spectral Irradiances: Direct Normal and Hemispherical on 37° Tilted Surface. G03 Committee ASTM International: West Conshohocken, PA, USA, 2008.
58. Kruse, F.A.; Lefkoff, A.B.; Boardman, J.W.; Heidebrecht, K.B.; Shapiro, A.T.; Barloon, P.J.; Goetz, A.F.H. The Spectral Image Processing System (SIPS)—Interactive Visualization and Analysis of Imaging Spectrometer Data. Remote Sens. Environ. 1993, 44, 145–163.
59. Schowengerdt, R.A. Remote Sensing, Models, and Methods for Image Processing, 3rd ed.; Academic Press: Burlington, MA, USA, 2007; ISBN 978-0-12-369407-2.
60. Blonski, S.; Cao, C.; Gasser, J.; Ryan, R.; Zanoni, V.; Stanley, T. Satellite Hyperspectral Imaging Simulation. In Proceedings of the International Symposium on Spectral Sensing Research (ISSSR) 1999; US Corps of Engineers: Washington, DC, USA, 2000.
61. Gonzalez, R.C.; Woods, R.E. Digital Image Processing, 4th ed.; Global Edition; Pearson: New York, NY, USA, 2017; ISBN 978-0-13-335672-4.
62. Zhu, F.; Wang, Y.; Fan, B.; Meng, G.; Pan, C. Effective Spectral Unmixing via Robust Representation and Learning-Based Sparsity. arXiv 2014.
63. Zhu, F.; Wang, Y.; Fan, B.; Meng, G.; Xiang, S.; Pan, C. Spectral Unmixing via Data-Guided Sparsity. arXiv 2014.
64. Zhu, F.; Wang, Y.; Xiang, S.; Fan, B.; Pan, C. Structured Sparse Method for Hyperspectral Unmixing. ISPRS J. Photogramm. Remote Sens. 2014, 88, 101–118.
65. Schober, P.; Boer, C.; Schwarte, L.A. Correlation Coefficients: Appropriate Use and Interpretation. Anesth. Analg. 2018, 126, 1763–1768.
66. Wang, Z.; Bovik, A.C.; Sheikh, H.R.; Simoncelli, E.P. Image Quality Assessment: From Error Visibility to Structural Similarity. IEEE Trans. Image Process. 2004, 13, 600–612.
67. Rodarmel, C.; Shan, J. Principal Component Analysis for Hyperspectral Image Classification. Surv. Land Inf. Sci. 2002, 62, 115–122.
68. Pahlevan, N.; Mangin, A.; Balasubramanian, S.V.; Smith, B.; Alikas, K.; Arai, K.; Barbosa, C.; Bélanger, S.; Binding, C.; Bresciani, M.; et al. ACIX-Aqua: A Global Assessment of Atmospheric Correction Methods for Landsat-8 and Sentinel-2 over Lakes, Rivers, and Coastal Waters. Remote Sens. Environ. 2021, 258, 112366.
69. Zhu, F. Hyperspectral Unmixing: Ground Truth Labeling, Datasets, Benchmark Performances and Survey. arXiv 2017.
70. Ford, S.J.; Kalp, D.; McGlone, J.C.; McKeown, D.M., Jr. Preliminary Results on the Analysis of HYDICE Data for Information Fusion in Cartographic Feature Extraction. In Proceedings of the Integrating Photogrammetric Techniques with Scene Analysis and Machine Vision III; McKeown, D.M., Jr., McGlone, J.C., Jamet, O., Eds.; SPIE: Bellingham, WA, USA, 1997; Volume 3072, pp. 67–86.
71. Mitchell, P.A. Hyperspectral Digital Imagery Collection Experiment (HYDICE). In Proceedings of the Geographic Information Systems, Photogrammetry, and Geological/Geophysical Remote Sensing; Lurie, J.B., Pearson, J.J., Zilioli, E., Eds.; SPIE: Bellingham, WA, USA, 1995; Volume 2587, pp. 70–95.
72. Lehmann, M.K.; Gurlin, D.; Pahlevan, N.; Alikas, K.; Anstee, J.M.; Balasubramanian, S.V.; Barbosa, C.C.F.; Binding, C.; Bracher, A.; Bresciani, M.; et al. GLORIA-A Global Dataset of Remote Sensing Reflectance and Water Quality from Inland and Coastal Waters; Pangaea: Bremen, Germany, 2022; p. 72.
73. Lehmann, M.K.; Gurlin, D.; Pahlevan, N.; Alikas, K.; Conroy, T.; Anstee, J.; Balasubramanian, S.V.; Barbosa, C.C.F.; Binding, C.; Bracher, A.; et al. GLORIA-A Globally Representative Hyperspectral in Situ Dataset for Optical Sensing of Water Quality. Sci. Data 2023, 10, 100.
74. Hanson, N.; Manke, P.; Birkholz, S.; Mühlbauer, M.; Heine, R.; Brandes, A. Cuvis.Ai: An Open-Source, Low-Code Software Ecosystem for Hyperspectral Processing and Classification. In 2024 14th Workshop on Hyperspectral Imaging and Signal Processing: Evolution in Remote Sensing (WHISPERS); IEEE: Piscataway, NJ, USA, 2024.
75. Mao, Y.; Betters, C.H.; Evans, B.; Artlett, C.P.; Leon-Saval, S.G.; Garske, S.; Cairns, I.H.; Cocks, T.; Winter, R.; Dell, T. OpenHSI: A Complete Open-Source Hyperspectral Imaging Solution for Everyone. Remote Sens. 2022, 14, 2244.

| Parameter | Value |
|---|---|
| Sensor | AVIRIS-based |
| Bands | 224 |
| Spectral range | 370–2500 nm |
| Flight altitude | 20,000 m |
| Spatial resolution | 20 m |
| Acquisition date | 2 September 1992 |
| Acquisition time | 12:00:00 PST |
| View azimuth angle | 90° |
| View zenith angle | 5° |
| Image output | Reflectance |
| RTM | libRadtran |
| Atmospheric profile | mid-latitude summer |
| Aerosol profile | rural |
| PSF option | simplified |
| Platform speed | 206 m/s |
| Sensor FOV | 36° |
| Sensor integration time | 0.087 s |
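
Two quantities that follow directly from the listed parameters are the ground swath and the along-track image motion during one integration period. The short calculation below is a back-of-the-envelope sketch under stated assumptions: flat terrain and a nadir-centred field of view (the 5° view zenith angle is ignored), with variable names chosen for this example.

```python
import math

# Values taken from the simulation parameters listed above.
altitude_m = 20_000.0
fov_deg = 36.0
speed_m_s = 206.0
integration_s = 0.087
pixel_m = 20.0

# Swath width for a nadir-centred FOV over flat terrain (assumption).
swath_m = 2.0 * altitude_m * math.tan(math.radians(fov_deg / 2.0))

# Distance travelled by the platform during one integration period.
smear_m = speed_m_s * integration_s

# Image motion expressed as a fraction of the 20 m pixel size.
smear_fraction = smear_m / pixel_m

print(f"swath = {swath_m:.0f} m, smear = {smear_m:.2f} m "
      f"({smear_fraction:.0%} of a pixel)")
```

Under these assumptions the platform moves about 17.9 m per integration period, close to the 20 m pixel size, which indicates why an image motion PSF matters for this configuration.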

| | REF1 | REF2 |
|---|---|---|
| GT image size | 100 × 100 pixels | 200 × 200 pixels |
| Spectral bands | 224 | 224 |
| Processor | Intel® Xeon® Processor E7-8867 v3 @ 2.5 GHz | Intel® Xeon® Processor E7-8867 v3 @ 2.5 GHz |
| Cores | 64 | 64 |
| Runtime (hh:mm:ss) | 01:02:59 | 03:57:12 |
| CPU time (d-hh:mm:ss) | 2-19:10:56 | 10-16:32:00 |
| MaxRSS | 35.36 GB | 35.97 GB |

| | JR–REF1 | JR–REF2 |
|---|---|---|
| rs | 0.740 | 0.741 |
| Mean SSIM | 0.460 | 0.404 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Atarita, F.; Braun, A. HySIMU: An Open-Source Toolkit for Hyperspectral Remote Sensing Forward Modelling. Remote Sens. 2026, 18, 943. https://doi.org/10.3390/rs18060943
