ECO-DEAU: An Ecologically Constrained Deep Learning Autoencoder for Sub-Pixel Land Cover Unmixing in Arid and Semi-Arid Regions

Highlights

- ECO-DEAU significantly outperforms traditional linear and unconstrained deep learning models, achieving a maximum overall R² of 0.749 in heterogeneous zones and effectively decoupling spectrally similar classes such as impervious surfaces and bare soil.

- Embedding ecological priors into deep autoencoders effectively overcomes local optima limitations of traditional unmixing methods, ensuring both high accuracy and biophysical interpretability.

Abstract

1. Introduction

2. Materials and Methods

2.1. Study Area

2.2. Datasets

2.2.1. Multi-Temporal Remote Sensing Data

2.2.2. Topographic Elevation Data

2.2.3. Land Cover Abundance Validation Data

2.3. Ecologically Constrained Deep Autoencoder (ECO-DEAU) Method

2.3.1. Endmember Extraction and Weight Initialization

2.3.2. Architecture of Ecologically Constrained Deep Autoencoder (ECO-DEAU)

- (1) Deep Feature Encoder: The encoder compresses the input spectral vector $\mathbf{x} \in \mathbb{R}^{B}$ into a latent feature vector, where $B$ denotes the number of spectral bands in the stacked multi-seasonal dataset. To capture the non-linearity and complexity of the spectral features, a multi-layer Fully Connected Network (FCN) is employed. The capacity to learn complex non-linear spatial-spectral features has proven essential in recent deep learning applications for hyperspectral target perception and robust video tracking [52,53]. Hidden layers use the Rectified Linear Unit (ReLU) activation function to strengthen the network’s non-linear feature representation, and Batch Normalization (BN) layers are interleaved between the dense layers to accelerate convergence and mitigate the vanishing gradient problem. The encoder outputs a feature vector of dimension $P$ (the number of endmembers; five land cover types in this study), which serves as a preliminary estimate of the abundances.

- (2) Abundance Physical Constraint Layer: This layer constrains the encoder’s output so that the unmixing results remain physically interpretable. Spectral unmixing requires the estimated abundance vector $\mathbf{a} = [a_1, \dots, a_P]^{\top}$ to satisfy two physical conditions, the Abundance Non-negativity Constraint (ANC) and the Abundance Sum-to-One Constraint (ASC), defined as:

  $$a_i \ge 0, \quad i = 1, \dots, P \quad \text{(ANC)}, \qquad \sum_{i=1}^{P} a_i = 1 \quad \text{(ASC)}$$

- (3) Physics-Driven Decoder: The decoder maps the low-dimensional abundance vector $\mathbf{a}$ back to the high-dimensional spectral space to reconstruct the original pixel $\mathbf{x}$. It is constructed in strict accordance with the Linear Mixing Model (LMM) assumption and implemented as a single bias-free linear layer, mathematically equivalent to a matrix multiplication:

  $$\hat{\mathbf{x}} = \mathbf{E}\mathbf{a}$$

  where $\mathbf{E} \in \mathbb{R}^{B \times P}$ is the spectral endmember matrix. Crucially, the weights of this decoder matrix are initialized using the multi-temporal spectral profiles of the five land cover types extracted in Section 2.3.1.
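The three components above can be sketched as a single forward pass. This is a minimal NumPy illustration under stated assumptions, not the authors' implementation: the band count `B = 40` and the hidden widths are placeholders, the Dense → BN → ReLU ordering is assumed, softmax is used as one common way to satisfy ANC and ASC (the text does not name the mechanism), and random arrays stand in for trained weights and for the endmember profiles from Section 2.3.1.

```python
import numpy as np

rng = np.random.default_rng(0)

B = 40             # number of stacked spectral bands: placeholder, NOT the paper's value
P = 5              # number of endmembers (five land cover types, per the text)
HIDDEN = (64, 32)  # hidden layer widths: illustrative assumption


def batch_norm(h, eps=1e-5):
    """Normalize each feature with batch statistics (no learned scale or shift)."""
    return (h - h.mean(axis=0)) / np.sqrt(h.var(axis=0) + eps)


def encoder(x, weights, biases):
    """Fully connected encoder: Dense -> BN -> ReLU per hidden layer, then Dense to P logits."""
    h = x
    for W, b in zip(weights[:-1], biases[:-1]):
        h = np.maximum(batch_norm(h @ W + b), 0.0)
    return h @ weights[-1] + biases[-1]


def constrain(logits):
    """Softmax (assumed mechanism): outputs are non-negative (ANC) and sum to one (ASC)."""
    z = logits - logits.max(axis=1, keepdims=True)  # shift for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=1, keepdims=True)


def decoder(a, E):
    """Bias-free linear layer implementing x_hat = E a, with E of shape (B, P)."""
    return a @ E.T


# Random stand-ins for learned encoder parameters.
sizes = (B, *HIDDEN, P)
weights = [rng.normal(0.0, 0.1, (m, n)) for m, n in zip(sizes[:-1], sizes[1:])]
biases = [np.zeros(n) for n in sizes[1:]]

# Random stand-in for the endmember matrix; in the described method it would be
# initialized from the extracted multi-temporal endmember spectra.
E = rng.uniform(0.0, 1.0, (B, P))

x = rng.uniform(0.0, 1.0, (8, B))           # batch of 8 synthetic pixels
a = constrain(encoder(x, weights, biases))  # abundances, shape (8, P)
x_hat = decoder(a, E)                       # reconstructions, shape (8, B)

print(a.shape, x_hat.shape)
```

Because the decoder has no bias and no activation, each column of `E` can be read directly as one endmember spectrum, which is what keeps the reconstruction consistent with the linear mixing assumption. Training (backpropagation and the loss terms) is omitted here and would normally be handled by a deep learning framework.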

2.3.3. Objective Functions

2.4. Estimating Abundance from Sentinel-2 Using ECO-DEAU

2.5. Comparison Methods

3. Results

3.1. Comparison with Baseline Methods

3.1.1. Endmember Spectral Visualization

3.1.2. Quantitative Comparison of Unmixing Accuracy Between the Proposed ECO-DEAU Model and the Baseline AE Models

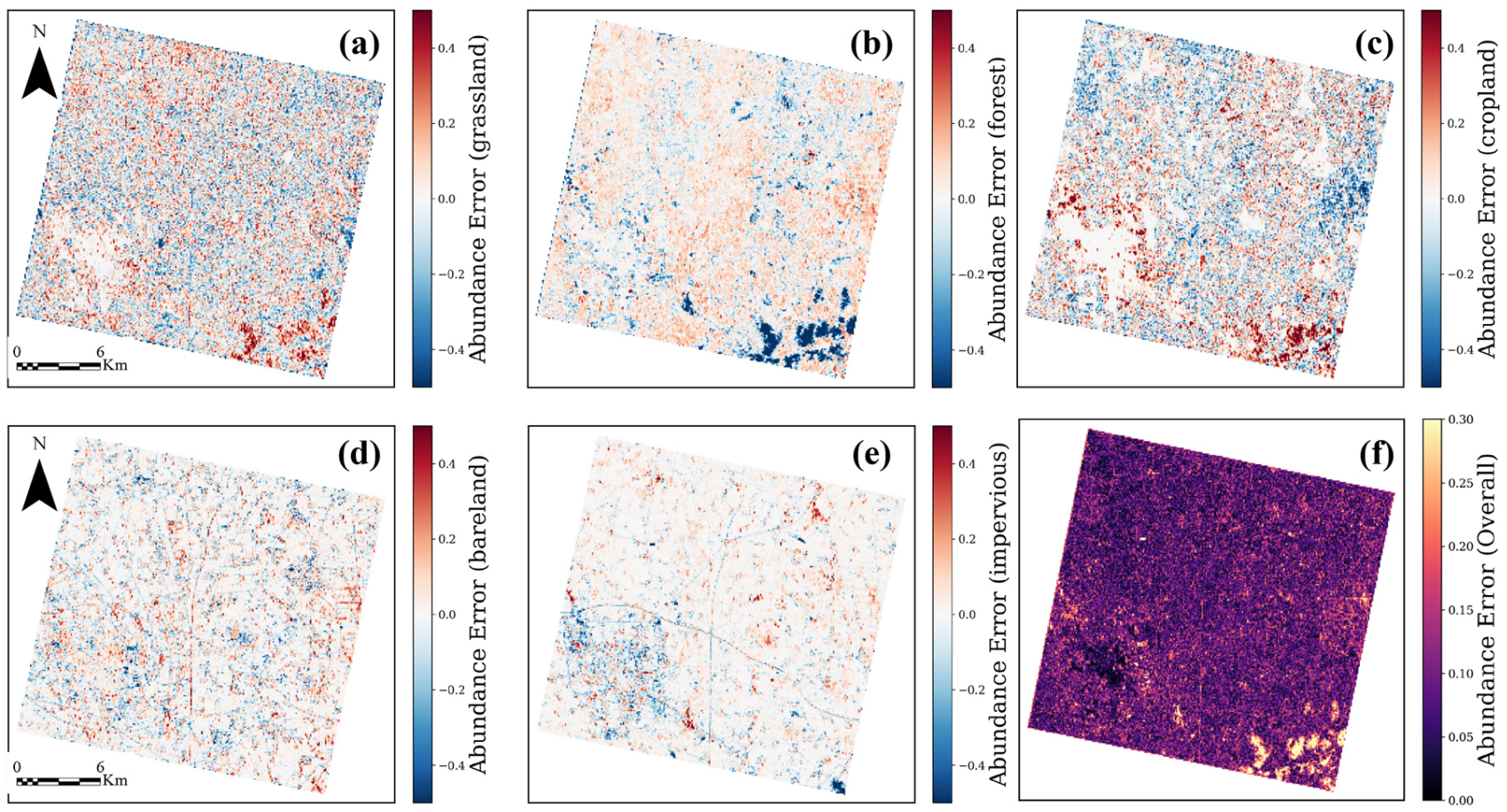

3.2. Accuracy Assessment with GF-2 Imagery Abundance

3.2.1. Accuracy Assessment in Urban-Mountain Transition Zone, Hohhot

3.2.2. Accuracy Assessment in Agricultural Aggregation Area, Bayannur

3.3. Spatial Distribution Patterns of Land Cover Abundances in the Study Area

4. Discussion

4.1. Reliability of Data and Validation Strategy

4.2. Reliability of Model Architecture and Ecological Constraints

4.3. Transferability of ECO-DEAU

4.4. Uncertainties and Limitations

4.5. Future Work

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Reynolds, J.F.; Smith, D.M.S.; Lambin, E.F.; Turner, B.L.; Mortimore, M.; Batterbury, S.P.J.; Downing, T.E.; Dowlatabadi, H.; Fernández, R.J.; Herrick, J.E.; et al. Global Desertification: Building a Science for Dryland Development. Science 2007, 316, 847–851.

- Poulter, B.; Frank, D.; Ciais, P.; Myneni, R.B.; Andela, N.; Bi, J.; Broquet, G.; Canadell, J.G.; Chevallier, F.; Liu, Y.Y.; et al. Contribution of Semi-Arid Ecosystems to Interannual Variability of the Global Carbon Cycle. Nature 2014, 509, 600–603.

- Ahlström, A.; Raupach, M.R.; Schurgers, G.; Smith, B.; Arneth, A.; Jung, M.; Reichstein, M.; Canadell, J.G.; Friedlingstein, P.; Jain, A.K.; et al. The Dominant Role of Semi-Arid Ecosystems in the Trend and Variability of the Land CO2 Sink. Science 2015, 348, 895–899.

- Huang, J.; Yu, H.; Guan, X.; Wang, G.; Guo, R. Accelerated Dryland Expansion under Climate Change. Nat. Clim. Change 2016, 6, 166–171.

- Huang, J.; Li, Y.; Fu, C.; Chen, F.; Fu, Q.; Dai, A.; Shinoda, M.; Ma, Z.; Guo, W.; Li, Z.; et al. Dryland Climate Change: Recent Progress and Challenges. Rev. Geophys. 2017, 55, 719–778.

- Chen, C.; Park, T.; Wang, X.; Piao, S.; Xu, B.; Chaturvedi, R.K.; Fuchs, R.; Brovkin, V.; Ciais, P.; Fensholt, R.; et al. China and India Lead in Greening of the World through Land-Use Management. Nat. Sustain. 2019, 2, 122–129.

- Li, B.; Liu, D.; Yu, E.; Wang, L. Warming-and-Wetting Trend over the China’s Drylands: Observational Evidence and Future Projection. Glob. Environ. Change 2024, 86, 102826.

- Bryan, B.A.; Gao, L.; Ye, Y.; Sun, X.; Connor, J.D.; Crossman, N.D.; Stafford-Smith, M.; Wu, J.; He, C.; Yu, D.; et al. China’s Response to a National Land-System Sustainability Emergency. Nature 2018, 559, 193–204.

- Lu, F.; Hu, H.; Sun, W.; Zhu, J.; Liu, G.; Zhou, W.; Zhang, Q.; Shi, P.; Liu, X.; Wu, X.; et al. Effects of National Ecological Restoration Projects on Carbon Sequestration in China from 2001 to 2010. Proc. Natl. Acad. Sci. USA 2018, 115, 4039–4044.

- Fang, J.; Yu, G.; Liu, L.; Hu, S.; Chapin, F.S. Climate Change, Human Impacts, and Carbon Sequestration in China. Proc. Natl. Acad. Sci. USA 2018, 115, 4015–4020.

- Li, L.; Liu, K.; Wang, S.; Li, H.; Bo, Y.; Li, X. Mapping Irrigated Cropland at 30 m Spatial Resolution in Northern China over the Past Three Decades. GIScience Remote Sens. 2025, 62, 2563394.

- Aguiar, M.R.; Sala, O.E. Patch Structure, Dynamics and Implications for the Functioning of Arid Ecosystems. Trends Ecol. Evol. 1999, 14, 273–277.

- Keshava, N.; Mustard, J.F. Spectral Unmixing. IEEE Signal Process. Mag. 2002, 19, 44–57.

- Small, C. The Landsat ETM+ Spectral Mixing Space. Remote Sens. Environ. 2004, 93, 1–17.

- Foody, G.M. Status of Land Cover Classification Accuracy Assessment. Remote Sens. Environ. 2002, 80, 185–201.

- DeFries, R.S.; Field, C.B.; Fung, I.; Collatz, G.J.; Bounoua, L. Combining Satellite Data and Biogeochemical Models to Estimate Global Effects of Human-induced Land Cover Change on Carbon Emissions and Primary Productivity. Glob. Biogeochem. Cycles 1999, 13, 803–815.

- Liu, X.; Wang, S.; Zhang, X.; Zhen, L.; Ma, C.; Naing, S.Y.; Liu, K.; Li, H. Monitoring Spatiotemporal Dynamics of Farmland Abandonment and Recultivation Using Phenological Metrics. Land 2025, 14, 1745.

- Smith, M.O.; Ustin, S.L.; Adams, J.B.; Gillespie, A.R. Vegetation in Deserts: I. A Regional Measure of Abundance from Multispectral Images. Remote Sens. Environ. 1990, 31, 1–26.

- Elmore, A.J.; Mustard, J.F.; Manning, S.J.; Lobell, D.B. Quantifying Vegetation Change in Semiarid Environments: Precision and Accuracy of Spectral Mixture Analysis and the Normalized Difference Vegetation Index. Remote Sens. Environ. 2000, 73, 87–102.

- Nascimento, J.M.P.; Dias, J.M.B. Vertex Component Analysis: A Fast Algorithm to Unmix Hyperspectral Data. IEEE Trans. Geosci. Remote Sens. 2005, 43, 898–910.

- Winter, M.E. N-FINDR: An Algorithm for Fast Autonomous Spectral End-Member Determination in Hyperspectral Data. In Imaging Spectrometry V; SPIE: Bellingham, WA, USA, 1999; Volume 3753, pp. 266–275.

- Nonlinear Unmixing of Hyperspectral Images: Models and Algorithms. Available online: https://xplorestaging.ieee.org/document/6678284 (accessed on 30 January 2026).

- Heylen, R.; Parente, M.; Gader, P. A Review of Nonlinear Hyperspectral Unmixing Methods. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2014, 7, 1844–1868.

- Zhao, D.; Hu, B.; Jiang, W.; Zhong, W.; Arun, P.V.; Cheng, K.; Zhao, Z.; Zhou, H. Hyperspectral Video Tracker based on Spectral Difference Matching Reduction and Deep Spectral Target Perception Features. Opt. Lasers Eng. 2025, 194, 109124.

- Su, Y.; Zhu, Z.; Gao, L.; Plaza, A.; Li, P.; Sun, X.; Xu, X. DAAN: A deep autoencoder-based augmented network for blind multilinear hyperspectral unmixing. IEEE Trans. Geosci. Remote Sens. 2024, 62, 5512715.

- Zhang, M.; Yang, M.; Xie, H.; Yue, P.; Zhang, W.; Jiao, Q.; Xu, L.; Tan, X. A global spatial-spectral feature fused autoencoder for nonlinear hyperspectral unmixing. Remote Sens. 2024, 16, 3149.

- Mantripragada, K.; Qureshi, F.Z. Hyperspectral pixel unmixing with latent dirichlet variational autoencoder. IEEE Trans. Geosci. Remote Sens. 2024, 62, 5507112.

- Su, Y.; Li, J.; Plaza, A.; Marinoni, A.; Gamba, P.; Chakravortty, S. DAEN: Deep Autoencoder Networks for Hyperspectral Unmixing. IEEE Trans. Geosci. Remote Sens. 2019, 57, 4309–4321.

- Palsson, B.; Ulfarsson, M.O.; Sveinsson, J.R. Convolutional Autoencoder for Spectral–Spatial Hyperspectral Unmixing. IEEE Trans. Geosci. Remote Sens. 2021, 59, 535–549.

- Chen, J.; Zhao, M.; Wang, X.; Richard, C.; Rahardja, S. Integration of physics-based and data-driven models for hyperspectral image unmixing: A summary of current methods. IEEE Signal Process. Mag. 2023, 40, 61–74.

- Zhao, M.; Chen, J.; Dobigeon, N. AE-RED: A hyperspectral unmixing framework powered by deep autoencoder and regularization by denoising. IEEE Trans. Geosci. Remote Sens. 2024, 62, 5512115.

- Hong, D.; Gao, L.; Yao, J.; Yokoya, N.; Chanussot, J.; Heiden, U.; Zhang, B. Endmember-guided unmixing network (EGU-Net): A general deep learning framework for self-supervised hyperspectral unmixing. IEEE Trans. Neural Netw. Learn. Syst. 2021, 33, 6518–6531.

- Bhatt, J.S.; Joshi, M.V. Deep Learning in Hyperspectral Unmixing: A Review. In Proceedings of the IGARSS 2020 - 2020 IEEE International Geoscience and Remote Sensing Symposium; IEEE: Piscataway, NJ, USA, 2020; pp. 2189–2192.

- Advances in Nonlinear Spectral Unmixing of Hyperspectral Images-All Databases. Available online: https://webofscience.clarivate.cn/wos/alldb/full-record/CSCD:4934739 (accessed on 21 May 2024).

- Dynamic Vegetation Responses to Climate and Land Use Changes over the Inner Mongolia Reach of the Yellow River Basin, China. Available online: https://www.mdpi.com/2072-4292/15/14/3531 (accessed on 30 January 2026).

- Yang, J.; Jia, L.; Hao, J.; Luo, Q.; Chi, W.; Wang, Y.; Zheng, H.; Yuan, R.; Na, Y. Temporal and Spatial Variation Characteristics of the Ecosystem in the Inner Mongolia Section of the Yellow River Basin. Atmosphere 2024, 15, 827.

- Zhang, H.; Zhang, J.; Lv, Z.; Yao, L.; Zhang, N.; Zhang, Q. Spatio-Temporal Assessment of Landscape Ecological Risk and Associated Drivers: A Case Study of the Yellow River Basin in Inner Mongolia. Land 2023, 12, 1114.

- Chen, Q.; Zhu, M.; Zhang, C.; Zhou, Q. The Driving Effect of Spatial-Temporal Difference of Water Resources Carrying Capacity in the Yellow River Basin. J. Clean. Prod. 2023, 388, 135709.

- Liu, J.; Zhang, Z.; Xu, X.; Kuang, W.; Zhou, W.; Zhang, S.; Li, R.; Yan, C.; Yu, D.; Wu, S.; et al. Spatial Patterns and Driving Forces of Land Use Change in China during the Early 21st Century. J. Geogr. Sci. 2010, 20, 483–494.

- Drusch, M.; Del Bello, U.; Carlier, S.; Colin, O.; Fernandez, V.; Gascon, F.; Hoersch, B.; Isola, C.; Laberinti, P.; Martimort, P.; et al. Sentinel-2: ESA’s Optical High-Resolution Mission for GMES Operational Services. Remote Sens. Environ. 2012, 120, 25–36.

- Korhonen, L.; Hadi; Packalen, P.; Rautiainen, M. Comparison of Sentinel-2 and Landsat 8 in the Estimation of Boreal Forest Canopy Cover and Leaf Area Index. Remote Sens. Environ. 2017, 195, 259–274.

- Shen, B.; Guo, J.; Li, Z.; Chen, J.; Fang, W.; Kussainova, M.; Amarjargal, A.; Pulatov, A.; Yan, R.; Anenkhonov, O.A.; et al. Comparative Verification of Leaf Area Index Products for Different Grassland Types in Inner Mongolia, China. Remote Sens. 2023, 15, 4736.

- Zhu, Z.; Woodcock, C.E. Object-Based Cloud and Cloud Shadow Detection in Landsat Imagery. Remote Sens. Environ. 2012, 118, 83–94.

- Zhao, D.; Wang, M.; Huang, K.; Zhong, W.; Arun, P.V.; Li, Y.; Asano, Y.; Wu, L.; Zhou, H. OCSCNet-Tracker: Hyperspectral Video Tracker based on Octave Convolution and Spatial-Spectral Capsule Network. Remote Sens. 2025, 17, 693.

- Zhu, Z.; Wang, S.; Woodcock, C.E. Improvement and Expansion of the Fmask Algorithm: Cloud, Cloud Shadow, and Snow Detection for Landsats 4–7, 8, and Sentinel 2 Images. Remote Sens. Environ. 2015, 159, 269–277.

- Zhao, D.; Tang, L.; Arun, P.V.; Asano, Y.; Zhang, L.; Xiong, Y.; Tao, X.; Hu, J. City-Scale Distance Estimation via Near-Infrared Trispectral Light Extinction in Bad Weather. Infrared Phys. Technol. 2023, 128, 104507.

- Main-Knorn, M.; Pflug, B.; Louis, J.; Debaecker, V.; Müller-Wilm, U.; Gascon, F. Sen2Cor for Sentinel-2. In Proceedings of the Image and Signal Processing for Remote Sensing XXIII; SPIE: Bellingham, WA, USA, 2017; Volume 10427, pp. 37–48.

- Immitzer, M.; Vuolo, F.; Atzberger, C. First Experience with Sentinel-2 Data for Crop and Tree Species Classifications in Central Europe. Remote Sens. 2016, 8, 166.

- Griffiths, P.; van der Linden, S.; Kuemmerle, T.; Hostert, P. A Pixel-Based Landsat Compositing Algorithm for Large Area Land Cover Mapping. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2013, 6, 2088–2101.

- Farr, T.G.; Rosen, P.A.; Caro, E.; Crippen, R.; Duren, R.; Hensley, S.; Kobrick, M.; Paller, M.; Rodriguez, E.; Roth, L.; et al. The Shuttle Radar Topography Mission. Rev. Geophys. 2007, 45, RG2004.

- Wu, Q.; Zhong, R.; Zhao, W.; Song, K.; Du, L. Land-Cover Classification Using GF-2 Images and Airborne Lidar Data Based on Random Forest. Int. J. Remote Sens. 2019, 40, 2410–2426.

- Pettorelli, N.; Vik, J.O.; Mysterud, A.; Gaillard, J.-M.; Tucker, C.J.; Stenseth, N.C. Using the Satellite-Derived NDVI to Assess Ecological Responses to Environmental Change. Trends Ecol. Evol. 2005, 20, 503–510.

- Zhao, D.; Zhong, W.; Ge, M.; Jiang, W.; Zhu, X.; Arun, P.V.; Zhou, H. SiamBSI: Hyperspectral Video Tracker based on Band Correlation Grouping and Spatial-Spectral Information Interaction. Infrared Phys. Technol. 2025, 151, 106063.

- Zhao, D.; Xu, X.; You, M.; Arun, P.V.; Zhao, Z.; Ren, J.; Wu, L.; Zhou, H. Local Sub-block Contrast and Spatial-spectral Gradient Features Fusion for Hyperspectral Anomaly Detection. Remote Sens. 2025, 17, 695.

- Wang, J.; Xu, J. A Spectral-Spatial Attention Autoencoder Network for Hyperspectral Unmixing. In Proceedings of the IGARSS 2023 - 2023 IEEE International Geoscience and Remote Sensing Symposium; IEEE: Piscataway, NJ, USA, 2023; pp. 7519–7522.

- Jin, D.; Yang, B. Graph attention convolutional autoencoder-based unsupervised nonlinear unmixing for hyperspectral images. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2023, 16, 7896–7906.

- Ozkan, S.; Kaya, B.; Akar, G.B. Endnet: Sparse autoencoder network for endmember extraction and hyperspectral unmixing. IEEE Trans. Geosci. Remote Sens. 2018, 57, 482–496.

- Dhaini, M.; Berar, M.; Honeine, P.; Van Exem, A. End-to-end convolutional autoencoder for nonlinear hyperspectral unmixing. Remote Sens. 2022, 14, 3341.

- Zhao, M.; Wang, M.; Chen, J.; Rahardja, S. Hyperspectral unmixing via deep autoencoder networks for a generalized linear-mixture/nonlinear-fluctuation model. arXiv 2019, arXiv:1904.13017.

- Borsoi, R.A.; Imbiriba, T.; Bermudez, J.C.M.; Richard, C.; Chanussot, J.; Drumetz, L.; Tourneret, J.L.; Zare, A.; Jutten, C. Spectral variability in hyperspectral data unmixing: A comprehensive review. IEEE Geosci. Remote Sens. Mag. 2021, 9, 223–270.

- Somers, B.; Asner, G.P.; Tits, L.; Coppin, P. Endmember variability in spectral mixture analysis: A review. Remote Sens. Environ. 2011, 115, 1603–1616.

| Sample Zone | Mixing Type | Location |
|---|---|---|
| PEZ_1 | Forest | Daqingshan Core Reserve |
| PEZ_2 | Cropland | Core of Hetao Irrigation District |
| PEZ_3 | Impervious | Baotou Old City District |
| PEZ_4 | Bareland | Hinterland of Kubuqi Desert |
| PEZ_5 | Grassland | Daqingshan Mountain Meadow |
| ECMZ_1 | Forest/Grassland/Bareland | Southern Foothills of Daqingshan |
| ECMZ_2 | Cropland/Grassland/Bareland | Hetao Irrigation District |
| ECMZ_3 | Bareland/Grassland/Cropland | Eastern Edge of Ulan Buh Desert |
| ECMZ_4 | Impervious/Cropland/Bareland | Urban-Rural Fringe of Hohhot |
| ECMZ_5 | Bareland/Grassland | Mining Area of Daqingshan |

| Land Cover Type | Model | R² | RMSE | MAE |
|---|---|---|---|---|
| Grassland | ECO-DEAU | 0.623 | 0.179 | 0.134 |
| Grassland | Baseline AE | 0.569 | 0.192 | 0.146 |
| Grassland | Attention-AE | 0.553 | 0.196 | 0.155 |
| Grassland | Sparse-AE | 0.541 | 0.198 | 0.154 |
| Grassland | 1DCNN-AE | 0.311 | 0.243 | 0.191 |
| Grassland | FCLS | 0.287 | 0.247 | 0.195 |
| Forest | ECO-DEAU | 0.593 | 0.157 | 0.102 |
| Forest | Baseline AE | 0.561 | 0.163 | 0.106 |
| Forest | Attention-AE | 0.628 | 0.136 | 0.080 |
| Forest | Sparse-AE | 0.623 | 0.137 | 0.080 |
| Forest | 1DCNN-AE | 0.441 | 0.167 | 0.098 |
| Forest | FCLS | 0.456 | 0.165 | 0.096 |
| Cropland | ECO-DEAU | 0.802 | 0.075 | 0.034 |
| Cropland | Baseline AE | 0.766 | 0.081 | 0.037 |
| Cropland | Attention-AE | 0.745 | 0.084 | 0.041 |
| Cropland | Sparse-AE | 0.777 | 0.079 | 0.039 |
| Cropland | 1DCNN-AE | 0.537 | 0.114 | 0.050 |
| Cropland | FCLS | 0.536 | 0.114 | 0.050 |
| Bareland | ECO-DEAU | 0.592 | 0.125 | 0.087 |
| Bareland | Baseline AE | 0.551 | 0.130 | 0.083 |
| Bareland | Attention-AE | 0.501 | 0.138 | 0.093 |
| Bareland | Sparse-AE | 0.496 | 0.138 | 0.094 |
| Bareland | 1DCNN-AE | 0.314 | 0.162 | 0.109 |
| Bareland | FCLS | 0.303 | 0.163 | 0.111 |
| Impervious | ECO-DEAU | 0.825 | 0.090 | 0.049 |
| Impervious | Baseline AE | 0.779 | 0.101 | 0.057 |
| Impervious | Attention-AE | 0.717 | 0.115 | 0.067 |
| Impervious | Sparse-AE | 0.701 | 0.118 | 0.069 |
| Impervious | 1DCNN-AE | 0.498 | 0.153 | 0.088 |
| Impervious | FCLS | 0.486 | 0.155 | 0.089 |
| Overall | ECO-DEAU | 0.749 | 0.131 | 0.079 |
| Overall | Baseline AE | 0.645 | 0.133 | 0.086 |
| Overall | Attention-AE | 0.708 | 0.138 | 0.086 |
| Overall | Sparse-AE | 0.704 | 0.139 | 0.087 |
| Overall | 1DCNN-AE | 0.548 | 0.172 | 0.107 |
| Overall | FCLS | 0.542 | 0.174 | 0.107 |
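The accuracy metrics in the table above can be illustrated with a short sketch. It assumes RMSE and MAE are computed over per-pixel differences between estimated and reference abundances, and it also computes a coefficient of determination (R²), assumed here to be the table's first metric column. The function name `abundance_metrics` and the toy values are hypothetical and unrelated to the reported results.

```python
import numpy as np


def abundance_metrics(reference, estimate):
    """Return R2, RMSE and MAE between reference and estimated abundances."""
    reference = np.asarray(reference, dtype=float)
    estimate = np.asarray(estimate, dtype=float)
    err = estimate - reference

    rmse = np.sqrt(np.mean(err ** 2))  # root mean square error
    mae = np.mean(np.abs(err))         # mean absolute error

    # Coefficient of determination: 1 - residual sum of squares / total sum of squares.
    # This definition is an assumption; the source does not state which R2 variant is used.
    ss_res = np.sum(err ** 2)
    ss_tot = np.sum((reference - reference.mean()) ** 2)
    r2 = 1.0 - ss_res / ss_tot

    return {"R2": r2, "RMSE": rmse, "MAE": mae}


# Toy abundances for one land cover class over four pixels (made-up values).
ref = [0.2, 0.5, 0.3, 0.8]
est = [0.25, 0.4, 0.35, 0.7]
metrics = abundance_metrics(ref, est)
print(metrics)
```

Since MAE can never exceed RMSE for the same set of errors, the two error columns offer a quick sanity check when reading each row of the table.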

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Zhou, L.; Li, L.; Li, D.; Bo, Y.; Li, H.; Liu, K.; Wang, S. ECO-DEAU: An Ecologically Constrained Deep Learning Autoencoder for Sub-Pixel Land Cover Unmixing in Arid and Semi-Arid Regions. Remote Sens. 2026, 18, 941. https://doi.org/10.3390/rs18060941
