A Hybrid RTM-Informed Machine Learning Framework with Crop-Specific Canopy Structural Parameterization for Crop Fractional Vegetation Cover Estimation

Highlights

- A physically consistent crop FVC retrieval framework was developed by dynamically parameterizing the G(0) function within a PROSAIL-based inversion scheme.

- Large-scale validation across China demonstrates that the proposed method significantly improves FVC estimation accuracy for four major crops compared with SNAP and GEOV3.

- Dynamic treatment of canopy structural parameters reduces structural uncertainty in RTM-based crop FVC retrieval.

- The proposed approach provides a robust and scalable solution for high-resolution crop monitoring using Sentinel-2 imagery.

Abstract

1. Introduction

2. Data and Methods

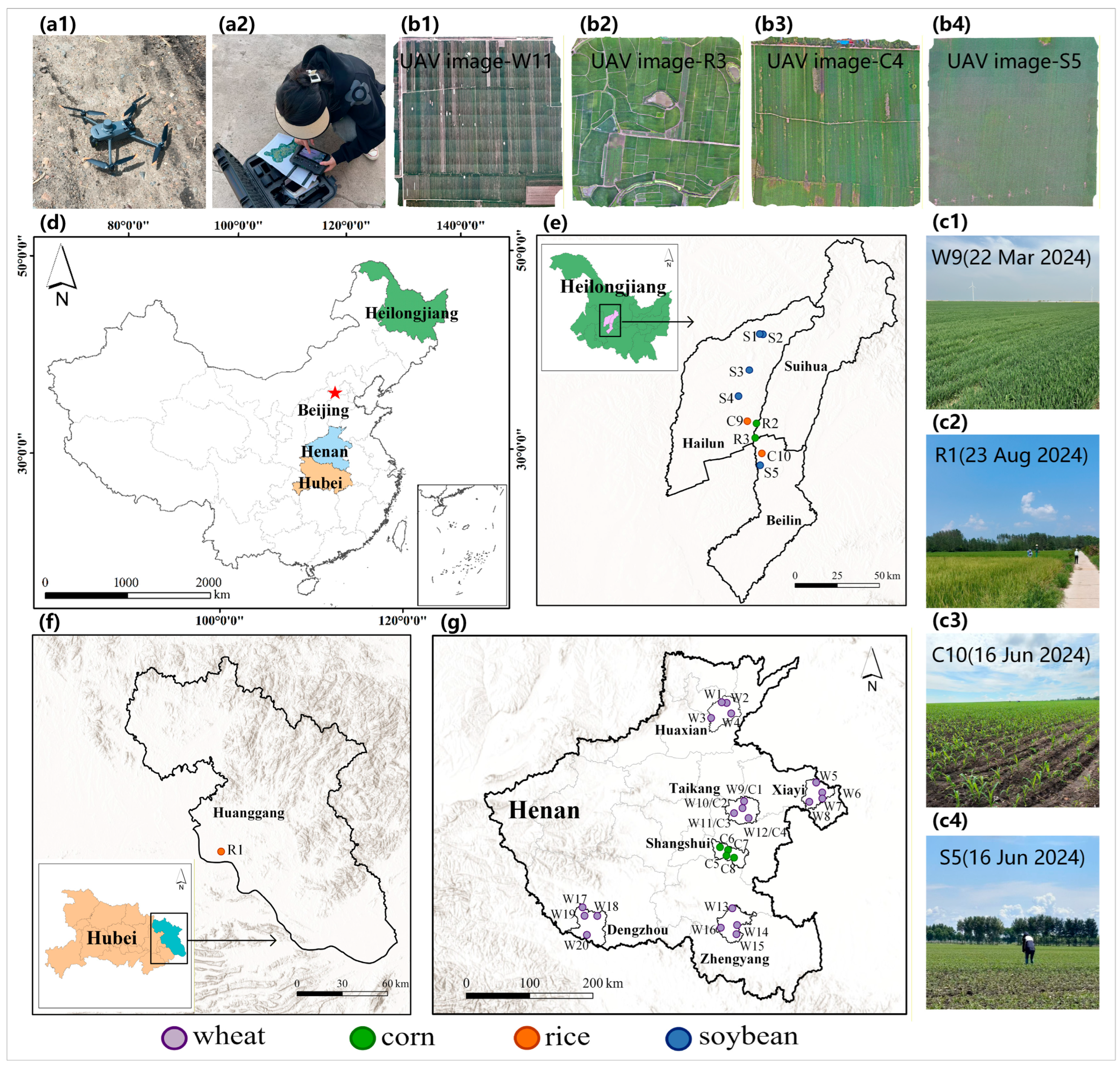

2.1. Study Area and Field Sampling

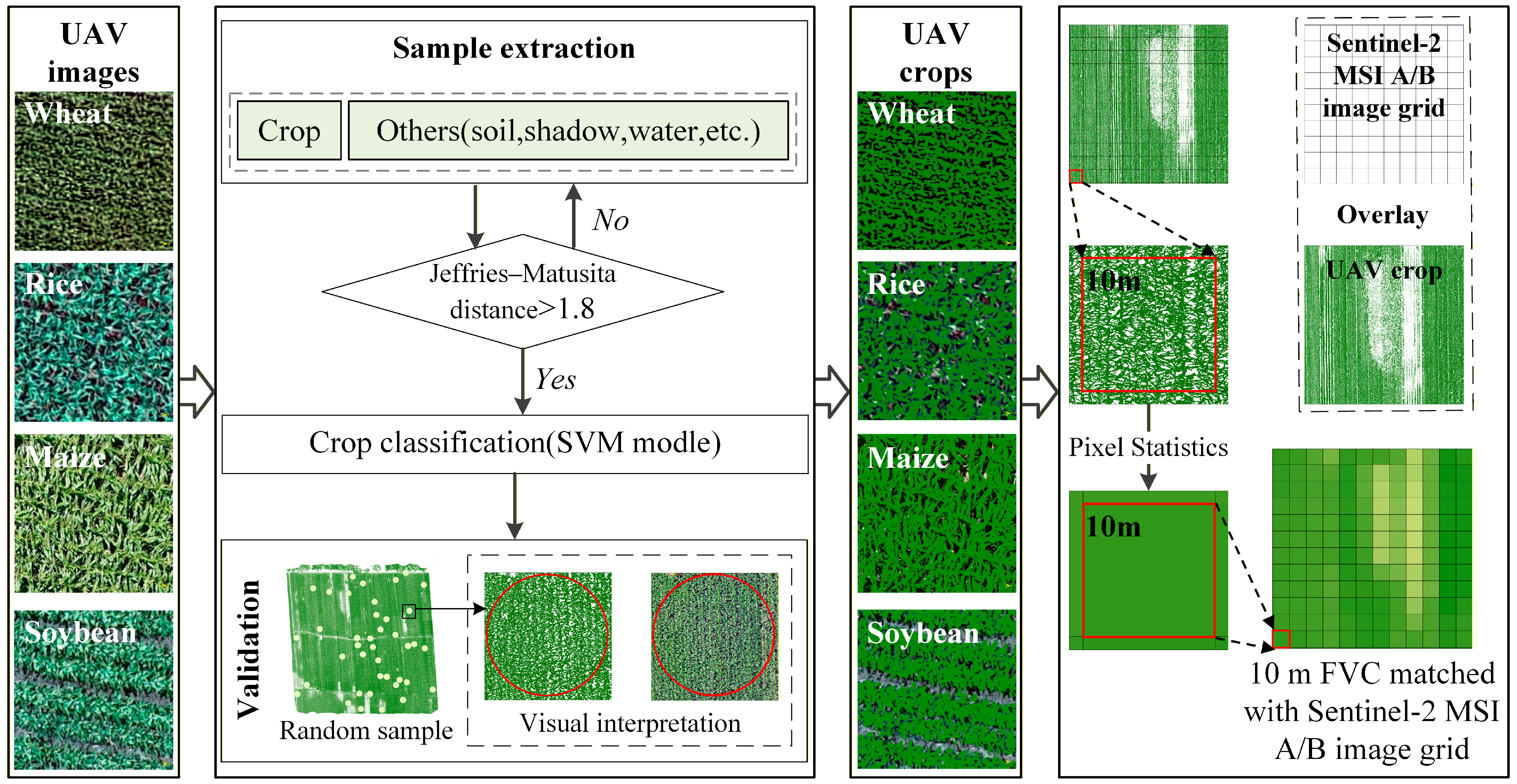

2.2. Construction of 10 m FVC Samples

2.3. Remote Sensing Imagery

2.4. A Gap Fraction-Refined Hybrid FVC Inversion Framework

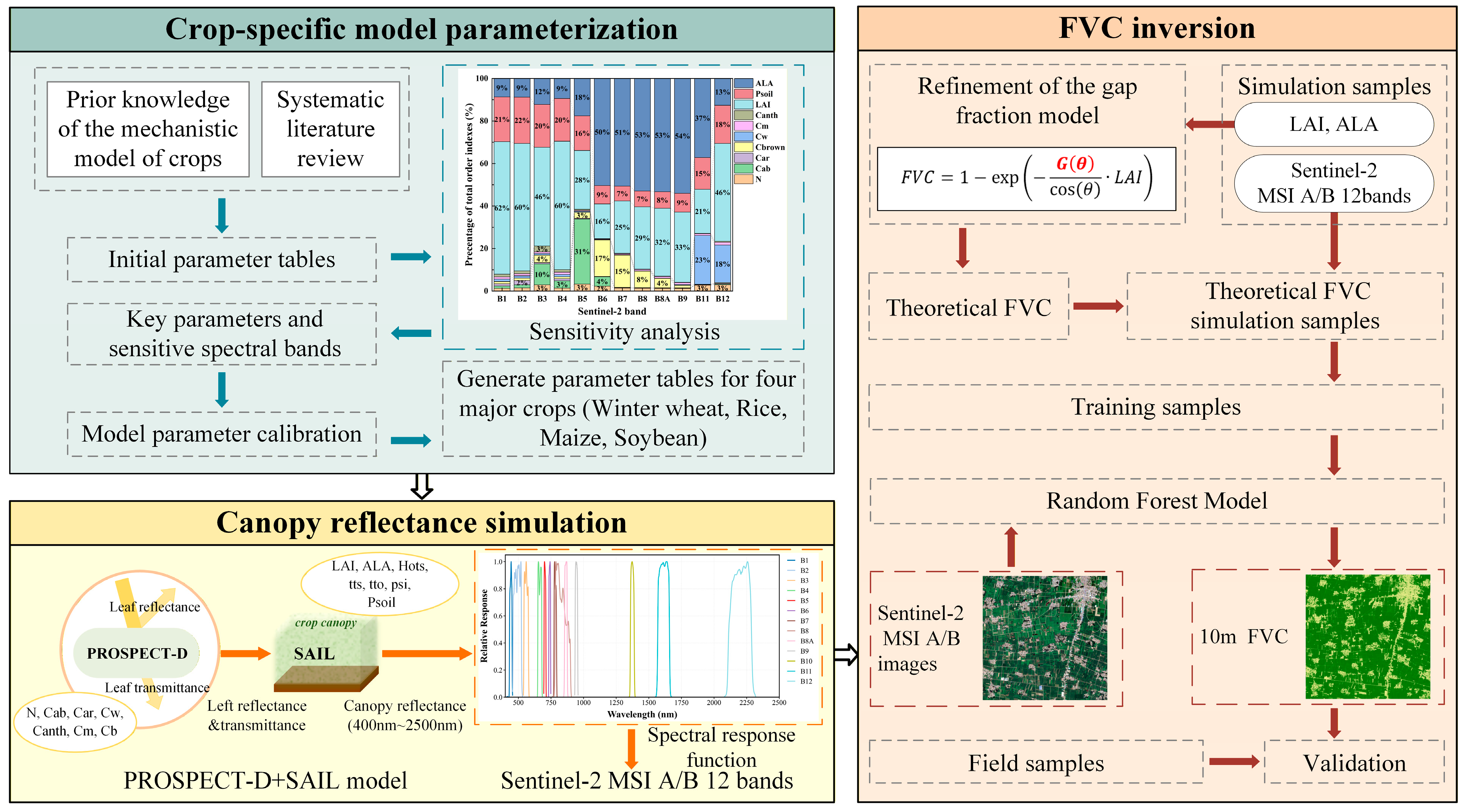

2.4.1. Crop-Specific Parameterization of the PROSAIL-D Model

2.4.2. Canopy Reflectance Simulation

2.4.3. Refinement of the Gap Fraction Model

2.4.4. RTM-Informed Random Forest Training for CropFVC Retrieval

2.5. Model Evaluation

3. Results

3.1. Model Validation Using Field Samples of 2024

3.2. Performance Comparison

3.2.1. Comparison with SNAP FVC

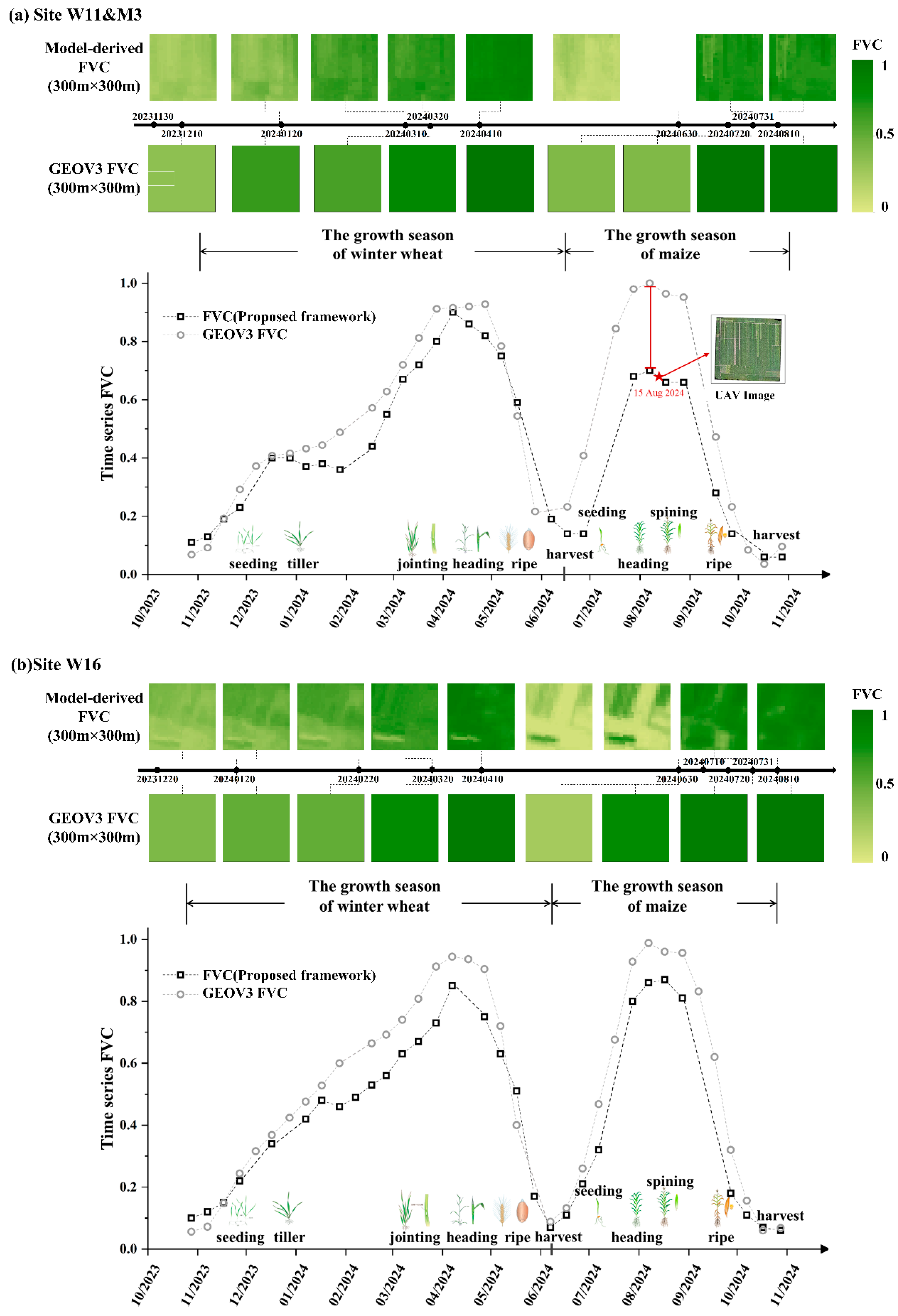

3.2.2. Comparison with GEOV3 FVC

4. Discussion

4.1. Impact of Canopy Structural Refinement on CropFVC Estimation

- (1) Crop-specific parameterization of the RTM. This study constructed differentiated PROSAIL input parameter tables for each crop by incorporating prior agronomic knowledge and systematically reviewing previous research. Earlier work has shown that tailoring the physical model parameterization to a specific vegetation type improves the forward-modeled representation of its canopy variables. For example, Berger et al. [16] summarized typical PROSAIL-D parameter ranges for wheat, rice, maize, soybean, and sugar beet, providing important reference values for input settings. Jiao et al. [29] optimized ALA using field spectral measurements and RF, identifying mean angles of 62° for wheat and 45° for soybean, which markedly improved chlorophyll inversion accuracy. These findings underline the importance of crop-specific parameterization in producing more realistic synthetic datasets while reducing redundancy. Therefore, the PROSAIL-D parameters in our study were calibrated per crop so that canopy structure and optical traits were represented more accurately for each crop type. In this context, LAI and ALA are introduced as simulation constraints within the PROSAIL-based training process rather than as operational input variables. Here, ALA serves as a proxy for canopy angular structure, so the framework remains applicable at large scales without requiring direct structural measurements.

- (2) Crop-specific refinement of the projection function with dynamic G(0). The canopy projection function G(0) plays a significant role in FVC estimation [46] but is often oversimplified as a constant value of 0.5, which corresponds to a spherical LAD in which leaf normals are uniformly distributed, with an MLIA of approximately 57.3° [46,47]. However, studies have shown that this spherical assumption may significantly underestimate canopy transmittance [48]. In crop-specific applications, the assumption may not hold because of the diversity, seasonality, and heterogeneity of crop canopies [30].
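To make the role of G(0) concrete, the following minimal Python sketch contrasts the constant 0.5 with a value derived from ALA. It is not the refinement used in this study, whose details are not reproduced in this section. It assumes Campbell's ellipsoidal leaf angle distribution, a widely used empirical approximation linking the ellipsoidal shape parameter to the mean leaf angle, and a Beer-Lambert gap fraction at nadir. The function names (`chi_from_ala`, `g_nadir`, `fvc_from_lai`) and the `clumping` argument are hypothetical.

```python
import math


def chi_from_ala(ala_deg):
    """Ellipsoidal shape parameter chi from the mean leaf angle (degrees).

    Assumption: inverts the empirical approximation
    ALA [rad] ~= 9.65 * (3 + chi) ** -1.65.
    """
    ala_rad = math.radians(ala_deg)
    return (ala_rad / 9.65) ** (-1.0 / 1.65) - 3.0


def g_nadir(ala_deg):
    """Projection function at nadir, G(0), for an ellipsoidal LAD.

    Assumption: Campbell's approximation of the extinction coefficient,
    evaluated at a zenith angle of 0, where G(0) equals K(0).
    """
    chi = chi_from_ala(ala_deg)
    return chi / (chi + 1.774 * (chi + 1.182) ** -0.733)


def fvc_from_lai(lai, g0, clumping=1.0):
    """FVC as one minus the nadir gap fraction, 1 - exp(-G(0) * clumping * LAI)."""
    return 1.0 - math.exp(-g0 * clumping * lai)


if __name__ == "__main__":
    lai = 3.0
    # ALA values quoted in the text for wheat (62 deg) and soybean (45 deg).
    for label, ala in [("wheat", 62.0), ("soybean", 45.0)]:
        g0 = g_nadir(ala)
        print(label, round(g0, 3),
              round(fvc_from_lai(lai, g0), 3),
              round(fvc_from_lai(lai, 0.5), 3))
```

Under these assumptions, a mean angle near 57.3° gives G(0) close to, though not exactly, 0.5, while a more erect canopy gives a lower G(0) and a more planophile canopy a higher one. For the same LAI, a fixed G(0) of 0.5 therefore yields a different FVC than an ALA-dependent G(0).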

4.2. Toward Crop-Specific High-Resolution FVC Products

4.3. Limitations and Outlooks

5. Conclusions

Supplementary Materials

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Gitelson, A.A.; Kaufman, Y.J.; Stark, R.; Rundquist, D. Novel algorithms for remote estimation of vegetation fraction. Remote Sens. Environ. 2002, 80, 76–87.

- Dashpurev, B.; Dorj, M.; Phan, T.N.; Bendix, J.; Lehnert, L.W. Estimating fractional vegetation cover and aboveground biomass for land degradation assessment in eastern Mongolia steppe: Combining ground vegetation data and remote sensing. Int. J. Remote Sens. 2023, 44, 452–468.

- Fang, H.; Liu, W.; Wei, S.; Liu, X.; Myneni, R.B. New insights of global vegetation structural properties through an analysis of canopy clumping index, fractional vegetation cover, and leaf area index. Sci. Remote Sens. 2021, 4, 100027.

- Jiapaer, G.; Chen, X.; Bao, A. A comparison of methods for estimating fractional vegetation cover in arid regions. Agr. Forest Meteorol. 2011, 151, 1698–1710.

- Ye, X.; Zhu, W.; Liu, R.; He, B.; Yang, X.; Zhao, C. An automated method for estimating fractional vegetation cover from camera-based field measurements: Saturation-adaptive threshold for ExG (SATE). ISPRS J. Photogramm. Remote Sens. 2025, 229, 170–187.

- Jia, K.; Yao, Y.; Wei, X.; Gao, S.; Jiang, B.; Zhao, X. A review on fractional vegetation cover estimation using remote sensing. Adv. Earth Sci. 2013, 28, 774–782.

- Verrelst, J.; Malenovský, Z.; Van der Tol, C.; Camps-Valls, G.; Gastellu-Etchegorry, J.P.; Lewis, P.; Moreno, J. Quantifying vegetation biophysical variables from imaging spectroscopy data: A review on retrieval methods. Surv. Geophys. 2019, 40, 589–629.

- Yue, J.; Guo, W.; Yang, G.; Zhou, C.; Feng, H.; Qiao, H. Method for accurate multi-growth-stage estimation of fractional vegetation cover using unmanned aerial vehicle remote sensing. Plant Methods 2021, 17, 51.

- García-Haro, F.J.; Camacho, F.; Verger, A.; Meliá, J. Current status and potential applications of the LSA SAF suite of vegetation products. In Proceedings of the 29th EARSeL Symposium, Chania, Greece, 15–18 June 2009; pp. 15–18.

- Mu, X.; Song, W.; Gao, Z.; McVicar, T.R.; Donohue, R.J.; Yan, G. Fractional vegetation cover estimation by using multi-angle vegetation index. Remote Sens. Environ. 2018, 216, 44–56.

- Zhang, X.; Liao, C.; Li, J.; Sun, Q. Fractional vegetation cover estimation in arid and semi-arid environments using HJ-1 satellite hyperspectral data. Int. J. Appl. Earth Obs. Geoinf. 2013, 21, 506–512.

- Zeng, X.; Dickinson, R.E.; Walker, A.; Shaikh, M.; DeFries, R.S.; Qi, J. Derivation and evaluation of global 1-km fractional vegetation cover data for land modeling. J. Appl. Meteorol. 2000, 39, 826–839.

- Song, W.; Mu, X.; McVicar, T.R.; Knyazikhin, Y.; Liu, X.; Wang, L.; Yan, G. Global quasi-daily fractional vegetation cover estimated from the DSCOVR EPIC directional hotspot dataset. Remote Sens. Environ. 2022, 269, 112835.

- Filipponi, F.; Valentini, E.; Nguyen Xuan, A.; Guerra, C.A.; Wolf, F.; Andrzejak, M.; Taramelli, A. Global MODIS fraction of green vegetation cover for monitoring abrupt and gradual vegetation changes. Remote Sens. 2018, 10, 653.

- Ma, X.; Lu, L.; Ding, J.; Zhang, F.; He, B. Estimating fractional vegetation cover of row crops from high spatial resolution image. Remote Sens. 2021, 13, 3874.

- Berger, K.; Atzberger, C.; Danner, M.; D’Urso, G.; Mauser, W.; Vuolo, F.; Hank, T. Evaluation of the PROSAIL model capabilities for future hyperspectral model environments: A review study. Remote Sens. 2018, 10, 85.

- Atzberger, C. Object-based retrieval of biophysical canopy variables using artificial neural nets and radiative transfer models. Remote Sens. Environ. 2004, 93, 53–67.

- Tu, Y.; Jia, K.; Wei, X.; Yao, Y.; Xia, M.; Zhang, X.; Jiang, B. A time-efficient fractional vegetation cover estimation method using the dynamic vegetation growth information from time series GLASS FVC product. IEEE Geosci. Remote Sens. Lett. 2019, 17, 1672–1676.

- Song, D.X.; Wang, Z.; He, T.; Wang, H.; Liang, S. Estimation and validation of 30 m fractional vegetation cover over China through integrated use of Landsat 8 and Gaofen 2 data. Sci. Remote Sens. 2022, 6, 100058.

- Yang, L.; Jia, K.; Liang, S.; Liu, J.; Wang, X. Comparison of four machine learning methods for generating the GLASS fractional vegetation cover product from MODIS data. Remote Sens. 2016, 8, 682.

- Wu, Z.; Zheng, X.; Ding, Y.; Tao, Z.; Sun, Y.; Li, B.; Li, X. A Method for Retrieving Maize Fractional Vegetation Cover by Combining 3D Radiative Transfer Model and Transfer Learning. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2024, 17, 15671–15684.

- Baret, F.; Hagolle, O.; Geiger, B.; Bicheron, P.; Miras, B.; Huc, M.; Leroy, M. LAI, fAPAR and fCover CYCLOPES global products derived from VEGETATION: Part 1: Principles of the algorithm. Remote Sens. Environ. 2007, 110, 275–286.

- Baret, F.; Weiss, M.; Verger, A.; Smets, B. ATBD for LAI, FAPAR and FCover from PROBA-V Products at 300 m Resolution (GEOV3); Imagines_rp2. 1_atbd-lai, 300; INRAE: Paris, France, 2016.

- Verger, A.; Sánchez-Zapero, J.; Weiss, M.; Descals, A.; Camacho, F.; Lacaze, R.; Baret, F. GEOV2: Improved smoothed and gap filled time series of LAI, FAPAR and FCover 1 km Copernicus Global Land products. Int. J. Appl. Earth Obs. Geoinf. 2023, 123, 103479.

- Liu, D.; Jia, K.; Jiang, H.; Xia, M.; Tao, G.; Wang, B.; Li, J. Fractional vegetation cover estimation algorithm for FY-3B reflectance data based on random forest regression method. Remote Sens. 2021, 13, 2165.

- Wang, X.; Jia, K.; Liang, S.; Li, Q.; Wei, X.; Yao, Y.; Tu, Y. Estimating fractional vegetation cover from Landsat-7 ETM+ reflectance data based on a coupled radiative transfer and crop growth model. IEEE Trans. Geosci. Remote Sens. 2017, 55, 5539–5546.

- Wan, L.; Zhu, J.; Du, X.; Zhang, J.; Han, X.; Zhou, W.; Cen, H. A model for phenotyping crop fractional vegetation cover using imagery from unmanned aerial vehicles. J. Exp. Bot. 2021, 72, 4691–4707.

- Jacquemoud, S.; Verhoef, W.; Baret, F.; Bacour, C.; Zarco-Tejada, P.J.; Asner, G.P.; Ustin, S.L. PROSPECT+ SAIL models: A review of use for vegetation characterization. Remote Sens. Environ. 2009, 113, S56–S66.

- Jiao, Q.; Sun, Q.; Zhang, B.; Huang, W.; Ye, H.; Zhang, Z.; Qian, B. A random forest algorithm for retrieving canopy chlorophyll content of wheat and soybean trained with PROSAIL simulations using adjusted average leaf angle. Remote Sens. 2021, 14, 98.

- Li, S.; Fang, H. Mapping global leaf inclination angle (LIA) based on field measurement data. Earth Syst. Sci. Data Discuss. 2024, 17, 1347–1366.

- Liu, B.; Shi, Y.; Duan, Y.; Wu, W. UAV-based crops classification with joint features from orthoimage and DSM data. Int. Arch. Photogramm. Remote Sens. Spatial Inf. Sci. 2018, XLII-3, 1023–1028.

- Eskandari, R.; Mahdianpari, M.; Mohammadimanesh, F.; Salehi, B.; Brisco, B.; Homayouni, S. Meta-analysis of Unmanned Aerial Vehicle (UAV) Imagery for Agro-environmental Monitoring Using Machine Learning and Statistical Models. Remote Sens. 2020, 12, 3511.

- Weiss, M.; Baret, F.; Jay, S. S2ToolBox Level 2 Products: LAI, FAPAR, FCOVER (Version 2.1); INRAE/ESA: Paris, France, 2020.

- Li, D.; Chen, J.M.; Yu, W.; Zheng, H.; Yao, X.; Zhu, Y.; Cao, W.; Cheng, T. A chlorophyll-constrained semi-empirical model for estimating leaf area index using a red-edge vegetation index. Comput. Electron. Agric. 2024, 220, 108891.

- Weiss, M.; Baret, F. S2ToolBox Level-2 Products: LAI, FAPAR, FCOVER (ATBD); European Space Agency: Paris, France, 2016.

- Verrelst, J.; Rivera, J.P.; Veroustraete, F.; Muñoz-Marí, J.; Clevers, J.G.P.W.; Camps-Valls, G.; Moreno, J. Optical remote sensing and the retrieval of terrestrial vegetation bio-geophysical properties—A review. ISPRS J. Photogramm. Remote Sens. 2015, 108, 273–290.

- Féret, J.B.; Gitelson, A.A.; Noble, S.D.; Jacquemoud, S. PROSPECT-D: Towards modeling leaf optical properties through a complete lifecycle. Remote Sens. Environ. 2017, 193, 204–215.

- Verhoef, W. Light scattering by leaf layers with application to canopy reflectance modeling: The SAIL model. Remote Sens. Environ. 1984, 16, 125–141.

- Weiss, M.; Baret, F.; Myneni, R.; Pragnère, A.; Knyazikhin, Y. Investigation of a model inversion technique to estimate canopy biophysical variables from spectral and directional reflectance data. Agronomie 2000, 20, 3–22.

- Yang, R.; Li, S.; Zhang, B.; Jiao, Q.; Peng, D.; Yang, S.; Yu, R. A Multispectral Feature Selection Method Based on a Dual-Attention Network for the Accurate Estimation of Fractional Vegetation Cover in Winter Wheat. Remote Sens. 2024, 16, 4441.

- Monteith, J.L.; Unsworth, M.H.; Webb, A. Principles of environmental physics. Q. J. R. Meteorol. Soc. 1994, 120, 1699.

- Campbell, G.S. Extinction coefficients for radiation in plant canopies calculated using an ellipsoidal inclination angle distribution. Agr. Forest Meteorol. 1986, 36, 317–321.

- Campbell, G.S. Derivation of an angle distribution of foliage from a distribution of leaf inclination. Agr. Forest Meteorol. 1990, 49, 173–182.

- Wang, W.M.; Li, Z.L.; Su, H.B. Comparison of leaf angle distribution functions: Effects on extinction coefficient and fraction of sunlit foliage. Agr. Forest Meteorol. 2007, 143, 106–122.

- Campbell, G.S.; Norman, J.M. Environmental Biophysics, 2nd ed.; Springer: Berlin/Heidelberg, Germany, 1998.

- Zhao, J.; Li, J.; Liu, Q.; Xu, B.; Yu, W.; Lin, S.; Hu, Z. Estimating fractional vegetation cover from leaf area index and clumping index based on the gap probability theory. Int. J. Appl. Earth Obs. Geoinf. 2020, 90, 102112.

- Zhao, J.; Li, J.; Liu, Q.; Xu, B.; Mu, X.; Dong, Y. Generation of a 16 m/10-day fractional vegetation cover product over China based on Chinese GaoFen-1 observations: Method and validation. Int. J. Digit. Earth. 2023, 16, 4229–4246.

- Stadt, K.J.; Lieffers, V.J. MIXLIGHT: A flexible light transmission model for mixed-species forest stands. Agr. Forest Meteorol. 2000, 102, 235–252.

- Liu, D.; Jia, K.; Wei, X.; Xia, M.; Zhang, X.; Yao, Y.; Wang, B. Spatiotemporal comparison and validation of three global-scale fractional vegetation cover products. Remote Sens. 2019, 11, 2524.

- Hu, Q.; Yang, J.; Xu, B.; Huang, J.; Memon, M.S.; Yin, G.; Liu, K. Evaluation of global decametric-resolution LAI, FAPAR and FVC estimates derived from Sentinel-2 imagery. Remote Sens. 2020, 12, 912.

- Jiang, Q.; Wang, Y. Leaf angle regulation toward a maize smart canopy. Plant J. 2025, 117, 447–460.

- Elli, E.F.; Edwards, J.; Yu, J.; Trifunovic, S.; Eudy, D.M.; Kosola, K.R.; Schnable, P.S.; Lamkey, K.R.; Archontoulis, S.V. Maize leaf angle genetic gain is slowing down in the last decades. Crop Sci. 2023, 63, 3520–3533.

- Du, M.; Li, M.; Noguchi, N.; Ji, J.; Ye, M. Retrieval of fractional vegetation cover from remote sensing image of unmanned aerial vehicle based on mixed pixel decomposition method. Drones 2023, 7, 43.

The crop columns give the range (or fixed value) of each parameter. Slash-separated steps follow the column order wheat/rice/maize/soybean.

| Parameters (Units) | Wheat | Rice | Maize | Soybean | Steps |
|---|---|---|---|---|---|
| N | 1–1.5 | 1–1.5 | 1.2–1.8 | 1.2–2 | 0.5/0.5/0.6/0.8 |
| Cab | 10–80 | 10–80 | 10–80 | 10–80 | 20/10/10/20 |
| Car | 25% Cab | 25% Cab | 25% Cab | 25% Cab | - |
| Cw | 0.02 | 0.02 | 0.02 | 0.02 | - |
| Cm | 0.002–0.008 | 0.002–0.008 | 0.004–0.02 | 0.004–0.032 | 0.006/0.003/0.016/0.014 |
| Canth | 2 | 2 | 2 | 2 | - |
| Cb | 0 | 0 | 0 | 0 | - |
| LAI | 0–7 | 0–7 | 0–7 | 0–7 | 0.5 |
| ALA (°) | 40–70 | 50–70 | 50–70 | 30–60 | 5 |
| Psoil | 0–1 | 0–0.3 | 0–1 | 0–1 | 0.25 |
| Hots | 0.05 | 0.05 | 0.05 | 0.05 | - |
| tts (°) | 0–60 | 0–60 | 0–60 | 0–60 | 20 |
| tto (°) | 0 | 0 | 0 | 0 | - |
| psi (°) | 0 | 0 | 0 | 0 | - |


© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Xu, L.; Zhang, J.; Cheng, T.; Jiao, Q.; Qin, Y.; Ma, H.; Wu, H. A Hybrid RTM-Informed Machine Learning Framework with Crop-Specific Canopy Structural Parameterization for Crop Fractional Vegetation Cover Estimation. Remote Sens. 2026, 18, 751. https://doi.org/10.3390/rs18050751
