Cross-Domain Hyperspectral Image Classification Combined Sharpness-Aware Minimization with Local-to-Global Feature Enhancement

Highlights

- This study proposes a novel paradigm for classifying hyperspectral satellite imagery using UAV hyperspectral data, enabling effective utilization of large amounts of unlabeled satellite data. By integrating cross-domain learning with the high spatial resolution and abundant labeled information of UAV hyperspectral data, the proposed method significantly enhances the fine-grained classification performance of satellite hyperspectral images in broad-area scenes. This approach offers a new research direction for the intelligent interpretation of hyperspectral remote sensing data acquired from heterogeneous sensor platforms.

- The proposed method achieves the highest overall accuracy (OA), kappa coefficient, and F1 score among all compared methods on four benchmark datasets, outperforming advanced cross-domain classification approaches and the state-of-the-art method DSFormer.

- A local–global feature extraction model is developed. The model first captures local edge information from the cross-domain data and then aligns global features through an improved self-attention mechanism. Local feature extraction strengthens the representation of boundary details, while global feature alignment improves cross-domain feature consistency, together improving the model’s adaptability and robustness in cross-domain hyperspectral classification tasks.

- An improved sharpness-aware minimization (ISAM) strategy is proposed to counter convergence to local optima and the loss of generalization caused by spectral shift in cross-domain hyperspectral classification. To reduce computational cost and improve training efficiency, the gradient perturbation is approximated from a single forward propagation. In addition, a square-root approximation of the gradient perturbation is combined with a nonlinear gradient-scaling mechanism, so that the magnitude of each update grows more slowly than the magnitude of the gradient. This adaptive adjustment of the feature update strength suppresses the dominance of large gradients, strengthens the influence of small gradients, and yields more balanced cross-domain feature alignment.

Abstract

1. Introduction

- This study proposes a novel paradigm for classifying hyperspectral satellite imagery using UAV hyperspectral data, enabling effective utilization of large amounts of unlabeled satellite data. By integrating cross-domain learning with the high spatial resolution and abundant labeled information of UAV hyperspectral data, the proposed method significantly enhances the fine-grained classification performance of satellite hyperspectral images in broad-area scenes. This approach offers a new research direction for the intelligent interpretation of hyperspectral remote sensing data acquired from heterogeneous sensor platforms;

- A local–global feature extraction model is developed. The model first captures local edge information from the cross-domain data and then aligns global features through an improved self-attention mechanism. Local feature extraction strengthens the representation of boundary details, while global feature alignment improves cross-domain feature consistency, together improving the model’s adaptability and robustness in cross-domain hyperspectral classification tasks;

- An improved sharpness-aware minimization (ISAM) strategy is proposed to counter convergence to local optima and the loss of generalization caused by spectral shift in cross-domain hyperspectral classification. To reduce computational cost and improve training efficiency, the gradient perturbation is approximated from a single forward propagation. In addition, a square-root approximation of the gradient perturbation is combined with a nonlinear gradient-scaling mechanism, so that the magnitude of each update grows more slowly than the magnitude of the gradient. This adaptive adjustment of the feature update strength suppresses the dominance of large gradients, strengthens the influence of small gradients, and yields more balanced cross-domain feature alignment.

2. Related Works

2.1. Hyperspectral Image Classification via Deep Neural Networks

2.2. Strategies for Enhancing Model Generalization

3. Proposed SAMLFE for HSI Classification

3.1. Spectral Dimension Mapping Model Between Source and Target Domains
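Section 3.1 maps the source and target data, which are recorded with different numbers of spectral bands, into a shared spectral dimension before feature extraction (Algorithm 1, steps 1–2). Equation (1) is not reproduced in this section, so the sketch below assumes the simplest form such a mapping can take: a separate learnable linear projection per domain. The helper names `init_mapping` and `map_spectrum`, the band counts, and the shared dimension of 100 are hypothetical.

```python
import random
from typing import List

Vector = List[float]
Matrix = List[List[float]]


def init_mapping(in_bands: int, out_dim: int, seed: int = 0) -> Matrix:
    """Hypothetical per-domain projection matrix W with shape (out_dim, in_bands)."""
    rng = random.Random(seed)
    scale = 1.0 / in_bands ** 0.5  # keeps projected values on the scale of the inputs
    return [[rng.uniform(-scale, scale) for _ in range(in_bands)] for _ in range(out_dim)]


def map_spectrum(w: Matrix, pixel: Vector) -> Vector:
    """Project one pixel spectrum into the shared spectral space: y = W x."""
    if len(pixel) != len(w[0]):
        raise ValueError("pixel band count does not match the mapping matrix")
    return [sum(wij * xj for wij, xj in zip(row, pixel)) for row in w]


# Hypothetical band counts: a 274-band source and a 103-band target, both mapped to 100 dims.
w_source = init_mapping(274, 100, seed=1)
w_target = init_mapping(103, 100, seed=2)
y_s = map_spectrum(w_source, [0.5] * 274)
y_t = map_spectrum(w_target, [0.5] * 103)
print(len(y_s), len(y_t))  # both mapped vectors have the shared dimension
```

In a trained network, each W would be learned jointly with the downstream layers; the only hard requirement of such a mapping is that both domains end up with the same output dimension.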

| Algorithm 1 Pseudocode for the Training Process of the Proposed SAMLFE |

|---|

| Input: $S_T$, $Q_T$ of $D_T$; $S_S$, $Q_S$ of $D_S$; the number of training episodes. |

| Output: The classification accuracy of each class of the target dataset. |

| 1: Spectral Dimension Mapping |

| 2: Calculate , by Equation (1); |

| 3: Local-to-Global Feature Extraction |

| 4: for episode = 1: episodes do |

| 5: Randomly select $S_T$, $Q_T$ from $D_T$ and $S_S$, $Q_S$ from $D_S$; |

| 6: Extract deep representations from the mapped data; |

| 7: Calculate local features $y_{LFEM}$ by Equation (8); |

| 8: Calculate global features O by Equation (14); |

| 9: Few-shot learning on extracted features; |

| 10: Calculate , by Equations (18) and (19); |

| 11: Lossfsl = + ; |

| 12: Lossfsl.backward() |

| 13: end for |

| 14: Conditional Domain Discriminator |

| 15: for episode = 1: episodes do |

| 16: Calculate $L_d$ by Equation (22); |

| 17: Loss = $L_{fsl}$ + $L_d$; |

| 18: Loss.backward() |

| 19: end for |

| 20: ISAM Parameter Optimization |

| 21: for episode = 1: episodes do |

| 22: Calculate $e_w$, and by Equations (24)–(26); |

| 23: Update model parameters to reduce loss fluctuations; |

| 24: Calculate $W_{new}$ by Equation (27); |

| 25: end for |
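Step 5 of Algorithm 1 draws a support set and a query set from each domain in every episode. The sampling rule is not spelled out here, so the sketch below assumes the usual N-way K-shot protocol of episodic few-shot learning; `sample_episode`, `n_way`, `k_shot`, and `n_query` are hypothetical names.

```python
import random
from collections import defaultdict
from typing import Dict, Hashable, List, Sequence, Tuple


def sample_episode(labels: Sequence[Hashable], n_way: int, k_shot: int, n_query: int,
                   rng: random.Random) -> Tuple[List[int], List[int]]:
    """Return (support, query) sample indices for one N-way K-shot episode."""
    by_class: Dict[Hashable, List[int]] = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    # Only classes with enough samples for both sets can take part in the episode.
    eligible = [c for c, idxs in by_class.items() if len(idxs) >= k_shot + n_query]
    if len(eligible) < n_way:
        raise ValueError("too few classes with k_shot + n_query samples")
    support: List[int] = []
    query: List[int] = []
    for c in rng.sample(eligible, n_way):
        picked = rng.sample(by_class[c], k_shot + n_query)  # drawn without replacement
        support.extend(picked[:k_shot])
        query.extend(picked[k_shot:])
    return support, query


# Hypothetical labels: three classes with ten samples each.
labels = [0] * 10 + [1] * 10 + [2] * 10
support, query = sample_episode(labels, n_way=2, k_shot=1, n_query=3, rng=random.Random(0))
print(len(support), len(query))  # 2 support indices and 6 query indices
```

Calling such a sampler once on the source labels and once on the target labels in each episode would yield $S_S$, $Q_S$ and $S_T$, $Q_T$ as listed in the algorithm's input.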

3.2. Local-to-Global Feature Extraction Model
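The Highlights describe this module as a local step that captures edge information, followed by a global step built on an improved self-attention mechanism, and Section 5.4 studies a parameter γ of that attention. The sketch below is an assumption-laden stand-in, not the module itself: the local step is a residual three-tap high-pass filter over neighbouring tokens (in place of the asymmetric residual block that appears in the ablation study), and the global step is parameter-free scaled dot-product self-attention added back to its input with weight γ, in the style of SAGAN-type attention layers. All function names are hypothetical.

```python
import math
from typing import List

Tokens = List[List[float]]  # one feature vector per spatial position


def local_edge_residual(x: Tokens, kernel=(-0.5, 1.0, -0.5)) -> Tokens:
    """Hypothetical local step: x + high-pass 3-tap filter along the token sequence."""
    n = len(x)
    out = []
    for i in range(n):
        left = x[i - 1] if i > 0 else x[i]        # replicate padding at the borders
        right = x[i + 1] if i < n - 1 else x[i]
        out.append([cv + kernel[0] * lv + kernel[1] * cv + kernel[2] * rv
                    for lv, cv, rv in zip(left, x[i], right)])
    return out


def softmax(scores: List[float]) -> List[float]:
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def gamma_self_attention(x: Tokens, gamma: float) -> Tokens:
    """Global step: x + gamma * softmax(x x^T / sqrt(d)) x, without learned Q/K/V projections."""
    d = len(x[0])
    out = []
    for q in x:
        weights = softmax([sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in x])
        attended = [sum(w * v[j] for w, v in zip(weights, x)) for j in range(d)]
        out.append([qi + gamma * ai for qi, ai in zip(q, attended)])
    return out


def local_to_global(x: Tokens, gamma: float) -> Tokens:
    return gamma_self_attention(local_edge_residual(x), gamma)


tokens = [[0.0, 1.0], [0.0, 1.0], [4.0, 1.0], [4.0, 1.0]]  # a step edge in the first channel
print(local_to_global(tokens, gamma=0.0))  # gamma = 0 keeps only the edge-sharpened local output
```

Because the filter taps sum to zero, flat regions pass through the local step unchanged while edges are sharpened; γ then controls how much globally aggregated context is mixed in.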

3.3. Source and Target Few-Shot Learning
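Steps 9–11 of Algorithm 1 run few-shot learning in both domains and add the two resulting losses (Equations (18) and (19)). Those equations are not reproduced here; the sketch below assumes a prototypical-network formulation, a common choice in cross-domain few-shot hyperspectral classification, in which each class prototype is the mean support embedding and query samples are scored by negative squared Euclidean distance. Function names and data are hypothetical.

```python
import math
from typing import Dict, Hashable, List, Sequence

Embedding = List[float]


def prototypes(support: Sequence[Embedding], labels: Sequence[Hashable]) -> Dict[Hashable, Embedding]:
    """Class prototype = mean of that class's support embeddings."""
    sums: Dict[Hashable, Embedding] = {}
    counts: Dict[Hashable, int] = {}
    for z, y in zip(support, labels):
        acc = sums.setdefault(y, [0.0] * len(z))
        for j, v in enumerate(z):
            acc[j] += v
        counts[y] = counts.get(y, 0) + 1
    return {y: [v / counts[y] for v in acc] for y, acc in sums.items()}


def prototypical_loss(query: Sequence[Embedding], labels: Sequence[Hashable],
                      protos: Dict[Hashable, Embedding]) -> float:
    """Mean cross-entropy of softmax(-squared distance to each prototype)."""
    classes = list(protos)
    total = 0.0
    for z, y in zip(query, labels):
        logits = [-sum((a - b) ** 2 for a, b in zip(z, protos[c])) for c in classes]
        m = max(logits)
        log_norm = m + math.log(sum(math.exp(v - m) for v in logits))  # log-sum-exp
        total += log_norm - logits[classes.index(y)]
    return total / len(query)


src_protos = prototypes([[0.0, 0.0], [0.0, 0.2], [3.0, 3.0]], ["a", "a", "b"])
tgt_protos = prototypes([[1.0, 0.0], [5.0, 5.0]], ["a", "b"])
# Step 11 (assumed form): total few-shot loss = source loss + target loss.
loss_fsl = (prototypical_loss([[0.1, 0.1]], ["a"], src_protos)
            + prototypical_loss([[4.0, 4.0]], ["b"], tgt_protos))
```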

3.4. Conditional Domain Discriminator Model
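The conditional domain discriminator contributes the loss $L_d$ of Equation (22), which step 17 of Algorithm 1 adds to $L_{fsl}$. The conditioning scheme is not given in this section; the sketch below assumes CDAN-style multilinear conditioning, in which the discriminator sees the flattened outer product of a feature vector and the classifier's softmax output, and uses a hypothetical logistic-regression discriminator trained with binary cross-entropy. In adversarial training the feature extractor would receive the reversed gradient of this loss (for example through a gradient reversal layer), which is omitted here.

```python
import math
from typing import List, Sequence


def multilinear_map(features: Sequence[float], class_probs: Sequence[float]) -> List[float]:
    """Flattened outer product f ⊗ g, so the discriminator input depends on the predictions."""
    return [f * g for g in class_probs for f in features]


def sigmoid(z: float) -> float:
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)  # avoids overflow for large negative z
    return e / (1.0 + e)


def discriminator(h: Sequence[float], weights: Sequence[float], bias: float) -> float:
    """Hypothetical logistic-regression discriminator: P(sample is from the source domain)."""
    return sigmoid(sum(w * x for w, x in zip(weights, h)) + bias)


def domain_loss(p_src: Sequence[float], p_tgt: Sequence[float], eps: float = 1e-12) -> float:
    """Binary cross-entropy with label 1 for source samples and 0 for target samples."""
    loss_src = -sum(math.log(p + eps) for p in p_src) / len(p_src)
    loss_tgt = -sum(math.log(1.0 - p + eps) for p in p_tgt) / len(p_tgt)
    return loss_src + loss_tgt


h_src = multilinear_map([1.0, 2.0, 3.0], [0.25, 0.75])  # 3 features x 2 classes -> 6 inputs
h_tgt = multilinear_map([0.5, -1.0, 2.0], [0.9, 0.1])
weights = [0.1, -0.2, 0.05, 0.0, 0.3, -0.1]  # hypothetical discriminator weights
l_d = domain_loss([discriminator(h_src, weights, 0.0)], [discriminator(h_tgt, weights, 0.0)])
```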

3.5. Improved Sharpness-Aware Minimization Strategy
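ISAM builds on sharpness-aware minimization (SAM), which first moves the weights a distance ρ in a direction that increases the loss and then updates the original weights with the gradient measured at that perturbed point. The Highlights and Introduction state that ISAM replaces the raw gradient in the perturbation with a square-root approximation and adds nonlinear gradient scaling; Equations (24)–(27) are not reproduced here. The sketch below is therefore plain SAM on a toy quadratic loss with one assumed modification: the perturbation direction is sign(g)·√|g| instead of g, so large gradient entries dominate it less. Like standard SAM it needs two gradient evaluations per update, so it does not implement the single-forward-propagation approximation; `sam_step`, `sqrt_direction`, `rho`, and the toy loss are hypothetical.

```python
import math
from typing import Callable, List

Vec = List[float]


def sqrt_direction(g: Vec) -> Vec:
    """Assumed nonlinear scaling: sign(g) * sqrt(|g|) grows more slowly than |g|."""
    return [math.copysign(math.sqrt(abs(x)), x) for x in g]


def sam_step(w: Vec, grad_fn: Callable[[Vec], Vec], lr: float, rho: float,
             use_sqrt: bool = True) -> Vec:
    """One SAM update; use_sqrt=True swaps in the square-root perturbation direction."""
    g = grad_fn(w)                                    # gradient at the current weights
    d = sqrt_direction(g) if use_sqrt else g
    norm = math.sqrt(sum(x * x for x in d)) or 1.0    # guard against a zero gradient
    perturbation = [rho * x / norm for x in d]        # length-rho step that raises the loss to first order
    g_adv = grad_fn([wi + ei for wi, ei in zip(w, perturbation)])
    return [wi - lr * gi for wi, gi in zip(w, g_adv)]  # update the original weights


# Toy ill-conditioned quadratic: L(w) = 0.5 * (1 * w0^2 + 10 * w1^2).
CURV = [1.0, 10.0]


def loss(w: Vec) -> float:
    return 0.5 * sum(c * x * x for c, x in zip(CURV, w))


def grad(w: Vec) -> Vec:
    return [c * x for c, x in zip(CURV, w)]


w = [1.0, -2.0]
for _ in range(20):
    w = sam_step(w, grad, lr=0.05, rho=0.05)
print(round(loss(w), 4))
```

Because every coordinate of sign(g)·√|g| has the same sign as g, the perturbation still points uphill, but the steep coordinate (curvature 10) no longer swamps the shallow one as it would with the raw gradient.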

4. Experimental Validation and Analysis

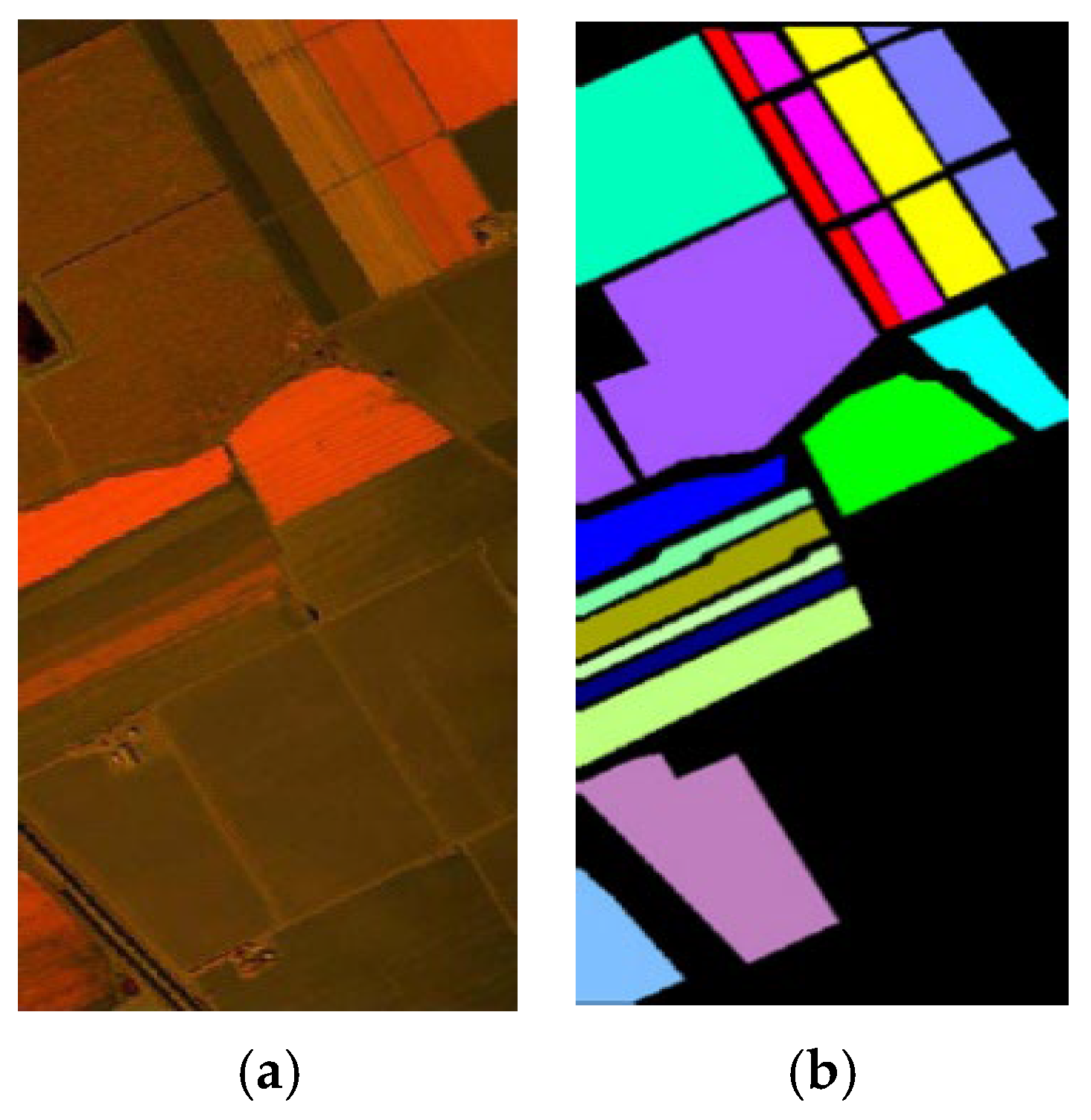

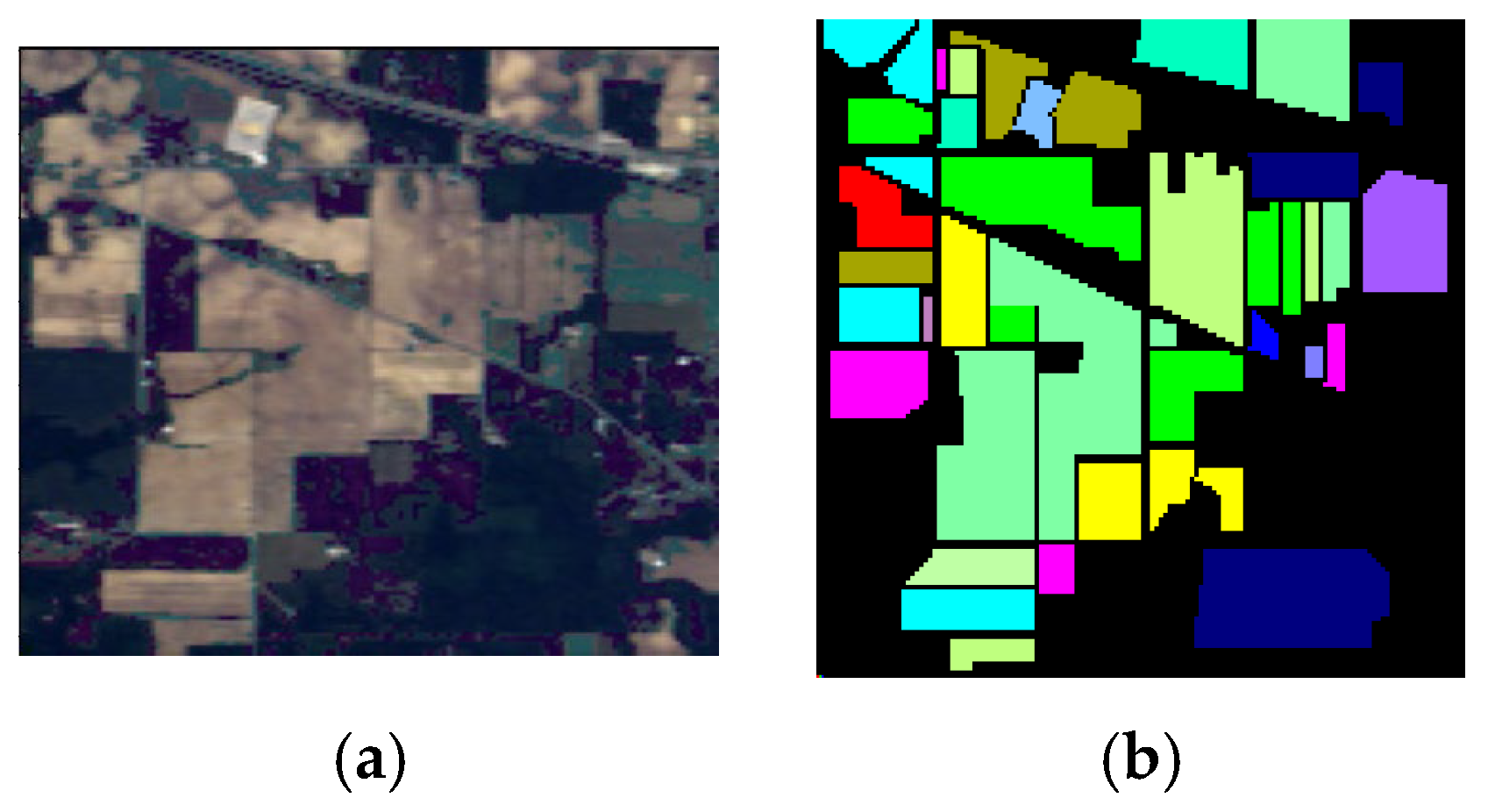

4.1. Dataset Descriptions

4.2. Experimental Setting
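The comparison, ablation, and sensitivity tables report overall accuracy (OA), average accuracy (AA), the kappa coefficient scaled by 100, and F1. The sketch below computes these from a confusion matrix using their standard definitions; treating F1 as the unweighted mean over classes (macro-F1) is an assumption, since the averaging scheme is not stated in this section. Function names are hypothetical.

```python
from typing import Dict, List, Sequence


def confusion_matrix(y_true: Sequence[int], y_pred: Sequence[int], n_classes: int) -> List[List[int]]:
    """cm[i][j] counts samples of true class i predicted as class j."""
    cm = [[0] * n_classes for _ in range(n_classes)]
    for t, p in zip(y_true, y_pred):
        cm[t][p] += 1
    return cm


def hsi_scores(cm: List[List[int]]) -> Dict[str, float]:
    k = len(cm)
    n = sum(map(sum, cm))
    row = [sum(cm[i]) for i in range(k)]                        # true-class totals
    col = [sum(cm[i][j] for i in range(k)) for j in range(k)]   # predicted-class totals
    tp = [cm[i][i] for i in range(k)]

    oa = sum(tp) / n
    recalls = [tp[i] / row[i] for i in range(k) if row[i] > 0]
    aa = sum(recalls) / len(recalls)                             # mean per-class accuracy
    pe = sum(row[i] * col[i] for i in range(k)) / (n * n)        # chance agreement
    kappa = (oa - pe) / (1 - pe) if pe < 1 else float("nan")     # undefined when pe == 1
    # Per-class F1 = 2TP / (2TP + FP + FN), and row + col = 2TP + FP + FN.
    f1s = [2 * tp[i] / (row[i] + col[i]) for i in range(k) if row[i] + col[i] > 0]
    macro_f1 = sum(f1s) / len(f1s)
    return {"OA": 100 * oa, "AA": 100 * aa, "K x 100": 100 * kappa, "F1": 100 * macro_f1}


cm = confusion_matrix([0, 0, 1, 1], [0, 1, 1, 1], n_classes=2)
print(hsi_scores(cm))
```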

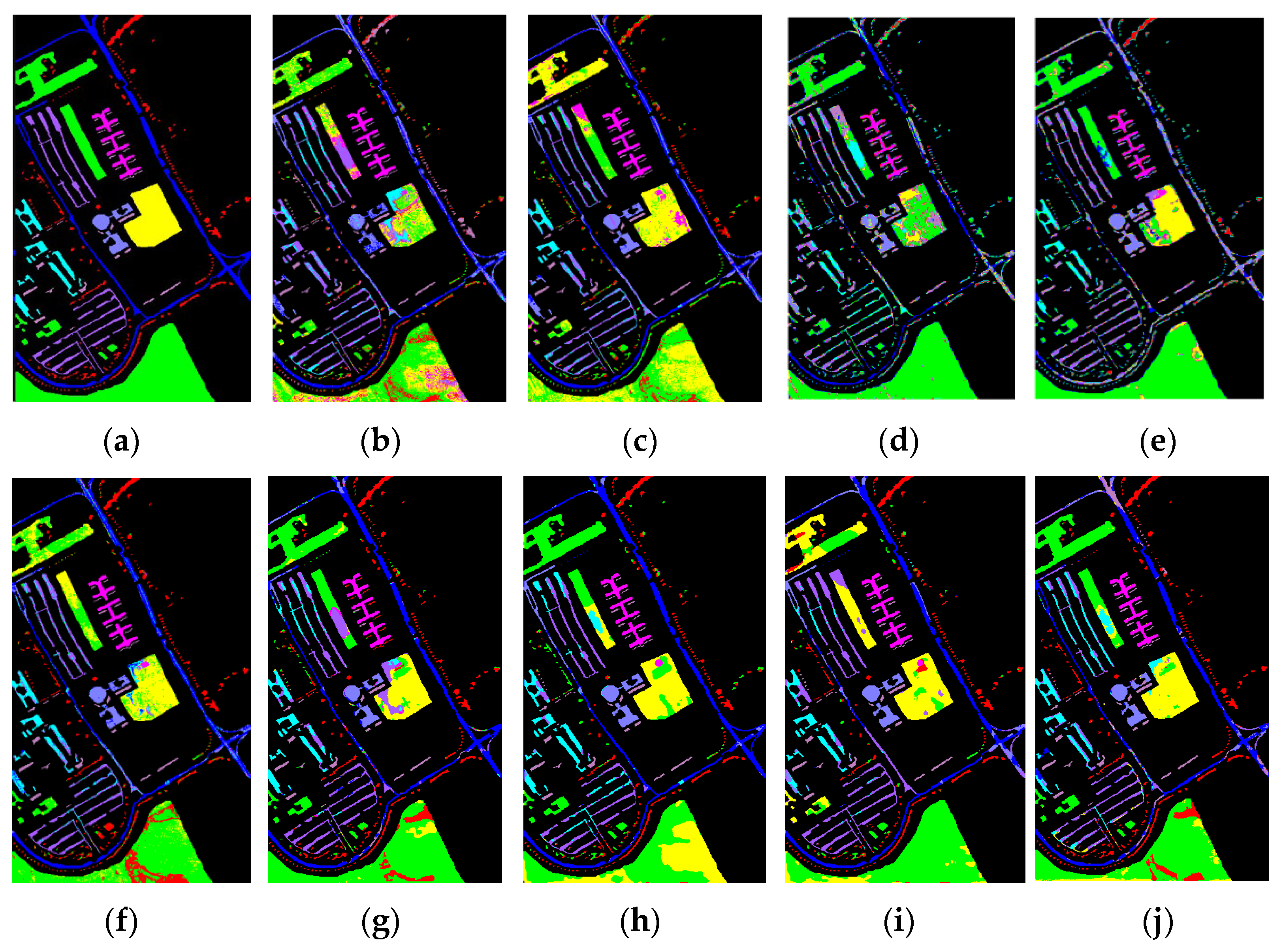

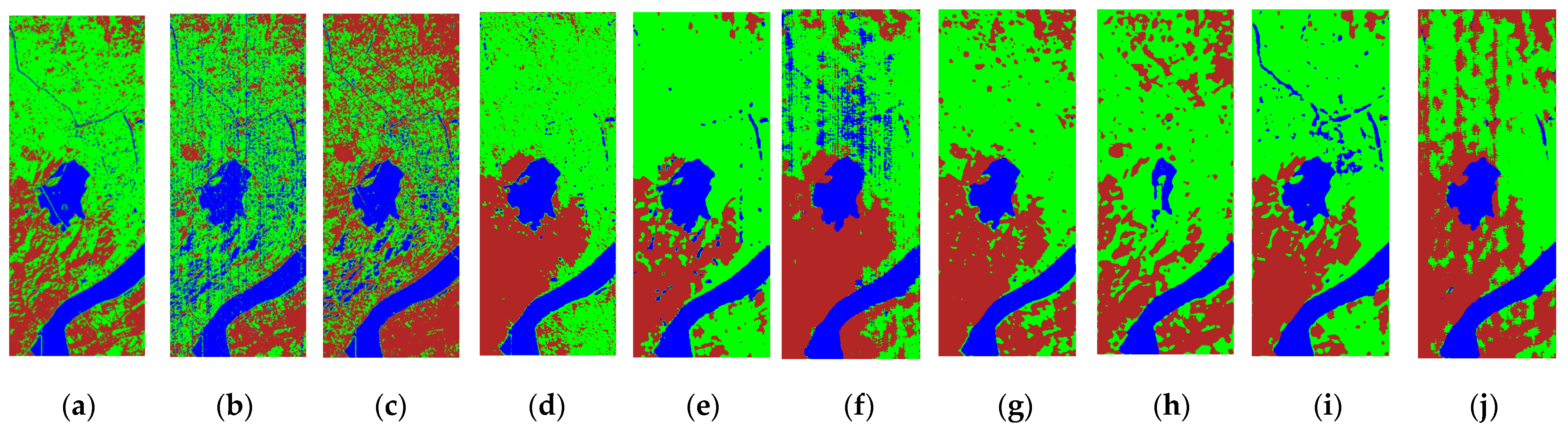

4.3. Classification Maps and Categorized Results

4.4. Ablation Study of SAMLFE Model

5. Discussion

5.1. Effect of the Number of Hyperparameters on the Model

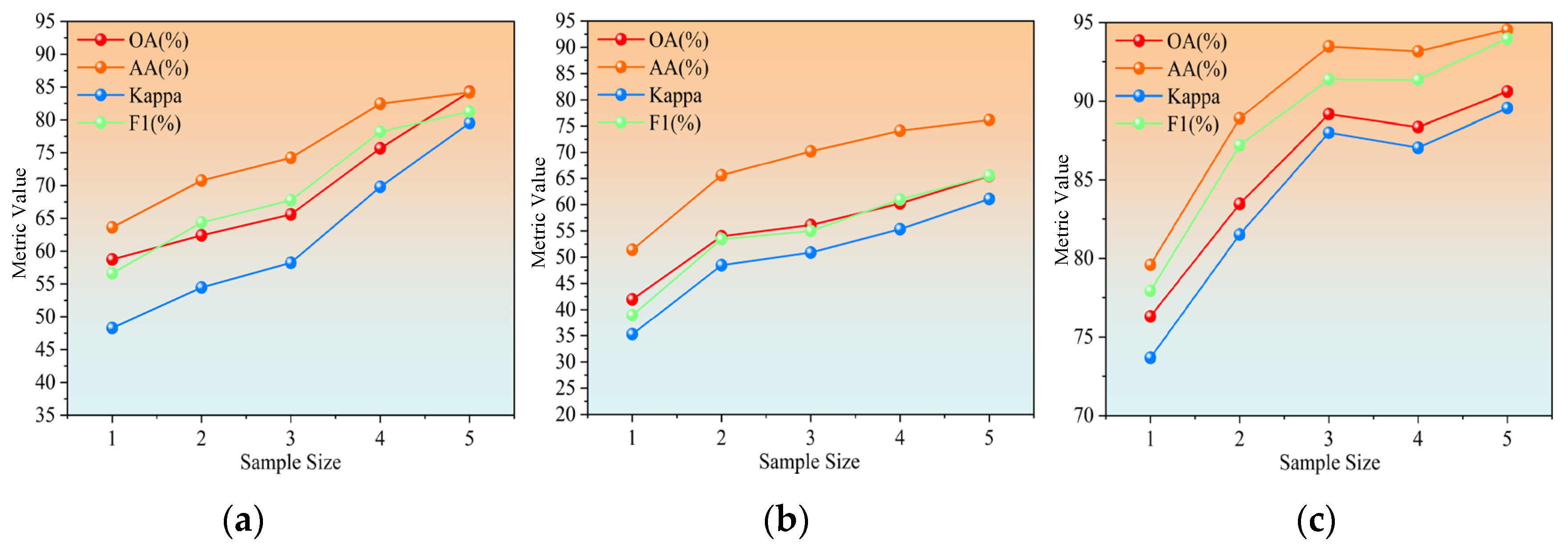

5.2. Analysis of the Impact of Sample Size on SAMLFE Classification Accuracy

5.3. Analyzing the Impact of Batch Size on the SAMLFE Framework

5.4. Analyzing the Impact of the Parameter γ in the Improved Self-Attention

5.5. Feature Visualization of the Target Domain

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

- Wang, Q.; Huang, J.; Wang, S.; Zhang, Z.; Shen, T.; Gu, Y. Community Structure Guided Network for Hyperspectral Image Classification. IEEE Trans. Geosci. Remote Sens. 2025, 63, 4404115.

- Tu, B.; Ren, Q.; Li, Q.; He, W.; He, W. Hyperspectral Image Classification Using a Superpixel–Pixel–Subpixel Multilevel Network. IEEE Trans. Geosci. Remote Sens. 2023, 72, 5013616.

- Weber, C.; Aguejdad, R.; Briottet, X.; Avala, J.; Fabre, S.; Demuynck, J.; Zenou, E.; Deville, Y.; Karoui, M.S.; Benhalouche, F.Z.; et al. Hyperspectral Imagery for Environmental Urban Planning. In Proceedings of the IGARSS 2018—2018 IEEE International Geoscience and Remote Sensing Symposium, Valencia, Spain, 22–27 July 2018; pp. 1628–1631.

- Yang, X.; Yu, Y. Estimating Soil Salinity Under Various Moisture Conditions: An Experimental Study. IEEE Trans. Geosci. Remote Sens. 2017, 55, 2525–2533.

- Liang, L.; Di, L.; Zhang, L.; Deng, M.; Qin, Z.; Zhao, S.; Lin, H. Estimation of crop LAI using hyperspectral vegetation indices and a hybrid inversion method. Remote Sens. Environ. 2015, 165, 123–134.

- Hao, Q.; Pei, Y.; Zhou, R.; Sun, B.; Sun, J.; Li, S.; Kang, X. Fusing Multiple Deep Models for in Vivo Human Brain Hyperspectral Image Classification to Identify Glioblastoma Tumor. IEEE Trans. Instrum. Meas. 2021, 70, 4007314.

- Kanthi, M.; Sarma, T.H.; Bindu, C.S. A 3D-Deep CNN Based Feature Extraction and Hyperspectral Image Classification. In Proceedings of the 2020 IEEE India Geoscience and Remote Sensing Symposium, Ahmedabad, India, 1–4 December 2020; pp. 229–232.

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep Residual Learning for Image Recognition. In Proceedings of the 2016 IEEE Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 27–30 June 2016; pp. 770–778.

- Zhong, Z.; Li, J.; Luo, Z.; Chapman, M. Spectral–Spatial Residual Network for Hyperspectral Image Classification: A 3-D Deep Learning Framework. IEEE Trans. Geosci. Remote Sens. 2018, 56, 847–858.

- Wei, W.; Tong, L.; Guo, B.; Zhou, J.; Xiao, C. Few-Shot Hyperspectral Image Classification Using Relational Generative Adversarial Network. IEEE Trans. Geosci. Remote Sens. 2024, 62, 5539016.

- Yu, C.; Gong, B.; Song, M.; Zhao, E.; Chang, C.-I. Multiview Calibrated Prototype Learning for Few-Shot Hyperspectral Image Classification. IEEE Trans. Geosci. Remote Sens. 2022, 60, 5544713.

- Tang, H.; Zhang, C.; Tang, D.; Lin, X.; Yang, X.; Xie, W. Few-Shot Hyperspectral Image Classification with Deep Fuzzy Metric Learning. IEEE Geosci. Remote Sens. Lett. 2025, 22, 5502205.

- Liu, S.; Fu, C.; Duan, Y.; Wang, X.; Luo, F. Spatial–Spectral Enhancement and Fusion Network for Hyperspectral Image Classification with Few Labeled Samples. IEEE Trans. Geosci. Remote Sens. 2025, 63, 5502414.

- Mu, C.; Liu, Y.; Yan, X.; Ali, A.; Liu, Y. Few-Shot Open-Set Hyperspectral Image Classification with Adaptive Threshold Using Self-Supervised Multitask Learning. IEEE Trans. Geosci. Remote Sens. 2024, 62, 5526618.

- Zhao, C.; Qin, B.; Feng, S.; Zhu, W.; Zhang, L.; Ren, J. An Unsupervised Domain Adaptation Method Towards Multi-Level Features and Decision Boundaries for Cross-Scene Hyperspectral Image Classification. IEEE Trans. Geosci. Remote Sens. 2022, 60, 5546216.

- Matasci, G.; Volpi, M.; Kanevski, M.; Tuia, D. Semisupervised Transfer Component Analysis for Domain Adaptation in Remote Sensing Image Classification. IEEE Trans. Geosci. Remote Sens. 2015, 53, 3550–3564.

- Zhou, X.; Prasad, S. Deep Feature Alignment Neural Networks for Domain Adaptation of Hyperspectral Data. IEEE Trans. Geosci. Remote Sens. 2018, 56, 5863–5872.

- Deng, B.; Jia, S.; Shi, D. Deep Metric Learning-Based Feature Embedding for Hyperspectral Image Classification. IEEE Trans. Geosci. Remote Sens. 2020, 58, 1422–1435.

- Wang, Y.; Liu, G.; Yang, L.; Liu, J.; Wei, L. An Attention-Based Feature Processing Method for Cross-Domain Hyperspectral Image Classification. IEEE Signal Process. Lett. 2025, 32, 196–200.

- Li, Z.; Liu, M.; Chen, Y.; Xu, Y.; Li, W.; Du, Q. Deep Cross-Domain Few-Shot Learning for Hyperspectral Image Classification. IEEE Trans. Geosci. Remote Sens. 2022, 60, 5501618.

- Xi, B.; Li, J.; Li, Y.; Song, R.; Hong, D.; Chanussot, J. Few-Shot Learning with Class-Covariance Metric for Hyperspectral Image Classification. IEEE Trans. Image Process. 2022, 31, 5079–5092.

- Zhang, Y.; Li, W.; Zhang, M.; Wang, S.; Tao, R.; Du, Q. Graph Information Aggregation Cross-Domain Few-Shot Learning for Hyperspectral Image Classification. IEEE Trans. Neural Netw. Learn. Syst. 2024, 35, 1912–1925.

- Zhou, L.; Ma, L. Extreme Learning Machine-Based Heterogeneous Domain Adaptation for Classification of Hyperspectral Images. IEEE Geosci. Remote Sens. Lett. 2019, 16, 1781–1785.

- Dang, Y.; Li, H.; Liu, B.; Zhang, X. Cross-Domain Few-Shot Learning for Hyperspectral Image Classification Based on Global-to-Local Enhanced Channel Attention. IEEE Geosci. Remote Sens. Lett. 2025, 22, 5501905.

- Feng, S.; Zhang, H.; Xi, B.; Zhao, C.; Li, Y.; Chanussot, J. Cross-Domain Few-Shot Learning Based on Decoupled Knowledge Distillation for Hyperspectral Image Classification. IEEE Trans. Geosci. Remote Sens. 2024, 62, 5534414.

- Jiang, Z.; Li, Z.; Wang, Y.; Li, W.; Wang, K.; Tian, J.; Wang, C.; Du, Q. Lifelong Learning with Adaptive Knowledge Fusion and Class Margin Dynamic Adjustment for Hyperspectral Image Classification. IEEE Trans. Geosci. Remote Sens. 2025, 63, 5505619.

- Zhong, Y.; Hu, X.; Luo, C.; Wang, X.; Zhao, J.; Zhang, L. WHU-Hi: UAV-Borne Hyperspectral with High Spatial Resolution (H2) Benchmark Datasets and Classifier for Precise Crop Identification Based on Deep Convolutional Neural Network with CRF. Remote Sens. Environ. 2020, 250, 112012.

- Wei, L.; Yu, M.; Zhong, Y.; Zhao, J.; Liang, Y.; Hu, X. Spatial-Spectral Fusion Based on Conditional Random Fields for the Fine Classification of Crops in UAV-Borne Hyperspectral Remote Sensing Imagery. Remote Sens. 2019, 11, 780.

- Zhong, Y.; Xu, Y.; Wang, X.; Jia, T.; Xia, G.; Ma, A.; Zhang, L. Pipeline Leakage Detection for District Heating Systems Using Multisource Data in Mid and High-Latitude Regions. ISPRS J. Photogramm. Remote Sens. 2019, 151, 207–222.

- Han, Z.; Yang, J.; Gao, L.; Zeng, Z.; Zhang, B.; Chanussot, J. Subpixel Spectral Variability Network for Hyperspectral Image Classification. IEEE Trans. Geosci. Remote Sens. 2025, 63, 5504014.

- Han, Z.; Zhang, C.; Gao, L.; Zeng, Z.; Ng, M.K.; Zhang, B.; Chanussot, J. Multisource Collaborative Domain Generalization for Cross-Scene Remote Sensing Image Classification. IEEE Trans. Geosci. Remote Sens. 2024, 62, 5535815.

- Hu, W.; Huang, Y.; Wei, L.; Zhang, F.; Li, H. Deep Convolutional Neural Networks for Hyperspectral Image Classification. J. Sens. 2015, 2015, 258619.

- Mou, L.; Ghamisi, P.; Zhu, X.X. Deep Recurrent Neural Networks for Hyperspectral Image Classification. IEEE Trans. Geosci. Remote Sens. 2017, 55, 3639–3655.

- Li, Y.; Zhang, H.; Shen, Q. Spectral–Spatial Classification of Hyperspectral Imagery with 3D Convolutional Neural Network. Remote Sens. 2017, 9, 67.

- Liu, X.; Liu, S.; Chen, W.; Qu, S. HDECGCN: A Heterogeneous Dual Enhanced Network Based on Hybrid CNNs Joint Multiscale Dynamic GCNs for Hyperspectral Image Classification. IEEE Trans. Geosci. Remote Sens. 2024, 62, 5515717.

- Ahmad, M.; Ghous, U.; Usama, M.; Mazzara, M. WaveFormer: Spectral–Spatial Wavelet Transformer for Hyperspectral Image Classification. IEEE Geosci. Remote Sens. Lett. 2024, 21, 5502405.

- Zhang, S.; Chen, Z.; Wang, D.; Wang, Z.J. Cross-Domain Few-Shot Contrastive Learning for Hyperspectral Images Classification. IEEE Geosci. Remote Sens. Lett. 2022, 19, 5514505.

- Ye, Z.; Wang, J.; Sun, T.; Zhang, J.; Li, W. Cross-Domain Few-Shot Learning Based on Graph Convolution Contrast for Hyperspectral Image Classification. IEEE Trans. Geosci. Remote Sens. 2024, 62, 5504614.

- Miftahushudur, T.; Grieve, B.; Yin, H. Permuted KPCA and SMOTE to Guide GAN-Based Oversampling for Imbalanced HSI Classification. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2024, 17, 489–505.

- Paoletti, M.E.; Haut, J.M.; Plaza, J.; Plaza, A. Neighboring Region Dropout for Hyperspectral Image Classification. IEEE Geosci. Remote Sens. Lett. 2020, 17, 1032–1036.

- Wang, W.; Wang, X.; Liu, Y.; Yang, J. Rethinking Maximum Mean Discrepancy for Visual Domain Adaptation. IEEE Trans. Neural Netw. Learn. Syst. 2023, 34, 264–277.

- Li, Y.; Hu, H.; Wang, D. Learning Visually Aligned Semantic Graph for Cross-Modal Manifold Matching. In Proceedings of the 2019 IEEE International Conference on Image Processing (ICIP), Taipei, Taiwan, 22–25 September 2019; pp. 3412–3416.

- Mei, S.; Ji, J.; Hou, J.; Li, X.; Du, Q. Learning Sensor-Specific Spatial-Spectral Features of Hyperspectral Images via Convolutional Neural Networks. IEEE Trans. Geosci. Remote Sens. 2017, 55, 4520–4533.

- Yang, J.; Zhao, Y.; Chan, J.C. Learning and Transferring Deep Joint Spectral–Spatial Features for Hyperspectral Classification. IEEE Trans. Geosci. Remote Sens. 2017, 55, 4729–4742.

- Othman, E.; Bazi, Y.; Melgani, F.; Alhichri, H.; Alajlan, N.; Zuair, M. Domain Adaptation Network for Cross-Scene Classification. IEEE Trans. Geosci. Remote Sens. 2017, 55, 4441–4456.

- Wang, Z.; Du, B.; Shi, Q.; Tu, W. Domain Adaptation with Discriminative Distribution and Manifold Embedding for Hyperspectral Image Classification. IEEE Geosci. Remote Sens. Lett. 2019, 16, 1155–1159.

- Wang, M.; Chen, J.; Wang, Y.; Wang, S.; Li, L.; Su, H.; Gong, Z. Joint Adversarial Domain Adaptation With Structural Graph Alignment. IEEE Trans. Netw. Sci. Eng. 2024, 11, 604–612.

- Liu, L.; Zhang, Y.; Tang, J.; Chen, Q. Generalizable Prompt Learning via Gradient Constrained Sharpness-Aware Minimization. IEEE Trans. Multimed. 2025, 27, 1100–1113.

- He, X.; Chen, Y.; Ghamisi, P. Heterogeneous Transfer Learning for Hyperspectral Image Classification Based on Convolutional Neural Network. IEEE Trans. Geosci. Remote Sens. 2020, 58, 3246–3263.

- Chen, T.; Guestrin, C. XGBoost: A Scalable Tree Boosting System. In Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, San Francisco, CA, USA, 13–17 August 2016; pp. 785–794.

- Zhong, S.; Chang, C.-I.; Zhang, Y. Iterative Support Vector Machine for Hyperspectral Image Classification. In Proceedings of the 2018 25th IEEE International Conference on Image Processing, Athens, Greece, 7–10 October 2018; pp. 3309–3312.

- Zhao, Z.; Xu, X.; Li, S.; Plaza, A. Hyperspectral Image Classification Using Groupwise Separable Convolutional Vision Transformer Network. IEEE Trans. Geosci. Remote Sens. 2024, 62, 5511817.

- Xu, Y.; Wang, D.; Zhang, L.; Zhang, L. Dual Selective Fusion Transformer Network for Hyperspectral Image Classification. Neural Netw. 2025, 187, 107311.

| Class | Name | Pixels | Class | Name | Pixels |

|---|---|---|---|---|---|

| 1 | Strawberry | 44,735 | 9 | Grass | 9469 |

| 2 | Cowpea | 22,753 | 10 | Red roof | 10,516 |

| 3 | Soybean | 10,287 | 11 | Gray roof | 16,911 |

| 4 | Sorghum | 5353 | 12 | Plastic | 3679 |

| 5 | Water spinach | 1200 | 13 | Bare soil | 9116 |

| 6 | Watermelon | 4533 | 14 | Road | 18,560 |

| 7 | Greens | 5903 | 15 | Bright object | 1136 |

| 8 | Trees | 17,978 | 16 | Water | 75,401 |

| Class | Name | Pixels |

|---|---|---|

| 1 | Asphalt | 6631 |

| 2 | Meadows | 18,649 |

| 3 | Gravel | 2099 |

| 4 | Trees | 3064 |

| 5 | Sheets | 1345 |

| 6 | Bare soil | 5029 |

| 7 | Bitumen | 1330 |

| 8 | Bricks | 3682 |

| 9 | Shadow | 947 |

| Class | Name | Pixels | Class | Name | Pixels |

|---|---|---|---|---|---|

| 1 | Brocoli_green_weeds_1 | 2009 | 9 | Soil_vinyard_develop | 6203 |

| 2 | Brocoli_green_weeds_2 | 3726 | 10 | Corn_senesced_green_weeds | 3278 |

| 3 | Fallow | 1976 | 11 | Lettuce_romaine_4wk | 1068 |

| 4 | Fallow_rough_plow | 1394 | 12 | Lettuce_romaine_5wk | 1927 |

| 5 | Fallow_smooth | 2678 | 13 | Lettuce_romaine_6wk | 916 |

| 6 | Stubble | 3959 | 14 | Lettuce_romaine_7wk | 1070 |

| 7 | Celery | 3579 | 15 | Vinyard_untrained | 7268 |

| 8 | Grapes_untrained | 11,271 | 16 | Vinyard_vertical_trellis | 1807 |

| Class | Name | Pixels |

|---|---|---|

| 1 | Water | 18,043 |

| 2 | Land/building | 77,450 |

| 3 | Plants | 40,207 |

| Class | Name | Pixels | Class | Name | Pixels |

|---|---|---|---|---|---|

| 1 | Alfalfa | 46 | 9 | Oats | 20 |

| 2 | Corn-notill | 1428 | 10 | Soybean-notill | 972 |

| 3 | Corn-mintill | 830 | 11 | Soybean-mintill | 2455 |

| 4 | Corn | 237 | 12 | Soybean-clean | 593 |

| 5 | Grass-pasture | 483 | 13 | Wheat | 205 |

| 6 | Grass-tree | 730 | 14 | Woods | 1265 |

| 7 | Grass-pasture-mowed | 28 | 15 | Buildings-Grass-Trees-Drives | 386 |

| 8 | Hay-windrowed | 478 | 16 | Stone-Steel-Towers | 93 |

| Class | XGBoost | SVM | 3DCNN | SSRN | DCFSL | Gia-CFSL | GSCViT | DSFormer | SAMLFE |

|---|---|---|---|---|---|---|---|---|---|

| 1 | 47.89 ± 20.40 | 66.69 ± 4.50 | 56.87 ± 3.23 | 47.65 ± 8.38 | 78.44 ± 6.92 | 79.98 ± 2.79 | 77.63 ± 13.19 | 68.04 ± 2.40 | 80.75 ± 4.06 |

| 2 | 47.52 ± 0.43 | 56.21 ± 13.62 | 70.50 ± 12.39 | 85.85 ± 8.06 | 84.24 ± 10.93 | 89.41 ± 6.01 | 79.76 ± 7.25 | 87.37 ± 13.34 | 88.29 ± 4.98 |

| 3 | 34.13 ± 18.91 | 56.96 ± 8.67 | 62.37 ± 5.88 | 66.33 ± 18.29 | 67.24 ± 12.22 | 67.83 ± 7.93 | 57.45 ± 23.96 | 89.26 ± 5.32 | 70.83 ± 11.23 |

| 4 | 63.11 ± 18.78 | 73.44 ± 22.72 | 71.76 ± 7.97 | 83.93 ± 4.69 | 93.26 ± 3.94 | 92.64 ± 2.78 | 90.11 ± 7.31 | 87.09 ± 6.08 | 93.83 ± 3.03 |

| 5 | 96.77 ± 1.41 | 96.84 ± 1.58 | 96.57 ± 4.17 | 99.81 ± 0.19 | 99.09 ± 1.06 | 96.24 ± 6.56 | 99.97 ± 0.05 | 100.00 ± 0.00 | 99.05 ± 0.85 |

| 6 | 39.98 ± 1.49 | 52.38 ± 25.54 | 49.77 ± 19.42 | 77.63 ± 6.91 | 76.24 ± 7.90 | 65.38 ± 13.48 | 77.77 ± 5.55 | 66.81 ± 9.87 | 79.28 ± 6.74 |

| 7 | 66.84 ± 18.39 | 77.46 ± 12.14 | 81.03 ± 6.41 | 99.70 ± 0.30 | 78.88 ± 10.58 | 81.18 ± 3.33 | 94.78 ± 5.68 | 99.12 ± 0.51 | 82.72 ± 10.73 |

| 8 | 50.92 ± 8.20 | 69.93 ± 2.88 | 45.77 ± 16.01 | 86.32 ± 2.83 | 69.83 ± 14.18 | 69.23 ± 13.37 | 91.09 ± 4.02 | 78.90 ± 6.23 | 69.60 ± 11.79 |

| 9 | 89.53 ± 8.09 | 99.86 ± 0.16 | 90.66 ± 7.47 | 99.68 ± 0.32 | 94.08 ± 6.13 | 94.73 ± 8.84 | 99.52 ± 0.52 | 93.84 ± 10.48 | 93.38 ± 7.39 |

| OA(%) | 50.51 ± 2.20 | 62.73 ± 5.15 | 65.10 ± 4.28 | 79.08 ± 2.87 | 81.49 ± 4.77 | 82.64 ± 2.28 | 81.35 ± 4.09 | 82.20 ± 4.70 | 84.27 ± 1.90 |

| AA(%) | 59.63 ± 0.11 | 72.20 ± 2.95 | 69.48 ± 1.31 | 82.98 ± 2.01 | 82.37 ± 2.71 | 81.85 ± 1.60 | 85.34 ± 0.33 | 85.60 ± 1.00 | 84.19 ± 1.77 |

| K × 100 | 40.01 ± 2.66 | 53.78 ± 5.10 | 55.83 ± 4.06 | 73.01 ± 3.39 | 76.17 ± 5.49 | 77.25 ± 2.63 | 76.01 ± 4.82 | 76.92 ± 5.04 | 79.51 ± 2.31 |

| F1 | 49.59 ± 3.11 | 66.70 ± 0.66 | 61.12 ± 5.06 | 79.78 ± 1.84 | 79.28 ± 2.69 | 79.48 ± 3.54 | 75.67 ± 0.48 | 76.28 ± 4.72 | 81.26 ± 1.87 |

| Model size (MB) | — | — | 0.12 | 0.76 | 0.29 | 0.86 | 0.59 | 2.58 | 0.27 |

| Time(s) | 0.34 | 0.01 | 61.05 | 75.06 | 1989.37 | 5503.92 | 16.48 | 58.45 | 5030.01 |

| Class | XGBoost | SVM | 3DCNN | SSRN | DCFSL | Gia-CFSL | GSCViT | DSFormer | SAMLFE |

|---|---|---|---|---|---|---|---|---|---|

| 1 | 68.29 ± 12.91 | 67.48 ± 17.13 | 63.90 ± 7.46 | 97.56 ± 2.44 | 94.15 ± 8.81 | 84.88 ± 9.05 | 97.22 ± 3.93 | 99.39 ± 1.22 | 95.37 ± 5.71 |

| 2 | 24.43 ± 18.83 | 28.48 ± 8.69 | 26.52 ± 9.32 | 39.72 ± 7.15 | 40.93 ± 6.69 | 43.18 ± 8.06 | 44.79 ± 23.94 | 45.06 ± 2.25 | 43.13 ± 12.66 |

| 3 | 25.13 ± 15.00 | 34.34 ± 11.28 | 27.01 ± 8.83 | 32.61 ± 23.17 | 45.70 ± 6.39 | 47.64 ± 11.66 | 62.38 ± 1.12 | 51.18 ± 1.72 | 53.53 ± 7.37 |

| 4 | 29.60 ± 2.87 | 60.20 ± 3.51 | 32.50 ± 12.38 | 54.20 ± 25.76 | 72.16 ± 16.83 | 81.12 ± 5.41 | 85.90 ± 11.84 | 91.49 ± 6.43 | 71.38 ± 15.32 |

| 5 | 48.26 ± 19.90 | 50.28 ± 4.51 | 61.88 ± 13.14 | 84.73 ± 2.63 | 71.23 ± 7.87 | 73.26 ± 6.03 | 63.01 ± 1.49 | 71.97 ± 13.11 | 74.54 ± 7.53 |

| 6 | 63.91 ± 6.22 | 77.61 ± 2.68 | 75.03 ± 13.12 | 74.24 ± 22.87 | 83.35 ± 7.09 | 76.86 ± 6.79 | 95.28 ± 0.59 | 92.76 ± 2.91 | 85.83 ± 5.24 |

| 7 | 68.12 ± 20.55 | 85.51 ± 17.57 | 88.70 ± 14.45 | 96.74 ± 5.65 | 98.70 ± 3.91 | 96.52 ± 3.25 | 100.00 ± 0.00 | 94.57 ± 10.87 | 98.70 ± 1.99 |

| 8 | 36.36 ± 3.96 | 70.54 ± 12.76 | 81.61 ± 7.81 | 80.92 ± 12.81 | 81.16 ± 13.84 | 92.35 ± 4.12 | 76.50 ± 33.24 | 86.36 ± 9.27 | 84.55 ± 10.50 |

| 9 | 62.22 ± 23.41 | 91.11 ± 15.40 | 98.67 ± 2.67 | 76.67 ± 20.41 | 99.33 ± 2.00 | 97.33 ± 5.33 | 95.00 ± 7.07 | 100.00 ± 0.00 | 98.67 ± 2.67 |

| 10 | 29.85 ± 15.35 | 45.81 ± 3.38 | 40.02 ± 7.05 | 53.77 ± 18.93 | 56.29 ± 10.77 | 58.47 ± 6.59 | 59.25 ± 16.02 | 52.43 ± 1.97 | 56.83 ± 12.41 |

| 11 | 19.69 ± 3.84 | 35.01 ± 16.04 | 50.52 ± 11.45 | 45.90 ± 9.25 | 57.49 ± 12.78 | 56.78 ± 7.13 | 48.16 ± 13.73 | 57.43 ± 8.72 | 63.40 ± 7.00 |

| 12 | 24.94 ± 8.33 | 38.72 ± 7.23 | 24.97 ± 6.09 | 54.00 ± 11.48 | 43.84 ± 15.17 | 43.47 ± 12.82 | 48.97 ± 19.77 | 39.67 ± 9.63 | 39.44 ± 10.89 |

| 13 | 82.33 ± 9.65 | 92.50 ± 9.10 | 97.60 ± 4.55 | 98.38 ± 1.98 | 96.35 ± 4.99 | 94.10 ± 3.44 | 99.75 ± 0.36 | 99.63 ± 0.25 | 96.80 ± 2.23 |

| 14 | 57.17 ± 6.98 | 63.97 ± 14.02 | 54.40 ± 10.98 | 79.86 ± 8.72 | 86.21 ± 6.27 | 81.30 ± 6.26 | 88.05 ± 16.00 | 86.19 ± 3.65 | 86.33 ± 7.92 |

| 15 | 27.56 ± 7.83 | 37.97 ± 16.19 | 32.02 ± 6.46 | 61.09 ± 16.02 | 68.92 ± 8.71 | 52.81 ± 9.21 | 59.84 ± 1.13 | 67.06 ± 8.99 | 72.89 ± 11.42 |

| 16 | 89.39 ± 8.83 | 80.30 ± 10.92 | 84.55 ± 7.35 | 98.58 ± 1.86 | 98.64 ± 1.42 | 95.91 ± 6.53 | 91.57 ± 11.93 | 100.00 ± 0.00 | 97.50 ± 3.40 |

| OA(%) | 34.70 ± 0.76 | 46.82 ± 6.07 | 47.32 ± 3.92 | 57.46 ± 3.83 | 62.65 ± 2.60 | 62.17 ± 2.41 | 63.23 ± 8.61 | 64.45 ± 2.55 | 65.45 ± 2.93 |

| AA(%) | 47.33 ± 1.64 | 59.99 ± 3.93 | 58.74 ± 2.66 | 70.56 ± 7.17 | 74.65 ± 1.93 | 73.50 ± 1.58 | 75.98 ± 3.58 | 77.20 ± 1.70 | 76.18 ± 1.88 |

| K × 100 | 28.47 ± 1.26 | 40.99 ± 6.07 | 40.83 ± 3.99 | 52.31 ± 4.61 | 58.08 ± 2.72 | 57.39 ± 2.80 | 58.87 ± 9.58 | 60.07 ± 2.70 | 61.06 ± 3.15 |

| F1 | 36.46 ± 1.25 | 48.38 ± 2.04 | 48.04 ± 3.20 | 58.69 ± 2.48 | 61.37 ± 1.77 | 58.96 ± 1.31 | 59.55 ± 4.90 | 58.32 ± 0.81 | 65.52 ± 2.54 |

| Model size (MB) | — | — | 0.43 | 1.32 | 0.33 | 0.89 | 2.44 | 2.59 | 0.33 |

| Time(s) | 0.69 | 0.01 | 23.61 | 35.46 | 3211.04 | 7602.74 | 23.99 | 61.53 | 6266.61 |

| Class | XGBoost | SVM | 3DCNN | SSRN | DCFSL | Gia-CFSL | GSCViT | DSFormer | SAMLFE |

|---|---|---|---|---|---|---|---|---|---|

| 1 | 92.65 ± 1.57 | 95.43 ± 2.80 | 98.25 ± 0.20 | 97.85 ± 4.29 | 99.61 ± 0.85 | 99.54 ± 0.24 | 99.75 ± 0.47 | 100.00 ± 0.00 | 99.64 ± 0.55 |

| 2 | 72.92 ± 6.67 | 95.05 ± 2.42 | 98.70 ± 1.25 | 95.87 ± 8.26 | 99.01 ± 1.23 | 99.11 ± 0.69 | 99.81 ± 0.18 | 96.41 ± 5.07 | 99.56 ± 0.37 |

| 3 | 61.15 ± 18.37 | 87.23 ± 12.36 | 95.61 ± 0.43 | 93.08 ± 13.71 | 90.27 ± 10.25 | 90.54 ± 7.60 | 83.95 ± 12.85 | 97.64 ± 3.05 | 96.00 ± 3.95 |

| 4 | 97.43 ± 0.96 | 98.61 ± 0.83 | 97.34 ± 0.94 | 98.08 ± 1.77 | 99.40 ± 0.48 | 97.48 ± 2.29 | 99.69 ± 0.32 | 99.89 ± 0.04 | 99.12 ± 0.76 |

| 5 | 94.71 ± 2.87 | 88.04 ± 7.32 | 93.08 ± 4.08 | 95.23 ± 2.64 | 91.50 ± 2.81 | 93.00 ± 2.39 | 92.40 ± 5.91 | 84.36 ± 5.57 | 90.18 ± 6.38 |

| 6 | 87.73 ± 8.53 | 99.36 ± 0.22 | 99.73 ± 0.27 | 99.94 ± 0.11 | 99.39 ± 0.97 | 98.41 ± 1.57 | 99.37 ± 0.61 | 100.00 ± 0.00 | 99.05 ± 1.16 |

| 7 | 90.68 ± 7.75 | 97.96 ± 2.35 | 96.75 ± 1.82 | 99.96 ± 0.06 | 98.27 ± 1.33 | 97.37 ± 1.57 | 99.94 ± 0.09 | 99.94 ± 0.01 | 98.52 ± 1.10 |

| 8 | 45.81 ± 16.89 | 66.11 ± 5.72 | 71.39 ± 8.25 | 60.08 ± 25.00 | 75.88 ± 10.53 | 71.11 ± 11.98 | 68.59 ± 12.54 | 70.00 ± 17.16 | 79.06 ± 5.53 |

| 9 | 72.95 ± 19.01 | 90.01 ± 8.68 | 93.63 ± 0.34 | 99.74 ± 0.35 | 99.32 ± 0.76 | 99.26 ± 0.62 | 98.62 ± 2.20 | 99.98 ± 0.02 | 99.36 ± 0.73 |

| 10 | 65.70 ± 8.87 | 82.43 ± 0.76 | 83.13 ± 8.65 | 94.01 ± 1.36 | 88.00 ± 4.35 | 84.32 ± 4.79 | 89.01 ± 7.33 | 95.95 ± 0.28 | 88.57 ± 4.07 |

| 11 | 56.22 ± 27.12 | 87.80 ± 10.74 | 77.28 ± 15.95 | 99.34 ± 0.48 | 98.78 ± 1.16 | 95.71 ± 3.99 | 96.06 ± 4.55 | 89.84 ± 3.19 | 97.22 ± 4.28 |

| 12 | 81.70 ± 8.77 | 96.86 ± 0.62 | 98.47 ± 1.53 | 99.38 ± 0.86 | 99.14 ± 1.48 | 97.61 ± 2.51 | 98.95 ± 1.12 | 99.24 ± 1.06 | 99.80 ± 0.18 |

| 13 | 93.08 ± 4.32 | 97.91 ± 0.11 | 97.64 ± 1.92 | 99.30 ± 0.89 | 99.33 ± 0.78 | 98.70 ± 0.61 | 99.39 ± 1.36 | 97.86 ± 3.02 | 98.63 ± 1.82 |

| 14 | 82.60 ± 7.21 | 90.67 ± 0.38 | 84.46 ± 6.43 | 97.78 ± 2.41 | 97.91 ± 1.57 | 98.70 ± 0.82 | 98.28 ± 2.76 | 96.47 ± 4.44 | 98.04 ± 1.72 |

| 15 | 53.69 ± 16.02 | 52.93 ± 5.40 | 55.73 ± 0.40 | 61.66 ± 27.80 | 76.02 ± 8.09 | 78.05 ± 8.51 | 82.82 ± 12.11 | 81.50 ± 17.90 | 77.56 ± 5.67 |

| 16 | 83.94 ± 4.97 | 71.29 ± 8.13 | 88.43 ± 7.41 | 91.01 ± 6.16 | 89.54 ± 6.77 | 95.21 ± 3.16 | 93.70 ± 7.07 | 99.14 ± 0.97 | 92.26 ± 7.13 |

| OA(%) | 69.40 ± 0.84 | 81.09 ± 0.61 | 84.14 ± 3.14 | 84.82 ± 1.79 | 89.45 ± 1.86 | 88.49 ± 1.74 | 88.90 ± 2.78 | 89.54 ± 1.14 | 90.61 ± 0.93 |

| AA(%) | 77.06 ± 2.82 | 87.36 ± 0.51 | 89.35 ± 3.38 | 92.64 ± 1.35 | 93.83 ± 0.93 | 93.38 ± 0.90 | 93.77 ± 1.50 | 94.26 ± 0.13 | 94.53 ± 0.82 |

| K × 100 | 66.34 ± 1.03 | 78.99 ± 0.70 | 82.37 ± 3.46 | 83.16 ± 1.93 | 88.28 ± 2.03 | 87.24 ± 1.88 | 87.69 ± 3.08 | 88.41 ± 1.21 | 89.56 ± 1.03 |

| F1 | 72.86 ± 1.76 | 84.35 ± 2.03 | 84.77 ± 6.11 | 86.69 ± 5.61 | 93.68 ± 0.35 | 92.41 ± 0.39 | 91.95 ± 1.95 | 93.19 ± 2.88 | 93.97 ± 1.00 |

| Model size (MB) | — | — | 0.44 | 1.35 | 0.33 | 0.89 | 0.69 | 2.59 | 0.33 |

| Time(s) | 0.55 | 0.01 | 117.98 | 150.75 | 3217.59 | 7705.78 | 26.43 | 62.64 | 7129.28 |

| Class | XGBoost | SVM | 3DCNN | SSRN | DCFSL | Gia-CFSL | GSCViT | DSFormer | SAMLFE |

|---|---|---|---|---|---|---|---|---|---|

| 1 | 97.25 ± 3.08 | 89.58 ± 9.33 | 86.07 ± 2.13 | 89.93 ± 3.10 | 84.74 ± 2.01 | 83.63 ± 3.65 | 83.17 ± 13.02 | 87.73 ± 4.05 | 83.61 ± 1.94 |

| 2 | 64.42 ± 11.16 | 62.65 ± 14.45 | 70.14 ± 9.25 | 78.19 ± 10.29 | 78.86 ± 2.55 | 77.79 ± 4.52 | 76.80 ± 20.60 | 73.47 ± 16.83 | 84.40 ± 5.17 |

| 3 | 48.51 ± 3.22 | 67.61 ± 17.24 | 72.57 ± 9.38 | 65.26 ± 5.38 | 72.84 ± 6.01 | 76.19 ± 6.41 | 69.74 ± 18.49 | 77.61 ± 9.34 | 72.35 ± 7.29 |

| OA(%) | 64.07 ± 6.92 | 67.70 ± 1.90 | 72.98 ± 3.12 | 75.92 ± 3.87 | 77.86 ± 1.74 | 78.09 ± 2.95 | 75.56 ± 6.00 | 76.59 ± 8.61 | 80.73 ± 1.21 |

| AA(%) | 70.06 ± 3.77 | 73.28 ± 4.04 | 76.26 ± 1.31 | 77.80 ± 0.61 | 78.81 ± 1.84 | 79.20 ± 2.39 | 76.57 ± 3.20 | 79.60 ± 4.31 | 80.12 ± 0.44 |

| K × 100 | 41.76 ± 8.75 | 47.69 ± 1.05 | 54.22 ± 3.85 | 58.87 ± 4.20 | 61.38 ± 3.16 | 62.50 ± 4.53 | 58.12 ± 6.03 | 60.77 ± 12.11 | 65.78 ± 1.52 |

| F1 | 62.46 ± 5.84 | 67.44 ± 2.69 | 75.12 ± 2.81 | 75.05 ± 5.15 | 79.14 ± 1.72 | 78.57 ± 3.97 | 49.20 ± 2.56 | 49.83 ± 5.96 | 81.37 ± 0.77 |

| Model size (MB) | — | — | 0.08 | 1.31 | 0.32 | 0.89 | 0.68 | 2.58 | 0.30 |

| Time(s) | 0.17 | 0.01 | 137.64 | 160.03 | 1574.63 | 5960.51 | 17.73 | 55.75 | 4282.37 |

| Dataset | ID | ISAM | Improved Self-Attention | Asymmetric Residual Block | OA (%) | AA (%) | K × 100 | F1 |

|---|---|---|---|---|---|---|---|---|

| PU | 1 | × | × | × | 81.49 ± 4.77 | 82.37 ± 2.71 | 76.17 ± 5.49 | 79.28 ± 2.69 |

|  | 2 | √ | × | × | 82.75 ± 3.57 | 82.25 ± 1.42 | 77.61 ± 4.26 | 80.35 ± 4.08 |

|  | 3 | √ | × | √ | 81.53 ± 1.05 | 82.48 ± 0.15 | 76.92 ± 1.19 | 79.29 ± 1.10 |

|  | 4 | × | √ | √ | 81.62 ± 3.91 | 83.79 ± 0.98 | 77.41 ± 4.41 | 79.93 ± 1.15 |

|  | 5 | √ | √ | × | 81.59 ± 2.08 | 82.98 ± 2.49 | 76.31 ± 2.38 | 79.31 ± 0.71 |

|  | 6 | √ | √ | √ | 84.27 ± 1.90 | 84.19 ± 1.77 | 79.51 ± 2.31 | 81.26 ± 1.87 |

| IP | 1 | × | × | × | 62.65 ± 2.60 | 74.65 ± 1.93 | 58.08 ± 2.72 | 61.37 ± 1.77 |

|  | 2 | √ | × | × | 63.85 ± 2.05 | 76.39 ± 2.17 | 59.24 ± 2.26 | 63.11 ± 5.79 |

|  | 3 | √ | × | √ | 64.31 ± 3.87 | 77.01 ± 1.62 | 59.87 ± 4.02 | 64.85 ± 1.88 |

|  | 4 | × | √ | √ | 64.27 ± 2.88 | 77.00 ± 1.54 | 59.67 ± 3.16 | 64.74 ± 1.87 |

|  | 5 | √ | √ | × | 64.15 ± 3.75 | 76.08 ± 2.95 | 59.80 ± 4.02 | 64.12 ± 1.83 |

|  | 6 | √ | √ | √ | 65.45 ± 2.93 | 76.18 ± 1.88 | 61.06 ± 3.15 | 65.52 ± 2.54 |

| SA | 1 | × | × | × | 89.45 ± 1.86 | 93.83 ± 0.93 | 88.28 ± 2.03 | 93.68 ± 0.35 |

|  | 2 | √ | × | × | 91.50 ± 0.86 | 95.28 ± 0.64 | 90.54 ± 0.96 | 94.52 ± 1.31 |

|  | 3 | √ | × | √ | 90.71 ± 0.81 | 94.24 ± 0.29 | 89.65 ± 0.89 | 93.60 ± 4.81 |

|  | 4 | × | √ | √ | 90.63 ± 0.64 | 93.61 ± 1.51 | 89.57 ± 0.72 | 93.19 ± 0.63 |

|  | 5 | √ | √ | × | 89.62 ± 2.14 | 94.58 ± 1.35 | 88.46 ± 2.35 | 93.56 ± 0.45 |

|  | 6 | √ | √ | √ | 90.61 ± 0.93 | 94.53 ± 0.82 | 89.56 ± 1.03 | 93.97 ± 1.00 |

| Dataset | Sample Size | OA (%) | AA (%) | K × 100 | F1 |
|---|---|---|---|---|---|
| PU | 1 | 58.74 ± 2.59 | 63.63 ± 2.93 | 48.29 ± 2.21 | 56.61 ± 2.63 |
| PU | 2 | 62.41 ± 11.28 | 70.76 ± 2.69 | 54.45 ± 11.71 | 64.35 ± 5.62 |
| PU | 3 | 65.60 ± 10.20 | 74.22 ± 2.25 | 58.23 ± 10.16 | 67.75 ± 4.43 |
| PU | 4 | 75.66 ± 9.65 | 82.46 ± 3.42 | 69.79 ± 10.83 | 78.17 ± 4.60 |
| PU | 5 | 84.27 ± 1.90 | 84.19 ± 1.77 | 79.51 ± 2.31 | 81.26 ± 1.87 |
| IP | 1 | 41.97 ± 0.84 | 51.40 ± 0.67 | 35.29 ± 1.44 | 38.92 ± 1.24 |
| IP | 2 | 53.97 ± 2.55 | 65.59 ± 0.31 | 48.48 ± 2.71 | 53.42 ± 0.55 |
| IP | 3 | 56.11 ± 2.64 | 70.22 ± 0.55 | 50.88 ± 2.65 | 54.97 ± 2.44 |
| IP | 4 | 60.21 ± 0.74 | 74.12 ± 0.41 | 55.34 ± 0.77 | 60.96 ± 1.19 |
| IP | 5 | 65.45 ± 2.93 | 76.18 ± 1.88 | 61.06 ± 3.15 | 65.52 ± 2.54 |
| SA | 1 | 76.31 ± 0.09 | 79.59 ± 3.00 | 73.68 ± 0.23 | 77.93 ± 0.25 |
| SA | 2 | 83.46 ± 2.19 | 88.91 ± 0.73 | 81.52 ± 2.46 | 87.19 ± 0.01 |
| SA | 3 | 89.17 ± 0.46 | 93.47 ± 1.10 | 87.99 ± 0.49 | 91.38 ± 1.30 |
| SA | 4 | 88.34 ± 0.78 | 93.17 ± 0.24 | 87.03 ± 0.84 | 91.36 ± 0.32 |
| SA | 5 | 90.61 ± 0.93 | 94.53 ± 0.82 | 89.56 ± 1.03 | 93.97 ± 1.00 |

| Dataset | Batch Size | OA (%) | AA (%) | K × 100 | F1 |
|---|---|---|---|---|---|
| PU | 32 | 77.26 ± 7.46 | 80.08 ± 4.79 | 70.86 ± 9.29 | 74.63 ± 6.26 |
| PU | 64 | 79.23 ± 4.86 | 81.87 ± 2.97 | 73.48 ± 5.90 | 75.09 ± 4.16 |
| PU | 128 | 84.27 ± 1.90 | 84.19 ± 1.77 | 79.51 ± 2.31 | 81.26 ± 1.87 |
| PU | 150 | 81.31 ± 4.50 | 83.01 ± 2.67 | 76.07 ± 5.42 | 78.63 ± 3.12 |
| IP | 32 | 65.22 ± 0.91 | 77.61 ± 0.94 | 60.67 ± 1.18 | 64.22 ± 1.79 |
| IP | 64 | 64.59 ± 0.79 | 77.20 ± 0.54 | 60.32 ± 0.78 | 64.11 ± 0.85 |
| IP | 128 | 65.45 ± 2.93 | 76.18 ± 1.88 | 61.06 ± 3.15 | 65.52 ± 2.54 |
| IP | 150 | 63.68 ± 1.65 | 77.09 ± 1.34 | 59.21 ± 1.77 | 63.04 ± 1.51 |
| SA | 32 | 89.53 ± 0.08 | 92.95 ± 0.60 | 88.32 ± 0.11 | 92.94 ± 0.39 |
| SA | 64 | 90.11 ± 0.51 | 94.04 ± 0.70 | 88.96 ± 0.58 | 93.79 ± 1.06 |
| SA | 128 | 90.61 ± 0.93 | 94.53 ± 0.82 | 89.56 ± 1.03 | 93.97 ± 1.00 |
| SA | 150 | 89.96 ± 1.78 | 94.60 ± 0.71 | 88.85 ± 1.97 | 93.59 ± 4.66 |
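
Each accuracy cell in these tables is reported as mean ± standard deviation over repeated runs. This section does not state how many runs were averaged or whether the sample or population standard deviation was used, so the sketch below assumes the sample form (n − 1 denominator); the function `mean_pm_std` and its input values are hypothetical.

```python
import statistics
from typing import Sequence


def mean_pm_std(runs: Sequence[float], decimals: int = 2) -> str:
    """Summarise per-run scores as a 'mean ± std' table cell.

    Assumption: sample standard deviation (n - 1 denominator). If the tables
    use the population form instead, swap in statistics.pstdev.
    """
    if len(runs) < 2:
        raise ValueError("need at least two runs to estimate a spread")
    mean = statistics.mean(runs)
    std = statistics.stdev(runs)
    return f"{mean:.{decimals}f} ± {std:.{decimals}f}"


if __name__ == "__main__":
    # Hypothetical per-run OA values (%), not taken from the paper.
    print(mean_pm_std([80.0, 82.0, 84.0]))
```

With only a handful of runs the two conventions differ noticeably, so the large spreads in some cells (for example, PU at small sample sizes) should be read with the run count in mind.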

| Dataset | Index | Baseline | Without Gamma | With Gamma |
|---|---|---|---|---|
| PU | OA (%) | 81.49 ± 4.77 | 75.93 ± 5.80 | 82.37 ± 5.43 |
| PU | AA (%) | 82.37 ± 2.71 | 80.05 ± 2.81 | 83.18 ± 2.12 |
| PU | K × 100 | 76.17 ± 5.49 | 69.73 ± 6.38 | 77.48 ± 6.18 |
| PU | F1 | 79.28 ± 2.69 | 76.18 ± 2.30 | 79.39 ± 2.92 |
| IP | OA (%) | 62.65 ± 2.60 | 62.45 ± 1.67 | 63.96 ± 1.47 |
| IP | AA (%) | 74.65 ± 1.93 | 72.63 ± 1.96 | 75.52 ± 1.83 |
| IP | K × 100 | 58.08 ± 2.72 | 57.69 ± 2.04 | 59.44 ± 1.61 |
| IP | F1 | 61.37 ± 1.77 | 61.68 ± 3.12 | 63.33 ± 1.82 |
| SA | OA (%) | 89.45 ± 1.86 | 88.13 ± 0.89 | 90.39 ± 1.84 |
| SA | AA (%) | 93.83 ± 0.93 | 93.16 ± 1.28 | 94.94 ± 1.09 |
| SA | K × 100 | 88.28 ± 2.03 | 86.82 ± 0.96 | 89.33 ± 2.05 |
| SA | F1 | 93.68 ± 0.35 | 91.97 ± 1.32 | 94.09 ± 0.92 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


MDPI and ACS Style: Liu, C.; Wang, A.; Wang, M.; Wu, H.; Yan, S.; Zhao, L. Cross-Domain Hyperspectral Image Classification Combined Sharpness-Aware Minimization with Local-to-Global Feature Enhancement. Remote Sens. 2026, 18, 740. https://doi.org/10.3390/rs18050740

AMA Style: Liu C, Wang A, Wang M, Wu H, Yan S, Zhao L. Cross-Domain Hyperspectral Image Classification Combined Sharpness-Aware Minimization with Local-to-Global Feature Enhancement. Remote Sensing. 2026; 18(5):740. https://doi.org/10.3390/rs18050740

Chicago/Turabian Style: Liu, Chengyang, Aili Wang, Minhui Wang, Haibin Wu, Siqi Yan, and Lin Zhao. 2026. "Cross-Domain Hyperspectral Image Classification Combined Sharpness-Aware Minimization with Local-to-Global Feature Enhancement" Remote Sensing 18, no. 5: 740. https://doi.org/10.3390/rs18050740

APA Style: Liu, C., Wang, A., Wang, M., Wu, H., Yan, S., & Zhao, L. (2026). Cross-Domain Hyperspectral Image Classification Combined Sharpness-Aware Minimization with Local-to-Global Feature Enhancement. Remote Sensing, 18(5), 740. https://doi.org/10.3390/rs18050740