Text2AIRS: Fine-Grained Airplane Image Generation in Remote Sensing from Nature Language

Highlights

- This study proposes Text2AIRS, the first text-to-image generation method to explicitly incorporate ground sample distance (GSD) as a dual-level (prompt and feature) constraint, significantly enhancing the scale consistency and realism of synthesized fine-grained aircraft in remote sensing images.

- Extensive experiments on the FAIR1M benchmark show that Text2AIRS outperforms state-of-the-art methods in both perceptual quality and downstream utility: adding its generated images to the training data improves object detection accuracy.

- The work offers a promising, cost-effective way to address data scarcity and class imbalance in fine-grained remote sensing analysis by generating high-fidelity, semantically aligned synthetic data that adheres to physical imaging constraints.

- By transforming GSD from passive metadata into an active generative prior, the method establishes a new paradigm for physically-grounded remote sensing image synthesis, with potential to benefit various downstream tasks such as data augmentation and model robustness enhancement.
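To make the scale-consistency argument in the highlights concrete, the following minimal sketch relates GSD to the pixel footprint of an object. It relies only on the definition of GSD (ground metres covered by one pixel). The function name `pixel_extent`, the 36 m wingspan, and the two GSD values are illustrative assumptions and are not taken from the paper.

```python
def pixel_extent(length_m: float, gsd_m_per_px: float) -> float:
    """Return how many pixels an object of the given ground length spans.

    GSD is the ground distance covered by one pixel, so the pixel extent
    is simply the physical length divided by the GSD.
    """
    if gsd_m_per_px <= 0:
        raise ValueError("GSD must be positive")
    return length_m / gsd_m_per_px


# Illustrative (assumed) wingspan of a narrow-body airliner, in metres.
wingspan_m = 36.0

# Two assumed GSD values: the same aircraft occupies very different pixel sizes.
for gsd in (0.3, 0.8):
    print(f"GSD {gsd} m/px -> about {pixel_extent(wingspan_m, gsd):.0f} px")
```

A generator that ignores GSD has no reason to keep this ratio fixed, which is why the same aircraft type can come out at physically implausible sizes.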

Abstract

1. Introduction

- We introduce Text2AIRS, a novel method that integrates ground sample distance (GSD) as a guiding constraint for generating remote sensing images. To the best of our knowledge, this is the first approach to incorporate GSD into the remote sensing image generation process, significantly enhancing the realism and consistency of the generated images.

- We extract fine-grained objects from remote sensing images and annotate them with detailed captions containing fine-grained information. This marks the first time that fine-grained instances are explicitly considered and utilized in remote sensing image generation, addressing a critical gap in the field.

- Extensive experimental results on both image generation and object detection tasks demonstrate the authenticity and practicality of the generated images. Our approach not only produces visually realistic outputs but also improves the performance of downstream object detection models, showcasing its potential for real-world applications.
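The contributions above describe GSD acting at two levels, in the text prompt and in the features. The sketch below illustrates one way each level could look. Both parts are assumptions made for illustration: the caption template in `build_caption` is hypothetical, and `gsd_embedding` uses a standard sinusoidal encoding as a stand-in for whatever GSD feature extractor the method actually uses.

```python
import math


def build_caption(category: str, gsd_m_per_px: float) -> str:
    """Hypothetical prompt template that states the fine-grained class and GSD."""
    return (
        f"a remote sensing image of a {category} airplane, "
        f"ground sample distance {gsd_m_per_px:.2f} m per pixel"
    )


def gsd_embedding(gsd_m_per_px: float, dim: int = 8) -> list[float]:
    """Encode a scalar GSD as a sinusoidal feature vector of length `dim`.

    This is an assumed stand-in for a feature-level GSD signal, not the
    paper's module. Frequencies follow the usual 10000^(-i/half) schedule.
    """
    if dim <= 0 or dim % 2 != 0:
        raise ValueError("dim must be a positive even number")
    half = dim // 2
    freqs = [10000.0 ** (-i / half) for i in range(half)]
    sines = [math.sin(gsd_m_per_px * f) for f in freqs]
    cosines = [math.cos(gsd_m_per_px * f) for f in freqs]
    return sines + cosines


caption = build_caption("Boeing737", 0.8)
feature = gsd_embedding(0.8)
print(caption)
print(len(feature))
```

In a diffusion pipeline, the caption would go through the text encoder while the vector would be injected alongside other conditioning features; how the two are combined is left open here.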

2. Related Work

2.1. Text-to-Image Generation in Natural Images

2.2. Text-to-Image Generation in Remote Sensing Images

2.3. Ground Sample Distance

2.4. Data Augmentation

2.5. Object Detection

3. Method

3.1. Overview

3.2. Encoder and Decoder

3.3. Text-to-Image

3.4. Channel Attention Layer

3.5. Ground Sample Distance Feature Extraction

4. Experiments and Results

4.1. Datasets

4.2. Evaluation Metrics

4.3. Implementation Details

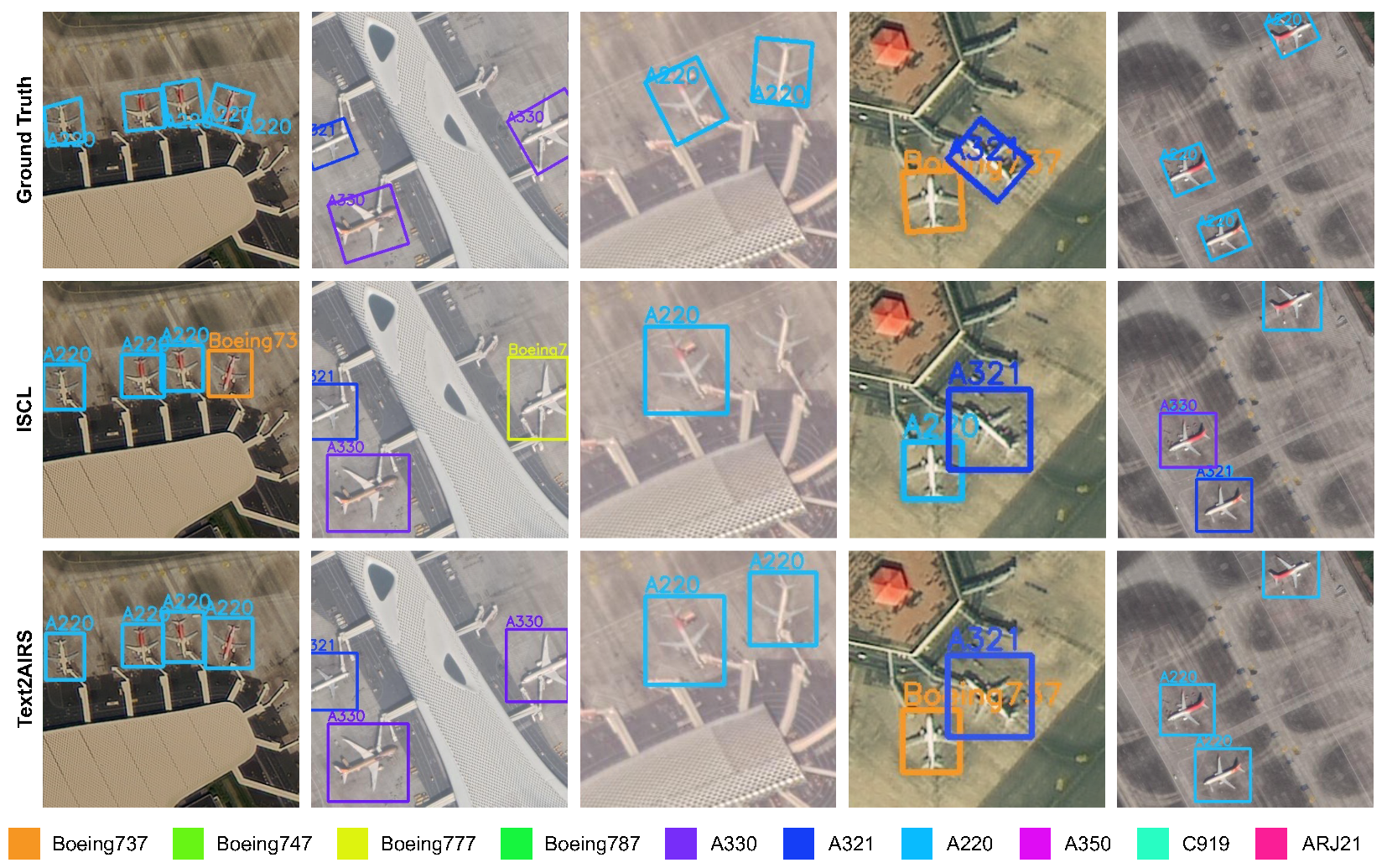

4.4. Comparison and Ablation Studies in Generation Result

4.5. Application

4.6. Discussion

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

1. Wang, Z.; Ma, Y.; Zhang, Y. Review of pixel-level remote sensing image fusion based on deep learning. Inf. Fusion 2023, 90, 36–58.

2. Xia, Y.; Wu, Q.; Li, W.; Chan, A.B.; Stilla, U. A Lightweight and Detector-Free 3D Single Object Tracker on Point Clouds. IEEE Trans. Intell. Transp. Syst. 2023, 24, 5543–5554.

3. Hao, X.; Liu, L.; Yang, R.; Yin, L.; Zhang, L.; Li, X. A Review of Data Augmentation Methods of Remote Sensing Image Target Recognition. Remote Sens. 2023, 15, 827.

4. Sun, X.; Wang, P.; Lu, W.; Zhu, Z.; Lu, X.; He, Q.; Li, J.; Rong, X.; Yang, Z.; Chang, H.; et al. Ringmo: A remote sensing foundation model with masked image modeling. IEEE Trans. Geosci. Remote Sens. 2022, 61, 5612822.

5. Li, W.; Wei, W.; Zhang, L. GSDet: Object detection in aerial images based on scale reasoning. IEEE Trans. Image Process. 2021, 30, 4599–4609.

6. Yang, Y.; Wang, C.; Cai, Z.; Song, P.; Huang, G.; Cheng, M.; Zang, Y. GSDDet: Ground Sample Distance Guided Object Detection for Remote Sensing Images. IEEE Trans. Geosci. Remote Sens. 2023, 62, 5626012.

7. Zhang, F.; Du, B.; Zhang, L. Saliency-guided unsupervised feature learning for scene classification. IEEE Trans. Geosci. Remote Sens. 2014, 53, 2175–2184.

8. Lu, X.; Wang, B.; Zheng, X.; Li, X. Exploring models and data for remote sensing image caption generation. IEEE Trans. Geosci. Remote Sens. 2017, 56, 2183–2195.

9. Cheng, Q.; Huang, H.; Xu, Y.; Zhou, Y.; Li, H.; Wang, Z. NWPU-captions dataset and MLCA-net for remote sensing image captioning. IEEE Trans. Geosci. Remote Sens. 2022, 60, 5629419.

10. Xu, Y.; Yu, W.; Ghamisi, P.; Kopp, M.; Hochreiter, S. Txt2Img-MHN: Remote sensing image generation from text using modern Hopfield networks. IEEE Trans. Image Process. 2023, 32, 5737–5750.

11. Ramsauer, H.; Schäfl, B.; Lehner, J.; Seidl, P.; Widrich, M.; Adler, T.; Gruber, L.; Holzleitner, M.; Pavlović, M.; Sandve, G.K.; et al. Hopfield networks is all you need. arXiv 2020, arXiv:2008.02217.

12. Dieste, Á.G.; Argüello, F.; Heras, D.B. ResBaGAN: A Residual Balancing GAN with Data Augmentation for Forest Mapping. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2023, 16, 6428–6447.

13. Sebaq, A.; ElHelw, M. RSDiff: Remote Sensing Image Generation from Text Using Diffusion Model. arXiv 2023, arXiv:2309.02455.

14. Xia, G.S.; Bai, X.; Ding, J.; Zhu, Z.; Belongie, S.; Luo, J.; Datcu, M.; Pelillo, M.; Zhang, L. DOTA: A large-scale dataset for object detection in aerial images. In Proceedings of the CVPR, Salt Lake City, UT, USA, 18–23 June 2018; pp. 3974–3983.

15. Li, K.; Wan, G.; Cheng, G.; Meng, L.; Han, J. Object detection in optical remote sensing images: A survey and a new benchmark. ISPRS J. Photogramm. Remote Sens. 2020, 159, 296–307.

16. Sun, X.; Wang, P.; Yan, Z.; Diao, W.; Lu, X.; Yang, Z.; Zhang, Y.; Xiang, D.; Yan, C.; Guo, J.; et al. Automated high-resolution earth observation image interpretation: Outcome of the 2020 Gaofen challenge. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2021, 14, 8922–8940.

17. Sun, X.; Wang, P.; Yan, Z.; Xu, F.; Wang, R.; Diao, W.; Chen, J.; Li, J.; Feng, Y.; Xu, T.; et al. FAIR1M: A benchmark dataset for fine-grained object recognition in high-resolution remote sensing imagery. ISPRS J. Photogramm. Remote Sens. 2022, 184, 116–130.

18. Guo, B.; Zhang, R.; Guo, H.; Yang, W.; Yu, H.; Zhang, P.; Zou, T. Fine-Grained Ship Detection in High-Resolution Satellite Images With Shape-Aware Feature Learning. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2023, 16, 1914–1926.

19. Reed, S.; Akata, Z.; Yan, X.; Logeswaran, L.; Schiele, B.; Lee, H. Generative adversarial text to image synthesis. In Proceedings of the International Conference on Machine Learning (ICML 2016), New York, NY, USA, 20–22 June 2016; pp. 1060–1069.

20. Reed, S.E.; Akata, Z.; Mohan, S.; Tenka, S.; Schiele, B.; Lee, H. Learning what and where to draw. Adv. Neural Inf. Process. Syst. 2016, 29, 217–225.

21. Zhang, H.; Xu, T.; Li, H.; Zhang, S.; Wang, X.; Huang, X.; Metaxas, D.N. Stackgan: Text to photo-realistic image synthesis with stacked generative adversarial networks. In Proceedings of the IEEE International Conference on Computer Vision, Venice, Italy, 22–29 October 2017; pp. 5907–5915.

22. Radford, A.; Kim, J.W.; Hallacy, C.; Ramesh, A.; Goh, G.; Agarwal, S.; Sastry, G.; Askell, A.; Mishkin, P.; Clark, J.; et al. Learning transferable visual models from natural language supervision. In Proceedings of the International Conference on Machine Learning (ICML 2021), PMLR. Virtual, 18–24 July 2021; pp. 8748–8763.

23. Ramesh, A.; Dhariwal, P.; Nichol, A.; Chu, C.; Chen, M. Hierarchical text-conditional image generation with clip latents. arXiv 2022, arXiv:2204.06125.

24. Brown, T.; Mann, B.; Ryder, N.; Subbiah, M.; Kaplan, J.D.; Dhariwal, P.; Neelakantan, A.; Shyam, P.; Sastry, G.; Askell, A.; et al. Language models are few-shot learners. Adv. Neural Inf. Process. Syst. 2020, 33, 1877–1901.

25. Bubeck, S.; Chandrasekaran, V.; Eldan, R.; Gehrke, J.; Horvitz, E.; Kamar, E.; Lee, P.; Lee, Y.T.; Li, Y.; Lundberg, S.; et al. Sparks of artificial general intelligence: Early experiments with gpt-4. arXiv 2023, arXiv:2303.12712.

26. Ho, J.; Jain, A.; Abbeel, P. Denoising diffusion probabilistic models. Adv. Neural Inf. Process. Syst. 2020, 33, 6840–6851.

27. Saharia, C.; Chan, W.; Saxena, S.; Li, L.; Whang, J.; Denton, E.L.; Ghasemipour, K.; Gontijo Lopes, R.; Karagol Ayan, B.; Salimans, T.; et al. Photorealistic text-to-image diffusion models with deep language understanding. Adv. Neural Inf. Process. Syst. 2022, 35, 36479–36494.

28. Rombach, R.; Blattmann, A.; Lorenz, D.; Esser, P.; Ommer, B. High-resolution image synthesis with latent diffusion models. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, New Orleans, LA, USA, 18–24 June 2022; pp. 10684–10695.

29. Ruiz, N.; Li, Y.; Jampani, V.; Pritch, Y.; Rubinstein, M.; Aberman, K. Dreambooth: Fine tuning text-to-image diffusion models for subject-driven generation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Vancouver, BC, Canada, 17–24 June 2023; pp. 22500–22510.

30. Quattrochi, D.A.; Ridd, M.K. Analysis of vegetation within a semi-arid urban environment using high spatial resolution airborne thermal infrared remote sensing data. Atmos. Environ. 1998, 32, 19–33.

31. Atkinson, P.M.; Curran, P.J. Choosing an appropriate spatial resolution for remote sensing investigations. Photogramm. Eng. Remote Sens. 1997, 63, 1345–1351.

32. Benjamin, S.; Gaydos, L. Spatial resolution requirements for automated cartographic road extraction. Photogramm. Eng. Remote Sens. 1990, 56, 93–100.

33. Abdollahi, A.; Pradhan, B.; Shukla, N.; Chakraborty, S.; Alamri, A. Deep learning approaches applied to remote sensing datasets for road extraction: A state-of-the-art review. Remote Sens. 2020, 12, 1444.

34. Mikołajczyk, A.; Grochowski, M. Data augmentation for improving deep learning in image classification problem. In Proceedings of the 2018 International Interdisciplinary PhD Workshop (IIPhDW), Swinoujscie, Poland, 9–12 May 2018; IEEE: New York, NY, USA, 2018; pp. 117–122.

35. Li, B.; Hou, Y.; Che, W. Data augmentation approaches in natural language processing: A survey. AI Open 2022, 3, 71–90.

36. Paschali, M.; Simson, W.; Roy, A.G.; Göbl, R.; Wachinger, C.; Navab, N. Manifold exploring data augmentation with geometric transformations for increased performance and robustness. In Proceedings of the Information Processing in Medical Imaging: 26th International Conference, IPMI 2019, Hong Kong, China, 2–7 June 2019; Proceedings 26; Springer: Cham, Switzerland, 2019; pp. 517–529.

37. Ledig, C.; Theis, L.; Huszár, F.; Caballero, J.; Cunningham, A.; Acosta, A.; Aitken, A.; Tejani, A.; Totz, J.; Wang, Z.; et al. Photo-realistic single image super-resolution using a generative adversarial network. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Honolulu, HI, USA, 21–26 July 2017; pp. 4681–4690.

38. Shorten, C.; Khoshgoftaar, T.M. A survey on image data augmentation for deep learning. J. Big Data 2019, 6, 60.

39. He, Q.; Sun, X.; Yan, Z.; Li, B.; Fu, K. Multi-object tracking in satellite videos with graph-based multitask modeling. IEEE Trans. Geosci. Remote Sens. 2022, 60, 5619513.

40. Wang, J.; Yang, W.; Li, H.C.; Zhang, H.; Xia, G.S. Learning center probability map for detecting objects in aerial images. IEEE Trans. Geosci. Remote Sens. 2020, 59, 4307–4323.

41. Lin, T.Y.; Goyal, P.; Girshick, R.; He, K.; Dollár, P. Focal loss for dense object detection. In Proceedings of the IEEE International Conference on Computer Vision, Venice, Italy, 22–29 October 2017; pp. 2980–2988.

42. Ren, S.; He, K.; Girshick, R.; Sun, J. Faster r-cnn: Towards real-time object detection with region proposal networks. Adv. Neural Inf. Process. Syst. 2015, 28, 91–99.

43. Ding, J.; Xue, N.; Long, Y.; Xia, G.S.; Lu, Q. Learning RoI transformer for oriented object detection in aerial images. In Proceedings of the CVPR, Long Beach, CA, USA, 15–20 June 2019; pp. 2849–2858.

44. Yang, X.; Yang, J.; Yan, J.; Zhang, Y.; Zhang, T.; Guo, Z.; Sun, X.; Fu, K. Scrdet: Towards more robust detection for small, cluttered and rotated objects. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Seoul, Republic of Korea, 27 October–2 November 2019; pp. 8232–8241.

45. Xu, Y.; Fu, M.; Wang, Q.; Wang, Y.; Chen, K.; Xia, G.S.; Bai, X. Gliding vertex on the horizontal bounding box for multi-oriented object detection. IEEE Trans. Pattern Anal. Mach. Intell. 2020, 43, 1452–1459.

46. Yang, X.; Yan, J.; Feng, Z.; He, T. R3det: Refined single-stage detector with feature refinement for rotating object. In Proceedings of the AAAI Conference on Artificial Intelligence, Virtual, 2–9 February 2021; Volume 35, pp. 3163–3171.

47. Han, J.; Ding, J.; Xue, N.; Xia, G.S. Redet: A rotation-equivariant detector for aerial object detection. In Proceedings of the CVPR, Nashville, TN, USA, 20–25 June 2021; pp. 2786–2795.

48. Zhang, J.; Lei, J.; Xie, W.; Fang, Z.; Li, Y.; Du, Q. SuperYOLO: Super resolution assisted object detection in multimodal remote sensing imagery. IEEE Trans. Geosci. Remote Sens. 2023, 61, 5605415.

49. Li, C.; Cheng, G.; Wang, G.; Zhou, P.; Han, J. Instance-aware distillation for efficient object detection in remote sensing images. IEEE Trans. Geosci. Remote Sens. 2023, 61, 5602011.

50. Dhariwal, P.; Nichol, A. Diffusion models beat gans on image synthesis. Adv. Neural Inf. Process. Syst. 2021, 34, 8780–8794.

51. Wang, Q.; Wu, B.; Zhu, P.; Li, P.; Zuo, W.; Hu, Q. ECA-Net: Efficient channel attention for deep convolutional neural networks. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 13–19 June 2020; pp. 11534–11542.

52. Qin, Z.; Zhang, P.; Wu, F.; Li, X. Fcanet: Frequency channel attention networks. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Montreal, QC, Canada, 11–17 October 2021; pp. 783–792.

53. Qin, C.Z.; Zhan, L.J.; Zhu, A.X. How to apply the geospatial data abstraction library (GDAL) properly to parallel geospatial raster I/O? Trans. GIS 2014, 18, 950–957.

54. Zeng, L.; Guo, H.; Yang, W.; Yu, H.; Yu, L.; Zhang, P.; Zou, T. Instance Switching-Based Contrastive Learning for Fine-Grained Airplane Detection. IEEE Trans. Geosci. Remote Sens. 2022, 60, 5633416.

55. He, K.; Gkioxari, G.; Dollár, P.; Girshick, R. Mask r-cnn. In Proceedings of the IEEE International Conference on Computer Vision, Venice, Italy, 22–29 October 2017; pp. 2961–2969.

56. Cai, Z.; Vasconcelos, N. Cascade r-cnn: Delving into high quality object detection. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–23 June 2018; pp. 6154–6162.

57. Qiao, S.; Chen, L.C.; Yuille, A. Detectors: Detecting objects with recursive feature pyramid and switchable atrous convolution. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Nashville, TN, USA, 20–25 June 2021; pp. 10213–10224.

58. Xie, X.; Lang, C.; Miao, S.; Cheng, G.; Li, K.; Han, J. Mutual-assistance learning for object detection. IEEE Trans. Pattern Anal. Mach. Intell. 2023, 45, 15171–15184.

59. Zhang, S.; Wang, X.; Wang, J.; Pang, J.; Lyu, C.; Zhang, W.; Luo, P.; Chen, K. Dense Distinct Query for End-to-End Object Detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Vancouver, BC, Canada, 17–24 June 2023; pp. 7329–7338.

| Category | FID ↓ (VQGAN) | FID ↓ (SD) | FID ↓ (Text2AIRS) | LPIPS ↓ (VQGAN) | LPIPS ↓ (SD) | LPIPS ↓ (Text2AIRS) | PSNR ↑ (VQGAN) | PSNR ↑ (SD) | PSNR ↑ (Text2AIRS) | SSIM ↑ (VQGAN) | SSIM ↑ (SD) | SSIM ↑ (Text2AIRS) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Boeing737 | 222.76 | 194.83 | 148.92 | 0.40 | 0.46 | 0.37 | 12.66 | 13.19 | 14.98 | 0.63 | 0.52 | 0.64 |
| Boeing747 | 301.49 | 207.06 | 148.93 | 0.53 | 0.66 | 0.43 | 13.16 | 10.02 | 14.67 | 0.52 | 0.17 | 0.51 |
| Boeing777 | 267.88 | 260.27 | 145.14 | 0.54 | 0.65 | 0.31 | 12.43 | 10.00 | 13.19 | 0.51 | 0.17 | 0.48 |
| Boeing787 | 288.04 | 241.60 | 152.82 | 0.50 | 0.69 | 0.37 | 13.49 | 10.52 | 14.73 | 0.57 | 0.17 | 0.52 |
| A350 | 286.71 | 241.42 | 153.83 | 0.56 | 0.64 | 0.26 | 12.30 | 10.24 | 13.23 | 0.50 | 0.19 | 0.43 |
| A330 | 259.85 | 234.97 | 115.46 | 0.39 | 0.53 | 0.39 | 12.70 | 10.42 | 14.09 | 0.56 | 0.20 | 0.53 |
| A321 | 258.32 | 164.65 | 118.34 | 0.40 | 0.47 | 0.31 | 12.87 | 12.25 | 14.34 | 0.66 | 0.55 | 0.70 |
| A220 | 192.99 | 201.47 | 148.88 | 0.32 | 0.46 | 0.19 | 15.18 | 13.93 | 16.83 | 0.68 | 0.55 | 0.71 |
| ARJ21 | 340.90 | 302.50 | 142.16 | 0.40 | 0.53 | 0.35 | 13.93 | 12.69 | 14.03 | 0.64 | 0.50 | 0.59 |
| C919 | 340.56 | 303.26 | 123.09 | 0.41 | 0.57 | 0.21 | 13.07 | 10.91 | 13.92 | 0.63 | 0.45 | 0.76 |
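For readers unfamiliar with the PSNR column in the table above, the sketch below implements the standard definition, PSNR = 10 · log10(MAX² / MSE). It is a minimal illustration only: the flat-list image representation, the function name `psnr`, and the 8-bit peak value of 255 are assumptions, and the evaluation protocol behind the reported numbers (colour handling, value range, pairing of real and generated images) is not given in this section.

```python
import math


def psnr(reference, generated, max_value=255.0):
    """Peak signal-to-noise ratio in dB between two equally sized images.

    Both images are given as flat sequences of pixel intensities.
    Higher is better; identical images give infinity.
    """
    if len(reference) != len(generated) or len(reference) == 0:
        raise ValueError("images must be non-empty and the same size")
    # Mean squared error over all pixels.
    mse = sum((r - g) ** 2 for r, g in zip(reference, generated)) / len(reference)
    if mse == 0:
        return math.inf
    return 10.0 * math.log10(max_value ** 2 / mse)


# Toy 2x2 "images" flattened to lists (assumed values, for illustration only).
real = [100, 120, 140, 160]
fake = [110, 115, 150, 150]
print(f"PSNR: {psnr(real, fake):.2f} dB")
```

Because PSNR is a per-pixel measure, it rewards outputs that match a reference image closely, which is why it is usually read together with distribution-level metrics such as FID.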

| SD | + Text | + Fea. | FID | LPIPS | PSNR | SSIM | |||

|---|---|---|---|---|---|---|---|---|---|

| - | - | - | - | 63.21 | 69.43 | 68.59 | |||

| ✓ | 253.20 | 0.57 | 11.42 | 0.35 | 64.49 | 70.41 | 70.27 | ||

| ✓ | ✓ | 170.49 | 0.44 | 13.56 | 0.54 | 64.78 | 71.09 | 70.24 | |

| ✓ | ✓ | 231.25 | 0.57 | 11.76 | 0.37 | 66.71 | 73.06 | 72.35 | |

| ✓ | ✓ | ✓ | 139.76 | 0.32 | 14.40 | 0.59 | 67.35 | 74.01 | 73.49 |

| Method | B737 | B747 | B777 | B787 | A220 | A321 | A330 | A350 | ARJ21 | C919 | mAP |
|---|---|---|---|---|---|---|---|---|---|---|---|
| Faster R-CNN [42] | 70.92 | 55.98 | 31.32 | 86.61 | 73.68 | 78.57 | 78.29 | 66.34 | 90.20 | 0.00 | 63.21 |
| Mask R-CNN [55] | 69.83 | 36.57 | 35.23 | 81.55 | 74.74 | 80.40 | 81.18 | 63.07 | 98.51 | 0.00 | 62.11 |
| Cascade R-CNN [56] | 70.68 | 56.14 | 46.10 | 83.89 | 72.62 | 78.90 | 74.15 | 63.07 | 93.92 | 0.00 | 63.95 |
| DetectoRS [57] | 71.56 | 55.74 | 29.80 | 84.80 | 73.42 | 78.09 | 83.53 | 59.70 | 94.32 | 0.00 | 63.10 |
| MADet [58] | 74.64 | 55.64 | 43.62 | 83.23 | 78.94 | 81.58 | 83.58 | 64.22 | 94.79 | 0.00 | 66.02 |
| DDQ DETR [59] | 70.32 | 56.26 | 58.21 | 93.04 | 78.68 | 81.51 | 81.64 | 48.55 | 95.17 | 0.78 | 66.42 |
| ISCL [54] | 72.04 | 67.99 | 44.28 | 84.96 | 78.68 | 81.58 | 82.19 | 62.63 | 95.74 | 0.00 | 67.01 |
| Txt2Img-MHN [10] | 71.23 | 40.56 | 38.48 | 90.21 | 73.21 | 83.76 | 82.62 | 63.07 | 97.30 | 0.00 | 64.04 |
| Faster R-CNN + Aug | 68.17 | 52.79 | 52.68 | 91.00 | 74.36 | 80.27 | 74.83 | 63.07 | 93.83 | 0.00 | 65.10 |
| Text2AIRS | 70.52 | 61.05 | 53.44 | 84.03 | 76.11 | 82.08 | 83.82 | 69.50 | 93.01 | 0.00 | 67.35 |

| Method | B737 | B747 | B777 | B787 | A220 | A321 | A330 | A350 | ARJ21 | C919 | mAP |
|---|---|---|---|---|---|---|---|---|---|---|---|
| Faster R-CNN [42] | 84.39 | 91.62 | 88.64 | 89.20 | 86.51 | 89.32 | 92.64 | 88.65 | 88.94 | 83.60 | 88.35 |
| Cascade R-CNN [56] | 84.00 | 91.97 | 88.60 | 89.67 | 86.19 | 89.90 | 90.36 | 91.14 | 90.96 | 87.49 | 89.03 |
| Mask R-CNN [55] | 85.09 | 92.99 | 90.46 | 90.35 | 87.89 | 90.04 | 93.26 | 91.56 | 93.46 | 80.61 | 89.57 |
| ISCL [54] | 83.20 | 93.14 | 89.31 | 90.05 | 86.14 | 89.01 | 92.81 | 91.70 | 92.68 | 89.76 | 89.78 |
| Txt2Img-MHN [10] | 82.99 | 91.61 | 88.34 | 87.57 | 86.61 | 89.23 | 91.95 | 88.81 | 90.48 | 89.23 | 88.68 |
| Text2AIRS | 84.79 | 91.97 | 90.70 | 90.79 | 87.60 | 90.59 | 92.83 | 91.61 | 91.72 | 87.54 | 90.01 |
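The mAP column in the table above appears to be the unweighted mean of the ten per-class AP values. The short sketch below checks this for the Text2AIRS row. That mAP is computed this way is an inference from the reported numbers rather than something stated in this section, and the helper name `mean_ap` is hypothetical.

```python
def mean_ap(per_class_ap: dict[str, float]) -> float:
    """Unweighted mean of per-class average precision values."""
    if not per_class_ap:
        raise ValueError("need at least one class")
    return sum(per_class_ap.values()) / len(per_class_ap)


# Per-class AP values copied from the Text2AIRS row of the table above.
text2airs_ap = {
    "B737": 84.79, "B747": 91.97, "B777": 90.70, "B787": 90.79,
    "A220": 87.60, "A321": 90.59, "A330": 92.83, "A350": 91.61,
    "ARJ21": 91.72, "C919": 87.54,
}

computed = round(mean_ap(text2airs_ap), 2)
reported = 90.01  # mAP value listed for Text2AIRS in the same row
print(computed, reported)
```

Because every class carries equal weight, a rare class such as C919 influences mAP as much as a common one, which is the reason class imbalance matters so much in this benchmark.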

| Baseline | +GSD | | | |
|---|---|---|---|---|
| Mask R-CNN | | 62.11 | 67.85 | 67.08 |
| Mask R-CNN | ✓ | 67.71 (+5.60) | 73.32 (+5.47) | 73.26 (+6.18) |
| Cascade R-CNN | | 63.95 | 70.00 | 69.47 |
| Cascade R-CNN | ✓ | 70.07 (+6.12) | 75.50 (+5.50) | 75.36 (+5.89) |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Yang, Y.; Cheng, Y.; Hu, J.; Xia, Y.; Zang, Y. Text2AIRS: Fine-Grained Airplane Image Generation in Remote Sensing from Nature Language. Remote Sens. 2026, 18, 511. https://doi.org/10.3390/rs18030511
