GPRAformer: A Geometry-Prior Rational-Activation Transformer for Denoising Multibeam Sonar Point Clouds of Exposed Subsea Pipelines

Highlights

- A Transformer model based on geometric priors and a rational-activation mechanism (GPRAformer) is proposed for more accurate noise segmentation of multibeam echosounder (MBES) point clouds in complex seabed environments.

- High-precision and robust MBES point-cloud noise segmentation in complex seabed environments is achieved through the pipeline-informed prior encoder (PIPE) feature-sampling module, the rational-activation Kolmogorov–Arnold network Transformer (RaKANsformer) feature-extraction module, and the class-adaptive loss (CAL)-constrained noise-segmentation module.

- The PIPE feature-sampling module extracts pipeline geometric priors to enhance the separability between pipeline and noise points; the RaKANsformer feature-extraction module strengthens representation learning through self-attention, gated attention, and rational activations; and the CAL-constrained noise-segmentation module uses class-adaptive weighting to mitigate the false and missed detections caused by class imbalance in MBES point-cloud data.

- The method preserves the complete geometric contour of the exposed pipeline, demonstrating strong performance and stability in complex marine environments.

Abstract

1. Introduction

- (1) To address the tendency of current point-cloud noise-segmentation algorithms to disregard pipeline characteristics, and thereby confuse pipeline and noise points, we propose a pipeline-informed prior encoder (PIPE) feature-sampling module. It constructs prior features through pipe-axis estimation and cylindrical-coordinate feature design to enhance the separability between pipeline and noise points.

- (2) To address the insufficient nonlinear representation and weak interpretability of existing models in complex seafloor environments, we propose a rational-activation Kolmogorov–Arnold network (KAN) Transformer (RaKANsformer) feature-extraction module. This module uses self-attention to model the global dependencies of the point cloud, employs gated attention to highlight salient features, and integrates a rational-activation KAN (RaKAN) block as its nonlinear modeling component. Compared with conventional piecewise-linear activations such as ReLU, the rational activation offers more expressive curvature while responding stably to extreme inputs, which makes it better suited to modeling the coexistence of regular pipeline geometry and irregular geometric disturbances in MBES point clouds.

- (3) To address the class imbalance between noise and non-noise points, which often leads to missed detections and misclassifications, we propose a class-adaptive loss (CAL)-constrained noise-segmentation module. It establishes an intra-class consistency loss ($L_{\mathrm{ICC}}$) through a neighborhood-consistency graph-smoothing constraint and an inter-class separation loss ($L_{\mathrm{ICS}}$) through a local-support-based outlier penalty, which alleviates the false and missed detections caused by the imbalance between noise and non-noise points in MBES point clouds.

2. Materials

3. Method

3.1. PIPE Feature Sampling Module
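
The Introduction states that PIPE builds prior features through pipe-axis estimation and cylindrical-coordinate feature design. A minimal sketch, assuming the pipe axis is the dominant principal direction (PCA) of a point set and that the prior features are cylindrical coordinates (t, r, θ) about that axis. The helper names and the exact feature set are hypothetical and do not reproduce the authors' PIPE module.

```python
# Sketch under stated assumptions (PCA axis, cylindrical features); not the authors' PIPE code.
import math
from typing import List, Sequence, Tuple

Vec = Tuple[float, float, float]


def _sub(a: Vec, b: Vec) -> Vec:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _dot(a: Sequence[float], b: Sequence[float]) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def _cross(a: Vec, b: Vec) -> Vec:
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def _normalize(a: Vec) -> Vec:
    n = math.sqrt(_dot(a, a))
    return (a[0] / n, a[1] / n, a[2] / n)


def estimate_pipe_axis(points: Sequence[Vec], iters: int = 100) -> Tuple[Vec, Vec]:
    """Hypothetical helper: centroid and unit axis, taken as the dominant
    principal direction of the points and found by power iteration."""
    n = len(points)
    c = (sum(p[0] for p in points) / n,
         sum(p[1] for p in points) / n,
         sum(p[2] for p in points) / n)
    # 3x3 covariance matrix of the centred points
    cov = [[sum((p[i] - c[i]) * (p[j] - c[j]) for p in points) / n
            for j in range(3)] for i in range(3)]
    v: Vec = (1.0, 0.7, 0.3)  # arbitrary, non-symmetric start vector
    for _ in range(iters):
        v = _normalize((_dot(cov[0], v), _dot(cov[1], v), _dot(cov[2], v)))
    return c, v


def cylindrical_features(points: Sequence[Vec], centroid: Vec, axis: Vec) -> List[Vec]:
    """Hypothetical helper: (t, r, theta) of each point about the estimated axis."""
    # Orthonormal frame (u, w) spanning the pipe cross-section plane
    helper: Vec = (0.0, 0.0, 1.0) if abs(axis[2]) < 0.9 else (1.0, 0.0, 0.0)
    u = _normalize(_cross(axis, helper))
    w = _cross(axis, u)
    feats = []
    for p in points:
        d = _sub(p, centroid)
        t = _dot(d, axis)                # position along the pipe
        du, dw = _dot(d, u), _dot(d, w)  # components in the cross-section plane
        feats.append((t, math.hypot(du, dw), math.atan2(dw, du)))  # (t, r, theta)
    return feats
```

For points sampled on a cylinder of radius 0.5 along the x-axis, `estimate_pipe_axis` returns an axis close to (1, 0, 0), and every point gets r ≈ 0.5. The presumed benefit of such features is that points on the pipe wall cluster near a common radius, whereas scattered noise points generally do not.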

3.2. RaKANsformer Feature Extraction Module
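
The RaKANsformer replaces piecewise-linear activations with a rational activation, and the hyperparameter table lists a numerator degree and a denominator degree of 2. A minimal sketch, assuming the "safe" rational form F(x) = (a0 + a1 x + ... + am x^m) / (1 + |b1 x + ... + bn x^n|), whose denominator is never below 1, and a KAN-style layer in which one rational function is shared per channel group before linear mixing. This is similar in spirit to the Kolmogorov–Arnold Transformer [17]. The function names and this parameterization are assumptions, not the authors' RaKAN block.

```python
# Sketch under stated assumptions (safe rational form, group sharing); not the authors' RaKAN code.
from typing import List, Sequence


def safe_rational(x: float, a: Sequence[float], b: Sequence[float]) -> float:
    """F(x) = (a0 + a1 x + ... + am x^m) / (1 + |b1 x + ... + bn x^n|).

    len(a) = m + 1 numerator coefficients, len(b) = n denominator coefficients.
    The denominator is >= 1 for every real x, so F has no poles.
    """
    num = sum(a_k * x ** k for k, a_k in enumerate(a))
    den = 1.0 + abs(sum(b_k * x ** (k + 1) for k, b_k in enumerate(b)))
    return num / den


def group_rational_kan(x: Sequence[float], weights: Sequence[Sequence[float]],
                       a_groups: Sequence[Sequence[float]],
                       b_groups: Sequence[Sequence[float]]) -> List[float]:
    """Hypothetical layer: y_j = sum_i W[j][i] * F_g(x_i), where channel i uses
    the rational function of its group g = i // group_size."""
    n_in = len(x)
    assert n_in % len(a_groups) == 0, "channels must split evenly into groups"
    group_size = n_in // len(a_groups)
    activated = [safe_rational(x[i], a_groups[i // group_size], b_groups[i // group_size])
                 for i in range(n_in)]
    return [sum(w_ji * h_i for w_ji, h_i in zip(row, activated)) for row in weights]
```

With degrees m = n = 2 and b2 ≠ 0, F(x) tends to a2 / |b2| as |x| grows. For example, with a = (0, 1, 0.5) and b = (0, 0.25), F(2) = 2 and F(±10^6) ≈ 2. This bounded limit is one concrete sense in which such an activation responds stably to extreme inputs, while the quadratic terms still provide curvature near the origin.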

3.3. CAL Constraint Noise Segmentation Module
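
The CAL module is described only at the level of its components: class-adaptive weighting, an intra-class consistency loss $L_{\mathrm{ICC}}$ based on graph smoothing, and an inter-class separation loss $L_{\mathrm{ICS}}$ based on an outlier penalty. A minimal sketch, assuming inverse-frequency class weights on a cross-entropy term and $L_{\mathrm{ICC}}$ implemented as the smoothing of predicted probabilities over kNN edges that join points of the same class. $L_{\mathrm{ICS}}$ is not sketched because its form is not given here. The neighbor lists would come from a kNN graph (the hyperparameter table lists k = 32, although whether CAL uses that graph is an assumption), and `lam` is a hypothetical weight.

```python
# Sketch under stated assumptions; not the authors' CAL implementation.
import math
from typing import List, Sequence


def class_adaptive_weights(labels: Sequence[int], num_classes: int) -> List[float]:
    """Assumed inverse-frequency weights w_c = N / (C * n_c): rarer classes weigh more."""
    counts = [0] * num_classes
    for y in labels:
        counts[y] += 1
    return [len(labels) / (num_classes * max(n_c, 1)) for n_c in counts]


def weighted_cross_entropy(probs: Sequence[Sequence[float]], labels: Sequence[int],
                           weights: Sequence[float]) -> float:
    """Class-weighted cross-entropy, normalised by the total weight of the samples."""
    total = sum(-weights[y] * math.log(max(p[y], 1e-12)) for p, y in zip(probs, labels))
    return total / sum(weights[y] for y in labels)


def intra_class_consistency(probs: Sequence[Sequence[float]], labels: Sequence[int],
                            neighbors: Sequence[Sequence[int]]) -> float:
    """Assumed L_ICC: mean squared difference of predicted class probabilities
    over kNN edges (i, j) whose endpoints share a ground-truth label."""
    total, edges = 0.0, 0
    for i, nbrs in enumerate(neighbors):
        for j in nbrs:
            if labels[i] == labels[j]:
                total += sum((a - b) ** 2 for a, b in zip(probs[i], probs[j]))
                edges += 1
    return total / edges if edges else 0.0


def cal_loss(probs: Sequence[Sequence[float]], labels: Sequence[int],
             neighbors: Sequence[Sequence[int]], num_classes: int = 2,
             lam: float = 0.1) -> float:
    """Hypothetical total: weighted CE + lam * L_ICC (L_ICS omitted; lam is illustrative)."""
    w = class_adaptive_weights(labels, num_classes)
    return (weighted_cross_entropy(probs, labels, w)
            + lam * intra_class_consistency(probs, labels, neighbors))
```

Because the weights scale inversely with class frequency, the rare class contributes more to the loss per point than it would under plain cross-entropy. This is the general mechanism by which class-adaptive weighting counteracts imbalance.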

4. Results

4.1. Experimental Data and Setup

4.2. Evaluation Indicators
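
The result tables report mIoU, accuracy, F1-score, and recall. Their exact definitions are not stated here, so the following is a minimal sketch of the standard confusion-matrix formulas. It assumes two classes (noise and non-noise), mIoU averaged over both classes, and F1 and recall computed with noise (label 1, a hypothetical convention) as the positive class. Multiply by 100 to obtain percentages as in the tables.

```python
# Sketch of standard confusion-matrix metrics; the class convention is an assumption.
from typing import Dict, Sequence, Tuple


def segmentation_metrics(y_true: Sequence[int], y_pred: Sequence[int],
                         positive: int = 1) -> Dict[str, float]:
    """mIoU over all classes present; accuracy; recall and F1 for the positive class."""
    pairs = list(zip(y_true, y_pred))

    def counts(c: int) -> Tuple[int, int, int]:
        tp = sum(1 for t, p in pairs if t == c and p == c)
        fp = sum(1 for t, p in pairs if t != c and p == c)
        fn = sum(1 for t, p in pairs if t == c and p != c)
        return tp, fp, fn

    ious = []
    for c in sorted(set(y_true) | set(y_pred)):
        tp, fp, fn = counts(c)
        ious.append(tp / (tp + fp + fn))  # IoU = TP / (TP + FP + FN)
    tp, fp, fn = counts(positive)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {
        "miou": sum(ious) / len(ious),
        "accuracy": sum(1 for t, p in pairs if t == p) / len(pairs),
        "recall": recall,
        "f1": f1,
    }
```

For example, with ground truth [1, 1, 0, 0, 0, 1] and prediction [1, 0, 0, 0, 1, 1], each class has IoU 0.5 (mIoU 0.5), accuracy is 4/6, and recall and F1 for class 1 are both 2/3.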

4.3. Noise Segmentation Experimental Results

5. Discussion

5.1. Ablation Experiment

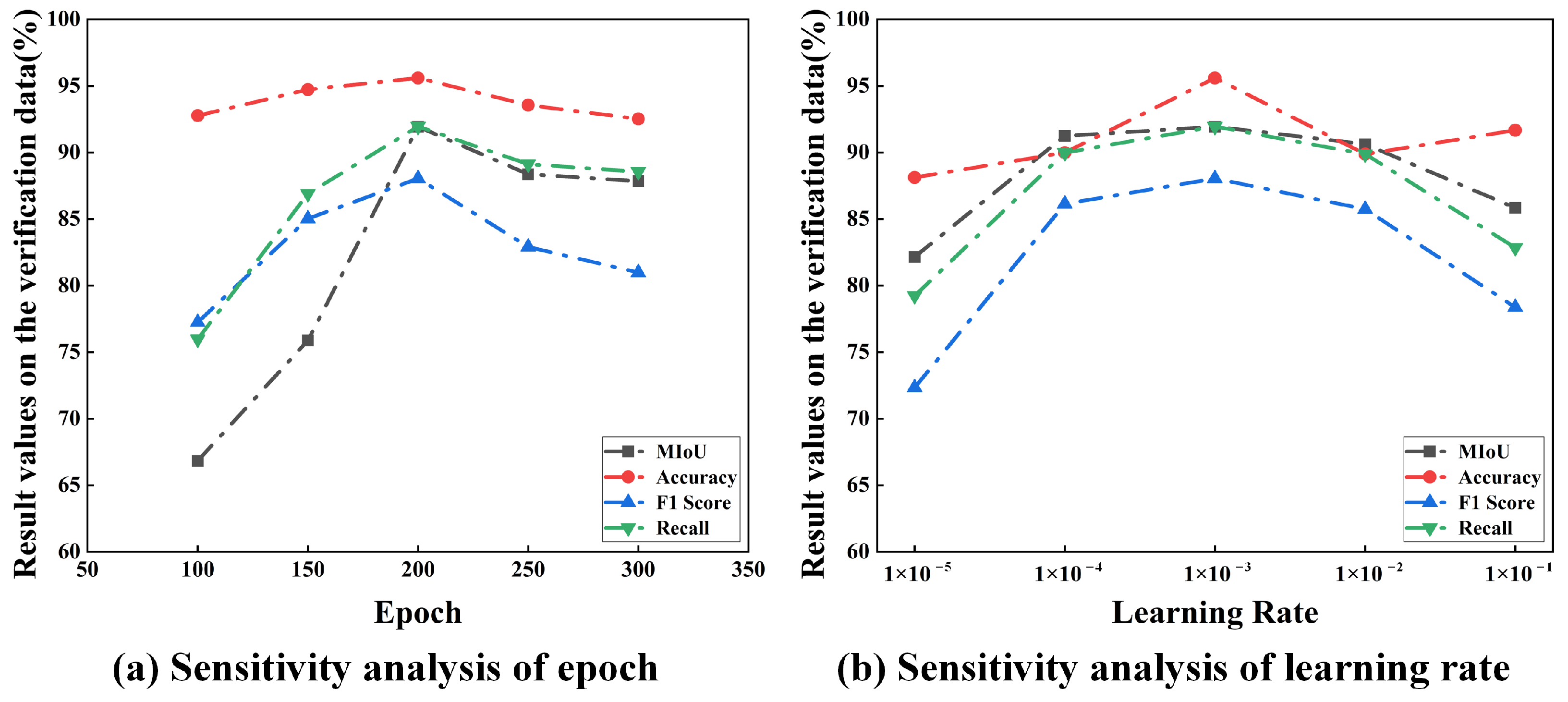

5.2. Hyperparameter Sensitivity Analysis

5.3. Complexity and Inference Time Analysis

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

1. Cui, X.; Li, Y.; Li, J.; Zhang, J. Cross-PIC: A cross-scale in-context learning network for 3D multibeam point cloud segmentation of submarine pipelines. Ocean Eng. 2025, 315, 119778.
2. Downing, E.; O’Reilly, L.; Majcher, J.; O’Mahony, E.; Peters, J. A semi-automated, hybrid GIS–AI approach to seabed boulder detection using high-resolution multibeam echosounder. Remote Sens. 2025, 17, 2711.
3. Gościewski, D.; Gerus-Gościewska, M.; Szczepańska, A. Application of polynomial interpolation for iterative complementation of the missing nodes in a regular network of squares used for the construction of a digital terrain model. Remote Sens. 2024, 16, 999.
4. Zhou, J.; Koge, H.; Maki, T. Automation of MBES noise reduction: An approach based on seafloor bathymetry features derived from manual editing procedures. Ocean Eng. 2024, 299, 117397.
5. Xu, B.; Chen, Z.; Zhu, Q.; Ge, X.; Huang, S.; Zhang, Y.; Liu, T.; Wu, D. Geometrical segmentation of multi-shape point clouds based on adaptive shape prediction and hybrid voting RANSAC. Remote Sens. 2022, 14, 2024.
6. Zhao, F.; Huang, H.; Xiao, N.; Yu, J.; Geng, G. A point cloud segmentation algorithm based on multi-feature training and weighted random forest. Meas. Sci. Technol. 2024, 36, 015407.
7. Chen, X.; Mao, J.; Zhao, B.; Wu, C.; Qin, M. Facet-segmentation of point cloud based on multiscale hypervoxel region growing. J. Indian Soc. Remote Sens. 2025, 53, 3775–3796.
8. Yan, Z.; Zhao, H. Inner wall defect detection in oil and gas pipelines using point cloud data segmentation. Autom. Constr. 2025, 173, 106098.
9. Ye, J.; Liu, X.; Madhusudanan, H.; Wang, Y.; Zhu, J.; Wang, Y.; Ru, C.; Liu, X.; Sun, Y. Automatic point cloud clustering for surface defect diagnosis. IEEE Trans. Autom. Sci. Eng. 2025, 22, 12538–12547.
10. Chung, K.-L.; Chang, W.-T. Centralized RANSAC-based point cloud registration with fast convergence and high accuracy. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2024, 17, 5431–5442.
11. Li, T.; Lin, Y.; Cheng, B.; Ai, G.; Yang, J.; Fang, L. PU-CTG: A point cloud upsampling network using transformer fusion and GRU correction. Remote Sens. 2024, 16, 450.
12. Zhou, W.; Wang, Q.; Jin, W.; Shi, X.; He, Y. Graph transformer for 3D point clouds classification and semantic segmentation. Comput. Graph. 2024, 124, 104050.
13. Yuan, T.; Yu, Y.; Wang, X. Semantic segmentation of large-scale point clouds by integrating attention mechanisms and transformer models. Image Vis. Comput. 2024, 146, 105019.
14. Chu, X.; Zhao, S.; Dai, H. AIFormer: Adaptive interaction transformer for 3D point cloud understanding. Remote Sens. 2024, 16, 4103.
15. Lee, A.; Gomes, H.M.; Zhang, Y.; Kleijn, W.B. Kolmogorov–Arnold networks still catastrophically forget but differently from MLP. Proc. AAAI Conf. Artif. Intell. 2025, 39, 18053–18061.
16. Ren, J.; Wen, C.; Zhang, L.; Su, H.; Yang, C.; Lv, Y.; Yang, N.; Qin, X. High performance point-voxel feature set abstraction with mamba for 3D object detection. Expert Syst. Appl. 2025, 286, 128127.
17. Yang, X.; Wang, X. Kolmogorov–Arnold Transformer. arXiv 2025, arXiv:2409.10594.
18. Guo, M.-H.; Cai, J.-X.; Liu, Z.-N.; Mu, T.-J.; Martin, R.R.; Hu, S.-M. PCT: Point cloud transformer. Comput. Vis. Media 2021, 7, 187–199.
19. Wang, T.; Xi, W.; Cheng, Y.; Zhang, J.; Yin, R.; Yang, Y. Enhancing primitive segmentation through transformer-based cross-task interaction. Eng. Appl. Artif. Intell. 2025, 158, 111307.
20. Liang, Z.; Lai, X. Multilevel geometric feature embedding in transformer network for ALS point cloud semantic segmentation. Remote Sens. 2024, 16, 3386.
21. Zhou, X.; Shi, C.; Zhu, D.; Zhou, C. KANFilter: A simple and effective multi-module point cloud denoising model. Clust. Comput. 2025, 28, 423.
22. Zhou, G.; Ye, W.; Li, S.; Zhao, J.; Wang, Z.; Li, G.; Li, J. FGPointKAN++ point cloud segmentation and adaptive key cutting plane recognition for cow body size measurement. Artif. Intell. Agric. 2025, 15, 783–801.
23. Li, Y.; Liu, S.; Wu, J.; Sun, W.; Wen, Q.; Wu, Y.; Qin, X.; Qiao, Y. Multi-scale Kolmogorov–Arnold network (KAN)-based linear attention network: Multi-scale feature fusion with KAN and deformable convolution for urban scene image semantic segmentation. Remote Sens. 2025, 17, 802.
24. Zhang, R.; Huang, G.; Bao, F.; Guo, X. Multi-neighborhood sparse feature selection for semantic segmentation of LiDAR point clouds. Remote Sens. 2025, 17, 2288.
25. Farhadpour, S.; Warner, T.A.; Maxwell, A.E. Selecting and interpreting multiclass loss and accuracy assessment metrics for classifications with class imbalance: Guidance and best practices. Remote Sens. 2024, 16, 533.
26. Xu, H.; Huai, Y.; Zhao, X.; Meng, Q.; Nie, X.; Li, B.; Lu, H. SK-TreePCN: Skeleton-embedded transformer model for point cloud completion of individual trees from simulated to real data. Remote Sens. 2025, 17, 656.
27. Ciou, T.-S.; Lin, C.-H.; Wang, C.-K. Airborne LiDAR point cloud classification using ensemble learning for DEM generation. Sensors 2024, 24, 6858.

| Hyperparameter | Value |
|---|---|
| Attention heads per block | 8 |
| KNN neighborhood size | 32 |
| Rational function numerator degree | 2 |
| Rational function denominator degree | 2 |
| Initial learning rate | 0.001 |
| Batch size | 16 |
| Training epochs | 200 |

| Stage | Tensor shape |
|---|---|
| Input | [16, 50000, 3] |
| PIPE feature-sampling output | [16, 128, 32, 7] |
| RaKANsformer feature-extraction input | [16 × 128, 7, 32] |
| RaKANsformer feature-extraction output | [16, 128, 256] |
| Network output | [16, 50000, 2] |
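
The dimension table can be read as [batch, sampled centers, neighbors, features]. This reading is an assumption, consistent with the KNN neighborhood size of 32 in the hyperparameter table. Under it, the step from the PIPE output to the RaKANsformer input folds the 128 neighborhoods of each sample into the batch axis and moves the 7 features to the channel axis. A small pure-Python sketch of that reshape, with hypothetical function names:

```python
# Sketch of the assumed [B, G, K, C] -> [B*G, C, K] reshape; names are hypothetical.
import math
from typing import List, Sequence, Tuple

Shape = Tuple[int, ...]


def rakansformer_input_shape(pipe_out: Shape) -> Shape:
    """[B, G, K, C] -> [B*G, C, K]; the total element count is unchanged."""
    b, g, k, c = pipe_out
    out = (b * g, c, k)
    assert math.prod(out) == math.prod(pipe_out)
    return out


def fold_and_transpose(x: Sequence[Sequence[Sequence[Sequence[float]]]]) -> List[List[List[float]]]:
    """Nested-list version of the same reshape: out[b*G + g][c][k] == x[b][g][k][c]."""
    out = []
    for sample in x:              # B samples
        for group in sample:      # G local neighbourhoods per sample
            n_k, n_c = len(group), len(group[0])
            out.append([[group[k][c] for k in range(n_k)] for c in range(n_c)])
    return out


print(rakansformer_input_shape((16, 128, 32, 7)))  # (2048, 7, 32)
```

With the tabulated sizes this gives 16 × 128 = 2048 neighborhood sequences, each with 7 channels over 32 points. How the [16, 128, 256] features are propagated back to the 50,000 input points is not specified in this section, so the sketch stops at the RaKANsformer input.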

| Method | mIoU (%) | Accuracy (%) | F1-Score (%) | Recall (%) |
|---|---|---|---|---|
| RANSAC [10] | 42.21 | 69.88 | 56.88 | 75.06 |
| KANFilter [21] | 81.10 | 92.47 | 80.57 | 85.62 |
| FGPointKAN++ [22] | 70.85 | 93.82 | 82.93 | 85.75 |
| MGFE-T [20] | 47.39 | 70.76 | 57.54 | 75.52 |
| PCT [18] | 85.11 | 85.46 | 77.19 | 85.46 |
| Proposed | 91.94 | 95.60 | 88.05 | 91.95 |

| RaKAN | PIPE | CAL | mIoU (%) | Accuracy (%) | F1-Score (%) | Recall (%) |
|---|---|---|---|---|---|---|
| ✓ | × | × | 87.51 | 91.86 | 79.83 | 88.19 |
| ✓ | ✓ | × | 89.95 | 94.63 | 85.07 | 89.71 |
| ✓ | ✓ | ✓ | 91.94 | 95.60 | 88.05 | 91.95 |

| Method | Params (M) | Inference Time (s) |
|---|---|---|
| RANSAC | – | 2.8 |
| PCT | 3.0 | 4.5 |
| MGFE-T | 8.4 | 5.3 |
| KANFilter | 2.6 | 8.0 |
| FGPointKAN++ | 10.8 | 8.7 |
| GPRAformer | 7.2 | 11.2 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Zhang, J.; Dai, S.; Jiang, W.; Cui, X.; Li, J. GPRAformer: A Geometry-Prior Rational-Activation Transformer for Denoising Multibeam Sonar Point Clouds of Exposed Subsea Pipelines. Remote Sens. 2026, 18, 439. https://doi.org/10.3390/rs18030439


Chicago/Turabian style: Zhang, Jingyao, Song Dai, Weihua Jiang, Xuerong Cui, and Juan Li. 2026. "GPRAformer: A Geometry-Prior Rational-Activation Transformer for Denoising Multibeam Sonar Point Clouds of Exposed Subsea Pipelines" Remote Sensing 18, no. 3: 439. https://doi.org/10.3390/rs18030439
