2.2.1. Network Architecture for Extracting Building Footprints and Shadows

A sparse edge-aware convolution-transformer neural network (SECT-Net) is proposed to extract building footprints and shadows. It is built upon the UNetFormer architecture [29], which combines a lightweight CNN encoder and a Transformer-based decoder with a global-local attention mechanism for efficient multi-scale contextual modeling.

To adapt the framework for building footprint extraction from high-resolution Jilin-1 remote sensing images, three modifications are made: (1) Multi-scale edge supervision module (MESM): During multi-scale feature extraction in the encoder, MESM explicitly supervises edge information at different resolutions to enhance the network’s ability to capture fine building contour details; (2) Dual-path CNN-Transformer block (DP-CTB): Inspired by sparse token transformers [48], the original global-local Transformer module is replaced with a dual-path CNN-Transformer block with sparse global attention. This design preserves the ability to capture global contextual dependencies while reducing redundant global interactions through sparse token sampling; (3) Multi-scale auxiliary supervision (MAS): Auxiliary prediction heads are attached to intermediate layers of the decoder to provide additional supervision signals for multi-level features.

The overall architecture of SECT-Net comprises a ResNet50-based encoder and a DP-CTB-based decoder (Figure 2). The encoder begins with a convolutional stem (Conv Stem) and sequentially stacks four residual blocks (ResBlocks) to extract multi-scale features at 1/4, 1/8, 1/16, and 1/32 of the input resolution. During the encoding stage, the MESM is applied to each scale of the feature map to explicitly enhance the learning of the building’s edge information. In the decoding phase, multi-scale features are fused via a weighted integration mechanism and subsequently passed through a series of DP-CTB modules for progressive feature aggregation and semantic reconstruction. Finally, the feature refinement head [29] refines the fused features to produce predictions of building footprints and shadows.

- (1) Multi-scale Edge Supervision Module (MESM)

Edge information is a critical fine-grained feature in building extraction. To enhance boundary modeling, the proposed MESM introduces explicit edge supervision at multiple encoder stages and injects edge features into the decoder using scale-specific strategies.

Specifically, the encoder outputs multi-scale feature maps $F_1$ and $F_2$, corresponding to the shallow and intermediate layers. For each scale, a lightweight edge head $\mathcal{H}^{\mathrm{edge}}_i$ is designed to generate the corresponding edge prediction:

$$E_i = \mathcal{H}^{\mathrm{edge}}_i(F_i), \quad i \in \{1, 2\},$$

where $E_i$ denotes the predicted edge map at the $i$-th scale. $\mathcal{H}^{\mathrm{edge}}_i$ is implemented as a lightweight convolutional module composed of a 3 × 3 convolution, batch normalization, and ReLU activation, followed by a 1 × 1 convolution for channel projection.

To effectively utilize edge information, different integration strategies are adopted for different scales. For the intermediate stage, the predicted edge map $E_2$ is concatenated with $F_2$ along the channel dimension to form an enhanced feature representation:

$$\tilde{F}_2 = \mathrm{Concat}\left(F_2, \sigma(E_2)\right),$$

where $\mathrm{Concat}(\cdot)$ denotes concatenation along the channel dimension and $\sigma(\cdot)$ is the sigmoid activation.

For the shallow stage, the finer edge prediction $E_1$ is used to guide feature refinement via a spatial gating mechanism: the shallow features $F_1$ are modulated through element-wise multiplication ($\odot$) with a learnable gating map $G$ derived from $E_1$ via a 1 × 1 convolution. This mechanism enables the network to focus on fine-grained boundary details during reconstruction.

During training, edge ground truths are generated by rasterizing building footprint vectors and extracting inner boundaries, producing single-channel masks for supervision.
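The inner-boundary extraction can be illustrated with a minimal sketch. It assumes the footprint vectors have already been rasterized into a binary mask, and it treats a foreground pixel as an edge pixel when any 4-connected neighbor is background or lies outside the image. The function name, the 4-connectivity rule, and the one-pixel edge width are illustrative assumptions, not details given in the text.

```python
def inner_boundary(mask):
    """Return a single-channel edge mask (assumed rule: a foreground pixel
    is an edge pixel if any 4-connected neighbor is background or outside)."""
    rows, cols = len(mask), len(mask[0])
    edge = [[0] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            if not mask[r][c]:
                continue  # background pixels are never edge pixels
            for dr, dc in ((-1, 0), (1, 0), (0, -1), (0, 1)):
                nr, nc = r + dr, c + dc
                outside = not (0 <= nr < rows and 0 <= nc < cols)
                if outside or not mask[nr][nc]:
                    edge[r][c] = 1
                    break
    return edge


# Hypothetical 3 x 3 building footprint inside a 5 x 5 tile.
footprint = [
    [0, 0, 0, 0, 0],
    [0, 1, 1, 1, 0],
    [0, 1, 1, 1, 0],
    [0, 1, 1, 1, 0],
    [0, 0, 0, 0, 0],
]
edges = inner_boundary(footprint)
```

Because the boundary is taken from inside the footprint, every edge pixel is also a building pixel, so the edge and footprint labels stay consistent.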

- (2) Dual-path CNN-Transformer Block (DP-CTB)

To balance global structural consistency and local detail accuracy, a DP-CTB is proposed (Figure 3a). This module consists of a local convolutional branch and a sparse global attention branch.

Given an input feature map $X$, the local branch aims to capture fine-grained spatial and channel interactions using depth-wise separable convolution followed by channel recalibration via a squeeze-and-excitation mechanism, yielding a local feature representation $F_{\mathrm{local}}$.

To facilitate global context modeling, the global branch first projects the input into a reduced feature space via a 1 × 1 convolution. A Spatial-Channel Token Sampler (Figure 3b) is then employed to select informative tokens from both spatial and channel dimensions.

For spatial token sampling, a three-route strategy is adopted to enhance diversity and robustness: block-aware sampling ensures uniform spatial coverage, boundary-aware sampling emphasizes high-gradient regions (e.g., object contours) via gradient magnitude estimation, and region-aware sampling focuses on semantically salient areas. The sampled tokens are aggregated as:

$$T_{\mathrm{spatial}} = \mathcal{U}\left(T_{\mathrm{block}}, T_{\mathrm{boundary}}, T_{\mathrm{region}}\right),$$

where $\mathcal{U}(\cdot)$ denotes concatenation followed by duplicate removal.

In parallel, channel tokens are selected based on channel importance derived from globally pooled features and further aggregated to produce channel-wise modulation.

In practice, the numbers of spatial and channel tokens are fixed to $N_s$ = 196 and $N_c$ = 32, respectively. For spatial sampling, the proportions of block-aware, boundary-aware, and region-aware tokens are set to 0.3, 0.3, and 0.4, respectively.
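The three-route spatial sampling with the stated budget (196 tokens split 0.3 / 0.3 / 0.4) can be sketched as follows. This is a simplified illustration, not the module's actual implementation: evenly spaced flattened indices stand in for block-aware sampling, top-k selection over given score lists stands in for the boundary-aware and region-aware routes, and the rounding rule used to turn proportions into integer counts is an assumption. All function names are hypothetical.

```python
def split_budget(total, ratios):
    """Turn proportions into integer counts; the last route takes the remainder
    (assumed rounding rule, since 196 * 0.3 is not an integer)."""
    counts = [round(total * r) for r in ratios[:-1]]
    counts.append(total - sum(counts))
    return counts


def block_tokens(height, width, k):
    """Evenly spaced flattened indices as a stand-in for uniform coverage."""
    step = (height * width) / k
    return [int(i * step) for i in range(k)]


def top_k_indices(scores, k):
    """Indices of the k largest scores."""
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return order[:k]


def sample_spatial_tokens(height, width, gradient, saliency,
                          total=196, ratios=(0.3, 0.3, 0.4)):
    n_block, n_boundary, n_region = split_budget(total, ratios)
    picked = (block_tokens(height, width, n_block)
              + top_k_indices(gradient, n_boundary)   # high-gradient positions
              + top_k_indices(saliency, n_region))    # salient positions
    # Concatenation followed by duplicate removal (order preserved).
    return list(dict.fromkeys(picked))


# Synthetic scores on a hypothetical 32 x 32 feature map.
H, W = 32, 32
gradient = [(i * 37) % 101 for i in range(H * W)]
saliency = [(i * 53) % 97 for i in range(H * W)]
tokens = sample_spatial_tokens(H, W, gradient, saliency)
```

Note that duplicate removal can leave fewer than 196 indices when the routes overlap; how the module restores a fixed token count in that case is not specified in the text.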

Based on the sampled tokens, position-enhanced sparse attention is performed, in which the query, key, and value matrices $Q$, $K$, and $V$ are combined with two learnable positional embeddings.

The attended tokens are projected back to the spatial domain via cross-attention, producing a spatial global feature map $F_{\mathrm{spa}}$. In parallel, channel attention yields a channel-enhanced feature map $F_{\mathrm{cha}}$.

The spatial and channel global features are concatenated and projected to form the global branch output $F_{\mathrm{global}}$. Finally, the outputs of the local and global branches are adaptively fused, with a learnable balance factor controlling their relative contributions.

The DP-CTB effectively integrates fine-grained local details with long-range structural dependencies through a structure-aware sparse modeling strategy. Instead of performing dense global interactions, the proposed token sampling mechanism selectively focuses on informative spatial regions (e.g., boundaries and salient areas), thereby reducing redundant computations while preserving representative global context.

- (3) Multi-scale Auxiliary Supervision (MAS)

To enhance semantic consistency and facilitate optimization, a multi-scale auxiliary supervision module is applied to the multi-stage decoder features.

Specifically, the decoder generates three intermediate feature maps at different stages, $D_1$, $D_2$, and $D_3$. Here, $D_1$ originates from the deepest decoder stage and contains the richest semantic information, while $D_3$ comes from a shallower stage and preserves more local details.

For each feature map, a lightweight auxiliary head $\mathcal{H}^{\mathrm{aux}}_i$ is designed to generate a probability map at the corresponding scale:

$$P_i = \mathcal{H}^{\mathrm{aux}}_i(D_i), \quad i \in \{1, 2, 3\},$$

where $P_i$ denotes the predicted probability map at the $i$-th scale. Each $\mathcal{H}^{\mathrm{aux}}_i$ consists of a convolutional block with a 3 × 3 convolution, followed by batch normalization and ReLU activation, a dropout layer, and a 1 × 1 convolution for channel projection.

During training, these auxiliary predictions are up-sampled to the original input resolution to compute the loss and provide supervision. This design facilitates gradient propagation to shallow layers, accelerates model convergence, and enhances the semantic representation capability across multiple scales.

- (4) Loss Function

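In the training phase, the proposed network is supervised by a composite loss function that consists of three components: a principal loss $\mathcal{L}_{\mathrm{main}}$, an auxiliary loss $\mathcal{L}_{\mathrm{aux}}$, and an edge loss $\mathcal{L}_{\mathrm{edge}}$. The overall loss can be expressed as:

$$\mathcal{L} = \mathcal{L}_{\mathrm{main}} + \lambda_{\mathrm{aux}}\,\mathcal{L}_{\mathrm{aux}} + \lambda_{\mathrm{edge}}\,\mathcal{L}_{\mathrm{edge}},$$

where $\lambda_{\mathrm{aux}}$ and $\lambda_{\mathrm{edge}}$ are the weight coefficients of the auxiliary and edge losses, respectively. In our experiments, $\lambda_{\mathrm{aux}}$ is set to 0.4 and $\lambda_{\mathrm{edge}}$ is set to 0.2 by default.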

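The principal loss supervises the final prediction of building footprints and shadows. It is formulated as a combination of the soft Dice loss $\mathcal{L}_{\mathrm{dice}}$ and the cross-entropy loss $\mathcal{L}_{\mathrm{ce}}$:

$$\mathcal{L}_{\mathrm{main}} = \mathcal{L}_{\mathrm{dice}} + \mathcal{L}_{\mathrm{ce}},$$

where $\mathcal{L}_{\mathrm{dice}}$ denotes the soft Dice loss computed on predicted probabilities, and $\mathcal{L}_{\mathrm{ce}}$ denotes the pixel-wise cross-entropy loss.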
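A minimal numerical sketch of how the loss terms combine is given below. It makes several simplifying assumptions that are not stated in the text: a single binary class instead of the footprint and shadow classes, flat lists of per-pixel probabilities, an unweighted sum of the Dice and cross-entropy terms, and the smoothing constants `eps`. Only the weights 0.4 and 0.2 for the auxiliary and edge losses come from the text; all function names are hypothetical.

```python
import math


def soft_dice_loss(probs, targets, eps=1e-6):
    """Soft Dice loss on predicted probabilities (binary case)."""
    intersection = sum(p * t for p, t in zip(probs, targets))
    denominator = sum(probs) + sum(targets)
    return 1.0 - (2.0 * intersection + eps) / (denominator + eps)


def cross_entropy_loss(probs, targets, eps=1e-12):
    """Pixel-wise binary cross-entropy, averaged over pixels."""
    total = 0.0
    for p, t in zip(probs, targets):
        total += t * math.log(p + eps) + (1 - t) * math.log(1 - p + eps)
    return -total / len(probs)


def principal_loss(probs, targets):
    """Assumed unweighted sum of the Dice and cross-entropy terms."""
    return soft_dice_loss(probs, targets) + cross_entropy_loss(probs, targets)


def total_loss(l_main, l_aux, l_edge, w_aux=0.4, w_edge=0.2):
    """Composite loss with the auxiliary and edge weights reported in the text."""
    return l_main + w_aux * l_aux + w_edge * l_edge


# Hypothetical four-pixel example.
targets = [1, 1, 0, 0]
good = principal_loss([0.9, 0.8, 0.1, 0.2], targets)
bad = principal_loss([0.4, 0.3, 0.6, 0.7], targets)
combined = total_loss(1.0, 0.5, 0.25)
```

In this toy example the better prediction yields the smaller principal loss, and the auxiliary and edge terms contribute 0.4 and 0.2 of their raw values to the total.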

The auxiliary loss is designed to guide intermediate feature representations within the decoder. It is applied to the outputs of the auxiliary heads, which process features from multiple decoder stages to produce auxiliary predictions. Following the same formulation as the principal loss, each auxiliary prediction is supervised using a combination of the Dice loss and the cross-entropy loss:

$$\mathcal{L}_{\mathrm{aux}} = \sum_{i} w_i \left(\mathcal{L}^{(i)}_{\mathrm{dice}} + \mathcal{L}^{(i)}_{\mathrm{ce}}\right),$$

where $w_i$ denotes the weight assigned to the auxiliary prediction at stage $i$, and $\mathcal{L}^{(i)}_{\mathrm{dice}}$ and $\mathcal{L}^{(i)}_{\mathrm{ce}}$ denote the losses computed for the $i$-th auxiliary prediction. In our implementation, shallower features are given larger weights to emphasize fine-grained details.

During training, both edge predictions are supervised at the original image resolution. To address the severe class imbalance between boundary and non-boundary pixels, a dynamically weighted binary cross-entropy loss is adopted. In addition, a Dice loss is introduced to enforce structural consistency of the predicted edges. The final edge loss is defined as a weighted combination of the two scales:

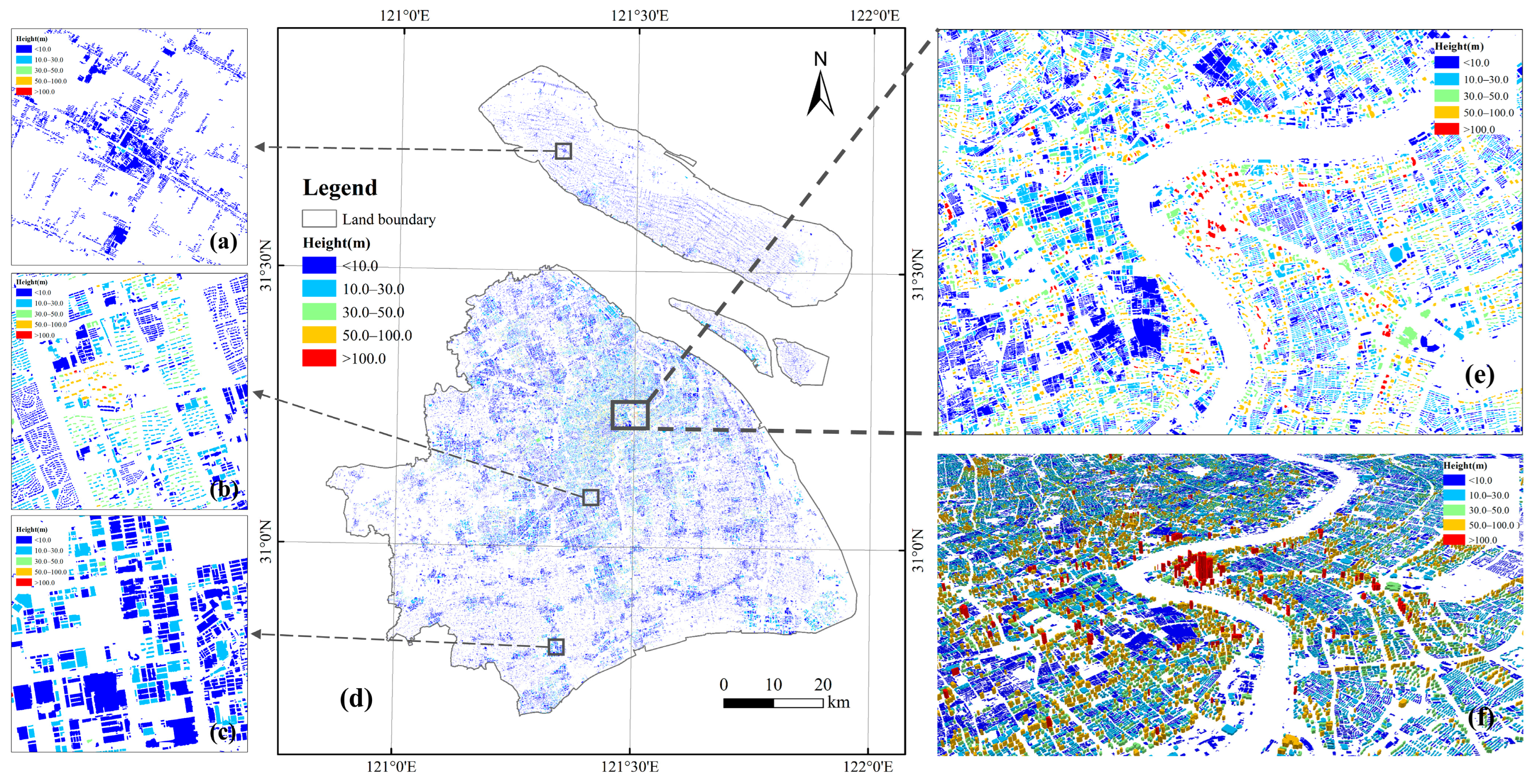

2.2.2. Building Height Estimation Based on Shadow Lengths

Shadow-based height estimation from single-view high-resolution imagery is widely used. The building height is calculated from the extracted shadow length projected onto the ground and several key imaging-geometry parameters, such as the solar elevation angle, solar azimuth, sensor elevation angle, and sensor azimuth (Figure 4).

For the case where the sun and the sensor are on opposite sides of the building, the sensor can capture the complete ground shadow BC of the building (Figure 4a). The building height H is calculated from the length of BC and the solar elevation angle β by:

$$H = \overline{BC}\,\tan\beta$$
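For the fully visible shadow case, the relation between ground shadow length and solar elevation can be checked numerically. The sketch below assumes flat ground and a shadow length already converted to metres; the function name and the 20 m shadow length are hypothetical, and the 40.8° elevation is used only as a sample value.

```python
import math


def height_from_full_shadow(shadow_length_m, solar_elevation_deg):
    """Height of a vertical wall whose complete ground shadow is visible,
    assuming flat terrain: H = shadow length * tan(solar elevation)."""
    return shadow_length_m * math.tan(math.radians(solar_elevation_deg))


# Hypothetical 20 m shadow under a solar elevation of 40.8 degrees.
h = height_from_full_shadow(20.0, 40.8)
print(f"estimated height: {h:.2f} m")
```

At a solar elevation of 45° the height equals the shadow length, which is a convenient sanity check for the units and the angle convention.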

When the sun and sensor are on the same side of the building, part of the shadow (segment BE in Figure 4b) may be occluded by the building itself and thus cannot be fully observed. In this case, the shadow length measured in the image corresponds to the projection along the sensor’s line of sight (EC) and is derived from the detected building rooftop area and the shadow region (Figure 4b). Building height H is then calculated using the following geometric correction formula [49]:

where α denotes the sensor elevation angle, β the solar elevation angle, θ the sensor azimuth, and γ the solar azimuth. The measured shadow length in the image corresponds to the complete ground shadow BC in the opposite-side case (Figure 4a) and to the observable shadow segment EC when the ground shadow is partially occluded (Figure 4b).

The Jilin-1 images used for shadow-based height estimation were acquired on 16 February 2024. The solar elevation and azimuth angles at acquisition time were 40.8° and 149.2°, respectively. The satellite’s elevation and azimuth angles were 81.1° and 148.8°, respectively. Both the sun and the sensor were therefore positioned on the same side of the buildings. Shadow occlusion detection was first conducted for each extracted building using its footprint and the shadow geometry (Figure 5). Building footprints often fall within shadows cast by neighboring buildings. When a large proportion of the footprint area is covered by neighboring shadows, the building’s own shadow region becomes difficult to identify reliably, leading to unstable shadow-length measurements. In this study, an overlap ratio threshold of 70% was adopted to filter out severely occluded samples. Buildings exceeding this threshold were excluded from further analysis.
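The occlusion filter can be sketched as a simple ratio test. The example assumes footprints and neighboring shadows are available as sets of pixel coordinates and that the overlap ratio is the fraction of footprint pixels covered by neighboring shadows; both the representation and the function names are illustrative assumptions. Only the 70% threshold comes from the text.

```python
def shadow_overlap_ratio(footprint_pixels, neighbor_shadow_pixels):
    """Fraction of footprint pixels covered by shadows of neighboring buildings."""
    footprint = set(footprint_pixels)
    if not footprint:
        return 0.0
    covered = footprint & set(neighbor_shadow_pixels)
    return len(covered) / len(footprint)


def keep_building(footprint_pixels, neighbor_shadow_pixels, threshold=0.70):
    """Keep a building unless its overlap ratio exceeds the threshold."""
    ratio = shadow_overlap_ratio(footprint_pixels, neighbor_shadow_pixels)
    return ratio <= threshold


# Hypothetical footprint of 10 pixels in a single image row.
footprint = [(0, c) for c in range(10)]
light_shadow = [(0, c) for c in range(5)]   # covers 50% of the footprint
heavy_shadow = [(0, c) for c in range(8)]   # covers 80% of the footprint

kept = keep_building(footprint, light_shadow)
dropped = not keep_building(footprint, heavy_shadow)
```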

According to the solar azimuth angle, projection sampling points were uniformly generated along the building boundary (N = 50). Only points located on the shadow-facing side were retained, resulting in approximately 20–30 valid sampling points per building. For each valid sampling point, pixel-level shadow tracing was performed along the solar illumination direction until a non-shadow pixel was encountered. As a result, multiple candidate shadow-length samples were generated for each building. To reduce the effects of roof self-occlusion and overshadowing by nearby buildings, all candidate shadow-length samples were subjected to an outlier filtering procedure [50]. Specifically, the mean $\mu$ and standard deviation $\sigma$ were first computed for the set of shadow-length samples, and samples deviating from $\mu$ by more than a $\sigma$-based threshold were removed.

For each building, the effective shadow length was defined as the mean of all high-quality shadow-length samples that passed the quality control procedure. This representative shadow length was subsequently used to estimate the building height by Equation (15).
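The tracing and filtering steps can be sketched together as follows. Several details here are assumptions made for illustration: the image is north-up with rows increasing southward, tracing advances in one-pixel steps with nearest-pixel lookup, the population standard deviation is used, and the multiplier `k` of the standard-deviation threshold is a hypothetical parameter that must be supplied by the caller. Function names are likewise hypothetical.

```python
import math
from statistics import mean, pstdev


def trace_shadow_length(shadow_mask, start_row, start_col,
                        solar_azimuth_deg, pixel_size=1.0):
    """Walk from a boundary point away from the sun until a non-shadow
    pixel (or the image border) is reached; return the traced length."""
    azimuth = math.radians(solar_azimuth_deg + 180.0)  # shadows point away from the sun
    d_col, d_row = math.sin(azimuth), -math.cos(azimuth)  # north-up image assumed
    rows, cols = len(shadow_mask), len(shadow_mask[0])
    steps = 0
    while True:
        r = int(round(start_row + d_row * (steps + 1)))
        c = int(round(start_col + d_col * (steps + 1)))
        inside = 0 <= r < rows and 0 <= c < cols
        if not inside or not shadow_mask[r][c]:
            return steps * pixel_size
        steps += 1


def effective_shadow_length(lengths, k):
    """Drop samples farther than k standard deviations from the mean,
    then average the remaining samples."""
    mu, sigma = mean(lengths), pstdev(lengths)
    kept = [x for x in lengths if abs(x - mu) <= k * sigma]
    return mean(kept)


# Sun due south (azimuth 180 degrees): the shadow extends northward (up the image).
mask = [
    [0, 0, 0, 0, 0],
    [0, 0, 1, 0, 0],
    [0, 0, 1, 0, 0],
    [0, 0, 1, 0, 0],
    [0, 0, 1, 0, 0],
    [0, 0, 0, 0, 0],  # row 5 holds the building boundary point
]
traced = trace_shadow_length(mask, 5, 2, 180.0)

# Hypothetical samples with one overshadowed outlier, filtered with k = 2.
samples = [10.0, 10.5, 9.8, 10.2, 10.1, 30.0]
representative = effective_shadow_length(samples, k=2.0)
```

With these synthetic samples the 30.0 value is rejected at k = 2 but would be retained at k = 3, which shows how strongly the chosen multiplier affects the representative length when only a few tens of samples are available per building.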