LargeStitch: Efficient Seamless Stitching of Large-Size Aerial Images via Deep Matching and Seam-Band Fusion

Highlights

- Superior Alignment Robustness: The framework demonstrates high precision in challenging scenarios involving significant rotation, size variation, low overlap, and textureless regions.

- Optimized Computational Efficiency: Significant speedup is achieved via a pre-stitching filtering strategy and a seam-band fusion approach that avoids the heavy overhead of traditional seam-driven optimization.

- Seamless Visual Reconstruction: The method transitions from pixel-level blending to feature-level content coordination, ensuring ghosting-free, seamless panoramas for large-size imagery.

- Practical Solution for Large-Size Monitoring: The proposed method offers a high-speed stitching solution for applications such as environmental monitoring, enabling rapid acquisition of detailed, comprehensive spatial information for target areas.

Abstract

1. Introduction

- We propose a novel robust stitching framework. To the best of our knowledge, it is the first method integrating deep learning for efficient, seamless stitching of large-size images.

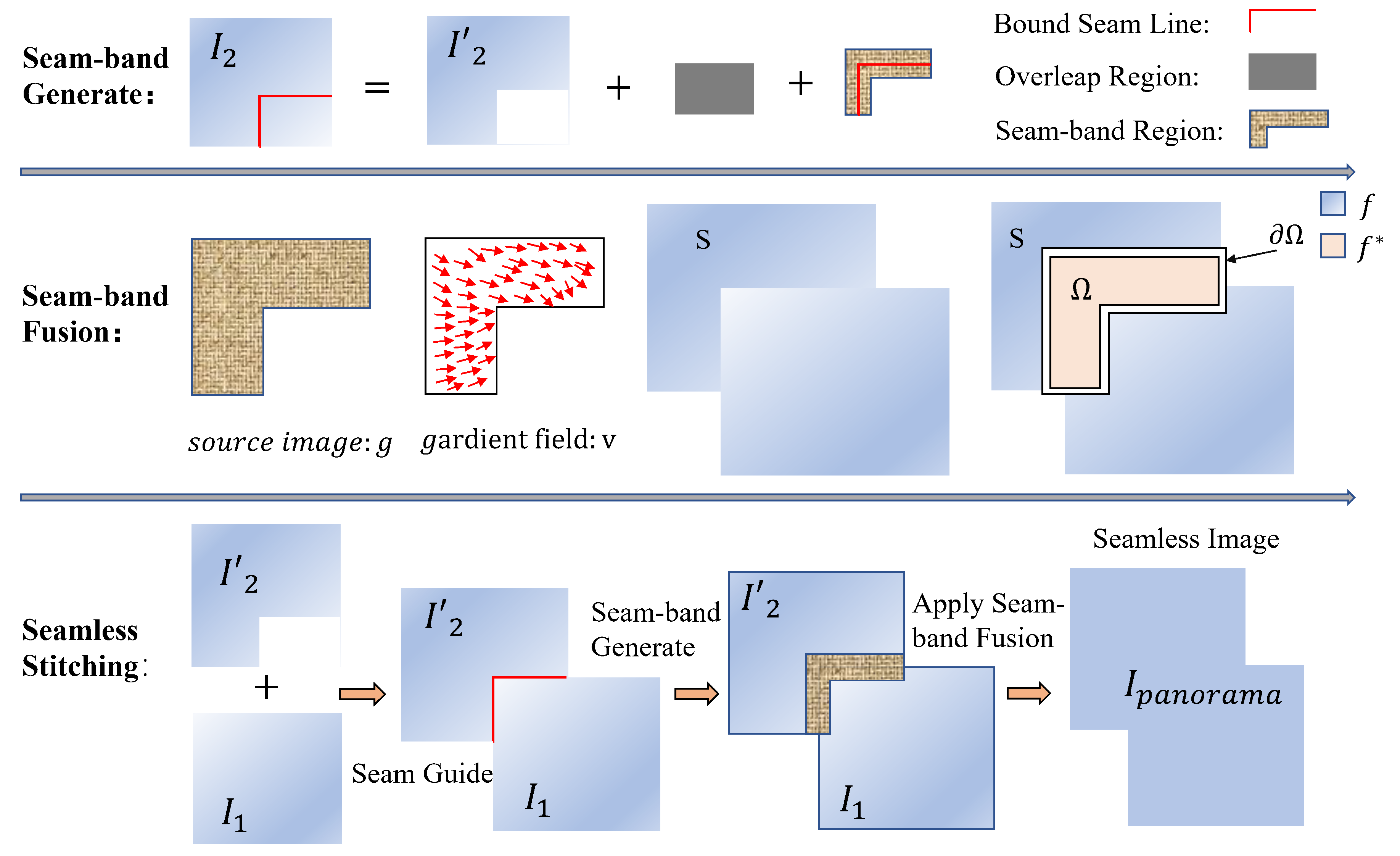

- We propose a novel seam-band fusion method that recasts the traditional pixel-level composition problem as an image content harmonization task, effectively reducing misalignment and artifacts.

- We design a mask-based image filtering strategy to reduce redundant images, optimize computational resources, and minimize cumulative errors during stitching.

- Extensive experiments demonstrate that the proposed LargeStitch method outperforms several state-of-the-art stitching techniques in both qualitative analysis and quantitative metrics.

2. Related Work

2.1. Traditional Feature-Based Image Stitching Methods

2.2. Deep Learning-Based Image Stitching Methods

2.3. Georeferencing-Based Image Stitching Methods

3. Materials and Methods

3.1. Deep Feature Matching

3.2. Graph-Cut RANSAC for Robust Outlier Removal

3.3. Image Alignment

3.4. Image Harmonization Based on Seam-Band
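As a rough illustration of the seam-band idea, the sketch below marks the pixels that lie within a fixed distance of the seam, so that any harmonization step can be restricted to that band instead of the whole overlap. It assumes the seam is available as a per-pixel label map (0 for one image, 1 for the other); the function name `seam_band`, the `half_width` parameter, and the label-map input are hypothetical, and the harmonization itself is not shown.

```python
import numpy as np

def seam_band(labels, half_width=2):
    """Boolean mask of pixels within `half_width` (Chebyshev distance) of the seam.

    labels: 2-D integer array saying which source image owns each pixel
    (an assumed input format, not taken from the paper).
    """
    seam = np.zeros(labels.shape, dtype=bool)
    # Mark both pixels on either side of every horizontal or vertical label change.
    diff_h = labels[:, :-1] != labels[:, 1:]
    diff_v = labels[:-1, :] != labels[1:, :]
    seam[:, :-1] |= diff_h
    seam[:, 1:] |= diff_h
    seam[:-1, :] |= diff_v
    seam[1:, :] |= diff_v

    # Grow the seam by one pixel per iteration using an 8-neighbour dilation.
    band = seam.copy()
    rows, cols = band.shape
    for _ in range(half_width):
        padded = np.pad(band, 1, mode="constant", constant_values=False)
        grown = np.zeros_like(band)
        for dy in (0, 1, 2):
            for dx in (0, 1, 2):
                grown |= padded[dy:dy + rows, dx:dx + cols]
        band = grown
    return band

# Toy label map: left half belongs to image 0, right half to image 1.
demo_labels = np.zeros((5, 10), dtype=int)
demo_labels[:, 5:] = 1
demo_band = seam_band(demo_labels, half_width=2)
```

Restricting later processing to `demo_band` keeps the cost proportional to the seam length rather than to the full overlap area, which is the general motivation for working on a band.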

3.5. Mask-Based Pre-Stitching Image Filtering Strategy
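One way a mask-based filter could discard redundant images is sketched below: each image is represented by a boolean footprint on a common canvas, and an image is kept only if it adds enough area that is not yet covered. The greedy order, the `min_new_fraction` threshold, and the function name `filter_by_coverage` are assumptions made for illustration; the paper's actual masks and selection rule may differ.

```python
import numpy as np

def filter_by_coverage(footprints, min_new_fraction=0.2):
    """Greedily keep images whose footprint adds enough uncovered canvas area.

    footprints: list of boolean arrays of identical shape, True where the
    image would land on the canvas (hypothetical input format).
    Returns the indices of the images that are kept.
    """
    covered = np.zeros_like(footprints[0], dtype=bool)
    kept = []
    for idx, mask in enumerate(footprints):
        area = int(mask.sum())
        if area == 0:
            continue  # Nothing lands on the canvas; skip this image.
        new_pixels = int(np.logical_and(mask, ~covered).sum())
        if new_pixels / area >= min_new_fraction:
            kept.append(idx)
            covered |= mask
    return kept

def strip(start, stop, shape=(4, 12)):
    """Helper for the demo: a footprint covering columns start..stop-1."""
    mask = np.zeros(shape, dtype=bool)
    mask[:, start:stop] = True
    return mask

# The second footprint overlaps the first almost entirely and is dropped.
demo_kept = filter_by_coverage([strip(0, 6), strip(1, 7), strip(4, 10)])
```

Dropping near-duplicate views before alignment reduces the number of pairwise registrations, which is where both the time saving and the reduction in accumulated error would come from.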

3.6. Algorithm

Algorithm 1. LargeStitch: deep learning-based aerial image stitching framework

4. Results

4.1. Dataset and Implementation Details

4.2. Parameter Sensitivity Analysis

4.2.1. PSNR (Peak Signal-to-Noise Ratio)
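PSNR is conventionally defined as 10·log10(MAX² / MSE), where MSE is the mean squared error between two images and MAX is the largest possible pixel value. The sketch below implements that standard definition; the function name `psnr` and the 8-bit default `max_value=255` are assumptions, and how the paper selects the regions being compared is not specified here.

```python
import numpy as np

def psnr(reference, test, max_value=255.0):
    """Peak signal-to-noise ratio in dB between two equally sized images."""
    reference = np.asarray(reference, dtype=np.float64)
    test = np.asarray(test, dtype=np.float64)
    mse = np.mean((reference - test) ** 2)
    if mse == 0:
        return float("inf")  # Identical images: PSNR is unbounded.
    return float(10.0 * np.log10(max_value ** 2 / mse))

# Toy example: every pixel differs by exactly one grey level, so MSE = 1.
demo_ref = np.zeros((4, 4), dtype=np.uint8)
demo_test = demo_ref + 1
demo_psnr = psnr(demo_ref, demo_test)
```

Higher values indicate a smaller pixel-wise error, which is why the metric is marked with an upward arrow in the results tables.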

4.2.2. SSIM (Structural Similarity Index)

4.2.3. LPIPS (Learned Perceptual Image Patch Similarity)

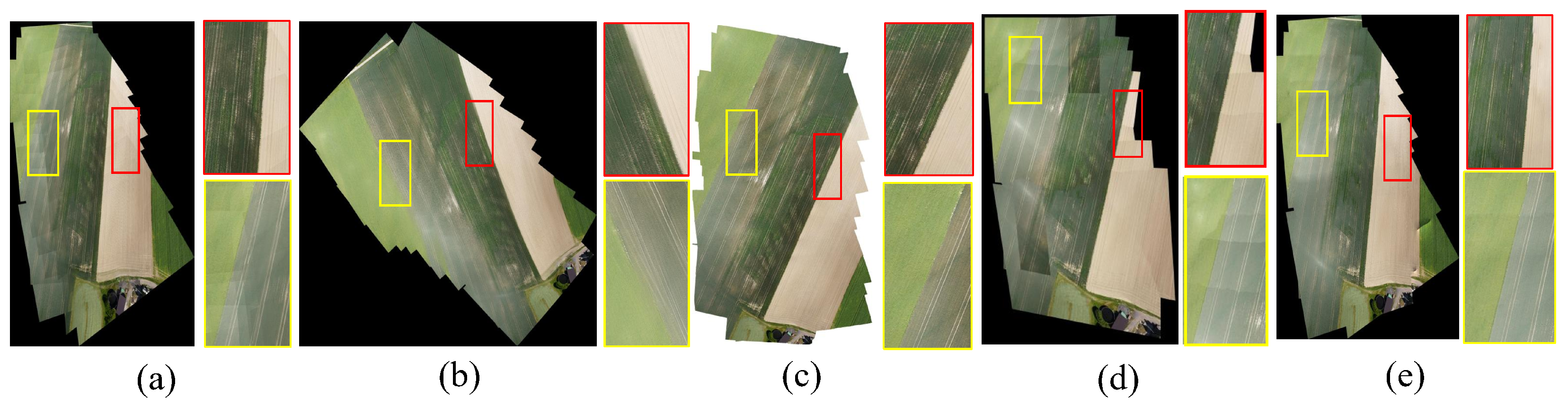

4.3. Subjective Visual Quality Qualitative Comparison

4.3.1. Results of Multi-Image Panoramic Stitching

4.3.2. Running Time

4.3.3. Robustness

4.4. Objective Quantitative Evaluation Metric Comparison

4.5. Ablation Experiments

4.5.1. Effectiveness of Deep Feature Matching Algorithm

4.5.2. Seam-Band Fusion of Image Harmonization

4.5.3. Mask-Based Pre-Stitching Strategy

5. Discussion

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

1. Trinh, H.L.; Kieu, H.T.; Pak, H.Y.; Pang, D.S.C.; Cokro, A.A.; Law, A.W.-K. A framework for survey planning using portable Unmanned Aerial Vehicles (pUAVs) in coastal hydro-environment. Remote Sens. 2022, 14, 2283.

2. Xu, Q.; Chen, J.; Luo, L.; Gong, W.; Wang, Y. UAV image stitching based on mesh-guided deformation and ground constraint. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2021, 14, 4465–4475.

3. Cai, W.; Du, S.; Yang, W. UAV image stitching by estimating orthograph with RGB cameras. J. Vis. Commun. Image Represent. 2023, 94, 103835.

4. Alphen, R.V.; Rodgers, M.; Malservisi, R.; Wang, P.; Cheng, J.; Valleé, M. Application of UAV Structure-From-Motion Photogrammetry to a Nourished Beach for Assessment of Storm Surge Impacts, Pinellas County, Florida. IEEE Trans. Geosci. Remote Sens. 2024, 62, 4409812.

5. Wei, C.; Xia, H.; Qiao, Y. Fast unmanned aerial vehicle image matching combining geometric information and feature similarity. IEEE Geosci. Remote Sens. Lett. 2020, 18, 1731–1735.

6. Gomez, C.; Purdie, H. UAV-based photogrammetry and geocomputing for hazards and disaster risk monitoring—A review. Geoenviron. Disasters 2016, 3, 23.

7. Dolcetti, G.; Krynkin, A.; Alkmim, M.; Cuenca, J.; Ryck, L.D.; Sailor, G.; Muraro, F.; Tait, S.J.; Horoshenkov, K.V. Reconstruction of the frequency-wavenumber spectrum of water waves with an airborne acoustic Doppler array for non-contact river monitoring. IEEE Trans. Geosci. Remote Sens. 2024, 62, 4202214.

8. Acharya, B.; Barber, M.E. Post-Fire Streamflow Prediction: Remote Sensing Insights from Landsat and an Unmanned Aerial Vehicle. Remote Sens. 2025, 17, 3690.

9. Cirillo, D.; Tangari, A.C.; Scarciglia, F.; Lavecchia, G.; Brozzetti, F. UAV-PPK photogrammetry, GIS, and soil analysis to estimate long-term slip rates on active faults in a seismic gap of Northern Calabria (Southern Italy). Remote Sens. 2025, 17, 3366.

10. Sishodia, R.P.; Ray, R.L.; Singh, S.K. Applications of remote sensing in precision agriculture: A review. Remote Sens. 2020, 12, 3136.

11. Ruiz, J.; Caballero, F.; Merino, L. MGRAPH: A multigraph homography method to generate incremental mosaics in real-time from UAV swarms. IEEE Robot. Autom. Lett. 2018, 3, 2838–2845.

12. Luo, X.; Zhao, H.; Liu, Y.; Liu, N.; Chen, J.; Yang, H. A High-Precision Virtual Central Projection Image Generation Method for an Aerial Dual-Camera. Remote Sens. 2025, 17, 683.

13. Chen, J.; Wan, Q.; Luo, L.; Wang, Y.; Luo, D. Drone image stitching based on compactly supported radial basis function. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2019, 12, 4634–4643.

14. Zhou, H.; Yu, W.; Zhang, J.; Liu, X. Seamless stitching of large area UAV images using modified camera matrix. In Proceedings of the 2016 IEEE International Conference on Real-time Computing and Robotics (RCAR), Angkor Wat, Cambodia, 6–10 June 2016; IEEE: New York, NY, USA, 2016; pp. 561–566.

15. Liu, J.; Wang, L.; Chen, X.; Zhao, M. A novel adjustment model for mosaicking low-overlap sweeping images. IEEE Trans. Geosci. Remote Sens. 2017, 55, 4089–4097.

16. Ren, M.; Li, J.; Song, L.; Li, H.; Xu, T. MLP-based efficient stitching method for UAV images. IEEE Geosci. Remote Sens. Lett. 2022, 19, 2503305.

17. Mehrdad, S.; Khosravi, M.; Mohammadi, A.; Karami, E. Toward real time UAVs’ image mosaicking. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2016, 41, 941–946.

18. Zhang, F.; Xu, Q.; Wu, H.; Li, G. Image-only real-time incremental UAV image mosaic for multi-strip flight. IEEE Trans. Multimed. 2020, 23, 1410–1425.

19. Peng, Z.; Zhang, X.; Liu, H.; Wang, Y. Seamless UAV hyperspectral image stitching using optimal seamline detection via graph cuts. IEEE Trans. Geosci. Remote Sens. 2023, 61, 1–13.

20. Zaragoza, J.; Chin, T.-J.; Tran, Q.-H.; Brown, M.; Suter, D. As-projective-as-possible image stitching with moving DLT. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Portland, OR, USA, 23–28 June 2013; IEEE: New York, NY, USA, 2013; pp. 2339–2346.

21. Chang, C.; Sato, Y.; Chuang, Y. Shape-preserving half-projective warps for image stitching. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Columbus, OH, USA, 23–28 June 2014; IEEE: New York, NY, USA, 2014; pp. 3254–3261.

22. Li, R.; Gao, P.; Cai, X.; Chen, X.; Wei, J.; Cheng, Y.; Zhao, H. A real-time incremental video mosaic framework for UAV remote sensing. Remote Sens. 2023, 15, 2127.

23. Wei, Z.; Lan, C.; Xu, Q.; Wang, L.; Gao, T.; Yao, F.; Hou, H. SatellStitch: Satellite imagery-assisted UAV image seamless stitching for emergency response without GCP and GNSS. Remote Sens. 2024, 16, 309.

24. Chen, J.; Luo, Y.; Wang, J.; Tang, H.; Tang, Y.; Li, J. Elimination of irregular boundaries and seams for UAV image stitching with a diffusion model. Remote Sens. 2024, 16, 1483.

25. Xiang, T.; Liu, Q.; Zhang, D.; Chen, J. Image stitching by line-guided local warping with global similarity constraint. Pattern Recognit. 2018, 83, 481–497.

26. Xu, Y.; Zhang, L.; Li, P.; Huang, B. Mosaicking of unmanned aerial vehicle imagery in the absence of camera poses. Remote Sens. 2016, 8, 204.

27. Botterill, T.; Mills, S.; Green, R. Real-time aerial image mosaicing. In Proceedings of the 2010 25th International Conference of Image and Vision Computing New Zealand (IVCNZ), Queenstown, New Zealand, 8–10 November 2010; IEEE: New York, NY, USA, 2010; pp. 1–8.

28. Kekec, T.; Yildirim, A.; Unel, M. A new approach to real-time mosaicing of aerial images. Robot. Auton. Syst. 2014, 62, 1755–1767.

29. Zhao, Y.; Li, F.; Chen, X.; Wu, L. RTSfM: Real-time structure from motion for mosaicing and DSM mapping of sequential aerial images with low overlap. IEEE Trans. Geosci. Remote Sens. 2021, 60, 1–15.

30. Mo, Y.; Zhang, H.; Wang, L.; Chen, J. A robust UAV hyperspectral image stitching method based on deep feature matching. IEEE Trans. Geosci. Remote Sens. 2021, 60, 1–14.

31. Li, W. SuperGlue-Based Deep Learning Method for Image Matching from Multiple Viewpoints. In Proceedings of the 2023 8th International Conference on Mathematics and Artificial Intelligence, Tokyo, Japan, 20–23 March 2023; Association for Computing Machinery: New York, NY, USA, 2023; pp. 53–58.

32. Yuan, X.; Liu, Y.; Zhang, Q.; Sun, P. Large aerial image tie point matching in real and difficult survey areas via deep learning method. Remote Sens. 2022, 14, 3907.

33. Pan, W.; Li, A.; Liu, X.; Deng, Z. Unmanned aerial vehicle image stitching based on multi-region segmentation. IET Image Process. 2024, 18, 4607–4622.

34. Lowe, D.G. Distinctive image features from scale-invariant keypoints. Int. J. Comput. Vis. 2004, 60, 91–110.

35. Bay, H.; Ess, A.; Tuytelaars, T.; Van Gool, L. Speeded-up robust features (SURF). Comput. Vis. Image Underst. 2008, 110, 346–359.

36. Rublee, E.; Rabaud, V.; Konolige, K.; Bradski, G. ORB: An efficient alternative to SIFT or SURF. In Proceedings of the 2011 International Conference on Computer Vision (ICCV), Barcelona, Spain, 6–13 November 2011; IEEE: New York, NY, USA, 2011; pp. 2564–2571.

37. Nie, L.; Zhang, F.; Xu, H.; Yang, Y. Unsupervised deep image stitching: Reconstructing stitched features to images. IEEE Trans. Image Process. 2021, 30, 6184–6197.

38. Sun, Y.; Zhang, Z.; Liu, H.; Li, F. Image stitching method of aerial image based on feature matching and iterative optimization. In Proceedings of the 2021 40th Chinese Control Conference (CCC), Shanghai, China, 26–28 July 2021; IEEE: New York, NY, USA, 2021; pp. 3024–3029.

39. Brown, M.; Lowe, D. Automatic panoramic image stitching using invariant features. Int. J. Comput. Vis. 2007, 74, 59–73.

40. Garcia-Fidalgo, E.; Ortiz, A.; Ponsa, D.; Andrade-Cetto, J. Fast image mosaicking using incremental bags of binary words. In Methods for Appearance-Based Loop Closure Detection: Applications to Topological Mapping and Image Mosaicking; Springer: Berlin, Germany, 2018; pp. 141–156.

41. Liu, B.; Zhang, J.; Li, Z. An improved APAP algorithm via line segment correction for UAV multispectral image stitching. In Proceedings of the IGARSS 2022 IEEE International Geoscience and Remote Sensing Symposium, Kuala Lumpur, Malaysia, 17–22 July 2022; IEEE: New York, NY, USA, 2022; pp. 6057–6060.

42. He, L.; Zhao, Q.; Liu, S.; Wang, J. VSP-based warping for stitching many UAV images. IEEE Trans. Geosci. Remote Sens. 2023, 61, 5624717.

43. Gao, J.; Li, S.; Kim, S.; Brown, M. Seam-driven image stitching. In Proceedings of Eurographics (Short Papers), Girona, Spain, 6–10 May 2013; The Eurographics Association: Eindhoven, The Netherlands, 2013; pp. 45–48.

44. Zomet, A.; Levin, A.; Peleg, S.; Weiss, Y. Seamless image stitching by minimizing false edges. IEEE Trans. Image Process. 2006, 15, 969–977.

45. Li, L.; Zhang, W.; Liu, Y.; Wu, X. Optimal seamline detection for multiple image mosaicking via graph cuts. ISPRS J. Photogramm. Remote Sens. 2016, 113, 1–16.

46. Chen, Q.; Xu, W.; Zhang, H.; Li, Y. Automatic seamline network generation for urban orthophoto mosaicking with the use of a digital surface model. Remote Sens. 2014, 6, 12334–12359.

47. Pan, J.; Zhou, Q.; Wang, M. Seamline determination based on segmentation for urban image mosaicking. IEEE Geosci. Remote Sens. Lett. 2013, 11, 1335–1339.

48. Laaroussi, S.; Benjdira, B.; Koubaa, A.; Ammar, A. Dynamic mosaicking: Region-based method using edge detection for an optimal seamline. Multimed. Tools Appl. 2019, 78, 23225–23253.

49. Yu, L.; Chen, J.; Wang, T.; Huang, X. Towards the automatic selection of optimal seam line locations when merging optical remote-sensing images. Int. J. Remote Sens. 2012, 33, 1000–1014.

50. Szeliski, R.; Kang, S.B.; Uyttendaele, M. Image alignment and stitching: A tutorial. In Foundations and Trends in Computer Graphics and Vision; Now Publishers Inc.: Hanover, MA, USA, 2007; Volume 2, pp. 1–104.

51. Shi, Z.; Liu, R.; Tang, L.; Xu, X. An image mosaic method based on convolutional neural network semantic features extraction. J. Signal Process. Syst. 2020, 92, 435–444.

52. Nie, L.; Zhang, F.; Xu, H.; Yang, Y. A view-free image stitching network based on global homography. J. Vis. Commun. Image Represent. 2020, 73, 102950.

53. Nie, L.; Zhang, F.; Xu, H.; Yang, Y. Parallax-tolerant unsupervised deep image stitching. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Paris, France, 2–6 October 2023; IEEE: New York, NY, USA, 2023; pp. 7399–7408.

54. Lai, W.-S.; Gallo, O.; Gu, J.; Sun, D.; Yang, M.-H.; Kautz, J. Video stitching for linear camera arrays. arXiv 2019, arXiv:1907.13622.

55. Nie, L.; Zhang, F.; Xu, H.; Yang, Y. Eliminating warping shakes for unsupervised online video stitching. In Proceedings of the European Conference on Computer Vision (ECCV), Munich, Germany, 8–14 September 2025; Springer: Berlin/Heidelberg, Germany, 2025; pp. 390–407.

56. Rahaman, H.; Champion, E. To 3D or not 3D: Choosing a photogrammetry workflow for cultural heritage groups. Heritage 2019, 2, 1835–1851.

57. Santana, L.S.; Ferreira, R.; Almeida, J.; Gonçalves, G. Influence of flight altitude and control points in the georeferencing of images obtained by unmanned aerial vehicle. Eur. J. Remote Sens. 2021, 54, 59–71.

58. Agisoft LLC. Agisoft Metashape. Available online: https://www.agisoft.com/ (accessed on 16 February 2026).

59. Pix4D. The Datasets of Agriculture Field and Building. Available online: https://support.pix4d.com/hc/en-us/articles/360000235126 (accessed on 16 February 2026).

60. Chen, J.; Wang, Y.; Li, B.; Zhou, X. UAV image stitching based on optimal seam and half-projective warp. Remote Sens. 2022, 14, 1068.

61. Shen, X.; Cai, Z.; Yin, W.; Müller, M.; Li, Z.; Wang, K.; Chen, X.; Wang, C. Gim: Learning generalizable image matcher from internet videos. arXiv 2024, arXiv:2402.11095.

62. Sarlin, P.; DeTone, D.; Malisiewicz, T.; Rabinovich, A. SuperGlue: Learning feature matching with graph neural networks. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 13–19 June 2020; IEEE: New York, NY, USA, 2020; pp. 4938–4947.

63. Sun, J.; Shen, Z.; Wang, Y.; Bao, H. LoFTR: Detector-free local feature matching with transformers. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Nashville, TN, USA, 19–25 June 2021; IEEE: New York, NY, USA, 2021; pp. 8922–8931.

64. Hedborg, J.; Forssén, P.; Felsberg, M. Fast and accurate structure and motion estimation. In Advances in Visual Computing: 5th International Symposium (ISVC 2009), Las Vegas, NV, USA, 30 November–2 December 2009; Springer: Berlin/Heidelberg, Germany, 2009; pp. 211–222.

65. Fischler, M.A.; Bolles, R.C. Random sample consensus: A paradigm for model fitting with applications to image analysis and automated cartography. Commun. ACM 1981, 24, 381–395.

66. Barath, D.; Matas, J. Graph-cut RANSAC. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Salt Lake City, UT, USA, 18–23 June 2018; IEEE: New York, NY, USA, 2018; pp. 6733–6741.

67. Edstedt, J.; Kjellström, H.; Felsberg, M.; Danelljan, M. DKM: Dense kernelized feature matching for geometry estimation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Vancouver, BC, Canada, 18–22 June 2023; IEEE: New York, NY, USA, 2023; pp. 17765–17775.

68. Perez, P.; Gangnet, M.; Blake, A. Poisson image editing. In Proceedings of ACM SIGGRAPH 2003 Papers, San Diego, CA, USA, 27–31 July 2003; Association for Computing Machinery: New York, NY, USA, 2003; pp. 313–318.

69. Alcantarilla, P.F.; Solutions, T. Fast explicit diffusion for accelerated features in nonlinear scale spaces. IEEE Trans. Pattern Anal. Mach. Intell. 2011, 34, 1281–1298.

70. Xu, W.; Zhang, L.; Liu, H.; Wang, X. UAV-VisLoc: A large-scale dataset for UAV visual localization. arXiv 2024, arXiv:2405.11936.

71. Hossein-Nejad, Z.; Nasri, M. Natural image mosaicing based on redundant keypoint elimination method in SIFT algorithm and adaptive RANSAC method. Signal Data Process. 2021, 18, 147–162.

72. Huynh-Thu, Q.; Ghanbari, M. Scope of validity of PSNR in image/video quality assessment. Electron. Lett. 2008, 44, 800–801.

73. Wang, Z.; Bovik, A.C.; Sheikh, H.R.; Simoncelli, E.P. Image quality assessment: From error visibility to structural similarity. IEEE Trans. Image Process. 2004, 13, 600–612.

74. Zhang, R.; Isola, P.; Efros, A.A.; Shechtman, E.; Wang, O. The unreasonable effectiveness of deep features as a perceptual metric. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Salt Lake City, UT, USA, 18–23 June 2018; IEEE: New York, NY, USA, 2018; pp. 586–595.

75. Jia, Q.; Li, Z.; Fan, X.; Zhao, H.; Teng, S.; Ye, X.; Latecki, L.J. Leveraging Line-Point Consistence to Preserve Structures for Wide Parallax Image Stitching. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Nashville, TN, USA, 20–25 June 2021; IEEE: New York, NY, USA, 2021; pp. 12186–12195.

76. Liao, T.; Chen, J.; Xu, Y. Quality evaluation-based iterative seam estimation for image stitching. Signal Image Video Process. 2019, 13, 1199–1206.

77. Zarei, A.; Ghaffari, M.; Mahdianpari, M.; Homayouni, S. MegaStitch: Robust large-scale image stitching. IEEE Trans. Geosci. Remote Sens. 2022, 60, 4408309.

| Dataset Name | Resolution (pixels) | Number of Images | Flight Height | Location (Coordinates) | Source |
|---|---|---|---|---|---|
| Dataset_grass | 5472 × 3648 | 55 | 150 m | Qingdao, China (36°16′N, 120°16′E) | UAV-AIRPAI dataset [16] |
| Dataset_village | 3976 × 2652 | 52 | 460 m | Taizhou, China (32°18′N, 119°54′E) | UAV-VisLoc dataset [70] |
| Dataset_field | 6000 × 4000 | 25 | 650 m | Lausanne, Switzerland (46°38′N, 6°36′E) | Switzerland dataset [59] |
| Dataset_building | 5472 × 3648 | 26 | 230 m | Roseau, Dominica (15°17′N, 61°22′W) | Loubiere dataset [59] |

| Method | Dataset_Grass | Dataset_Village | Dataset_Field | Dataset_Building |
|---|---|---|---|---|
| Hossein’s [71] | 612.75 | 160.62 | 552.85 | 163.65 |
| Autostitch [39] | 988.00 | 592.00 | 651.00 | 496.00 |
| Metashape [58] | 472.00 | 343.00 | 332.00 | 321.00 |
| Peng’s [19] | 6954.00 | 9749.00 | 7429.00 | 2160.00 |
| Proposed | 212.92 | 259.67 | 97.46 | 142.12 |
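To make the comparison in the table above easier to read, the following sketch divides each method's value by the proposed method's value per dataset. It assumes the table reports running times (lower is better); the variable and function names are illustrative, and the numbers are copied from the table without any unit conversion.

```python
# Values copied from the table above; a lower value is assumed to be better.
DATASETS = ["Dataset_Grass", "Dataset_Village", "Dataset_Field", "Dataset_Building"]
BASELINES = {
    "Hossein's [71]": [612.75, 160.62, 552.85, 163.65],
    "Autostitch [39]": [988.00, 592.00, 651.00, 496.00],
    "Metashape [58]": [472.00, 343.00, 332.00, 321.00],
    "Peng's [19]": [6954.00, 9749.00, 7429.00, 2160.00],
}
PROPOSED = [212.92, 259.67, 97.46, 142.12]

def ratios_to_proposed(baselines, proposed):
    """Return {method: [baseline / proposed per dataset]}; > 1 means proposed is faster."""
    return {
        name: [b / p for b, p in zip(values, proposed)]
        for name, values in baselines.items()
    }

def datasets_where_proposed_is_fastest(baselines, proposed, datasets):
    """List the datasets on which every baseline value exceeds the proposed value."""
    fastest = []
    for i, dataset in enumerate(datasets):
        if all(values[i] > proposed[i] for values in baselines.values()):
            fastest.append(dataset)
    return fastest

if __name__ == "__main__":
    for method, ratios in ratios_to_proposed(BASELINES, PROPOSED).items():
        print(method, [round(r, 2) for r in ratios])
    print(datasets_where_proposed_is_fastest(BASELINES, PROPOSED, DATASETS))
```

On these numbers the proposed method has the lowest value on three of the four datasets; on Dataset_Village the entry for Hossein's method (160.62) is lower than the proposed one (259.67), so that ratio falls below 1.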

| Metrics | Methods | Dataset_Grass | Dataset_Village | Dataset_Field | Dataset_Building |
|---|---|---|---|---|---|
| PSNR (↑) | Hossein’s [71] | 29.68 | 33.56 | 29.91 | 29.38 |
| | UDIS++ [53] | 28.74 | 30.43 | 28.89 | Failed |
| | StableStitch2 [55] | 28.26 | 29.30 | 28.41 | 28.17 |
| | Autostitch [39] | 28.65 | 30.38 | 28.44 | 28.30 |
| | SPHP [21] | 29.17 | 31.26 | 29.02 | 28.73 |
| | MGRAPH [11] | 28.97 | 31.61 | 28.95 | Failed |
| | MegaStitch [77] | 29.26 | 31.23 | 28.91 | 28.66 |
| | Proposed | 29.81 | 34.38 | 30.03 | 29.46 |
| SSIM (↑) | Hossein’s [71] | 0.8031 | 0.9157 | 0.7776 | 0.8214 |
| | UDIS++ [53] | 0.7329 | 0.8645 | 0.7114 | Failed |
| | StableStitch2 [55] | 0.7049 | 0.8313 | 0.7048 | 0.7236 |
| | Autostitch [39] | 0.7258 | 0.8584 | 0.6811 | 0.7422 |
| | SPHP [21] | 0.7510 | 0.8682 | 0.7035 | 0.7812 |
| | MGRAPH [11] | 0.7418 | 0.8832 | 0.6919 | Failed |
| | MegaStitch [77] | 0.7587 | 0.8772 | 0.6889 | 0.7805 |
| | Proposed | 0.8146 | 0.9353 | 0.7894 | 0.8216 |
| LPIPS (↓) | Hossein’s [71] | 0.0834 | 0.0539 | 0.0624 | 0.1767 |
| | UDIS++ [53] | 0.3160 | 0.3932 | 0.3917 | Failed |
| | StableStitch2 [55] | 0.6675 | 0.5570 | 0.5809 | 0.6736 |
| | Autostitch [39] | 0.3535 | 0.3608 | 0.5432 | 0.5127 |
| | SPHP [21] | 0.1518 | 0.2485 | 0.2980 | 0.2793 |
| | MGRAPH [11] | 0.2086 | 0.2194 | 0.2939 | Failed |
| | MegaStitch [77] | 0.1183 | 0.2198 | 0.2970 | 0.2590 |
| | Proposed | 0.0701 | 0.0310 | 0.0566 | 0.1560 |

| Match Method | Homography Estimation Time (s) | RMSE (↓) |
|---|---|---|
| | 76.83 | 32.738 |
| SIFT | 546.48 | 87.85 |
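The RMSE column above is not defined in this excerpt. A common choice for evaluating an estimated homography is the root-mean-square reprojection error over matched points, and the sketch below computes that quantity under this assumption. The function name `reprojection_rmse` and the point format (N × 2 arrays of pixel coordinates) are hypothetical.

```python
import numpy as np

def reprojection_rmse(H, src_pts, dst_pts):
    """RMS distance between H-projected source points and their matched targets.

    H: 3x3 homography mapping source pixels to target pixels.
    src_pts, dst_pts: (N, 2) arrays of matched coordinates (assumed format).
    """
    H = np.asarray(H, dtype=np.float64)
    src = np.asarray(src_pts, dtype=np.float64)
    dst = np.asarray(dst_pts, dtype=np.float64)
    # Lift to homogeneous coordinates, project, then divide by the last component.
    homogeneous = np.hstack([src, np.ones((src.shape[0], 1))])
    projected = homogeneous @ H.T
    projected = projected[:, :2] / projected[:, 2:3]
    squared_dist = np.sum((projected - dst) ** 2, axis=1)
    return float(np.sqrt(np.mean(squared_dist)))

# Toy check: a pure translation by (3, 4) compared against unshifted targets
# leaves every point 5 pixels away from its match.
demo_H = np.array([[1.0, 0.0, 3.0], [0.0, 1.0, 4.0], [0.0, 0.0, 1.0]])
demo_pts = np.array([[0.0, 0.0], [1.0, 1.0], [10.0, 2.0]])
demo_rmse = reprojection_rmse(demo_H, demo_pts, demo_pts)
```

A lower value means the projected points land closer to their matches, which is consistent with the downward arrow in the column header.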

| Method | Seam-Band | PSNR (↑) | SSIM (↑) | LPIPS (↓) |
|---|---|---|---|---|
| Proposed | ✓ | 29.81 | 0.8146 | 0.0701 |
| Proposed | × | 29.71 | 0.8143 | 0.0714 |
| Baseline [71] | ✓ | 29.70 | 0.8088 | 0.0702 |
| Baseline [71] | × | 29.68 | 0.8031 | 0.0834 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Zhou, J.; Wei, Z.; Zhong, Y.; He, X. LargeStitch: Efficient Seamless Stitching of Large-Size Aerial Images via Deep Matching and Seam-Band Fusion. Remote Sens. 2026, 18, 1481. https://doi.org/10.3390/rs18101481
