ConFAS-Net: Few-Shot SAR Target Recognition via Confusion-Aware Attention and Adaptive Decision Scaling

Highlights

- We propose ConFAS-Net, which integrates three modules: multi-scale channel attention (MS-CA), a category-confusion-aware loss (CACL), and category-adaptive decision adjustment (CADA). Together they target the two core problems of few-shot SAR target recognition: insufficient feature utilisation and severe inter-class confusion.

- On the MSTAR dataset under 5/10/15/30-shot settings, the model achieves recognition accuracies of 73.25%, 87.43%, 94.97%, and 96.87%, respectively. The largest improvement over the compared methods is 2.93 percentage points (15-shot), obtained while keeping the parameter count low and balancing accuracy against computational cost.

- The three modules form a full-chain optimisation pipeline (feature enhancement, loss optimisation, decision adjustment) that provides a practical technical solution for few-shot target recognition tasks.

- The model’s lightweight design is tailored to the application requirements of resource-constrained scenarios, offering a viable approach for the engineering implementation of SAR target recognition under limited-sample conditions.

Abstract

1. Introduction

2. Materials and Methods

2.1. Overview of the Overall Structure of ConFAS-Net

2.2. MS-CA Module

2.3. CACL Module

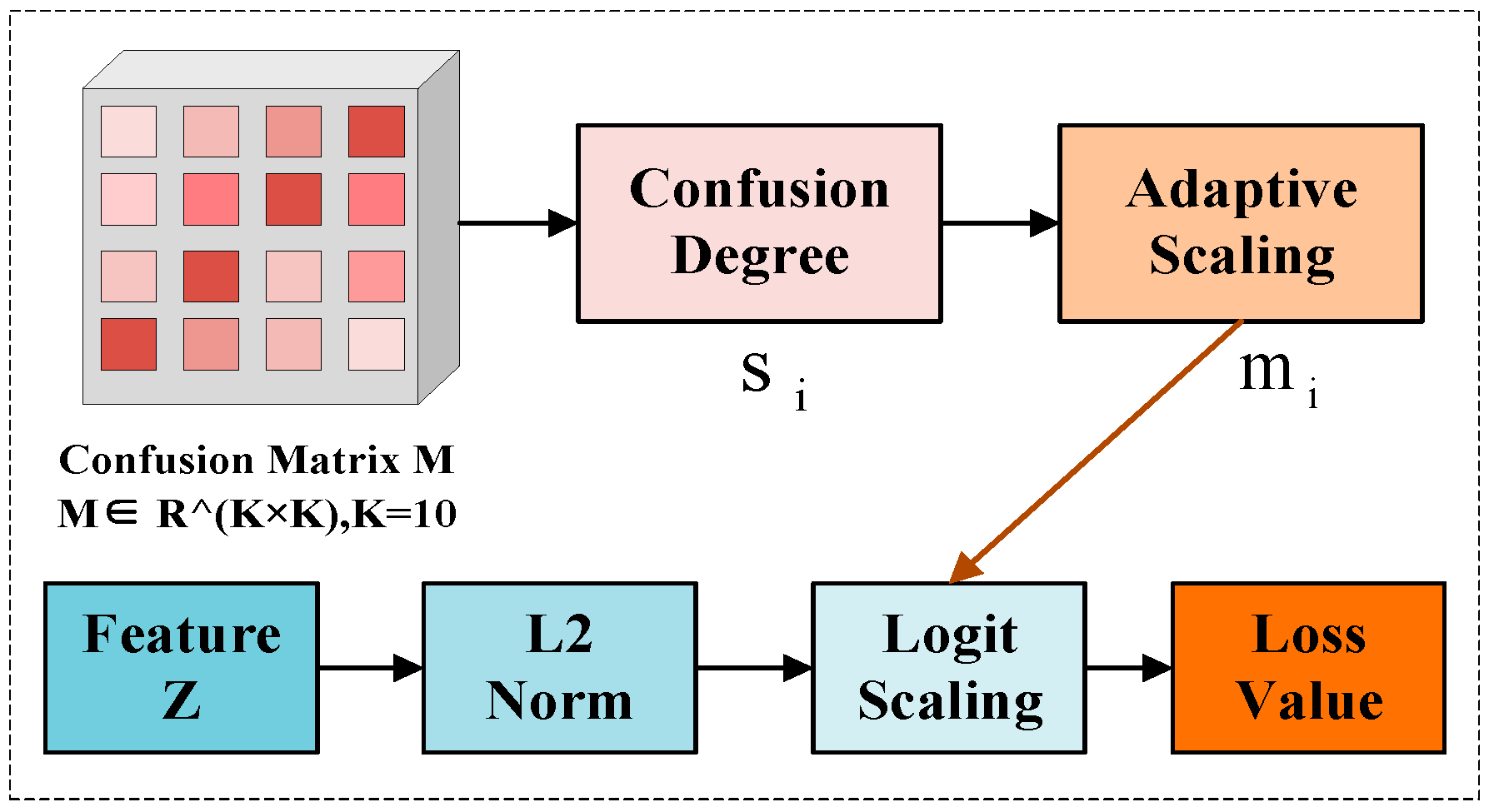

2.4. CADA Module

3. Results

3.1. Experimental Dataset

3.2. Experimental Setup

3.3. Comparative Experiment

3.3.1. Recognition Performance Under Different K-Shot Settings on MSTAR Dataset

3.3.2. Comparative Experiment on the MSTAR Dataset

3.3.3. Comparative Experiment on the SAMPLE Dataset

3.4. Ablation Experiment

3.4.1. A Comparison of Strategies for Updating the CACL Confusion Matrix

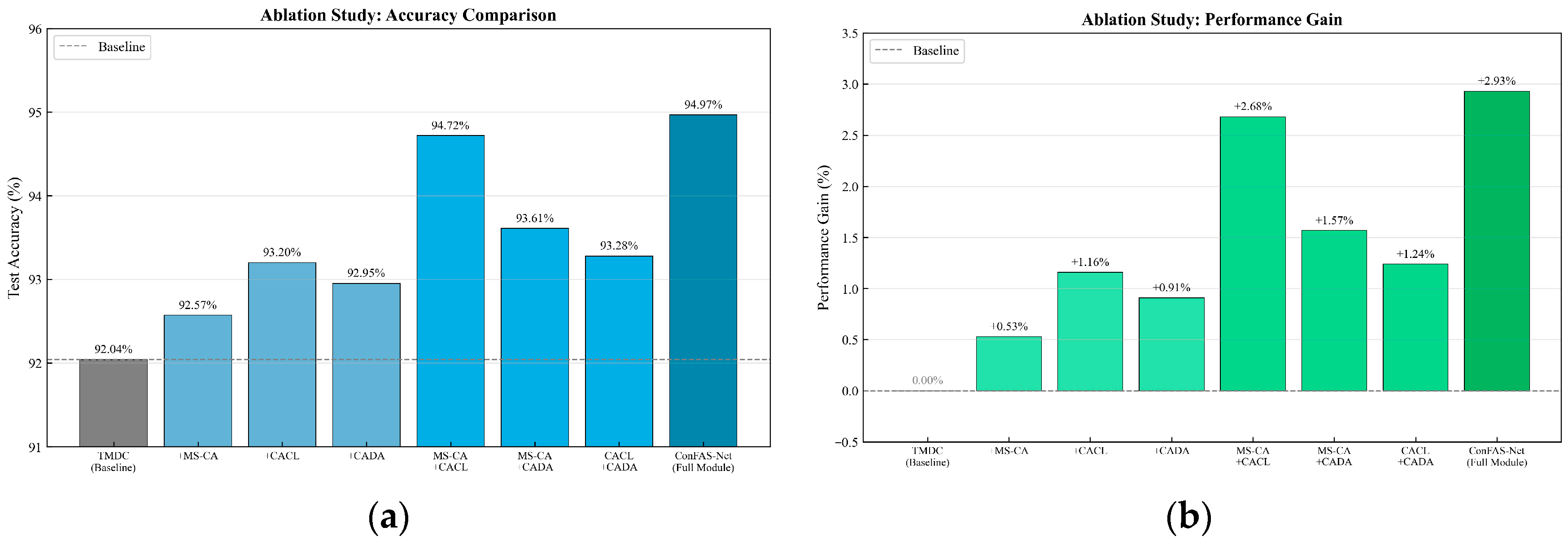

3.4.2. Complete Module Ablation Experiment

4. Discussion

4.1. Module Interoperability Analysis

4.2. Performance Analysis

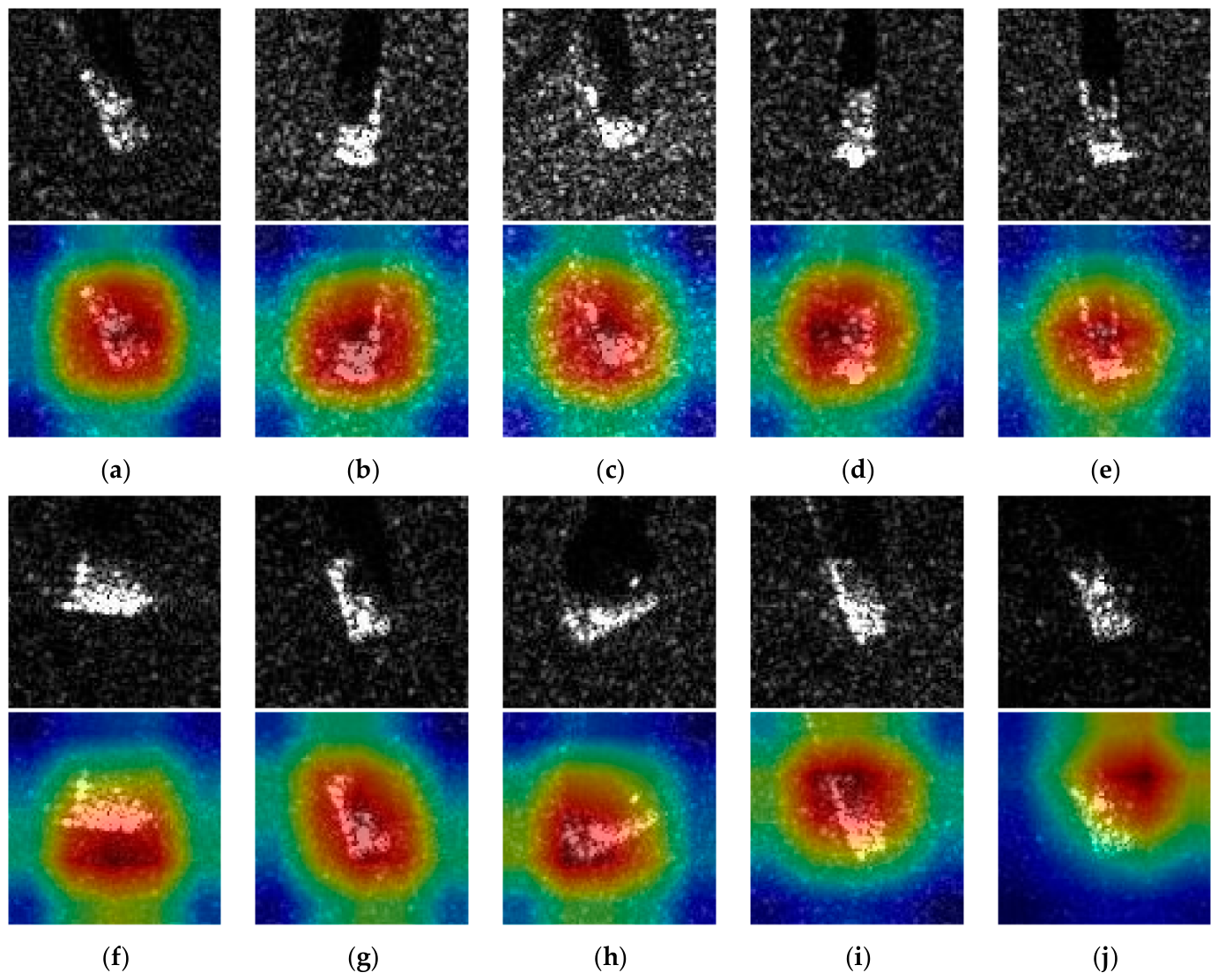

4.3. Visual Analysis

4.3.1. Comparative Analysis of Confusion Matrices

4.3.2. Analysis of the Evolution of T-SNE Feature Distributions

4.3.3. Class-Based Activation Heatmap Visualisation Analysis

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

1. Li, J.; Yu, Z.; Yu, L.; Cheng, P.; Chen, J.; Chi, C. A comprehensive survey on SAR ATR in deep-learning era. Remote Sens. 2023, 15, 1454.

2. Wen, Y.; Wang, X.; Peng, L.; Qiao, Y. A coarse-to-fine hierarchical feature learning for SAR automatic target recognition with limited data. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2024, 17, 13646–13656.

3. Moreira, A.; Prats-Iraola, P.; Younis, M.; Krieger, G.; Hajnsek, I.; Papathanassiou, K.P. A tutorial on synthetic aperture radar. IEEE Geosci. Remote Sens. Mag. 2013, 1, 6–43.

4. Deng, H.; Pi, D.; Zhao, Y. Ship target detection based on CFAR and deep learning SAR image. J. Coast. Res. 2019, 94, 161–164.

5. Liu, B.; He, K.; Han, M.; Hu, X.; Ma, G.; Wu, M. Application of UAV and GB-SAR in mechanism research and monitoring of Zhonghaicun landslide in southwest China. Remote Sens. 2021, 13, 1653.

6. Clemente, C.; Pallotta, L.; Gaglione, D.; De Maio, A.; Soraghan, J.J. Automatic target recognition of military vehicles with Krawtchouk moments. IEEE Trans. Aerosp. Electron. Syst. 2017, 53, 493–500.

7. Pei, J.; Huang, Y.; Huo, W.; Zhang, Y.; Yang, J.; Yeo, T.-S. SAR automatic target recognition based on multiview deep learning framework. IEEE Trans. Geosci. Remote Sens. 2018, 56, 2196–2210.

8. Wang, L.; Bai, X.; Xue, R.; Zhou, F. Few-shot SAR automatic target recognition based on Conv-BiLSTM prototypical network. Neurocomputing 2021, 443, 235–246.

9. Li, Y.; Chen, W.; Hu, X.; Chen, B.; Wang, D.; Qu, C.; Meng, F.; Wang, P.; Liu, H. AOT: Aggregation optimal transport for few-shot SAR automatic target recognition. IEEE Trans. Aerosp. Electron. Syst. 2025, 61, 5088–5103.

10. Li, W.; Yang, W.; Liu, T.; Hou, Y.; Li, Y.; Liu, Z.; Liu, Y.; Liu, L. Predicting gradient is better: Exploring self-supervised learning for SAR ATR with a joint-embedding predictive architecture. ISPRS J. Photogramm. Remote Sens. 2024, 218, 326–338.

11. Ding, B.; Wen, G.; Huang, X.; Ma, C.; Yang, X. Target recognition in synthetic aperture radar images via matching of attributed scattering centers. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2017, 10, 3334–3347.

12. Rui, J.; Wang, C.; Zhang, H.; Jin, F. Multi-sensor SAR image registration based on object shape. Remote Sens. 2016, 8, 923.

13. Liu, Z.; Wang, L.; Wen, Z.; Li, K.; Pan, Q. Multilevel scattering center and deep feature fusion learning framework for SAR target recognition. IEEE Trans. Geosci. Remote Sens. 2022, 60, 5227914.

14. Gao, F.; Huang, T.; Sun, J.; Wang, J.; Hussain, A.; Yang, E. A new algorithm for SAR image target recognition based on an improved deep convolutional neural network. Cogn. Comput. 2019, 11, 809–824.

15. Liu, J.; Xing, M.; Yu, H.; Sun, G. EFTL: Complex convolutional networks with electromagnetic feature transfer learning for SAR target recognition. IEEE Trans. Geosci. Remote Sens. 2022, 60, 5209811.

16. Liao, L.; Du, L.; Chen, J.; Cao, Z.; Zhou, K. EMI-Net: An end-to-end mechanism-driven interpretable network for SAR target recognition under EOCs. IEEE Trans. Geosci. Remote Sens. 2024, 62, 5205118.

17. Li, R.; Wang, X.; Wang, J.; Song, Y.; Lei, L. SAR target recognition based on efficient fully convolutional attention block CNN. IEEE Geosci. Remote Sens. Lett. 2022, 19, 4005905.

18. Shi, B.; Zhang, Q.; Wang, D.; Li, Y. Synthetic aperture radar SAR image target recognition algorithm based on attention mechanism. IEEE Access 2021, 9, 140512–140524.

19. Hu, J.; Shen, L.; Sun, G. Squeeze-and-excitation networks. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Salt Lake City, UT, USA, 18–22 June 2018; pp. 7132–7141.

20. Lin, H.; Xie, Z.; Zeng, L.; Yin, J. Multi-scale time-frequency representation fusion network for target recognition in SAR imagery. Remote Sens. 2025, 17, 2786.

21. Li, C.; Ni, J.; Luo, Y.; Wang, D.; Zhang, Q. A dual-branch spatial-frequency domain fusion method with cross attention for SAR image target recognition. Remote Sens. 2025, 17, 2378.

22. Wang, Q.; Wu, B.; Zhu, P.; Li, P.; Zuo, W.; Hu, Q. ECA-Net: Efficient channel attention for deep convolutional neural networks. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 13–19 June 2020; pp. 11531–11539.

23. Lin, T.-Y.; Goyal, P.; Girshick, R.; He, K.; Dollár, P. Focal loss for dense object detection. IEEE Trans. Pattern Anal. Mach. Intell. 2020, 42, 318–327.

24. Wen, Y.; Zhang, K.; Li, Z.; Qiao, Y. A discriminative feature learning approach for deep face recognition. In Proceedings of the European Conference on Computer Vision (ECCV), Amsterdam, The Netherlands, 11–14 October 2016; pp. 499–515.

25. Geng, J.; Ma, W.; Jiang, W. Causal intervention and parameter-free reasoning for few-shot SAR target recognition. IEEE Trans. Circuits Syst. Video Technol. 2024, 34, 12702–12714.

26. Zhou, X.; Tang, T.; He, Q.; Zhao, L.; Kuang, G.; Liu, L. Simulated SAR prior knowledge guided evidential deep learning for reliable few-shot SAR target recognition. ISPRS J. Photogramm. Remote Sens. 2024, 216, 1–14.

27. Wang, S.; Wang, Y.; Liu, H.; Sun, Y.; Zhang, C. A few-shot SAR target recognition method by unifying local classification with feature generation and calibration. IEEE Trans. Geosci. Remote Sens. 2024, 62, 5200319.

28. Aladwani, T.; Pantazi-Kypraiou, M.; Goudroumanis, G.R.; Floros, G.; Anagnostopoulos, C. A federated few-shot learning siamese network framework with data label imbalance. In Proceedings of the 2025 IEEE 45th International Conference on Distributed Computing Systems Workshops (ICDCSW), Glasgow, UK, 21–23 July 2025; pp. 56–62.

29. Snell, J.; Swersky, K.; Zemel, R. Prototypical networks for few-shot learning. In Proceedings of the 31st International Conference on Neural Information Processing Systems (NeurIPS), Long Beach, CA, USA, 4–9 December 2017.

30. Sung, F.; Yang, Y.; Zhang, L.; Xiang, T.; Torr, P.H.S.; Hospedales, T.M. Learning to compare: Relation network for few-shot learning. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Salt Lake City, UT, USA, 18–22 June 2018; pp. 1199–1208.

31. Finn, C.; Abbeel, P.; Levine, S. Model-agnostic meta-learning for fast adaptation of deep networks. arXiv 2017, arXiv:1703.03400.

32. Xu, J.; Liu, B.; Xiao, Y. A multitask latent feature augmentation method for few-shot learning. IEEE Trans. Neural Netw. Learn. Syst. 2024, 35, 6976–6990.

33. Dosovitskiy, A.; Beyer, L.; Kolesnikov, A.; Weissenborn, D.; Zhai, X.; Unterthiner, T.; Dehghani, M.; Minderer, M.; Heigold, G.; Gelly, S.; et al. An image is worth 16×16 words: Transformers for image recognition at scale. In Proceedings of the International Conference on Learning Representations (ICLR), Vienna, Austria, 3–7 May 2021.

34. Chen, T.; Kornblith, S.; Norouzi, M.; Hinton, G. A simple framework for contrastive learning of visual representations. In Proceedings of the 37th International Conference on Machine Learning (ICML), Virtual, 13–18 July 2020; pp. 1597–1607.

35. Woo, S.; Park, J.; Lee, J.-Y.; Kweon, I.S. CBAM: Convolutional block attention module. In Proceedings of the European Conference on Computer Vision (ECCV), Munich, Germany, 8–14 September 2018; pp. 3–19.

36. Liu, J.-J.; Hou, Q.; Cheng, M.-M.; Wang, C.; Feng, J. Improving convolutional networks with self-calibrated convolutions. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 13–19 June 2020; pp. 10093–10102.

37. Ross, T.; Worrell, S.; Velten, V.; Mossing, J.; Bryant, M. Standard SAR ATR evaluation experiments using the MSTAR public release data set. Proc. SPIE 1998, 3370, 566–573.

38. Keydel, E.R.; Lee, S.W.; Moore, J.T. MSTAR extended operating conditions. Proc. SPIE 1996, 2757, 228–242.

39. Lewis, B.; Scarnati, T.; Sudkamp, E.; Nehrbass, J.; Rosencrantz, S.; Zelnio, E. A SAR dataset for ATR development: The Synthetic and Measured Paired Labeled Experiment (SAMPLE). In Proceedings of the SPIE Defense + Commercial Sensing (SPIE DCS), Baltimore, MD, USA, 14–18 April 2019; p. 10987.

40. He, K.; Zhang, X.; Ren, S.; Sun, J. Deep residual learning for image recognition. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 27–30 June 2016; pp. 770–778.

41. Szegedy, C.; Ioffe, S.; Vanhoucke, V.; Alemi, A.A. Inception-v4, Inception-ResNet and the impact of residual connections on learning. In Proceedings of the AAAI Conference on Artificial Intelligence (AAAI), San Francisco, CA, USA, 4–9 February 2017; pp. 4278–4284.

42. Huang, G.; Liu, Z.; Van der Maaten, L.; Weinberger, K.Q. Densely connected convolutional networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017; pp. 2261–2269.

43. Guan, J.; Liu, J.; Feng, P.; Wang, W. Multiscale deep neural network with two-stage loss for SAR target recognition with small training set. IEEE Geosci. Remote Sens. Lett. 2022, 19, 4011405.

44. Wang, Q.; Xu, H.; Yuan, L.; Wen, X. Dense capsule network for SAR automatic target recognition with limited data. Remote Sens. Lett. 2022, 13, 533–543.

45. Zhang, C.; Cai, Y.; Lin, G.; Shen, C. DeepEMD: Differentiable earth mover’s distance for few-shot learning. IEEE Trans. Pattern Anal. Mach. Intell. 2023, 45, 5632–5648.

46. Zhang, C.; Dong, H.; Deng, B. Improving pre-training and fine-tuning for few-shot SAR automatic target recognition. Remote Sens. 2023, 15, 1709.

47. Zhang, L.; Leng, X.; Feng, S.; Ma, X.; Ji, K.; Kuang, G.; Liu, L. Optimal azimuth angle selection for limited SAR vehicle target recognition. Int. J. Appl. Earth Obs. Geoinf. 2024, 128, 103707.

| Class | Training Depression Angle | Training Samples | Test Depression Angle | Test Samples |
|---|---|---|---|---|
| 2S1 | 17° | 299 | 15° | 274 |
| BMP2 | 17° | 232 | 15° | 195 |
| BRDM2 | 17° | 298 | 15° | 274 |
| BTR60 | 17° | 256 | 15° | 195 |
| BTR70 | 17° | 233 | 15° | 196 |
| D7 | 17° | 299 | 15° | 274 |
| T62 | 17° | 299 | 15° | 273 |
| T72 | 17° | 232 | 15° | 196 |
| ZIL131 | 17° | 299 | 15° | 274 |
| ZSU23/4 | 17° | 299 | 15° | 274 |

| Module | Parameter | Symbol | Value |
|---|---|---|---|
| CACL | Label shift factor | τ | 0.3 |
| CACL | Base margin | m0 | 0.0 |
| CACL | Margin scaling factor | λm | 0.15 |
| CADA | Scaling strength | λs | 0.1 |
| Two-stage | CE loss weight | β | 0.05 |
| Two-stage | Stage transition ratio | α | 0.6 |
| MS-CA | Channel reduction ratio | r | 16 |

| K-Shot | Test Accuracy (%) | Samples per Class × Classes | Total Training Samples |
|---|---|---|---|
| 5-shot | 73.25 | 5 × 10 classes | 50 |
| 10-shot | 87.43 | 10 × 10 classes | 100 |
| 15-shot | 94.97 | 15 × 10 classes | 150 |
| 30-shot | 96.87 | 30 × 10 classes | 300 |
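The training-set sizes in this table follow directly from drawing K samples from each of the 10 classes. The sketch below illustrates that arithmetic with a generic per-class sampler; the function name `sample_k_shot`, the fixed seed, and the placeholder label list are assumptions for illustration and are not taken from the paper's code.

```python
import random
from collections import defaultdict


def sample_k_shot(labels, k, seed=0):
    """Return indices of k randomly chosen samples for every class in `labels`."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    chosen = []
    for label in sorted(by_class):
        # rng.sample draws k distinct indices without replacement.
        chosen.extend(rng.sample(by_class[label], k))
    return chosen


# Hypothetical label list: 10 classes with a placeholder count of 232 samples each.
labels = [c for c in range(10) for _ in range(232)]

# Size of the few-shot training set for each K used in the experiments.
subset_sizes = {k: len(sample_k_shot(labels, k)) for k in (5, 10, 15, 30)}
print(subset_sizes)  # {5: 50, 10: 100, 15: 150, 30: 300}
```

Fixing the seed keeps the drawn subset reproducible across runs, which matters in few-shot settings because accuracy can vary noticeably with the particular samples selected.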

| Method | 5-Shot (%) | 10-Shot (%) | 15-Shot (%) | 30-Shot (%) |
|---|---|---|---|---|
| ResNet-18 [40] | 55.92 | 72.01 | 80.59 | 90.44 |
| Inception [41] | 58.52 | 74.64 | 82.14 | 91.32 |
| DenseNet [42] | 59.52 | 75.16 | 82.78 | 92.05 |
| Prototypical Networks [29] | 70.37 | 82.46 | 91.56 | 94.92 |
| TMDC-CNNs [43] | 73.17 | 85.00 | 92.04 | 95.59 |
| Dens-CapsNet [44] | 66.90 | 80.26 | - | 94.56 |
| DeepEMD [45] | 52.24 | 56.04 | - | - |
| FTL-dis [46] | 72.13 | 81.21 | - | - |
| Prior-EDL [26] | 60.05 | 71.62 | 86.50 | 92.70 |
| PD Network [47] | 70.15 | 83.73 | - | 94.63 |
| ConFAS-Net (ours) | 73.25 | 87.43 | 94.97 | 96.87 |
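As a check on how the headline margin relates to this table, the following sketch computes, for each K-shot setting, the gap between ConFAS-Net and the best competing method that reports a result. The numbers are copied from the table; the variable names are illustrative, and unreported entries are represented as `None`.

```python
shots = [5, 10, 15, 30]

# Accuracies (%) from the MSTAR comparison table; None marks an unreported entry.
competitors = {
    "ResNet-18": [55.92, 72.01, 80.59, 90.44],
    "Inception": [58.52, 74.64, 82.14, 91.32],
    "DenseNet": [59.52, 75.16, 82.78, 92.05],
    "Prototypical Networks": [70.37, 82.46, 91.56, 94.92],
    "TMDC-CNNs": [73.17, 85.00, 92.04, 95.59],
    "Dens-CapsNet": [66.90, 80.26, None, 94.56],
    "DeepEMD": [52.24, 56.04, None, None],
    "FTL-dis": [72.13, 81.21, None, None],
    "Prior-EDL": [60.05, 71.62, 86.50, 92.70],
    "PD Network": [70.15, 83.73, None, 94.63],
}
ours = [73.25, 87.43, 94.97, 96.87]

margins = {}
for i, k in enumerate(shots):
    # Best competitor among those that report a number for this setting.
    best_name, best_acc = max(
        ((name, accs[i]) for name, accs in competitors.items() if accs[i] is not None),
        key=lambda item: item[1],
    )
    margins[k] = (best_name, round(ours[i] - best_acc, 2))

for k, (name, gap) in margins.items():
    print(f"{k}-shot: +{gap:.2f} points over {name}")
```

With the table's values, the strongest competitor in every setting is TMDC-CNNs, and the margin ranges from 0.08 points at 5-shot to 2.93 points at 15-shot.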

| Method | 5-Shot (%) | 10-Shot (%) | 15-Shot (%) | 30-Shot (%) |
|---|---|---|---|---|
| ResNet-18 [40] | 84.72 | 86.39 | 88.16 | 90.27 |
| Inception [41] | 60.45 | 72.20 | 75.37 | 82.57 |
| DenseNet [42] | 84.15 | 85.64 | 87.22 | 89.13 |
| Prototypical Networks [29] | 77.38 | 82.46 | 88.56 | 90.92 |
| TMDC-CNNs [43] | 80.68 | 81.42 | 83.54 | 85.68 |
| ConFAS-Net (ours) | 92.50 | 93.40 | 95.44 | 96.33 |

| Update Strategy | Update Interval | 5-Shot (%) | 10-Shot (%) | 15-Shot (%) | 30-Shot (%) | Relative Training Time |
|---|---|---|---|---|---|---|
| Offline | 300 iterations | 73.25 | 87.43 | 94.97 | 96.87 | 1.0× |
| Semi-online | 50 iterations | 73.41 | 87.61 | 95.08 | 96.95 | 1.02× |
| Online EMA | 1 iteration | 73.58 | 87.72 | 95.15 | 97.01 | 1.05× |
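The three strategies in this table differ in how often the confusion matrix is refreshed. The sketch below shows one generic way such a schedule and an exponential-moving-average (EMA) update could look. It is not the authors' implementation: the momentum value 0.9, the row-normalised batch estimate, the reading of the second column as the number of iterations between refreshes, and all function names are assumptions made for illustration.

```python
def batch_confusion(y_true, y_pred, num_classes):
    """Row-normalised confusion matrix for one batch (rows index the true class)."""
    counts = [[0.0] * num_classes for _ in range(num_classes)]
    for t, p in zip(y_true, y_pred):
        counts[t][p] += 1.0
    for row in counts:
        total = sum(row)
        if total > 0:
            for j in range(num_classes):
                row[j] /= total
    return counts


def ema_update(running, batch, momentum=0.9):
    """Blend a batch estimate into the running matrix, one row at a time."""
    updated = []
    for run_row, new_row in zip(running, batch):
        if sum(new_row) == 0:
            # Class absent from this batch: keep the previous estimate.
            updated.append(list(run_row))
        else:
            updated.append([momentum * r + (1.0 - momentum) * n
                            for r, n in zip(run_row, new_row)])
    return updated


def should_refresh(iteration, interval):
    """True when the confusion matrix is due for a refresh at this iteration."""
    return iteration % interval == 0


# Assumed refresh intervals, read off the table's second column.
INTERVALS = {"offline": 300, "semi-online": 50, "online-ema": 1}

num_classes = 3
running = [[1.0 if i == j else 0.0 for j in range(num_classes)]
           for i in range(num_classes)]
batch = batch_confusion([0, 0, 1, 1], [0, 1, 1, 1], num_classes)
running = ema_update(running, batch)

# Number of refreshes each strategy performs over 300 iterations.
refreshes = {name: sum(should_refresh(it, step) for it in range(1, 301))
             for name, step in INTERVALS.items()}
print(refreshes)  # {'offline': 1, 'semi-online': 6, 'online-ema': 300}
```

Under these assumptions, a shorter interval means more refreshes per run, which is in line with the modest rise in relative training time from 1.0× to 1.05× reported in the table.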

| No. | MS-CA | CACL | CADA | Accuracy (%) | Gain (percentage points) |
|---|---|---|---|---|---|
| 1 | × | × | × | 92.04 | - |
| 2 | √ | × | × | 92.57 | +0.53 |
| 3 | × | √ | × | 93.20 | +1.16 |
| 4 | × | × | √ | 92.95 | +0.91 |
| 5 | √ | √ | × | 94.72 | +2.68 |
| 6 | √ | × | √ | 93.61 | +1.57 |
| 7 | × | √ | √ | 93.28 | +1.24 |
| 8 | √ | √ | √ | 94.97 | +2.93 |
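The gain column is the accuracy of each configuration minus the 92.04% baseline in row 1. The sketch below recomputes those gains from the table and, as an additional illustration, compares each multi-module gain with the sum of the corresponding single-module gains. The `interaction` quantity is a simple additive comparison introduced here for illustration and is not a metric defined in the paper.

```python
# Accuracy (%) per module combination, copied from the ablation table.
ablation = {
    (): 92.04,
    ("MS-CA",): 92.57,
    ("CACL",): 93.20,
    ("CADA",): 92.95,
    ("MS-CA", "CACL"): 94.72,
    ("MS-CA", "CADA"): 93.61,
    ("CACL", "CADA"): 93.28,
    ("MS-CA", "CACL", "CADA"): 94.97,
}
baseline = ablation[()]

# Gain of each configuration over the baseline, in percentage points.
gains = {mods: round(acc - baseline, 2) for mods, acc in ablation.items() if mods}

# Combined gain minus the sum of the individual gains of the same modules.
# Positive: the modules help more together than separately; negative: less.
interaction = {}
for mods, gain in gains.items():
    if len(mods) > 1:
        additive = sum(gains[(m,)] for m in mods)
        interaction[mods] = round(gain - additive, 2)

for mods, gain in gains.items():
    print(" + ".join(mods), f"gain = +{gain:.2f}")
for mods, delta in interaction.items():
    print(" + ".join(mods), f"interaction = {delta:+.2f}")
```

With the table's numbers, MS-CA combined with CACL exceeds the sum of their individual gains by 0.99 points, whereas CACL combined with CADA falls 0.83 points short of it; the full model exceeds the additive total by 0.33 points.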

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Zhao, X.; Xue, X.; Tian, Y.; Yang, J.; Lu, B.; Zhang, W.; Wang, W. ConFAS-Net: Few-Shot SAR Target Recognition via Confusion-Aware Attention and Adaptive Decision Scaling. Remote Sens. 2026, 18, 1482. https://doi.org/10.3390/rs18101482
