1. Introduction

With the continuous progress of the global social economy, urbanization is advancing at an unprecedented rate. Although urbanization significantly promotes economic growth, infrastructure improvement, and optimization of residents’ living conditions, its rapid and often poorly planned urban expansion has simultaneously triggered a series of pressing challenges [1]. Spatially, the high concentration in urban cores contrasts sharply with the sprawling expansion of informal settlements on the periphery, leading to drastic changes in land use patterns and increasingly complex spatial structures [2,3]. Furthermore, rapid urbanization in the absence of adequate planning often induces land use disorder, fragmented spatial patterns, and increased pressure on the ecological environment, further intensifying deep-seated social contradictions such as income inequality and industrial misallocation [4]. In this context, systematic and precise monitoring of urbanization, specifically focused on the rationality of urban planning, becomes crucial. Systematically analyzing urban land evolution data helps in understanding the intrinsic mechanisms of urban expansion and spatial restructuring, thereby providing theoretical support and technical tools for optimizing urban governance and spatial planning [5].

In traditional urban monitoring, remote sensing and Geographic Information Systems (GIS) form the primary technical foundation. Among them, the spectral index method [6] is a classic approach, often utilizing indices like the Normalized Difference Built-up Index (NDBI) for feature classification [7,8]. However, this method performs poorly with suboptimal image quality or in complex environments. To address these limitations, machine learning methods have been gradually introduced. Support Vector Machine (SVM) demonstrates good boundary discrimination capability when classifying medium-resolution images like Landsat [9,10,11,12], but it is relatively dependent on data quality and parameter settings. Random Forest (RF) [13,14,15,16] enhances robustness to multi-source data features by integrating multiple decision trees and is widely used for land use dynamic analysis, yet its computational efficiency remains challenging for large-scale applications.

In recent years, with continuous breakthroughs in deep learning, its application in urban monitoring has expanded [17,18,19,20,21,22,23,24], promoting a shift towards intelligent monitoring techniques. Technologies represented by Convolutional Neural Networks (CNNs), due to their excellent local feature extraction capability, have become mainstream tools in urban remote sensing tasks, with related research often focusing on CNN-based semantic segmentation architectures [25,26]. For instance, ref. [27] employed the SegNet model for land cover classification in the Namhan and Bukhan River basins, achieving 91.54% accuracy, verifying its potential in efficiently identifying surface dynamics like urban expansion. The U-Net model has proven effective in extracting built-up area information even with limited labeled samples [28,29]. Furthermore, ref. [30] showed that in complex urban areas, a hybrid strategy using RF for multi-class land cover classification combined with U-Net for extracting built-up areas of different densities could yield higher-accuracy Land Use and Land Cover (LULC) results. Beyond classification tasks, CNNs are also widely used in urban change detection; for example, SNUNet [31] and HDA-Net [32] improved change detection performance for Very High-Resolution (VHR) imagery by enhancing network architectures, and ref. [33] introduced a spatial pyramid pooling mechanism to better preserve the geometry of changed areas. However, traditional CNNs, constrained by limited local receptive fields, struggle to fully capture the long-range spatial dependencies involved in urban expansion processes [34,35,36]. To address this, researchers have introduced self-attention mechanisms to enhance the modeling capacity of CNNs for long-range dependencies. Refs. [37,38] improved feature discriminability by integrating CNN features with self-attention modules. The Transformer architecture shows unique advantages in this domain due to its self-attention mechanism; ref. [39] combined Transformer with spatial and channel attention to optimize feature representation, while ref. [40] proposed ChangeFormer, a framework entirely based on Transformer for change detection. However, models relying solely on Transformers are often limited by high model complexity and computational cost when processing complex high-resolution urban scenes [41].

Recent research attempts to balance global modeling capability and computational efficiency by designing convolutional architectures with large receptive fields [36,42]. ConvNeXt [43], by experimenting with different kernel sizes, found that increasing the size from 3 × 3 to 7 × 7 effectively enhanced network performance; SLaK [44] further increased the kernel size to 51 × 51, achieving performance comparable or superior to state-of-the-art hierarchical Transformers and ConvNeXt on multiple vision tasks; LSKNet [45] adapted the receptive field dynamically to meet the needs of remote sensing object detection. These results highlight the significant role of large kernels in enhancing contextual information capture. However, merely increasing kernel size may sacrifice local detail information. Subsequent research, UniRepLKNet [46], indicated that combining large-kernel convolutions with parallel small-kernel convolutions can capture both global context and local fine-grained features simultaneously; this architecture achieved leading performance on multiple vision tasks. Nevertheless, the multi-scale features extracted by existing parallel convolutional structures are typically fused only through simple linear superposition (e.g., element-wise addition). This crude integration strategy fails to fully leverage the advantages of multi-scale information and may even cause feature confusion.

To address the aforementioned issues, this paper takes Accra, the capital of Ghana, as a case study. We construct urbanization-level category labels and build a multi-modal Accra dataset from Sentinel-2 multispectral imagery, which serves as the benchmark for our experiments. Methodologically, this paper proposes the Multi-Scale Spatial-Channel Attention Network (MSCANet). Its core component, the Multi-Scale Spatial-Channel Attention Module (MSCAM), jointly models spatial and channel dimensions to achieve dynamic weighted selection of multi-scale features, thereby mitigating the feature redundancy and mixing issues associated with parallel large-kernel convolutions. Notably, our work does not focus on exploring the application potential of large-kernel structures in urbanization monitoring or on structural innovations to large-kernel designs themselves. Instead, the innovation of MSCAM lies in its dedicated architectural integration within the parallel large-kernel framework, where it essentially serves as an optimization module. While its design principles for spatial-channel weighting draw inspiration from established attention mechanisms, it is specifically tailored to dynamically refine and select the multi-scale features extracted by parallel large kernels, thereby addressing a key limitation of this emerging paradigm in remote sensing applications. Furthermore, we observed that MSCAM also shows potential for cross-modal feature selection when modeling spatial-channel weights. Therefore, we adapt it to propose the Multi-Scale Spatial-Channel Attention Feature Fusion Module (MSCA-FFM) for integrating multi-modal features during the fusion stage. Moreover, to validate the model’s adaptability and generalization capability, supplementary experiments were performed on the publicly available ISPRS Potsdam dataset.

This paper conducts urbanization monitoring research using Accra as an example, at both the data and methodological levels, aiming to provide a scalable technical solution for urbanization monitoring. The main contributions of this paper can be summarized as follows:

- (1) This paper constructs the urbanization monitoring network structure MSCANet and proposes its core module MSCAM. This module is specifically designed to enhance the parallel large-kernel framework by achieving joint spatial-channel modeling, thereby effectively mitigating its shortcomings in multi-scale feature fusion.

- (2) Building upon MSCAM’s potential for cross-modal feature selection, we further propose the MSCA-FFM module adapted for multi-modal fusion. Experiments show that this module performs excellently in multi-modal feature fusion, validating the effectiveness and extensibility of MSCAM in processing multi-modal data.

2. Methodology

2.1. Framework Overview

The overall framework of MSCANet (Multi-Scale Spatial-Channel Attention Network) is shown in Figure 1a. The network adopts a dual-branch structure designed for True Color Images (TCI) and Urban Classification Images (UC) modal inputs, respectively, to fully capture the complementary information contained in different modal images. The two branches work cooperatively in parallel, with each branch independently learning and preserving the feature representations of its respective modality, thereby enhancing the network’s representational capacity under multi-modal input conditions.

In the backbone part, each branch consists of four stages (Figure 1a). Stage 1’s Basic-Layer comprises two consecutive 3 × 3 convolutional layers for extracting shallow features. Stages 2 to 4 employ the Multi-Scale Spatial-Channel Attention layer (MSCA-Layer) as the core unit. The MSCA-Layer internally contains a sequential structure of a Multi-Scale Spatial-Channel Attention Block (MSCA-Block) (

Figure 1b) and a 3 × 3 convolutional layer, followed by an MLP module for further non-linear mapping and feature transformation. The layer count configuration for each stage is

. After progressive extraction and representation through the four stages, the features generated by the dual branches are fused in the Multi-Scale Spatial-Channel Attention Feature Fusion Module (MSCA-FFM) (

Figure 1c) and finally fed into a decoder based on UnetFormer [47].

The core improvement in the methodology of this study lies in proposing the Multi-Scale Spatial-Channel Attention Module (MSCAM), which is not only the key component of the MSCA-Block but is also extended for use in the fusion module MSCA-FFM. MSCAM was originally designed to address the feature mixing problem caused by parallel large-kernel convolutions. Specifically, parallel large kernels can generate multi-scale features through different kernel sizes, but without an effective selection and integration mechanism, these multi-scale features may interfere with each other, weakening the overall expressive power. MSCAM models both spatial and channel dimensions simultaneously, performing dynamic weighted selection on multi-scale features to distill more discriminative feature representations. Furthermore, we observed that MSCAM, in the process of capturing multi-scale spatial-channel weights, also possesses the potential for selectively fusing cross-modal features. Therefore, while retaining its core mechanism, we made simple adaptive adjustments to propose the MSCA-FFM module, enabling it to effectively integrate information from different modal inputs during the feature fusion stage.

In the following subsections, we will focus on the structural design and implementation principles of the MSCA-Block and MSCA-FFM.

2.2. Multi-Scale Spatial-Channel Attention Block

Numerous recent studies have shown that convolutional structures with large receptive fields, when properly designed, can effectively enhance a network’s global modeling capability. Particularly, parallel large convolutional kernel modules can leverage different kernel sizes to generate multi-scale features, thereby providing rich contextual information at the receptive field level. However, existing methods often use simple operations (e.g., element-wise addition) to fuse these features, which not only fails to fully utilize the advantages of multi-scale information but may also cause mutual interference between features, leading to degraded representation capability. To address this, this study proposes the Multi-Scale Spatial-Channel Attention Module (MSCAM) (see Figure 1b), which introduces attention weighting mechanisms in both spatial and channel dimensions to dynamically select and integrate the multi-scale features generated by parallel convolutions, thereby obtaining more discriminative feature representations.

Algorithm 1 shows the feature processing workflow of the MSCA-Block in pseudocode. Before entering MSCAM, the input feature $X \in \mathbb{R}^{N \times C \times H \times W}$ is first processed by the Parallel Large-Kernel Module (PLKM) for multi-scale feature extraction. PLKM is an improvement based on the Dilated Reparam Block proposed in [46], containing seven parallel depthwise convolutional branches. The kernel size k and dilation rate r for each branch are $(k, r) \in \{(9,1), (7,1), (5,1), (3,1), (3,2), (3,3), (3,4)\}$. Zero padding keeps the feature map size consistent across branches, and each convolution is followed by a BN layer for normalization, resulting in seven feature maps, each $\in \mathbb{R}^{N \times C \times H \times W}$. On the one hand, these features are directly summed to generate the fused feature $U$; on the other hand, they are fed into MSCAM to generate the final spatial-channel attention weights.
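The relation between kernel size, dilation rate, and the padding needed to keep the feature map size unchanged can be checked with a few lines of Python. This is a minimal sketch, assuming stride 1 and symmetric zero padding; the helper names are illustrative and do not come from the paper.

```python
# Branch settings (kernel size k, dilation rate r) as listed for the PLKM.
BRANCHES = [(9, 1), (7, 1), (5, 1), (3, 1), (3, 2), (3, 3), (3, 4)]


def effective_kernel(k: int, r: int) -> int:
    """Span in pixels covered by a k-tap kernel with dilation r."""
    return (k - 1) * r + 1


def same_padding(k: int, r: int) -> int:
    """Zero padding per side that preserves H and W at stride 1 (odd spans)."""
    return effective_kernel(k, r) // 2


def output_size(n: int, k: int, r: int, pad: int, stride: int = 1) -> int:
    """Standard convolution output-size formula."""
    return (n + 2 * pad - effective_kernel(k, r)) // stride + 1


# (k, r, effective span, padding) for each branch.
plan = [(k, r, effective_kernel(k, r), same_padding(k, r)) for k, r in BRANCHES]
for k, r, span, pad in plan:
    print(f"k={k}, r={r}: span={span}, padding={pad}, out(64)={output_size(64, k, r, pad)}")
```

Under these assumptions the three dilated 3 × 3 branches cover spans of 5, 7, and 9 pixels, matching the undilated 5 × 5, 7 × 7, and 9 × 9 branches with far fewer weights.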

Algorithm 1. Pseudocode of the MSCA-Block’s Workflow

Input: Feature map X, shape (N, C, H, W)

Output: Feature map Y, shape (N, C, H, W)

- 1: // Parallel Large-Kernel Module: multi-scale dilated convolution feature extraction
- 2: features = []
- 3: for (k, r) in [(9,1), (7,1), (5,1), (3,1), (3,2), (3,3), (3,4)] do
- 4: append BN(DWConv_{k,r}(X)) to features // each of shape (N, C, H, W)
- 5: end for
- 6: // Multi-scale feature fusion
- 7: U = 0
- 8: for i ← 0 to features.length − 1 do
- 9: U += features[i] // U shape: (N, C, H, W)
- 10: end for
- 11: // Feature compression for attention computation
- 12: compression_maps = []
- 13: for j ← 0 to features.length − 1 do
- 14: append Conv1×1_{j+1}(features[j]) to compression_maps // each of shape (N, C/2, H, W)
- 15: end for
- 16: // Spatial attention mechanism
- 17: F = concatenate compression_maps along channel dimension // shape: (N, 7C/2, H, W)
- 18: agg = concatenate([avg_pool(F), max_pool(F)]) // pooled over channels; shape: (N, 2, H, W)
- 19: SW = Sigmoid(Conv7×7(agg)) // shape: (N, 7, H, W)
- 20: SAF = concatenate(SW_i ⊙ compression_maps[i − 1]), i = 1…7 // shape: (N, 7C/2, H, W)
- 21: // Channel attention mechanism
- 22: s = global_pool(SAF) // shape: (N, 7C/2, 1, 1)
- 23: z = fc1(s) // shape: (N, d, 1, 1); d = 7C/32
- 24: CW = sigmoid(fc2(z)) // shape: (N, 7C/2, 1, 1)
- 25: attn = SAF ⊙ CW // shape: (N, 7C/2, H, W)
- 26: // Final projection and feature modulation
- 27: SCW = Conv1×1(attn) // shape: (N, C, H, W)
- 28: Y = SCW ⊙ U
- 29: return Y

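Inside MSCAM, seven independent 1 × 1 convolutions first compress the channel number of the seven feature maps generated by PLKM to C/2. Writing $F_i$ for the output of the i-th PLKM branch and $\tilde{F}_i$ for its compressed version, the compressed maps are concatenated along the channel dimension to obtain feature $F \in \mathbb{R}^{N \times \frac{7C}{2} \times H \times W}$, which reduces the subsequent computational cost. Next, the module introduces a spatial attention mechanism to select key spatial context information. Specifically, average pooling and max pooling are applied to $F$ along the channel dimension. The results are concatenated and passed through a 7 × 7 convolution and a Sigmoid activation function to obtain the spatial attention weight $SW \in \mathbb{R}^{N \times 7 \times H \times W}$. The seven channels of this weight correspond to the seven branch features of different scales. Subsequently, each channel of the attention weight is element-wise multiplied with the compressed feature of the corresponding branch, completing adaptive weighting in the spatial dimension. Finally, the weighted results of each branch are concatenated along the channel dimension to obtain the spatially enhanced feature $SAF \in \mathbb{R}^{N \times \frac{7C}{2} \times H \times W}$. These processes are mathematically expressed as:

$$F = \mathrm{Concat}\big(\tilde{F}_1, \dots, \tilde{F}_7\big), \qquad \tilde{F}_i = \mathrm{Conv}^{(i)}_{1 \times 1}(F_i)$$

$$SW = \sigma\big(\mathrm{Conv}_{7 \times 7}\big([\mathrm{AvgPool}(F);\ \mathrm{MaxPool}(F)]\big)\big)$$

$$SAF = \mathrm{Concat}\big(SW_1 \odot \tilde{F}_1, \dots, SW_7 \odot \tilde{F}_7\big)$$

where $\sigma$ denotes the sigmoid function and ⊙ denotes element-wise multiplication.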
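The spatial attention step described above can be sketched in NumPy as follows. This is an illustrative sketch, not the authors' implementation: the weights are random, biases are omitted, and the function names (`conv2d_same`, `spatial_attention`) are hypothetical.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def conv2d_same(x, w):
    """Stride-1 zero-padded convolution. x: (N, Cin, H, W); w: (Cout, Cin, k, k), k odd."""
    k = w.shape[-1]
    p = k // 2
    xp = np.pad(x, ((0, 0), (0, 0), (p, p), (p, p)))
    win = sliding_window_view(xp, (k, k), axis=(2, 3))  # (N, Cin, H, W, k, k)
    return np.einsum("nchwij,ocij->nohw", win, w)


def spatial_attention(compressed, w7):
    """compressed: list of S arrays of shape (N, Cc, H, W); w7: (S, 2, 7, 7)."""
    F = np.concatenate(compressed, axis=1)                       # (N, S*Cc, H, W)
    agg = np.concatenate([F.mean(axis=1, keepdims=True),         # pooled over channels
                          F.max(axis=1, keepdims=True)], axis=1)  # (N, 2, H, W)
    SW = sigmoid(conv2d_same(agg, w7))                            # (N, S, H, W)
    # Weight each branch by its own attention channel, then concatenate.
    SAF = np.concatenate([SW[:, i:i + 1] * f for i, f in enumerate(compressed)], axis=1)
    return SW, SAF


rng = np.random.default_rng(0)
N, C, H, W, S = 2, 8, 16, 16, 7
compressed = [rng.standard_normal((N, C // 2, H, W)) for _ in range(S)]
w7 = rng.standard_normal((S, 2, 7, 7)) * 0.1
SW, SAF = spatial_attention(compressed, w7)
print(SW.shape, SAF.shape)
```

Each of the seven attention channels acts as a per-pixel gate for one branch, so a location can favor a small-kernel branch near fine boundaries and a large-kernel branch in homogeneous regions.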

After spatial modeling, MSCAM further applies a channel attention mechanism to optimize the selection of cross-scale channel information. Specifically, global average pooling is applied to SAF along the spatial dimensions, obtaining a statistical feature $s \in \mathbb{R}^{N \times \frac{7C}{2} \times 1 \times 1}$. This is then mapped through two fully connected layers: the first layer compresses the channel number to $d = 7C/32$, and the second layer restores it to the original channel dimension, followed by a Sigmoid activation to generate the channel attention weight $CW \in \mathbb{R}^{N \times \frac{7C}{2} \times 1 \times 1}$. Subsequently, the channel weight CW is element-wise multiplied with SAF, followed by a 1 × 1 convolution for fusion, yielding the spatially and channel-wise dual-enhanced feature representation $SCW \in \mathbb{R}^{N \times C \times H \times W}$. Finally, SCW is multiplied by the initial fused feature U to obtain the output of MSCAM, $Y \in \mathbb{R}^{N \times C \times H \times W}$. These processes are mathematically expressed as:

$$s = \mathrm{GAP}(SAF)$$

$$CW = \sigma\big(\mathrm{fc}_2(\mathrm{fc}_1(s))\big)$$

$$SCW = \mathrm{Conv}_{1 \times 1}(SAF \odot CW)$$

$$Y = SCW \odot U$$

where fc denotes a fully connected layer.
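The channel attention step and the final modulation by U can be sketched in the same way. This is a minimal NumPy sketch with random weights and no biases; the ReLU between the two fully connected layers follows the description in Section 2.4, and all function and variable names are illustrative.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def channel_attention(SAF, U, W1, W2, Wp):
    """SAF: (N, M, H, W); U: (N, C, H, W); W1: (d, M); W2: (M, d); Wp: (C, M)."""
    s = SAF.mean(axis=(2, 3))                    # global average pooling -> (N, M)
    z = np.maximum(s @ W1.T, 0.0)                # fc1 + ReLU -> (N, d)
    CW = sigmoid(z @ W2.T)                       # fc2 + sigmoid -> (N, M)
    attn = SAF * CW[:, :, None, None]            # channel reweighting -> (N, M, H, W)
    SCW = np.einsum("nmhw,cm->nchw", attn, Wp)   # 1x1 projection -> (N, C, H, W)
    return SCW * U                               # modulate the summed PLKM feature


rng = np.random.default_rng(0)
N, C, H, W = 2, 32, 8, 8
M, d = 7 * C // 2, 7 * C // 32                   # 112 channels, bottleneck of 7
SAF = rng.standard_normal((N, M, H, W))
U = rng.standard_normal((N, C, H, W))
W1 = rng.standard_normal((d, M)) * 0.1
W2 = rng.standard_normal((M, d)) * 0.1
Wp = rng.standard_normal((C, M)) * 0.1
Y = channel_attention(SAF, U, W1, W2, Wp)
print(Y.shape)
```

The bottleneck d = 7C/32 keeps the two fully connected layers small relative to the 7C/2 concatenated channels.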

2.3. Multi-Scale Spatial-Channel Attention Feature Fusion Module

The structure of the feature fusion module MSCA-FFM is shown in Figure 1c. As this module shares the MSCAM foundation with the MSCA-Block and is structurally highly similar, we focus here on its specific role and design adaptations for the multi-modal fusion task.

Algorithm 2 shows the feature processing workflow of MSCA-FFM in pseudocode. Specifically, input features from different modalities are first compressed via 1 × 1 convolutions, then concatenated to form feature $F$. Subsequently, average pooling and max pooling are applied to F along the channel dimension. The results are concatenated, processed by a 7 × 7 convolution and a Sigmoid function to generate the spatial attention weight $SW$. Each channel of this weight corresponds to one modality. Each modality’s compressed feature $\tilde{F}_i$ is multiplied by its corresponding spatial weight slice $SW_i$, and the weighted results are summed element-wise to yield the spatially enhanced fusion feature $SAF$. This is expressed as:

$$SW = \sigma\big(\mathrm{Conv}_{7 \times 7}\big([\mathrm{AvgPool}(F);\ \mathrm{MaxPool}(F)]\big)\big), \qquad SAF = \sum_i SW_i \odot \tilde{F}_i$$

Furthermore, to explore cross-modal channel dependencies, the module applies global average pooling to SAF along the spatial dimensions to obtain channel statistics. These are then compressed and restored through two fully connected layers, generating the channel attention weight $CW$. This weight is applied to SAF, and the result finally passes through a 1 × 1 convolution to restore the channel count, outputting the fused result Fused. The overall process is defined as:

$$CW = \sigma\big(\mathrm{fc}_2(\mathrm{fc}_1(\mathrm{GAP}(SAF)))\big), \qquad Fused = \mathrm{Conv}_{1 \times 1}(SAF \odot CW)$$

Algorithm 2. Pseudocode of the MSCA-FFM’s Workflow

Input: Modality feature maps (one per input branch)

Output: Feature map Fused, shape (N, C, H, W)

- 1: // Feature compression for each modality
- 2: compression_maps = []
- 3: for m in the input modality feature maps do
- 4: append Conv1×1(m) to compression_maps
- 5: end for
- 6: // Aggregation of features and generation of spatial attention weights
- 7: F = concatenate compression_maps along channel dimension
- 8: agg = concatenate([avg_pool(F), max_pool(F)]) // pooled over channels; shape: (N, 2, H, W)
- 9: SW = Sigmoid(Conv7×7(agg)) // shape: (N, 2, H, W)
- 10: SAF = Σ_i SW_i ⊙ compression_maps[i] // weighted sum over modalities
- 11: // Computation and application of channel attention weights
- 12: s = global_pool(SAF) // shape: (N, C/2, 1, 1)
- 13: z = fc1(s) // shape: (N, C/4, 1, 1)
- 14: CW = sigmoid(fc2(z)) // shape: (N, C/2, 1, 1)
- 15: attn = SAF ⊙ CW
- 16: // Final channel restoration and output
- 17: Fused = Conv1×1(attn) // shape: (N, C, H, W)
- 18: return Fused
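The fusion workflow of Algorithm 2 can be traced end to end with a small NumPy sketch. It assumes two modalities with C input channels each, random weights, no biases, and a ReLU between the two fully connected layers (mirroring MSCAM; the text does not state this for MSCA-FFM). All names are illustrative rather than taken from the authors' code.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def conv1x1(x, w):
    """Pointwise convolution. x: (N, Cin, H, W); w: (Cout, Cin)."""
    return np.einsum("nchw,oc->nohw", x, w)


def conv2d_same(x, w):
    """Stride-1 zero-padded convolution. w: (Cout, Cin, k, k), k odd."""
    k = w.shape[-1]
    p = k // 2
    xp = np.pad(x, ((0, 0), (0, 0), (p, p), (p, p)))
    win = sliding_window_view(xp, (k, k), axis=(2, 3))
    return np.einsum("nchwij,ocij->nohw", win, w)


def msca_ffm(feats, params):
    comp = [conv1x1(f, w) for f, w in zip(feats, params["compress"])]  # each (N, C/2, H, W)
    F = np.concatenate(comp, axis=1)
    agg = np.concatenate([F.mean(axis=1, keepdims=True),
                          F.max(axis=1, keepdims=True)], axis=1)        # (N, 2, H, W)
    SW = sigmoid(conv2d_same(agg, params["w7"]))                        # one channel per modality
    SAF = sum(SW[:, i:i + 1] * c for i, c in enumerate(comp))           # (N, C/2, H, W)
    s = SAF.mean(axis=(2, 3))                                           # (N, C/2)
    z = np.maximum(s @ params["fc1"].T, 0.0)                            # (N, C/4), ReLU assumed
    CW = sigmoid(z @ params["fc2"].T)                                   # (N, C/2)
    attn = SAF * CW[:, :, None, None]
    return conv1x1(attn, params["proj"])                                # (N, C, H, W)


rng = np.random.default_rng(0)
N, C, H, W, M = 2, 16, 12, 12, 2
params = {
    "compress": [rng.standard_normal((C // 2, C)) * 0.1 for _ in range(M)],
    "w7": rng.standard_normal((M, 2, 7, 7)) * 0.1,
    "fc1": rng.standard_normal((C // 4, C // 2)) * 0.1,
    "fc2": rng.standard_normal((C // 2, C // 4)) * 0.1,
    "proj": rng.standard_normal((C, C // 2)) * 0.1,
}
x_main = rng.standard_normal((N, C, H, W))   # e.g. TCI-branch feature
x_aux = rng.standard_normal((N, C, H, W))    # e.g. UC-branch feature
fused = msca_ffm([x_main, x_aux], params)
print(fused.shape)
```

The main structural difference from the MSCA-Block is the element-wise sum in place of concatenation when forming SAF, which keeps the fused feature at C/2 channels before the final projection.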

2.4. Network Architecture

Overall Network Architecture: As shown in Figure 1a, MSCANet is a symmetric dual-branch encoder-decoder architecture. The model takes two input images of size 1024 × 1024 × 3 and extracts features through two structurally identical yet parameter-independent Backbones. Each Backbone contains four stages and outputs feature maps from these four stages, with channel dimensions of 64, 128, 320, and 512, respectively, while the spatial resolution progressively decreases across stages. Specifically, Stage 1 downsamples the input to 256 × 256 × 64 through a 7 × 7 convolution (stride = 2), Batch Normalization (BN), ReLU activation, and a 3 × 3 max pooling (stride = 2). Stages 2 to 4 further downsample through a feature-size compression layer, outputting feature maps of sizes 128 × 128 × 128, 64 × 64 × 320, and 32 × 32 × 512, respectively. Subsequently, corresponding stage features from the two branches are fused stage-by-stage via our proposed MSCA-FFM modules, rather than only at the bottleneck. Specifically, four MSCA-FFM modules operate on the four levels of features output by the two Backbones, enabling comprehensive information interaction from shallow to deep layers.
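The stage resolutions quoted above follow from the standard output-size formula. The short trace below is a sketch that assumes padding of 3 for the 7 × 7 convolution and 1 for the 3 × 3 max pooling (common choices that the text does not state), and a halving of resolution in each of Stages 2 to 4.

```python
def conv_out(n: int, k: int, stride: int, pad: int) -> int:
    """Output size of a convolution or pooling layer along one axis."""
    return (n + 2 * pad - k) // stride + 1


size = 1024
size = conv_out(size, k=7, stride=2, pad=3)   # 7x7 conv, stride 2 (padding assumed)
size = conv_out(size, k=3, stride=2, pad=1)   # 3x3 max pool, stride 2 (padding assumed)

shapes = [(size, size, 64)]                   # Stage 1 output
for channels in (128, 320, 512):              # Stages 2 to 4
    size //= 2                                # feature-size compression halves H and W
    shapes.append((size, size, channels))

for stage, shape in enumerate(shapes, start=1):
    print(f"Stage {stage}: {shape[0]} x {shape[1]} x {shape[2]}")
```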

The features fused by MSCA-FFM are then fed into the decoder for multi-scale feature aggregation and progressive upsampling. The decoder adopts a four-stage design, corresponding to the four feature hierarchies of the encoder. The decoding process starts from the deepest features (32 × 32 × 512): first, the channel dimension is compressed to the decoding channel number (channels = 64) via a 1 × 1 convolution, then the features are enhanced by a Block incorporating global-local attention mechanisms. Subsequently, the current features are fused with the encoder’s Stage 3 features (64 × 64 × 320) through a Weighted Fusion (WF) module: the current features are first upsampled by a factor of 2 to 64 × 64 via bilinear interpolation and adaptively weighted with the encoder features whose channels are adjusted by a 1 × 1 convolution. The fused features are further processed by another Block. This process is repeated for Stage 2 and Stage 1: features are successively upsampled to 128 × 128 and 256 × 256 and fused with the corresponding encoder features (128 × 128 × 128, 256 × 256 × 64) via WF modules. In the final Stage 1 fusion, the decoder employs a more sophisticated FeatureRefinementHead, which not only includes weighted fusion but also integrates position attention and channel attention mechanisms to enhance feature representation. Finally, the fused 256 × 256 × 64 features are passed through a segmentation head (comprising a 3 × 3 convolution, Dropout, and a 1 × 1 convolution) to generate initial predictions, which are then upsampled by a factor of 4 via bilinear interpolation to produce the final prediction map with the same resolution as the input, i.e., 1024 × 1024 × C (where C is the number of classes). The entire decoder progressively integrates multi-scale information from the encoder through skip connections, achieving fine boundary recovery and multi-scale context aggregation.
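The decoder's resolution schedule and the idea of adaptive weighting in the WF module can be illustrated with a small pure-Python sketch. The normalized non-negative weighting shown here is one common form of learnable weighted fusion and is an assumption; the text only says the features are "adaptively weighted". The function names are hypothetical.

```python
def normalized_fusion_weights(raw, eps=1e-8):
    """Clamp raw weights at zero and normalize them so they sum to about one."""
    pos = [max(w, 0.0) for w in raw]
    total = sum(pos) + eps
    return [w / total for w in pos]


def weighted_fuse(skip, up, raw_weights):
    """Blend encoder (skip) and upsampled decoder values with normalized weights."""
    a, b = normalized_fusion_weights(raw_weights)
    return [a * s + b * u for s, u in zip(skip, up)]


# Resolution schedule stated in the text: 32 -> 64 -> 128 -> 256, then x4.
sizes = [32]
for _ in range(3):
    sizes.append(sizes[-1] * 2)   # bilinear upsampling by a factor of 2 per stage
final_size = sizes[-1] * 4        # segmentation head output upsampled by a factor of 4

fused = weighted_fuse([1.0, 2.0], [3.0, 4.0], raw_weights=[1.0, 1.0])
print(sizes, final_size, fused)
```

With equal raw weights the two inputs are averaged; during training the weights would shift toward whichever source is more informative at that level.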

Detailed Structure of MSCANet Backbone: Stage 1 of the Backbone consists of two consecutive 3 × 3 convolutional layers (BasicBlock) for extracting shallow features. Stages 2 to 4 are constructed with the MSCA-Layer as the core building unit. Each MSCA-Layer comprises two main submodules: a feature extraction module composed of an MSCA-Block followed by a 3 × 3 convolutional layer, and a Multi-Layer Perceptron (MLP) module, both employing residual connections, as illustrated in

Figure 1a. Specifically, the input to each MSCA-Layer first undergoes Batch Normalization (BatchNorm2d) and then enters the attention module: this module consists sequentially of a 1 × 1 convolution, a GELU activation function, the MSCA-Block, an additional 3 × 3 convolution, and another 1 × 1 convolution. The output of this submodule is first element-wise multiplied by a learnable layer-scale parameter (initialized to 1 × 10⁻²), then added to the original input after DropPath regularization, forming the first residual connection. Subsequently, the features are again normalized by BatchNorm and fed into the MLP module, which consists of two 1 × 1 convolutions and a depthwise separable convolution (DWConv), with GELU as the activation function. The output of the MLP module is similarly scaled by a layer-scale parameter and combined with the input via DropPath, forming the second residual connection.
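The residual pattern shared by both submodules (layer scale, then DropPath, then addition to the input) can be sketched as follows. This is a minimal NumPy sketch: a per-channel layer-scale vector is assumed, `fn` stands in for either the attention submodule or the MLP, and the names are illustrative.

```python
import numpy as np


def drop_path(x, drop_prob, training, rng):
    """Stochastic depth: drop the whole branch per sample, rescale the survivors."""
    if not training or drop_prob == 0.0:
        return x
    keep = 1.0 - drop_prob
    mask = rng.random((x.shape[0],) + (1,) * (x.ndim - 1)) < keep
    return x * mask / keep


def residual_branch(x, fn, gamma, drop_prob=0.0, training=False, rng=None):
    """y = x + DropPath(gamma * fn(x)), with gamma of shape (C,)."""
    scaled = gamma[None, :, None, None] * fn(x)
    return x + drop_path(scaled, drop_prob, training, rng)


x = np.zeros((4, 3, 2, 2))
gamma = np.full(3, 1e-2)                          # layer scale initialized to 1e-2
y_eval = residual_branch(x, np.ones_like, gamma)  # inference: branch always kept
y_train = residual_branch(x, np.ones_like, gamma, drop_prob=0.5,
                          training=True, rng=np.random.default_rng(0))
print(y_eval[0, 0, 0, 0])
```

The small initial scale means each layer starts close to an identity mapping, which tends to stabilize the early training of deep stacks.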

The MSCA-Block is the core innovation of this paper, designed to capture multi-scale contextual information. It first extracts multi-scale features through a Parallel Large-Kernel Module (PLKM): a fixed 9 × 9 depthwise convolution and six groups of depthwise separable convolutions with different kernel sizes (7, 5, 3, 3, 3, 3) and dilation rates (1, 1, 1, 2, 3, 4) process the input features in parallel. All convolutional layers are followed by Batch Normalization (BatchNorm2d) layers but without immediate activation functions. The resulting seven groups of features are first fused by element-wise summation to generate the base multi-scale feature (denoted as U). Concurrently, these seven groups of features are each compressed by a 1 × 1 convolution and then concatenated before being fed into the Multi-Scale Spatial-Channel Attention Module (MSCAM). The MSCAM first aggregates the average-pooled and max-pooled features, then passes the concatenated result through a 7 × 7 convolution and a Sigmoid function to generate spatial weight masks. The channel attention involves global average pooling, two fully connected layers (with ReLU activation in between), and a Sigmoid function to produce channel weights. The attention-weighted features are finally projected back to the original channel dimension via a 1 × 1 convolution and element-wise multiplied with the aforementioned U to achieve feature recalibration (details can be found in

Section 2.2). The GELU activation function is extensively used within this module. Subsequently, the output of the MSCA-Block passes through an additional 3 × 3 convolution for local feature smoothing and finally through another 1 × 1 convolution to complete the projection.
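To give a sense of scale for the PLKM and the compression step, the following sketch counts weights for a given channel number C. It assumes bias-free convolutions, counts only the depthwise kernels plus BN scale and shift for each branch, and ignores everything else in the block; it is a rough estimate, not a reported figure from the paper.

```python
# (kernel size, dilation) per branch; dilation does not change the weight count.
BRANCHES = [(9, 1), (7, 1), (5, 1), (3, 1), (3, 2), (3, 3), (3, 4)]


def plkm_params(C: int):
    """Depthwise kernel weights and BN (scale, shift) parameters of the seven branches."""
    depthwise = sum(C * k * k for k, _ in BRANCHES)
    batchnorm = len(BRANCHES) * 2 * C
    return depthwise, batchnorm


def compression_params(C: int) -> int:
    """Seven 1x1 convolutions mapping C -> C/2 channels."""
    return len(BRANCHES) * C * (C // 2)


def dense_conv_params(C: int, k: int) -> int:
    """A single standard k x k convolution mapping C -> C channels, for comparison."""
    return C * C * k * k


C = 64
dw, bn = plkm_params(C)
print(dw, bn, compression_params(C), dense_conv_params(C, 9))
```

For C = 64, the seven depthwise branches together hold far fewer weights than one dense 9 × 9 convolution, which is why the parallel design stays affordable.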

3. Experimental Setting

3.1. Datasets

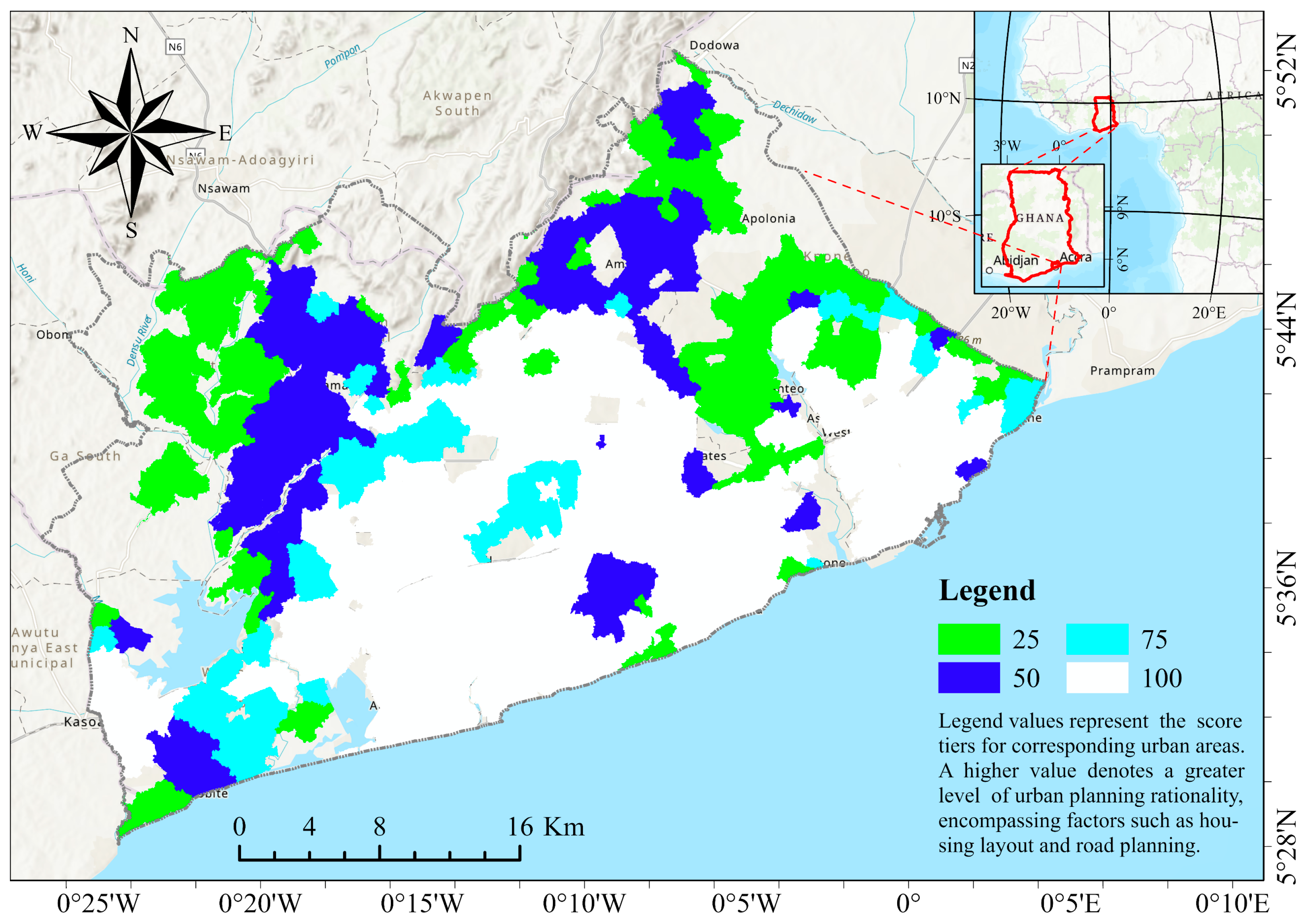

This study first constructed an urbanization monitoring dataset focusing on the core urban area of Accra, the capital of Ghana, with its extent shown in Figure 2. As one of the fastest-growing metropolises in West Africa, Accra’s central city exhibits high-density development, accompanied by large-scale sprawl of informal settlements in its periphery [48,49]. This rapid and uneven urban expansion has introduced a series of complex challenges, including drastic changes in land use structure and increased pressure on ecosystems [50]. This dual pattern of high-density core development and peripheral informal sprawl encapsulates the typical characteristics of urban growth in many African cities. Consequently, studying Accra’s urbanization offers transferable insights and technical solutions for monitoring similar processes across the continent.

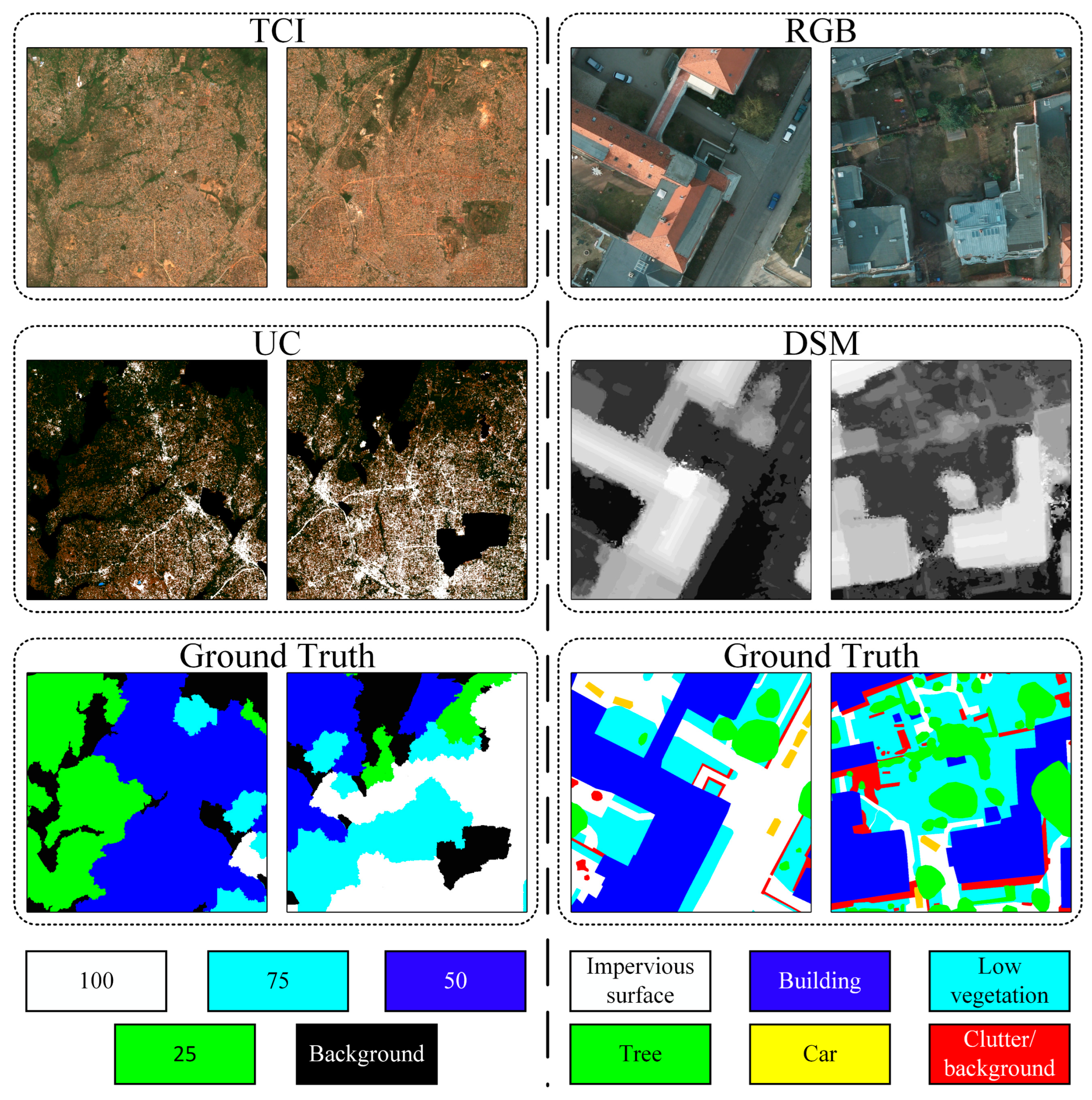

For labeling the urban spatial structure of the study area, urban areas were categorized into four classes, with the result shown in Figure 3. The classification standard was set to four levels (100, 75, 50, 25) to reflect the degree of urbanization, as further described below. The labels were obtained through our collaboration with the University of Ghana. The labeling procedure and the formal guidelines are detailed in reference [51], provided by the Accra collaborators. Here, we give only a brief overview of the labeling process.

The label was designed to assess urbanization levels with a focus on planning quality. It is based on a combination of automated segmentation of Sentinel-2B images (band 2, 3, 4 and 8) acquired on 25 December 2017 (east) and 4 January 2017 (west), followed by a visual inspection using both Sentinel-2 and Google Earth (GE) satellite data. The multi-resolution segmentation function of the eCognition (V10.0.1) software was applied with a scale parameter of 225, a shape parameter value of 0.8 and a compactness parameter value of 0.8, producing segment boundaries that, based on visual inspection, correspond reasonably well to the boundaries between urban areas of different densities and structures, while minimizing over-segmentation. The segment boundaries were subsequently extracted and superimposed on detailed GE imagery to enable a more precise assessment of urbanization levels. Based on visual interpretations, each segment was classified into one of five categories indicating the approximate percentage covered by urban development, which serves as a proxy for the intensity and orderliness of development. The categories are: (100) Fully Urbanized/Well-Planned (approximately 75–100% urban cover), (75) Medium-High Urbanization/Moderately Planned (approximately 50–75% urban cover), (50) Medium-Low Urbanization/Sub-Optimally Planned (approximately 25–50% urban cover), (25) Low Urbanization/Poorly Planned (approximately 5–25% urban cover), and Non-Urban. The percentage values represent the average coverage of built-up area within a segment and are closely correlated with the rationality of urban layout; higher coverage in a well-structured pattern typically corresponds to better planning outcomes.
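The stated cover ranges can be written down as a simple lookup. Note that the labels in this study were assigned by visual interpretation rather than computed, so the function below only encodes the category definitions; the treatment of boundary values (e.g., exactly 75%) and the code 0 for Non-Urban are assumptions made for illustration.

```python
def urbanization_label(cover_pct: float) -> int:
    """Map approximate urban cover (percent) to the category codes 100/75/50/25.

    Returns 0 for Non-Urban (placeholder code, not from the paper).
    Boundary values are assigned to the higher class (assumption).
    """
    if not 0.0 <= cover_pct <= 100.0:
        raise ValueError("cover_pct must be within [0, 100]")
    if cover_pct >= 75:
        return 100   # Fully Urbanized / Well-Planned
    if cover_pct >= 50:
        return 75    # Medium-High Urbanization / Moderately Planned
    if cover_pct >= 25:
        return 50    # Medium-Low Urbanization / Sub-Optimally Planned
    if cover_pct >= 5:
        return 25    # Low Urbanization / Poorly Planned
    return 0         # Non-Urban


labels = [urbanization_label(p) for p in (80, 60, 30, 10, 2)]
print(labels)
```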

Furthermore, to thoroughly validate the model’s adaptability and generalization capability in urbanization monitoring tasks, this study also conducted additional experiments using the public ISPRS Potsdam dataset (https://www.isprs.org/, accessed on 21 December 2025). The ISPRS Potsdam dataset, released by the International Society for Photogrammetry and Remote Sensing (ISPRS), is a widely recognized public benchmark for semantic segmentation of high-resolution remote sensing imagery in urban areas. Geographically located in the historic city of Potsdam, Germany, the dataset consists of 38 aerial orthophotos with a very high spatial resolution of 5 cm per pixel, each with dimensions of 6000 × 6000 pixels, providing exceptionally detailed ground information. Commonly utilized for algorithm development and performance evaluation in the remote sensing community, the dataset includes very high-resolution orthophotos in RGB, near-infrared (NIR), and false-color infrared (IRRG) compositions, as well as corresponding Digital Surface Models (DSM). It also provides pixel-level semantic labels covering five foreground classes (impervious surface, building, low vegetation, tree, and car) and one background class (clutter/background).

3.2. Data Preprocessing

Accra Dataset: During the construction of the Accra dataset, we acquired Sentinel-2 remote sensing data from two time points (4 January 2017 and 26 February 2019) from the Copernicus Data Space Ecosystem (https://dataspace.copernicus.eu/, accessed on 21 December 2025). The original Sentinel-2 multispectral imagery was atmospherically corrected using Sen2Cor (V02.12.03) software to generate 24-bit, three-channel (B02, B03, B04) True Color Images (TCI), which served as the primary modal data for studying urbanization in Accra. Simultaneously, following the urban classification script provided by SentinelHub (https://custom-scripts.sentinel-hub.com/, accessed on 21 December 2025), bands B02, B03, B04, B08, and B11 were used to compute the Normalized Difference Water Index (NDWI), Normalized Difference Vegetation Index (NDVI), and a Bare Soil Index. Specific thresholds were then applied to each index to synthesize three-channel, 24-bit Urban Classification Images (UC) as auxiliary modal data. Because this synthesis is not a core contribution of our work, we do not elaborate on it here; interested readers are referred to the website above. To ensure input data consistency, both TCI and UC were uniformly resampled to a 10 m spatial resolution in the WGS 1984 coordinate system. Finally, all images were cropped into 1024 × 1024 patches.
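For orientation, the three indices can be computed per pixel from surface reflectance values as sketched below. NDVI and NDWI use their standard normalized-difference definitions; the Bare Soil Index shown is one commonly used formulation over B02, B04, B08, and B11 and is an assumption here, as are the function names. The thresholds used to build the UC image are not reproduced, since the text defers them to the SentinelHub script.

```python
def normalized_difference(a: float, b: float) -> float:
    """(a - b) / (a + b), returning 0.0 when the denominator is zero."""
    denom = a + b
    return 0.0 if denom == 0 else (a - b) / denom


def ndvi(b08: float, b04: float) -> float:
    """Vegetation index from NIR (B08) and red (B04)."""
    return normalized_difference(b08, b04)


def ndwi(b03: float, b08: float) -> float:
    """Water index from green (B03) and NIR (B08)."""
    return normalized_difference(b03, b08)


def bare_soil_index(b02: float, b04: float, b08: float, b11: float) -> float:
    """Assumed form: ((B11 + B04) - (B08 + B02)) / ((B11 + B04) + (B08 + B02))."""
    return normalized_difference(b11 + b04, b08 + b02)


# Example reflectances for a single pixel (illustrative values only).
pixel = {"B02": 0.1, "B03": 0.3, "B04": 0.2, "B08": 0.3, "B11": 0.4}
values = (
    ndvi(pixel["B08"], pixel["B04"]),
    ndwi(pixel["B03"], pixel["B08"]),
    bare_soil_index(pixel["B02"], pixel["B04"], pixel["B08"], pixel["B11"]),
)
print(values)
```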

The urban labels used in this study were derived from a 2017 collaboration with the University of Ghana, reflecting the urban structural patterns and planning quality at that time. Consequently, the 2017 imagery is fully aligned with the label’s temporal reference. We employed the 2019 imagery as an independent test set to evaluate the model’s generalization capability based on the 2017 labels. Data from 2017 was used for training and validation, while data from 2019 served as the test set, ultimately constructing a dataset containing 638 training samples, 274 validation samples, and 446 test samples (as shown on the left side of the dashed line in Figure 4).

This split design is based on two key considerations. First, while Accra is undergoing rapid expansion, the core urban fabric (including the layout of major roads, formal settlements, and key infrastructure) exhibits significant temporal stability over a 2-year period. The model’s task is to learn the spatial patterns (e.g., building density, layout regularity) associated with planning rationality, which are relatively persistent features of the urban landscape. Second, and more importantly, we aimed to test the model’s predictive capability. Testing on imagery from a different year (2019) provides a more rigorous and realistic assessment of the model’s robustness and applicability. It simulates a practical scenario where a model trained on past data (and labels) is deployed to analyze a more recent scene, thereby testing its ability to generalize across minor temporal variations, rather than merely memorizing the specific spectral signatures of the training year. We acknowledge that the absence of labels for 2019 may introduce a potential domain shift or error; however, the strong performance achieved on the 2019 test set (as reported in

Section 4.1) suggests that the learned features are temporally transferable for the task of urban structure assessment, which is the core focus of this work.

ISPRS Potsdam Dataset: We used the RGB images from the ISPRS Potsdam Dataset as the primary modal input and the DSM as the auxiliary modality. All data were uniformly cropped into 1024 × 1024 patches, resulting in 2376 training samples, 36 validation samples, and 504 test samples (as shown on the right side of the dashed line in

Figure 4).

3.3. Model Variants

To comprehensively evaluate the effectiveness and module contribution of the proposed method, this study constructed six model variants, as detailed in

Table 1. These variants can be divided into two categories: performance comparison models and ablation study models. The performance comparison part includes the single-branch MSCANet-1 and the dual-branch MSCANet-2, both evaluated on the Accra and ISPRS Potsdam datasets. All models used in the performance comparison experiments, along with their corresponding Floating Point Operations (FLOPs), parameter counts, memory footprint, and Frames Per Second (FPS), are summarized in

Table 2.

The ablation study part consists of the baseline model and its three improved versions: MSCANet-a, MSCANet-b, and MSCANet-c, all employing the dual-branch framework. The Baseline model retains only the Parallel Large-Kernel Module (PLKM), removing the core Multi-Scale Spatial-Channel Attention Module (MSCAM). Multi-scale features extracted by PLKM are fused via simple element-wise addition, and multi-modal features are processed using direct channel concatenation.

3.4. Implementation Details

To comprehensively evaluate model performance, this study employs Overall Accuracy (OA), mean F1-score (mF1), and mean Intersection over Union (mIoU) as the primary evaluation metrics, ensuring a fair comparison between the proposed MSCANet and other representative methods.
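For reference, the three metrics can all be derived from a class confusion matrix. The sketch below uses the standard definitions; the function name and the choice to skip classes that never occur are illustrative assumptions rather than details taken from this study.

```python
def segmentation_metrics(confusion):
    """Compute OA, mF1 and mIoU from a square confusion matrix.

    confusion[i][j] = number of pixels of true class i predicted as class j.
    Classes with no TP, FP or FN are skipped when averaging (an assumption).
    """
    n_classes = len(confusion)
    total = sum(sum(row) for row in confusion)
    correct = sum(confusion[c][c] for c in range(n_classes))

    ious, f1s = [], []
    for c in range(n_classes):
        tp = confusion[c][c]
        fn = sum(confusion[c]) - tp
        fp = sum(confusion[r][c] for r in range(n_classes)) - tp
        if tp + fp + fn == 0:
            continue
        ious.append(tp / (tp + fp + fn))
        f1s.append(2 * tp / (2 * tp + fp + fn))

    return {
        "OA": correct / total,
        "mF1": sum(f1s) / len(f1s),
        "mIoU": sum(ious) / len(ious),
    }
```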

All experiments were conducted on an NVIDIA GeForce RTX 4090 GPU with 24 GB VRAM, implemented using the PyTorch 1.11.0 framework. The AdamW optimizer was used uniformly during training, with an initial learning rate of 0.0004, a weight decay coefficient of 0.01, a fixed batch size of 8, and random seed 42. All models were trained from scratch without using pre-trained weights, for a maximum of 225 epochs. For the loss function, a combination of Soft Cross Entropy Loss and Dice Loss was used.
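The training setup above can be summarized as a small configuration plus a combined loss. The following framework-free sketch is only illustrative: it interprets Soft Cross Entropy as label-smoothed cross entropy, and the smoothing factor, the equal weighting of the two terms, and all function names are assumptions, not values reported in this study.

```python
import math

# Hyperparameters as stated in the text.
TRAIN_CONFIG = {
    "optimizer": "AdamW",
    "lr": 4e-4,
    "weight_decay": 0.01,
    "batch_size": 8,
    "seed": 42,
    "max_epochs": 225,
}


def soft_cross_entropy(probs, labels, smoothing=0.05):
    """Label-smoothed cross entropy over per-pixel class probabilities."""
    n_classes = len(probs[0])
    total = 0.0
    for p, y in zip(probs, labels):
        for c in range(n_classes):
            target = smoothing / n_classes + ((1.0 - smoothing) if c == y else 0.0)
            total -= target * math.log(max(p[c], 1e-12))
    return total / len(probs)


def dice_loss(probs, labels, eps=1e-7):
    """Soft Dice loss averaged over classes."""
    n_classes = len(probs[0])
    loss = 0.0
    for c in range(n_classes):
        intersection = sum(p[c] for p, y in zip(probs, labels) if y == c)
        cardinality = sum(p[c] for p in probs) + sum(1 for y in labels if y == c)
        loss += 1.0 - (2.0 * intersection + eps) / (cardinality + eps)
    return loss / n_classes


def combined_loss(probs, labels, smoothing=0.05):
    """Sum of the two terms with equal weights (an assumption)."""
    return soft_cross_entropy(probs, labels, smoothing) + dice_loss(probs, labels)
```

Here `probs` is a list of per-pixel probability vectors and `labels` the matching class indices; in practice the same computation would run on batched tensors inside the training framework.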

To further strengthen the evaluation of urbanization element extraction capability, this study additionally introduced the Building Density Root Mean Squared Error (BDRMSE) and Impervious Surface Coverage Root Mean Squared Error (ICRMSE) metrics on the ISPRS Potsdam dataset. The establishment of these metrics aims to align the evaluation with the annotation objectives of another core dataset (Accra) in this study. The Accra dataset employs score labels that reflect the rationality of urban planning, focusing on continuous attributes such as building density and layout regularity. To validate the model’s generalization capability for such planning-related tasks on the widely used ISPRS Potsdam dataset, we designed BDRMSE and ICRMSE. These metrics directly measure the model’s estimation accuracy of the overall density and coverage of key urban elements by calculating the error between the predicted and ground-truth ratios of building/impervious surface pixels across the entire image, thereby providing supplementary quantitative evidence for the model’s applicability in practical urban planning analysis. Their calculation formulas are defined as:

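$$\mathrm{BDRMSE} = \sqrt{\frac{1}{n}\sum_{i=1}^{n}\left(\frac{P_{i}^{\mathrm{B}} - G_{i}^{\mathrm{B}}}{N_{i}}\right)^{2}}, \qquad \mathrm{ICRMSE} = \sqrt{\frac{1}{n}\sum_{i=1}^{n}\left(\frac{P_{i}^{\mathrm{I}} - G_{i}^{\mathrm{I}}}{N_{i}}\right)^{2}}$$

where $P_{i}$ and $G_{i}$ represent the pixel counts of the prediction and ground truth for a single sample $i$, respectively (building pixels, superscript B, for BDRMSE; impervious surface pixels, superscript I, for ICRMSE), $N_{i}$ is the total number of pixels in a single sample image, and n is the total number of samples. Both metrics are reported as percentages.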
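A minimal sketch of how such a ratio-based RMSE could be computed is given below, following the verbal definition above. The function name is hypothetical, and the sketch assumes every sample image has the same number of pixels (as is the case for fixed-size patches) and that the result is scaled to a percentage.

```python
import math


def ratio_rmse(pred_counts, gt_counts, pixels_per_image):
    """RMSE between predicted and ground-truth class-pixel ratios, in percent.

    pred_counts / gt_counts: per-sample pixel counts of the class of interest
    (building pixels for BDRMSE, impervious surface pixels for ICRMSE).
    """
    if not pred_counts or len(pred_counts) != len(gt_counts):
        raise ValueError("need two non-empty lists of equal length")
    squared = [
        ((p - g) / pixels_per_image) ** 2
        for p, g in zip(pred_counts, gt_counts)
    ]
    return 100.0 * math.sqrt(sum(squared) / len(squared))
```

Calling the function once with building counts and once with impervious surface counts gives the two metrics; a value of 0 means the predicted coverage ratio matches the ground truth in every sample.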

4. Experiment and Analysis

4.1. Analysis and Results of Accra Dataset

Systematic comparative experiments were conducted on the Accra dataset between the proposed MSCANet and various representative models, covering both single-modal and multi-modal approaches. Single-modal methods, including MANet [

52], MAResU-Net [

53], DC-Swin [

54], UnetFormer [

47], FT-UnetFormer [

47], LSKNet [

45], and the parallel large-kernel structured UniRepLKNet [

46], used only TCIs as input. Multi-modal methods, including UiSNet [

55], SA-GATE [

56], CMX [

57], and CMGFNet [

58], incorporated UC data in addition to TCI. Our MSCANet was also evaluated under both single-modal and dual-modal conditions.

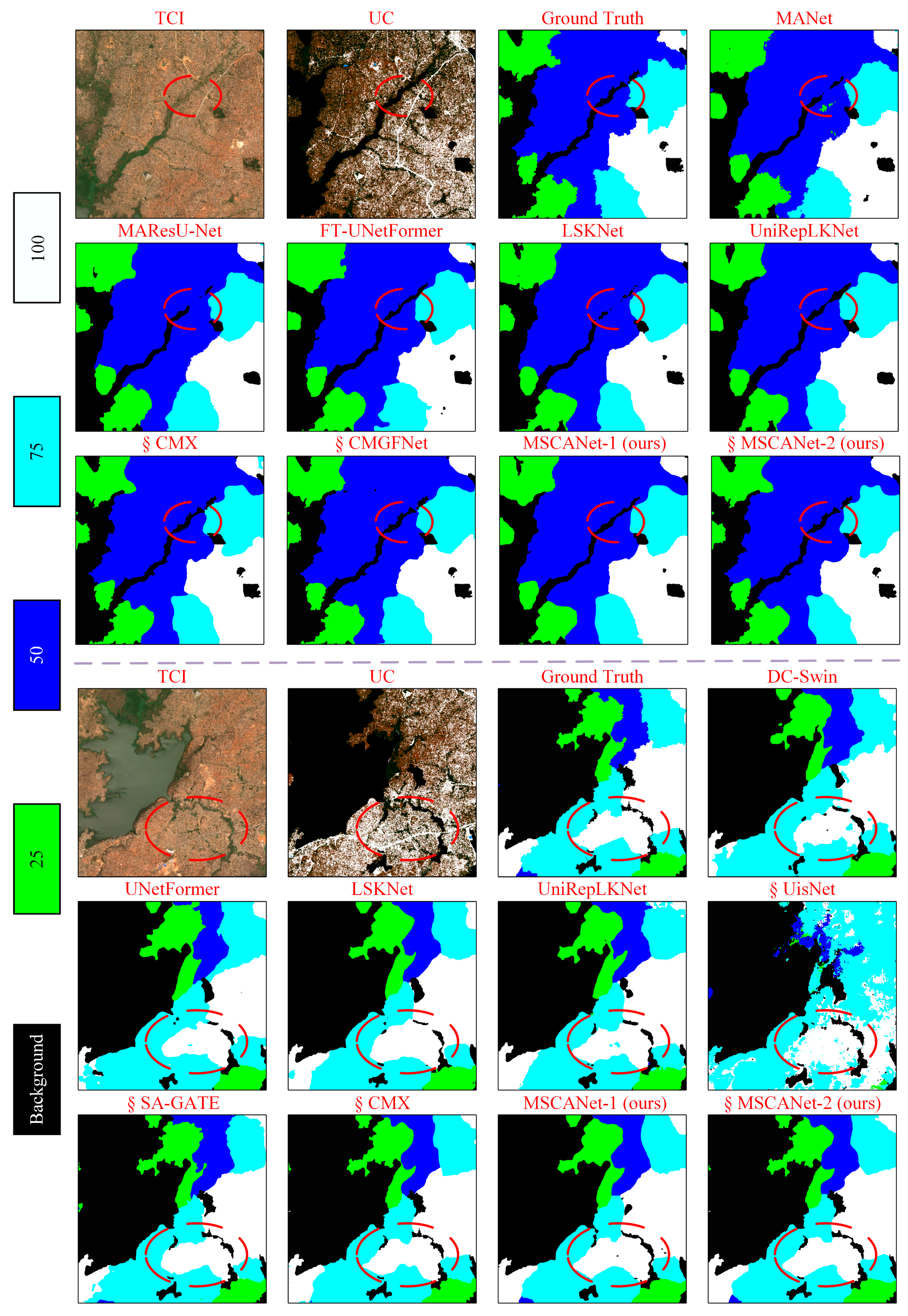

As shown in

Table 3, in the single-modal task, MSCANet-1 outperformed both the mainstream Transformer architecture FT-UnetFormer and the large-kernel convolutional UniRepLKNet. Specifically, MSCANet-1 achieved an mIoU of 90.49%, OA of 96.97%, and mF1 of 94.95%. Compared to UniRepLKNet, which also employs a parallel large-kernel structure, MSCANet-1 improved mIoU, OA, and mF1 by 2.03%, 0.67%, and 1.22%, respectively. This result not only verifies the strong performance of MSCANet-1 on the Accra dataset but also supports the structural adjustments and optimizations made on the basis of UniRepLKNet. It further indicates that the MSCA-Block module fuses the multi-scale features extracted by parallel large kernels more effectively than the original UniRepLKNet design. The parallel large-kernel convolution within this module extracts rich multi-scale features from the image, while the subsequent multi-scale spatial-channel attention mechanism dynamically filters and weights these features, enabling the model to focus more accurately on key information and thereby enhancing overall performance.

In the multi-modal task, MSCANet-2, fusing TCI and UC data, further achieved an mIoU of 95.02%, OA of 98.70%, and mF1 of 97.43%. Compared to other multi-modal methods, MSCANet-2 surpassed SA-GATE, CMX, and CMGFNet in mIoU by 6.56%, 0.66%, and 4.61%, respectively. This indicates that MSCANet-2 not only exhibits stronger representational capacity in the feature extraction stage but also effectively integrates features from different modalities during the fusion stage, leading to results superior to comparable methods.

Regarding computational efficiency, the MSCANet series also performs well on the Accra dataset. The single-modal MSCANet-1 requires 104.24 G FLOPs, while the dual-modal MSCANet-2 requires 201.41 G FLOPs. MSCANet-1 has the second-lowest FLOPs among single-modal models, only slightly above UnetFormer (96.71 G FLOPs). Crucially, MSCANet-1 delivers the best accuracy among its peers: its mIoU (90.49%) exceeds the range of the other single-modal models (81.47–89.06%), while all competitors except UnetFormer (96.71 G FLOPs, 81.47% mIoU) consume over 127.23 G FLOPs. This confirms that MSCANet-1 balances high accuracy with computational efficiency. A focused comparison with the representative parallel large-kernel model UniRepLKNet (88.37% mIoU, 202.91 G FLOPs) shows that MSCANet-1 achieves a 2.12% higher mIoU while reducing FLOPs by 98.67 G, a substantial saving. Similarly, in the multi-modal comparison, MSCANet-2 (95.02% mIoU, 201.41 G FLOPs) achieves the best mIoU with substantially lower FLOPs than the other multi-modal models, all of which require over 627.94 G FLOPs. Its FLOPs are even lower than those of some single-modal models. Lower FLOPs generally translate into faster training, lower deployment costs, and better suitability for urban monitoring applications.
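The FLOPs comparison with UniRepLKNet can be checked with a few lines of arithmetic. The helper below is a hypothetical convenience function; the two FLOPs values are those quoted in the paragraph above.

```python
def flops_saving(reference_gflops, model_gflops):
    """Return the absolute (G FLOPs) and relative (%) reduction."""
    absolute = reference_gflops - model_gflops
    relative = 100.0 * absolute / reference_gflops
    return absolute, relative


# UniRepLKNet (202.91 G FLOPs) versus MSCANet-1 (104.24 G FLOPs).
saved, percent = flops_saving(202.91, 104.24)
print(f"{saved:.2f} G FLOPs saved ({percent:.1f}% of the reference)")
```

The absolute saving matches the 98.67 G quoted above and corresponds to roughly 48.6% of UniRepLKNet's FLOPs.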

Additional efficiency metrics are summarized in

Table 2. MSCANet-1 has 30.16 M parameters, a memory footprint of 480.50 MB, and processes 82.62 frames per second (FPS). MSCANet-2 has 60.48 M parameters, a memory footprint of 630.31 MB, and an FPS of 45.70. Compared to their respective model groups, the MSCANet series ranks above average across these metrics. Specifically, MSCANet-1’s parameters (30.16 M), memory (480.50 MB), and FPS (82.62) are superior to the single-modal averages (32.49 M, 557.52 MB, 78.4). MSCANet-2’s parameters (60.48 M) are better than the multi-modal average (67.65 M), while its memory (630.31 MB) and FPS (45.70) significantly outperform the corresponding averages (1611.98 MB, 22.65). In summary, our models exhibit well-balanced accuracy and efficiency across multiple metrics, demonstrating successful optimization of the large-kernel architecture. This aligns with the original design intent of such architectures, namely enhancing global modeling and performance without excessive computational cost, and meets the practical requirements of urban monitoring tasks.

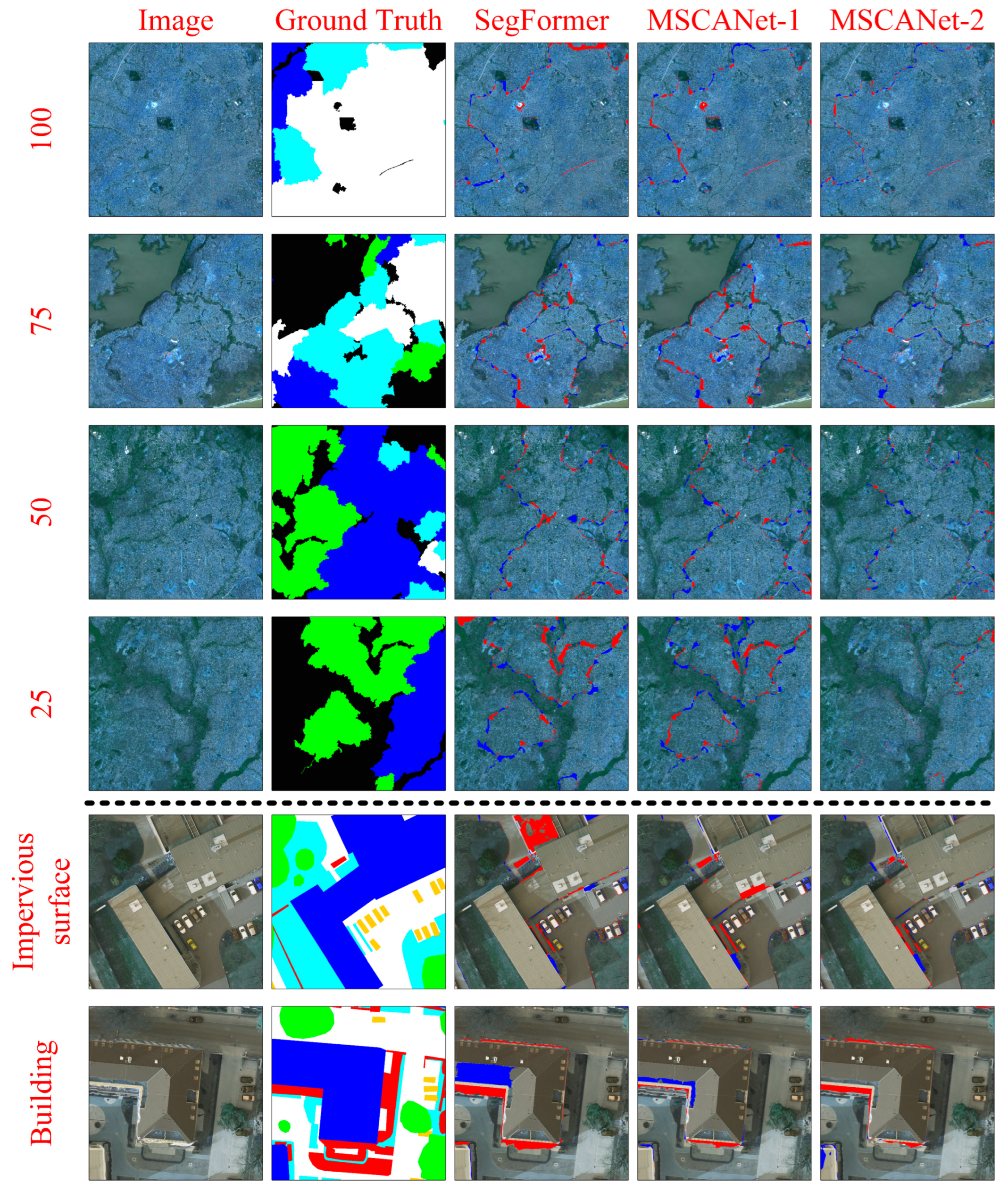

Furthermore, visualization results of various models on the Accra dataset (

Figure 5) show that the MSCANet series models achieve more accurate class recognition, less confusion, better delineation of class boundaries, and overall segmentation contours closer to the Ground Truth.

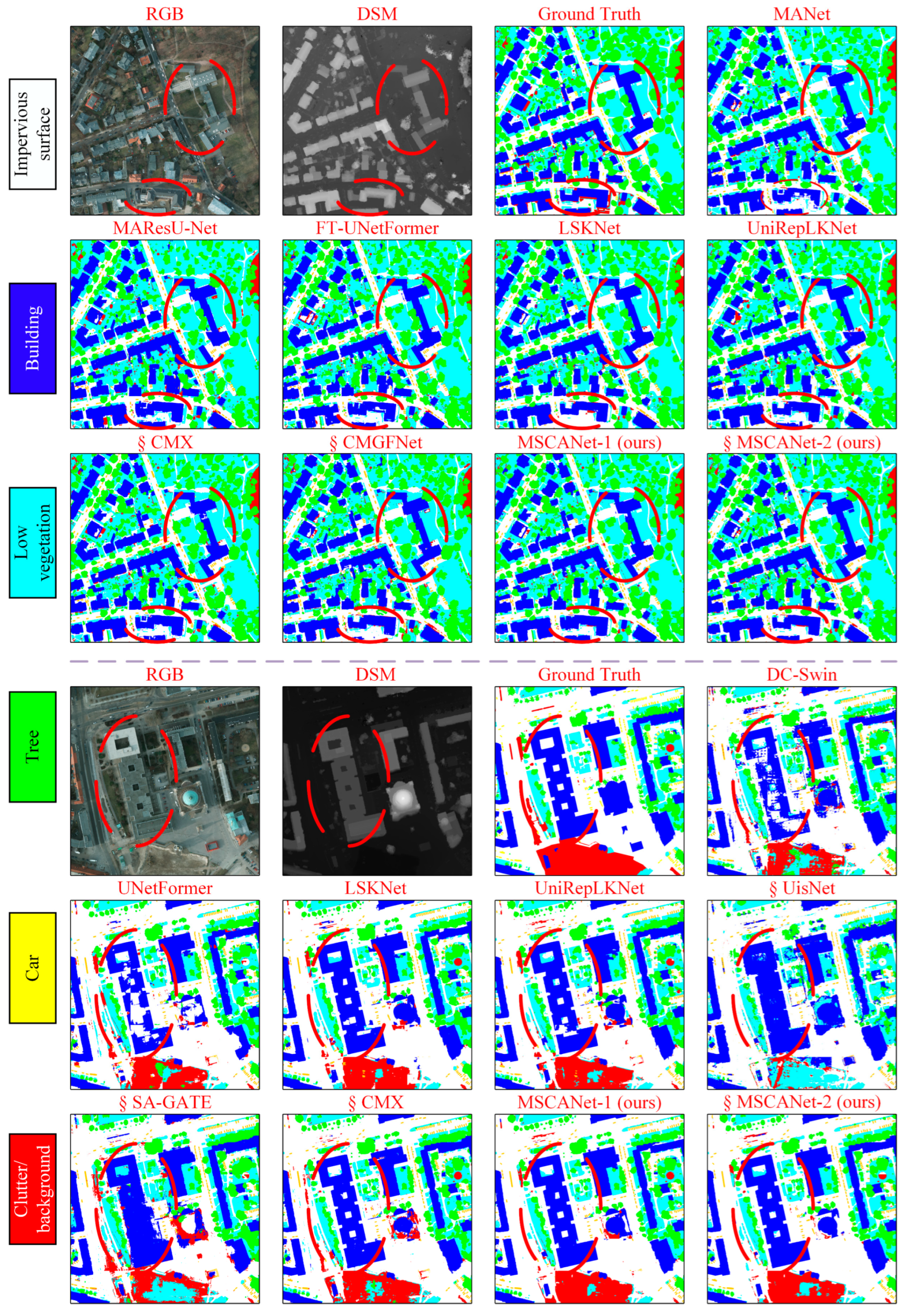

4.2. Analysis and Results of ISPRS Potsdam Dataset

To further validate the generalization capability of MSCANet, systematic experiments were conducted on the public ISPRS Potsdam dataset. As shown in

Table 4, the MSCANet series models demonstrate advantages in identifying urbanization-related elements. The single-modal model MSCANet-1 achieved the best overall performance among all compared models, with an mIoU of 80.92% and an mF1 of 89.31%. Its performance on the application-oriented metrics is also strong. Its BDRMSE is 0.8063%, which is highly competitive among single-modal methods and only slightly higher than the best result, obtained by the dual-modal SA-GATE model (0.7012%). This indicates that MSCANet-1 accurately estimates overall building coverage, a key metric for urban spatial structure analysis. Its ICRMSE is 1.9227%, indicating competent quantification of impervious surface area. After introducing DSM data for dual-modal fusion, MSCANet-2 achieved an mIoU of 80.81%, OA of 88.56%, and mF1 of 89.21%. It performed particularly well in the “Building” class, achieving an IoU of 92.26%, which is 1.95% higher than the second-best method, CMGFNet, highlighting its advantage in building feature representation. MSCANet-2 also achieved a BDRMSE of 0.7966%, lower than that of MSCANet-1 (0.8063%) though above that of SA-GATE (0.7012%), suggesting that the proposed fusion mechanism improves the precision of building density estimation. Its ICRMSE is 2.0994%, a satisfactory level for impervious surface estimation. Notably, even under single-modal conditions, MSCANet-1 surpassed most dual-modal methods: it achieved IoU scores of 90.26% for “Building” and 84.63% for “Impervious surface”, exceeding all single-modal methods and most dual-modal models. MSCANet-2, in turn, surpassed all other compared methods in the “Building” class and all other dual-modal methods in the “Impervious surface” class, further validating the performance of the MSCANet series in urban-related feature recognition. The strong results across both standard segmentation metrics and the application-specific density/coverage error metrics collectively indicate that the MSCANet architecture is not only effective for pixel-wise classification but also capable of supporting quantitative urban morphology analysis.

In addition to the overall metrics, a detailed per-class analysis provides deeper insight into the model’s morphological segmentation capabilities. For the Impervious surface class, MSCANet-1 achieved the highest IoU of 84.63% among all compared models, demonstrating superior performance in extracting continuous urban surfaces. MSCANet-2 also performed strongly in this category with 84.49% IoU, surpassing all other dual-modal methods. In the critical Building class, MSCANet-2 achieved a remarkable IoU of 92.26%, which is not only the highest score in the comparison but also represents a significant 1.95% improvement over the next best method (CMGFNet, 90.31%), highlighting the model’s exceptional capability in capturing structural boundaries and geometric details. For Low vegetation, MSCANet-1 attained the best IoU of 73.59%, while MSCANet-2 achieved 72.80%, both outperforming other dual-modal approaches and indicating robust feature discrimination for ground-level vegetation. In the Tree category, MSCANet-1 again led with 73.83% IoU, and MSCANet-2 followed closely with 73.32%, showcasing consistent performance in delineating vegetation canopy. Finally, for the Car class, MSCANet-1 achieved a high IoU of 82.26%, surpassed only marginally by UniRepLKNet (82.38%) among single-modal methods, and MSCANet-2 attained 81.19%. This comprehensive per-class evaluation confirms that the MSCANet series delivers balanced and state-of-the-art performance across all urban land cover categories, with particular strengths in impervious surfaces and buildings.

Visualization results (

Figure 6) validate the model’s generalization capability from another perspective. In densely built areas, the segmentation results of the MSCANet series models are superior to comparison methods in terms of completeness and edge sharpness, more accurately reconstructing the spatial structure of complex building clusters. Simultaneously, MSCANet demonstrates stronger differentiation capability when handling categories with highly similar pixel features like buildings and impervious surfaces, mitigating common misclassification issues.

4.3. Ablation Study

4.3.1. Module Ablation Study

As shown in

Table 5, on the Accra dataset, MSCANet-a, which introduces the Multi-Scale Spatial-Channel Attention Module (MSCAM) in the feature extraction block, improved mIoU by 2.02% compared to the Baseline, while MSCANet-b, which uses MSCA-FFM, improved it by 2.41%. On the ISPRS Potsdam dataset, the introduction of the respective modules also brought performance improvements. MSCANet-a achieved the best overall performance, with an mIoU of 80.86%, OA of 88.62%, and mF1 of 89.25%, representing a 1.27% mIoU improvement over the Baseline. This validates the effectiveness of the Multi-Scale Spatial-Channel Attention Module (MSCAM). This module applies spatial and channel-wise attention weighting to the multi-scale features extracted by the Parallel Large-Kernel Module (PLKM), thereby enhancing the model’s discriminative ability. Meanwhile, MSCANet-b and MSCANet-c also improved mIoU over the Baseline by 0.57% and 1.22%, respectively.

Regarding model efficiency metrics, the MSCA-Block and MSCA-FFM modules impacted computational cost and inference speed in different combinations. Compared to the Baseline (FLOPs 161.40 G, Params 43.32 M), MSCANet-a introduced moderate computational overhead (FLOPs increased to 200.07 G) while improving performance, with a slight decrease in inference speed (45.80 FPS). The computational complexity of MSCANet-b (162.74 G FLOPs) was close to the Baseline, but its speed increased to 59.05 FPS, indicating that the introduction of MSCA-FFM offers certain efficiency advantages while maintaining performance gains. MSCANet-c, integrating both modules, had FLOPs increased to 201.41 G and inference speed maintained at 45.70 FPS, with its performance-efficiency balance slightly lower than MSCANet-a. MSCANet-a achieves a better efficiency-accuracy trade-off while ensuring performance improvement, demonstrating comprehensive advantages better aligned with the practical needs of urbanization monitoring tasks.

From the visualization results of the ablation study (

Figure 7), it can be observed that on the Accra dataset, the MSCANet variant models achieve more accurate class recognition and higher segmentation completeness for urban areas. On the ISPRS Potsdam dataset, compared to the Baseline, the MSCANet series models show improved completeness in identifying urbanization-related categories like “Building”. The confusion observed in the Baseline model was alleviated after using either MSCA-Block or MSCA-FFM. Furthermore, even for non-focus categories (e.g., “Car”), the recognition effectiveness of the MSCANet variants was significantly enhanced. These visualization results further confirm the effectiveness of the core module, the Multi-Scale Spatial-Channel Attention Module (MSCAM). This module effectively fuses the multi-scale features extracted by parallel large kernels, overcoming the limitations of simple element-wise addition for multi-scale feature fusion, and also demonstrates good performance in multi-modal feature fusion.

4.3.2. Modal Ablation Study

To thoroughly analyze the effectiveness of multimodal fusion and validate the core role of the MSCA-FFM module, this section conducts detailed modal ablation experiments. To this end, we take the proposed MSCANet-1 as the single-modal baseline model, feeding only the TCI or UC modality on the Accra dataset, and only the RGB or DSM modality on the ISPRS Potsdam dataset for experimentation. Meanwhile, the MSCANet-2 model equipped with MSCA-FFM is used for corresponding dual-modal (TCI+UC, RGB+DSM) experiments, with results shown in

Table 6. It should be noted that the MSCANet-a model listed in

Table 6 is the control model used in the module ablation experiments in the previous subsection (i.e., MSCANet-2 with MSCA-FFM removed). The introduction of this model aims to provide a more comprehensive comparison.

Analyzing the data in

Table 6 reveals that the benefits of multimodal fusion vary across datasets. On the Accra dataset, dual-modal fusion yields significant gains. The complete MSCANet-2 (TCI+UC) achieves the best performance among all models (mIoU 95.02%, OA 98.70%). Compared with the best-performing single-modal model MSCANet-1 (TCI) (mIoU 90.49%), its mIoU shows an absolute improvement of 4.53%. More importantly, compared with the MSCANet-a model, which also uses dual-modal input but removes MSCA-FFM (mIoU 93.53%), MSCANet-2 further achieves a 1.49% mIoU improvement. This comparison strongly demonstrates that on the Accra dataset, the performance improvement does not merely come from the simple combination of TCI and UC modal information. The MSCA-FFM module effectively integrates features from TCI and UC by dynamically adjusting spatial-channel weights of multimodal features.

However, on the ISPRS Potsdam dataset, the picture is more complex. The single-modal model MSCANet-1 (RGB) is already strongly competitive (mIoU 80.92%), while the DSM-only model performs relatively weakly (mIoU 57.54%). The dual-modal results of the complete MSCANet-2 and of MSCANet-a (mIoU 80.81% and 80.86%, respectively) are on par with the RGB-only model and extremely close to each other, showing no significant improvement. This outcome indicates that the benefits of multimodal fusion depend on data characteristics and the quality of modal information. One plausible explanation is that, for the high-resolution urban scenes in the ISPRS Potsdam dataset, RGB imagery already contains semantic and texture information rich enough to support accurate segmentation, leading the model to rely heavily on it. In contrast, the DSM is single-channel elevation data that, unlike UC (an information-rich composite derived from multispectral satellite imagery), may inherently contain limited detail. MSCA-FFM may not be adept at extracting and refining features from such sparse single-channel input for fusion with RGB data. In this scenario, therefore, the role of MSCA-FFM is more about maintaining the dominance of the primary RGB modality and filtering out unnecessary information from the DSM modality than about producing a significant performance leap. This may represent a current limitation of MSCA-FFM and points toward future directions for improving it.

4.4. Analysis of Model Stability and Generalization Capability

To rigorously evaluate the stability and robustness of the proposed model, this section designs repeated experiments with random initialization. The performance of deep learning models can be influenced by weight initialization, especially when the amount of data is relatively limited. To quantify this uncertainty and provide a more statistically meaningful performance evaluation, we repeatedly train and test all models using three different random seeds (42, 3407, 2025). The final results are presented in the form of mean ± standard deviation. Meanwhile, to enhance the persuasiveness of the comparison, we introduce the recently high-performing SegFormer model as a baseline, and retain the parallel large-kernel model UniRepLKNet and the advanced multimodal fusion model CMX, which are closely related to this study, for comparison. The results are summarized in

Table 7 (Accra dataset) and

Table 8 (ISPRS Potsdam dataset).
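The mean ± standard deviation summary over seeds can be reproduced with the Python standard library, as in the sketch below. Whether the sample or the population standard deviation was used is not stated, so the helper (a hypothetical name) exposes both; the sample form is the default here purely as an assumption.

```python
import statistics


def summarize_runs(scores, sample=True):
    """Return (mean, standard deviation) of a metric over repeated runs.

    sample=True uses the n - 1 denominator (statistics.stdev);
    sample=False uses the n denominator (statistics.pstdev).
    """
    mean = statistics.mean(scores)
    std = statistics.stdev(scores) if sample else statistics.pstdev(scores)
    return mean, std


def format_mean_std(scores, sample=True):
    """Format the summary in the 'mean ± std' style used in the tables."""
    mean, std = summarize_runs(scores, sample)
    return f"{mean:.2f} ± {std:.3f}"
```

With only three seeds, the two conventions differ noticeably (by a factor of about 1.22), so the choice should be kept consistent when comparing models.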

Analyzing the data in

Table 7 shows that on the Accra dataset, the proposed MSCANet-2 (TCI+UC) model achieves the best performance across all overall metrics (mIoU 94.95% ± 0.065%, mF1 97.39% ± 0.035%). Furthermore, its standard deviations are generally smaller than or comparable to those of the other models. For instance, its mIoU standard deviation (0.065) is significantly lower than that of UniRepLKNet (0.268). This indicates that MSCANet-2 not only exhibits superior performance but also shows good stability against different weight initializations. Notably, even the single-modal MSCANet-1 (TCI) outperforms the single-modal SegFormer and UniRepLKNet, supporting the effectiveness of the MSCANet backbone design. Compared to the multimodal fusion model CMX, MSCANet-2 shows improvements in the vast majority of categories and overall metrics, with smaller performance fluctuations, indicating that the MSCA-FFM module offers superior and more stable fusion when integrating the TCI and UC modalities.

On the ISPRS Potsdam dataset (

Table 8), the results present different characteristics. The single-modal MSCANet-1 (RGB) model achieves a highly competitive mIoU (80.69% ± 0.205%), surpassing SegFormer, UniRepLKNet, and the multimodal model CMX (RGB+DSM). This highlights the representational capacity of the MSCANet architecture when using only RGB information. MSCANet-2 (RGB+DSM), which incorporates the DSM modality, shows an overall mIoU (80.78% ± 0.047%) nearly identical to that of MSCANet-1, but its mIoU standard deviation (0.047) is far lower than that of MSCANet-1 (0.205). This low standard deviation indicates that MSCANet-2 is robust: across the three seeds, its performance is minimally affected by random initialization, yielding highly consistent outputs. In contrast, the CMX model exhibits significant fluctuation (standard deviation 1.400) on the “Car” category, suggesting its fusion mechanism may be less stable. These random initialization experiments indicate that the MSCANet series models not only perform well across different datasets but also demonstrate good stability and low sensitivity to initial conditions. Especially in the multimodal setting, MSCANet-2 achieves a balance between performance and stability.

In addition, to visually assess the model’s capability in delineating urban structural boundaries, we generated false positive/false negative (FP/FN) overlay error maps, as shown in

Figure 8. This figure comparatively displays the segmentation error results of the MSCANet series models and the strong baseline model SegFormer on the two datasets. For the Accra dataset, we selected samples from all four planning rationality grades (100, 75, 50, 25) for visualization, comprehensively covering the categories of research interest. For the ISPRS Potsdam dataset, the focus is on visualizing two categories closely related to urban structures—“Impervious Surface” and “Building”—to clearly reflect the model’s ability to distinguish key object boundaries. From

Figure 8, it can be visually observed that on the Accra dataset, the error distributions of MSCANet-1 and SegFormer are relatively similar. In contrast, MSCANet-2, which incorporates multimodal fusion, shows a significant reduction in misclassified pixels (red and blue) at the boundaries of various categories, indicating a clear improvement in boundary segmentation accuracy. On the ISPRS Potsdam dataset, SegFormer exhibits obvious confusion between “Impervious Surface” and “Building” (e.g., misclassifying some “Impervious Surface” as “Building,” or vice versa). In comparison, the MSCANet series models show markedly fewer errors in such confused regions, verifying their stronger ability to distinguish semantically similar urban elements, thereby enabling more precise delineation of urban structures.

5. Discussion

The motivation behind this study was the suboptimal fusion of multi-scale features in existing parallel large-kernel models. To address this, we proposed the Multi-scale Spatial Channel Attention Module (MSCAM). Through adaptive adjustments, we extended it into the MSCA-FFM module and integrated it into our dual-modal model, MSCANet-2. Extensive experiments were conducted on both the self-built Accra dataset and the public ISPRS Potsdam dataset. The results demonstrate that our MSCANet series models, which integrate MSCAM and the Parallel Large-Kernel Module (PLKM) as their core feature extraction blocks, exhibit outstanding performance and efficiency. Our models consistently delivered excellent results in both single-modal and dual-modal experimental comparisons.

However, a detailed analysis of the ablation studies (

Table 5 and

Table 6) and stability experiments (

Table 7 and

Table 8) reveals a disparity in the utility of our proposed MSCA-FFM across different datasets. It contributed to significant performance gains on the Accra dataset but did not yield a clear improvement on the ISPRS Potsdam dataset. Although the dual-branch model MSCANet-2, which incorporates MSCA-FFM, was more stable across random seeds, the fact that its accuracy on the ISPRS Potsdam dataset is only comparable to that of our single-branch model MSCANet-1 cannot be overlooked.

We currently propose several hypotheses for this. First, it may be related to the characteristics of the ISPRS Potsdam data. The primary RGB modality presents detailed surface information, while the auxiliary single-channel DSM modality provides elevation data. This type of modality, with fewer details in a single channel, poses a greater challenge for fusion modules, requiring stronger feature extraction and fusion capabilities to effectively process such single-channel feature information. Our comprehensive experiments indicate that MSCA-FFM currently has limitations when handling this type of single-channel data. Second, it is possible that since MSCA-FFM is essentially an adaptation of the MSCAM, its simultaneous use with the MSCA-Block—which already contains MSCAM—might result in consecutive spatial-channel attention operations. This redundancy may not be beneficial and could, in some cases, impair feature representation. This is suggested by the module ablation results in

Table 5, where MSCANet-b (using only MSCA-FFM) showed a clear improvement over the Baseline on the ISPRS Potsdam dataset. However, when both MSCA-Block and MSCA-FFM were used together (MSCANet-c), the results were not notably different from those of the variant using only MSCA-Block (MSCANet-a), failing to deliver further gains.

This observation does not entirely negate the role of MSCAM. The MSCA-Block, constructed with the combination of MSCAM and PLKM at its core, has performed excellently in variant models like MSCANet-1, and its effectiveness across both datasets has been thoroughly validated through comprehensive experiments. The strong performance of MSCANet-1 itself has been extensively verified. These results robustly confirm the efficacy of MSCAM and its successful optimization of the PLKM, achieving our primary research objective. The adaptability and generalization of its derivative, the MSCA-FFM module, may require further investigation and refinement in future work.

6. Conclusions

Addressing the lack of monitoring datasets for rapidly urbanizing areas and issues such as feature confusion in multi-scale feature fusion within existing parallel large-kernel convolution methods, this study proposes a technical framework for urbanization monitoring covering both dataset construction and model design. Data-wise, we constructed a multi-modal Accra dataset integrating urbanization level category labels with Sentinel-2 multispectral imagery, serving as an experimental benchmark. Model-wise, we proposed the Multi-Scale Spatial-Channel Attention Network (MSCANet). Its core module, the Multi-Scale Spatial-Channel Attention Module (MSCAM), enables joint modeling of spatial and channel dimensions, achieving dynamic weighted selection of multi-scale features and effectively mitigating feature confusion in parallel large-kernel convolutional architectures. Furthermore, by adaptively modifying the MSCAM, we proposed the MSCA-FFM module for more effective multi-modal feature fusion.

Experiments on the self-built Accra dataset and the public ISPRS Potsdam dataset demonstrate that the MSCANet series models achieve a favorable balance between accuracy and efficiency, exhibiting competitive overall performance. They surpass comparable models in terms of the balance between computational complexity, parameter count, and inference speed. Ablation studies further validate the effectiveness of MSCAM in handling multi-scale features and fusing multi-modal information.

Overall, the urbanization monitoring solution proposed in this study provides new ideas and methodological references for intelligent urban monitoring. We anticipate that the multi-modal data constructed from Sentinel-2 multispectral remote sensing data and the level category labels provided by the University of Ghana, along with the complete technical pathway from model construction to optimization, can offer a feasible monitoring reference for rapidly developing urban areas. This work aims to support practical urbanization monitoring tasks and provide a technical basis for planning and management in the relevant regions.