Image Texture Analysis Enhances Classification of Fire Extent and Severity Using Sentinel 1 and 2 Satellite Imagery

Abstract

1. Introduction

2. Materials and Methods

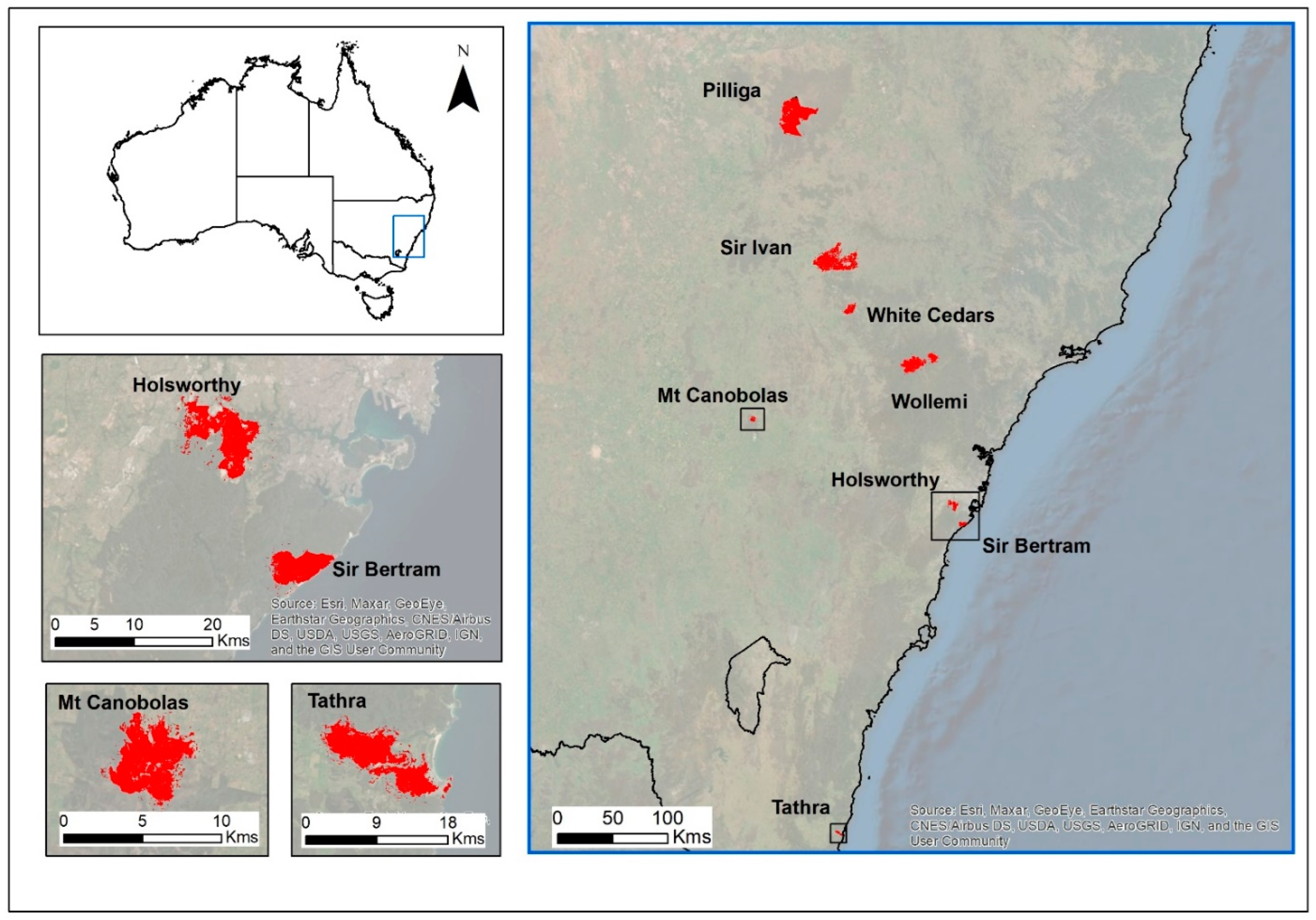

2.1. Study Area

2.2. Imagery Selection and Pre-Processing

2.3. Input Indices

2.4. Independent Training and Validation Datasets

2.5. Accuracy Assessment

3. Results

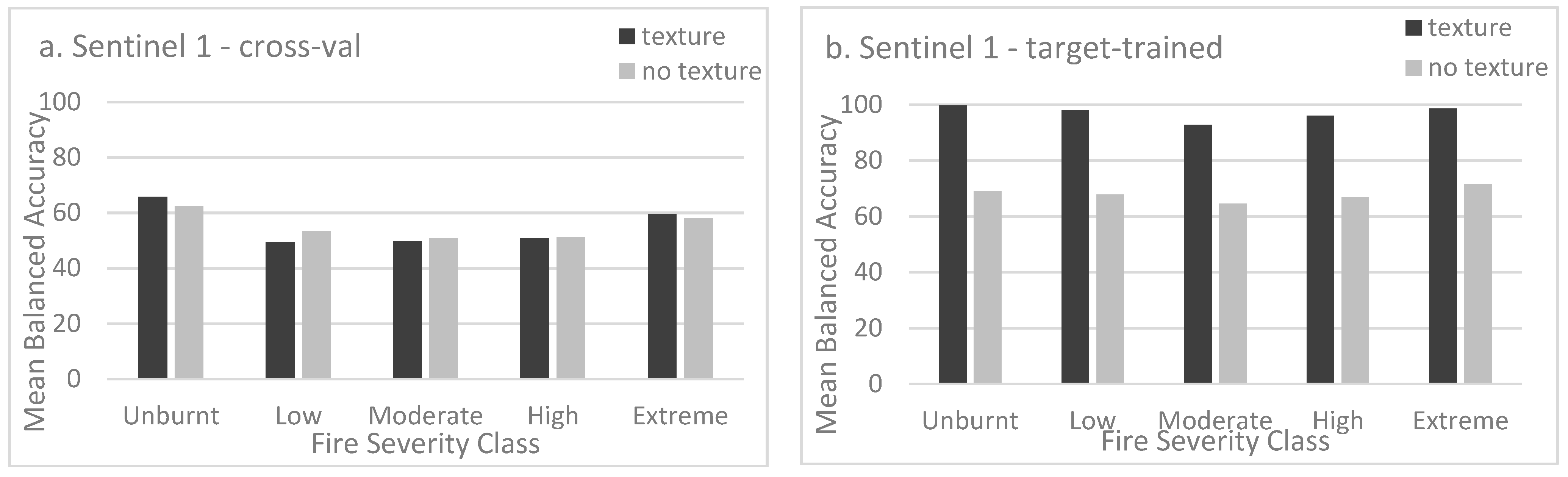

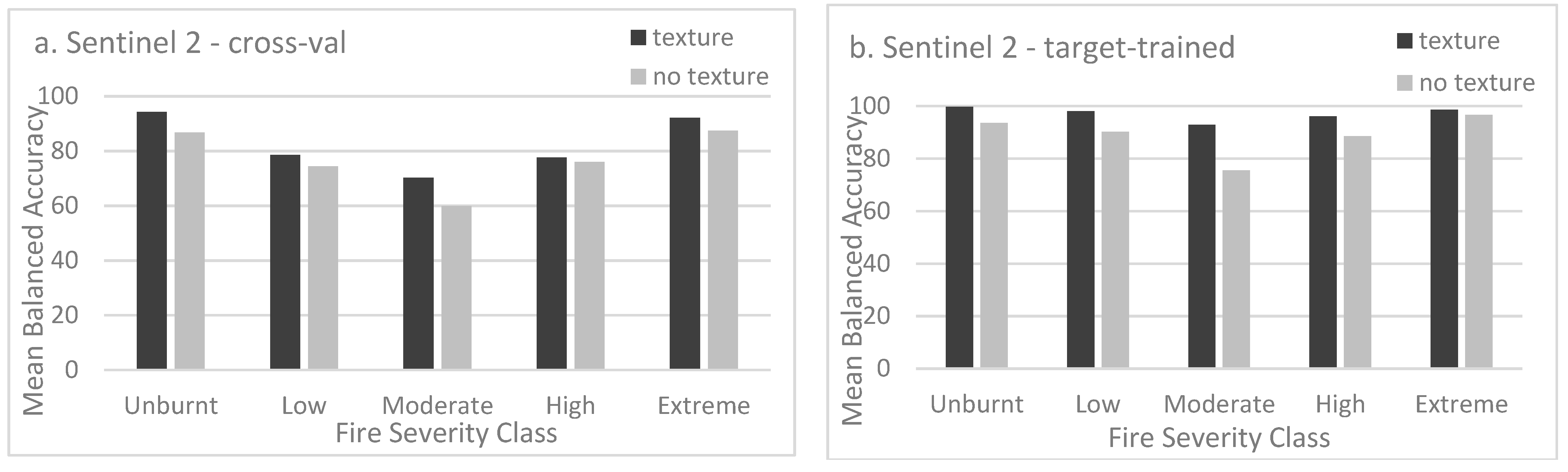

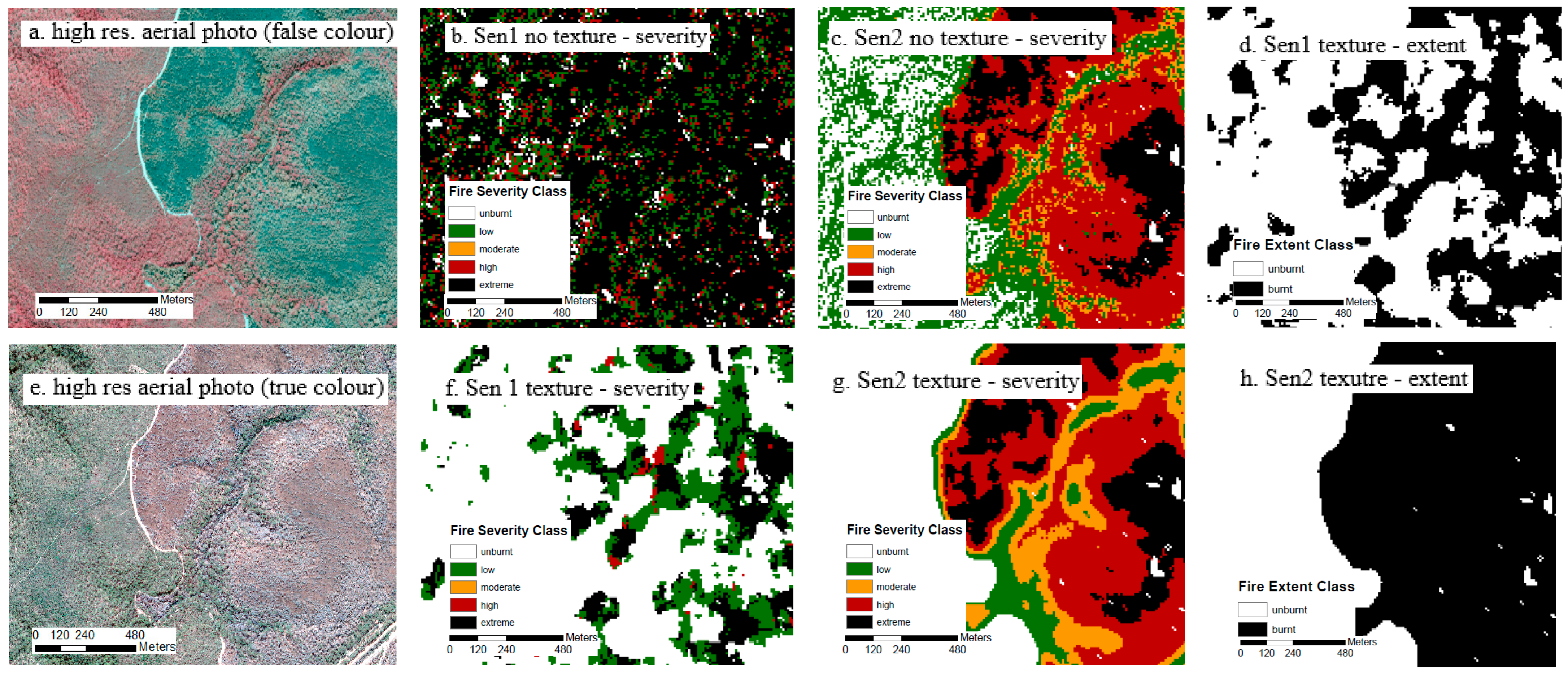

3.1. Fire Severity

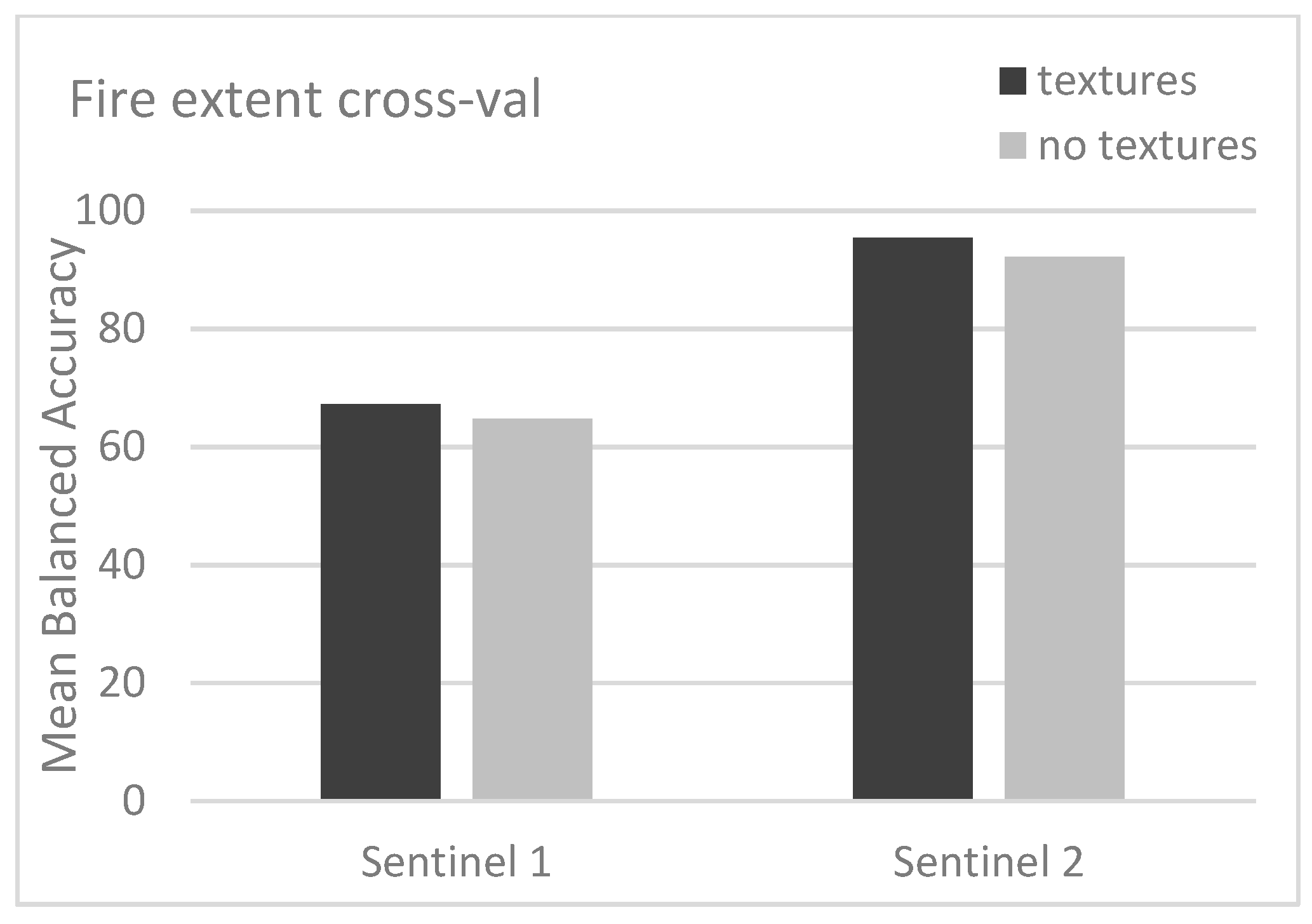

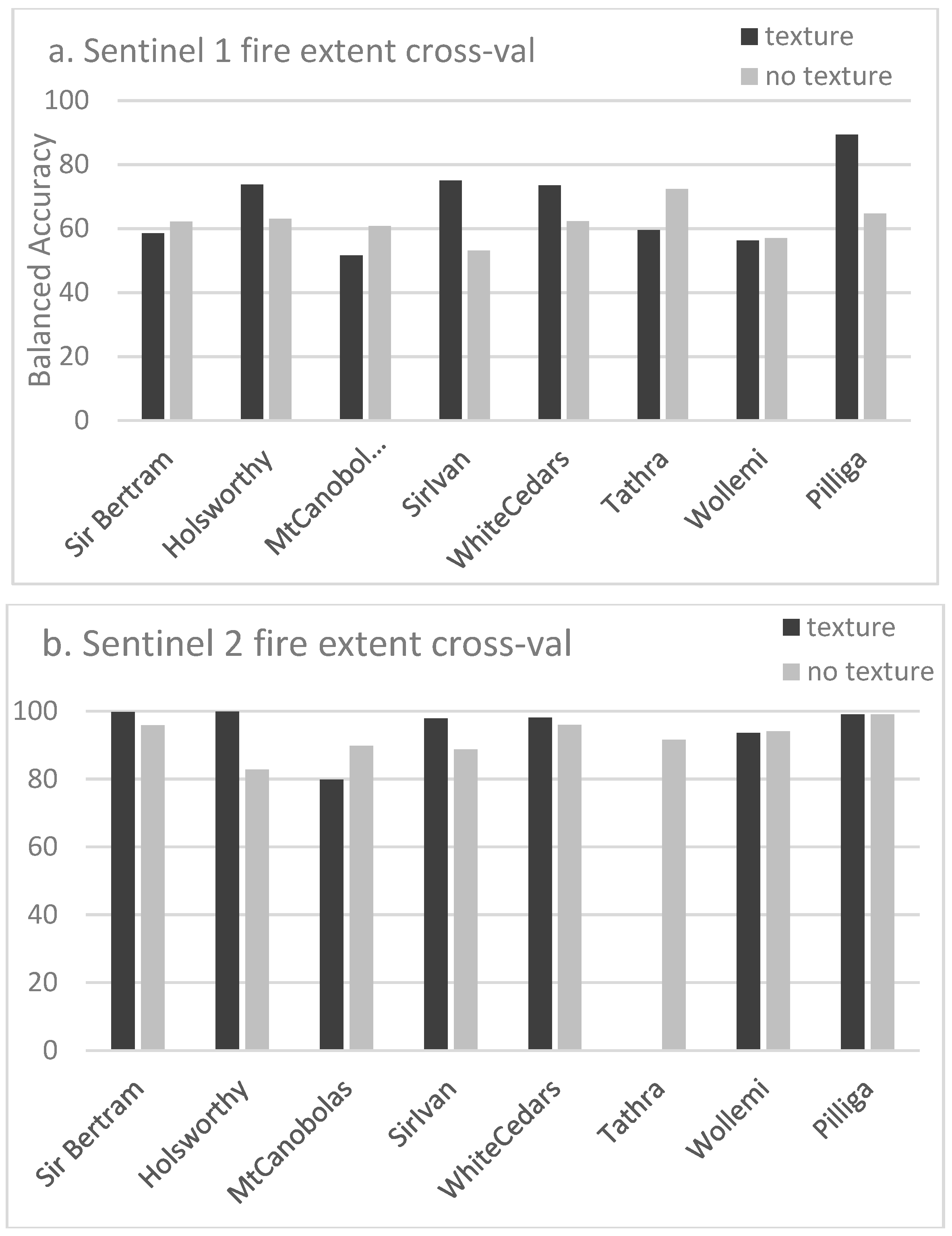

3.2. Fire Extent

3.3. Variable Importance

4. Discussion

4.1. Sensitivity to Texture Indices

4.2. Differences Due to Sensor Type

4.3. Data Fusion

5. Conclusions

Supplementary Materials

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest


| Fire Name | Fire Start Date | Fire End Date | Aerial Photography | Date of Capture | Fire Size (ha) |
|---|---|---|---|---|---|
| Sir Ivan | 11 February 2017 | 17 February 2017 | 50 cm 4-band ADS | 18 February 2017 (1) | 47,105 |
| White Cedars | 12 February 2017 | 17 February 2017 | 20 cm 4-band ADS | 16 February 2017 (−1) | 5217 |
| Wollemi | 18 January 2018 | 15 February 2018 | 50 cm 4-band ADS | 17 March 2018 (30) | 14,178 |
| Mt Canobolas | 10 February 2018 | 15 February 2018 | 30 cm 4-band ADS | 9 March 2018 (21) | 1891 |
| Sir Bertram | 20 January 2018 | 15 January 2018 | 50 cm 4-band ADS | 11 March 2018 (38) | 2241 |
| Pilliga | 19 January 2018 | 25 February 2018 | 50 cm 4-band ADS | 11 March 2018 (38) | 57,822 |
| Tathra | 18 March 2018 | 19 March 2018 | 10 cm 4-band ADS | 20 March 2018 (1) | 1258 |
| Holsworthy | 13 April 2018 | 18 April 2018 | 50 cm 4-band ADS | 24 April 2018 (6) | 3955 |

| Sensor | Base Index | Texture Metric | Texture Type | Kernels | Variable Names | |
|---|---|---|---|---|---|---|
| Sentinel 1 | dVH | None | None | None | radar_inputVH | |
| Sentinel 1 | dVH | Mean | 1st order | 7, 11 | glcm_radarVH_mean_7 | glcm_radarVH_mean_11 |
| Sentinel 1 | dVH | Variance | 1st order | 7, 11 | glcm_radarVH_var_7 | glcm_radarVH_var_11 |
| Sentinel 1 | dVH | 2nd moment | 2nd order | 7, 11 | glcm_radarVH_2mom_7 | glcm_radarVH_2mom_11 |
| Sentinel 1 | dVH | Contrast | 2nd order | 7, 11 | glcm_radarVH_con_7 | glcm_radarVH_con_11 |
| Sentinel 1 | dVH | Correlation | 2nd order | 7, 11 | glcm_radarVH_corr_7 | glcm_radarVH_corr_11 |
| Sentinel 1 | dVH | Dissimilarity | 2nd order | 7, 11 | glcm_radarVH_dissim_7 | glcm_radarVH_dissim_11 |
| Sentinel 1 | dVH | Homogeneity | 2nd order | 7, 11 | glcm_radarVH_hom_7 | glcm_radarVH_hom_11 |
| Sentinel 1 | dVV | None | None | None | radar_inputVV | |
| Sentinel 1 | dVV | Mean | 1st order | 7, 11 | glcm_radarVV_mean_7 | glcm_radarVV_mean_11 |
| Sentinel 1 | dVV | Variance | 1st order | 7, 11 | glcm_radarVV_var_7 | glcm_radarVV_var_11 |
| Sentinel 1 | dVV | 2nd moment | 2nd order | 7, 11 | glcm_radarVV_2mom_7 | glcm_radarVV_2mom_11 |
| Sentinel 1 | dVV | Contrast | 2nd order | 7, 11 | glcm_radarVV_con_7 | glcm_radarVV_con_11 |
| Sentinel 1 | dVV | Correlation | 2nd order | 7, 11 | glcm_radarVV_corr_7 | glcm_radarVV_corr_11 |
| Sentinel 1 | dVV | Dissimilarity | 2nd order | 7, 11 | glcm_radarVV_dissim_7 | glcm_radarVV_dissim_11 |
| Sentinel 1 | dVV | Homogeneity | 2nd order | 7, 11 | glcm_radarVV_hom_7 | glcm_radarVV_hom_11 |
| Sentinel 2 | NBR | None | None | None | dNBR | RdNBR |
| Sentinel 2 | NBR2 | None | None | None | SWIR_dNBR2 | SWIR_RdNBR2 |
| Sentinel 2 | Fractional Cover | None | None | None | RdFCTotal | dFCBare |
| Sentinel 2 | dFCBare | Mean | 1st order | 5, 7 | mean_dFCBare_5 | mean_dFCBare_7 |
| Sentinel 2 | dFCBare | Variance | 1st order | 5, 7 | var_dFCBare_5 | var_dFCBare_7 |
| Sentinel 2 | dNBR | Mean | 1st order | 5, 7 | mean_dNBR_5 | mean_dNBR_7 |
| Sentinel 2 | dNBR | Variance | 1st order | 5, 7 | var_dNBR_5 | var_dNBR_7 |
| Sentinel 2 | SWIR_dNBR2 | Mean | 1st order | 5, 7 | glcm_SWIR_dNBR2_mean_5 | glcm_SWIR_dNBR2_mean_7 |
| Sentinel 2 | SWIR_dNBR2 | Variance | 1st order | 5, 7 | glcm_SWIR_dNBR2_var_5 | glcm_SWIR_dNBR2_var_7 |
| Sentinel 2 | SWIR_dNBR2 | 2nd moment | 2nd order | 5, 7 | glcm_SWIR_dNBR2_2mom_5 | glcm_SWIR_dNBR2_2mom_7 |
| Sentinel 2 | SWIR_dNBR2 | Contrast | 2nd order | 5, 7 | glcm_SWIR_dNBR2_con_5 | glcm_SWIR_dNBR2_con_7 |
| Sentinel 2 | SWIR_dNBR2 | Correlation | 2nd order | 5, 7 | glcm_SWIR_dNBR2_corr_5 | glcm_SWIR_dNBR2_corr_7 |
| Sentinel 2 | SWIR_dNBR2 | Dissimilarity | 2nd order | 5, 7 | glcm_SWIR_dNBR2_dissim_5 | glcm_SWIR_dNBR2_dissim_7 |
| Sentinel 2 | SWIR_dNBR2 | Homogeneity | 2nd order | 5, 7 | glcm_SWIR_dNBR2_hom_5 | glcm_SWIR_dNBR2_hom_7 |
| NA | Random pixels | None | None | None | random | |
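
The texture metrics listed in the table above can be illustrated for a single kernel window. The following is a minimal sketch in plain Python, not the software used in the study. It assumes the window has already been quantised to integer grey levels, uses one horizontal pixel offset, and builds a symmetric, normalised grey-level co-occurrence matrix (GLCM). The function name `glcm_metrics` and its parameters are hypothetical. Mean and variance are taken here from the GLCM marginal, which is only one possible reading of the "1st order" rows.

```python
from collections import Counter


def glcm_metrics(window, offset=(0, 1)):
    """Texture metrics from a symmetric, normalised GLCM of one window.

    window: 2-D list of integer grey levels (already quantised)
    offset: (row, col) displacement between the two pixels of a pair
    """
    dr, dc = offset
    rows, cols = len(window), len(window[0])
    counts = Counter()
    for r in range(rows):
        for c in range(cols):
            r2, c2 = r + dr, c + dc
            if 0 <= r2 < rows and 0 <= c2 < cols:
                i, j = window[r][c], window[r2][c2]
                counts[(i, j)] += 1  # count each pair in both directions
                counts[(j, i)] += 1  # so that the matrix is symmetric
    total = sum(counts.values())
    p = {pair: n / total for pair, n in counts.items()}

    # Because the matrix is symmetric, row and column marginals are equal.
    mean = sum(i * pij for (i, _), pij in p.items())
    var = sum((i - mean) ** 2 * pij for (i, _), pij in p.items())
    metrics = {
        "mean": mean,
        "variance": var,
        "second_moment": sum(pij ** 2 for pij in p.values()),
        "contrast": sum((i - j) ** 2 * pij for (i, j), pij in p.items()),
        "dissimilarity": sum(abs(i - j) * pij for (i, j), pij in p.items()),
        "homogeneity": sum(pij / (1 + (i - j) ** 2) for (i, j), pij in p.items()),
    }
    # Correlation is undefined for a constant window (zero variance).
    if var > 0:
        cov = sum((i - mean) * (j - mean) * pij for (i, j), pij in p.items())
        metrics["correlation"] = cov / var
    else:
        metrics["correlation"] = float("nan")
    return metrics


# A 2 x 2 checkerboard is maximally contrasted for a horizontal offset.
checker = glcm_metrics([[0, 1], [1, 0]])
print(checker["contrast"], checker["homogeneity"], checker["correlation"])
```

To produce a texture layer such as `glcm_radarVH_con_7`, a function like this would be evaluated on the 7 × 7 neighbourhood of every pixel; the quantisation scheme and the offsets used for the published layers are not stated here and would need to be set accordingly.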

| Severity Class | Definition | % Foliage Affected by Fire | N Sampling Points |
|---|---|---|---|
| Unburnt | Unburnt | 0% of canopy and understory burnt | 40,257 |
| Low | Burnt surface with unburnt canopy | >10% of understory burnt; >90% green canopy | 11,198 |
| Moderate | Partial canopy scorched | 20–90% of canopy scorched | 7142 |
| High | Full canopy scorched (+/− partial canopy consumption) | >90% of canopy scorched; <50% of canopy biomass consumed | 24,479 |
| Extreme | Full canopy consumption | >50% of canopy biomass consumed | 20,760 |

| Indices | Mean Decrease Gini | Relative Rank |
|---|---|---|
| mean_dFCBare7 | 1262 | 1 |
| mean_dFCBare5 | 1112 | 2 |
| mean_dNBR7 | 832 | 3 |
| mean_dNBR5 | 803 | 4 |
| RdNBR | 762 | 5 |
| dFCBare | 731 | 6 |
| RdFCTotal | 557 | 7 |
| dNBR | 542 | 8 |
| SWIR_RdNBR2 | 384 | 9 |
| SWIR_dNBR2 | 327 | 10 |
| glcm_SWIR_dNBR2_mean_7 | 273 | 11 |
| glcm_SWIR_dNBR2_var_7 | 268 | 12 |
| var_dFCBare7 | 249 | 13 |
| glcm_SWIR_dNBR2_mean_5 | 241 | 14 |
| glcm_SWIR_dNBR2_var_5 | 226 | 15 |
| var_dFCBare5 | 168 | 16 |
| var_dNBR7 | 106 | 17 |
| glcm_radarVH_con_11 | 101 | 18 * |
| glcm_radarVH_mean_11 | 99 | 19 |
| var_dNBR5 | 97 | 20 |
| … | … | … |
| rndm | 31 | 61 |
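
As a reading aid for the "Mean Decrease Gini" column above, the sketch below shows one common formulation of the quantity: each split's reduction in Gini impurity, weighted by the number of samples at the node, is summed per variable within a tree and then averaged over the trees of the forest. The function names and the toy values are hypothetical, and the exact weighting used by the random forest software behind the table may differ.

```python
from collections import Counter


def gini(labels):
    """Gini impurity of a list of class labels: 1 - sum(p_k ** 2)."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())


def gini_decrease(values, labels, threshold):
    """Sample-weighted impurity decrease from splitting on value <= threshold.

    Summing this over every node that splits on a variable gives the
    variable's contribution within a single tree.
    """
    left = [y for x, y in zip(values, labels) if x <= threshold]
    right = [y for x, y in zip(values, labels) if x > threshold]
    return (len(labels) * gini(labels)
            - len(left) * gini(left)
            - len(right) * gini(right))


# Hypothetical toy data: an informative index versus a random variable.
labels = ["unburnt", "unburnt", "burnt", "burnt"]
informative = [0.1, 0.2, 0.8, 0.9]   # separates the two classes cleanly
uninformative = [0.1, 0.9, 0.2, 0.8]  # mixes the classes on both sides
print(gini_decrease(informative, labels, 0.5))
print(gini_decrease(uninformative, labels, 0.5))
```

A variable that never separates the classes accumulates little or no decrease, which is the usual rationale for including a random variable as a baseline in an importance ranking.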

| Sentinel 1 Fire Severity Indices | Average Rank |
|---|---|
| glcm_radarVH_mean_11 | 1.5 |
| glcm_radarVH_var_11 | 1.8 |
| glcm_radarVV_var_11 | 4.1 |
| glcm_radarVV_mean_11 | 4.3 |
| glcm_radarVH_var_7 | 5.6 |
| glcm_radarVH_mean_7 | 5.9 |
| VHbackscatter | 7.0 |
| glcm_radarVH_con_11 | 9.1 |
| VVbackscatter | 9.4 |
| glcm_radarVV_var_7 | 10.1 |

| Sentinel 2 Fire Severity Indices | Average Rank |
|---|---|
| RdNBR | 1.3 |
| mean_dNBR5 | 3.1 |
| mean_dNBR7 | 3.1 |
| mean_dBare7 | 3.6 |
| mean_dBare5 | 4.1 |
| SWIR_RdNBR2 | 6.5 |
| dNBR | 7.5 |
| dBare | 8.3 |
| glcm_SWIR_dNBR2_var_7 | 9.6 |
| SWIR_dNBR2 | 10.1 |

| Sentinel 1 Fire Extent Indices | Average Rank |
|---|---|
| glcm_radarVH_var_11 | 3.0 |
| glcm_radarVV_mean_11 | 5.9 |
| glcm_radarVH_mean_11 | 6.0 |
| glcm_radarVV_var_11 | 6.2 |
| glcm_radarVH_var_7 | 7.4 |
| glcm_radarVH_mean_7 | 9.2 |
| radar_inputVH | 9.2 |
| glcm_radarVV_mean_7 | 10.7 |
| glcm_radarVV_var_7 | 11.5 |
| glcm_radarVH_2mom_11 | 11.7 |
| VHbackscatter | 12.2 |

| Sentinel 2 Fire Extent Indices | Average Rank |
|---|---|
| mean_dBare7 | 6.0 |
| mean_dNBR7 | 6.0 |
| glcm_SWIR_dNBR2_mean_7 | 6.4 |
| glcm_SWIR_dNBR2_var_7 | 6.8 |
| mean_dNBR5 | 6.9 |
| mean_dBare5 | 7.3 |
| glcm_SWIR_dNBR2_var_5 | 8.4 |
| glcm_SWIR_dNBR2_mean_5 | 8.9 |
| RdNBR | 9.0 |
| dNBR | 10.8 |
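
The "Average Rank" columns in the four tables above can be read as the mean position of each index across several importance rankings. The sketch below shows that calculation under the assumption that one ranking is available per model run (for example, one per fire); the tables themselves do not state what the average is taken over. The function name `average_ranks` and the importance values are hypothetical and are not results from the study.

```python
def average_ranks(importance_runs):
    """Average each variable's importance rank across several model runs.

    importance_runs: list of dicts mapping variable name -> importance score
    Rank 1 is the most important variable within a run. Ties keep the
    order in which the variables appear in the dict.
    """
    ranks = {}
    for run in importance_runs:
        ordered = sorted(run, key=run.get, reverse=True)
        for rank, name in enumerate(ordered, start=1):
            ranks.setdefault(name, []).append(rank)
    return {name: sum(r) / len(r) for name, r in ranks.items()}


# Hypothetical importance scores from two runs (illustrative only).
runs = [
    {"RdNBR": 0.9, "dNBR": 0.5, "random": 0.1},
    {"RdNBR": 0.7, "dNBR": 0.8, "random": 0.2},
]
for name, avg in sorted(average_ranks(runs).items(), key=lambda kv: kv[1]):
    print(name, avg)
```

Averaging ranks rather than raw importance scores keeps runs with different importance scales comparable, at the cost of discarding how large the gaps between variables are.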

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Gibson, R.K.; Mitchell, A.; Chang, H.-C. Image Texture Analysis Enhances Classification of Fire Extent and Severity Using Sentinel 1 and 2 Satellite Imagery. Remote Sens. 2023, 15, 3512. https://doi.org/10.3390/rs15143512
