A Rapid Self-Supervised Deep-Learning-Based Method for Post-Earthquake Damage Detection Using UAV Data (Case Study: Sarpol-e Zahab, Iran)

Abstract

1. Introduction

- Adaptation of the EMS-98 standard’s damage levels to very-high-resolution UAV images.

- Proposing a novel method for automatic selection of reliable training data needed for consecutive supervised damage detection.

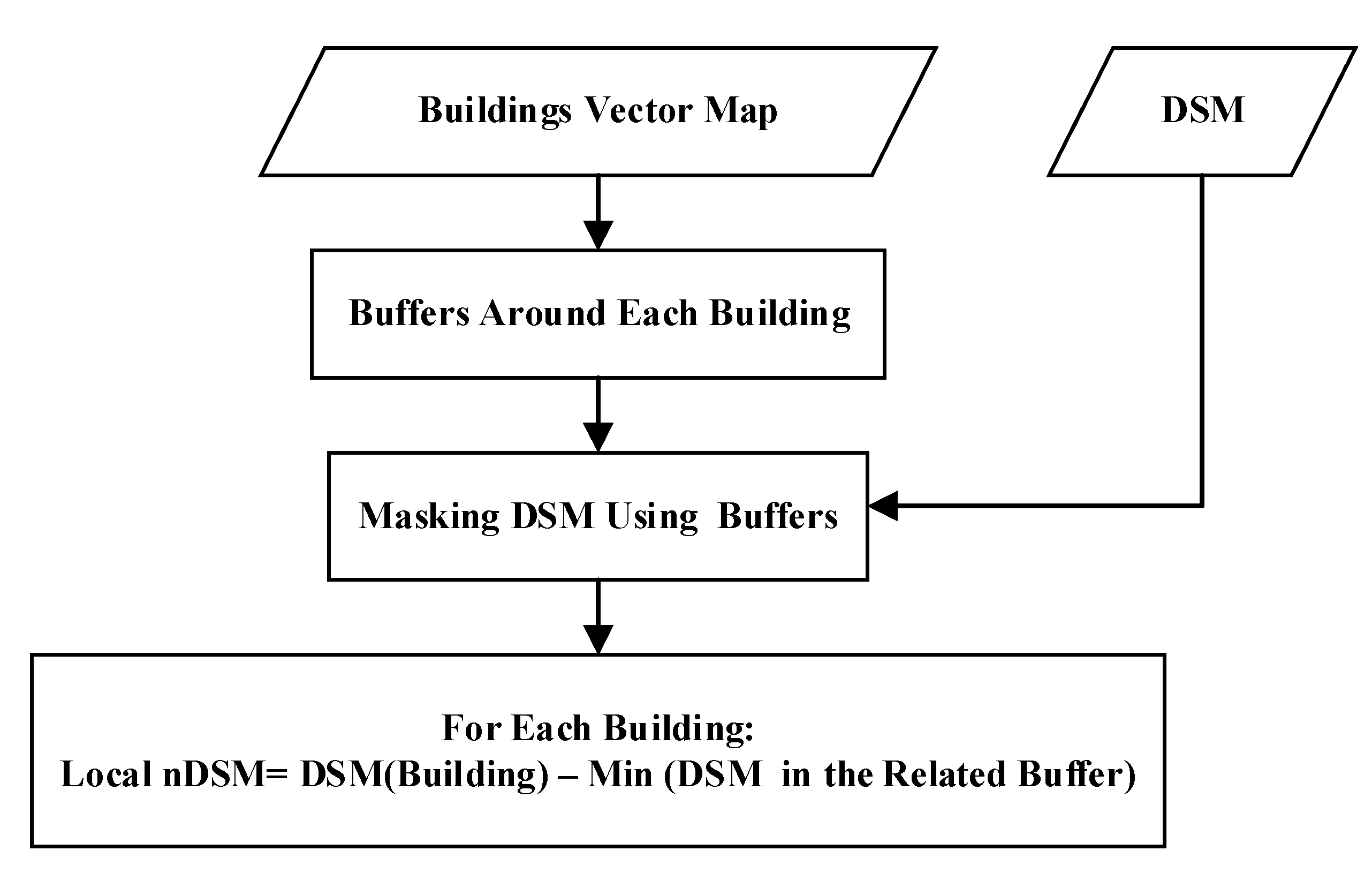

- Presenting a rapid and local-normalized digital surface model (nDSM) for expediting the damage mapping procedure.

- Investigating various deep and non-deep features and the fusion of them in the framework of eight machine/deep learning algorithms.

| Damage Level(s) | Methodology | Data, Resolution (m) | Study |
|---|---|---|---|
| Undamaged, minor damage, and collapsed | Rule-based (OBIA) | Aerial, 0.5 | [30] |
| Intact, damage, and collapsed | Machine learning (OBIA) | UAV, 0.5 | [31] |
| Damaged and undamaged | Machine learning (OBIA) | Aerial, 0.1 | [32] |
| Damaged and undamaged | Rule-based (OBIA) | UAV, 0.2 / Aerial, 0.61 | [11] |
| Intact, broken, and debris | Deep learning (CNN) | Aerial, 0.67 | [33] |
| Intact, partially collapsed, and collapsed | Deep learning (Deeplab v2) | Aerial, 0.5 | [6] |
| Undamaged and debris | Deep learning (SSD) | Aerial, 0.3 | [17] |
| Undamaged, minor damage, and debris | Deep learning (CNN) | Aerial, 0.25 | [7] |
| 0.025 m: basically intact, slight damage, partially collapsed, completely collapsed; 0.079 m: basically intact, partially collapsed, completely collapsed | Rule-based | UAV (orthophoto + point cloud), 0.025 and 0.079 | [34] |
| Slight damage, moderate damage, and serious damage | Deep learning (Inception V3) | Aerial, 0.3 | [35] |
| Collapsed | Deep learning (YOLO V3) | Aerial, 0.5 | [16] |
| Damaged and undamaged | Deep learning (ADGAN) | UAV, 0.02 and 0.06 | [36] |
| A wide range of minor to major damage | Deep learning (CNN) | UAV, 0.09 | [37] |
| Collapsed | Deep learning (Faster R-CNN) | UAV, 0.1 and 0.15 | [38] |

2. Study Area and Dataset

3. Methods

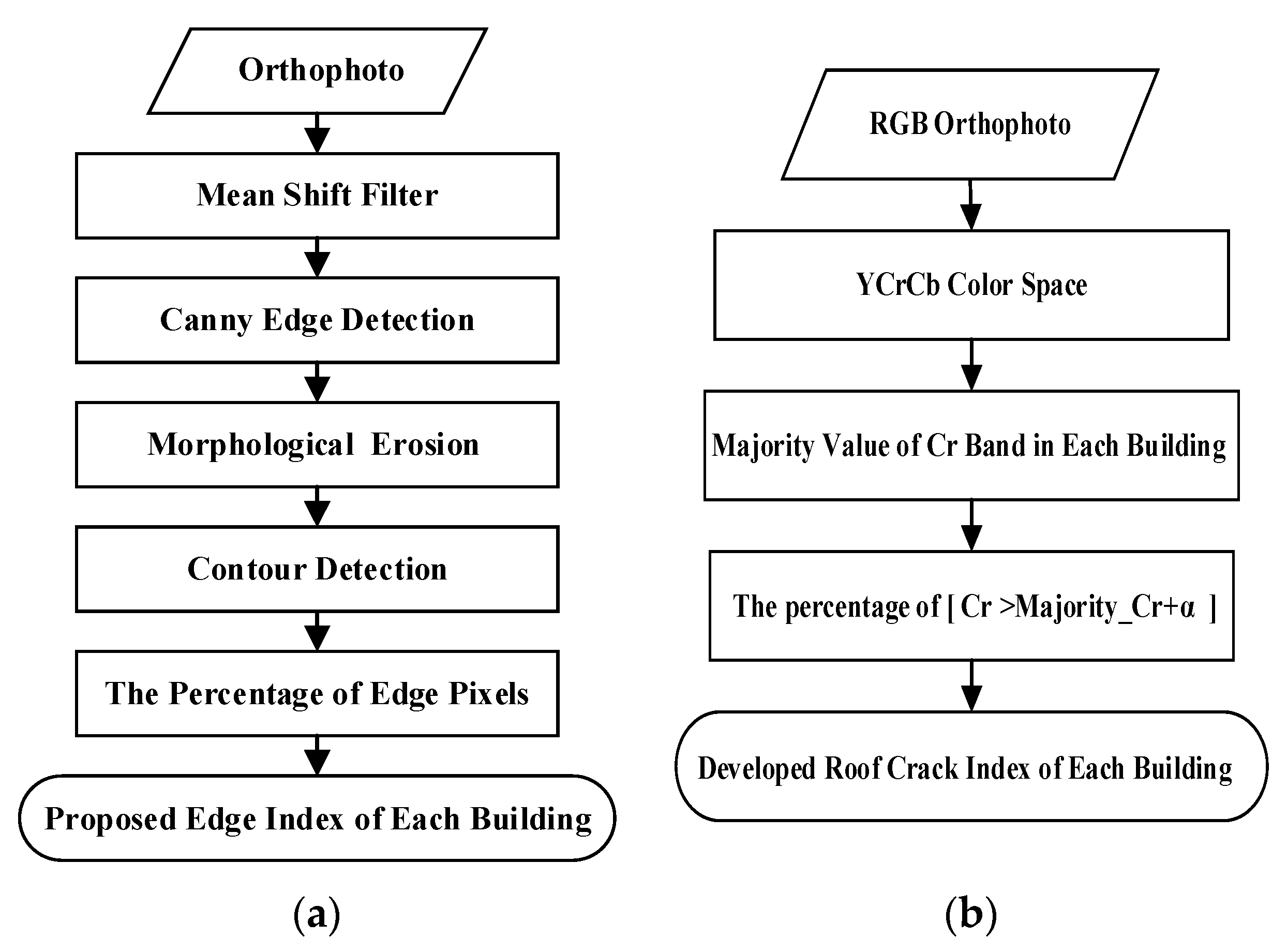

3.1. Feature-Extraction Strategies

3.2. Automated Selection of Training Samples

- Minor damage to buildings appears predominantly as noticeable cracking of the roof surface. Based on our examination, these cracks do not produce measurable differences in height. Optical data should therefore be incorporated, since the elevation information in the DSM alone cannot distinguish damage level L2 from L1.

- On buildings in Iran, cracks often appear as brown spots where roof elements, commonly bituminous waterproofing layers, have peeled away. Because shadows and crack spots both have low digital number values in the orthophoto, most crack-detection algorithms identify shadows along with cracks. As a result, an intact building that is partly shadowed under the prevailing illumination conditions can be mistaken for a cracked one. Building shadows are therefore a confounding factor when determining minor damage.

3.3. AI-Based Classification of Building Damage

4. Results and Discussion

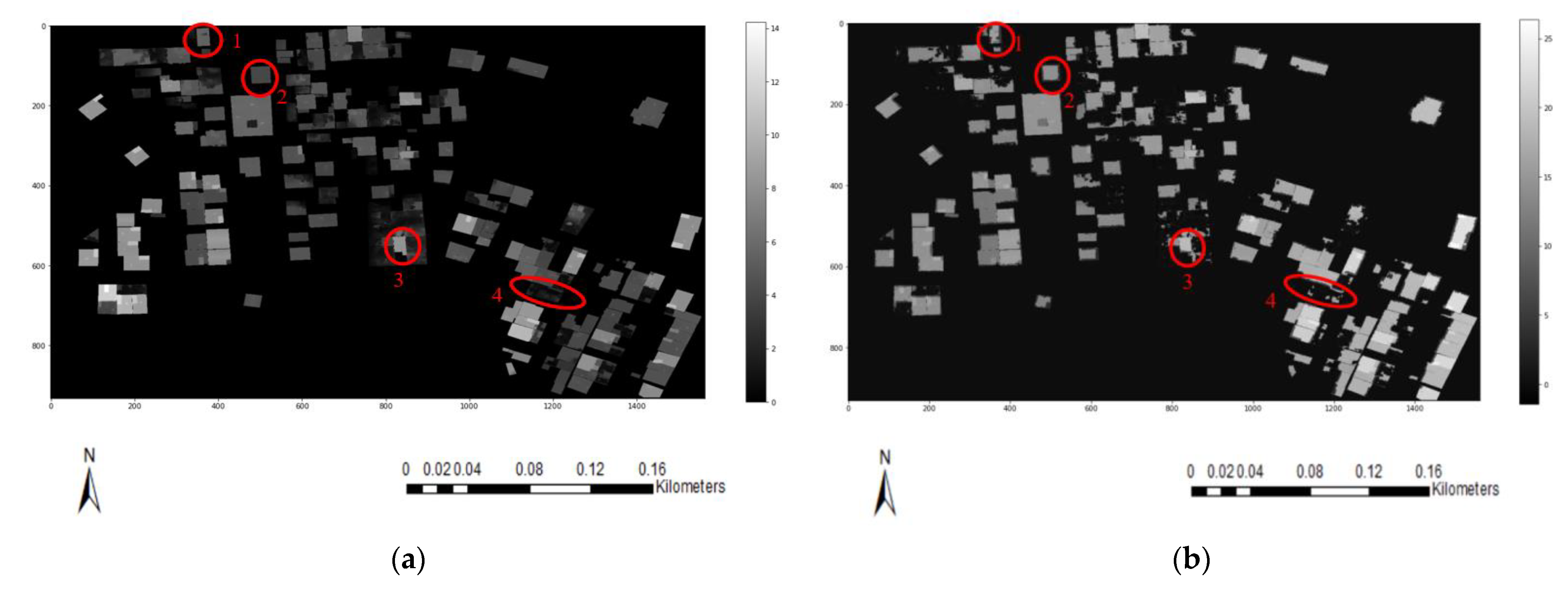

4.1. Features Production

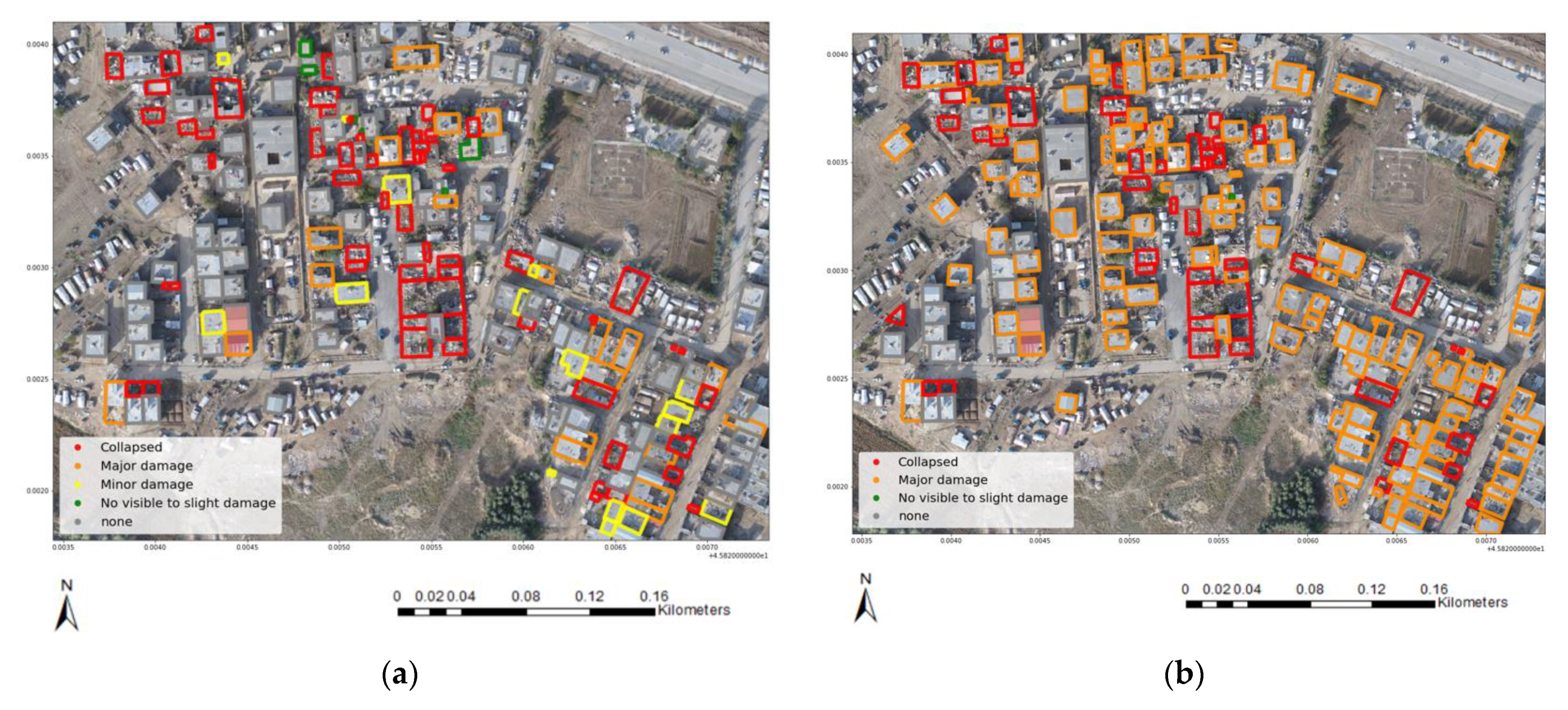

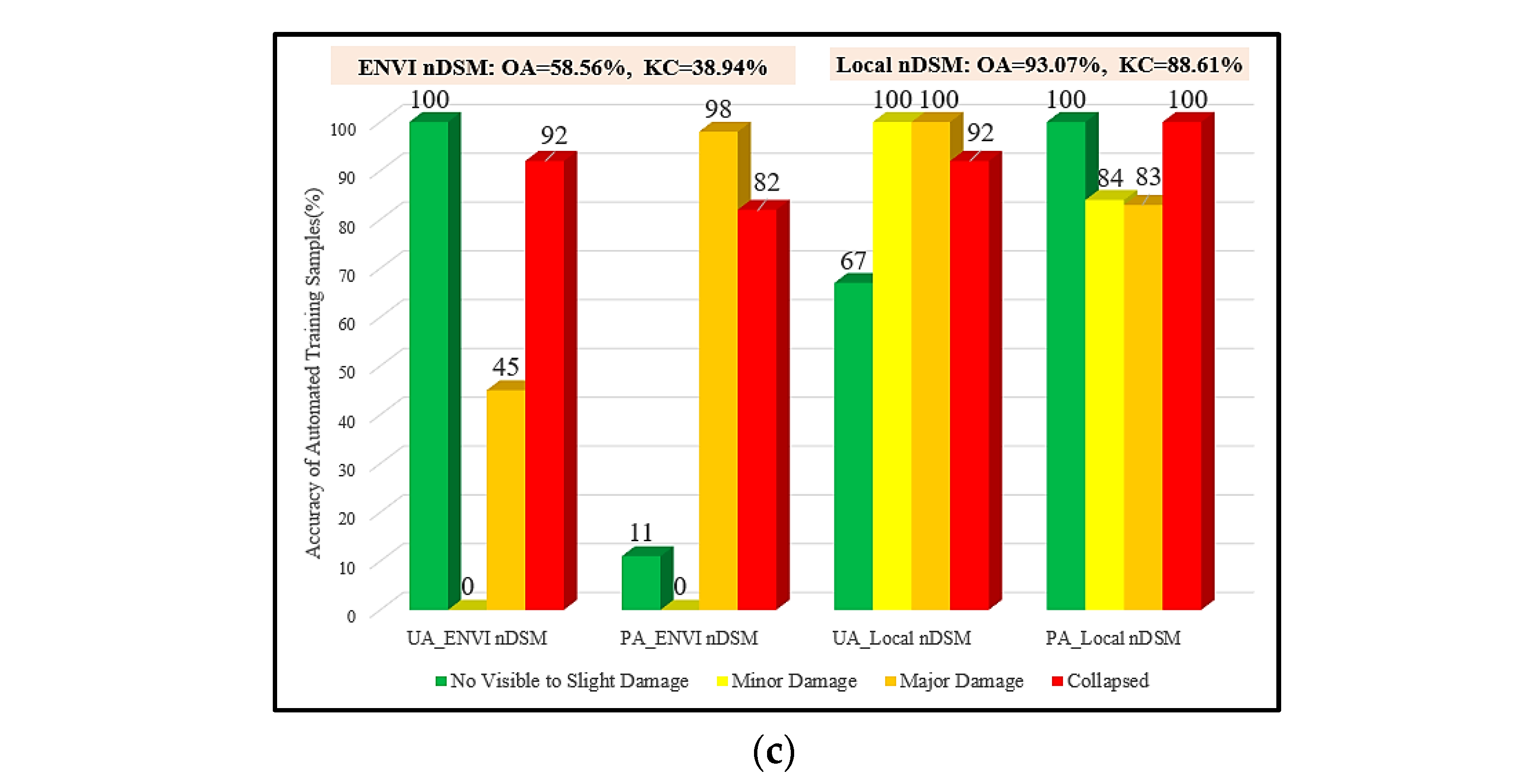

4.2. Implementation of Automatic Training Samples Selection

4.3. Damage Mapping and Evaluation

4.4. Automatic vs. Manual Training Samples

5. Conclusions and Future Research

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Altan, O.; Toz, G.; Kulur, S.; Seker, D.; Volz, S.; Fritsch, D.; Sester, M. Photogrammetry and geographic information systems for quick assessment, documentation and analysis of earthquakes. ISPRS J. Photogramm. Remote Sens. 2001, 55, 359–372.

- Axel, C.; van Aardt, J. Building damage assessment using airborne lidar. J. Appl. Remote Sens. 2017, 11, 1.

- Ji, M.; Liu, L.; Du, R.; Buchroithner, M.F. A comparative study of texture and convolutional neural network features for detecting collapsed buildings after earthquakes using pre- and post-event satellite imagery. Remote Sens. 2019, 11, 1202.

- Moradi, M.; Shah-Hosseini, R. Earthquake Damage Assessment Based on Deep Learning Method Using VHR Images. Environ. Sci. Proc. 2020, 5, 16.

- Omarzadeh, D.; Karimzadeh, S.; Matsuoka, M.; Feizizadeh, B. Earthquake Aftermath from Very High-Resolution WorldView-2 Image and Semi-Automated Object-Based Image Analysis (Case Study: Kermanshah, Sarpol-e Zahab, Iran). Remote Sens. 2021, 13, 4272.

- Song, D.; Tan, X.; Wang, B.; Zhang, L.; Shan, X.; Cui, J. Integration of super-pixel segmentation and deep-learning methods for evaluating earthquake-damaged buildings using single-phase remote sensing imagery. Int. J. Remote Sens. 2020, 41, 1040–1066.

- Kalantar, B.; Ueda, N.; Al-najjar, H.A.H.; Halin, A.A. Assessment of Convolutional Neural Network Architectures for Earthquake-Induced Building Damage Detection based on Pre- and Post-Event Orthophoto Images. Remote Sens. 2020, 12, 3529.

- Abdi, G.; Jabari, S. A Multi-Feature Fusion Using Deep Transfer Learning for Earthquake Building Damage Detection. Can. J. Remote Sens. 2021, 47, 337–352.

- Yuan, X.; Azimi, S.M.; Henry, C.; Gstaiger, V.; Codastefano, M.; Manalili, M.; Cairo, S.; Modugno, S.; Wieland, M.; Schneibel, A.; et al. Automated building segmentation and damage assessment from satellite images for disaster relief. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci.-ISPRS Arch. 2021, 43, 741–748.

- Kerle, N.; Nex, F.; Gerke, M.; Duarte, D.; Vetrivel, A. UAV-based structural damage mapping: A review. ISPRS Int. J. Geo-Inf. 2019, 9, 14.

- Chen, J.; Chen, W.; Dou, A.; Li, W.; Sun, Y. Research on optimal observation scale for damaged buildings after earthquake based on optimal feature space. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci.-ISPRS Arch. 2018, 42, 155–160.

- Zhu, X.X.; Tuia, D.; Mou, L.; Xia, G.-S.; Zhang, L.; Xu, F.; Fraundorfer, F. Deep learning in remote sensing: A review. arXiv 2017, arXiv:1710.03959.

- Cai, Z.; Jiang, Z.; Yuan, Y. Task-Related Self-Supervised Learning for Remote Sensing Image Change Detection. In Proceedings of the ICASSP 2021 - 2021 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Toronto, ON, Canada, 6–11 June 2021; pp. 1535–1539.

- Dang, B.; Li, Y. Msresnet: Multiscale residual network via self-supervised learning for water-body detection in remote sensing imagery. Remote Sens. 2021, 13, 3122.

- Guo, D.; Xia, Y.; Luo, X. Self-Supervised GANs with Similarity Loss for Remote Sensing Image Scene Classification. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2021, 14, 2508–2521.

- Ma, H.; Liu, Y.; Ren, Y.; Yu, J. Detection of Collapsed Buildings in Post-Earthquake Remote Sensing Images Based on the Improved YOLOv3. Remote Sens. 2020, 12, 44.

- Li, Y.; Hu, W.; Dong, H.; Zhang, X. Building Damage Detection from Post-Event Aerial Imagery Using Single Shot Multibox Detector. Appl. Sci. 2019, 9, 1128.

- Guo, Q.; Wang, Z. A Self-Supervised Learning Framework for Road Centerline Extraction from High-Resolution Remote Sensing Images. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2020, 13, 4451–4461.

- Dong, H.; Ma, W.; Wu, Y.; Zhang, J.; Jiao, L. Self-supervised representation learning for remote sensing image change detection based on temporal prediction. Remote Sens. 2020, 12, 1868.

- Ghaffarian, S.; Kerle, N.; Pasolli, E.; Arsanjani, J.J. Post-disaster building database updating using automated deep learning: An integration of pre-disaster OpenStreetMap and multi-temporal satellite data. Remote Sens. 2019, 11, 2427.

- Comisión Sismológica Europea. European Macroseismic Scale 1998 EMS-98; Comisión Sismológica Europea: Luxembourg, 1998; Volume 15, ISBN 2879770084.

- Fiol, M. Introduction to UNOSAT & Mine Action Related Activities. 2022. Available online: https://www.gichd.org/fileadmin/GICHD/what-we-do/events/UNMAT-2015/Statements-UNMAT-2015/07_Cooperation_Standards_and_Tools_09_UNOSAT_UNITAR_Manuel_Fiol.pdf (accessed on 25 December 2022).

- Schweier, C.; Markus, M. Assessment of the search and rescue demand for individual buildings. In Proceedings of the 13th World Conference on Earthquake Engineering, Vancouver, BC, Canada, 1–6 August 2004; pp. 3092–3103.

- Achkar, Z.A.; Baker, I.L.; Raymond, N.A. Satellite Imagery Interpretation Guide Intentional Burning of Tukuls; Harvard University Press: Cambridge, MA, USA, 2014; Available online: https://hhi.harvard.edu/files/humanitarianinitiative/files/siig_ii_burned_tukuls_3.pdf?m=1610658910 (accessed on 25 December 2022).

- Cotrufo, S.; Sandu, C.; Giulio Tonolo, F.; Boccardo, P. Building damage assessment scale tailored to remote sensing vertical imagery. Eur. J. Remote Sens. 2018, 51, 991–1005.

- Dell’Acqua, F.; Gamba, P. Remote sensing and earthquake damage assessment: Experiences, limits, and perspectives. Proc. IEEE 2012, 100, 2876–2890.

- Matin, S.S.; Pradhan, B. Challenges and limitations of earthquake-induced building damage mapping techniques using remote sensing images-A systematic review. Geocarto Int. 2021, 37, 6186–6212.

- COPERNICUS-Emergency Management Service - Mapping. Available online: https://emergency.copernicus.eu/mapping/ems/what-copernicus (accessed on 8 December 2022).

- The International Charter Space and Major Disasters. Available online: https://disasterscharter.org/web/guest/home;jsessionid=954CBACEF7A2E0A4A75B9105042163FD.APP1 (accessed on 8 December 2022).

- Li, X.; Yang, W.; Ao, T.; Li, H.; Chen, W. An improved approach of information extraction for earthquake-damaged buildings using high-resolution imagery. J. Earthq. Tsunami 2011, 5, 389–399.

- Zeng, T.; Yang, W.N.; Li, X.D. Seismic damage information extent about the buildings based on low-altitude remote sensing images of mianzu quake-stricken areas. Appl. Mech. Mater. 2012, 105–107, 1889–1893.

- Frank, J.; Rebbapragada, U.; Bialas, J.; Oommen, T.; Havens, T.C. Effect of label noise on the machine-learned classification of earthquake damage. Remote Sens. 2017, 9, 803.

- Huang, H.; Sun, G.; Zhang, X.; Hao, Y.; Zhang, A.; Ren, J.; Ma, H. Combined multiscale segmentation convolutional neural network for rapid damage mapping from postearthquake very high-resolution images. J. Appl. Remote Sens. 2019, 18, 35001–35007.

- Li, S.; Tang, H. Classification of Building Damage Triggered by Earthquakes Using Decision Tree. Math. Probl. Eng. 2020, 2020, 2930515.

- Ma, H.; Liu, Y.; Ren, Y.; Wang, D.; Yu, L.; Yu, J. Improved CNN classification method for groups of buildings damaged by earthquake, based on high resolution remote sensing images. Remote Sens. 2020, 12, 260.

- Tilon, S.; Nex, F.; Kerle, N.; Vosselman, G. Post-disaster building damage detection from earth observation imagery using unsupervised and transferable anomaly detecting generative adversarial networks. Remote Sens. 2020, 12, 4193.

- Calantropio, A.; Chiabrando, F.; Codastefano, M.; Bourke, E. Deep Learning for Automatic Building Damage Assessment: Application in Post-Disaster Scenarios Using UAV Data. ISPRS Ann. Photogramm. Remote Sens. Spat. Inf. Sci. 2021, 5, 113–120.

- Ding, J.; Zhang, J.; Zhan, Z.; Tang, X.; Wang, X. A Precision Efficient Method for Collapsed Building Detection in Post-Earthquake UAV Images Based on the Improved NMS Algorithm and Faster R-CNN. Remote Sens. 2022, 14, 663.

- Miyajima, M.; Fallahi, A.; Ikemoto, T.; Samaei, M.; Karimzadeh, S.; Setiawan, H.; Talebi, F.; Karashi, J. Site Investigation of the Sarpole-Zahab Earthquake, Mw 7.3 in SW Iran of November 12, 2017. JSCE J. Disaster Fact Sheets 2018.

- Hunt, E.R., Jr.; Doraiswamy, P.C.; McMurtrey, J.E.; Daughtry, C.S.T.; Perry, E.M.; Akhmedov, B. A visible band index for remote sensing leaf chlorophyll content at the canopy scale. Int. J. Appl. Earth Obs. Geoinf. 2013, 21, 103–112.

- Seydi, S.T.; Akhoondzadeh, M.; Amani, M. Wildfire Damage Assessment over Australia Using Sentinel-2 Imagery and MODIS Land Cover Product within the Google Earth Engine Cloud Platform. Remote Sens. 2021, 13, 220.

- Anand, A. An Improved Edge Detection Using Morphological Laplacian of Gaussian Operator. In Proceedings of the 2015 2nd International Conference on Signal Processing and Integrated Networks (SPIN), Noida, India, 19–20 February 2015; pp. 532–536.

- Sohn, G.; Dowman, I. Terrain Surface Reconstruction by the Use of Tetrahedron Model with the Mdl Criterion. Int. Arch. Photogramm. Remote Sens. 2002, 24, 336–344.

- Axelsson, P. DEM Generation from Laser Scanner Data Using adaptive TIN Models. Int. Arch. Photogramm. Remote Sens. 2000, 23, 110–117.

- Lopez Pinaya, W.H.; Vieira, S.; Garcia-Dias, R.; Mechelli, A. Autoencoders. In Methods and Applications to Brain Disorders; Academic Press: New York, NY, USA, 2019; pp. 193–208.

- Shrestha, A.; Mahmood, A. Review of deep learning algorithms and architectures. IEEE Access 2019, 7, 53040–53065.

- Sewak, M.; Sahay, S.K.; Rathore, H. An overview of deep learning architecture of deep neural networks and autoencoders. J. Comput. Theor. Nanosci. 2020, 17, 182–188.

- Coşkun, M.; Özal, Y.; Ayşegül, U. An Overview of Popular Deep Learning Methods. J. Eur. Ejt Tech. 2017, 7, 207–218.

- Lattari, F.; Leon, B.G.; Asaro, F.; Rucci, A.; Prati, C.; Matteucci, M. Deep learning for SAR image despeckling. Remote Sens. 2019, 11, 1532.

- Qianqian, Z. SAR Image Despeckling Based on Convolutional Denoising Autoencoder. arXiv 2020, arXiv:2011.14627.

- Deng, S.; Du, L.; Li, C.; Ding, J.; Liu, H. SAR Automatic Target Recognition Based on Euclidean Distance Restricted Autoencoder. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2017, 10, 3323–3333.

- El Housseini, A.; Toumi, A.; Khenchaf, A. Deep Learning for Target recognition from SAR images Convolution Auto-encoder. In Proceedings of the 2017 Seminar on Detection Systems Architectures and Technologies (DAT), Algiers, Algeria, 20–22 February 2017.

- Geng, J.; Wang, H. Change Detection of SAR Images Based on Supervised Contractive Autoencoders and Fuzzy Clustering. In Proceedings of the 2017 International Workshop on Remote Sensing with Intelligent Processing (RSIP), Shanghai, China, 18–21 May 2017.

- Liu, G.; Li, L.; Jiao, L.; Dong, Y.; Li, X. Stacked Fisher autoencoder for SAR change detection. Pattern Recognit. 2019, 96, 106971.

- Mesquita, D.B.; Santos, R.F.D.; MacHaret, D.G.; Campos, M.F.M.; Nascimento, E.R. Fully Convolutional Siamese Autoencoder for Change Detection in UAV Aerial Images. IEEE Geosci. Remote Sens. Lett. 2020, 17, 1455–1459.

- Zhao, H.; Qing, C.; Yang, Z.; Marshall, S. Novel Segmented Stacked AutoEncoder for Effective Dimensionality Reduction and Feature Extraction in Hyperspectral Imaging. Neurocomputing 2015, 185, 1–10.

- Ramamurthy, M.; Robinson, Y.H.; Vimal, S.; Suresh, A. Auto encoder based dimensionality reduction and classification using convolutional neural networks for hyperspectral images. Microprocess. Microsyst. 2020, 79, 103280.

- Rahimzad, M.; Homayouni, S.; Naeini, A.A.; Nadi, S. An efficient multi-sensor remote sensing image clustering in urban areas via boosted convolutional autoencoder (BCAE). Remote Sens. 2021, 13, 2501.

- Shahabi, H.; Rahimzad, M.; Piralilou, S.T.; Ghorbanzadeh, O.; Homayouni, S.; Blaschke, T.; Lim, S.; Ghamisi, P. Unsupervised deep learning for landslide detection from multispectral sentinel-2 imagery. Remote Sens. 2021, 13, 4698.

- Shahi, K.R.; Ghamisi, P.; Rasti, B.; Scheunders, P.; Gloaguen, R. Unsupervised Data Fusion with Deeper Perspective: A Novel Multisensor Deep Clustering Algorithm. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2022, 15, 284–296.

- Santurkar, S.; Tsipras, D.; Ilyas, A. How Does Batch Normalization Help Optimization? arXiv 2018, arXiv:1805.11604.

- Hinton, G. Dropout: A Simple Way to Prevent Neural Networks from Overfitting. J. Mach. Learn. Res. 2014, 15, 1929–1958.

| UAV Device | Phantom 4 Pro |
|---|---|
| Flight altitude | 98.8 m |
| Camera | FC6310 |
| Focal length | 8.8 mm |
| Image dimension | 3648 × 5472 pixels |
| Pixel size | 2.41 µm |
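As a rough cross-check of the flight parameters above, the ground sampling distance (GSD) of a nadir image can be estimated from the pinhole relation GSD = pixel size × flight altitude / focal length. The sketch below assumes that the pixel size of 2.41 is given in micrometres and that the altitude is measured above a flat ground surface; the function name is illustrative and not taken from the paper.

```python
def ground_sampling_distance(pixel_size_um, altitude_m, focal_length_mm):
    """Return the approximate ground sampling distance in metres per pixel.

    Assumes a nadir-looking pinhole camera over flat terrain.
    """
    pixel_size_m = pixel_size_um * 1e-6      # micrometres -> metres (assumed unit)
    focal_length_m = focal_length_mm * 1e-3  # millimetres -> metres
    return pixel_size_m * altitude_m / focal_length_m


# Values from the flight-parameter table (pixel size unit is an assumption).
gsd = ground_sampling_distance(pixel_size_um=2.41, altitude_m=98.8, focal_length_mm=8.8)

# Approximate ground footprint of one 3648 x 5472 pixel image.
footprint_m = (3648 * gsd, 5472 * gsd)

print(f"GSD: {gsd * 100:.2f} cm/pixel")
print(f"Footprint: {footprint_m[0]:.1f} m x {footprint_m[1]:.1f} m")
```

Under these assumptions the estimate comes out at roughly 2.7 cm per pixel, which is in the same range as the finest UAV resolutions listed in the related-work table.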

| Strategy No. | Feature Type | Utilized Features |
|---|---|---|
| 1 | Non-deep features (NDF) | RGB bands of orthophoto, TGI, BTH_DSM, LOG_orthophoto, novel local nDSM |
| 2 | Deep features (DF) | OECAE-based deep features extracted from stacked input orthophoto and DSM |
| 3 | Fused non-deep/deep features (FF) | OECAE-based deep features extracted from non-deep features of strategy 1 |
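To illustrate one of the non-deep features listed in strategy 1, the sketch below computes a triangular greenness index (TGI) from the RGB bands of an orthophoto. It uses the widely quoted simplified form TGI = G − 0.39·R − 0.61·B; whether the paper uses this exact variant is an assumption, and the function name and toy pixel values are purely illustrative.

```python
import numpy as np


def triangular_greenness_index(rgb):
    """Compute a simplified TGI per pixel from an (..., 3) RGB array.

    Uses TGI = G - 0.39 * R - 0.61 * B (assumed variant, not confirmed
    by the source). Higher values indicate greener, vegetation-like pixels.
    """
    rgb = np.asarray(rgb, dtype=np.float64)
    red, green, blue = rgb[..., 0], rgb[..., 1], rgb[..., 2]
    return green - 0.39 * red - 0.61 * blue


# Toy 1 x 2 image: one vegetation-like pixel and one neutral grey pixel.
toy_image = np.array([[[50, 200, 50], [100, 100, 100]]], dtype=np.uint8)
tgi = triangular_greenness_index(toy_image)
print(tgi)
```

A neutral grey pixel yields a value of zero because the coefficients sum to one, so the index mainly separates vegetation from roofs and bare ground before the damage features are evaluated.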

| Selection Rule(s) | Damage Level (in Order) |
|---|---|
| Median(nDSM) < 3 and Edge Index > 90% | Collapsed |
| Normalized_SD (nDSM) ≥ 0.3 | Major Damage |
| Normalized_SD (nDSM) < 0.05 and Crack Index < 5% | No Visible to Slight Damage |
| Normalized_SD (nDSM) < 0.05 and Crack Index > 10% | Minor Damage |
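The selection rules in the table above can be read as an ordered cascade applied to per-building statistics. The following is a minimal sketch of that reading, assuming the rules are evaluated top to bottom, that the Edge Index and Crack Index are expressed in percent, and that buildings matching no rule are simply not used as training samples. The function and argument names are hypothetical, and the computation of the indices themselves is not shown.

```python
from typing import Optional


def select_training_label(
    median_ndsm: float,
    normalized_sd_ndsm: float,
    edge_index_pct: float,
    crack_index_pct: float,
) -> Optional[str]:
    """Return a damage label if the building satisfies a rule, else None.

    Rules are checked in the order given in the table (assumed semantics).
    """
    if median_ndsm < 3 and edge_index_pct > 90:
        return "Collapsed"
    if normalized_sd_ndsm >= 0.3:
        return "Major Damage"
    if normalized_sd_ndsm < 0.05 and crack_index_pct < 5:
        return "No Visible to Slight Damage"
    if normalized_sd_ndsm < 0.05 and crack_index_pct > 10:
        return "Minor Damage"
    # Ambiguous buildings are left unlabeled rather than forced into a class.
    return None


# Hypothetical per-building statistics, for illustration only.
print(select_training_label(1.2, 0.50, 95.0, 0.0))
print(select_training_label(6.0, 0.02, 20.0, 7.0))
```

Leaving the gaps between thresholds unlabeled (for example, a Crack Index between 5% and 10%) is what keeps the automatically selected samples reliable: only buildings with unambiguous evidence are passed to the supervised classifiers.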

| Hyperparameter | Value |
|---|---|
| Optimizer | Adam |
| Loss | Mean Squared Error (MSE) |
| Learning rate | 0.001 |
| Number of epochs | 1 |
| Batch size | 128 |

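| Block | Unit | Input Shape | Kernel Size | Output Shape |
|---|---|---|---|---|
| Encoder | Conv2D + ReLU + BN + Dropout | 8 × 8 | 3 × 3 | 4 × 4 |
| Encoder | Conv2D + ReLU + BN + Dropout | 4 × 4 | 3 × 3 | 2 × 2 |
| Encoder | Flatten | 2 × 2 | 2 × 2 | 1 × 1 |
| Encoder | Dense | 1 × 1 | 1 × 1 | 1 × 1 |
| Decoder | Dense | 1 × 1 | 1 × 1 | 1 × 1 |
| Decoder | Reshape | 1 × 1 | 2 × 2 | 2 × 2 |
| Decoder | Conv2D + ReLU + BN | 2 × 2 | 3 × 3 | 4 × 4 |
| Decoder | Conv2D + ReLU + BN | 4 × 4 | 3 × 3 | 8 × 8 |
| Decoder | Conv2D + Dropout + Sigmoid | 8 × 8 | 1 × 1 | 8 × 8 |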

| Learning Algorithm | Hyperparameters |
|---|---|
| SVM | C = 100, kernel = 'poly', degree = 2, gamma = 'auto', coef0 = 0.1, random_state = 0 |
| RF | n_estimators = 200, max_depth = 10, random_state = 0 |
| GB | n_estimators = 5000, learning_rate = 0.1, max_depth = 1, random_state = 0 |
| XGB | n_estimators = 5000, max_depth = 10 |
| DT | max_depth = 2, random_state = 0, min_samples_leaf = 5 |
| KNN | n_neighbors = 2 |
| AB | n_estimators = 5000, random_state = 0, learning_rate = 0.1 |
| MLP | hidden_layer_sizes = (20, 15), random_state = 0, verbose = True, learning_rate_init = 0.03, max_iter = 5000 |

| Automated Training Samples Selection and Classification | Feature Extraction (FF) | Feature Extraction (DF) | Feature Extraction (NDF) |
|---|---|---|---|
| 18.6 s | 4 min 2 s | 2 min 55 s | 9.8 s |

| Metric | Manual Training Samples (50/50) | Manual Training Samples (60/40) | Manual Training Samples (70/30) | Manual Training Samples (80/20) | Automated Training Samples |
|---|---|---|---|---|---|
| OA (%) | 64 | 65.85 | 65.57 | 60 | 82 |
| KC (%) | 49 | 50.86 | 51.36 | 40.79 | 74.01 |
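For readers unfamiliar with the two metrics in the table, the sketch below shows how overall accuracy (OA) and the kappa coefficient (KC, Cohen's kappa) are commonly derived from a confusion matrix. The 2 × 2 matrix is a toy example invented for illustration and is not data from the paper; the function name is likewise hypothetical.

```python
import numpy as np


def overall_accuracy_and_kappa(confusion):
    """Return (overall accuracy, Cohen's kappa) for a square confusion matrix.

    Rows are reference classes and columns are predicted classes.
    """
    cm = np.asarray(confusion, dtype=np.float64)
    total = cm.sum()
    observed = np.trace(cm) / total  # fraction of correctly classified samples
    # Chance agreement from the products of row and column marginals.
    expected = (cm.sum(axis=0) * cm.sum(axis=1)).sum() / total**2
    kappa = (observed - expected) / (1.0 - expected)
    return observed, kappa


# Toy confusion matrix (hypothetical counts, two classes).
toy_cm = [[45, 5],
          [10, 40]]
oa, kc = overall_accuracy_and_kappa(toy_cm)
print(f"OA = {oa * 100:.2f}%, KC = {kc * 100:.2f}%")
```

Because kappa discounts agreement expected by chance, it is always lower than OA for an imperfect classifier, which matches the pattern in every column of the table.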


© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Takhtkeshha, N.; Mohammadzadeh, A.; Salehi, B. A Rapid Self-Supervised Deep-Learning-Based Method for Post-Earthquake Damage Detection Using UAV Data (Case Study: Sarpol-e Zahab, Iran). Remote Sens. 2023, 15, 123. https://doi.org/10.3390/rs15010123
