Augmentation-Based Methodology for Enhancement of Trees Map Detalization on a Large Scale

Abstract

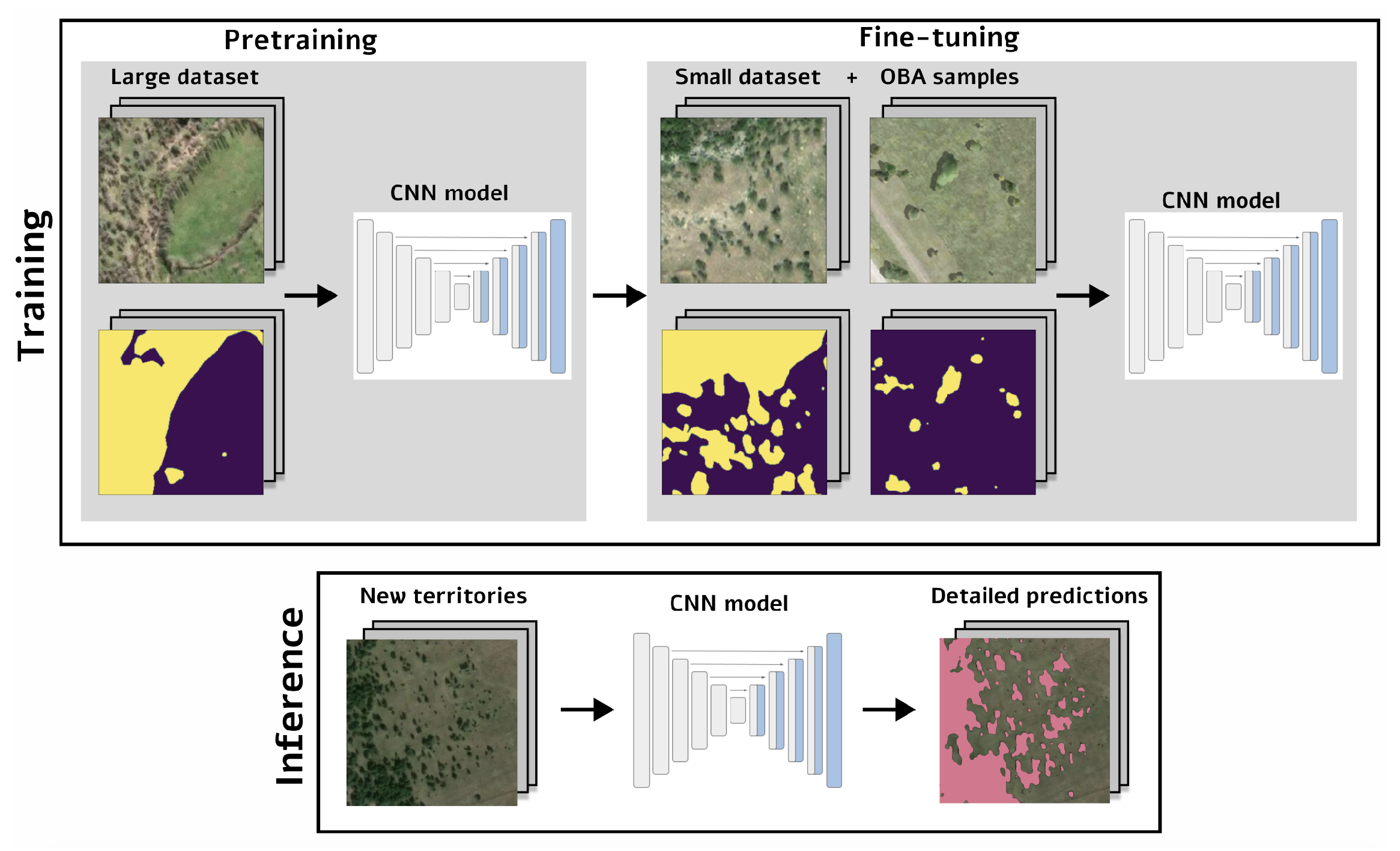

1. Introduction

- We propose and validate a pipeline for detailed forest mask segmentation using convolutional neural networks (CNNs).

- We provide an open-access tool for detailed forest mask segmentation that can be used in environmental studies; it is available on a SaaS platform [31].

2. Materials and Methods

2.1. Large Dataset

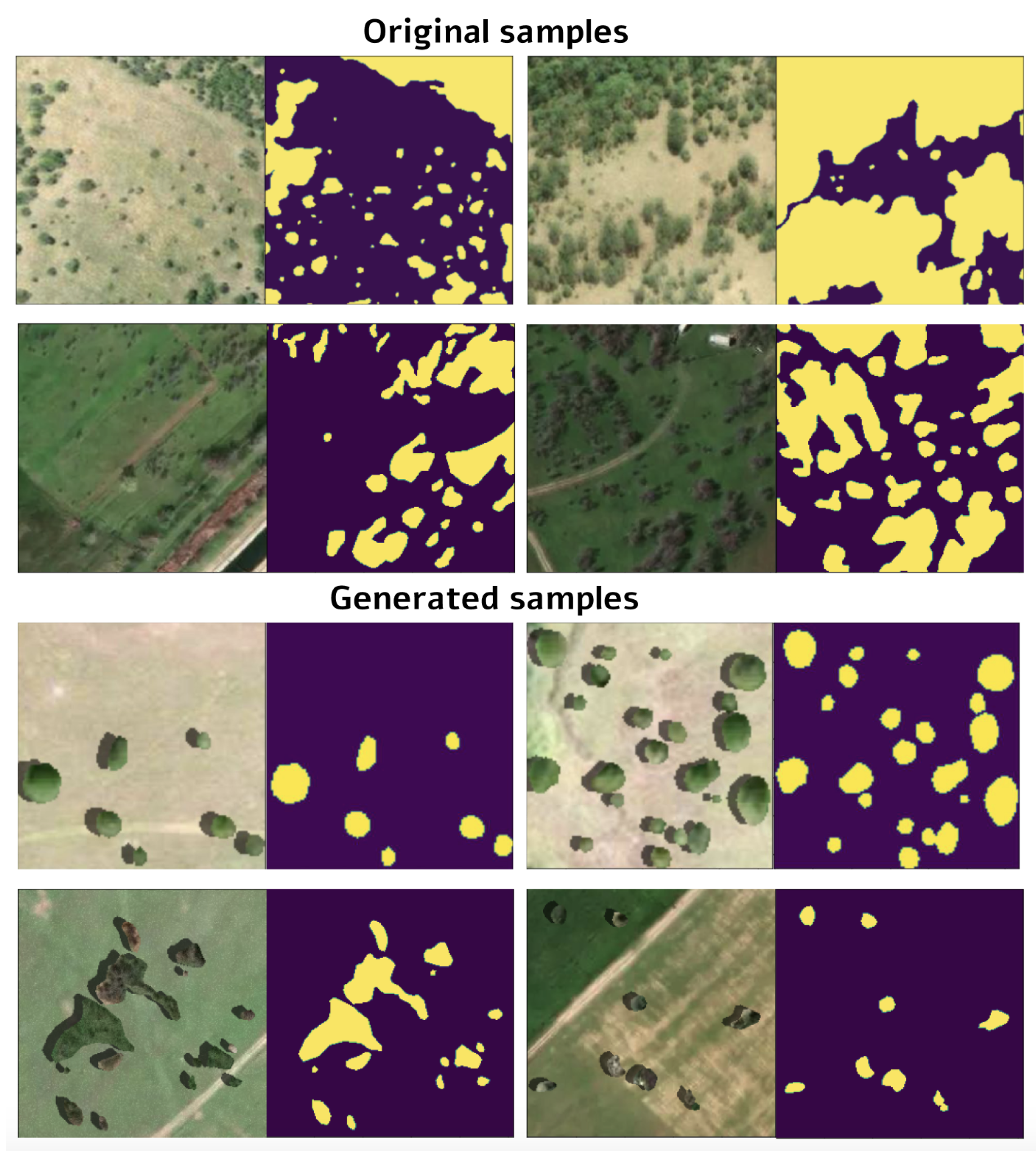

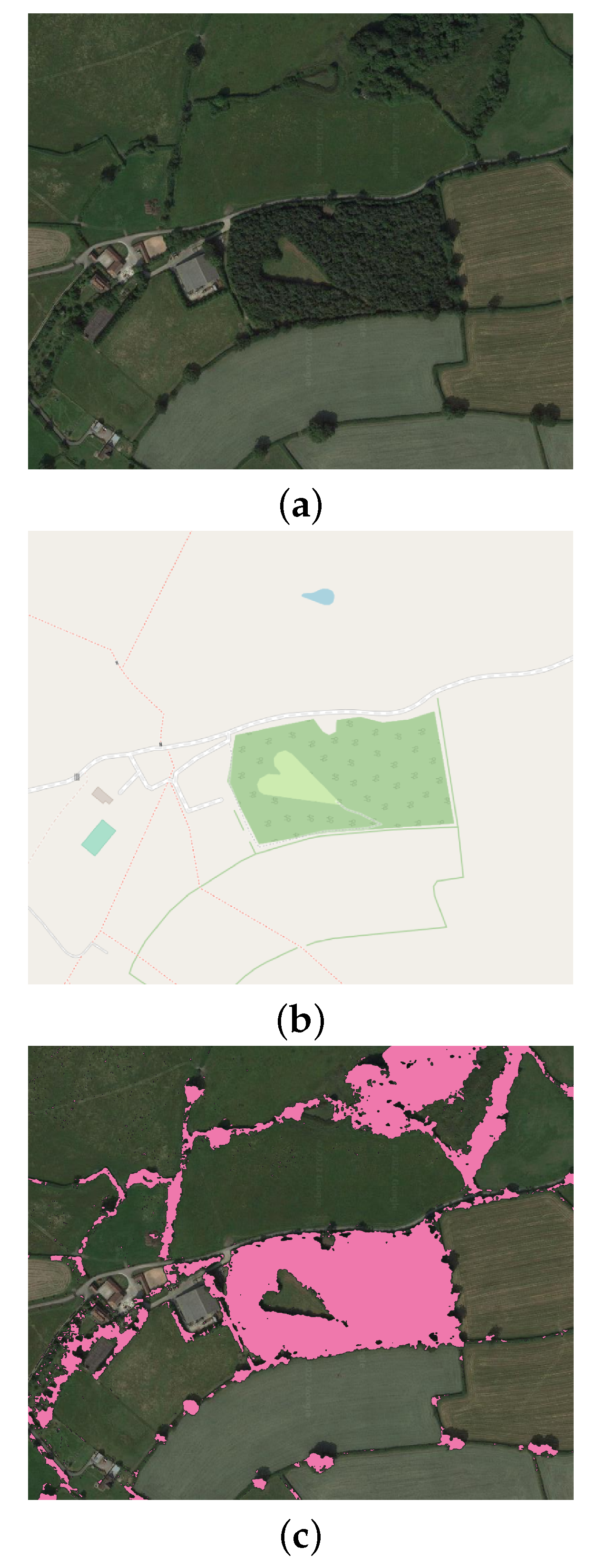

2.2. Detailed Small Dataset

2.3. Baseline Forest Segmentation

2.4. Object-Based Augmentation

2.5. Different Dataset Size

2.6. Experimental Setup

2.7. Evaluation

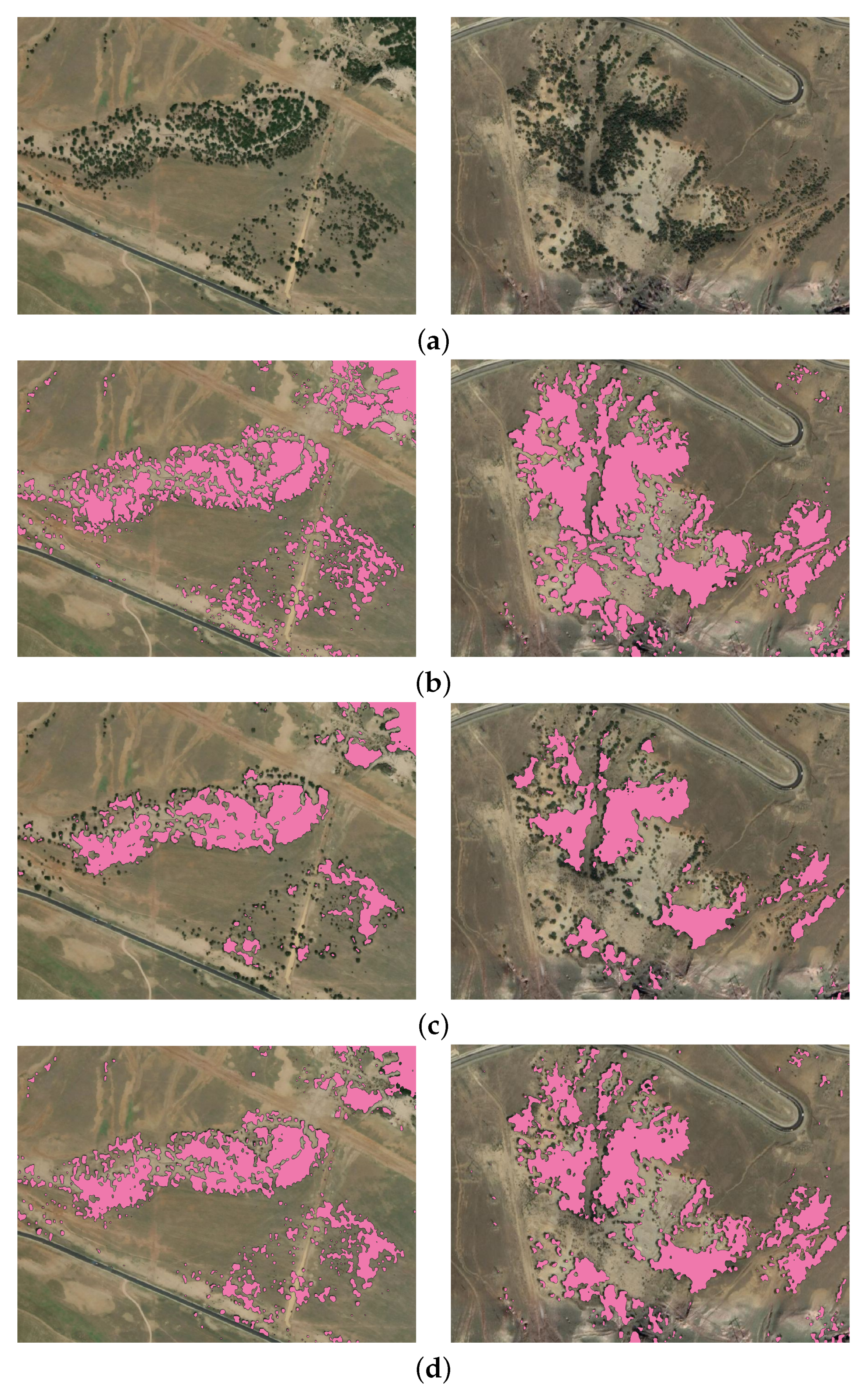

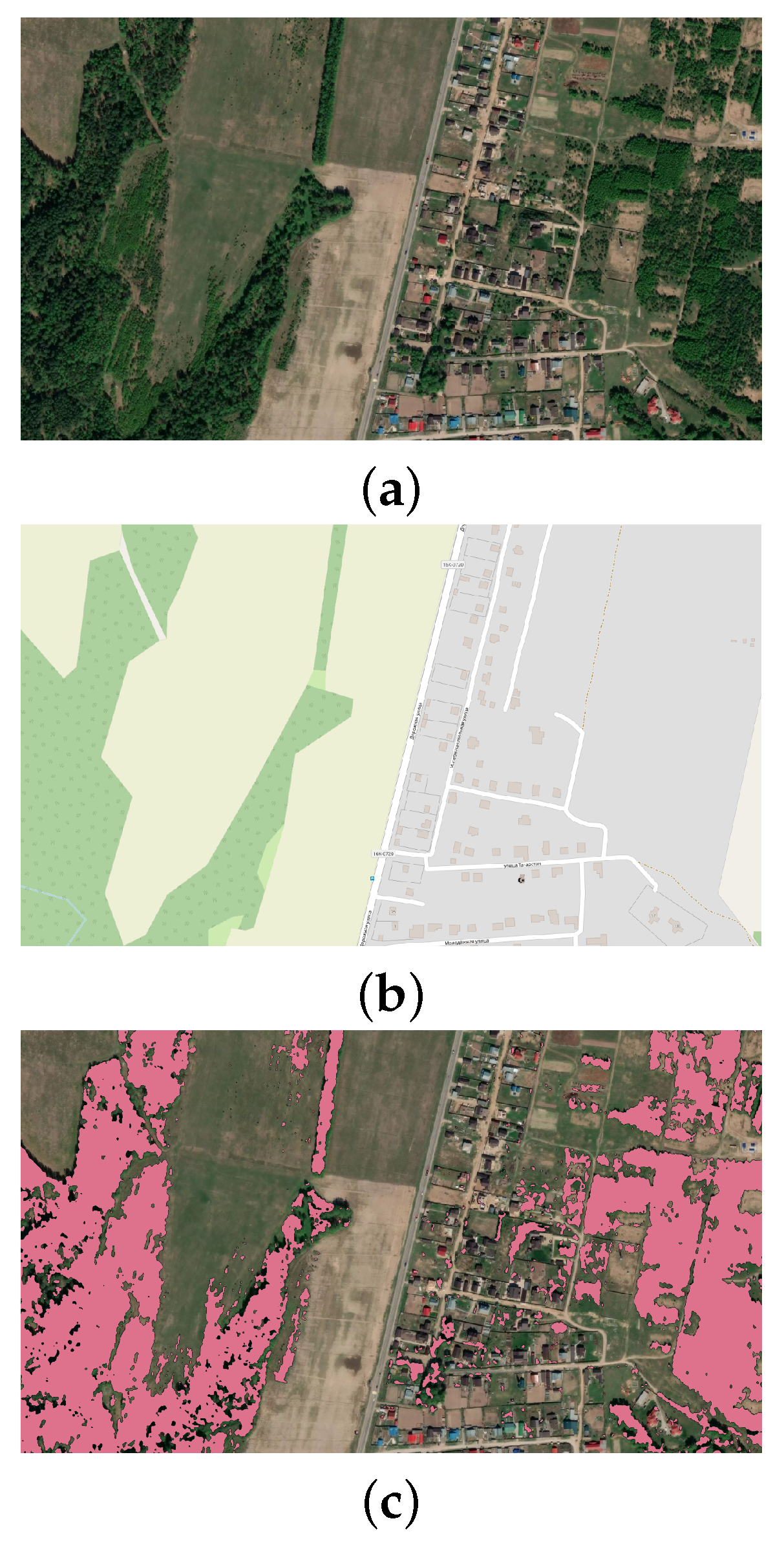

3. Results and Discussion

4. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

References

- Cheng, X.; Yu, J. RetinaNet with Difference Channel Attention and Adaptively Spatial Feature Fusion for Steel Surface Defect Detection. IEEE Trans. Instrum. Meas. 2020, 70, 1–11.

- Shan, Y.; Yao, X.; Lin, H.; Zou, X.; Huang, K. Lidar-Based Stable Navigable Region Detection for Unmanned Surface Vehicles. IEEE Trans. Instrum. Meas. 2021, 70, 1–13.

- Yu, J.; Peng, X.; Li, S.; Lu, Y.; Ma, W. A Lightweight Ship Detection Method in Optical Remote Sensing Image under Cloud Interference. In Proceedings of the 2021 IEEE International Instrumentation and Measurement Technology Conference (I2MTC), Glasgow, UK, 17–20 May 2021; IEEE: Piscataway, NJ, USA, 2021; pp. 1–6.

- Angelini, M.G.; Costantino, D.; Di Nisio, A. ASTER image for environmental monitoring Change detection and thermal map. In Proceedings of the 2017 IEEE International Instrumentation and Measurement Technology Conference (I2MTC), Turin, Italy, 22–25 May 2017; IEEE: Piscataway, NJ, USA, 2017; pp. 1–6.

- Kattenborn, T.; Leitloff, J.; Schiefer, F.; Hinz, S. Review on Convolutional Neural Networks (CNN) in vegetation remote sensing. ISPRS J. Photogramm. Remote Sens. 2021, 173, 24–49.

- Illarionova, S.; Trekin, A.; Ignatiev, V.; Oseledets, I. Neural-Based Hierarchical Approach for Detailed Dominant Forest Species Classification by Multispectral Satellite Imagery. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2020, 14, 1810–1820.

- Nevavuori, P.; Narra, N.; Lipping, T. Crop yield prediction with deep convolutional neural networks. Comput. Electron. Agric. 2019, 163, 104859.

- Hirschmugl, M.; Deutscher, J.; Sobe, C.; Bouvet, A.; Mermoz, S.; Schardt, M. Use of SAR and optical time series for tropical forest disturbance mapping. Remote Sens. 2020, 12, 727.

- Li, H.; Hu, B.; Li, Q.; Jing, L. CNN-Based Tree Species Classification Using Airborne Lidar Data and High-Resolution Satellite Image. In Proceedings of the IGARSS 2020–2020 IEEE International Geoscience and Remote Sensing Symposium, Waikoloa, HI, USA, 26 September–2 October 2020; IEEE: Piscataway, NJ, USA, 2020; pp. 2679–2682.

- Malinowski, R.; Lewiński, S.; Rybicki, M.; Gromny, E.; Jenerowicz, M.; Krupiński, M.; Nowakowski, A.; Wojtkowski, C.; Krupiński, M.; Krätzschmar, E.; et al. Automated production of a land cover/use map of Europe based on Sentinel-2 imagery. Remote Sens. 2020, 12, 3523.

- Flores-Martínez, J.J.; Martínez-Pacheco, A.; Rendón-Salinas, E.; Rickards, J.; Sarkar, S.; Sánchez-Cordero, V. Recent forest cover loss in the core zones of the Monarch Butterfly Biosphere Reserve in Mexico. Front. Environ. Sci. 2019, 7, 167.

- Thomas, N.; Baltezar, P.; Lagomasino, D.; Stovall, A.; Iqbal, Z.; Fatoyinbo, L. Trees outside forests are an underestimated resource in a country with low forest cover. Sci. Rep. 2021, 11, 7919.

- Malkoç, E.; Rüetschi, M.; Ginzler, C.; Waser, L.T. Countrywide mapping of trees outside forests based on remote sensing data in Switzerland. Int. J. Appl. Earth Obs. Geoinf. 2021, 100, 102336.

- Qiu, Z.; Feng, Z.K.; Wang, M.; Li, Z.; Lu, C. Application of UAV photogrammetric system for monitoring ancient tree communities in Beijing. Forests 2018, 9, 735.

- D’Amico, G.; Vangi, E.; Francini, S.; Giannetti, F.; Nicolaci, A.; Travaglini, D.; Massai, L.; Giambastiani, Y.; Terranova, C.; Chirici, G. Are we ready for a National Forest Information System? State of the art of forest maps and airborne laser scanning data availability in Italy. IForest-Biogeosci. For. 2021, 14, 144.

- Otero, V.; Van De Kerchove, R.; Satyanarayana, B.; Martínez-Espinosa, C.; Fisol, M.A.B.; Ibrahim, M.R.B.; Sulong, I.; Mohd-Lokman, H.; Lucas, R.; Dahdouh-Guebas, F. Managing mangrove forests from the sky: Forest inventory using field data and Unmanned Aerial Vehicle (UAV) imagery in the Matang Mangrove Forest Reserve, peninsular Malaysia. For. Ecol. Manag. 2018, 411, 35–45.

- Karlson, M.; Reese, H.; Ostwald, M. Tree crown mapping in managed woodlands (parklands) of semi-arid West Africa using WorldView-2 imagery and geographic object based image analysis. Sensors 2014, 14, 22643–22669.

- Wagner, F.H.; Sanchez, A.; Aidar, M.P.; Rochelle, A.L.; Tarabalka, Y.; Fonseca, M.G.; Phillips, O.L.; Gloor, E.; Aragao, L.E. Mapping Atlantic rainforest degradation and regeneration history with indicator species using convolutional network. PLoS ONE 2020, 15, e0229448.

- Marx, A.; Tetteh, G.O. A forest vitality and change monitoring tool based on RapidEye imagery. IEEE Geosci. Remote Sens. Lett. 2017, 14, 801–805.

- Miettinen, J.; Stibig, H.J.; Achard, F. Remote sensing of forest degradation in Southeast Asia—Aiming for a regional view through 5–30 m satellite data. Glob. Ecol. Conserv. 2014, 2, 24–36.

- Hansen, M.C.; Shimabukuro, Y.E.; Potapov, P.; Pittman, K. Comparing annual MODIS and PRODES forest cover change data for advancing monitoring of Brazilian forest cover. Remote Sens. Environ. 2008, 112, 3784–3793.

- Terra & Aqua Moderate Resolution Imaging Spectroradiometer (MODIS). Available online: https://modis.gsfc.nasa.gov/about/ (accessed on 20 November 2021).

- Fernandez-Carrillo, A.; de la Fuente, D.; Rivas-Gonzalez, F.; Franco-Nieto, A. A Sentinel-2 unsupervised forest mask for European sites. In Proceedings of the Earth Resources and Environmental Remote Sensing/GIS Applications X. International Society for Optics and Photonics, Strasbourg, France, 9–12 September 2019; Volume 11156, p. 111560Y.

- Mondal, P.; McDermid, S.S.; Qadir, A. A reporting framework for Sustainable Development Goal 15: Multi-scale monitoring of forest degradation using MODIS, Landsat and Sentinel data. Remote Sens. Environ. 2020, 237, 111592.

- Reiche, J.; Hamunyela, E.; Verbesselt, J.; Hoekman, D.; Herold, M. Improving near-real time deforestation monitoring in tropical dry forests by combining dense Sentinel-1 time series with Landsat and ALOS-2 PALSAR-2. Remote Sens. Environ. 2018, 204, 147–161.

- Ganz, S.; Adler, P.; Kändler, G. Forest Cover Mapping Based on a Combination of Aerial Images and Sentinel-2 Satellite Data Compared to National Forest Inventory Data. Forests 2020, 11, 1322.

- Pałaś, K.W.; Zawadzki, J. Sentinel-2 Imagery Processing for Tree Logging Observations on the Białowieża Forest World Heritage Site. Forests 2020, 11, 857.

- Bragagnolo, L.; da Silva, R.V.; Grzybowski, J.M.V. Amazon forest cover change mapping based on semantic segmentation by U-Nets. Ecol. Informat. 2021, 62, 101279.

- Chen, B.; Xiao, X.; Li, X.; Pan, L.; Doughty, R.; Ma, J.; Dong, J.; Qin, Y.; Zhao, B.; Wu, Z.; et al. A mangrove forest map of China in 2015: Analysis of time series Landsat 7/8 and Sentinel-1A imagery in Google Earth Engine cloud computing platform. ISPRS J. Photogramm. Remote Sens. 2017, 131, 104–120.

- Fernandez-Carrillo, A.; Patočka, Z.; Dobrovolný, L.; Franco-Nieto, A.; Revilla-Romero, B. Monitoring bark beetle forest damage in Central Europe. A remote sensing approach validated with field data. Remote Sens. 2020, 12, 3634.

- Mapflow.ai. 2021. Available online: https://mapflow.ai/ (accessed on 10 February 2022).

- Mapbox Service. Available online: https://www.mapbox.com/ (accessed on 29 April 2022).

- Ronneberger, O.; Fischer, P.; Brox, T. U-net: Convolutional networks for biomedical image segmentation. In Proceedings of the International Conference on Medical Image Computing and Computer-Assisted Intervention, Munich, Germany, 5–9 October 2015; Springer: Berlin/Heidelberg, Germany, 2015; pp. 234–241.

- Lin, T.Y.; Dollár, P.; Girshick, R.; He, K.; Hariharan, B.; Belongie, S. Feature pyramid networks for object detection. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Honolulu, HI, USA, 21–26 July 2017; pp. 2117–2125.

- Chen, L.C.; Papandreou, G.; Kokkinos, I.; Murphy, K.; Yuille, A.L. Deeplab: Semantic image segmentation with deep convolutional nets, atrous convolution, and fully connected crfs. IEEE Trans. Pattern Anal. Mach. Intell. 2017, 40, 834–848.

- Yakubovskiy, P. Segmentation Models. 2021. Available online: https://github.com/qubvel/segmentation_models (accessed on 20 November 2021).

- Illarionova, S.; Nesteruk, S.; Shadrin, D.; Ignatiev, V.; Pukalchik, M.; Oseledets, I. Object-Based Augmentation for Building Semantic Segmentation: Ventura and Santa Rosa Case Study. In Proceedings of the 2021 IEEE/CVF International Conference on Computer Vision Workshops (ICCVW), Montreal, QC, Canada, 11–17 October 2021; IEEE: Piscataway, NJ, USA, 2021; pp. 1659–1668.

- Illarionova, S. Satellite Object Augmentation. 2021. Available online: https://github.com/LanaLana/satellite_object_augmentation (accessed on 20 November 2021).

- Buslaev, A.; Iglovikov, V.I.; Khvedchenya, E.; Parinov, A.; Druzhinin, M.; Kalinin, A.A. Albumentations: Fast and Flexible Image Augmentations. Information 2020, 11, 125.

- Illarionova, S.; Trekin, A.; Ignatiev, V.; Oseledets, I. Tree Species Mapping on Sentinel-2 Satellite Imagery with Weakly Supervised Classification and Object-Wise Sampling. Forests 2021, 12, 1413.

- Szegedy, C.; Ioffe, S.; Vanhoucke, V.; Alemi, A.A. Inception-v4, inception-resnet and the impact of residual connections on learning. In Proceedings of the Thirty-First AAAI Conference on Artificial Intelligence, San Francisco, CA, USA, 4–9 February 2017.

- Keras. 2020–2021. Available online: https://keras.io/ (accessed on 20 November 2021).

- Wu, B.; Xu, C.; Dai, X.; Wan, A.; Zhang, P.; Yan, Z.; Tomizuka, M.; Gonzalez, J.; Keutzer, K.; Vajda, P. Visual transformers: Token-based image representation and processing for computer vision. arXiv 2020, arXiv:2006.03677.

- Nesteruk, S.; Illarionova, S.; Akhtyamov, T.; Shadrin, D.; Somov, A.; Pukalchik, M.; Oseledets, I. XtremeAugment: Getting More From Your Data Through Combination of Image Collection and Image Augmentation. IEEE Access 2022, 10, 24010–24028.

- Skole, D.L.; Samek, J.H.; Mbow, C.; Chirwa, M.; Ndalowa, D.; Tumeo, T.; Kachamba, D.; Kamoto, J.; Chioza, A.; Kamangadazi, F. Direct Measurement of Forest Degradation Rates in Malawi: Toward a National Forest Monitoring System to Support REDD+. Forests 2021, 12, 426.

- Zacharov, I.; Arslanov, R.; Gunin, M.; Stefonishin, D.; Bykov, A.; Pavlov, S.; Panarin, O.; Maliutin, A.; Rykovanov, S.; Fedorov, M. “Zhores”—Petaflops supercomputer for data-driven modeling, machine learning and artificial intelligence installed in Skolkovo Institute of Science and Technology. Open Eng. 2019, 9, 512–520.

| Model | Large dataset: Precision | Large dataset: Recall | Large dataset: F1 | Small dataset: Precision | Small dataset: Recall | Small dataset: F1 |
|---|---|---|---|---|---|---|
| U-Net | 0.965 | 0.963 | 0.964 | 0.862 | 0.851 | 0.856 |
| FPN | 0.961 | 0.958 | 0.959 | 0.856 | 0.849 | 0.852 |
| DeepLab | 0.963 | 0.962 | 0.962 | 0.856 | 0.854 | 0.855 |

Column headers give the training approach, with the training set size (fraction of the full training set) in parentheses.

| Metric | Test set | Baseline_no_augm (1/3) | Baseline_no_augm (2/3) | Baseline_no_augm (1) | Simple_augm (1/3) | Simple_augm (2/3) | Simple_augm (1) | OBA (1/3) | OBA (2/3) | OBA (1) |
|---|---|---|---|---|---|---|---|---|---|---|
| F1-score | Small dataset test | 0.861 | 0.866 | 0.871 | 0.867 | 0.875 | 0.888 | 0.913 | 0.921 | 0.929 |
| F1-score | Large dataset test | 0.956 | 0.959 | 0.962 | 0.964 | 0.965 | 0.967 | 0.966 | 0.969 | 0.971 |
| Precision | Small dataset test | 0.863 | 0.865 | 0.872 | 0.869 | 0.877 | 0.889 | 0.915 | 0.922 | 0.931 |
| Precision | Large dataset test | 0.955 | 0.961 | 0.965 | 0.965 | 0.966 | 0.969 | 0.964 | 0.972 | 0.973 |
| Recall | Small dataset test | 0.86 | 0.867 | 0.871 | 0.866 | 0.873 | 0.887 | 0.911 | 0.921 | 0.928 |
| Recall | Large dataset test | 0.957 | 0.958 | 0.959 | 0.963 | 0.964 | 0.965 | 0.968 | 0.967 | 0.97 |
| IoU | Small dataset test | 0.754 | 0.761 | 0.768 | 0.774 | 0.783 | 0.799 | 0.851 | 0.856 | 0.867 |
| IoU | Large dataset test | 0.835 | 0.847 | 0.856 | 0.878 | 0.884 | 0.891 | 0.895 | 0.899 | 0.912 |
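The precision, recall, F1-score, and IoU values in the tables are standard measures for comparing a predicted binary mask with a reference mask. The sketch below shows one common pixel-wise way to compute them with NumPy. It is an illustration only: the function name `binary_mask_metrics` is hypothetical, and how the scores were aggregated in this study (per image, per tile, or over the whole test set) is an assumption left open here.

```python
import numpy as np


def binary_mask_metrics(pred, target):
    """Pixel-wise precision, recall, F1 and IoU for two binary masks.

    Hypothetical helper for illustration; not the evaluation code of the study.
    """
    pred = np.asarray(pred, dtype=bool)
    target = np.asarray(target, dtype=bool)
    if pred.shape != target.shape:
        raise ValueError("pred and target must have the same shape")

    # Confusion-matrix counts for the positive (forest) class.
    tp = int(np.logical_and(pred, target).sum())
    fp = int(np.logical_and(pred, ~target).sum())
    fn = int(np.logical_and(~pred, target).sum())

    # Guard against empty denominators (e.g. masks with no positive pixels).
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    iou = tp / (tp + fp + fn) if (tp + fp + fn) else 0.0

    return {"precision": precision, "recall": recall, "f1": f1, "iou": iou}


if __name__ == "__main__":
    predicted = [[1, 1], [0, 0]]
    reference = [[1, 0], [1, 0]]
    print(binary_mask_metrics(predicted, reference))
```

For a single pair of masks, F1 and IoU are tied by IoU = F1 / (2 - F1). Scores averaged over many images need not satisfy this identity exactly.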

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Illarionova, S.; Shadrin, D.; Ignatiev, V.; Shayakhmetov, S.; Trekin, A.; Oseledets, I. Augmentation-Based Methodology for Enhancement of Trees Map Detalization on a Large Scale. Remote Sens. 2022, 14, 2281. https://doi.org/10.3390/rs14092281
