Remote Sensing in Agriculture—Accomplishments, Limitations, and Opportunities

Abstract

1. Introduction

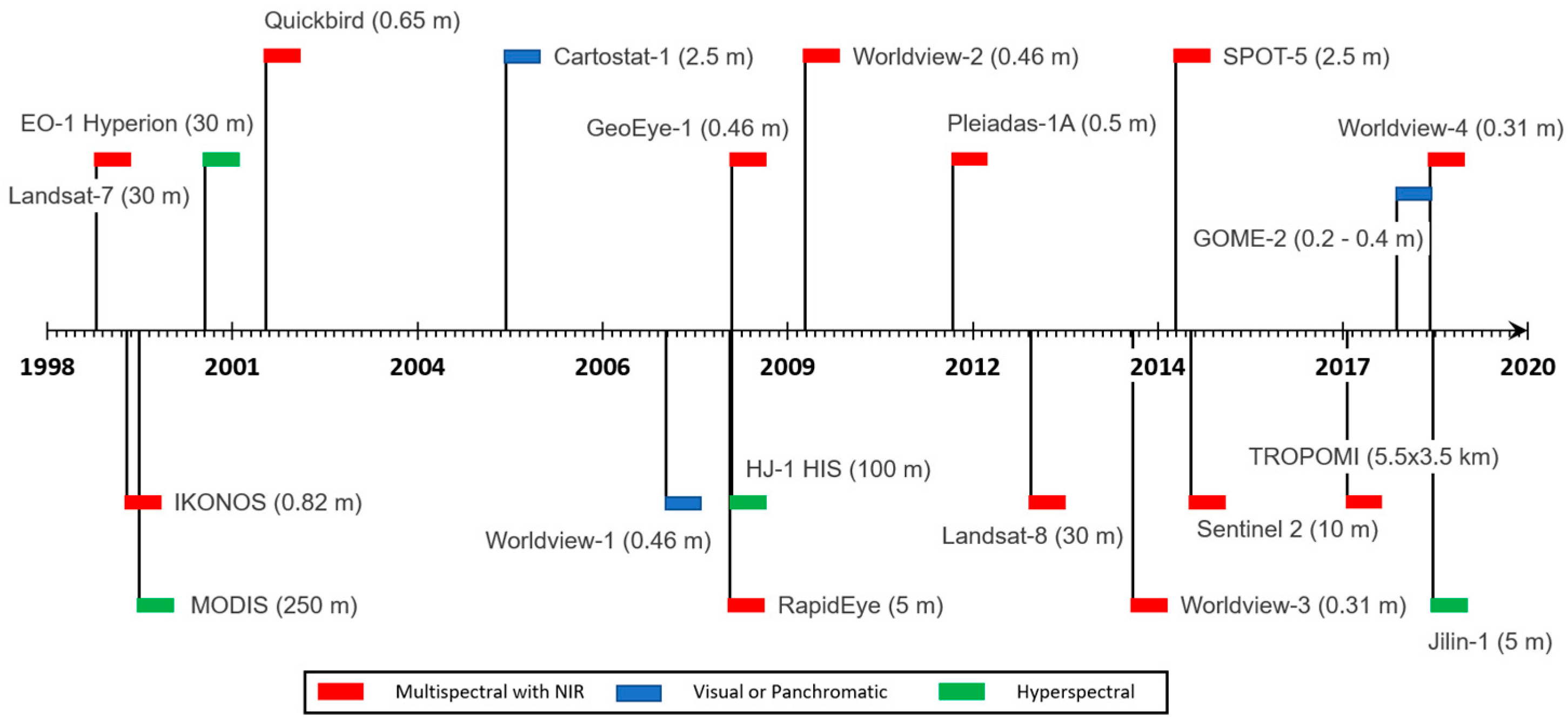

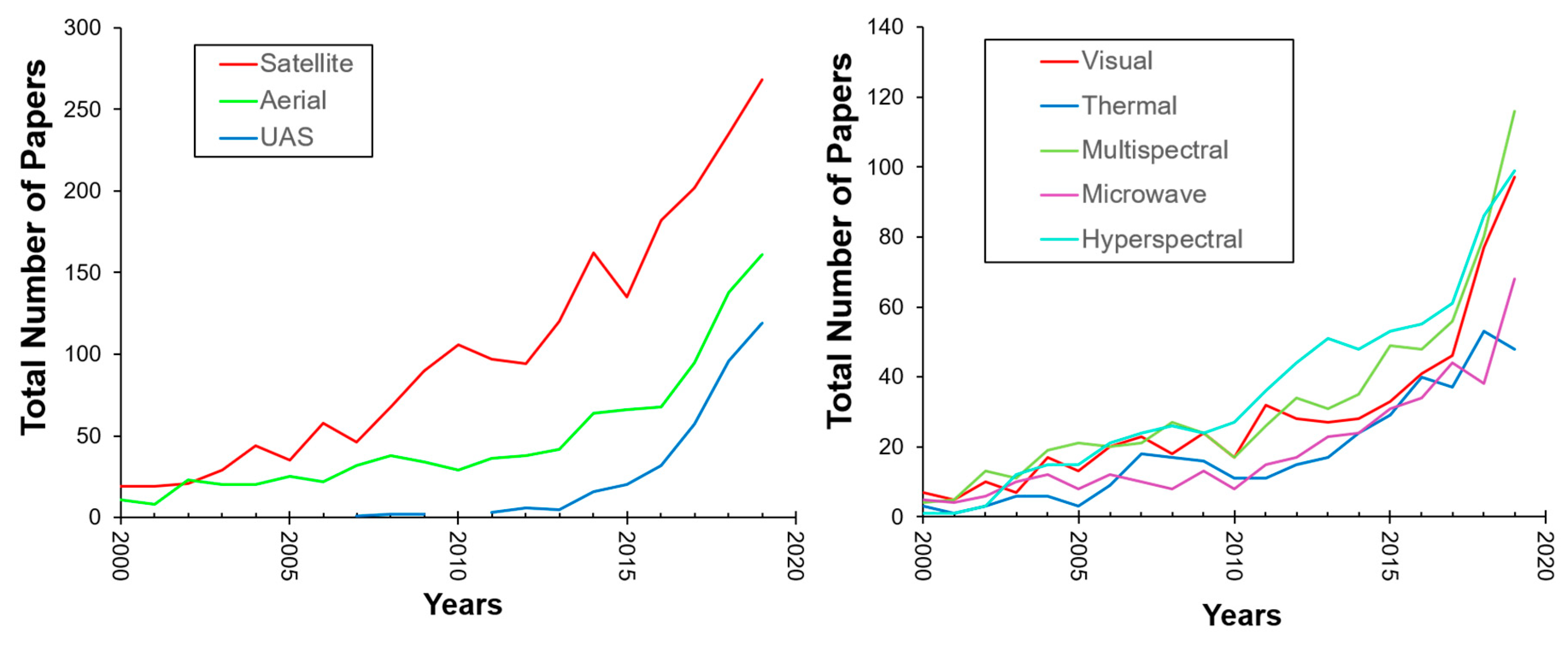

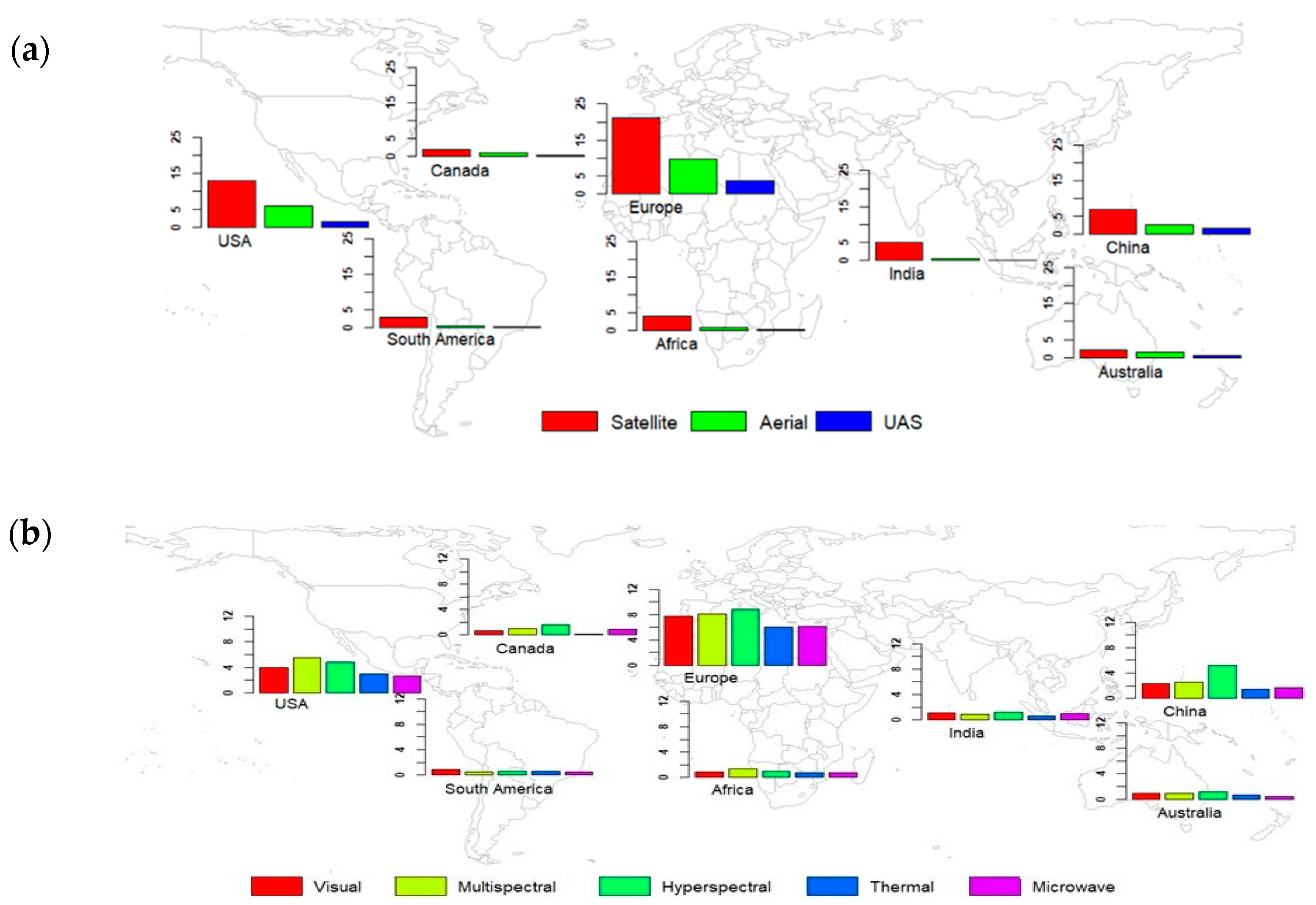

2. Remote Sensing Technologies in Agriculture: A Global Perspective on Past and Present Trends

2.1. Temporal Trends

2.2. Geographical Distribution

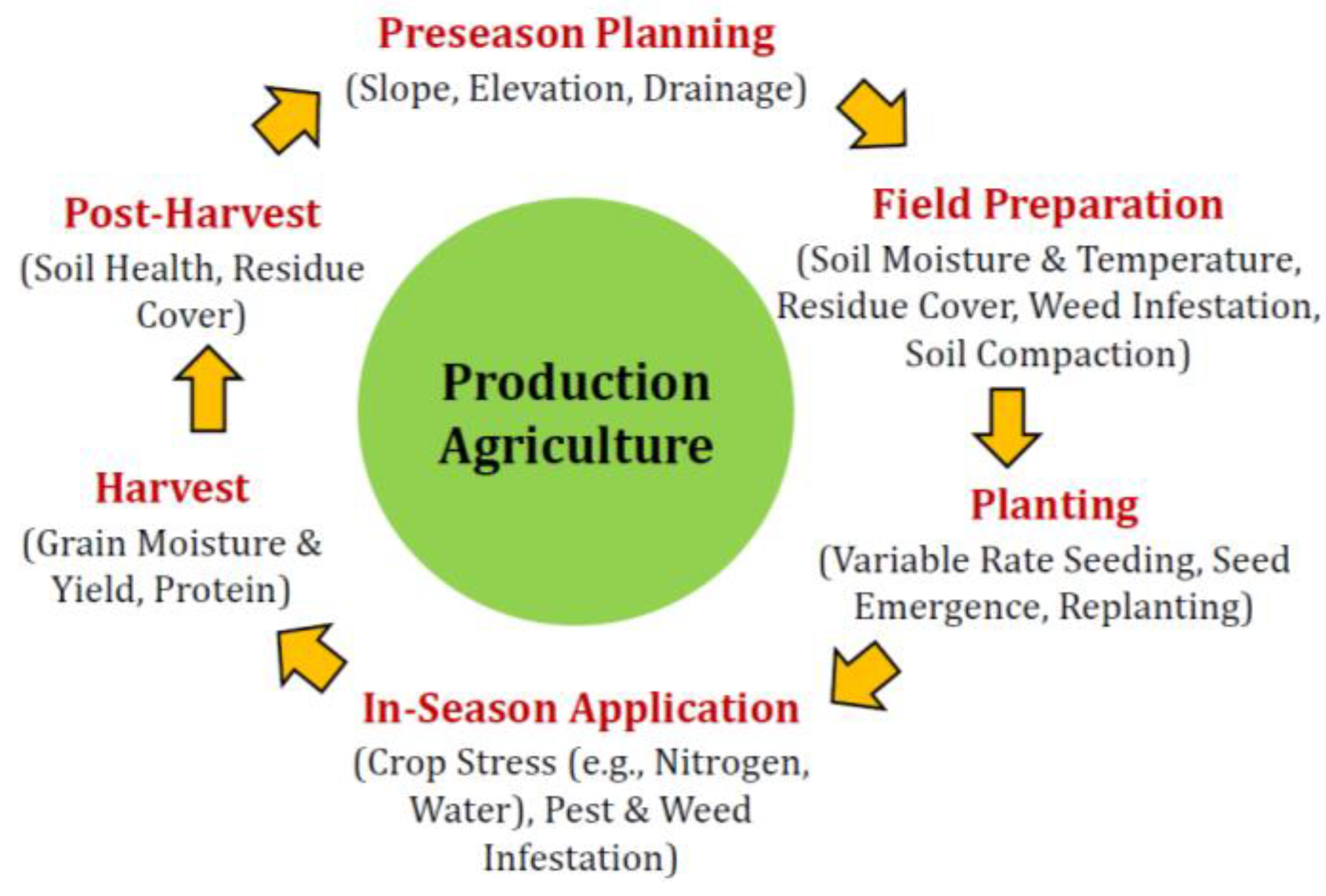

3. Remote Sensing Applications in Precision Agriculture

3.1. Linking Remote Sensing Observations to Variables of Interest in Agriculture

3.2. Remote Sensing Observations to Variables of Interest in Agriculture: A Role of Resolution

3.3. Remote Sensing Applications in Production Agriculture

3.3.1. Preseason Planning

3.3.2. Field Preparation

3.3.3. Planting

3.3.4. In-Season Crop Health Monitoring

3.3.5. Harvest

3.3.6. Post-Harvest

4. Remote Sensing for Precision Agriculture: Challenges, Limitations, and Opportunities

5. Conclusions

- LiDAR-derived data can offer an accurate representation of topography, but recent photogrammetry approaches using visual images collected by UASs have also shown promise for characterizing within-field variability in topography.

- High-resolution thermal imagery has been found useful for detecting temperature differences between soil surfaces directly over a drain line and those between drain lines, and can therefore help detect subsurface tile drain lines, a significant advantage over VIS and multispectral imagery.

- A majority of prior RS studies on soil moisture have focused on medium- to coarse-resolution multispectral, thermal, and hyperspectral imagery and were conducted at agricultural landscape scales larger than the field level. With advancements in data analytics and UAS technology, recent work has focused on downscaling satellite-based soil moisture estimates and on applying UASs to high-resolution soil moisture mapping.

- Unlike other aspects of production agriculture, the applications of RS for soil compaction and grain quality monitoring have been less explored and deserve further investigation.

- Advanced computer vision algorithms and analytics applied to high-resolution visual imagery have provided opportunities to (1) quantify crop emergence and spacing, as well as other important crop features, and (2) identify and classify weeds and crop diseases.

- Most of the existing RS studies focused on nitrogen stress and yield assessment have been based on empirical approaches. Further studies should focus on leveraging RS data with crop modeling to understand and forecast crop dynamics, including N stresses and yields.

- Thermal RS offers advantages over visual and multispectral RS in the early detection of crop disease.

- Prior RS work has focused mainly on assessing crop residues at a landscape scale. Site-specific residue management decisions can benefit from high-resolution RS data.
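
To make the notion of an "empirical approach" to yield assessment concrete, the following is a minimal sketch rather than a method taken from any study summarized here. It assumes a single vegetation index (NDVI, computed from near-infrared and red reflectance) and a simple linear regression against plot-level yield. The function names, reflectance values, and yield values are all hypothetical, and the yields are synthetic and constructed to be exactly linear in the index.

```python
import numpy as np


def ndvi(nir, red):
    """Normalized Difference Vegetation Index from NIR and red reflectance."""
    nir = np.asarray(nir, dtype=float)
    red = np.asarray(red, dtype=float)
    return (nir - red) / (nir + red)


def fit_empirical_yield_model(index_values, observed_yield):
    """Ordinary least-squares line: yield ~ slope * index + intercept."""
    slope, intercept = np.polyfit(index_values, observed_yield, deg=1)
    return slope, intercept


# Hypothetical plot-level reflectances (illustrative values only).
nir = np.array([0.40, 0.45, 0.50, 0.55, 0.60])
red = np.array([0.10, 0.09, 0.08, 0.07, 0.06])
vi = ndvi(nir, red)

# Synthetic yields, exactly linear in the index by construction (not real data).
plot_yield = 2.0 + 10.0 * vi

slope, intercept = fit_empirical_yield_model(vi, plot_yield)
predicted_yield = slope * vi + intercept
```

A relationship fitted this way describes only the fields and season it was calibrated on, which is one reason the conclusions recommend combining RS data with crop models to understand and forecast crop dynamics.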

Author Contributions

Funding

Conflicts of Interest

References

- Bauer, M.E.; Cipra, J.E. Identification of agricultural crops by computer processing of ERTS-MSS data. LARS Tech. Rep. Pap. 1973, 20, 205–212. [Google Scholar]

- Yu, Z.; Cao, Z.; Wu, X.; Bai, X.; Qin, Y.; Zhuo, W.; Xiao, Y.; Zhang, X.; Xue, H. Automatic image-based detection technology for two critical growth stages of maize: Emergence and three-leaf stage. Agric. For. Meteorol. 2013, 174, 65–84. [Google Scholar] [CrossRef]

- Jin, X.; Liu, S.; Baret, F.; Hemerlé, M.; Comar, A. Estimates of plant density of wheat crops at emergence from very low altitude UAV imagery. Remote Sens. Environ. 2017, 198, 105–114. [Google Scholar] [CrossRef]

- Gnädinger, F.; Schmidhalter, U. Digital counts of maize plants by Unmanned Aerial Vehicles (UAVs). Remote Sens. 2017, 9, 544. [Google Scholar] [CrossRef]

- Varela, S.; Dhodda, P.R.; Hsu, W.H.; Prasad, P.V.V.; Assefa, Y.; Peralta, N.R.; Griffin, T.; Sharda, A.; Ferguson, A.; Ciampitti, I.A. Early-season stand count determination in Corn via integration of imagery from unmanned aerial systems (UAS) and supervised learning techniques. Remote Sens. 2018, 10, 343. [Google Scholar] [CrossRef]

- Fernandez-Ordoñez, Y.M.; Soria-Ruiz, J. Maize crop yield estimation with remote sensing and empirical models. In Proceedings of the 2017 IEEE International Geoscience and Remote Sensing Symposium (IGARSS), Fort Worth, TX, USA, 23–28 July 2017; pp. 3035–3038. [Google Scholar] [CrossRef]

- Yao, X.; Wang, N.; Liu, Y.; Cheng, T.; Tian, Y.; Chen, Q.; Zhu, Y. Estimation of wheat LAI at middle to high levels using unmanned aerial vehicle narrowband multispectral imagery. Remote Sens. 2017, 9, 1304. [Google Scholar] [CrossRef]

- Calderón, R.; Navas-Cortés, J.A.; Lucena, C.; Zarco-Tejada, P.J. High-resolution airborne hyperspectral and thermal imagery for early detection of Verticillium wilt of olive using fluorescence, temperature and narrow-band spectral indices. Remote Sens. Environ. 2013, 139, 231–245. [Google Scholar] [CrossRef]

- Khanal, S.; Fulton, J.; Shearer, S. An overview of current and potential applications of thermal remote sensing in precision agriculture. Comput. Electron. Agric. 2017, 139, 22–32. [Google Scholar] [CrossRef]

- Hassan-Esfahani, L.; Torres-Rua, A.; Jensen, A.; Mckee, M. Spatial Root Zone Soil Water Content Estimation in Agricultural Lands Using Bayesian-Based Artificial Neural Networks and High- Resolution Visual, NIR, and Thermal Imagery. Irrig. Drain. 2017, 66, 273–288. [Google Scholar] [CrossRef]

- Park, S.; Ryu, D.; Fuentes, S.; Chung, H.; Hernández-Montes, E.; O’Connell, M. Adaptive estimation of crop water stress in nectarine and peach orchards using high-resolution imagery from an unmanned aerial vehicle (UAV). Remote Sens. 2017, 9, 828. [Google Scholar] [CrossRef]

- Betbeder, J.; Fieuzal, R.; Baup, F. Assimilation of LAI and Dry Biomass Data From Optical and SAR Images Into an Agro-Meteorological Model to Estimate Soybean Yield. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2016, 9, 2540–2553. [Google Scholar] [CrossRef]

- Lopez-Sanchez, J.; Vicente-Guijalba, F.; Erten, E.; Campos-Taberner, M.; Garcia-Haro, F. Retrieval of vegetation height in rice fields using polarimetric SAR interferometry with TanDEM-X data. Remote Sens. Environ. 2017, 192, 30–44. [Google Scholar] [CrossRef]

- Gorelick, N.; Hancher, M.; Dixon, M.; Ilyushchenko, S.; Thau, D.; Moore, R. Google Earth Engine: Planetary-scale geospatial analysis for everyone. Remote Sens. Environ. 2017, 202, 18–27. [Google Scholar] [CrossRef]

- Farg, E.; Ramadan, M.N.; Arafat, S.M. Classification of some strategic crops in Egypt using multi remotely sensing sensors and time series analysis. Egypt. J. Remote Sens. Space Sci. 2019, 22, 263–270. [Google Scholar] [CrossRef]

- Habibie, M.I.; Noguchi, R.; Shusuke, M.; Ahamed, T. Land Suitability Analysis for Maize Production in Indonesia Using Satellite Remote Sensing and GIS-Based Multicriteria Decision Support System. GeoJournal 2019, 5. [Google Scholar] [CrossRef]

- Tasumi, M. Estimating evapotranspiration using METRIC model and Landsat data for better understandings of regional hydrology in the western Urmia Lake Basin. Agric. Water Manag. 2019, 226, 105805. [Google Scholar] [CrossRef]

- Xie, Z.; Phinn, S.R.; Game, E.T.; Pannell, D.J.; Hobbs, R.J.; Briggs, P.R.; McDonald-Madden, E. Using Landsat observations (1988–2017) and Google Earth Engine to detect vegetation cover changes in rangelands—A first step towards identifying degraded lands for conservation. Remote Sens. Environ. 2019, 232, 111317. [Google Scholar] [CrossRef]

- Nock, C.A.; Vogt, R.J.; Beisner, B.E. Functional Traits. eLS 2016, 1–8. [Google Scholar] [CrossRef]

- Baker, R.E.; Peña, J.M.; Jayamohan, J.; Jérusalem, A. Mechanistic models versus machine learning, a fight worth fighting for the biological community? Biol. Lett. 2018, 14, 1–4. [Google Scholar] [CrossRef]

- Raun, W.R.; Solie, J.B.; Stone, M.L.; Martin, K.L.; Freeman, K.W.; Mullen, R.W.; Zhang, H.; Schepers, J.S.; Johnson, G.V. Optical sensor-based algorithm for crop nitrogen fertilization. Commun. Soil Sci. Plant Anal. 2005, 36, 2759–2781. [Google Scholar] [CrossRef]

- Bushong, J.T.; Mullock, J.L.; Miller, E.C.; Raun, W.R.; Brian Arnall, D. Evaluation of mid-season sensor based nitrogen fertilizer recommendations for winter wheat using different estimates of yield potential. Precis. Agric. 2016, 17, 470–487. [Google Scholar] [CrossRef]

- Baret, F.; Houlès, V.; Guérif, M. Quantification of plant stress using remote sensing observations and crop models: The case of nitrogen management. J. Exp. Bot. 2007, 58, 869–880. [Google Scholar] [CrossRef] [PubMed]

- Prasad, A.K.; Chai, L.; Singh, R.P.; Kafatos, M. Crop yield estimation model for Iowa using remote sensing and surface parameters. Int. J. Appl. Earth Obs. Geoinf. 2006, 8, 26–33. [Google Scholar] [CrossRef]

- Bustos-Korts, D.; Boer, M.P.; Malosetti, M.; Chapman, S.; Chenu, K.; Zheng, B.; van Eeuwijk, F.A. Combining Crop Growth Modeling and Statistical Genetic Modeling to Evaluate Phenotyping Strategies. Front. Plant Sci. 2019, 10, 1491. [Google Scholar] [CrossRef]

- Estes, L.D.; Bradley, B.A.; Beukes, H.; Hole, D.G.; Lau, M.; Oppenheimer, M.G.; Schulze, R.; Tadross, M.A.; Turner, W.R. Comparing mechanistic and empirical model projections of crop suitability and productivity: Implications for ecological forecasting. Glob. Ecol. Biogeogr. 2013, 22, 1007–1018. [Google Scholar] [CrossRef]

- Hong, M.; Bremer, D.J.; Merwe, D. Thermal Imaging Detects Early Drought Stress in Turfgrass Utilizing Small Unmanned Aircraft Systems. Agrosyst. Geosci. Environ. 2019, 2, 1–9. [Google Scholar] [CrossRef]

- Möller, M.; Alchanatis, V.; Cohen, Y.; Meron, M.; Tsipris, J.; Naor, A.; Ostrovsky, V.; Sprintsin, M.; Cohen, S. Use of thermal and visible imagery for estimating crop water status of irrigated grapevine. J. Exp. Bot. 2007, 58, 827–838. [Google Scholar] [CrossRef]

- Renschler, C.S.; Flanagan, D.C.; Engel, B.A.; Kramer, L.A.; Sudduth, K.A. Site–specific decision–making based on RTK GPS survey and six alternative elevation data sources: Watershed topography and delineation. Trans. ASABE 2002, 45, 1883–1895. [Google Scholar] [CrossRef][Green Version]

- Renschler, C.S.; Flanagan, D.C. Site-specific decision-making based on RTK GPS survey and six alternative elevation data sources: Soil erosion predictions. Trans. ASABE 2008, 51, 413–424. [Google Scholar] [CrossRef]

- Wang, X.; Holland, D.M.; Gudmundsson, G.H. Accurate coastal DEM generation by merging ASTER GDEM and ICESat/GLAS data over Mertz Glacier, Antarctica. Remote Sens. Environ. 2018, 206, 218–230. [Google Scholar] [CrossRef]

- Gesch, D.B.; Oimoen, M.J.; Evans, G.A. Accuracy Assessment of the U.S. Geological Survey National Elevation Dataset, and Comparison with Other Large-Area Elevation Datasets-SRTM and ASTER. US Geol. Surv. Open-File Rep. 2014, 1008, 18. [Google Scholar] [CrossRef]

- Hodgson, M.E.; Jensen, J.R.; Schmidt, L.; Schill, S.; Davis, B. An evaluation of LIDAR- and IFSAR-derived digital elevation models in leaf-on conditions with USGS Level 1 and Level 2 DEMs. Remote Sens. Environ. 2003, 84, 295–308. [Google Scholar] [CrossRef]

- Vaze, J.; Teng, J.; Spencer, G. Impact of DEM accuracy and resolution on topographic indices. Environ. Model. Softw. 2010, 25, 1086–1098. [Google Scholar] [CrossRef]

- Neugirg, F.; Stark, M.; Kaiser, A.; Vlacilova, M.; Della Seta, M.; Vergari, F.; Schmidt, J.; Becht, M.; Haas, F. Erosion processes in calanchi in the Upper Orcia Valley, Southern Tuscany, Italy based on multitemporal high-resolution terrestrial LiDAR and UAV surveys. Geomorphology 2016, 269, 8–22. [Google Scholar] [CrossRef]

- Whitehead, K.; Hugenholtz, C.H. Remote sensing of the environment with small unmanned aircraft systems (UASs), part 1: A review of progress and challenges. J. Unmanned Veh. Syst. 2014, 02, 69–85. [Google Scholar] [CrossRef]

- Allred, B.; Eash, N.; Freeland, R.; Martinez, L.; Wishart, D.B. Effective and efficient agricultural drainage pipe mapping with UAS thermal infrared imagery: A case study. Agric. Water Manag. 2018, 197, 132–137. [Google Scholar] [CrossRef]

- Allred, B.; Martinez, L.; Fessehazion, M.K.; Rouse, G.; Williamson, T.N.; Wishart, D.; Koganti, T.; Featheringill, R. Overall results and key findings on the use of UAV visible-color, multispectral, and thermal infrared imagery to map agricultural drainage pipes. Agric. Water Manag. 2020, 232, 106036. [Google Scholar] [CrossRef]

- Williamson, T.N.; Dobrowolski, E.G.; Meyer, S.M.; Frey, J.W.; Allred, B.J. Delineation of tile-drain networks using thermal and multispectral imagery—Implications for water quantity and quality differences from paired edge-of-field sites. J. Soil Water Conserv. 2018, 74, 1–11. [Google Scholar] [CrossRef]

- Verma, A.K.; Cooke, R.A.; Wendte, L. Mapping Subsurface Drainage Systems with Color Infrared Aerial Photographs; Department of Agricultural Engineering, University of Illinois: Urbana, IL, USA; Champaign, IL, USA, 1996. [Google Scholar]

- Naz, B.S.; Ale, S.; Bowling, L.C. Detecting subsurface drainage systems and estimating drain spacing in intensively managed agricultural landscapes. Agric. Water Manag. 2009, 96, 627–637. [Google Scholar] [CrossRef]

- Smedema, L.K.; Vlotman, W.F.; Rycroft, D. Modern Land Drainage: Planning, Design and Management of Agricultural Drainage Systems; CRC Press: Boca Raton, FL, USA, 2004. [Google Scholar]

- Jensen, J. Remote sensing of soils, minerals, and geomorphology. In Remote Sensing of the Environment; Pearson Education: London, UK, 2007; pp. 457–524. [Google Scholar]

- Mira, M.; Valor, E.; Boluda, R.; Caselles, V.; Coll, C. Influence of the soil moisture effect on the thermal infrared emissivity. Tethys 2007, 4, 3–9. [Google Scholar] [CrossRef]

- Abdel-Hardy, M.; Abdel-Hafez, M.; Karbs, H. Subsurface Drainage Mapping by Airborne Infrared Imagery Techniques. Proc. Okla. Acad. Sci. 1970, 50, 10–18. [Google Scholar]

- Sugg, Z. Assessing US Farm Drainage: Can GIS Lead to Better Estimates of Subsurface Drainage Extent; World Resources Institute: Washington, DC, USA, 2007; p. 20002. [Google Scholar]

- Thayn, J.B.; Campbell, M.; Deloriea, T. Mapping Tile-Drained Agricultural Lands; Institute Geospatial Analsyis Mapping (GEOMAP); Illinois State University: Normal, IL, USA, 2011. [Google Scholar]

- Gökkaya, K.; Budhathoki, M.; Christopher, S.F.; Hanrahan, B.R.; Tank, J.L. Subsurface tile drained area detection using GIS and remote sensing in an agricultural watershed. Ecol. Eng. 2017, 108, 370–379. [Google Scholar] [CrossRef]

- Schwarz, G.E.; Hoos, A.B.; Alexander, R.B.; Smith, R.A. The SPARROW surface water-quality model: Theory, application and user documentation. US Geol. Surv. Tech. Methods Rep. B 2006, 6, 248. [Google Scholar]

- Koch, S.; Bauwe, A.; Lennartz, B. Application of the SWAT model for a tile-drained lowland catchment in North-Eastern Germany on subbasin scale. Water Resour. Manag. 2013, 27, 791–805. [Google Scholar] [CrossRef]

- Naz, B.S.; Bowling, L.C. Automated identification of tile lines from remotely sensed data. Trans. ASABE 2008, 51, 1937–1950. [Google Scholar] [CrossRef]

- Pioneer. Soil Temperature and Corn Emergence; Pioneer: Banora Point, Australia, 2019. [Google Scholar]

- Zhang, K.; Kimball, J.S.; Running, S.W. A review of remote sensing based actual evapotranspiration estimation. WIREs Water 2016, 3, 834–853. [Google Scholar] [CrossRef]

- Carlson, T. An Overview of the ‘Triangle Method’ for Estimating Surface Evapotranspiration and Soil Moisture from Satellite Imagery. Sensors 2007, 7, 1612–1629. [Google Scholar] [CrossRef]

- Zhu, W.; Jia, S.; Lv, A. A Universal Ts-VI Triangle Method for the Continuous Retrieval of Evaporative Fraction From MODIS Products. J. Geophys. Res. Atmos. 2017, 122, 10206–10227. [Google Scholar] [CrossRef]

- Babaeian, E.; Sadeghi, M.; Franz, T.E.; Jones, S.; Tuller, M. Mapping soil moisture with the OPtical TRApezoid Model (OPTRAM) based on long-term MODIS observations. Remote Sens. Environ. 2018, 211, 425–440. [Google Scholar] [CrossRef]

- Verstraeten, W.W.; Veroustraete, F.; Feyen, J. Assessment of Evapotranspiration and Soil Moisture Content Across Different Scales of Observation. Sensors 2008, 8, 70–117. [Google Scholar] [CrossRef]

- Zhang, D.; Zhou, G. Estimation of Soil Moisture from Optical and Thermal Remote Sensing: A Review. Sensors 2016, 16, 1308. [Google Scholar] [CrossRef] [PubMed]

- Chen, S.; She, D.; Zhang, L.; Guo, M.; Liu, X. Spatial downscaling methods of soil moisture based on multisource remote sensing data and its application. Water 2019, 11, 1401. [Google Scholar] [CrossRef]

- Hassan-Esfahani, L.; Torres-Rua, A.; Jensen, A.; McKee, M. Assessment of surface soil moisture using high-resolution multi-spectral imagery and artificial neural networks. Remote Sens. 2015, 7, 2627–2646. [Google Scholar] [CrossRef]

- Aboutalebi, M.; Allen, N.; Torres-Rua, A.F.; McKee, M.; Coopmans, C. Estimation of soil moisture at different soil levels using machine learning techniques and unmanned aerial vehicle (UAV) multispectral imagery. Auton. Air Gr. Sens. Syst. Agric. Optim. Phenotyping IV 2019, 11008, 110080S. [Google Scholar]

- Gao, Z.; Xu, X.; Wang, J.; Yang, H.; Huang, W.; Feng, H. A method of estimating soil moisture based on the linear decomposition of mixture pixels. Math. Comput. Model. 2013, 58, 606–613. [Google Scholar] [CrossRef]

- Soliman, A.; Heck, R.J.; Brenning, A.; Brown, R.; Miller, S. Remote sensing of soil moisture in vineyards using airborne and ground-based thermal inertia data. Remote Sens. 2013, 5, 3729–3748. [Google Scholar] [CrossRef]

- Kalieta, A.L.; Tian, L.F.; Hirschi, M.C. Relationship Between Soil Moisture Content and Soil Surface Reflectance. Trans. ASAE 2005, 48, 1979–1986. [Google Scholar] [CrossRef]

- Peng, J.; Shen, H.; He, S.W.; Wu, J.S. Soil moisture retrieving using hyperspectral data with the application of wavelet analysis. Environ. Earth Sci. 2013, 69, 279–288. [Google Scholar] [CrossRef]

- Mobasheri, M.R.; Amani, M. Soil moisture content assessment based on Landsat 8 red, near-infrared, and thermal channels. J. Appl. Remote Sens. 2016, 10, 026011. [Google Scholar] [CrossRef]

- Amani, M.; Parsian, S.; MirMazloumi, S.M.; Aieneh, O. Two new soil moisture indices based on the NIR-red triangle space of Landsat-8 data. Int. J. Appl. Earth Obs. Geoinf. 2016, 50, 176–186. [Google Scholar] [CrossRef]

- Kulkarni, S.S.; Bajwa, S.G.; Huitink, G. Investigation of the effects of soil compaction in cotton. Am. Soc. Agric. Biol. Eng. 2010, 53, 667–674. [Google Scholar] [CrossRef]

- Wells, L.G.; Stombaugh, T.S.; Shearer, S.A. Application and Assessment of Precision Deep Tillage; American Society of Agricultural and Biological Engineers: St. Joseph, MI, USA, 2013; Volume 1. [Google Scholar]

- Alaoui, A.; Diserens, E. Mapping soil compaction—A review. Curr. Opin. Environ. Sci. Health 2018, 5, 60–66. [Google Scholar] [CrossRef]

- Troldborg, M.; Aalders, I.; Towers, W.; Hallett, P.D.; McKenzie, B.M.; Bengough, A.G.; Lilly, A.; Ball, B.C.; Hough, R.L. Application of Bayesian Belief Networks to quantify and map areas at risk to soil threats: Using soil compaction as an example. Soil Tillage Res. 2013, 132, 56–68. [Google Scholar] [CrossRef]

- Li, B.; Xu, X.; Han, J.; Zhang, L.; Bian, C.; Jin, L.; Liu, J. The estimation of crop emergence in potatoes by UAV RGB imagery. Plant Methods 2019, 15, 1–14. [Google Scholar] [CrossRef] [PubMed]

- Zhao, B.; Zhang, J.; Yang, C.; Zhou, G.; Ding, Y.; Shi, Y.; Zhang, D.; Xie, J.; Liao, Q. Rapeseed seedling stand counting and seeding performance evaluation at two early growth stages based on unmanned aerial vehicle imagery. Front. Plant Sci. 2018, 9, 1–17. [Google Scholar] [CrossRef] [PubMed]

- USDA NASS. United States Department of Agriculture—National Agricultural Statistics Service. 2012 ARMS-Soybean Industry Highlights; 2014; pp. 1–4. Available online: https://www.nass.usda.gov/Surveys/Guide_to_NASS_Surveys/Ag_Resource_Management/ARMS_Soybeans_Factsheet/ARMS_2013_Soybeans.pdf (accessed on 4 October 2020).

- Cilia, C.; Panigada, C.; Rossini, M.; Meroni, M.; Busetto, L.; Amaducci, S.; Boschetti, M.; Picchi, V.; Colombo, R. Nitrogen status assessment for variable rate fertilization in maize through hyperspectral imagery. Remote Sens. 2014, 6, 6549–6565. [Google Scholar] [CrossRef]

- Khanal, S.; Fulton, J.; Douridas, N.; Klopfenstein, A.; Shearer, S. Integrating aerial images for in-season nitrogen management in a corn field. Comput. Electron. Agric. 2018, 148, 121–131. [Google Scholar] [CrossRef]

- Gabriel, J.L.; Zarco-Tejada, P.J.; López-Herrera, P.J.; Pérez-Martín, E.; Alonso-Ayuso, M.; Quemada, M. Airborne and ground level sensors for monitoring nitrogen status in a maize crop. Biosyst. Eng. 2017, 160, 124–133. [Google Scholar] [CrossRef]

- Miao, Y.; Mulla, D.J.; Randall, G.W.; Vetsch, J.A.; Vintila, R. Combining chlorophyll meter readings and high spatial resolution remote sensing images for in-season site-specific nitrogen management of corn. Precis. Agric. 2009, 10, 45–62. [Google Scholar] [CrossRef]

- Muñoz-Huerta, R.F.; Guevara-Gonzalez, R.G.; Contreras-Medina, L.M.; Torres-Pacheco, I.; Prado-Olivarez, J.; Ocampo-Velazquez, R.V. A Review of Methods for Sensing the Nitrogen Status in Plants: Advantages, Disadvantages and Recent Advances. Sensors 2013, 13, 10823–10843. [Google Scholar] [CrossRef]

- Jin, Z.; Archontoulis, S.V.; Lobell, D.B. How much will precision nitrogen management pay off? An evaluation based on simulating thousands of corn fields over the US Corn-Belt. Field Crop. Res. 2019, 240, 12–22. [Google Scholar] [CrossRef]

- West, J.S.; Bravo, C.; Oberti, R.; Lemaire, D.; Moshou, D.; Alastair, M.H. The Potential of Optical Canopy Measurement for Targeted Control of Field Crop Diseases. Annu. Rev. Phytopathol. 2003, 41, 593–614. [Google Scholar] [CrossRef]

- Lorenzen, B.; Jensen, A. Changes in leaf spectral properties induced in barley by cereal powdery mildew. Remote Sens. Environ. 1989, 27, 201–209. [Google Scholar] [CrossRef]

- Franke, J.; Menz, G. Multi-temporal wheat disease detection by multi-spectral remote sensing. Precis. Agric. 2007, 8, 161–172. [Google Scholar] [CrossRef]

- Mahlein, A. Present and Future Trends in Plant Disease Detection. Am. Phytopathol. Soc. 2016, 241–251. [Google Scholar]

- Mahlein, A.K.; Rumpf, T.; Welke, P.; Dehne, H.W.; Plümer, L.; Steiner, U.; Oerke, E.C. Development of spectral indices for detecting and identifying plant diseases. Remote Sens. Environ. 2013, 128, 21–30. [Google Scholar] [CrossRef]

- Mohanty, S.P.; Hughes, D.P.; Salathé, M. Using deep learning for image-based plant disease detection. Front. Plant Sci. 2016, 7, 1–10. [Google Scholar] [CrossRef]

- Barbedo, J.G.A. A review on the main challenges in automatic plant disease identification based on visible range images. Biosyst. Eng. 2016, 144, 52–60. [Google Scholar] [CrossRef]

- Ramcharan, A.; Baranowski, K.; Mccloskey, P.; Ahmed, B.; Legg, J.; Hughes, D.P. Deep Learning for Image-Based Cassava Disease Detection. Front. Plant Sci. 2017, 8, 1–7. [Google Scholar] [CrossRef]

- Stoll, M.; Schultz, H.R.; Baecker, G.; Berkelmann-loehnertz, B. Early pathogen detection under different water status and the assessment of spray application in vineyards through the use of thermal imagery. Precis. Agric. 2008, 9, 407–417. [Google Scholar] [CrossRef]

- Wu, D.; Feng, L.; Zhang, C.; He, Y. Early detection of Botrytis Cinerea on eggplant leaves based on visible and near-infrared spectroscopy. Trans. ASABE 2008, 51, 1133–1139. [Google Scholar] [CrossRef]

- Dammer, K.H.; Möller, B.; Rodemann, B.; Heppner, D. Detection of head blight (Fusarium ssp.) in winter wheat by color and multispectral image analyses. Crop. Prot. 2011, 30, 420–428. [Google Scholar] [CrossRef]

- Kerkech, M.; Hafiane, A.; Canals, R. Deep leaning approach with colorimetric spaces and vegetation indices for vine diseases detection in UAV images. Comput. Electron. Agric. 2018, 155, 237–243. [Google Scholar] [CrossRef]

- Sugiura, R.; Tsuda, S.; Tsuji, H.; Murakami, N. Virus-Infected Plant Detection in Potato Seed Production Field by UAV Imagery Ryo; American Society of Agricultural and Biological Engineers: St. Joseph, MI, USA, 2018; pp. 2–6. [Google Scholar]

- Su, J.; Liu, C.; Coombes, M.; Hu, X.; Wang, C.; Xu, X.; Li, Q.; Guo, L.; Chen, W. Wheat yellow rust monitoring by learning from multispectral UAV aerial imagery. Comput. Electron. Agric. 2018, 155, 157–166. [Google Scholar] [CrossRef]

- Johnson, G.A.; Mortensen, D.A.; Martin, A.R. A simulation of herbicide use based on weed spatial distribution. Weed Res. 1995, 35, 197–205. [Google Scholar] [CrossRef]

- Rew, L.J.; Cussans, G.W.; Mugglestone, M.A.; Miller, P.C.H. A technique for mapping the spatial distribution of Elymus repots, with estimates of the potential reduction in herbicide usage from patch spraying. Weed Res. 1996, 36, 283–292. [Google Scholar] [CrossRef]

- Richardson, A.J.; Wiegand, C.L. Distinguishing vegetation from soil background information. Photogramm. Eng. Remote Sens. 1977, 43, 1541–1552. [Google Scholar]

- Menges, R.M.; Nixon, P.R.; Richardson, A.J. Light reflectance and remote sensing of weeds in agronomic and horticultural crops. Weed Sci. 1985, 33, 569–581. [Google Scholar] [CrossRef]

- Richardson, A.J.; Menges, R.M.; Nixon, P.R. Distinguishing weed from crop plants using video remote sensing. Photogramm. Eng. Remote Sens. 1985, 51, 1785–1790. [Google Scholar]

- Stafford, J.V.; Miller, P.C.H. Spatially variable treatment of weed patches. In Proceedings of the Third International Conference on Precision Agriculture, Minneapolis, MN, USA, 23–26 June 1996; pp. 465–474. [Google Scholar]

- Guyer, D.E.; Miles, G.E.; Schreiber, M.M.; Mitchell, O.R.; Vanderbilt, V.C. Machine vision and image processing for plant identification. Trans. ASAE 1986, 29, 1500–1507. [Google Scholar] [CrossRef]

- Shearer, S.A.; Holmes, R.G. Plant identification using color co-occurrence matrices. Trans. ASAE 1990, 33, 1237–1244. [Google Scholar] [CrossRef]

- Michaud, M.-A.; Watts, C.; Percival, D. Precision pesticide delivery based on aerial spectral imaging. Can. Biosyst. Eng. 2008, 29–215. [Google Scholar] [CrossRef]

- Brown, R.B.; Bennett, K.; Goudy, H.; Tardif, F. Site specific weed management with a direct-injection precision sprayer. In Proceedings of the 2000 ASAE Annual International Meeting, Milwaukee, WI, USA, 9–12 July 2000; pp. 1–13. [Google Scholar]

- Anderson, G.L.; Everitt, J.H.; Richardson, A.J.; Escobar, D.E. Using satellite data to map false broomweed (Ericameria austrotexana) infestations on south Texas rangelands. Weed Technol. 1993, 7, 865–871. [Google Scholar] [CrossRef]

- López-Granados, F.; Torres-Sánchez, J.; De Castro, A.I.; Serrano-Pérez, A.; Mesas-Carrascosa, F.J.; Peña, J.M. Object-based early monitoring of a grass weed in a grass crop using high resolution UAV imagery. Agron. Sustain. Dev. 2016, 36, 1–12. [Google Scholar] [CrossRef]

- Torres-Sánchez, J.; Peña, J.M.; de Castro, A.I.; López-Granados, F. Multi-temporal mapping of the vegetation fraction in early-season wheat fields using images from UAV. Comput. Electron. Agric. 2014, 103, 104–113. [Google Scholar] [CrossRef]

- Peña, J.M.; Torres-Sánchez, J.; de Castro, A.I.; Kelly, M.; López-Granados, F. Weed mapping in early-season maize fields using object-based analysis of unmanned aerial vehicle (UAV) images. PLoS ONE 2013, 8, e77151. [Google Scholar]

- Olsen, A.; Konovalov, D.A.; Philippa, B.; Ridd, P.; Wood, J.C.; Johns, J.; Banks, W.; Girgenti, B.; Kenny, O.; Whinney, J.; et al. DeepWeeds: A Multiclass Weed Species Image Dataset for Deep Learning. Sci. Rep. 2019, 9, 2058. [Google Scholar] [CrossRef]

- Wang, A.; Zhang, W.; Wei, X. A review on weed detection using ground-based machine vision and image processing techniques. Comput. Electron. Agric. 2019, 158, 226–240. [Google Scholar] [CrossRef]

- De Castro, A.I.; Ehsani, R.; Ploetz, R.; Crane, J.H.; Abdulridha, J. Optimum spectral and geometric parameters for early detection of laurel wilt disease in avocado. Remote Sens. Environ. 2015, 171, 33–44. [Google Scholar] [CrossRef]

- Gibson, K.D.; Dirks, R.; Medlin, C.R.; Johnston, L. Detection of Weed Species in Soybean Using Multispectral Digital Images. Weed Technol. 2004, 18, 742–749. [Google Scholar] [CrossRef]

- Bern, C.J.; Quick, G.; Herum, F.L. Harvesting and postharvest management. In Corn; AACC International Press: Washington, DC, USA, 2019; pp. 109–145. [Google Scholar]

- Diker, K.; Heermann, D.F.; Bausch, W.C.; Wright, D.K. Relationship between yield monitor and remotely sensed data for corn. In Proceedings of the 2002 ASAE Annual Meeting, Chicago, IL, USA, 28–31 July 2002; p. 1. [Google Scholar]

- Geipel, J.; Link, J.; Claupein, W. Combined spectral and spatial modeling of corn yield based on aerial images and crop surface models acquired with an unmanned aircraft system. Remote Sens. 2014, 6, 10335–10355. [Google Scholar] [CrossRef]

- Du, M.; Noguchi, N. Monitoring of wheat growth status and mapping of wheat yield’s within-field spatial variations using color images acquired from UAV-camera System. Remote Sens. 2017, 9, 289. [Google Scholar] [CrossRef]

- Khanal, S.; Fulton, J.; Klopfenstein, A.; Douridas, N.; Shearer, S. Integration of high resolution remotely sensed data and machine learning techniques for spatial prediction of soil properties and corn yield. Comput. Electron. Agric. 2018, 153, 213–225. [Google Scholar] [CrossRef]

- Lobell, D.B.; Thau, D.; Seifert, C.; Engle, E.; Little, B. A scalable satellite-based crop yield mapper. Remote Sens. Environ. 2015, 164, 324–333. [Google Scholar] [CrossRef]

- Doraiswamy, P.C.; Sinclair, T.R.; Hollinger, S.; Akhmedov, B.; Stern, A.; Prueger, J. Application of MODIS derived parameters for regional crop yield assessment. Remote Sens. Environ. 2005, 97, 192–202. [Google Scholar] [CrossRef]

- Weiss, M.; Jacob, F.; Duveiller, G. Remote sensing for agricultural applications: A meta-review. Remote Sens. Environ. 2020, 236, 111402. [Google Scholar] [CrossRef]

- Wang, Z.J.; Wang, J.H.; Liu, L.Y.; Huang, W.J.; Zhao, C.J.; Wang, C.Z. Prediction of grain protein content in winter wheat (Triticum aestivum L.) using plant pigment ratio (PPR). Field Crop. Res. 2004, 90, 311–321. [Google Scholar] [CrossRef]

- Wang, L.; Tian, Y.; Yao, X.; Zhu, Y.; Cao, W. Predicting grain yield and protein content in wheat by fusing multi-sensor and multi-temporal remote-sensing images. Field Crop. Res. 2014, 164, 178–188. [Google Scholar] [CrossRef]

- Jensen, T.; Apan, A.; Young, F.; Zeller, L. Detecting the attributes of a wheat crop using digital imagery acquired from a low-altitude platform. Comput. Electron. Agric. 2007, 59, 66–77. [Google Scholar] [CrossRef]

- Shah, A.; Darr, M.; Khanal, S.; Lal, R. A techno-environmental overview of a corn stover biomass feedstock supply chain for cellulosic biorefineries. Biofuels 2017, 8, 59–69. [Google Scholar] [CrossRef]

- Sharma, V.; Irmak, S.; Kilic, A.; Sharma, V.; Gilley, J.E.; Meyer, G.E.; Knezevic, S.Z.; Marx, D. Quantification and Mapping of Surface Residue Cover for Maize and Soybean Fields in South Central Nebraska. Trans. ASABE 2016, 59, 925–939. [Google Scholar] [CrossRef]

- Galloza, M.S.; Crawford, M.M.; Heathman, G.C. Crop residue modeling and mapping using landsat, ALI, hyperion and airborne remote sensing data. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2013, 6, 446–456. [Google Scholar] [CrossRef]

- Sullivan, D.G.; Shaw, J.N.; Mask, P.L.; Rickman, D.; Guertal, E.A.; Luvall, J.; Wersinger, J.M. Evaluation of Multispectral Data for Rapid Assessment of Wheat Straw Residue Cover. Soil Sci. Soc. Am. J. 2004. [Google Scholar] [CrossRef]

- Daughtry, C. Discriminating Crop Residues from Soil by Shortwave Infrared Reflectance. Agron. J. 2001, 93, 125. [Google Scholar] [CrossRef]

- Higgins, S.; Schellberg, J.; Bailey, J.S. Improving productivity and increasing the efficiency of soil nutrient management on grassland farms in the UK and Ireland using precision agriculture technology. Eur. J. Agron. 2019, 106, 67–74.

- Colaço, A.F.; Bramley, R.G.V. Do crop sensors promote improved nitrogen management in grain crops? Field Crop. Res. 2018, 218, 126–140.

- Vidal, M.; Amigo, J.M. Pre-processing of hyperspectral images. Essential steps before image analysis. Chemom. Intell. Lab. Syst. 2012, 117, 138–148.

- Jia, B.; Wang, W.; Ni, X.; Lawrence, K.C.; Zhuang, H.; Yoon, S.C.; Gao, Z. Essential processing methods of hyperspectral images of agricultural and food products. Chemom. Intell. Lab. Syst. 2020, 198, 103936.

- Pandey, P.C.; Balzter, H.; Srivastava, P.K.; Petropoulos, G.P.; Bhattacharya, B. Future Perspectives and Challenges in Hyperspectral Remote Sensing. Hyperspectral Remote Sens. 2020, 7.

- Woodcock, C.E.; Allen, R.; Anderson, M.; Belward, A.; Bindschadler, R.; Cohen, W.; Gao, F.; Goward, S.N.; Helder, D.; Helmer, E.; et al. Free access to landsat imagery. Science 2008, 320, 1011.

- Drusch, M.; Del Bello, U.; Carlier, S.; Colin, O.; Fernandez, V.; Gascon, F.; Hoersch, B.; Isola, C.; Laberinti, P.; Martimort, P.; et al. Sentinel-2: ESA’s Optical High-Resolution Mission for GMES Operational Services. Remote Sens. Environ. 2012, 120, 25–36.

- Drone Apps. Price Wars: Counting the Cost of Drones, Planes and Satellites. 2015. Available online: https://droneapps.co/price-wars-the-cost-of-drones-planes-and-satellites/ (accessed on 1 January 2020).

- LandInfo. Buying Satellite Imagery: Pricing Information for High Resolution Satellite Imagery; LLC LW: Miami, FL, USA, 2014.

- Hulley, G.C.; Hook, S.J.; Fisher, J.B.; Lee, C. Ecostress, a NASA Earth-Ventures instrument for studying links between the water cycle and plant health over the diurnal cycle. In Proceedings of the 2017 IEEE International Geoscience and Remote Sensing Symposium (IGARSS), Fort Worth, TX, USA, 23–28 July 2017; Volume 2, pp. 11–13.

- Lagouarde, J.P.; Bhattacharya, B.K.; Crébassol, P.; Gamet, P.; Babu, S.S.; Boulet, G.; Briottet, X.; Buddhiraju, K.M.; Cherchali, S.; Dadou, I.; et al. The Indian-French Trishna mission: Earth observation in the thermal infrared with high spatio-temporal resolution. Int. Geosci. Remote Sens. Symp. 2018, 4078–4081.

- Guanter, L.; Kaufmann, H.; Segl, K.; Foerster, S.; Rogass, C.; Chabrillat, S.; Kuester, T.; Hollstein, A.; Rossner, G.; Chlebek, C.; et al. The EnMAP spaceborne imaging spectroscopy mission for earth observation. Remote Sens. 2015, 7, 8830–8857.

- Song, L.; Guanter, L.; Guan, K.; You, L.; Huete, A.; Ju, W.; Zhang, Y. Satellite sun-induced chlorophyll fluorescence detects early response of winter wheat to heat stress in the Indian Indo-Gangetic Plains. Glob. Chang. Biol. 2018, 24, 4023–4037.

- Guan, K.; Wu, J.; Kimball, J.S.; Anderson, M.C.; Frolking, S.; Li, B.; Hain, C.R.; Lobell, D.B. The shared and unique values of optical, fluorescence, thermal and microwave satellite data for estimating large-scale crop yields. Remote Sens. Environ. 2017, 199, 333–349.

- Nasrallah, A.; Baghdadi, N.; El Hajj, M.; Darwish, T.; Belhouchette, H.; Faour, G.; Darwich, S.; Mhawej, M. Sentinel-1 data for winter wheat phenology monitoring and mapping. Remote Sens. 2019, 11, 2228.

- de Gouw, J.A.; Veefkind, J.P.; Roosenbrand, E.; Dix, B.; Lin, J.C.; Landgraf, J.; Levelt, P.F. Daily Satellite Observations of Methane from Oil and Gas Production Regions in the United States. Sci. Rep. 2020, 10, 1–10.

- Ruwaimana, M.; Satyanarayana, B.; Otero, V.; Muslim, A.M.; Muhammad Syafiq, A.; Ibrahim, S.; Raymaekers, D.; Koedam, N.; Dahdouh-Guebas, F. The advantages of using drones over space-borne imagery in the mapping of mangrove forests. PLoS ONE 2018, 13, 1–22.

- Barsi, J.A.; Schott, J.R.; Hook, S.J.; Raqueno, N.G.; Markham, B.L.; Radocinski, R.G. Landsat-8 thermal infrared sensor (TIRS) vicarious radiometric calibration. Remote Sens. 2014, 6, 11607–11626.

- Wang, S.; Baum, A.; Zarco-Tejada, P.J.; Dam-Hansen, C.; Thorseth, A.; Bauer-Gottwein, P.; Bandini, F.; Garcia, M. Unmanned Aerial System multispectral mapping for low and variable solar irradiance conditions: Potential of tensor decomposition. ISPRS J. Photogramm. Remote Sens. 2019, 155, 58–71.

- Matese, A.; Toscano, P.; Di Gennaro, S.F.; Genesio, L.; Vaccari, F.P.; Primicerio, J.; Belli, C.; Zaldei, A.; Bianconi, R.; Gioli, B. Intercomparison of UAV, aircraft and satellite remote sensing platforms for precision viticulture. Remote Sens. 2015, 7, 2971–2990.

- Yang, C.; Yu, M.; Hu, F.; Jiang, Y.; Li, Y. Utilizing Cloud Computing to address big geospatial data challenges. Comput. Environ. Urban Syst. 2017, 61, 120–128.

- Heung, B.; Ho, H.C.; Zhang, J.; Knudby, A.; Bulmer, C.E.; Schmidt, M.G. An overview and comparison of machine-learning techniques for classification purposes in digital soil mapping. Geoderma 2016, 265, 62–77.

- Verrelst, J.; Malenovský, Z.; Van der Tol, C.; Camps-Valls, G.; Gastellu-Etchegorry, J.P.; Lewis, P.; North, P.; Moreno, J. Quantifying Vegetation Biophysical Variables from Imaging Spectroscopy Data: A Review on Retrieval Methods. Surv. Geophys. 2019, 40, 589–629.

- Ali, I.; Greifeneder, F.; Stamenkovic, J.; Neumann, M.; Notarnicola, C. Review of machine learning approaches for biomass and soil moisture retrievals from remote sensing data. Remote Sens. 2015, 7, 16398–16421.

- Kussul, N.; Lavreniuk, M.; Skakun, S.; Shelestov, A. Deep Learning Classification of Land Cover and Crop Types Using Remote Sensing Data. IEEE Geosci. Remote Sens. Lett. 2017, 14, 778–782.

- Wolfert, S.; Ge, L.; Verdouw, C.; Bogaardt, M.-J. Big Data in Smart Farming—A review. Agric. Syst. 2017, 153, 69–80.

- Reichstein, M.; Camps-Valls, G.; Stevens, B.; Jung, M.; Denzler, J.; Carvalhais, N. Deep learning and process understanding for data-driven Earth system science. Nature 2019, 566, 195–204.

- Yang, Z.; Hu, L.; Yu, G.; Shrestha, R.; Di, L.; Boryan, C.; Mueller, R. Web service-based SMAP soil moisture data visualization, dissemination and analytics based on vegscape framwork. In Proceedings of the 2016 IEEE International Geoscience and Remote Sensing Symposium (IGARSS), Beijing, China, 10–15 July 2016; pp. 3624–3627.

- De Filippis, T.; Rocchi, L.; Fiorillo, E.; Genesio, L. A WebGIS application for precision viticulture: From research to operative practices. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci.—ISPRS Arch. 2010, 38, 4.

- NASS, U. VegScape—Vegetation Condition Explorer 2020. Available online: https://nassgeodata.gmu.edu/VegScape/ (accessed on 4 October 2020).

- Han, W.; Yang, Z.; Di, L.; Mueller, R. CropScape: A Web service based application for exploring and disseminating US conterminous geospatial cropland data products for decision support. Comput. Electron. Agric. 2012, 84, 111–123.

- Wolfert, S.; Goense, D.; Sørensen, C.A.G. A Future Internet Collaboration Platform for Safe and Healthy Food from Farm to Fork. In Proceedings of the 2014 Annual SRII Global Conference 2014, San Jose, CA, USA, 23–25 April 2014; pp. 266–273.

- Ali, M.M.; Al-Ani, A.; Eamus, D.; Tan, D.K.Y. Leaf nitrogen determination using non-destructive techniques–A review. J. Plant Nutr. 2017, 40, 928–953.

- Marino, S.; Alvino, A. Hyperspectral vegetation indices for predicting onion (Allium cepa L.) yield spatial variability. Comput. Electron. Agric. 2015, 116, 109–117.

- Cao, Q.; Miao, Y.; Li, F.; Gao, X.; Liu, B.; Lu, D.; Chen, X. Developing a new Crop Circle active canopy sensor-based precision nitrogen management strategy for winter wheat in North China Plain. Precis. Agric. 2017, 18, 2–18.

| Spatial/Temporal Resolution | Platform/Sensor/Data | Accuracy | Crop/Study Sites | References |
|---|---|---|---|---|
| 3.4 m (LiDAR) and 6 m (IFSAR), both collected in June 2000; 30 m (USGS DEMs from aerial photography collected in 1978 and 1979) | Airborne LiDAR, IFSAR data, USGS Level 1 and Level 2 DEMs based on aerial photography | LiDAR was better than other data sources even when the ground was covered with vegetation (RMSE = 93 cm) | 60% deciduous and pine forest; Swift and Red Bud Creek watersheds, North Carolina, United States | [33] |
| 1 m (LiDAR); 25 m (LiDAR resampled); 25 m (contour and drainage map) | LiDAR DEM; resampled LiDAR DEM; contour- and drainage-map-based DEM | 1 m LiDAR DEM was significantly better than other DEMs | Koondrook-Perricoota forest; New South Wales, Australia | [34] |
| Two-time data acquisition over a small stockpile (June and November) | Visual sensor onboard a UAS | RMSE of vertical difference between UAS-derived DEM and global navigation satellite system (GNSS) points: −0.097 to 0.106 m | Canada | [36] |

| Spatial/Temporal Resolution | Platform/Sensor/Data | Accuracy | Crop/Study Sites | References |
|---|---|---|---|---|
| Resampled to 1 m; taken in 1976, 1998, and 2002 | Aerial photographs from manned aircraft | Overall classification accuracy = 84% to 86% | Corn and soybean cropping; West Lafayette, Indiana | [51] |
| 30 m; two-time acquisition in April 2015 | Multispectral satellite images; Landsat 8 | Classification accuracy = 75% to 94% | Corn and soybean cultivation; Shatto Ditch watershed, Indiana | [48] |
| 3 cm (visual), 11 cm (NIR), 22 cm (thermal); two flights on the same day in June 2017 | Visual, NIR, and TIR sensors onboard a UAS | TIR images detected ~60% of the subsurface drainage | Corn and soybean; Central Ohio | [37] |
| Station interval of 5 cm and depth up to 2 m; one-time data acquisition in each field during winter season of 2017/18 | Ground penetrating radar onboard a movable wheeler | Determination of drainage pipes | Bare fields; Beltsville, Maryland, and Columbus, Ohio | [38] |
| 6 cm (multispectral) and 11 cm (thermal); two separate data collections during spring of 2017 | Multispectral and thermal sensor onboard a UAS | Results verified against ground penetrating radar data | Bare fields with corn residue; Black Creek watershed, Indiana | [39] |

| Spatial/Temporal Resolution | Platform/Sensor/Data | Accuracy Compared to In-Situ Data | Crop/Study Sites | References |
|---|---|---|---|---|
| 30 m; wheat and corn-soybean growing season | Red and NIR (multispectral); Landsat TM | R = 0.84 between model-derived and field-measured soil moisture | Model calibration using winter wheat from Shunyi and Tongzhou, China, and validation using corn-soybean data from Walnut Creek, Iowa, United States | [62] |
| 0.6 m; vine growing season | Thermal sensor onboard a manned aircraft | R = 0.5 and 0.3 between remotely sensed thermal inertia and soil moisture, and field-based thermal inertia and soil moisture, respectively | Vine; Ontario, Canada | [63] |
| Point; corn growing season 2002 | Handheld hyperspectral sensor (i.e., spectroradiometer) | R² = 0.46 to 0.71 for light soil | Corn; Illinois, United States | [64] |
| Point; one-time acquisition on bare land | Handheld hyperspectral sensor | R² > 0.7 | Bare land; Wuhan, China | [65] |
| 0.15 m (multispectral), 0.6 m (thermal); four-time image acquisition in 2013 | Multispectral and thermal sensors onboard a UAS | R² = 0.77 when soil moisture was estimated using remote sensing data in a neural network model | Alfalfa and oats; Scipio, Utah, United States | [60] |
| 30 m; images acquired between 29 April 2013 and 16 September 2014 | Red, NIR, and thermal satellite imagery; Landsat 8 | R = 0.56 to 0.92 between predicted and measured soil moisture content | Several agricultural areas in the US (25 SCAN sites) | [66] |
| 30 m; images acquired between 29 April 2013 and 16 September 2014 | Red and NIR; Landsat 8 | R = 0.67 to 0.74 between predicted and observed soil moisture | Several agricultural areas in the US (25 SCAN sites) | [67] |
| 0.02 and 0.1 m; five-time acquisition in 2017 and 2018 | Multispectral and thermal sensor onboard a UAS | R² = 0.43 to 0.82 between simulated and observed soil water content | Pasture sites in northern and southern Utah, United States | [61] |

| Spatial/Temporal Resolution | Platform/Sensor/Data | Accuracy Compared to In-Situ Data | Crop/Study Sites | References |
|---|---|---|---|---|
| Point; four and three observations in 2003 and 2004, respectively, on cotton fields | Handheld hyperspectral sensor | R = 0.53 with green NDVI; R = 0.65 with yield | Cotton; Fayetteville, Arkansas | [68] |
| 1 m; one-time acquisition on a residue-covered field | Digital camera on a manned aircraft; NIR filter removed | R = −0.69 to 1 between CI and NIR | Bare field; Kentucky | [69] |

| Spatial/Temporal Resolution | Platform/Sensor/Data | Accuracy Compared to In-Situ Data | Crop/Study Sites | References |
|---|---|---|---|---|
| NA; one-time acquisition at 3–5 leaves growth stage | Visual sensor onboard a UAS | R² = 0.89 between imagery and manual approach for corn counting | Corn; Southern Munich, Germany | [4] |
| 0.2–0.45 mm; one-time acquisition at 1–2 visible leaves | Visual sensor onboard a UAS | R² = 0.81 to 0.91 between image and ground-truth plant density | Wheat; Southeast France | [3] |
| 2.4 mm; one flight for each field at two-leaf growth stage | Visual sensor onboard a UAS | Corn count accuracy = 0.68 to 0.96 | Corn; Northeast Kansas, United States | [5] |
| 0.5 cm; image acquisition 35 days after planting (with at least 50% emergence) | Visual sensor onboard a UAS | R² = 0.96 between image and manual approach | Potato; Chinese Academy of Agricultural Sciences, Hebei, China | [72] |
| 0.18 cm; images collected on 2 and 12 November 2016 (two-leaf growth stage) | Visual sensor onboard a UAS | R² = 0.84 to 0.86 between image and ground-measured seedling count | Rapeseed; Wuhan, Hubei province, China | [73] |

| Spatial/Temporal Resolution | Platform/Sensor/Data | Accuracy Compared to In-Situ Data | Crop/Study Sites | References |
|---|---|---|---|---|
| 3.2 m (multispectral) and 0.75 m (hyperspectral); four-time acquisition at corn growth stages V9, R1, R2, and R4 | Multispectral (IKONOS satellite); Hyperspectral (AISA Eagle sensor) on aircraft | R² = 0.71 to 0.86 (for multispectral bands) and R² = 0.73 to 0.88 (for hyperspectral bands) in estimating chlorophyll meter reading | Corn; University of Minnesota | [78] |
| 1 m; one-time acquisition at pre-flowering stem elongation stage | Hyperspectral (AISA Eagle sensor) on aircraft | R² = 0.7 between NNI from RS and field data | Corn; Northern Italy | [75] |
| 30 cm for hyperspectral and 2.16 cm for multispectral; one-time acquisition at flowering stage | Hyperspectral sensor onboard an airplane, multispectral onboard a drone | R² = 0.54 to 0.79 between VI calculated from airborne sensor and leaf clip indices | Corn; Madrid, Spain | [77] |

| Spatial/Temporal Resolution | Platform/Sensor/Data | Accuracy Compared to In-Situ Data | Crop/Study Sites | References |
|---|---|---|---|---|
| 2.4 m (multispectral), two-time acquisition, and 4 m (hyperspectral), one-time acquisition, during the winter wheat growing season | Multispectral (QuickBird); hyperspectral (HyMap) | Classification accuracy—multispectral: 56.8% to 88.6%; hyperspectral: 65.9% | Wheat; Rheinbach, Germany | [83] |
| 0.4 mm; vine growing season | Thermal imager mounted on a tripod | R² = 0.25 to 0.53 between leaf to air temperature and stomatal conductance | Grapevines; Geisenheim, Germany | [89] |
| Point; 7 days after inoculation | Handheld hyperspectral sensor (spectroradiometer) | Prediction accuracy up to 85% in fungal infection prediction | Eggplant; Zhejiang, China | [90] |
| Visual: one-time acquisition; multispectral: two-time acquisition; winter wheat growing season | Visual and multispectral mounted on a mobile tool carrier | Multispectral: R² up to 0.88 with visually detected disease | Winter Wheat; Wolfenbüttel, Germany | [91] |
| Point and 0.29 mm (hyperspectral imager); both one-time acquisition at sugar beet growing stages | Handheld spectroradiometer; hyperspectral imager mounted on manual-positioning XY-frame | Classification accuracy = 84% to 92% | Sugar beet; Einbeck, Germany | [85] |
| 1 cm; one-time acquisition, vine growing season | Visual onboard a UAS | Classification accuracy > 95.8% | Vine; France | [92] |
| 2–4 mm; one-time acquisition during flowering stage | Visual onboard a UAS | Classification accuracy = 84% | Potato; Hokkaido, Japan | [93] |
| 1–1.5 cm; 5 flights on key yellow rust developmental stages | Multispectral onboard a UAS | Classification accuracy = 89.3% | Wheat; Shanxi Province, China | [94] |

| Spatial/Temporal Resolution | Platform/Sensor/Data | Accuracy Compared to In-Situ Data | Crop/Study Sites | References |
|---|---|---|---|---|
| 1.14–3.8 cm (visible); 1.62–5.51 cm (multispectral); one-time acquisition at 4–6 leaves stage of maize plants | Visual and multispectral sensors onboard a UAS | Weed classification accuracy = 86% to 92% at the lowest altitude (30 m) | Maize; Cordoba, Spain | [106] |
| 2 cm; one-time acquisition at the 4–6 leaves stage of maize plants | Multispectral sensor onboard a UAS | R² = 0.89 for weed density estimation and classification accuracy = 86% for weed map | Maize; Madrid, Spain | [108] |
| 2.4 m (multispectral); image taken in spring condition (March of 2009) | Multispectral satellite imagery; QuickBird satellite | Classification accuracy = 80% to 98% | Winter Wheat; Andalusia, Spain | [111] |
| 1.3 m; images collected 20 and 30 July 2001 | Multispectral sensor onboard a UAS | Classification accuracy = 92% to 94% | Soybean; Davis-Purdue Agricultural Research Center, West Lafayette, Indiana, United States | [112] |

| Spatial/Temporal Resolution | Platform/Sensor/Data | Accuracy Compared to In-Situ Data | Crop/Study Sites | References |
|---|---|---|---|---|
| 0.02 m; three-time acquisition during early and mid-season crop development | Visual sensor onboard a UAS | R² of up to 0.74 | Corn; University of Hohenheim, Germany | [115] |
| 30 m; imagery for 2008 to 2013 | Multispectral sensor; Landsat 5 and 7 | R² = 0.14 to 0.58 for corn, and 0.03 to 0.55 for soybean yield estimation | Corn and Soybean; Midwestern US | [118] |
| 2.5 cm; eight-time acquisition from winter wheat heading to ripening | Visual sensor onboard a UAS | R² = 0.94; regression model for wheat yield | Wheat; Hokkaido, Japan | [116] |
| 0.3 m; bare soil imagery | Multispectral sensor onboard a manned aircraft | R² = 0.52 to 0.97 for corn yield estimation | Corn; Madison County, Ohio, United States | [117] |

| Spatial/Temporal Resolution | Platform/Sensor/Data | Accuracy Compared to In-Situ Data | Crop/Study Sites | References |
|---|---|---|---|---|
| 0.25 m; one-time acquisition 123 days after sowing (winter crop season of 2003) | Multispectral sensor onboard a balloon | R² = 0.52 to 0.66 between image and in-situ grain protein | Wheat; Queensland, Australia | [123] |
| Point; image acquisition at seven growing stages | Hyperspectral sensor (handheld spectroradiometer) | R² = 0.85 to 0.97 for PPR-based protein estimation model | Winter Wheat; Beijing, China | [121] |
| 30 m (HJ-CCD) and 2.5 m (SPOT-5); images acquired during the 2008–2009 and 2009–2010 growing season | Multispectral HJ-CCD and SPOT-5 satellites | R = 0.3 to 0.8 between grain protein contents and spectral indices at multiple growth stages | Winter wheat; Jiangsu Province, China | [122] |

| Spatial/Temporal Resolution | Platform/Sensor/Data | Accuracy Compared to In-Situ Data | Crop/Study Sites | References |
|---|---|---|---|---|
| Spectral reflectance measurement of residues after crop growing season | Handheld hyperspectral sensor (spectroradiometer) | R² = 0.86 to 0.94 for reflectance as function of relative water content | Corn, soybean, and wheat residues from diverse soils collected from different locations | [128] |
| Point (spectroradiometer)—monthly, April through June and October through December; 2.5 m (ATLAS)—two-time acquisition (June and July, 2001) | Handheld hyperspectral sensor (spectroradiometer)—monthly acquisition; multispectral ATLAS (400–12,500 nm; 15 bands) | R = 0.77 to 0.98 between residue cover and ATLAS bands | Wheat straw residue; Alabama | [127] |
| 30 m (Landsat, ALI, and Hyperion); 2 and 3 m (Airborne SpecTIR) during spring/fall of 2008, 2009, and 2010 | Multispectral imagery (Landsat TM and ALI), Hyperspectral imagery (Hyperion and Airborne SpecTIR) | CAI model from airborne SpecTIR with lowest RMSE of 8.6 | Corn and Soybean residue; Indiana | [126] |
| 30 m; two images in 2013 (May and June) and four in 2014 (March, April, May, and June) | Multispectral Landsat (7 ETM+, 8, and OLI) | R² = 0.07 to 0.78 for NDTI-based models for various residue cover | Corn and Soybean residue; South central Nebraska | [125] |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Khanal, S.; KC, K.; Fulton, J.P.; Shearer, S.; Ozkan, E. Remote Sensing in Agriculture—Accomplishments, Limitations, and Opportunities. Remote Sens. 2020, 12, 3783. https://doi.org/10.3390/rs12223783
