Spatial ETL for 3D Building Modelling Based on Unmanned Aerial Vehicle Data in Semi-Urban Areas

Abstract

1. Introduction

2. Research Related to 3D Building Modelling

2.1. Spatial Data Used for 3D Modelling

2.2. Building Reconstruction Approach

2.3. CityGML

3. Materials and Methods

3.1. Spatial ETL

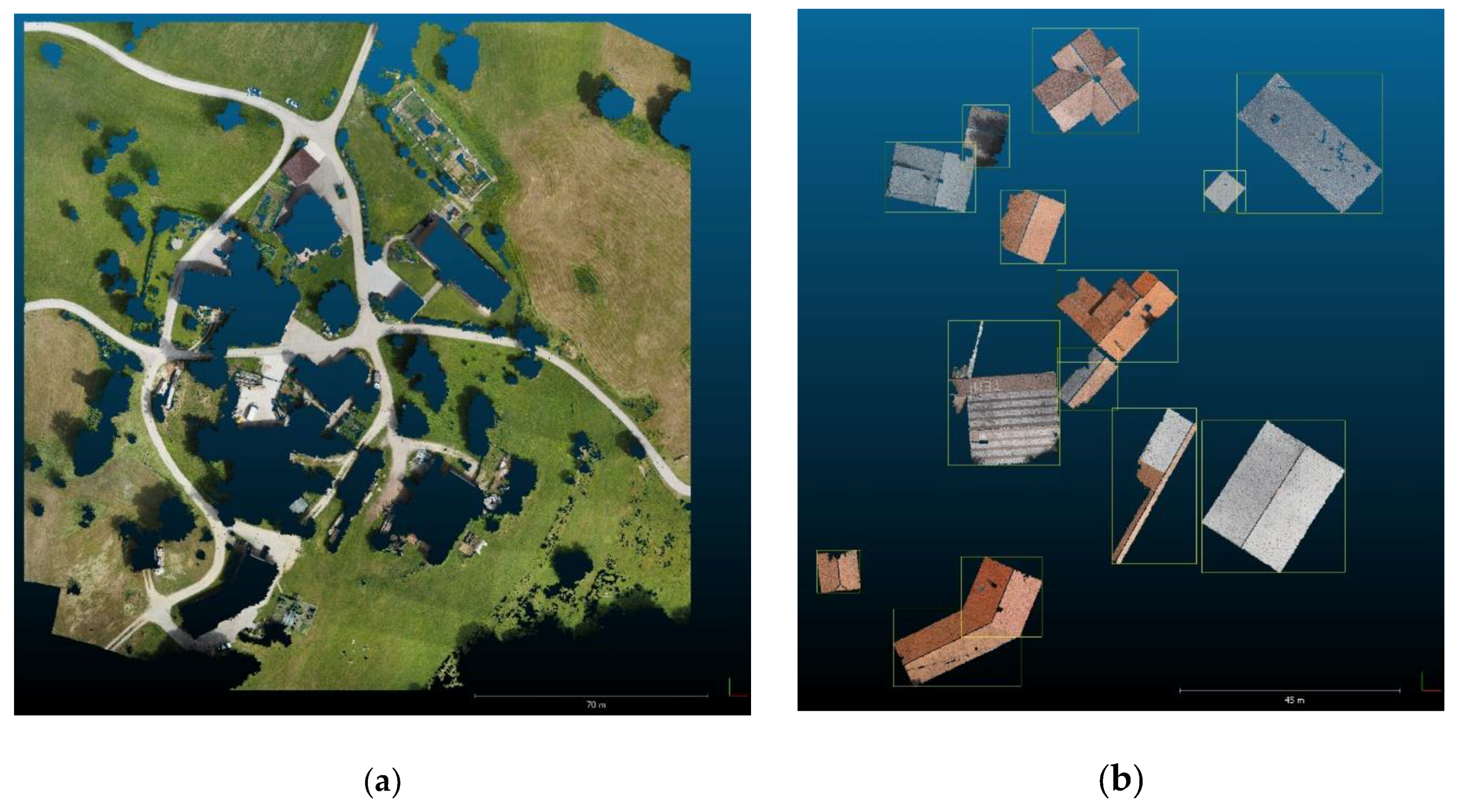

3.2. Study Area and Dataset

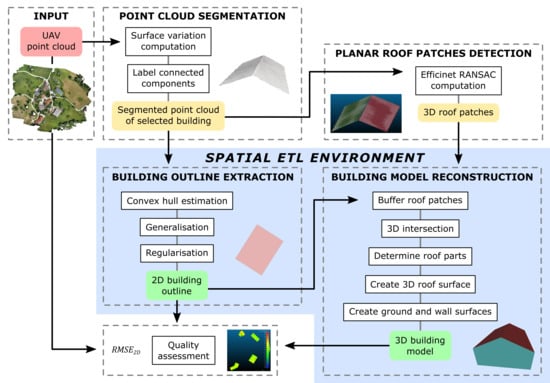

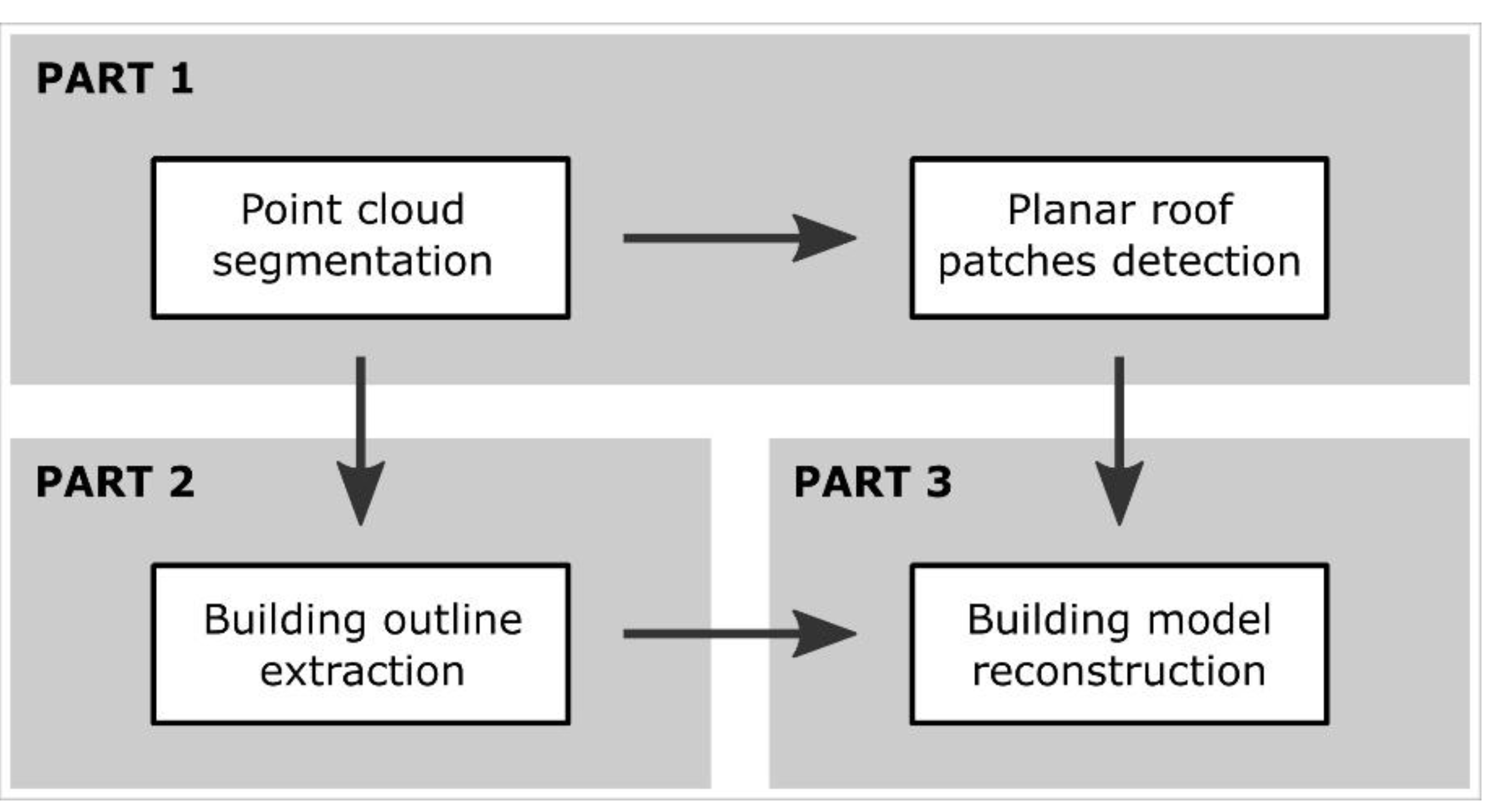

3.3. The Proposed Workflow

3.3.1. Point Cloud Segmentation

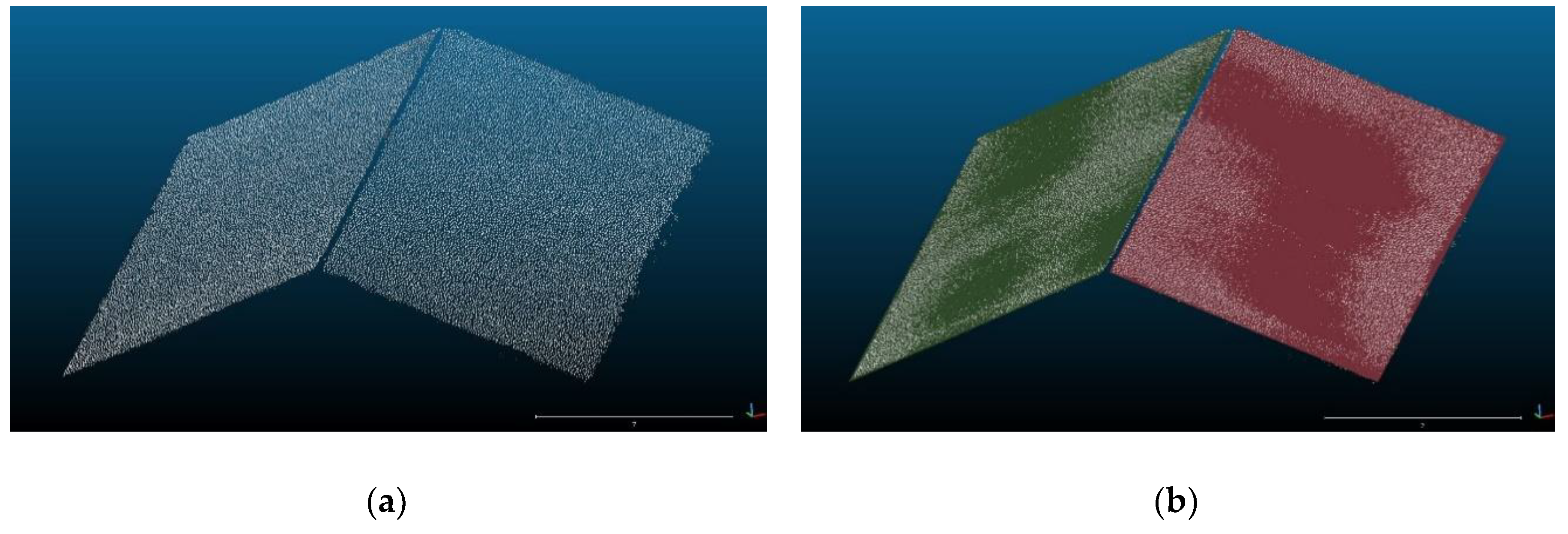

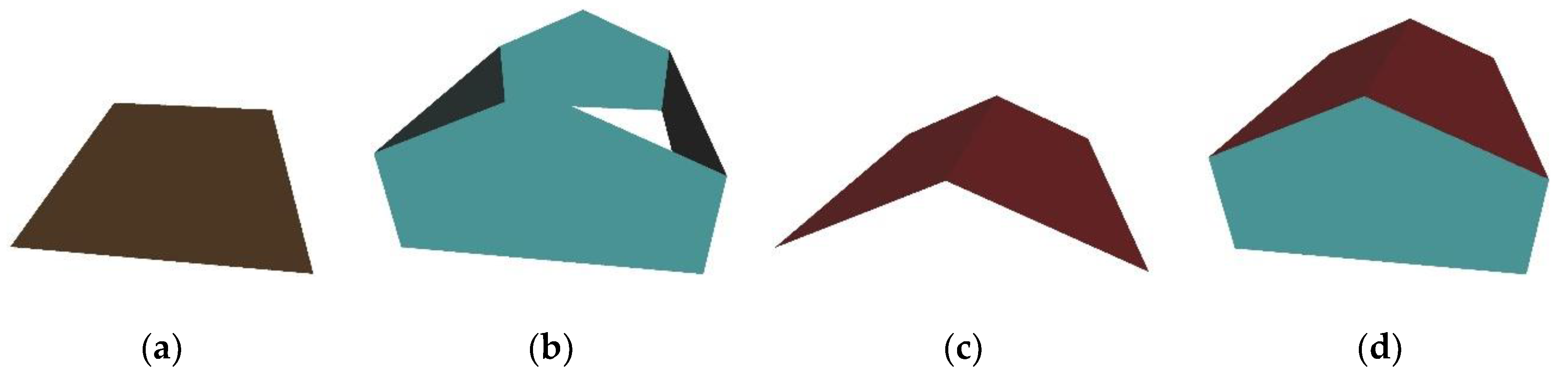

3.3.2. Planar Roof Patches Detection

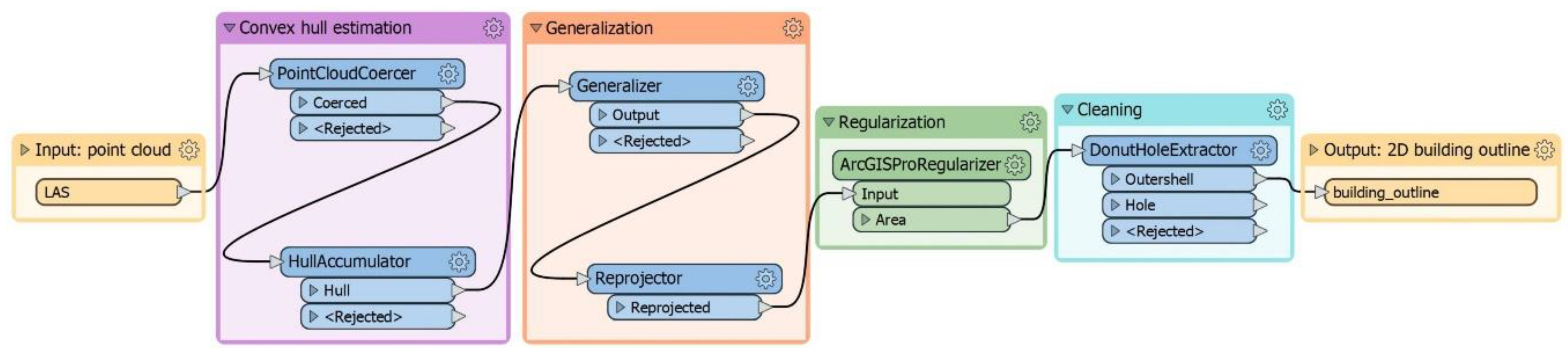

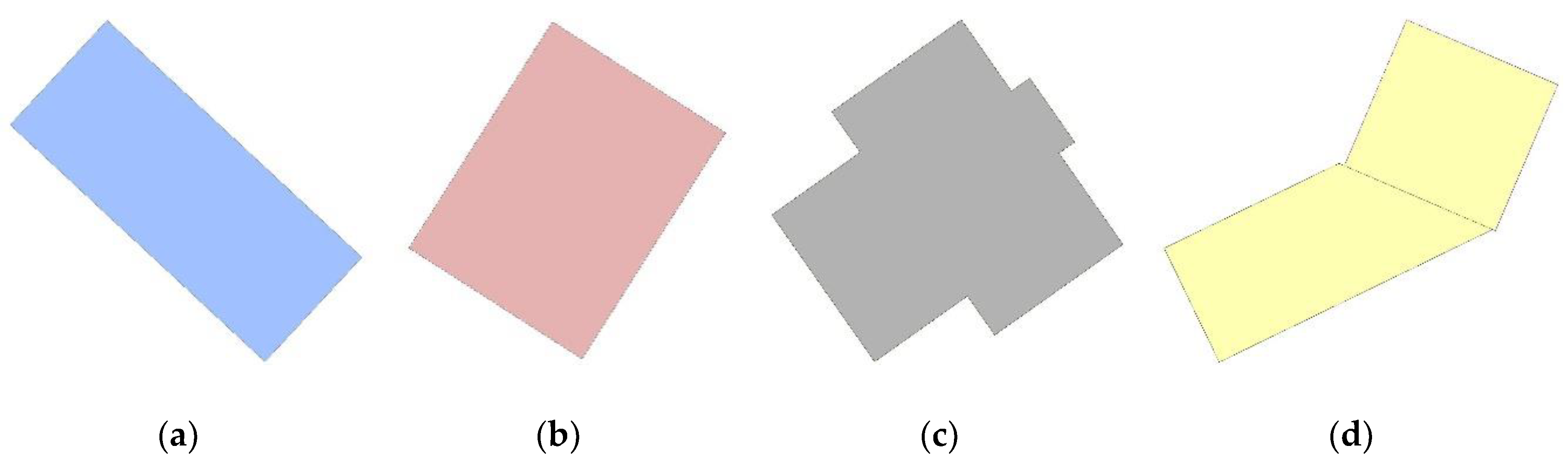

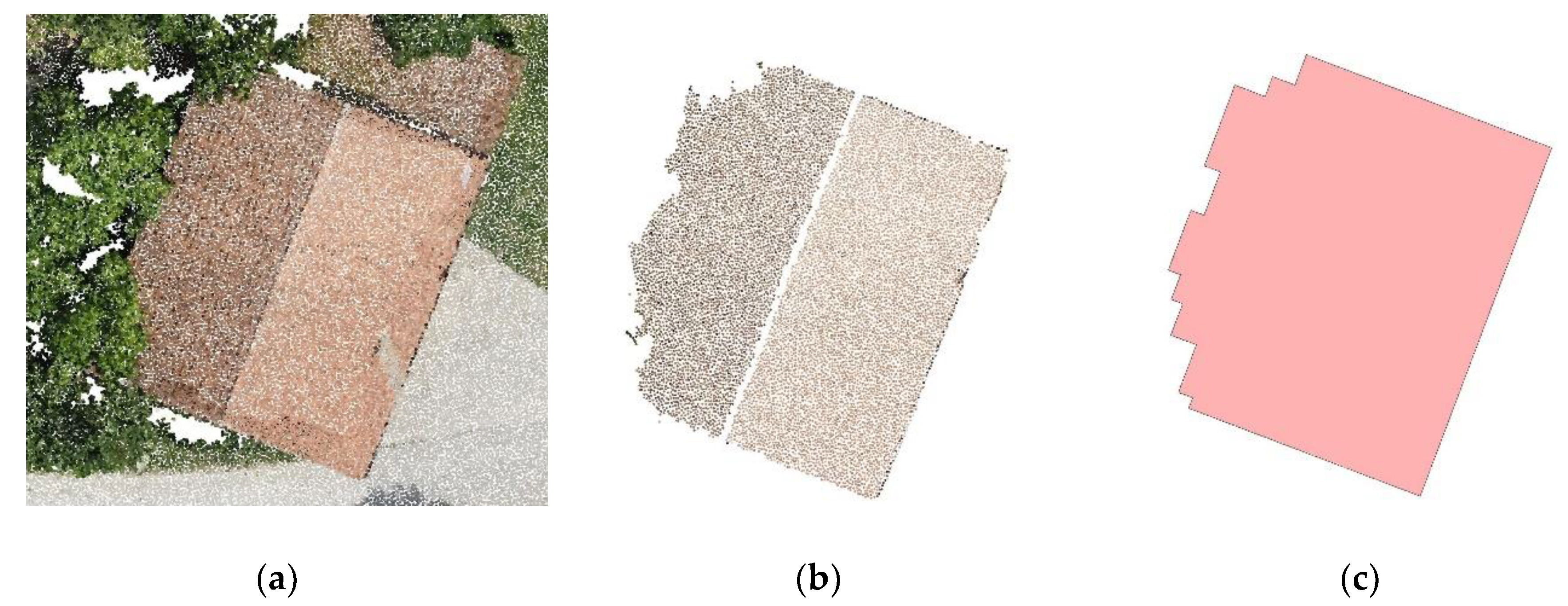

3.3.3. Building Outline Generation

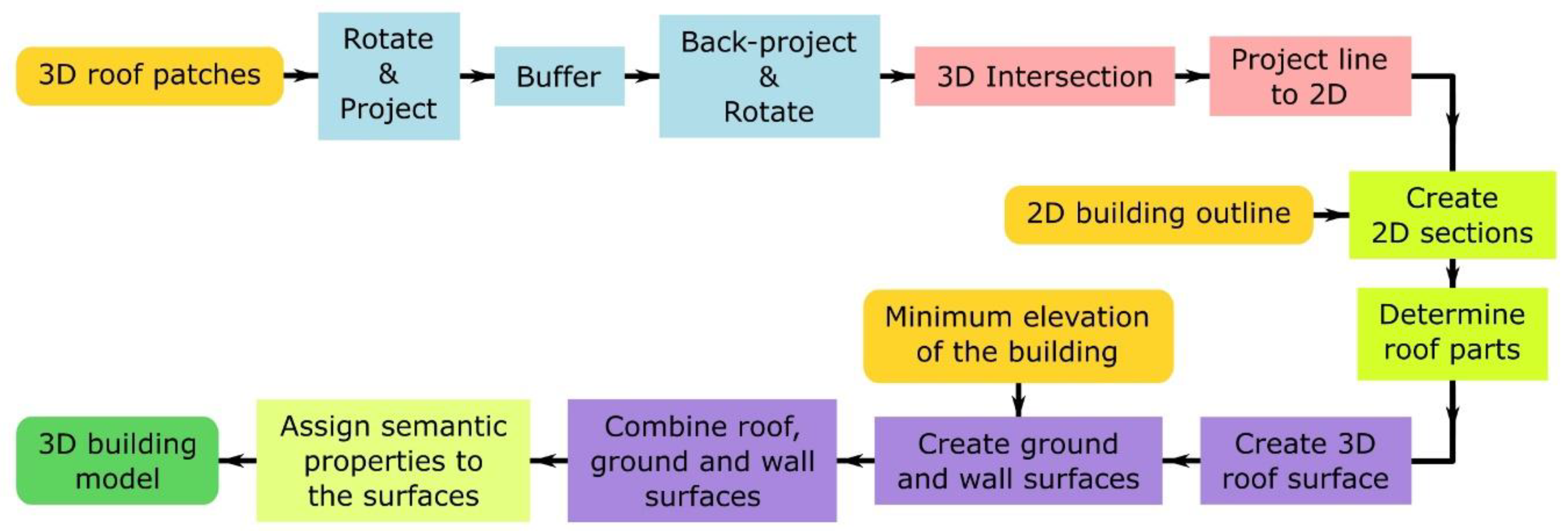

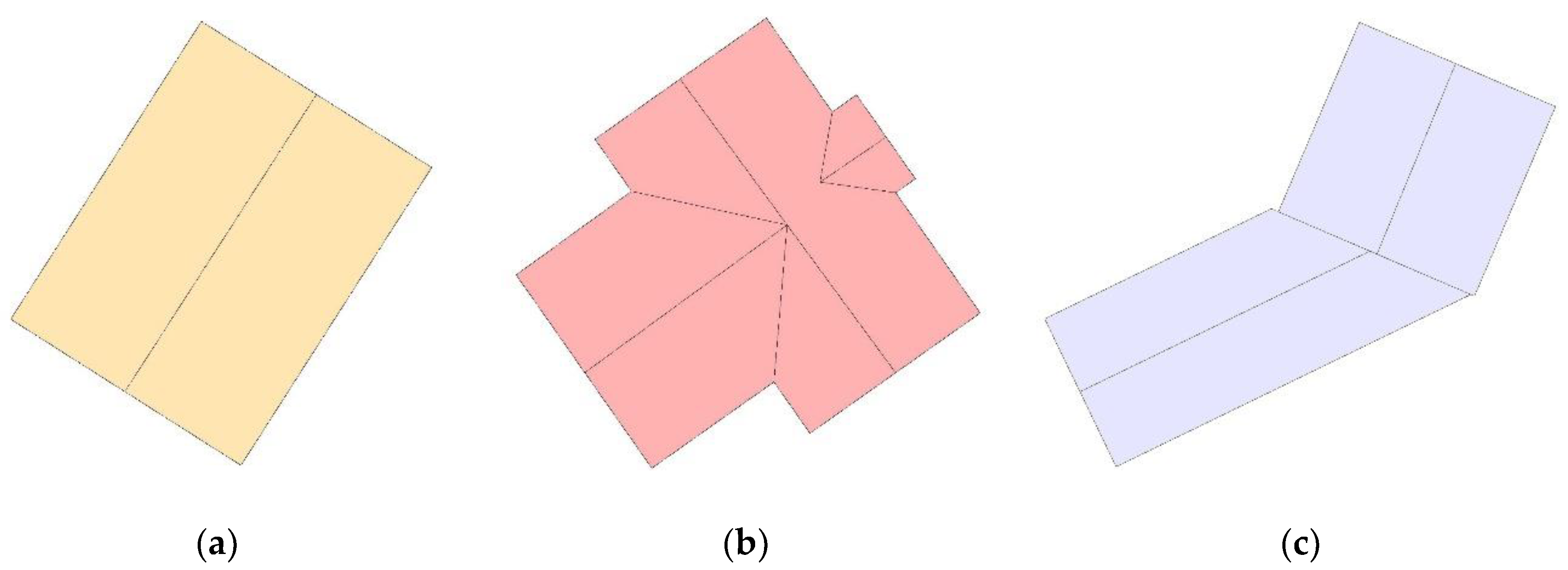

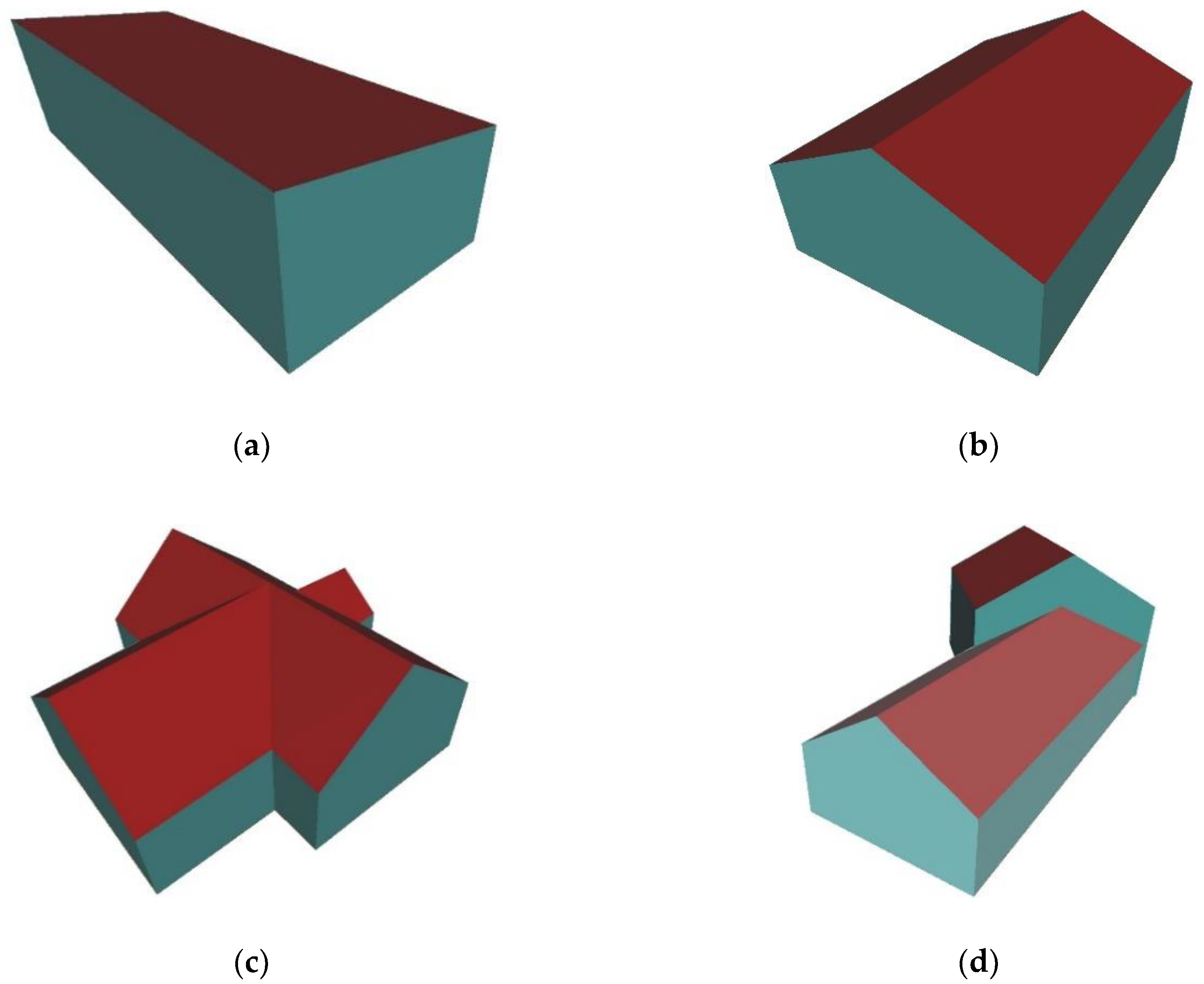

3.3.4. Building Model Reconstruction

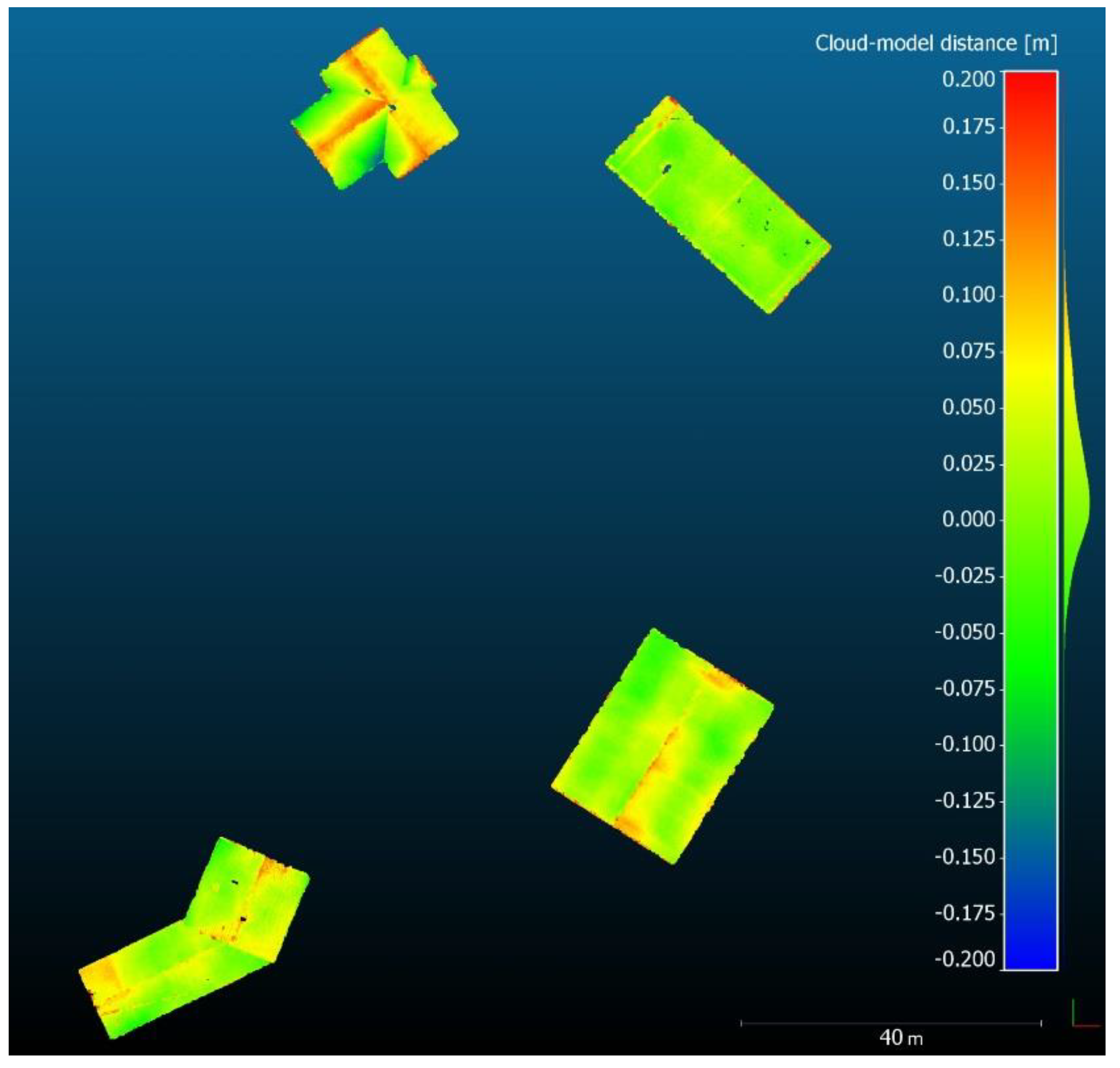

3.4. Quality Analysis

4. Results

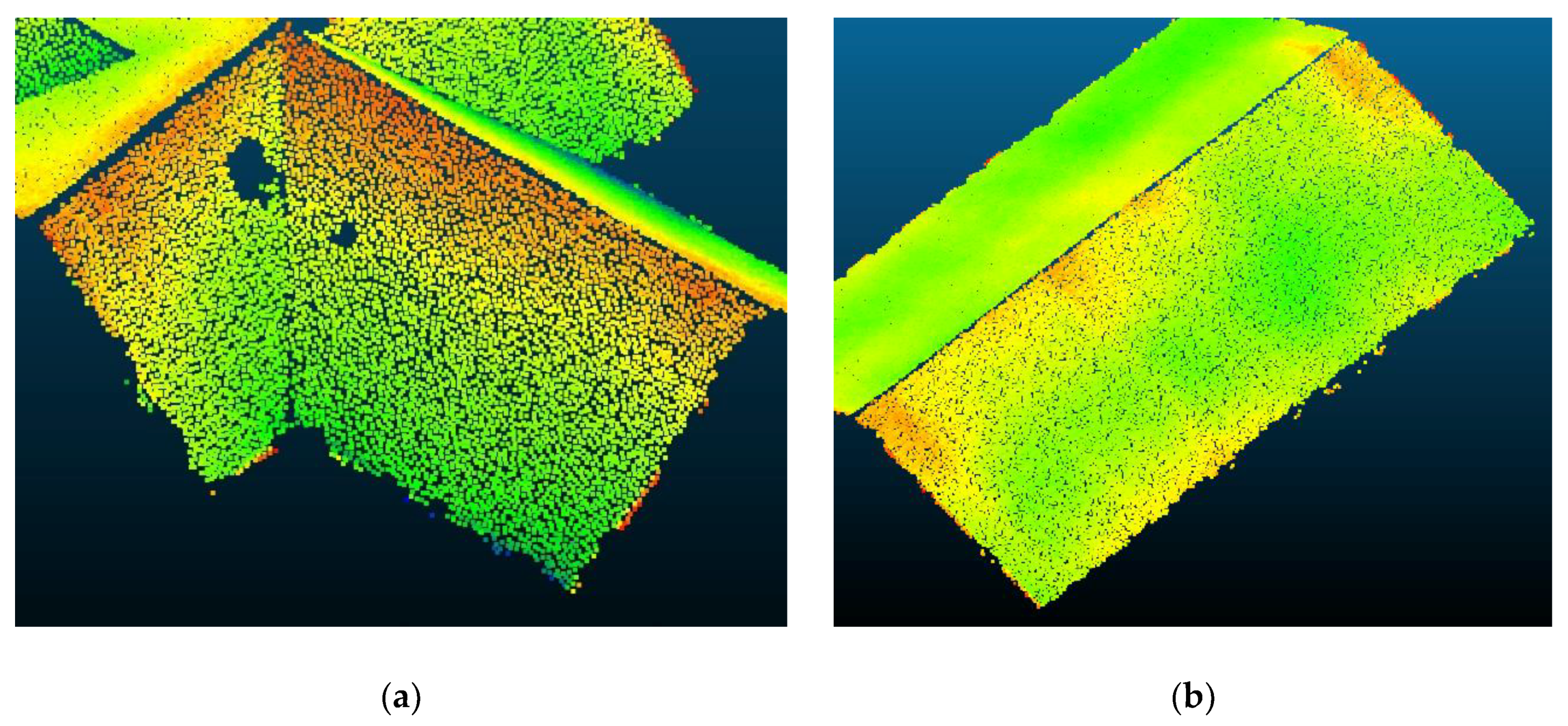

4.1. Segments in the Point Cloud Representing Buildings

4.2. Planar Roof Patches

4.3. Building Outlines

4.4. 3D Building Models

4.5. Quality Assessment

5. Discussion

6. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest


| Parameter | Value |
|---|---|
| Focal length | 17 mm |
| Shutter speed | 1/1000 s |
| Camera resolution | 16.1 Mpx |
| Sensor type | CMOS |
| Sensor size (image dimensions) | 4608 × 3456 px |
| Pixel size | 3.74 µm |

| Parameter | Value |
|---|---|
| Overlap (forward/sideward) | 85%/65% |
| Flight altitude (above ground level) | 50 m |
| Average Ground Sampling Distance (GSD) | 1.1 cm/px |
| Number of images | 344 |
| Number of Ground Control Points (GCPs) | 9 |
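
The average GSD in the flight table follows from the camera table through the usual pinhole relation GSD = pixel size × flying height / focal length. The Python sketch below reproduces it from the tabulated 3.74 µm pixel size, 17 mm focal length and 50 m flight altitude. The image footprint and exposure spacing it also prints rest on assumptions that the tables do not state (nadir images over flat terrain, with the 3456 px image side oriented along the flight direction), and all variable names are illustrative, so treat those two figures as planning estimates rather than the values actually flown.

```python
"""Worked check of the flight-planning figures in the camera and flight tables.

Assumptions (not stated in the tables): nadir imagery over flat terrain, and the
image's short side (3456 px) oriented along the flight direction.
"""

PIXEL_SIZE_M = 3.74e-6          # 3.74 µm
FOCAL_LENGTH_M = 0.017          # 17 mm
ALTITUDE_M = 50.0               # flight altitude above ground level
IMAGE_W_PX, IMAGE_H_PX = 4608, 3456
FORWARD_OVERLAP, SIDE_OVERLAP = 0.85, 0.65


def ground_sampling_distance(pixel_size_m: float, altitude_m: float, focal_length_m: float) -> float:
    """GSD in metres per pixel: the pixel size scaled by the ratio H / f."""
    return pixel_size_m * altitude_m / focal_length_m


gsd = ground_sampling_distance(PIXEL_SIZE_M, ALTITUDE_M, FOCAL_LENGTH_M)
footprint_across = IMAGE_W_PX * gsd   # ground width covered by one image [m]
footprint_along = IMAGE_H_PX * gsd    # ground length covered by one image [m]

# Spacing that yields the requested overlaps between consecutive images and strips.
base_along = footprint_along * (1 - FORWARD_OVERLAP)
strip_spacing = footprint_across * (1 - SIDE_OVERLAP)

print(f"GSD: {gsd * 100:.1f} cm/px")
print(f"Footprint: {footprint_across:.1f} m x {footprint_along:.1f} m")
print(f"Exposure base: {base_along:.1f} m, strip spacing: {strip_spacing:.1f} m")
```

With the tabulated values the first line printed is a GSD of 1.1 cm/px, which matches the flight table.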

| Algorithm | Parameter | Value |
|---|---|---|
| Surface variation computation | Neighbourhood radius | 0.30 m |
| | Surface variation threshold | 0.03 |
| Label connected components | Minimum points per component | 1000 |
| | Minimum distance between components | 0.20 m |

| Parameter | Value |
|---|---|
| Minimum support points per primitive | 300 |
| Primitive | Plane |
| Maximum distance to primitive | e = 0.05 m |
| Sampling resolution | b = 0.10 m |
| Maximum normal deviation | a = 5° |
| Overlooking probability | 0.90 |
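
The plane-detection parameters can be read through a minimal RANSAC sketch. A hypothesis plane is fitted through three random points, and a point supports it when it lies within e = 0.05 m of the plane and its normal deviates by at most a = 5° from the plane normal; a plane is accepted only with at least 300 supporting points, after which its inliers are removed and the search repeats. This is plain sequential RANSAC, not the Efficient RANSAC of Schnabel et al.: the sampling-resolution connectivity test (b) and the probability-based stopping rule are omitted and replaced by a fixed, hypothetical number of hypotheses per plane. Point normals are assumed to be given, and all function names are illustrative.

```python
"""Minimal sequential RANSAC plane detection on a point cloud with unit normals."""
import math
import random


def sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1], a[2] * b[0] - a[0] * b[2], a[0] * b[1] - a[1] * b[0])


def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def plane_through(p1, p2, p3):
    """Unit normal n and offset d with n.x + d = 0, or None for collinear samples."""
    n = cross(sub(p2, p1), sub(p3, p1))
    length = math.sqrt(dot(n, n))
    if length < 1e-12:
        return None
    n = (n[0] / length, n[1] / length, n[2] / length)
    return n, -dot(n, p1)


def detect_planes(points, normals, eps=0.05, max_normal_dev_deg=5.0,
                  min_support=300, hypotheses=200, seed=0):
    """Repeatedly extract the best-supported plane and remove its inliers.

    points and normals are parallel lists of 3-tuples; normals must be unit length.
    Returns a list of ((normal, d), inlier_indices).
    """
    rng = random.Random(seed)
    cos_limit = math.cos(math.radians(max_normal_dev_deg))
    remaining = list(range(len(points)))
    planes = []
    while len(remaining) >= min_support:
        best_plane, best_inliers = None, []
        for _ in range(hypotheses):
            i, j, k = rng.sample(remaining, 3)
            plane = plane_through(points[i], points[j], points[k])
            if plane is None:
                continue
            n, d = plane
            inliers = [m for m in remaining
                       if abs(dot(n, points[m]) + d) <= eps          # distance test (e)
                       and abs(dot(n, normals[m])) >= cos_limit]     # normal test (a)
            if len(inliers) > len(best_inliers):
                best_plane, best_inliers = plane, inliers
        if len(best_inliers) < min_support:
            break  # no remaining plane has enough support
        planes.append((best_plane, best_inliers))
        taken = set(best_inliers)
        remaining = [m for m in remaining if m not in taken]
    return planes


# Usage on synthetic data: a flat patch and a pitched (slope 0.5) patch, 400 points each.
flat = [(x * 0.1, y * 0.1, 0.0) for x in range(20) for y in range(20)]
pitched = [(x * 0.1, y * 0.1, 3.0 + 0.05 * x) for x in range(20) for y in range(20)]
pitch_normal = (-0.5 / math.sqrt(1.25), 0.0, 1.0 / math.sqrt(1.25))
cloud = flat + pitched
cloud_normals = [(0.0, 0.0, 1.0)] * len(flat) + [pitch_normal] * len(pitched)
found = detect_planes(cloud, cloud_normals)
for (n, d), inliers in found:
    print(f"normal=({n[0]:.3f}, {n[1]:.3f}, {n[2]:.3f}) support={len(inliers)}")
```

The normal test uses the absolute dot product because estimated point normals may be oriented either way; without it, a hypothesis could collect points that are merely close to the plane, such as points on a wall touching a roof edge.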

| Parameter | Value [m] |
|---|---|
| Alpha value | 0.5–0.7 |
| Douglas-Peucker tolerance | 0.3 |
| Regularisation tolerance | 0.25 |
| Regularisation precision | 0.05 |
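
The role of the 0.3 m Douglas-Peucker tolerance in outline generation can be shown with a small sketch that simplifies a closed 2D outline. The recursion keeps the vertex farthest from the current chord whenever its distance exceeds the tolerance; a closed ring is first split at the vertex farthest from its start so that both halves are open polylines. The alpha-shape and regularisation steps listed in the same table are not covered, the distance is measured to the chord segment (a common variant of the original perpendicular-distance formulation), and the function names and test outline are hypothetical.

```python
import math


def point_segment_distance(p, a, b):
    """Euclidean distance from point p to segment ab (2D)."""
    (px, py), (ax, ay), (bx, by) = p, a, b
    dx, dy = bx - ax, by - ay
    length_sq = dx * dx + dy * dy
    if length_sq == 0.0:
        return math.hypot(px - ax, py - ay)
    t = max(0.0, min(1.0, ((px - ax) * dx + (py - ay) * dy) / length_sq))
    return math.hypot(px - (ax + t * dx), py - (ay + t * dy))


def douglas_peucker(line, tolerance):
    """Recursively keep the vertex farthest from the chord while it exceeds tolerance."""
    if len(line) < 3:
        return list(line)
    first, last = line[0], line[-1]
    index, dmax = 0, 0.0
    for i in range(1, len(line) - 1):
        d = point_segment_distance(line[i], first, last)
        if d > dmax:
            index, dmax = i, d
    if dmax <= tolerance:
        return [first, last]
    left = douglas_peucker(line[: index + 1], tolerance)
    right = douglas_peucker(line[index:], tolerance)
    return left[:-1] + right  # the split vertex appears only once


def simplify_outline(ring, tolerance):
    """Simplify a closed outline given without a repeated closing vertex."""
    if len(ring) < 4:
        return list(ring)
    # Split at the vertex farthest from ring[0] so each half is an open polyline.
    far = max(range(len(ring)), key=lambda i: math.dist(ring[0], ring[i]))
    first_half = douglas_peucker(ring[: far + 1], tolerance)
    second_half = douglas_peucker(ring[far:] + [ring[0]], tolerance)
    return first_half[:-1] + second_half[:-1]


def jitter(k):
    """Hypothetical +/-0.1 m zig-zag imitating a noisy traced edge."""
    return 0.1 * (-1) ** k


# Usage: a 10 m x 6 m outline whose edge vertices zig-zag by 0.1 m.
outline = ([(0.0, 0.0)] + [(float(x), jitter(x)) for x in range(1, 10)]
           + [(10.0, 0.0)] + [(10.0 + jitter(y), float(y)) for y in range(1, 6)]
           + [(10.0, 6.0)] + [(float(x), 6.0 + jitter(x)) for x in range(9, 0, -1)]
           + [(0.0, 6.0)] + [(jitter(y), float(y)) for y in range(5, 0, -1)])
print(simplify_outline(outline, 0.3))
```

With a 0.3 m tolerance the 0.1 m zig-zag is removed and only the four corners remain; with a tolerance below the noise amplitude (for example 0.05 m) the zig-zag vertices survive, which is why the tolerance is chosen relative to the expected edge noise.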

| Roof type of 3D building model | RMSE<sub>2D</sub> [m] |
|---|---|
| Flat roof | 0.165 |
| Gable roof | 0.109 |
| Cross gable roof | 0.140 |
| Two-level gable roof | 0.131 |
| Average | 0.136 |
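
A planimetric RMSE is commonly computed over matched check points as RMSE_2D = sqrt((1/n) Σ (Δx_i² + Δy_i²)), where Δx_i and Δy_i are the coordinate differences between the model and the reference. The exact definition used for the quality table is not given in this section, so the sketch below assumes this common form; the function name is hypothetical. It also checks how the "Average" row relates to the four per-type values.

```python
import math


def rmse_2d(model_xy, reference_xy):
    """Planimetric RMSE: sqrt(mean(dx^2 + dy^2)) over matched check points."""
    if not model_xy or len(model_xy) != len(reference_xy):
        raise ValueError("need the same, non-zero number of model and reference points")
    squared = sum((mx - rx) ** 2 + (my - ry) ** 2
                  for (mx, my), (rx, ry) in zip(model_xy, reference_xy))
    return math.sqrt(squared / len(model_xy))


# Per-roof-type values from the quality table [m].
per_roof_type = {
    "Flat roof": 0.165,
    "Gable roof": 0.109,
    "Cross gable roof": 0.140,
    "Two-level gable roof": 0.131,
}
average = sum(per_roof_type.values()) / len(per_roof_type)
print(f"Average RMSE_2D: {average:.3f} m")
```

The tabulated average equals the unweighted arithmetic mean of the four roof types (0.545 m / 4 ≈ 0.136 m). If the roof types were evaluated on different numbers of check points, a pooled RMSE over all points would weight each type by its point count and could differ from this mean.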

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Drešček, U.; Kosmatin Fras, M.; Tekavec, J.; Lisec, A. Spatial ETL for 3D Building Modelling Based on Unmanned Aerial Vehicle Data in Semi-Urban Areas. Remote Sens. 2020, 12, 1972. https://doi.org/10.3390/rs12121972
