An Automobile Environment Detection System Based on Deep Neural Network and its Implementation Using IoT-Enabled In-Vehicle Air Quality Sensors

Abstract

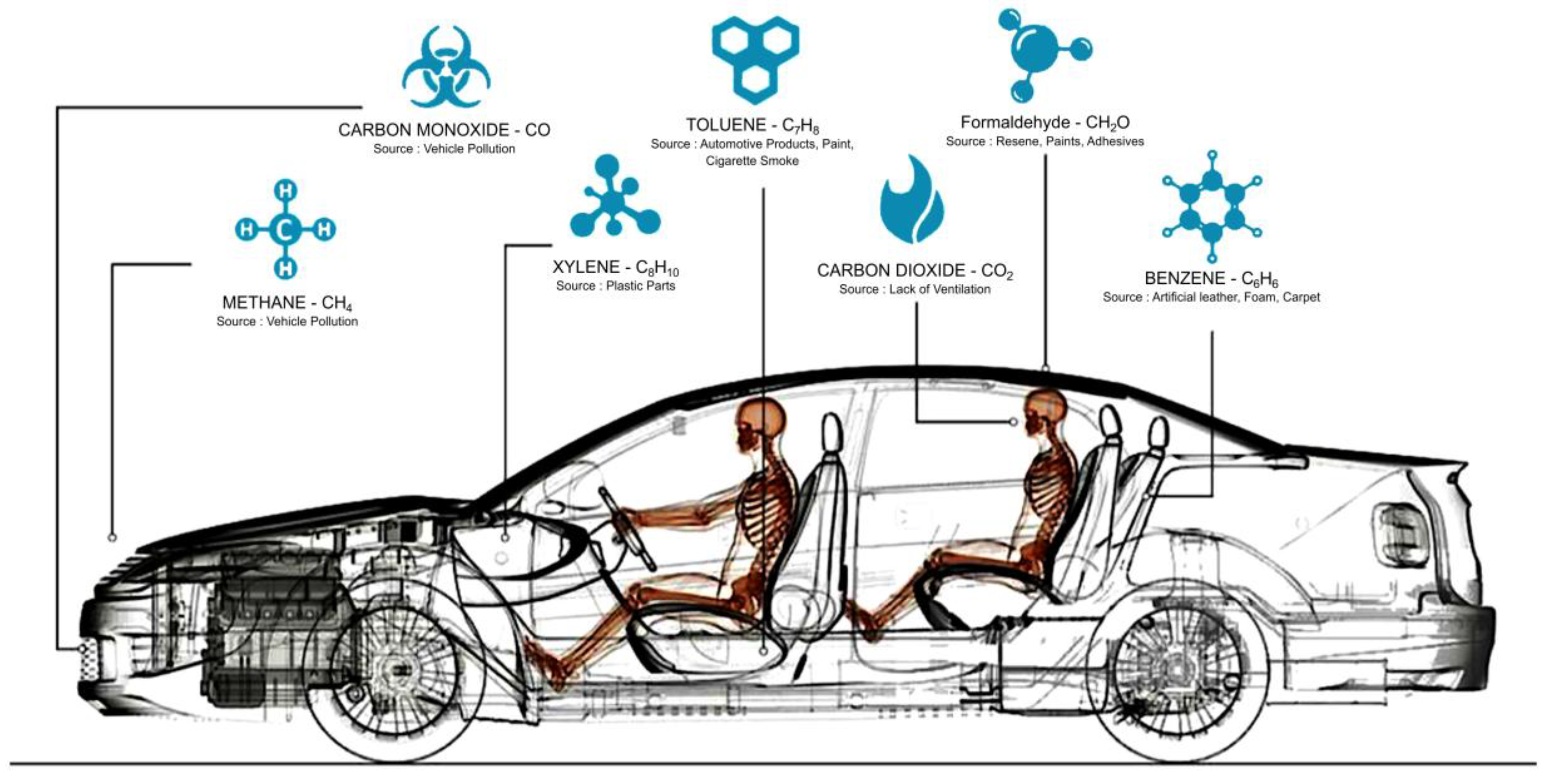

1. Introduction

2. Background and Related Work

2.1. Trends in ADAS Research

2.2. Predicting Driver Drowsiness

2.3. Effect of CO2

3. Deep Learning for Sensor Data Analysis

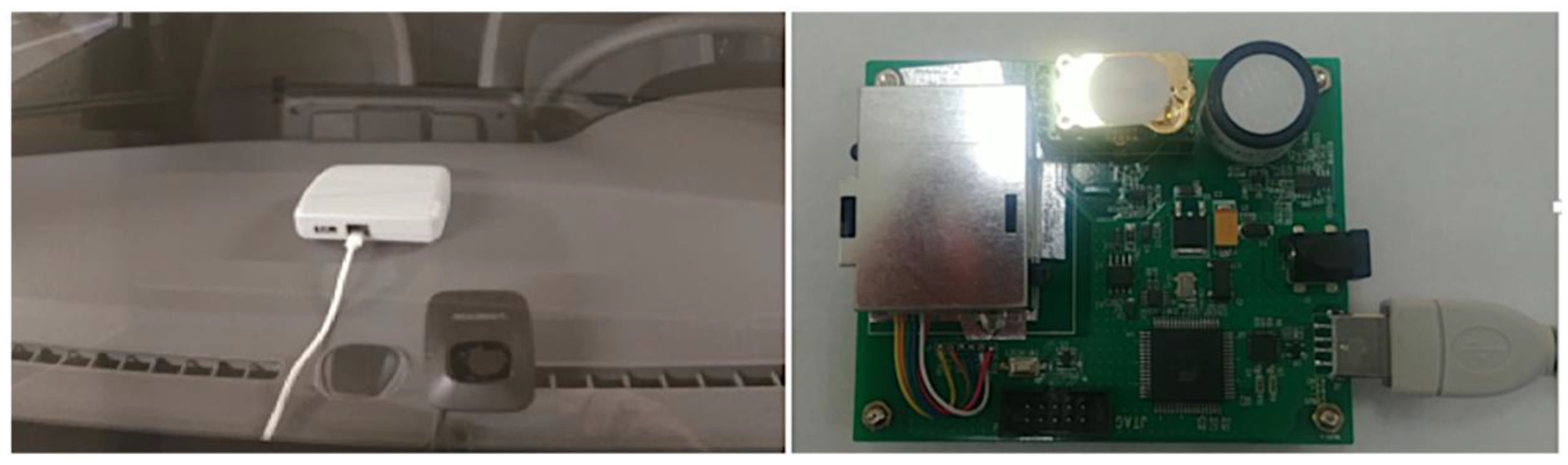

3.1. Air Quality Sensor (AQS)

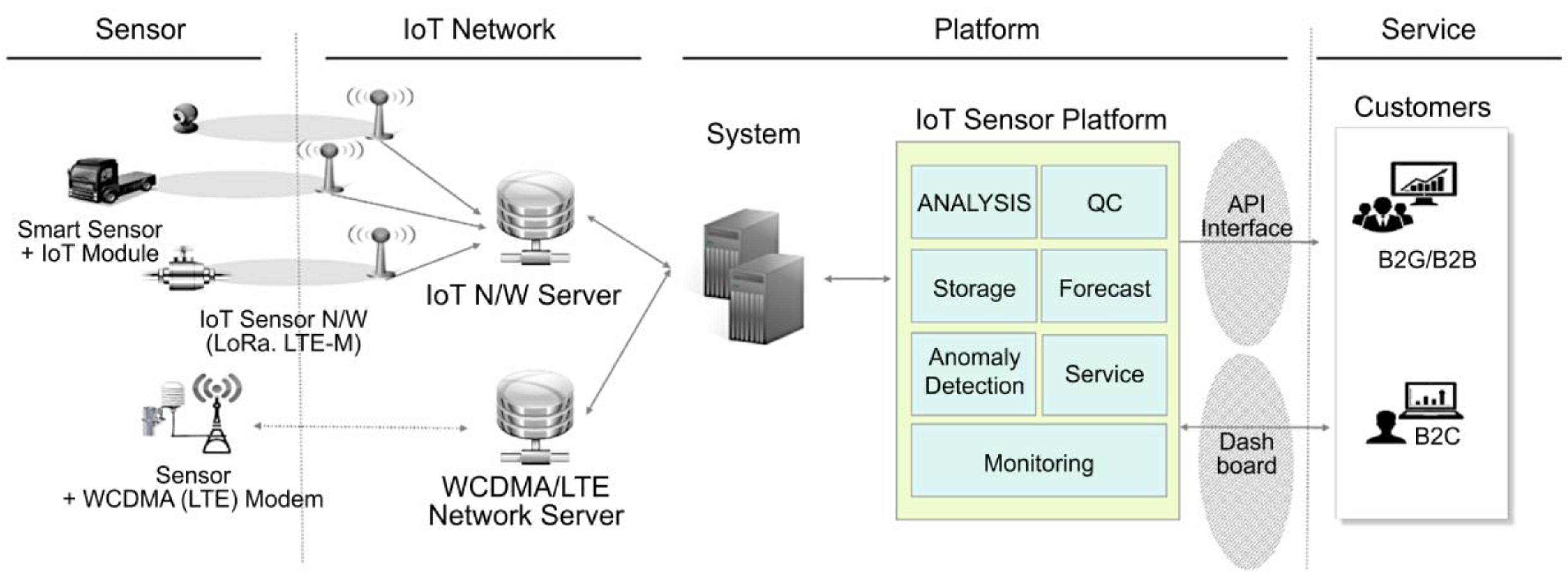

3.2. IoT Sensor Platform

3.3. Deep Learning–Based Sensors

3.4. Deep Learning–Based Anomaly Detection

3.4.1. Long Short-Term Memory (LSTM) Model

3.4.2. Skip-GANs and VAEs

4. Experimentation, Prototyping, and Analysis of Results

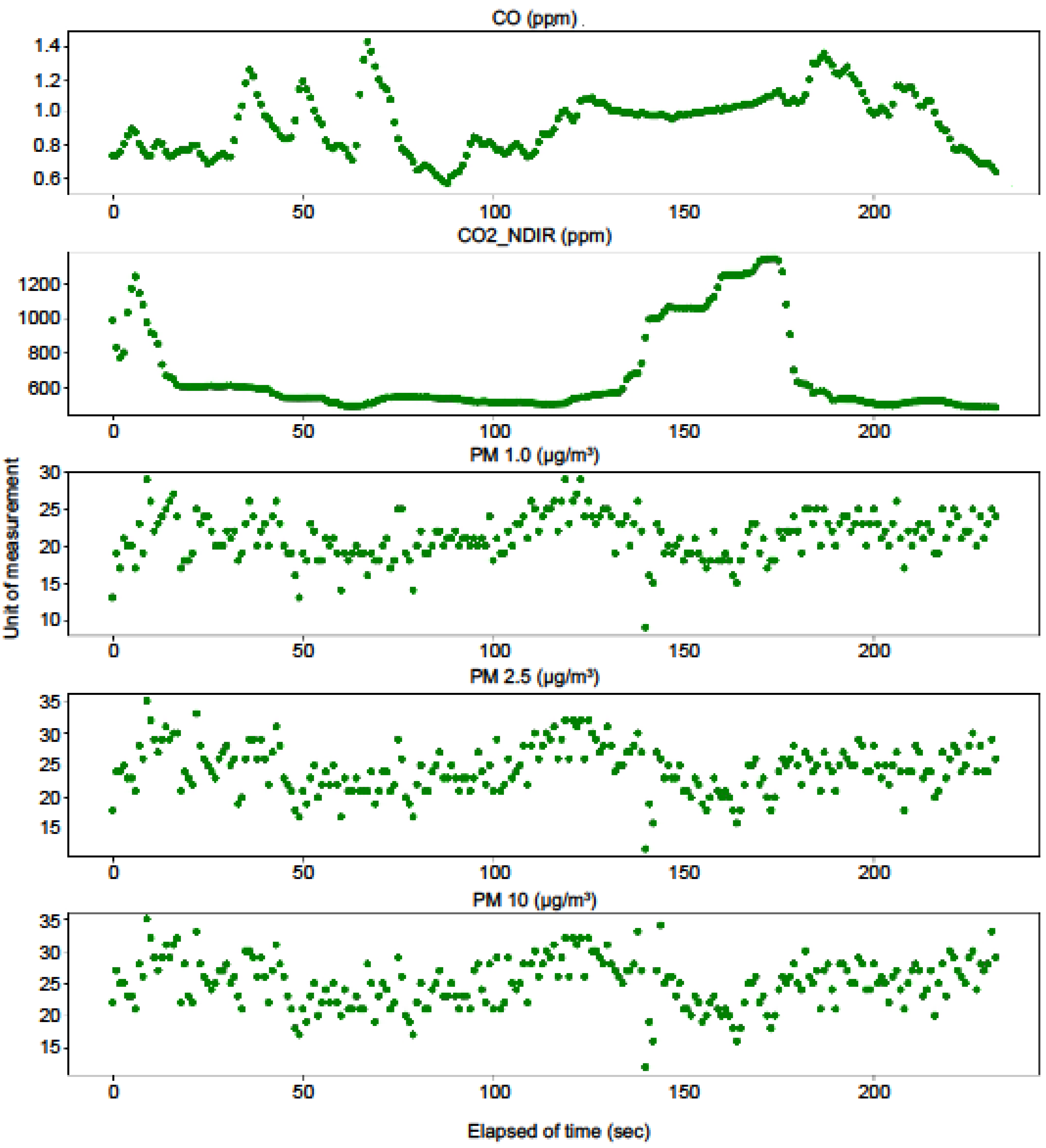

4.1. Input Data Configuration

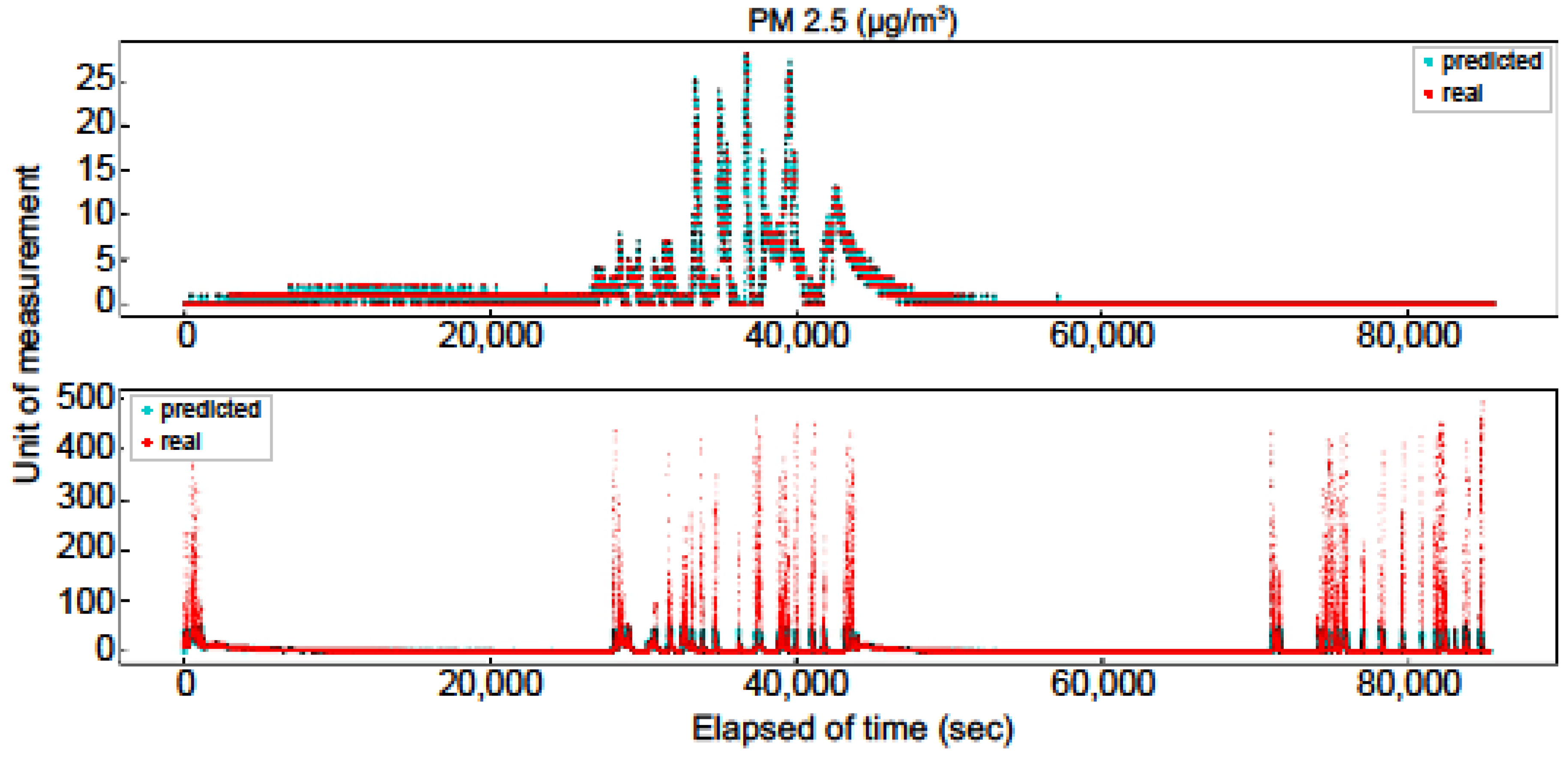

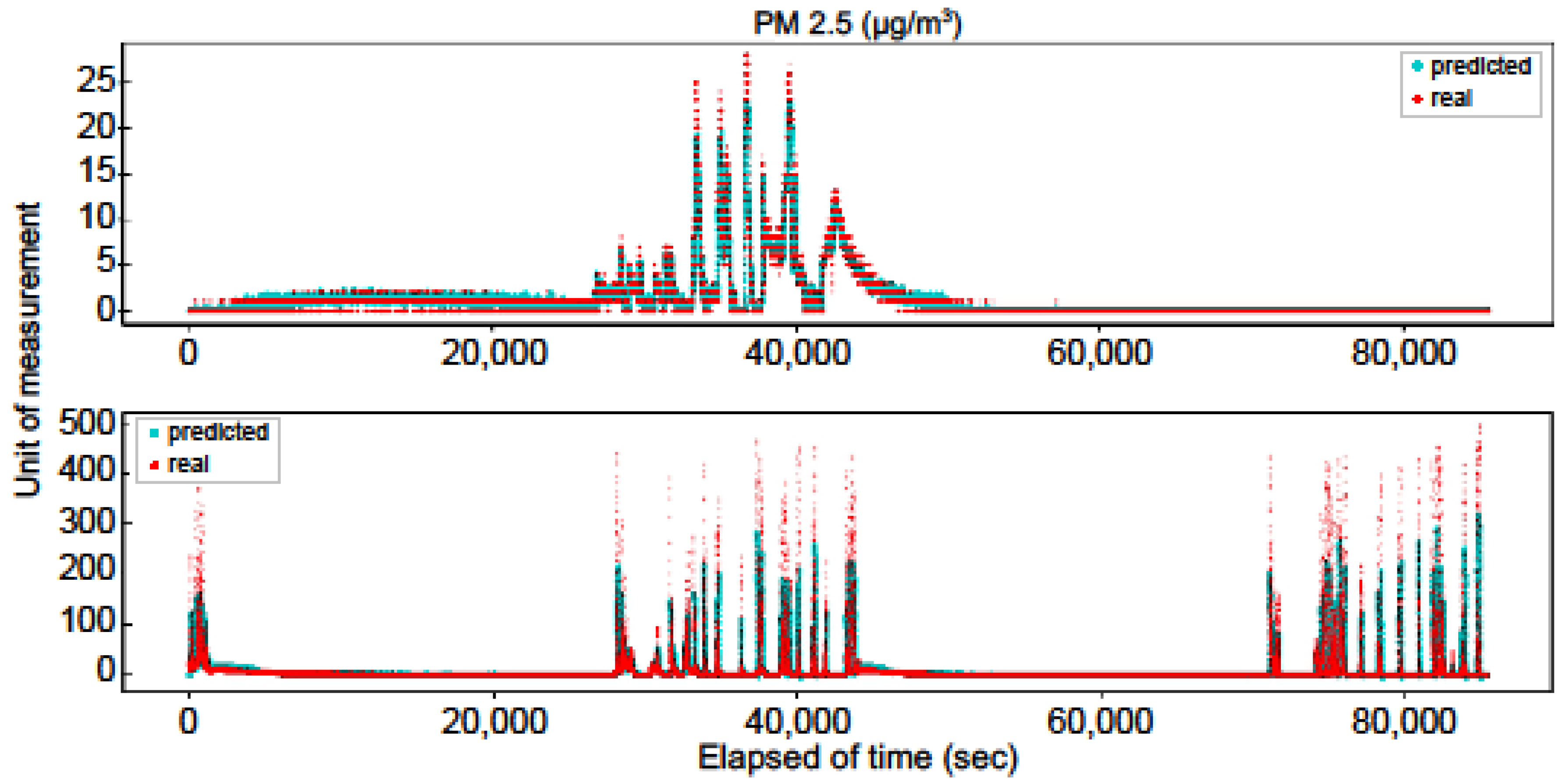

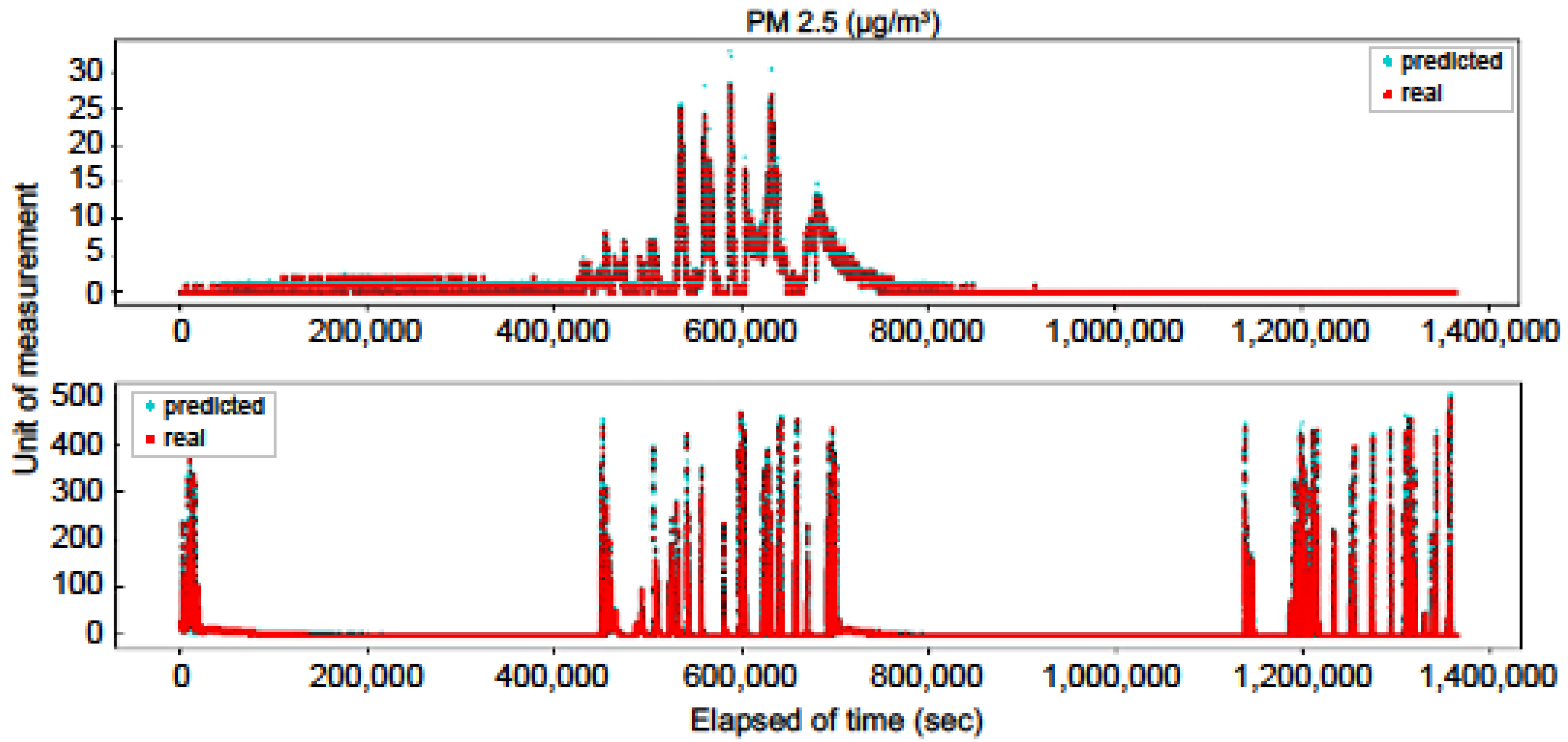

4.2. Results of Deep Learning Model

5. Conclusions

5.1. Managerial Implications

5.2. Practical and Social Implications

5.3. Limitations

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

| CO2 Concentration (ppm) | Description/Effects |
|---|---|
| 250–350 | Normal outdoor air level |
| 350–1000 | Normal air level in a room with good air circulation |
| 1000–2000 | Discomfort due to poor air quality |
| 2000–5000 | Possible headaches, drowsiness, reduced concentration, loss of attention, increased heart rate, and slight nausea |
| >5000 | Abnormal outdoor air level; possible toxicity and O2 deficiency; exposure limit allowed for daily workplace exposure |
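
The bands in the table above can be turned into a simple lookup. The following is a minimal sketch, not the authors' implementation: the function name `classify_co2` and the shortened labels are hypothetical, and because adjacent bands in the table share their boundary values, the sketch assumes that each upper bound belongs to the lower band.

```python
from bisect import bisect_left

# Upper bounds (ppm) of the first four bands in the table.
# Assumption: a reading equal to a bound falls in the lower band.
UPPER_BOUNDS_PPM = [350, 1000, 2000, 5000]

# Shortened, hypothetical labels; one per band, in table order.
BAND_LABELS = [
    "normal outdoor air level",
    "normal indoor level with good air circulation",
    "discomfort due to poor air quality",
    "possible headaches, drowsiness, reduced concentration",
    "possible toxicity and O2 deficiency",
]


def classify_co2(ppm: float) -> str:
    """Map a CO2 reading in ppm to the matching band of the table."""
    if ppm < 250:
        # The table does not describe readings below 250 ppm.
        return "below tabulated range"
    # bisect_left counts how many upper bounds are strictly below ppm,
    # which is exactly the index of the band the reading falls into.
    return BAND_LABELS[bisect_left(UPPER_BOUNDS_PPM, ppm)]


if __name__ == "__main__":
    for reading in (300, 800, 1500, 2600, 6000):
        print(reading, "ppm:", classify_co2(reading))
```

A lookup of this kind only labels a single reading; it does not replace the time-series models listed in Section 3.4.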

| Sensor | Specifications | Operating Conditions |
|---|---|---|
| CO | Type: Electrochemical sensor; Measurement Range: 0–100 ppm; Resolution: 0.1 ppm; Maximum Overload: 5000 ppm | Operating Temperature: −20 to +50 °C; Storage Temperature: 0 to 20 °C; Humidity: 15–95% RH |
| CO2 | Type: NDIR (nondispersive infrared) sensor; Measurement Range: 0–5000 ppm; Accuracy (400–5000 ppm): ±75 ppm or 10% of reading, whichever is greater | Operating Temperature: +10 to +50 °C; Storage Temperature: −30 to +70 °C; Humidity: 0–95% RH |
| PM (1.0, 2.5, 10) * | Type: Laser-based light scattering; Concentration Range: 1–500 µg/m³; Accuracy Error: ±15% or ±10 µg/m³ | Operating Temperature: +10 to +60 °C; Storage Temperature: −20 to +70 °C; Humidity: 0–95% RH |
| Temperature | Specified Range: −40 to +125 °C; Resolution: 0.01 °C | Operating Temperature: −40 to +125 °C; Storage Temperature: −40 to +1500 °C |
| Humidity | Specified Range: 0–100% RH; Resolution: 0.01% RH | Operating Temperature: −40 to +125 °C; Storage Temperature: −40 to +1500 °C |
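
The CO2 accuracy entry in the table above ("±75 ppm or 10% of reading, whichever is greater", stated for 400–5000 ppm) implies an error bound that grows with the reading. The sketch below works through that arithmetic; the function names `co2_error_bound_ppm` and `reliably_above` are hypothetical and not part of the original system, and the decision rule in `reliably_above` is an illustrative assumption.

```python
ABS_ERROR_PPM = 75.0    # absolute term from the table
REL_ERROR = 0.10        # relative term from the table (10% of reading)
SPEC_MIN_PPM = 400.0    # accuracy is only stated for 400-5000 ppm
SPEC_MAX_PPM = 5000.0


def co2_error_bound_ppm(reading_ppm: float) -> float:
    """Return the worst-case error of a CO2 reading, per the table."""
    if not SPEC_MIN_PPM <= reading_ppm <= SPEC_MAX_PPM:
        raise ValueError("accuracy is only specified for 400-5000 ppm")
    # "Whichever is greater": the relative term dominates above 750 ppm.
    return max(ABS_ERROR_PPM, REL_ERROR * reading_ppm)


def reliably_above(reading_ppm: float, threshold_ppm: float) -> bool:
    """Assumed rule: the reading exceeds the threshold even in the worst case."""
    return reading_ppm - co2_error_bound_ppm(reading_ppm) > threshold_ppm


if __name__ == "__main__":
    for reading in (500.0, 2100.0, 2300.0):
        bound = co2_error_bound_ppm(reading)
        print(f"{reading:.0f} ppm: +/-{bound:.0f} ppm, "
              f"reliably above 2000 ppm: {reliably_above(reading, 2000.0)}")
```

Under this rule a reading of 2100 ppm is not yet a reliable crossing of the 2000 ppm band, because the true value could be as low as 1890 ppm.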

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Chung, J.-j.; Kim, H.-J. An Automobile Environment Detection System Based on Deep Neural Network and its Implementation Using IoT-Enabled In-Vehicle Air Quality Sensors. Sustainability 2020, 12, 2475. https://doi.org/10.3390/su12062475
