1. Introduction

The integration of computers, mobile platforms, and cloud computing has simplified modern life but also increased exposure to malicious cyber threats. With digital systems now essential for personal, governmental, and industrial operations, this dependency has escalated global cyber vulnerabilities [1]. Malicious software, such as viruses, Trojans, ransomware, and spyware, continues to pose significant risks, leading to data breaches, financial losses, and the disruption of critical infrastructure. Recent cybersecurity statistics highlight the increasing severity of these threats [2]. In 2025, more than 560,000 new malware samples were detected daily, with the cumulative number of known malicious programs exceeding one billion [3]. AV-Test reports that more than 17 million new malware variants emerge each month, reflecting the accelerating evolution of cyber threats. According to Kaspersky, in 2024 cybercriminals executed roughly 2.8 million malware-related attacks monthly on mobile devices, totaling over 33 million incidents annually. Alarmingly, Android banking Trojans increased by 196% between 2023 and 2024, climbing from 420,000 to 1.24 million unique attacks.

Several large-scale ransomware incidents further underscore the destructive potential of modern malware campaigns. The Change Healthcare ransomware attack in February 2024, attributed to the BlackCat/ALPHV group, disrupted U.S. medical claim processing and caused financial losses exceeding $2.8 billion. Similarly, the RansomHub strain launched in 2024 executed more than 500 attacks worldwide, while in March 2025, the Medusa group targeted more than 300 organizations using double-extortion tactics. These examples demonstrate the increasing sophistication and financial impact of malware operations. To combat such threats, organizations have increased investments in advanced cybersecurity solutions [4]. However, traditional malware detection techniques such as static and dynamic analysis remain limited by computational cost, slow response times, and weak generalization against obfuscated or novel variants [5]. Machine learning (ML) approaches relying on handcrafted features also face challenges in scalability and adaptability when encountering unseen malware samples [6].

Thus, recent research has shifted to image-based malware analysis, which converts executable binaries into visual representations that can be processed by deep learning models [5,7]. Each malware binary is treated as a one-dimensional sequence of bytes $B = (b_1, b_2, \dots, b_N)$, where each byte value is assigned to a grayscale pixel intensity (0–255). The resulting image $I$ is defined as:

$$I \in \{0, 1, \dots, 255\}^{H \times W},$$

where $H$ and $W$ depend on file size. This visual encoding enables convolutional neural networks (CNNs) to capture spatial correlations and texture-like patterns that reflect characteristics of the malware family.

Multiple studies have validated the effectiveness of CNN-based and hybrid models in malware image classification. Vasan et al. (2020) [6] demonstrated improved minority-class recognition using a fine-tuned CNN architecture (IMCFN), while Ahmed et al. (2023) [8] employed an Inception V3-based transfer learning model that achieved 99.24% accuracy. Similarly, Behera et al. (2025) [9] introduced a hybrid VGG16–ResNet50 framework achieving 99.28% accuracy on the Malimg dataset. Attention-based CNNs have also proven effective in capturing spatial and channel dependencies [10], and recent advances have combined CNNs with Vision Transformers to enhance generalization and contextual understanding [11].
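The byte-to-pixel mapping described above can be sketched in a few lines of Python. This is a minimal illustration rather than the conversion code used in the study: the function name `bytes_to_grayscale`, the fixed `width` argument, and the zero-padding of the final row are assumptions made for the example.

```python
import math


def bytes_to_grayscale(data: bytes, width: int) -> list[list[int]]:
    """Reshape a byte sequence into an H x W grid of grayscale intensities.

    Each byte (0-255) becomes one pixel. The height H follows from the file
    size; the last row is zero-padded (an assumption made for this sketch).
    """
    if width <= 0:
        raise ValueError("width must be positive")
    height = math.ceil(len(data) / width)
    padded = data + bytes(height * width - len(data))
    return [list(padded[r * width:(r + 1) * width]) for r in range(height)]


# Hypothetical 10-byte "binary" laid out with W = 4, which gives H = 3.
image = bytes_to_grayscale(bytes(range(250, 256)) + bytes([0, 1, 2, 3]), width=4)
```

With a fixed width, larger files simply produce taller images, which is why a resizing step is needed before batching images for a neural network.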

Despite these advancements, challenges persist. Public malware datasets remain imbalanced, with underrepresented families affecting model generalization. Limited labeled data and high intra-class variation further hinder learning stability [7]. Moreover, deep networks often overfit dominant malware classes, reducing recall for rare variants. To address these challenges, this paper proposes a comprehensive image-based malware detection framework that integrates Deep Convolutional Generative Adversarial Networks (DCGAN) for data augmentation with a hybrid CNN–Transformer architecture for classification. The proposed model aims to balance datasets, extract both local and global features, and improve robustness against diverse malware families.

While prior studies such as Joshi et al. (2025) [12] and Nazim et al. (2025) [13] have explored GAN-based augmentation and hybrid architectures, our work differs in three key ways. First, we employ a DCGAN specifically tailored to balance highly imbalanced malware image datasets by generating realistic, class-conditional samples for rare families, including architecture and training choices validated per class; by contrast, Joshi et al. propose a more generic “Dummy Generator” without detailed class-focused augmentation or extensive minority-class evaluation. Second, our hybrid model explicitly integrates a CNN backbone with a Transformer encoder to capture both fine-grained local texture features and long-range global dependencies, whereas Nazim et al. focus on multimodal fusion (image + metadata) and do not leverage Transformer-based global context modeling. Third, we provide comprehensive empirical validation on benchmark datasets (e.g., Malimg, MaleVis) with detailed per-class metrics, high-resolution confusion matrices, and statistical significance testing to demonstrate improvements for both majority and minority classes. Collectively, these additions advance the state of the art by jointly addressing dataset imbalance and representation learning for malware image classification.

The key contributions of this study are as follows:

A DCGAN-based augmentation strategy is introduced to synthetically balance malware image datasets, especially for rare classes.

A hybrid CNN–Transformer architecture is developed to capture both fine-grained spatial textures and long-range dependencies in malware images.

Extensive experiments on benchmark datasets (e.g., Malimg, MaleVis) demonstrate over 90% overall accuracy, outperforming state-of-the-art baselines such as Inception V3 [8], Attention-CNN [10], and hybrid VGG16–ResNet50 [9].

Detailed per-class performance and confusion matrix analyses are presented to enhance interpretability and guide future cybersecurity research.

The remainder of this paper is organized as follows: Section 2 reviews related work on deep learning and data augmentation for malware detection. Section 3 describes the dataset, the DCGAN-based balancing strategy, and the experimental configuration. Section 4 introduces the proposed DCGAN–CNN–Transformer framework. Section 5 presents the experimental results and discussion, and Section 6 concludes the study with future research directions.

3. Materials and Methods

This section describes the dataset used in this study, the DCGAN-based balancing strategy, the CNN–Transformer hybrid classifier, and the experimental configuration used for training and evaluation.

3.1. Dataset

In this work, the Blended Malware Dataset [

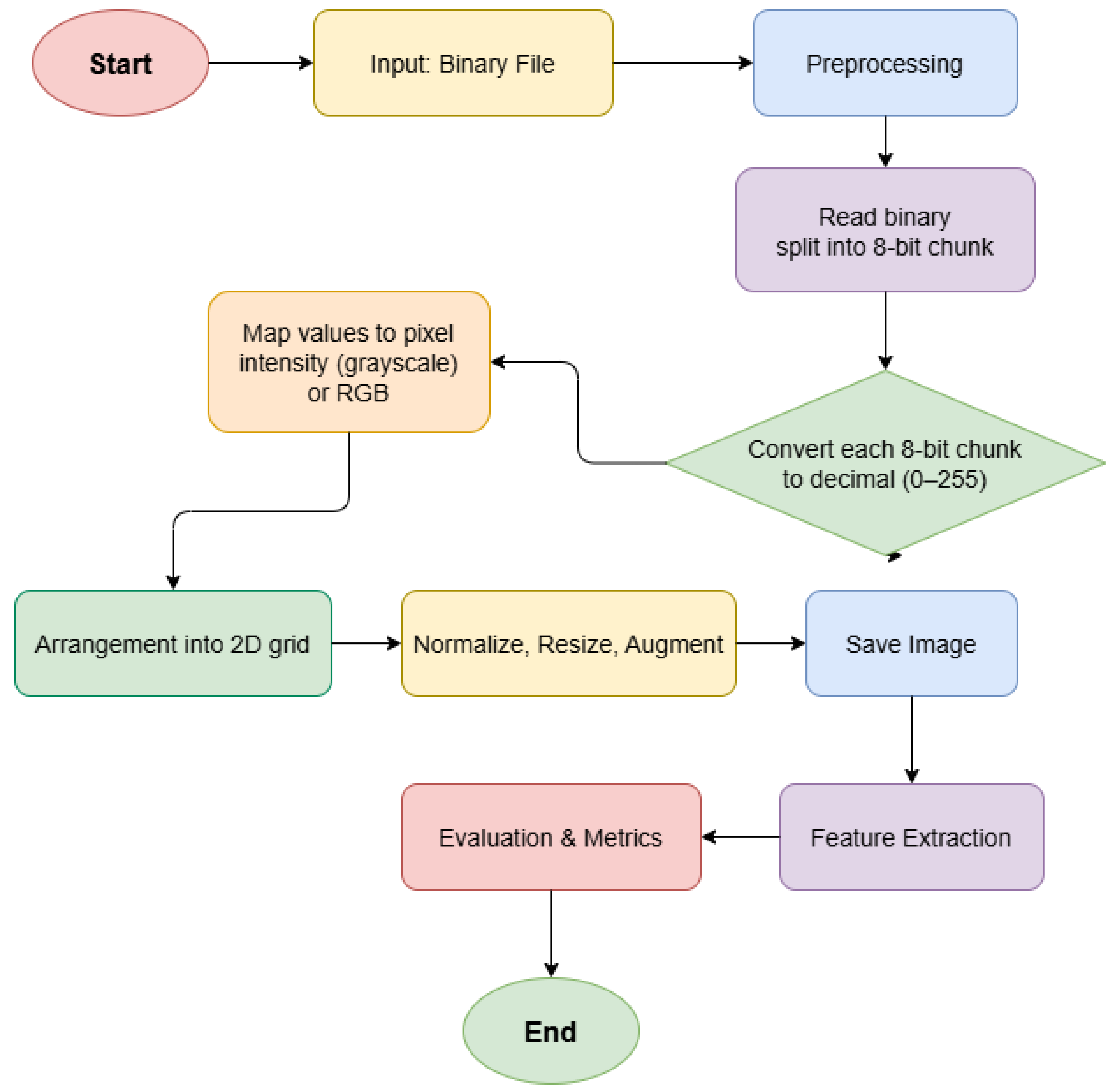

25], obtained from the Kaggle repository, was used for experimental evaluation. The dataset comprises samples from 31 distinct malware families that are widely adopted in image-based malware analysis studies. Each malware instance is originally provided as an executable binary file. To enable compatibility with computer-vision-based learning models, the raw binaries were first converted into one-dimensional sequences of byte values and subsequently reshaped into two-dimensional image representations, as illustrated in

Figure 1. Two alternative image representation strategies were considered:

Grayscale images: Each byte value in the range is mapped to a single pixel intensity, preserving local structural patterns while reducing memory and computational overhead.

RGB images: Some existing studies convert binaries into three-channel images using sliding windows or overlapping byte mappings to enrich feature representations, at the cost of additional preprocessing complexity and higher training overhead.

In this study, grayscale images were selected for efficiency and scalability. All malware images were resized to a uniform resolution of pixels prior to model training.

Let

denote the number of samples in class

i, for

. The degree of class imbalance is quantified using the imbalance ratio defined as

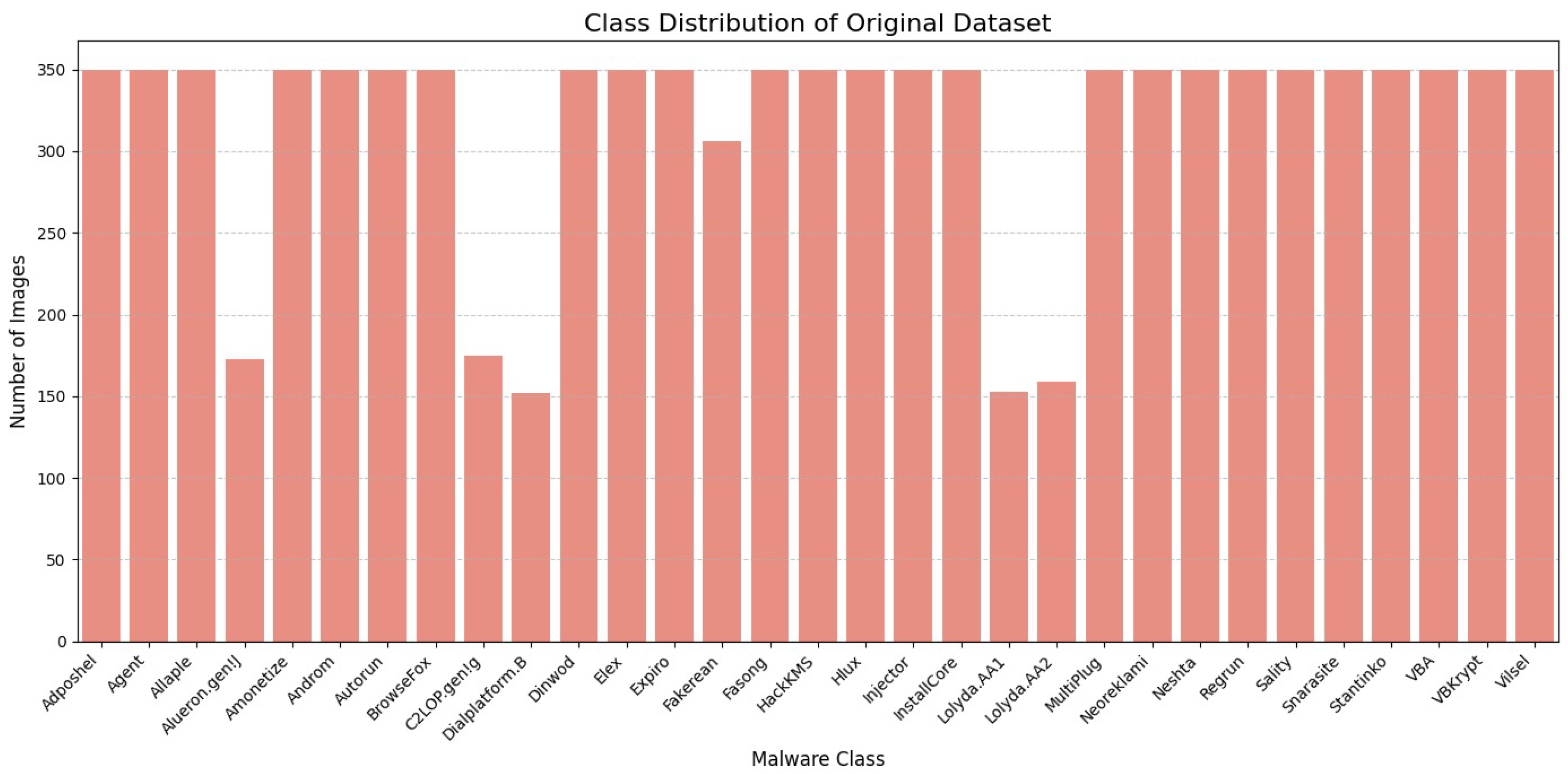

In the considered dataset, majority malware families contain more than 300 samples, whereas minority families include as few as 25 samples, resulting in an imbalance ratio greater than 12. The total number of samples in the dataset is given by

Such pronounced imbalance can bias learning toward majority classes and significantly degrade minority-class recognition, thereby motivating the adoption of a robust data balancing strategy.
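As a small worked example of the imbalance ratio, the following sketch computes it from a table of class sizes. The family names and counts are hypothetical placeholders, chosen only so that the largest and smallest classes match the 300 and 25 sample figures quoted above.

```python
def imbalance_ratio(class_counts: dict[str, int]) -> float:
    """Return max(n_i) / min(n_i) over all classes."""
    counts = list(class_counts.values())
    if not counts or min(counts) <= 0:
        raise ValueError("every class needs at least one sample")
    return max(counts) / min(counts)


# Hypothetical counts for three families (not the real dataset statistics).
counts = {"FamilyA": 300, "FamilyB": 120, "FamilyC": 25}
ratio = imbalance_ratio(counts)   # 300 / 25
total = sum(counts.values())      # total number of samples
```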

3.2. Balancing with DCGAN

To mitigate the severe class imbalance present in the dataset, a Deep Convolutional Generative Adversarial Network (DCGAN) was employed to synthesize realistic malware images for underrepresented families [26]. The DCGAN framework consists of two adversarial components: (i) a Generator $G$, which maps a random latent vector $z$ to a synthetic malware image, and (ii) a Discriminator $D$, which aims to distinguish real malware images $x$ from generated samples $G(z)$. The adversarial learning process is formulated as the following minimax optimization problem:

$$\min_G \max_D V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}(x)}\left[\log D(x)\right] + \mathbb{E}_{z \sim p_z(z)}\left[\log\left(1 - D(G(z))\right)\right].$$

After convergence, the trained generator was used to produce synthetic images $I_{\text{syn}} = G(z)$ for minority malware families. These generated samples were then combined with the original dataset to construct a balanced training set defined as

$$\mathcal{D}_{\text{bal}} = I \cup I_{\text{syn}},$$

where $I$ denotes the set of original malware images. Synthetic samples were generated in a class-wise manner until each malware family reached an approximately uniform number of samples.

Figure 2 and Figure 3 illustrate the class distribution before and after DCGAN-based balancing, respectively. As observed, the proposed augmentation strategy substantially enriches minority classes, resulting in a more uniform dataset distribution that is better suited for robust and unbiased classifier training.
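The class-wise balancing step amounts to deciding how many synthetic images each family needs. The sketch below shows one way this bookkeeping could be done; topping every class up to the size of the largest class is an assumption of the example, and the helper name `synthetic_quota` and the counts are hypothetical.

```python
from typing import Dict, Optional


def synthetic_quota(class_counts: Dict[str, int], target: Optional[int] = None) -> Dict[str, int]:
    """Number of synthetic images to generate per class.

    By default every class is topped up to the size of the largest class;
    classes already at or above the target receive no synthetic samples.
    """
    if target is None:
        target = max(class_counts.values())
    return {name: max(0, target - n) for name, n in class_counts.items()}


# Hypothetical class sizes; real counts would come from the dataset.
quota = synthetic_quota({"FamilyA": 300, "FamilyB": 120, "FamilyC": 25})
```

Each non-zero entry is the number of images that would be sampled from the generator trained for that family.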

3.3. Classification Model

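The balanced dataset was used to train a hybrid CNN–Transformer classification model designed to jointly capture local spatial patterns and global contextual dependencies in malware images. The model pipeline consists of three main stages: (i) a convolutional backbone that extracts local spatial features, (ii) a Transformer encoder that models long-range dependencies across the resulting feature map, and (iii) a classification head that pools the encoded features and outputs class probabilities through fully connected and Softmax layers.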

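The overall training objective jointly considers the GAN-based data balancing and the classification task, and is formulated as

$$\mathcal{L}_{\text{total}} = \mathcal{L}_{\text{GAN}} + \lambda\, \mathcal{L}_{\text{CE}},$$

where $\mathcal{L}_{\text{GAN}}$ denotes the adversarial loss used during DCGAN training, $\mathcal{L}_{\text{CE}}$ is the categorical cross-entropy loss defined as

$$\mathcal{L}_{\text{CE}} = -\frac{1}{N} \sum_{i=1}^{N} \sum_{c=1}^{C} y_{i,c} \log \hat{y}_{i,c},$$

with $y_{i,c}$ the one-hot ground-truth label and $\hat{y}_{i,c}$ the predicted probability of class $c$ for sample $i$, and $\lambda$ is a weighting factor that controls the trade-off between GAN regularization effects and classification accuracy.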
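To make the categorical cross-entropy term concrete, the following sketch evaluates it on a tiny batch. It uses the standard definition averaged over the batch; the function name and the two-sample example are hypothetical, and a deep learning framework's built-in loss would normally be used instead.

```python
import math
from typing import Sequence


def categorical_cross_entropy(y_true: Sequence[Sequence[float]],
                              y_pred: Sequence[Sequence[float]],
                              eps: float = 1e-12) -> float:
    """Mean categorical cross-entropy over a batch.

    y_true holds one-hot labels and y_pred holds predicted class
    probabilities (each row sums to 1). eps guards against log(0).
    """
    total = 0.0
    for truth, pred in zip(y_true, y_pred):
        total -= sum(t * math.log(max(p, eps)) for t, p in zip(truth, pred))
    return total / len(y_true)


# Two samples, three classes (hypothetical values).
labels = [[1, 0, 0], [0, 1, 0]]
probs = [[0.5, 0.25, 0.25], [0.25, 0.5, 0.25]]
loss = categorical_cross_entropy(labels, probs)   # equals -log(0.5)
```

Only the probability assigned to the true class contributes, so a confident correct prediction drives the loss toward zero.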

3.4. Experimental Setup

All experiments were conducted on a workstation equipped with an NVIDIA GPU and 32 GB of system memory, running Python 3.9. The complete experimental pipeline was implemented using the PyTorch deep learning framework (version 2.6.0+cu124). The key components of the experimental setup are summarized as follows. Malware executable binaries were first converted into grayscale image representations, resized to a fixed resolution, and normalized to a fixed intensity range to ensure stable and efficient training. To address class imbalance, class-wise DCGAN models were trained for minority malware families until the desired number of synthetic samples was generated. The generated images were then combined with the original dataset to construct the balanced training set. The proposed CNN–Transformer classifier was trained on this balanced set using the Adam optimizer with an adaptive learning rate schedule and early stopping based on validation loss to prevent overfitting. Model performance was evaluated using standard classification metrics, including Accuracy, Precision, Recall, and F1-score (both macro-averaged and weighted), along with detailed analysis of confusion matrices to assess class-wise prediction behavior.
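Early stopping on validation loss, as mentioned above, can be sketched independently of any framework. The class below is an illustrative assumption: the `patience` and `min_delta` values, the class name, and the loss curve are hypothetical and are not the settings used in the experiments.

```python
class EarlyStopping:
    """Stop training when validation loss has not improved for `patience` epochs."""

    def __init__(self, patience: int = 5, min_delta: float = 0.0) -> None:
        self.patience = patience
        self.min_delta = min_delta
        self.best_loss = float("inf")
        self.epochs_without_improvement = 0

    def step(self, val_loss: float) -> bool:
        """Record one epoch's validation loss; return True if training should stop."""
        if val_loss < self.best_loss - self.min_delta:
            self.best_loss = val_loss
            self.epochs_without_improvement = 0
        else:
            self.epochs_without_improvement += 1
        return self.epochs_without_improvement >= self.patience


# Hypothetical validation-loss curve: improves, then stalls.
stopper = EarlyStopping(patience=2)
history = [0.90, 0.70, 0.65, 0.66, 0.67, 0.64]
stopped_at = next(
    (epoch for epoch, loss in enumerate(history) if stopper.step(loss)), None
)
```

In a real training loop, `step` would be called once per epoch and the model weights from the best epoch would typically be restored after stopping.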

4. Proposed Methodology

The proposed framework for image-based malware classification integrates data enhancement with a hybrid CNN–Transformer architecture to improve classification accuracy, robustness, and generalization under class imbalance. The methodology comprises three core components, as illustrated in Figure 4 and formally described in Algorithm 1. First, malware executables are transformed into grayscale image representations, enabling the application of computer vision techniques to binary analysis. Second, to address skewed class distributions and limited sample diversity, a Deep Convolutional Generative Adversarial Network (DCGAN) is employed to synthesize realistic malware images for minority families. In this adversarial setup, a generator $G$ learns to produce synthetic images from random noise, while a discriminator $D$ is trained to distinguish between real and generated samples. After convergence, the generated images are combined with the original dataset to form a balanced training set. Finally, a CNN–Transformer hybrid classifier is trained on the augmented data, where convolutional layers capture fine-grained local patterns and Transformer encoders model long-range dependencies across learned feature representations.

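| Algorithm 1 Mathematical Representation of the Malware Classification Framework |
| --- |
| 1: Input: Malware binaries $\mathcal{B} = \{B_1, \dots, B_N\}$ |
| 2: Convert binaries to grayscale images: $I_j = f(B_j)$ for each $B_j \in \mathcal{B}$ |
| 3: Train the DCGAN on the image set $I = \{I_j\}$ to obtain $(G, D)$ |
| 4: Generate synthetic malware images: $I_{\text{syn}} = G(z)$, $z \sim p_z$ |
| 5: $\mathcal{D}_{\text{bal}} = I \cup I_{\text{syn}}$ |
| 6: Train CNN–Transformer model $M_\theta$: $\theta^{*} = \arg\min_{\theta} \mathcal{L}_{\text{CE}}(M_\theta; \mathcal{D}_{\text{bal}})$ |
| 7: Evaluate model performance: Accuracy, Precision, Recall, F1-score |
| 8: Output: Class probabilities $P(y \mid x)$ |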

4.1. Algorithmic Workflow

The end-to-end operational flow of the proposed malware classification system is summarized in Algorithm 1. Given a set of malware binaries $\mathcal{B} = \{B_1, \dots, B_N\}$, each binary $B_j$ is first mapped to a grayscale image $I_j = f(B_j)$ through a deterministic transformation function $f$ that converts byte sequences into pixel intensities. The resulting image set $I$ is then used to train a DCGAN, yielding a generator–discriminator pair $(G, D)$. Synthetic malware images $I_{\text{syn}}$ are sampled from the trained generator using latent vectors $z \sim p_z$ and merged with the original dataset to construct an augmented and class-balanced dataset $\mathcal{D}_{\text{bal}}$. The hybrid CNN–Transformer model $M_\theta$ is subsequently optimized on $\mathcal{D}_{\text{bal}}$ by minimizing a supervised loss function $\mathcal{L}_{\text{CE}}$ with respect to the model parameters $\theta$. Finally, the trained model is evaluated on a held-out test set using standard performance metrics, including Accuracy, Precision, Recall, and F1-score, and outputs posterior class probabilities $P(y \mid x)$ for malware family prediction.

4.2. Architecture Design

Figure 4 illustrates the complete end-to-end pipeline adopted in this study, covering the workflow from raw malware binaries to final family-level classification using the proposed CNN–Transformer hybrid architecture. The process begins with the malware image dataset, where each executable binary is transformed into a grayscale image representation. During preprocessing, all images are resized to a uniform resolution, normalized to a consistent intensity range, and split into training and testing subsets to ensure uniform and reproducible processing across samples.

The first stage of feature learning is carried out by a sequence of convolutional blocks. The initial Conv2D layer applies a bank of convolutional kernels, followed by Batch Normalization and ReLU activation, with MaxPooling used to reduce spatial dimensionality. Subsequent convolutional blocks progressively increase the number of filters (from 32 to 64 and then 128), and each block incorporates Batch Normalization, ReLU activation, dropout regularization, and pooling operations. Through this hierarchical structure, the CNN backbone learns increasingly abstract representations, capturing low-level and mid-level spatial patterns such as structural byte distributions, texture-like artifacts, and opcode alignment regions that are characteristic of malware binaries.

In parallel, the dataset is examined for class imbalance across the 31 malware families. As depicted in Figure 4, the framework evaluates whether the class distribution is uniform. When imbalance is detected, a data enhancement module is activated. This module leverages GAN-generated synthetic malware images, optionally combined with lightweight geometric augmentations such as rotations, flips, and scaling, to enrich minority classes. The generated and original samples are then merged using an imbalanced data sampler, ensuring that each training mini-batch remains approximately class-balanced despite the skewed global distribution. Following augmentation and balanced sampling, the data are fed into the hybrid CNN–Transformer classifier. The final convolutional block outputs a high-dimensional feature map, which is reshaped and passed to a Transformer encoder. The Transformer employs multi-head self-attention to model global and long-range dependencies across spatial regions of the image, enabling the network to capture relationships between distant patches that conventional CNNs may overlook. This capability is particularly important for distinguishing visually similar malware families or variants that differ only in subtle structural patterns.
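One common way to keep mini-batches approximately class-balanced is to sample each image with a weight inversely proportional to the size of its class. The sketch below illustrates that idea with the standard library only; it is an assumption about how such a sampler could work, not the sampler used in the study, and the labels are hypothetical. In PyTorch, per-sample weights of this kind could be passed to `torch.utils.data.WeightedRandomSampler`.

```python
import random
from collections import Counter


def inverse_frequency_weights(labels: list[str]) -> list[float]:
    """Per-sample weights proportional to 1 / (size of the sample's class)."""
    counts = Counter(labels)
    return [1.0 / counts[label] for label in labels]


# Hypothetical skewed label list: 90 majority samples, 10 minority samples.
labels = ["Majority"] * 90 + ["Minority"] * 10
weights = inverse_frequency_weights(labels)

# Draw one mini-batch with replacement; each class now has equal total weight.
rng = random.Random(0)
batch = rng.choices(labels, weights=weights, k=32)
```

Because the weights of each class sum to the same value, every class is equally likely to be drawn regardless of how many samples it contains.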

The output of the Transformer encoder is aggregated using Global Average Pooling, which compresses the spatially distributed representations into a compact feature vector. This vector is then processed by fully connected layers with ReLU activation and dropout regularization to reduce overfitting. Finally, a Softmax-activated output layer produces normalized probability scores corresponding to each of the 31 malware families. Overall, the proposed architecture effectively combines the strengths of convolutional networks for local pattern extraction with Transformer-based global contextual reasoning, while integrating GAN-driven data balancing to ensure robust and unbiased learning under severe class imbalance conditions.
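The flow of tensor shapes through the architecture can be traced with simple arithmetic. The sketch below is illustrative only and rests on several assumptions that the text does not state: a 64×64 input, three convolutional blocks with size-preserving convolutions and 2×2 max pooling, and a Transformer embedding dimension equal to the last filter count. Only the filter progression (32, 64, 128) and the 31 output classes come from the description above.

```python
def trace_shapes(height: int, width: int, filters=(32, 64, 128), num_classes: int = 31):
    """Follow tensor shapes through the CNN -> Transformer -> classifier stages.

    Assumes each convolution preserves spatial size ("same" padding) and each
    block ends with 2x2 max pooling that halves height and width.
    """
    shapes = [("input", (1, height, width))]
    h, w = height, width
    for i, channels in enumerate(filters, start=1):
        h, w = h // 2, w // 2
        shapes.append((f"conv_block_{i}", (channels, h, w)))
    tokens, dim = h * w, filters[-1]
    shapes.append(("transformer_tokens", (tokens, dim)))  # one token per spatial cell
    shapes.append(("global_avg_pool", (dim,)))
    shapes.append(("softmax_output", (num_classes,)))
    return shapes


# Hypothetical 64x64 grayscale input (the resolution is an assumption).
for name, shape in trace_shapes(64, 64):
    print(f"{name:20s} {shape}")
```

Under these assumptions the Transformer attends over 64 tokens, which illustrates why downsampling in the CNN backbone keeps self-attention inexpensive.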

5. Experimental Setup, Results, and Discussion

All experiments were conducted on a benchmark malware image dataset comprising 31 distinct malware families. Each malware executable was transformed into a fixed-resolution grayscale image to enable learning using computer-vision-based models. The dataset exhibits a pronounced class imbalance, where several dominant malware families contain more than 300 samples, while minority families have fewer than 30 instances. Such skewed distributions are known to bias supervised learning models toward majority classes, thereby degrading detection performance for rare and emerging malware variants.

To ensure reproducibility and transparency, the key hyperparameters and model complexity metrics are summarized in Table 2. The total loss function was optimized with the weighting factor chosen to give equal balance between the adversarial and classification objectives. The Transformer encoder was configured with a fixed embedding dimension, 4 attention heads, and 2 encoder layers. Notably, the proposed hybrid model is computationally efficient, consisting of approximately 6.99 million trainable parameters and achieving a throughput of 3498.29 FPS, which supports its feasibility for real-time deployment. To comprehensively evaluate the effectiveness of the proposed framework, multiple standard classification metrics were employed, including Accuracy, Precision, Recall, and F1-score, defined as follows:

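$$\text{Accuracy} = \frac{TP + TN}{TP + TN + FP + FN}, \qquad \text{Precision} = \frac{TP}{TP + FP},$$

$$\text{Recall} = \frac{TP}{TP + FN}, \qquad \text{F1-score} = \frac{2 \cdot \text{Precision} \cdot \text{Recall}}{\text{Precision} + \text{Recall}},$$

where $TP$, $TN$, $FP$, and $FN$ denote true positives, true negatives, false positives, and false negatives, respectively.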
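These definitions translate directly into code. The following sketch computes the four metrics for a single class treated one-vs-rest; the counts are hypothetical and the zero-denominator convention (returning 0.0) is an assumption of the example.

```python
def precision_recall_f1(tp: int, fp: int, fn: int) -> tuple[float, float, float]:
    """Precision, recall, and F1 for one class; zero when a denominator is zero."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)) if precision + recall else 0.0
    return precision, recall, f1


def accuracy(tp: int, tn: int, fp: int, fn: int) -> float:
    """Fraction of all predictions that are correct."""
    return (tp + tn) / (tp + tn + fp + fn)


# Hypothetical counts for a single malware family treated one-vs-rest.
p, r, f1 = precision_recall_f1(tp=8, fp=2, fn=2)
acc = accuracy(tp=8, tn=88, fp=2, fn=2)
```

Note how accuracy (0.96 here) can look much better than precision and recall (0.8 each) when true negatives dominate, which is typical for rare classes.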

In addition to per-class performance, both macro-averaged and weighted-averaged metrics were reported to provide a balanced assessment in the multi-class setting. Macro-averaging assigns equal importance to each malware family, highlighting the model’s ability to handle minority classes, while weighted averaging accounts for class frequencies and reflects overall predictive performance. Together, these evaluation criteria ensure that the reported results are not disproportionately influenced by majority malware families and offer a reliable assessment of the robustness of the proposed framework under severe class imbalance.
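The difference between macro and weighted averaging is easiest to see on a two-class toy example. The per-class F1 scores and supports below are hypothetical, chosen so that the rare class is recognized poorly.

```python
def macro_and_weighted(scores: dict[str, float], support: dict[str, int]) -> tuple[float, float]:
    """Return (macro average, support-weighted average) of per-class scores."""
    macro = sum(scores.values()) / len(scores)
    total = sum(support.values())
    weighted = sum(scores[c] * support[c] for c in scores) / total
    return macro, weighted


# Hypothetical per-class F1 scores: the rare class is recognized poorly.
f1_scores = {"Majority": 0.98, "Minority": 0.50}
supports = {"Majority": 300, "Minority": 25}
macro_f1, weighted_f1 = macro_and_weighted(f1_scores, supports)
```

Here the macro average (0.74) exposes the weak minority class, whereas the weighted average (about 0.94) is dominated by the majority class.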

5.1. DCGAN Results

To address the pronounced class imbalance in the malware image dataset, a Deep Convolutional Generative Adversarial Network (DCGAN) was employed to synthesize additional samples for underrepresented malware families. The quality and diversity of the generated images were quantitatively evaluated using three widely adopted generative performance metrics: Inception Score (IS), Fréchet Inception Distance (FID), and Kernel Inception Distance (KID). The results obtained are summarized in Table 3.

It is important to note that the Inception Score (IS) and Fréchet Inception Distance (FID) are traditionally computed using an Inception model pretrained on ImageNet, which contains natural images with semantic content very different from malware images. Consequently, these metrics may not fully capture the perceptual quality or meaningful diversity of malware images, and a high IS could sometimes reflect noise rather than useful variation in this context. To mitigate this limitation, we also report the Kernel Inception Distance (KID), which provides an unbiased estimate of distribution similarity and has been shown to be more robust in diverse domains. Additionally, qualitative visual inspection of generated samples confirms that the DCGAN produces structurally coherent and diverse malware images that align well with real data characteristics. Together, these evaluations support the stability and high fidelity of the synthetic samples generated by our DCGAN model.
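For readers unfamiliar with KID, the sketch below computes the unbiased squared maximum mean discrepancy with the cubic polynomial kernel that is commonly used for this metric. It is a simplified illustration: in practice the inputs are Inception feature vectors and the estimate is usually averaged over several subsets, whereas the one-dimensional feature values here are hypothetical.

```python
from typing import Sequence

Vector = Sequence[float]


def poly_kernel(a: Vector, b: Vector) -> float:
    """Cubic polynomial kernel k(a, b) = (a.b / d + 1)^3 commonly used for KID."""
    d = len(a)
    return (sum(x * y for x, y in zip(a, b)) / d + 1.0) ** 3


def kid_unbiased(real: Sequence[Vector], fake: Sequence[Vector]) -> float:
    """Unbiased squared-MMD estimate between two feature sets (needs >= 2 each)."""
    m, n = len(real), len(fake)
    k_rr = sum(poly_kernel(real[i], real[j]) for i in range(m) for j in range(m) if i != j)
    k_ff = sum(poly_kernel(fake[i], fake[j]) for i in range(n) for j in range(n) if i != j)
    k_rf = sum(poly_kernel(r, f) for r in real for f in fake)
    return k_rr / (m * (m - 1)) + k_ff / (n * (n - 1)) - 2.0 * k_rf / (m * n)


# Hypothetical 1-D "features": identical sets versus clearly shifted sets.
same = kid_unbiased([[0.0], [0.0]], [[0.0], [0.0]])
shifted = kid_unbiased([[0.0], [0.0]], [[1.0], [1.0]])
```

Matching distributions give a value near zero, while the shifted set yields a clearly positive value, which is the sense in which a low KID indicates similarity between real and generated samples.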

5.2. Discussion on DCGAN Results

The integration of DCGAN-based data augmentation proved highly effective in mitigating class imbalance by generating realistic and diverse synthetic malware images for minority families. Malware classes that initially contained fewer than 30 samples were expanded to achieve sample counts comparable to those of majority classes, thereby reducing learning bias and improving class-level representation. The favorable IS, FID, and KID scores collectively indicate that the generated samples are authentic, diverse, and strongly aligned with the real data distribution. This enhanced data balance substantially improved the classifier’s ability to learn discriminative features for underrepresented malware families, leading to notable gains in overall accuracy, precision, recall, and F1-score, as demonstrated in subsequent classification results. These findings highlight GAN-based synthetic data generation as a practical and effective strategy for addressing severe class imbalance in image-based malware classification systems.

5.3. Impact of GAN-Based Data Balancing

GAN-based data augmentation effectively alleviated the severe class imbalance present in the original dataset. As illustrated in Figure 3, minority malware families were enriched to achieve sample counts comparable to those of majority classes, resulting in a more uniform class distribution. This balanced training setup reduced the tendency of the classifier to overfit dominant families and significantly improved its ability to generalize to underrepresented malware categories. The quantitative generative evaluation metrics further support these observations. Specifically, the Inception Score (IS) reported in Table 3 indicates that the synthesized samples exhibit adequate visual quality and diversity, while the Fréchet Inception Distance (FID) reflects a strong similarity between the distributions of generated and real malware images. Moreover, the low Kernel Inception Distance (KID) confirms stable and high-fidelity sample generation. Collectively, these results demonstrate that GAN-based data balancing provides a reliable and effective mechanism for mitigating class imbalance in image-based malware classification tasks.

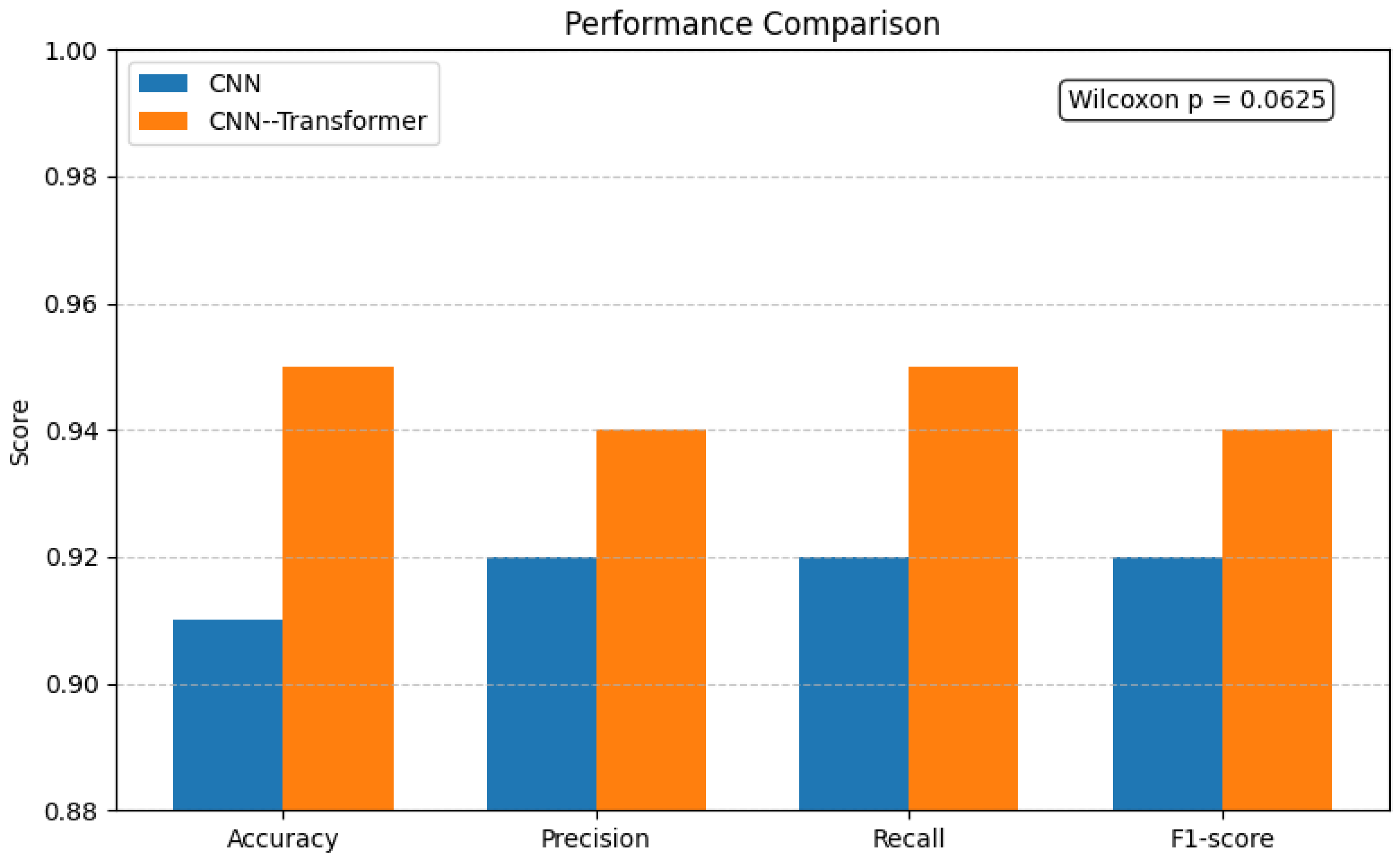

5.4. Classification Performance

The detailed classification results are summarized in Table 4 and Table 5. The proposed CNN–Transformer hybrid model achieves an overall accuracy of 95% on the DCGAN-balanced dataset, outperforming the CNN baseline, which attains an accuracy of 91%. In contrast, on the original imbalanced dataset, the CNN and hybrid models achieve lower accuracies of 89% and 92%, respectively, highlighting the benefit of GAN-based balancing. In terms of class-balanced evaluation, the hybrid architecture yields a macro-averaged F1-score of 0.94 on the balanced dataset, compared to 0.92 for the CNN baseline. On the imbalanced dataset, these scores drop to 0.79 and 0.75, respectively, indicating that balancing significantly improves minority-class recognition and overall consistency across malware families.

An analysis of training and validation behavior further highlights the advantages of the hybrid design. As reported in Table 4, the CNN–Transformer model attains higher training accuracy (0.97) and validation accuracy (0.95) than the CNN baseline (0.91 for both). The gap between training and validation accuracy for the hybrid model remains small (0.02), suggesting stable convergence and good generalization, attributable to the combined effects of Transformer-based global modeling and GAN-based data balancing. Notably, minority malware families such as Alueron.gen!J and Dialplatform.B, which originally contained fewer than 30 samples in the test set, benefit significantly from GAN augmentation. These classes exhibit markedly improved recall values, reported at or near 1.00 in the per-class analysis, underscoring the effectiveness of class-wise synthetic sample generation in mitigating bias toward majority families.

5.5. Statistical Analysis

A paired non-parametric Wilcoxon signed-rank test was performed on macro-averaged Accuracy, Precision, Recall, and F1-score values obtained from 10 paired observations (10-fold cross-validation/10 repeated runs using identical splits for both models). The Wilcoxon signed-rank test is appropriate for paired comparisons when normality of differences cannot be assumed. For all four metrics the test yielded the same statistic and $p$-value, indicating that the CNN–Transformer hybrid consistently outperformed the CNN baseline across the paired runs. After applying a Bonferroni correction for the four simultaneous tests (dividing the significance level by four), all $p$-values remain below the corrected threshold and are therefore statistically significant. The directionality of every paired difference (each of the 10 observations favored the hybrid model) and the substantial mean improvements reported in Table 6 collectively support the robustness of the observed performance gains, as shown in Figure 5.
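The following sketch shows how an exact Wilcoxon signed-rank test behaves when every one of 10 paired differences favors the same model. The scores are hypothetical, the implementation assumes no zero differences and no tied absolute differences, and the significance level of 0.05 before Bonferroni correction is an assumption; in practice a library routine such as `scipy.stats.wilcoxon` would normally be used.

```python
from itertools import product


def wilcoxon_exact(a: list[float], b: list[float]) -> tuple[float, float]:
    """Exact two-sided Wilcoxon signed-rank test for paired samples.

    Assumes no zero differences and no ties among |differences|, so ranks are
    simply 1..n. Returns (W, p) where W = min(W+, W-).
    """
    diffs = [x - y for x, y in zip(a, b)]
    n = len(diffs)
    order = sorted(range(n), key=lambda i: abs(diffs[i]))
    ranks = [0] * n
    for rank, idx in enumerate(order, start=1):
        ranks[idx] = rank
    total = sum(ranks)
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    w_obs = min(w_plus, total - w_plus)
    # Enumerate all 2^n sign assignments under the null hypothesis.
    hits = 0
    for signs in product((0, 1), repeat=n):
        w = sum(rank for rank, s in zip(range(1, n + 1), signs) if s)
        if min(w, total - w) <= w_obs:
            hits += 1
    return float(w_obs), hits / 2 ** n


# Hypothetical macro-F1 scores over 10 paired runs; the hybrid always wins.
baseline = [0.90] * 10
hybrid = [0.90 + 0.005 * (k + 1) for k in range(10)]
stat, p_value = wilcoxon_exact(hybrid, baseline)

alpha_corrected = 0.05 / 4          # Bonferroni correction for four metrics
significant = p_value < alpha_corrected
```

When all 10 differences share the same sign, the statistic is 0 and the exact two-sided p-value is 2/2^10 ≈ 0.00195, which stays below the corrected threshold of 0.0125.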

5.6. Confusion Matrix Analysis

To gain deeper insight into class-wise prediction behavior across the 31 malware families, confusion matrices were generated for both the CNN baseline and the proposed CNN–Transformer hybrid model, as illustrated in Figure 6 and Figure 7. These matrices provide a detailed view of classification strengths and remaining challenges at the family level. The CNN baseline demonstrates reasonable recognition for several dominant malware families; however, it exhibits a higher number of off-diagonal errors, particularly among visually similar families such as Agent, Allaple, Autorun, and Regrun. In addition, minority classes with limited original samples, including Alueron.gen!J and Dialplatform.B, show scattered misclassifications, indicating insufficient feature discrimination under data imbalance. In contrast, the CNN–Transformer hybrid model produces markedly sharper diagonal patterns with substantially fewer off-diagonal entries, reflecting improved class separability. Minority families that originally contained fewer than 30 samples achieve near-perfect or perfect classification, highlighting the effectiveness of GAN-based data augmentation in enriching underrepresented classes with representative samples. Furthermore, the Transformer encoder enhances global feature modeling, reducing confusion among structurally similar families such as FakeRean, Fasong, and Expiro, which appear as cleaner and more concentrated diagonal entries in the hybrid confusion matrix. Overall, this analysis confirms that the proposed hybrid framework significantly improves both majority- and minority-class discrimination, making it well suited for real-world malware detection scenarios.
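Per-class recall can be read directly off a confusion matrix as the diagonal entry divided by its row sum. The sketch below uses a hypothetical 3×3 matrix with family names borrowed from the text; the counts are illustrative and are not taken from Figure 6 or Figure 7.

```python
def per_class_recall(matrix: list[list[int]], names: list[str]) -> dict[str, float]:
    """Recall per class: diagonal entry divided by its row sum (rows = true class)."""
    recalls = {}
    for i, name in enumerate(names):
        row_total = sum(matrix[i])
        recalls[name] = matrix[i][i] / row_total if row_total else 0.0
    return recalls


# Hypothetical 3-class confusion matrix (rows: true family, columns: predicted).
families = ["Agent", "Allaple", "Autorun"]
confusion = [
    [45, 3, 2],
    [4, 56, 0],
    [1, 0, 19],
]
recall = per_class_recall(confusion, families)
```

Off-diagonal entries in a row show which families a given class is mistaken for, which is how confusions among visually similar families are identified.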

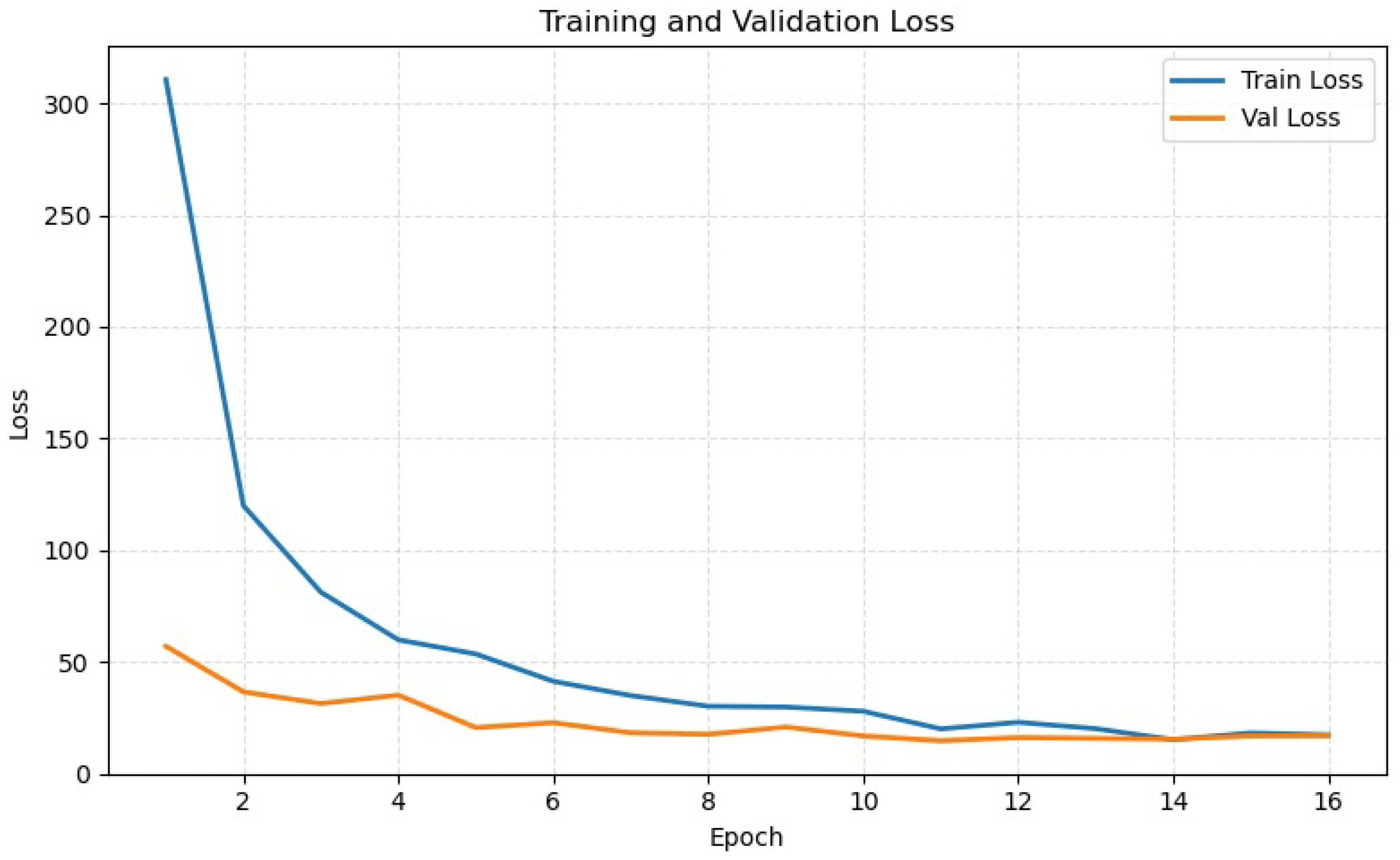

5.7. Training Convergence Behavior

The convergence behavior of the proposed CNN–Transformer hybrid model was analyzed using training and validation accuracy and loss curves, as shown in Figure 8 and Figure 9. The results indicate smooth and stable convergence, with both training and validation accuracy steadily increasing as the corresponding losses decrease, reflecting well-conditioned gradient updates and effective optimization. A rapid improvement is observed during the initial epochs, where the CNN backbone quickly learns low-level spatial patterns while the Transformer branch begins capturing global contextual dependencies, leading to a sharp rise in accuracy and a significant reduction in loss. After this early learning phase, the curves gradually plateau, suggesting convergence toward near-optimal performance, with only minor validation fluctuations that indicate good generalization. The consistently small gap between training and validation metrics demonstrates minimal overfitting, which can be attributed to the combined effects of GAN-based data augmentation, dropout regularization, and weight decay. Notably, the final validation accuracy stabilizes around 95%, aligning with the reported macro F1-score and confirming reliable model performance. Furthermore, the inclusion of GAN-generated samples plays a key role in improving convergence stability by providing representative minority-class instances, thereby reducing training oscillations and promoting balanced learning across malware families.

5.8. Ablation Study: Impact of Image Resolution

To justify the selected input resolution and address the trade-off between information density and computational efficiency, an ablation study was conducted by training the proposed model at a higher resolution. While higher resolutions theoretically preserve more fine-grained byte patterns, the higher-resolution configuration yielded a training accuracy of 94.17% (training loss: 0.62) and a validation accuracy of 90.67% (validation loss: 0.42). Compared to the selected resolution, which achieved 95% validation accuracy, the higher resolution exhibited lower validation accuracy and significantly higher computational overhead. This suggests that, for the proposed CNN–Transformer hybrid model, the selected resolution provides a good balance by filtering out high-frequency noise in the binary-to-image conversion while allowing the Transformer to efficiently model global dependencies without excessive memory constraints.

5.9. Comparison with State-of-the-Art Models

To further evaluate the effectiveness of the proposed framework, we compare it against widely used deep learning architectures on the Blended malware dataset. Table 7 reports accuracy for several state-of-the-art convolutional models. Among traditional architectures, AlexNet, DenseNet121, MobileNetV2, and ShuffleNet V2 demonstrate competitive accuracy, ranging between 90% and 91.5%. ShuffleNet V2 and ConvNet deliver the fastest inference times (22,129.80 s and 18,855.47 s, respectively), making them highly efficient for large-scale malware processing. DenseNet121, although slightly slower, achieves the highest accuracy among the standard models (91.22%). Compared to these baselines, the proposed GAN-balanced CNN–Transformer hybrid model achieves a substantially higher validation accuracy of 95%, while also providing stronger per-class robustness, particularly for minority families. The hybrid architecture benefits from both CNN-based spatial feature extraction and Transformer-based global dependency modeling, enabling it to outperform conventional CNN backbones in both accuracy and class-level consistency. This demonstrates that the proposed model is well suited for real-world malware detection scenarios where class imbalance and structural diversity pose significant challenges.

To address concerns about fair comparison and provide a more informative evaluation under class imbalance, we retrained these baseline models on our DCGAN-augmented balanced dataset and included the Macro F1-score alongside accuracy. Table 7 summarizes the accuracy for the original baseline results from Liu et al. [29] and both the accuracy and Macro F1-score for the retrained models on the balanced dataset. As observed, the proposed CNN–Transformer hybrid model achieves a superior Macro F1-score of 0.95, outperforming all retrained baselines. This confirms that the hybrid architecture, supported by DCGAN-based balancing, effectively captures discriminative features across all malware families, ensuring high precision and recall even for previously underrepresented classes.
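
To illustrate why the Macro F1-score is more informative than accuracy under class imbalance, the following minimal sketch computes both metrics on a toy label set. The labels, the class names, and the helper functions `accuracy` and `macro_f1` are hypothetical and are not taken from the experiments reported here; the sketch only shows the general definition of the metric.

```python
def accuracy(y_true, y_pred):
    """Fraction of samples whose predicted label matches the true label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 scores (every class counts equally)."""
    labels = sorted(set(y_true) | set(y_pred))
    per_class = []
    for c in labels:
        tp = sum(1 for t, p in zip(y_true, y_pred) if t == c and p == c)
        fp = sum(1 for t, p in zip(y_true, y_pred) if t != c and p == c)
        fn = sum(1 for t, p in zip(y_true, y_pred) if t == c and p != c)
        denom = 2 * tp + fp + fn
        # F1 = 2TP / (2TP + FP + FN); defined as 0 when the class is never hit
        per_class.append(2 * tp / denom if denom else 0.0)
    return sum(per_class) / len(per_class)


# Hypothetical toy data: 9 majority-family samples and 1 minority-family sample
y_true = ["major"] * 9 + ["minor"]
# A degenerate classifier that always predicts the majority family
y_pred = ["major"] * 10

acc = accuracy(y_true, y_pred)
f1 = macro_f1(y_true, y_pred)
print(f"accuracy = {acc:.3f}, macro F1 = {f1:.3f}")
```

In this toy case the classifier never recognizes the minority family, yet its accuracy remains high, whereas the Macro F1-score drops sharply because the minority class contributes an F1 of zero to the average.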

5.10. Discussion

The experimental evaluation demonstrates the effectiveness of the proposed class-balanced hybrid learning framework for malware image classification. GAN-generated samples enhance minority-class learning by improving recall and mitigating bias toward majority classes, which is critical in realistic malware distributions. The integration of CNNs and Transformers proves particularly effective: CNNs capture fine-grained spatial and texture-based patterns, while Transformers model long-range and global relationships, yielding complementary and discriminative feature representations. In addition, the diversity introduced through GAN-based augmentation acts as an implicit regularizer, contributing to improved convergence stability and smoother training dynamics. Quantitative quality assessments using the IS, FID, and KID metrics confirm that the synthetic samples are both realistic and diverse, providing meaningful variability rather than noise.

The proposed hybrid model is also designed for practical deployment and maintains an efficient computational profile. The architecture has approximately 6.99 million trainable parameters, making it considerably more lightweight than standard deep architectures such as VGG16 or ResNet50. At inference, the model achieves an average latency of only 0.29 ms per image, corresponding to a throughput of 3498.29 frames per second (FPS). This efficiency indicates that the accuracy of the CNN–Transformer hybrid does not come at the cost of operational speed, making it suitable for real-time malware scanning in high-traffic network environments. The balanced training strategy further enhances real-world adaptability, enabling the classifier to generalize better to unseen and evolving malware variants.

Collectively, these findings support the proposed class-balanced CNN–Transformer framework as a reliable and practical solution for operational malware detection systems.
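
The latency and throughput figures quoted in this discussion are two views of the same measurement. The short sketch below checks their consistency, assuming that throughput is simply the reciprocal of the average per-image latency (an assumption; the measurement protocol, batch size, and hardware are not specified here). The function names are hypothetical.

```python
def latency_ms_from_fps(fps: float) -> float:
    """Average per-image latency in milliseconds implied by a throughput."""
    return 1000.0 / fps


def fps_from_latency_ms(latency_ms: float) -> float:
    """Throughput in images per second implied by an average latency."""
    return 1000.0 / latency_ms


reported_fps = 3498.29  # throughput reported in the text

# Latency implied by the reported throughput, before rounding
implied_latency_ms = latency_ms_from_fps(reported_fps)
print(f"implied latency: {implied_latency_ms:.4f} ms per image")
print(f"rounded to two decimals: {round(implied_latency_ms, 2)} ms")
```

Under this assumption, the reported throughput implies a latency slightly below 0.29 ms, which agrees with the reported value after rounding to two decimals.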

5.11. Limitations

Despite the high classification accuracy and effective data balancing achieved by the proposed framework, several limitations remain and provide avenues for future research. First, while the DCGAN successfully addresses class imbalance, the quality of synthetic samples is inherently bounded by the diversity of the original minority-class seeds; extremely rare families with fewer than five samples may still pose challenges for generative stability. Second, the current model focuses exclusively on grayscale visual representations of malware binaries. While effective for capturing structural textures, this representation does not incorporate multimodal features such as API call sequences or metadata, which could provide complementary semantic information. Third, the robustness of the CNN–Transformer hybrid against adversarial attacks, in which malware authors might inject “noise” bytes to alter the image texture without changing the malicious functionality, has not yet been evaluated.
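
The third limitation can be made concrete with a small sketch of how appended bytes change a byte-to-grayscale image. The conversion below (fixed row width, zero padding, one byte per pixel) is an assumed, simplified scheme and not necessarily the one used in this work; `bytes_to_rows`, `append_noise`, and the 16-pixel width are hypothetical choices for illustration only.

```python
import random


def bytes_to_rows(data: bytes, width: int = 16):
    """Map a byte string to rows of grayscale pixel values (0-255).

    Assumed scheme: row-major layout with a fixed width; the last row is
    zero-padded so that every row has the same length.
    """
    padded = data + bytes((-len(data)) % width)
    return [list(padded[i:i + width]) for i in range(0, len(padded), width)]


def append_noise(data: bytes, n_bytes: int, seed: int = 0) -> bytes:
    """Append pseudo-random bytes, mimicking an overlay that is never executed."""
    rng = random.Random(seed)
    return data + bytes(rng.randrange(256) for _ in range(n_bytes))


# Hypothetical stand-in for a malware binary: 64 bytes with a regular pattern
original = bytes(range(64))
perturbed = append_noise(original, 32)

img_original = bytes_to_rows(original)
img_perturbed = bytes_to_rows(perturbed)

print(len(img_original), "rows before,", len(img_perturbed), "rows after")
```

The original bytes, and therefore the original behavior in this simplified setting, are left intact, but the image gains additional noisy rows. If the image is subsequently resized to a fixed input resolution, the added region would compress and shift the original texture, which is the kind of perturbation whose effect on the classifier remains to be evaluated.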