Differential Privacy Data Publication Based on Scoring Function

Abstract

1. Introduction

2. Related Works

3. Preliminaries

3.1. Differential Privacy

3.2. Bayesian Networks

- $X_i$ is an attribute node in the attribute set $A$.

- $\Pi_i$ is the set of parent nodes of attribute $X_i$.
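For reference, PrivBayes [13] uses the notation assumed here. A Bayesian network of degree $k$ over attributes $X_1, \dots, X_d$ approximates the joint distribution by the product of the conditional distributions of its attribute-parent (AP) pairs $(X_i, \Pi_i)$:

```latex
\Pr[X_1, \dots, X_d] \;\approx\; \prod_{i=1}^{d} \Pr\left[X_i \mid \Pi_i\right],
\qquad \Pi_i \subseteq \{X_1, \dots, X_{i-1}\}, \quad |\Pi_i| \le k .
```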

4. Score Function-Based SA-PrivBayes Method

4.1. Bayesian Network Construction in the SA-PrivBayes Algorithm

| Algorithm 1 | Construction of the Bayesian network in SA-PrivBayes |

|---|---|

| Input: | Dataset D; degree k of the Bayesian network (maximum number of parent nodes per attribute). |

| Output: | Bayesian network N. |

| 1. | ; |

| 2. | Calculate the correlation matrix based on Equation (6); |

| 3. | Based on Equation (7), calculate the average mutual information (AMI) of every attribute in , and sort the attributes by AMI in descending order; |

| 4. | Add to N; |

| 5. | Split the total privacy budget : (for the threshold screening mechanism), (for the exponential mechanism); |

| 6. | For do: |

| 7. | ; |

| 8. | For each , add to the set ; |

| 9. | Calculate the score of each candidate and sort the candidates; = the value corresponding to the first positions in the sorted list; ; |

| 10. | For retry in range(): |

| 11. | ; ; |

| 12. | If the score of the selected attribute-parent (AP) pair is greater than upper_bound: |

| 13. | Skip; |

| 14. | Else if the score of the selected AP pair is less than lower_bound: |

| 15. | Remove from the set ; |

| 16. | Else: |

| 17. | If : |

| 18. | Skip; |

| 19. | From , compute the mutual information of each and, using mutual information as the scoring function, apply the exponential mechanism to select from ; |

| 20. | Add to N; add to Scores; |

| 21. | End for; |

| 22. | Return N. |
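Steps 3 and 19 of Algorithm 1 rest on two standard building blocks: empirical mutual information between attributes and the exponential mechanism [39]. The Python sketch below illustrates both under stated assumptions. AMI is taken to be the average pairwise mutual information with every other attribute (Equation (7) itself is not reproduced here). The function names are hypothetical, and the score sensitivity is supplied by the caller rather than derived. The sketch does not model the threshold screening of steps 10 to 18 or the privacy accounting of the AMI ordering.

```python
import math
import random
from collections import Counter


def mutual_information(rows, a, b):
    """Empirical mutual information I(a; b) in nats between two attributes."""
    n = len(rows)
    joint = Counter((r[a], r[b]) for r in rows)
    pa = Counter(r[a] for r in rows)
    pb = Counter(r[b] for r in rows)
    # Sum of p(x, y) * log(p(x, y) / (p(x) p(y))), written with raw counts.
    return sum((c / n) * math.log(c * n / (pa[x] * pb[y])) for (x, y), c in joint.items())


def rank_by_ami(rows, attrs):
    """Assumed AMI: mean MI with all other attributes; needs at least two attributes."""
    ami = {
        a: sum(mutual_information(rows, a, b) for b in attrs if b != a) / (len(attrs) - 1)
        for a in attrs
    }
    return sorted(attrs, key=lambda a: ami[a], reverse=True), ami


def exponential_mechanism(candidates, scores, epsilon, sensitivity, rng=None):
    """Pick a candidate with probability proportional to exp(eps * score / (2 * sensitivity))."""
    rng = rng or random.Random()
    logits = [epsilon * s / (2.0 * sensitivity) for s in scores]
    top = max(logits)  # subtract the maximum for numerical stability
    weights = [math.exp(l - top) for l in logits]
    return rng.choices(candidates, weights=weights, k=1)[0]
```

In Algorithm 1, the candidates would be the AP pairs that survive screening, and their scores would be the mutual information values. A larger share of the exponential-mechanism budget concentrates the choice on the highest-scoring pair.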

4.2. Differentially Private Conditional Distribution Generation in the SA-PrivBayes Algorithm

4.2.1. Dynamic Privacy Budget Allocation

- High protection level: corresponds to q = 1.2–1.4, suitable for scenarios with higher privacy risks such as data sharing and public release;

- Medium protection level: corresponds to q = 1.0–1.2, suitable for regular scenarios such as internal data analysis and model training;

- Low protection level: corresponds to q = 0.8–1.0, suitable for exploratory analysis of non-sensitive data and utility-priority scenarios.

4.2.2. Algorithm Implementation

| Algorithm 2 | Noise addition method for conditional distributions in SA-PrivBayes |

|---|---|

| Input: | Dataset D, Bayesian network N, degree k of the Bayesian network, node evaluation function Scores, parameter q. |

| Output: | Differentially private distribution . |

| 1. | ; |

| 2. | For do: |

| 3. | Calculate the joint distribution ; |

| 4. | Using the node scoring function, calculate the privacy budget for the node’s distribution and perturb the distribution with this updated budget: ; |

| 5. | Set the negative values in to 0, and update accordingly; |

| 6. | From , derive , and add the results to ; |

| 7. | End for |

| 8. | For do: |

| 9. | From , derive , and add the results to ; |

| 10. | End for |

| 11. | Return ; |
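Steps 3 to 6 of Algorithm 2 can be sketched in Python as follows. This is a minimal illustration, not the authors' implementation. Records are dicts, `domains` lists each attribute's possible values, and the per-node budget `epsilon_i` is supplied by the caller (in SA-PrivBayes it would come from the scoring function and q). The noise scale 2/(n·ε_i) assumes the usual L1 sensitivity of 2/n for a normalized joint distribution when one record is substituted. Laplace samples are drawn as the difference of two exponentials so that the sketch stays within the standard library. All names are hypothetical.

```python
import random
from collections import Counter
from itertools import product


def laplace(scale, rng):
    """Laplace(0, scale) sample as the difference of two i.i.d. exponentials."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def noisy_conditional(rows, child, parents, domains, epsilon_i, rng=None):
    """Return a noisy Pr[child | parents] as {parent_values: {child_value: prob}}."""
    rng = rng or random.Random()
    n = len(rows)
    attrs = list(parents) + [child]
    counts = Counter(tuple(r[a] for a in attrs) for r in rows)
    # Assumed sensitivity 2/n of the normalized joint distribution.
    scale = 2.0 / (n * epsilon_i)
    joint = {}
    for cell in product(*(domains[a] for a in attrs)):  # includes empty cells
        joint[cell] = max(counts[cell] / n + laplace(scale, rng), 0.0)  # clip negatives to 0
    cond = {}
    for pv in product(*(domains[p] for p in parents)):
        mass = {c: joint[pv + (c,)] for c in domains[child]}
        total = sum(mass.values())
        if total > 0:  # renormalize within this parent configuration
            cond[pv] = {c: m / total for c, m in mass.items()}
        else:  # no mass survived clipping: fall back to uniform
            cond[pv] = {c: 1.0 / len(domains[child]) for c in domains[child]}
    return cond
```

Because conditioning renormalizes within each parent configuration, the same function also covers steps 8 and 9 for nodes whose conditionals are derived from an already perturbed joint distribution.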

4.3. Privacy Security Analysis

4.4. Algorithm Time Complexity Analysis

5. Experimental Evaluation and Result Analysis

5.1. Dataset Overview

Dataset Preprocessing Pipeline

- Binary categorical attributes (NLTCS dataset): 0-1 encoding is adopted to directly map attributes to binary values.

- Multi-class unordered attributes: One-Hot Encoding is used to generate binary vectors whose dimension is equal to the number of categories.

- Multi-class ordered attributes: Label Encoding is applied to map attributes to consecutive integers according to their ordered levels.
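The three encoding rules above can be sketched in plain Python as follows. The function names are hypothetical. The category lists are assumed to be supplied explicitly (for example, from the dataset's published schema) rather than inferred from the records.

```python
def encode_binary(values, positive):
    """0-1 encoding: 1 for the designated positive value, 0 otherwise."""
    return [1 if v == positive else 0 for v in values]


def one_hot(values, categories):
    """One-hot encoding: one binary vector of length len(categories) per value."""
    index = {c: i for i, c in enumerate(categories)}
    encoded = []
    for v in values:
        vec = [0] * len(categories)
        vec[index[v]] = 1
        encoded.append(vec)
    return encoded


def label_encode(values, ordered_levels):
    """Label encoding: map ordered levels to consecutive integers 0, 1, 2, ..."""
    rank = {level: i for i, level in enumerate(ordered_levels)}
    return [rank[v] for v in values]


print(one_hot(["red", "blue"], ["red", "green", "blue"]))
print(label_encode(["low", "high", "medium"], ["low", "medium", "high"]))
```

Label encoding preserves the ordering of levels, which one-hot encoding discards. This is why the two are applied to ordered and unordered attributes, respectively.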

5.2. Experimental Setup

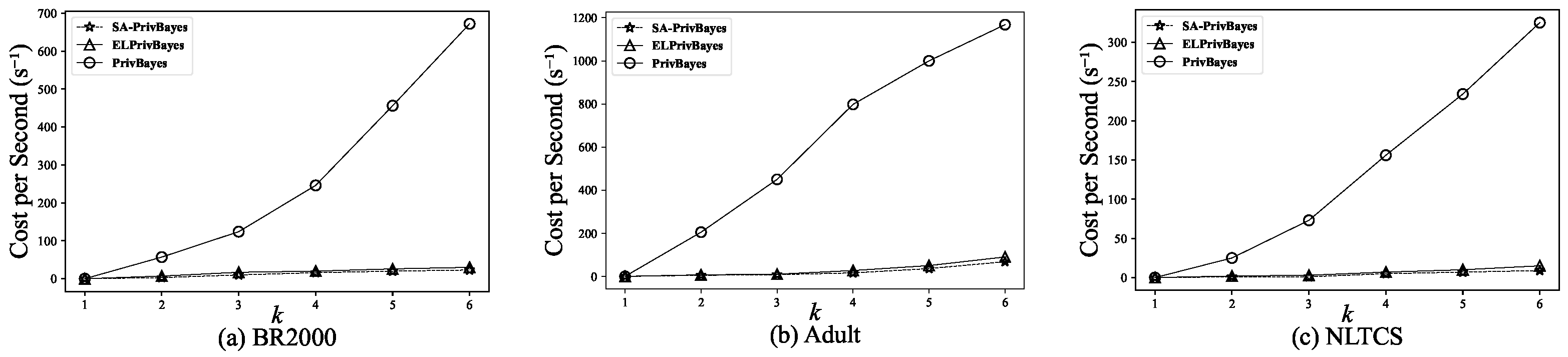

5.3. Computational Cost Evaluation

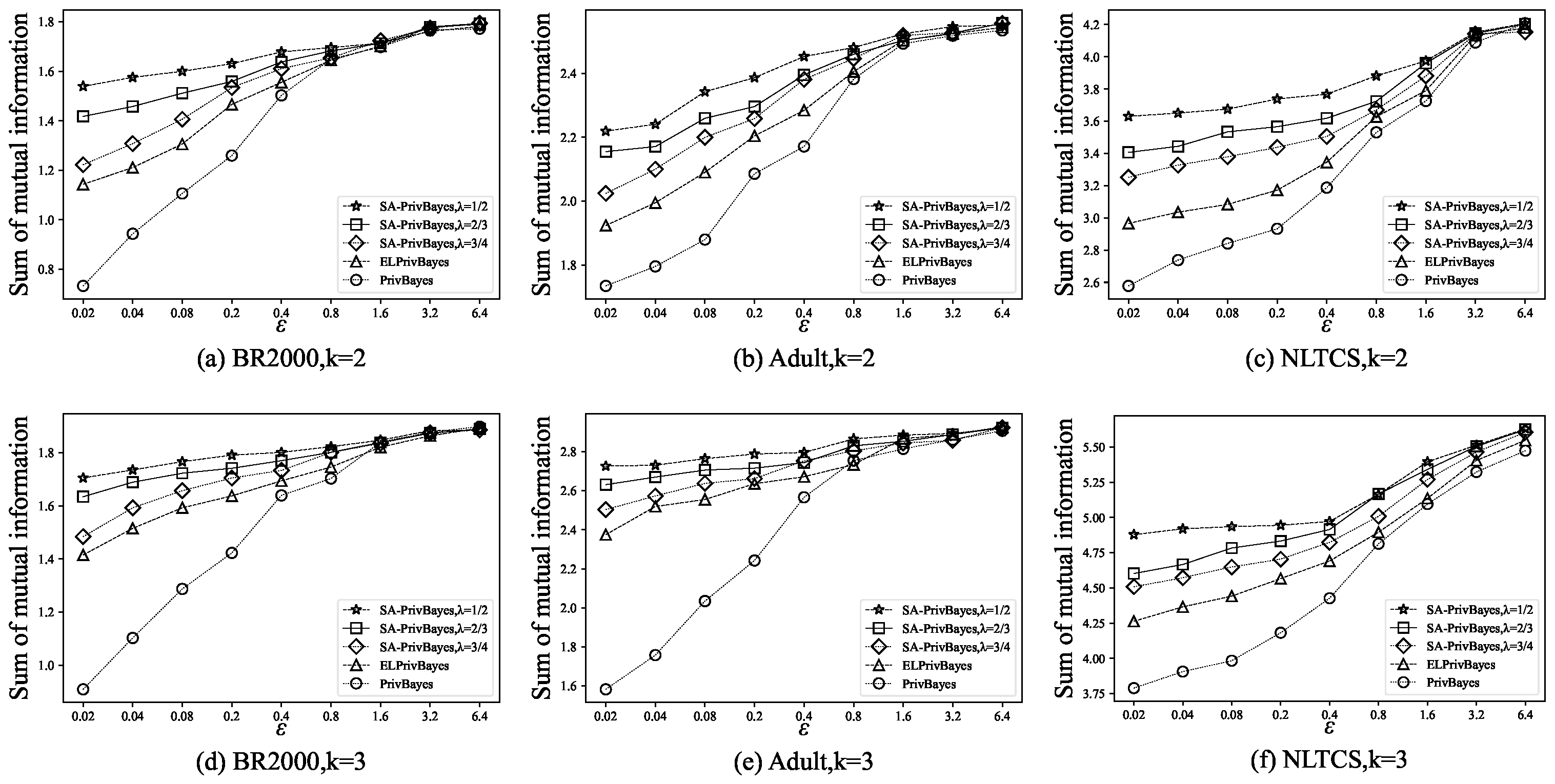

5.4. Bayesian Network Quality Evaluation

5.5. Data Utility Evaluation

5.6. Data Robustness Analysis

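- SA-PrivBayes has a mean accuracy of 81.10%, while PrivBayes has a mean accuracy of 78.82%. The mean difference between them is 2.28 percentage points, which the significance test classifies as highly significant.

- ELPrivBayes has a mean accuracy of 79.50%, and its mean difference from SA-PrivBayes is 1.60 percentage points, which is also highly significant.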

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

1. Wang, H.; Wang, H. Correlated tuple data release via differential privacy. Inf. Sci. 2021, 560, 347–369.

2. Sweeney, L. k-Anonymity: A Model for Protecting Privacy. Int. J. Uncertain. Fuzziness Knowl.-Based Syst. 2002, 10, 557–570.

3. Machanavajjhala, A.; Kifer, D.; Gehrke, J.; Venkitasubramaniam, M. L-diversity: Privacy beyond k-anonymity. ACM Trans. Knowl. Discov. Data 2007, 1, 3-es.

4. Li, N.; Li, T.; Venkatasubramanian, S. t-Closeness: Privacy Beyond k-Anonymity and l-Diversity. In Proceedings of the 2007 IEEE 23rd International Conference on Data Engineering, Istanbul, Turkey, 15–20 April 2007; pp. 106–115.

5. Wong, R.C.W.; Li, J.; Fu, A.W.C.; Wang, K. (α, k)-anonymity: An enhanced k-anonymity model for privacy preserving data publishing. In Proceedings of the 12th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, Philadelphia, PA, USA, 20–23 August 2006; KDD ’06, pp. 754–759.

6. Dwork, C. Differential Privacy. In Proceedings of the Automata, Languages and Programming; Bugliesi, M., Preneel, B., Sassone, V., Wegener, I., Eds.; Springer: Berlin/Heidelberg, Germany, 2006; pp. 1–12.

7. Dwork, C.; McSherry, F.; Nissim, K.; Smith, A. Calibrating Noise to Sensitivity in Private Data Analysis. In Proceedings of the Theory of Cryptography; Halevi, S., Rabin, T., Eds.; Springer: Berlin/Heidelberg, Germany, 2006; pp. 265–284.

8. Narayanan, A.; Shmatikov, V. Robust De-anonymization of Large Sparse Datasets. In Proceedings of the 2008 IEEE Symposium on Security and Privacy (SP 2008), Oakland, CA, USA, 18–22 May 2008; pp. 111–125.

9. Wang, Z.; Scott, D.W. Nonparametric density estimation for high-dimensional data—Algorithms and applications. WIREs Comput. Stat. 2019, 11, e1461.

10. Amaratunga, D.; Cabrera, J.; Shkedy, Z. Exploration and Analysis of DNA Microarray and Other High-Dimensional Data; John Wiley & Sons: Hoboken, NJ, USA, 2014.

11. Ren, X.; Yu, C.M.; Yu, W.; Yang, S.; Yang, X.; McCann, J.A.; Yu, P.S. LoPub: High-Dimensional Crowdsourced Data Publication with Local Differential Privacy. IEEE Trans. Inf. Forensics Secur. 2018, 13, 2151–2166.

12. Cheng, X.; Tang, P.; Su, S.; Chen, R.; Wu, Z.; Zhu, B. Multi-Party High-Dimensional Data Publishing Under Differential Privacy. IEEE Trans. Knowl. Data Eng. 2020, 32, 1557–1571.

13. Zhang, J.; Cormode, G.; Procopiuc, C.M.; Srivastava, D.; Xiao, X. PrivBayes: Private Data Release via Bayesian Networks. ACM Trans. Database Syst. 2017, 42, 25.

14. Hu, J. Survey on feature dimension reduction for high-dimensional data. Appl. Res. Comput. 2008, 25, 2601–2606.

15. Shi, Q.; Cong, S.; Tang, X. LSI_LDA: Mixture method for feature dimensionality reduction. Appl. Res. Comput. 2017, 34, 2269–2273.

16. Hao, Z.; Wang, R.; Cai, R.; Wen, W. Privacy data publishing method based on Bayesian network and semantic tree. Comput. Eng. 2019, 45, 124–129.

17. Zhang, X.; Chen, L.; Jin, K.; Meng, X. Private High-Dimensional Data Publication with Junction Tree. J. Comput. Res. Dev. 2018, 55, 2794–2809.

18. Li, X.; Luo, C.; Liu, P.; Wang, L.e. Information Entropy Differential Privacy: A Differential Privacy Protection Data Method Based on Rough Set Theory. In Proceedings of the 2019 IEEE Intl Conf on Dependable, Autonomic and Secure Computing, Intl Conf on Pervasive Intelligence and Computing, Intl Conf on Cloud and Big Data Computing, Intl Conf on Cyber Science and Technology Congress (DASC/PiCom/CBDCom/CyberSciTech), Fukuoka, Japan, 5–8 August 2019; pp. 918–923.

19. Day, W.Y.; Li, N. Differentially Private Publishing of High-dimensional Data Using Sensitivity Control. In Proceedings of the 10th ACM Symposium on Information, Computer and Communications Security, Singapore, 14–17 April 2015; ASIA CCS ’15, pp. 451–462.

20. Xu, C.; Ren, J.; Zhang, Y.; Qin, Z.; Ren, K. DPPro: Differentially Private High-Dimensional Data Release via Random Projection. IEEE Trans. Inf. Forensics Secur. 2017, 12, 3081–3093.

21. Li, M.; Ma, X. Bayesian Networks-Based Data Publishing Method Using Smooth Sensitivity. In Proceedings of the 2018 IEEE Intl Conf on Parallel & Distributed Processing with Applications, Ubiquitous Computing & Communications, Big Data & Cloud Computing, Social Computing & Networking, Sustainable Computing & Communications (ISPA/IUCC/BDCloud/SocialCom/SustainCom), Melbourne, VIC, Australia, 11–13 December 2018; pp. 795–800.

22. Zhang, W.; Zhao, J.; Wei, F.; Chen, Y. Differentially Private High-Dimensional Data Publication via Markov Network. EAI Endorsed Trans. Secur. Saf. 2019, 6, 1.

23. Abay, N.C.; Zhou, Y.; Kantarcioglu, M.; Thuraisingham, B.; Sweeney, L. Privacy Preserving Synthetic Data Release Using Deep Learning. In Proceedings of the Machine Learning and Knowledge Discovery in Databases; Berlingerio, M., Bonchi, F., Gärtner, T., Hurley, N., Ifrim, G., Eds.; Springer: Cham, Switzerland, 2019; pp. 510–526.

24. Beaulieu-Jones, B.K.; Wu, Z.S.; Williams, C.; Lee, R.; Bhavani, S.P.; Byrd, J.B.; Greene, C.S. Privacy-preserving generative deep neural networks support clinical data sharing. Circ. Cardiovasc. Qual. Outcomes 2019, 12, e005122.

25. Frigerio, L.; de Oliveira, A.S.; Gomez, L.; Duverger, P. Differentially Private Generative Adversarial Networks for Time Series, Continuous, and Discrete Open Data. In Proceedings of the ICT Systems Security and Privacy Protection; Dhillon, G., Karlsson, F., Hedström, K., Zúquete, A., Eds.; Springer: Cham, Switzerland, 2019; pp. 151–164.

26. Li, H.; Xiong, L.; Zhang, L.; Jiang, X. DPSynthesizer: Differentially Private Data Synthesizer for Privacy Preserving Data Sharing. Proc. VLDB Endow. 2014, 7, 1677–1680.

27. Qi, X.; Ma, X.; Bai, X.; Li, W. Differential Privacy Preserving Data Publishing Based on Bayesian Network. In Proceedings of the 2020 IEEE 19th International Conference on Trust, Security and Privacy in Computing and Communications (TrustCom), Guangzhou, China, 29 December 2020–1 January 2021; pp. 1718–1726.

28. Zhang, Z.; Wang, T.; Li, N.; Honorio, J.; Backes, M.; He, S.; Chen, J.; Zhang, Y. PrivSyn: Differentially Private Data Synthesis. In Proceedings of the 30th USENIX Security Symposium (USENIX Security 21), Vancouver, BC, Canada, 11–13 August 2021; pp. 929–946.

29. Liu, G.; Tang, P.; Hu, C.; Jin, C.; Guo, S. Multi-Dimensional Data Publishing With Local Differential Privacy. In Proceedings of the EDBT; Stoyanovich, J., Teubner, J., Mamoulis, N., Pitoura, E., Mühlig, J., Eds.; Ioannina, Greece, 28–31 March 2023; pp. 183–194.

30. Ni, G.; Sun, J. Differential privacy protection algorithm for large data sources based on normalized information entropy Bayesian network. J. Phys. Conf. Ser. 2024, 2813, 012012.

31. Li, N.; Yang, J.; Ren, X.; Ma, X.; Li, X. Bayesian networks based on differential privacy for financial data privacy. In Proceedings of the Third International Conference on Electrical, Electronics, and Information Engineering (EEIE 2024); SPIE: Wuhan, China, 2025; Volume 13512, pp. 276–281.

32. Zhang, S.; Li, X. Differential privacy medical data publishing method based on attribute correlation. Sci. Rep. 2022, 12, 15725.

33. Chen, H.; Ni, Z.; Zhu, X.; Jin, Y.; Chen, Q. Differential privacy high dimensional data publishing method based on cluster analysis. J. Comput. Appl. 2021, 41, 2578–2585.

34. Lan, S.; Hong, J.; Chen, J.; Cai, J.; Wang, Y. Differentially Private High-Dimensional Binary Data Publication via Adaptive Bayesian Network. Wirel. Commun. Mob. Comput. 2021, 2021, 8693978.

35. Liu, P.; Duan, L.; Shen, Z.; Wang, H. Differential privacy publishing of high-dimensional data based on attribute classification. Appl. Res. Comput. 2023, 40, 1–8.

36. Shen, G.; Cai, M.; Huang, Z.; Yang, Y.; Guo, F.; Wei, L. LoHDP: Adaptive local differential privacy for high-dimensional data publishing. Concurr. Comput. Pract. Exp. 2024, 36, e8039.

37. Shi, W.; Zhang, X.; Chen, H.; Zhang, X. High dimensional data differential privacy protection publishing method based on association analysis. Electronics 2023, 12, 2779.

38. Chen, Q.; Ni, Z.; Zhu, X.; Lyu, M.; Liu, W.; Xia, P. Dynamic Edge-Based High-Dimensional Data Aggregation with Differential Privacy. Electronics 2024, 13, 3346.

39. McSherry, F.; Talwar, K. Mechanism Design via Differential Privacy. In Proceedings of the 48th Annual IEEE Symposium on Foundations of Computer Science (FOCS’07), Providence, RI, USA, 21–23 October 2007; pp. 94–103.

40. Kong, Y.; Tan, F.; Zhao, X.; Zhang, Z.; Bai, L.; Qian, Y. Review of K-means Algorithm Optimization Based on Differential Privacy. J. Comput. Sci. 2021, 49, 162–173.

41. Lu, X.; Piao, C.; Yang, X.; Bai, Y. Research on Differential Privacy High Dimensional Data Publishing Technology Based on Bayesian Networks. Comput. Eng. 2024, 50, 167–181.

42. Manton, K.G. National Long-Term Care Survey: 1982, 1984, 1989, 1994, and 2004. 2010. Available online: https://www.icpsr.umich.edu/web/NACDA/studies/9681/publications (accessed on 23 May 2025).

43. Bache, K.; Lichman, M. UCI Machine Learning Repository, 2013. Available online: http://archive.ics.uci.edu/ml (accessed on 23 May 2025).

44. Ruggles, S.; Genadek, K.; Goeken, R.; Grover, J.; Sobek, M. Integrated Public Use Microdata Series: Version 6.0; 2015. Available online: https://international.ipums.org (accessed on 23 May 2025).

45. Hong, J.; Wu, Y.; Cai, J.; Sun, L. Differentially Private High-Dimensional Binary Data Publication via Attribute Segmentation. J. Comput. Res. Dev. 2022, 59, 182–196.

| Algorithm | Key Limitations | Improvements & Advantages of SA-PrivBayes |

|---|---|---|

| PrivBayes [13] | Insufficient protection for core attributes; low-value AP pairs interfere with network accuracy | 1. AMI-based root node selection to strengthen core correlations; 2. Threshold filtering for low-value AP pairs; 3. Cluster-based budget allocation to enhance core attribute protection |

| ELPrivBayes [41] | Suboptimal privacy budget allocation | 1. Threshold + probabilistic screening to adapt to noise interference; 2. Collaborative budget allocation to balance privacy and utility |

| AprivBayes [27] | High computational complexity, incompatible with large-scale data | 1. Attribute importance-based allocation superior to sub-network equal division; 2. Supports large-scale datasets (e.g., Adult with 41,292 records) |

| DPSynthesizer [26] | High computational overhead | 1. Full-process collaborative optimization of network structure without additional noise; 2. Time complexity $O(nd^2)$ for higher efficiency |

| PrivASG [35] | Sensitivity not combined with network structure, poor adaptability | 1. Scoring function integrating network structure and attribute correlation strength; 2. Cluster + dynamic q-value adjustment for multi-scenario adaptation; 3. Stronger cross-domain generality |

| ACDP-Tree [32] | Adopts an “equal division + arithmetic progression adjustment” strategy and fails to dynamically optimize based on attribute correlation | 1. Threshold filtering for low-value AP pairs; 2. Captures correlations among all attributes comprehensively based on Bayesian networks |

| q | A | B | C | D | E | F |

|---|---|---|---|---|---|---|

| 1 | 0.083 | 0.083 | 0.083 | 0.083 | 0.083 | 0.083 |

| 1.1 | 0.076 | 0.076 | 0.083 | 0.083 | 0.091 | 0.091 |

| 1.2 | 0.069 | 0.069 | 0.082 | 0.082 | 0.099 | 0.099 |
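The per-node budgets in the table above (nodes A to F, q = 1, 1.1, 1.2) are reproduced to three decimals by a simple geometric rule, sketched below in Python. This rule is inferred from the table values and is not the paper's stated formula. It assumes three things: the six nodes fall into three levels of two nodes each (A, B at level 0; C, D at level 1; E, F at level 2), a node at level r receives weight q^r, and the weights are normalized to a total distribution budget of 0.5. `allocate_budget` and `LEVELS` are hypothetical names.

```python
def allocate_budget(levels, q, total):
    """Hypothetical rule: node budget proportional to q**level, summing to `total`."""
    weights = [q ** level for level in levels]
    norm = sum(weights)
    return [total * w / norm for w in weights]


# Assumed level assignment for nodes A..F (two nodes per level).
LEVELS = [0, 0, 1, 1, 2, 2]

for q in (1.0, 1.1, 1.2):
    budgets = allocate_budget(LEVELS, q, total=0.5)
    print(q, [round(b, 3) for b in budgets])
```

With q = 1 every node receives 0.5/6 ≈ 0.083, matching the first row. Values of q above 1 move budget from level-0 nodes toward level-2 nodes while the total stays fixed.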

| Dataset | Data Type | Number of Records | Number of Attributes |

|---|---|---|---|

| BR2000 | Non-Binary | 38,000 | 14 |

| Adult | Non-Binary | 41,292 | 13 |

| NLTCS | Binary | 21,574 | 16 |

| Experiment | ELPrivBayes Accuracy (%) | PrivBayes Accuracy (%) | SA-PrivBayes Accuracy (%) |

|---|---|---|---|

| 1 | 79.3 | 78.5 | 81.2 |

| 2 | 79.8 | 79.0 | 80.8 |

| 3 | 79.5 | 78.8 | 81.5 |

| 4 | 79.7 | 79.2 | 80.9 |

| 5 | 79.2 | 78.6 | 81.1 |

| Significance test (SA vs. EL) | Conclusion: highly significant | | |

| Significance test (SA vs. Pr) | Conclusion: highly significant | | |
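The summary statistics for the robustness analysis can be recomputed from the five runs in the table above. The Python sketch below assumes a paired t-test over the five experiments, because this section does not state which test the authors used. It reports mean differences in percentage points and the t statistic with n − 1 = 4 degrees of freedom. A p-value would additionally require the t distribution, for example via `scipy.stats.ttest_rel`.

```python
import math
from statistics import mean, stdev

# Per-run accuracies (%) copied from the table above.
el = [79.3, 79.8, 79.5, 79.7, 79.2]
pr = [78.5, 79.0, 78.8, 79.2, 78.6]
sa = [81.2, 80.8, 81.5, 80.9, 81.1]


def paired_t(x, y):
    """Paired t statistic for the mean of (x - y), and its degrees of freedom."""
    d = [a - b for a, b in zip(x, y)]
    return mean(d) / (stdev(d) / math.sqrt(len(d))), len(d) - 1


for name, other in [("EL", el), ("Pr", pr)]:
    t, df = paired_t(sa, other)
    print(f"SA vs {name}: mean diff = {mean(sa) - mean(other):.2f} pp, t({df}) = {t:.2f}")
```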

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Yuan, K.; Zhang, Q.; Lin, Y.; Wang, Y.; Jia, C. Differential Privacy Data Publication Based on Scoring Function. Future Internet 2026, 18, 103. https://doi.org/10.3390/fi18020103
