1. Introduction

In recent years, machine learning (ML) has experienced rapid growth, driven by advances in algorithms, data availability, and computational power [1, 2, 3, 4]. This expansion has intensified the need for hardware-aware benchmarks capable of reflecting real-world training conditions rather than idealized datacenter environments [5, 6, 7]. Researchers and developers now face the challenge of selecting platforms that balance training time, energy consumption, and reproducibility, all of which directly affect the practical feasibility and sustainability of ML workloads. Yet studies that integrate these dimensions under experimental conditions representative of commonly available computing environments remain limited.

Several benchmarking initiatives have addressed ML performance from complementary perspectives. MLPerf [8] established a reference standard for comparing training workloads on high-end GPUs and TPUs under fixed datasets and target accuracy conditions. DAWNBench [9] introduced metrics centered on time-to-accuracy and cost, whereas DeepEdgeBench [10] and Geekbench ML [11] focused primarily on inference tasks on edge and mobile hardware. Although these initiatives have been fundamental for benchmarking standardization, their scope remains limited when the goal is to jointly analyze supervised training, energy consumption, and reproducibility across heterogeneous and widely accessible hardware.

In parallel, recent studies have highlighted the environmental implications of ML training. Works such as From Clicks to Carbon [12], Patterson et al. [13], and Green AI [14] show that the choice of hardware and model architecture has a substantial impact on energy use and carbon footprint. More recently, benchmarking-oriented studies have reinforced this perspective by explicitly comparing predictive performance and energy efficiency under controlled experimental settings [15, 16]. As a result, the selection of computational environments has become not only a matter of performance but also of sustainability. However, these concerns have not yet been systematically translated into reproducible comparative frameworks capable of quantifying, under a unified experimental design, the relationship between computational performance, energy cost, and result stability across heterogeneous hardware platforms [17, 18].

Recent supervised and deep learning studies have also addressed real industrial energy forecasting scenarios. In [19], a Temporal Fusion Transformer was proposed for electric load forecasting in a quicklime production plant, showing that model behavior and forecasting suitability depend strongly on the industrial operating context and the evaluation criteria considered. Such studies reinforce the relevance of assessing supervised learning models under realistic application conditions, although they do not focus on the influence of heterogeneous hardware on training performance, energy consumption, and reproducibility.

Despite these advances, most existing benchmarks remain centered on datacenter-scale accelerators or on specific model families. They frequently exclude power analysis or overlook widely used platforms such as consumer-grade x86 CPUs, ARM-based laptops, and low-power embedded devices such as the Raspberry Pi [20, 21]. In addition, synthetic indicators such as FLOPS or memory bandwidth are insufficient to capture the interaction among software stack overhead, data pipeline constraints, and the computational behavior of different model architectures [8, 9, 10, 11]. Recent studies have also explored deterministic comparisons of machine learning models and the characterization of deep learning workloads in edge-oriented scenarios [22, 23], while other works have addressed performance improvements through high-performance computing and parallel strategies in predictive tasks [24]. However, these efforts do not yet provide a unified benchmark for supervised training that jointly evaluates execution time, energy usage, predictive accuracy, and reproducibility across heterogeneous and accessible hardware platforms. Consequently, a homogeneous evaluation that compares distinct families of supervised learning algorithms across accessible and heterogeneous platforms under consistent conditions is still lacking [5].

This gap also highlights the need for benchmarking methodologies based on fixed and reproducible experimental designs, in which datasets, model definitions, hyperparameter settings, and repetition schemes are kept constant in order to isolate platform-dependent effects and enable fair comparison across heterogeneous hardware environments [25, 26, 27]. In addition, cost-oriented benchmarking perspectives have shown that practical hardware evaluation should not be restricted to raw performance alone, but should also consider accessibility-related criteria such as economic efficiency and comparative cost-performance behavior [9, 14, 28].

To address this need, this work presents a unified and reproducible benchmarking framework for evaluating five representative supervised learning models, namely CNN [29, 30], Simple RNN [31], RNN–LSTM [32], BiLSTM [33, 34], and XGBoost [35], across a set of accessible hardware platforms. The evaluated systems include a desktop computer with an RTX 5060 Ti GPU and Ryzen 7 7800X3D CPU, a laptop with a Ryzen 7 4800H and GTX 1660 Ti, an Apple M4 MacBook evaluated in both CPU and GPU configurations, a Raspberry Pi 5 based on an ARM SoC, and Google Colab in CPU and GPU configurations.

All models were trained using consistent code, datasets, and hyperparameter settings in order to isolate hardware-specific effects on training time, power usage, energy efficiency, and reproducibility. In addition, the proposed framework incorporates a basic performance-per-dollar perspective in order to account for the economic dimension of hardware accessibility [9, 14, 28].

This study seeks to overcome the fragmentation observed in the literature and provide a more consistent and reproducible comparative basis.

The results discussed in this article clearly reveal differentiated behaviors across platforms. Apple Silicon exhibited outstanding energy efficiency in recurrent and tree-based workloads, whereas CUDA-enabled GPUs delivered the fastest and most consistent training performance. By contrast, general-purpose CPUs and low-power ARM devices showed significant scalability limitations for deep learning tasks, although algorithms such as XGBoost remained competitive on CPU-based platforms.

Ultimately, this study provides a practical and transparent reference for researchers, educators, and practitioners who need to balance performance, energy efficiency, and reproducibility in supervised learning tasks. It also promotes a benchmarking perspective that incorporates energy and environmental criteria alongside traditional performance metrics, thereby contributing to more practical and accessible machine learning research. In this sense, the contribution of the article lies in providing systematic comparative evidence under a unified and reproducible benchmarking framework for supervised learning across heterogeneous and accessible hardware platforms. In addition, the proposed methodology extends conventional benchmarking by incorporating batch size sensitivity analysis, epoch-based stability validation, and performance-per-dollar evaluation, allowing a more comprehensive characterization of platform performance across different evaluation criteria.

The remainder of this article is organized as follows. Section 2 presents the methodology adopted in this study, including the hardware selection criteria, the description of the evaluated platforms, and the tested models. Section 3 reports and analyzes the experimental results obtained for each model, covering CNN [29, 30], Simple RNN [31], RNN–LSTM [32], BiLSTM [33, 34], and XGBoost [35]. Section 4 discusses the main findings of the study by comparing the behavior of the different platforms and models from the perspectives of performance, energy efficiency, and stability. Finally, Section 5 presents the conclusions of the work and outlines future research directions derived from the obtained results.

2. Materials and Methods

The methodology adopted in this study was designed to ensure a consistent, reproducible, and comparable evaluation of heterogeneous computational platforms in supervised learning tasks. To this end, the experimental design explicitly controlled the main factors influencing execution, including hardware characterization, software environment configuration, model definition and implementation, repeated experimental runs, and systematic metric collection.

This approach enables a direct comparison of performance and energy consumption across platforms.

Figure 1 summarizes the general methodological workflow adopted in this study, starting from hardware selection and characterization, continuing with software configuration and controlled model execution, and ending with metric collection, statistical analysis, and cross-platform comparison. The experiments in this study were conducted under standard indoor operating conditions in Tunja, Colombia, a high-altitude location characterized by relatively stable ambient temperatures (approximately 15–20 °C) and high relative humidity levels (typically in the range of 80–90%).

2.1. Benchmarking Methodology Overview

This study adopts a unified benchmarking methodology to evaluate the influence of heterogeneous hardware platforms on supervised learning workloads. The methodology is designed to ensure consistency, reproducibility, and comparability across all experiments by maintaining identical datasets, model definitions, and baseline hyperparameter settings for each platform whenever hardware compatibility allows. In this sense, the fixed experimental design adopted in this work follows the logic of controlled and reproducible benchmarking, in which datasets, model definitions, hyperparameter settings, and repetition schemes are kept constant in order to isolate platform-dependent effects and ensure fair cross-platform comparison [25, 26, 27, 28]. The evaluation considers representative platforms spanning desktop, laptop, cloud, and embedded environments, including x86 CPUs, NVIDIA CUDA-enabled GPUs, Apple Silicon, and ARM-based low-power systems.

However, while fixed hyperparameter configurations ensure strict comparability, they may not fully capture the peak performance capabilities of each hardware platform. This design also ensures that the selected configurations remain compatible with low-resource platforms, where memory availability can constrain feasible workload sizes. In particular, parameters such as batch size and memory allocation strongly influence hardware utilization, especially in heterogeneous architectures with different memory hierarchies and parallel execution models. Therefore, in addition to the controlled experimental setup, this work incorporates a complementary hardware-aware evaluation, where selected hyperparameters (primarily batch size) are systematically varied to identify near-optimal operating points for each platform. This dual approach allows both reproducible comparison and fair assessment of platform-specific performance. This is particularly important because suboptimal batch size configurations may significantly underestimate the effective performance of certain platforms, especially in CPU-based systems.

The complete benchmarking procedure is summarized in Algorithm 1. The workflow comprises four main stages: hardware selection, software environment configuration, model execution, and metric collection. First, hardware platforms are selected to represent distinct computational paradigms in terms of architecture, performance level, and accessibility. Second, each platform is configured with an appropriate software stack, including the required Python version and machine learning libraries. Third, the selected supervised learning models (CNN [29, 30], Simple RNN [31], RNN–LSTM [32], BiLSTM [33, 34], and XGBoost [35]) are trained under fixed experimental settings. Finally, the results are collected in terms of training time, power (or declared power usage when available), predictive performance, and energy consumption behavior.

For the hardware-aware evaluation, CNN and LSTM models are used as representative workloads to study batch size sensitivity. These models capture two fundamentally different computational patterns: highly parallel workloads (CNN) and sequential, dependency-bound workloads (LSTM). This selection allows analysis of how batch size affects both compute-bound and sequential models without introducing excessive experimental complexity, while still providing representative insights across model families.
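The batch size sensitivity study can be pictured with a short sketch. It assumes a hypothetical callable `train_fn(batch_size)` that trains once and returns the measured training time in seconds. The function names and the synthetic cost curve below are illustrative assumptions, not the benchmark's actual code or measurements.

```python
import statistics
from typing import Callable, Dict, Iterable, Tuple


def sweep_batch_sizes(
    train_fn: Callable[[int], float],
    batch_sizes: Iterable[int],
    repetitions: int = 5,
) -> Tuple[int, Dict[int, float]]:
    """Return the batch size with the lowest mean training time, plus all means.

    train_fn is a hypothetical callable: it trains once with the given batch
    size and returns the measured training time in seconds.
    """
    mean_times: Dict[int, float] = {}
    for b in batch_sizes:
        # Repeat each configuration and average, as in the repeated-run design.
        times = [train_fn(b) for _ in range(repetitions)]
        mean_times[b] = statistics.mean(times)
    # Selection criterion assumed here: minimum mean training time.
    best = min(mean_times, key=mean_times.get)
    return best, mean_times


def synthetic_training_time(batch_size: int) -> float:
    """Made-up cost curve for demonstration only (not measured data)."""
    # Small batches pay per-step overhead; large batches pay a size-dependent cost.
    return 4096.0 / batch_size + 0.02 * batch_size


best, means = sweep_batch_sizes(synthetic_training_time, [32, 64, 128, 256, 512, 1024])
```

Maximum throughput could be used as the selection criterion instead of minimum time; only the `min(...)` line would change.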

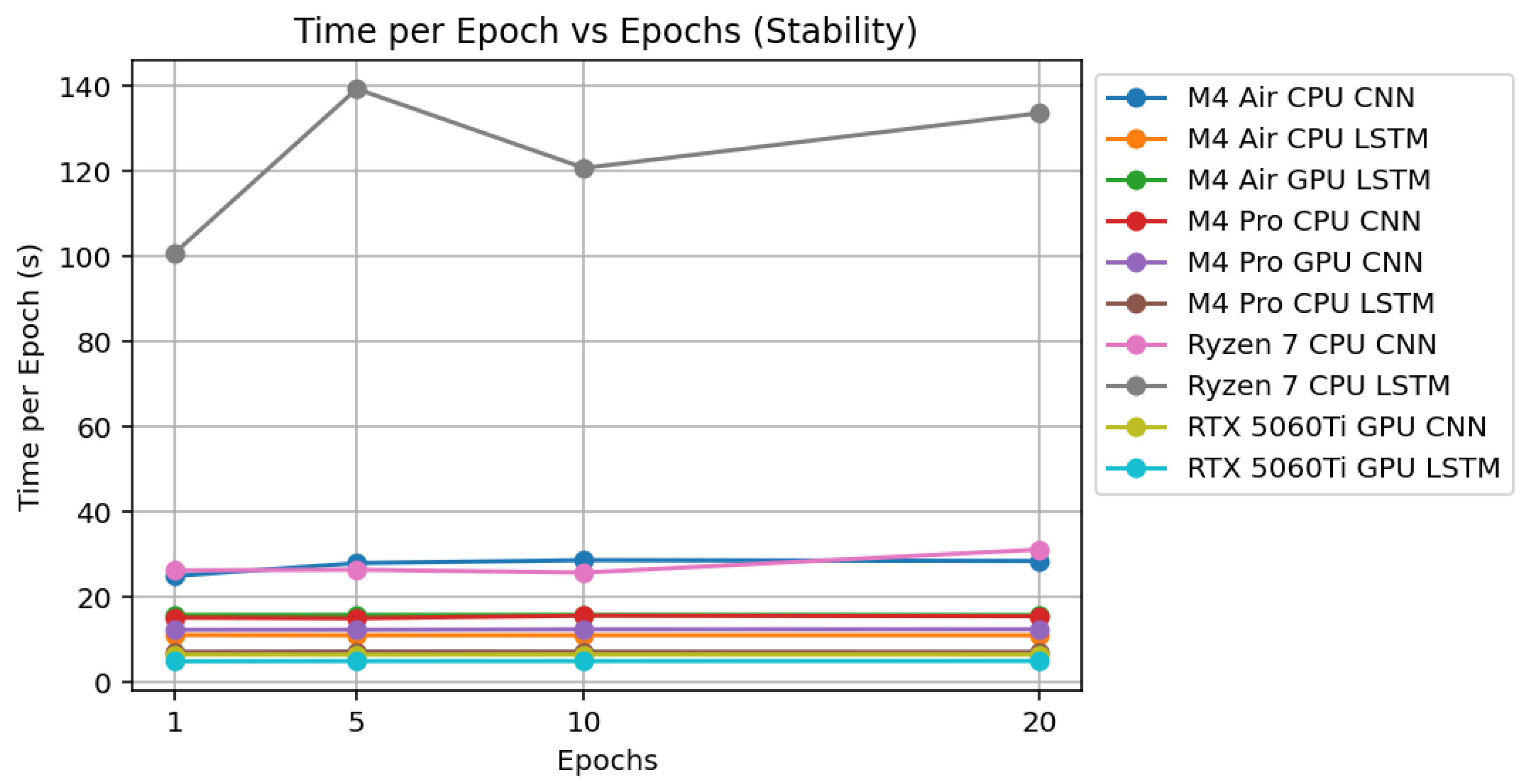

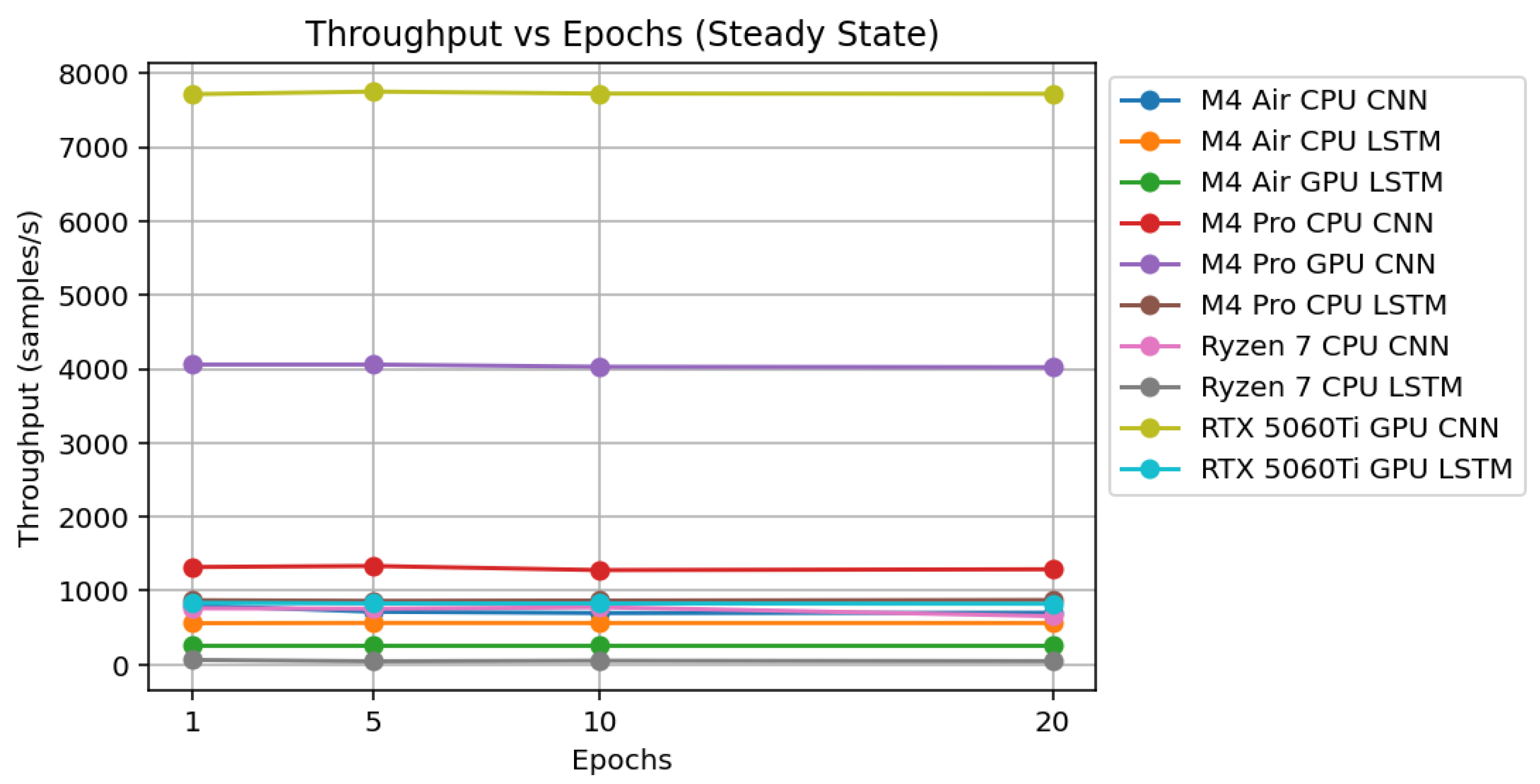

As described in Algorithm 1, each model is executed on each platform under fixed conditions, and all experiments are repeated five times. In addition, experiments were conducted under different epoch configurations in order to evaluate the stability of the training process and verify whether the measured performance reflects steady-state behavior rather than initialization overhead. For the hardware-aware evaluation, multiple batch sizes are explored for each platform, and performance metrics are recorded to identify optimal configurations. To reduce confounding factors, all executions are performed from the command line, and average values are reported in the subsequent analysis. Random seeds are fixed whenever supported by the framework in order to improve reproducibility. This procedure enables a controlled comparison of hardware-dependent effects on different model families, ranging from convolution-based and recurrent architectures to tree-based learning methods.
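Fixing seeds "whenever supported by the framework" might look like the following minimal sketch. The helper name `set_global_seeds` is hypothetical, and the study's repository may handle seeding differently. NumPy and TensorFlow are seeded only if they can be imported on the platform at hand.

```python
import os
import random
from typing import List


def set_global_seeds(seed: int) -> List[str]:
    """Seed every available random number generator; return the names seeded."""
    seeded: List[str] = []

    # Affects hash randomization only in Python processes started after this point.
    os.environ["PYTHONHASHSEED"] = str(seed)

    random.seed(seed)
    seeded.append("random")

    try:
        import numpy as np  # optional dependency

        np.random.seed(seed)
        seeded.append("numpy")
    except ImportError:
        pass

    try:
        import tensorflow as tf  # optional dependency

        tf.random.set_seed(seed)
        seeded.append("tensorflow")
    except ImportError:
        pass

    return seeded
```

Even with fixed seeds, some GPU kernels are non-deterministic, so run-to-run variation may remain. This is one reason repeated runs and averaged values are still reported.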

Unlike conventional benchmarking approaches that focus primarily on execution time or accuracy, the proposed methodology integrates execution time, power consumption, energy estimation, and convergence behavior. Although cost is not directly part of the controlled experimental procedure, the collected performance metrics are later used to derive a performance-per-dollar indicator, enabling a complementary techno-economic interpretation of the results. Furthermore, by incorporating both controlled (fixed configuration) and hardware-aware (tuned configuration) evaluations, the methodology provides a more complete characterization of each platform, capturing both reproducibility and peak performance behavior. This enables a more comprehensive evaluation of hardware performance under realistic and controlled conditions.

| Algorithm 1 Unified benchmarking procedure for heterogeneous hardware evaluation |

| Require: Set of hardware platforms; set of models; fixed datasets; fixed hyperparameter configurations; number of repetitions R; batch size search space |

| Ensure: Per-platform and per-model summary metrics for training time, predictive performance, power, and energy when available |

- 1: for all platforms p do
  - 2: Configure the software environment of platform p
  - 3: for all models m do
    - 4: Load dataset corresponding to model m
    - 5: Load fixed hyperparameter configuration
    - 6: Initialize empty result containers for time, predictive metrics, power, and energy
    - 7: Controlled evaluation (fixed configuration)
    - 8: for r = 1 to R do
      - 9: Set fixed random seeds whenever supported by the framework
      - 10: Initialize model m with the fixed configuration
      - 11: Train model m on platform p
      - 12: Record training time
      - 13: Record predictive metric(s) associated with model m
      - 14: Record average power consumption when measurable
      - 15: if power data are available then
        - 16: Compute energy consumption as $E = P \times T$
      - 17: else
        - 18: Mark energy consumption as unavailable
      - 19: end if
    - 20: end for
    - 21: Compute mean and standard deviation across the R repetitions for all recorded metrics
    - 22: Store summarized controlled results for platform p and model m
    - 23: Hardware-aware evaluation (batch size tuning, applied only to selected platforms)
    - 24: for all batch sizes b in the search space do
      - 25: Modify hyperparameter configuration with batch size b
      - 26: for r = 1 to R do
        - 27: Initialize model m with updated configuration
        - 28: Train model m on platform p
        - 29: Record training time and relevant metrics
      - 30: end for
      - 31: Compute mean performance for batch size b
    - 32: end for
    - 33: Identify optimal batch size for platform p and model m (e.g., minimum training time or maximum throughput)
    - 34: Store optimal configuration results
  - 35: end for
- 36: end for
- 37: Compare all summarized results across platforms and model families under both controlled and optimized configurations

| Note: E denotes energy (J), P average power (W), and T execution time (s). |
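The controlled-evaluation part of Algorithm 1 (repeated runs, energy as average power times training time, then mean and standard deviation) can be sketched as below. This is an illustration under stated assumptions rather than the study's implementation. `run_once` is a hypothetical callable that returns the training time and the average power (or `None` when power cannot be measured). The sample standard deviation is an assumed choice, since the estimator is not specified in the text.

```python
import statistics
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class RunSummary:
    mean_time_s: float
    std_time_s: float
    mean_energy_j: Optional[float]  # None when power could not be measured


def controlled_evaluation(
    run_once: Callable[[int], Tuple[float, Optional[float]]],
    repetitions: int = 5,
    seed: int = 0,
) -> RunSummary:
    """Repeat a fixed-configuration run and summarize time and energy.

    run_once(seed) is a hypothetical callable returning
    (training_time_s, average_power_w or None).
    """
    times: List[float] = []
    energies: List[float] = []
    for _ in range(repetitions):
        train_time_s, avg_power_w = run_once(seed)  # same fixed seed each run
        times.append(train_time_s)
        if avg_power_w is not None:
            energies.append(avg_power_w * train_time_s)  # E = P * T, in joules

    # Sample standard deviation (assumption); undefined for a single run.
    std_time = statistics.stdev(times) if len(times) > 1 else 0.0
    # Report energy only if every repetition had a power reading.
    mean_energy = statistics.mean(energies) if len(energies) == len(times) else None
    return RunSummary(statistics.mean(times), std_time, mean_energy)
```

Returning `None` for energy mirrors the "mark energy consumption as unavailable" branch, which applies for instance to cloud runs where no power reading exists.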

2.2. Hardware Selection Criteria

The hardware platforms considered in this study were selected to reflect practical diversity, software compatibility, accessibility, and reproducibility in supervised learning experiments. The selection procedure is summarized in Algorithm 2. Rather than targeting the maximum achievable performance of each platform through hardware-specific tuning, the benchmark was designed to compare heterogeneous systems under a controlled and homogeneous experimental setting, using consistent model definitions, datasets, and training configurations whenever platform compatibility allowed. The selected hardware set should therefore be interpreted as representative rather than exhaustive, and the reported results are not intended to define a universal scaling law for other GPU or CPU families solely on the basis of CUDA-core or CPU-core counts. The benchmark includes representative systems from desktop, laptop, reference cloud, and embedded environments, covering x86 CPUs, NVIDIA CUDA-enabled GPUs, Apple Silicon, and ARM-based low-power devices.

Google Colab was included as a practical cloud-based reference environment because of its widespread use in academic, educational, and rapid prototyping settings. However, it is not treated as a strictly equivalent counterpart to dedicated local hardware, since it operates on shared and virtualized infrastructure with inherently variable resource allocation. Consequently, its results should be interpreted as an accessibility-oriented reference rather than as a fully controlled physical platform. AMD GPUs are excluded owing to inconsistent support across the software frameworks required in this study, particularly TensorFlow, Keras, and XGBoost, which could compromise reproducibility and comparability. By contrast, NVIDIA hardware benefits from mature CUDA and cuDNN ecosystems, providing a stable baseline for GPU-accelerated training.

The inclusion of ARM-based platforms recognizes their increasing relevance in energy-efficient and mobile computing. In particular, the Raspberry Pi 5 represents a low-power embedded platform, while Apple Silicon provides an example of an integrated ARM-based architecture with unified memory. Together, the selected platforms provide a representative basis for comparison under fixed experimental conditions, while still reflecting the diversity of hardware environments commonly available to researchers and practitioners.

| Algorithm 2 Hardware platform selection procedure |

| Require: Candidate set of hardware platforms |

| Ensure: Selected set of hardware platforms |

- 1: Initialize the selected set as empty
- 2: for all candidate platforms c do
  - 3: if c provides architectural diversity then
    - 4: if c is accessible for practical academic or research use then
      - 5: if c supports the required software frameworks with sufficient stability then
        - 6: Add c to the selected set
      - 7: end if
    - 8: end if
  - 9: end if
- 10: end for
- 11: Include x86 CPU and NVIDIA GPU platforms in desktop and laptop configurations
- 12: Include one Apple Silicon platform with integrated GPU
- 13: Include one ARM-based low-power embedded platform
- 14: Include one cloud-based reference environment for remote experimentation and accessibility-oriented comparison
- 15: Exclude platforms with inconsistent support in TensorFlow, Keras, or XGBoost when such limitations may affect reproducibility
- 16: Preserve fixed experimental settings across platforms whenever feasible in order to prioritize methodological consistency over platform-specific peak optimization
- 17: return the selected set of hardware platforms

Based on these criteria, the final benchmark included a desktop computer, a laptop, an Apple Silicon MacBook, a Raspberry Pi 5, and Google Colab as the cloud-based reference environment. The technical specifications of these selected platforms are presented in the following subsection; however, the cloud-based results must be interpreted with additional caution because the underlying infrastructure is not fully controlled by the user.

2.3. Hardware Description

In this subsection, the hardware configurations employed throughout the study are described in detail. Table 1 summarizes the main technical specifications of each platform, including processor, graphics unit, memory, storage, and declared power characteristics. These configurations provide the experimental basis for the comparative analysis conducted in the subsequent sections.

The Apple M4 Air (Apple Inc., Cupertino, CA, USA) platform is included as an additional device within the Apple Silicon family. Unlike the other platforms, it is primarily used to complement the batch size sensitivity analysis rather than the fixed-configuration benchmark, and therefore it is not included in the main controlled comparison. Its inclusion enables the evaluation of performance scaling within the same architectural family under different power and thermal constraints, particularly considering its fanless design.

2.4. Evaluated Hardware Platforms

x86 Laptop with Dedicated GPU. This gaming-oriented laptop combines an AMD Ryzen 7 4800H processor (AMD, Santa Clara, CA, USA) with an NVIDIA GTX 1660 Ti mobile GPU (NVIDIA Corporation, Santa Clara, CA, USA), representing mainstream mobile hardware capable of handling both general-purpose computing and moderately demanding machine learning tasks. It serves as a practical benchmark for portable deep learning workflows.

x86 Desktop with Dedicated GPU. A high-performance desktop configuration featuring an AMD Ryzen 7 7800X3D processor (AMD, Santa Clara, CA, USA) and an NVIDIA RTX 5060 Ti (16 GB VRAM) (NVIDIA Corporation, Santa Clara, CA, USA). It provides the reference baseline for this study, combining strong CPU throughput, large cache, and sufficient GPU memory to train deep learning models efficiently. This setup balances raw compute power and bandwidth, ideal for testing both CPU-bound and GPU-accelerated algorithms.

Apple MacBook M4 Pro. An ARM-based System-on-Chip from Apple Inc. (Cupertino, CA, USA), integrating CPU, GPU, and NPU under a unified memory architecture. Apple Silicon exemplifies high energy efficiency and cross-platform compatibility within compact devices, offering insight into ARM+GPU performance using the Metal backend. Its inclusion reflects the growing relevance of Apple hardware in portable AI and data-science workflows.

Raspberry Pi 5. A low-power single-board computer developed by Raspberry Pi Ltd. (Cambridge, UK), based on a Broadcom BCM2712 processor (Broadcom Inc., Palo Alto, CA, USA) with a quad-core Cortex-A76 CPU, included to evaluate AI on the edge and supervised learning in constrained or embedded environments. Although limited in raw performance, it provides valuable information on efficiency, thermal stability, and performance-per-watt for edge AI and IoT applications.

Google Colab Free. A widely used cloud-based reference environment provided by Google LLC (Mountain View, CA, USA), offering shared Intel Xeon CPUs (Intel Corporation, Santa Clara, CA, USA) and NVIDIA GPUs (NVIDIA Corporation, Santa Clara, CA, USA) for educational, exploratory, and rapid prototyping purposes. Unlike the dedicated local platforms considered in this study, Google Colab operates on virtualized and dynamically allocated infrastructure, which may introduce variability in CPU availability, storage performance, GPU allocation, and runtime stability. Accordingly, its inclusion is intended to provide an accessibility-oriented reference for remote experimentation rather than a fully controlled physical hardware baseline. Despite these limitations, Colab remains practically relevant because it enables GPU-accelerated training without upfront hardware investment, thereby illustrating the trade-offs between accessibility, convenience, and methodological consistency.

Table 2 summarizes the software environment across all evaluated platforms, including operating systems, programming environments, and library versions. This transposed view facilitates direct comparison of software configurations, ensuring consistency and reproducibility in the experimental setup while also highlighting potential sources of variability across heterogeneous hardware. Differences in software versions arise from the use of stable and platform-compatible releases available for each system. Although software versions differ due to compatibility constraints, all configurations correspond to optimized environments, preserving functional consistency across the benchmarking workflow. These software-stack differences may influence execution time, energy behavior, numerical consistency, and backend-level stability; however, stable and platform-compatible versions were deliberately selected in all cases in order to preserve functional comparability across the benchmark.

Power consumption was measured using a P4400 low-cost consumer power meter, with an accuracy typically ranging between 0.2% and 2%. Measurements were obtained at the system level under consistent power supply conditions, using standard manufacturer-recommended configurations for each platform. For NVIDIA GPUs, nvidia-smi was used to sample GPU power draw during training. For CPU-based and Apple Silicon platforms, system-level power monitoring utilities were employed. In the case of the Raspberry Pi 5, power was estimated from the declared SoC envelope. For Google Colab, no power measurements were available because of the cloud-managed and user-non-transparent nature of the underlying infrastructure. Therefore, Colab-based results are restricted to runtime and predictive-performance indicators and should be interpreted as practical cloud-reference measurements rather than as directly equivalent energy benchmarks relative to dedicated local hardware. Energy consumption was computed as the product of the average measured power and the total training time for each run.
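As an illustration of the last step, the sketch below turns a log of sampled power readings into an average power and an energy estimate. It assumes one reading in watts per line, such as the output of `nvidia-smi --query-gpu=power.draw --format=csv,noheader,nounits -l 1` redirected to a file. The logging format and the helper names are assumptions, not a description of the authors' tooling.

```python
from typing import List, Tuple


def parse_power_samples(text: str) -> List[float]:
    """Parse one power reading (W) per line, skipping blank or non-numeric lines."""
    samples: List[float] = []
    for line in text.splitlines():
        token = line.strip().replace("W", "").strip()  # tolerate a trailing unit
        try:
            samples.append(float(token))
        except ValueError:
            continue  # e.g. empty lines or "[N/A]" entries
    return samples


def energy_from_samples(samples: List[float], duration_s: float) -> Tuple[float, float, float]:
    """Return (average power in W, energy in J, energy in Wh) for one training run."""
    if not samples:
        raise ValueError("no valid power samples")
    avg_power_w = sum(samples) / len(samples)
    energy_j = avg_power_w * duration_s  # product of average power and training time
    return avg_power_w, energy_j, energy_j / 3600.0


log = "45.0\n55.0\n[N/A]\n\n50.0\n"
avg_w, joules, watt_hours = energy_from_samples(parse_power_samples(log), duration_s=120.0)
```

Averaging the samples and multiplying by the run duration assumes evenly spaced readings. With irregular sampling intervals, a time-weighted average would be the safer choice.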

2.5. Tested Models

To benchmark performance and efficiency across heterogeneous platforms, five representative machine learning models were implemented: CNN [29, 30], Simple RNN [31], RNN–LSTM [32], BiLSTM [33, 34], and XGBoost [35]. The model selection and organization adopted in this study are summarized in Algorithm 3. These models were chosen to cover distinct computational patterns and architectural characteristics relevant to supervised learning, including convolution-based processing, sequential recurrent computation, gated temporal modeling, bidirectional sequence modeling, and tree-based learning. Together, they provide a broad basis for evaluating how different hardware platforms respond to diverse training workloads. Accordingly, the associated datasets were not intended to provide a common predictive benchmark across all model families, but rather to instantiate representative computational workloads for each learning paradigm. Therefore, dataset size, structure, and diversity are treated here as part of the workload definition, while remaining fixed across all hardware platforms within each experimental case.

The evaluated models differ substantially in their computational behavior. CNNs are highly parallelizable and typically benefit from GPU acceleration. Simple RNN and RNN–LSTM emphasize sequential dependencies and recurrent memory access, exposing latency-sensitive execution patterns. BiLSTM extends this behavior by incorporating bidirectional recurrent processing, thereby increasing the computational complexity of sequence modeling. In contrast, XGBoost represents a tree-based method whose execution characteristics are generally more favorable for CPU-based platforms. This diversity allows the benchmark to capture a wide range of runtime, energy, and stability behaviors across hardware architectures.

All experiments were executed using Python scripts from the command line in order to minimize software overhead and improve reproducibility. The use of Python also ensured compatibility with the main frameworks employed in this study, including TensorFlow, scikit-learn, and XGBoost. Each experiment was repeated five times, and average results were reported in the subsequent analysis. All training code used in this study is publicly available in the GitHub repository https://github.com/OscarHSierra/cpu-gpu-training-benchmark (accessed on 28 April 2026).

The objective of this study is to compare computational performance rather than to maximize predictive accuracy. Accordingly, all evaluated techniques share a common training configuration to ensure consistency and fairness across platforms. Each model is executed on all target platforms, including GPU- and CPU-based systems as well as system-on-chip architectures. Given that the total number of training runs scales with both the number of evaluated models and the number of repetitions, memory and execution time become critical constraints, particularly for resource-limited devices. For this reason, the hyperparameter configuration is deliberately selected to ensure bounded and comparable computational workloads across all platforms. While this setup does not aim to achieve state-of-the-art accuracy, it enables a tractable and reproducible evaluation process. A key limitation of this study is that the selected configurations prioritize computational tractability. In this context, batch size was fixed for each model family to ensure consistent and reproducible workloads across all platforms. For convolutional models (CNN), a batch size of 512 was selected to leverage data parallelism, while for recurrent models (RNN, LSTM, and BiLSTM), a smaller batch size of 64 was used due to their sequential nature and higher memory requirements per sample [

36]. These values were selected as a compromise between computational efficiency and cross-platform feasibility, taking into account the memory constraints of low-resource platforms such as embedded devices (e.g., Raspberry Pi). Although these configurations do not necessarily correspond to the optimal batch size for each hardware architecture, they provide a fair and controlled baseline for comparison. Complementary experiments exploring batch size sensitivity are presented in

Section 4.6. The results do not reflect fully optimized training scenarios or state-of-the-art model performance. Model performance is not primarily assessed in terms of predictive accuracy. Instead, execution time, power consumption, and energy usage are emphasized, while the training loss is used as an indicator of convergence behavior. When applicable, classification accuracy is reported as a complementary metric. For classification settings where accuracy is reported, it is defined as

$$\mathrm{Accuracy} = \frac{1}{N}\sum_{i=1}^{N} \mathbb{1}\left(\hat{y}_i = y_i\right)$$

where $N$ is the number of evaluated samples, $\hat{y}_i$ is the predicted class, and $y_i$ is the corresponding target class; the indicator $\mathbb{1}(\cdot)$ equals 1 when its argument holds and 0 otherwise. Accordingly, higher values indicate better agreement between predictions and reference labels. However, for sequence modeling tasks, such as the character-level RNN-based models, sparse categorical cross-entropy is used as the primary convergence indicator, as it more accurately reflects probabilistic learning dynamics in sequence prediction tasks [37,38]. For character-level sequential models, sparse categorical cross-entropy is defined as

$$\mathcal{L}_{\mathrm{SCCE}} = -\frac{1}{N}\sum_{i=1}^{N} \log p_{i,y_i}$$

where $p_{i,y_i}$ denotes the predicted probability assigned to the correct target class. Therefore, lower values indicate better probabilistic fit and more favorable convergence behavior under the same experimental conditions. Direct comparison of predictive metrics across models is not intended, as each model employs task-specific evaluation criteria aligned with its learning objective. Accordingly, predictive metrics are not treated as the primary basis for hardware ranking, but rather as complementary indicators used to verify convergence consistency across platforms under the same experimental configuration.
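As a minimal sketch of the two metrics described above, the following pure-Python functions compute classification accuracy and mean sparse categorical cross-entropy. The function names, the clipping constant `eps`, and the toy inputs are illustrative assumptions, not part of the benchmark code.

```python
import math


def accuracy(predicted, target):
    """Fraction of samples whose predicted class equals the target class."""
    if len(predicted) != len(target):
        raise ValueError("predicted and target must have the same length")
    matches = sum(1 for p, t in zip(predicted, target) if p == t)
    return matches / len(target)


def sparse_categorical_cross_entropy(probabilities, target):
    """Mean negative log-probability assigned to the correct class.

    probabilities[i] holds the per-class probabilities for sample i and
    target[i] is the integer index of its correct class.
    """
    eps = 1e-12  # guards against log(0); an implementation choice, not from the paper
    total = -sum(math.log(max(row[t], eps)) for row, t in zip(probabilities, target))
    return total / len(target)


# Toy data, made up for illustration only.
toy_probs = [[0.7, 0.2, 0.1], [0.1, 0.8, 0.1], [0.3, 0.3, 0.4], [0.6, 0.3, 0.1]]
toy_target = [0, 1, 2, 1]
toy_pred = [max(range(len(row)), key=row.__getitem__) for row in toy_probs]

toy_accuracy = accuracy(toy_pred, toy_target)
toy_loss = sparse_categorical_cross_entropy(toy_probs, toy_target)
print(toy_accuracy, toy_loss)
```

Higher accuracy and lower cross-entropy both indicate better agreement with the targets, which is why the two metrics are read in opposite directions throughout the results.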

| Algorithm 3 Model selection and organization for hardware benchmarking |
| --- |
| Require: Candidate set of supervised learning models |
| Ensure: Final benchmark model set |
| 1: Initialize the final benchmark model set as empty |
| 2: for all candidate model m in the candidate set do |
| 3:     if m represents a distinct computational pattern then |
| 4:         if m is relevant for supervised learning benchmarking then |
| 5:             if m can be implemented reproducibly across the evaluated platforms then |
| 6:                 Add m to the final benchmark model set |
| 7:             end if |
| 8:         end if |
| 9:     end if |
| 10: end for |
| 11: Include one convolution-based model (CNN) |
| 12: Include one simple recurrent model (Simple RNN) |
| 13: Include one gated recurrent model (RNN–LSTM) |
| 14: Include one bidirectional recurrent model (BiLSTM) |
| 15: Include one tree-based model (XGBoost) |
| 16: return the final benchmark model set |
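The filtering logic of Algorithm 3 can be sketched in a few lines of Python. This is an illustrative reading only: the `Candidate` fields, the rule that the first candidate of each computational pattern counts as the "distinct" one, and the two rejected entries are assumptions introduced for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    name: str
    pattern: str      # computational pattern, e.g. "convolutional"
    supervised: bool  # relevant for supervised learning benchmarking
    portable: bool    # reproducible on every evaluated platform


def select_models(candidates):
    """Keep the first candidate of each pattern that passes both remaining filters."""
    selected, seen_patterns = [], set()
    for m in candidates:
        if m.pattern in seen_patterns:
            continue  # pattern already represented, so m is not distinct
        if m.supervised and m.portable:
            selected.append(m)
            seen_patterns.add(m.pattern)
    return selected


candidates = [
    Candidate("CNN", "convolutional", True, True),
    Candidate("Simple RNN", "simple recurrent", True, True),
    Candidate("RNN-LSTM", "gated recurrent", True, True),
    Candidate("BiLSTM", "bidirectional recurrent", True, True),
    Candidate("XGBoost", "tree-based", True, True),
    # Hypothetical rejected candidates, added only to exercise the filters.
    Candidate("Second CNN variant", "convolutional", True, True),
    Candidate("Platform-specific model", "other", True, False),
]

benchmark_set = [m.name for m in select_models(candidates)]
print(benchmark_set)
```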

2.5.1. CNN

A CNN [29,30] was implemented in TensorFlow 2.10.0 to classify CIFAR-10 [39], a widely used standard benchmark dataset for image classification.

Table 3 presents the architecture of the CNN employed in this study. The model processes RGB images and is organized into three convolutional blocks with increasing depth (64, 128, and 256 filters). Each block comprises two convolutional layers with

kernels, same padding, and swish activation, followed by batch normalization to improve convergence stability. Spatial dimensionality is progressively reduced using max-pooling layers with a pool size of

, while dropout regularization (0.3 and 0.4) is applied to mitigate overfitting.

After feature extraction, a global average pooling layer aggregates spatial information and reduces the number of parameters. The resulting representation is processed by two fully connected layers with 1024 and 512 units, respectively, both using swish activation and followed by dropout (0.5). The final dense layer produces 10 logits corresponding to the CIFAR-10 classes.

Table 4 summarizes the training configuration and hyperparameters. The model is trained on the CIFAR-10 dataset using the Adam optimizer and sparse categorical cross-entropy loss with logits, while accuracy is used as the evaluation metric. A batch size of 512 and a single training epoch are employed.
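To make the size of this workload concrete, the short calculation below counts the optimizer updates performed in one epoch. It assumes the full standard CIFAR-10 training split of 50,000 images is used, with no validation hold-out, and that the final partial batch is kept; the text does not state either detail.

```python
import math

CIFAR10_TRAIN_IMAGES = 50_000  # size of the standard CIFAR-10 training split
BATCH_SIZE = 512               # batch size stated for the CNN
EPOCHS = 1                     # single training epoch

steps_per_epoch = math.ceil(CIFAR10_TRAIN_IMAGES / BATCH_SIZE)
last_batch_size = CIFAR10_TRAIN_IMAGES - (steps_per_epoch - 1) * BATCH_SIZE
total_updates = steps_per_epoch * EPOCHS

print(steps_per_epoch, last_batch_size, total_updates)
```

Under these assumptions the model receives fewer than one hundred weight updates, which is consistent with treating accuracy as a consistency check rather than a performance target.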

In this study, final accuracy and loss are interpreted primarily as consistency checks on the learning outcome across platforms, rather than as the main target variables of the benchmark.

2.5.2. Simple RNN

A character-level Simple RNN [31] was trained on the Shakespeare dataset [40], a widely used public reference dataset for character-level sequence modeling, to evaluate sequential computation performance.

The architecture includes an embedding layer followed by three stacked SimpleRNN layers with 1024 units each, and a dense output layer over the vocabulary. Training was performed for one epoch using the Adam optimizer and sparse categorical cross-entropy loss.

Table 5 summarizes the architecture of the proposed Simple RNN model. The network operates on input sequences of 100 characters encoded as integer indices. An embedding layer maps each character into a 256-dimensional continuous vector space. The core of the model consists of three stacked SimpleRNN layers, each configured to return full sequences, enabling the model to capture temporal dependencies across all time steps. Finally, a dense output layer with linear activation produces logits over the vocabulary space.

Table 6 presents the training configuration and hyperparameters used in this study. The model is trained using sequences of 100 characters and a batch size of 64, with a shuffle buffer of 10,000 samples to improve data randomness. The optimization process relies on the Adam optimizer, while the loss function is sparse categorical cross-entropy configured with logits. To ensure reproducibility, a fixed random seed (42) is used for Python, NumPy, and TensorFlow. Accordingly, the reported loss values are used in this study primarily as indicators of convergence consistency across platforms, rather than as the main basis for hardware ranking.
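A fixed-seed setup of this kind might look like the sketch below. The helper name `seed_everything` is hypothetical, and the guarded imports are an assumption made so that the snippet also runs where NumPy or TensorFlow is not installed.

```python
import random


def seed_everything(seed=42):
    """Seed Python's RNG, plus NumPy and TensorFlow when they are installed."""
    random.seed(seed)
    try:
        import numpy as np
        np.random.seed(seed)
    except ImportError:
        pass  # NumPy not available in this environment
    try:
        import tensorflow as tf
        tf.random.set_seed(seed)
    except ImportError:
        pass  # TensorFlow not available in this environment


seed_everything(42)
first_draw = [random.random() for _ in range(3)]
seed_everything(42)
second_draw = [random.random() for _ in range(3)]
print(first_draw == second_draw)
```

Seeding fixes the pseudo-random streams on a given software stack; it does not by itself guarantee bit-identical results across different hardware backends, which is why loss values are compared across platforms rather than assumed equal.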

2.5.3. RNN–LSTM

An RNN–LSTM [32] was trained on the Shakespeare dataset [40], a widely used public reference dataset for character-level sequence modeling, in order to evaluate gated recurrent computation under sequential training workloads.

Two stacked LSTM layers with 1024 units each replaced the three SimpleRNN layers to mitigate vanishing-gradient effects and improve long-range sequence modeling. The model used the same preprocessing, optimizer, and loss function as the Simple RNN baseline.

Table 7 presents the architecture of the RNN–LSTM model used to evaluate sequential computation performance. The model processes sequences of 100 integer-encoded characters and follows an embedding–recurrent–projection structure. An embedding layer maps the input tokens into a 256-dimensional continuous space, enabling a compact and expressive representation of the discrete vocabulary. The temporal modeling is performed using two stacked LSTM layers with 1024 units each, both configured to return full sequences, allowing the network to capture temporal dependencies at every time step. Finally, a dense output layer produces logits over the vocabulary space, enabling next-character prediction.
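For a sense of scale, the recurrent parameter counts of the two character-level models can be estimated from the layer sizes given above. The sketch assumes the standard formulation with bias terms and no peephole connections (as in the default Keras layers); embedding and output layers are omitted because the vocabulary size is not stated here.

```python
def simple_rnn_params(input_dim, units):
    """Input kernel + recurrent kernel + bias of one simple recurrent layer."""
    return units * (input_dim + units + 1)


def lstm_params(input_dim, units):
    """Four gates, each shaped like a simple recurrent layer."""
    return 4 * simple_rnn_params(input_dim, units)


EMBED_DIM, UNITS = 256, 1024

# Simple RNN model: three stacked layers (the first one reads the embedding).
simple_rnn_stack = simple_rnn_params(EMBED_DIM, UNITS) + 2 * simple_rnn_params(UNITS, UNITS)

# RNN-LSTM model: two stacked layers.
lstm_stack = lstm_params(EMBED_DIM, UNITS) + lstm_params(UNITS, UNITS)

print(f"{simple_rnn_stack:,} {lstm_stack:,}")
```

Under these assumptions the two-layer LSTM stack holds roughly 2.5 times as many recurrent parameters as the three-layer Simple RNN stack, so parameter count alone should not be read as a predictor of training time on a given backend.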

Table 8 summarizes the overall configuration and training setup of the RNN–LSTM model. The network is trained on a character-level representation of the Shakespeare dataset, where input sequences of 100 characters are used to predict the subsequent character at each time step. The training process follows the unified benchmarking protocol adopted in this study, employing a single training epoch, the Adam optimizer, and sparse categorical cross-entropy loss. This configuration ensures consistent computational conditions across all evaluated models, facilitating fair comparison across heterogeneous hardware platforms.

Table 9 details the training configuration and hyperparameters of the RNN–LSTM model. A batch size of 64 and a shuffle buffer of 10,000 samples are used to balance computational efficiency and input variability during training. The embedding dimension and LSTM size are set to 256 and 1024 units, respectively, across two recurrent layers. The output dimension matches the vocabulary size. To ensure experimental reliability, a fixed random seed (42) is applied across Python, NumPy, and TensorFlow. Additionally, GPU memory growth is enabled to prevent allocation issues, allowing stable execution across different hardware platforms, with training performed on GPU when available and otherwise on CPU.

2.5.4. XGBoost

XGBoost [35] version 3.0.2 was benchmarked using a synthetic multiclass dataset with 200,000 samples, 1000 features, and 5 classes. Identical configurations were trained on both CPU and GPU backends to compare computational performance and energy efficiency. GPU execution employed the gpu_hist tree construction method together with the gpu_predictor backend. The dataset adopted for XGBoost was not intended as a standard public benchmark, but as a synthetic and controlled tabular workload designed to represent tree-based supervised learning under reproducible computational conditions.

Table 10 presents the training configuration and hyperparameters of the XGBoost model. A multi-class classification objective (multi:softmax) is used to directly predict discrete class labels across five categories. The model is configured with a maximum tree depth of 6 and a learning rate of 0.1, providing a balance between model capacity and convergence stability. The training process consists of 100 boosting rounds, enabling incremental refinement of the ensemble.

To support heterogeneous hardware evaluation, different tree construction methods are selected depending on the execution platform. The auto method is used for CPU-based training, while gpu_hist is employed for GPU acceleration, enabling efficient histogram-based gradient boosting. The evaluation metric during training is the multi-class logarithmic loss mlogloss, which provides a probabilistic measure of model performance. A fixed random seed (42) is used to ensure reproducibility across runs.
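The platform-dependent configuration described above can be expressed as a small parameter builder. The helper name `build_xgb_params` is hypothetical and the dictionary only mirrors the values stated in the text. It would typically be passed to `xgboost.train` together with the number of boosting rounds, and whether the `gpu_hist` and `gpu_predictor` values are accepted depends on the installed XGBoost release.

```python
def build_xgb_params(use_gpu, seed=42):
    """Return a parameter dict mirroring the configuration described in the text."""
    params = {
        "objective": "multi:softmax",  # predicts discrete class labels
        "num_class": 5,
        "max_depth": 6,
        "eta": 0.1,                    # learning rate
        "eval_metric": "mlogloss",
        "seed": seed,
        "tree_method": "gpu_hist" if use_gpu else "auto",
    }
    if use_gpu:
        params["predictor"] = "gpu_predictor"
    return params


NUM_BOOST_ROUND = 100  # boosting rounds stated in the text

cpu_params = build_xgb_params(use_gpu=False)
gpu_params = build_xgb_params(use_gpu=True)
print(cpu_params["tree_method"], gpu_params["tree_method"])
```

Keeping every other entry identical between the two dictionaries makes the tree construction method the only configuration difference between CPU and GPU runs.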

Table 11 summarizes the experimental setup. The model is evaluated on a synthetic dataset composed of 200,000 samples with 1000 features, of which 75 are informative. The dataset is structured as a five-class classification problem and is split into training and testing subsets using an 80%/20% ratio.

The model is trained for 100 boosting rounds, and overall classification accuracy is used as the evaluation metric for reporting results. To ensure consistency with the benchmarking protocol, fixed random seeds are applied across Python, NumPy, and XGBoost, enabling reproducible comparisons across different hardware platforms.

2.5.5. BiLSTM

A lightweight BiLSTM [33,34] architecture with embedding, bidirectional recurrent processing, and a dense classification layer was implemented to evaluate sequence modeling performance under bidirectional recurrent workloads. It was trained on synthetic token sequences (vocabulary size = 5000, sequence length = 100, number of classes = 5) for 10 epochs using Adam and categorical cross-entropy loss. The dataset used for the BiLSTM experiment was not introduced as a standard public benchmark, but as a synthetic and controlled workload designed to represent bidirectional sequence-processing behavior under reproducible computational conditions.

Table 12 presents the architecture of the Bidirectional LSTM (BiLSTM) model employed to capture contextual dependencies in sequential data. The model processes input sequences of fixed length and begins with an embedding layer that maps tokens from a vocabulary of 5000 elements into a 64-dimensional continuous space, enabling a compact representation of the input.

The core of the model consists of a bidirectional LSTM layer with 64 units, which processes the sequence in both forward and backward directions. This structure allows the model to incorporate past and future context simultaneously, improving its ability to capture complex temporal relationships compared to unidirectional recurrent architectures. The outputs from both directions are concatenated into a 128-dimensional representation.

A dropout layer with a rate of 0.5 is applied to reduce overfitting by randomly deactivating neurons during training. Finally, a fully connected layer with softmax activation produces probability distributions over the five output classes, enabling multi-class classification.

Table 13 summarizes the training configuration and hyperparameters of the BiLSTM model. The model is trained using the Adam optimizer with a learning rate of 0.001 and categorical cross-entropy as the loss function. A batch size of 64 and a total of 10 training epochs are used, allowing sufficient optimization while maintaining manageable computational cost within the benchmarking framework.

Input sequences consist of 100 tokens drawn from a vocabulary of 5000 elements, with an embedding dimension of 64. The bidirectional LSTM layer uses 64 units per direction, and a dropout rate of 0.5 is applied as the primary regularization mechanism. The model performs classification over five output classes and uses a validation split of 0.2 to monitor training behavior. To ensure experimental reliability, a fixed random seed (42) is applied.

Batch size was fixed for each model family to ensure consistent and reproducible workloads across all platforms. For convolutional models (CNN), a batch size of 512 was selected to leverage data parallelism, while for recurrent models (RNN, LSTM, and BiLSTM), a smaller batch size of 64 was used due to their sequential nature and higher memory requirements per sample. These values were chosen as a compromise between computational efficiency and cross-platform feasibility, taking into account the memory constraints of low-resource platforms such as embedded devices (e.g., Raspberry Pi), so that all experiments can be executed reliably across the full set of evaluated hardware. Although these configurations do not necessarily correspond to the optimal batch size for each architecture, they provide a fair and controlled baseline for comparison. Complementary experiments exploring batch size sensitivity were conducted to analyze hardware-aware performance and are discussed in Section 4.6. Models that do not rely on mini-batch training, such as XGBoost, were executed using their standard training procedures.

3. Results

This section presents the experimental results obtained for each of the evaluated models across the considered hardware platforms and discusses their implications in terms of runtime, energy-related behavior, stability, and predictive consistency. The corresponding tables summarize the quantitative results for each case. All reported values are presented as means obtained from repeated runs, together with their corresponding standard deviations. In the following analysis, runtime refers to total training time, and total energy consumption refers to the product of average power and training time when power data are available. Accuracy and loss are interpreted according to the role they play in each model family and are not used interchangeably across all experiments. In the result tables, the symbol “–” indicates values that were not available or not measured for the corresponding platform or configuration. For consistency and readability in presentation, time and power are reported to two decimal places, energy to one decimal place, and accuracy/loss values to four decimal places. These formatting decisions are purely editorial and do not modify the underlying measurement procedure or the comparative analysis.
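The energy definition and the reporting precision described above can be illustrated with a short sketch. The function names are hypothetical; the power and time values are the Raspberry Pi 5 figures quoted later for the CNN experiment, and the accuracy value is a placeholder.

```python
def energy_joules(avg_power_watts, training_time_s):
    """Total energy as average power multiplied by training time (1 W x 1 s = 1 J)."""
    return avg_power_watts * training_time_s


def format_row(time_s, power_w, energy_j, metric):
    """Apply the stated precision: 2 decimals for time and power, 1 for energy, 4 for accuracy/loss."""
    return f"{time_s:.2f} s | {power_w:.2f} W | {energy_j:.1f} J | {metric:.4f}"


# Raspberry Pi 5, CNN experiment: 12.46 W average power over 1505.50 s.
rpi_energy = energy_joules(12.46, 1505.50)
rpi_row = format_row(1505.50, 12.46, rpi_energy, 0.47)  # 0.47 is a placeholder accuracy
print(rpi_row)
```

Because energy is a product, a platform with a low power draw can still accumulate a large total when its training time is long.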

3.1. Results for CNN

Table 14 summarizes the results obtained when training the CNN model across the evaluated hardware platforms. The reported metrics include training time, average power consumption, total energy use, accuracy, and loss.

The RTX 5060 Ti, adopted as the baseline platform (1.0×), achieved the shortest training time (11.70 s). Its initial run was an exception, requiring 64.14 s, most likely due to a software-related anomaly; the experiment was repeated several times, and the same behavior persisted in the first execution. This behavior is also documented in the original table available in the GitHub repository. The first measurement was therefore excluded from the reported results, as it was considered an execution artifact rather than representative hardware behavior. Even so, the RTX 5060 Ti remained the fastest platform, likely due to its modern architecture and larger memory capacity.
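The effect of discarding a slow first execution can be sketched as follows. Only the 64.14 s value comes from the text; the remaining run times are made-up placeholders chosen to sit near the reported mean, and the helper name is hypothetical.

```python
from statistics import mean, stdev


def summarize_runs(times_s, skip_first=True):
    """Return (mean, sample standard deviation), optionally dropping the first run."""
    kept = times_s[1:] if skip_first else times_s
    return mean(kept), stdev(kept)


# First value: reported first-run time. Other values: illustrative placeholders.
runs = [64.14, 11.68, 11.71, 11.70, 11.72]

with_first = summarize_runs(runs, skip_first=False)
without_first = summarize_runs(runs)
print(with_first, without_first)
```

With the first run included, both the mean and the standard deviation are dominated by a single outlier, which would misrepresent the steady-state behavior of the platform.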

Following the RTX 5060 Ti, the GTX 1660 Ti (20.79 s, 1.78× slower) and the Apple M4 GPU (21.90 s, 1.87× slower) achieved comparable performance. The latter result indicates that Apple’s Metal backend can provide training performance close to that of a mid-range NVIDIA GPU, despite the absence of CUDA support. The Google Colab GPU T4 required 52.52 s (4.49× slower), reflecting the throughput limitations of the T4-class accelerator typically available in free cloud environments, although it remains a practical no-cost alternative for experimentation.

In contrast, CPU-only configurations exhibited substantially longer training times. The Apple M4 CPU (80.19 s, 6.85× slower), Ryzen 7 7800X3D (162.67 s, 13.90× slower), and Ryzen 7 4800H (277.21 s, 23.69× slower) all lagged far behind the GPU-based baseline. At the lowest performance tier, the Raspberry Pi 5 (1505.50 s, approximately 25 min, 128.65× slower) and the Google Colab CPU (1418.18 s, approximately 24 min, 121.19× slower) further confirmed the practical limitations of training CNN models without GPU acceleration.
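The relative slowdown factors quoted in this section follow directly from the mean training times. The sketch below recomputes them from the rounded values given in the text, so the last digit may differ slightly from the reported factors, which were presumably derived from unrounded means.

```python
# Mean CNN training times in seconds, as quoted in this section.
cnn_time_s = {
    "RTX 5060 Ti": 11.70,
    "GTX 1660 Ti": 20.79,
    "Apple M4 GPU": 21.90,
    "Google Colab GPU T4": 52.52,
    "Apple M4 CPU": 80.19,
    "Ryzen 7 7800X3D": 162.67,
    "Ryzen 7 4800H": 277.21,
    "Google Colab CPU": 1418.18,
    "Raspberry Pi 5": 1505.50,
}


def slowdown(times, baseline):
    """Ratio of each platform's time to the baseline platform's time."""
    return {name: t / times[baseline] for name, t in times.items()}


factors = slowdown(cnn_time_s, "RTX 5060 Ti")
for name, factor in sorted(factors.items(), key=lambda kv: kv[1]):
    print(f"{name:20s} {factor:7.2f}x")
```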

In terms of variability, the RTX 5060 Ti, Apple M4 GPU, Apple M4 CPU, GTX 1660 Ti, and Ryzen 7 4800H exhibited the most stable behavior, with standard deviations below 2.6 s, indicating consistent execution across repeated runs. In contrast, the Google Colab GPU T4 and the Ryzen 7 7800X3D showed markedly higher variability, with standard deviations of 21.13 s and 35.38 s, respectively. This dispersion suggests that cloud-based environments and thermally sensitive desktop systems may introduce greater timing instability, either because of dynamic resource allocation in shared infrastructure or because of frequency throttling under sustained load.

Overall, the relatively low variance observed in the remaining platforms indicates that the experimental protocol was stable and reproducible, and that the performance differences reported across devices are mainly attributable to hardware and system-level characteristics—including compute architecture, memory bandwidth, cache organization, software backend efficiency, and thermal-power behavior—rather than random fluctuations or measurement noise.

Regarding predictive performance, all platforms converged to very similar values, with accuracy around 0.47–0.48 and loss around 1.41–1.43. This result indicates that the hardware platform primarily affected execution time and energy behavior, while the final model convergence remained essentially unchanged under the fixed training configuration.

Although the achieved accuracy values are relatively low compared to fully optimized models, this outcome is consistent with the experimental design. The training configuration was intentionally constrained to ensure short and comparable execution times across heterogeneous hardware platforms, enabling multiple repetitions of each experiment. As a result, the model is not expected to reach optimal predictive performance, but rather to provide a consistent and controlled workload for benchmarking purposes.

In this context, accuracy serves primarily as a validation metric to confirm that all platforms converge to similar solutions under identical conditions. The small variations observed across devices further indicate that the computational differences are not affecting the learning outcome, reinforcing that the reported performance differences are attributable to hardware characteristics rather than discrepancies in model training.

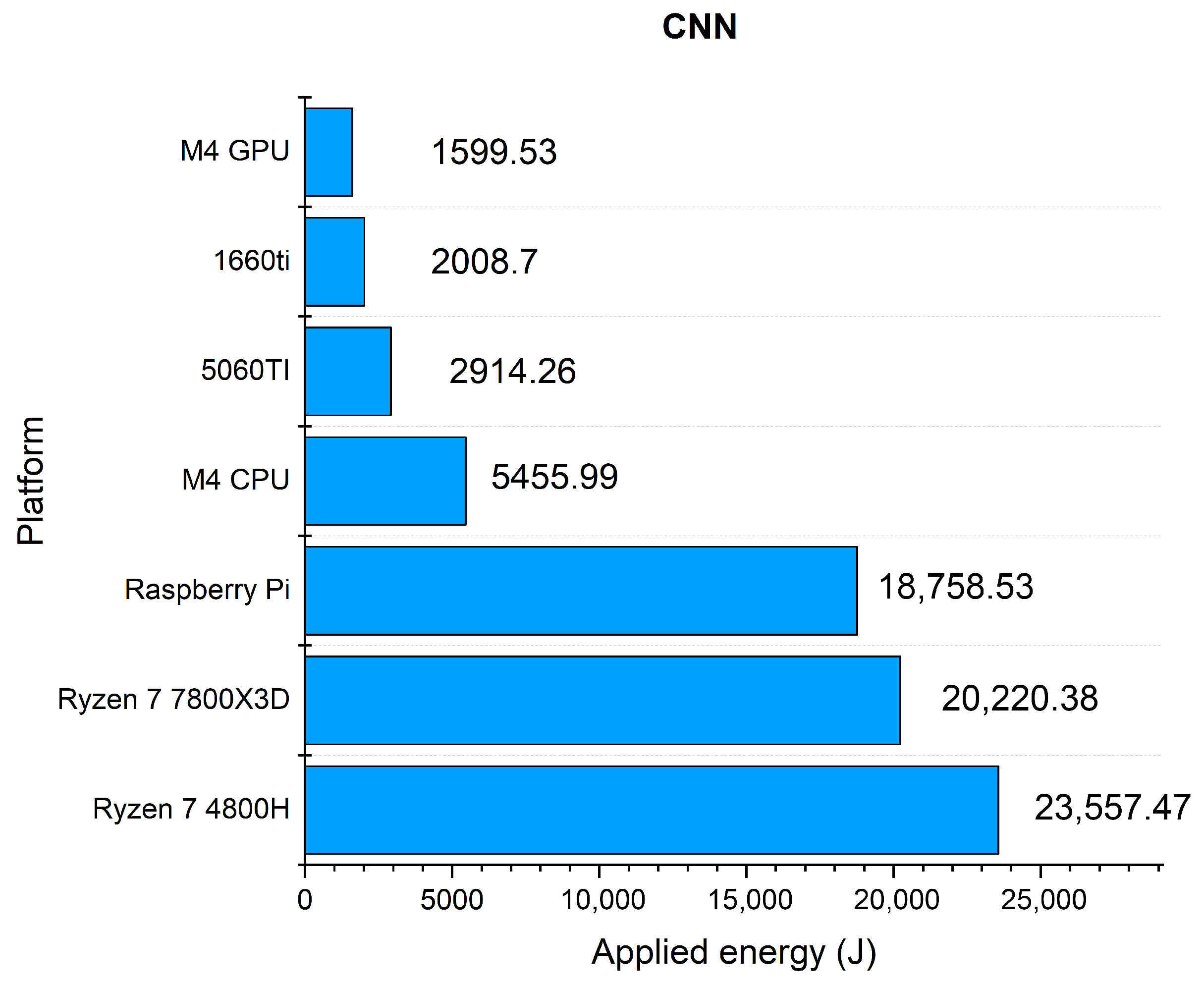

Figure 2 illustrates the energy consumption associated with CNN training across the evaluated platforms. The Apple M4 GPU was the most energy-efficient platform (1599.5 J), followed by the GTX 1660 Ti (2008.7 J) and the RTX 5060 Ti (2914.3 J). Although the RTX 5060 Ti was the fastest platform, it also required more total energy than the M4 GPU and GTX 1660 Ti, reflecting the classical trade-off between raw speed and energy efficiency. The x86 CPUs performed poorly from this perspective: the Ryzen 7 7800X3D (20,220.4 J) and Ryzen 7 4800H (23,557.5 J) consumed roughly 7 to 15 times as much energy as the GPU-based platforms while reaching similar predictive performance. A particularly illustrative case was the Raspberry Pi 5 (18,758.5 J), whose low power draw (12.46 W) did not prevent a high accumulated energy consumption because of its extremely long execution time. By contrast, the Apple M4 CPU (5456.0 J) showed comparatively good efficiency, consuming substantially less energy than the x86 CPUs, likely due to its lower power envelope and ARM-based system-level optimization.
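The difference between the speed ranking and the energy ranking can be made explicit with the values quoted in this section. The implied mean power is obtained here by dividing mean energy by mean time, which is an approximation rather than a measured quantity.

```python
# platform: (mean training time in s, total energy in J), as quoted for the CNN
cnn = {
    "RTX 5060 Ti": (11.70, 2914.3),
    "GTX 1660 Ti": (20.79, 2008.7),
    "Apple M4 GPU": (21.90, 1599.5),
    "Apple M4 CPU": (80.19, 5456.0),
    "Ryzen 7 7800X3D": (162.67, 20220.4),
    "Ryzen 7 4800H": (277.21, 23557.5),
    "Raspberry Pi 5": (1505.50, 18758.5),
}

by_time = sorted(cnn, key=lambda p: cnn[p][0])
by_energy = sorted(cnn, key=lambda p: cnn[p][1])
implied_power_w = {p: energy / time for p, (time, energy) in cnn.items()}

print("fastest first:       ", by_time)
print("most efficient first:", by_energy)
for platform in by_energy:
    print(f"{platform:16s} {implied_power_w[platform]:6.1f} W")
```

The two orderings differ at both ends: the fastest platform is third in energy, and the slowest platform is not the most energy-hungry one.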

3.2. Results for Simple RNN

Table 15 summarizes the results obtained when training the Simple RNN model across the evaluated hardware platforms. The model was trained for one epoch on the Shakespeare character-level dataset using the Adam optimizer. The optimization objective was the sparse categorical cross-entropy loss, which measures the negative log-likelihood of the correct next character given the preceding sequence. No explicit accuracy metric was computed, since character-level accuracy is not a sufficiently informative indicator of language modeling performance.

Modern GPUs, particularly the RTX 5060 Ti and Google Colab GPU T4, provided the most balanced performance for Simple RNN training, whereas the Apple M4 CPU emerged as a competitive alternative even without dedicated graphics acceleration. The RTX 5060 Ti established the baseline with a training time of 33.80 s, making it the fastest platform and the reference for relative comparisons. The Google Colab GPU T4 required 37.16 s, only 1.10× slower than the baseline, and therefore represented a strong cloud-based alternative. The GTX 1660 Ti required 72.50 s, approximately 2.14× slower than the RTX 5060 Ti, but remained suitable for medium-scale recurrent training workloads.

Among CPU-based platforms, the Apple M4 CPU stood out with a training time of 57.94 s, only 1.71× slower than the baseline and clearly ahead of the Ryzen 7 4800H and Ryzen 7 7800X3D. In comparison, the Ryzen 7 4800H required 395.47 s (11.70× slower), whereas the Ryzen 7 7800X3D required 609.69 s (18.04× slower). These results indicate that the ARM-based Apple CPU handled recurrent workloads more efficiently than the evaluated x86 CPUs under this experimental setup.

At the lowest performance tier were the Raspberry Pi 5 CPU and the Google Colab CPU, with training times of 1275.25 s and 1597.64 s, respectively, corresponding to approximately 21 and 27 min of execution. These values make both platforms impractical for efficient Simple RNN training. The most unfavorable result was observed on the Apple M4 GPU, which required 2264.77 s (about 38 min), equivalent to 67.01× slower than the baseline. This behavior suggests substantial inefficiencies in the Metal backend for recurrent operations, especially when compared with the much stronger CPU performance of the same platform.

In terms of variance, the RTX 5060 Ti, Google Colab GPU T4, GTX 1660 Ti, and Apple M4 CPU exhibited the most stable execution times, all with standard deviations below 1.1 s. By contrast, the Ryzen 7 4800H and, more notably, the Ryzen 7 7800X3D and Apple M4 GPU showed considerably higher dispersion, with standard deviations ranging from 37.78 s up to 430.56 s. This elevated variability may be related to dynamic frequency scaling, thermal throttling, or backend-level scheduling effects under sustained recurrent workloads. The Raspberry Pi 5 and Google Colab CPU showed moderate variability relative to their total runtime, indicating that instability was not the main factor behind their poor overall performance.

Regarding convergence, all platforms reached sparse categorical cross-entropy values in the range of approximately 3.44 to 3.70, indicating broadly consistent Simple RNN training behavior across hardware. Minor differences, such as the slightly higher loss values observed on the Apple M4 CPU, Raspberry Pi 5 CPU, and Google Colab CPU, may be attributable to numerical precision differences or to the use of distinct low-level execution libraries on CPU and GPU backends. These variations remain within an acceptable range and suggest that differences in hardware performance did not compromise overall learning stability.

Although the reported loss values are relatively high compared to fully optimized language models, this behavior is consistent with the constrained training setup adopted in this study. The model was intentionally trained for a limited number of iterations to ensure manageable execution times across all hardware platforms, enabling repeated measurements under controlled conditions.

In this context, sparse categorical cross-entropy serves as a proxy for learning consistency rather than an absolute performance metric. The close agreement of loss values across platforms indicates that all devices converge to similar model states under identical training conditions. This observation reinforces that the differences observed in execution time, power consumption, and energy usage are attributable to hardware characteristics rather than discrepancies in the optimization process.

Figure 3 presents the energy consumption associated with Simple RNN training. The Apple M4 CPU was the most energy-efficient measured platform, requiring 3609.8 J, followed by the RTX 5060 Ti with 5659.5 J and the GTX 1660 Ti with 7611.1 J. In contrast, the Ryzen 7 4800H and Ryzen 7 7800X3D consumed 28,387.1 J and 73,882.7 J, respectively, making them substantially less favorable from an energy-efficiency perspective. Although the Raspberry Pi 5 exhibited low instantaneous power draw, it accumulated 14,410.4 J because of its very long training time.

The most extreme case was the Apple M4 GPU, which consumed 124,970.0 J. Its disproportionate combination of execution time and total energy strongly suggests that the Metal backend, at least under the tested software configuration, is not well optimized for Simple RNN training. This result contrasts sharply with the favorable performance of the Apple M4 CPU and highlights the importance of backend-specific behavior when evaluating recurrent workloads on heterogeneous hardware.

3.3. Results for RNN–LSTM

Table 16 summarizes the results obtained when training the RNN–LSTM model across the evaluated hardware platforms. The reported metrics include training time, average power consumption, total energy use, and sparse categorical cross-entropy loss.

The evaluation of the RNN–LSTM model revealed a markedly different pattern from that observed for the Simple RNN experiments. In this case, the performance gap among the GPU-based and Apple Silicon platforms was much smaller, suggesting that LSTM-based workloads are better supported by current software backends and compilers. The RTX 5060 Ti established the baseline with the shortest training time (20.34 s, 1.0×). However, the Apple M4 GPU required only 24.59 s (1.21× slower), while the Apple M4 CPU completed training in 26.83 s (1.32× slower). These results indicate that Apple Silicon handled LSTM workloads much more efficiently than it did in the Simple RNN case, and that the Metal backend appears to be better suited for this type of recurrent architecture.

The GTX 1660 Ti and Google Colab GPU T4 also achieved competitive performance, with training times of 37.95 s and 34.48 s, corresponding to 1.87× and 1.70× slower execution than the baseline, respectively. The Google Colab CPU required 76.52 s (3.76× slower), which, although substantially slower than GPU-based platforms, remained within a usable range for moderate experimentation. By contrast, the Ryzen 7 4800H, Ryzen 7 7800X3D, and Raspberry Pi 5 exhibited very poor performance, with training times of 1196.21 s, 1556.77 s, and 2968.15 s, corresponding to 58.81×, 76.54×, and 145.93× slower execution than the RTX 5060 Ti. These runtimes make such platforms impractical for routine RNN–LSTM training.

In terms of variability, the most unstable platform was the Ryzen 7 7800X3D CPU, with a standard deviation of 345.83 s, followed by the Ryzen 7 4800H CPU (99.15 s) and the Raspberry Pi 5 CPU (11.50 s). All remaining platforms exhibited standard deviations below 1.31 s. This result suggests that the x86 CPU platforms were much more sensitive to runtime fluctuations, likely because of cache contention, dynamic frequency scaling, and thermal throttling under sustained workloads. By contrast, the Apple M4 CPU, Apple M4 GPU, RTX 5060 Ti, and Google Colab GPU T4 delivered highly consistent execution times, indicating that Apple Silicon and modern GPU platforms provide not only faster but also more stable performance for LSTM-based sequence modeling.

Regarding convergence, two distinct groups can be observed in terms of final loss values. The Apple M4 GPU, Apple M4 CPU, Google Colab GPU T4, Google Colab CPU, and Raspberry Pi 5 reached average losses around 3.18–3.19, whereas the RTX 5060 Ti, GTX 1660 Ti, Ryzen 7 7800X3D, and Ryzen 7 4800H converged to lower values around 2.84–2.85. Although this difference is moderate, it suggests some sensitivity to backend implementation, numerical precision, or low-level library behavior. Even so, the overall stability of the loss values across repeated runs indicates that the RNN–LSTM training process remained robust across all evaluated platforms.

The observed separation in convergence levels may reflect minor implementation-dependent effects, potentially related to numerical precision or backend-specific optimizations. Despite this difference, all platforms exhibit stable training behavior across repeated runs, indicating that the learning process remains consistent under the fixed experimental conditions. These results support that the observed performance differences are primarily associated with hardware characteristics rather than instability in the optimization process.

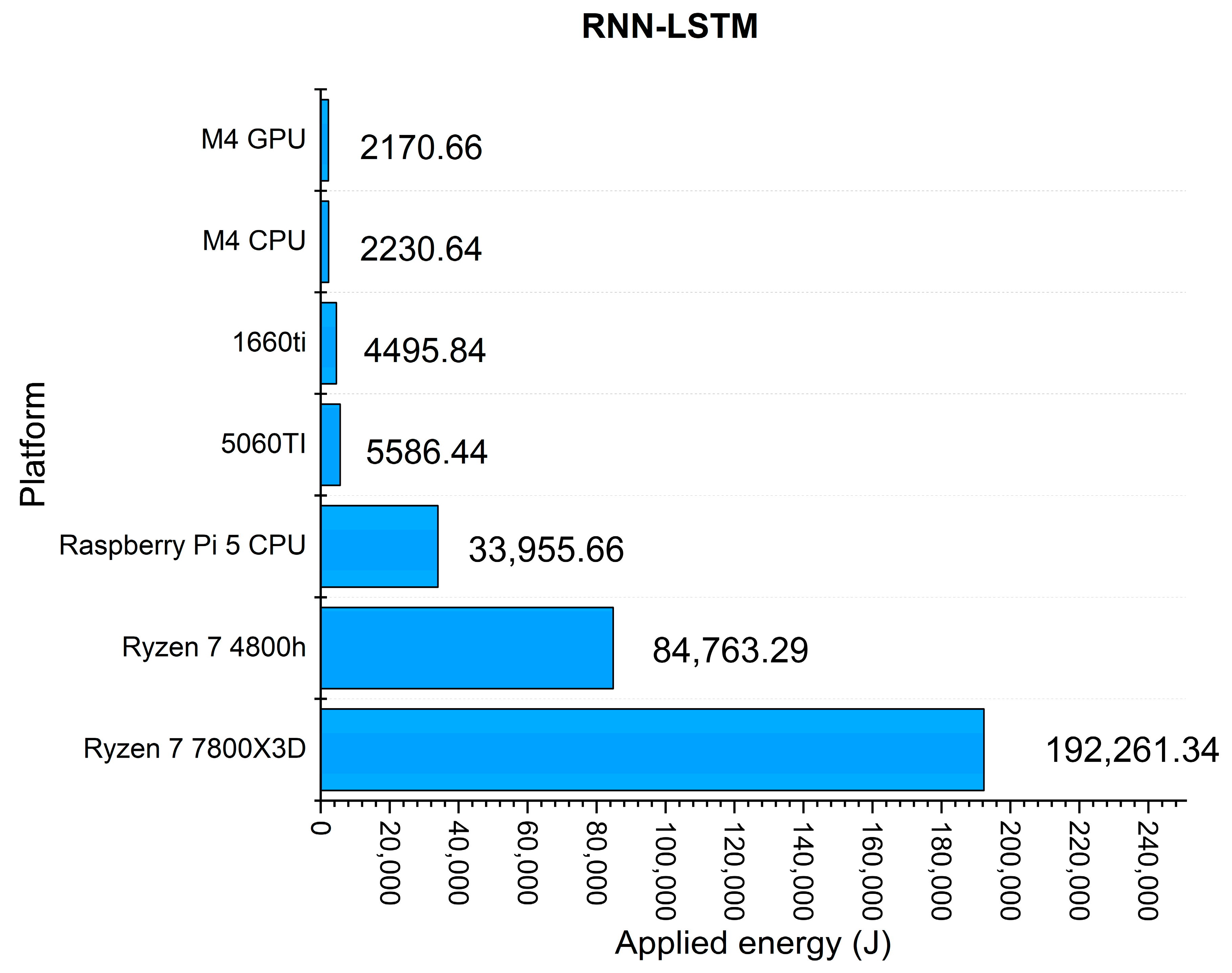

Figure 4 illustrates the energy consumption associated with RNN–LSTM training. The best results were obtained on Apple Silicon: the Apple M4 GPU required 2170.7 J and the Apple M4 CPU required 2230.6 J, both substantially lower than the RTX 5060 Ti, which consumed 5586.4 J despite being the fastest platform. The GTX 1660 Ti also showed a favorable balance between energy use and execution time, requiring 4495.8 J. In contrast, the Ryzen 7 7800X3D CPU (192,261.3 J), Ryzen 7 4800H CPU (84,763.3 J), and Raspberry Pi 5 CPU (33,955.7 J) were markedly inefficient, mainly because of their extremely long runtimes. These results reinforce the advantage of Apple Silicon for LSTM-based workloads when energy efficiency is prioritized alongside acceptable execution speed.

3.4. Results for XGBoost

Table 17 summarizes the results obtained when training the XGBoost model across the evaluated hardware platforms. The reported metrics include training time, average power consumption, total energy use, and classification accuracy. Loss is not reported, as the primary evaluation criterion in this experiment is the final predictive accuracy, which is sufficient to verify that all platforms reach the same learning outcome on this supervised classification task.

In the case of XGBoost, the execution dynamics differed markedly from those observed in the neural network models, showing a much more balanced distribution of runtimes across hardware architectures. The RTX 5060 Ti achieved the shortest training time (16.66 s), establishing the reference baseline. The Google Colab GPU T4 (31.30 s, 1.88× slower) and the Apple M4 CPU (32.86 s, 1.97× slower) followed closely, indicating that XGBoost benefits less from massive GPU parallelism and remains highly efficient on modern CPU architectures.

The GTX 1660 Ti required 39.68 s (2.38× slower), maintaining acceptable performance but remaining behind the leading platforms. Among the x86 CPUs, the Ryzen 7 7800X3D required 76.09 s (4.57× slower), whereas the Ryzen 7 4800H required 347.28 s (20.84× slower). At the lowest end, the Google Colab CPU (753.83 s, 45.25× slower) and the Raspberry Pi 5 (1429.53 s, 85.81× slower) proved impractical for medium- or large-scale training because of their long execution times. The Apple M4 GPU was not included in this experiment because GPU-accelerated XGBoost training was not supported through the Metal backend.

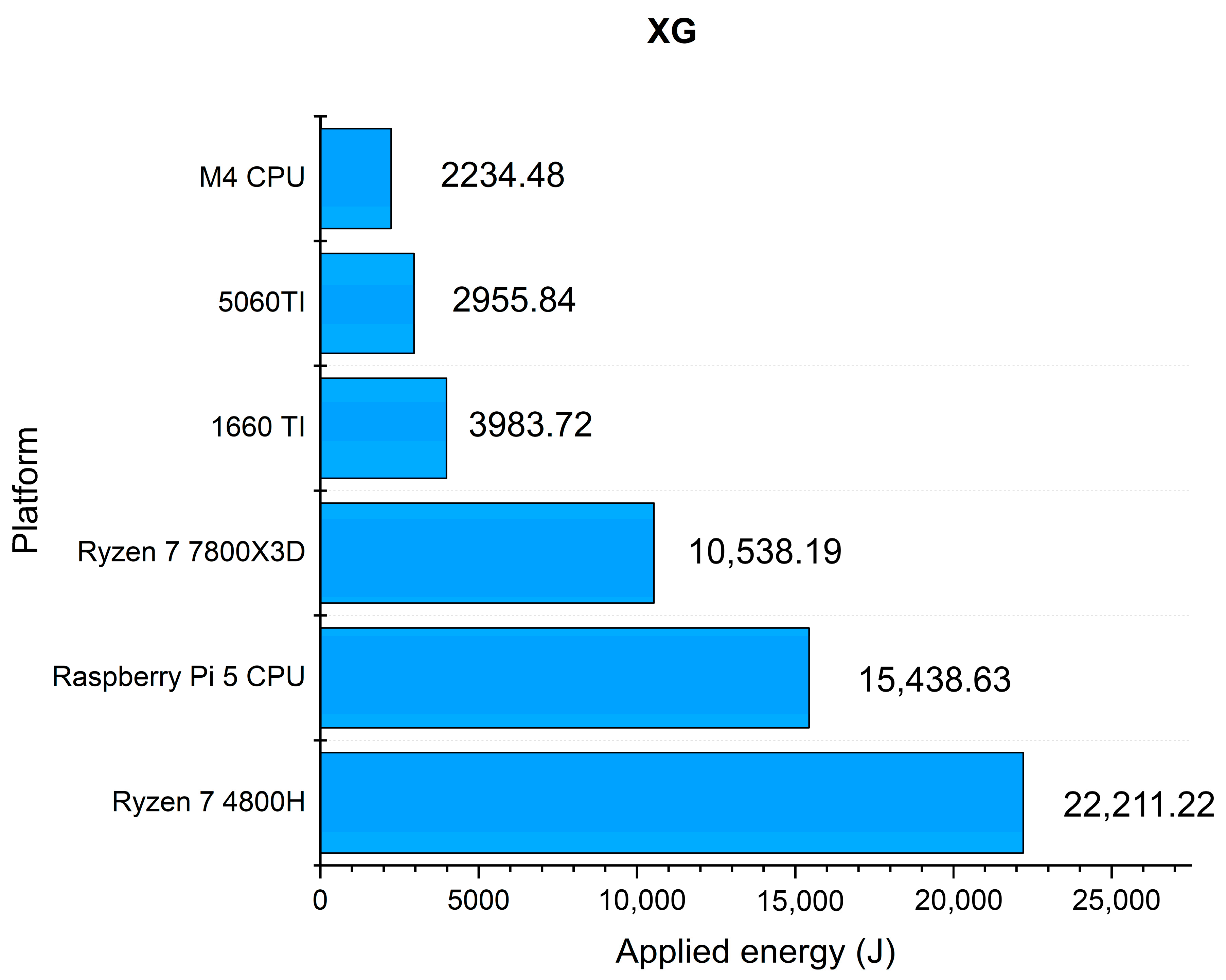

From the perspective of energy efficiency, the Apple M4 CPU was the most favorable measured platform, requiring only 2234.5 J, even lower than the RTX 5060 Ti (2955.8 J), which nonetheless offered the best overall trade-off between speed and energy. The GTX 1660 Ti consumed 3983.7 J, which reduced its relative efficiency despite still providing moderate training speed. The Ryzen 7 7800X3D and Ryzen 7 4800H consumed 10,538.2 J and 22,211.2 J, respectively, confirming that prolonged XGBoost training on higher-power general-purpose CPUs is less efficient than on optimized GPU or ARM-based platforms. The Raspberry Pi 5, despite its low power draw (10.80 W), accumulated 15,438.6 J because of its extremely long runtime. As in previous experiments, no power or energy measurements were available for the Colab platforms, which limits direct comparison on this axis.

Figure 5 reinforces these trends. The Apple M4 CPU and RTX 5060 Ti provided the most favorable balance between execution time and energy use, while the GTX 1660 Ti occupied an intermediate position. In contrast, the Ryzen processors and the Raspberry Pi 5 required substantially more accumulated energy, mainly because of their longer execution times rather than extremely high instantaneous power.

In terms of run-to-run variability, XGBoost exhibited stable behavior across all platforms. The RTX 5060 Ti, Apple M4 CPU, and Google Colab GPU T4 showed the lowest variability, with standard deviations below 0.3 s, indicating highly consistent execution. The GTX 1660 Ti showed only slightly higher dispersion (0.56 s), which remained negligible relative to its total runtime. Among the CPU-based systems, the Ryzen 7 4800H (11.06 s) and Ryzen 7 7800X3D (7.82 s) displayed moderate variability, likely associated with thermal effects or background process fluctuations during longer runs; relative to mean runtime, the 7800X3D was the least stable platform, at roughly 10% of its 76.09 s mean. The Raspberry Pi 5 (4.48 s) and Google Colab CPU (8.99 s) showed low variability relative to their total runtime, suggesting stable behavior even in constrained or virtualized environments. Overall, the small standard deviations relative to total runtime indicate that XGBoost training times are highly reproducible, with limited sensitivity to stochastic or hardware-level variation.
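Absolute standard deviations are hard to compare when mean runtimes span two orders of magnitude, so a relative measure can help. The following sketch computes the coefficient of variation (standard deviation divided by mean) from the figures quoted in this section; this metric is an illustrative addition and is not reported in the original tables.

```python
# (mean training time in s, standard deviation in s) for XGBoost, from the text.
xgboost_runs = {
    "GTX 1660 Ti": (39.68, 0.56),
    "Ryzen 7 7800X3D": (76.09, 7.82),
    "Ryzen 7 4800H": (347.28, 11.06),
    "Colab CPU": (753.83, 8.99),
    "Raspberry Pi 5": (1429.53, 4.48),
}


def coefficient_of_variation(mean_s, std_s):
    """Relative dispersion: standard deviation as a fraction of the mean."""
    return std_s / mean_s


cv = {name: coefficient_of_variation(m, s) for name, (m, s) in xgboost_runs.items()}
for name, value in sorted(cv.items(), key=lambda kv: kv[1]):
    print(f"{name:<16} {100 * value:5.2f} %")
```

Platforms whose standard deviation is only given as "below 0.3 s" are omitted because their exact values are not stated in the text.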

Regarding predictive performance, all platforms converged to essentially identical accuracy values in the range of 0.815–0.819. The slight difference between GPU-based platforms (0.819) and CPU-based platforms (0.815) is consistent with the use of different tree construction methods (gpu_hist versus auto), which may produce marginally different histogram binning. This result highlights the strong numerical stability and experimental reliability of XGBoost across heterogeneous hardware platforms. Unlike neural network training, where floating-point precision or backend-specific implementations may introduce slight differences in convergence, XGBoost produced virtually identical results across all tested platforms.
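The GPU/CPU split in tree construction can be expressed as a small configuration helper. This is a sketch only: the `tree_method` values come from the text, while the helper name, the `random_state` entry, and the idea of passing the dictionary to `xgboost.XGBClassifier(**params)` are assumptions, since the remaining hyperparameters are not given in this section.

```python
def xgboost_params(use_gpu, seed=0):
    """Hypothetical parameter dict; only `tree_method` reflects the text."""
    return {
        # "gpu_hist" on CUDA platforms, "auto" elsewhere, as described above.
        "tree_method": "gpu_hist" if use_gpu else "auto",
        # Assumption: a fixed seed so repeated runs are comparable.
        "random_state": seed,
    }


gpu_params = xgboost_params(use_gpu=True)
cpu_params = xgboost_params(use_gpu=False)
print(gpu_params)
print(cpu_params)
```

Note that XGBoost 2.0 and later deprecate `gpu_hist` in favor of `tree_method="hist"` combined with `device="cuda"`, so the exact spelling depends on the library version used.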

Overall, the runtime analysis revealed behavior markedly different from that observed in deep neural networks. XGBoost is much better optimized for CPU execution and scales efficiently even on non-specialized hardware. Although the RTX 5060 Ti remained the fastest platform, the differences with the Apple M4 CPU and Google Colab GPU T4 were relatively small compared with the gaps observed in CNN, Simple RNN, RNN-LSTM, or BiLSTM experiments. These results indicate that the hardware requirements of XGBoost are less dependent on specialized accelerators and more compatible with efficient CPU-based execution, making it a robust option for supervised learning tasks in environments without high-end GPU resources.

3.5. Results for BiLSTM

Table 18 summarizes the results obtained when training the BiLSTM model across the different hardware platforms. The reported metrics include training time, average power consumption, total energy use, accuracy, and loss.

The evaluation of the BiLSTM model highlights substantial differences in execution efficiency across the tested platforms. The RTX 5060 Ti established the performance baseline with the shortest training time (8.03 s, 1.0×), confirming its advantage for recurrent sequence modeling under GPU acceleration. The GTX 1660 Ti (14.20 s, 1.77× slower) and the Apple M4 GPU (13.91 s, 1.73× slower) achieved similar intermediate performance, while the Google Colab GPU T4 required 39.46 s (4.91× slower), remaining a practical cloud-based alternative for prototyping workloads.

Among CPU-based platforms, the Apple M4 CPU delivered the strongest result, completing training in 82.26 s (10.24× slower than the baseline). The Ryzen 7 7800X3D and Ryzen 7 4800H required 125.50 s and 207.91 s, corresponding to 15.63× and 25.89× slower execution, respectively. These results indicate that recurrent bidirectional sequence modeling can still be handled reasonably well on modern CPUs, although the performance gap relative to GPU-based platforms remains substantial.

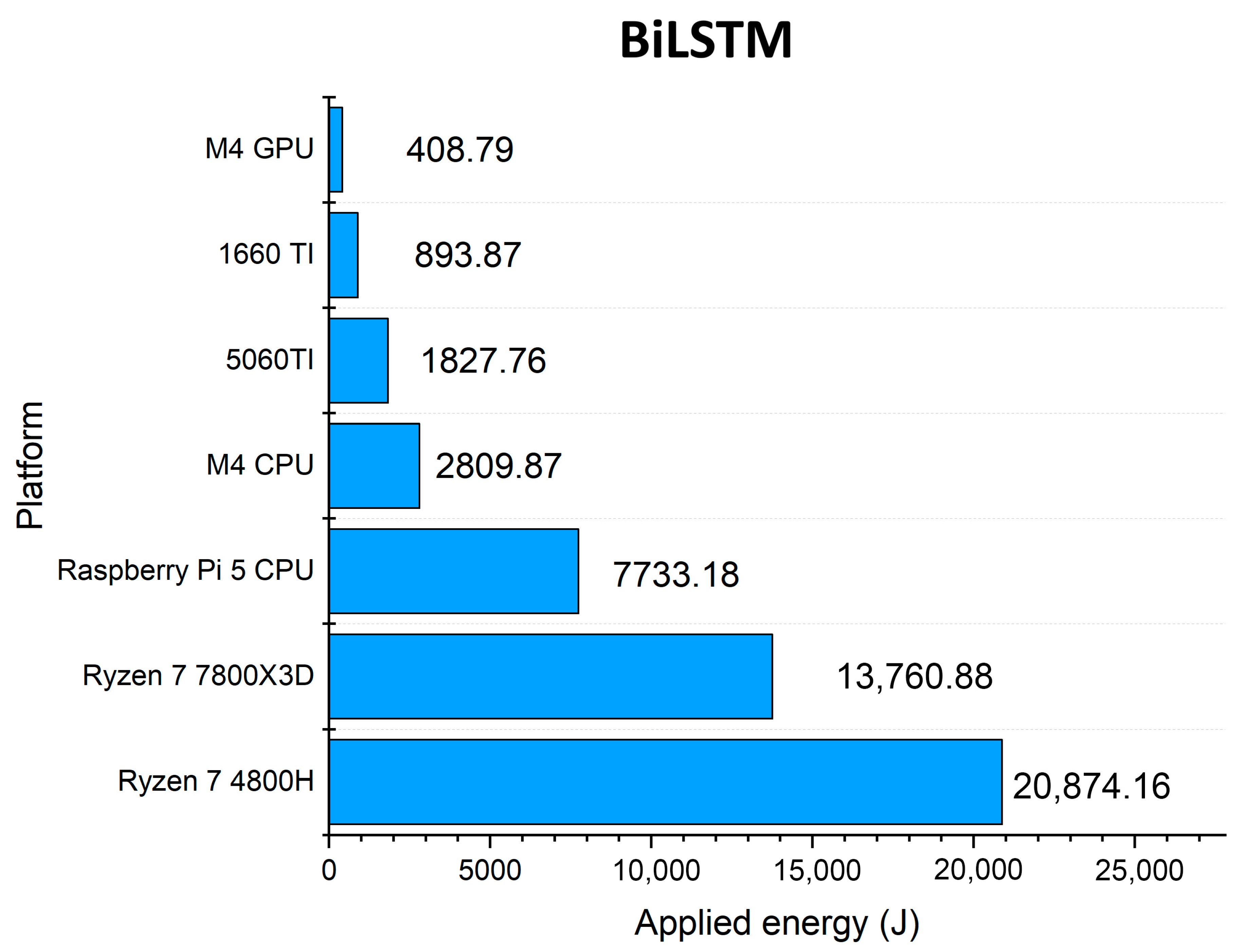

Figure 6 shows the energy consumption during BiLSTM training across the evaluated platforms.

At the lowest end of performance, the Google Colab CPU and the Raspberry Pi 5 required 469.48 s and 739.31 s, respectively, corresponding to 58.47× and 92.07× slower execution than the baseline. Such runtimes make these platforms unsuitable for efficient BiLSTM training beyond educational, low-cost, or baseline experimentation scenarios.

The energy-efficiency analysis confirmed these differences. The Apple M4 GPU was the most energy-efficient platform, requiring only 408.8 J, followed by the GTX 1660 Ti (893.9 J) and the RTX 5060 Ti (1827.8 J). Although the RTX 5060 Ti was the fastest platform, it consumed more total energy than the two mid-tier GPU alternatives. The Apple M4 CPU also showed competitive efficiency at 2809.9 J, substantially lower than the Ryzen 7 7800X3D (13,760.9 J) and Ryzen 7 4800H (20,874.2 J). The Raspberry Pi 5, despite its low power draw, accumulated 7733.2 J because of its long runtime.

Training times also revealed meaningful stability differences across platforms. The RTX 5060 Ti and GTX 1660 Ti exhibited the lowest variability, with standard deviations below 0.2 s, indicating highly consistent performance across repeated runs. The Apple M4 GPU showed moderate dispersion (0.93 s), whereas the Google Colab GPU T4 and the Ryzen 7 7800X3D displayed higher variability, with standard deviations of 10.94 s and 5.21 s, respectively. The Raspberry Pi 5 and Google Colab CPU also showed noticeable dispersion, which is expected given their lower performance and less predictable runtime conditions. Nevertheless, the observed variability did not alter the overall ranking among platforms, with one caveat: the 0.29 s gap between the Apple M4 GPU (13.91 s) and the GTX 1660 Ti (14.20 s) is smaller than the M4 GPU's standard deviation (0.93 s), so these two platforms are best regarded as roughly tied.

With respect to convergence, all platforms achieved accuracy values between approximately 0.22 and 0.33, and loss values between 1.52 and 1.80. The RTX 5060 Ti, GTX 1660 Ti, and the Ryzen CPUs reached the lowest loss values, all close to 1.52, with accuracy near 0.32. The Apple M4 GPU produced the least favorable convergence metrics, with a loss of 1.8010 and an accuracy of 0.2217, suggesting differences in training dynamics, potentially associated with backend implementation or numerical precision. Even so, the spread in convergence values remained relatively limited, indicating that hardware mainly affected speed and energy behavior rather than the overall ability of the model to learn.

Overall, BiLSTM training revealed a clear separation between dedicated GPU accelerators and general-purpose platforms. The RTX 5060 Ti defined the upper bound in speed, while the Apple M4 GPU emerged as the most energy-efficient alternative. CPU-only configurations were strongly penalized in execution time, particularly in low-power or shared-resource environments. These results are consistent with the broader trends observed in the other experiments: hardware acceleration and backend maturity remain decisive factors for efficient supervised learning, while model convergence remains comparatively stable across platforms.
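The speed versus energy trade-off described here can be checked by finding the platforms that no other platform beats on both training time and energy, that is, the Pareto front. The sketch below uses the BiLSTM values reported in this section; the Colab platforms are omitted because no energy values are given for them, and the function name is illustrative.

```python
# (training time in s, energy in J) for BiLSTM, from the text.
bilstm = {
    "RTX 5060 Ti": (8.03, 1827.8),
    "GTX 1660 Ti": (14.20, 893.9),
    "Apple M4 GPU": (13.91, 408.8),
    "Apple M4 CPU": (82.26, 2809.9),
    "Ryzen 7 7800X3D": (125.50, 13760.9),
    "Ryzen 7 4800H": (207.91, 20874.2),
    "Raspberry Pi 5": (739.31, 7733.2),
}


def pareto_front(points):
    """Names of platforms not dominated in both time and energy (lower is better)."""
    front = []
    for name, (t, e) in points.items():
        dominated = any(
            t2 <= t and e2 <= e and (t2 < t or e2 < e)
            for other, (t2, e2) in points.items()
            if other != name
        )
        if not dominated:
            front.append(name)
    return front


front = pareto_front(bilstm)
print(front)
```

With these inputs, only the RTX 5060 Ti (fastest) and the Apple M4 GPU (lowest energy) remain on the front, which matches the summary above.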

3.6. Cross-Platform Performance

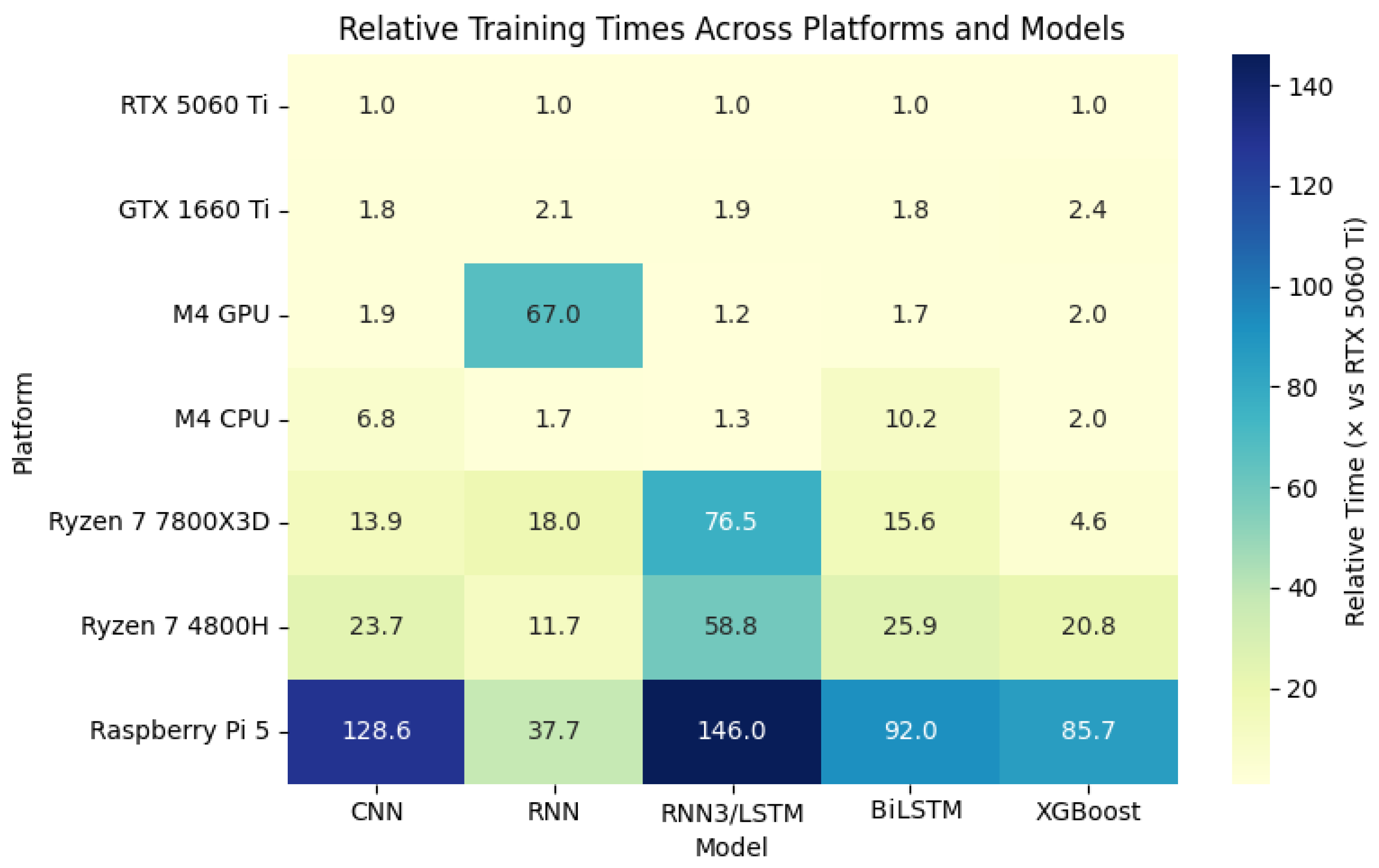

Figure 7 summarizes the relative training times of the evaluated models across all hardware platforms, normalized to the RTX 5060 Ti (1.0×). The X-axis represents model families, while the Y-axis lists the hardware platforms. Each cell indicates the relative training time with respect to the RTX 5060 Ti for that model. The color scale maps lighter tones to faster results and darker tones to slower executions.
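The layout of such a heatmap, with platforms as rows, models as columns, and each cell normalized to the RTX 5060 Ti, can be sketched as follows. Only the two models whose times appear in this section are included, and platform and model combinations that were not run are kept as missing cells; the data structure and function name are assumptions made for illustration.

```python
BASELINE = "RTX 5060 Ti"

# Mean training times in seconds for the two models covered in this section.
times_s = {
    "XGBoost": {
        "RTX 5060 Ti": 16.66, "GTX 1660 Ti": 39.68, "Apple M4 CPU": 32.86,
        "Colab GPU T4": 31.30, "Ryzen 7 7800X3D": 76.09, "Ryzen 7 4800H": 347.28,
        "Colab CPU": 753.83, "Raspberry Pi 5": 1429.53,
    },
    "BiLSTM": {
        "RTX 5060 Ti": 8.03, "GTX 1660 Ti": 14.20, "Apple M4 GPU": 13.91,
        "Colab GPU T4": 39.46, "Apple M4 CPU": 82.26, "Ryzen 7 7800X3D": 125.50,
        "Ryzen 7 4800H": 207.91, "Colab CPU": 469.48, "Raspberry Pi 5": 739.31,
    },
}


def relative_time_matrix(times, baseline):
    """Rows are platforms, columns are models; None marks a run that was not performed."""
    platforms = sorted({p for per_model in times.values() for p in per_model})
    matrix = {}
    for platform in platforms:
        row = {}
        for model, per_model in times.items():
            t = per_model.get(platform)
            row[model] = None if t is None else t / per_model[baseline]
        matrix[platform] = row
    return matrix


matrix = relative_time_matrix(times_s, BASELINE)
for platform, row in matrix.items():
    cells = ["   n/a" if v is None else f"{v:6.2f}" for v in row.values()]
    print(f"{platform:<16}", *cells)
```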

This global representation confirms the trends observed in the detailed results: CUDA-enabled GPUs remain dominant in speed, with the RTX 5060 Ti establishing the reference baseline and the GTX 1660 Ti acting as a consistent mid-range performer. Apple Silicon exhibits competitive performance in RNN-LSTM, BiLSTM, and XGBoost, where the M4 CPU or M4 GPU approach the execution times of the discrete CUDA GPUs in some workloads. In contrast, x86 CPUs present a clear penalty in both time and energy, while the Raspberry Pi 5 serves mainly as an educational or low-cost baseline reference. Google Colab, in turn, should be interpreted as a practical cloud-based reference environment rather than as a strictly equivalent counterpart to dedicated local hardware, since its observed behavior may be influenced by shared-resource allocation, virtualization overhead, and provider-side scheduling. Overall, the heatmap reinforces that hardware performance is highly model-dependent.

3.7. Batch Size Sensitivity Analysis

Additional experiments were performed by systematically varying the batch size across all platforms. Separate implementations were used for CPU and GPU to ensure reasonable training times while maintaining comparable workloads. All experiments used the same datasets as the original benchmarks presented earlier, and total training time was recorded for each configuration under consistent experimental conditions. To keep the analysis tractable while still capturing representative behaviors, only CNN and LSTM models were considered for batch size evaluation. These models represent two fundamentally different computational patterns: highly parallel workloads (CNN) and sequential, dependency-bound workloads (LSTM). As such, they provide meaningful insight into how batch size affects both compute-bound and sequential models across architectures. The resulting figures reveal the dependence of performance on batch size for different hardware platforms and model types.
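A batch size sweep of this kind can be organized as a small timing harness. The sketch below is an assumption about how such a sweep might be structured, not the benchmark's actual code: `train_fn` is a hypothetical callable that runs one full training for a given batch size, and the number of repeats is arbitrary.

```python
import time


def sweep_batch_sizes(train_fn, batch_sizes, repeats=3):
    """Return the mean wall-clock training time in seconds for each batch size."""
    results = {}
    for batch_size in batch_sizes:
        durations = []
        for _ in range(repeats):
            start = time.perf_counter()
            train_fn(batch_size)  # hypothetical: one complete training run
            durations.append(time.perf_counter() - start)
        results[batch_size] = sum(durations) / len(durations)
    return results


def best_batch_size(results):
    """Batch size with the shortest mean training time."""
    return min(results, key=results.get)


def dummy_train(batch_size):
    """Stand-in workload so the sketch runs without any ML framework."""
    total = 0
    for _ in range(200_000 // batch_size):
        total += 1
    return total


timings = sweep_batch_sizes(dummy_train, [32, 128, 512, 2048])
print(timings)
print("fastest batch size:", best_batch_size(timings))
```

In a real sweep, `dummy_train` would be replaced by the CNN or LSTM training routine, with the same dataset and number of epochs held fixed across batch sizes.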

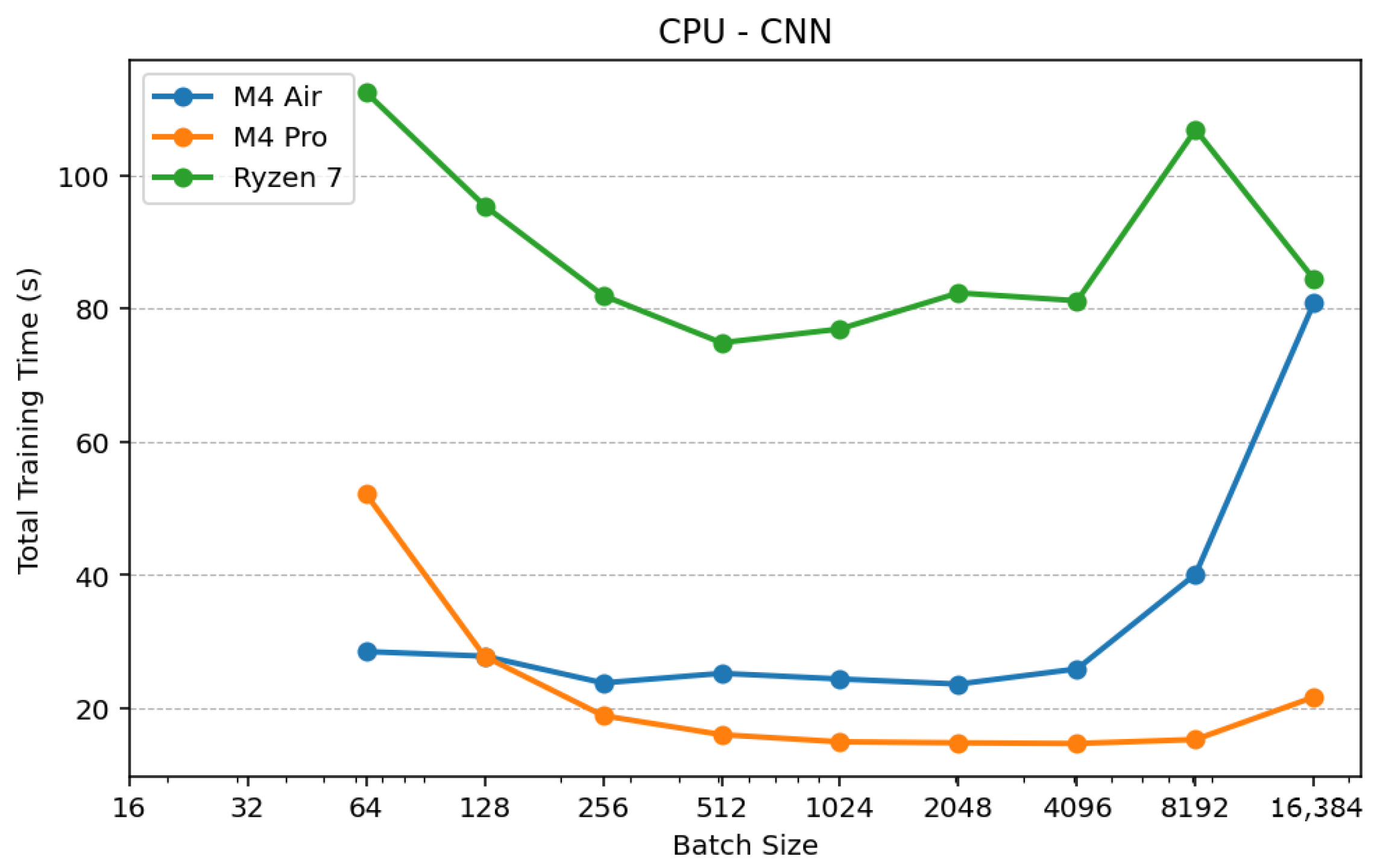

Figure 8 shows that, for the CNN model, increasing batch size reduces training time across CPU platforms due to lower overhead per update. The Apple M4 Pro reaches optimal performance at batch 4096, after which performance degrades, likely due to memory and cache limitations. The M4 Air follows a similar trend with earlier degradation, while the Ryzen 7 exhibits less stable behavior, indicating sensitivity to memory hierarchy. Overall, CPU performance improves with batch size up to a hardware-dependent optimum.
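The "lower overhead per update" effect can be illustrated with a toy cost model in which every parameter update pays a fixed overhead on top of a per-sample compute cost. All constants below are hypothetical, and the model deliberately omits the memory and cache effects that cause the degradation at very large batch sizes.

```python
import math


def epoch_time_s(n_samples, batch_size, fixed_overhead_s, per_sample_s):
    """Toy model: fixed cost per update plus compute cost proportional to samples."""
    n_updates = math.ceil(n_samples / batch_size)
    return n_updates * fixed_overhead_s + n_samples * per_sample_s


# Hypothetical values chosen only to show the trend.
N_SAMPLES = 50_000
FIXED_OVERHEAD_S = 1e-3
PER_SAMPLE_S = 2e-5

batch_sizes = [32, 128, 512, 2048, 4096]
toy_times = [
    epoch_time_s(N_SAMPLES, b, FIXED_OVERHEAD_S, PER_SAMPLE_S) for b in batch_sizes
]
for b, t in zip(batch_sizes, toy_times):
    print(f"batch {b:>5}: {t:.3f} s per epoch")
```

In this model the per-update overhead shrinks toward zero as the batch grows, so the curve flattens rather than turning upward; reproducing the measured optimum would require an additional term for memory pressure.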

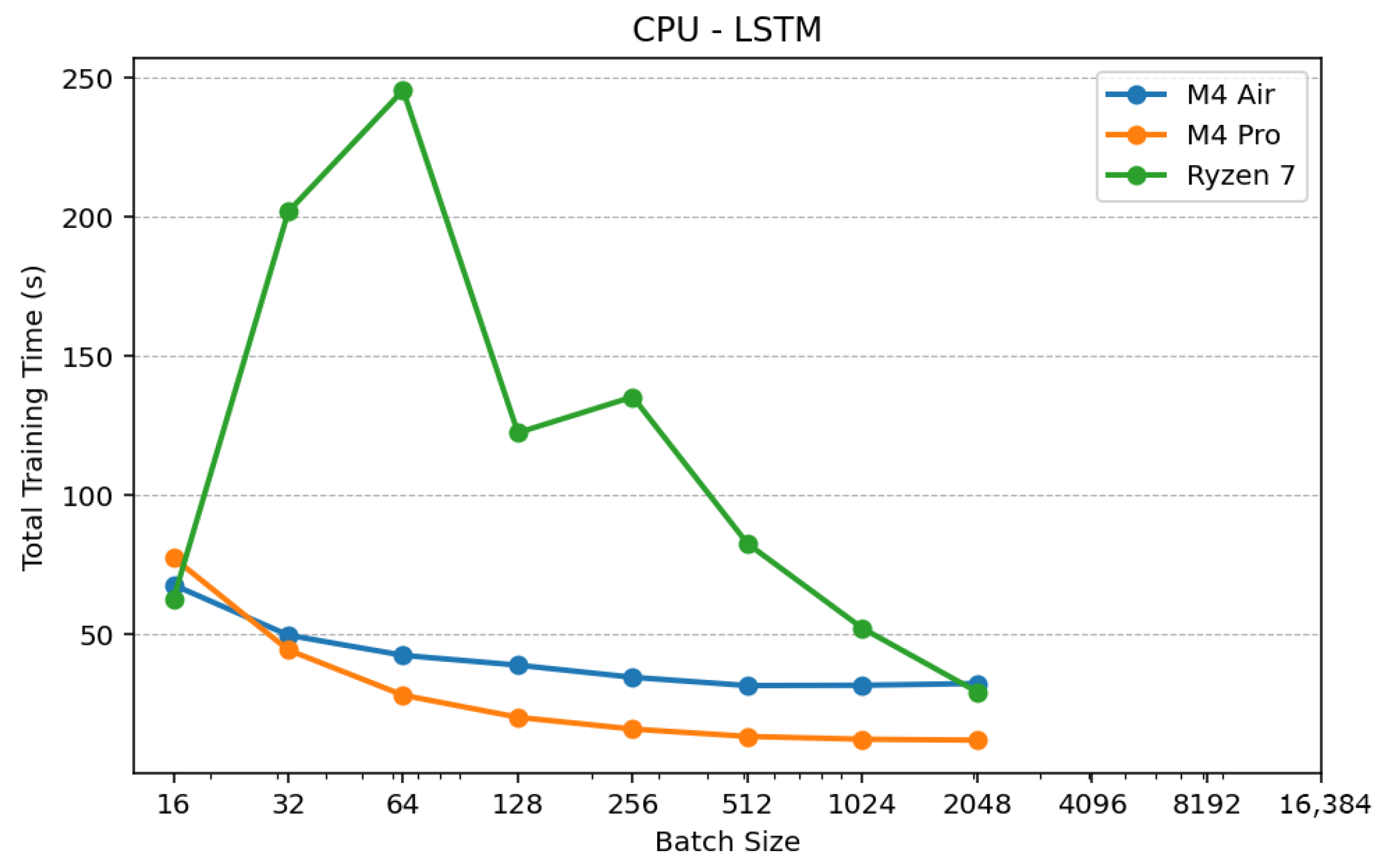

Figure 9 shows that LSTM performance on CPUs improves gradually with batch size, reflecting the sequential nature of the model. The M4 Pro achieves the best performance at batch 2048 with stable behavior, while the M4 Air follows a similar trend with higher latency. The Ryzen 7 shows variability at small batch sizes, indicating inefficiencies in sequential workload handling. Overall, LSTM models benefit from moderate batch increases with limited scalability compared to CNNs.

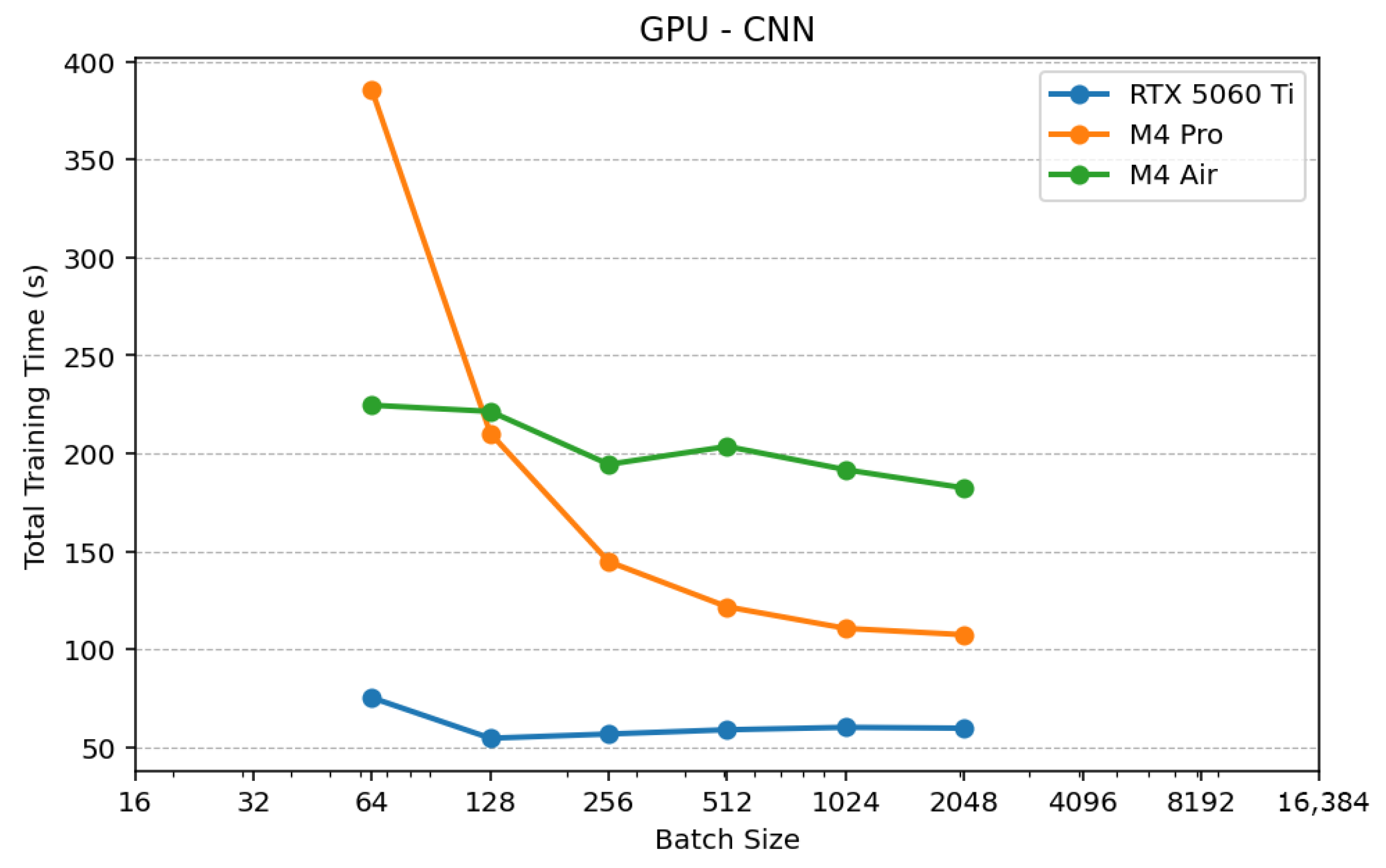

Figure 10 shows that CNN performance on GPUs improves rapidly as batch size increases, followed by a clear saturation point. The RTX 5060 Ti reaches near-optimal performance at batch 128, while Apple GPUs require larger batch sizes (1024–2048) to reach similar regimes. Beyond this point, performance remains stable, indicating full utilization of parallel resources.

Figure 11 shows that LSTM performance on GPUs improves more gradually and stabilizes around batch sizes of 512–1024. Differences between platforms are smaller than in CNN workloads, indicating limited ability of recurrent models to exploit GPU parallelism due to their sequential nature.

The results demonstrate that the impact of batch size depends strongly on both the hardware and the model architecture. GPUs achieve significant gains for CNN workloads up to a saturation point, while CPUs benefit from larger batch sizes but degrade when memory limits are reached. For LSTM models, the effect is less pronounced due to their sequential nature. The experiments show that optimal batch size is not universal and varies across platforms and workloads. Therefore, using a single batch configuration may lead to suboptimal hardware utilization and biased comparisons. This highlights that, while the controlled benchmarking setup ensures fair and reproducible comparison, hardware-aware tuning can provide additional insight into the practical performance limits of each platform.

The optimal batch size for CPU platforms varies significantly across architectures, as shown in Table 19. The Apple M4 Pro consistently achieves the best performance, requiring large batch sizes (2048–4096) to maximize efficiency. This behavior is enabled by its unified memory architecture and high memory bandwidth, which allow efficient handling of large data blocks and reduced data movement overhead. The M4 Air follows a similar trend with slightly smaller optimal batches, likely due to thermal constraints and reduced sustained performance. In contrast, the Ryzen 7 reaches its best performance at much lower batch sizes, indicating earlier saturation. This behavior can be attributed to its cache hierarchy and memory access patterns, where larger batch sizes increase pressure on caches and memory bandwidth, leading to reduced efficiency. Overall, these results confirm that CPUs benefit from increasing batch size to amortize computational overhead, but the optimal configuration is strongly hardware-dependent and influenced by memory architecture.

For GPU platforms, optimal batch sizes are generally smaller than for CPUs and depend strongly on the underlying architecture, as shown in