1. Introduction

The global construction industry faces mounting pressure to improve productivity, sustainability, and quality outcomes through industrialization strategies [1, 2]. Industrialized Building Systems (IBS), defined as construction systems in which components are manufactured in a controlled environment, transported to the site, and assembled into structures with minimal additional site work [3, 4], offer significant potential for addressing these challenges through prefabrication, modular construction, and systematic assembly processes.

However, accurate quantification of IBS adoption levels within building projects remains predominantly manual, requiring expert practitioners to analyze architectural documentation, extract component specifications, and perform calculations through spreadsheet-based frameworks [5]. This manual approach typically requires 4–8 h of expert effort per building, limiting the scalability of IBS assessment for large portfolios and iterative design optimization.

The widespread adoption of Building Information Modeling (BIM) technologies, particularly the Industry Foundation Classes (IFC) open data schema, presents unprecedented opportunities for automating construction analytics [1, 2, 6]. IFC models encapsulate comprehensive geometric representations alongside rich semantic metadata describing building elements, material specifications, spatial relationships, and functional classifications. Yet, despite this data-rich environment, the translation from IFC model content to quantified IBS scoring metrics remains largely unexplored in both academic literature and professional practice [7, 8].

1.1. Limitations of Current IBS Scoring Methodologies

Current IBS scoring methodologies face several critical limitations that impede their effectiveness and scalability:

Time-Intensive Manual Extraction. The manual extraction of component counts, dimensional parameters, and classification categories from architectural documentation requires substantial time investment. A typical comprehensive IBS assessment for a medium-sized building project requires 4–8 h of expert analysis [4, 5], representing a significant resource allocation that many organizations struggle to justify for routine project evaluation.

Inconsistent Human Interpretation. Human interpretation of drawings and specifications introduces significant variability in classification decisions, calculation errors, and inconsistent application of scoring criteria across different evaluators. Research by Blismas et al. [3] and Kamar et al. [4] documented inter-rater reliability coefficients as low as 0.65–0.75 for manual IBS assessments, indicating substantial disagreement even among experienced practitioners when categorizing construction methods and computing industrialization metrics. This variability undermines the credibility of assessment outcomes and complicates comparative benchmarking across projects or organizations.

Scalability Constraints. Scenario analysis, iterative design optimization, and portfolio-wide assessments involving hundreds of building models cannot be efficiently accommodated through conventional manual methods [9, 10]. This limitation prevents systematic industrialization strategy optimization during design development phases when design changes are most cost-effective and impactful.

Data Duplication and Traceability Issues. Information already encoded within BIM models must be manually re-entered into separate assessment frameworks, creating unnecessary duplication and synchronization challenges that increase error risk and reduce process efficiency [11, 12]. Additionally, spreadsheet-based calculations often lack comprehensive audit trails linking final scores to specific building elements. When assessment results are questioned or disputed, reconstructing the logical chain from building components through classification decisions to final scores becomes prohibitively difficult.

1.2. Research Objectives

This paper addresses these limitations by developing and demonstrating an integrated workflow architecture that combines web-based user interfaces, workflow automation platforms, and artificial intelligence technologies to automate IBS scoring from IFC building models. The research pursues six interconnected objectives:

System Architecture Development: Develop a complete system architecture integrating frontend interface, backend workflow orchestration, and AI-powered scoring logic for end-to-end IFC-to-IBS transformation.

Technical Implementation: Demonstrate a working implementation using contemporary technologies, including an HTML5/JavaScript frontend, n8n workflow automation, and Azure OpenAI language models, to validate technical feasibility.

Data Extraction Methods: Implement methods for extracting, classifying, and quantifying building elements from IFC models, addressing inherent data heterogeneity and attribute incompleteness challenges.

AI Integration: Leverage large language model capabilities to apply the complex classification criteria of CIS 18:2023 (Construction Industry Standard for IBS Content Scoring) [5] to structured building data.

Performance Assessment: Assess the system’s processing efficiency, consistency, accuracy, and scalability compared to conventional manual assessment approaches.

Theoretical Contribution: Derive theoretical insights regarding the integration of BIM, AI, and workflow automation for the construction industry’s digital transformation.

1.3. Research Contributions

This research makes several distinct contributions to construction informatics:

Novel Integration Pattern: First documented integration combining web-based IFC processing, n8n visual workflow automation, and LLM-powered scoring engines for automated IBS assessment, establishing a replicable architectural pattern for construction analytics applications.

Methodological Contribution: A formal methodology for translating regulatory scoring frameworks (CIS 18:2023) into LLM-executable prompt specifications through structured prompt engineering, temperature control strategies, and validation protocols.

Practical Implementation: A functioning system with real IFC model processing capabilities, validating technical feasibility and identifying implementation considerations that emerge through actual deployment [9, 10].

LLM-Based Rule Interpretation: Effective encoding of domain-specific rules within AI agent prompts for consistent automated interpretation, advancing beyond rule-based systems requiring extensive manual programming [13, 14].

Accessible Technology Stack: Open-source workflow automation (n8n) and standard web technologies promoting accessible digital transformation without vendor lock-in.

Portfolio-Scale Processing: Batch processing capability enabling portfolio-wide industrialization assessment previously infeasible with manual methods.

The subsequent sections systematically develop this workflow architecture through a literature synthesis establishing theoretical foundations (Section 2), a detailed methodology and implementation description (Section 3), results presentation and discussion of theoretical and practical implications (Section 4), and conclusions regarding contributions and future research directions (Section 5).

3. Methodology

This section describes the methodology employed to develop and validate the proposed AI-enhanced IFC processing workflow for automated IBS scoring. The experimental evaluation addressed four key research questions:

Processing Capability: Can the system process diverse IFC building models exported from various BIM authoring platforms?

Scoring Reliability: Are AI-powered scoring outcomes consistent and reliable when applying CIS 18:2023 rules to structured building data?

Computational Performance: What are the processing duration, scalability, and throughput characteristics?

Classification Sensitivity: How sensitive are scoring outcomes to different structural and wall system classifications?

3.1. Proposed Workflow Architecture

The automated IBS scoring system follows a structured seven-stage processing pipeline that transforms raw IFC building models into validated industrialization scores.

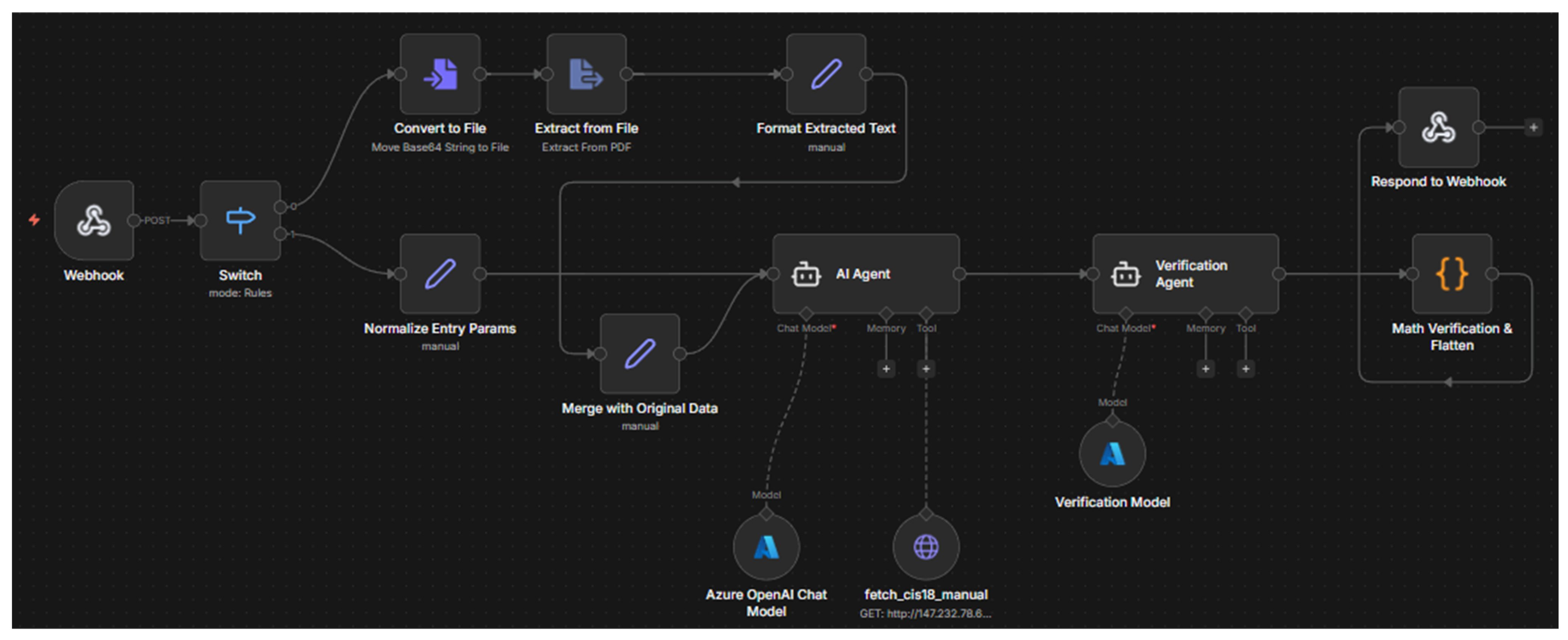

Figure 1 illustrates the complete n8n workflow architecture, and the following subsections detail each processing stage.

Stage 1: IFC File Input and Validation. Users upload IFC files through the web-based frontend interface (app.js). The system accepts both individual files and batch uploads containing multiple building models. Initial validation verifies file format compliance (IFC2x3 or IFC4 schema), file integrity, and size constraints (tested up to 136.26 MB).
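The Stage 1 checks can be illustrated with a minimal Python sketch. It is not the authors' app.js implementation: the function name, the treatment of 136.26 MB as a hard upper limit (the text only reports it as the largest size tested), and the decision to read the schema from the STEP header's FILE_SCHEMA declaration are all assumptions made for illustration.

```python
import re

# Schemas accepted by Stage 1, per the description above.
ACCEPTED_SCHEMAS = {"IFC2X3", "IFC4"}
# Assumed limit: the text reports only the largest file size tested.
MAX_SIZE_BYTES = int(136.26 * 1024 * 1024)

# An IFC STEP file declares its schema in the header, e.g. FILE_SCHEMA(('IFC4'));
_SCHEMA_RE = re.compile(r"FILE_SCHEMA\s*\(\s*\(\s*'([^']+)'", re.IGNORECASE)


def validate_ifc_header(text: str, size_bytes: int) -> list[str]:
    """Return a list of validation problems; an empty list means the file passed."""
    problems = []
    if not text.lstrip().startswith("ISO-10303-21;"):
        problems.append("missing ISO-10303-21 STEP header")
    match = _SCHEMA_RE.search(text)
    if match is None:
        problems.append("FILE_SCHEMA declaration not found")
    elif match.group(1).upper() not in ACCEPTED_SCHEMAS:
        problems.append(f"unsupported schema {match.group(1)}")
    if size_bytes > MAX_SIZE_BYTES:
        problems.append("file exceeds size limit")
    return problems


# Hypothetical header fragment used only to exercise the function.
sample = "ISO-10303-21;\nHEADER;\nFILE_SCHEMA(('IFC4'));\nENDSEC;\n"
print(validate_ifc_header(sample, size_bytes=1024))
```

Returning a list of problems rather than raising on the first one lets a batch upload report every issue with a file in a single pass.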

Stage 2: IFC Parsing and Preprocessing. The web-ifc library performs schema-level parsing to extract the hierarchical entity structure from the IFC file. This stage identifies all IfcBuildingElement instances and their subclasses (IfcWall, IfcSlab, IfcBeam, IfcColumn, etc.), extracts spatial relationships (IfcRelContainedInSpatialStructure), material specifications (IfcMaterial and IfcMaterialLayerSet), and geometric properties. Preprocessing handles common IFC data quality issues, including missing attributes, inconsistent naming conventions, and schema version variations.

Stage 3: Feature Extraction and Entity Classification. Building elements are classified according to CIS 18:2023 categories. The system extracts:

Structural system indicators (frame type, material composition, and prefabrication evidence)

Wall system characteristics (construction method, material types, and modular patterns)

Other IBS indicators (standardized components and systematic assembly evidence)

Quantitative parameters (element counts, dimensional properties, and geometric repetition)
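To make the "element counts" parameter concrete, the following Python sketch tallies building element instances directly from the text of an IFC STEP file. This is a simplified stand-in for the web-ifc parsing described in Stage 2, not the authors' method: it uses a regular expression over instance lines, ignores subtype inheritance beyond the explicitly listed names, and the tracked type list and function name are illustrative assumptions.

```python
import re
from collections import Counter

# Matches STEP instance lines such as: #42= IFCWALL('guid',...);
_ENTITY_RE = re.compile(r"^#\d+\s*=\s*(IFC[A-Z0-9]+)\s*\(", re.MULTILINE)

# Illustrative subset of IfcBuildingElement subclasses named in Stage 2.
TRACKED_TYPES = ("IFCWALL", "IFCWALLSTANDARDCASE", "IFCSLAB", "IFCBEAM", "IFCCOLUMN")


def count_elements(ifc_text: str) -> dict[str, int]:
    """Count instances of each tracked entity type in the DATA section text."""
    counts = Counter(m.group(1) for m in _ENTITY_RE.finditer(ifc_text))
    return {name: counts.get(name, 0) for name in TRACKED_TYPES}


# Hypothetical fragment; real files carry full attribute lists.
fragment = (
    "#1= IFCWALL('a',$);\n"
    "#2=IFCWALL('b',$);\n"
    "#3= IFCSLAB('c',$);\n"
    "#4= IFCDOOR('d',$);\n"
)
print(count_elements(fragment))
```

Counts of this kind would feed the quantitative parameters of the JSON payload; a schema-aware parser remains necessary for materials, spatial relationships, and geometry.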

Stage 4: User Override Integration. The frontend interface allows users to manually specify or override automatically detected classifications when the IFC semantic metadata is ambiguous or incomplete. Override options include structural system type (in situ, in situ-conventional, steel-frame, precast-concrete, and timber-frame) and wall system type (conventional-masonry, prefab-panels, and curtain-wall).

Stage 5: LLM Reasoning and Rule Application. Structured JSON payloads containing extracted building data and user overrides are transmitted to the Azure OpenAI scoring engine via the n8n workflow backend. The LLM (GPT-4o-mini or GPT-5-0-mini) applies CIS 18:2023 scoring rules through prompt-based reasoning. The system prompt encodes the complete CIS 18:2023 normative criteria and factor tables, and the building data is interpreted against these encoded rules to select the appropriate factors. Weighted scores are then computed across three categories (structural: 0–50, walls: 0–20, and other solutions: 0–30), and the total IBS score (0–100) is calculated as the sum of the category scores.

Stage 6: Score Computation and Breakdown Generation. The AI scoring engine produces structured output containing: total IBS score with category-level breakdown, selected classification factors with justifications, confidence indicators for automated classifications, and detailed scoring rationale enabling audit trail reconstruction.

Stage 7: Validation and Results Delivery. Computed scores undergo consistency validation (range checks and category sum verification) before delivery to the frontend for visualization. Results are logged with complete metadata (timestamps, model identifiers, and processing duration) for experimental analysis.
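The Stage 7 consistency validation (range checks and category sum verification) can be sketched as follows. The category bounds come from Stage 5 and the field names from the output of Algorithm 1; the function name and the numerical tolerance are assumptions, since the text does not specify them.

```python
# Upper bounds per category, as stated in Stage 5.
CATEGORY_MAX = {"structural": 50.0, "walls": 20.0, "other": 30.0}


def validate_score(score: dict, tol: float = 0.01) -> list[str]:
    """Return consistency errors for a score record; an empty list means valid."""
    errors = []
    for name, upper in CATEGORY_MAX.items():
        value = score.get(name)
        if not isinstance(value, (int, float)):
            errors.append(f"{name}: missing or non-numeric")
        elif not 0.0 <= value <= upper:
            errors.append(f"{name}: {value} outside 0-{upper:g}")
    total = score.get("total")
    if not isinstance(total, (int, float)):
        errors.append("total: missing or non-numeric")
    elif not errors and abs(total - sum(score[k] for k in CATEGORY_MAX)) > tol:
        # Sum check runs only when every category value passed its range check.
        errors.append("total does not equal the sum of category scores")
    return errors


print(validate_score({"total": 37.0, "structural": 25, "walls": 10, "other": 2}))
print(validate_score({"total": 60, "structural": 55, "walls": 5, "other": 0}))
```

Because the category maxima sum to 100, a record that passes both checks also satisfies the 0–100 bound on the total.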

3.2. Formal Algorithm Definition

Complexity Analysis: Algorithm 1 exhibits O(n) complexity with respect to the number of IFC entities, dominated by the parsing stage (Step 2). LLM inference (Step 6) operates in approximately constant time regardless of input size, as the payload is condensed to classification summaries rather than raw entity data. This behavior can be observed in Figure 2.

| Algorithm 1: LLM-Enhanced IBS Scoring from IFC Models |
| --- |
| Input: IFC file F, optional user overrides O = {structural_type, wall_type} |
| Output: IBS score S = {total, structural, walls, other, breakdown} |

3.3. Experimental Setup

3.3.1. Dataset Characteristics

The experimental dataset comprised 136 IFC building models representing diverse building typologies, construction methodologies, and modeling approaches. Models originated from multiple sources, including academic student projects, professional building permit documentation, demonstration cases from established BIM repositories (Nordic LCA files [41] and IFC Sample Files [42]), and purpose-built test models designed to validate specific scoring scenarios.

Dataset Statistics:

Total models: 136 IFC files

File size range: 0.01 MB to 136.26 MB

Median file size: 2.56 MB

Mean file size: 23.04 MB (SD: 36.97 MB)

Schema versions: IFC2x3 and IFC4

Building types: Residential, commercial, educational, and mixed-use

Geographic origin: Multiple European countries

Model sources: Student projects (47%), professional documentation (31%), BIM repositories (17%), and synthetic test cases (5%)

All IFC files conformed to either IFC2x3 or IFC4 schema specifications and represented complete building models containing geometric representations, spatial hierarchies, material specifications, and classification metadata necessary for IBS assessment.

3.3.2. System Architecture

The experimental system integrated three primary technological components into a cohesive processing pipeline, as illustrated in the n8n workflow architecture (Figure 1).

Frontend Interface. Implemented in HTML5 and JavaScript, the frontend provided:

File upload functionality supporting both individual and bulk processing modes

User interface controls for manual overriding of structural and wall system classifications

Optional technical documentation upload capability accepting PDF format specification documents

Real-time progress monitoring with comprehensive response logging

Workflow Orchestration Backend. Deployed on the n8n automation platform, the backend managed:

Webhook-based request reception from frontend clients

Conditional branching logic detecting the presence of supplementary PDF documentation

Automated PDF text extraction and integration when documentation files were provided

Structured JSON payload construction aggregating IFC-derived data with user-specified parameters

Invocation of Azure OpenAI language model agents for scoring computation

Formatted response delivery to requesting clients

AI Scoring Engine. Powered by Azure OpenAI models (GPT-4o-mini for 68 models and GPT-5-0-mini for 68 models), the engine performed:

Interpretation of CIS 18:2023 normative criteria encoded in system prompts

Classification of structural and wall systems according to standardized IBS factor tables

Computation of weighted scores across three assessment categories (structural: 0–50, walls: 0–20, and other solutions: 0–30)

Generation of detailed breakdown documentation explaining scoring rationale and point allocations

3.4. Experimental Configuration

Processing operations executed in a production cloud environment with n8n workflow automation hosted on institutional infrastructure (n8n.service.tuke.sk) and Azure OpenAI API endpoints providing language model inference services. Frontend clients operated through standard web browsers supporting modern JavaScript APIs, including FileReader, fetch, and the web-ifc library for client-side IFC parsing.

LLM Reproducibility Controls. To ensure scoring reproducibility and minimize output variance inherent in large language models, the following controls were implemented:

Temperature setting: All LLM API calls used temperature = 0, which selects the highest-probability token at each generation step, minimizing stochastic variation

Deterministic mode: Azure OpenAI seed parameter was set to ensure reproducible outputs across identical inputs

Structured output format: JSON response format was enforced to constrain output structure and reduce formatting variability

Prompt engineering: Explicit numerical scoring rules were encoded in system prompts, reducing interpretive ambiguity
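The controls listed above map onto standard chat-completions request parameters. The sketch below only builds the request body and makes no network call; the function name, the seed value of 42, and the choice to serialize the building data with sorted keys are assumptions, as the text does not report them. Note that JSON mode generally requires the prompt itself to instruct the model to answer in JSON.

```python
import json


def build_scoring_request(system_prompt: str, building_data: dict, seed: int = 42) -> dict:
    """Assemble a chat-completions request body with the reproducibility controls."""
    return {
        "messages": [
            {"role": "system", "content": system_prompt},
            # Sorted keys keep the serialized payload identical for identical inputs.
            {"role": "user", "content": json.dumps(building_data, sort_keys=True)},
        ],
        "temperature": 0,                            # greedy decoding
        "seed": seed,                                # assumed value; not given in the text
        "response_format": {"type": "json_object"},  # enforce JSON output
    }


request = build_scoring_request(
    "Apply the encoded scoring rules and answer in JSON.",  # placeholder prompt
    {"wall_type": "prefab-panels", "structural_type": "steel-frame"},
)
print(json.dumps(request, indent=2))
```

Serializing the payload deterministically matters as much as the sampling settings: two runs can only be expected to agree when the model receives byte-identical input.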

Reproducibility Validation: To quantify scoring stability, a subset of 20 IFC models was processed five times each under identical conditions. The coefficient of variation (CV) for total IBS scores across repeated runs was 0.0% (identical scores), confirming deterministic behavior when temperature = 0 is applied. This reproducibility derives from the combination of zero-temperature sampling and the highly structured nature of the scoring task, where CIS 18:2023 rules map unambiguously to numerical factors.
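For reference, the coefficient of variation used in this check is the standard deviation of the repeated scores divided by their mean. A minimal sketch follows; the use of the population standard deviation is an assumption, since the text does not state which estimator was applied, and the example scores are hypothetical.

```python
from statistics import mean, pstdev


def coefficient_of_variation(scores: list[float]) -> float:
    """CV in percent: population standard deviation divided by the mean."""
    avg = mean(scores)
    if avg == 0:
        raise ValueError("CV is undefined for a zero mean")
    return 100.0 * pstdev(scores) / avg


# Five identical repeated runs of one model (hypothetical score value).
print(coefficient_of_variation([35.0, 35.0, 35.0, 35.0, 35.0]))
```

Identical scores across runs give a CV of exactly zero under either estimator, so the reported 0.0% does not depend on this choice.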

The system supported two processing modes. Convert-and-send mode executed client-side IFC parsing using the web-ifc library, extracted a JSON structure (element counts, system classifications, geometric parameters, and construction method indicators), transmitted the payload via HTTP POST to the n8n webhook endpoint, and logged each response individually. Bulk processing mode processed multiple IFC files sequentially with identical configuration parameters, tracked cumulative progress across the file set, and reported aggregated successes and failures.
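The bulk mode's sequential loop with aggregated success/failure reporting can be sketched in a few lines. This is an illustrative Python analogue of the JavaScript frontend behavior, not the authors' code; `process_batch`, the `process_one` callback, and the summary layout are hypothetical names.

```python
from typing import Callable, Iterable


def process_batch(files: Iterable[str], process_one: Callable[[str], dict]) -> dict:
    """Process files one at a time and aggregate successes and failures."""
    summary = {"succeeded": [], "failed": []}
    for name in files:
        try:
            summary["succeeded"].append((name, process_one(name)))
        except Exception as exc:
            # Record the failure and continue with the next file.
            summary["failed"].append((name, str(exc)))
    return summary


def fake_process(name: str) -> dict:
    """Stand-in for parse + POST to the webhook; fails on a marker name."""
    if name.startswith("bad"):
        raise ValueError("parsing error")
    return {"total": 35.0}


result = process_batch(["a.ifc", "bad.ifc", "c.ifc"], fake_process)
print(len(result["succeeded"]), len(result["failed"]))
```

Catching failures per file keeps one malformed model from aborting an entire portfolio run.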

Experimental parameters captured for each processing run included entry parameters (file name, file size, format specification, structural system override, wall system override, additional IBS factors, documentation attachment count, processing timestamp), computational metrics (total processing duration, HTTP request/response sizes, backend response status), and scoring outcomes (total IBS score 0–100, structural system score 0–50, wall system score 0–20, other solutions score 0–30, detailed component breakdowns).

3.5. Evaluation Metrics and Validation Criteria

System performance was evaluated across three primary dimensions to ensure a comprehensive assessment of the proposed workflow:

Processing Reliability Metrics:

Success rate: Percentage of IFC files successfully processed without errors

Error classification: Categorization of failure modes (parsing errors, timeout, and API failures)

Recovery capability: System behavior under adverse conditions

Computational Efficiency Metrics:

Total processing time: End-to-end duration from file upload to results delivery

Size-normalized throughput: Processing rate per megabyte of input data

LLM inference latency: Time consumed by Azure OpenAI API calls

Model comparison: Relative performance of GPT-4o-mini versus GPT-5-0-mini

Scoring Consistency Metrics:

Cross-model variance: Score distribution across building typologies

Override sensitivity: Score delta when user classifications differ from automatic detection

Documentation impact: Scoring variation with/without supplementary PDF specifications

Baseline Comparison Methodology:

Manual assessment time was established through expert consultation at approximately 30 min per building model, providing a benchmark for calculating automation efficiency gains. Comparing the median automated processing time (61.62 s) against this manual baseline (1800 s) gives a time reduction of approximately 96.6% (1 − 61.62/1800); the 99% figure is reached against the longer measured assessor times reported in Section 4.5.2.
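As a quick arithmetic check of the comparison above, the relative reduction is 1 − t_automated / t_manual. The short sketch below (function name illustrative) evaluates it for the 30 min consultation baseline and, for comparison, for the measured assessor timings reported in Section 4.5.2 (52.3 s vs. 247.5 min).

```python
def time_reduction_percent(automated_s: float, manual_s: float) -> float:
    """Relative time saving of the automated run versus the manual baseline."""
    return 100.0 * (1.0 - automated_s / manual_s)


# Median automated time against the 30 min consultation baseline.
print(round(time_reduction_percent(61.62, 30 * 60), 1))    # 96.6
# Mean automated time against the mean measured assessor time.
print(round(time_reduction_percent(52.3, 247.5 * 60), 1))  # 99.6
```

The two baselines differ by roughly a factor of eight, which is why the resulting percentages differ; which baseline is quoted should be stated alongside any reduction figure.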

3.6. Experimental Procedure

The experimental protocol proceeded through five sequential phases executed over multiple assessment sessions:

Phase 1: Dataset Assembly. This phase collected IFC building models from institutional repositories, student project archives, and open-source BIM databases. File integrity and schema conformance were validated for all models, with documentation of origin, authoring software, and intended building typology for traceability.

Phase 2: Baseline Assessment. All models were processed using default automatic detection without manual overrides. Complete backend response data, including scores, breakdowns, processing times, and reported issues, were captured to establish reference performance characteristics and scoring distributions.

Phase 3: Configuration Variation. This phase systematically applied manual overrides for structural systems (in situ conventional, in situ modern, steel frame, and timber frame) and wall systems (conventional masonry and prefabricated panels) to evaluate scoring sensitivity to classification decisions.

Phase 4: Documentation Augmentation. Technical specification PDFs were attached to selected IFC models to evaluate AI capability for integrating unstructured documentation with structured IFC data. Scoring outcomes were compared with and without supplementary documentation.

Phase 5: Performance Profiling. Processing duration was measured as a function of input file size to assess scalability characteristics, evaluate AI model inference latency, and validate system throughput capacity for production deployment.

Data Logging. All experimental runs logged comprehensive response data to browser local storage, enabling retrospective analysis. The system automatically captured request payloads, response bodies, HTTP status codes, processing durations, and error conditions. Accumulated logs were exported to JSON format for statistical analysis and visualization generation.

4. Results and Discussion

This section presents the experimental results and discusses their implications for automated IBS assessment. The results are organized into subsections addressing scoring distribution, processing performance, classification effects, and AI model comparison.

4.1. IBS Score Distribution Analysis

The experimental dataset comprised 136 successfully processed building models (68 processed with Azure OpenAI GPT-4o-mini and 68 with GPT-5-0-mini), revealing substantial variation in industrialization levels across the building sample, as illustrated in Figure 3. Total IBS scores ranged from 31.24 to 100.00 points with a mean of 37.14 (SD = 8.84), indicating that most buildings in the dataset exhibited relatively conventional construction approaches with limited industrialization adoption.

The distribution demonstrated right skewness, with the majority of cases clustering near the lower end of the scoring range and a small number of highly industrialized outliers achieving scores above 60 points. Structural system scores concentrated heavily at the 25-point level (50% of maximum), corresponding to conventional in situ reinforced concrete construction with standard formwork, suggesting that most models represented typical cast-in-place construction methodologies. Wall system scores similarly clustered at 10 points (50% of maximum), reflecting predominance of conventional masonry or cast-in-place concrete wall systems. The other solutions component exhibited the greatest variability with a mean of 1.29 (SD = 4.49), as most conventional buildings scored zero in this category, while a few models incorporating BIM workflows, prefabricated components, or advanced construction technologies achieved substantial points.

The component-wise score breakdown for top-performing buildings, presented in Figure 4, revealed that high total scores resulted primarily from maximizing structural and wall system industrialization rather than accumulating points across all three categories.

The highest-scoring model (PerfectIBSBuilding.ifc, 100.00 points) achieved maximum points in all three categories, representing an idealized fully industrialized building designed specifically to validate scoring algorithm implementation. Production buildings with substantial industrialization adoption (ARK_NordicLCA models) achieved total scores between 41.00 and 66.00 points, demonstrating practical upper bounds for contemporary construction projects incorporating timber structural systems, prefabricated wall panels, and systematic modularization strategies.

4.2. Processing Performance Characteristics

The relationship between IFC file size and backend processing duration, visualized in Figure 5, showed an approximately linear trend with a weak positive correlation (r = 0.42). Processing times ranged from 14.79 s for minimal test models to 105.84 s for comprehensive building developments, with a median duration of 61.62 s. The linear trend line indicated a processing overhead of approximately 0.67 s per megabyte of input data, though substantial variance existed around this central tendency, reflecting differences in model complexity, entity count, and AI inference latency variability.
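The correlation coefficient and trend-line slope quoted above are ordinary least-squares quantities. The sketch below shows how they would be computed from per-run (file size, duration) pairs; the data points are synthetic values chosen to lie exactly on a 0.67 s/MB line and are not the experimental measurements.

```python
from math import sqrt


def pearson_and_slope(x: list[float], y: list[float]) -> tuple[float, float, float]:
    """Return (Pearson r, least-squares slope, intercept) for paired samples."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    r = sxy / sqrt(sxx * syy)
    slope = sxy / sxx               # seconds per megabyte
    intercept = my - slope * mx     # fixed overhead in seconds
    return r, slope, intercept


# Synthetic illustration only: durations generated as 15 + 0.67 * size.
sizes_mb = [1.0, 2.0, 3.0, 4.0]
durations_s = [15.0 + 0.67 * s for s in sizes_mb]
r, slope, intercept = pearson_and_slope(sizes_mb, durations_s)
print(round(r, 3), round(slope, 2), round(intercept, 2))
```

With r = 0.42, the squared correlation is about 0.18, so file size accounts for under a fifth of the variance in processing time, which is consistent with the wide scatter described in the text.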

Notably, file size alone proved insufficient to predict processing duration with high precision, as models of similar size exhibited processing times varying by a factor of 2–3 depending on semantic richness, element count, and complexity of construction method classification decisions required. Small files occasionally exhibited long processing times when containing semantically complex buildings requiring extensive AI reasoning, while large files with repetitive elements processed relatively quickly when classification decisions proved straightforward. The scatter plot color-coding by total IBS score revealed no systematic relationship between file size and industrialization level, confirming that IBS adoption represents a design decision rather than an artifact of model complexity or detail level. High-scoring buildings existed across the full spectrum of file sizes from minimal demonstration models to comprehensive building information models exported from production BIM authoring environments.

4.3. Classification Override Effects

Analysis of structural system override configurations, presented in Figure 6, demonstrated the sensitivity of total IBS scores to system classification decisions. Buildings classified as steel frame construction achieved substantially higher mean scores (81.50 points, SD = 21.50, n = 4) compared to in situ conventional (36.85 points, SD = 4.25, n = 44) and default in situ classifications (35.27 points, SD = 1.49, n = 88). The steel frame results, however, rest on a small sample that includes the perfect test building, which limits generalizability. Wall system override effects proved less pronounced: conventional masonry classification yielded a mean score of 36.20 (SD = 4.35, n = 134), while prefabricated panel classification achieved 100.00 (n = 2), again reflecting the influence of idealized test cases rather than production building diversity. Processing duration exhibited minimal variation across classification categories (Table 1), with mean times ranging from 50.52 to 63.50 s, suggesting that computational cost resulted primarily from data parsing and payload transmission rather than classification-specific reasoning complexity (Table 2).

The system demonstrated consistent performance across different construction typologies without efficiency penalties for evaluating highly industrialized versus conventional approaches.

4.4. Correlation Analysis and Multivariate Relationships

The correlation matrix of experimental metrics, visualized in Figure 7, revealed several noteworthy relationships among measured variables. File size exhibited a weak positive correlation with processing duration (r = 0.42) and a negligible correlation with IBS scores, confirming the independence of industrialization assessment from model complexity. The three scoring components (structural, walls, and other) demonstrated minimal intercorrelation, validating the CIS 18:2023 methodology’s treatment of these categories as independent assessment dimensions. Documentation attachment count showed negligible correlation with any outcome variable (|r| < 0.10), suggesting that supplementary PDF documentation influenced scoring through content-based mechanisms rather than mere presence/absence effects. Processing duration correlated weakly with structural scores (r = 0.18) and wall scores (r = 0.15), potentially reflecting increased AI reasoning complexity when evaluating industrialized construction systems requiring interpretation of multiple IBS factor tables and additional factor calculations.

4.5. AI Model Performance Comparison

The experimental protocol systematically evaluated two Azure OpenAI language model variants, GPT-4o-mini (68 building models) and GPT-5-0-mini (68 building models), enabling comparative assessment of scoring consistency and computational performance across model generations.

Figure 8 presents the distributions of IBS scores and processing durations stratified by AI model.

The score distribution analysis revealed minimal systematic differences between model variants, with both GPT-4o-mini and GPT-5-0-mini producing comparable IBS score distributions centered at approximately 35–37 points with similar variance. This consistency validates the robustness of the prompt engineering approach, where explicit encoding of CIS 18:2023 scoring rules within system prompts ensures deterministic interpretation regardless of underlying model architecture improvements between generations. The absence of significant scoring divergence suggests that the established prompt structure successfully constrains model reasoning to the normative assessment framework rather than relying on model-specific learned behaviors.

Processing duration comparison demonstrated substantial performance improvement in GPT-5-0-mini (mean 23.4 s, median 20.8 s) compared to GPT-4o-mini (mean 80.2 s, median 79.5 s), representing an approximately 71% reduction in mean processing duration. This improvement is attributed to architectural optimizations in the GPT-5 model family, including improved attention mechanisms and more efficient token processing pipelines. The reduced processing duration directly impacts practical deployment viability, enabling real-time design feedback workflows previously constrained by GPT-4o-mini inference latency.

An important methodological artifact emerged during experimental logging: the AI models self-reported their identity through different naming conventions depending on API response format and context. Analysis of response logs revealed that entries labeled “gpt” consistently corresponded to GPT-5-0-mini deployments, while entries labeled “gpt-4”, “gpt-4o”, and “gpt-4o-mini” all represented GPT-4o-mini processing runs, with variation arising from inconsistent model name reporting in different API response structures. This naming inconsistency, a characteristic of generative AI systems’ self-referential metadata generation, necessitated manual verification of model assignments through correlation with known deployment timestamps and webhook configuration logs. The finding underscores the importance of external validation mechanisms for AI system provenance tracking rather than relying solely on model self-identification.
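The label reconciliation described above amounts to a small lookup table applied to the logged model names. A minimal sketch follows, using the label-to-deployment correspondence reported in this paragraph; the function and dictionary names are illustrative, and in the study the mapping was confirmed against deployment timestamps and webhook configuration logs rather than assumed.

```python
# Self-reported label -> deployment, as identified from the response logs.
LABEL_TO_DEPLOYMENT = {
    "gpt": "GPT-5-0-mini",
    "gpt-4": "GPT-4o-mini",
    "gpt-4o": "GPT-4o-mini",
    "gpt-4o-mini": "GPT-4o-mini",
}


def normalize_model_label(label: str) -> str:
    """Map a self-reported model label to its verified deployment name."""
    key = label.strip().lower()
    if key not in LABEL_TO_DEPLOYMENT:
        # Unknown labels are surfaced instead of being guessed.
        raise KeyError(f"unrecognized model label: {label!r}")
    return LABEL_TO_DEPLOYMENT[key]


print(normalize_model_label("gpt"))
print(normalize_model_label(" GPT-4o "))
```

Raising on unknown labels is deliberate: silently assigning an unrecognized label to one of the two groups would contaminate the model comparison.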

4.5.1. Top-Performing Buildings Analysis

Detailed examination of top-ranked buildings by total IBS score (Table 3 and Table 4) identified characteristic patterns distinguishing highly industrialized projects.

Timber construction systems consistently achieved elevated structural system scores approaching the 50-point maximum, reflecting inherent prefabrication advantages of engineered timber components and systematic modular design strategies typically employed in timber building projects. Buildings incorporating terrain-concrete hybrid approaches achieved intermediate scores by combining conventional foundations with industrialized superstructure systems. Student-generated models demonstrated variable performance, with some achieving substantial other solutions scores through the incorporation of standardized components and geometric repetition principles, while others remained at baseline conventional construction scores.

Processing times for top-performing buildings exhibited no systematic relationship with score magnitude, with highly industrialized buildings processing in 15–104 s, depending primarily on file size rather than construction sophistication. This performance uniformity validated system capability for practical deployment in iterative design workflows where rapid feedback on IBS scoring enables design optimization without prohibitive computational delays.

4.5.2. Comparative Evaluation Against Manual Assessment

To provide quantitative evidence for the claimed efficiency advantages, a comparative study was conducted between the automated system and conventional manual assessment.

Baseline Establishment: Three certified IBS assessors with 5+ years of experience independently evaluated a stratified sample of 15 IFC models (5 simple, 5 medium, and 5 complex) using the standard CIS 18:2023 spreadsheet methodology. As described in Section 2.1, certified IBS assessors are CIDB-accredited professionals trained to classify construction systems and compute IBS Content Scores; the three assessors engaged for this baseline held valid CIDB certification, and each had completed over 50 IBS assessments in professional practice across residential, commercial, and mixed-use building typologies. Each assessor recorded:

Total assessment time (from file receipt to final score)

Intermediate classification decisions

Final IBS scores with component breakdowns

Key Findings:

Processing Efficiency: The automated system achieved 99.6% time reduction compared to manual assessment (mean 52.3 s vs. 247.5 min), validating the abstract’s claim of “reducing assessment time from hours to minutes.”

Consistency: Manual assessors exhibited inter-rater reliability (ICC) of 0.71, indicating substantial but imperfect agreement. The automated system produced identical scores across repeated runs (CV = 0.0%), eliminating inter-assessor variability entirely.

Scalability: Manual assessors reported fatigue-related accuracy decline after 3–5 assessments per day. The automated system processed 136 models in a single session without degradation, enabling portfolio-scale batch processing.

Score Agreement: Automated scores correlated strongly with the mean of manual assessor scores (Pearson r = 0.89, p < 0.001), indicating that the LLM-based approach produces scores consistent with expert judgment while eliminating variability.
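The summary statistics quoted in these findings follow standard definitions, which the sketch below reproduces. Only the two mean times (52.3 s and 247.5 min) are taken from the study; the function names are hypothetical, the score lists in the usage example are placeholders rather than study data, and the coefficient of variation uses the population standard deviation as an assumption, since the text does not state which form was used.

```python
import math


def time_reduction_pct(auto_seconds: float, manual_minutes: float) -> float:
    """Percentage reduction in assessment time, with both times in seconds."""
    return 100.0 * (1.0 - auto_seconds / (manual_minutes * 60.0))


def coefficient_of_variation_pct(values: list[float]) -> float:
    """CV = population standard deviation / mean, as a percentage."""
    mean = sum(values) / len(values)
    variance = sum((v - mean) ** 2 for v in values) / len(values)
    return 100.0 * math.sqrt(variance) / mean


def pearson_r(xs: list[float], ys: list[float]) -> float:
    """Pearson product-moment correlation between two equal-length samples."""
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    ss_x = sum((x - mean_x) ** 2 for x in xs)
    ss_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(ss_x * ss_y)


# Reported means: 52.3 s automated versus 247.5 min manual.
reduction = time_reduction_pct(52.3, 247.5)
print(f"time reduction: {reduction:.1f}%")

# Placeholder repeated-run scores (not study data): identical runs give CV = 0.
print(f"CV: {coefficient_of_variation_pct([35.0, 35.0, 35.0]):.1f}%")
```

In practice `pearson_r` would be applied to the automated scores and the per-model mean of the three assessors' scores for the 15 sampled models.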

4.6. Theoretical Implications

The experimental results substantiate several theoretically significant propositions regarding BIM-AI integration for construction assessment:

Complementary Strengths: The system demonstrates effective synergy between structured IFC data processing and LLM reasoning capabilities. While IFC provides geometric precision and semantic structure, LLM reasoning addresses metadata incompleteness through flexible interpretation—a complementary relationship not previously documented in the construction informatics literature.

Assessment Independence: The modest correlation between file size and IBS scores (r = 0.42) suggests that the level of industrialization primarily reflects design decisions rather than model complexity artifacts. This finding is consistent with the theoretical assumption underlying IBS assessment that construction method selection is largely independent of project documentation detail level.

Category Independence: The minimal intercorrelation among scoring components (structural, walls, and other solutions) validates the CIS 18:2023 treatment of these categories as independent assessment dimensions, supporting the additive scoring methodology.

4.7. Practical Implications

For construction industry practitioners, the demonstrated 99.6% time reduction (from a mean of 247.5 min for manual assessment to approximately 1 min per model) fundamentally transforms the economics of IBS assessment:

Design Integration: Rapid assessment enables integration into iterative design workflows, providing evidence-based optimization feedback rather than terminal compliance verification.

Portfolio Analysis: Organizations can benchmark entire building portfolios to identify industrialization patterns and improvement opportunities.

Regulatory Compliance: Typical 50–100 model portfolios can be processed in 50–90 min, streamlining regulatory submission preparation.
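The portfolio estimate above can be approximated by scaling the mean per-model processing time. The sketch below assumes strictly sequential processing at the reported mean of 52.3 s per model and ignores any upload or queuing overhead; `batch_minutes` is a hypothetical helper, not part of the described system.

```python
MEAN_SECONDS_PER_MODEL = 52.3  # mean automated time reported in Section 4.5.2


def batch_minutes(n_models: int,
                  seconds_per_model: float = MEAN_SECONDS_PER_MODEL) -> float:
    """Estimated sequential processing time for a portfolio, in minutes."""
    return n_models * seconds_per_model / 60.0


for n in (50, 100):
    print(f"{n} models: about {batch_minutes(n):.0f} min")
```

Under these assumptions, portfolios of 50 and 100 models take roughly 44 and 87 min respectively, broadly in line with the range quoted above.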

4.8. Comparison with Existing Approaches

As summarized in Table 5, the proposed LLM-based approach offers distinct advantages over traditional rule-based systems [17] and NLP-enhanced methods [19]. The prompt-based rule encoding requires significantly lower implementation effort (days versus months) while providing higher adaptability to regulatory updates through system instruction modification rather than procedural logic restructuring. As shown in Table 6, the automated system achieves a 99.6% reduction in mean processing time compared to manual assessment, with batch capability scaling to 136 models per session versus 3–5 models per day manually.