1. Introduction

Worldwide, governments, corporations, and communities are increasingly prioritizing sustainable, environmentally friendly energy sources in response to concerns over climate change, pollution, and the depletion of finite fossil fuel reserves. Governments, in turn, are enacting laws and regulations that encourage and promote the use of renewable energy sources [1].

Hydro turbines offer a reliable and environmentally advantageous renewable energy source by harvesting the power of flowing water. They support the global shift towards a more sustainable energy future and provide a basis for future innovations in renewable energy technologies. Through ongoing research and deployment, hydro turbine technology can substantially meet energy demand while reducing dependence on fossil fuels and mitigating environmental impact [2].

Hydro turbines transform the kinetic energy of flowing water into mechanical energy, which is then converted into electrical energy. The electricity they generate is essential to modern society. Hydropower is among the most reliable renewable energy sources and, owing to its ability to handle large loads, contributes significantly compared with other renewable resources. Hydropower aligns with Goal 7 of the United Nations’ Sustainable Development Goals, providing clean energy and helping to reduce dependence on non-renewable fossil fuels such as coal and petroleum [3].

The fundamental hydrodynamic behavior of turbine blades is governed by lift and drag forces, which are essential to optimizing blade efficiency. Lift is the force that converts the energy of the water flow into rotational motion, whereas drag creates resistance that causes energy losses. Structurally, several hydro turbine blades are fitted to a central rotating shaft or plate. Water flowing through the casing of an enclosed turbine strikes the blades, and its velocity and pressure produce a torque that rotates the shaft [4].

Previously, researchers estimated and optimized lift and drag forces in hydro turbines using computational fluid dynamics (CFD) simulations and experimental testing. Although CFD is effective, it is expensive, time-consuming, and requires complex initial setup [5]. These restrictions limit the number of design iterations that can be explored.

However, with recent advances in data-driven methods and the growing availability of real-world operational data, machine learning (ML) algorithms offer a promising alternative for predicting these forces more efficiently and accurately. Trained on collected data, ML models can forecast lift and drag forces for different turbine designs and flow conditions, enabling faster design iteration and optimization. For example, Ismaiel [6] applied multivariate ML regression models to wind turbine blade structural dynamics and reported that Random Forest achieved 98% accuracy. Integrating ML into the prediction of hydro turbine forces offers several advantages over CFD methods, including faster design iteration, improved prediction accuracy, and adaptive learning. ML regressors in particular offer a transformative approach to predicting lift and drag forces in hydro turbine design.

Regressors have found extensive applications in various fields, including medicine, to predict outcomes of transcatheter aortic valve implantation (TAVI); education, to predict student performance [7]; and healthcare, to predict costs [8]. The performance of regressors is typically evaluated with metrics such as mean squared error, mean absolute error, and R-squared. Selecting an appropriate regressor and optimizing its hyperparameters are critical steps in developing accurate predictive models for continuous outcomes [9].

In this paper, nine ML regressors are tested for the prediction of hydro turbine lift and drag: Linear Regression (LR), Support Vector Regression (SVR), K-Nearest Neighbor (KNN), Multi-Layer Perceptron Regressor (MLP), Random Forest (RF), Gradient Boosting (GB), Light Gradient-Boosting Machine (LGBM), Categorical Boosting (CatBoost), and Extreme Gradient Boosting (XGBoost). The mean absolute error (MAE), mean square error (MSE), R-squared (R2), and root mean square error (RMSE) show that CatBoost performed the best, followed by LGBM.

Section 2 reviews previous work. Section 3 presents the methodology, including brief descriptions of the applied ML algorithms and the experimental protocol and procedure. Section 4 presents the results and findings, and Section 5 concludes the study.

2. Related Works

The Reynolds-Averaged Navier–Stokes (RANS) equations have long been used to estimate lift and drag precisely in fluid dynamics. However, researchers have found these traditional approaches to be computationally expensive, especially for complicated systems such as hydro turbines. This high computational cost can be a major obstacle to real-time optimization and iterative design. Recent progress in ML offers a promising way forward: these methods speed up calculations while preserving accuracy, which means that ML may complement or even replace CFD simulations in some cases [10].

Du et al. [11] made substantial progress in this area by using deep learning to predict performance, which enabled rapid optimization of turbine blade design. They proposed two parameterization methods, one based on geometric relationships (PGR) and one based on neural networks (PNN). Their technique produced a dual convolutional neural network (DCNN) that predicts physical fields and aerodynamic performance within milliseconds. The model achieved a prediction accuracy of over 99% for performance metrics, a significant improvement over standard machine learning methods.

Extending the convergence of ML and CFD, Huang et al. [12] combined the two to improve turbine performance forecasts. They gathered extensive operational data under different conditions and used a multi-layer neural network optimized with an improved Grey Wolf algorithm. Their results indicated that merging different data sources can substantially boost forecast accuracy, highlighting the versatility of ML in fluid dynamics.

In another study, Teimourian [13] compared ML algorithms such as Random Forest, Gradient Boosting Regression, and Linear Regression for predicting a key aerodynamic parameter, the lift-to-drag ratio. The findings supported the view that ML approaches can predict aerodynamic performance efficiently, especially when trained on sufficiently diverse data. Singh [14] reached a similar conclusion while proposing a technique for estimating the power generated by wind turbines: using observed and forecasted power, Singh explained how parametric models and ML models can manage variability and detect sub-optimal turbine operation.

For wind turbine blade condition monitoring, Bi et al. [15] proposed a backpropagation (BP) neural network to diagnose probable damage. Validated through experiments, the method supports both real-time monitoring and damage assessment with good accuracy, which helps improve the reliability of wind turbine operation. Similarly, Zhou et al. [16] employed ML algorithms to optimize airfoil settings for a Mars helicopter without requiring expensive experimental hardware, illustrating real-world uses of these technologies.

Further developments were reported by Fitriadhy et al. [17], who conducted CFD simulations of hydrofoil ships to forecast lift and resistance at varying angles of attack. Their results demonstrated a direct correlation between the angle of attack and the resulting lift force, underscoring the importance of this factor in hydrofoil ship design. Meanwhile, Malecha et al. applied artificial intelligence to predict the aerodynamic characteristics of wind turbine profiles, successfully using Random Forest regression to forecast lift coefficients [18].

Cappugi et al. [19] proposed a novel simulation-based technology that rapidly estimates wind turbine energy output losses due to leading-edge erosion. The system combines CFD and ML to enable predictive maintenance across wind farms, notably improving operational efficiency [20]. In another work, a hybrid ML and CFD approach was applied to NASA airfoils, showing that the lift-to-drag ratio could be efficiently maximized through temperature fluctuations and reflecting the promise of ML for aerodynamic performance optimization.

Masood et al. [21] demonstrated an ML-based optimization pipeline that explored high-dimensional design spaces and raised turbine design efficiency from 56.98% for the initial design to 90.73%, while significantly reducing computational expense.

Despite this promising research on ML approaches for forecasting drag and lift in hydro turbine design, several gaps remain. Most studies apply ML in isolation and optimize particular aspects of the design without considering the hydro turbine system as a whole. For instance, while Du et al. and Huang et al. demonstrated the usefulness of ML for turbine blade optimization and performance prediction, fewer studies integrate these methods across design phases and operating conditions [11,12]. This lack of comprehensive frameworks can impede the effective adoption of these techniques, because turbine performance is influenced by a broad range of parameters, including ambient conditions and varying operational loads.

In addition, current work depends on homogeneous datasets, which may fail to capture the details of real systems [13,14]. Many efforts rely on large datasets acquired under restricted settings, which limits generalization. Future work should therefore focus on building robust, adaptive ML models that perform well under varied conditions. Model interpretability is also crucial so that practitioners can trust and adopt these new strategies in hydro turbine design. By addressing these gaps, future work can play a key role in improving hydro turbine performance and operational efficiency in practical scenarios.

3. Methodology

This work began with data collection at the Aerodynamics, Aeroelasticity, and Aeroacoustics Laboratory of Indonesia’s National Research and Innovation Agency (BRIN), using the Educational Low-Speed Wind Tunnel (ELST) shown in Figure 1. The hydrokinetic turbine blade model has two blades and was tested with three airfoil types, NACA 0012, NACA 0030, and NACA 4412, each with a chord length of 20 cm. The angle of attack for the front and rear blades was set to , , and . Three Reynolds numbers were tested: 55,000 (4.07 m/s), 170,000 (12.4 m/s), and 390,000 (28.47 m/s). Lastly, three stagger variations were tested: one chord length (St1), two chord lengths (St2), and three chord lengths (St3). Stagger is defined as the horizontal distance between the leading edges of the front and rear airfoils. The stagger variations are shown in Figure 2. As these parameters were varied, the drag (Ftotx), lift (Ftoty), and moment (Mtotz) were recorded. The parameters are tabulated in Table 1. The profiles of the tested airfoils are shown in Figure 3, and their specifications are listed in Table 2.
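The quoted Reynolds numbers can be cross-checked against the airspeeds using the chord-based definition $Re = Vc/\nu$. The sketch below is only a consistency check. It assumes a kinematic viscosity of air of about $1.46 \times 10^{-5}$ m²/s, because the text does not state the fluid properties. The function name is illustrative.

```python
# Chord-based Reynolds number Re = V * c / nu.
# Assumption: kinematic viscosity of air nu ~= 1.46e-5 m^2/s (not stated in the text).
CHORD_M = 0.20      # chord length from the text (20 cm)
NU_AIR = 1.46e-5    # assumed kinematic viscosity of air [m^2/s]


def reynolds_number(velocity_m_s: float, chord_m: float = CHORD_M, nu: float = NU_AIR) -> float:
    """Return the chord-based Reynolds number for a given airspeed."""
    return velocity_m_s * chord_m / nu


# Airspeeds [m/s] mapped to the nominal Reynolds numbers quoted in the text.
cases = {4.07: 55_000, 12.4: 170_000, 28.47: 390_000}

for v, re_nominal in cases.items():
    print(f"V = {v:5.2f} m/s -> Re = {reynolds_number(v):,.0f} (reported {re_nominal:,})")
```

With this assumed viscosity, all three computed values fall within about 1.5% of the reported figures.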

The ELST is an open-circuit, suction-type low-speed wind tunnel with a 0.5 m × 0.5 m test section. The test-section airspeed can reach 45 m/s without a model in place, while the operating speed for testing and calibration is limited to a maximum of 30 m/s. The lift, drag, and moment are measured with two six-component load cells mounted in series at both ends of the model under the different parameter settings.
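Because the load cells report dimensional loads, a common follow-up step is to normalize them into drag, lift, and moment coefficients using the dynamic pressure $q = \tfrac{1}{2}\rho V^2$. The sketch below is hypothetical and rests on three assumptions:

- an air density of 1.225 kg/m³;
- a span equal to the 0.5 m test-section width;
- a single-blade reference area $S = cb$.

The loads in the example are made-up numbers.

```python
# Hypothetical conversion of measured loads (Ftotx, Ftoty, Mtotz) into coefficients.
# Assumptions (not stated in the text): air density, model span, and reference area.
RHO_AIR = 1.225   # assumed air density [kg/m^3]
CHORD_M = 0.20    # chord length from the text [m]
SPAN_M = 0.50     # hypothetical span, taken as the 0.5 m test-section width [m]


def dynamic_pressure(velocity_m_s: float, rho: float = RHO_AIR) -> float:
    """q = 0.5 * rho * V^2 [Pa]."""
    return 0.5 * rho * velocity_m_s ** 2


def load_coefficients(f_x: float, f_y: float, m_z: float, velocity_m_s: float) -> dict:
    """Return drag, lift, and moment coefficients from measured loads."""
    q = dynamic_pressure(velocity_m_s)
    area = CHORD_M * SPAN_M                  # reference area S = c * b
    return {
        "C_D": f_x / (q * area),             # drag coefficient
        "C_L": f_y / (q * area),             # lift coefficient
        "C_M": m_z / (q * area * CHORD_M),   # moment coefficient, also normalized by chord
    }


# Example with made-up loads at the 12.4 m/s test speed (illustration only).
print(load_coefficients(f_x=0.5, f_y=4.0, m_z=0.1, velocity_m_s=12.4))
```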

A total of 243 data samples comprising drag, lift, and moment values, along with corresponding design parameters, were collected. These data were subsequently used to train machine learning regression models for predicting lift, drag, and moment.

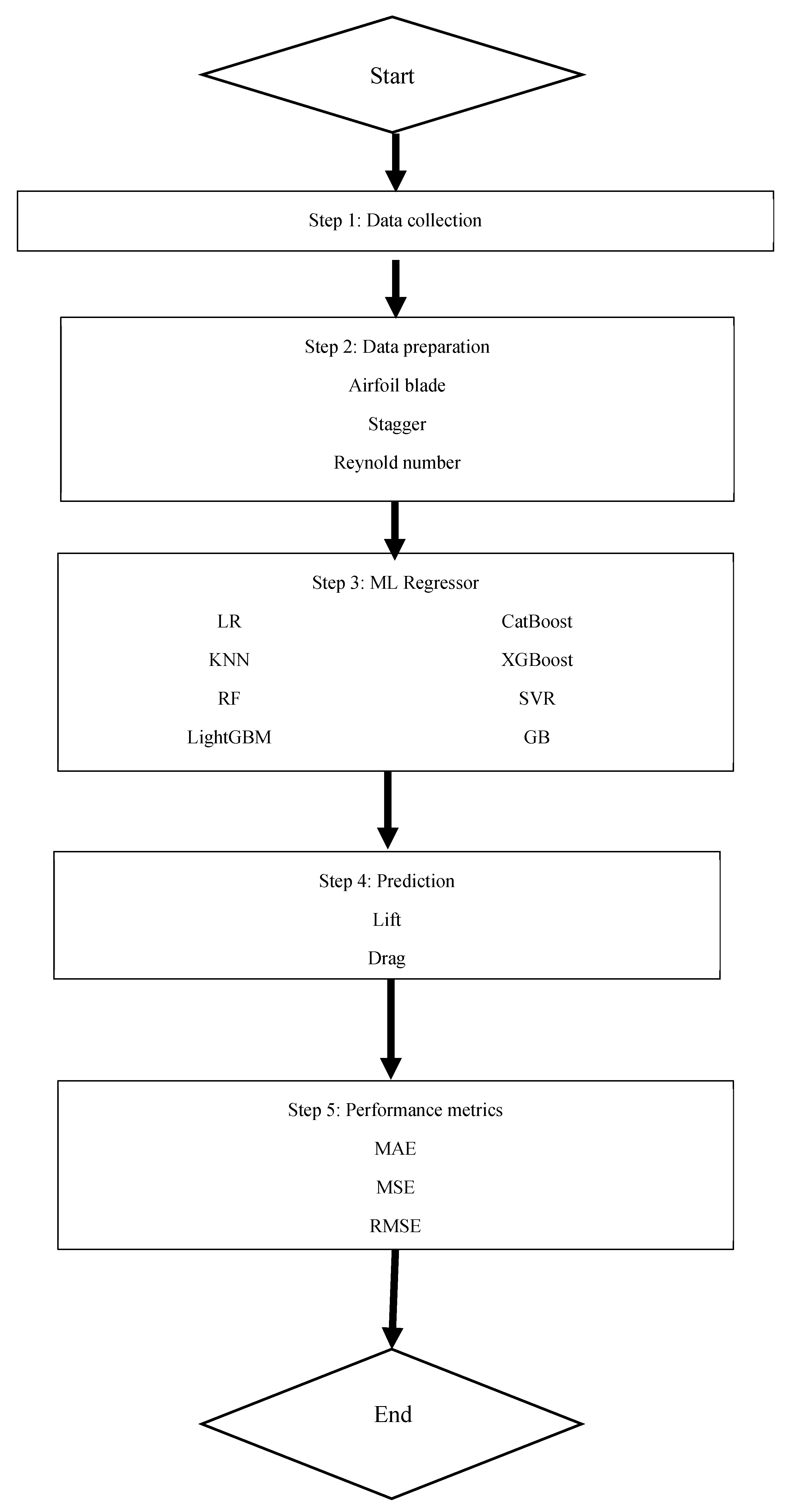

Figure 4 illustrates the proposed ML methodology for estimating the hydrodynamic performance of a hydro turbine. It consists of five stages, beginning with data collection and concluding with final model evaluation. In the first stage, data are gathered by measuring the hydro turbine’s performance experimentally across various operating and geometric parameters. This stage supplies the key inputs for developing the ML models.

In the second stage, the data are prepared and defined. The parameters that most strongly determine hydro turbine performance are specified and grouped: airfoil blade type, stagger, Reynolds number, and angle of attack. This step ensures that the geometric and flow parameters are accurately defined in the dataset before model training.
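For regressors that require purely numerical inputs (for example LR, SVR, KNN, and MLP), the categorical parameters must be encoded before training. A minimal sketch using one-hot encoding is shown below. It rests on several assumptions:

- the airfoil type and the stagger are treated as categories;
- the front- and rear-blade angles of attack enter as two separate numerical features;
- the angle values used are placeholders, not the tested settings.

```python
from typing import List

# Hypothetical feature layout for one test condition; category lists follow the text.
AIRFOILS = ["NACA 0012", "NACA 0030", "NACA 4412"]
STAGGERS = ["St1", "St2", "St3"]


def one_hot(value: str, categories: List[str]) -> List[float]:
    """Return a one-hot vector marking `value` within `categories`."""
    if value not in categories:
        raise ValueError(f"unknown category: {value!r}")
    return [1.0 if c == value else 0.0 for c in categories]


def encode_row(airfoil: str, stagger: str, reynolds: float,
               aoa_front_deg: float, aoa_rear_deg: float) -> list:
    """Concatenate one-hot categorical features with the raw numerical features."""
    return (one_hot(airfoil, AIRFOILS)
            + one_hot(stagger, STAGGERS)
            + [reynolds, aoa_front_deg, aoa_rear_deg])


row = encode_row("NACA 4412", "St2", 170_000, 5.0, 10.0)  # placeholder angles
print(row)
```

CatBoost can consume such categorical columns directly, which is one reason the encoding step matters more for the other regressors.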

The third stage applies ML regression models to capture the nonlinear interactions between the input variables and the hydrodynamic outputs. The models evaluated are LR, KNN, RF, LGBM, SVR, MLP, GB, XGBoost, and CatBoost.

The fourth stage applies the trained models to predict the hydrodynamic responses of the hydro turbine blades, namely the lift force, drag force, and moment. The predicted values are then compared with the experimental data to assess the predictive ability of each model.

In the fifth and final stage, model performance is evaluated with regression metrics. MAE, MSE, and RMSE quantify the prediction error, while R-squared measures how well the models fit the observed values, allowing the models to be compared objectively.

Overall, this methodology provides a systematic way to combine empirical knowledge of hydro turbines with an ML workflow for predicting lift, drag, and moment.

3.1. Machine Learning Prediction

Prediction is performed with ML regressors, which are algorithms designed to predict continuous numerical values from input features. These models use labeled training data to establish relationships between the input variables and the target outputs. Common regressors include Linear Regression, K-Nearest Neighbor, Support Vector Regression, and neural networks. More sophisticated techniques, such as Random Forest and Gradient-Boosting Machines, can capture complex nonlinear relationships in the data. In this work, nine regressors are considered for lift and drag prediction: LR, SVR, KNN, MLP, RF, GB, LightGBM, CatBoost, and XGBoost. Multivariate versions of the regressors are adopted to predict the drag (Ftotx), lift (Ftoty), and moment (Mtotz). Because the hydro turbine parameters combine numerical and categorical values, the regressors are trained on mixed data. The nine algorithms are briefly discussed below and summarized in Table 3.

Figure 4. Study methodology.

3.1.1. Linear Regression

LR is a basic form of regression that models the relationship between a scalar dependent variable and one or more independent variables using a linear function. With one independent variable it is called simple LR; with more than one, it is called multiple LR. Fitting a linear model to a dataset requires estimating the regression coefficients that minimize the error, typically the sum of squared residuals (ordinary least squares). LR is simple and straightforward and helps in understanding the relationships between variables. It is computationally inexpensive and useful for making predictions and forecasting future outcomes from historical data. However, because LR assumes a linear relationship between variables, it may not capture more complex relationships. LR is also sensitive to outliers, since extreme data points can disproportionately influence the model [22].
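A minimal NumPy sketch of multiple LR fitted by ordinary least squares is shown below. It uses noise-free synthetic data with two targets, not the study’s measurements. The example shows how a single least-squares solve handles a multi-output problem such as predicting drag and lift together.

```python
import numpy as np

# Synthetic, noise-free data: 50 samples, 3 input features, 2 targets.
rng = np.random.default_rng(0)
X = rng.normal(size=(50, 3))
true_W = np.array([[1.0, -2.0], [0.5, 0.0], [3.0, 1.0]])  # maps 3 features to 2 targets
true_b = np.array([0.2, -0.1])                            # one intercept per target
Y = X @ true_W + true_b

# Append a column of ones so the intercepts are estimated together with the weights.
X_aug = np.hstack([X, np.ones((X.shape[0], 1))])

# Ordinary least squares: minimizes ||X_aug @ coef - Y||^2, solving each target column.
coef, *_ = np.linalg.lstsq(X_aug, Y, rcond=None)
W_hat, b_hat = coef[:-1], coef[-1]
print(np.round(W_hat, 3))
print(np.round(b_hat, 3))
```

The data are noise-free and the design matrix has full column rank, so the recovered weights match the generating ones up to floating-point error.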

3.1.2. Support Vector Regression

SVR applies the principles of Support Vector Machines (SVMs) for classification to the prediction of real values rather than classes. SVR introduces a margin of tolerance (epsilon) within which errors are not penalized. The main idea is to minimize error by identifying the hyperplane that maximizes the margin while tolerating errors that fall within epsilon. Studies show that SVR offers advantages in handling nonlinear relationships, adaptability, and high prediction accuracy [23]. However, SVR is sensitive to parameter selection, is prone to overfitting, and is computationally inefficient on large datasets [24].
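The epsilon tube can be made concrete with the epsilon-insensitive loss and the primal objective of a linear SVR:

$$\tfrac{1}{2}\lVert w\rVert^2 + C\sum_i \max\left(0, |y_i - (w^\top x_i + b)| - \epsilon\right)$$

The pure-Python sketch below evaluates that objective for given parameters. It does not solve the optimization problem, and the function names are illustrative.

```python
def epsilon_insensitive_loss(y_true: float, y_pred: float, epsilon: float = 0.1) -> float:
    """Zero inside the epsilon tube, linear outside it: max(0, |y - f| - epsilon)."""
    return max(0.0, abs(y_true - y_pred) - epsilon)


def linear_svr_objective(w, b, X, y, C=1.0, epsilon=0.1):
    """Primal objective 0.5 * ||w||^2 + C * sum of epsilon-insensitive losses."""
    regularization = 0.5 * sum(wj * wj for wj in w)
    data_term = 0.0
    for xi, yi in zip(X, y):
        prediction = sum(wj * xj for wj, xj in zip(w, xi)) + b
        data_term += epsilon_insensitive_loss(yi, prediction, epsilon)
    return regularization + C * data_term


# Toy check: the first point lies inside the tube; the second lies 0.4 outside it.
print(linear_svr_objective(w=[1.0], b=0.0, X=[[1.0], [2.0]], y=[1.0, 2.5]))
```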

3.1.3. K-Nearest Neighbor

KNN is a non-parametric supervised learning method used for both classification and regression. It is a type of instance-based learning in which the function is approximated only locally and all computation is deferred until prediction. Various distance metrics can be used, including Euclidean, Manhattan, and Minkowski distance. In regression, KNN finds the k closest training examples in the feature space and averages their target values to make a prediction. These neighbors are taken from a set of objects whose property values are known. If k = 1, the output is simply the value of the single nearest neighbor. KNN is simple and effective for handling nonlinearity and multiple predictors, but it requires large memory and can be slow on large datasets [25].
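The regression variant described above can be sketched in a few lines of pure Python. The sketch assumes Euclidean distance and an unweighted average of the k neighbors’ targets. The toy data are illustrative.

```python
import math


def knn_regress(train_X, train_y, query, k=3):
    """Predict by averaging the targets of the k nearest training points (Euclidean)."""
    distances = sorted(
        (math.dist(x, query), y) for x, y in zip(train_X, train_y)
    )
    nearest = distances[:k]
    return sum(y for _, y in nearest) / len(nearest)


train_X = [[0.0], [1.0], [2.0], [3.0], [10.0]]
train_y = [0.0, 1.0, 2.0, 3.0, 10.0]
print(knn_regress(train_X, train_y, [1.2], k=3))  # -> 1.0 (mean of targets at x = 1, 2, 0)
```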

3.1.4. Multi-Layer Perceptron Regressor

MLP is a type of neural network suitable for regression tasks. It comprises at least three layers of nodes: an input layer, a hidden layer, and an output layer. MLP is trained with backpropagation: the error between the predicted and actual outputs is calculated and propagated back through the network to update the weights. Studies suggest that MLPs are advantageous for their high accuracy and versatility in various prediction tasks [26,27], but they are limited by their lack of interpretability and high computational complexity [28].
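The NumPy sketch below makes the backpropagation step concrete. It trains a one-hidden-layer MLP with a tanh activation on a toy one-dimensional nonlinear target, using full-batch gradient descent on the mean squared error. The layer size, learning rate, activation, and data are illustrative assumptions, not the configuration used in this study.

```python
import numpy as np


def train_mlp(X, y, hidden=16, lr=0.05, epochs=2000, seed=0):
    """Train a one-hidden-layer MLP (tanh) with MSE loss via backpropagation."""
    rng = np.random.default_rng(seed)
    W1 = rng.normal(scale=0.5, size=(X.shape[1], hidden))
    b1 = np.zeros(hidden)
    W2 = rng.normal(scale=0.5, size=(hidden, 1))
    b2 = np.zeros(1)
    y = y.reshape(-1, 1)
    losses = []
    for _ in range(epochs):
        # Forward pass.
        h = np.tanh(X @ W1 + b1)
        pred = h @ W2 + b2
        err = pred - y
        losses.append(float(np.mean(err ** 2)))
        # Backward pass: gradients of the mean squared error.
        grad_pred = 2.0 * err / len(X)
        grad_W2 = h.T @ grad_pred
        grad_b2 = grad_pred.sum(axis=0)
        grad_z1 = (grad_pred @ W2.T) * (1.0 - h ** 2)   # chain rule through tanh
        grad_W1 = X.T @ grad_z1
        grad_b1 = grad_z1.sum(axis=0)
        # Gradient-descent weight update.
        W1 -= lr * grad_W1
        b1 -= lr * grad_b1
        W2 -= lr * grad_W2
        b2 -= lr * grad_b2
    return (W1, b1, W2, b2), losses


X = np.linspace(-2.0, 2.0, 40).reshape(-1, 1)
y = np.sin(X).ravel()                    # toy nonlinear target
params, losses = train_mlp(X, y)
print(f"initial MSE {losses[0]:.4f}, final MSE {losses[-1]:.4f}")
```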

3.1.5. Random Forest

RF is an ensemble learning method for regression (and classification) that constructs multiple decision trees during training and outputs the average prediction of the individual trees. It reduces overfitting by averaging multiple deep decision trees, each trained on a different bootstrap sample of the same training set (the bagging method). RF is used for prediction in various classification and regression problems [29]. However, its poor interpretability limits its use in fields such as medical diagnosis and financial fraud detection [26].
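The bagging-and-averaging idea can be illustrated with a deliberately simplified forest in NumPy. Each tree here is a single split (a depth-1 stump), chosen from a random subset of features on a bootstrap sample. Practical Random Forests grow much deeper trees. The data and parameter values are illustrative.

```python
import numpy as np


def fit_stump(X, y, feature_ids):
    """Fit a depth-1 regression tree: the split minimizing the sum of squared errors."""
    best = None
    for j in feature_ids:
        for t in np.unique(X[:, j])[:-1]:            # thresholds keep both sides non-empty
            left, right = y[X[:, j] <= t], y[X[:, j] > t]
            sse = ((left - left.mean()) ** 2).sum() + ((right - right.mean()) ** 2).sum()
            if best is None or sse < best[0]:
                best = (sse, j, t, left.mean(), right.mean())
    if best is None:                                  # constant feature: predict the mean
        return 0, np.inf, y.mean(), y.mean()
    return best[1:]


def fit_forest(X, y, n_trees=50, max_features=1, seed=0):
    """Bagging with random feature subsets: each tree sees its own bootstrap sample."""
    rng = np.random.default_rng(seed)
    forest = []
    for _ in range(n_trees):
        idx = rng.integers(0, len(X), size=len(X))                        # bootstrap sample
        feats = rng.choice(X.shape[1], size=max_features, replace=False)  # random features
        forest.append(fit_stump(X[idx], y[idx], feats))
    return forest


def predict_forest(forest, X):
    """Average the predictions of all trees."""
    preds = [np.where(X[:, j] <= t, lv, rv) for j, t, lv, rv in forest]
    return np.mean(preds, axis=0)


rng = np.random.default_rng(1)
X = rng.uniform(size=(100, 2))
y = np.where(X[:, 0] > 0.5, 1.0, 0.0) + 0.1 * X[:, 1]   # toy target
forest = fit_forest(X, y)
mse = float(np.mean((predict_forest(forest, X) - y) ** 2))
print(f"forest MSE {mse:.4f} vs. variance of y {np.var(y):.4f}")
```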

3.1.6. Gradient Boosting

GB is a machine learning technique for regression and classification problems that produces a prediction model in the form of an ensemble of weak prediction models, typically decision trees. Like other boosting methods, it builds the model in a stage-wise fashion, and it generalizes them by allowing the optimization of an arbitrary differentiable loss function. GB can capture complex relationships better than approaches based on generalized linear models [30]. Other regressors in the GB family are XGBoost, LightGBM, and CatBoost.
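The stage-wise mechanism is easiest to see with squared loss, where the negative gradient is simply the residual. The NumPy sketch below boosts depth-1 regression stumps on a one-dimensional toy problem with a shrinkage (learning rate) of 0.1. It is a simplified illustration, not the GB configuration used in this study.

```python
import numpy as np


def fit_stump(x, r):
    """Best single threshold on a 1-D feature for fitting residuals r (squared error)."""
    best = (np.inf, np.inf, r.mean(), r.mean())       # fallback: no split, predict mean
    for t in np.unique(x)[:-1]:
        left, right = r[x <= t], r[x > t]
        sse = ((left - left.mean()) ** 2).sum() + ((right - right.mean()) ** 2).sum()
        if sse < best[0]:
            best = (sse, t, left.mean(), right.mean())
    return best[1:]


def gradient_boost(x, y, n_stages=100, learning_rate=0.1):
    """Stage-wise additive model; each stage fits a stump to the current residuals."""
    f0 = float(y.mean())                               # initial constant model
    pred = np.full(y.shape, f0)
    stumps = []
    for _ in range(n_stages):
        residual = y - pred                # negative gradient of 0.5 * (y - f)^2
        t, left_value, right_value = fit_stump(x, residual)
        pred = pred + learning_rate * np.where(x <= t, left_value, right_value)
        stumps.append((t, left_value, right_value))
    return f0, stumps, pred


def predict(f0, stumps, x, learning_rate=0.1):
    """Sum the initial constant and the shrunken stump outputs."""
    out = np.full(x.shape, f0)
    for t, left_value, right_value in stumps:
        out = out + learning_rate * np.where(x <= t, left_value, right_value)
    return out


x = np.linspace(0.0, 6.0, 60)
y = np.sin(x)
f0, stumps, fitted = gradient_boost(x, y)
print("training MSE:", float(np.mean((y - fitted) ** 2)))
```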

3.1.7. Light Gradient-Boosting Machine

LightGBM (Light Gradient-Boosting Machine) is a Gradient Boosting framework that uses tree-based learning algorithms and is designed to be distributed and efficient. Unlike many other tree-based algorithms, it grows trees vertically (leaf-wise) rather than horizontally (level-wise), which can yield larger reductions in loss and thus better accuracy on some datasets. LightGBM has been reported to be an effective and highly scalable algorithm, offering the best predictive performance while consuming significantly less computational time than MLP, RF, SVR, and XGBoost [31].

3.1.8. CatBoost

CatBoost is a Gradient Boosting algorithm on decision trees developed by Yandex. It is particularly powerful for categorical data and excels in accuracy, handling categorical variables with a permutation-driven alternative to the traditional one-hot encoding used by other algorithms. CatBoost is a powerful tool for classification and regression tasks in Big Data; however, its effectiveness varies across disciplines, and it is sensitive to hyperparameters [32]. For example, CatBoost effectively improved classification performance in corporate failure prediction compared with other advanced approaches [33]. CatBoost also performed remarkably well in predicting river inflow, with higher accuracy than XGBoost and LGBM [34]. However, Anudeep and Thangaraj reported that CatBoost achieved lower accuracy than LR in predicting myocardial infarction [35]. This work uses CatBoost 1.2.7, a stable release.
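The permutation-driven encoding can be sketched as ordered target statistics. Each sample’s category is replaced by a smoothed mean of the targets of earlier samples, in a random permutation, that share the same category. A sample’s own target therefore never leaks into its encoding. The pure-Python sketch below uses a single permutation and a prior weight `a`. CatBoost itself uses several permutations together with ordered boosting, so this is a simplified illustration, and the data are made up.

```python
import random


def ordered_target_statistics(categories, targets, prior, a=1.0, order=None, seed=0):
    """Encode each category value from the targets of *earlier* samples only.

    encoding_i = (sum of earlier targets in the same category + a * prior)
                 / (number of earlier samples in the same category + a)
    """
    if order is None:                       # default: one random permutation
        order = list(range(len(categories)))
        random.Random(seed).shuffle(order)
    sums, counts = {}, {}
    encoded = [0.0] * len(categories)
    for i in order:
        c = categories[i]
        encoded[i] = (sums.get(c, 0.0) + a * prior) / (counts.get(c, 0) + a)
        # Update running statistics only after encoding sample i (no self-leakage).
        sums[c] = sums.get(c, 0.0) + targets[i]
        counts[c] = counts.get(c, 0) + 1
    return encoded


airfoil = ["NACA 0012", "NACA 4412", "NACA 0012", "NACA 4412", "NACA 0030"]
lift = [1.0, 3.0, 1.2, 2.8, 0.5]          # made-up target values
prior = sum(lift) / len(lift)             # global mean used as the prior
print(ordered_target_statistics(airfoil, lift, prior, order=[0, 1, 2, 3, 4]))
```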

3.1.9. XGBoost

XGBoost operates within the Gradient Boosting framework but has been optimized for speed and performance. It generally delivers higher performance than standard Gradient Boosting and supports regularization parameters that help prevent overfitting, an advantage over traditional Gradient Boosting. XGBoost has been reported to offer higher accuracy, precision, and recall than LR, SVR, and RF [36]. However, its classification performance on imbalanced data is often suboptimal [37].
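The regularization mentioned above enters through XGBoost’s penalized objective. The standard formulation below uses the following symbols:

- $g_i$ and $h_i$: the first and second derivatives of the loss with respect to the previous prediction $\hat{y}_i^{(t-1)}$;
- $T$: the number of leaves;
- $w_j$: the leaf weights;
- $I_j$: the set of samples in leaf $j$;
- $\gamma$ and $\lambda$: the regularization parameters.

$$\mathcal{L}^{(t)} = \sum_{i=1}^{n} l\left(y_i,\ \hat{y}_i^{(t-1)} + f_t(\mathbf{x}_i)\right) + \gamma T + \frac{1}{2}\lambda \sum_{j=1}^{T} w_j^2$$

$$w_j^{*} = -\frac{G_j}{H_j + \lambda}, \qquad G_j = \sum_{i \in I_j} g_i, \qquad H_j = \sum_{i \in I_j} h_i$$

$$\text{Gain} = \frac{1}{2}\left[\frac{G_L^2}{H_L + \lambda} + \frac{G_R^2}{H_R + \lambda} - \frac{(G_L + G_R)^2}{H_L + H_R + \lambda}\right] - \gamma$$

A larger $\lambda$ shrinks the leaf weights toward zero, and $\gamma$ rejects splits whose loss reduction does not exceed it. This is how the two parameters counter overfitting.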

3.2. Performance Metrics

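Four performance metrics are used to evaluate the regressors: mean absolute error (MAE), mean square error (MSE), root mean square error (RMSE), and R-squared ($R^2$).

MAE measures the average of the absolute differences between the actual values, $y_i$, and the predicted values, $\hat{y}_i$, as shown in Equation (1):

$$\mathrm{MAE} = \frac{1}{n}\sum_{i=1}^{n}\left|y_i - \hat{y}_i\right| \tag{1}$$

where $n$ is the total number of data points. MSE, on the other hand, measures the average of the squared differences between the actual and predicted values:

$$\mathrm{MSE} = \frac{1}{n}\sum_{i=1}^{n}\left(y_i - \hat{y}_i\right)^2 \tag{2}$$

Because the errors are squared, MSE is more sensitive to large errors. RMSE is calculated as the square root of MSE:

$$\mathrm{RMSE} = \sqrt{\mathrm{MSE}} = \sqrt{\frac{1}{n}\sum_{i=1}^{n}\left(y_i - \hat{y}_i\right)^2} \tag{3}$$

Unlike MSE, RMSE has the same unit as the data, which makes it easier to interpret.

Lastly, $R^2$ is defined as

$$R^2 = 1 - \frac{\sum_{i=1}^{n}\left(y_i - \hat{y}_i\right)^2}{\sum_{i=1}^{n}\left(y_i - \bar{y}\right)^2} \tag{4}$$

where $\bar{y}$ is the mean of the actual values. This metric is an important indicator of the prediction model’s goodness of fit: the greater the $R^2$ value, the better the model.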
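The four metrics can be computed directly from paired actual and predicted values. A minimal pure-Python sketch follows. The function name is illustrative, and the example values are toy numbers rather than results from this study.

```python
import math


def regression_metrics(y_true, y_pred):
    """Return MAE, MSE, RMSE, and R^2 for paired actual/predicted values."""
    n = len(y_true)
    if n == 0 or n != len(y_pred):
        raise ValueError("inputs must be non-empty and of equal length")
    errors = [yt - yp for yt, yp in zip(y_true, y_pred)]
    ss_res = sum(e * e for e in errors)                 # residual sum of squares
    mae = sum(abs(e) for e in errors) / n
    mse = ss_res / n
    rmse = math.sqrt(mse)
    mean_true = sum(y_true) / n
    ss_tot = sum((yt - mean_true) ** 2 for yt in y_true)  # total sum of squares
    r2 = 1.0 - ss_res / ss_tot if ss_tot > 0 else float("nan")
    return {"MAE": mae, "MSE": mse, "RMSE": rmse, "R2": r2}


print(regression_metrics([3.0, -0.5, 2.0, 7.0], [2.5, 0.0, 2.0, 8.0]))
```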

4. Results and Discussion

The performance metrics of the ML algorithms are tabulated in Table 4. For each metric, the row below the values gives the rank of each algorithm. The average of these ranks is then calculated and shown in Table 5. Averaging the ranks normalizes performance across all metrics, allowing the overall best algorithm to be identified.
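The rank-averaging procedure can be sketched as follows. The nested dictionary layout, algorithm names, and scores in the example are hypothetical. Lower values count as better for error metrics and higher values for $R^2$. Tied algorithms share the mean of their ranks, which is an assumption, since tie handling is not specified.

```python
def rank_scores(scores, higher_is_better=False):
    """Rank algorithms for one metric (1 = best); tied scores share their mean rank."""
    ordered = sorted(scores, key=scores.get, reverse=higher_is_better)
    ranks, i = {}, 0
    while i < len(ordered):
        j = i
        while j + 1 < len(ordered) and scores[ordered[j + 1]] == scores[ordered[i]]:
            j += 1                                   # extend over the tied block
        for name in ordered[i:j + 1]:
            ranks[name] = (i + j) / 2 + 1            # positions i..j -> ranks i+1..j+1
        i = j + 1
    return ranks


def average_ranks(results, higher_is_better=("R2",)):
    """Average each algorithm's rank over every (output, metric) column."""
    totals, n_columns = {}, 0
    for per_metric in results.values():
        for metric, scores in per_metric.items():
            for name, rank in rank_scores(scores, metric in higher_is_better).items():
                totals[name] = totals.get(name, 0.0) + rank
            n_columns += 1
    return {name: total / n_columns for name, total in totals.items()}


# Hypothetical scores for three algorithms, two outputs, and two metrics.
results = {
    "drag": {"MAE": {"A": 0.10, "B": 0.20, "C": 0.30}, "R2": {"A": 0.90, "B": 0.95, "C": 0.50}},
    "lift": {"MAE": {"A": 0.05, "B": 0.07, "C": 0.07}, "R2": {"A": 0.99, "B": 0.97, "C": 0.97}},
}
print(average_ranks(results))   # -> {'A': 1.25, 'B': 2.0, 'C': 2.75}
```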

CatBoost ranks first on every metric for the prediction of lift and moment. For drag, it ranks first on one metric and second on the remaining three. Drag is best predicted by LGBM, which ranks first on three of the four metrics.

The average rank shows that CatBoost is ranked first, with an average rank of 1.33, followed by GB and XGBoost at 2.83 and 3.25, respectively. LR ranks last, with an average rank of 8.67; it places eighth or ninth on every performance metric for the drag, lift, and moment predictions.

Based on the results in Table 5, CatBoost provided the best performance. The hydro turbine dataset used in this study suits CatBoost’s ability to handle heterogeneous data, particularly data comprising both numerical and categorical variables. Its ordered boosting technique mitigates prediction shift and target leakage, two significant issues that degrade performance in classic Gradient Boosting. Consequently, even with relatively small datasets such as this one, CatBoost can capture complex nonlinear relationships more precisely.

CatBoost uses ordered target encoding to manage categorical data effectively, eliminating the need for laborious preprocessing steps such as one-hot encoding. This is particularly pertinent because the lift and drag outputs are strongly influenced by categorical turbine design parameters, including airfoil type and blade configuration. The algorithm’s native handling of these attributes is therefore likely to contribute directly to its higher precision.

CatBoost’s robustness also stems from its symmetric tree structure, which keeps model complexity uniform and thereby mitigates overfitting, a prevalent issue when forecasting highly nonlinear phenomena such as lift and drag. Automated parameter selection and integrated regularization further strengthen learning robustness, particularly when the dataset is affected by multicollinearity or noise.

Because LR and SVR, as used here, behave as essentially linear models built on overly simplistic assumptions about feature interactions, their weaker performance is expected. The nonlinear, coupled nature of the fluid dynamics that generates lift and drag makes linear regressors inadequate for modeling these interactions. SVR is further constrained by mixed data types and categorical variables, as it performs best with purely numerical, appropriately scaled data.

Tree-based ensemble methods such as Gradient Boosting, by contrast, proved effective owing to their capacity to model nonlinearities. Compared with Gradient Boosting, CatBoost handles categorical variables more effectively and addresses target leakage more proficiently. These improvements in managing categorical features and leakage allow CatBoost to achieve better generalization and higher predictive accuracy on the multivariate hydrodynamic outputs.

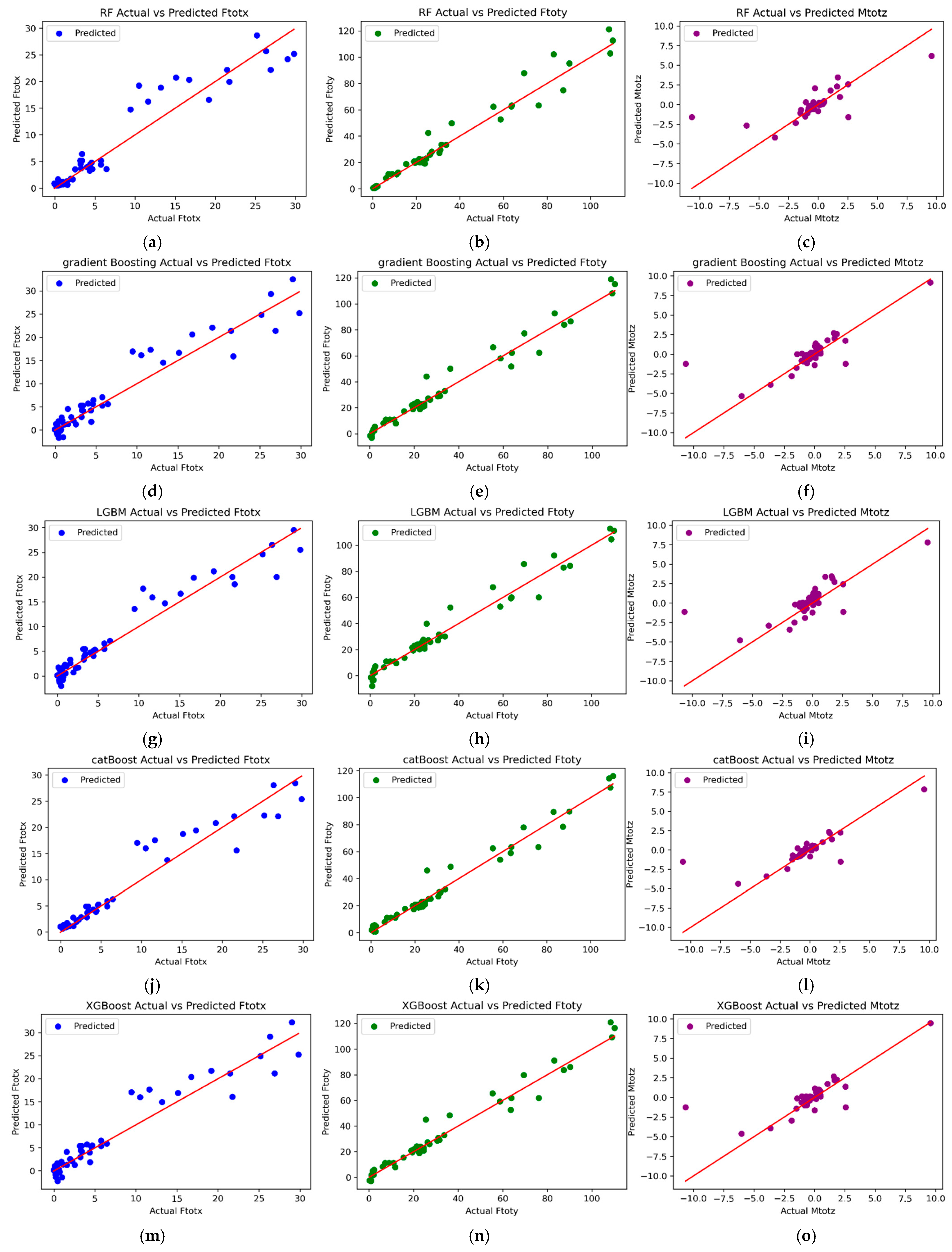

Figure 5 and Figure 6 display scatter plots of the actual versus predicted drag (Ftotx), lift (Ftoty), and moment (Mtotz) for the ML methods examined in this study. Among all models, CatBoost’s predictions lie closest to the ideal (predicted = actual) line for all three outputs. This agrees with CatBoost’s superior quantitative results: higher R² values and lower MAE and RMSE. The tight clustering of points around the ideal line indicates that CatBoost captures the nonlinear relationships governing the hydrodynamic forces well. For drag force (Ftotx), LGBM’s points lie marginally closer to the ideal line than CatBoost’s, consistent with its slightly better drag accuracy in the error analysis. For lift force (Ftoty) and moment (Mtotz), however, CatBoost is again the most consistent.

In contrast, models such as LR and SVR show clear signs of underfitting. Their scatter plots are nearly flat: the predicted values vary little despite large fluctuations in the actual values. This indicates that these models cannot adequately represent the complex nonlinear relationships between blade geometry parameters, flow conditions, and the resulting hydrodynamic forces. Tree-based ensemble models such as Random Forest, Gradient Boosting, and XGBoost show better predictive trends than LR. Nonetheless, their scatter plots show greater dispersion for moment prediction, indicating weaker generalization. These models also appear less effective than CatBoost at handling categorical variables and interaction terms. Overall, the scatter plot analysis confirms the quantitative evaluation: CatBoost produces the most precise and consistent predictions of drag, lift, and moment.

5. Conclusions

In this paper, CatBoost, LightGBM, and Gradient Boosting were found to predict lift, drag, and moment with high accuracy; these quantities play a major role in the design of hydro turbine blades. CatBoost’s high forecast accuracy can be attributed to its ability to handle categorical variables such as airfoil type, which strongly influence these quantities. Its capacity to model complex nonlinear relationships also contributes substantially to its accuracy.

In contrast, Linear Regression and Support Vector Regression were substantially less successful. This can be attributed to the nature of these models, which cannot capture nonlinear, multivariate relationships such as those found in fluid dynamics. The outcome also reflects the experimental dataset, which combines numerical and categorical variables with highly nonlinear relationships. Hence, tree-based models are well suited to this prediction task.

Future work will pursue three lines of research: extending the experimental dataset so that deep learning models can be applied, developing systematic hyperparameter tuning to improve the models’ predictive ability, and incorporating the predictive models into the hydro turbine design optimization procedure.

Author Contributions

Conceptualization, N.A.A.A. and N.H.A.A.; methodology, S.T., D.H., G.A.L.S., and A.S.A.; software, S.T. and D.H.; validation, N.A.A.A. and N.H.A.A.; formal analysis, N.A.A.A.; investigation, S.T., D.H., and G.A.L.S.; resources, A.S.A. and D.H.; data curation, N.M.; writing—original draft preparation, A.K.G. and N.M.; writing—literature review, A.K.G.; writing—review and editing, N.A.A.A. and N.H.A.A.; algorithm development, N.A.A.A.; supervision, N.H.A.A.; project administration, N.H.A.A.; funding acquisition, N.H.A.A. All authors have read and agreed to the published version of the manuscript.

Funding

This research was funded by a collaborative grant provided by Multimedia University and Universitas Indonesia (MMUI/24000). The study was carried out by researchers from both institutions and the APC was funded by Multimedia University.

Data Availability Statement

The datasets generated and analyzed during the current study are not publicly available due to institutional data-sharing restrictions but are available from the corresponding author on reasonable request.

Acknowledgments

We would like to thank Universitas Indonesia for providing the laboratory facilities and experimental support, and Multimedia University for the research support and resources provided throughout this study.

Conflicts of Interest

The authors declare no conflicts of interest.

References

1. Di Grande, S.; Berlotti, M.; Cavalieri, S.; Gueli, R. A Machine Learning Approach to Forecasting Hydropower Generation. Energies 2024, 17, 5163.

2. Sanduru, B. Renewable self electricity techniques by hydro turbine generation. E3S Web Conf. 2024, 564, 01004.

3. Wang, Q.; Guo, J.; Li, R. Better renewable with economic growth without carbon growth: A comparative study of impact of turbine, photovoltaics, and hydropower on economy and carbon emission. J. Clean. Prod. 2023, 426, 139046.

4. Gimhan, K.; Gayantha, L. Aerodynamics of Wind Turbines. Available online: https://www.researchgate.net/publication/391485976_AERODYNAMICS_OF_WIND_TURBINES (accessed on 10 November 2025).

5. Chen, X.; Wang, Z.; Deng, L.; Yan, J.; Gong, C.; Yang, B.; Wang, Q.; Zhang, Q.; Yang, L.; Pang, Y.; et al. Towards a new paradigm in intelligence-driven computational fluid dynamics simulations. Eng. Appl. Comput. Fluid Mech. 2024, 18, 2060.

6. Ismaiel, A. A Multivariate Machine Learning Approach for the Prediction of Wind Turbine Blade Structural Dynamics. Appl. Syst. Innov. 2025, 8, 12.

7. Musso, M.F.; Cascallar, E.C.; Bostani, N.; Crawford, M. Identifying Reliable Predictors of Educational Outcomes Through Machine-Learning Predictive Modeling. Front. Educ. 2020, 5, 104.

8. Vimont, A.; Leleu, H.; Durand-Zaleski, I. Machine learning versus regression modelling in predicting individual healthcare costs from a representative sample of the nationwide claims database in France. Eur. J. Heal. Econ. 2021, 23, 211–223.

9. Fernández-Delgado, M.; Sirsat, M.; Cernadas, E.; Alawadi, S.; Barro, S.; Febrero-Bande, M. An extensive experimental survey of regression methods. Neural Netw. 2019, 111, 11–34.

10. Ahmed, S.; Kamal, K.; Ratlamwala, T.A.H.; Mathavan, S.; Hussain, G.; Alkahtani, M.; Alsultan, M.B.M. Aerodynamic Analyses of Airfoils Using Machine Learning as an Alternative to RANS Simulation. Appl. Sci. 2022, 12, 5194.

11. Du, Q.; Li, Y.; Yang, L.; Liu, T.; Zhang, D.; Xie, Y. Performance prediction and design optimization of turbine blade profile with deep learning method. Energy 2022, 254, 124351.

12. Huang, X.; Lu, Q.; Zhou, H.; Huang, W.; Wang, S. Study on hydroturbine power trend prediction based on machine learning. Energy Rep. 2023, 10, 1996–2005.

13. Teimourian, A.; Rohacs, D.; Dimililer, K.; Teimourian, H.; Yildiz, M.; Kale, U. Airfoil aerodynamic performance prediction using machine learning and surrogate modeling. Heliyon 2024, 10, e29377.

14. Singh, M. A Hybrid—Machine Learning and Possibilistic—Methodology for Predicting Produced Power Using Wind Turbine SCADA Data. PHM Soc. Eur. Conf. 2024, 8, 15.

15. Bi, J.-X.; Fan, W.-Z.; Wang, Y.; Ren, J.; Li, H.-B. A Fault Diagnosis Algorithm for Wind Turbine Blades Based on BP Neural Network. IOP Conf. Ser. Mater. Sci. Eng. 2025, 1340, 022032.

16. Zhao, P.; Gao, X.; Zhao, B.; Liu, H.; Wu, J.; Deng, Z. Machine Learning Assisted Prediction of Airfoil Lift-to-Drag Characteristics for Mars Helicopter. Aerospace 2023, 10, 614.

17. Fitriadhy, A.; Nabila, I.N.; Grosnin, C.B.; Mahmuddin, F.; Baso, S. Computational Investigation into Prediction of Lift Force and Resistance of a Hydrofoil Ship. CFD Lett. 2022, 14, 51–66.

18. Malecha, Z.; Sobczyk, A. Using Artificial Intelligence to Predict the Aerodynamic Properties of Wind Turbine Profiles. Computers 2024, 13, 167.

19. Cappugi, L.; Castorrini, A.; Bonfiglioli, A.; Minisci, E.; Campobasso, M.S. Machine learning-enabled prediction of wind turbine energy yield losses due to general blade leading edge erosion. Energy Convers. Manag. 2021, 245, 114567.

20. Al-Fatlawi, A.W.; Hashemi, J.; Hossain, S.; Assad, M.E.H. Applying Machine Learning in CFD to Study the Impact of Thermal Characteristics on the Aerodynamic Characteristics of an Airfoil. J. Appl. Fluid Mech. 2024, 17, 742–755.

21. Masood, Z.; Khan, S.; Qian, L. Machine learning-based surrogate model for accelerating simulation-driven optimisation of hydropower Kaplan turbine. Renew. Energy 2021, 173, 827–848.

22. Maulud, D.; Abdulazeez, A.M. A Review on Linear Regression Comprehensive in Machine Learning. J. Appl. Sci. Technol. Trends 2020, 1, 140–147.

23. McKearnan, S.B.; Vock, D.M.; Marai, G.E.; Canahuate, G.; Fuller, C.D.; Wolfson, J. Feature selection for support vector regression using a genetic algorithm. Biostatistics 2023, 24, 295–308.

24. Musa, B.; Yimen, N.; Abba, S.I.; Adun, H.H.; Dagbasi, M. Multi-State Load Demand Forecasting Using Hybridized Support Vector Regression Integrated with Optimal Design of Off-Grid Energy Systems—A Metaheuristic Approach. Processes 2021, 9, 1166.

25. Taunk, K.; De, S.; Verma, S.; Swetapadma, A. A Brief Review of Nearest Neighbor Algorithm for Learning and Classification. In Proceedings of the 2019 International Conference on Intelligent Computing and Control Systems (ICCS), Madurai, India, 15–17 May 2019; pp. 1255–1260.

26. Zhao, X.; Wu, Y.; Lee, D.L.; Cui, W. iForest: Interpreting Random Forests via Visual Analytics. IEEE Trans. Vis. Comput. Graph. 2019, 25, 407–416.

27. Baig, M.A.; Shaikh, S.A.; Khatri, K.K.; Shaikh, M.A.; Khan, M.Z.; Rauf, M.A. Prediction of Students Performance Level Using Integrated Approach of ML Algorithms. Int. J. Emerg. Technol. Learn. 2023, 18, 216–234.

28. Zelený, O.; Fryza, T. Multi-Branch Multi Layer Perceptron: A Solution for Precise Regression using Machine Learning. In Proceedings of the 2023 33rd International Conference Radioelektronika, Pardubice, Czech Republic, 19–20 April 2023; pp. 1–5.

29. Schonlau, M.; Zou, R.Y. The random forest algorithm for statistical learning. Stata J. Promot. Commun. Stat. Stata 2020, 20, 3–29.

30. Zhang, Z.; Zhao, Y.; Canes, A.; Steinberg, D.; Lyashevska, O.; AME Big-Data Clinical Trial Collaborative Group. Predictive analytics with gradient boosting in clinical medicine. Ann. Transl. Med. 2019, 7, 152.

31. Zhang, J.; Mucs, D.; Norinder, U.; Svensson, F. LightGBM: An Effective and Scalable Algorithm for Prediction of Chemical Toxicity–Application to the Tox21 and Mutagenicity Data Sets. J. Chem. Inf. Model. 2019, 59, 4150–4158.

32. Hancock, J.T.; Khoshgoftaar, T.M. CatBoost for big data: An interdisciplinary review. J. Big Data 2020, 7, 94.

33. Ben Jabeur, S.; Gharib, C.; Mefteh-Wali, S.; Ben Arfi, W. CatBoost model and artificial intelligence techniques for corporate failure prediction. Technol. Forecast. Soc. Change 2021, 166, 120658.

34. Kumar, V.; Kedam, N.; Sharma, K.V.; Mehta, D.J.; Caloiero, T. Advanced Machine Learning Techniques to Improve Hydrological Prediction: A Comparative Analysis of Streamflow Prediction Models. Water 2023, 15, 2572.

35. Anudeep, R.; Thangaraj, S.J.J. Accurate Prediction of Myocardial Infarction by Comparing Logistic Regression Algorithm with CatBoost Classifier. E3S Web Conf. 2023, 399, 04019.

36. Liu, J.; Wu, J.; Liu, S.; Li, M.; Hu, K.; Li, K. Predicting mortality of patients with acute kidney injury in the ICU using XGBoost model. PLoS ONE 2021, 16, e0246306.

37. Zhang, P.; Jia, Y.; Shang, Y. Research and application of XGBoost in imbalanced data. Int. J. Distrib. Sens. Netw. 2022, 18, 15501329221106935.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.