A Stock Price Prediction Network That Integrates Multi-Scale Channel Attention Mechanism and Sparse Perturbation Greedy Optimization

Abstract

1. Introduction

Review

- We construct a stock price data set spanning four industries, with minute-level prices from 24 January 2024 to 18 October 2024 and 45,600 records for each series. The data set captures a wide range of stock trend characteristics, which supports a deeper understanding of market dynamics.

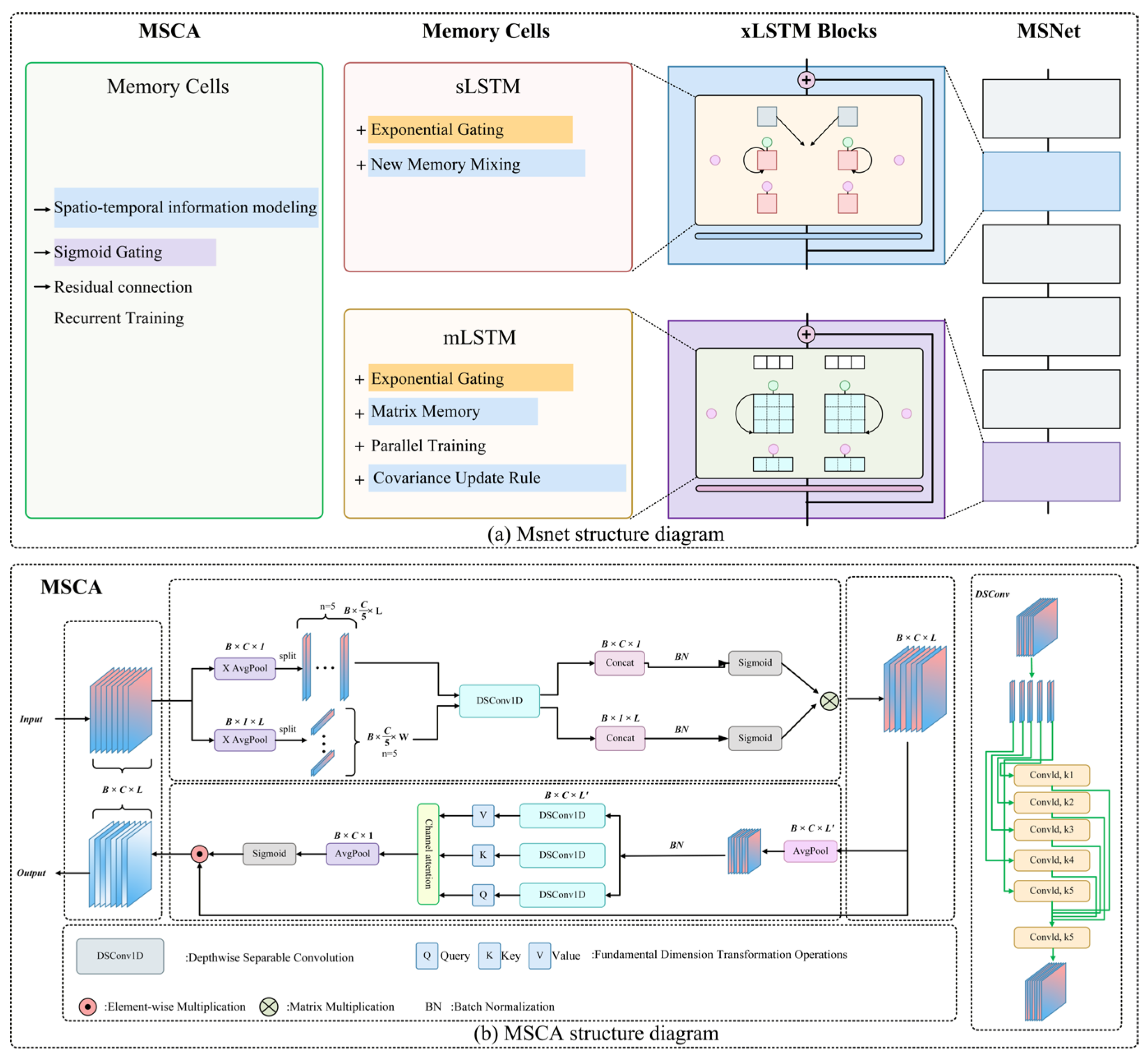

- We propose a new multi-scale channel attention (MSCA) module that strengthens temporal feature representation through channel-time gating and grouped multi-scale separable convolution. First, the channel-time gating mechanism decouples the time dimension from the channel dimension and dynamically separates high-confidence periods from low-confidence periods, and important factors from secondary ones. Then, grouped multi-scale separable convolution extracts stock market features in parallel at several receptive-field scales, and the attention weights are generated by fusing three convolution branches with two gating branches, which retains the key price features. This design is intended to improve the model's predictive ability under stock market uncertainty.

- We propose a new sparse perturbation greedy optimization (SPGO) algorithm that improves the traditional greedy search mechanism with sparse random perturbation and a differential evolution strategy. First, a trial solution is constructed for each individual of the population in every generation, and a greedy replacement criterion accelerates convergence. Then, a sparse random radius and continuous differential perturbation are introduced to maintain population diversity and mitigate premature convergence in strongly noisy environments. The algorithm lets the model quickly fine-tune its output layer within the short-term fluctuation range, improving generalization stability under short-term market fluctuations.

- The proposed xLSTM-based MSNet achieves an MSE of 0.0093 and an MAE of 0.0152 on the self-built data set, and it remains effective on long stock series. Overall, the results indicate that the method predicts prices accurately in a highly uncertain stock market.
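
The MSCA contribution listed above can be illustrated with a small forward-pass sketch. This is not the authors' implementation: the kernel sizes (3, 5, 7), the number of groups, the random untrained weights, the names `init_params` and `msca_forward`, and the way the three convolution branches and two gates are fused (a product of sigmoids) are all assumptions made for illustration. Only the overall structure (two gates, three grouped separable branches, one fused attention map) follows the description.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def depthwise_conv1d(x, kernels):
    """Per-channel 'same' convolution along time. x: (C, T); kernels: (C, k), k odd."""
    k = kernels.shape[1]
    pad = k // 2
    xp = np.pad(x, ((0, 0), (pad, pad)), mode="edge")
    out = np.empty_like(x)
    for c in range(x.shape[0]):
        out[c] = np.convolve(xp[c], kernels[c], mode="valid")
    return out

def init_params(channels, groups=2, kernel_sizes=(3, 5, 7), seed=0):
    """Random, untrained weights (hypothetical layout, for illustration only)."""
    rng = np.random.default_rng(seed)
    cg = channels // groups
    return {
        "w_c": rng.normal(size=(channels, 1)),  # channel-gate weights
        "w_t": 1.0,                             # time-gate weight
        "depthwise": [rng.normal(scale=0.3, size=(channels, k)) for k in kernel_sizes],
        "pointwise": [rng.normal(scale=0.3, size=(groups, cg, cg)) for _ in kernel_sizes],
    }

def msca_forward(x, params, groups=2):
    """Assumed MSCA-style forward pass on one sample x of shape (C, T)."""
    x = np.asarray(x, dtype=float)
    C, T = x.shape
    assert C % groups == 0, "channels must be divisible by groups"
    # Two-way gating: one gate per channel, one gate per time step.
    chan_gate = sigmoid(params["w_c"] * x.mean(axis=1, keepdims=True))  # (C, 1)
    time_gate = sigmoid(params["w_t"] * x.mean(axis=0, keepdims=True))  # (1, T)
    # Three separable branches: depthwise conv, then grouped pointwise mixing.
    fused = np.zeros_like(x)
    for dw, pw in zip(params["depthwise"], params["pointwise"]):
        y = depthwise_conv1d(x, dw).reshape(groups, C // groups, T)
        fused += np.einsum("gij,gjt->git", pw, y).reshape(C, T)
    # Fuse branches and gates into one attention map with values in [0, 1].
    attn = sigmoid(fused) * chan_gate * time_gate
    return x * attn, attn

x = np.random.default_rng(1).standard_normal((4, 16))  # 4 channels, 16 time steps
out, attn = msca_forward(x, init_params(4))
print(out.shape, float(attn.min()), float(attn.max()))
```

Because the attention map is a product of sigmoids, it can only attenuate the input, never amplify it. A trained module would learn the weights rather than draw them at random.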

2. Datasets and Methodology

2.1. Data Acquisition and Preprocessing

2.2. MSNet

2.2.1. Multi-Scale Channel Attention (MSCA)

2.2.2. Sparse Perturbation Greedy Optimization (SPGO)

| Algorithm 1: Sparse Perturbation Greedy Optimization (SPGO) |
|---|
| Input: |
| Initial parameter vector to be optimized |
| F(⋅): Gradient-free objective function |
| N: Population size |
| Lower and upper bounds of box constraints |
| Standard deviation for initial population perturbation |
| cab: Update coefficient for guiding trajectories |
| Scaling factor for differential evolution |
| Coefficient for contrastive search direction |
| Pseudocode: |
| Initialize |
| While t < T do: |
| Calculate |
| Rank population; identify Top 25% (Elite) and Bottom 25% (Inferior) |
| For i = 1 to N do: |
| Set ; Sample R from Elite, W from Inferior |
| If rand() < 0.5 then: |
| Update |
| Else: |
| Update |
| Compute |
| Select from population |
| Mutation |
| Crossover: Generate Candidate 2 (xd) via binomial crossover on v |
| Generate mask M; |
| Boundary Handling: |
| Evaluate |
| then: |
| End For |
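
The control flow of Algorithm 1 can be sketched in Python as follows. The update formulas did not survive in the listing above, so every formula here is an assumption: the guided step toward an elite individual, the contrastive step along the elite-minus-inferior direction, the DE/rand/1 mutation, the Gaussian sparse perturbation, and clipping as boundary handling. The parameter names (`gamma`, `scale_f`, `cr`, `sparsity`, `radius`) and the function name `spgo_sketch` are hypothetical. For brevity, the mutation indices are not forced to differ from `i`.

```python
import numpy as np

def spgo_sketch(f, x0, lower, upper, n_pop=20, n_iter=100, sigma=0.1,
                gamma=0.5, scale_f=0.5, cr=0.9, sparsity=0.2, radius=0.05, seed=0):
    """Gradient-free minimisation of f under box constraints (assumed formulas)."""
    rng = np.random.default_rng(seed)
    x0 = np.asarray(x0, dtype=float)
    dim = x0.size
    # Initialise the population as Gaussian perturbations of the start vector.
    pop = np.clip(x0 + sigma * rng.standard_normal((n_pop, dim)), lower, upper)
    pop[0] = np.clip(x0, lower, upper)  # keep the unperturbed start point
    fit = np.array([f(p) for p in pop])
    n_q = max(1, n_pop // 4)            # size of the top / bottom 25%
    for _ in range(n_iter):
        order = np.argsort(fit)
        elite, inferior = order[:n_q], order[-n_q:]
        for i in range(n_pop):
            r = pop[rng.choice(elite)]
            w = pop[rng.choice(inferior)]
            # Candidate 1: guided step (toward elite) or contrastive step
            # (toward elite and away from inferior), chosen at random.
            if rng.random() < 0.5:
                cand1 = pop[i] + gamma * (r - pop[i])
            else:
                cand1 = pop[i] + gamma * (r - w)
            # Candidate 2: differential mutation plus binomial crossover.
            a, b, c = rng.choice(n_pop, size=3, replace=False)
            v = pop[a] + scale_f * (pop[b] - pop[c])
            cross = rng.random(dim) < cr
            cross[rng.integers(dim)] = True  # at least one gene from v
            cand2 = np.where(cross, v, pop[i])
            # Sparse perturbation: only the masked coordinates are jittered.
            mask = rng.random(dim) < sparsity
            cand2 = cand2 + mask * radius * rng.standard_normal(dim)
            # Boundary handling and greedy replacement.
            for cand in (cand1, cand2):
                cand = np.clip(cand, lower, upper)
                fc = f(cand)
                if fc < fit[i]:
                    pop[i], fit[i] = cand, fc
    best = int(np.argmin(fit))
    return pop[best], float(fit[best])

def sphere(x):
    return float(np.sum(np.asarray(x) ** 2))

x_best, f_best = spgo_sketch(sphere, [2.0, -1.5, 1.0], lower=-5.0, upper=5.0)
print(x_best, f_best)
```

Greedy replacement makes each individual's fitness non-increasing across generations, so the returned value is never worse than the objective at the clipped start vector.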

3. Results and Analysis

3.1. Experimental Environment and Training Details

3.2. Evaluation Metric
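
The result tables report MSE, MAE, RMSE, and R2. A minimal sketch of these four metrics under their standard definitions is given here; whether the paper computes them in exactly this way (for example, on normalized prices or averaged over windows) is an assumption, and the function name `regression_metrics` is hypothetical.

```python
import math

def regression_metrics(y_true, y_pred):
    """Standard MSE, MAE, RMSE and coefficient of determination (R2)."""
    n = len(y_true)
    errors = [t - p for t, p in zip(y_true, y_pred)]
    mse = sum(e * e for e in errors) / n
    mae = sum(abs(e) for e in errors) / n
    rmse = math.sqrt(mse)
    mean_true = sum(y_true) / n
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)  # total sum of squares
    ss_res = mse * n                                    # residual sum of squares
    r2 = 1.0 - ss_res / ss_tot
    return {"MSE": mse, "MAE": mae, "RMSE": rmse, "R2": r2}

print(regression_metrics([1.0, 2.0, 3.0, 4.0], [1.0, 2.0, 3.0, 5.0]))
```

Under these definitions RMSE is the square root of MSE, and R2 is 1 minus the ratio of the residual to the total sum of squares.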

3.3. Module Effectiveness Experiments

3.3.1. Effectiveness of xLSTM

3.3.2. Effectiveness of MSCA

3.3.3. Effectiveness of SPGO

3.3.4. Model Stability Testing

3.3.5. Comparison Experiments with State-of-the-Art Methods

3.3.6. Discussion

4. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

1. Zhu, W.; Dai, W.; Tang, C.; Zhou, G.; Liu, Z.; Zhao, Y. MSNet: A time series forecasting model for Chinese stock price prediction. Sci. Rep. 2024, 14, 18351.

2. Tang, H. Stock prices prediction based on ARMA model. In Proceedings of the 2021 International Conference on Computer, Blockchain and Financial Development (CBFD), Nanjing, China, 23–25 April 2021; IEEE: New York, NY, USA, 2021.

3. Ariyo, A.A.; Adewumi, A.O.; Ayo, C.K. Stock price prediction using the ARIMA model. In Proceedings of the 2014 UKSim-AMSS 16th International Conference on Computer Modelling and Simulation, Cambridge, UK, 26–28 March 2014; IEEE: New York, NY, USA, 2014.

4. Gao, Y.; Wang, R.; Zhou, E. Stock prediction based on optimized LSTM and GRU models. Sci. Program. 2021, 2021, 4055281.

5. Hoseinzade, E.; Haratizadeh, S. CNNpred: CNN-based stock market prediction using a diverse set of variables. Expert Syst. Appl. 2019, 129, 273–285.

6. Chen, Y.-C.; Huang, W.-C. Constructing a stock-price forecast CNN model with gold and crude oil indicators. Appl. Soft Comput. 2021, 112, 107760.

7. Chen, K.; Zhou, Y.; Dai, F. A LSTM-based method for stock returns prediction: A case study of China stock market. In Proceedings of the 2015 IEEE International Conference on Big Data (Big Data), Santa Clara, CA, USA, 29 October–1 November 2015; IEEE: New York, NY, USA, 2015.

8. Roondiwala, M.; Patel, H.; Varma, S. Predicting stock prices using LSTM. Int. J. Sci. Res. 2017, 6, 1754–1756.

9. Chen, C.; Xue, L.; Xing, W. Research on improved GRU-based stock price prediction method. Appl. Sci. 2023, 13, 8813.

10. Qi, C.; Ren, J.; Su, J. GRU neural network based on CEEMDAN–wavelet for stock price prediction. Appl. Sci. 2023, 13, 7104.

11. Lu, W.; Li, J.; Wang, J.; Qin, L. A CNN-BiLSTM-AM method for stock price prediction. Neural Comput. Appl. 2021, 33, 4741–4753.

12. Baek, Y.; Kim, H.Y. ModAugNet: A new forecasting framework for stock market index value with an overfitting prevention LSTM module and a prediction LSTM module. Expert Syst. Appl. 2018, 113, 457–480.

13. Zhu, P.; Li, Y.; Hu, Y.; Xiang, S.; Liu, Q.; Cheng, D.; Liang, Y. MCI-GRU: Stock Prediction Model Based on Multi-Head Cross-Attention and Improved GRU. arXiv 2024, arXiv:2410.20679.

14. Lu, W.; Li, J.; Li, Y.; Sun, A.; Wang, J. A CNN-LSTM-based model to forecast stock prices. Complexity 2020, 2020, 6622927.

15. Beck, M.; Pöppel, K.; Spanring, M.; Auer, A.; Prudnikova, O.; Kopp, M.; Klambauer, G.; Brandstetter, J.; Hochreiter, S. xLSTM: Extended long short-term memory. Adv. Neural Inf. Process. Syst. 2024, 37, 107547–107603.

16. Yuan, F.; Huang, X.; Jiang, H.; Jiang, Y.; Zuo, Z.; Wang, L.; Wang, Y.; Gu, S.; Peng, Y. An xLSTM–XGBoost Ensemble Model for Forecasting Non-Stationary and Highly Volatile Gasoline Price. Computers 2025, 14, 256.

17. Yadav, A.; Jha, C.K.; Sharan, A. Optimizing LSTM for time series prediction in Indian stock market. Procedia Comput. Sci. 2020, 167, 2091–2100.

18. Ali, M.; Khan, D.M.; Alshanbari, H.M.; El-Bagoury, A.A.-A.H. Prediction of complex stock market data using an improved hybrid EMD-LSTM model. Appl. Sci. 2023, 13, 1429.

19. Eapen, J.; Bein, D.; Verma, A. Novel deep learning model with CNN and bi-directional LSTM for improved stock market index prediction. In Proceedings of the 2019 IEEE 9th Annual Computing and Communication Workshop and Conference (CCWC), Las Vegas, NV, USA, 7–9 January 2019; IEEE: New York, NY, USA, 2019.

20. Gülmez, B. Stock price prediction with optimized deep LSTM network with artificial rabbits optimization algorithm. Expert Syst. Appl. 2023, 227, 120346.

21. Gupta, U.; Bhattacharjee, V.; Bishnu, P.S. StockNet—GRU based stock index prediction. Expert Syst. Appl. 2022, 207, 117986.

22. Baek, H. A CNN-LSTM stock prediction model based on genetic algorithm optimization. Asia-Pac. Financ. Mark. 2024, 31, 205–220.

23. Lambora, A.; Gupta, K.; Chopra, K. Genetic algorithm-A literature review. In Proceedings of the 2019 International Conference on Machine Learning, Big Data, Cloud and Parallel Computing (COMITCon), Faridabad, India, 14–16 February 2019; IEEE: New York, NY, USA, 2019.

24. Stankevičienė, J.; Akelaitis, S. Impact of public announcements on stock prices: Relation between values of stock prices and the price changes in Lithuanian stock market. Procedia Soc. Behav. Sci. 2014, 156, 538–542.

25. Dai, W.; Zhu, W.; Zhou, G.; Liu, G.; Xu, J.; Zhou, H.; Hu, Y.; Liu, Z.; Li, J.; Li, L. AISOA-SSformer: An effective image segmentation method for rice leaf disease based on the transformer architecture. Plant Phenomics 2024, 6, 0218.

26. Huang, Z.; Wang, X.; Wei, Y.; Huang, L.; Shi, H.; Liu, W.; Huang, T.S. CCNet: Criss-cross attention for semantic segmentation. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Seoul, Republic of Korea, 27–28 October 2019.

27. Liu, R.; Wang, T.; Zhang, X.; Zhou, X. DA-Res2UNet: Explainable blood vessel segmentation from fundus images. Alex. Eng. J. 2023, 68, 539–549.

28. Pandian, J.A.; Kumar, V.D.; Geman, O.; Hnatiuc, M.; Arif, M.; Kanchanadevi, K. Plant disease detection using deep convolutional neural network. Appl. Sci. 2022, 12, 6982.

29. Cheng, D.; Meng, G.; Cheng, G.; Pan, C. SeNet: Structured edge network for sea-land segmentation. IEEE Geosci. Remote. Sens. Lett. 2017, 14, 247–251.

30. Woo, S.; Park, J.; Lee, J.Y.; Kweon, I.S. CBAM: Convolutional block attention module. In Proceedings of the European Conference on Computer Vision (ECCV), Munich, Germany, 8–14 September 2018.

31. Hou, Q.; Zhou, D.; Feng, J. Coordinate attention for efficient mobile network design. arXiv 2021, arXiv:2103.02907.

32. Si, Y.; Xu, H.; Zhu, X.; Zhang, W.; Dong, Y.; Chen, Y.; Li, H. SCSA: Exploring the synergistic effects between spatial and channel attention. Neurocomputing 2025, 634, 129866.

33. Ruder, S. An overview of gradient descent optimization algorithms. arXiv 2016, arXiv:1609.04747.

34. Kingma, D.P.; Ba, J. Adam: A method for stochastic optimization. arXiv 2014, arXiv:1412.6980.

35. Loshchilov, I.; Hutter, F. Decoupled weight decay regularization. arXiv 2017, arXiv:1711.05101.

36. Gao, H.; Zhang, Q. Alpha evolution: An efficient evolutionary algorithm with evolution path adaptation and matrix generation. Eng. Appl. Artif. Intell. 2024, 137, 109202.

37. Reingold, E.M.; Tarjan, R.E. On a greedy heuristic for complete matching. SIAM J. Comput. 1981, 10, 676–681.

38. Misra, D.; Nalamada, T.; Arasanipalai, A.U.; Hou, Q. Rotate to attend: Convolutional triplet attention module. In Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision, Online, 5–9 January 2021.

39. Scabini, L.; Zielinski, K.M.; Ribas, L.C.; Gonçalves, W.N.; De Baets, B.; Bruno, O.M. RADAM: Texture recognition through randomized aggregated encoding of deep activation maps. Pattern Recognit. 2023, 143, 109802.

40. Marini, F.; Walczak, B. Particle swarm optimization (PSO). A tutorial. Chemom. Intell. Lab. Syst. 2015, 149, 153–165.

41. Greff, K.; Srivastava, R.K.; Koutník, J.; Steunebrink, B.R.; Schmidhuber, J. LSTM: A search space odyssey. IEEE Trans. Neural Netw. Learn. Syst. 2016, 28, 2222–2232.

42. Huang, Z.; Xu, W.; Yu, K. Bidirectional LSTM-CRF models for sequence tagging. arXiv 2015, arXiv:1508.01991.

43. Chung, J.; Gulcehre, C.; Cho, K.; Bengio, Y. Empirical evaluation of gated recurrent neural networks on sequence modeling. arXiv 2014, arXiv:1412.3555.

44. Zhou, T.; Ma, Z.; Wen, Q.; Wang, X.; Sun, L.; Jin, R. FEDformer: Frequency enhanced decomposed transformer for long-term series forecasting. In Proceedings of the International Conference on Machine Learning PMLR, Baltimore, MD, USA, 17–23 July 2022.

45. Zhou, H.; Zhang, S.; Peng, J.; Zhang, S.; Li, J.; Xiong, H.; Zhang, W. Informer: Beyond efficient transformer for long sequence time-series forecasting. In Proceedings of the AAAI Conference on Artificial Intelligence, Online, 2–9 February 2021; Volume 35.

| Environment | Component | Specification |
|---|---|---|
| Hardware environment | CPU | 16 vCPU Intel(R) Xeon(R) Platinum 8481C |
| | GPU | NVIDIA GeForce RTX 4090 |
| | RAM | 32 GB |
| | Video Memory | 24 GB |
| Software environment | OS | Ubuntu 20.04 |
| | CUDA Toolkit | V11.3 |
| | CUDNN | V8.2.4 |
| | Python | 3.8.10 |
| | torch | 1.11.0 |
| | torchvision | 0.14.1 |

| Data | Short | Short | Mid | Mid | Long | Long |
|---|---|---|---|---|---|---|
| Method | LSTM | xLSTM | LSTM | xLSTM | LSTM | xLSTM |
| MSE | 0.0079 | 0.0065 | 0.0107 | 0.0092 | 0.0197 | 0.0136 |
| MAE | 0.0154 | 0.0124 | 0.0195 | 0.0178 | 0.0169 | 0.0169 |
| RMSE | 0.0168 | 0.0147 | 0.0214 | 0.0163 | 0.0214 | 0.0214 |
| R2 | 0.9365 | 0.9483 | 0.8938 | 0.9137 | 0.8553 | 0.8741 |

| Method | MSE | MAE | RMSE | R2 |
|---|---|---|---|---|
| SE | 0.0183 | 0.0191 | 0.0237 | 0.8446 |
| CBAM | 0.0149 | 0.0168 | 0.0212 | 0.8761 |
| TA | 0.0195 | 0.0199 | 0.0223 | 0.8638 |
| CA | 0.0143 | 0.0165 | 0.0208 | 0.8795 |
| MSCA | 0.0127 | 0.0161 | 0.0191 | 0.8878 |

| Method | MSE | MAE | RMSE | R2 |
|---|---|---|---|---|
| Adam | 0.0136 | 0.0169 | 0.0214 | 0.8741 |
| AdamW | 0.0144 | 0.0173 | 0.0214 | 0.8734 |
| RAdam | 0.0155 | 0.0181 | 0.0244 | 0.8621 |
| PSO | 0.0167 | 0.0183 | 0.0225 | 0.8602 |
| SPGO | 0.0124 | 0.0161 | 0.0193 | 0.8863 |

| | Method | MAE | RMSE | R2 |
|---|---|---|---|---|
| Rolling verification periods | xLSTM | 0.0275 ± 0.0031 | 0.0304 ± 0.0017 | 0.8462 ± 0.0026 |
| | Ours | 0.0228 ± 0.004 | 0.0243 ± 0.0022 | 0.8812 ± 0.0057 |
| Cross-domain testing | xLSTM | 0.0281 ± 0.0014 | 0.0335 ± 0.0021 | 0.8449 ± 0.0041 |
| | Ours | 0.0254 ± 0.0023 | 0.0266 ± 0.0019 | 0.8626 ± 0.0027 |

| Model | MSE | MAE | RMSE | R2 |
|---|---|---|---|---|
| LSTM | 0.0159 | 0.0207 | 0.0244 | 0.8353 |
| BiLSTM | 0.0175 | 0.0181 | 0.0229 | 0.8551 |
| xLSTM | 0.0136 | 0.0169 | 0.0214 | 0.8741 |
| GRU | 0.0361 | 0.0195 | 0.0247 | 0.8329 |
| FEDformer | 0.0124 | 0.0162 | 0.0205 | 0.8836 |
| Informer | 0.0145 | 0.0168 | 0.0213 | 0.8745 |
| Ours | 0.0093 | 0.0152 | 0.00193 | 0.9067 |

| Model | MSE | MAE | RMSE | R2 |
|---|---|---|---|---|
| LSTM | 0.0084 | 0.0612 | 0.0936 | 0.9036 |
| BiLSTM | 0.0065 | 0.0533 | 0.0804 | 0.9255 |
| xLSTM | 0.0049 | 0.0479 | 0.0703 | 0.9423 |
| GRU | 0.0066 | 0.0586 | 0.0813 | 0.9238 |
| FEDformer | 0.0042 | 0.0443 | 0.0645 | 0.9519 |
| Informer | 0.0041 | 0.0434 | 0.0634 | 0.9536 |
| Ours | 0.0031 | 0.0368 | 0.0557 | 0.9642 |

| Model | MSE | MAE | RMSE | R2 |
|---|---|---|---|---|
| LSTM | 0.0073 | 0.0185 | 0.0231 | 0.8533 |
| BiLSTM | 0.0063 | 0.0183 | 0.0228 | 0.8551 |
| xLSTM | 0.0049 | 0.0169 | 0.0221 | 0.8663 |
| GRU | 0.0051 | 0.0172 | 0.0223 | 0.8625 |
| FEDformer | 0.0048 | 0.0178 | 0.0221 | 0.8653 |
| Informer | 0.0042 | 0.0167 | 0.0213 | 0.8757 |
| Ours | 0.0045 | 0.0162 | 0.0206 | 0.8828 |

| Model | MSE | MAE | RMSE | R2 |
|---|---|---|---|---|
| LSTM [41] | 0.0094 | 0.0232 | 0.0307 | 0.8943 |
| BiLSTM [42] | 0.0082 | 0.0206 | 0.0285 | 0.9088 |
| xLSTM [15] | 0.0075 | 0.0194 | 0.0273 | 0.9167 |
| GRU [43] | 0.0077 | 0.0191 | 0.0277 | 0.9142 |
| FEDformer [44] | 0.0069 | 0.0159 | 0.0263 | 0.9227 |
| Informer [45] | 0.0071 | 0.0171 | 0.0265 | 0.9213 |
| Ours | 0.0063 | 0.0163 | 0.0244 | 0.9331 |

| Model | Median Test MSE | Worst-Case Test MSE |
|---|---|---|
| GHWA | 0.0091 | 0.0121 |
| SZGT | 0.0035 | 0.0067 |
| ZGBA | 0.0049 | 0.0074 |
| GHWA | 0.0060 | 0.0082 |

| Model | MSE | MAE | RMSE | R2 |
|---|---|---|---|---|
| LSTM | 0.0284 | 0.0471 | 0.0533 | 0.9348 |
| BiLSTM | 0.0243 | 0.0405 | 0.0493 | 0.9442 |
| xLSTM | 0.0222 | 0.0381 | 0.0471 | 0.9489 |
| GRU | 0.0329 | 0.0424 | 0.0574 | 0.9244 |
| FEDformer | 0.0261 | 0.0319 | 0.0511 | 0.9433 |
| Informer | 0.0185 | 0.0356 | 0.0431 | 0.9547 |
| Ours | 0.0159 | 0.0327 | 0.0339 | 0.9633 |


© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

He, J.; Wan, F.; He, M. A Stock Price Prediction Network That Integrates Multi-Scale Channel Attention Mechanism and Sparse Perturbation Greedy Optimization. Algorithms 2026, 19, 67. https://doi.org/10.3390/a19010067
