YOLOv8-ECCα: Enhancing Object Detection for Power Line Asset Inspection Under Real-World Visual Constraints

Abstract

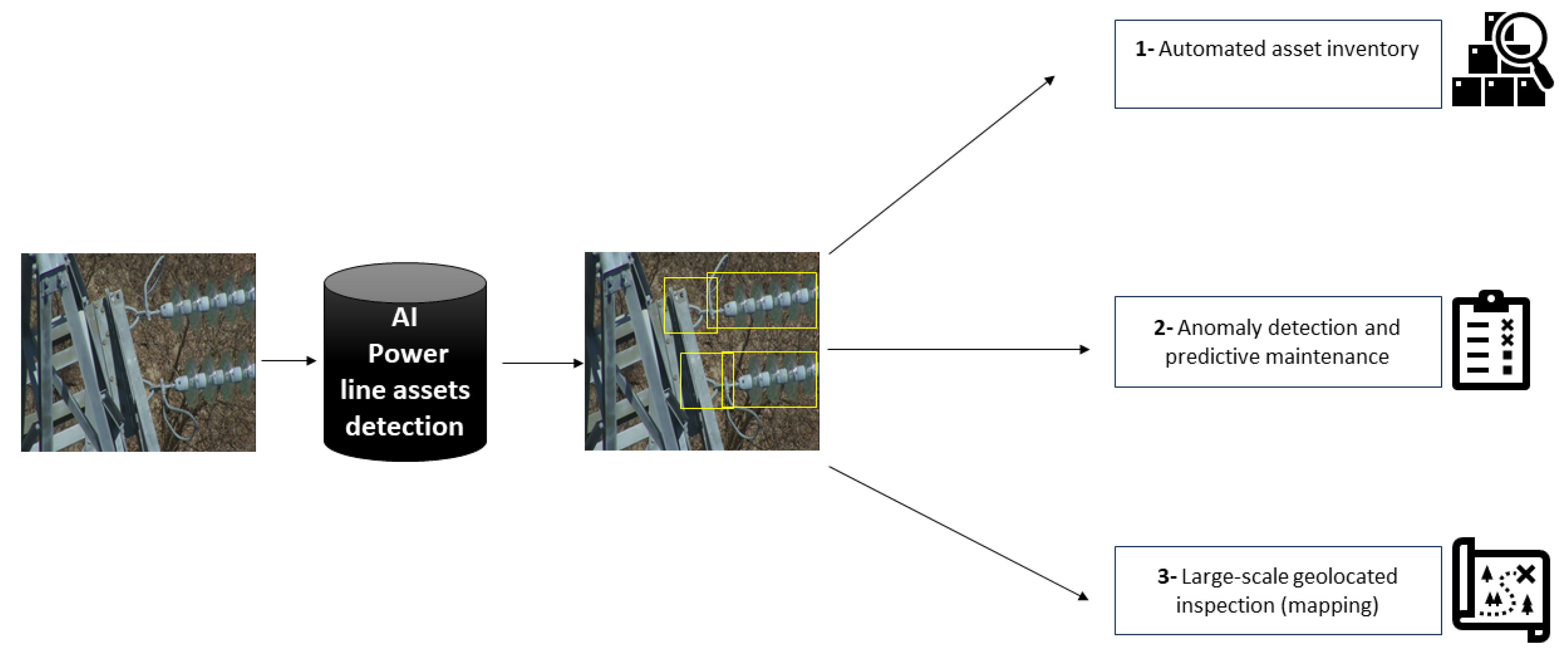

1. Introduction

2. Related Work

3. Materials and Methods

3.1. Justification for Selecting YOLOv8 as the Baseline Model

- Backbone

- Neck

- Detection Head and Loss

- IoU denotes the intersection-over-union between the predicted bounding box B and the ground-truth box B*;

- ρ²(c, c*) is the squared Euclidean distance between the center points c and c*, promoting accurate centering of predictions;

- d is the diagonal length of the smallest enclosing box covering both B and B*, serving as a normalization factor;

- v measures the divergence between the aspect ratios of the two boxes;

- α is a scaling factor that balances the influence of the aspect ratio penalty based on the current IoU.

- is the soft target probability assigned to bin b, typically computed using linear interpolation between two neighboring bins;

- is the model’s predicted probability for bin b;

- and B is the total number of bins (e.g., 16 or 32).

- L_cls: classification loss (Binary Cross-Entropy);

- L_obj: objectness loss (Focal Loss or BCE);

- L_reg = L_CIoU + L_DFL: composite regression loss.
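The regression terms described in the list above can be illustrated with a minimal pure-Python sketch. It assumes boxes in (x1, y1, x2, y2) format and the standard CIoU form, 1 - IoU + ρ²/d² + αv with α = v / ((1 - IoU) + v), together with a two-bin linear interpolation for the DFL soft target. The function names `ciou_loss` and `dfl_loss` are hypothetical and are not taken from the authors' code or from the Ultralytics implementation.

```python
import math


def ciou_loss(pred, gt, eps=1e-9):
    """CIoU loss for two boxes given as (x1, y1, x2, y2) (assumed format)."""
    px1, py1, px2, py2 = pred
    gx1, gy1, gx2, gy2 = gt
    pw, ph = px2 - px1, py2 - py1
    gw, gh = gx2 - gx1, gy2 - gy1

    # Intersection-over-union between the predicted and ground-truth boxes.
    iw = max(0.0, min(px2, gx2) - max(px1, gx1))
    ih = max(0.0, min(py2, gy2) - max(py1, gy1))
    inter = iw * ih
    union = pw * ph + gw * gh - inter
    iou = inter / (union + eps)

    # Squared Euclidean distance between the two box centers.
    rho2 = ((px1 + px2) / 2 - (gx1 + gx2) / 2) ** 2 \
        + ((py1 + py2) / 2 - (gy1 + gy2) / 2) ** 2

    # Squared diagonal of the smallest box enclosing both boxes.
    cw = max(px2, gx2) - min(px1, gx1)
    ch = max(py2, gy2) - min(py1, gy1)
    d2 = cw ** 2 + ch ** 2 + eps

    # Aspect-ratio divergence v and its IoU-dependent weight alpha.
    v = (4 / math.pi ** 2) * (
        math.atan(gw / (gh + eps)) - math.atan(pw / (ph + eps))
    ) ** 2
    alpha = v / ((1 - iou) + v + eps)

    return 1 - iou + rho2 / d2 + alpha * v


def dfl_loss(probs, target):
    """Cross-entropy against a soft target spread over two neighboring bins.

    `probs` are strictly positive per-bin probabilities (e.g. softmax output);
    `target` is a continuous offset in [0, len(probs) - 1].
    """
    left = int(math.floor(target))
    right = min(left + 1, len(probs) - 1)
    w_right = target - left      # soft target mass on the right bin
    w_left = 1.0 - w_right       # soft target mass on the left bin
    return -(w_left * math.log(probs[left]) + w_right * math.log(probs[right]))
```

Under these assumptions, the composite regression loss for one box would be the sum of `ciou_loss` and the `dfl_loss` values of its four side offsets; any weighting between the terms is left out because it is not stated here.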

3.2. YOLOv8-ECCα: Enhanced Architecture

3.2.1. CoordConv-Augmented C2F

- Edge Object Localization: In wide-angle drone images, critical components such as insulators or dampers may appear near the borders of the frame. CoordConv improves the network’s ability to detect such edge-located targets by embedding coordinate priors.

- Small Target Sensitivity: The small scale and sparse distribution of components in aerial images make them difficult to detect with regular convolutions. CoordConv enhances sensitivity to spatial variation, reducing false negatives.

- Viewpoint Robustness: UAV perspectives vary significantly due to pitch, yaw, and altitude changes. By learning from absolute positions, the model gains resilience against rotation and perspective distortion—challenges common in real-world drone inspection.
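The coordinate priors mentioned in the list above can be sketched as follows. CoordConv, as generally described, concatenates normalized x and y coordinate channels to a feature map before a regular convolution is applied. This minimal example uses nested Python lists in channel x height x width order; the helper name `add_coord_channels` and the [-1, 1] normalization are illustrative assumptions, and how the channels are wired into the C2F block is not shown.

```python
def add_coord_channels(feature_map):
    """Append normalized x and y coordinate channels to a C x H x W nested list."""
    height = len(feature_map[0])
    width = len(feature_map[0][0])

    def norm(index, size):
        # Map index 0..size-1 onto [-1, 1]; a single-cell axis maps to 0.
        return 2.0 * index / (size - 1) - 1.0 if size > 1 else 0.0

    # x varies along columns, y varies along rows.
    x_channel = [[norm(col, width) for col in range(width)] for _ in range(height)]
    y_channel = [[norm(row, height) for _ in range(width)] for row in range(height)]

    # The original channels are kept; two extra channels are concatenated.
    return feature_map + [x_channel, y_channel]


# Usage: a single-channel 2 x 3 map gains two coordinate channels.
features = [[[0.0, 0.0, 0.0], [0.0, 0.0, 0.0]]]
augmented = add_coord_channels(features)
```

A convolution applied to `augmented` sees, at every location, where in the frame that location lies, which is what lets it treat border regions differently from the center.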

3.2.2. Efficient Channel Attention (ECA) Module
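As a rough illustration of the module named in this heading, the sketch below follows the general ECA recipe from the ECA-Net paper listed in the references: per-channel global average descriptors are passed through a small 1D convolution across neighboring channels, followed by a sigmoid that yields one weight per channel. The adaptive kernel-size rule uses the commonly cited defaults γ = 2 and b = 1. The function names, the zero padding, and the example kernel values are assumptions made for illustration, not the authors' implementation.

```python
import math


def eca_kernel_size(channels, gamma=2, b=1):
    """Adaptive 1D kernel size: nearest odd value derived from log2(channels)."""
    t = int(abs((math.log2(channels) + b) / gamma))
    return t if t % 2 else t + 1


def eca_weights(channel_means, kernel):
    """Channel attention weights from pooled descriptors.

    `channel_means` holds one global-average-pooled value per channel;
    `kernel` holds the (learned, here hypothetical) 1D convolution weights.
    """
    k = len(kernel)
    pad = k // 2
    padded = [0.0] * pad + list(channel_means) + [0.0] * pad
    weights = []
    for i in range(len(channel_means)):
        # Local cross-channel interaction over k neighboring channels.
        s = sum(kernel[j] * padded[i + j] for j in range(k))
        weights.append(1.0 / (1.0 + math.exp(-s)))  # sigmoid gate
    return weights
```

Each feature channel would then be multiplied by its weight. Because the only learned parameters are the k kernel values, the module adds almost nothing to the parameter count.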

3.2.3. α-IoU Losses for Bounding Box Regression

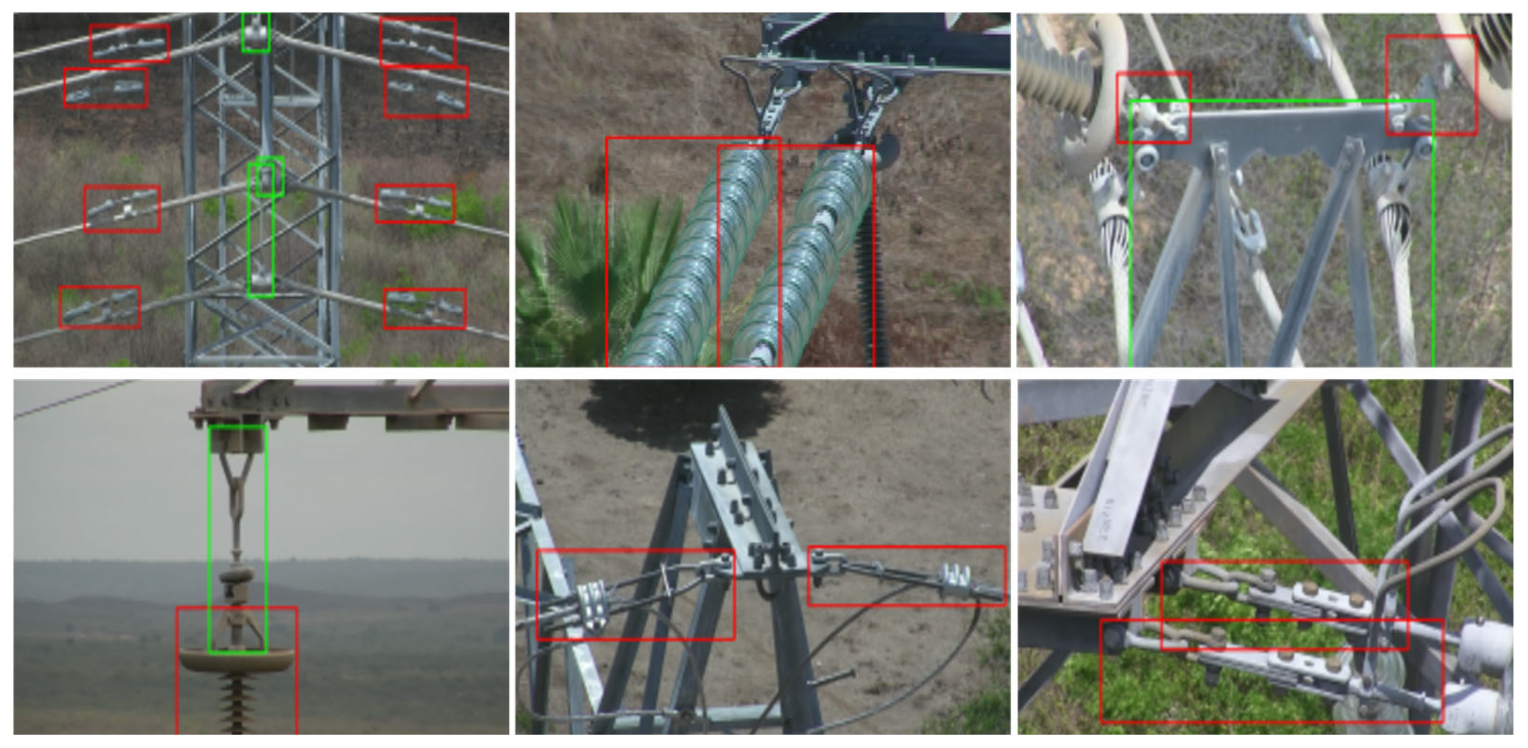
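For orientation, the basic member of the α-IoU family replaces the IoU loss 1 - IoU with 1 - IoU^α. The sketch below shows only this basic form, assuming boxes in (x1, y1, x2, y2) format; the default α = 3 follows the value suggested in the α-IoU paper, and the exact variant and α used in YOLOv8-ECCα are not specified here. The function names are hypothetical.

```python
def iou(a, b):
    """Intersection-over-union of two boxes given as (x1, y1, x2, y2)."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def alpha_iou_loss(pred, gt, alpha=3.0):
    """Basic alpha-IoU loss: 1 - IoU ** alpha (alpha = 1 gives the plain IoU loss)."""
    return 1.0 - iou(pred, gt) ** alpha


# Usage: two 2 x 2 boxes that overlap on half their width (IoU = 1/3).
loss_plain = alpha_iou_loss((0, 0, 2, 2), (1, 0, 3, 2), alpha=1.0)
loss_alpha3 = alpha_iou_loss((0, 0, 2, 2), (1, 0, 3, 2), alpha=3.0)
```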

3.3. Dataset

4. Experiments

4.1. Environment Configuration

4.2. Models

- YOLOv7-L: a large variant of the YOLOv7 family, known for its strong accuracy due to a well-balanced backbone and neck design. YOLOv7 [26] introduces re-parameterized convolutions and efficient training strategies that improve performance on standard benchmarks.

- YOLOv8m: the medium variant of YOLOv8, representing the default performance-capacity trade-off in the Ultralytics YOLOv8 lineup. It serves as a direct architectural sibling to our baseline (YOLOv8s), with increased depth and width for better feature representation.

- YOLOv9-E and YOLOv9-s [27]: more recent architectures that incorporate a generalized efficient layer aggregation network and a task-aligned detection head, designed to raise accuracy while maintaining computational efficiency. They reflect recent trends in feature fusion and decoupled head design.

4.3. Metrics

4.4. Training Process

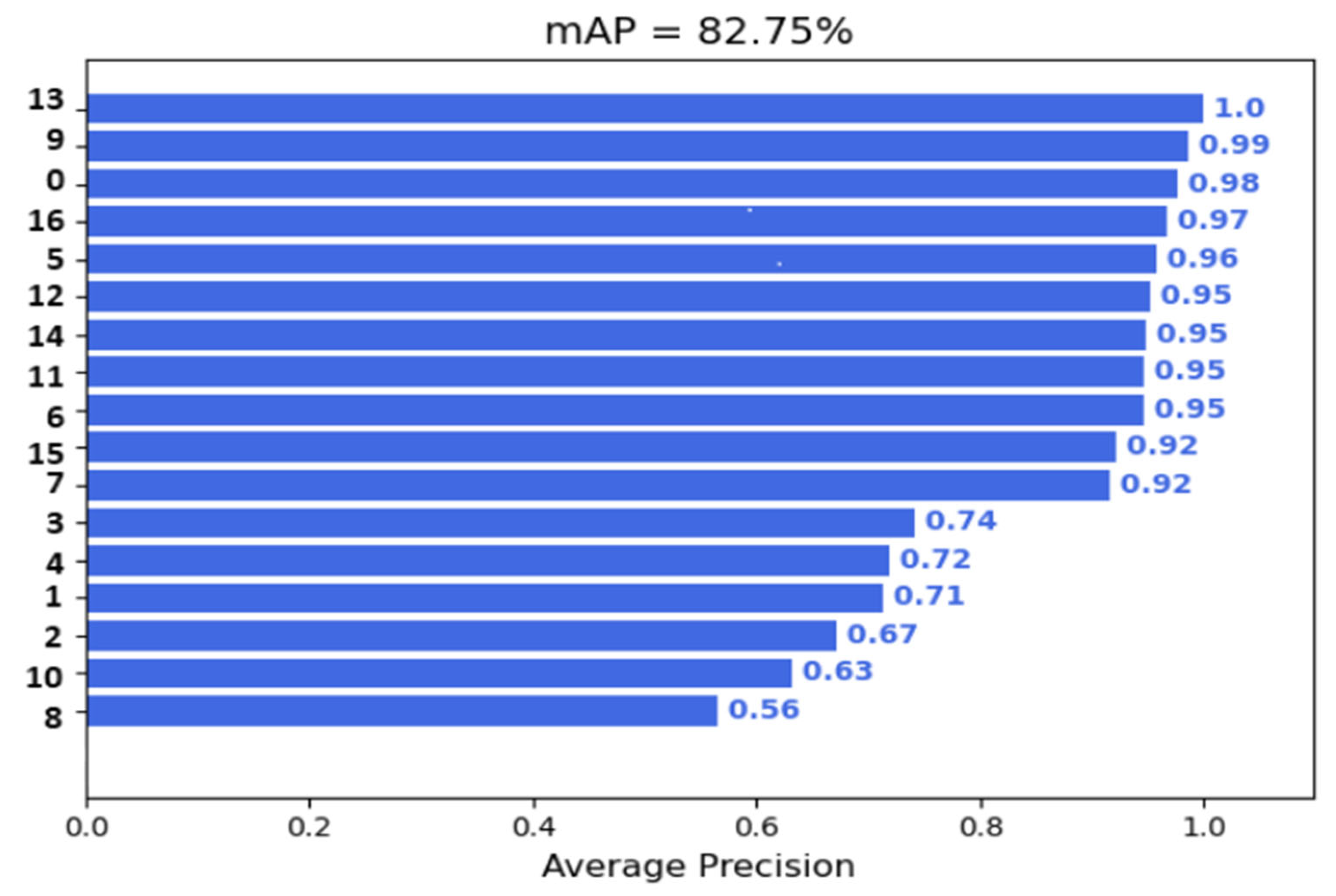

5. Results

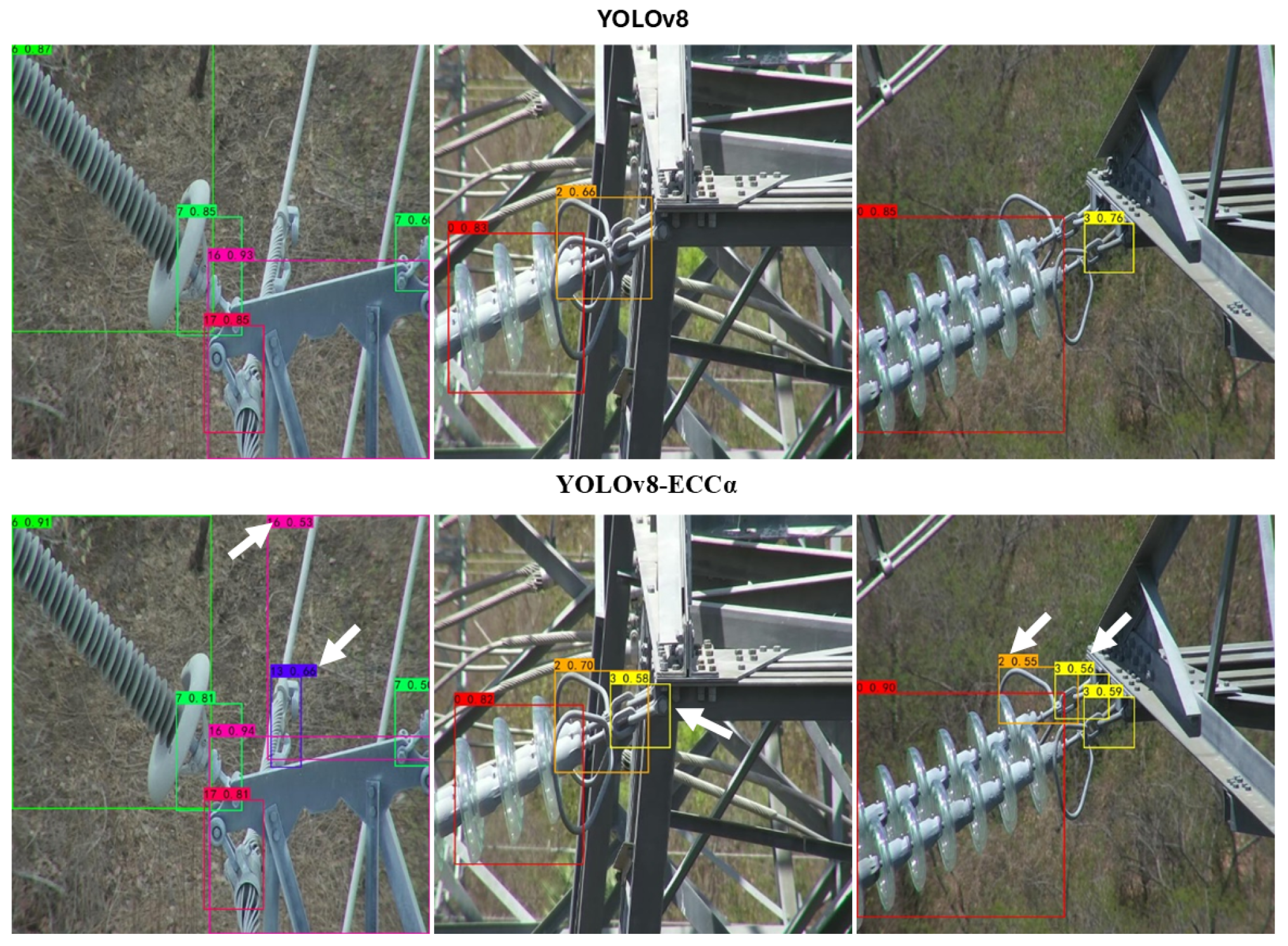

5.1. Comparative Analysis of Our Contribution Against Recent Advancements

5.2. Ablation Study: Contribution of Each Component

- Encoding spatial relationships and maintaining robustness to viewpoint distortions.

- Enhancing object detection in cluttered or congested environments.

- Improving bounding box alignment in cases with low overlap or partial occlusion.

6. Discussion

7. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

1. Mendu, B.; Mbuli, N. State-of-the-Art Review on the Application of Unmanned Aerial Vehicles (UAVs) in Power Line Inspections: Current Innovations, Trends, and Future Prospects. Drones 2025, 9, 265.

2. Faisal, A.A.; Mecheter, I.; Qiblawey, Y.; Fernandez, J.H.; Chowdhury, M.E.; Kiranyaz, S. Deep Learning in Automated Power Line Inspection: A Review. Appl. Energy 2025, 385, 125507.

3. Rey, L.; Bernardos, A.M.; Dobrzycki, A.D.; Carramiñana, D.; Bergesio, L.; Besada, J.A.; Casar, J.R. A Performance Analysis of You Only Look Once Models for Deployment on Constrained Computational Edge Devices in Drone Applications. Electronics 2025, 14, 638.

4. Edozie, E.; Shuaibu, A.N.; John, U.K.; Sadiq, B.O. Comprehensive Review of Recent Developments in Visual Object Detection Based on Deep Learning. Artif. Intell. Rev. 2025, 58, 277. Available online: https://link.springer.com/article/10.1007/s10462-025-11284-w (accessed on 24 July 2025).

5. Cao, J.; Peng, B.; Gao, M.; Hao, H.; Li, X.; Mou, H. Object Detection Based on CNN and Vision-Transformer: A Survey. IET Comput. Vis. 2025, 19, e70028. Available online: https://ietresearch.onlinelibrary.wiley.com/doi/10.1049/cvi2.70028 (accessed on 24 July 2025).

6. Ali, M.L.; Zhang, Z. The YOLO Framework: A Comprehensive Review of Evolution, Applications, and Benchmarks in Object Detection. Computers 2024, 13, 336. Available online: https://www.mdpi.com/2073-431X/13/12/336 (accessed on 24 July 2025).

7. Wang, C.-Y.; Liao, H.-Y.M. YOLOv1 to YOLOv10: The fastest and most accurate real-time object detection systems. APSIPA Trans. Signal Inf. Process. 2024, 13, 1–38.

8. Aitelhaj, R.; Benelmostafa, B.E.; Medromi, H. APF-YOLOV8: Enhancing Multiscale Detection and Intra-Class Variance Handling for UAV-Based Insulator Power Line Inspections. F1000Research 2024, 14, 141.

9. Chen, C.; Yuan, G.; Zhou, H.; Ma, Y. Improved YOLOv5s model for key components detection of power transmission lines. Math. Biosci. Eng. 2023, 20, 7738–7760.

10. Hu, C.; Min, S.; Liu, X.; Zhou, X.; Zhang, H. Research on an Improved Detection Algorithm Based on YOLOv5s for Power Line Self-Exploding Insulators. Electronics 2023, 12, 3675. Available online: https://www.mdpi.com/2079-9292/12/17/3675 (accessed on 24 July 2025).

11. Zhang, N.; Su, J.; Zhou, Y.; Chen, H. Insulator-YOLO: Transmission Line Insulator Risk Identification Based on Improved YOLOv5. Processes 2024, 12, 2552. Available online: https://www.mdpi.com/2227-9717/12/11/2552 (accessed on 24 July 2025).

12. ID-YOLOv7: An Efficient Method for Insulator Defect Detection in Power Distribution Network. Available online: https://ouci.dntb.gov.ua/en/works/4YZD8e3l/ (accessed on 24 July 2025).

13. Zhang, Y.; Li, J.; Fu, W.; Ma, J.; Wang, G. A Lightweight YOLOv7 Insulator Defect Detection Algorithm Based on DSC-SE. PLoS ONE 2023, 18, e0289162.

14. Xu, J.; Zhao, S.; Li, Y.; Song, W.; Zhang, K. MRB-YOLOv8: An Algorithm for Insulator Defect Detection. Electronics 2025, 14, 830.

15. Ling, Z.; Xin, Q.; Lin, Y.; Su, G.; Shui, Z. Optimization of autonomous driving image detection based on RFAConv and triplet attention. arXiv 2024, arXiv:2407.09530.

16. Zhang, D.; Cao, K.; Han, K.; Kim, C.; Jung, H. PAL-YOLOv8: A Lightweight Algorithm for Insulator Defect Detection. Electronics 2025, 13, 3500.

17. Yaseen, M. What is YOLOv8: An In-Depth Exploration of the Internal Features of the Next-Generation Object Detector. arXiv 2024, arXiv:2408.15857.

18. Jocher, G.; Qiu, J.; Chaurasia, A. Ultralytics YOLO, version 8.0.0; Ultralytics: San Francisco, CA, USA, January 2023. Range, K.; Jocher, G. Brief Summary of YOLOv8 Model Structure. GitHub Issue, 2023. Available online: https://github.com/ultralytics/ultralytics/issues/189 (accessed on 27 April 2023).

19. Li, H.; Xiong, P.; An, J.; Wang, L. Pyramid Attention Network for Semantic Segmentation. arXiv 2018, arXiv:1805.10180.

20. Lin, T.Y.; Dollár, P.; Girshick, R.; He, K.; Hariharan, B.; Belongie, S. Feature Pyramid Networks for Object Detection. arXiv 2017, arXiv:1612.03144.

21. Zheng, Z.; Wang, P.; Ren, D.; Liu, W.; Ye, R.; Hu, Q.; Zuo, W. Enhancing Geometric Factors in Model Learning and Inference for Object Detection and Instance Segmentation. arXiv 2021, arXiv:2005.03572.

22. Li, X.; Wang, W.; Wu, L.; Chen, S.; Hu, X.; Li, J.; Tang, J.; Yang, J. Generalized Focal Loss: Learning Qualified and Distributed Bounding Boxes for Dense Object Detection. arXiv 2020, arXiv:2006.04388.

23. Wang, Q.; Wu, B.; Zhu, P.; Li, P.; Zuo, W.; Hu, Q. ECA-Net: Efficient Channel Attention for Deep Convolutional Neural Networks. arXiv 2020, arXiv:1910.03151.

24. He, J.; Erfani, S.; Ma, X.; Bailey, J.; Chi, Y.; Hua, X.S. α-IoU: A Family of Power Intersection over Union Losses for Bounding Box Regression. Adv. Neural Inf. Process. Syst. 2021, 34, 20230–20242.

25. Vieira e Silva, A.L.B.; de Castro Felix, H.; Simões, F.P.M.; Teichrieb, V.; dos Santos, M.; Santiago, H.; Sgotti, V.; Lott Neto, H. InsPLAD: A dataset and benchmark for power line asset inspection in UAV images. Int. J. Remote Sens. 2023, 44, 7294–7320.

26. Wang, C.-Y.; Bochkovskiy, A.; Liao, H.-Y.M. YOLOv7: Trainable bag-of-freebies sets new state-of-the-art for real-time object detectors. arXiv 2022, arXiv:2207.02696.

27. Wang, C.-Y.; Yeh, I.-H.; Liao, H.-Y.M. YOLOv9: Learning What You Want to Learn Using Programmable Gradient Information. arXiv 2024, arXiv:2402.13616.

28. Ultralytics. Ultralytics YOLOv8 Default Configuration File. Available online: https://github.com/ultralytics/ultralytics/blob/main/ultralytics/cfg/default.yaml (accessed on 15 October 2025).

29. Zhao, H.; Yan, L.; Hou, Z.; Lin, J.; Zhao, Y.; Ji, Z.; Wang, Y. Error Analysis Strategy for Long-Term Correlated Network Systems: Generalized Nonlinear Stochastic Processes and Dual-Layer Filtering Architecture. IEEE Internet Things J. 2025, 12, 33731–33745.

30. Luo, Y.; Ci, Y.; Jiang, S.; Wei, X. A novel lightweight real-time traffic sign detection method based on an embedded device and YOLOv8. J. Real-Time Image Process. 2024, 21, 24.

31. Neamah, O.N.; Almohamad, T.A.; Bayir, R. Enhancing Road Safety: Real-Time Distracted Driver Detection Using Nvidia Jetson Nano and YOLOv8. In Proceedings of the Zooming Innovation in Consumer Technologies Conference (ZINC), Novi Sad, Serbia, 22–23 May 2024; pp. 194–198.

32. Qengineering. YoloV8-TensorRT-Jetson_Nano: A Lightweight C++ Implementation of YoloV8 Running on NVIDIA’s TensorRT Engine. GitHub Repository. 2024. Available online: https://github.com/Qengineering/YoloV8-TensorRT-Jetson_Nano (accessed on 25 December 2025).

33. Howard, A.G.; Zhu, M.; Chen, B.; Kalenichenko, D.; Wang, W.; Weyand, T.; Andreetto, M.; Adam, H. MobileNets: Efficient Convolutional Neural Networks for Mobile Vision Applications. arXiv 2017, arXiv:1704.04861.

34. Adam, M.A.A.; Tapamo, J.R. Enhancing YOLOv5 for Autonomous Driving: Efficient Attention-Based Object Detection on Edge Devices. J. Imaging 2025, 11, 263.

| Asset Category | Number of Images | Number of Annotations |
|---|---|---|
| Damper - Spiral | 943 | 1020 |
| Damper - Stockbridge | 1761 | 6953 |
| Glass Insulator | 2778 | 2978 |
| Glass Insulator Big Shackle | 152 | 296 |
| Glass Insulator Small Shackle | 143 | 263 |
| Glass Insulator Tower Shackle | 106 | 195 |
| Lightning Rod Shackle | 112 | 195 |
| Lightning Rod Suspension | 709 | 710 |
| Tower ID Plate | 242 | 242 |
| Polymer Insulator | 3173 | 3244 |
| Pol. Insulator Lower Shackle | 1760 | 1824 |
| Pol. Insulator Upper Shackle | 1691 | 1692 |
| Pol. Insulator Tower Shackle | 567 | 567 |
| Spacer | 93 | 94 |
| Vari-grip | 560 | 1008 |
| Yoke | 1661 | 1661 |
| Yoke Suspension | 2716 | 6520 |

| Model | mAP@50 (%) | F1 (%) | Recall (%) | Precision (%) | GFLOPs | Params (M) | FPS |
|---|---|---|---|---|---|---|---|
| YOLOv7-L | 78.62 | 77.40 | 76.10 | 78.80 | 37.637 | 52.401 | 41.96 |
| YOLOv8m | 82.24 | 80.20 | 78.00 | 82.50 | 39.441 | 25.857 | 51.73 |
| YOLOv8s | 81.89 | 79.50 | 77.74 | 81.47 | 28.538 | 11.136 | 84.71 |
| YOLOv9-s | 82.30 | 81.10 | 79.10 | 82.40 | 26.421 | 7.1 | 65.5 |
| YOLOv9-E | 82.61 | 81.00 | 79.21 | 82.95 | 72.624 | 48.6455 | 42.36 |
| Ours | 82.75 | 82.00 | 79.92 | 83.67 | 28.522 | 11.132 | 86.73 |

| Seed | Model | mAP@50 (%) | F1 (%) | Recall (%) | Precision (%) |
|---|---|---|---|---|---|
| 0 | Ours | 82.75 | 82.00 | 79.92 | 83.67 |
| 0 | YOLOv8s | 81.89 | 79.50 | 77.74 | 81.47 |
| 46 | Ours | 82.90 | 82.20 | 80.35 | 83.95 |
| 46 | YOLOv8s | 81.60 | 79.20 | 77.30 | 81.20 |
| 2025 | Ours | 83.25 | 82.70 | 80.85 | 84.65 |
| 2025 | YOLOv8s | 82.05 | 80.10 | 78.10 | 82.05 |
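The per-seed results in the table above can be summarized with a few lines of Python. This is only an illustrative aggregation of the reported mAP@50 values (mean, sample standard deviation, and the per-seed gain of the proposed model over YOLOv8s); the variable names are arbitrary and the computation is not part of the authors' evaluation protocol.

```python
from statistics import mean, stdev

# mAP@50 (%) for seeds 0, 46 and 2025, copied from the table above.
map50 = {
    "Ours": [82.75, 82.90, 83.25],
    "YOLOv8s": [81.89, 81.60, 82.05],
}

# Mean and sample standard deviation across the three seeds.
summary = {name: (mean(vals), stdev(vals)) for name, vals in map50.items()}

# Gain of the proposed model over the baseline, seed by seed and on average.
per_seed_gain = [o - b for o, b in zip(map50["Ours"], map50["YOLOv8s"])]
mean_gain = summary["Ours"][0] - summary["YOLOv8s"][0]

for name, (avg, sd) in summary.items():
    print(f"{name}: mean mAP@50 = {avg:.2f}, std = {sd:.2f}")
print(f"mean gain = {mean_gain:.2f} points")
```

With three seeds the standard deviation is only a rough indication of run-to-run variability, but the gain is positive for every seed.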

| Model | mAP@50 | F1 | Recall | Precision | GFLOPs | Params (M) | FPS |
|---|---|---|---|---|---|---|---|
| YOLOv8s | 81.89% | 0.78 | 77.74% | 81.47% | 28.538 | 11.136 | 84.71 |
| YOLOv8s + CoordConv | 82.29% | 0.79 | 78.33% | 83.07% | 28.519 | 11.132 | 88.45 |
| YOLOv8s + CoordConv + ECA | 82.63% | 0.81 | 79.87% | 83.47% | 28.522 | 11.132 | 86.73 |
| YOLOv8s + CoordConv + ECA + α-IoU (YOLOv8-ECCα) | 82.75% | 0.82 | 79.92% | 83.67% | 28.522 | 11.132 | 86.73 |


© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Ait el haj, R.; Benelmostafa, B.-E.; Medromi, H. YOLOv8-ECCα: Enhancing Object Detection for Power Line Asset Inspection Under Real-World Visual Constraints. Algorithms 2026, 19, 66. https://doi.org/10.3390/a19010066
