GRU-Based Short-Term Forecasting for Microgrid Operation: Modeling and Simulation Using Simulink

Abstract

1. Introduction

- A reproducible hour-ahead forecasting benchmark for load, PV, and wind using GRU, LSTM, and a persistence baseline under a consistent 24-to-1 univariate setup (24 past hourly values in, one next-hour value out).

- Conformal PI90 reporting that complements point metrics and supports uncertainty-aware extensions.

- A dual-branch Simulink coupling that quantifies how forecast uncertainty propagates to time-series behavior, monthly KPIs, and an illustrative flat-tariff cost sensitivity with a throughput-based degradation proxy.
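
The 24-to-1 setup, the persistence baseline, and the conformal PI90 named in the contributions above can be illustrated with a minimal Python sketch. It is not the authors' implementation: the split-conformal variant with absolute residuals, the symmetric interval, the synthetic series, and all function names (`make_windows`, `persistence_forecast`, `conformal_halfwidth`) are assumptions made for illustration.

```python
import numpy as np

def make_windows(series, lookback=24):
    """Build (X, y): each row of X holds 24 past hourly values, y is the next hour."""
    series = np.asarray(series, dtype=float)
    X = np.lib.stride_tricks.sliding_window_view(series[:-1], lookback)
    y = series[lookback:]
    return X, y

def persistence_forecast(X):
    """Persistence baseline: the next hour equals the last observed hour."""
    return X[:, -1]

def conformal_halfwidth(y_cal, yhat_cal, alpha=0.10):
    """Split-conformal half-width from absolute residuals on a calibration set."""
    scores = np.abs(np.asarray(y_cal) - np.asarray(yhat_cal))
    n = scores.size
    # Finite-sample corrected quantile level, capped at 1.0.
    level = min(1.0, np.ceil((n + 1) * (1 - alpha)) / n)
    return float(np.quantile(scores, level, method="higher"))

# Synthetic hourly series (ASSUMED data, not the IESO data used in the article).
rng = np.random.default_rng(0)
t = np.arange(24 * 60)
series = 80 + 15 * np.sin(2 * np.pi * t / 24) + rng.normal(0, 3, t.size)

X, y = make_windows(series)
yhat = persistence_forecast(X)

# First half calibrates the interval width, second half is held out for testing.
n_cal = len(y) // 2
q = conformal_halfwidth(y[:n_cal], yhat[:n_cal], alpha=0.10)
lower, upper = yhat[n_cal:] - q, yhat[n_cal:] + q
coverage = float(np.mean((y[n_cal:] >= lower) & (y[n_cal:] <= upper)))
print(f"half-width = {q:.2f}, empirical coverage = {coverage:.3f}")
```

Any point forecaster (for example a trained GRU) could replace `persistence_forecast` here; the calibration step only needs its predictions on a held-out calibration set.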

2. Methodology

2.1. Data Acquisition

Descriptive Statistics and Seasonal Characteristics

2.2. Data Preprocessing

2.2.1. Temporal Alignment and Quality Control

2.2.2. Unit Harmonization (MW)

2.2.3. Scaling Renewables to the K0K Microgrid Scope

2.2.4. Physical Plausibility Constraints (Peak Caps)

2.3. Forecasting Setup

2.3.1. Forecasting Task and Input Construction

2.3.2. Train–Calibration–Test Split

2.3.3. Models and Baselines

2.3.4. Prediction Intervals via Conformal Calibration

2.3.5. Evaluation Metrics

2.3.6. Modeling Choice and Scope

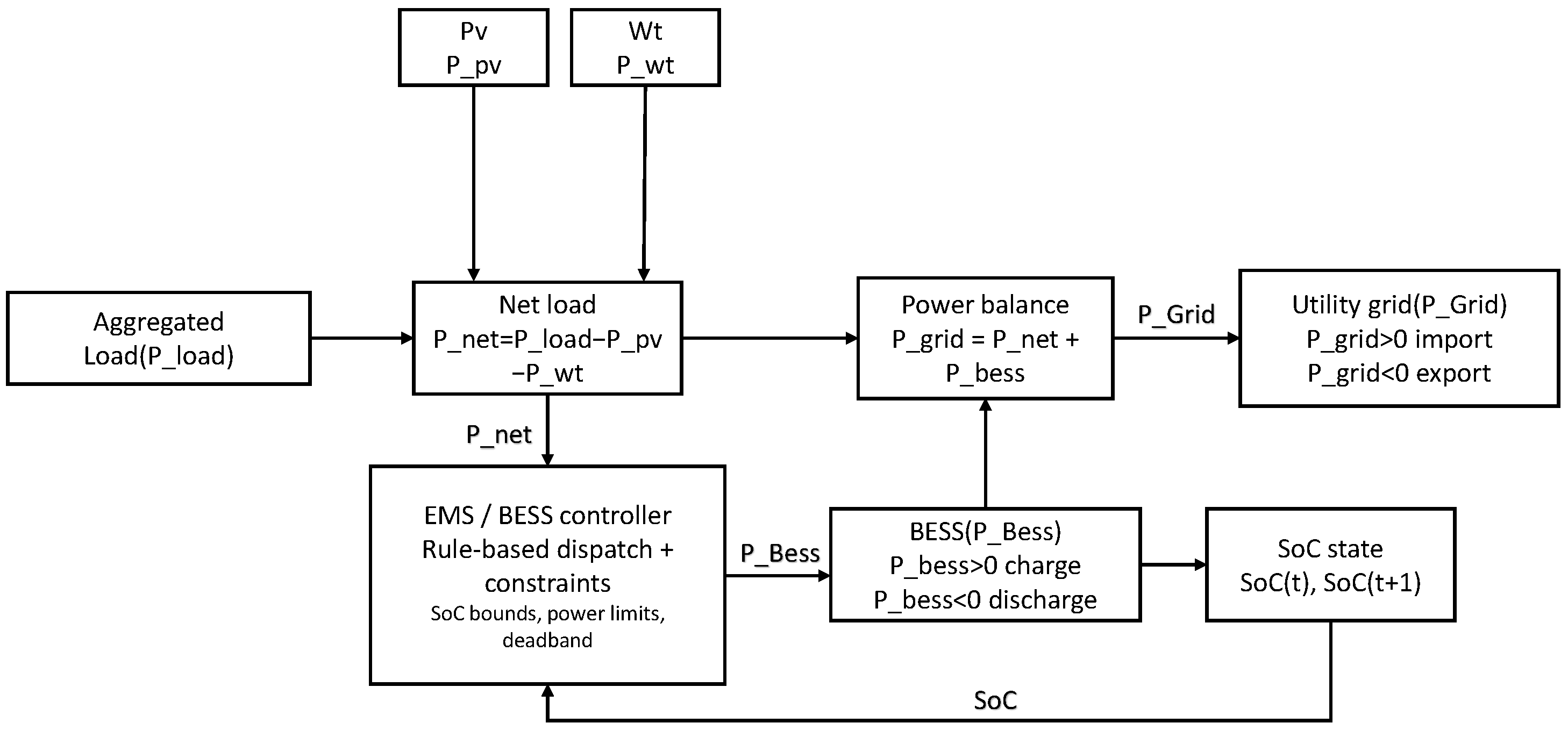

2.4. Microgrid Simulation Coupling

2.4.1. Microgrid Architecture and Components

2.4.2. Two-Branch Design for Pred vs. True

2.4.3. Energy Management Strategy and BESS Control

2.4.4. BESS Parameters and EMS Rules

2.4.5. Simulation Settings and Exported Outputs

2.4.6. Evaluation Metrics and System-Level KPIs

Economic Deviation Under a Flat Tariff and a Throughput-Based Degradation Proxy

3. Results

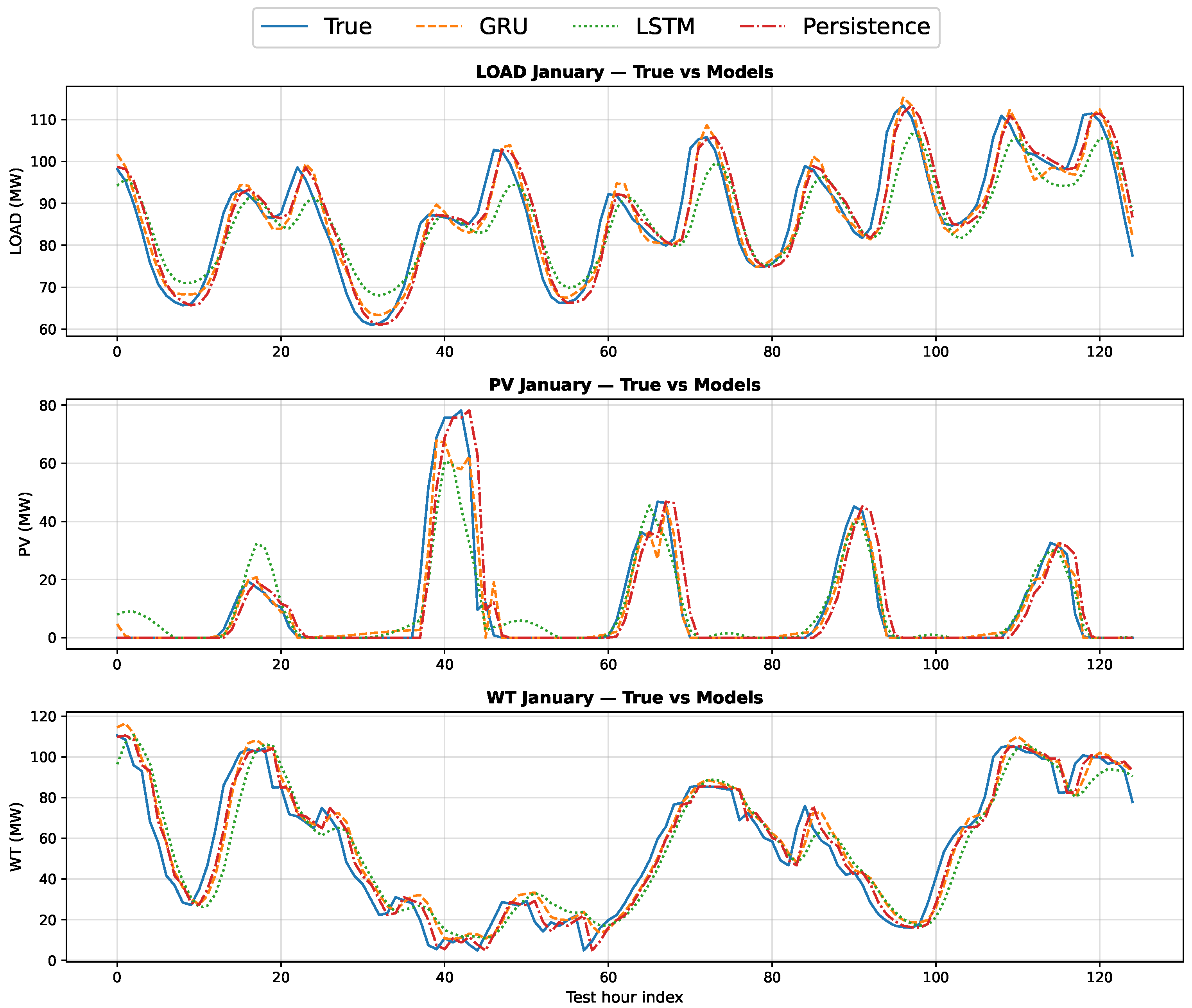

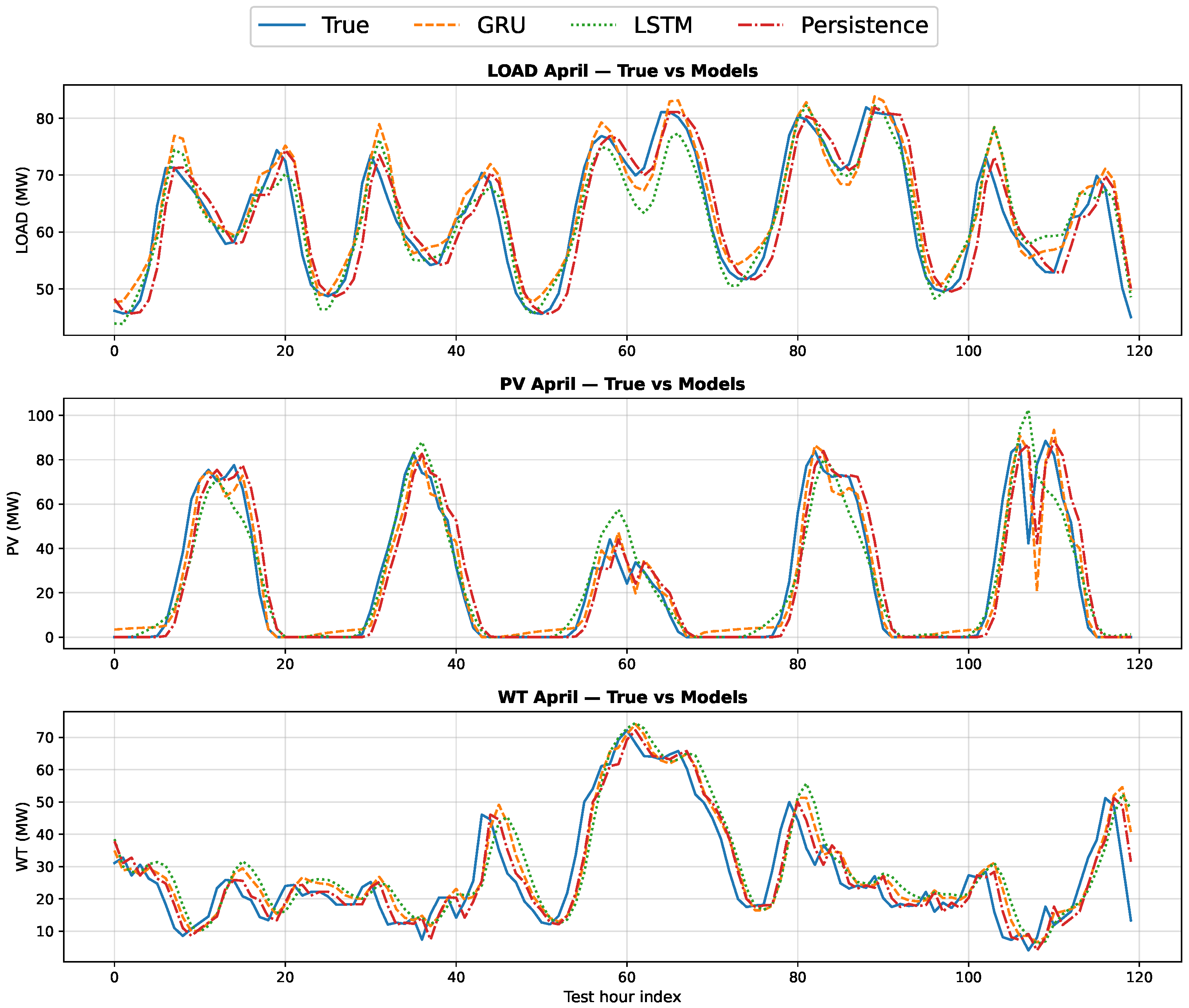

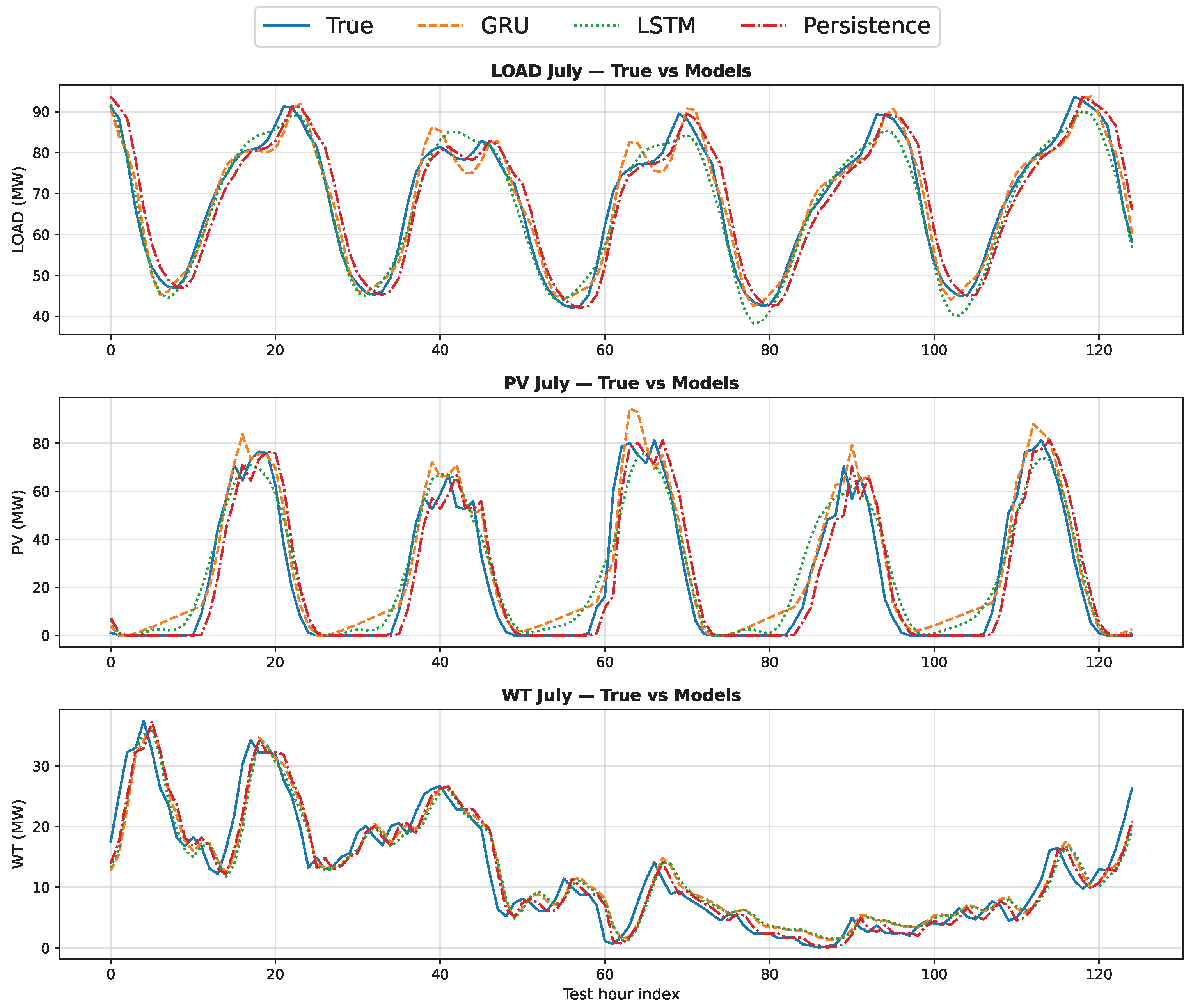

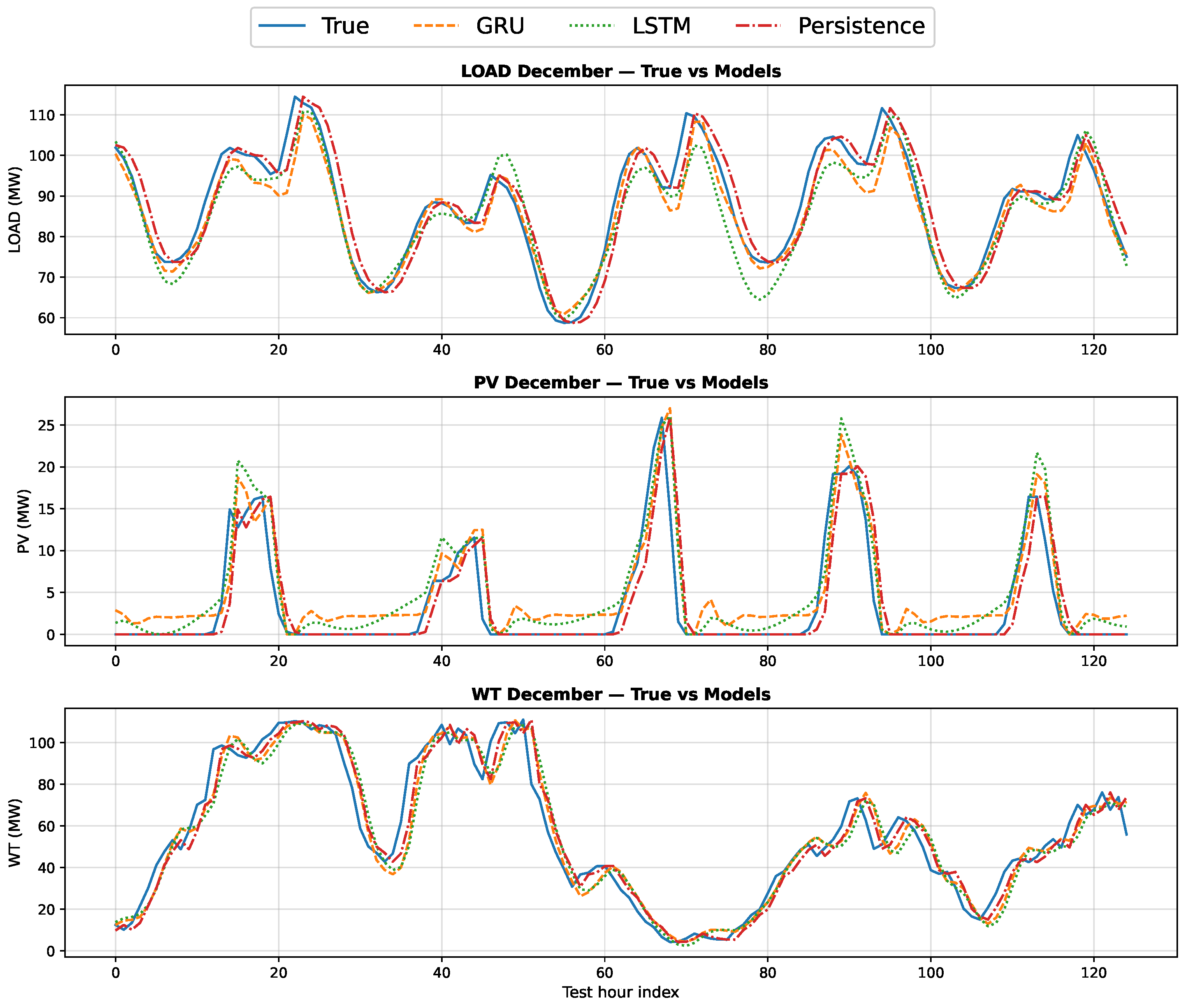

3.1. Forecasting Performance and Model Selection

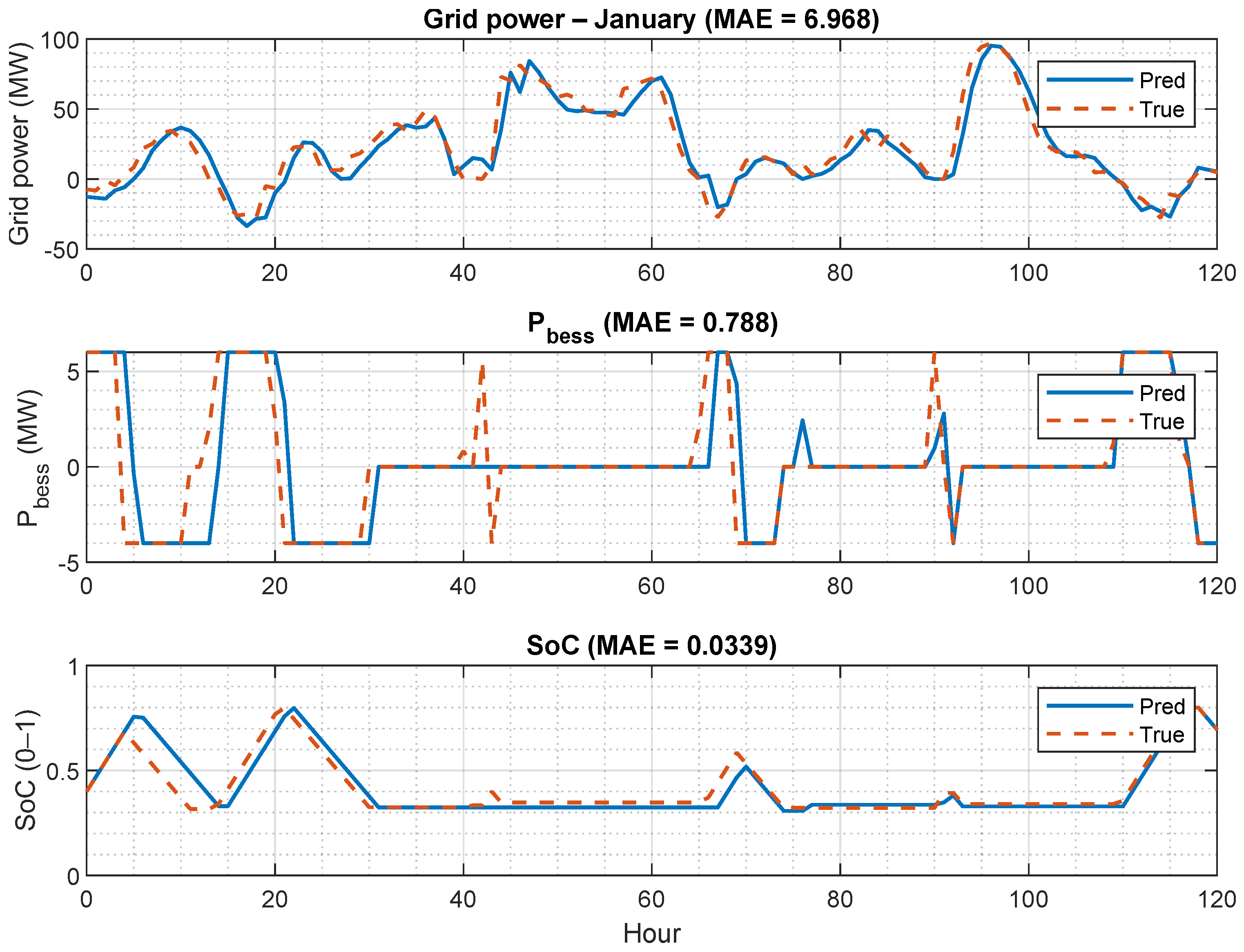

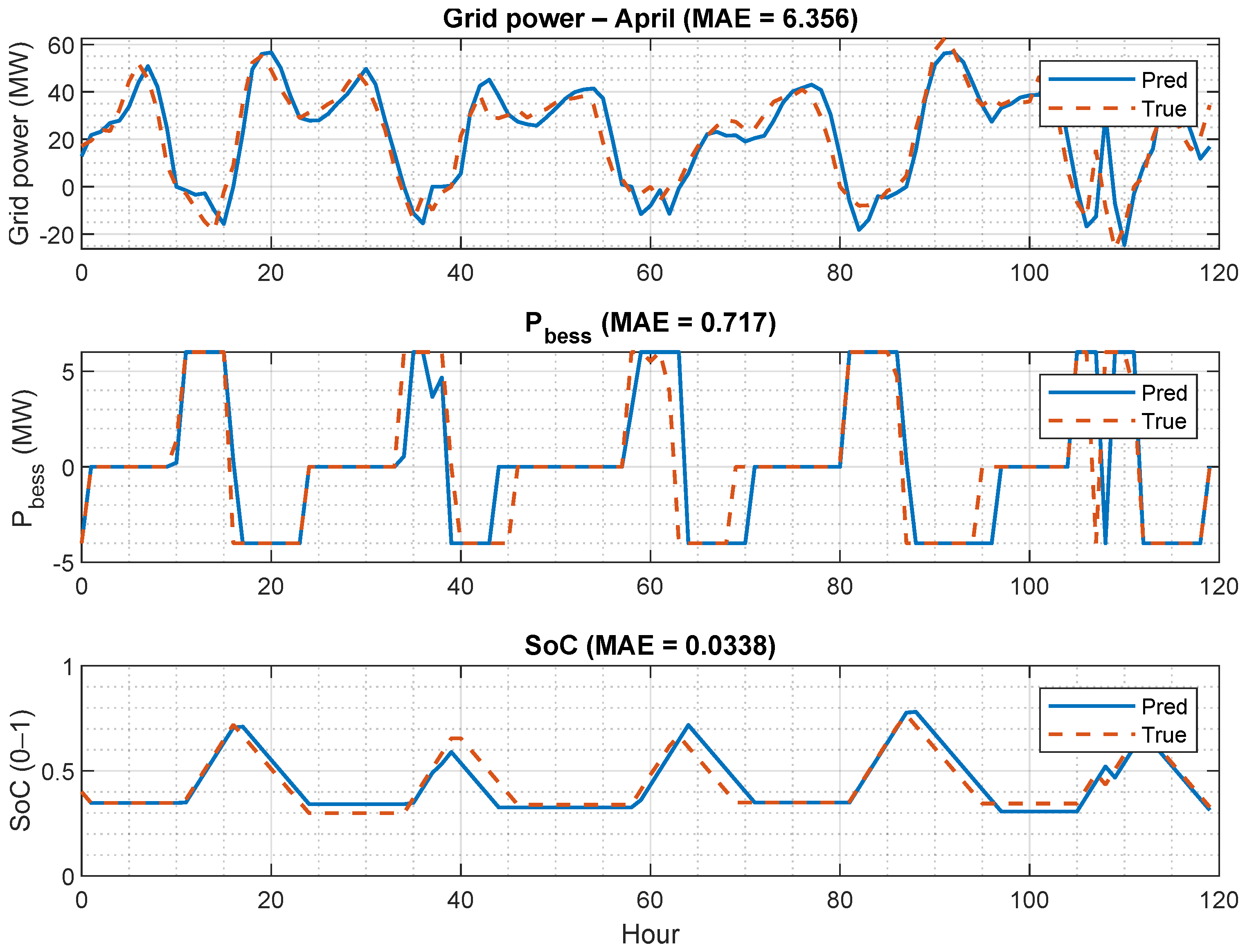

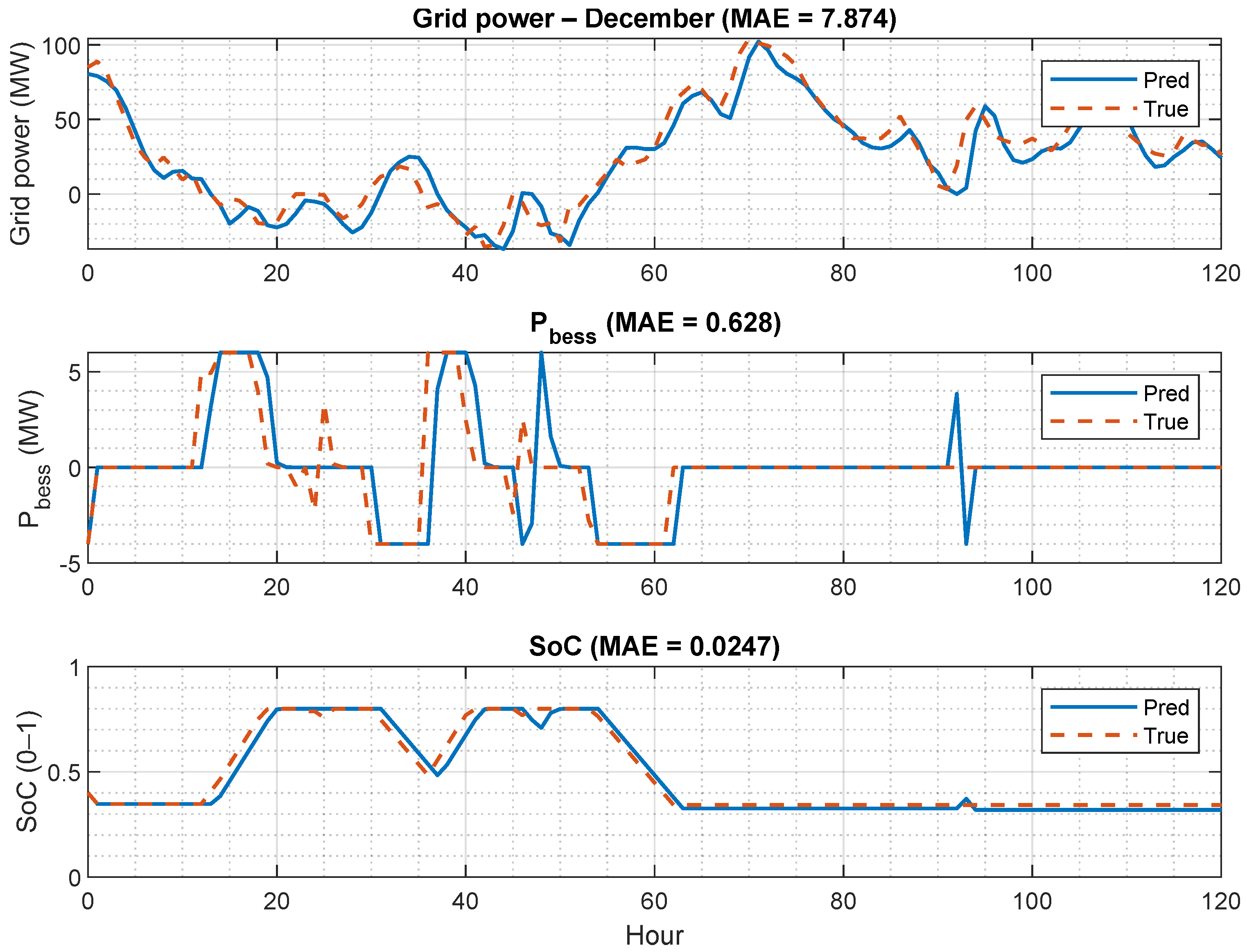

3.2. Microgrid Trajectories Under True vs. Pred Inputs

3.3. Summary of Key Findings

4. Discussion

4.1. Key Findings and Implications

4.2. Interpreting Forecasting Performance Across Targets

4.3. How Forecast Errors Propagate Through EMS Logic

4.4. Sensitivity of Operational KPIs and Operating Costs

4.5. Practical Takeaways for Microgrid Operation

4.6. Limitations and Future Work

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest


| Month | Target | Mean | Std | Min | Max |
|---|---|---|---|---|---|
| January | Load | 94.47 | 18.07 | 55.39 | 146.60 |
| January | WT | 59.66 | 32.28 | 1.09 | 116.91 |
| January | PV | 9.48 | 18.59 | 0.00 | 117.28 |
| April | Load | 71.71 | 12.71 | 42.12 | 110.59 |
| April | WT | 39.82 | 27.73 | 0.50 | 110.70 |
| April | PV | 19.12 | 24.96 | 0.00 | 88.47 |
| July | Load | 74.38 | 18.91 | 40.90 | 119.63 |
| July | WT | 20.35 | 16.37 | 0.06 | 81.56 |
| July | PV | 28.42 | 31.83 | 0.00 | 95.70 |
| December | Load | 84.04 | 13.47 | 52.59 | 114.48 |
| December | WT | 42.61 | 28.72 | 2.44 | 110.99 |
| December | PV | 5.56 | 11.86 | 0.00 | 91.59 |

| Parameter | Value |
|---|---|
| Sampling step | 1 h |
| Energy capacity | 80 MWh |
| Charge power limit | 6 MW |
| Discharge power limit | 4 MW |
| SoC bounds | |
| SoC reserve threshold | |
| Charge efficiency | |
| Discharge efficiency | |
| Deadband | 0.02 MW |
| Initial SoC | |

| Item | Rule |
|---|---|
| Deadband | If the residual power stays within the deadband (0.02 MW), the BESS command is set to zero to avoid frequent small switching actions. |
| Charge | If the residual power indicates surplus generation, the controller charges the battery subject to the charge power limit Pchg_max and the SoC upper bound SoC_max. |
| Discharge | If the residual power indicates a deficit, the controller discharges the battery subject to the discharge power limit Pdis_max, the SoC lower bound SoC_min, and the reserve threshold SoC_res. |
| Saturation | Charge and discharge commands are saturated so that they never exceed the configured power limits or drive the SoC outside its bounds. |
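
The rules in the table above can be sketched as a single hourly dispatch step. This is a minimal illustration, not the Simulink model: capacity, power limits, deadband, and step size come from the tables, while the SoC bounds, reserve threshold, efficiencies, the sign conventions, and the reading of SoC_res as the effective discharge floor are assumptions, labeled as such in the code.

```python
from dataclasses import dataclass

@dataclass
class Bess:
    capacity_mwh: float = 80.0  # from the parameter table
    p_chg_max: float = 6.0      # MW, from the parameter table
    p_dis_max: float = 4.0      # MW, from the parameter table
    deadband: float = 0.02      # MW, from the rule table
    dt_h: float = 1.0           # h, sampling step
    soc_min: float = 0.10       # ASSUMED (not given in the source)
    soc_max: float = 0.90       # ASSUMED
    soc_res: float = 0.20       # ASSUMED
    eta_chg: float = 0.95       # ASSUMED
    eta_dis: float = 0.95       # ASSUMED

def ems_step(residual_mw, soc, b):
    """One rule-based step.

    residual_mw = PV + WT - load (positive means surplus).
    Returns (p_bess, p_grid, soc_next); p_bess > 0 charges, p_grid > 0 imports.
    """
    if abs(residual_mw) <= b.deadband:
        p_bess = 0.0
    elif residual_mw > 0:
        # Surplus: charge, limited by the power limit and the SoC headroom.
        headroom_mw = (b.soc_max - soc) * b.capacity_mwh / (b.eta_chg * b.dt_h)
        p_bess = max(0.0, min(residual_mw, b.p_chg_max, headroom_mw))
    else:
        # Deficit: discharge down to the reserve (ASSUMED to act as the floor).
        floor = max(b.soc_min, b.soc_res)
        avail_mw = (soc - floor) * b.capacity_mwh * b.eta_dis / b.dt_h
        p_bess = -max(0.0, min(-residual_mw, b.p_dis_max, avail_mw))

    if p_bess >= 0:
        soc_next = soc + b.eta_chg * p_bess * b.dt_h / b.capacity_mwh
    else:
        soc_next = soc + p_bess * b.dt_h / (b.eta_dis * b.capacity_mwh)

    # The grid covers whatever the battery does not absorb or supply.
    p_grid = p_bess - residual_mw
    return p_bess, p_grid, soc_next

# Two branches with the same controller: true vs. predicted residuals (made-up values).
b = Bess()
true_res = [5.0, -3.0, 0.01, -8.0]
pred_res = [4.0, -3.5, -0.5, -6.0]
for name, seq in (("true", true_res), ("pred", pred_res)):
    soc = 0.5
    for r in seq:
        p_bess, p_grid, soc = ems_step(r, soc, b)
    print(name, round(soc, 4))
```

Running the same controller on both residual sequences mirrors the two-branch idea: any gap between the resulting SoC and grid trajectories is attributable to forecast error alone.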

| Target | Model | RMSE | MAE | R² | PICP@90 | PINAW | Params |
|---|---|---|---|---|---|---|---|
| Load | GRU | 3.926 | 3.022 | 0.903 | 0.921 | 0.295 | 12,929 |
| Load | LSTM | 4.523 | 3.517 | 0.865 | 0.915 | 0.330 | 16,961 |
| Load | Persistence | 4.734 | 3.784 | 0.866 | 0.915 | 0.337 | – |
| PV | GRU | 6.207 | 3.655 | 0.889 | 0.925 | 0.241 | 12,929 |
| PV | LSTM | 7.103 | 4.342 | 0.860 | 0.923 | 0.274 | 16,961 |
| PV | Persistence | 8.252 | 4.717 | 0.811 | 0.901 | 0.301 | – |
| WT | GRU | 7.263 | 5.541 | 0.873 | 0.895 | 0.304 | 12,929 |
| WT | LSTM | 8.503 | 6.547 | 0.837 | 0.903 | 0.349 | 16,961 |
| WT | Persistence | 6.287 | 4.730 | 0.909 | 0.913 | 0.265 | – |
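
For readers unfamiliar with the interval columns in the table above, the sketch below computes RMSE, MAE, PICP, and PINAW on a toy example. It uses the common definitions (PICP as the fraction of targets inside the interval, PINAW as the mean interval width divided by the target range); whether the article normalizes PINAW in exactly this way is an assumption, and the numbers are made up.

```python
import numpy as np

def rmse(y, yhat):
    """Root mean squared error of the point forecast."""
    y, yhat = np.asarray(y, float), np.asarray(yhat, float)
    return float(np.sqrt(np.mean((y - yhat) ** 2)))

def mae(y, yhat):
    """Mean absolute error of the point forecast."""
    y, yhat = np.asarray(y, float), np.asarray(yhat, float)
    return float(np.mean(np.abs(y - yhat)))

def picp(y, lower, upper):
    """Prediction interval coverage probability: share of targets inside the interval."""
    y, lower, upper = (np.asarray(a, float) for a in (y, lower, upper))
    return float(np.mean((y >= lower) & (y <= upper)))

def pinaw(y, lower, upper):
    """Normalized average width: mean interval width over the target range (ASSUMED normalization)."""
    y, lower, upper = (np.asarray(a, float) for a in (y, lower, upper))
    return float(np.mean(upper - lower) / (y.max() - y.min()))

# Toy values for illustration only.
y = np.array([10.0, 12.0, 14.0, 16.0, 20.0])
yhat = np.array([11.0, 12.0, 13.0, 17.0, 16.0])
half_width = 2.0
lower, upper = yhat - half_width, yhat + half_width

print("RMSE ", round(rmse(y, yhat), 3))
print("MAE  ", round(mae(y, yhat), 3))
print("PICP ", picp(y, lower, upper))
print("PINAW", pinaw(y, lower, upper))
```

A well-calibrated 90% interval should show PICP close to 0.90; among models with comparable coverage, a smaller PINAW indicates sharper intervals.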

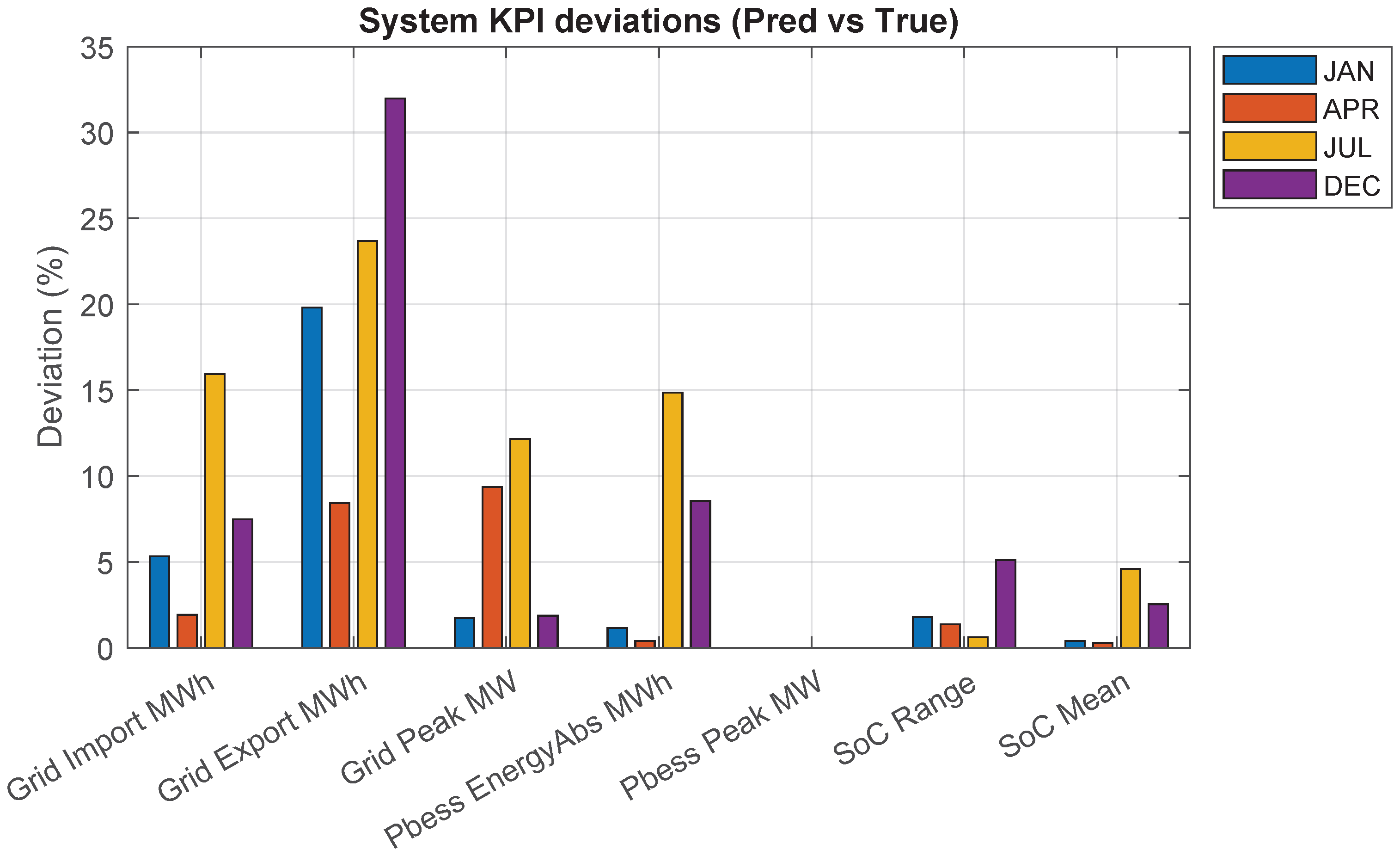

| KPI | Jan | Apr | Jul | Dec |
|---|---|---|---|---|
| Grid-related | | | | |
| Grid import energy (%) | 5.32 | 1.92 | 15.95 | 7.48 |
| Grid export energy (%) | 19.81 | 8.43 | 23.69 | 31.98 |
| Grid peak power (%) | 1.74 | 9.36 | 12.16 | 1.87 |
| Battery-related | | | | |
| BESS throughput (%) | 1.16 | 0.39 | 14.85 | 8.54 |
| BESS peak power (%) | 0.00 | 0.00 | 0.00 | 0.00 |
| SoC range (%) | 1.79 | 1.36 | 0.61 | 5.11 |
| Mean SoC (%) | 0.40 | 0.29 | 4.59 | 2.54 |

| Month | Energy cost (flat tariff), True (EUR) | Energy cost (flat tariff), Pred (EUR) | Dev. (%) | Degradation proxy, True (EUR) | Degradation proxy, Pred (EUR) | Dev. (%) | Total, True (EUR) | Total, Pred (EUR) | Dev. (%) |
|---|---|---|---|---|---|---|---|---|---|
| Jan | 288,646 | 269,429 | 6.66 | 2524 | 2495 | 1.16 | 291,171 | 271,924 | 6.61 |
| Apr | 267,978 | 272,494 | 1.69 | 3059 | 3047 | 0.39 | 271,037 | 275,541 | 1.66 |
| Jul | 326,254 | 261,713 | 19.78 | 3213 | 3690 | 14.85 | 329,467 | 265,403 | 19.44 |
| Dec | 350,672 | 315,911 | 9.91 | 1393 | 1512 | 8.54 | 352,066 | 317,424 | 9.84 |
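
The Dev. (%) columns in the table above are consistent with an absolute relative deviation of the Pred value from the True value, |Pred − True| / True × 100. The short check below recomputes it for the leftmost True/Pred pair; the formula is inferred from the tabulated numbers rather than quoted from the article, and the function name is hypothetical.

```python
# Leftmost True/Pred cost pair from the table, in EUR.
true_cost = {"Jan": 288_646, "Apr": 267_978, "Jul": 326_254, "Dec": 350_672}
pred_cost = {"Jan": 269_429, "Apr": 272_494, "Jul": 261_713, "Dec": 315_911}

def deviation_pct(true_value, pred_value):
    """Absolute relative deviation of Pred from True, in percent (inferred formula)."""
    return abs(pred_value - true_value) / true_value * 100.0

deviations = {
    month: round(deviation_pct(true_cost[month], pred_cost[month]), 2)
    for month in true_cost
}
for month, dev in deviations.items():
    print(month, dev)
```

Because the deviation is taken as an absolute value, the table alone does not show its direction: in April the Pred branch costs more than the True branch, while in the other three months it costs less.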

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Liu, Y.-K.; Rafajlovski, G.; Islam, S. GRU-Based Short-Term Forecasting for Microgrid Operation: Modeling and Simulation Using Simulink. Algorithms 2026, 19, 116. https://doi.org/10.3390/a19020116